Semantics Based on the Physical Characteristics of Facial Expressions Used to Produce Japanese Vowels

Abstract

1. Introduction

2. Materials and Methods

2.1. Participants

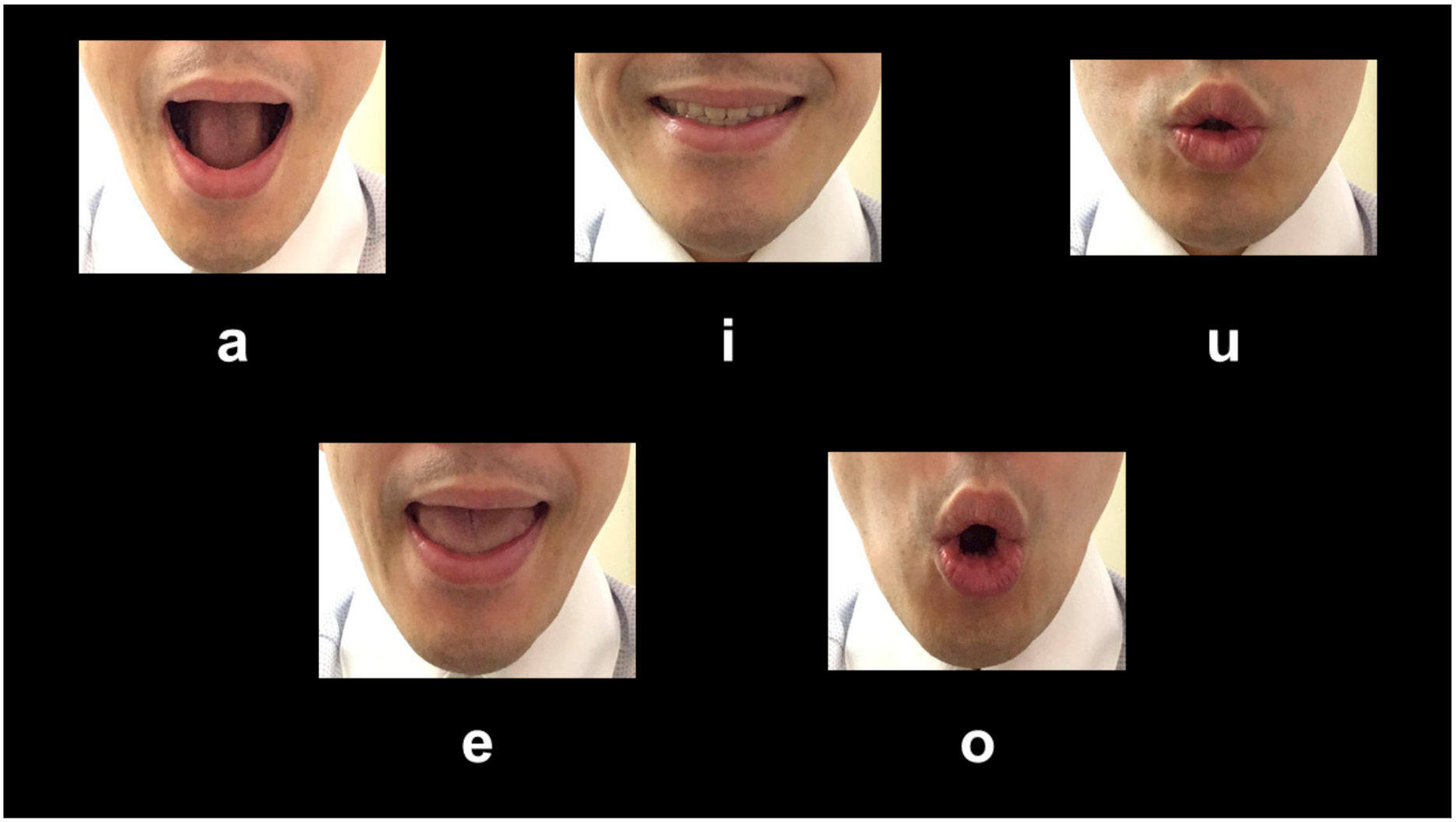

2.2. Materials

2.3. Procedure

2.4. Statistical Analysis

3. Results

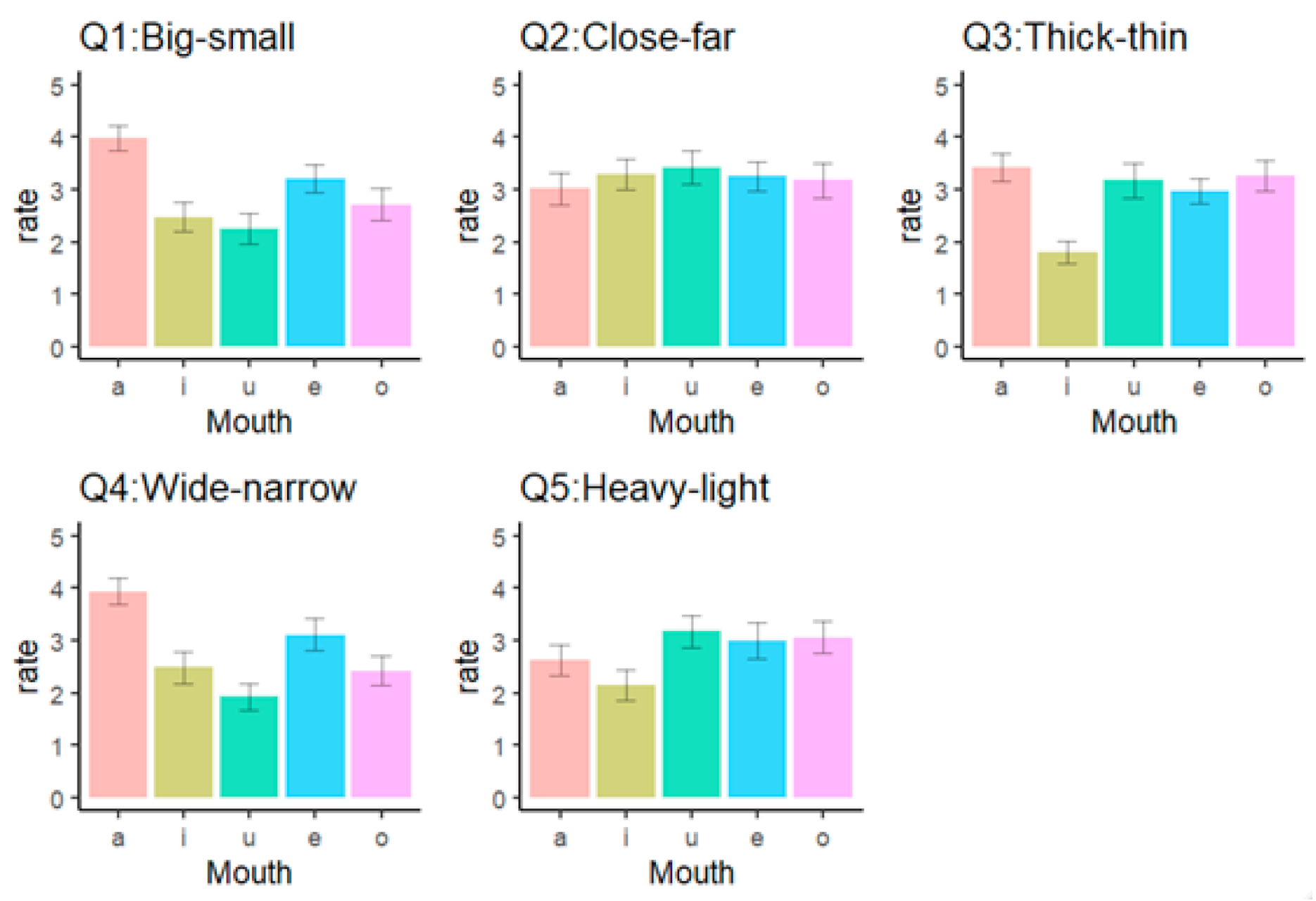

3.1. Big-Small (Q1: Size)

3.2. Close-Far (Q2: Distance)

3.3. Thick-Thin (Q3: Thickness)

3.4. Wide-Narrow (Q4: Extent)

3.5. Heavy-Light (Q5: Weight)

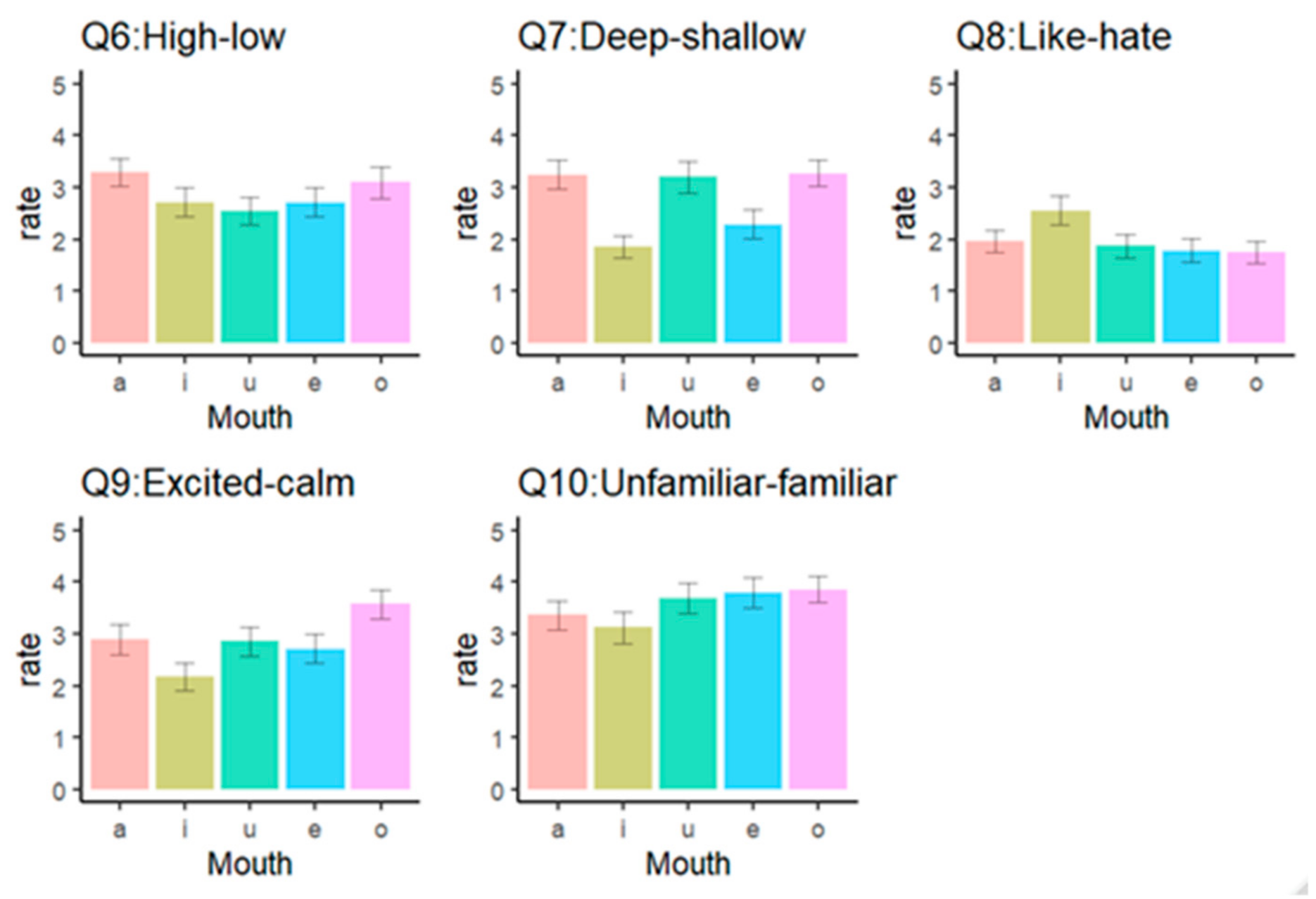

3.6. High-Low (Q6: Height)

3.7. Deep-Shallow (Q7: Depth)

3.8. Like-Hate (Q8: Preference)

3.9. Excited-Calm (Q9: Arousal)

3.10. Unfamiliar-Familiar (Q10: Familiarity: A Reverse Item)

4. Discussion

Limitations

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Data Availability Statement


| | Size | Distance | Thickness | Extent | Weight | Height | Depth | Preference | Arousal |
|---|---|---|---|---|---|---|---|---|---|
| Distance | −0.11 | | | | | | | | |
| Thickness | 0.30 | 0.03 | | | | | | | |
| Extent | 0.57 | −0.01 | 0.22 | | | | | | |
| Weight | 0.12 | 0.18 | 0.36 | 0.06 | | | | | |
| Height | 0.16 | −0.04 | −0.02 | 0.17 | −0.13 | | | | |
| Depth | 0.25 | 0.11 | 0.34 | 0.12 | 0.28 | 0.06 | | | |
| Preference | 0.00 | −0.03 | −0.18 | 0.07 | −0.16 | 0.04 | −0.09 | | |
| Arousal | 0.10 | 0.08 | 0.24 | 0.01 | 0.19 | 0.02 | 0.23 | −0.18 | |
| Unfamiliarity | −0.03 | 0.04 | 0.13 | −0.12 | 0.23 | −0.07 | 0.08 | −0.43 | 0.23 |
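
A lower-triangular table of pairwise correlations like the one above can be assembled along the following lines. This is only a minimal sketch: the text here does not state which coefficient was used, so Pearson's r is an assumption, and the function names (`pearson`, `lower_triangle`) and the `toy_ratings` values are hypothetical and are not data from the study.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


def lower_triangle(ratings):
    """Return {(row, col): r} for every pair in which col precedes row."""
    names = list(ratings)
    return {
        (names[i], names[j]): pearson(ratings[names[i]], ratings[names[j]])
        for i in range(len(names))
        for j in range(i)
    }


# Made-up ratings for illustration only; NOT data from the study.
toy_ratings = {
    "Size": [1, 2, 3, 4, 5],
    "Distance": [5, 4, 3, 2, 1],
    "Thickness": [2, 1, 4, 3, 5],
}

table = lower_triangle(toy_ratings)
for (row, col), r in table.items():
    print(f"{row:>10} x {col:<10} r = {r:+.2f}")
```

Only the cells below the diagonal are computed because a correlation matrix is symmetric, which is why the table above leaves the upper triangle empty.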

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Namba, S.; Kambara, T. Semantics Based on the Physical Characteristics of Facial Expressions Used to Produce Japanese Vowels. Behav. Sci. 2020, 10, 157. https://doi.org/10.3390/bs10100157
