Deep Reinforcement Learning-Based Resource Allocation for Content Distribution in IoT-Edge-Cloud Computing Environments

Abstract

1. Introduction

- We tackle the joint resource allocation problem by minimizing network delay. Cross-layer cooperative content caching and request routing are designed to improve content distribution and network quality of service (QoS) in an asymmetrical Internet of Vehicles (IoV) environment comprising roadside units (RSUs), base stations (BSs), and the cloud.

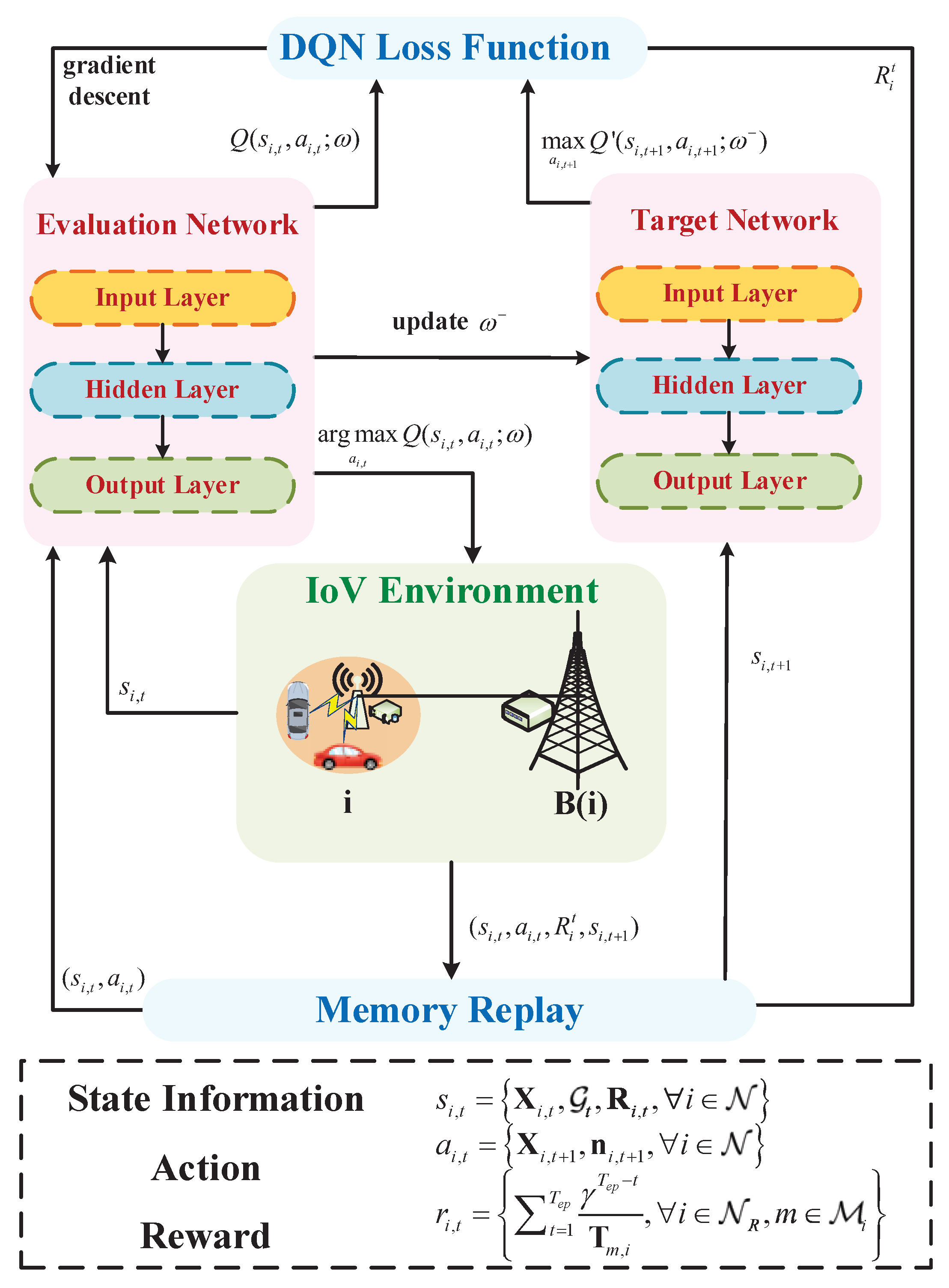

- We propose a new deep Q-network (DQN) policy that solves this delay optimization problem by making content caching and request routing decisions based on the observed request history and network state.

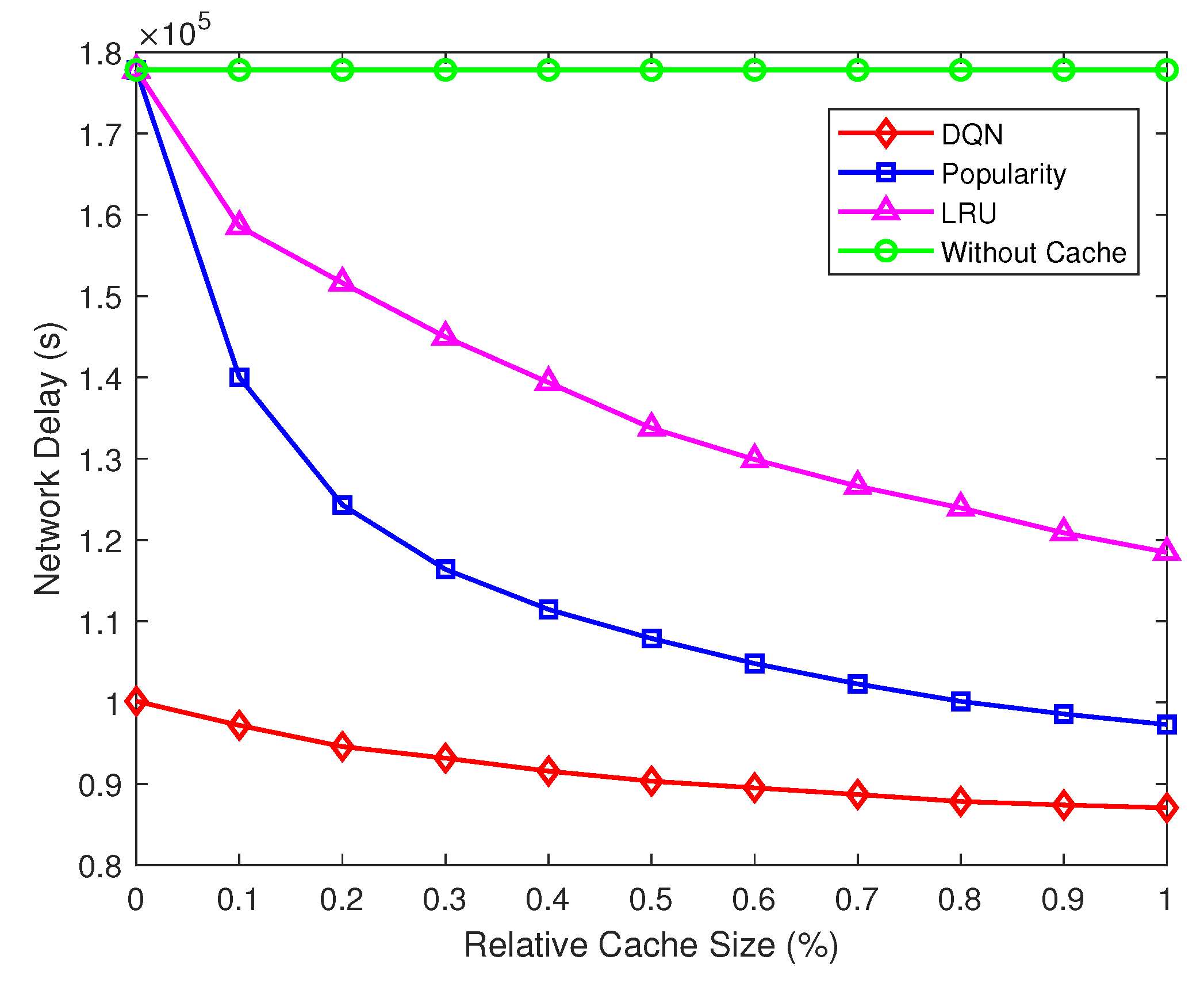

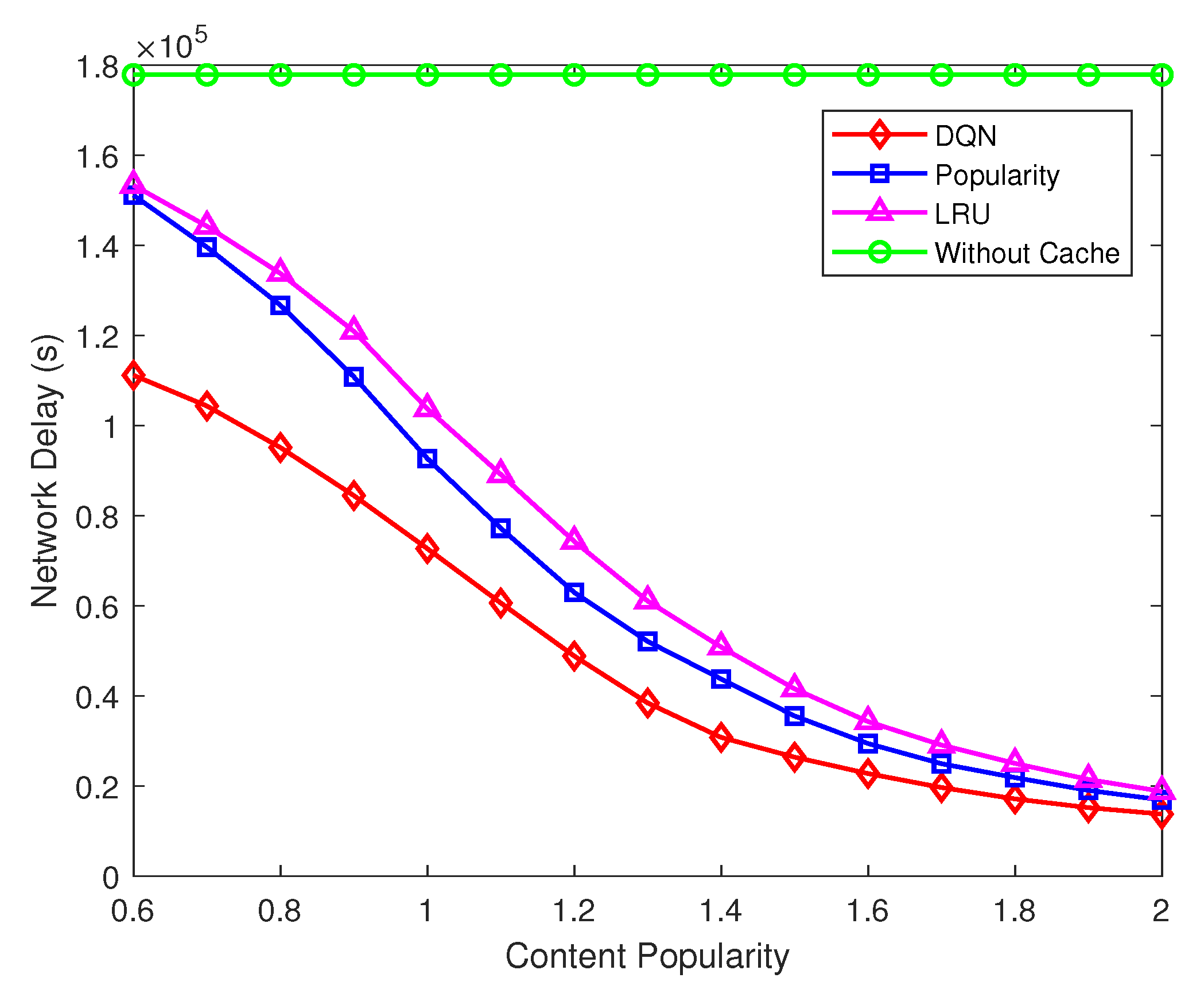

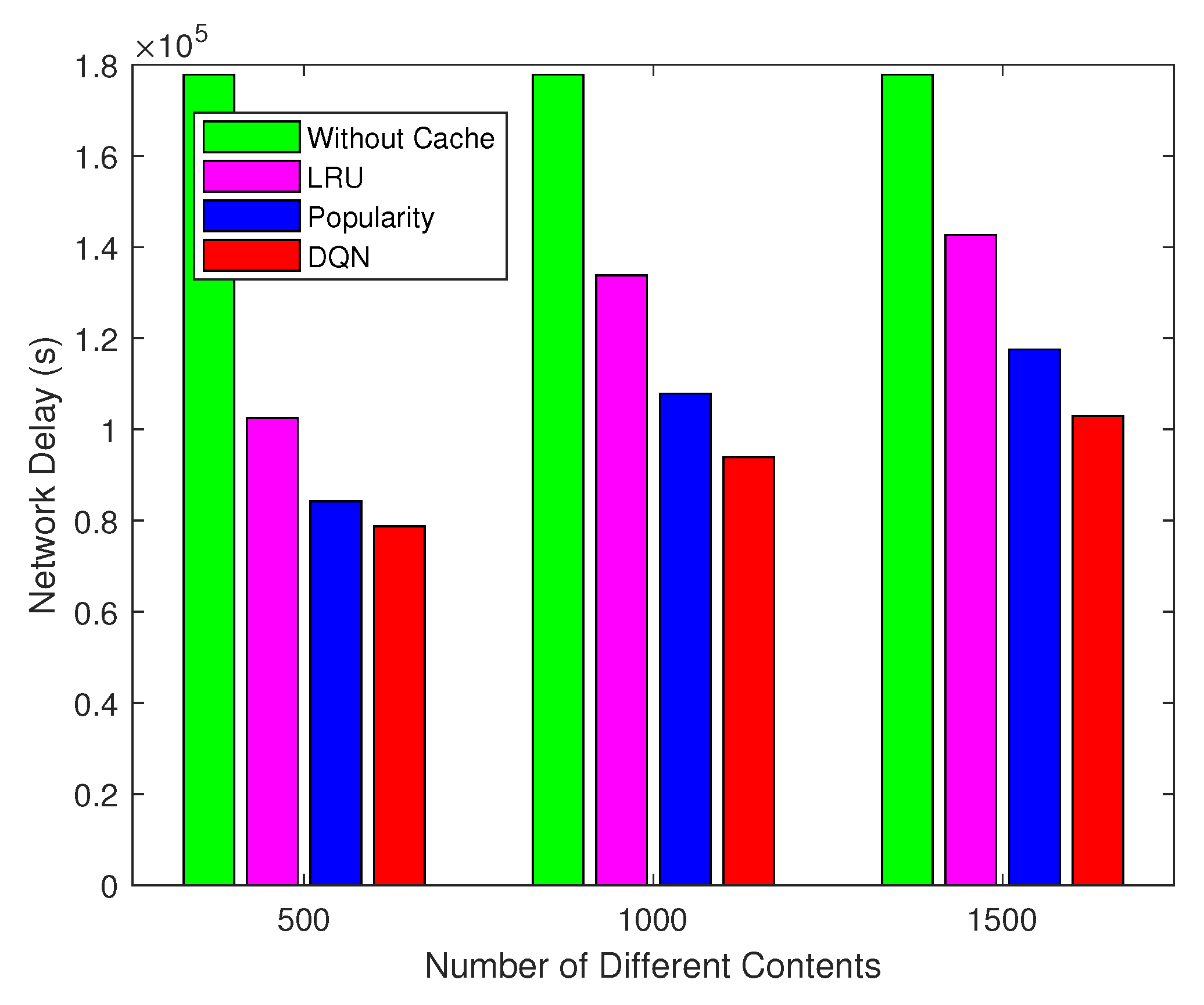

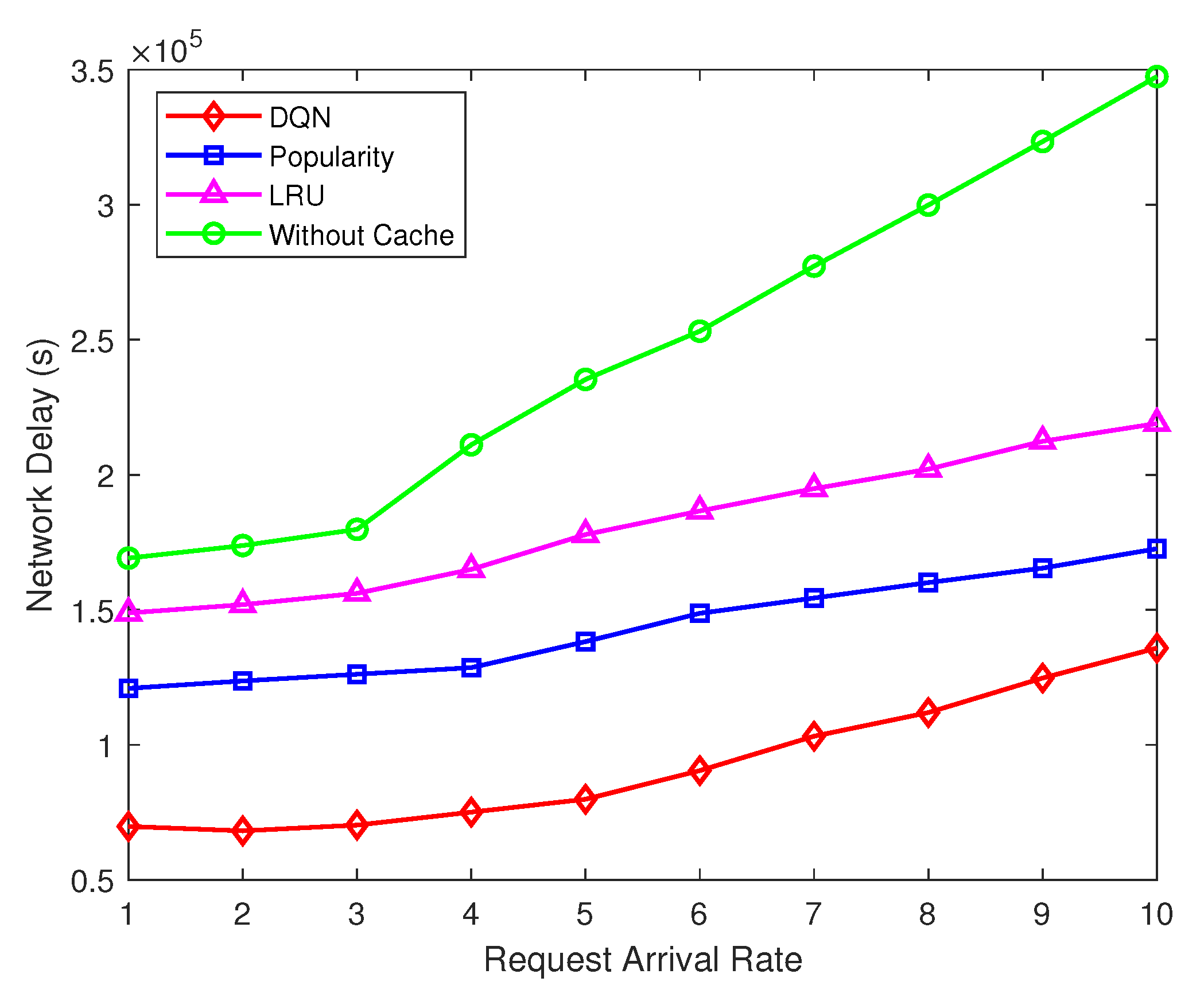

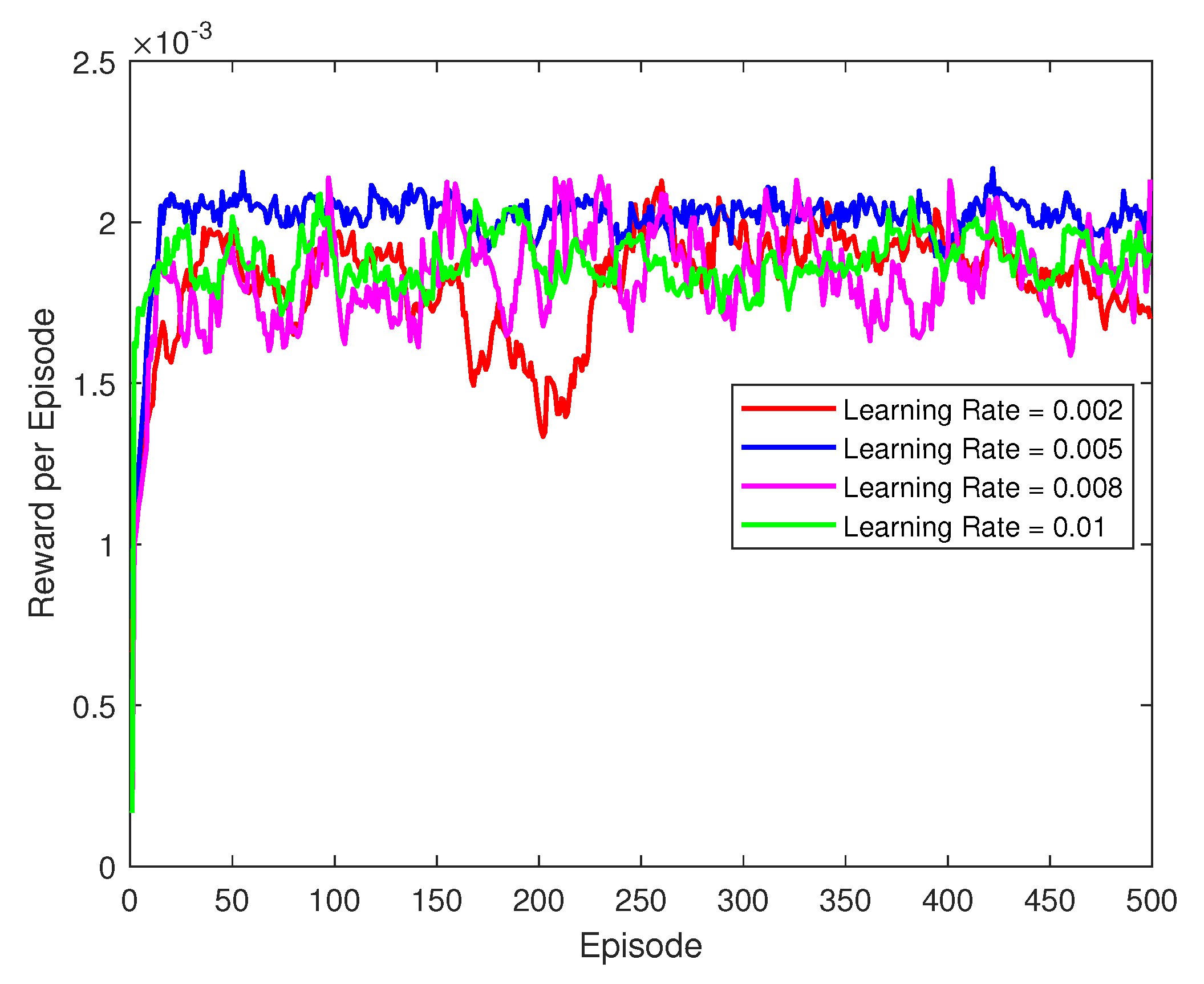

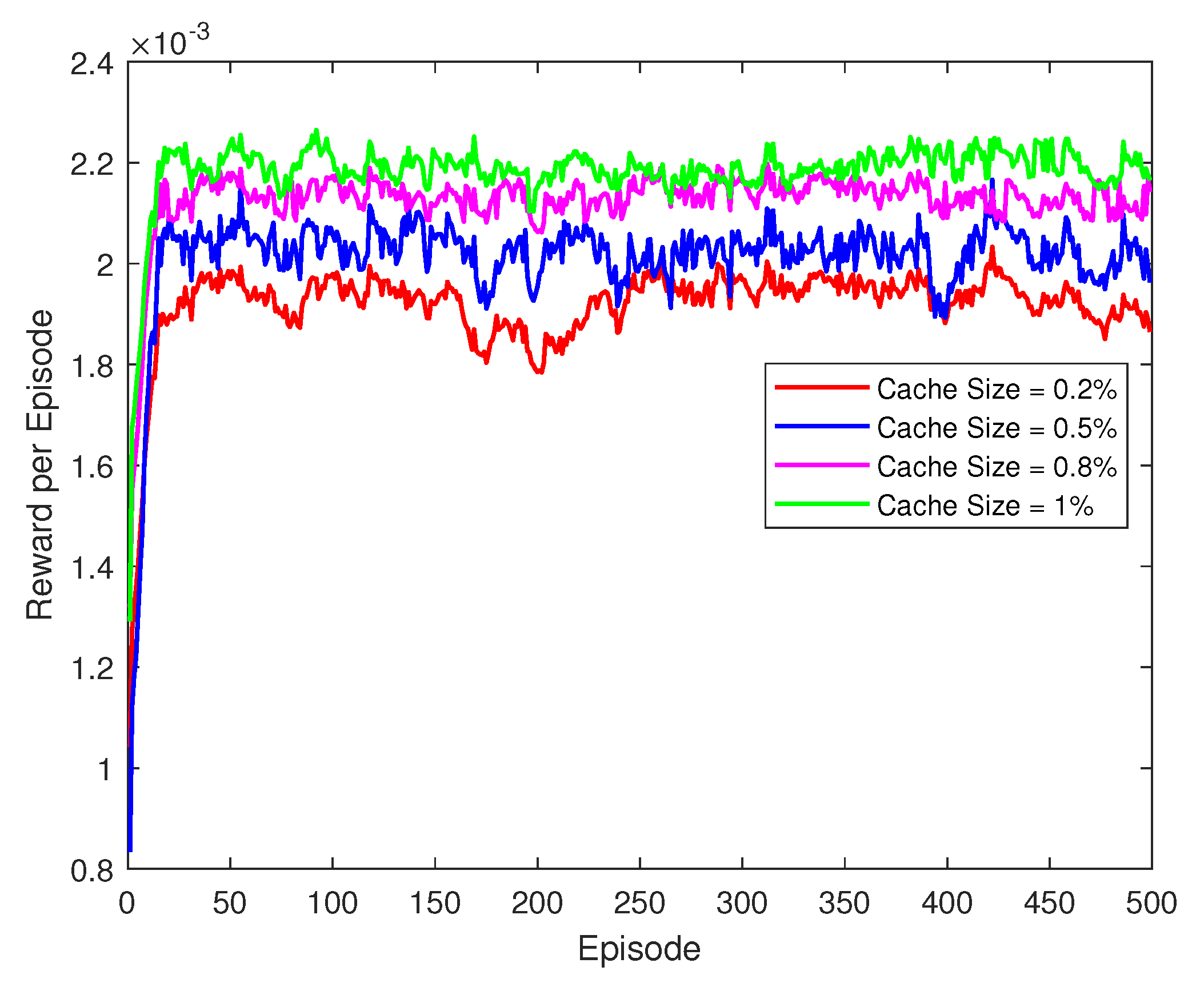

- We evaluate the performance of our solution under different system conditions. Extensive simulations based on real data show that the proposed strategy achieves lower network latency than existing solutions in the cloud-edge collaboration system. In addition, the proposed DQN model adapts to changes in network state and user requirements and converges quickly.

2. Related Work

2.1. Delay-Sensitive Resource Allocation in Multi-Access Edge Computing

2.2. Delay-Sensitive Resource Allocation in IoT-Edge-Cloud Computing Environments

3. System Model

3.1. Network Model

3.2. File Popularity Model
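
Content popularity in caching studies is commonly modeled with a Zipf-like distribution, in which the file of popularity rank k is requested with probability proportional to 1/k^α. Assuming that model here, the following minimal sketch builds such a distribution and samples a request trace from it. The function names, the catalog size, and the exponent `alpha` are hypothetical and chosen only for illustration.

```python
import random


def zipf_popularity(num_files: int, alpha: float) -> list[float]:
    """Return request probabilities p_k proportional to 1 / k**alpha, k = 1..num_files."""
    if num_files <= 0:
        raise ValueError("num_files must be positive")
    weights = [1.0 / (rank ** alpha) for rank in range(1, num_files + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def sample_requests(popularity: list[float], num_requests: int, seed: int = 0) -> list[int]:
    """Draw file indices (0 = most popular) according to the given popularity."""
    rng = random.Random(seed)
    files = list(range(len(popularity)))
    return rng.choices(files, weights=popularity, k=num_requests)


# Hypothetical catalog of 5 files with exponent alpha = 1.0.
popularity = zipf_popularity(num_files=5, alpha=1.0)
requests = sample_requests(popularity, num_requests=10)
```

A larger `alpha` concentrates requests on fewer files, which generally makes a small edge cache more effective; `alpha = 0` reduces to uniform popularity.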

3.3. Delay Model

3.3.1. Transmission Delay

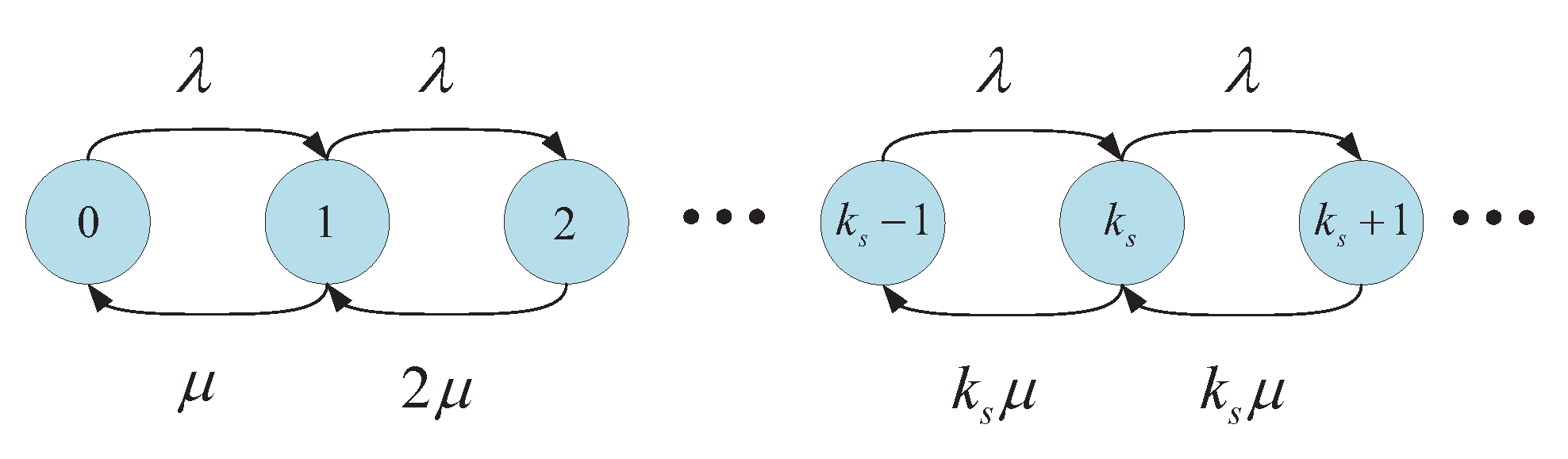

3.3.2. Sojourn Delay

3.4. Problem Formulation

4. Intelligent Caching and Routing Policy

Algorithm 1. Workflow of the DQN-based cooperative caching and routing algorithm
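
The learning loop that a DQN-style caching policy relies on (epsilon-greedy action selection, experience replay, and a temporal-difference update with the negative delay as reward) can be sketched as follows. This is not the authors' Algorithm 1: to keep the example runnable with the standard library only, a lookup table stands in for the Q-network, routing is not modeled, and the toy environment (two files, one edge cache slot, the delay constants, the 0.9 request probability) is entirely hypothetical.

```python
import random
from collections import defaultdict, deque


class TabularQAgent:
    """Q-learning agent with experience replay; a table stands in for the Q-network."""

    def __init__(self, num_actions, gamma=0.9, lr=0.05, epsilon=0.1,
                 buffer_size=1000, seed=0):
        self.num_actions = num_actions
        self.gamma = gamma          # discount factor
        self.lr = lr                # learning rate
        self.epsilon = epsilon      # exploration probability
        self.rng = random.Random(seed)
        self.q = defaultdict(lambda: [0.0] * num_actions)
        self.replay = deque(maxlen=buffer_size)

    def act(self, state):
        """Epsilon-greedy choice of which file to cache."""
        if self.rng.random() < self.epsilon:
            return self.rng.randrange(self.num_actions)
        values = self.q[state]
        return max(range(self.num_actions), key=values.__getitem__)

    def remember(self, state, action, delay, next_state):
        """Store a transition; the reward is the negative observed delay."""
        self.replay.append((state, action, -delay, next_state))

    def learn(self, batch_size=8):
        """Sample a mini-batch and apply the TD update toward r + gamma * max Q(s', a')."""
        k = min(batch_size, len(self.replay))
        for state, action, reward, next_state in self.rng.sample(list(self.replay), k):
            target = reward + self.gamma * max(self.q[next_state])
            self.q[state][action] += self.lr * (target - self.q[state][action])


# Hypothetical toy environment: the state is the last requested file, the
# action is the file held in the single edge cache slot, and a request that
# misses the cache is served from the cloud with a larger delay.
EDGE_DELAY, CLOUD_DELAY = 1.0, 10.0


def next_request(rng):
    return 0 if rng.random() < 0.9 else 1  # file 0 is the popular one


env_rng = random.Random(1)
agent = TabularQAgent(num_actions=2)
state = next_request(env_rng)
for _ in range(3000):
    action = agent.act(state)
    request = next_request(env_rng)
    delay = EDGE_DELAY if request == action else CLOUD_DELAY
    agent.remember(state, action, delay, request)
    agent.learn()
    state = request

# Greedy caching decision per state after training.
greedy = {s: max(range(2), key=agent.q[s].__getitem__) for s in (0, 1)}
print(greedy)
```

In a full DQN the table would be replaced by a neural network trained on the same TD target, typically with a separate target network to stabilize learning.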

5. Simulation Results and Discussion

5.1. Simulation Settings

5.2. Simulation Results

6. Summary and Future Work

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

| Symbol | Description |
|---|---|
| | Number and set of RSUs |
| | Number and set of directly connected edge devices of node i in the same layer |
| | Upper access vertex of node i |
| | Number and set of nodes horizontally connecting to |
| | Number of mobile vehicles accessing RSU i |
| | Number and set of different files |
| | Available wireless bandwidth of the link from the mth vehicle to the ith RSU and its traffic for content k |
| | Available wired bandwidth of the link and its traffic for content k |
| | Caching capacity of node i |
| | Average arrival rate at node i and the cloud |
| | Average service rate of each server in node i and the cloud |
| | Number of servers in node i and the cloud |
| | Average utilization rate of node i and the cloud |
| | Probability that n requests enter the queuing system of node i and the cloud |
| | Users' waiting probability in node i and the cloud |
| | Number of requests waiting in the queue of node i and the cloud |
| | Average response time of node i and the cloud |
| | Maximal response latency that node i and the cloud tolerate |
| | Maximal bandwidths of the links |
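
The queueing quantities listed above (arrival rate, per-server service rate, number of servers, utilization, waiting probability, queue length, and average response time) are the standard quantities of a multi-server M/M/c queue. Assuming that model, which is an assumption of this sketch rather than something stated in the table, the average response time of a node can be computed with the Erlang C formula as shown below. The function name, the dictionary keys, and the numeric inputs are hypothetical.

```python
import math


def mmc_metrics(arrival_rate: float, service_rate: float, servers: int) -> dict[str, float]:
    """Steady-state metrics of an M/M/c queue (assumed model, see lead-in)."""
    if arrival_rate <= 0 or service_rate <= 0 or servers < 1:
        raise ValueError("rates must be positive and servers >= 1")
    offered_load = arrival_rate / service_rate       # lambda / mu
    utilization = offered_load / servers             # rho = lambda / (c * mu)
    if utilization >= 1:
        raise ValueError("queue is unstable: utilization must be below 1")

    # Probability that the system is empty.
    head = sum(offered_load ** n / math.factorial(n) for n in range(servers))
    tail = offered_load ** servers / (math.factorial(servers) * (1 - utilization))
    p_empty = 1.0 / (head + tail)

    # Erlang C: probability that an arriving request has to wait.
    wait_prob = tail * p_empty
    # Mean number of requests waiting in the queue.
    queue_length = wait_prob * utilization / (1 - utilization)
    # Mean response (sojourn) time = waiting time + service time.
    response_time = queue_length / arrival_rate + 1.0 / service_rate

    return {
        "utilization": utilization,
        "wait_prob": wait_prob,
        "queue_length": queue_length,
        "response_time": response_time,
    }


# Hypothetical node: 2 servers, 2 requests/s arriving, 2 requests/s per server.
metrics = mmc_metrics(arrival_rate=2.0, service_rate=2.0, servers=2)
max_latency = 1.0  # hypothetical tolerated response latency in seconds
meets_deadline = metrics["response_time"] <= max_latency
```

Comparing `response_time` against the maximal tolerated latency, as in the last line, is one way such a bound could enter the optimization as a constraint.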

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Cui, T.; Yang, R.; Fang, C.; Yu, S. Deep Reinforcement Learning-Based Resource Allocation for Content Distribution in IoT-Edge-Cloud Computing Environments. Symmetry 2023, 15, 217. https://doi.org/10.3390/sym15010217
