1. Introduction

In most image and video processing applications, an image or video frame is partitioned into blocks (usually overlapping ones) to make the processing local. Each block is then processed separately. We focus on transformations whose goal is to extract features. The features are stored in a memory location corresponding to the image block and are then used as local image descriptors for recognition.

Traditional approaches in computer vision partition the image into smaller two-dimensional blocks and process them sequentially in a double loop over the blocks. However, the image matrix is usually stored in memory in either row-wise or column-wise order. As a result, traversing the entire matrix sequentially is predictable from the perspective of memory because the accesses conform to spatial locality. On the other hand, when the image is processed block by block, the memory access patterns are irregular, and thus cache misses and replacements increase. The speed gap between the CPU and memory is considered a major limitation of computer performance [1], making increased cache misses and replacements a performance issue [2].

To improve the performance of feature extraction, some processes have to be excluded, namely partitioning the image into blocks, sequentially processing image blocks, and accumulating the result. Motivated by this idea, a fast method for extracting local features from overlapping blocks is introduced in this paper.

The presented method may find applications wherever the block-wise image representation has been used. We refer to a few sample papers where this approach was used in various application areas: in plant biology [3], in fingerprint recognition [4], in face recognition on infrared images [5], in facial expression classification [6], in optical flow estimation [7], in denoising of medical images [8], in tamper detection [9], in image compression [10], and in scalable video coding [11].

The paper is organized as follows. The main idea of the method is introduced in Section 2. In Section 3, we present implementation details, complexity analysis, and experimental comparison to traditional approaches.

2. The Proposed Algorithm

The main idea is to avoid sequential processing of the blocks, which is usually implemented as a slow “for” loop. We propose special auxiliary matrices that can be pre-computed and that turn the sequential processing into a single-step one. Since the auxiliary matrices do not depend on the image content, they can be stored and reused for different images, which makes the method even more efficient.

Consider image I with the size of partitioned into overlapped blocks of size each (as shown in Figure 1), such that the number of blocks is , where and . Now, let us consider a separable integral transformation with kernel function . This can stand for Fourier transform, Laplace transform, z-transform, cosine transform, and many others, but we are particularly interested in moment transform, where is a polynomial of degree n (we refer to [12] for more information about polynomials and moments in image analysis). The results of this transformation, which is for a single block , are given as

are called moments of the block. We can arrange them into a moment matrix , where n and m are the orders of the moments.

Clearly, the computation of moments up to the given order can be expressed as a matrix multiplication

where and represent the matrix of the discretized polynomials and , respectively. It is noteworthy that in most programming environments, such as MATLAB and Python, matrix multiplication is much faster than nested loops thanks to optimized libraries such as the Intel Math Kernel Library (MKL) [13,14].
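As a minimal sketch of this equivalence, the following Python/NumPy snippet computes the moment matrix of a single block twice: once with explicit nested loops and once as a matrix product. The monomial basis, the function names, and the convention `P_y @ block @ P_x.T` are assumptions made for illustration only; the text does not prescribe a particular polynomial basis or matrix layout.

```python
import numpy as np

def monomial_basis(size, max_order):
    """Hypothetical stand-in basis: row k holds x**k sampled on [0, 1]."""
    x = np.linspace(0.0, 1.0, size)
    return np.vstack([x**k for k in range(max_order + 1)])  # (max_order + 1, size)

def block_moments_loops(block, P_y, P_x):
    """Moments by direct summation over orders (n, m) and pixels (y, x)."""
    M = np.zeros((P_y.shape[0], P_x.shape[0]))
    for n in range(P_y.shape[0]):
        for m in range(P_x.shape[0]):
            for y in range(block.shape[0]):
                for x in range(block.shape[1]):
                    M[n, m] += P_y[n, y] * block[y, x] * P_x[m, x]
    return M

def block_moments_matmul(block, P_y, P_x):
    """The same moments written as a matrix product."""
    return P_y @ block @ P_x.T

rng = np.random.default_rng(0)
block = rng.random((8, 8))      # one 8 x 8 block
P = monomial_basis(8, 2)        # orders 0, 1, 2 in both directions

M_loops = block_moments_loops(block, P, P)
M_fast = block_moments_matmul(block, P, P)
assert np.allclose(M_loops, M_fast)
```

Both functions return the same 3 × 3 moment matrix; only the second delegates the summation to the linear algebra backend.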

To compute the moments of all blocks of the image using (2), we have

that can be equivalently rewritten into the form

In a shorter notation, Equation (4) can be expressed as

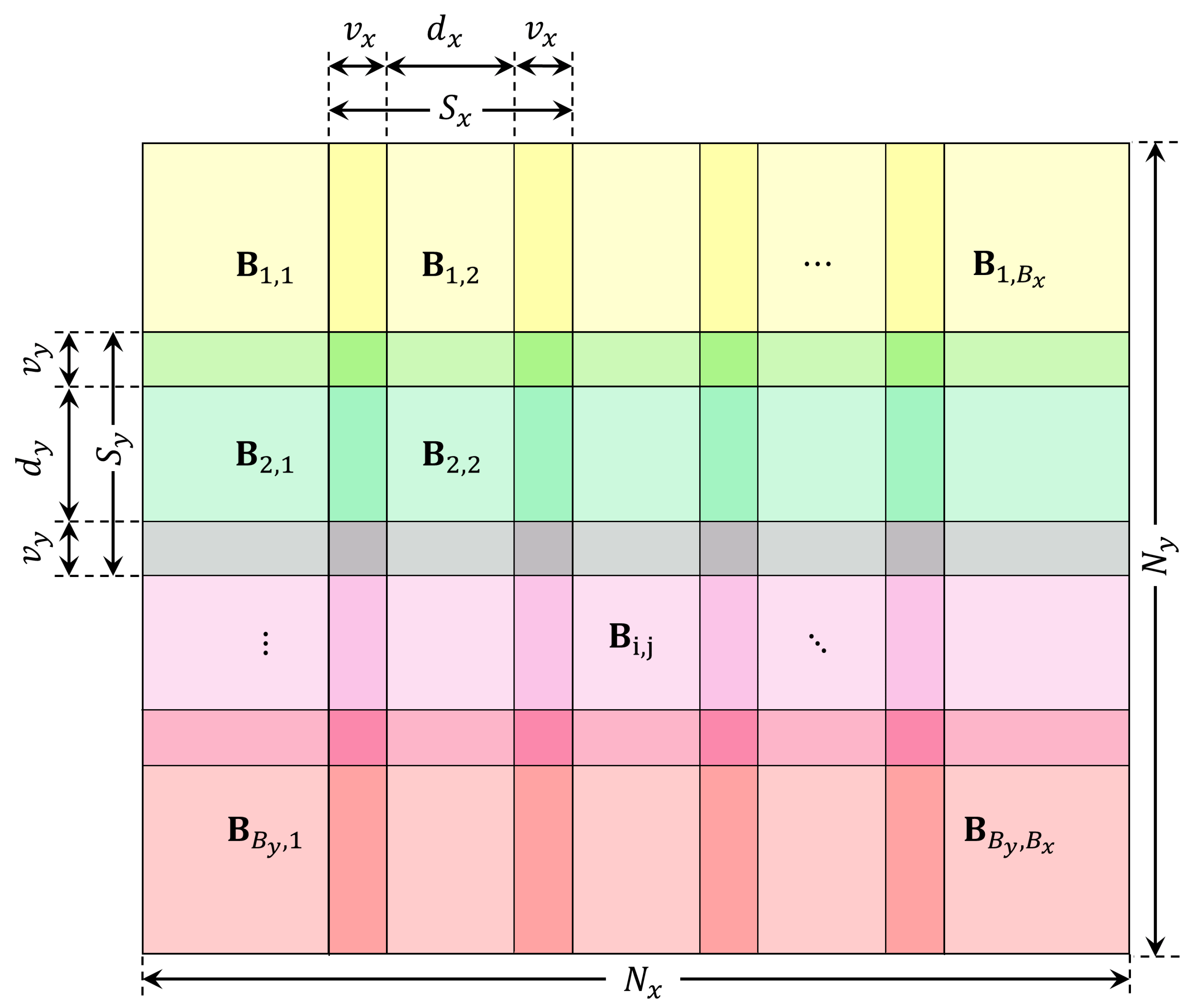

Matrix denotes the so-called extended image, which is formed by the blocks of the original image I arranged in such a way that they do not overlap one another, see Figure 2.

An explicit construction of the extended image would be time consuming because we would need to extract each block and shift it into a new location. So, we propose to perform this process implicitly by multiplying I with “shift matrices” and

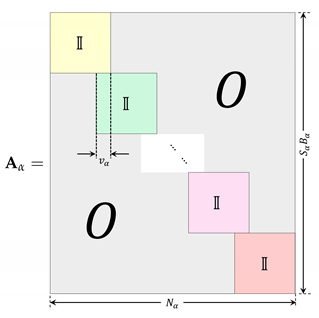

Matrix (where stands for x or y) is a rectangular matrix of the size . It is composed of unit submatrices of the size , which are arranged diagonally, and in horizontal direction they are mutually shifted by .
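The structure of a shift matrix can be illustrated with a short NumPy sketch. The function name `shift_matrix`, the step size `block_size - overlap`, the block-count formula, and the left/right multiplication convention `S @ image @ S.T` are assumptions made for this example; they follow the verbal description above (identity submatrices placed diagonally and shifted horizontally) but are not taken from the original formulas.

```python
import numpy as np

def shift_matrix(signal_size, block_size, overlap):
    """Stack one identity submatrix per block, each shifted by the block step."""
    step = block_size - overlap  # assumed horizontal shift between submatrices
    if step <= 0 or signal_size < block_size or (signal_size - block_size) % step:
        raise ValueError("block size, overlap and signal size are incompatible")
    num_blocks = (signal_size - block_size) // step + 1
    S = np.zeros((num_blocks * block_size, signal_size))
    for b in range(num_blocks):
        rows = slice(b * block_size, (b + 1) * block_size)
        cols = slice(b * step, b * step + block_size)
        S[rows, cols] = np.eye(block_size)
    return S

image = np.arange(36, dtype=float).reshape(6, 6)
S = shift_matrix(signal_size=6, block_size=4, overlap=2)  # two blocks per direction

# Multiplying from both sides lays the overlapping blocks side by side.
extended = S @ image @ S.T  # 8 x 8 extended image

# The lower-left 4 x 4 tile of the extended image is the block starting at row 2, column 0.
assert np.array_equal(extended[4:8, 0:4], image[2:6, 0:4])
```

Because the matrix depends only on the signal size, block size, and overlap, it can be built once and reused for every image of the same size.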

Now, the computation of the moments can be performed directly without the necessity of constructing the extended image. Substituting Equation (6) into Equation (5), we obtain

which can be further simplified to the form

where and .
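The substitution can be written out in one possible notation. The symbols below are assumptions introduced for illustration and need not match those of Equations (5) to (9): $S_y$ and $S_x$ are the shift matrices, $P_y$ and $P_x$ are the discretized polynomial matrices of a single block, $v_y$ and $v_x$ are the numbers of blocks in each direction, $E_v$ is the $v \times v$ identity matrix, and $\otimes$ is the Kronecker product.

```latex
\begin{align}
\tilde{I} &= S_y \, I \, S_x^{T}, \\
M &= (E_{v_y} \otimes P_y) \, \tilde{I} \, (E_{v_x} \otimes P_x)^{T} \\
  &= \underbrace{(E_{v_y} \otimes P_y) \, S_y}_{Q_y} \; I \;
     \underbrace{S_x^{T} \, (E_{v_x} \otimes P_x)^{T}}_{Q_x^{T}}
   = Q_y \, I \, Q_x^{T}.
\end{align}
```

In this form the extended image $\tilde{I}$ never has to be materialized: the block-diagonal polynomial matrices absorb the shift matrices, and only the products $Q_y$ and $Q_x$ are stored.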

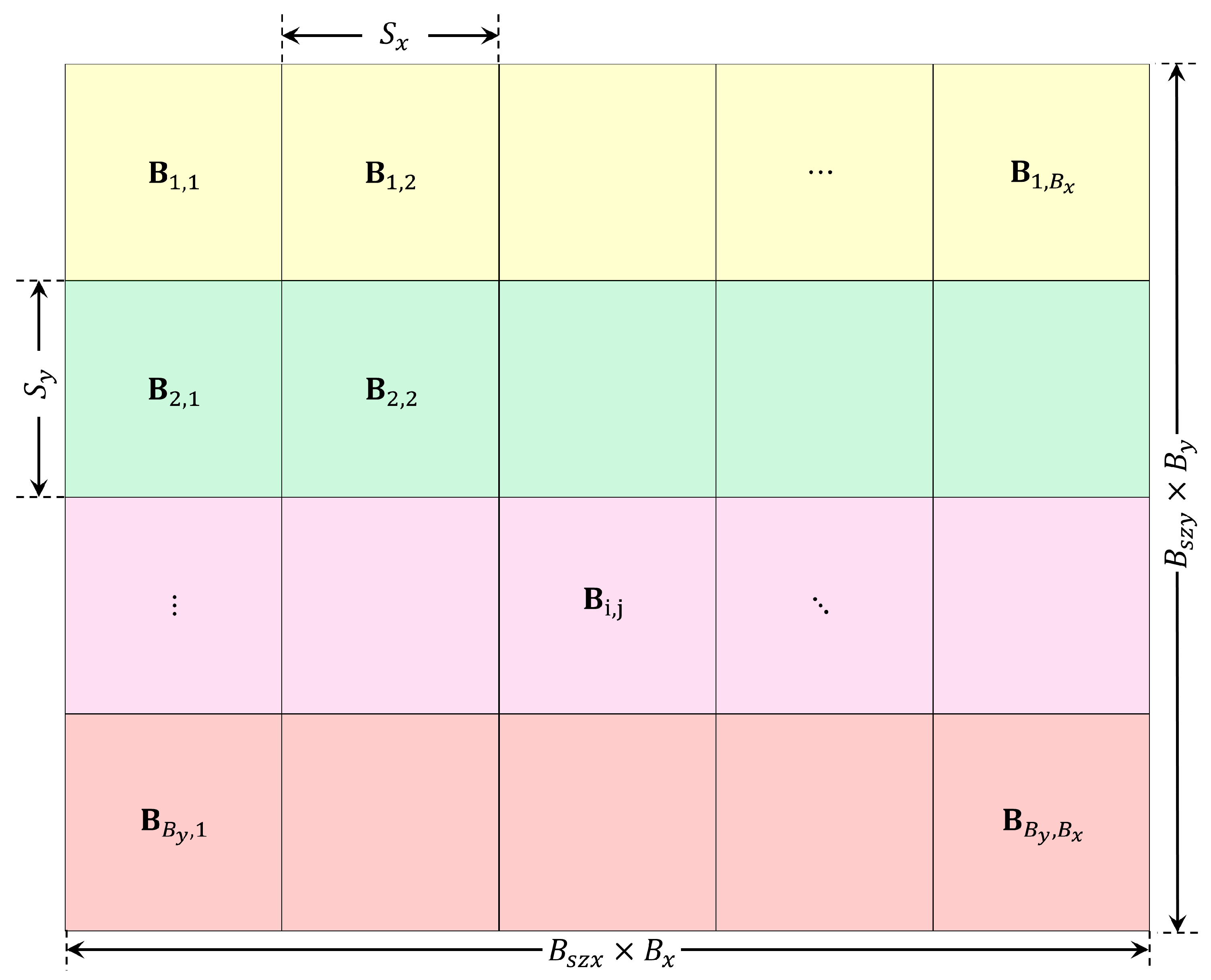

Equation (9) constitutes the main result of the paper. The moments of all blocks of I, arranged into a matrix (see Figure 3), can be calculated by a single matrix multiplication, without any loops over the blocks and without construction of the extended image. The matrices and depend only on the polynomial basis functions and on the block size and overlap but do not depend on the image I at all. So, they can be pre-computed once and used repeatedly. Moreover, in most block-wise representations the blocks are squares, their overlap is the same, and the kernel functions of the transform are the same in both directions. Under these circumstances, the computation simplifies even more as we have and .
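The single-step computation can be sketched in NumPy as follows. This is an illustration under stated assumptions rather than the reference implementation: the monomial basis, the helper names (`monomial_basis`, `shift_matrix`, `auxiliary_matrix`), the step `block_size - overlap`, and the Kronecker-product construction of the auxiliary matrix are all hypothetical choices consistent with the description above. The example uses square blocks and equal overlaps, so one auxiliary matrix serves both directions.

```python
import numpy as np

def monomial_basis(size, max_order):
    """Hypothetical stand-in basis: row k holds x**k sampled on [0, 1]."""
    x = np.linspace(0.0, 1.0, size)
    return np.vstack([x**k for k in range(max_order + 1)])

def shift_matrix(signal_size, block_size, overlap):
    """Identity submatrices on a shifted diagonal, one per block."""
    step = block_size - overlap
    if step <= 0 or signal_size < block_size or (signal_size - block_size) % step:
        raise ValueError("block size, overlap and signal size are incompatible")
    num_blocks = (signal_size - block_size) // step + 1
    S = np.zeros((num_blocks * block_size, signal_size))
    for b in range(num_blocks):
        S[b * block_size:(b + 1) * block_size,
          b * step:b * step + block_size] = np.eye(block_size)
    return S

def auxiliary_matrix(signal_size, block_size, overlap, max_order):
    """Block-diagonal polynomial matrix times shift matrix (image independent)."""
    S = shift_matrix(signal_size, block_size, overlap)
    P = monomial_basis(block_size, max_order)
    num_blocks = S.shape[0] // block_size
    return np.kron(np.eye(num_blocks), P) @ S

size, block_size, overlap, max_order = 16, 8, 4, 2
Q = auxiliary_matrix(size, block_size, overlap, max_order)  # computed once

rng = np.random.default_rng(0)
image = rng.random((size, size))
M_all = Q @ image @ Q.T  # moments of all blocks, no loop over blocks

# Cross-check one block against the direct per-block formula.
P = monomial_basis(block_size, max_order)
step, k = block_size - overlap, max_order + 1
i, j = 1, 2
block = image[i * step:i * step + block_size, j * step:j * step + block_size]
assert np.allclose(M_all[i * k:(i + 1) * k, j * k:(j + 1) * k], P @ block @ P.T)
```

In this layout the moments of block (i, j) occupy the tile of `M_all` starting at row `i * k` and column `j * k`, where `k` is the number of moment orders per direction.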

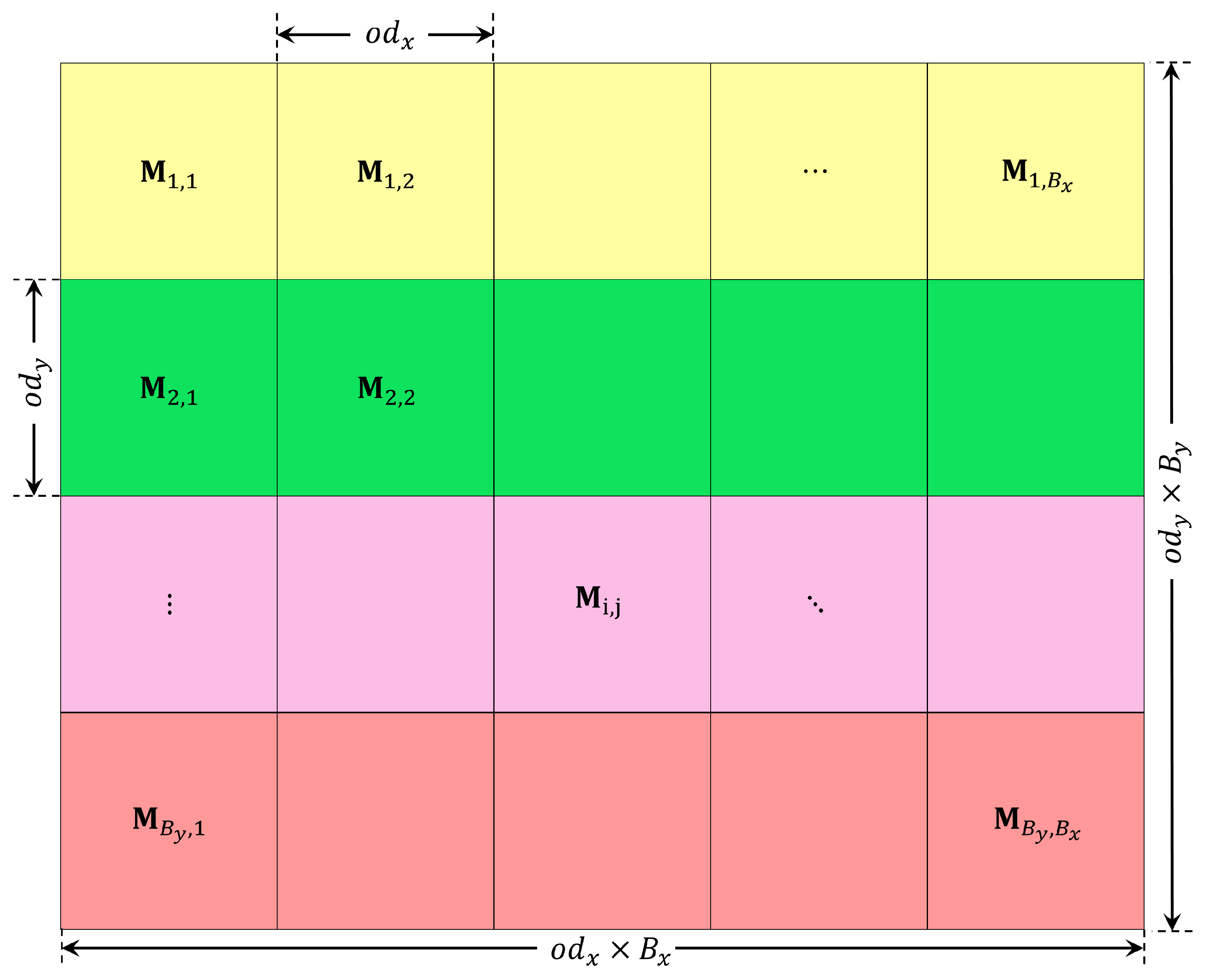

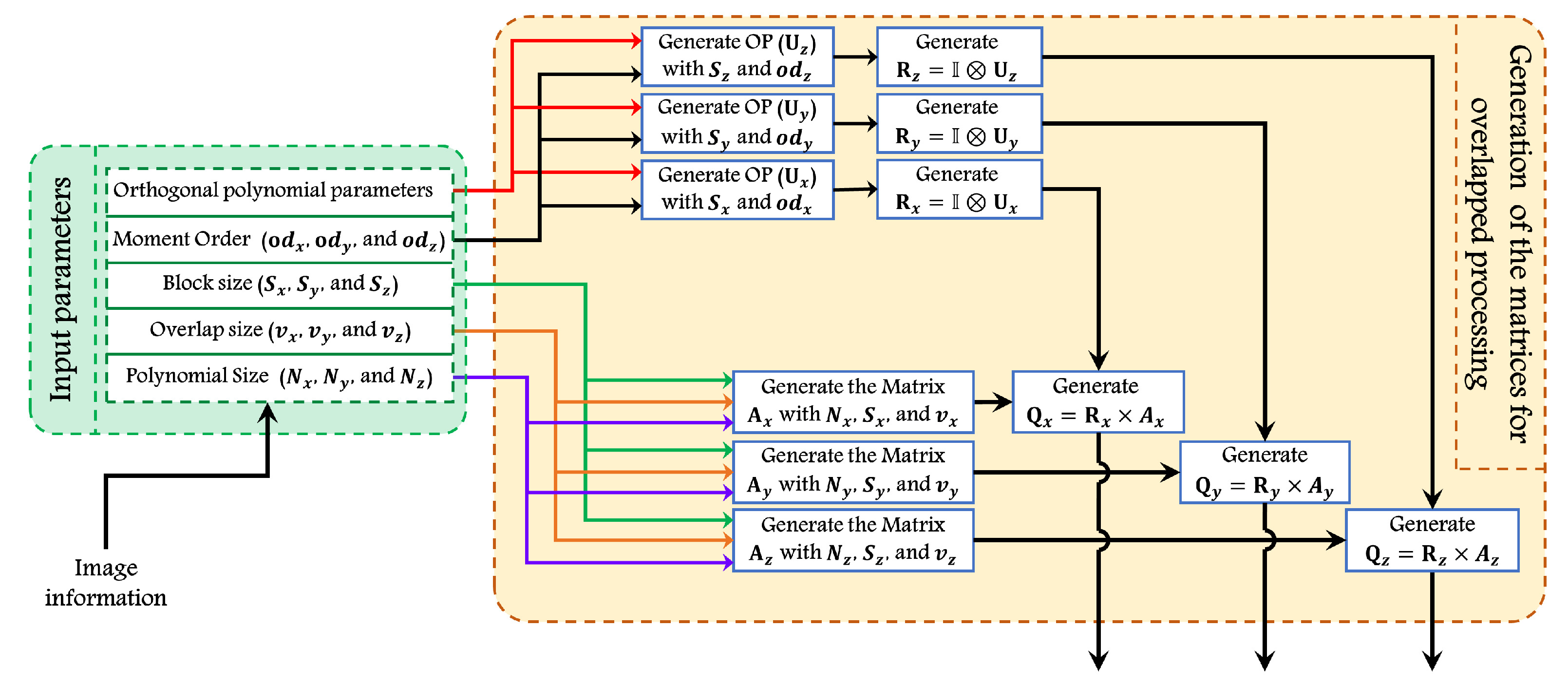

Note that the proposed method is not restricted to 2D images. It can be generalized for 3D signals by introducing a third matrix . Then, the algorithm performs analogously to the 2D case, as can be seen from the flowchart in Figure 4 (for more elucidation, see Algorithm 1).

| Algorithm 1 Generate auxiliary matrices for moment computation. |

| | Input: |

| | represents the size of the signal. |

| | represents the overlap size. |

| | represents the block size. |

| | Output: |

| 1: | ▹ Compute number of blocks |

| 2: | ▹ Total length of vector |

| 3: | Initialize ▹ Generate the matrix |

| 4: | for do |

| 5: | for do |

| 6: | if then |

| 7: | |

| 8: | end if |

| 9: | end for |

| 10: | end for |

| 11: | Generate polynomial matrices |

| 12: | |

| 13: | |
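Since the auxiliary matrix is generated per axis, the 3D generalization mentioned in this section can be sketched by applying one such matrix along each axis of a volume. The code below is a hedged illustration: the monomial basis, the function names, the direct tile-by-tile construction of the auxiliary matrix, and the use of `np.einsum` to apply three matrices at once are assumptions of this example, not details given in Algorithm 1.

```python
import numpy as np

def monomial_basis(size, max_order):
    """Hypothetical stand-in basis: row k holds x**k sampled on [0, 1]."""
    x = np.linspace(0.0, 1.0, size)
    return np.vstack([x**k for k in range(max_order + 1)])

def auxiliary_matrix(signal_size, block_size, overlap, max_order):
    """Place the polynomial matrix of each block at its shifted column offset."""
    step = block_size - overlap
    if step <= 0 or signal_size < block_size or (signal_size - block_size) % step:
        raise ValueError("block size, overlap and signal size are incompatible")
    num_blocks = (signal_size - block_size) // step + 1
    P = monomial_basis(block_size, max_order)
    k = max_order + 1
    Q = np.zeros((num_blocks * k, signal_size))
    for b in range(num_blocks):
        Q[b * k:(b + 1) * k, b * step:b * step + block_size] = P
    return Q

size, block_size, overlap, max_order = 8, 4, 2, 1
Q = auxiliary_matrix(size, block_size, overlap, max_order)  # reused for all three axes

rng = np.random.default_rng(0)
volume = rng.random((size, size, size))

# Apply the auxiliary matrix along each of the three axes in one contraction.
M_all = np.einsum("ai,bj,ck,ijk->abc", Q, Q, Q, volume, optimize=True)

# Cross-check the moments of one 3D block against a direct computation.
P = monomial_basis(block_size, max_order)
step, k = block_size - overlap, max_order + 1
sub = volume[2 * step:2 * step + block_size, 0:block_size, step:step + block_size]
expected = np.einsum("ai,bj,ck,ijk->abc", P, P, P, sub)
assert np.allclose(M_all[2 * k:3 * k, 0:k, k:2 * k], expected)
```

As in the 2D case, the auxiliary matrix depends only on the signal size, block size, overlap, and basis, so it is built once and shared by all volumes of the same size.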

3. Performance Analysis

In this section, we present implementation details and an experimental analysis of the proposed algorithm. First, the computation cost analysis is presented to show the effectiveness of the proposed algorithm. Second, several numerical experiments are performed on various public datasets and the performance is compared with traditional methods. Finally, we present a similar experiment with 3D objects.

3.1. Computation Cost Analysis

In this section, we compare the computational complexity of our algorithm to that of traditional methods. The implementation of the proposed algorithm consists of the following four steps (which are described in Algorithms 1 and 2):

Input user-defined parameters: image size, block size, overlap size, polynomial basis, and maximum moment order.

Matrices , , and are generated.

Matrices are calculated.

The moment matrix is computed using (9).

For the traditional algorithms used in [15,16], the procedure is described in Algorithm 3.

Our hypothesis is that Step 3 of the traditional algorithms, which contains a “for” loop that must be run times, is a bottleneck that makes the calculation slow. Below, we verify this hypothesis experimentally for various setups of the input parameters and various images (see Algorithm 2).

| Algorithm 2 Moment computation using the proposed overlap block processing. |

| | Input: Image with parameters , , , , , and |

| | and represent the size of the image. |

| | and represent the block size in the x and y directions. |

| | and represent the overlap size in the x and y directions. |

| | Output: |

| 1: | Generate polynomial matrices and using Algorithm 1 |

| 2: | for each image in the dataset do |

| 3: | ▹ Computing moments |

| 4: | end for |

3.2. Numerical Experiments

In the first experiment, we used the well-known “boat” image, see Figure 5. The experiment was repeated 10 times with different values of image sizes ( , , and ), different block sizes (8, 16, and 32), and different overlaps. Table 1 reports the computational time for image size of . In addition, Table 1 includes the speed-up ratio between the proposed algorithm and existing works in [15,16] (see Algorithm 3). The results show that the time required to compute the moments using the proposed algorithm is less than that of the existing works [15,16] for all values of tested moment orders (2, 4, and 8). In addition, the reported improvement (speed-up ratio) shows that the proposed algorithm outperforms the existing works.

| Algorithm 3 Moment computation using the traditional overlap block processing. |

| | Input: Image with parameters , , , , , and |

| | and represent the size of the image |

| | and represent the block size in the x and y directions. |

| | and represent the overlap size in the x and y directions. |

| | Output: |

| 1: | Generate polynomial matrices and |

| 2: | ▹ Compute number of blocks in the x direction |

| 3: | ▹ Compute number of blocks in the y direction |

| 4: | for each image in the dataset do |

| 5: | for i = 1 to do |

| 6: | for j = 1 to do |

| 7: | Compute start and end indices (, , , ) for block |

| 8: | Extract block |

| 9: | ▹ Compute moment for each block |

| 10: | end for |

| 11: | end for |

| 12: | end for |
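For comparison, the traditional procedure of Algorithm 3 can be sketched for a single image as a double loop over blocks. The monomial basis, the function names, the step `block_size - overlap`, and the tiled output layout are assumptions of this example; the source algorithm does not fix these details.

```python
import numpy as np

def monomial_basis(size, max_order):
    """Hypothetical stand-in basis: row k holds x**k sampled on [0, 1]."""
    x = np.linspace(0.0, 1.0, size)
    return np.vstack([x**k for k in range(max_order + 1)])

def block_moments_traditional(image, block_size, overlap, max_order):
    """Extract each overlapping block in turn and compute its moments."""
    P = monomial_basis(block_size, max_order)
    step = block_size - overlap            # assumed stride between blocks
    k = max_order + 1
    v_y = (image.shape[0] - block_size) // step + 1  # blocks along rows
    v_x = (image.shape[1] - block_size) // step + 1  # blocks along columns
    M_all = np.zeros((v_y * k, v_x * k))
    for i in range(v_y):
        for j in range(v_x):
            y0, x0 = i * step, j * step    # start indices of block (i, j)
            block = image[y0:y0 + block_size, x0:x0 + block_size]
            M_all[i * k:(i + 1) * k, j * k:(j + 1) * k] = P @ block @ P.T
    return M_all

rng = np.random.default_rng(0)
image = rng.random((16, 16))
M_all = block_moments_traditional(image, block_size=8, overlap=4, max_order=2)
```

The inner statement runs once per block, so the number of small matrix products and block extractions grows with the number of blocks; this is the loop that the proposed method replaces with pre-computed auxiliary matrices.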

It is noteworthy that the speed-up factor decreases as the moment order increases. This is because our algorithm does not alter the moment computation itself; it only handles the blocks efficiently. For high-order moments, the computation itself takes more and more time, and the impact of block handling becomes less apparent. However, in most practical applications one usually works with low-order moments only.

We also repeated this series of experiments for images of the size , , and pixels. The results are summarized in Table 2, Table 3 and Table 4, respectively. We can observe that the results are consistent, but the overall improvement for the given block size decreases as the image size increases. This is probably because the auxiliary matrices in our algorithm are large and their multiplication is not as fast as in the case of smaller images.

In the second experiment, two different datasets have been employed: the ORL and FEI facial datasets. The ORL face database, obtained from AT&T [17], has been used by many researchers for evaluation purposes. It includes 40 distinct classes (persons). Each class contains 10 images which were taken at different positions and lighting conditions. The size of each image is pixels [18].

We calculated the block moments of all ORL images using the proposed method and the reference method. We ran the experiment ten times and calculated the average time. We used the blocks of the size with the overlap (0,0), (2,2), and (4,4). The results are reported in Table 5; the time in milliseconds is an average over 10 runs and all images. As in the previous experiment, we witness a significant speed up.

The FEI dataset [19] is a Brazilian facial dataset composed of 200 faces. In the experiment, we have included 10 images of each person with a size of . The images show various expressions and head poses. We used the block size of and five overlap sizes as shown in Table 6. The results again show a substantial speed up in all settings.

Finally, we tested the performance of the 3D version of our algorithm. We used 19 model images from the well-known McGill benchmark dataset [20]. We altered each sample by shift and rotation such that 1252 versions of each object were generated, which resulted in a total number of 23,788 objects. The Charlier polynomials were used in this experiment [21]. The speed analysis for various block sizes and overlaps is given in Table 7. The last column of the table shows the improvement factor.