1. Introduction

Most modern platforms generate data continuously. The deployment of the Internet of Things (IoT) [1], cloud-based systems [2], the use of social platforms [3], and industrial processes [4] all create massive sources of data. Some data can be stored for later analysis, whereas other data require prompt analysis. For stream data, online analysis and classification are crucial in many situations, such as when storage is unavailable or when a prompt reaction to the content is needed. One example is the analysis of intrusion detection system (IDS) traffic to detect possible attacks [5]. Another is the analysis of body network data to identify abnormal activity [6].

Learning from data streams poses several challenges. First, the data are numerous and multivariate [7], so storing them for clustering or classification is not feasible. Thus, data reduction phases must be implemented before learning. Second, learning algorithms for data streams depend on pre-defined parameters tied to the probabilistic distribution of the data, so their performance changes when the data change. Furthermore, real-life data evolve naturally, and handling them requires dynamic updates of the internal parameters of the algorithm. Updating these parameters is challenging because it requires a trial-and-error process that is not feasible in autonomous systems [8]. Thus, this study aims to incorporate artificial intelligence for this task.

Artificial intelligence (AI) is becoming increasingly useful for solving different types of data handling and processing problems. Examples of valuable AI algorithms include neural networks (NN), which have a powerful learning capability [9,10], and heuristic-based approaches, which are useful for encoding experience knowledge [11]. Using AI for data classification has proven effective, but a single AI approach suffers from limitations. Hence, integrating multiple AI blocks in one framework helps overcome the limitations of an individual block, for example by integrating genetic algorithms (GA) [12] with other AI models. Meta-heuristic search algorithms have become crucial for stream data applications, such as the Cuckoo search algorithm for electric load forecasting [13], integrated Cuckoo search and particle swarm optimization for natural terrain feature extraction [14], and a hybrid chaotic immune algorithm for seasonal load demand forecasting [15].

The literature contains a wide range of sequential learning algorithms for stream data, and many researchers have adopted AI algorithms for this purpose. An extreme learning machine was used to classify stream data in [16], where a self-adaptive stream data classification framework (SASDC-Framework) was proposed. In SASDC-Framework, subsets are created to group the data that arrive at the same time. In addition, a bisection strategy and an inverse distance weighted strategy are used to find the best subset size. The framework relies on incremental learning of the classifier to counter concept drift. However, incremental learning is not adequate when fast changes in the data distribution occur. Other researchers have also developed frameworks based on incremental learning. The authors of [17] built a learning framework for classifying streaming data that is composed of a generative network, a discriminant structure, and a bridge. The first component performs dynamic feature learning. The second regularizes the network construction and facilitates parameter learning of the generative network. The third integrates the network and the structure by enabling the incorporation of supervision information. However, as the authors of [17] state, their approach does not handle concept drift in streaming data well.

To deal with parameter changes, [18] used the inbuilt forgetting mechanism of NNs. The approach is based on active learning, which requires labels only for the interesting examples that are crucial for appropriate model updating. The work demands the capability to control the speed of the forgetting mechanism. Without such speed control, the forgetting mechanism cannot deal effectively with parameter changes.

Other researchers have exploited the power of ensemble learning for sequential classification. The authors of [19] developed an ensemble-based sequential classification approach, called the iterative boosting streaming ensemble, in which new batches of data are boosted by adding base learners according to the current accuracy. This approach is satisfactory for learning new behavior in the data. However, ensemble learning cannot handle concept drift well without an aggregation mechanism that is aware of parameter changes. Hence, adding base learners according to the current accuracy is not optimal for handling parameter changes.

Many researchers have dealt with concept drift by incorporating clustering algorithms into their sequential classification models in order to identify any emerging changes in the classes. In [20], fuzzy clustering was used to handle concept drift in dynamic incremental semi-supervised classification. Similarly, in [21], incremental mixed-data clustering was used in an incremental semi-supervised flow network-based IDS. Moreover, in [22], Cauchy density is used to assign new samples to clusters or to create new clusters. However, this approach depends on the assignment threshold, which is highly sensitive to the statistical distribution of the clusters. In addition, the clustering part should be exploited to discard outdated stored knowledge that hinders the tracking of dynamic changes, without giving it absolute control over the classification decision, because it lacks essential gained knowledge. This concept was missed in the previous approaches that have used clustering to deal with concept drift. In [23], a new incremental grid density-based learning framework was proposed. The authors focused on concept drift with limited labeling. Three techniques are used in the framework: grid density clustering, an evolving ensemble of classifiers, and a uniform grid density sampling mechanism. The framework relies on the concept of “classification upon clustering”; however, it does not handle concept drift in the clustering part, and it assumes that simply assisting classification with clustering can overcome concept drift.

Concept drift has been addressed not only in sequential classification approaches but also in stream data clustering models, where researchers have sought to handle dynamic changes. Bio-inspired approaches have also been used to handle such changes. The authors of [24] presented an online, bio-inspired approach to clustering dynamic data streams. The proposed ant colony stream clustering (ACSC) algorithm is a density-based clustering algorithm, whereby clusters are identified as high-density areas of the feature space separated by low-density areas. ACSC identifies clusters as groups of micro-clusters. The tumbling window model is used to read a stream, and rough clusters are incrementally formed during a single pass of a window. A stochastic method is employed to identify these rough clusters, and this process is shown to speed up the algorithm significantly, at only a minor cost to performance compared with a deterministic approach. The rough clusters are then refined using a method inspired by the observed sorting behavior of ants, which pick up and drop items according to their similarity with the surrounding items. Artificial ants sort clusters by probabilistically picking and dropping micro-clusters on the basis of local density and local similarity. Clusters are summarized using their constituent micro-clusters, and these summary statistics are stored offline. This approach lacks an assisting structure for knowledge representation and storage. This issue is addressed in the ant-inspired swarm optimization-based approach [25] for multi-density clustering. In that work, concept drift was addressed by maintaining the discovered clusters online and tracking their changes using ant swarm optimization. However, this approach might not be capable of tracking fast changes in clusters. Other researchers have employed optimization models to cluster categorical data streams [26]. In their model, a cluster validity function is proposed as the objective function to evaluate the effectiveness of the clustering model as each new input data subset arrives. It simultaneously considers the certainty of the clustering model and its continuity with the previous clustering model. An iterative optimization algorithm is proposed to identify the optimal solution of the objective function under some constraints on its coefficients. Furthermore, the authors rigorously derived a detection index for drifting concepts from the optimization model. They proposed a detection method that integrates the detection index and the optimization model to ascertain the evolution trend of cluster structures in a categorical data stream. The new method was claimed to avoid ignoring the effect of clustering validity on the detection result. However, the cluster validity objective function might not generalize to all types of data and clusters. Another clustering-based work that aims at handling dynamic changes of clusters is [27], where the algorithm is composed of an online phase, which keeps summary information about the evolving multi-density data stream in the form of core mini-clusters, and an offline phase, which generates the final clusters using an adapted density-based clustering. A grid-based method is used as an outlier buffer to handle both noise and multi-density data, and also to reduce the merging time of clustering. This work can handle multi-density clusters; however, it does not handle concept drift effectively because it lacks a distinct block or model for detecting concept drift.

Overall, most of the previous literature on semi-supervised streaming data learning has used various AI techniques to overcome the problem of updating model parameters; some of these approaches are evolutionary, whereas others are statistical, incremental semi-supervised, or heuristic. Accordingly, a novel AI framework for semi-supervised classification of online data streams that provides better arrangement and interaction between various AI blocks remains of research interest. Unlike previous works, this research proposes a hyper-heuristic framework based on three AI components that classifies streaming data while adapting its parameters, thereby addressing the parameter update problem and countering concept drift. The three components are the online sequential extreme learning machine (OSELM) [28], GA, and core online-offline clustering. The framework is designated as the hyper-heuristic framework (HH-F). Each component has its role in assuring correct prediction or classification decisions while countering concept drift. OSELM with its online learning capability, GA with its evolutionary search for the best parameters, and core online-offline clustering with its fast response to cluster changes are all integrated into one novel framework.

The remainder of this article is organized as follows. Section 2 establishes the methodology. Section 3 presents the experimental work and results. Section 4 provides the conclusion and future work.

2. Methodology

This section presents the developed methodology of HH-F. Section 2.1 presents the general framework. Section 2.2 provides the dataset representation. Section 2.3 describes the core online-offline clustering. Section 2.4 discusses the genetic optimization. Section 2.5 describes the NN. The configuration is presented in Section 2.6. Section 2.7 presents the class prediction method. Section 2.8 presents the big O analysis of the computational cost. Section 2.9 lists the evaluation metrics. Finally, Section 2.10 provides the dataset description.

2.1. General Framework

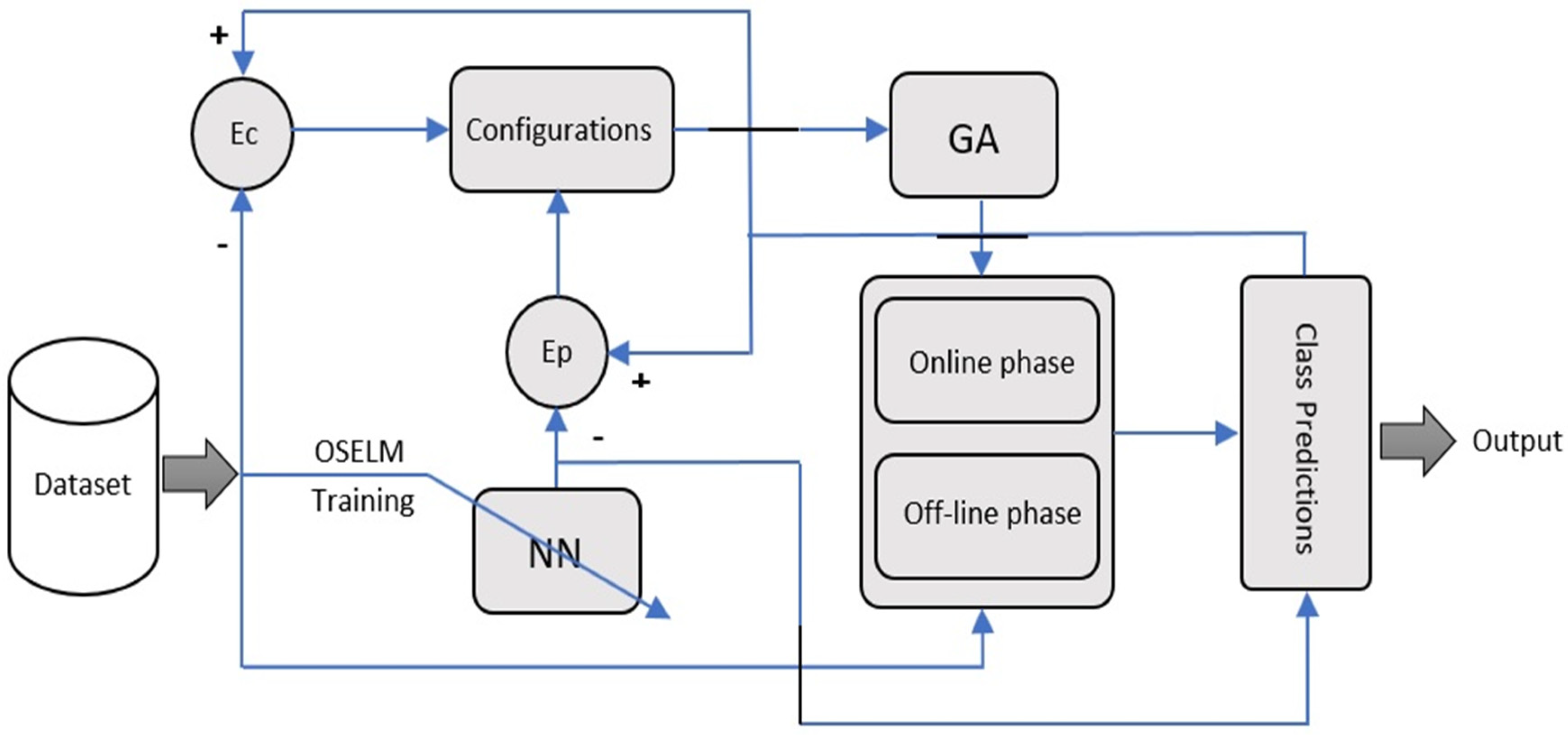

Figure 1 presents the general framework of the hyper-heuristic for stream data classification. The main blocks in the system design are dataset generation, the GA, the NN, configuration, the core (online and offline phases), and class prediction. The input and output of each block are provided in Table 1. The proposed framework is considered hyper-heuristic because it combines a genetic algorithm with a machine learning technique to provide better clustering results for the core system. The blocks of the system are discussed below.

The core of the framework is the direct clustering algorithm. In this article, we adopt the core of [27] because it performs clustering in two phases, namely, online and offline. With two phases, the clustering in the offline phase uses the result of the data reduction performed in the online phase. Another reason to adopt [27] is its hybrid nature: it combines grid and distance concepts in one streaming algorithm, which makes it more efficient in memory and computation. As mentioned, the problem with most clustering approaches is their use of a static parameter setting, which raises concerns about performance when dealing with evolving classes. We solve this problem in our framework by connecting the core clustering block to an optimization block.

The optimization block requires an objective function that reflects the performance of the system. Thus, we use the error of class prediction as an updated objective function. The error is calculated as the difference between the predicted data and the ground truth in the previous chunks. However, constantly knowing the ground truth of the previous chunks is not feasible. Therefore, NN is trained whenever ground truth data are available and used to assist in the calculation of the objective function through its gained knowledge.

Two error terms are used: they denote the difference between the class prediction result on one side and, respectively, the NN prediction or the actual ground truth on the other. The actual ground truth is only partially available, depending on the nature of the application; e.g., in IDS-based classification, the system is able to identify critical attacks only after a period of time. This arrangement of the AI blocks in the framework is one of the novelties of this work. Other novelties include the design of the optimization, the solution space, and the logic of the configuration block. The following subsections present the related details.

The sequence of operations of the framework follows these general steps:

- (1) Receive a new chunk of data for testing.

- (2) Use the online-offline clustering block to assign the records of the chunk to their respective clusters.

- (3) Use the NN block to predict the classes of the records of the chunk.

- (4) Use the class prediction block to combine the prediction of the NN with the result of the online-offline clustering and perform class labeling of the clusters.

- (5) Compute the error between the class labeling of the clusters and the result of the NN, and use it as the objective function for the GA block, which keeps modifying the parameters of the online-offline clustering block until the minimum error is reached.

- (6) Update the knowledge of the NN when labels of old records (ground truth) are received.

- (7) Use the configuration block to switch between the two types of errors: the error with respect to the NN and the error with respect to the ground truth.

- (8) If the data stream is not finished, go to step 1.

2.2. Dataset Representation

The dataset is represented in Equation (1) as a sequence of chunks. Each chunk consists of the input features of its records and the corresponding output features, and each record comprises a set of features and one output. Notably, the dataset is presented to the system sequentially. Moreover, whenever a chunk of data is provided, its corresponding labels are not provided, such that the system must predict the classes of the records in that chunk. However, all the labels of the previous chunks are known at that point.

This is a general representation of any sequential classification problem. For example, it matches the representation of IDS data, where chunks are expected to be presented to the classifier for prediction at any moment. Then, whenever the true labels of the records (i.e., attack or normal) become known, they are presented to the system again for a knowledge update.

2.3. The Core (Online and Off-Line Phase)

The core consists of two phases, namely, the online and offline phases. We explain each of them in the following sections.

2.3.1. Online Phase for Micro-Clusters Update

The role of the online phase is to generate micro-clusters that summarize the data distribution in the space. It is composed of two stages: grid projection and micro-cluster update. We explain each of them as follows.

- (1) Grid: This is the first stage of stream data reduction in the online phase. The grid maps the data to a pre-defined partitioning of the space along the dimensions of the data. Square grids are typically the simplest. The grid granularity, denoted as g, is the resolution of the grid partitioning in the different dimensions. To accommodate the evolving nature of the data and to consume less memory when the data dimension is high, the grid granularity is used as part of the solution space in the meta-heuristic part of the framework.

- (2) Micro-clusters update: The input of this stage is the streamed data after mapping to the grid, and the output is the updated set of micro-clusters, which are intermediate clustering results produced before the final clusters are made. A micro-cluster is generated from a grid using the procedure presented in Table 2. Assuming that the data are streamed with dimension m, each sample of the data has a weight given by Equation (2), which depends on the current moment and on the forgetting factor that controls the importance of the history in the clustering. The weight model is a declining exponential term with respect to time, so that the most recent samples receive higher weights. We assume that a grid contains n points. First, we calculate the weight of the grid using Equation (3).

A grid (or multiple nearby grids) that contains a set of points is initially labeled an outlier. However, after the weight of the grid reaches a certain threshold, it becomes a micro-cluster. Each micro-cluster is composed of a center and a radius. The center of the micro-cluster is generated from the grid using Equation (4). Then, the radius of the micro-cluster is estimated using Equation (5).

Updating micro-clusters refers to converting grids either from outliers to micro-clusters or from micro-clusters to outliers. The decision is made according to two thresholds, namely, the lower threshold and the upper threshold. The grid density is compared with the two thresholds, and the conversion is performed as specified in Table 2.
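The online phase can be illustrated with a minimal Python sketch. It is not the procedure of Table 2: the base-2 decay form, the weighted-mean center, the hysteresis rule in `update_status`, and all function and parameter names are assumptions chosen for illustration only.

```python
import math
from collections import defaultdict


def grid_key(point, granularity):
    """Map a point to the index of the square grid cell that contains it."""
    return tuple(math.floor(v / granularity) for v in point)


def decayed_weight(t_sample, t_now, forgetting):
    """Exponentially declining weight (assumed base-2 form): recent samples weigh more."""
    return 2.0 ** (-forgetting * (t_now - t_sample))


def summarize_grids(samples, t_now, granularity, forgetting):
    """Return {cell: (weight, center)} for timestamped samples [(t, point), ...].

    The cell weight is the sum of the sample weights; the center is the
    weight-averaged position of the samples in the cell (an assumption).
    """
    cells = defaultdict(list)
    for t, point in samples:
        w = decayed_weight(t, t_now, forgetting)
        cells[grid_key(point, granularity)].append((w, point))
    summary = {}
    for key, members in cells.items():
        total = sum(w for w, _ in members)
        dim = len(members[0][1])
        center = tuple(sum(w * p[d] for w, p in members) / total for d in range(dim))
        summary[key] = (total, center)
    return summary


def update_status(status, weight, lower, upper):
    """Assumed rule: promote an outlier at or above `upper`, demote a micro-cluster below `lower`."""
    if status == "outlier" and weight >= upper:
        return "micro-cluster"
    if status == "micro-cluster" and weight < lower:
        return "outlier"
    return status
```

With two thresholds, a grid whose weight lies between `lower` and `upper` keeps its current status, which avoids rapid flipping between the outlier and micro-cluster states.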

2.3.2. Offline Phase for Clustering

The input of the offline phase is the set of micro-clusters, and the output is the clustering result. A condition must be applied to each micro-cluster before it enters offline clustering: the number of points in the micro-cluster should be lower than a pre-defined threshold.

To update the clusters, a connected graph G is created for each cluster. The nodes of the graph represent the micro-clusters, and the edges represent the connectivity decisions between pairs of micro-clusters. The decision to connect two micro-clusters is based on the Euclidean distance. Whether micro-cluster A is connected to micro-cluster B is decided from three quantities: the distance between micro-clusters A and B, the average distance between the micro-clusters in the connected graph G, and the average standard deviation of the distances between the micro-clusters in the connected graph G.
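The graph-based grouping can be sketched in Python as follows. The exact connectivity condition of the framework is not reproduced here; as a stand-in, the sketch connects two micro-clusters when the Euclidean distance between their centers does not exceed a fixed `threshold`. That rule, and the function name `connected_clusters`, are assumptions for illustration.

```python
import math


def connected_clusters(centers, threshold):
    """Group micro-cluster centers into final clusters.

    Clusters are the connected components of the graph whose edges join
    centers at Euclidean distance <= threshold (assumed connectivity rule).
    Returns a sorted list of lists of indices into `centers`.
    """
    parent = list(range(len(centers)))

    def find(i):
        # Union-find lookup with path halving.
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    for i in range(len(centers)):
        for j in range(i + 1, len(centers)):
            if math.dist(centers[i], centers[j]) <= threshold:
                parent[find(i)] = find(j)

    groups = {}
    for i in range(len(centers)):
        groups.setdefault(find(i), []).append(i)
    return sorted(groups.values())
```

Because connectivity is transitive, two micro-clusters that are far apart still end up in the same cluster when a chain of nearby micro-clusters links them, which is what allows clusters of arbitrary shape to be recovered.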

2.4. The Genetic Algorithm (GA)

The meta-heuristic search model aims to update the crucial parameters of the core clustering block dynamically. We select five parameters for this purpose, so the solution structure contains the essential parameters of the core clustering algorithm. The solution space is represented as vector S in Equation (6), whose five components are:

- (1) the grid granularity;

- (2) the threshold that a core mini-cluster must satisfy to be used in the offline phase;

- (3) the forgetting factor;

- (4) the threshold for converting a core mini-cluster to an outlier;

- (5) the threshold for converting an outlier to a core mini-cluster.

GA is used for the search. For more effective searching, we propose our own operators for the modified GA. The crossover operation is performed using Equations (7) and (8). Assume that two solutions are taken from the pool of solutions in a certain generation. To generate an offspring, a crossover operation between these two solutions is performed: a random number between 0 and 1 is generated, and the crossover is carried out as represented in Equation (9), which uses a function that returns a random integer between x and y. The mutation operator is designed as shown in Equations (10) and (11). It relies on a function that generates a random number around a given value, with a maximum difference of T from that value, using a uniform distribution. The parameter T is adjusted for each element of the chromosome to define the strength of the mutation. A higher value of T is equivalent to a stronger mutation, whereas a lower value of T is equivalent to a weaker mutation.
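The two operators can be illustrated with a short Python sketch. The mutation follows the description above (a uniform draw within a maximum difference T of the current value, with one T per chromosome element). The single-point form of the crossover, the clipping to `bounds`, and all names are assumptions, since the exact forms of Equations (7) to (11) are not reproduced here.

```python
import random


def crossover(parent_a, parent_b, rng):
    """Single-point crossover (assumed form): the cut position is a random integer."""
    cut = rng.randint(1, len(parent_a) - 1)
    return parent_a[:cut] + parent_b[cut:]


def mutate(solution, strengths, bounds, rng):
    """Uniform mutation: move each gene by at most its strength T, then clip.

    `strengths` holds one T per gene; `bounds` holds (low, high) per gene.
    Clipping to the bounds is an assumption added to keep parameters valid.
    """
    child = []
    for value, t, (low, high) in zip(solution, strengths, bounds):
        moved = value + rng.uniform(-t, t)
        child.append(min(max(moved, low), high))
    return child


if __name__ == "__main__":
    rng = random.Random(42)
    # Hypothetical 5-element chromosomes, one element per core parameter.
    a = [0.5, 10.0, 0.10, 2.0, 5.0]
    b = [0.8, 20.0, 0.05, 1.0, 8.0]
    strengths = [0.1, 2.0, 0.02, 0.5, 1.0]
    bounds = [(0.01, 1.0), (1.0, 50.0), (0.0, 1.0), (0.0, 10.0), (0.0, 20.0)]
    print(mutate(crossover(a, b, rng), strengths, bounds, rng))
```

Using a separate T per gene lets parameters with very different scales, such as a forgetting factor and a point-count threshold, be perturbed proportionately.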

The last part of the genetic modification is boosting the GA, i.e., narrowing down the initial search zone, by operating the framework for a pre-defined number of iterations on the same boosting data that are used to boost the NN. This narrows the search area so that it is close to the optimal values of the parameters of the core.

2.5. The Neural Network

The NN is embedded in the framework to exploit the information that can be extracted from newly labeled data by storing the resulting knowledge in the NN component. This component then provides feedback whenever the prediction deviates from the true gathered knowledge, so that the prediction can be corrected.

In this framework, we adopt an extreme learning machine called OSELM [29,30]. This NN has an online variant that permits updating the knowledge of the NN according to the provided chunks. Given an activation function and a number of hidden neurons, the learning procedure consists of the two phases described below.

2.5.1. Boosting Phase Using the Initial Chunks

A small initial training set is used to boost the learning algorithm through the following procedure.

- (1) Assign arbitrary input weights and biases drawn from a random variable with a given center and standard deviation.

- (2) Calculate the initial hidden layer output matrix using Equation (12).

- (3) Estimate the initial output weight using Equation (13), which is computed from the initial hidden layer output matrix and the targets of the initial training set.

- (4) Initialize the step counter for the sequential learning phase.

2.5.2. Sequential Learning Phase

For each further incoming observation:

- (1) Calculate the hidden layer output vector using Equation (14).

- (2) Calculate the latest output weight based on the recursive least squares (RLS) algorithm shown in Equations (15) and (16).

- (3) Advance the step counter and continue with the next observation.
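The two learning phases can be sketched with NumPy as follows. The sketch uses the standard OS-ELM formulation from the literature (batch least squares for the boosting phase, then chunk-wise recursive least squares); it may differ in notation and detail from Equations (12) to (16). The sigmoid activation, the Gaussian random weights, and the class and method names are assumptions.

```python
import numpy as np


class OSELM:
    """Minimal online sequential extreme learning machine (illustrative sketch)."""

    def __init__(self, n_features, n_hidden, n_outputs, seed=0):
        rng = np.random.default_rng(seed)
        # Random input weights and biases stay fixed after initialization.
        self.W = rng.normal(size=(n_features, n_hidden))
        self.b = rng.normal(size=n_hidden)
        self.beta = np.zeros((n_hidden, n_outputs))
        self.P = None

    def _hidden(self, X):
        """Hidden layer output with a sigmoid activation (assumed choice)."""
        return 1.0 / (1.0 + np.exp(-(X @ self.W + self.b)))

    def boost(self, X0, T0):
        """Boosting phase: least squares on the initial chunk.

        Needs at least as many samples as hidden neurons so that H0^T H0
        is invertible.
        """
        H0 = self._hidden(X0)
        self.P = np.linalg.inv(H0.T @ H0)
        self.beta = self.P @ H0.T @ T0

    def update(self, X, T):
        """Sequential phase: recursive least squares update for one chunk."""
        H = self._hidden(X)
        K = np.linalg.inv(np.eye(len(X)) + H @ self.P @ H.T)
        self.P = self.P - self.P @ H.T @ K @ H @ self.P
        self.beta = self.beta + self.P @ H.T @ (T - H @ self.beta)

    def predict(self, X):
        return self._hidden(X) @ self.beta
```

A useful property of this update is that, after any number of chunks, the output weights equal the least-squares solution computed on all data seen so far, so no past chunk needs to be stored.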

2.6. The Configuration

The configuration is used to activate the GA and enable the objective function that will be used. Two variants of the HH-F hyper-heuristic are available. In the first variant, the GA is run once in each iteration, and the difference between the class prediction and the trained NN will be used as an objective function that must be minimized. In the second variant, the GA will be run twice, and the objective function for the first and second instances will be the difference between the NN and the class prediction and between the ground truth and the class prediction, respectively.

2.6.1. Hyper-Heuristic Variant 1

Table 3 presents the pseudo-code of variant 1 (Hyper 1). The code contains two phases, namely, boosting and iterative phases. The latter is a combination of prediction and correction phases.

The GA and the OSELM are called in the boosting phase. In line 1, the initial parameters of the core are generated randomly. In line 2, the initial weights of the NN are generated on the basis of the boosting data. GA is then initialized. The corresponding objective function is defined by the difference between the class prediction and the ground truth, which is subsequently called for the numberOfIterations based on the defined MutationRate and CrossoverRate. Once the boosting phase is completed, N iterations of the iterative phase are performed, where N is equal to the number of chunks received by the stream data. Two stages are called in each phase. The first is the prediction that enables GA to run for numberOfIterations to change the parameters of the core based on the difference between the NN prediction and the class prediction (lines 7 and 8). Next, the updated parameters are assigned to the core, which performs a prediction that represents the output of the core (line 9). The second stage is the correction stage, which uses the recently known labels to train the supervised model again (line 11).

2.6.2. Hyper-Heuristic Variant 2

Table 4 presents the pseudo-code of variant 2 (Hyper 2). Similar to Hyper 1, the procedure consists of the boosting and iterative phases, and the latter is a combination of prediction and correction phases.

The GA and the OSELM are called in the boosting phase. In lines 1 and 2, the initial parameters of the core are randomly generated, and the initial weights of the NN are generated on the basis of the boosting data, respectively. GA is then initialized, and the corresponding objective function is defined by the difference between the class prediction and the ground truth. The function is subsequently called for the numberOfIterations based on the defined MutationRate and CrossoverRate. The result comprises the initial parameters of core P0 (line 4). Once the boosting phase is completed, N iterations of the iterative phase are performed, where N is equal to the number of chunks received by the stream data. The difference between the two variants is that Hyper 2 contains a correction of the core parameters using GA based on the ground truth (lines 12 and 13). This step allows Hyper 2 to utilize the knowledge of previously known labels through both the GA and the NN, whereas Hyper 1 utilizes this knowledge through the NN component only, because its GA component depends on the NN when running in the prediction phase.

2.7. Class Prediction

The output of the core clustering is a set of distinct clusters. The clustering results are mapped into a classification using the labels of the points that are part of the cluster. Each point x in cluster S has a label y. First, a histogram for the number of points for each class inside the cluster is generated. Next, the peak of the histogram is selected as the class of the cluster. Any new predicted point belonging to this cluster will take the class of the cluster. This step is a separate block in the framework named class prediction.

In the case of partial labeling, only a few points might be labeled, which might result in the unsatisfactory performance of the class prediction. This issue is resolved using the NN output, which is a regular classifier that predicts each sample of the records. In the case of fully labeled data, the ground truth is used instead of NN predictions.
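The histogram-peak labeling described above can be sketched in Python as follows. The function names, the use of `None` to mark unlabeled points, and the `default` returned for clusters without any labeled point are assumptions for illustration.

```python
from collections import Counter


def label_clusters(cluster_ids, labels):
    """Assign each cluster the most frequent label among its labeled points.

    `cluster_ids[i]` is the cluster of point i and `labels[i]` its label,
    or None when the point is unlabeled (unlabeled points are skipped).
    """
    histograms = {}
    for cid, label in zip(cluster_ids, labels):
        if label is not None:
            histograms.setdefault(cid, Counter())[label] += 1
    # The peak of each histogram becomes the class of the cluster.
    return {cid: hist.most_common(1)[0][0] for cid, hist in histograms.items()}


def predict_classes(cluster_ids, cluster_labels, default=None):
    """Give every new point the class of the cluster it was assigned to."""
    return [cluster_labels.get(cid, default) for cid in cluster_ids]


if __name__ == "__main__":
    ids = [0, 0, 0, 1, 1, 2]
    labels = ["normal", "normal", "attack", "attack", None, None]
    mapping = label_clusters(ids, labels)
    print(mapping)
    print(predict_classes([0, 1, 2], mapping))
```

In the partially labeled case, the `labels` argument would hold NN predictions for the points whose ground truth is not yet known, so that clusters such as cluster 2 in the example still receive a class.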

2.8. Big O Analysis for Computation

This study is interested in the computational complexity of the supervised learning NN and the optimization GA sub-blocks because both must be called in each iteration to update the parameters of the core and counter the dynamic changes in the stream. The analysis is expressed in terms of the following quantities:

- (1) the number of iterations;

- (2) the number of solutions;

- (3) the size of the chunk;

- (4) the number of hidden neurons;

- (5) the number of features.

According to [31], the computational complexity of ELM has two stages: the first is the projection to the hidden layer, and the second is the pseudo-inverse. The total complexity is obtained by combining the complexity of these two stages with the GA complexity.

2.9. Evaluation Measurement

This section presents the evaluation measures used for quantifying the performance of the proposed HH-F and the comparison with state-of-the-art approaches. TP denotes true positive, TN denotes true negative, FP denotes false positive, and FN denotes false negative.

Accuracy

Accuracy represents the number of true predictions divided by the number of all predictions [32]. The formula is given in Equation (17).

$$\text{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN} \tag{17}$$

Precision (PPV)

PPV represents the number of true positives predicted by the classifier divided by the number of all records predicted as positive [33]. The formula is given in Equation (18).

$$\text{PPV} = \frac{TP}{TP + FP} \tag{18}$$

Recall (TPR)

TPR represents the number of TP predicted by the classifier divided by the number of all tested positive records [34]. The formula is given in Equation (19).

$$\text{TPR} = \frac{TP}{TP + FN} \tag{19}$$

Specificity (TNR)

TNR represents the number of TN predicted by the classifier divided by the number of all tested negative records [35]. The formula is given in Equation (20).

$$\text{TNR} = \frac{TN}{TN + FP} \tag{20}$$

NPV

This measure represents the negative predictive value, calculated as the number of TN predictions over the total number of records predicted as negative [36]. The formula is given in Equation (21).

$$\text{NPV} = \frac{TN}{TN + FN} \tag{21}$$

G-mean

This measure is calculated on the basis of the precision and recall [33]. The formula is given in Equation (22).

$$\text{G-mean} = \sqrt{\text{PPV} \times \text{TPR}} \tag{22}$$

F-measure

F-measure is the harmonic mean of the precision and recall [33]. The formula is given in Equation (23).

$$\text{F-measure} = \frac{2 \times \text{PPV} \times \text{TPR}}{\text{PPV} + \text{TPR}} \tag{23}$$
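As a worked illustration, the seven measures can be computed from the four confusion counts with a few lines of Python. The function names and the convention of returning 0.0 when a denominator is empty are assumptions; the G-mean follows the description above, i.e., it is based on precision and recall.

```python
import math


def _ratio(numerator, denominator):
    """Return numerator / denominator, or 0.0 for an empty denominator (assumed convention)."""
    return numerator / denominator if denominator else 0.0


def classification_metrics(tp, tn, fp, fn):
    """Compute the evaluation measures of this section from TP, TN, FP, and FN."""
    precision = _ratio(tp, tp + fp)
    recall = _ratio(tp, tp + fn)
    return {
        "accuracy": _ratio(tp + tn, tp + tn + fp + fn),
        "precision": precision,
        "recall": recall,
        "specificity": _ratio(tn, tn + fp),
        "npv": _ratio(tn, tn + fn),
        "g_mean": math.sqrt(precision * recall),
        "f_measure": _ratio(2 * precision * recall, precision + recall),
    }


if __name__ == "__main__":
    # Hypothetical counts, not results from the experiments.
    for name, value in classification_metrics(tp=40, tn=45, fp=5, fn=10).items():
        print(f"{name}: {value:.4f}")
```

For the hypothetical counts in the usage example, precision is 40/45 and recall is 40/50, so the F-measure reduces to 2TP/(2TP + FP + FN) = 80/95.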

2.10. Dataset Description

This section describes the datasets used for evaluation. Three real datasets are selected, namely, KDD’99, NSL-KDD, and LandSat.

KDD’99

KDD’99 is the most recognized dataset for evaluating anomaly detection methods [37]. The dataset is constructed from the data captured in the DARPA’98 IDS evaluation. The training data comprise gigabytes of compressed raw (binary) TCP dump data from seven weeks of network traffic, which can be processed into approximately 5 million connection records of nearly 100 bytes each. The test data include about 2 million connection records. Each record contains 41 features and is labeled as normal or as a DoS, U2R, R2L, or probing attack.

NSL-KDD

Various studies have encountered issues in the KDD’99 dataset that affect performance. The authors of [37] proposed a new dataset called NSL-KDD, in which they removed duplicated records from the training and testing data and selected the number of records from each difficulty level group to be inversely proportional to the percentage of records in the original KDD dataset. The training dataset contains 21 different attacks out of the 37 attacks present in the test dataset. The known attack types are those that occur in the training dataset, whereas the novel attacks appear only in the test data and are unavailable in the training data.

Landsat satellite

This dataset contains 4435 objects from remote-sensing satellite images. Each object represents a region, and each sub-region is described by four intensity measurements taken at different wavelengths. The number of attributes is 36. This dataset is utilized by [27].

3. Experimental Evaluation

We conducted three evaluations of the two proposed approaches of the HH-F hyper-heuristic. First, we generated the classification measures for each approach on LandSat and NSL-KDD at labeling percentages of 25%, 50%, 75%, and 100% to evaluate performance on partially labeled stream data in sequential learning. Second, we generated the classification measures for fully sequential learning with fully labeled data for each of the two variants of the HH-F hyper-heuristic and for two benchmarks, namely, Cauchy [22] and MuDi [27]. Lastly, we performed a statistical evaluation to verify the overall superiority of the proposed HH-F hyper-heuristic over the two benchmarks.
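One way to simulate the partial-labeling setting of the first evaluation is sketched below. The source does not state how the labeled records are chosen, so uniform random sampling within each chunk is an assumption, as are the function name `mask_labels`, the use of `None` for hidden labels, and the fixed seed.

```python
import random


def mask_labels(labels, labeled_fraction, seed=0):
    """Keep the labels of a random fraction of a chunk and hide the rest as None."""
    if not 0.0 <= labeled_fraction <= 1.0:
        raise ValueError("labeled_fraction must lie in [0, 1]")
    rng = random.Random(seed)  # fixed seed so that runs are repeatable
    n_keep = round(labeled_fraction * len(labels))
    # Assumption: the labeled records are sampled uniformly at random.
    keep = set(rng.sample(range(len(labels)), n_keep))
    return [label if i in keep else None for i, label in enumerate(labels)]


if __name__ == "__main__":
    chunk_labels = ["normal", "dos", "normal", "probe", "normal", "r2l", "dos", "normal"]
    for fraction in (0.25, 0.50, 0.75, 1.00):
        print(fraction, mask_labels(chunk_labels, fraction))
```

Records whose label is hidden would be passed to the learner as unlabeled data, so only the remaining fraction can trigger a supervised update.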

Table 5 presents the performance of Hyper 1 and Hyper 2 under partial labeling. For LandSat, the accuracy increases with the percentage of labeled data, which is expected because each additional label gives HH-F an opportunity to update its knowledge. Similarly, the other measures (precision, recall, specificity, NPV, G-mean, and F-measure) show an increasing trend on LandSat with the percentage of labeled data in sequential learning.

This behavior is more evident in LandSat than in NSL-KDD (Table 6). On LandSat, the accuracy of Hyper 1 reached its highest level at 75% labeling, which we interpret as saturation of the extracted knowledge (i.e., additional training is unnecessary for the model). On the other hand, in both datasets, the accuracy of Hyper 1 and Hyper 2 was high even at the smallest labeling percentage of 25%. We attribute this to how much new knowledge the incoming records carry, which differs between the two datasets: in many datasets, newly arriving chunks carry little new knowledge, so further training updates bring little benefit. We also observe differences among precision, recall, and the other metrics, which stem from differences in the TP, FP, TN, and FN values. Nevertheless, for all metrics, higher values indicate better prediction performance.

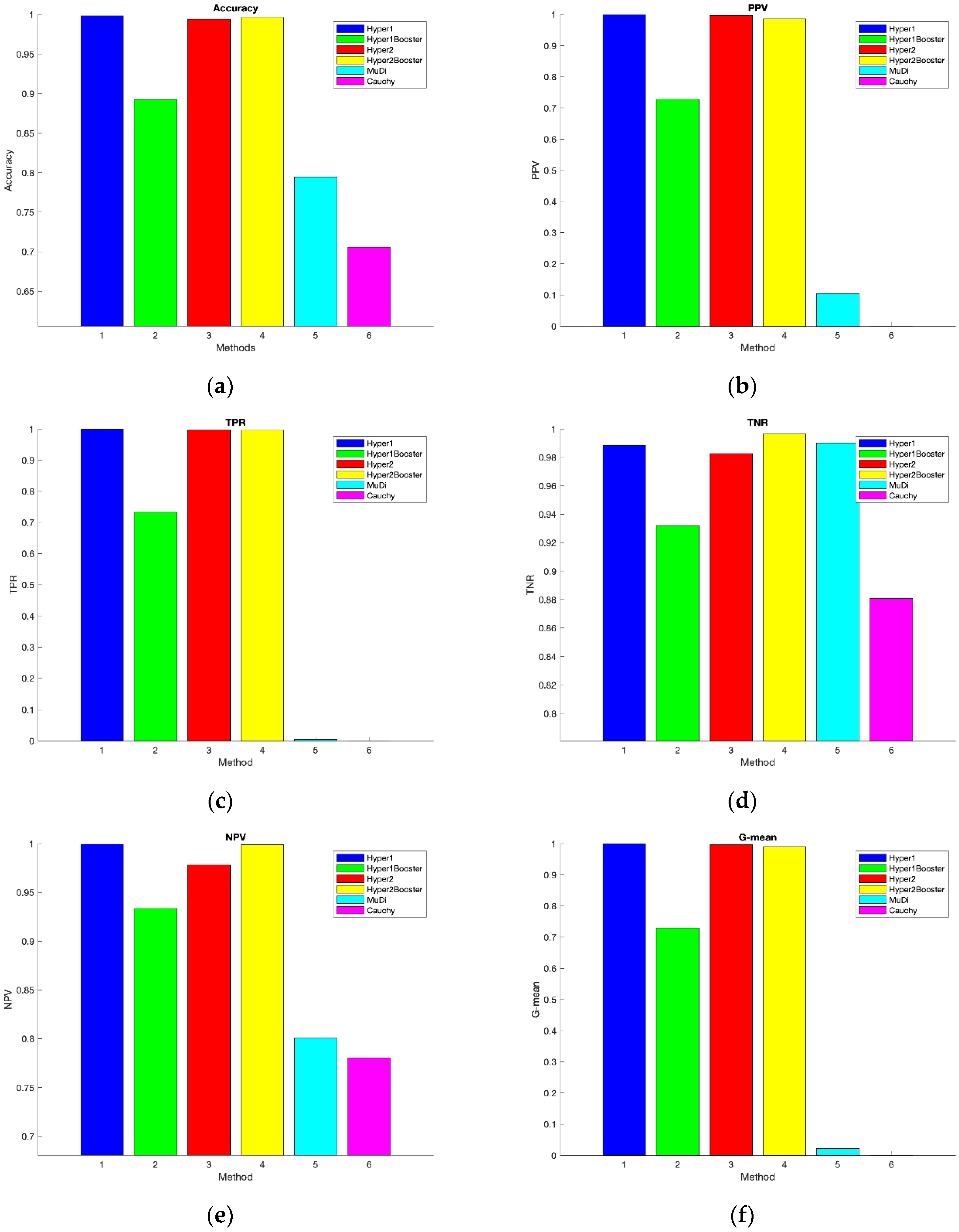

In the second evaluation, Hyper 1 and Hyper 2 are compared with MuDi and Cauchy on the three datasets using fully labeled data.

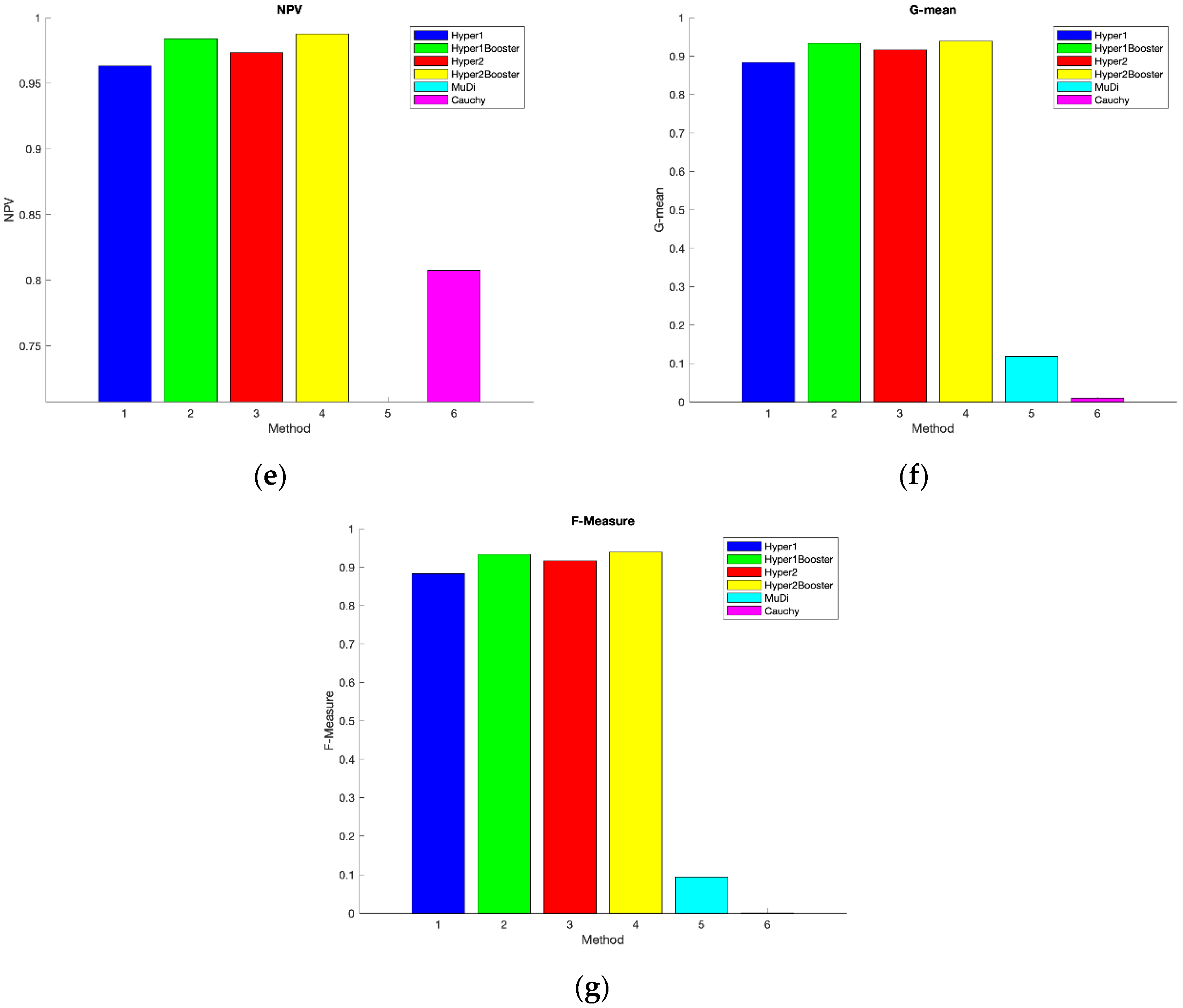

Figure 2 shows the experimental results of the comparison on NSL-KDD. HH-F is superior to MuDi and Cauchy. Four variants of HH-F are compared: Hyper 1 with and without boosting and Hyper 2 with and without boosting, all of which outperform the two benchmarks. The four variants exhibited similar accuracy, although GA boosting increased the accuracy of both Hyper 1 and Hyper 2 relative to their non-boosted counterparts.

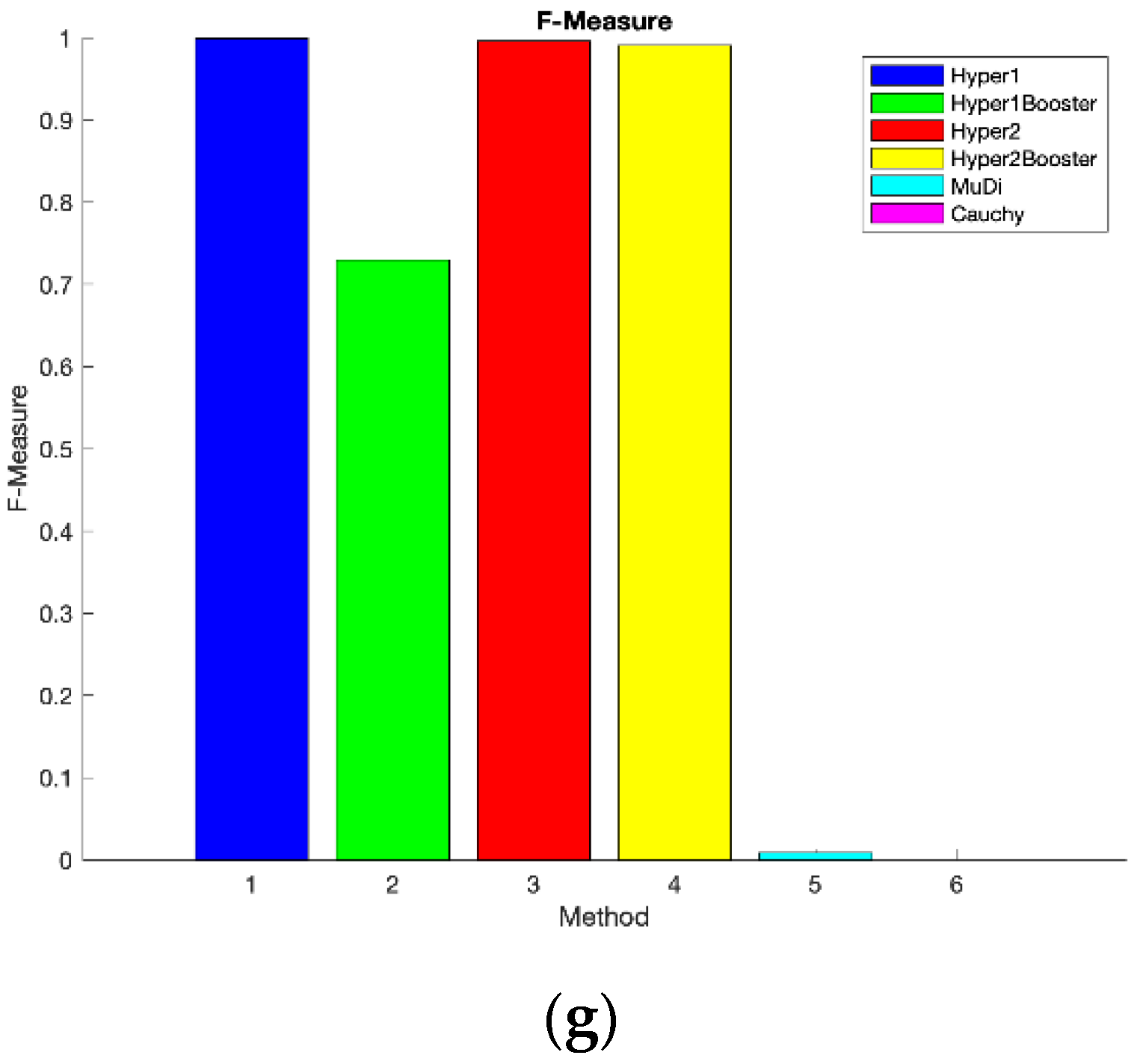

Figure 3 and Figure 4 illustrate the classification measures of all variants of HH-F and the two benchmarks on KDD’99 and LandSat, respectively. On KDD’99, boosting did not improve the accuracy of Hyper 1 over Hyper 2 as expected (Figure 3). We attribute this to the higher amount of evolution in the KDD’99 dataset than in NSL-KDD. All variants of HH-F outperformed MuDi and Cauchy. The accuracies of Hyper 1 and Hyper 2 are similar in the majority of the experiments, but the accuracy of Hyper 2 is lower than that of Hyper 1 unless boosting is applied.

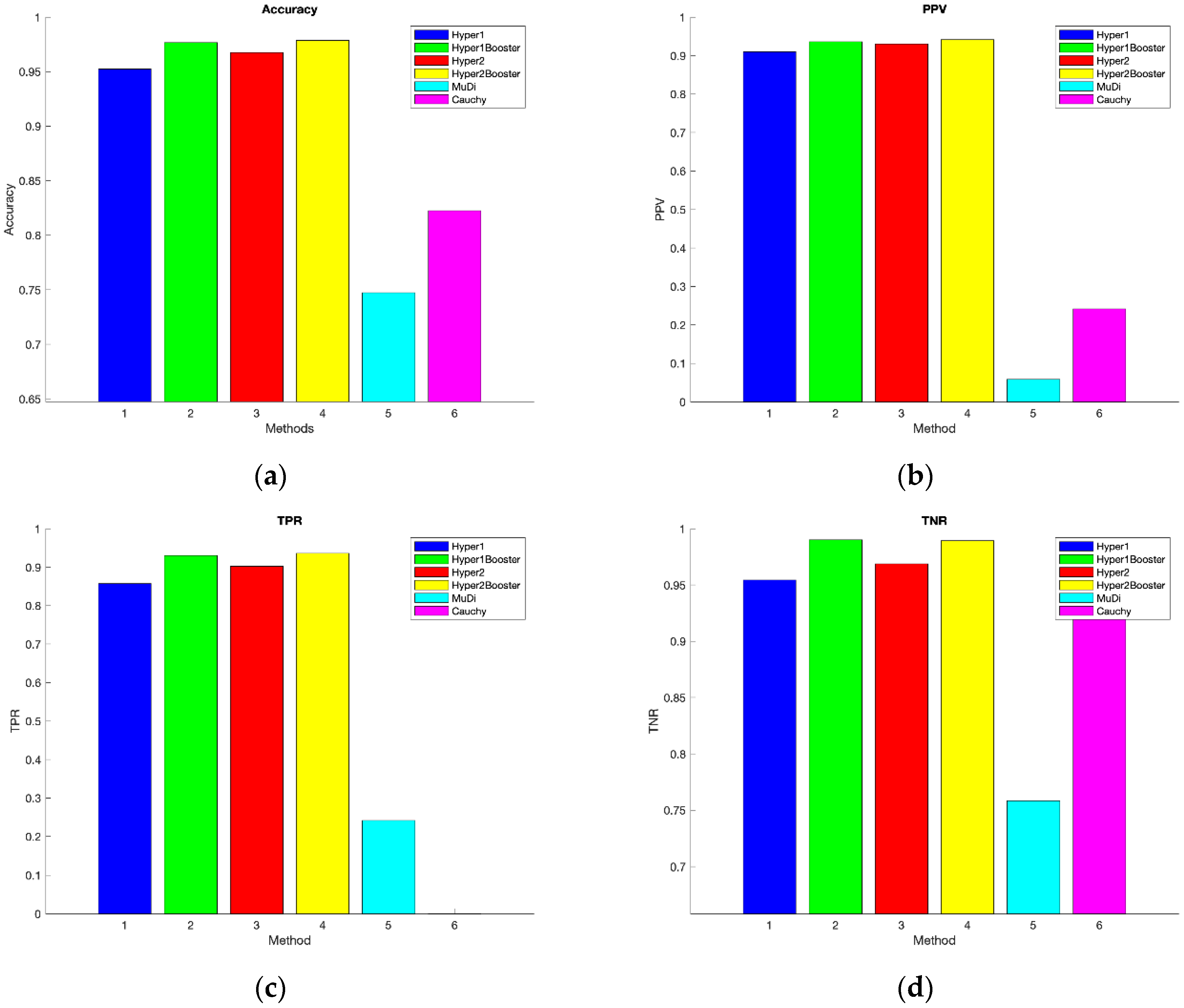

In contrast to Figure 3, boosting succeeded in improving the accuracy of Hyper 1 over Hyper 2 on LandSat (Figure 4). We attribute this to the smaller amount of evolution in the LandSat dataset. All variants of HH-F outperformed MuDi and Cauchy. This is explained by the tracking block embedded in HH-F, i.e., the genetic optimization integrated with the configuration block, which dynamically updates the parameters of the core clustering and thereby mitigates the impact of concept drift. By contrast, like the majority of existing IDS algorithms, Cauchy and MuDi lack such handling of concept drift.

The last part of the evaluation is the statistical analysis. Table 7 lists the t-values obtained by comparing the HH-F variants with the two benchmarks in terms of accuracy. Both Hyper 1 and Hyper 2 were significantly more accurate than Cauchy and MuDi, which statistically supports the superiority of HH-F over the two benchmarks.
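For illustration, a t-value of the kind reported in Table 7 could be computed as sketched below. The source does not state which t-test was used, so the paired form over matched runs is an assumption, as is the function name `paired_t_value`. The accuracy values in the demo are made up and are not results from this study.

```python
import math
import statistics


def paired_t_value(scores_a, scores_b):
    """Paired t statistic for the mean difference between two matched score lists."""
    if len(scores_a) != len(scores_b):
        raise ValueError("both score lists must have the same length")
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    if len(diffs) < 2:
        raise ValueError("at least two paired scores are required")
    spread = statistics.stdev(diffs)  # sample standard deviation of the differences
    if spread == 0:
        raise ValueError("all differences are identical; t is undefined")
    return statistics.mean(diffs) / (spread / math.sqrt(len(diffs)))


if __name__ == "__main__":
    # Made-up per-run accuracies for illustration only.
    variant_accuracy = [0.91, 0.93, 0.90, 0.94, 0.92]
    benchmark_accuracy = [0.85, 0.88, 0.86, 0.87, 0.84]
    print(paired_t_value(variant_accuracy, benchmark_accuracy))
```

The resulting t-value would then be compared against the critical value of the t distribution with n − 1 degrees of freedom at the chosen significance level.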

4. Conclusions and Future Work

This study proposed HH-F, a hyper-heuristic semi-supervised framework based on a genetic algorithm and online sequential extreme learning machines for sequential data classification. The framework combines core online–offline clustering, an NN with one hidden layer, and genetic optimization with configuration and class prediction blocks. Integrating these blocks serves one overall goal: handling concept drift by dynamically updating the parameters of the model. The NN serves as the knowledge storage component, which assigns stream samples to classes; the online–offline clustering serves as the fast-reacting block that responds to changes in the shape, density, and distribution of the clusters; and the evolutionary genetic meta-heuristic serves as the tracking component, which adjusts the internal parameters of the online–offline clustering by leveraging the ground truth and the knowledge gained within the NN. More specifically, the framework trains the NN on previously labeled data, and its knowledge is used to calculate the error of the core online–offline clustering block. The genetic optimization is responsible for selecting the best parameters of the core model to counter concept drift and handle the evolving nature of the classes. Four variants of the proposed framework were created. The first, Hyper 1, uses GA only in the prediction phase, whereas the second, Hyper 2, uses GA in both the prediction and correction phases. Each was run with and without genetic boosting, which yields the other two variants.

The evaluation was performed on three online datasets, namely, NSL-KDD, KDD’99, and LandSat. For NSL-KDD and LandSat, performance on partially labeled data was assessed. The results showed that the performance of Hyper 1 and Hyper 2 increased with the percentage of labeled data. Then, all variants were compared with Cauchy and MuDi. The variants outperformed the two benchmarks, and Hyper 1 and Hyper 2, with and without genetic boosting, exhibited only slight performance variations. Boosting has a positive influence on accuracy, except when high data evolution is involved. Future work will incorporate a block in the framework to address class imbalance in the stream data and will test the performance in real-world applications, such as minimizing false alarms in IDS.