Estimation for the Discretely Observed Cox–Ingersoll–Ross Model Driven by Small Symmetrical Stable Noises

Abstract

1. Introduction

2. Problem Formulation and Preliminaries

3. Main Results and Proofs

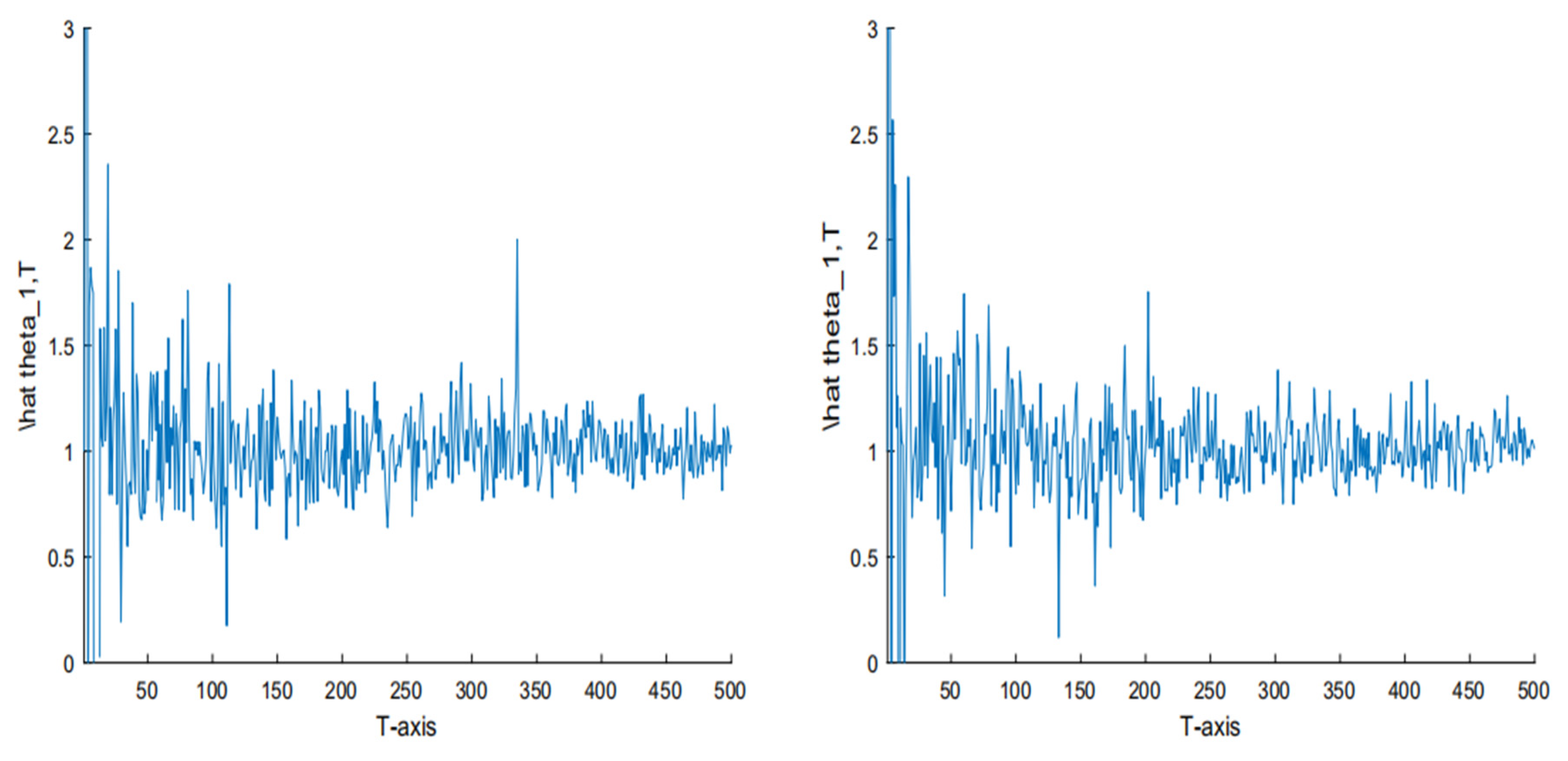

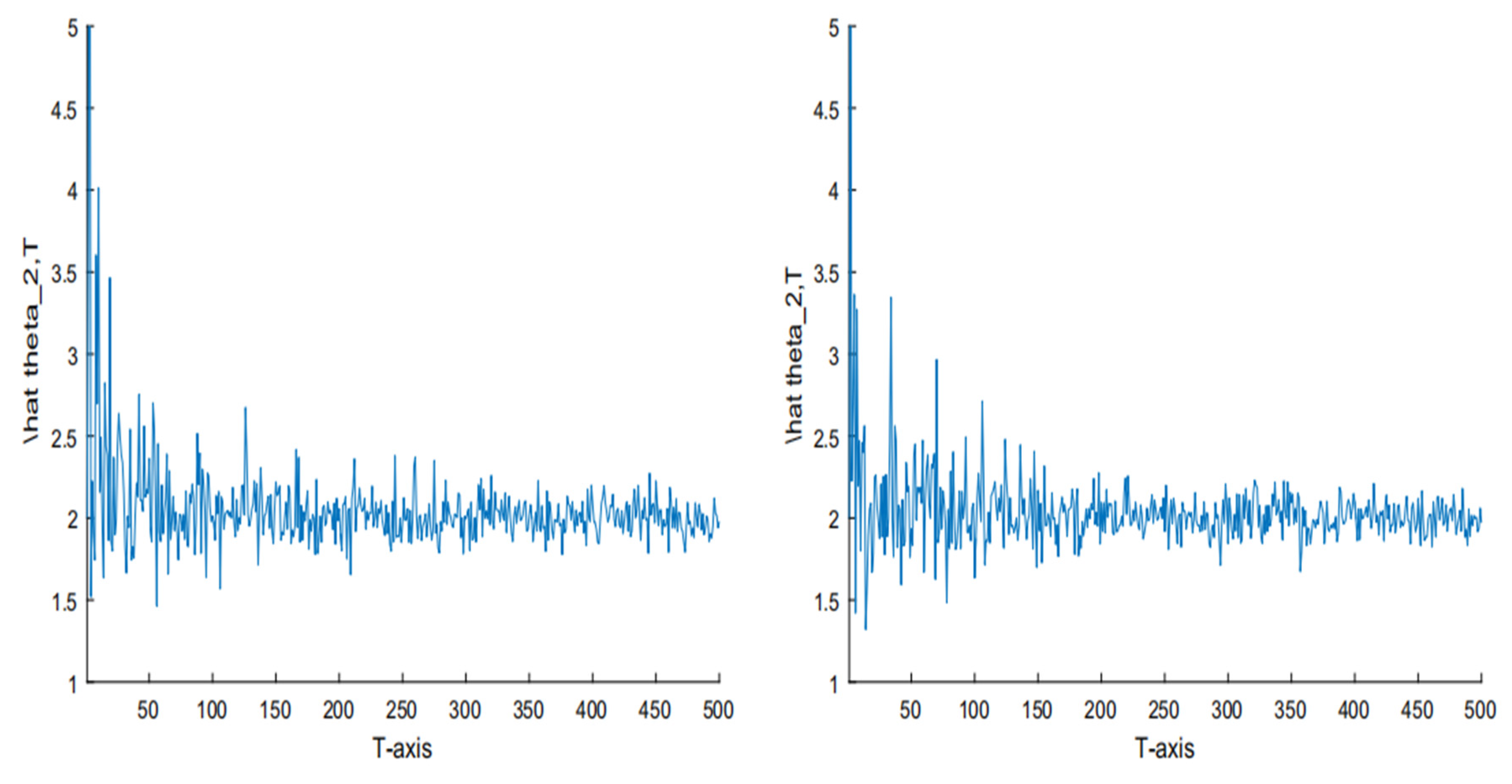

4. Simulation

5. Conclusions

Funding

Conflicts of Interest


| True values | Size n | Average (param. 1) | Average (param. 2) | Absolute error, AE (param. 1) | Absolute error, AE (param. 2) | Relative error, RE (param. 1) | Relative error, RE (param. 2) |
|---|---|---|---|---|---|---|---|
| (1,1) | 1000 | 1.2632 | 0.7568 | 0.2632 | 0.2432 | 26.32% | 24.32% |
| | 2000 | 1.1425 | 0.8673 | 0.1425 | 0.1327 | 14.25% | 13.27% |
| | 5000 | 1.0651 | 0.9586 | 0.0651 | 0.0414 | 6.51% | 4.14% |
| (2,3) | 1000 | 1.6573 | 3.2538 | 0.3427 | 0.2538 | 17.14% | 8.46% |
| | 2000 | 2.1836 | 3.1209 | 0.1836 | 0.1209 | 9.18% | 4.03% |
| | 5000 | 2.0528 | 3.0614 | 0.0528 | 0.0614 | 2.64% | 2.05% |

| True values | Size n | Average (param. 1) | Average (param. 2) | Absolute error, AE (param. 1) | Absolute error, AE (param. 2) | Relative error, RE (param. 1) | Relative error, RE (param. 2) |
|---|---|---|---|---|---|---|---|
| (1,1) | 10,000 | 1.1346 | 0.8735 | 0.1346 | 0.1265 | 13.46% | 12.65% |
| | 20,000 | 1.0538 | 0.9359 | 0.0538 | 0.0641 | 5.38% | 6.41% |
| | 50,000 | 1.0010 | 0.9987 | 0.0010 | 0.0013 | 0.10% | 0.13% |
| (2,3) | 10,000 | 1.8645 | 3.1452 | 0.1355 | 0.1452 | 6.78% | 4.84% |
| | 20,000 | 2.0649 | 3.0722 | 0.0649 | 0.0722 | 3.25% | 2.41% |
| | 50,000 | 2.0028 | 3.0017 | 0.0028 | 0.0017 | 0.14% | 0.06% |
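
The AE and RE columns appear to follow directly from the True and Average columns: the reported values are consistent with AE being the absolute difference between the average estimate and the true value, and RE being AE divided by the true value. The sketch below recomputes both for the n = 50,000 rows of the table above. The function name `abs_rel_error` is a hypothetical helper introduced only for this illustration, and the reading of AE and RE as absolute and relative error is an assumption checked against the tabulated numbers, not a definition taken from the article text.

```python
def abs_rel_error(true_value, estimate):
    """Return (absolute error, relative error) of an estimate.

    Assumes AE = |estimate - true| and RE = AE / |true|, which is
    consistent with the tabulated values.
    """
    ae = abs(estimate - true_value)
    re = ae / abs(true_value)
    return ae, re


# (true values, average estimates) copied from the n = 50,000 rows.
rows = [
    ((1.0, 1.0), (1.0010, 0.9987)),
    ((2.0, 3.0), (2.0028, 3.0017)),
]

for true_pair, average_pair in rows:
    for true_value, average in zip(true_pair, average_pair):
        ae, re = abs_rel_error(true_value, average)
        # Four decimals for AE and a percentage for RE, as in the table.
        print(f"true={true_value} average={average} AE={ae:.4f} RE={re:.2%}")
```

The same helper can be applied to any other row to check that the error columns agree with the averages to the reported precision.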

© 2020 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Wei, C. Estimation for the Discretely Observed Cox–Ingersoll–Ross Model Driven by Small Symmetrical Stable Noises. Symmetry 2020, 12, 327. https://doi.org/10.3390/sym12030327
