1. Introduction

Location-based services (LBS) are applications that use network-based services to integrate a mobile device’s location or position with other information, providing added value to the user [1]. LBS use real-time geo-data from a mobile device or smartphone to provide information, entertainment or security [2]. According to a Berg Insight report, at the end of 2013 about 40 percent of mobile subscribers in Europe were frequent users of at least one location-based service [3]. LBS applications such as Google Maps, Foursquare, Instagram, Facebook and Waze run on mobile devices over a mobile network [4]. Waze, for example, provides turn-by-turn navigation and best-route suggestions, informs users of real-time incidents and allows simple social interactions between users [5].

Despite these benefits, LBS applications on mobile devices can collect the locations and identities of users and learn their usage patterns [2] without the users’ knowledge. For example, Apple was alleged to regularly record the locations of iPhone and iPad users in a hidden file on their devices, raising serious security and privacy concerns [1]. Location information is also valuable for marketing and promotional purposes such as location-based advertising [2]. Another concern is the Sybil attack, in which multiple fake device nodes in an interconnected network impersonate genuine devices and trick users into sharing their personal details. Sybil attacks have been used by some organizations against reputation systems such as eBay and Google PageRank, and against LBS such as Waze, to obtain fake opinions [6]. Fake events, such as forged congestion that forces automatic rerouting of trips, can be created because the authentication mechanism [7] for users reporting real-time incidents is unreliable. Disinformation intended to influence users’ opinions or decisions is an act of deception [8]; fake reviews, whether generated through technical attacks such as Sybil or through malicious users manually adding fake events, have become a non-trivial issue. Because security and privacy threats are closely tied to trust, high trust in a technology means that users are comfortable accepting the trade-off between security and convenience that comes with adopting it. This research therefore explores the domain of trust further in order to mitigate the fake reviews/events problem in Internet of Things (IoT) applications such as LBS.

Trust management plays an important role in reliable data fusion and mining, qualified context-aware services, and enhanced user privacy and information security [9]. It helps people gain confidence when adopting LBS. What remains unknown is how trust affects a user’s perception of trustworthiness. Trust involves interactions between people; for example, e-commerce users are likely to gather information from users they trust before making decisions. Trust is uncountable, dynamic, propagative, non-transitive, composable, asymmetric and event-sensitive [10]. In theory, trust refers to the willingness of a party to take risks and to reduce doubt to the lowest level [11]. Distrust plays a crucial role in understanding trust. Its properties include being transitive, asymmetric, dynamic and subjective [12]. Some studies [12,13] have found that distrust is a negation of trust and can help improve trust management. Balance theory and status theory can help incorporate distrust to improve trust [12,14].

The motivation for this research was to explore the trustworthiness concept that an LBS application needs in order to enhance user acceptance of the technology. The current trust management model is single-faceted and not personalized [14]. Single-facet trust is not suitable for an IoT application, which is distributed by nature. A multi-faceted trust management model [11,15] is therefore worth exploring to tackle the IoT trustworthiness issue. Drawing on social theory, [12] investigated whether distrust adds value to trust and thus increases trust performance. In social theory, the two main theories in the study of trust are balance theory and status theory. In research on distrust, balance theory has been examined for its applicability, for user acceptance of the theory and for how it can be used in current IoT applications. The theory is tested further by adopting the matrix factorization technique. This paper addresses the integration of multi-facet trust and balance theory for IoT applications. Specifically, this paper makes the following contributions:

1.1. Integration of Multi-Facet Trust and Balance Theory in IoT

Most current IoT applications (e.g., social media, LBS) adopt single-facet trust. Given the current demand for securing IoT applications, the existing trust model needs to be enhanced with a multi-faceted model. Based on the findings of [12] on the weightage of trust attributes in an Online Social Network (OSN), a similar weightage can be adopted for an IoT application. Balance theory has been adopted widely in distributed computing environments such as OSN, but its adoption in sensor-enabled applications has not been tested or proven. This work applies the multi-facet model to the existing balance theory concept to enhance the trustworthiness of an IoT application such as LBS. In addition, the lack of authentication between users and service providers in current LBS applications has increased challenges such as fake reviews. By scoring trust values and adopting balance theory, this work proposes a new approach to reducing fake reviews among users.

1.2. Testing the Relationship Between Trust and Distrust for IoT Applications

Low trust is equivalent to high distrust and vice versa [13], and it has a negative impact on human relationships. However, because trust and distrust can co-exist, distrust can be incorporated into trust to improve the trust model [14]. Tang, Hu and Liu (2014) [12] showed that, in the social network domain, distrust is not the negation of trust but has a positive impact on the trustworthiness of social media. This research tests the relationship between trust and distrust in IoT applications. The remainder of the paper is organized as follows:

Section 2 is the literature review of existing LBS applications and trust models.

Section 3 is the detailed design of the proof of concept application, MiniLBS.

Section 4 includes the evaluation and discussion of MiniLBS. Finally, the conclusion and the future work of the current study will be presented.

3. Research Methodology

The research methodology is divided into five phases. In phase 1, the problems in current LBS, such as privacy and security challenges and incomplete trust management, are examined. Related work on the Internet of Things, location-based services and their privacy and security concerns, and existing trust management techniques is then identified. A literature review is important before implementing the proof-of-concept application because it helps to uncover potential problems inherent in a holistic solution. After the related work on LBS security and privacy issues has been studied, case studies are carried out on LBS applications currently available in application stores. The privacy settings of each application are discussed as well.

In phase 2, a prototype of an LBS application was created to accomplish the second research objective. The prototype is used to study the relationship between trust and distrust by implementing balance theory and a multi-faceted model. Different scenarios for testing balance theory must be run. The multi-faceted model is implemented in the prototype by having each user rate others on the eight trust attributes of belief, faith, honesty, competency, confidence, credibility, reliability, and reputation. The balance theory result is calculated by randomly selecting the comments of two users. A user selects whether he/she trusts each comment, and the balance theory result is then calculated and used in analyzing the data. In phase 3, a survey is used to validate the prototype as a proof of concept, because it is crucial to know whether users accept the selected trust models. This study also used interviews with a small group of users to test the proof of concept. This step is necessary because balance theory is related to an undirected signed triad relationship; users were therefore placed into groups of three to test the prototype and balance theory. The results related to the hypothesis are collected at this stage.

In phase 4, the results collected from all the questionnaire surveys are evaluated with statistical tools. The proof of concept was examined by considering user feedback and then analyzing the performance and effectiveness of the proposed trust management technique. In this stage, the results are discussed and benchmarked in support of Hypothesis 1. To answer Hypothesis 2, a suitable trust prediction algorithm was incorporated. The assumption was that if distrust is the negation of trust, then low trust can be used to predict distrust. Running the trust predictor algorithm produces results against which the hypothesis can be evaluated.

3.1. Experiment Design for H1

Based on the literature review, along with influences from Tang and Liu (2014) [14] and Heider’s (1946) [29] balance theory concept, a prototype was designed. The result is MiniLBS, a location-based service application with a trust rating feature and the balance theory concept. MiniLBS is an Android application with the functionality of a basic LBS application. As a location-based social networking application, it adopts the concept of a social networking site, including interactions between users.

3.2. Adoption of Multi-Faceted Model

MiniOSN is an online social network web page with a trust rating feature. Both MiniOSN and MiniLBS were influenced by the trust model of [34]. In MiniOSN, users can restrict their posts and photos to selected people based on the rating feature. This model helps a user express his/her subjective views on trust. MiniLBS modifies these ideas slightly. Because MiniLBS is a location-based application in which posting mainly consists of commenting on a route, a restriction on posts is unnecessary. However, the study did adopt the trust rating feature for friends. Unlike MiniOSN, which requires approval of a friend request, MiniLBS only requires a user to follow a friend, as on Instagram. Before a follow succeeds, the user must rate the other person on the eight trust attributes: belief, confidence, credibility, competency, faith, honesty, reliability and reputation. One objective of this prototype is to determine whether the trust model can be improved by incorporating distrust into trust. Knowing the trust value of a friend allows the relationship to be predicted from the trust level: the higher the trust value, the higher the trust level. The definitions of the eight trust attributes are shown in Table 2.

The rating for OSN friends is calculated from the eight trust attributes and recorded in a web-based application. Three levels of friendship are categorized according to the trust rating. Table 3 below illustrates how the range of the trust value determines the trust level of a friend.

Because the trust value ranges from 1 to 10, a borderline of 7.5 was chosen: a rating above 7.5 classifies a ‘best friend’, a rating below 7.5 an ‘average friend’, and a stranger is not rated. For example, if Z is the account owner and adds a friend Y, Z evaluates Y on the eight attributes. If the resulting rating is higher than 7.5, the name of Y changes to green. Because a user in MiniLBS can comment on a route, the color of each name shows the account owner’s relationship to the friends among the users who commented on that route. The trust score is calculated using Algorithm 1.

Algorithm 1: Calculate Trust Score

(Reputation, Confidence, Belief, Faith, Honesty, Credibility, Competency, Reliability)

Input: reputation (Vrep), confidence (Vcon), belief (Vbel), faith (Vfai), honesty (Vhon), credibility (Vcre), competency (Vcom), reliability (Vrel)

Output: Best Friend or Average Friend

1. TrustScore = (Vrep + Vcon + Vbel + Vfai + Vhon + Vcre + Vcom + Vrel) / 8

2. If TrustScore > 7.5

3. Return Best Friend

4. Else

5. Return Average Friend
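As an illustration only, Algorithm 1 can be sketched in Python, assuming (as the usability steps in Section 3.4 state) that the trust score is the arithmetic mean of the eight ratings and that a score above 7.5 marks a best friend. The names `trust_score` and `classify_friend` are hypothetical and are not taken from the MiniLBS code base.

```python
# Illustrative sketch of Algorithm 1; function names are hypothetical.
TRUST_ATTRIBUTES = (
    "reputation", "confidence", "belief", "faith",
    "honesty", "credibility", "competency", "reliability",
)
BEST_FRIEND_THRESHOLD = 7.5  # borderline stated in the text


def trust_score(ratings: dict[str, float]) -> float:
    """Return the mean of the eight attribute ratings (each in 1..10)."""
    for name in TRUST_ATTRIBUTES:
        if name not in ratings:
            raise ValueError(f"missing rating for {name!r}")
        if not 1 <= ratings[name] <= 10:
            raise ValueError(f"rating for {name!r} must be between 1 and 10")
    return sum(ratings[name] for name in TRUST_ATTRIBUTES) / len(TRUST_ATTRIBUTES)


def classify_friend(ratings: dict[str, float]) -> str:
    """Classify a rated friend; a score exactly at 7.5 is treated as average."""
    if trust_score(ratings) > BEST_FRIEND_THRESHOLD:
        return "Best Friend"
    return "Average Friend"
```

The text does not say how a score of exactly 7.5 is classified; the sketch treats it as an average friend because only scores "higher than 7.5" are described as best friends.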

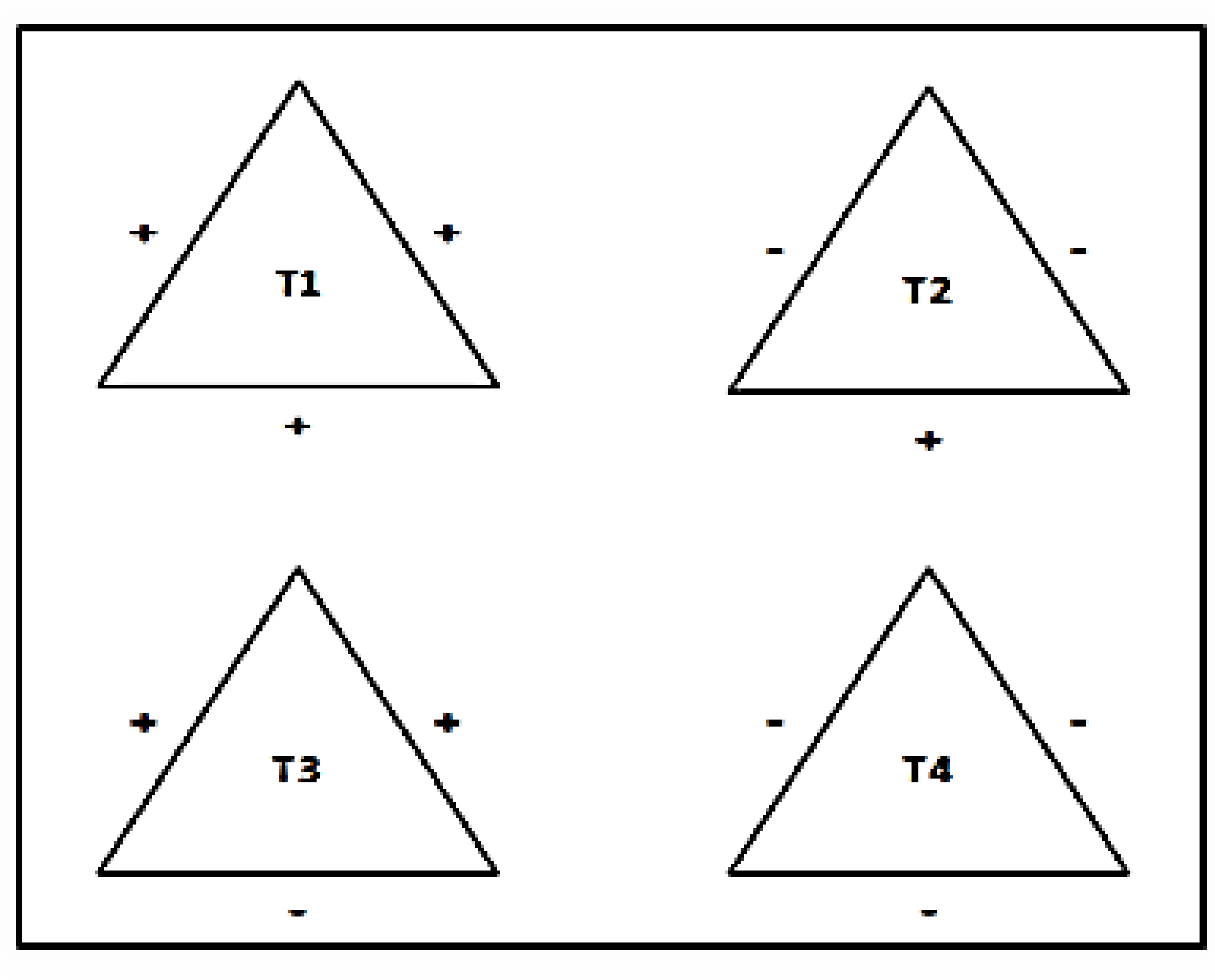

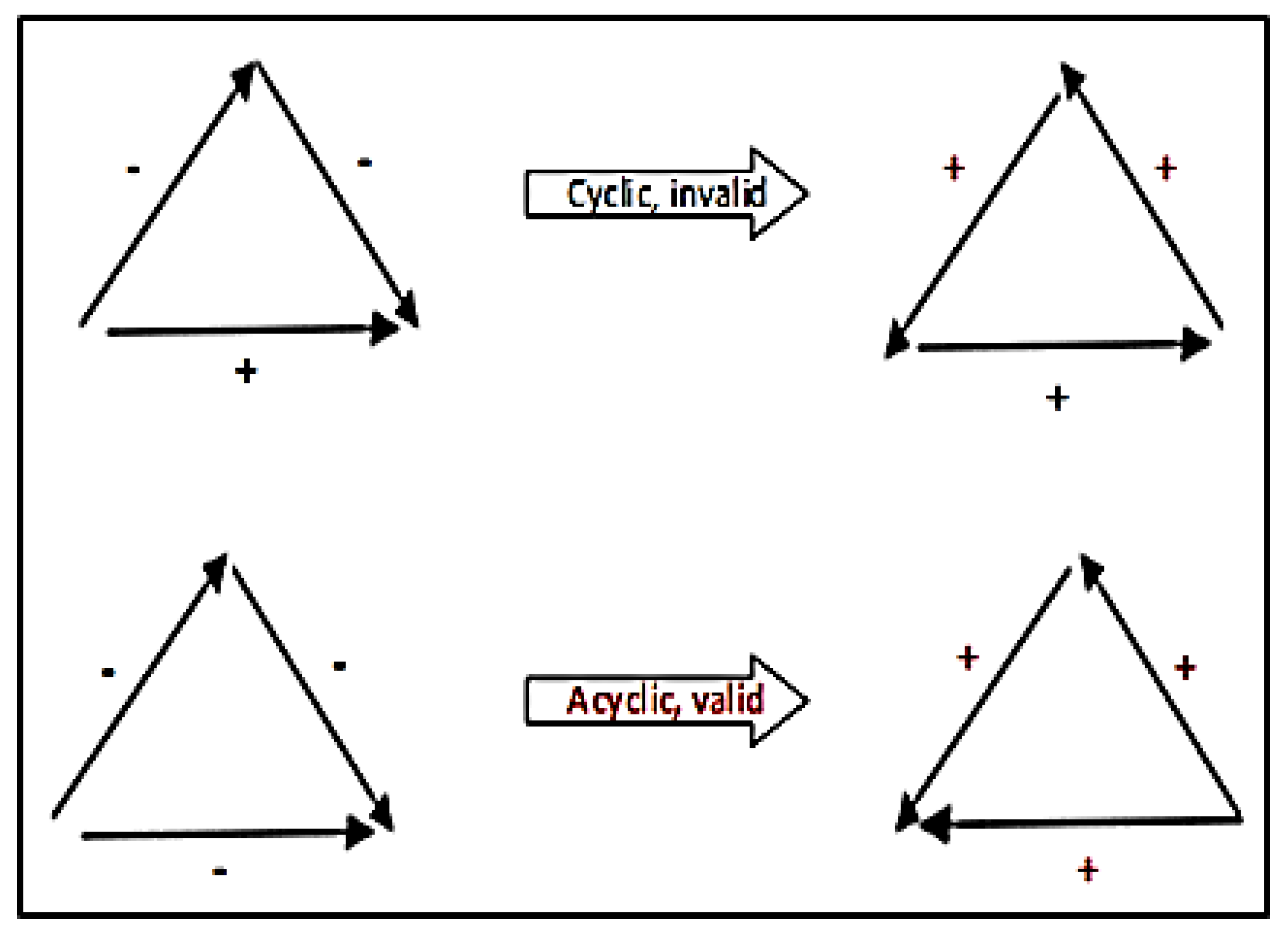

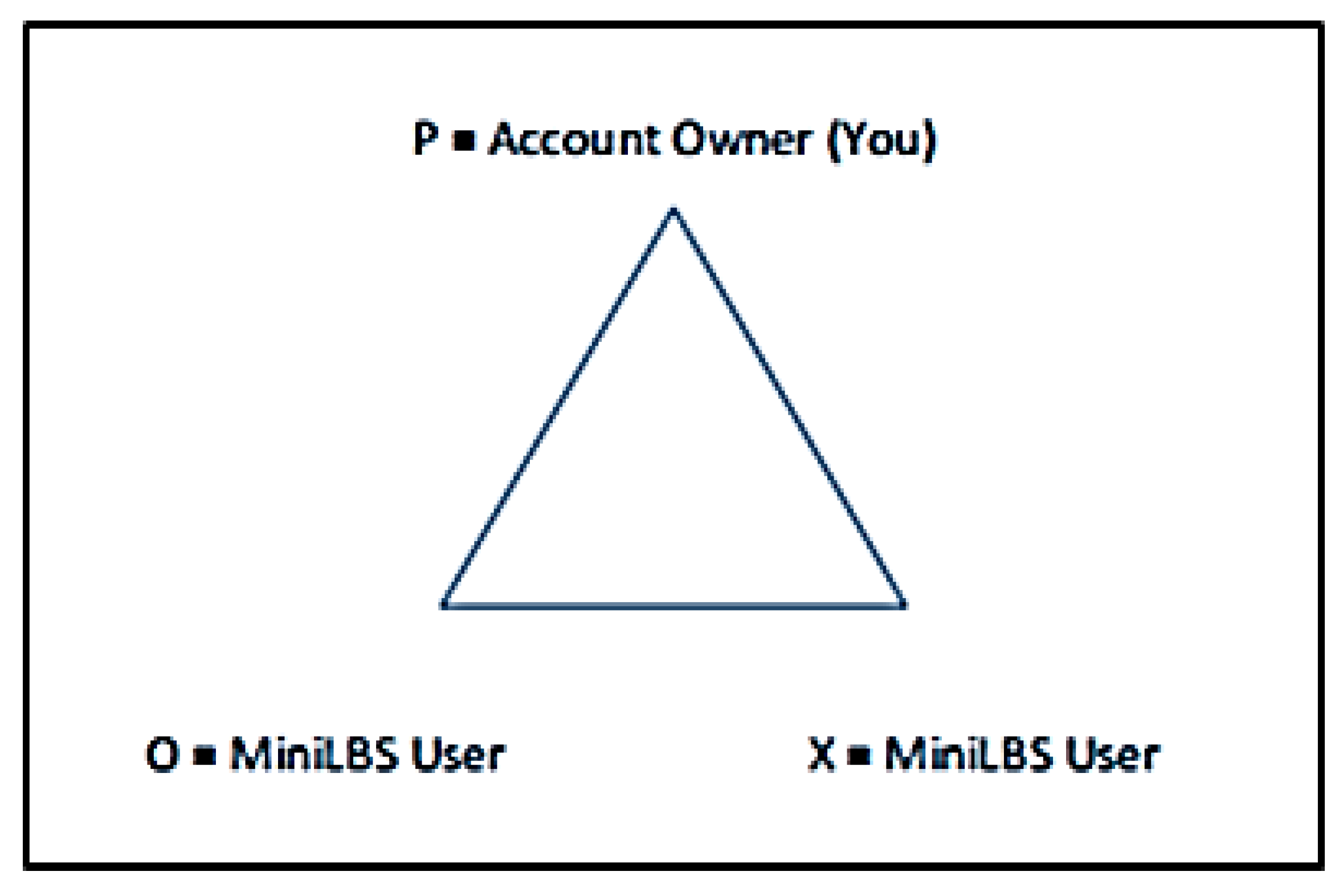

3.3. Adoption of Balance Theory

MiniLBS adopts balance theory, which explains that attitudes towards persons or objects can influence each other. Heider’s idea [29] was that people want to maintain psychological stability and therefore form relationships that balance their trust and distrust. He developed the P-O-X triangle to examine relationships among three parties. In MiniLBS, each vertex of the P-O-X triangle is a user or a route comment, as shown in Figure 3. When a user logs in to MiniLBS, he or she is denoted as P, the account owner. O and X are other MiniLBS users, each of whom can be a best friend, an average friend or a stranger to P. The relationship between O and X depends on the type of route comment, which can be positive, neutral or negative. In this research, only positive and negative comments are taken into consideration.

Algorithm 2 below illustrates how balance theory functions in MiniLBS. The algorithm has two main functions: the first calculates the balance theory result between the user and the commenters; the second obtains the user-commenter relationship by computing the trust value from the eight trust attributes.

Algorithm 2: Calculate Balance Theory Action in MiniLBS

(User Id, Comment Id1, First Action, Comment Id2, Second Action)

Input: userId, commentId1, firstAction, commentId2, secondAction

Output: Balance theory result among three users (userId, the author of comment 1 and the author of comment 2)

1. Retrieve comment information from database where id = commentId1

2. Calculate Commenter Relationship for comment 1

3.

4. Retrieve comment information from database where id = commentId2

5. Calculate Commenter Relationship for comment 2

6. If firstAction == ‘Like’

7. User agrees with comment 1. (+)

8. Else

9. User disagrees with comment 1. (−)

10. If secondAction == ‘Like’

11. User agrees with comment 2. (+)

12. Else

13. User disagrees with comment 2. (−)

14.

15. Save the relationship of the user with commenter 1 and commenter 2, together with the user’s action (like or dislike) on comments 1 and 2, as a Balance Theory Group.

16. If the user likes one of comment 1 or comment 2 and dislikes the other

17. Return Negative (−)

18. Else If the user likes both comments

19. Return Positive (+)

20. Else If the user dislikes both comments

21. Return Positive (+)
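The decision logic of Algorithm 2 can be sketched in Python as follows. This is a minimal illustration under stated assumptions: the database lookups of steps 1 to 5 are replaced by relationship labels passed in directly, and the names `action_sign`, `BalanceTheoryGroup` and `run_balance_theory` are hypothetical rather than taken from MiniLBS.

```python
# Illustrative sketch of Algorithm 2; database access is omitted on purpose.
from dataclasses import dataclass

LIKE = "Like"


def action_sign(action: str) -> int:
    """Map a user action to an edge sign: 'Like' is +1, anything else is -1."""
    return 1 if action == LIKE else -1


@dataclass(frozen=True)
class BalanceTheoryGroup:
    """The record saved in step 15: two relationships and two signed actions."""
    relationship_1: str  # e.g. "Best Friend", "Average Friend", "Stranger"
    relationship_2: str
    sign_1: int
    sign_2: int

    @property
    def result(self) -> str:
        # Steps 16 to 21: matching signs give Positive, mixed signs Negative.
        return "Positive" if self.sign_1 * self.sign_2 > 0 else "Negative"


def run_balance_theory(relationship_1: str, first_action: str,
                       relationship_2: str, second_action: str) -> BalanceTheoryGroup:
    """Build the Balance Theory Group for one user and two sampled comments."""
    return BalanceTheoryGroup(
        relationship_1, relationship_2,
        action_sign(first_action), action_sign(second_action),
    )
```

Multiplying the two signs reproduces the three branches of the pseudocode in one expression: like/like and dislike/dislike both yield a positive product, and a mixed pair yields a negative one.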

3.4. MiniLBS Application Usability Tasks

MiniLBS is an application that incorporates the multi-faceted model and the balance theory concept. In this section, the survey findings, as well as the survey discussions, are presented. Several steps are needed for participants to evaluate the prototype, MiniLBS. These are to:

Understand the LBS and eight trust attributes;

Register an account in MiniLBS;

Select three friends and rate them (multi-faceted model);

Calculate a trust score and classify friends;

Comment on one of the routes;

Run balance theory and calculate the result; and

Complete the user acceptance survey.

MiniLBS is designed to offer geo-location services: it provides a route to a selected location by using the GPS sensors. For experimental purposes, the selected location was simulated. Once the location is chosen, two environments are simulated: (1) a clear route (no traffic or other disturbance) and (2) a busy route (traffic or some construction). The steps for using MiniLBS are as follows:

The user registers an account and logs in. The user then selects three friends and evaluates each by giving a rating for each of the eight trust attributes. The rating scale for each attribute ranges from 1 (lowest) to 10 (highest). The system calculates the trust score by adding the ratings for all attributes and dividing the sum by 8. Each friend is then classified: a trust score above 7.5 indicates a best friend with high trust, while a score below 7.5 indicates a normal friend.

The user is required to select a route for each of the normal and disturbance conditions. Three routes are provided. Because the balance theory concept requires a collection of comments, the user needs to comment on the selected route based on his/her impression of it.

After that, the user runs the balance theory step. Two random comments from other users are selected, and the user adds either trust or distrust to each comment. The balance theory result is then calculated. Lastly, the participant completes a user acceptance survey.

The study was conducted over a two-month span from June to August 2017. During this period, 71 participants were invited to take part in the prototype evaluation. The participants comprised users both with and without IT backgrounds. Of the 71 participants, 60 were aged 20 to 25 and 11 were aged 25 to 30. MiniLBS presented two types of route conditions, smooth and disturbance, and each participant had to select a route under both conditions. The distance and time taken to reach the destination differed between routes. Table 4 below shows the selection of each route under the two conditions.

The triads and the users in them are selected randomly. The trial experiment was run three times and the average result was taken into account. Under normal conditions, route selection depended on distance: 35 users selected route 1 because it was the shortest path to the selected location X, and only 14 users selected route 3 because it took longer and covered a greater distance. Under the disturbance condition, route 1 had construction work, route 2 was normal and route 3 had an accident. As expected, route 2 was the most selected route. The route selection reflects how a user responds when something happens on a route: he/she tries to avoid a route that is in trouble. Among the 71 users, 142 balance theory triads were formed in total, 71 from the normal routes and another 71 from the disturbance routes.

Table 5 shows that after three trials, an average of 105 of the 142 triads (74%) achieved a balanced state in the balance theory while the rest did not. One hypothesis tested whether balance theory could be used in LBS, and the results indicate that user behavior supports the use of balance theory in LBS applications. Of the 105 balanced triads, 63 were positive triads, in which all three edges are positive. The remaining 42 were negative triads, which combine two negative edges and one positive edge. The ratio of positive to negative triads was therefore about 6:4. The test was run as a blind test, and the skew in this ratio arose because most comments provided on each route were positive, so the chance of drawing two positive comments was higher. When a user trusts the first comment, he/she tends to trust the second positive comment as well.
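The reported percentages can be checked with a few lines of Python. The sketch below assumes that the 74% figure refers to 105 balanced triads out of the 142 triads reported in the preceding paragraph (71 per condition); the variable names are illustrative.

```python
# Arithmetic check of the figures reported for Table 5 (assumed reading:
# 105 balanced triads out of 71 + 71 = 142 triads in total).
from fractions import Fraction

normal_triads = 71
disturbance_triads = 71
total_triads = normal_triads + disturbance_triads

balanced_triads = 105
positive_triads = 63   # three positive edges
negative_triads = 42   # two negative edges and one positive edge

balanced_share = balanced_triads / total_triads
positive_to_negative = Fraction(positive_triads, negative_triads)

print(f"balanced share: {balanced_share:.1%}")                    # 73.9%
print(f"positive : negative = {positive_to_negative.numerator}:"
      f"{positive_to_negative.denominator}")                      # 3:2
```

A ratio of 3:2 is the same as the 6:4 quoted in the text, and 73.9% rounds to the reported 74%.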

For each user, the system randomly selects two comments from different users who commented on the route, which yields three possible combinations. In the first, both commenters are friends of the current user. In the second, only one of the two is a friend. In the third, both are strangers. Each user is required to evaluate three friends during the initial stage of data collection, so the chance of drawing a friend’s comment is high at that stage, and users tend to trust their friends’ comments rather than those of a stranger. As the number of participants increases, the chance of drawing a friend’s comment falls because of the much larger number of comments.
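How quickly the chance of drawing a friend's comment falls can be illustrated with a small calculation. The sketch assumes, purely for illustration, that every other user has left exactly one comment on the route and that the two commenters are drawn uniformly at random without replacement; neither assumption is stated in the study, and the function name is hypothetical.

```python
# Probability of each commenter combination under a uniform-sampling assumption.
from fractions import Fraction
from math import comb


def combination_probabilities(n_commenters: int, n_friends: int = 3) -> dict[str, Fraction]:
    """Return P(both friends), P(one friend), P(both strangers) for two draws."""
    if n_commenters < 2 or not 0 <= n_friends <= n_commenters:
        raise ValueError("need at least two commenters and 0 <= friends <= commenters")
    n_strangers = n_commenters - n_friends
    total_pairs = comb(n_commenters, 2)
    return {
        "both_friends": Fraction(comb(n_friends, 2), total_pairs),
        "one_friend": Fraction(n_friends * n_strangers, total_pairs),
        "both_strangers": Fraction(comb(n_strangers, 2), total_pairs),
    }


if __name__ == "__main__":
    for n in (3, 10, 70):  # 70 = the other participants when 71 take part
        probs = combination_probabilities(n)
        print(n, {name: float(p) for name, p in probs.items()})
```

With only the three rated friends as commenters, both sampled comments necessarily come from friends; with 70 other commenters the same event has probability 3/2415, which is consistent with the observation that friends' comments become rare as participation grows.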

3.5. Results and Findings in Balance Theory

The balance theory concept applied in MiniLBS focuses on the relationships among observer, person, and object, each of which may be either positive or negative. In MiniLBS, the system generates two random comments after the user has selected a route, and the user chooses whether to trust or distrust each comment. The result is then calculated using the matrix factorization algorithm.

Table 6 below shows the summary of the results for balance theory concept.

First, users were asked whether they agreed with the ancient proverb ‘the friend of my friend is my friend, the enemy of my enemy is my friend’. Of the respondents, 72% agreed with this phrase because they thought that two opposing parties should work together against a common enemy. Because the respondents were mostly from Generation Y, they are influenced by the dramas and movies in their lives, and this proverb is widely used in films. Only 20 of them answered ‘No’, possibly because they thought that it was not applicable to their lives.

More than half of the users thought that the balance theory concept was suitable for use in LBS applications. One reason was that they had achieved a balanced state with other users when trying out the prototype. The experiment set up in the prototype was easy for them to use, and the adoption of balance theory did not complicate their use of an LBS application. Only 17 respondents did not agree that balance theory was suitable for use in an LBS application; their reason was that they could read all the comments on the route together, so selecting only two comments from among them seemed pointless.

Overall, many people remain positive about the presence of the balance theory concept in LBS applications. Hypothesis 1 of this research was supported by the result that 74% of the triads reached a balanced state. The balance theory concept comprises sentiment relations or unit relations. A balance theory triad is the relationship between oneself, another person and an object. Balance theory is therefore widely used in product placement in television shows and in consumer-brand relationships. If a person likes a celebrity and the celebrity likes a product, that person will tend to like the product more to achieve psychological balance. However, if a person already dislikes the product being endorsed by the celebrity, he/she may begin disliking the celebrity, again to achieve psychological balance. In an LBS application, by contrast, no consumer-brand relationships exist.

Fake reviews or opinions from users of an LBS application could also be tackled by adopting the multi-facet model. Because most fake reviews arise from the absence of an authentication mechanism between device users and the LBS service provider, the new model forces users not only to identify the other users of the model but also to evaluate them. Evaluating the other connected users within one LBS network ensures that only trusted opinions are taken into account. When balance theory is adopted to further support these evaluations and a triad of three users within one’s LBS connections is designed correctly, the task of classifying trustworthy and untrustworthy users becomes much more concrete.

In conclusion, more than half of the participants supported both the multi-faceted model and the balance theory implementation. This suggests that the model could be used to enhance trust in LBS technology and mitigate certain security risks such as fake reviews.

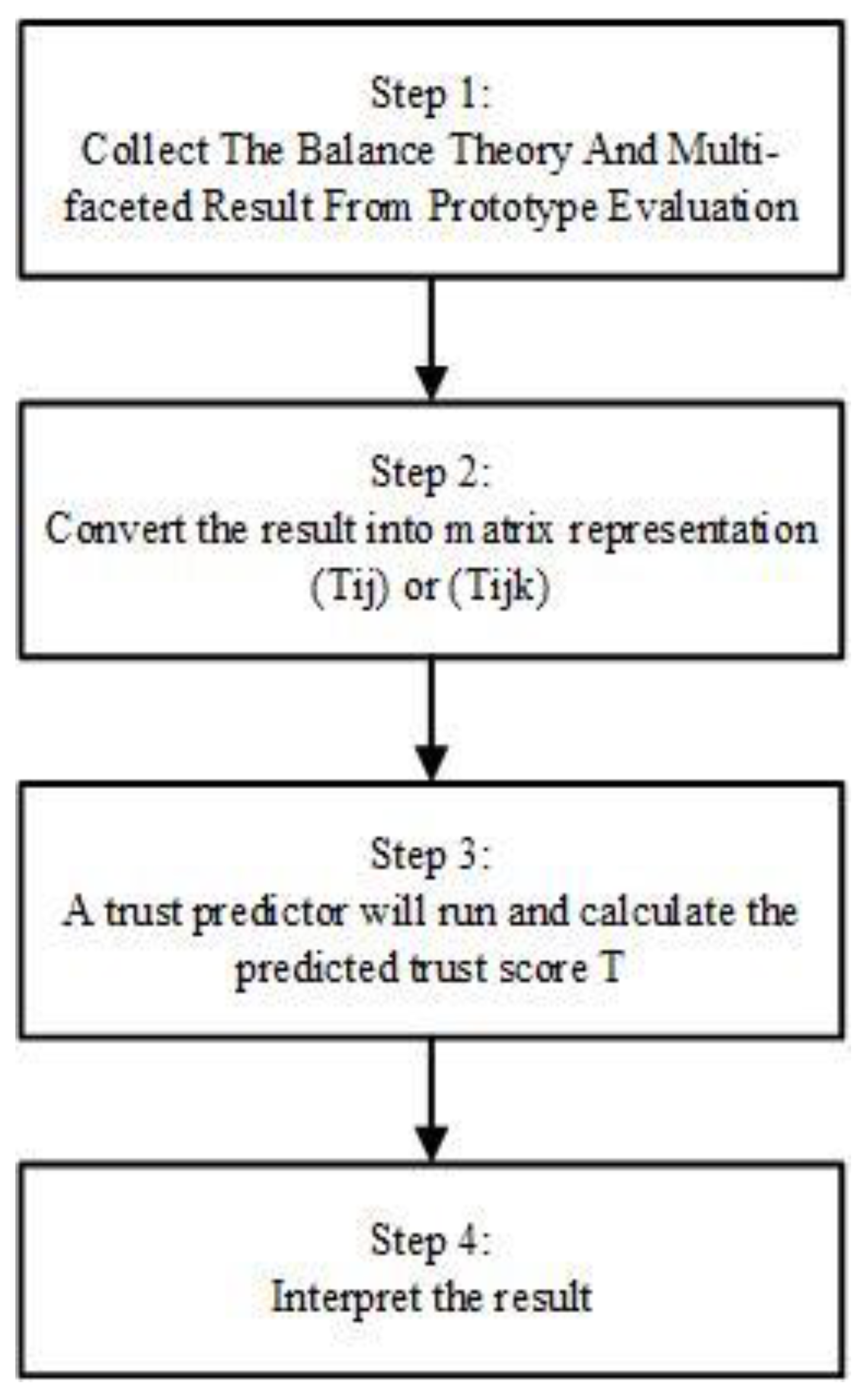

3.6. Experiment Design for H2

Figure 4 represents the steps taken to test Hypothesis 2. To test the second hypothesis, a framework was developed using the results collected from the prototype in Section 5. Another experiment was developed using trust propagation [31], as discussed earlier in Section 2. The following sets were used for this experiment.

The result has two parts: the balance theory result involving three users and the multi-faceted result between pairs of users. A 3 × 3 matrix is formed to represent a balance theory triangle, and a 2 × 2 matrix is formed to represent the relationship between two users. The latter is a one-to-one directed relationship, because user A trusting user B does not mean that user B trusts user A in the same way. However, its value is more precise than that of the balance theory concept. The relationship between two strangers is denoted as 0.5 instead of 0, which indicates low trust. The trust predictor adopted is the trust propagation algorithm [31], shown below as Algorithm 3.

| Algorithm 3: Trust Propagation Algorithm |

Input: Belief Matrix, B

Output: Propagated Trust Matrix, P

1. Define the belief matrix, B

2. Case 1: Trust Only

3. Case 2: One-Step Distrust

4. Case 3: Propagated Distrust

5. Calculate the atomic propagation, C by using B

6.

7. Let Ck be the matrix for trust propagation from user A to user B

8. The final matrix representation, F is calculated.

9. |

After the result from step 3 is obtained, the result will be interpreted by categorizing it as either trust or distrust based on the value.
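The equations for steps 6 and 9 of Algorithm 3 are not legible in the text above. The Python sketch below is therefore an assumption, not the authors' implementation: it follows the widely cited atomic-propagation scheme of Guha et al. (2004), whose three cases carry the same names as steps 2 to 4. In that scheme the combined operator is C = α1·B + α2·BᵀB + α3·Bᵀ + α4·BBᵀ and the final matrix is F = Σ γᵏ·P⁽ᵏ⁾. The weights `alpha`, the discount `gamma`, the number of `steps` and all function names are illustrative choices.

```python
# Hedged sketch of trust propagation in the style of Guha et al. (2004).
# All parameter values and names are assumptions made for illustration.
Matrix = list[list[float]]


def transpose(m: Matrix) -> Matrix:
    return [list(col) for col in zip(*m)]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    bt = transpose(b)
    return [[sum(x * y for x, y in zip(row, col)) for col in bt] for row in a]


def combine(a: Matrix, b: Matrix, wa: float = 1.0, wb: float = 1.0) -> Matrix:
    """Element-wise wa * a + wb * b."""
    return [[wa * x + wb * y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


def atomic_propagation(belief: Matrix, alpha: tuple[float, float, float, float]) -> Matrix:
    """C = a1*B + a2*B^T B + a3*B^T + a4*B B^T."""
    bt = transpose(belief)
    terms = (belief, matmul(bt, belief), bt, matmul(belief, bt))
    n = len(belief)
    c = [[0.0] * n for _ in range(n)]
    for weight, term in zip(alpha, terms):
        c = combine(c, term, 1.0, weight)
    return c


def propagate(trust: Matrix, distrust: Matrix, case: str, steps: int = 3,
              gamma: float = 0.9,
              alpha: tuple[float, float, float, float] = (0.4, 0.4, 0.1, 0.1)) -> Matrix:
    """Return F = sum_k gamma**k * P(k) for one of the three cases."""
    if case not in ("trust_only", "one_step_distrust", "propagated_distrust"):
        raise ValueError(f"unknown case: {case}")
    net = combine(trust, distrust, 1.0, -1.0)          # T - D
    belief = net if case == "propagated_distrust" else trust
    c = atomic_propagation(belief, alpha)
    n = len(trust)
    power = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    final = [[0.0] * n for _ in range(n)]
    for k in range(1, steps + 1):
        power = matmul(power, c)                        # C^k
        # One-step distrust applies (T - D) once after propagating trust.
        p_k = matmul(power, net) if case == "one_step_distrust" else power
        final = combine(final, p_k, 1.0, gamma ** k)
    return final
```

Each entry of the returned matrix can then be read as trust when it is positive and distrust when it is negative; the threshold actually used in the study is not stated, so that rule is also an assumption.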

5. Results and Findings

Based on the testing process for the above scenarios, two different results were obtained. Because each result was in matrix form, it was summarized by taking the average. The results are tabulated in the table below:

The results obtained from the experiment were nearly zero: the smallest value was 3.0253 × 10^−95 and the largest was only 4.967 × 10^−43. If distrust were the negation of trust, a low trust score should accurately indicate distrust. Values this small instead suggest that a low trust score cannot be used to predict distrust, and performance worsens as the percentage of users increases. A low trust score and distrust are two different cases. Hence, distrust is not the negation of trust.
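The logic of this test can be illustrated with a short sketch. The exact evaluation protocol is not spelled out here, so the following is one plausible reading rather than the procedure used in the study: the pairs with the lowest propagated trust scores are taken as predicted distrust, and the fraction of those predictions that coincide with known distrust relations is reported. The function names and the `fraction` parameter are hypothetical.

```python
# Hedged sketch: does "low trust" predict "distrust"? Names are illustrative.
from math import ceil

Pair = tuple[str, str]  # (truster, trustee)


def lowest_trust_pairs(trust_scores: dict[Pair, float], fraction: float) -> list[Pair]:
    """Return the given fraction of pairs with the lowest trust scores."""
    if not trust_scores:
        raise ValueError("trust_scores must not be empty")
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    ranked = sorted(trust_scores, key=lambda pair: trust_scores[pair])
    k = max(1, ceil(len(ranked) * fraction))
    return ranked[:k]


def distrust_hit_rate(trust_scores: dict[Pair, float],
                      distrust_pairs: set[Pair], fraction: float) -> float:
    """Share of the lowest-trust pairs that are actual distrust relations."""
    predicted = lowest_trust_pairs(trust_scores, fraction)
    hits = sum(1 for pair in predicted if pair in distrust_pairs)
    return hits / len(predicted)
```

If distrust were simply the negation of trust, the hit rate would be expected to be high for small fractions; a hit rate near zero points the other way.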

5.1. Findings and Discussions

Social scientists who support distrust as a new dimension of trust argue that pairs of users with distrust have a very low trust score [13,26]. This phenomenon is especially true of social media data, because the users of social media are distributed worldwide and many pairs of users in the trust network do not know each other. In the context of location-based services, a similar result may occur. However, in this research, the adoption of a multi-faceted trust framework together with balance theory enhances the overall trust score, because first scoring each user on specific attributes contributes to a better adoption of balance theory.

Hypothesis 1 (H1): The balance theory within distrust in trust can enhance trust management model in location-based services (LBS).

However, the performance of balance theory is better than that of the multi-faceted model; this is because the values used in balance theory are imprecise. We used 0 or 1 to represent trust and distrust. Balance theory without an actual trust value is less accurate than the multi-faceted model, which has the real trust score rated by users. The bigger the value, the more precise the result. [12], who applied the algorithm to social networking sites (SNS), achieved a better result because the dataset used was bigger, with a greater number of trust nodes and distrust nodes. Using a larger dataset can therefore improve the result. Overall, both H1 and H2 were tested. The findings support Hypothesis 1, namely that balance theory within distrust in trust can enhance the trust management model in LBS. For Hypothesis 2, in contrast, the findings suggest that distrust is not a negation of trust.

5.2. Theoretical and Practical Applications

The main findings of this work suggest that social theories used to validate distrust models are applicable to IoT applications such as messaging and location-based services. On its own, balance theory is incapable of enhancing trust in an application; when it is merged with a multi-faceted model, however, overall trust can be enhanced. This indicates that the multi-facet trust model is applicable to IoT applications and that theories such as balance theory have a positive impact on this kind of model. A multi-faceted model must be carefully designed to fit IoT applications, and the eight attributes used here are applicable to IoT-based applications. However, this model was tested only on computers and smartphones, without considering the different architectures that the two employ. Overall, the findings of this study indicate that balance theory must be merged with a multi-facet model to achieve its full potential in terms of high trust ratings. Second, this study demonstrates that distrust is not a negation of trust in LBS applications, which is consistent with the results of [12]. Thus, in an IoT-based application, trust and distrust must be handled as separate representations.

In practical terms, a social theory such as balance theory, which is used in distrust models and focuses on social relationships such as friend/stranger links, has a positive impact on location-based applications. The acceptance of balance theory becomes stronger when a multi-faceted model is applied before the application is used. The combination of the trust model and balance theory thus enhances users’ trust in adopting applications that are distributed and used in different user contexts. However, even though balance theory combined with a multi-faceted model enhanced trustworthiness, the negation between trust and distrust was not established. In brief, if a user has low trust in an LBS application, in its other users or in the feedback obtained from using it, neither balance theory nor the multi-faceted model contributes significantly to representing distrust. Thus, trust is not a negation of distrust.

5.3. Limitations of the Study

There are some limitations that need to be considered with respect to this study. They are:

First, the dataset collected is much smaller than those used in [14,31]. Previous studies used bigger datasets, with 12,353 users, 322,044 trust relations and 41,253 distrust relations for evaluation. The size of the dataset affects the results obtained, especially in the matrix factorization steps.

Second, the trust representation in previous work is a huge interconnected and distributed network, whereas in this study user-to-user relationships are represented as balance theory triads. Third, this study has two scenarios of matrix representation, balance theory and the multi-faceted model, which might cause differences in the results.