Use of 3-Dimensional Videography as a Non-Lethal Way to Improve Visual Insect Sampling

Abstract

1. Introduction

2. Materials and Methods

2.1. Study Area

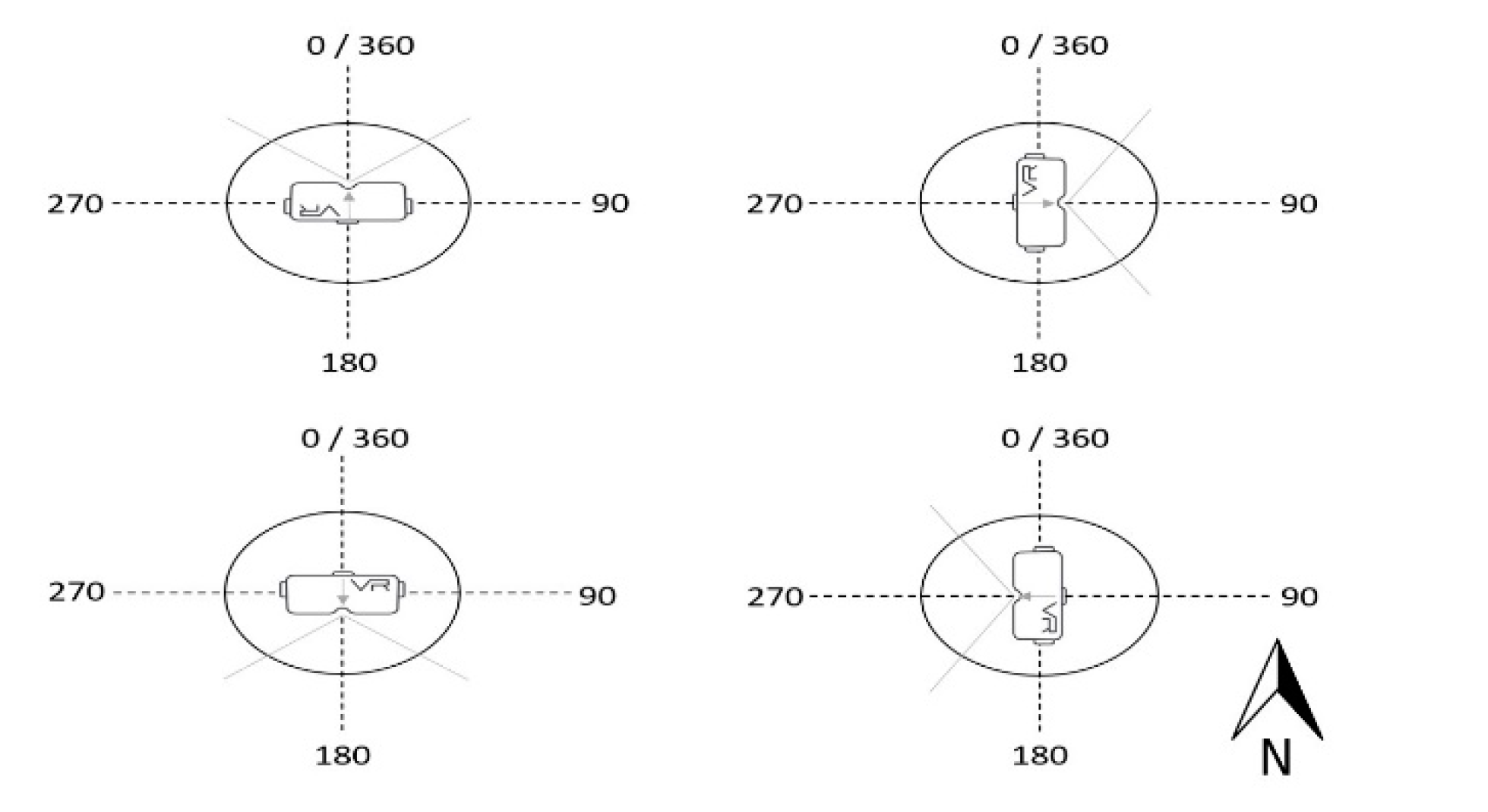

2.2. Use of 3D Camera

2.3. Video Processing

2.4. Observer Trials with Virtual Reality Headset

3. Results

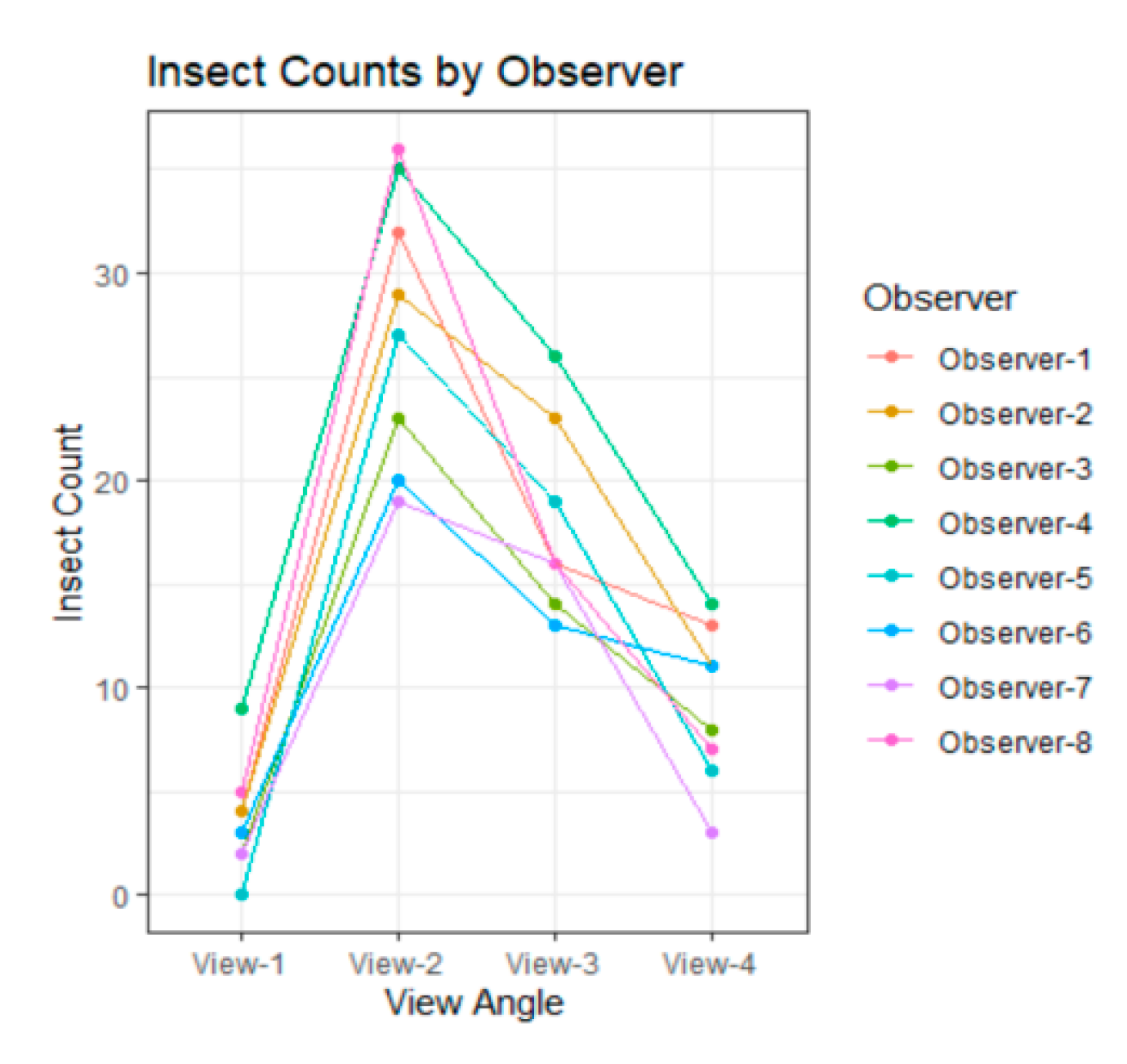

3.1. Variability among Observers

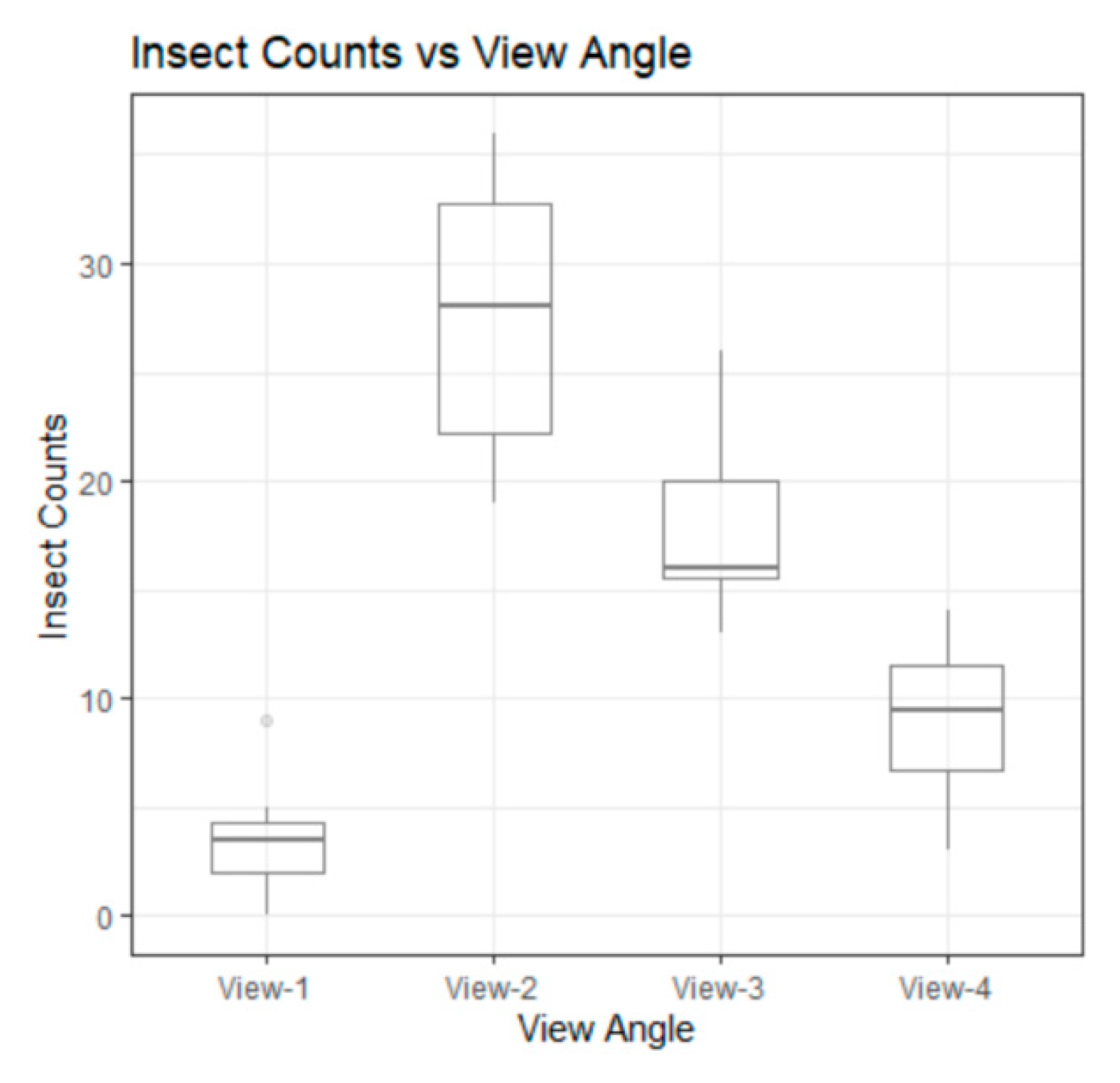

3.2. Difference among Views

4. Discussion

5. Conclusions and Future Research

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

| Statistic | View 1 | View 2 | View 3 | View 4 |
|---|---|---|---|---|
| Mean, 95% C.I. | 3.625, [2.30, 4.94] | 27.625, [23.98, 31.27] | 17.875, [14.95, 20.80] | 9.125, [7.03, 11.22] |
| Std. deviation | 2.669 | 6.545 | 4.568 | 3.758 |
| CV | 0.736 | 0.237 | 0.253 | 0.411 |
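The CV row can be checked against the mean and standard deviation rows, since the coefficient of variation is the standard deviation divided by the mean. The following is a minimal sketch of that check, not the authors' analysis code: the names `view_stats`, `coefficient_of_variation`, and `cv_by_view` are hypothetical, and the input values are copied from the table. Because the tabulated means and standard deviations are themselves rounded, the recomputed values may differ from the published CV row in the third decimal place.

```python
# Illustrative sketch only: function and variable names are assumptions,
# and the numbers are the rounded values reported in the table.

def coefficient_of_variation(mean: float, std_dev: float) -> float:
    """Return the coefficient of variation (standard deviation / mean)."""
    if mean == 0:
        raise ValueError("CV is undefined when the mean is zero")
    return std_dev / mean

# (mean, standard deviation) of observer counts for each view.
view_stats = {
    "View 1": (3.625, 2.669),
    "View 2": (27.625, 6.545),
    "View 3": (17.875, 4.568),
    "View 4": (9.125, 3.758),
}

# Recompute the CV for every view from the tabulated mean and SD.
cv_by_view = {
    view: coefficient_of_variation(mean, std_dev)
    for view, (mean, std_dev) in view_stats.items()
}

for view, cv in cv_by_view.items():
    print(f"{view}: CV = {cv:.3f}")
```

Read this way, View 1 shows the largest variability relative to its mean, while Views 2 and 3 show the smallest.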

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Curran, M.F.; Summerfield, K.; Alexander, E.-J.; Lanning, S.G.; Schwyter, A.R.; Torres, M.L.; Schell, S.; Vaughan, K.; Robinson, T.J.; Smith, D.I. Use of 3-Dimensional Videography as a Non-Lethal Way to Improve Visual Insect Sampling. Land 2020, 9, 340. https://doi.org/10.3390/land9100340
