IDN-MOTSCC: Integration of Deep Neural Network with Hybrid Meta-Heuristic Model for Multi-Objective Task Scheduling in Cloud Computing

Abstract

1. Introduction

- To implement an optimal task scheduling model in which a hybrid meta-heuristic algorithm is combined with a DNN to achieve multi-objective optimization in a cloud environment.

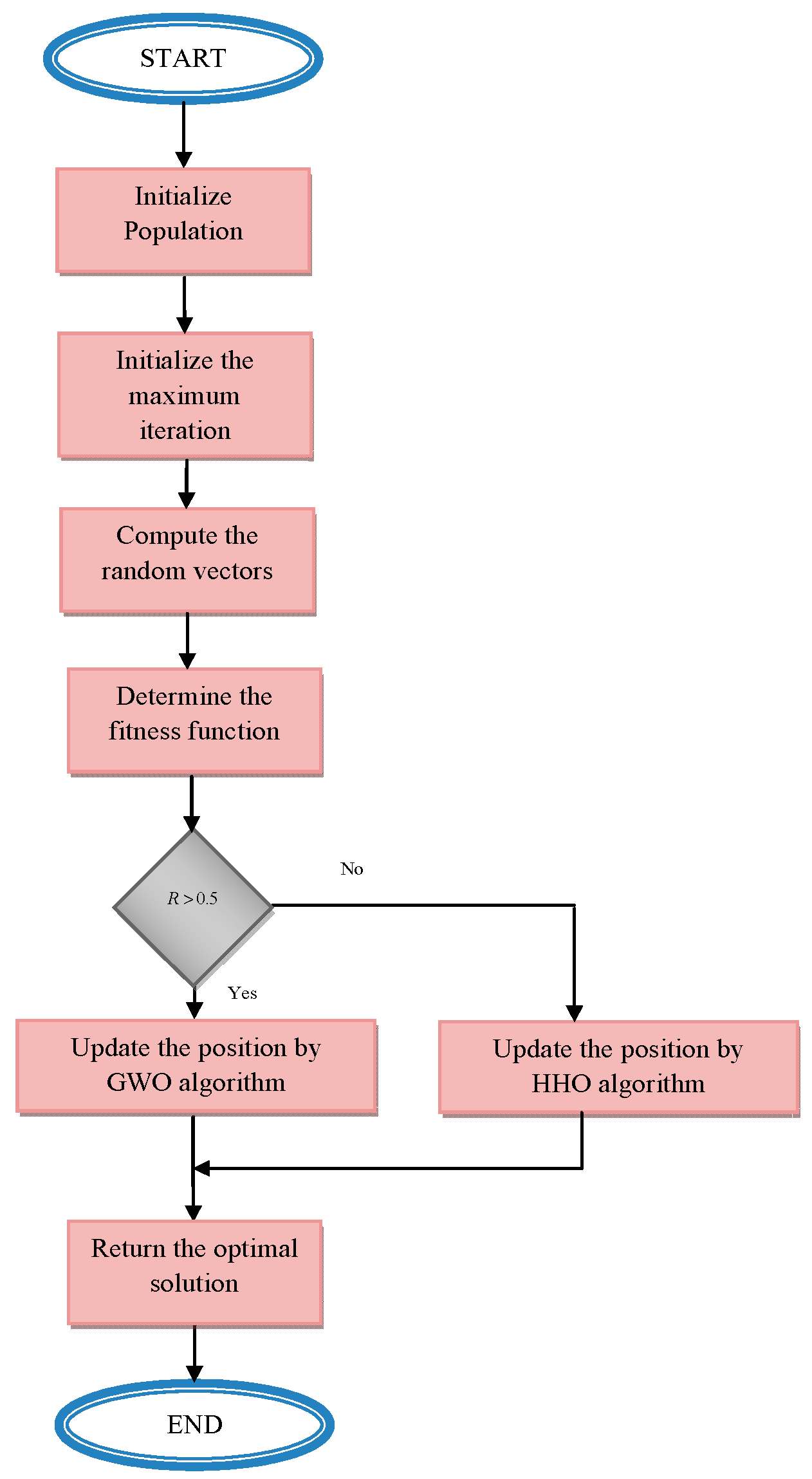

- To develop a hybrid meta-heuristic algorithm named IGW-HHO, which integrates the existing GWO algorithm with HHO. It is used to find optimal solutions that improve scheduling performance and to compute the objective function.

- To develop an optimized DNN-based scheduling network in which the number of hidden neurons is tuned by the proposed IGW-HHO algorithm. It is used to obtain the best solution and to reduce offloading and overfitting problems while scheduling tasks to VMs.

- To derive an objective function for task scheduling that focuses on minimizing the makespan and reducing the processing time for task allocation.

- To analyze the performance using different error metrics and to carry out a comparative convergence analysis against existing optimization algorithms, which shows lower error in optimal task scheduling.
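
The makespan and processing-time objective named in the contributions above can be illustrated with a small sketch. It assumes task lengths in million instructions (MI), VM speeds in MIPS, and a weighted sum of the two terms; the function names, the units, and the weight `w_makespan` are illustrative assumptions, not the objective function derived in Section 5.2.

```python
from typing import List, Sequence


def vm_busy_times(task_lengths: Sequence[float],
                  vm_speeds: Sequence[float],
                  assignment: Sequence[int]) -> List[float]:
    """Total execution time accumulated on each VM.

    assignment[i] is the index of the VM that runs task i.
    """
    busy = [0.0] * len(vm_speeds)
    for task, vm in enumerate(assignment):
        busy[vm] += task_lengths[task] / vm_speeds[vm]
    return busy


def makespan(task_lengths, vm_speeds, assignment) -> float:
    """Finish time of the most heavily loaded VM."""
    return max(vm_busy_times(task_lengths, vm_speeds, assignment))


def total_processing_time(task_lengths, vm_speeds, assignment) -> float:
    """Sum of the execution times of all tasks."""
    return sum(vm_busy_times(task_lengths, vm_speeds, assignment))


def weighted_objective(task_lengths, vm_speeds, assignment,
                       w_makespan: float = 0.5) -> float:
    """Hypothetical scalarization: lower is better (assumed form)."""
    return (w_makespan * makespan(task_lengths, vm_speeds, assignment)
            + (1.0 - w_makespan)
            * total_processing_time(task_lengths, vm_speeds, assignment))


# Four tasks scheduled on two VMs.
lengths = [400, 200, 600, 100]   # MI (assumed unit)
speeds = [100, 200]              # MIPS (assumed unit)
plan = [0, 1, 1, 0]              # task index -> VM index

print(makespan(lengths, speeds, plan))               # 5.0
print(total_processing_time(lengths, speeds, plan))  # 9.0
print(weighted_objective(lengths, speeds, plan))     # 7.0
```

A meta-heuristic such as IGW-HHO would search over `plan` (or over the parameters of a network that produces it) to drive this value down.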

2. Literature Review

2.1. Related Works

2.2. Research Gaps and Challenges

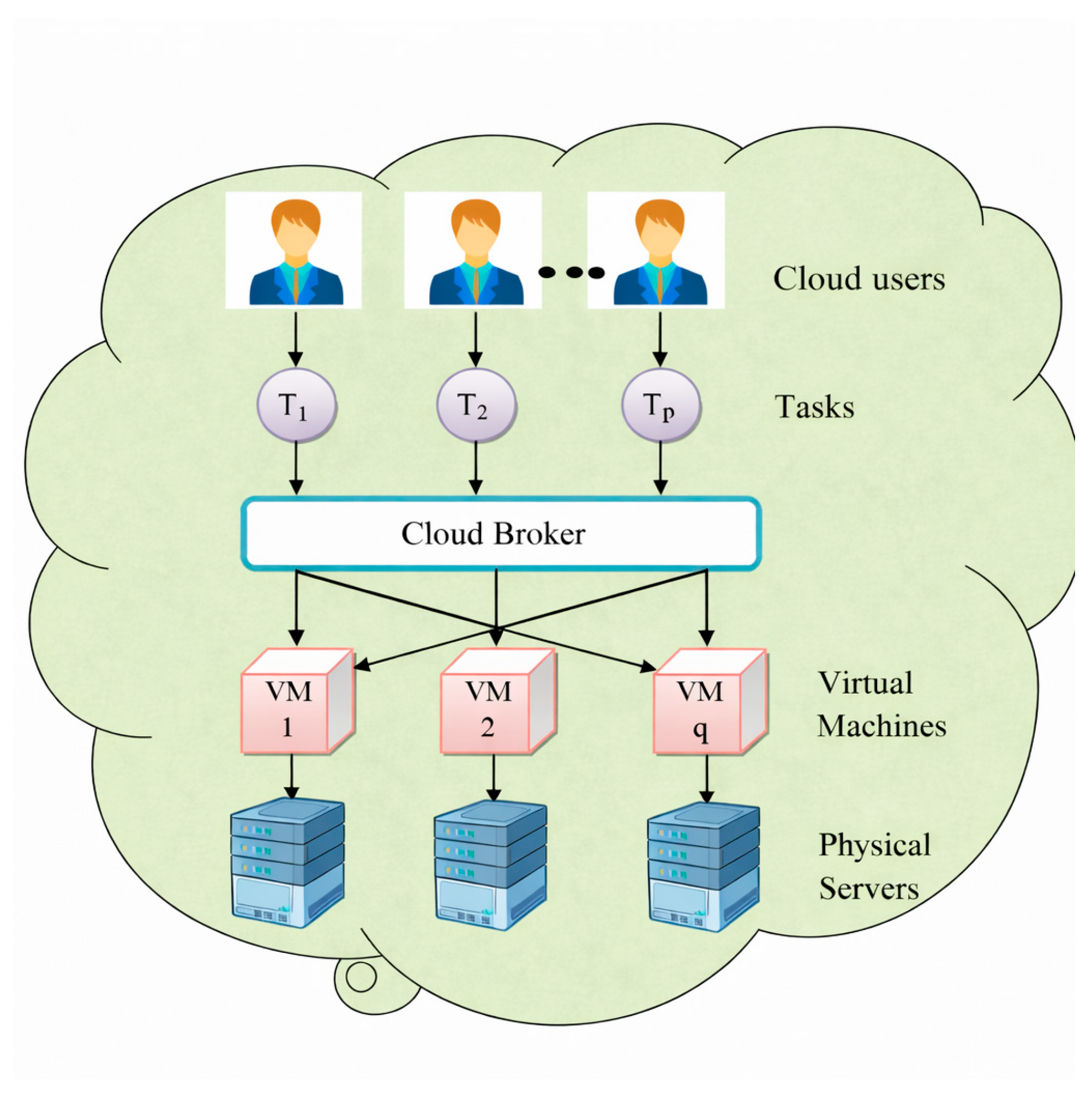

3. Task Scheduling Problem in Cloud Computing: Solution Based on Soft Computing and Deep Learning

3.1. Task Scheduling in Cloud Computing

- Offers services on the basis of request and response.

- Ease of accessing a wide-area network.

- Resource utilization of multiple clients or tenants.

- Increasing the reliability, elasticity, and scalability.

- Monitors the provision of services.

- Provides better QoS services.

- Manages CPU and memory.

- Standard scheduling algorithms increase resource utilization while reducing task processing time.

- Enhances performance by performing all the tasks.

- Suitable for real-time applications.

- Attains high throughput.

- Balances workload issues.

3.2. Proposed Task Scheduling Model

3.3. Problem Formulation

4. Solution Generated by Optimized Deep Neural Network for Optimal Task Scheduling

4.1. DNN Model

- (a) Irrelevant data are deduced and optimized to acquire the best outcome.

- (b) Evades time consumption complexity.

- (c) Enhances the robustness of the system.

- (d) Used for many applications and has an adaptable nature.

4.2. Optimized DNN Model for Solution Generation

5. Development of Hybrid Meta-Heuristic Algorithm for Task Scheduling in Cloud Computing

5.1. Proposed Optimization

| Algorithm 1: Proposed IGW-HHO algorithm |
|---|
| Initialize number of population, maximum iteration |
| Compute the random vectors, and |
| Determine the objective function |
| For () |
| Determining the fitness function for solutions as |
| If |
| Solution update by GWO |
| Search agents are alpha, beta, and gamma |
| Calculate the fitness of each agent |
| Update the position of each search agent using Equation (9) |
| Update the position for alpha, beta, and gamma wolves |
| Else |
| Solution update by HHO |
| Assume four different age groups for horses |
| Update the position vector using Equation (16) |
| Using Equation (17), the velocity is computed |
| End if |
| Obtain the optimal solution |
| End for |
| Return the best solution |
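
The GWO branch of Algorithm 1 can be sketched with the standard Grey Wolf Optimizer position update from Mirjalili et al., in which every wolf moves toward the average of three positions proposed by the three best wolves (the alpha, beta, and gamma agents of Algorithm 1). Whether this matches Equation (9) term by term is an assumption. The HHO branch and the condition that switches between the two branches are not reproduced, because they are not specified in the listing. The function names, bounds handling, and default parameters are illustrative.

```python
import random


def gwo_step(positions, fitness, a, rng):
    """One standard GWO position update for a minimization problem."""
    # The three best wolves lead the pack.
    leaders = sorted(positions, key=fitness)[:3]
    updated = []
    for wolf in positions:
        new_wolf = []
        for d, x in enumerate(wolf):
            pulls = []
            for leader in leaders:
                A = 2.0 * a * rng.random() - a      # exploration/exploitation coefficient
                C = 2.0 * rng.random()              # random emphasis on the leader
                distance = abs(C * leader[d] - x)
                pulls.append(leader[d] - A * distance)
            new_wolf.append(sum(pulls) / len(pulls))
        updated.append(new_wolf)
    return updated


def gwo_minimize(fitness, dim, bounds, n_wolves=20, max_iter=100, seed=0):
    """Run GWO and return the best position found."""
    rng = random.Random(seed)
    lo, hi = bounds
    positions = [[rng.uniform(lo, hi) for _ in range(dim)]
                 for _ in range(n_wolves)]
    best = min(positions, key=fitness)
    for t in range(max_iter):
        a = 2.0 - 2.0 * t / max_iter                # decreases linearly from 2 toward 0
        moved = gwo_step(positions, fitness, a, rng)
        # Clamp every coordinate back into the search bounds.
        positions = [[min(max(v, lo), hi) for v in p] for p in moved]
        candidate = min(positions, key=fitness)
        if fitness(candidate) < fitness(best):
            best = candidate
    return best


def sphere(p):
    """Toy objective with its minimum at the origin."""
    return sum(v * v for v in p)


best = gwo_minimize(sphere, dim=3, bounds=(-10.0, 10.0))
print(best, sphere(best))
```

In a hybrid scheme, `gwo_step` would be one of two interchangeable update functions chosen per iteration; the toy `sphere` objective stands in for the scheduling fitness.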

5.2. Derived Objective Function for Optimal Task Scheduling

6. Results and Analysis

6.1. Experimental Setup

6.2. Performance Metrics

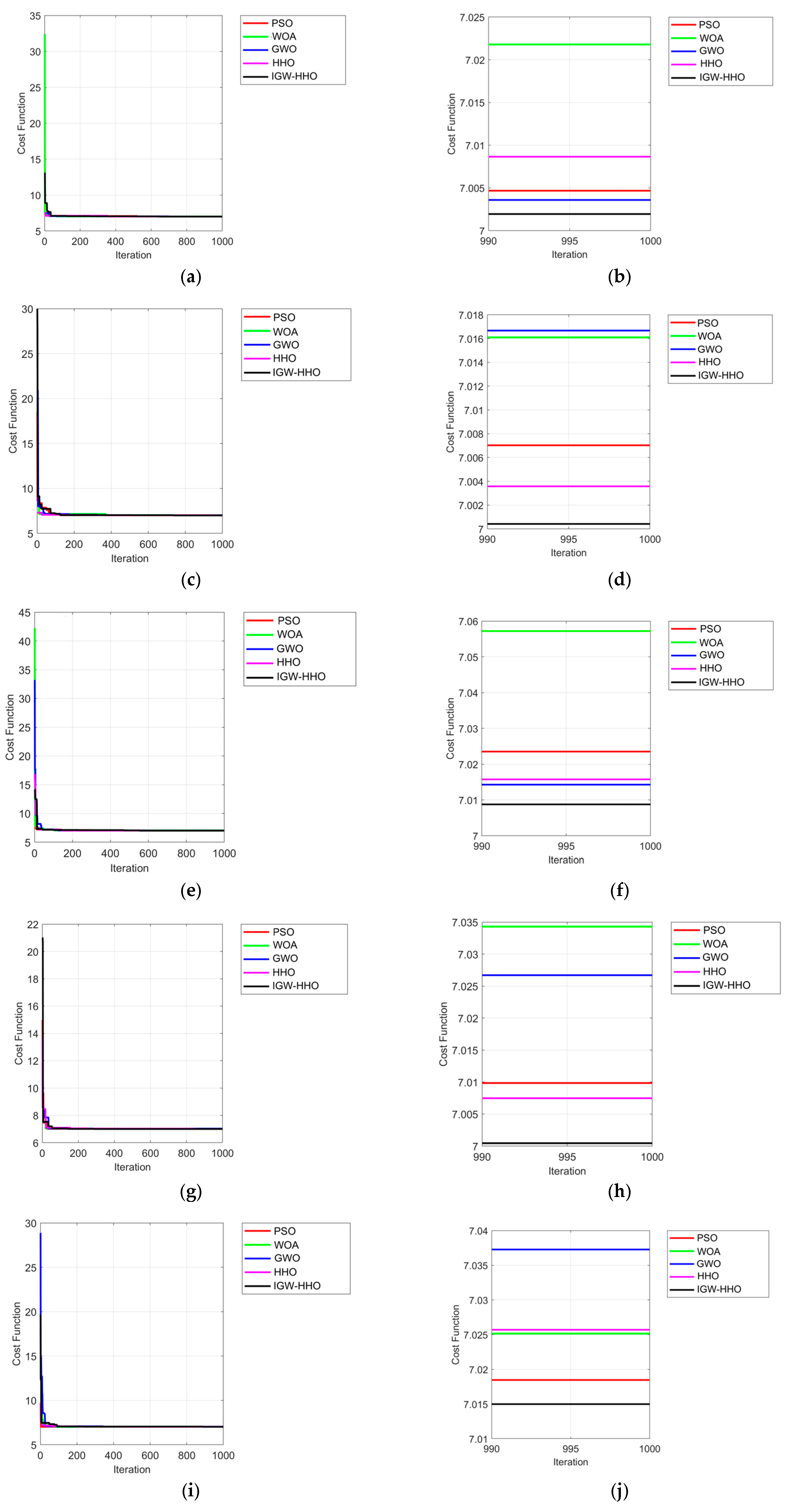
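
Several of the error terms reported in the comparison tables (MAE, RMSE, SMAPE, and the L1, L2, and L-infinity norms) have standard definitions, sketched below. The SMAPE form used here (mean of 2|e| / (|actual| + |predicted|), as a fraction) is an assumption, since several variants exist; MEP and MASE are left out because their definitions are not given here. The function name `error_metrics` is illustrative.

```python
import math


def error_metrics(actual, predicted):
    """Standard error measures between two equal-length sequences."""
    if len(actual) != len(predicted) or not actual:
        raise ValueError("inputs must be non-empty and of equal length")
    n = len(actual)
    errors = [p - a for a, p in zip(actual, predicted)]
    abs_errors = [abs(e) for e in errors]

    l1 = sum(abs_errors)                          # L1 norm of the error vector
    l2 = math.sqrt(sum(e * e for e in errors))    # L2 norm of the error vector
    l_inf = max(abs_errors)                       # largest single error

    # SMAPE variant (assumed): terms with a zero denominator contribute 0.
    smape_terms = []
    for a, p in zip(actual, predicted):
        denom = abs(a) + abs(p)
        smape_terms.append(0.0 if denom == 0 else 2.0 * abs(p - a) / denom)

    return {
        "MAE": l1 / n,
        "RMSE": l2 / math.sqrt(n),
        "SMAPE": sum(smape_terms) / n,
        "L1-NORM": l1,
        "L2-NORM": l2,
        "L-INF-NORM": l_inf,
    }


metrics = error_metrics([10, 20, 30, 40], [12, 18, 33, 40])
for name, value in metrics.items():
    print(f"{name}: {value:.4f}")
```

Under these definitions the L1 norm is n times the MAE and the L2 norm is sqrt(n) times the RMSE. The PSO column for Scenario 1, with MAE 505.6 and L1-NORM 5056, is consistent with ten samples.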

6.3. Convergence Analysis on Task Variation over Heuristic Algorithms

6.4. Convergence Analysis on VM Variation over Heuristic Algorithms

6.5. Overall Analysis of Task Variation over Heuristic Algorithms

6.6. Overall Analysis of VM Variation over Heuristic Algorithms

6.7. Statistical Analysis of Task Variation over Heuristic Algorithms

6.8. Statistical Analysis of VM Variation over Heuristic Algorithms

7. Conclusions and Future Direction

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Panda, S.K.; Jana, P.K. Efficient task scheduling algorithms for heterogeneous multi-cloud environment. J. Supercomput. 2015, 71, 1505–1533.

- Munir, E.U.; Li, J.; Shi, S. QoS sufferage heuristic for independent task scheduling in grid. Inf. Technol. J. 2007, 6, 1166–1170.

- Kumar, A.; Kumar, M.; Mahapatra, R.P.; Bhattacharya, P.; Le, T.T.H.; Verma, S.; Kavita; Mohiuddin, K. Flamingo-Optimization-Based Deep Convolutional Neural Network for IoT-Based Arrhythmia Classification. Sensors 2023, 23, 4353.

- Kaur, K.; Verma, S.; Bansal, A. IoT big data analytics in healthcare: Benefits and challenges. In Proceedings of the 6th International Conference on Signal Processing, Computing and Control (ISPCC), Waknaghat, India, 7–9 October 2021; IEEE: New York, NY, USA, 2021; pp. 176–181.

- He, X.; Sun, X.; Von Laszewski, G. QoS guided min-min heuristic for grid task scheduling. J. Comput. Sci. Technol. 2003, 18, 442–451.

- Duan, K.; Fong, S.; Siu, S.W.; Song, W.; Guan, S.S.U. Adaptive incremental genetic algorithm for task scheduling in cloud environments. Symmetry 2018, 10, 168.

- Milan, S.T.; Rajabion, L.; Darwesh, A.; Hosseinzadeh, M.; Navimipour, N.J. Priority-based task scheduling method over cloudlet using a swarm intelligence algorithm. Clust. Comput. 2020, 23, 663–671.

- Topcuoglu, H.; Hariri, S.; Wu, M.Y. Performance-effective and low-complexity task scheduling for heterogeneous computing. IEEE Trans. Parallel Distrib. Syst. 2002, 13, 260–274.

- Kfatheen, S.V.; Marimuthu, A. ETS: An efficient task scheduling algorithm for grid computing. Adv. Comput. Sci. Technol. 2017, 10, 2911–2925.

- Zhang, Y.; Xu, B. Task scheduling algorithm based-on QoS constrains in cloud computing. Int. J. Grid Distrib. Comput. 2015, 8, 269–280.

- Sinnen, O.; Sousa, L.A. Communication contention in task scheduling. IEEE Trans. Parallel Distrib. Syst. 2005, 16, 503–515.

- Jang, S.H.; Kim, T.Y.; Kim, J.K.; Lee, J.S. The study of genetic algorithm-based task scheduling for cloud computing. Int. J. Control Autom. 2012, 5, 157–162.

- Agarwal, M.; Srivastava, G.M.S. A PSO algorithm based task scheduling in cloud computing. Int. J. Appl. Metaheuristic Comput. 2019, 10, 1–17.

- Baital, K.; Chakrabarti, A. Dynamic scheduling of real-time tasks in heterogeneous multicore systems. IEEE Embed. Syst. Lett. 2018, 11, 29–32.

- Lathigara, A.; Aluvalu, R. Clustering based EO with MRF technique for effective load balancing in cloud computing. Int. J. Pervasive Comput. Commun. 2021, 20, 168–192.

- Wang, G.; Yu, H.C. Task scheduling algorithm based on improved Min-Min algorithm in cloud computing environment. In Applied Mechanics and Materials; Trans Tech Publications Ltd.: Baech, Switzerland, 2013; Volume 303, pp. 2429–2432.

- Ghasemian Koochaksaraei, M.H.; Toroghi Haghighat, A.; Rezvani, M.H. A bartering double auction resource allocation model in cloud environments. Concurr. Comput. Pract. Exp. 2022, 34, e7024.

- Kumar, R.; Bhagwan, J. A comparative study of meta-heuristic-based task scheduling in cloud computing. In Artificial Intelligence and Sustainable Computing: Proceedings of ICSISCET 2020; Springer: Singapore, 2022; pp. 129–141.

- Khademi Dehnavi, M.; Broumandnia, A.; Hosseini Shirvani, M.; Ahanian, I. A hybrid genetic-based task scheduling algorithm for cost-efficient workflow execution in heterogeneous cloud computing environment. Clust. Comput. 2024, 27, 10833–10858.

- Kumar, M.; Kumar, A.; Kumar, S.; Chauhan, P.; Selvarajan, S. An African vulture optimization algorithm based energy efficient clustering scheme in wireless sensor networks. Sci. Rep. 2024, 14, 31412.

- Madni, S.H.H.; Abd Latiff, M.S.; Abdullahi, M.; Abdulhamid, S.I.M.; Usman, M.J. Performance comparison of heuristic algorithms for task scheduling in IaaS cloud computing environment. PLoS ONE 2017, 12, e0176321.

- Zhan, S.; Huo, H. Improved PSO-based task scheduling algorithm in cloud computing. J. Inf. Comput. Sci. 2012, 9, 3821–3829.

- Kumar, M.; Mukherjee, P.; Verma, S.; Kavita; Shafi, J.; Wozniak, M.; Ijaz, M.F. A smart privacy preserving framework for industrial IoT using hybrid meta-heuristic algorithm. Sci. Rep. 2023, 13, 5372.

- Kfatheen, S.V.; Banu, M.N. MiM-MaM: A new task scheduling algorithm for grid environment. In Proceedings of the 2015 International Conference on Advances in Computer Engineering and Applications, Ghaziabad, India, 19–20 March 2015; IEEE: New York, NY, USA, 2015; pp. 695–699.

- Li, G.; Wu, Z. Ant colony optimization task scheduling algorithm for SWIM based on load balancing. Future Internet 2019, 11, 90.

- Arif, M.S.; Iqbal, Z.; Tariq, R.; Aadil, F.; Awais, M. Parental prioritization-based task scheduling in heterogeneous systems. Arab. J. Sci. Eng. 2019, 44, 3943–3952.

- Kothi Laxman, R.R.; Lathigara, A.; Aluvalu, R.; Viswanadhula, U.M. PGWO-AVS-RDA: An intelligent optimization and clustering based load balancing model in cloud. Concurr. Comput. Pract. Exp. 2022, 34, e7136.

- Raghavender Reddy, K.L.; Lathigara, A.; Aluvalu, R.; Viswanadhula, U.M. Scheduling the Tasks and Balancing the Loads in Cloud Computing Using African Vultures-Aquila Optimization Model. In Proceedings of the International Conference on Intelligent Computing and Networking, Hangzhou, China, 17–18 November 2023; Springer Nature: Singapore, 2023; pp. 197–219.

- Zhang, A.N.; Chu, S.C.; Song, P.C.; Wang, H.; Pan, J.S. Task scheduling in cloud computing environment using advanced phasmatodea population evolution algorithms. Electronics 2022, 11, 1451.

- Guo, X. Multi-objective task scheduling optimization in cloud computing based on fuzzy self-defense algorithm. Alex. Eng. J. 2021, 60, 5603–5609.

- Al-Maytami, B.A.; Fan, P.; Hussain, A.; Baker, T.; Liatsis, P. A task scheduling algorithm with improved makespan based on prediction of tasks computation time algorithm for cloud computing. IEEE Access 2019, 7, 160916–160926.

- Moon, Y.; Yu, H.; Gil, J.M.; Lim, J. A slave ants based ant colony optimization algorithm for task scheduling in cloud computing environments. Hum.-Centric Comput. Inf. Sci. 2017, 7, 28.

- Sreenivasulu, G.; Paramasivam, I. Hybrid optimization algorithm for task scheduling and virtual machine allocation in cloud computing. Evol. Intell. 2021, 14, 1015–1022.

- Jing, W.; Zhao, C.; Miao, Q.; Song, H.; Chen, G. QoS-DPSO: QoS-aware task scheduling for cloud computing system. J. Netw. Syst. Manag. 2021, 29, 5.

- Shirvani, M.H.; Talouki, R.N. A novel hybrid heuristic-based list scheduling algorithm in heterogeneous cloud computing environment for makespan optimization. Parallel Comput. 2021, 108, 102828.

- Ajmal, M.S.; Iqbal, Z.; Khan, F.Z.; Ahmad, M.; Ahmad, I.; Gupta, B.B. Hybrid ant genetic algorithm for efficient task scheduling in cloud data centers. Comput. Electr. Eng. 2021, 95, 107419.

- Seifhosseini, S.; Shirvani, M.H.; Ramzanpoor, Y. Multi-objective cost-aware bag-of-tasks scheduling optimization model for IoT applications running on heterogeneous fog environment. Comput. Netw. 2024, 240, 110161.

- Dubey, K.; Sharma, S.C. A novel multi-objective CR-PSO task scheduling algorithm with deadline constraint in cloud computing. Sustain. Comput. Inform. Syst. 2021, 32, 100605.

- Wei, X. Task scheduling optimization strategy using improved ant colony optimization algorithm in cloud computing. J. Ambient. Intell. Humaniz. Comput. 2020, 1–12.

- Falsafain, H.; Heidarpour, M.R.; Vahidi, S. A branch-and-price approach to a variant of the cognitive radio resource allocation problem. Ad Hoc Netw. 2022, 132, 102871.

- Ahadi, P.; Balasundaram, B.; Borrero, J.S.; Chen, C. Development and optimization of expected cross value for mate selection problems. Heredity 2024, 133, 113–125.

- Saghezchi, A.; Kashani, V.G.; Ghodratizadeh, F. A Comprehensive Optimization Approach on Financial Resource Allocation in Scale-Ups. J. Bus. Manag. Stud. 2024, 6, 62.

- Jin, W.; Rezaeipanah, A. Dynamic task allocation in fog computing using enhanced fuzzy logic approaches. Sci. Rep. 2025, 15, 18513.

- Chen, Z.; Hu, J.; Chen, X.; Hu, J.; Zheng, X.; Min, G. Computation offloading and task scheduling for DNN-based applications in cloud-edge computing. IEEE Access 2020, 8, 115537–115547.

- Mirjalili, S.; Mirjalili, S.M.; Lewis, A. Grey wolf optimizer. Adv. Eng. Softw. 2014, 69, 46–61.

- MiarNaemi, F.; Azizyan, G.; Rashki, M. Horse herd optimization algorithm: A nature-inspired algorithm for high-dimensional optimization problems. Knowl.-Based Syst. 2021, 213, 106711.

- Chen, X.; Cheng, L.; Liu, C.; Liu, Q.; Liu, J.; Mao, Y.; Murphy, J. A WOA-based optimization approach for task scheduling in cloud computing systems. IEEE Syst. J. 2020, 14, 3117–3128.

| Author [Citation] | Methodology | Features | Challenges |
|---|---|---|---|
| Xueying Guo et al., 2021 [30] | Fuzzy self-defense algorithm | | |
| Al-Maytami et al., 2019 [31] | PTCT | | |
| Moon et al., 2017 [32] | ACO | | |
| Sreenivasulu and Paramasivam, 2021 [33] | Hybrid optimization algorithm | | |
| Jing et al., 2021 [34] | PSO | | |
| Sohaib et al., 2021 [35] | ACO and GA | | |
| Dubey and Sharma, 2021 [38] | CR-PSO | | |
| Xianyong Wei et al., 2020 [39] | ACO | | |

| Parameter | Value |
|---|---|
| Architecture | Fully Connected DNN |
| Hidden Layers | 3 |
| Hidden Neurons | Optimized (5–255) |
| Activation (Hidden) | ReLU |
| Activation (Output) | Linear |
| Optimizer | Adam |
| Learning Rate | 0.001 |
| Batch Size | 32 |
| Epochs | 100 |
| Regularization | Dropout (0.3), Batch Norm |
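
The "Optimized (5–255)" entry means the hidden-neuron counts come from the optimizer rather than being fixed by hand. A minimal sketch of how a continuous candidate solution could be decoded into valid layer sizes is shown below. It assumes one value per hidden layer, rounded and clamped to the stated range; the names `decode_hidden_neurons` and `DNN_SETTINGS` are illustrative, and the fixed settings simply restate the table.

```python
# Fixed settings restated from the parameter table.
DNN_SETTINGS = {
    "hidden_layers": 3,
    "hidden_activation": "relu",
    "output_activation": "linear",
    "optimizer": "adam",
    "learning_rate": 0.001,
    "batch_size": 32,
    "epochs": 100,
    "dropout": 0.3,
    "batch_norm": True,
}


def decode_hidden_neurons(position, low=5, high=255,
                          n_layers=DNN_SETTINGS["hidden_layers"]):
    """Map a continuous search position to integer hidden-layer sizes.

    Assumption: the optimizer proposes one real value per hidden layer.
    Each value is rounded to the nearest integer and clamped to [low, high].
    """
    if len(position) != n_layers:
        raise ValueError(f"expected {n_layers} values, got {len(position)}")
    return [min(max(int(round(v)), low), high) for v in position]


# A candidate proposed by the optimizer (illustrative values).
candidate = [3.2, 128.7, 300.0]
print(decode_hidden_neurons(candidate))  # [5, 129, 255]
```

The decoded sizes would then be used to build and train the network, and the resulting validation error would serve as the fitness of that candidate.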

| Scenarios | Task Limit |
|---|---|
| Scenario 1 | 200 to 400 |
| Scenario 2 | 400 to 600 |
| Scenario 3 | 600 to 800 |
| Scenario 4 | 800 to 1000 |
| Scenario 5 | 1000 to 1200 |

| Cases | Number of VMs |
|---|---|
| Case 1 | 20 |
| Case 2 | 40 |
| Case 3 | 60 |
| Case 4 | 80 |
| Case 5 | 100 |

| Terms | PSO | WOA | GWO | HHO | IGW-HHO |
|---|---|---|---|---|---|
| Scenario 1 | | | | | |
| MEP | 41.849 | 41.617 | 40.131 | 35.3 | 31.817 |
| SMAPE | 0.4783 | 0.4756 | 0.4586 | 0.4034 | 0.3636 |
| MASE | 0.5597 | 0.3818 | 0.5268 | 0.4984 | 0.3882 |
| MAE | 505.6 | 283.19 | 365.47 | 272.26 | 263.88 |
| RMSE | 940.49 | 611.01 | 684.07 | 527.26 | 543.52 |
| L1-NORM | 5056 | 2831.9 | 3654.7 | 2722.6 | 2638.8 |
| L2-NORM | 2974.1 | 1932.2 | 2163.2 | 1667.4 | 1718.8 |
| L-INF-NORM | 2156.9 | 1609.3 | 1663.8 | 1308.9 | 1407.1 |
| Scenario 2 | | | | | |
| MEP | 17.673 | 18.093 | 18.298 | 15.515 | 13.543 |
| SMAPE | 0.202 | 0.2068 | 0.2091 | 0.1773 | 0.1548 |
| MASE | 0.2126 | 0.1061 | 0.1504 | 0.1346 | 0.1253 |
| MAE | 455.18 | 125.24 | 195.05 | 177.18 | 149.99 |
| RMSE | 879.28 | 344.75 | 413.55 | 390.32 | 343.16 |
| L1-NORM | 4551.8 | 1252.4 | 1950.5 | 1771.8 | 1499.9 |
| L2-NORM | 2780.5 | 1090.2 | 1307.8 | 1234.3 | 1085.2 |
| L-INF-NORM | 2066.2 | 1067.9 | 1134.6 | 1082.4 | 959.3 |
| Scenario 3 | | | | | |
| MEP | 10.209 | 10.371 | 11.075 | 9.2292 | 7.9777 |
| SMAPE | 0.1167 | 0.1185 | 0.1266 | 0.1055 | 0.0912 |
| MASE | 0.1642 | 0.0609 | 0.1087 | 0.0902 | 0.0784 |
| MAE | 411.52 | 54.061 | 193.05 | 171.71 | 154.64 |
| RMSE | 769.35 | 147.51 | 415.83 | 390.59 | 363.02 |
| L1-NORM | 4115.2 | 540.61 | 1930.5 | 1717.1 | 1546.4 |
| L2-NORM | 2432.9 | 466.46 | 1315 | 1235.2 | 1148 |
| L-INF-NORM | 1823.1 | 446.2 | 1126.1 | 1094.6 | 1016.2 |
| Scenario 4 | | | | | |
| MEP | 7.9421 | 8.3168 | 8.914 | 7.449 | 6.2967 |
| SMAPE | 0.0908 | 0.095 | 0.1019 | 0.0851 | 0.072 |
| MASE | 0.1292 | 0.0605 | 0.0801 | 0.0634 | 0.0533 |
| MAE | 375.91 | 70.948 | 192.17 | 162.45 | 141.73 |
| RMSE | 725.19 | 187.07 | 419.95 | 369.48 | 342.16 |
| L1-NORM | 3759.1 | 709.48 | 1921.7 | 1624.5 | 1417.3 |
| L2-NORM | 2293.2 | 591.58 | 1328 | 1168.4 | 1082 |
| L-INF-NORM | 1762.5 | 556.78 | 1147.9 | 1023.3 | 977 |
| Scenario 5 | | | | | |
| MEP | 6.423 | 6.8066 | 7.5477 | 6.2313 | 5.0543 |
| SMAPE | 0.0734 | 0.0778 | 0.0863 | 0.0712 | 0.0578 |
| MASE | 0.0916 | 0.0444 | 0.068 | 0.0564 | 0.043 |
| MAE | 343.18 | 76.713 | 152.46 | 115.15 | 95.953 |
| RMSE | 691.57 | 215.56 | 334.19 | 270.04 | 237.32 |
| L1-NORM | 3431.8 | 959.53 | 1524.6 | 1151.5 | 767.13 |
| L2-NORM | 2186.9 | 681.65 | 1056.8 | 853.93 | 750.46 |
| L-INF-NORM | 1726.2 | 661.44 | 921.35 | 767.27 | 685.22 |

| Terms | PSO | WOA | GWO | HHO | IGW-HHO |
|---|---|---|---|---|---|
| Case 1 | | | | | |
| MEP | 15.029 | 15.023 | 14.824 | 12.853 | 11.495 |
| SMAPE | 0.1718 | 0.1717 | 0.1694 | 0.1469 | 0.1314 |
| MASE | 0.2657 | 1.2255 | 0.1914 | 0.1619 | 0.1329 |
| MAE | 100.02 | 9.5919 | 36.382 | 32.905 | 27.621 |
| RMSE | 171.78 | 57.789 | 70.148 | 65.838 | 15.25 |
| L1-NORM | 1000.2 | 95.919 | 363.82 | 329.05 | 276.21 |
| L2-NORM | 543.23 | 48.224 | 221.83 | 208.2 | 182.75 |
| L-INF-NORM | 383.4 | 25.446 | 183.13 | 173.72 | 156.44 |
| Case 2 | | | | | |
| MEP | 17.585 | 18.132 | 18.259 | 15.447 | 13.416 |
| SMAPE | 0.201 | 0.2072 | 0.2087 | 0.1765 | 0.1533 |
| MASE | 0.2162 | 0.1058 | 0.1747 | 0.1532 | 0.123 |
| MAE | 448.76 | 158.24 | 179.8 | 147.19 | 138.08 |
| RMSE | 850.46 | 437.79 | 375.3 | 330.68 | 318.2 |
| L1-NORM | 4487.6 | 1582.4 | 1798 | 1471.9 | 1380.8 |
| L2-NORM | 2689.4 | 1384.4 | 1186.8 | 1045.7 | 1006.2 |
| L-INF-NORM | 2024.6 | 1308.2 | 1019.6 | 931.91 | 898.92 |
| Case 3 | | | | | |
| MEP | 10.158 | 10.638 | 11.107 | 9.2686 | 8.0997 |
| SMAPE | 0.1161 | 0.1216 | 0.1269 | 0.1059 | 0.0926 |
| MASE | 0.1733 | 0.0701 | 0.0974 | 0.0791 | 0.0717 |
| MAE | 404.57 | 138.54 | 196.81 | 176.2 | 155.06 |
| RMSE | 747.49 | 341.53 | 424.05 | 398.2 | 363.87 |
| L1-NORM | 4045.7 | 1385.4 | 1968.1 | 1762 | 1550.6 |
| L2-NORM | 2363.8 | 1080 | 1341 | 1259.2 | 1150.7 |
| L-INF-NORM | 1755.1 | 976.59 | 1151.2 | 1102.9 | 1028 |
| Case 4 | | | | | |
| MEP | 8.0914 | 8.3844 | 8.8859 | 7.5307 | 6.2855 |
| SMAPE | 0.0925 | 0.0958 | 0.1016 | 0.0861 | 0.0718 |
| MASE | 0.1288 | 0.059 | 0.0847 | 0.0688 | 0.0576 |
| MAE | 389.76 | 124.59 | 196.44 | 165.91 | 136.9 |
| RMSE | 750.86 | 300.78 | 431.85 | 375.42 | 325.81 |
| L1-NORM | 3897.6 | 1245.9 | 1964.4 | 1659.1 | 1369 |
| L2-NORM | 2374.4 | 951.16 | 1365.6 | 1187.2 | 1030.3 |
| L-INF-NORM | 1814 | 856.03 | 1178.3 | 1034.4 | 924.64 |
| Case 5 | | | | | |
| MEP | 6.3664 | 6.6998 | 7.5193 | 6.2182 | 5.0717 |
| SMAPE | 0.0728 | 0.0766 | 0.0859 | 0.0711 | 0.058 |
| MASE | 0.0979 | 0.1968 | 0.0682 | 0.0578 | 0.0423 |
| MAE | 339.94 | 8.9432 | 157.37 | 133.65 | 105.02 |
| RMSE | 685.99 | 16.695 | 349.24 | 310.59 | 259.52 |
| L1-NORM | 3399.4 | 89.432 | 1573.7 | 1336.5 | 1050.2 |
| L2-NORM | 2169.3 | 52.794 | 1104.4 | 982.17 | 820.67 |
| L-INF-NORM | 1711.6 | 38.176 | 967.21 | 875.48 | 755.1 |

| Algorithms | Best | Mean | Standard Deviation |
|---|---|---|---|
| Scenario 1 | | | |
| PSO | 6.3664 | 0.0728 | 0.0979 |
| WOA | 6.6998 | 0.0766 | 0.1968 |
| GWO | 7.5193 | 0.0859 | 0.0682 |
| HHO | 6.2182 | 0.0711 | 0.0578 |
| IGW-HHO | 5.0717 | 0.058 | 0.0423 |
| Scenario 2 | | | |
| PSO | 6.3664 | 0.0728 | 0.0979 |
| WOA | 6.6998 | 0.0766 | 0.1968 |
| GWO | 7.5193 | 0.0859 | 0.0682 |
| HHO | 6.2182 | 0.0711 | 0.0578 |
| IGW-HHO | 5.0717 | 0.058 | 0.0423 |
| Scenario 3 | | | |
| PSO | 6.3664 | 0.0728 | 0.0979 |
| WOA | 6.6998 | 0.0766 | 0.1968 |
| GWO | 7.5193 | 0.0859 | 0.0682 |
| HHO | 6.2182 | 0.0711 | 0.0578 |
| IGW-HHO | 5.0717 | 0.058 | 0.0423 |
| Scenario 4 | | | |
| PSO | 6.3664 | 0.0728 | 0.0979 |
| WOA | 6.6998 | 0.0766 | 0.1968 |
| GWO | 7.5193 | 0.0859 | 0.0682 |
| HHO | 6.2182 | 0.0711 | 0.0578 |
| IGW-HHO | 5.0717 | 0.058 | 0.0423 |
| Scenario 5 | | | |
| PSO | 6.3664 | 0.0728 | 0.0979 |
| WOA | 6.6998 | 0.0766 | 0.1968 |
| GWO | 7.5193 | 0.0859 | 0.0682 |
| HHO | 6.2182 | 0.0711 | 0.0578 |
| IGW-HHO | 5.0717 | 0.058 | 0.0423 |

| Algorithms | Best | Mean | Standard Deviation |
|---|---|---|---|
| Case 1 | | | |
| PSO | 6.3664 | 0.0728 | 0.0979 |
| WOA | 6.6998 | 0.0766 | 0.1968 |
| GWO | 7.5193 | 0.0859 | 0.0682 |
| HHO | 6.2182 | 0.0711 | 0.0578 |
| IGW-HHO | 5.0717 | 0.058 | 0.0423 |
| Case 2 | | | |
| PSO | 6.3664 | 0.0728 | 0.0979 |
| WOA | 6.6998 | 0.0766 | 0.1968 |
| GWO | 7.5193 | 0.0859 | 0.0682 |
| HHO | 6.2182 | 0.0711 | 0.0578 |
| IGW-HHO | 5.0717 | 0.058 | 0.0423 |
| Case 3 | | | |
| PSO | 6.3664 | 0.0728 | 0.0979 |
| WOA | 6.6998 | 0.0766 | 0.1968 |
| GWO | 7.5193 | 0.0859 | 0.0682 |
| HHO | 6.2182 | 0.0711 | 0.0578 |
| IGW-HHO | 5.0717 | 0.058 | 0.0423 |
| Case 4 | | | |
| PSO | 6.3664 | 0.0728 | 0.0979 |
| WOA | 6.6998 | 0.0766 | 0.1968 |
| GWO | 7.5193 | 0.0859 | 0.0682 |
| HHO | 6.2182 | 0.0711 | 0.0578 |
| IGW-HHO | 5.0717 | 0.058 | 0.0423 |
| Case 5 | | | |
| PSO | 6.3664 | 0.0728 | 0.0979 |
| WOA | 6.6998 | 0.0766 | 0.1968 |
| GWO | 7.5193 | 0.0859 | 0.0682 |
| HHO | 6.2182 | 0.0711 | 0.0578 |
| IGW-HHO | 5.0717 | 0.058 | 0.0423 |

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Kumar, M.; Kant, R.; Gupta, B.K.; Shadab, A.; Kumar, A.; Kant, K. IDN-MOTSCC: Integration of Deep Neural Network with Hybrid Meta-Heuristic Model for Multi-Objective Task Scheduling in Cloud Computing. Computers 2026, 15, 57. https://doi.org/10.3390/computers15010057
