1. Introduction

Wireless communication systems have reached several major milestones, especially within the last decade, and are among the fastest-growing technologies. The transition from one generation to the next typically takes about ten years. The latest deployed generation is 5G, and a massive increase in the number of connected devices has been observed. According to recent studies, 5G networks are required to serve 10 to 100 times more cellular devices than previous generations [1]. In this direction, Internet of Things (IoT) analytics estimates that the number of connected devices will reach 40 billion by 2030 [2], while GSMA Intelligence projects more than 38 billion connections by 2030, with enterprise applications accounting for over

of them [3]. This figure is nearly four times the projected global population of about

billion in 2030 [4]. This tremendous growth is driven by the popularization of the IoT, smartphones, tablets, and associated applications. Consequently, the considerable increase in connected devices leads to a significant surge in mobile data traffic demand. Cisco and other industry forecasts indicate that global mobile data traffic will continue to grow at a Compound Annual Growth Rate (CAGR) of around 25–

, reaching several hundred exabytes per month by 2030 [5,6].

Figure 1 illustrates the projected growth of global mobile network data traffic from 2021 to 2030, highlighting both mobile data and Fixed Wireless Access (FWA). It shows a steady increase, with total traffic expected to reach around 310 exabytes per month by 2030 when excluding FWA, and approximately 482 exabytes per month when FWA is included.

To accommodate this significant increase in the number of connected devices and the exponential growth of data traffic demand, 5G wireless cellular networks rely on technologies such as Millimeter Wave (mmWave), massive Multiple Input Multiple Output (MIMO), beamforming, sophisticated new radio access techniques, and Small Cell (SC) densification to enhance network performance [7]. Moreover, new architectures are deployed to cope with the huge data traffic demand and achieve the required performance metrics. For instance, Heterogeneous Networks (HetNets), Cloud Radio Access Network (C-RAN), and Cell-Free Massive MIMO (CFMM) networks are considered efficient solutions, designed to provide additional capacity and superior spectral efficiency. Such architectures are characterized by an increased number of Base Stations (BSs) needed to serve the required traffic. Hence, the deployment of a large number of BSs leads to increased energy consumption.

1.1. Relevance to the Topic

The topic of energy efficiency is linked not only to telecommunication operators and manufacturers but also to some other fields that can be directly related to this topic including clean energy and environmental agencies. Nowadays, the global trend is to reduce energy consumption and improve efficiency through the deployment of clean sources of energy or setting new policies to reduce financial burden and indirect environmental impact. In

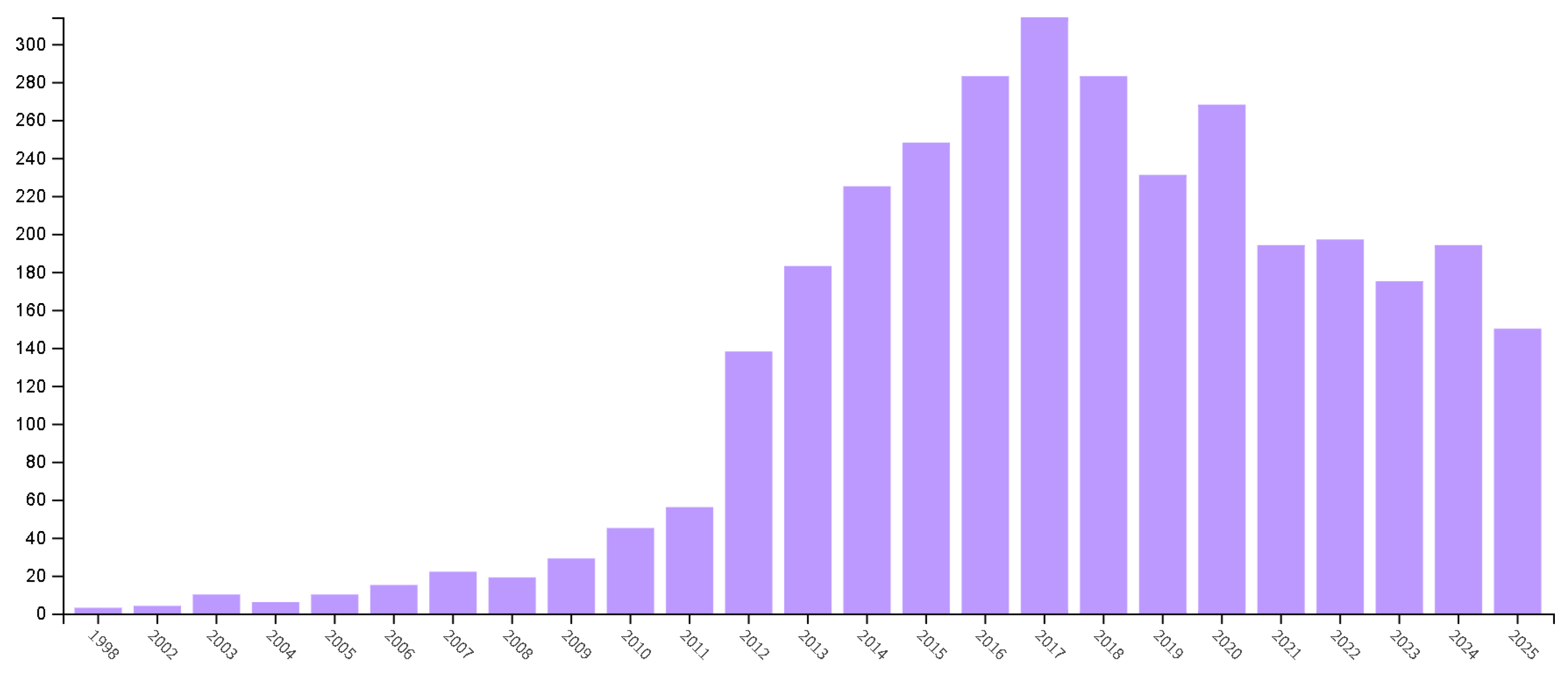

Figure 2, we showcase a bar chart representation of the number of research publications on energy consumption in cellular networks, with the first activities started earlier in 1998 and increasing widely during the deployment of 4G network with the emergence of new high data rate services and new devices. Efforts to reduce energy consumption in cellular network rised intensively during the first steps towards 5G modeling and standardization to embed new algorithms and techniques in the 5G network and beyond.

The 5G network comes with more services, an advanced architecture, higher frequency bands, and guaranteed high traffic flow, with a data rate approaching 1 Gbps and massive connectivity. Therefore, awareness of energy efficiency in 5G networks emerged as early as 2012, and research continues to cover different scenarios.

1.2. Problem Statement

With the dense deployment of base stations, energy consumption inevitably increases, since BSs remain the most energy-intensive components in mobile access networks [8]. Recent studies analyzing the energy profile of a typical Radio Access Network (RAN) confirm that BSs account for the largest share of energy usage. According to recent IEEE research, BSs are responsible for approximately 60–80% of the total RAN energy consumption [9,10]. More broadly, Information and Communication Technology (ICT) as a whole contributes significantly to global electricity demand, with estimates ranging from 8% to 21% of worldwide consumption in 2030 [8]. In this direction, mobile networks consume tens of terawatt-hours annually, making energy efficiency a strategic priority for operators.

Consequently, to reduce the energy consumption of mobile networks, researchers have primarily focused on optimizing energy usage at the BS, which remains the most power-hungry element in the RAN. Reducing BS energy consumption has become a major concern not only because of the high Operational Expenditure (OPEX) for network operators but also because of its indirect environmental impact through increased CO2 emissions. Recent IEEE studies confirm that the ICT sector contributes approximately 4% of global CO2 emissions [11,12], with mobile networks representing a significant share. Moreover, the level of CO2 emissions grows proportionally with the rapid increase in connected devices, making wireless communication systems a critical sector to address in order to mitigate ICT-related carbon emissions.

In Figure 3, the operational and embodied energy consumption of both the Mobile User (MU) and the BS are presented [13]. As depicted in the figure, the energy cost of operating the BS is much greater than that of the MU. However, the embodied energy of the MU is much higher than that of the BS, because mobile user devices have a very short lifetime of about 2 years, compared to an operational lifetime of about 10–15 years for the BS. Furthermore, far fewer BSs are manufactured than MUs. In addition, significant improvements have been made to make MUs more energy efficient. Thus, the carbon footprint per subscriber has been reduced from about 100 kg to approximately 25 kg of CO2 emissions per year. However, the overall carbon footprint of mobile users keeps increasing as their number continues to grow rapidly [14]. To further reduce the embodied energy of MUs, mobile operators should make the manufacturing process of MUs more energy efficient and extend their lifetime [13,15].

1.3. Paper Organization

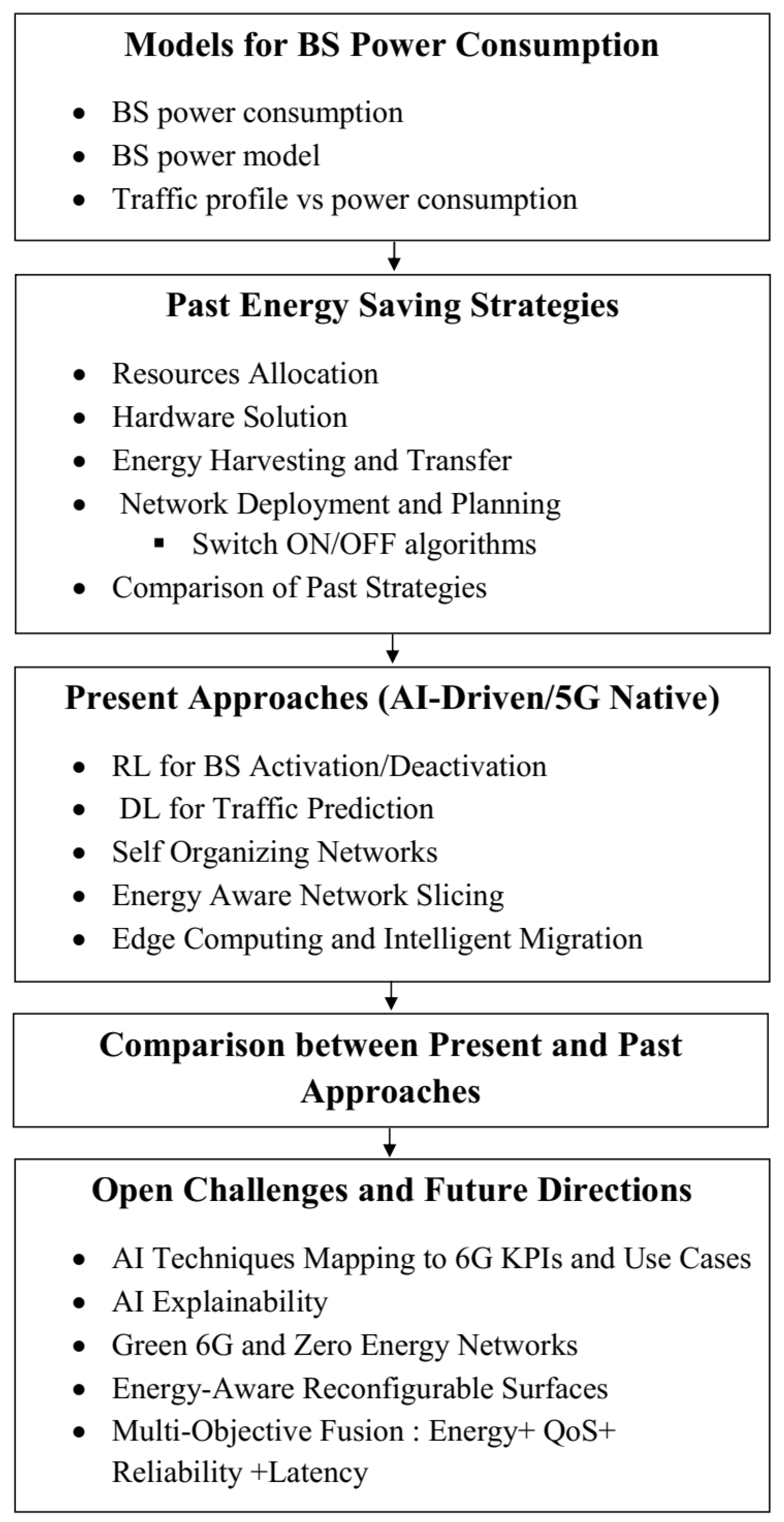

The remainder of this paper is organized as follows. In Section 2, we discuss the energy consumption at the BS, since it is the component that consumes the greatest amount of energy in the whole network. Section 3 reviews the classical pre-5G strategies for energy saving, including resource allocation, hardware solutions, energy harvesting and transfer, and network deployment and planning, including Switch ON/OFF algorithms (also called sleep modes), while highlighting their main limitations. Section 4 focuses on recent AI-driven and 5G-native energy optimization techniques, such as reinforcement learning-based BS activation, deep learning-based traffic prediction, self-organizing networks, energy-aware network slicing, and intelligent edge migration. After that, Section 5 develops a comparison between traditional and present strategies. Then, in Section 6, we discuss the open challenges and outline promising research directions toward energy-efficient and sustainable 6G networks. Finally, Section 7 concludes the paper.

Figure 4 outlines the logical progression of the paper, starting from the analysis of energy consumption at base stations, moving through classical and modern energy-saving strategies, and culminating in a comparative evaluation and future research directions toward sustainable 6G networks.

2. Models for BS Power Consumption

2.1. BS Power Consumption

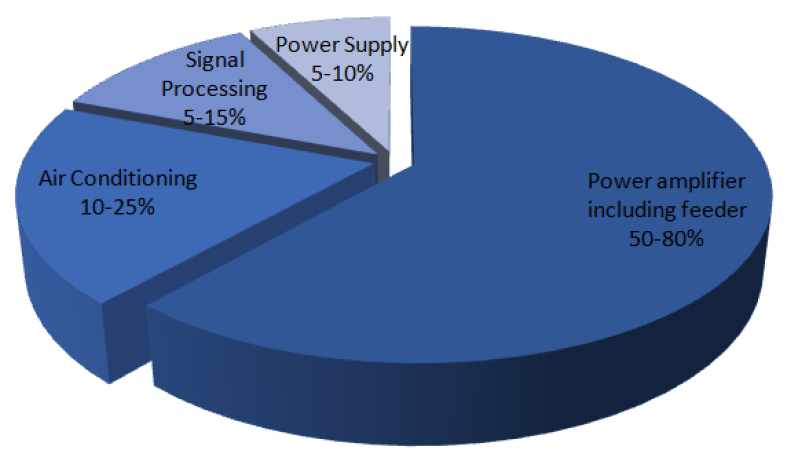

To identify potential energy-saving opportunities in a BS, it is essential to analyze the distribution of its total power consumption across individual components. Figure 5 illustrates a simplified schematic of the BS architecture, which can be generalized to different BS types, including macro-, micro-, pico-, and femtocells. Recent IEEE studies confirm that the major BS subsystems, namely the Power Amplifier (PA), Radio Frequency (RF) chain, Baseband (BB) processing unit, and cooling system, contribute differently to the overall energy usage, with the power amplifier and cooling system together accounting for more than 60% of total BS energy consumption [16]. Understanding this breakdown is crucial for designing energy-efficient BSs and implementing green communication strategies. A BS comprises the following components:

- An RF transmission module: the module that generates and transmits signals to mobile terminals.
- A PA block: it amplifies the transmission signals coming from the RF module to a power level suitable for transmission. The PA is most efficient when operated near its saturation point. However, non-linear effects near saturation distort the signal and generate adjacent channel interference, which degrades performance at the receiver, so the PA must operate below this point in a more linear region. Unfortunately, this large operating back-off results in low PA efficiency, leading to an increase in its power consumption.
- A BB unit: it performs digital functions including digital up/down conversion, modulation and demodulation, filtering, channel coding and decoding, and signal detection.
- A Direct Current-Direct Current (DC-DC) power supply.
- A cooling system: the unit responsible for cooling the BS equipment.
- An antenna interface: the loss in the antenna can be calculated by including the feeder losses, the antenna band-pass filters, the duplexers, and the matching components. Where the BS is physically separated from the antenna, a feeder loss of about dB should be added. Nevertheless, there is no need for a feeder when deploying Remote Radio Heads (RRHs) in a macrocell, because the PA and the antennas are at the same location (on the tower). Small BSs (SBSs) also have negligible feeder losses.
- An Alternating Current-Direct Current (AC-DC) mains power supply.

The cooling system, the mains power supply, and the DC-DC power supply generate further power losses in the BS. Furthermore, SBSs use natural air circulation for cooling and therefore do not incur cooling losses.
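How these losses compound can be made explicit with a short worked formula. The expression below is a sketch in the spirit of the component-level formulation associated with the EARTH framework [17]; the notation is assumed here for illustration and is not taken from this paper: $\eta_{PA}$ is the PA efficiency, $P_{RF}$ and $P_{BB}$ are the RF and BB power per transceiver chain, $\sigma_{feed}$, $\sigma_{DC}$, $\sigma_{MS}$ and $\sigma_{cool}$ are the fractional losses of the feeder, the DC-DC supply, the mains supply and the cooling system, and $N$ is the number of transceiver chains.

```latex
P_{PA} = \frac{P_{out}}{\eta_{PA}\,\left(1-\sigma_{feed}\right)},
\qquad
P_{in} = N \cdot
\frac{P_{PA} + P_{RF} + P_{BB}}
     {\left(1-\sigma_{DC}\right)\left(1-\sigma_{MS}\right)\left(1-\sigma_{cool}\right)}
```

Because the loss factors multiply in the denominator, every watt saved in the PA, RF, or BB stage is saved with a gain larger than one at the mains. For an SBS, $\sigma_{feed}$ and $\sigma_{cool}$ can be taken as approximately zero, consistent with the remarks above.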

According to Figure 6, the most energy-consuming components are the BB unit, the RF and PA units, the antenna system, and the cooling system.

2.2. BS Power Model

The most widely used power model is the EARTH model [17]. This model provides an interface between the component and network levels and allows the Energy Efficiency (EE) of radio access networks to be evaluated. According to this model, the total BS power consumption takes one of two forms depending on the state of the BS. The first is the power consumption in sleep mode, i.e., the power consumed when there is no transmission ($P_{out} = 0$). The second is the power consumption when the BS is in the active state and transmitting signals to MUs:

$$P_{in} = \begin{cases} N \left( P_0 + \Delta_p P_{out} \right), & 0 < P_{out} \le P_{max}, \\ N P_{sleep}, & P_{out} = 0, \end{cases} \qquad (1)$$

where $P_{in}$ and $P_{out}$ represent, respectively, the total BS power supply and the transmit power, i.e., the per-antenna output power measured at the antenna input of the BS. $P_0$ and $P_{max}$ denote the power consumption at the minimum non-zero output power and the maximum transmit power, respectively. $\Delta_p$ is the slope of the load-dependent power consumption. $P_{sleep}$ indicates the power consumption when the BS is in sleep mode, and $N$ denotes the number of transceiver antennas at the BS.
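As a minimal sketch, the two-regime model described above can be coded as follows. The linear active-mode form N (P_0 + Δ_p P_out) and the field names are assumptions based on the commonly cited form of the model in [17]; the macro-BS numbers are illustrative values chosen for this example and should be replaced by the entries of Table 1.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BsPowerParams:
    """Parameters of a linear, load-dependent BS power model (hypothetical names)."""
    n_trx: int      # number of transceiver chains, N
    p_max: float    # maximum transmit power per chain [W]
    p_0: float      # power drawn at the minimum non-zero output power [W]
    delta_p: float  # slope of the load-dependent consumption
    p_sleep: float  # power drawn per chain in sleep mode [W]


def bs_input_power(params: BsPowerParams, p_out: float) -> float:
    """Total supply power [W] for a per-chain transmit power p_out [W]."""
    if not 0.0 <= p_out <= params.p_max:
        raise ValueError("p_out must lie in [0, p_max]")
    if p_out == 0.0:
        # No transmission: the BS is assumed to be in sleep mode.
        return params.n_trx * params.p_sleep
    # Active state: fixed part plus load-dependent part.
    return params.n_trx * (params.p_0 + params.delta_p * p_out)


# Illustrative macro-BS values, assumed for this example only.
MACRO = BsPowerParams(n_trx=6, p_max=20.0, p_0=130.0, delta_p=4.7, p_sleep=75.0)

if __name__ == "__main__":
    for load in (0.0, 0.5, 1.0):
        power = bs_input_power(MACRO, load * MACRO.p_max)
        print(f"load={load:.1f}  P_in={power:.1f} W")
```

The jump between the sleep-mode value and the smallest active-mode value is what sleep-mode strategies try to exploit: the saving comes from the fixed part, not from the slope.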

Table 1 defines these parameters for different BS types [17].

2.3. Traffic Profile Versus Power Consumption

BSs are conventionally dimensioned to serve traffic during peak hours. However, their utilization decreases during off-peak periods. The analysis of a typical cellular traffic profile over a day shows a remarkably large variation between peak and off-peak traffic levels, as depicted in Figure 7, which gives an example of daily traffic load variations during a 24 h weekday [18].

The traffic profile during a typical day is influenced by two main factors [19]:

- Temporal variation: traffic is very low in the early morning and increases notably around midday or in the evening, where the traffic peaks are located.
- Spatial variation: a large number of MUs move during two main periods, in the morning, when they leave their residential areas for offices and commercial zones, and in the evening, when they return.

Given these two factors, the network capacity is provisioned to serve the peak traffic demand in all geographical areas and at all times. Hence, network energy consumption needs to adapt to the spatio-temporal variations of the traffic profile. In fact, BS power consumption depends on its traffic load [19]. A BS in sleep mode still consumes a fixed amount of power, while its load-proportional power consumption represents more than half of the overall consumption at peak load [20]; for example, in LTE, approximately 60% of the power consumption is proportional to the traffic load [21]. Ideally, the fixed power consumption of a BS in sleep mode should be close to zero, so that all energy consumption is perfectly traffic-load proportional [20].
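The gap between an actual BS and an ideally load-proportional one can be illustrated numerically. The sketch below is built entirely on assumptions: a synthetic sinusoidal daily load (lowest at 04:00, highest at 16:00), and a hypothetical BS whose power at full load is 1000 W, of which 60% is load-proportional, chosen only to mirror the LTE figure quoted above.

```python
import math

P_FIXED_W = 400.0      # load-independent part [W] (hypothetical value)
P_PROP_MAX_W = 600.0   # load-proportional part at full load [W] (hypothetical value)


def hourly_load(hour: int) -> float:
    """Synthetic normalized load in [0.1, 1.0]; the shape is an assumption."""
    return 0.55 - 0.45 * math.cos(2.0 * math.pi * (hour - 4) / 24.0)


def actual_power(load: float) -> float:
    """Fixed part plus a part that scales linearly with load [W]."""
    return P_FIXED_W + P_PROP_MAX_W * load


def ideal_power(load: float) -> float:
    """Perfectly load-proportional BS with the same full-load power [W]."""
    return (P_FIXED_W + P_PROP_MAX_W) * load


def daily_energy_kwh(power_fn) -> float:
    """Sum hourly power over 24 one-hour slots and convert Wh to kWh."""
    return sum(power_fn(hourly_load(h)) for h in range(24)) / 1000.0


if __name__ == "__main__":
    actual = daily_energy_kwh(actual_power)
    ideal = daily_energy_kwh(ideal_power)
    print(f"actual: {actual:.2f} kWh/day, ideal: {ideal:.2f} kWh/day")
    print(f"energy not tied to traffic: {100.0 * (actual - ideal) / actual:.1f}%")
```

Under these assumptions, the difference between the two totals is exactly the energy spent by the fixed part while the BS is below full load, which is the quantity that sleep modes and load-adaptive hardware aim to reduce.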

3. Past Energy Saving Strategies

The power consumption of cellular networks has raised significant economic and environmental concerns due to the increasing cost of energy and the associated greenhouse gas emissions contributing to global warming. This has motivated the research community to investigate energy-efficient solutions capable of reducing both operational expenditures and CO2 emissions in mobile networks, a task that remains highly challenging. In this context, several large-scale research projects have laid the foundations for green wireless communications, including Energy Aware Radio and neTwork tecHnologies (EARTH) [22], GreenTouch [23], OPERA-Net 2 [24], and 5GrEEn [25].

The EARTH project, initiated in 2010 as a joint industrial–academic effort, was among the first systematic attempts to quantify and reduce energy consumption in radio access networks. Its primary objective was to achieve up to a 50% reduction in RAN energy consumption while maintaining acceptable Quality of Service (QoS). EARTH provided fundamental insights into the distribution of energy consumption across network components and introduced key concepts such as traffic-aware operation and energy-proportional networking [17]. These concepts later became essential building blocks for energy-efficient mechanisms standardized in LTE-Advanced and subsequently adopted in 5G systems.

Building upon EARTH, the GreenTouch initiative significantly expanded the ambition of green networking research by targeting up to a 1000-fold improvement in network energy efficiency compared to 2010 baselines [23]. GreenTouch introduced disruptive architectural principles, including network function virtualization, flexible RAN architectures, and deep sleep modes, many of which directly influenced the evolution of cloud-based RAN and centralized architectures that are now core components of 5G networks.

Similarly, the Celtic-Plus OPERA-Net 2 project (2011–2015) addressed energy efficiency from a holistic perspective by considering life-cycle assessment, hardware design, radio resource management, and cooling technologies [24]. The project reinforced the idea that mobile networks are dimensioned for peak traffic, leading to persistent over-provisioning. This insight directly motivated dynamic resource adaptation techniques such as cell on/off strategies and load-aware operation, which are now widely studied and implemented in 5G energy-saving solutions.

More recently, the 5GrEEn project explicitly bridged the gap between earlier green networking initiatives and the 5G era by adopting a “green-by-design” philosophy [25]. Unlike previous approaches that focused on optimizing already deployed networks, 5GrEEn emphasized energy-efficient network design from the outset. The project investigated load-adaptive massive MIMO, energy-aware backhauling, and the decoupling of control and data planes, concepts that strongly align with 5G features such as ultra-dense deployments, centralized control, and flexible network slicing [26,27,28,29].

Overall, the principles introduced by these foundational projects have directly shaped modern 5G energy-efficiency strategies. Techniques such as adaptive resource allocation, hardware-aware optimization, intelligent network planning, and energy-harvesting support, originally explored in EARTH and GreenTouch, are now being enhanced through artificial intelligence, software-defined networking, and cloud-native RAN architectures. Consequently, current 5G energy-efficient solutions can be viewed as a natural evolution of these earlier projects, adapted to meet the stringent performance, scalability, and sustainability requirements of contemporary and future mobile networks.

The approaches most commonly adopted by these projects and others can be grouped into four broad categories: resource allocation, hardware solutions, energy harvesting and transfer, and network deployment and planning [30].

3.1. Resource Allocation

The first mechanism for increasing power savings in wireless mobile networks is to allocate the system's radio resources efficiently in order to reduce energy consumption. It has been shown that this method can achieve substantial energy efficiency gains at the price of a reasonable throughput reduction [31].

The idea is that the system's radio resources should be optimized to maximize the amount of information that is reliably transmitted per Joule of consumed energy, instead of only maximizing the amount of information that is reliably transmitted. Developing resource allocation techniques that maximize energy efficiency requires new mathematical tools [31]. Physically, the efficiency with which a communication system uses a given resource is defined as the ratio between the benefit obtained by using the resource and the corresponding incurred cost. In this definition, the cost is the amount of energy consumed over a wireless link, which includes the radiated energy, the energy loss due to the use of non-ideal power amplifiers, and the static energy dissipated in all other hardware blocks of the system. It is generally assumed that the static hardware energy is independent of the radiated energy and that the transmit amplifiers work in the linear region [17,31,32,33]. Based on these two assumptions, the energy consumed during a given time interval $T$ is expressed as

$$E(T) = T \left( \mu p + P_c \right) \quad [\text{Joule}], \qquad (2)$$

where $p$ represents the radiated power, $\mu = 1/\eta$, with $\eta$ as the efficiency of the transmit power amplifier, and $P_c$ denotes the static power dissipated in all other circuit blocks of the transmitter and receiver, including the power dissipated in the different transmit–receive chains of multiple-antenna systems.

On the other hand, the benefit obtained after incurring the energy cost in Equation (2) is related to the amount of data reliably transmitted in the time interval $T$. The benefit can be computed in different ways depending on the particular system under analysis; for instance, system capacity/achievable rate, throughput, and outage capacity are some of the metrics used to quantify it. All these metrics are expressed in bit/s and depend on the Signal to Noise Ratio (SNR) (or SINR) of the communication link, denoted by $\gamma$. Thus, the system benefit can be expressed by a function $T f(\gamma)$, where $f$ is defined depending on the system being optimized.

Finally, the energy efficiency $EE$ of a communication link can be defined as

$$EE = \frac{T f(\gamma)}{T \left( \mu p + P_c \right)} = \frac{f(\gamma)}{\mu p + P_c} \quad [\text{bit/Joule}]. \qquad (3)$$

In this direction, energy-efficient resource allocation has been investigated by several researchers. In [34], energy-efficiency maximization is achieved using a distributed algorithm based on pricing and fractional optimization techniques. The work in [35] proposed an energy-efficient licensed-assisted access scheme that allows LTE networks to use unlicensed bands. The authors in [36] proposed a Lyapunov optimization method to optimize the energy efficiency of WiFi networks, taking into consideration the transmit power, the network selection, and the sub-channel assignment. In the same context, energy-efficiency optimization is performed in [37] for MIMO-OFDM systems, where the propagation channels are assumed to vary dynamically over time. For an mmWave-based massive MIMO system, energy efficiency maximization is performed in [38].
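To make the fractional structure of this section concrete, the sketch below maximizes the ratio of a rate function to the consumed power, f(γ) / (μ p + P_c), for a single link using Dinkelbach's iteration, a standard fractional-programming method. It is an illustration under stated assumptions, not the algorithm of any cited work: f is taken as the Shannon rate B log2(1 + γ), the SNR is modeled as γ = g p with a hypothetical gain-to-noise ratio g, and all numeric values are made up for the example.

```python
import math


def rate(p: float, bandwidth: float, gain_over_noise: float) -> float:
    """Assumed benefit f(gamma): Shannon rate [bit/s], gamma = gain_over_noise * p."""
    return bandwidth * math.log2(1.0 + gain_over_noise * p)


def energy_efficiency(p: float, bandwidth: float, gain_over_noise: float,
                      mu: float, p_c: float) -> float:
    """EE = f(gamma) / (mu * p + P_c) in bit/Joule."""
    return rate(p, bandwidth, gain_over_noise) / (mu * p + p_c)


def dinkelbach_power(bandwidth: float, gain_over_noise: float, mu: float,
                     p_c: float, p_max: float,
                     rel_tol: float = 1e-9, max_iter: int = 50):
    """Return (p, EE(p)) that maximizes EE over 0 < p <= p_max."""
    if min(bandwidth, gain_over_noise, mu, p_c, p_max) <= 0.0:
        raise ValueError("all parameters must be positive")
    p = p_max
    lam = energy_efficiency(p, bandwidth, gain_over_noise, mu, p_c)
    for _ in range(max_iter):
        # Closed-form maximizer of rate(p) - lam * (mu * p + p_c),
        # obtained by setting its derivative to zero, then clipped to [0, p_max].
        p = bandwidth / (math.log(2.0) * lam * mu) - 1.0 / gain_over_noise
        p = min(max(p, 0.0), p_max)
        new_lam = energy_efficiency(p, bandwidth, gain_over_noise, mu, p_c)
        if abs(new_lam - lam) <= rel_tol * new_lam:
            return p, new_lam
        lam = new_lam
    return p, lam


if __name__ == "__main__":
    # Hypothetical link: 1 MHz, PA efficiency 35%, 1 W static power, 10 W power budget.
    p_star, ee_star = dinkelbach_power(1e6, 100.0, 1.0 / 0.35, 1.0, 10.0)
    print(f"EE-optimal power: {p_star:.3f} W, EE: {ee_star / 1e6:.3f} Mbit/J")
```

In this example the EE-optimal power is well below the power budget: beyond that point the rate grows only logarithmically while the consumed power grows linearly, which is the throughput-versus-efficiency trade-off mentioned at the start of this section.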

3.2. Hardware Solutions

The second scheme involves designing the hardware of wireless mobile systems with energy efficiency in mind [13]. Examples include designing a new green RF chain, deploying simplified transmitter/receiver structures, and adopting architectural changes, notably the cloud RAN architecture [39].

First, since the power amplifier is the most energy-consuming unit, many researchers have focused on designing energy-efficient power amplifiers [40,41]. Direct circuit design and signal design approaches have been pursued with the aim of reducing the peak-to-average power ratio. Another way to reduce hardware energy consumption is to deploy simplified transmitter and receiver architectures. In particular, for systems using massive MIMO and mmWave techniques, coarse signal quantization and hybrid analog/digital beamformers are adopted. For instance, in [42], the authors analyze the spectral efficiency achieved with one-bit Analog to Digital Converters (ADCs) in massive MIMO systems based on single-carrier and Orthogonal Frequency Division Multiplexing (OFDM) transmission. Further, in [43], the capacity of a one-bit quantized MIMO system with transmitter Channel State Information (CSI) is analyzed. In addition, a simplified receiver design for massive MIMO systems that combines one-bit ADCs with high-resolution ADCs is reported in [44]. That paper shows that mixed-ADC architectures with a relatively small number of high-resolution ADCs can reduce energy consumption in both single-user and multi-user scenarios.

On the other hand, for mmWave MIMO systems, fully digital beamforming entails high complexity, high energy consumption, and high cost. To overcome these problems, hybrid analog and digital beamforming structures have been proposed in [45,46,47] as efficient solutions to minimize complexity and reduce energy consumption. In this context, the authors in [48] investigate this issue in mmWave MIMO systems and show that hybrid decoding achieves greater energy savings.

Another key strategy to make the system more energy efficient is to implement the RAN in the cloud, yielding C-RAN. The advantage of C-RAN is that the functionalities of the BS are divided between the RRH and the Baseband Unit (BBU), and the BBUs share the same energy conditioning, which makes the system more flexible and more energy efficient [39,49,50].

3.3. Energy Harvesting and Transfer

The third category includes energy harvesting techniques. These rely on renewable and clean energy sources, such as solar or wind energy, as well as on radio signals present in the air. The idea is to harvest energy from the environment and convert it to electrical power. This method reduces the consumption of conventional energy by enabling wireless communication networks to be powered by clean and renewable energy sources [30]. There are two main types of harvestable energy source: environmental sources and radio frequency sources. The first refers to natural energy sources, such as sun and wind [51,52]. The second refers to energy harvested from radio signals over the air. In other words, this approach makes it possible to recycle the electromagnetic energy of interfering radio signals that would otherwise be wasted [53,54].

The main challenge for communication systems powered by renewable energy and energy harvesting is that the amount of energy available at a given time depends on the availability of environmental energy sources, which follows a stochastic process. This causes problems when the energy needed to run the network is greater than the energy harvested. Therefore, communication systems powered by energy harvesting must satisfy the energy causality constraint, which means that the cumulative energy used to run the system up to time t cannot exceed the energy harvested up to time t.
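The energy causality constraint can be checked mechanically once time is divided into slots. The sketch below is a simplified illustration under assumptions that are not taken from the cited works: energy harvested in a slot is usable within that same slot, the battery has unlimited capacity and no leakage, and the function names are hypothetical.

```python
from typing import List, Sequence


def satisfies_energy_causality(harvested: Sequence[float],
                               consumed: Sequence[float],
                               initial_energy: float = 0.0) -> bool:
    """True if cumulative consumption never exceeds cumulative harvest in any slot."""
    if len(harvested) != len(consumed):
        raise ValueError("both schedules must cover the same number of slots")
    available = initial_energy
    spent = 0.0
    for h, c in zip(harvested, consumed):
        available += h   # energy arriving in this slot [J]
        spent += c       # energy used in this slot [J]
        if spent > available + 1e-12:
            return False
    return True


def clip_to_causal(harvested: Sequence[float],
                   desired: Sequence[float],
                   initial_energy: float = 0.0) -> List[float]:
    """Greedy repair: in each slot, spend the desired energy or what the battery holds."""
    battery = initial_energy
    actual = []
    for h, d in zip(harvested, desired):
        battery += h
        used = min(d, battery)
        actual.append(used)
        battery -= used
    return actual


if __name__ == "__main__":
    harvested = [2.0, 0.0, 3.0]   # hypothetical arrivals [J]
    desired = [1.0, 2.0, 1.0]     # hypothetical consumption plan [J]
    print(satisfies_energy_causality(harvested, desired))
    print(clip_to_causal(harvested, desired))
```

The greedy repair is only a feasibility fix and is generally not optimal; offline schemes instead use full knowledge of the arrivals to shape the whole schedule in advance.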

To overcome this problem, early research proposed an offline approach, which assumes that the amount of energy harvested at a given time t is known in advance. An offline power allocation scheme named directional water-filling is proposed in [55]. In [56], the same problem is investigated under the assumption that the data to be transmitted becomes available at random times. A more realistic scenario with a finite-capacity battery is considered in [57,58] as an extension of the results of [56], while in [59], the impact of energy losses resulting from the use of non-ideal batteries is studied. These approaches have been extended to multi-user networks in [60,61], to relay-assisted communications in [62], and to multiple-antenna systems in [63].

The problem of energy randomness affects not only environmental energy sources but also RF energy sources. In fact, the amount of electromagnetic power generated by RF signals available in the air is not known in advance. In this context, several research works have been proposed. In [64], an OFDMA system with a hybrid BS is considered, in which the BS is partly powered by radio frequency energy harvesting, while a relay-assisted network is considered in [65,66], in which the relay is powered by drawing power from the received signals.

However, the randomness of RF energy sources can be reduced by combining energy harvesting with wireless power transfer methods, in such a way that energy is shared among the network nodes [67]. This combination has two main advantages. First, the total energy of the network can be redistributed, which helps to extend the lifetime of nodes that suffer from low battery energy [68,69]. Second, dedicated beacons can be deployed in the network to act as wireless energy sources. In this manner, the randomness of the RF energy source can be eliminated or reduced. This technique is extended to the so-called Simultaneous Wireless Information and Power Transfer (SWIPT) [70,71,72], which superimposes the energy signals on regular communication signals.

3.4. Network Deployment and Planning

The fourth category consists of technologies for the planning, deployment, and operation of 5G networks. The idea is to deploy infrastructure nodes (network densification) so as to maximize the covered area per unit of consumed energy, rather than just the covered area. This category is usually combined with Switch On/Off (SOO) algorithms, which turn off a BS when it is underutilized, especially during low-traffic periods. In addition, antenna muting mechanisms are used to adapt to the traffic conditions and further enhance EE [73,74].

The objective of network densification is to serve the rapidly increasing number of connected devices by increasing the amount of deployed infrastructure equipment. Dense Heterogeneous Networks and Massive MIMO are the two principal candidates for network densification in 5G networks.

Dense Heterogeneous Networks increase the number of infrastructure nodes per unit of area [75,76]. A very large number of SBSs and Relay Nodes (RNs) are opportunistically deployed and activated on demand. SBS densification is energy efficient because it can reduce the number of macro BSs, which consume the largest amount of energy. Moreover, the deployment of SBSs reduces the electrical and/or physical propagation distance between communicating terminals, and thus reduces energy consumption. However, it can generate additional interference, which might reduce the energy efficiency of the network. This trade-off has been investigated in [77], where it is shown that SBS densification is an efficient way to reduce energy consumption, but that there is an optimal density level that should not be exceeded, since the gain saturates as the number of infrastructure nodes increases. In [78], the authors discuss the optimal network densification level and determine a threshold on the operating cost of an SBS. Below the threshold, SBSs are beneficial; otherwise, they should be switched off. Further energy savings can be achieved by applying sleep modes or SOO algorithms in such dense heterogeneous networks [35,36,37,79]. For example, closed-access femtocell Access Points (APs) are turned off automatically and activated when a registered user needs to use them. Moreover, the SOO algorithm may be controlled via user activity detection [38].

Switch on/off Algorithms

The BS-SOO algorithms, also called BS sleep modes, are considered the most efficient technique for saving energy in wireless mobile networks. Since traffic is not constant and varies over time and space, a BS can be underutilized during a given period of time. Turning off the BS during this interval is therefore an energy-efficient solution [80]. The first application of sleep mode was tested in 2009 by the China Mobile group. Since then, the estimated energy saving has been 36 million kWh per year [81].

The main optimization objective of SOO approaches is energy or power consumption. However, when a BS is turned off, its users may be left out of coverage and QoS may be degraded. Therefore, the main constraint in designing SOO algorithms is to guarantee a good QoS for users after the SOO process. Researchers use different metrics to measure the QoS after the SOO procedure. For instance, [82] proposed an SOO scheme by formulating an energy maximization problem with QoS measured through the coverage probability and the average delay for BSs to wake up. In [83], the mean delay is calculated to evaluate the QoS after the switch-off process. In [84], the outage probability is used as the performance metric to evaluate the QoS, while in [85], the average number and average data rate of served users are calculated to measure QoS. In [86,87,88,89], the authors jointly exploit eNB parameter tuning, power adjustment, and Coordinated Multipoint (CoMP) techniques to adapt network operation to traffic variations. In these works, QoS is evaluated using the SINR, which is computed before and after the SOO process to assess performance degradation. Similarly, SINR is employed as a QoS metric in [90]. In contrast, in [91], QoS preservation is ensured by maintaining sufficient user coverage and throughput through cooperative transmission among the active access points.

SOO strategies have been proposed in different network architectures. They have been applied from Universal Mobile Telecommunications System (UMTS) networks [

92] up to 5G networks. However, since 5G systems are characterized by the integration of various new techniques as well as the deployment of HetNet architectures [

75], the design of SOO algorithms in 5G networks faces particular challenges, as follows:

Interoperability with new technologies: the deployment of new technologies in 5G systems requires adjusting the BS-SOO strategy. For instance, with Device-to-Device (D2D) communication, an MU that is outside the coverage range of a BS can still send data to or receive data from a BS through a relay. Hence, the BS can be switched off without creating a coverage problem.

Integration in new network architectures: in 5G systems, new architectures are adopted in which the BS has new functionalities and properties. For example, in C-RAN, the data processing is executed by the BBU and users are served by the RRHs. The BBU consumes more energy than the RRH, so switching off underused BBUs is an efficient way to save power. Nevertheless, when a BBU is turned off, all RRHs connected to it go out of service and cannot serve users in their coverage zone. To prevent this, these RRHs should be reconnected to another BBU, or their users should be handed over to other available RRHs. This results in a complicated scheduling problem.

Dense number of BSs: since 5G systems are characterized by a large increase in traffic demands, BS densification is adopted to satisfy the sizable aggregated data rate requirements in hotspots. Switching off a BS increases the traffic load of neighboring BSs, yet a significant number of BSs must be turned off to achieve meaningful energy savings. This results in a combinatorial problem with a large number of variables, which is generally difficult to solve with conventional methods. Moreover, when BSs operate on the same spectrum band, turning off BSs changes the interference pattern.
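To make the combinatorial nature of the switch-off decision concrete, the following is a minimal sketch of a greedy heuristic, not a method taken from the cited works. The `BaseStation` fields, the even split of traffic among neighbors, and the capacity check used as a stand-in for a QoS constraint are all illustrative assumptions; interference effects are ignored.

```python
from dataclasses import dataclass


@dataclass
class BaseStation:
    name: str
    load: float       # current traffic load (normalized, assumed)
    capacity: float   # maximum load the BS can carry (assumed)
    neighbors: list   # names of BSs able to absorb its traffic


def greedy_switch_off(stations):
    """Switch off BSs greedily, visiting them by increasing initial load.

    A BS is switched off only if its current load can be split evenly
    among its still-active neighbors without exceeding any neighbor's
    capacity (a crude, hypothetical stand-in for a QoS check).
    Returns (set of switched-off names, resulting load per BS).
    """
    by_name = {bs.name: bs for bs in stations}
    load = {bs.name: bs.load for bs in stations}
    off = set()
    for bs in sorted(stations, key=lambda b: b.load):
        active = [n for n in bs.neighbors if n not in off]
        if not active:
            continue  # no active neighbor can take over: keep the BS on
        share = load[bs.name] / len(active)
        if all(load[n] + share <= by_name[n].capacity for n in active):
            for n in active:
                load[n] += share
            load[bs.name] = 0.0
            off.add(bs.name)
    return off, load


stations = [
    BaseStation("A", 0.1, 1.0, ["B", "C"]),
    BaseStation("B", 0.5, 1.0, ["A", "C"]),
    BaseStation("C", 0.6, 1.0, ["A", "B"]),
]
switched_off, new_load = greedy_switch_off(stations)
print(switched_off, new_load)
```

In this toy instance only the least-loaded BS is switched off: once its traffic has been redistributed, neither remaining BS can absorb the other's load within capacity. An exhaustive search would have to examine every subset of BSs, which is why heuristics or learning-based methods are typically preferred in dense deployments.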

3.5. Comparison of Past Energy Efficiency Strategies

Table 2 summarizes the main benefits and limitations of traditional energy optimization strategies used in 4G and early 5G networks, including hardware-based solutions, power control and resource allocation, energy harvesting, network deployment and planning, and switch on/off algorithms.

4. Present Approaches (AI-Driven/5G-Native)

4.1. Reinforcement Learning for BS Activation and Deactivation

As we have mentioned above, in contemporary 5G radio access networks, BSs represent a significant portion of overall energy usage, primarily because they remain active even during periods of minimal traffic. To address this inefficiency, a key approach involves adjusting BS activity dynamically based on spatial and temporal variations in network demand. However, conventional methods, such as heuristic algorithms or threshold-based sleep mode controls, often struggle to adapt effectively within the complex, dense, and fluctuating environments typical of 5G systems. In contrast, reinforcement learning (RL) offers a compelling alternative, allowing intelligent agents to develop optimal control strategies through continuous interaction with the network environment, thereby achieving a balanced trade-off between energy conservation and maintaining QoS.

Within this framework, the decision to switch ON or OFF BSs can be modeled using a Markov Decision Process (MDP). The system state may encompass parameters such as traffic intensity, user mobility and association patterns, BS activity status, and Key Performance Indicators (KPIs) of the network. The set of possible actions generally involves toggling a BS or its components between ON and OFF states. The reward function is crafted to reflect a balance between minimizing energy usage and maintaining acceptable service quality. For instance, the study in [

93] formulates the BS sleep-control challenge as an MDP, addressed through actor–critic algorithms that leverage traffic forecasts from a spatio-temporal model. In a similar vein, recent work on Open RAN platforms [

94] applies deep reinforcement learning to manage RF-frontend activation and deactivation, achieving notable energy savings with minimal impact on user experience. Likewise, Malta et al. explored RL-based energy management strategies tailored for ultra-dense 5G networks [

95]. Multi-agent RL frameworks further enhance coordination among BSs, mitigating risks of coverage gaps and service degradation. Additionally, [

96] proposed the Dynamic Resource Allocation and Grouping (DRAG) algorithm, which integrates Deep Deterministic Policy Gradient (DDPG) with action refinement to select optimal subsets of small-cell BSs. Complementary to this, Wu et al. [

93] combined an RL agent with a Graph-Based Spatio-Temporal Network (GS-STN) to achieve more reliable BS switching. Recent advances also incorporate RL with Reconfigurable Intelligent Surfaces (RIS) and Open RAN architectures to jointly optimize power allocation and BS activation [

97], while hierarchical controllers enable decision-making across multiple levels. RL-based BS on/off methods offer several advantages; despite these promising results, however, they also face several challenges.

Table 3 describes the most important advantages and limits of RL.
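The MDP view of BS switching can be illustrated with a deliberately small tabular Q-learning sketch. It is not the actor–critic or DDPG method of the cited works: the two traffic states, the normalized power and demand values, the QoS penalty weight, and the traffic transition probability are all assumptions chosen for illustration.

```python
import random

LOW, HIGH = 0, 1                     # traffic states
SLEEP, ACTIVE = 0, 1                 # actions
DEMAND = {LOW: 0.05, HIGH: 0.8}      # normalized offered traffic (assumed)
POWER = {SLEEP: 0.1, ACTIVE: 1.0}    # normalized power draw (assumed)
QOS_PENALTY = 5.0                    # weight of unserved traffic (assumed)


def reward(state, action):
    """Negative energy cost minus a penalty for traffic left unserved."""
    unserved = DEMAND[state] if action == SLEEP else 0.0
    return -POWER[action] - QOS_PENALTY * unserved


def next_state(state, rng):
    """Traffic persists with probability 0.8, independent of the action."""
    return state if rng.random() < 0.8 else 1 - state


def train(steps=20000, alpha=0.1, gamma=0.5, epsilon=0.1, seed=0):
    """Epsilon-greedy tabular Q-learning on the two-state toy MDP."""
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in (LOW, HIGH) for a in (SLEEP, ACTIVE)}
    state = LOW
    for _ in range(steps):
        if rng.random() < epsilon:
            action = rng.choice((SLEEP, ACTIVE))
        else:
            action = max((SLEEP, ACTIVE), key=lambda a: q[(state, a)])
        r = reward(state, action)
        nxt = next_state(state, rng)
        best_next = max(q[(nxt, a)] for a in (SLEEP, ACTIVE))
        q[(state, action)] += alpha * (r + gamma * best_next - q[(state, action)])
        state = nxt
    return q


def greedy_policy(q):
    """Best action per state according to the learned Q-table."""
    return {s: max((SLEEP, ACTIVE), key=lambda a: q[(s, a)]) for s in (LOW, HIGH)}


policy = greedy_policy(train())
print({("LOW", "HIGH")[s]: ("SLEEP", "ACTIVE")[a] for s, a in policy.items()})
```

The reward weights encode the energy/QoS trade-off described above: raising `QOS_PENALTY` makes sleeping less attractive even at low load. Realistic state spaces (per-cell load, user association, KPIs) are far too large for a table, which is what motivates the deep RL variants discussed in this section.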

4.2. Deep Learning for Traffic Prediction and Proactive Energy Saving

In modern 5G networks, improving energy efficiency largely depends on anticipating traffic demand ahead of time and implementing proactive control techniques such as activating cell sleep modes, disabling carriers, or adjusting power levels rather than relying exclusively on reactive heuristics. Deep learning (DL) methods, particularly those skilled at modeling spatio-temporal patterns, have emerged as powerful tools for traffic prediction and now play a pivotal role in shaping proactive energy-saving strategies.

The highly dynamic nature of traffic in 5G networks, which fluctuates over time (peak vs. off-peak), across diverse locations, and by service type, poses major challenges for energy management. Traditional static or threshold-based sleep control schemes often miss opportunities for energy savings during low-demand periods, while simultaneously risking QoS degradation when sudden traffic spikes occur.

Through precise short- and medium-term traffic forecasting, DL techniques empower network operators to anticipate low-load intervals and proactively adapt network operating states, for instance, disabling carriers, lowering MIMO ranks, or turning off SCs, thus cutting energy consumption while preserving acceptable QoS.

The typical DL-based workflow for proactive energy saving involves the following:

Data collection and preprocessing: Historical traffic is collected from base stations and enriched with contextual attributes (temporal, spatial, and mobility) and the corresponding spatial adjacency matrices.

Model training: Deep learning architectures are trained on these data, including Long Short-Term Memory (LSTM) networks for temporal modeling, Convolutional Neural Networks (CNNs) for spatial feature extraction, and hybrid CNN-LSTM models enhanced with attention mechanisms.

Traffic forecasting: Short-term prediction of BS traffic demand over successive future intervals.

Energy-saving decision: Based on anticipated low-traffic intervals, the controller activates energy-saving mechanisms, for example, disabling small cells, lowering MIMO layers, or turning off carriers.

Monitoring: To guarantee service quality, the system persistently tracks key QoS metrics, including throughput, latency, and blocking rate.
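The workflow above can be sketched end to end in a few lines. The example below is only a structural illustration: a seasonal-average forecaster stands in for the LSTM/CNN model, and the function names, the sleep threshold, and the blocking-rate target are hypothetical choices rather than values from the cited studies.

```python
from statistics import mean


def seasonal_forecast(history, period, horizon):
    """Predict the next `horizon` samples as the mean of past samples at
    the same position in the period (a simple stand-in for a DL model).
    `history` is assumed to start at phase 0 of the period."""
    if len(history) < period:
        raise ValueError("need at least one full period of history")
    start = len(history)
    forecast = []
    for step in range(horizon):
        phase = (start + step) % period
        forecast.append(mean(history[phase::period]))
    return forecast


def plan_sleep(forecast, low_threshold):
    """Flag each future interval whose predicted load is low enough for
    an energy-saving action (e.g., small-cell sleep or carrier shutdown)."""
    return [load < low_threshold for load in forecast]


def qos_ok(blocking_rate, max_blocking=0.02):
    """Monitoring step: check the measured blocking rate against an
    assumed target so that the plan can be reverted if QoS degrades."""
    return blocking_rate <= max_blocking


# Two "days" of four intervals each (normalized load, illustrative data).
history = [0.1, 0.2, 0.9, 0.8, 0.1, 0.4, 0.7, 0.8]
forecast = seasonal_forecast(history, period=4, horizon=4)
print(forecast, plan_sleep(forecast, low_threshold=0.35))
```

Replacing `seasonal_forecast` with a trained spatio-temporal model leaves the decision and monitoring steps unchanged, which is the main design point: the predictor and the energy-saving controller are decoupled.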

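A simplified predictive energy-saving policy can be expressed as

$$E_{\text{saved}} = \sum_{i} P_i \,\bigl(1 - \hat{a}_i\bigr)\, T_{\text{low}},$$

where $P_i$ is the power consumption of cell $i$, $\hat{a}_i$ is the predicted activity level (between 0 and 1), and $T_{\text{low}}$ is the duration of the low-traffic interval.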
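As a worked illustration, suppose the saving is estimated by summing, over cells, the cell power multiplied by one minus the predicted activity level and by the duration of the low-traffic interval. This reading of the policy, the function name, and the numerical values below are assumptions made for the example.

```python
def predicted_energy_saving(cells, interval_hours):
    """Estimate the energy saved (in Wh) over a low-traffic interval.

    cells: iterable of (power_watts, predicted_activity) pairs, where
           predicted_activity lies in [0, 1].
    Each cell contributes power * (1 - activity) * duration.
    """
    total_wh = 0.0
    for power_w, activity in cells:
        if not 0.0 <= activity <= 1.0:
            raise ValueError("predicted activity must lie in [0, 1]")
        total_wh += power_w * (1.0 - activity) * interval_hours
    return total_wh


# One macro cell (800 W, 25% active) and one small cell (200 W, 50% active)
# over a 4-hour night-time interval: 800*0.75*4 + 200*0.5*4 = 2800 Wh.
print(predicted_energy_saving([(800.0, 0.25), (200.0, 0.5)], 4.0))
```

The estimate is linear in the predicted activity, so forecast errors translate directly into errors in the expected saving, which is one reason prediction accuracy matters for proactive control.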

Recent research has highlighted the strong potential of DL-based traffic prediction in supporting energy-aware management of 5G networks. Jia et al. [

98] developed a hybrid CNN-LSTM framework augmented with a dual attention mechanism to forecast BS traffic load patterns. Their approach was integrated with a particle-swarm-optimized k-means clustering algorithm to detect low-traffic intervals and determine optimal BS switch-off schedules, achieving high prediction accuracy across data from 300 BSs. In parallel, Gao et al. [

99] proposed a Smoothed LSTM (SLSTM) model specifically designed for 5G traffic time-series forecasting, which outperformed conventional algorithms, particularly under noisy and highly variable network conditions.

Another research direction has focused on Federated Deep Learning (FDL) frameworks for distributed and privacy-preserving traffic prediction in BSs. Perifanis et al. [

100] demonstrated that decentralized yet accurate forecasting can substantially enhance network energy efficiency by supporting more adaptive and informed scheduling strategies. In a complementary effort, Hussien et al. [

101] presented a comprehensive survey of machine learning approaches for spatio-temporal traffic prediction in 5G systems, highlighting that hybrid CNN-LSTM models achieved up to 47% lower RMSE compared to classical time-series techniques such as SARIMA. Moreover, the ITU FG-AI4EE technical report [

102] underscores traffic prediction as a key enabler of AI-driven, energy-efficient network management. The report notes that “AI-driven network energy saving can forecast traffic load based on historical data, site coverage, and user behaviors, thereby facilitating appropriate energy-saving strategies.” Taken together, these contributions illustrate how DL-based predictive models, whether centralized, federated, or hybrid, are emerging as a foundational element of proactive energy optimization in 5G and future networks.

Table 4 describes the advantages and limitations of deep learning for traffic prediction and proactive energy saving in 5G networks.

4.3. Self-Organizing Networks and Autonomous AI

Self-Organizing Networks (SONs) have long been a cornerstone of 4G and 5G cellular systems, designed to automate tasks such as configuration, optimization, and fault recovery. Yet, conventional SON frameworks remain largely dependent on rule-based controls and manually defined thresholds, limiting their responsiveness to complex and non-stationary network conditions. The advent of AI, particularly RL, DL, and Federated Learning (FL), has driven the emergence of autonomous AI-driven SON, capable of achieving self-optimization, self-healing, and self-configuration with minimal human oversight.

In dense and heterogeneous 5G environments, manual management of radio resources, mobility, interference, and energy consumption, or reliance on static SON rules, has become unsustainable. Network conditions shift continuously, driven by user mobility, fluctuating traffic loads, and the simultaneous operation of multiple radio access technologies.

AI-empowered SON frameworks allow 5G networks to learn from environment feedback, predict future states, and take autonomous actions to optimize performance metrics such as throughput, latency, and energy efficiency.

AI-driven SON systems generally follow a closed-loop control cycle, often implemented through the MAPE-K (Monitor–Analyze–Plan–Execute over Knowledge) framework:

Monitor: Real-time KPIs, such as cell load, interference metrics, and energy usage, are continuously collected by the system.

Analyze: Machine learning models identify anomalies and forecast future network states using historical data.

Plan: Reinforcement and deep learning agents select optimal actions to enhance network KPIs.

Execute: The controller executes actions such as BS sleep control, handover adjustment, and power scaling.

Knowledge Update: By leveraging feedback, the AI model incrementally refines and expands its knowledge base.
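The closed-loop cycle above can be sketched as a chain of small functions sharing a knowledge base. This is a structural illustration only: the KPI names, the moving-average forecast used in the Analyze step, the sleep threshold, and the latency-based back-off rule are hypothetical, and a real system would replace the Analyze and Plan steps with learned models.

```python
from dataclasses import dataclass, field


@dataclass
class Knowledge:
    load_history: list = field(default_factory=list)
    sleep_threshold: float = 0.2   # assumed initial load threshold


def monitor(kpis, knowledge):
    """Monitor: record the latest cell-load KPI."""
    knowledge.load_history.append(kpis["cell_load"])


def analyze(knowledge, window=3):
    """Analyze: moving-average load forecast over recent samples."""
    recent = knowledge.load_history[-window:]
    return sum(recent) / len(recent)


def plan(forecast, knowledge):
    """Plan: choose an action from the forecast and current threshold."""
    return "sleep" if forecast < knowledge.sleep_threshold else "active"


def execute(action, cell_state):
    """Execute: apply the chosen mode to the cell."""
    cell_state["mode"] = action


def update_knowledge(kpis, knowledge, max_latency_ms=20.0):
    """Knowledge update: halve the threshold when latency degraded,
    making future sleep decisions more conservative."""
    if kpis["latency_ms"] > max_latency_ms:
        knowledge.sleep_threshold *= 0.5


def mape_k_step(kpis, cell_state, knowledge):
    """Run one Monitor-Analyze-Plan-Execute-Knowledge iteration."""
    monitor(kpis, knowledge)
    forecast = analyze(knowledge)
    action = plan(forecast, knowledge)
    execute(action, cell_state)
    update_knowledge(kpis, knowledge)
    return action


knowledge, cell = Knowledge(), {"mode": "active"}
print(mape_k_step({"cell_load": 0.1, "latency_ms": 5.0}, cell, knowledge))
print(mape_k_step({"cell_load": 0.9, "latency_ms": 50.0}, cell, knowledge))
```

Keeping the knowledge base as an explicit object makes the feedback path visible: every decision depends on it, and every iteration may modify it.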

This architecture can be expressed mathematically as

$$\pi^{*} = \arg\max_{\pi} \; \mathbb{E}\!\left[\sum_{t} r_t \,\middle|\, a_t = \pi(s_t)\right],$$

where $\pi$ denotes the SON control policy, $s_t$ represents the network state (traffic load, interference, etc.), $a_t$ the control action (e.g., cell activation/deactivation), and $r_t$ the reward (e.g., energy efficiency improvement).

Recent literature has extensively investigated the role of artificial intelligence in enhancing Self-Organizing Network functionalities for energy-efficient operation in 5G systems. Unlike classical SON approaches that rely on predefined rules and limited adaptability, AI-driven SON frameworks leverage machine learning techniques to enable autonomous and data-driven network optimization under highly dynamic traffic and radio conditions. Comprehensive survey studies provide detailed taxonomies of AI-based energy optimization techniques in 5G networks and demonstrate that intelligent SON mechanisms can jointly optimize power consumption, resource allocation, and network performance across heterogeneous deployments, achieving significant energy savings compared to conventional approaches [

103]. Beyond individual SON functions, broader AI-enabled autonomous network management frameworks combining deep learning and reinforcement learning have been investigated to support scalable, self-adaptive, and energy-aware operation in dense 5G environments [

104,

105]. Overall, these works collectively confirm that AI-enhanced SON constitutes a fundamental pillar of present 5G-native energy optimization strategies, significantly outperforming traditional rule-based SON solutions.

From a standardization standpoint, the ITU-T Focus Group on Autonomous Networks (FG-AN) [

106] recognizes AI-driven SON frameworks as key enablers for the transition toward beyond-5G and 6G systems. This initiative highlights the critical role of closed-loop, intent-based, and self-optimizing management approaches designed to operate with minimal human intervention. Collectively, these efforts underscore the ongoing evolution from rule-based SON architectures toward autonomous, AI-native systems capable of real-time adaptation, proactive optimization, and sustainable energy management in next-generation networks.

Table 5 presents the advantages and limitations of AI-driven SON in 5G systems.

4.4. Energy-Aware Network Slicing

Network slicing (NS) represents a cornerstone innovation in 5G, enabling multiple virtual networks (slices) to operate concurrently on a shared physical infrastructure, each tailored to distinct service requirements such as eMBB, ultra-reliable low-latency communication (URLLC), or massive Machine Type Communication (mMTC). While NS provides unprecedented flexibility and service differentiation, it also poses substantial challenges for energy efficiency due to the dynamic and heterogeneous nature of resource allocation across slices. To mitigate these challenges, energy-aware network slicing has emerged as a promising paradigm, in which AI techniques and advanced optimization algorithms collaboratively manage slice resources to reduce energy consumption while ensuring compliance with QoS constraints.

Conventional network slicing frameworks primarily emphasize performance isolation and QoS guarantees, often overlooking energy efficiency as an optimization objective. Yet, with the rapid expansion of dense small-cell deployments, edge computing, and AI-driven services, energy efficiency has become a critical factor in slice orchestration. Because each slice exhibits distinct latency, reliability, and throughput requirements, static resource provisioning proves both inefficient and energy-intensive. In response, energy-aware network slicing has emerged, aiming to dynamically allocate computational, radio, and transport resources in a way that balances slice-specific performance demands with overarching network energy objectives.

Energy-aware slicing systems typically follow a three-layer architectural model:

Service Layer: Defines the requirements of each slice (e.g., latency, throughput, reliability, and energy targets).

Orchestration Layer: Employs AI/ML-based decision engines (e.g., RL, LSTM) to predict traffic load and allocate resources dynamically.

Infrastructure Layer: Executes the resource allocation actions by controlling the BBUs, Radio Units (RUs), and transport links.

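A simplified formulation of the energy-aware slicing optimization problem is given by

$$\min_{x_{ij},\,p_{ij}} \; \sum_{i}\sum_{j} x_{ij}\,P_{ij} \quad \text{s.t.} \quad R_i \ge R_i^{\min}, \;\; L_i \le L_i^{\max} \;\; \forall i,$$

where $x_{ij}$ denotes resource assignment of BS $j$ to slice $i$, $p_{ij}$ is the transmit power, $R_i$ and $L_i$ are achieved throughput and latency, respectively (bounded by the slice requirements $R_i^{\min}$ and $L_i^{\max}$), and $P_{ij}$ represents power consumption.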
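A toy instance helps show how such a problem behaves. The sketch below assumes, for illustration only, that each slice is assigned to exactly one BS, that a BS draws an idle power plus a load-proportional term when it serves at least one slice, and that unused BSs sleep at zero power. It solves the instance by exhaustive search, which is only viable at this scale; all names and numbers are hypothetical.

```python
from itertools import product


def solve_slicing(slices, stations):
    """Exhaustively assign each slice to one BS, minimizing total power.

    slices:   {name: (min_rate_mbps, max_latency_ms)}
    stations: {name: (idle_power_w, watts_per_mbps, capacity_mbps, latency_ms)}
    Returns (best_power_w, assignment), or (None, None) if infeasible.
    """
    slice_names = list(slices)
    best_power, best_assignment = None, None
    for choice in product(stations, repeat=len(slice_names)):
        served = {bs: 0.0 for bs in stations}
        feasible = True
        for s, bs in zip(slice_names, choice):
            min_rate, max_latency = slices[s]
            if stations[bs][3] > max_latency:
                feasible = False       # latency requirement violated
                break
            served[bs] += min_rate     # serve exactly the required rate
        if not feasible:
            continue
        if any(served[bs] > stations[bs][2] for bs in stations):
            continue                   # BS capacity exceeded
        power = sum(
            stations[bs][0] + stations[bs][1] * served[bs]
            for bs in stations
            if served[bs] > 0          # unused BSs are assumed asleep
        )
        if best_power is None or power < best_power:
            best_power = power
            best_assignment = dict(zip(slice_names, choice))
    return best_power, best_assignment


slices = {"eMBB": (100, 50), "URLLC": (10, 5), "mMTC": (5, 100)}
stations = {"macro": (300, 1.0, 150, 10), "small": (50, 0.5, 60, 2)}
print(solve_slicing(slices, stations))
```

Here the URLLC slice can only meet its latency bound on the small cell and the eMBB slice only fits on the macro cell, so the solver's remaining freedom is where to place the mMTC slice. The search space grows as the number of BSs raised to the number of slices, which is why the literature turns to RL and other learning-based orchestration for realistic deployments.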

Recent research has increasingly explored the integration of artificial intelligence, machine learning, and optimization theory into energy-efficient network slicing mechanisms. An example of an AI-assisted network slicing framework is proposed by Awad Abdellatif et al., who introduce an intelligent slicing architecture that jointly optimizes resource allocation, VNF placement, and other network parameters to improve performance and energy-related KPIs in 5G-and-beyond networks [

107]. To provide a broader context on energy-aware network slicing strategies, several survey and taxonomy efforts have highlighted the importance of energy efficiency in the design and operation of network slicing systems, emphasizing dynamic slice management, resource adaptation, and cross-layer optimization as key enablers for sustainable 5G operation [

108]. In particular, AI-driven decision-making techniques such as reinforcement learning and deep learning have been investigated to dynamically adjust slice resource allocation and power domains according to channel and traffic conditions, significantly reducing overall energy consumption in sliced environments. Other works examine intelligent slice orchestration strategies, including actor-critic learning for coordinated resource and energy control, demonstrating the potential of machine learning for zero-touch network slicing with energy savings [

109]. Collectively, these contributions establish that AI-enabled network slicing is a promising pathway toward energy-efficient, scalable, and adaptive next-generation networks that meet diverse performance and sustainability requirements.

Table 6 summarizes the main benefits and research challenges associated with AI- and ML-driven energy-efficient network slicing mechanisms in 5G and beyond networks.

4.5. Edge Computing and Intelligent Energy Migration

Edge computing has become a vital pillar of 5G architecture, offering ultra-low latency, reduced backhaul congestion, and localized data processing. By positioning computational resources closer to end users, it substantially lowers transmission energy. Yet, the rapid expansion of edge nodes introduces new energy challenges, particularly in distributed resource management and workload balancing. To address these issues, intelligent energy migration has emerged as a promising strategy—dynamically reallocating computational tasks and services between edge and cloud nodes according to energy and performance considerations, thus enabling sustainable operation in 5G and beyond 5G networks.

Traditional centralized cloud architectures struggle to cope with the highly dynamic traffic patterns of 5G, particularly for latency-sensitive and context-aware applications. Deploying edge servers throughout the radio access network enables real-time decision-making but can result in uneven energy consumption when workloads are distributed irregularly. To overcome this challenge, intelligent energy migration has been introduced—an adaptive mechanism that shifts workloads across nodes (e.g., base stations, edge servers, and the core cloud) in response to traffic dynamics, renewable energy availability, and overall network load.

This process relies on AI-driven predictive models to estimate both energy cost and service delay, enabling dynamic trade-offs between efficiency and performance. It is especially critical for energy-constrained BSs that simultaneously support computing, caching, and communication functions.

An energy-aware edge migration system can be modeled as a multi-layer optimization framework consisting of the following layers:

Device Layer: Mobile or IoT devices generating tasks with specific delay and energy requirements.

Edge Layer: Local servers performing computation close to users; responsible for short-term load prediction and migration decisions.

Cloud Layer: Centralized infrastructure for large-scale processing and model training.

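The goal is to minimize the total energy consumption of computation and transmission while satisfying latency and QoS constraints. The optimization problem can be expressed as

$$\min_{x_i,\,p_i} \; \sum_{i} \left( E_i^{\text{comp}} + E_i^{\text{tx}} \right) \quad \text{s.t.} \quad D_i(x_i, p_i) \le D_i^{\max}, \;\; R_i(p_i) \ge R_i^{\min} \;\; \forall i,$$

where $x_i$ denotes the migration decision for task $i$, $p_i$ is the transmission power, $E_i^{\text{comp}}$ and $E_i^{\text{tx}}$ are the computation and transmission energies, and $D_i(\cdot)$ and $R_i(\cdot)$ are delay and rate functions, respectively.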
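For independent tasks, a problem of this kind reduces to choosing, per task, the lowest-energy placement that still meets the deadline. The sketch below illustrates that special case under simple assumed models: computation delay equals cycles divided by CPU speed, transmission delay equals input size divided by link rate, and energy is a per-cycle cost plus transmit power multiplied by transmission time. The dictionary keys and numerical values are hypothetical.

```python
def choose_placement(task, options):
    """Pick the lowest-energy placement that meets the task deadline.

    task:    {"cycles": CPU cycles, "bits": input size, "deadline_s": bound}
    options: {name: {"cpu_hz", "joules_per_cycle", "rate_bps", "tx_power_w"}}
             rate_bps is None for local execution (no transmission).
    Returns (name, energy_joules, delay_seconds), or None if no placement
    satisfies the deadline.
    """
    best = None
    for name, o in options.items():
        t_tx = 0.0 if o["rate_bps"] is None else task["bits"] / o["rate_bps"]
        t_comp = task["cycles"] / o["cpu_hz"]
        delay = t_tx + t_comp
        energy = o["joules_per_cycle"] * task["cycles"] + o["tx_power_w"] * t_tx
        if delay <= task["deadline_s"] and (best is None or energy < best[1]):
            best = (name, energy, delay)
    return best


task = {"cycles": 1e9, "bits": 8e6, "deadline_s": 1.0}
options = {
    "device": {"cpu_hz": 1e9, "joules_per_cycle": 1e-9,
               "rate_bps": None, "tx_power_w": 0.0},
    "edge": {"cpu_hz": 1e10, "joules_per_cycle": 2e-10,
             "rate_bps": 4e7, "tx_power_w": 0.5},
    "cloud": {"cpu_hz": 5e10, "joules_per_cycle": 1e-10,
              "rate_bps": 8e6, "tx_power_w": 0.5},
}
print(choose_placement(task, options))
```

In this instance the cloud is the most efficient per cycle but its slow link violates the deadline, so the edge server is selected. Once placements share limited edge capacity or radio resources, the per-task decisions become coupled and the problem requires the joint optimization or learning-based approaches surveyed in this section.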

Recent research has extensively investigated energy-aware task migration and edge cloud collaboration as fundamental mechanisms for improving the energy efficiency of 5G and beyond networks. Early work by Chen et al. [

110] demonstrated that dynamic computation offloading in mobile edge computing systems can significantly reduce energy consumption by jointly optimizing radio and computational resources across user devices and edge servers. Complementarily, Sardellitti et al. [

111] established a rigorous optimization framework for joint radio computation resource allocation, highlighting the intrinsic trade-off between execution latency and energy efficiency in multi-cell MEC environments. Beyond single-edge optimization, Xu et al. [

112] proposed online learning-based offloading and migration strategies for energy-harvesting MEC systems, demonstrating that collaborative edge–cloud resource coordination can enhance both energy efficiency and service responsiveness under dynamic traffic conditions. More recently, distributed intelligence has emerged as a scalable solution for large-scale deployments. Lim et al. [

113] surveyed the application of federated learning in mobile edge networks, emphasizing its potential to enable decentralized and privacy-preserving intelligence for task offloading and migration decisions while reducing signaling overhead.

Table 7 summarizes the main benefits and limitations of AI-driven energy-aware task migration and edge cloud collaboration mechanisms.

5. Comparison Between Present and Past Approaches

The evolution from conventional energy-saving strategies to AI-driven, 5G-native approaches has profoundly transformed the design of energy-efficient mobile networks. The methods outlined in

Section 3, such as power control and resource allocation, hardware-based enhancements, energy harvesting, and network control/planning through SOO mechanisms, provided the foundation for early 5G energy management. Yet, these techniques largely depended on static optimization and lacked the adaptability needed to address the dynamic complexity of next generation networks. By contrast, the AI-enabled solutions discussed in

Section 4 incorporate autonomy, predictive intelligence, and cross-domain orchestration, enabling more intelligent and sustainable energy management.

Table 8 provides a detailed comparison between the major categories of past strategies and the modern AI-driven solutions, highlighting their objectives, techniques, scalability, and limitations.

From the comparison above, it is clear that early energy optimization strategies laid the groundwork for improving 5G efficiency but were fundamentally constrained by their static design. Classical power control methods, for example, minimized instantaneous transmit power yet lacked adaptability to dynamic traffic fluctuations or user mobility. Likewise, hardware-based solutions enhanced local efficiency but were unable to deliver network-wide optimization. In a similar vein, energy harvesting and SOO algorithms introduced valuable green networking concepts but operated without predictive intelligence or large-scale coordination.

Modern AI-driven approaches address these limitations by incorporating real-time analytics, learning-based control, and holistic orchestration. Reinforcement learning empowers BSs to autonomously switch on or off in response to contextual information and predicted traffic load, while deep learning models forecast traffic evolution to enable proactive resource management. At a broader level, energy-aware network slicing and edge computing architectures facilitate distributed, intelligent, and scalable energy management across heterogeneous infrastructures.

The shift from rule-based, hardware-centric energy strategies to data-driven, AI-powered frameworks represents a fundamental paradigm change in energy efficiency research. While traditional methods remain useful for foundational control and low-complexity deployments, AI-enabled systems introduce adaptability, autonomy, and predictive intelligence capabilities that are essential for 5G and forthcoming 6G networks. Nevertheless, the adoption of such intelligence must be carefully balanced against challenges of computational overhead, model explainability, and cross-domain interoperability.

While the previous discussion highlighted the objectives, techniques, scalability, and limitations of past and present energy-saving strategies, a purely qualitative comparison may not fully capture their practical impact. To complement this analysis,

Table 9 provides a quantitative synthesis of representative approaches. It reports typical energy savings, computational complexity, and deployment scenarios extracted from the literature, thereby offering a clearer view of the trade-offs between conventional pre-5G methods and AI-driven 5G-native solutions. This quantitative perspective reinforces the comparative analysis by illustrating not only how strategies differ in design, but also how they perform in terms of measurable efficiency gains and operational feasibility.

Table 9 synthesizes both past and present energy-efficient strategies in wireless networks. Conventional pre-5G approaches, such as resource allocation, hardware optimization, energy harvesting, and network deployment and planning, achieved moderate energy savings (15–35%) but were often constrained by static assumptions and limited adaptability. In contrast, present AI-driven and 5G-native solutions leverage deep reinforcement learning, deep learning, and intelligent orchestration to deliver higher energy savings (25–40%) while dynamically adapting to traffic variability, edge computing demands, and slicing requirements. Furthermore, the integration of renewable energy into MEC infrastructures highlights a shift toward sustainability alongside efficiency. This comparative analysis underscores the evolution from static, scenario-specific methods to autonomous, data-driven frameworks, offering concrete guidance for operators aiming to balance energy efficiency, scalability, and service quality in future zero-energy and explainable AI-enabled networks.

6. Open Challenges and Future Research Directions

6.1. AI Technique Mapping of 6G KPIs and Use Cases

To strengthen the connection between current AI-based solutions and future 6G requirements, we provide

Table 10. This table explicitly maps representative AI techniques to specific 6G use cases and KPIs, including ultra-reliable low-latency communication, massive IoT, enhanced mobile broadband, and energy efficiency/carbon neutrality. By establishing this mapping, we clarify how existing AI approaches can contribute to meeting 6G performance targets and standardization challenges.

Table 10 highlights the role of different AI techniques in addressing key 6G performance indicators and use cases. Reinforcement learning is particularly suited for URLLC scenarios, where dynamic resource allocation ensures ultra-low latency and reliability. Deep learning-based traffic prediction supports massive IoT by anticipating device activity and enabling scalable scheduling. SONs contribute to scalability in ultra-dense environments by automating network optimization. Energy-aware slicing and green AI models directly address carbon neutrality and energy efficiency targets, while intelligent edge migration enhances both eMBB and URLLC by reducing latency and improving throughput. This mapping demonstrates that AI is not only a driver of innovation but also a critical enabler for achieving 6G standardization goals.

The advent of 6G networks presents a broad spectrum of research opportunities in energy-aware wireless systems. In the following, we highlight key open challenges and outline promising directions that will guide the development of sustainable and autonomous future networks.

6.2. AI Explainability and Operator Trust

As 6G networks evolve toward greater autonomy and AI-native functionality, ensuring trustworthy and transparent decision-making becomes essential. Network operators increasingly require XAI frameworks capable of justifying actions such as dynamic slice reallocation, BS sleep control, and edge migration. Recent studies have introduced architectures that integrate XAI with Large Language Models (LLMs) to support anomaly detection and enable zero-touch service management in 6G systems [

114].

Key research challenges can be summarized as follows:

Interpreting RL/DL models in network control: extending beyond vision and NLP to map learning outcomes to domain-specific parameters such as latency and power states.

Defining operator confidence metrics: establishing criteria to determine when automated decisions are sufficiently “safe” to execute without human intervention.

Balancing accuracy and interpretability: addressing the trade-off where highly accurate models often reduce transparency and explainability.

Embedding XAI in closed-loop management: ensuring that generated explanations can drive corrective actions or enable human-in-the-loop overrides.

Progress in these areas will empower operators to implement AI-native control with built-in auditability, an essential prerequisite for widespread industrial adoption and regulatory compliance.

6.3. Green 6G and Zero-Energy Networks

Energy sustainability stands as a fundamental pillar of future wireless networks. The pursuit of “zero-energy” devices and infrastructure builds on advances in ambient energy harvesting, wireless power transfer (WPT), and ultra-low-power hardware platforms. A notable example is the MILESTONE-6G project, which aims to enable autonomous, networked WPT systems to realize zero-energy massive IoT deployments [

115].

Promising research directions for sustainable 6G include the following:

Holistic energy-neutral network design: integrating RAN, edge computing, backhaul, and IoT devices into a unified, power-aware ecosystem.

Next-generation energy harvesting technologies: leveraging solar, vibration, and RF scavenging in combination with ultra-low-power electronics and advanced sleep mechanisms.

Life-cycle and environmental sustainability: minimizing electronic waste from battery replacements and fostering a circular economy for network components.

Economic and business model innovation: addressing questions of cost responsibility for ambient harvesting infrastructure and exploring how “free energy” can be monetized within telecom markets.

In this vision, green 6G will merge technical breakthroughs with sustainability imperatives, ranging from renewable energy integration to carbon-neutral operations.

6.4. Energy-Aware Intelligent Reconfigurable Surfaces

Reconfigurable Intelligent Surfaces (RIS) and programmable metasurfaces are rapidly emerging as pivotal enablers of 6G wireless environments. By intelligently shaping signal propagation and supporting adaptive reflection, they hold great promise for enhancing energy efficiency. For instance, Tyrovolas et al. [116] investigated “zero-energy RISs (zeRIS),” which harvest ambient energy and operate in passive reflection modes. Complementary studies, such as that of Jumani et al. [117], demonstrated that RIS deployment can reduce overall network power consumption by more than 60%. Promising research directions for RIS include the following:

Joint design of hardware, control algorithms, and energy-harvesting strategies to ensure that the surfaces themselves operate with minimal power consumption.

AI-driven optimization of RIS phase control, SOO, and element segmentation under energy–QoS trade-offs [118].

Integration with Non-Terrestrial Networks (NTNs) and edge computing, enabling energy-efficient coverage in satellite, UAV, and High-Altitude Platform Station (HAPS) deployments [119].

Standardization and interoperability efforts, including the definition of metrics (e.g., bits per Joule), benchmark scenarios, and deployment guidelines for RIS in 6G systems.
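As a point of reference for the bits-per-Joule metric, energy efficiency is the delivered throughput divided by the power consumed. The sketch below shows the arithmetic and what a 60% power reduction would imply for this metric if throughput stayed unchanged. That equal-throughput condition is an assumption made here for illustration, not a result reported in the cited studies, and the throughput and power figures are placeholders.

```python
def bits_per_joule(throughput_bps: float, power_w: float) -> float:
    """Energy efficiency in bit/J: throughput (bit/s) over power (W = J/s)."""
    if power_w <= 0:
        raise ValueError("power must be positive")
    return throughput_bps / power_w


def efficiency_gain(power_reduction: float) -> float:
    """Factor by which bit/J improves when power drops by the given
    fraction and throughput is held constant (an assumption)."""
    if not 0.0 <= power_reduction < 1.0:
        raise ValueError("power_reduction must be in [0, 1)")
    return 1.0 / (1.0 - power_reduction)


# Placeholder figures: 1 Gbit/s served at 1000 W, then at 60% less power.
baseline = bits_per_joule(1e9, 1000.0)
reduced = bits_per_joule(1e9, 1000.0 * (1.0 - 0.6))
print(f"baseline: {baseline:.3g} bit/J, reduced power: {reduced:.3g} bit/J")
print(f"gain factor: {efficiency_gain(0.6):.2f}")
```

Reporting both throughput and power alongside the ratio helps keep benchmarks comparable, because the same bit/J value can come from very different operating points.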

Taken together, RIS technologies provide a concrete pathway toward energy-adaptive propagation environments that actively contribute to green and sustainable network operation.

6.5. Multi-Objective Fusion: Energy + QoS + Reliability + Ultra-Low Latency

Next-generation networks must move beyond treating energy efficiency as a trade-off against other Key Performance Indicators such as throughput, reliability, or latency. Instead, they must pursue joint optimization across these dimensions. Achieving this requires multi-objective frameworks capable of reconciling conflicting goals: energy conservation, ultra-reliable low-latency communication, massive machine-type communications, and enhanced mobile broadband.

Key research challenges ahead include the following:

Formulating comprehensive utility functions that jointly capture energy, latency, reliability, and user experience, and designing AI/ML controllers capable of optimizing them in real time.

Establishing hierarchical control loops, where rapid edge-level decisions address latency requirements while slower, network-wide optimization focuses on energy efficiency, all integrated within a closed-loop system.

Creating benchmarking environments and simulation platforms that realistically reflect multi-objective trade-offs, such as balancing energy consumption against 1 ms latency and 99.999% reliability.

Guaranteeing robustness and SLA compliance, ensuring that energy optimization does not lead to catastrophic failures or unacceptable latency spikes.
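One simple way to picture such a utility function is a weighted sum of normalized KPIs in which SLA limits act as hard constraints rather than as terms to be traded off. The sketch below is only an illustration of that idea: the `Kpis` fields, the weights, the candidate configurations, and their values are all hypothetical, and the 1 ms and 99.999% thresholds are taken from the benchmarking example above.

```python
from dataclasses import dataclass

LATENCY_BUDGET_MS = 1.0       # latency target from the text
RELIABILITY_TARGET = 0.99999  # 99.999% reliability target from the text


@dataclass(frozen=True)
class Kpis:
    energy_rel: float      # energy use relative to an always-on baseline
    latency_ms: float
    reliability: float     # fraction of packets delivered on time
    throughput_rel: float  # throughput relative to the same baseline


def meets_sla(k: Kpis) -> bool:
    return k.latency_ms <= LATENCY_BUDGET_MS and k.reliability >= RELIABILITY_TARGET


def utility(k: Kpis, w_energy=0.5, w_latency=0.2, w_throughput=0.3) -> float:
    """Weighted-sum utility; SLA violations are rejected outright so that
    energy savings can never compensate for a missed latency or
    reliability target."""
    if not meets_sla(k):
        return float("-inf")
    return (w_throughput * k.throughput_rel
            - w_energy * k.energy_rel
            - w_latency * k.latency_ms / LATENCY_BUDGET_MS)


# Hypothetical candidate configurations for one cell.
candidates = {
    "all_on": Kpis(1.0, 0.5, 0.999999, 1.0),
    "micro_sleep": Kpis(0.7, 0.8, 0.99999, 0.95),
    "deep_sleep": Kpis(0.4, 3.0, 0.9999, 0.80),
}
best = max(candidates, key=lambda name: utility(candidates[name]))
print("selected configuration:", best)
```

In this toy setting the most frugal option is excluded because it breaks the latency and reliability limits, which mirrors the requirement that energy optimization must not undermine SLA compliance. A weighted sum is only one possible scalarization; Pareto-based or constrained reinforcement learning formulations are alternatives.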

By overcoming these challenges, 6G can evolve from being merely fast and efficient to becoming truly smart, reliable, and sustainable—a network capable of meeting diverse demands while advancing energy-aware innovation.

6.6. Toward “Zero-Touch Autonomous Networks”

The ultimate vision for 6G networks is the realization of fully autonomous “zero-touch” systems, where orchestration, management, and optimization occur seamlessly without human intervention. Achieving this requires closed-loop, vendor-agnostic automation, self-learning controllers, and trustworthy AI. Early pilot demonstrations of the ETSI Zero-Touch Network and Service Management (ZSM) framework have already shown encouraging results in 6G testbeds [120].

Key enablers and challenges on the path to zero-touch 6G networks include the following:

Full-stack automation spanning the RAN Intelligent Controller (RIC), edge, core, and service orchestration layers.

Federated learning and on-device AI to enable real-time local adaptation, complemented by cloud-scale learning for global policy optimization.

Trust, security, and verification, ensuring autonomous systems can detect anomalies, self-heal, and provide auditable logs for operators and regulators.

Vendor interoperability and open interfaces, allowing plug-and-play modules from diverse suppliers and convergence on standardized APIs.

Human-in-the-loop fallback and governance, recognizing that while “zero-touch” is the ultimate goal, oversight and fallback mechanisms remain essential during transitional phases.
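The combination of autonomous action, auditable logs, and human-in-the-loop fallback can be pictured as a gate in front of the actuator. The sketch below is a purely illustrative pattern and not part of the ETSI ZSM specification: the class names, the confidence threshold, and the example action identifier are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass(frozen=True)
class Proposal:
    action: str        # e.g. an energy-saving action chosen by a controller
    confidence: float  # controller's self-reported confidence in [0, 1]
    explanation: str   # human-readable rationale, e.g. from an XAI module


@dataclass
class ClosedLoopGate:
    """Applies a proposal autonomously only when confidence is high and no
    anomaly is flagged; otherwise escalates to a human operator. Every
    decision is appended to an audit log."""
    min_confidence: float = 0.9  # hypothetical threshold
    audit_log: List[Dict[str, object]] = field(default_factory=list)

    def decide(self, proposal: Proposal, anomaly_detected: bool = False) -> str:
        autonomous = (proposal.confidence >= self.min_confidence
                      and not anomaly_detected)
        outcome = "applied" if autonomous else "escalated"
        self.audit_log.append({
            "action": proposal.action,
            "confidence": proposal.confidence,
            "anomaly": anomaly_detected,
            "explanation": proposal.explanation,
            "outcome": outcome,
        })
        return outcome


gate = ClosedLoopGate()
print(gate.decide(Proposal("sleep_cell_17", 0.97, "low forecast load")))
print(gate.decide(Proposal("sleep_cell_17", 0.55, "uncertain forecast")))
```

Raising `min_confidence` shifts more decisions to operators, which is one way a deployment could move gradually from semi-automated toward zero-touch operation.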

Realizing this vision will demand sustained research, rigorous standardization, and extensive large-scale trials to move from semi-automated systems toward fully autonomous networks.

7. Conclusions

Energy efficiency has become a central design objective in contemporary 5G networks, driven by the rapid growth of mobile data traffic, the densification of radio access networks, and the increasing pressure to reduce both operational costs and carbon emissions. This survey has reviewed the evolution of energy optimization strategies in mobile networks, highlighting the transition from classical pre-5G approaches, such as resource allocation, power control, energy harvesting, and base-station SOO mechanisms, to more advanced, AI-driven, and 5G-native solutions.

A key insight emerging from this study is the existence of fundamental trade-offs between traditional and modern energy-saving strategies. Classical approaches are generally characterized by low computational complexity, deterministic behavior, and ease of implementation, making them attractive for early deployments and legacy systems. However, they often rely on static assumptions, coarse traffic models, and limited network awareness, which restrict their effectiveness in highly dynamic and heterogeneous 5G environments. In contrast, AI-based and self-organizing techniques offer superior adaptability, finer-grained decision-making, and improved energy–performance trade-offs by leveraging real-time traffic prediction, context awareness, and cross-layer optimization. These gains, however, come at the cost of increased system complexity, higher computational overhead, data dependency, and challenges related to model interpretability and operational robustness.

From a practical perspective, this survey suggests several concrete recommendations for mobile network operators. First, rather than replacing legacy mechanisms entirely, hybrid energy management frameworks that combine proven pre-5G techniques (e.g., sleep modes and power control) with AI-assisted decision layers represent a pragmatic and cost-effective migration path. Second, operators should prioritize traffic-aware and load-adaptive solutions, particularly in ultra-dense deployments where temporal and spatial traffic variations are pronounced. Third, energy optimization should be tightly integrated with network slicing and edge computing to ensure that energy savings do not compromise service-level agreements for latency-critical and reliability-sensitive applications. Finally, investment in data collection, monitoring infrastructure, and explainable AI models is essential to enable trustworthy and scalable deployment of intelligent energy-saving solutions.

Looking ahead, the transition toward 6G networks further amplifies the importance of energy efficiency and sustainability. Future systems are expected to move toward zero-energy and carbon-neutral operation through the integration of renewable energy sources, intelligent reflecting surfaces, green AI, and holistic life-cycle energy optimization. Addressing these challenges will require interdisciplinary research that bridges wireless communications, artificial intelligence, and sustainable energy systems. Ultimately, the effective deployment of energy-efficient wireless networks will not only enhance economic viability for operators but also play a crucial role in meeting global climate and sustainability objectives.