1. Introduction

Tornadoes are small-scale but highly destructive extreme weather phenomena that typically develop near the base of convective storms and are often accompanied by severe winds, heavy precipitation, and other hazardous conditions [1]. Although their lifetimes are generally short, ranging from several minutes to tens of minutes, their peak near-surface wind speeds can exceed 150 m/s under extreme circumstances, with catastrophic impacts on lives and infrastructure. Consequently, tornadoes have become one of the most threatening natural hazards to human society in the context of ongoing global climate change [2,3]. Statistically, the United States experiences more than 1200 tornadoes annually on average, whereas the annual frequency in China is only approximately 5–10% of that in the United States [4]. Nevertheless, tornadoes in China are predominantly concentrated in densely populated and economically developed regions, such as the Jianghuai Plain, the Huang–Huai Plain, and parts of South China. Moreover, their peak occurrence period (June–August, 14:00–20:00 local time) coincides with periods of intensive human activity, substantially amplifying societal vulnerability and disaster losses [5]. Recent high-impact events, including the 2016 Funing EF4 tornado and the 2020 Gaoyou EF3 tornado in Jiangsu Province, resulted in severe casualties and enormous economic losses, underscoring the urgent need for accurate and timely tornado detection and warning systems [6].

Weather radars, particularly the Doppler weather radar, have long been recognized as the most effective observational tool for tornado monitoring and early warning, owing to their high temporal and spatial resolution and their ability to resolve storm-scale dynamical structures [7]. One of the most prominent radar signatures associated with tornadoes is the Tornado Vortex Signature (TVS), which appears in Doppler radial velocity fields as a region of intense azimuthal shear characterized by adjacent inbound and outbound velocity extrema [8,9]. The identification of TVS has therefore served as a cornerstone for radar-based tornado detection. In addition, the deployment of dual-polarization radar has substantially enhanced tornado monitoring capabilities by providing microphysical information on hydrometeor shape, size, and phase. These additional observables enable improved discrimination between meteorological and non-meteorological echoes and facilitate the detection of Tornado Debris Signatures (TDS), which are strongly indicative of ongoing surface damage caused by tornadoes [10]. More recently, the advancement of phased-array weather radar technology has further improved temporal resolution, allowing for rapid-scan observations that capture the continuous evolution of tornadoes. Such high-frequency measurements provide unprecedented opportunities to resolve fine-scale structural features and dynamical processes during tornado formation and evolution [11].

The development of tornado detection algorithms has evolved from traditional empirical approaches toward modern machine learning and deep learning methodologies. Early tornado identification relied heavily on the subjective visual interpretation of radar imagery by forecasters, as well as on rule-based algorithms employing empirically defined thresholds [12]. These conventional approaches primarily focused on Doppler velocity data and the identification of TVS to infer tornado presence [13]. Within the algorithm generation framework of the U.S. Next Generation Weather Radar (NEXRAD) system, more than 40 operational algorithms have been developed, including the Tornado Detection Algorithm (TDA) and mesocyclone detection algorithms [14,15,16]. Although these methods provide valuable guidance, they are typically constrained by strict physical and threshold-based criteria, which can result in elevated false alarm rates. In operational environments, radar noise, non-meteorological echoes, and data quality issues further degrade algorithm performance, limiting their reliability and robustness [17].

In recent years, the rapid advancement of artificial intelligence and machine learning has ushered tornado detection research into a new stage. Data-driven models are capable of automatically learning discriminative features from large volumes of labeled radar observations and have demonstrated superior performance in tornado detection tasks. A wide range of algorithms, including support vector machines [18], decision trees [19], random forests (RF) [17,20], extreme gradient boosting (XGBoost) [21], and convolutional neural networks (CNNs) [22,23], have been successfully applied, yielding substantial improvements over traditional methods. Representative examples include the tornado probability model TORP developed by the U.S. National Severe Storms Laboratory (NSSL) based on RF [20], the CNN-based tornado detection baseline model (TDA-CNN) proposed by the MIT Lincoln Laboratory [22], and the XGBoost-based approach (TDA-XGB) introduced by Zeng et al. [21].

Despite these advances, the practical deployment of machine learning–based tornado detection models in China faces a fundamental challenge: the relatively low frequency of tornado occurrences limits the availability of high-quality, labeled tornado samples for independent model training and optimization. In contrast, the central United States, often referred to as “Tornado Alley,” experiences exceptionally frequent tornado activity and has accumulated extensive, high-quality radar datasets over decades, providing a robust data foundation for model development. Motivated by this disparity in data availability, this study adopts a cross-domain research framework in which models are trained in a source domain (the United States) and evaluated in a target domain (China). Specifically, using comprehensive U.S. tornado radar datasets [23], we construct three representative detection models (TORP, TORP-XGB, and TDA-CNN) and systematically assess their performance using observations from the China New Generation Weather Radar (CINRAD) network and a limited set of operationally confirmed tornado cases. The primary objectives of this study are to evaluate the adaptability and robustness of different algorithmic paradigms under the Chinese radar system, to quantify the impact of radar system discrepancies on model performance, and to analyze the mechanisms underlying cross-domain performance degradation. These findings aim to provide a theoretical basis for the cross-regional application of tornado detection models and to offer technical guidance for the future development of transfer learning-based automated tornado detection systems.

The remainder of this paper is organized as follows. Section 2 introduces the data sources employed in this study. Section 3 details the data processing methodologies and the associated baseline models. Section 4 presents a comparative performance analysis of the trained models on both the U.S. and Chinese test datasets. Finally, Sections 5 and 6 provide the discussion and conclusions, respectively.

3. Method

3.1. Data Preprocessing

The azimuthal resolution of the radar base data differs between the two datasets used in this study: the TorNet dataset provides radar observations at an azimuthal resolution of 0.5°, whereas the Chinese CINRAD base data have an azimuthal resolution of 1°. Such discrepancies in angular resolution can lead to inconsistencies in gradient-based feature estimation when using the Linear Least Squares Derivative (LLSD) method [27], thereby affecting the comparability of extracted shear-related features across datasets.

To mitigate this issue, the TorNet radar data were downsampled from 0.5° to 1° in azimuth, ensuring consistency with the CINRAD observations. The downsampling procedure was implemented using a reflectivity-weighted averaging scheme for radial velocity fields [28]. Specifically, two adjacent radar gates sampled at a 0.5° azimuthal spacing were grouped into a single averaging window, within which weighted averaging was applied to obtain the final radar value at 1° resolution. This approach preserves physically meaningful velocity information while reducing resolution-induced biases in subsequent gradient calculations. The formulation is expressed as

$$\bar{V} = \frac{\sum_{i=1}^{n} Z_i V_i}{\sum_{i=1}^{n} Z_i},$$

where $V$ denotes radial velocity, $Z$ denotes reflectivity, $n$ represents the number of radial gates in the averaging window ($n = 2$ in this study), and $i$ denotes the radial index within the window.

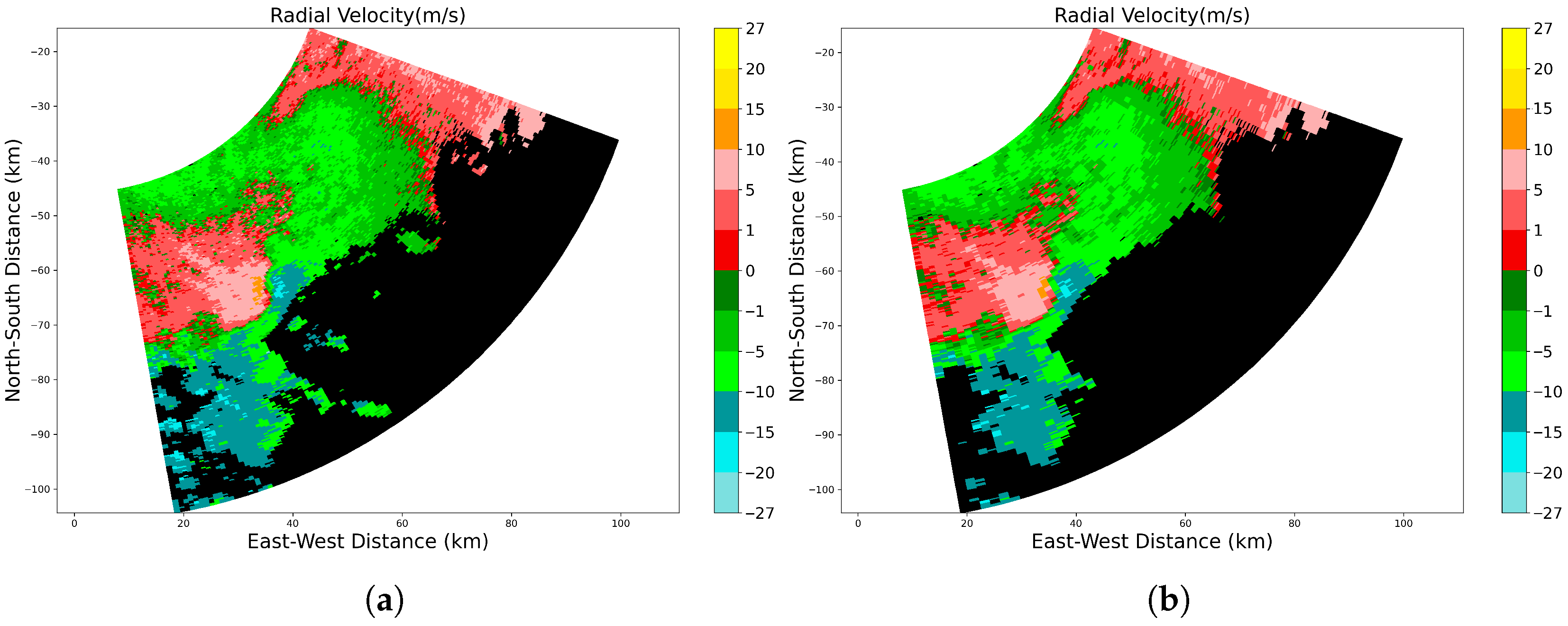

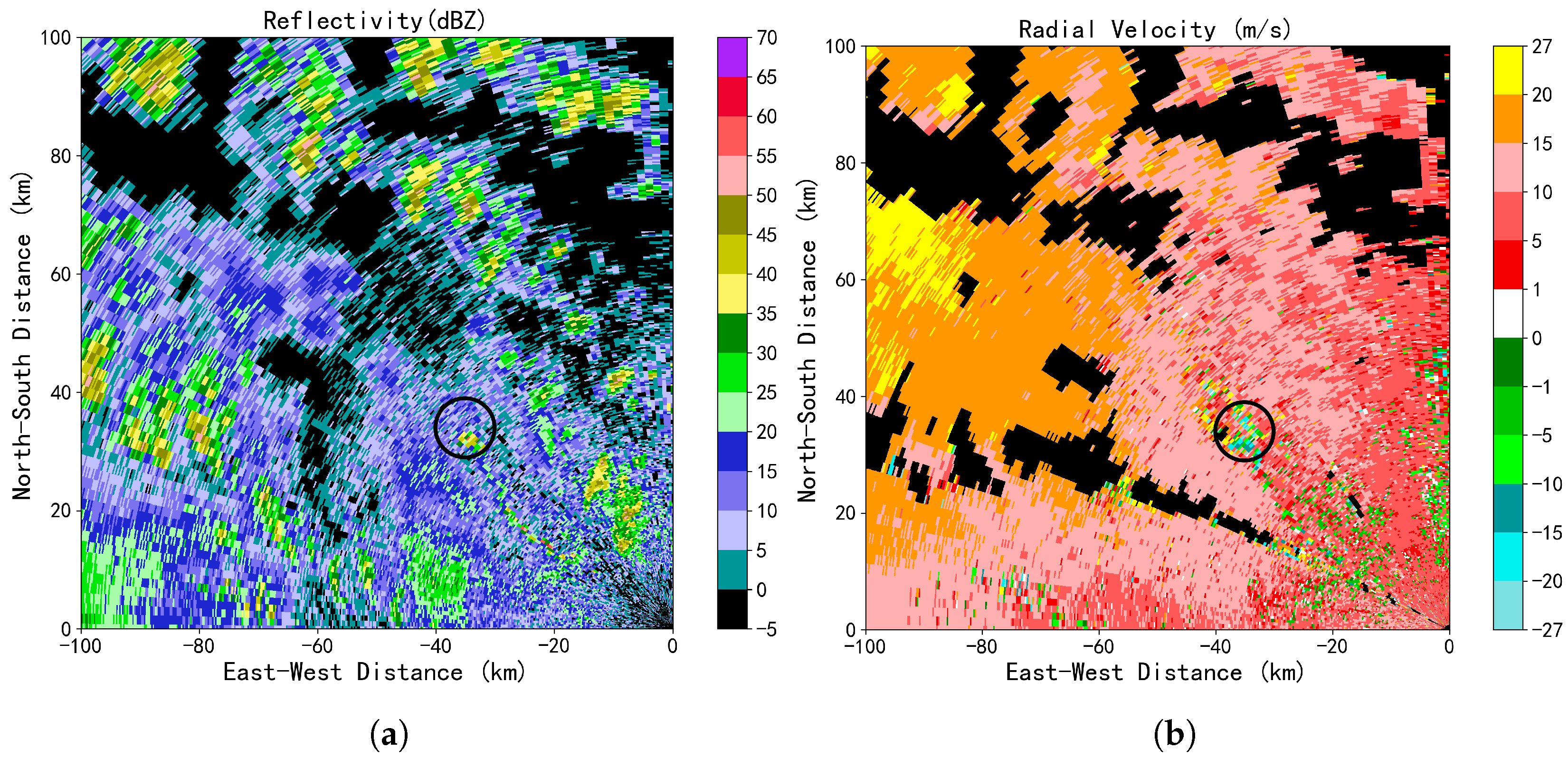

Figure 2 presents a comparative visualization of a representative tornado sample from the TorNet dataset, contrasting the raw data with the downsampled version. As is evident from the figure, the salient vortex signatures are effectively preserved despite the downsampling process. This downsampling procedure was consistently applied to all other radar variables utilized in this study.
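The reflectivity-weighted averaging described above can be sketched in a few lines of Python. This is a minimal illustration, not the code used in the study: the function name `downsample_azimuth`, the `[azimuth][range]` list layout, the use of `None` for missing gates, and the treatment of reflectivity as a non-negative linear weight are all assumptions made for the example.

```python
from typing import List, Optional

Grid = List[List[Optional[float]]]  # [azimuth][range]; None marks a missing gate


def downsample_azimuth(vel: Grid, refl: Grid, n: int = 2) -> Grid:
    """Merge groups of n adjacent rays into one ray.

    Each output gate is the reflectivity-weighted mean of the radial
    velocities at the same range in the n input rays. Gates with missing
    data or non-positive weight are skipped (an assumption of this sketch).
    """
    out: Grid = []
    for start in range(0, len(vel) - n + 1, n):
        row: List[Optional[float]] = []
        for gate in range(len(vel[start])):
            num = 0.0  # running sum of Z_i * V_i
            den = 0.0  # running sum of Z_i
            for ray in range(start, start + n):
                v, z = vel[ray][gate], refl[ray][gate]
                if v is None or z is None or z <= 0.0:
                    continue
                num += z * v
                den += z
            row.append(num / den if den > 0.0 else None)
        out.append(row)
    return out
```

With two rays at one range gate, velocities of 10 and 20 m/s and weights of 1 and 3 give a merged value of 17.5 m/s, closer to the gate with the stronger echo.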

3.2. Feature Extraction

For machine learning-based tornado detection, models typically require carefully designed statistical features derived from radar observations. In this study, LLSD was employed to compute shear-related gradient features. Compared with traditional finite-difference schemes, LLSD estimates gradients by fitting a local plane through least-squares optimization, providing more robust and noise-resistant gradient estimates in Doppler velocity fields [27].

Six fundamental radar variables were used as inputs: DBZ, VEL, WIDTH, ZDR, RHOHV, and KDP. For each radar variable field, only valid echo regions with reflectivity values exceeding 20 dBZ were retained. To suppress small-scale, physically insignificant isolated echoes, two successive median filtering operations were applied. Subsequently, a single morphological dilation was performed to enhance echo connectivity and improve the completeness of target structures.

Using the LLSD method, three types of gradients were computed for each radar variable: azimuthal gradients, radial gradients, and total gradients. These gradients characterize spatial variations in radar observables along azimuthal and radial directions and are particularly effective for capturing rotational signatures associated with tornadoes. In the LLSD formulation, the kernel size defines the local neighborhood used for plane fitting; radar observations within the kernel are weighted according to their spatial proximity, with radial and azimuthal offsets measured relative to the kernel center. The fitted plane yields estimates of the radial and azimuthal gradient components, along with a constant offset term:

$$(u_0, u_r, u_s) = \arg\min_{u_0,\, u_r,\, u_s} \sum_{k=1}^{N} w_k \left( u_k - u_0 - u_r\,\Delta r_k - u_s\,\Delta s_k \right)^2,$$

where $N$ denotes the size of the LLSD computational kernel, defined as the local radar-data neighborhood used to fit the least-squares plane (in our experiments, a kernel size of 750 m × 2500 m was employed to balance noise suppression and small-scale vortex preservation); $u_k$ represents the radar-variable observation (e.g., radial velocity or reflectivity) at the $k$th grid cell within the kernel; $w_k$ is the corresponding weight assigned to the $k$th grid cell; and $\Delta r_k$ and $\Delta s_k$ denote the radial and azimuthal offsets of the $k$th grid cell relative to the kernel center, respectively. The parameter $u_0$ is the intercept term of the linear least-squares fit, while $u_r$ and $u_s$ represent the estimated gradients in the radial and azimuthal directions, respectively.
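To make the plane fit concrete, the following sketch solves the weighted least-squares problem for a single kernel by forming the 3 × 3 normal equations and applying Cramer's rule. It is a simplified illustration under stated assumptions: the function name `llsd_fit` and its argument layout are hypothetical, offsets are taken as already expressed in metres, and the weighting scheme is left to the caller because the text does not specify it.

```python
from typing import List, Sequence, Tuple


def _det3(m: Sequence[Sequence[float]]) -> float:
    """Determinant of a 3x3 matrix."""
    return (
        m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
        - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
        + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0])
    )


def llsd_fit(
    values: Sequence[float],
    dr: Sequence[float],
    ds: Sequence[float],
    weights: Sequence[float],
) -> Tuple[float, float, float]:
    """Weighted least-squares fit of u ~ u0 + ur*dr + us*ds over one kernel.

    Returns (u0, ur, us): the intercept, the radial gradient, and the
    azimuthal gradient. For radial velocity, us is the azimuthal shear.
    """
    a: List[List[float]] = [[0.0] * 3 for _ in range(3)]  # normal matrix
    b: List[float] = [0.0] * 3  # right-hand side
    for u, r, s, w in zip(values, dr, ds, weights):
        basis = (1.0, r, s)
        for i in range(3):
            b[i] += w * basis[i] * u
            for j in range(3):
                a[i][j] += w * basis[i] * basis[j]
    det = _det3(a)
    if abs(det) < 1e-12:
        raise ValueError("kernel is degenerate; cannot fit a plane")
    solution = []
    for col in range(3):  # Cramer's rule: replace one column with b
        m = [row[:] for row in a]
        for i in range(3):
            m[i][col] = b[i]
        solution.append(_det3(m) / det)
    return solution[0], solution[1], solution[2]
```

Because every gate in the kernel contributes to the fit, a single noisy gate shifts the estimated gradient far less than it would in a two-point finite difference, which is the robustness property noted above.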

After obtaining the gradient fields, azimuthal shear derived from the radial velocity field (Azshear) was selected as the primary indicator for tornado candidate identification. A threshold of 0.006 s⁻¹ was applied based on a statistical analysis of U.S. storm reports from 2011 to 2018, for which approximately 92% of confirmed tornado samples exceeded this threshold [20]. It is worth noting that downsampling the 0.5° U.S. data to 1.0° acts as a spatial smoothing filter, which naturally dampens the extreme gradient peaks. This inherently makes the U.S.-derived threshold slightly more stringent when applied to the coarser target domain data. Radar gates satisfying the threshold condition were spatially clustered using a depth-first search (DFS) algorithm. Starting from an initial gate, the DFS procedure recursively searches neighboring gates and aggregates all spatially connected gates that simultaneously meet the threshold criterion into a single candidate object, thereby forming preliminary tornado targets.
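The clustering step can be illustrated with a short depth-first search over a thresholded Azshear grid. This is a sketch rather than the study's implementation: it uses an explicit stack instead of recursion, assumes 8-connected neighbors, and ignores wrap-around between the last and first azimuths, none of which is specified in the text. The name `cluster_gates` is hypothetical.

```python
from typing import List, Optional, Tuple

Gate = Tuple[int, int]  # (azimuth index, range index)


def cluster_gates(
    azshear: List[List[Optional[float]]], threshold: float = 0.006
) -> List[List[Gate]]:
    """Group connected gates whose Azshear meets the threshold.

    Returns one list of (azimuth, range) indices per candidate object.
    """
    n_az = len(azshear)
    n_rg = len(azshear[0]) if n_az else 0
    seen = [[False] * n_rg for _ in range(n_az)]

    def passes(a: int, r: int) -> bool:
        value = azshear[a][r]
        return value is not None and value >= threshold

    objects: List[List[Gate]] = []
    for a0 in range(n_az):
        for r0 in range(n_rg):
            if seen[a0][r0] or not passes(a0, r0):
                continue
            stack = [(a0, r0)]
            seen[a0][r0] = True
            members: List[Gate] = []
            while stack:  # iterative DFS from the seed gate
                a, r = stack.pop()
                members.append((a, r))
                for da in (-1, 0, 1):
                    for drg in (-1, 0, 1):
                        na, nr = a + da, r + drg
                        if (da or drg) and 0 <= na < n_az and 0 <= nr < n_rg:
                            if not seen[na][nr] and passes(na, nr):
                                seen[na][nr] = True
                                stack.append((na, nr))
            objects.append(members)
    return objects
```

Each returned list is one preliminary candidate; size and range filters can then be applied to these lists.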

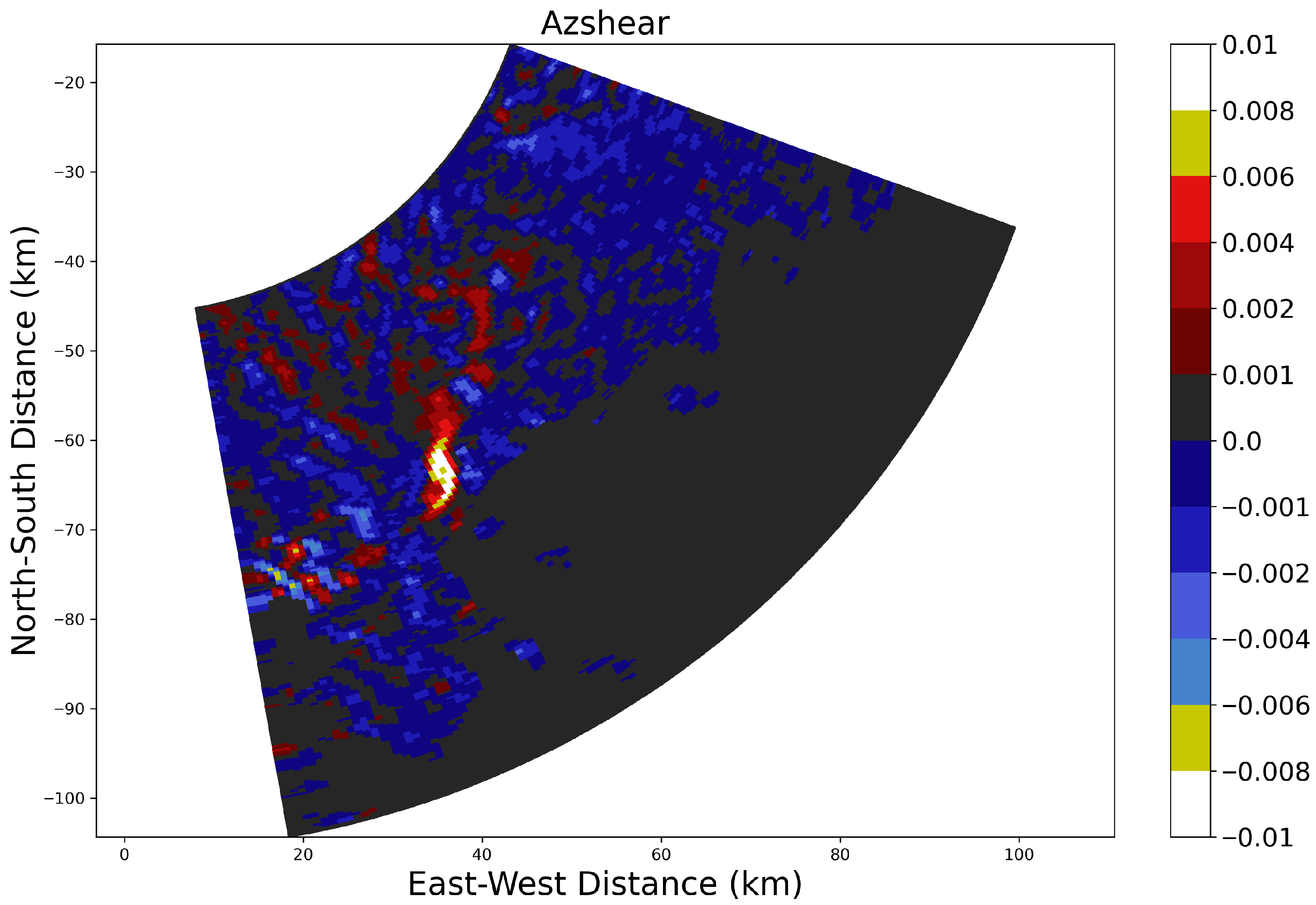

Figure 3 presents a comparative visualization between the raw radial velocity field and the derived Azshear distribution; the Azshear values within the tornado vortex region are significantly amplified compared to the surrounding background flow, exhibiting a distinct high-intensity core.

To reduce the influence of random noise and long-range observational uncertainties, candidate objects consisting of fewer than four radar gates were discarded, and targets with centroid distances from the radar site exceeding 150 km were excluded. Furthermore, candidate objects with centroid separations smaller than 9 km were merged to avoid multiple detections of the same tornado. Once tornado target objects were finalized, a circular region with a radius of 2.5 km centered on the location of maximum Azshear within each object was defined as the feature-extraction domain. Within this region, five statistical descriptors—minimum, 25th percentile, median, 75th percentile, and maximum—were computed for each radar variable and its corresponding LLSD-derived gradient fields. All extracted features were concatenated to form the input feature vectors for the TORP and TORP-XGB models.
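The five statistical descriptors computed inside the 2.5 km feature-extraction domain can be sketched as follows. The helper names, the Cartesian `(x_km, y_km, value)` gate representation, and the linear-interpolation percentile rule are assumptions for illustration; the text does not state which percentile convention was used.

```python
import math
from typing import Dict, Iterable, List, Optional, Tuple


def _percentile(sorted_vals: List[float], q: float) -> float:
    """Percentile with linear interpolation between order statistics."""
    pos = (len(sorted_vals) - 1) * q / 100.0
    lo = int(pos)
    hi = min(lo + 1, len(sorted_vals) - 1)
    return sorted_vals[lo] + (pos - lo) * (sorted_vals[hi] - sorted_vals[lo])


def five_descriptors(values: Iterable[Optional[float]]) -> Dict[str, float]:
    """Minimum, 25th percentile, median, 75th percentile, and maximum."""
    vals = sorted(v for v in values if v is not None)
    if not vals:
        raise ValueError("no valid gates in the extraction domain")
    return {
        "min": vals[0],
        "p25": _percentile(vals, 25.0),
        "median": _percentile(vals, 50.0),
        "p75": _percentile(vals, 75.0),
        "max": vals[-1],
    }


def features_in_radius(
    gates: Iterable[Tuple[float, float, Optional[float]]],
    center: Tuple[float, float],
    radius_km: float = 2.5,
) -> Dict[str, float]:
    """Descriptors of all gate values within radius_km of the center."""
    cx, cy = center
    inside = [v for x, y, v in gates if math.hypot(x - cx, y - cy) <= radius_km]
    return five_descriptors(inside)
```

Applying `features_in_radius` to each radar variable and each of its gradient fields, then concatenating the resulting dictionaries, yields a feature vector of the kind described above.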

3.3. Baseline Models

To evaluate the performance of different algorithmic paradigms in tornado detection, three representative models were selected as baseline approaches: RF, XGBoost, and CNN. RF and XGBoost represent feature-driven machine learning methods that rely on manually extracted physical features, whereas CNNs exemplify data-driven deep learning approaches capable of learning features directly from raw radar observations.

3.3.1. TORP

The RF algorithm serves as a robust baseline, constructing an ensemble of mutually independent decision trees via bootstrap aggregation (bagging) [29]. Unlike single estimators, RF introduces dual stochasticity: randomness in both the training sample space (via bootstrapping) and the feature space (via random subset selection at each split node). This mechanism effectively decorrelates the individual trees, thereby reducing the variance of the model and mitigating the risk of overfitting, which is particularly prevalent in high-dimensional meteorological datasets containing outliers.

In this study, the TORP model is instantiated within the RF framework. It ingests a vector of manually engineered features derived from the LLSD algorithm, encompassing both statistical moments of radar variables (e.g., DBZ cores, VEL couplets) and the morphological attributes of storm cells. By aggregating the probabilistic outputs of the entire forest, TORP achieves a stable consensus prediction, making it highly resilient to the heterogeneous noise often present in operational radar data.

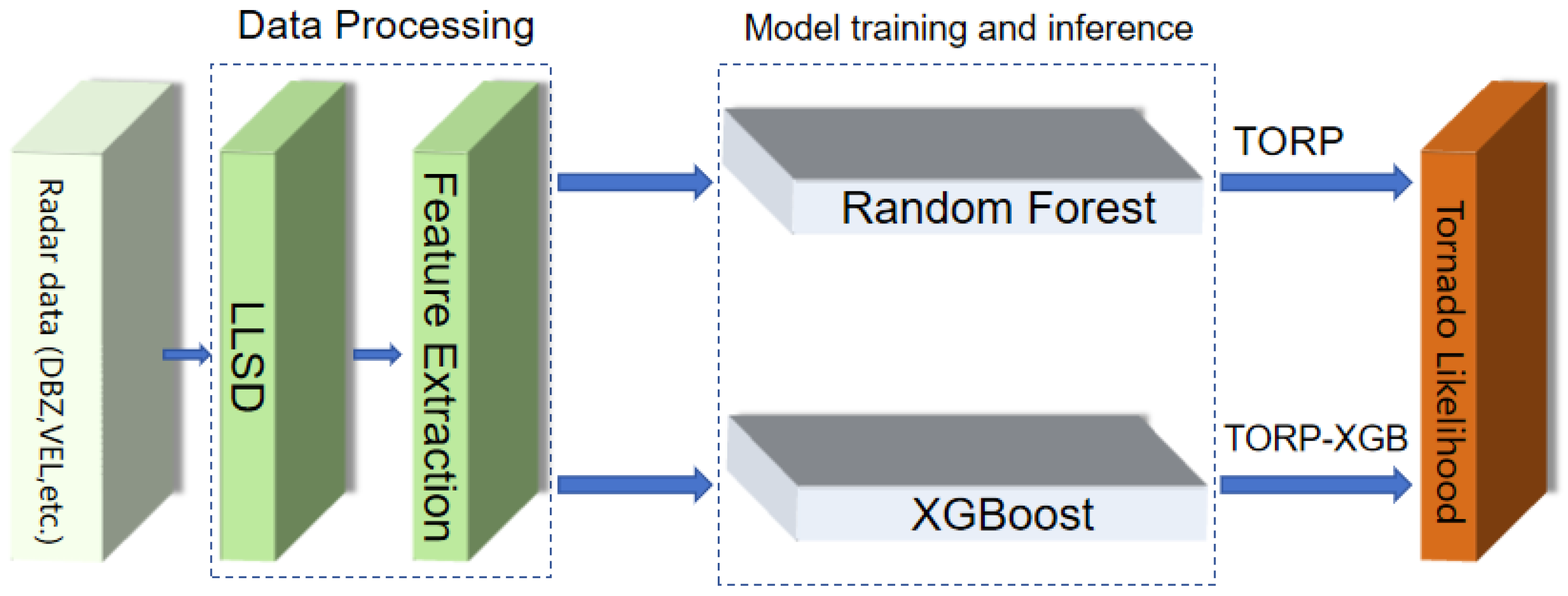

Figure 4 illustrates the integrated operational workflow of the TORP and TORP-XGB algorithms, delineating the end-to-end process from raw radar data preprocessing and feature extraction to the final probabilistic detection output.

3.3.2. TORP-XGB

To explore the potential of boosting strategies, we employ XGBoost, a scalable and highly optimized implementation of gradient boosted decision trees [30]. Unlike the parallel bagging strategy of RF, XGBoost employs a gradient boosting framework, where decision trees are trained sequentially. Each new tree is optimized to fit the residuals of the preceding ensemble, thereby incrementally improving model accuracy.

Crucially, XGBoost integrates a sophisticated objective function that combines a convex loss term with a regularization term (penalizing model complexity), alongside second-order Taylor approximation for precise optimization. Its implementation of weighted quantile sketches and sparsity-aware split finding makes it exceptionally efficient for handling sparse meteorological data and subtle feature interactions. The TORP-XGB model developed herein retains the feature extraction pipeline of TORP but leverages the gradient boosting framework to capture complex, non-linear dependencies among kinematic and microphysical predictors that standard bagging methods might overlook. The operational flowchart for TORP-XGB is detailed in the lower portion of Figure 4.
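For reference, the regularized objective and its second-order approximation take the following standard form in the XGBoost formulation [30]. The notation is introduced here for illustration only: at boosting round $t$, the new tree $f_t$ is chosen to minimize

$$
\mathcal{L}^{(t)} \simeq \sum_{i=1}^{n} \left[ g_i\, f_t(\mathbf{x}_i) + \tfrac{1}{2}\, h_i\, f_t^{2}(\mathbf{x}_i) \right] + \Omega(f_t),
\qquad
\Omega(f) = \gamma T + \tfrac{1}{2}\, \lambda\, \lVert \mathbf{w} \rVert^{2},
$$

where $g_i$ and $h_i$ are the first and second derivatives of the loss with respect to the previous-round prediction for sample $i$, $T$ is the number of leaves in the tree, $\mathbf{w}$ is the vector of leaf weights, and $\gamma$ and $\lambda$ control the strength of the complexity penalty. Terms that do not depend on $f_t$ are omitted.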

3.3.3. TDA-CNN

CNNs are end-to-end deep learning models that automatically learn hierarchical spatial feature representations directly from raw observational data. Through local receptive fields and parameter sharing, CNNs efficiently extract spatial structures while maintaining a relatively compact parameter space. Convolutional layers capture local spatial correlations, pooling layers enhance translational invariance through downsampling, and fully connected layers map high-dimensional feature representations to target classification or regression outputs. Benefiting from their automatic feature learning capability, CNNs have been widely applied to image recognition, object detection, and radar-based meteorological analysis [31].

The TDA-CNN model employed in this study is based on the architecture released by the MIT Lincoln Laboratory, which was specifically designed for tornado detection using the TorNet dataset [23]. The model input is a 14-channel tensor comprising six radar variables (DBZ, VEL, WIDTH, ZDR, RHOHV, KDP) observed at two elevation angles (0.5° and 0.9°), along with a range-folding gate mask. The network backbone consists of four hierarchical convolutional blocks. To address the non-uniform spatial geometry inherent in radar polar coordinates, a CoordConv mechanism is introduced in place of standard two-dimensional convolutions by explicitly incorporating spatial positional information, namely the radial coordinate and its reciprocal, prior to convolution. This design enhances sensitivity to near-radar tornado signatures and effectively integrates radar geometry with deep feature representation.
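The coordinate information used by CoordConv can be illustrated by building the two extra channels, range and reciprocal range, on the same polar grid as the radar data. This sketch covers only the channel construction; the function name `coord_channels`, the kilometre units, and the absence of any normalization are assumptions, and the way the released architecture scales and concatenates these channels is not described in the text.

```python
from typing import List, Tuple

Grid = List[List[float]]  # [azimuth][range]


def coord_channels(
    n_azimuth: int, n_range: int, first_gate_km: float, gate_spacing_km: float
) -> Tuple[Grid, Grid]:
    """Return (r, 1/r) channels for a polar grid.

    Every ray shares the same range axis, so each channel repeats one row
    across all azimuths. first_gate_km must be positive so 1/r is finite.
    """
    if first_gate_km <= 0.0:
        raise ValueError("first_gate_km must be positive")
    ranges = [first_gate_km + k * gate_spacing_km for k in range(n_range)]
    r_channel = [list(ranges) for _ in range(n_azimuth)]
    inv_channel = [[1.0 / r for r in ranges] for _ in range(n_azimuth)]
    return r_channel, inv_channel
```

The reciprocal channel grows rapidly toward the radar, which gives the convolution a direct cue about where a fixed azimuthal spacing corresponds to a small physical distance.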

Figure 5 depicts the data processing pipeline of the TDA-CNN. The architecture enables the automatic mapping from raw multi-channel radar observations to a final tornado probability score via successive convolution and pooling operations.

A notable challenge in applying this architecture to CINRAD is the discrepancy in volume coverage patterns (VCP), specifically the absence of the 0.9° elevation scan. To address this, we implemented a data adaptation strategy wherein the lowest-elevation data (0.5°) are duplicated to populate the 0.9° input channel. While this reduces the amount of independent vertical information, it preserves the channel dimensionality required by the pre-defined architecture, allowing the deep feature extractors to function on the available distinct polarimetric fingerprints.
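The adaptation amounts to copying each low-tilt field into the slot the network expects for the second tilt. A minimal sketch, assuming a hypothetical dictionary layout keyed by `(variable, tilt_index)` and a hypothetical function name `duplicate_low_tilt`:

```python
from typing import Dict, List, Tuple

Grid = List[List[float]]  # [azimuth][range]
VARIABLES = ("DBZ", "VEL", "WIDTH", "ZDR", "RHOHV", "KDP")


def duplicate_low_tilt(low_tilt: Dict[str, Grid]) -> Dict[Tuple[str, int], Grid]:
    """Fill both tilt slots from the single available 0.5-degree scan.

    Tilt index 0 holds the observed 0.5-degree field; tilt index 1 holds an
    independent copy standing in for the missing 0.9-degree field.
    """
    channels: Dict[Tuple[str, int], Grid] = {}
    for name in VARIABLES:
        field = low_tilt[name]
        channels[(name, 0)] = [row[:] for row in field]
        channels[(name, 1)] = [row[:] for row in field]
    return channels
```

Because the two slots are identical, any learned filter that responds to differences between tilts receives zero signal on CINRAD input, which is one concrete way the loss of vertical information can affect the network.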

4. Results

To ensure a rigorously objective and unbiased comparison of algorithmic performance across the divergent operational environments of the United States and China, a unified evaluation framework was enforced. All three candidate models (TORP, TORP-XGB, and TDA-CNN) were subjected to identical testing protocols and spatiotemporal matching criteria within their respective domains.

Given the inherent spatial uncertainty in radar sampling and the rapid translational motion of tornadic supercells, a rigid point-to-point matching is physically impractical. Consequently, we adopted a spatiotemporal proximity criterion rooted in mesoscale dynamics. A prediction is classified as a true positive (TP) if the model-inferred tornado centroid falls within a 5 km radial tolerance and a min temporal matching window (two consecutive radar volume scans) of a verified tornado report. This threshold was selected to approximate the typical diameter of a mesocyclone or the representative scale of a TVS, accounting for potential navigational errors and grid discretization. Conversely, a false positive (FP) is recorded when the model triggers a detection absent of any verified event within the 5 km neighborhood, while a false negative (FN) denotes a failure to generate a detection signal for a confirmed tornado.
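The matching rule can be sketched as a simple proximity test in space and time. This is an illustration under assumptions: events are represented as hypothetical `(x_km, y_km, time_min)` tuples in a local Cartesian frame, the temporal tolerance is a required argument because its value is not fixed here, and hits are counted per detection while misses are counted per report.

```python
import math
from typing import List, Tuple

Event = Tuple[float, float, float]  # (x_km, y_km, time_min)


def _is_close(a: Event, b: Event, max_km: float, max_minutes: float) -> bool:
    """True if two events agree within both spatial and temporal tolerance."""
    distance = math.hypot(a[0] - b[0], a[1] - b[1])
    return distance <= max_km and abs(a[2] - b[2]) <= max_minutes


def match_detections(
    detections: List[Event],
    reports: List[Event],
    max_minutes: float,
    max_km: float = 5.0,
) -> Tuple[int, int, int]:
    """Return (TP, FP, FN) counts for one evaluation period."""
    tp = sum(
        1
        for d in detections
        if any(_is_close(d, r, max_km, max_minutes) for r in reports)
    )
    fp = len(detections) - tp  # detections with no verified report nearby
    fn = sum(
        1
        for r in reports
        if not any(_is_close(d, r, max_km, max_minutes) for d in detections)
    )
    return tp, fp, fn
```

A stricter scheme would enforce one-to-one pairing between detections and reports; the counting rule above is the simplest one consistent with the definitions of TP, FP, and FN given in the text.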

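To quantify detection skill, we employed four standard contingency table metrics that are widely adopted in severe weather verification: Probability of Detection (POD), False Alarm Ratio (FAR), Critical Success Index (CSI), and Frequency Bias (BIAS) [32]. These are mathematically defined as follows:

$$\mathrm{POD} = \frac{TP}{TP + FN}, \qquad \mathrm{FAR} = \frac{FP}{TP + FP}, \qquad \mathrm{CSI} = \frac{TP}{TP + FP + FN}, \qquad \mathrm{BIAS} = \frac{TP + FP}{TP + FN}.$$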
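The four scores follow directly from the contingency counts. The sketch below uses the standard definitions of POD, FAR, CSI, and frequency bias; the function name `contingency_scores` and the choice to return NaN for undefined ratios are assumptions of this example.

```python
from typing import Dict


def contingency_scores(tp: int, fp: int, fn: int) -> Dict[str, float]:
    """Compute POD, FAR, CSI, and BIAS from hit, false-alarm, and miss counts."""
    nan = float("nan")
    observed = tp + fn  # number of observed events
    forecast = tp + fp  # number of issued detections
    return {
        "POD": tp / observed if observed else nan,
        "FAR": fp / forecast if forecast else nan,
        "CSI": tp / (tp + fp + fn) if (tp + fp + fn) else nan,
        "BIAS": forecast / observed if observed else nan,
    }
```

For example, 6 hits, 2 false alarms, and 4 misses give POD = 0.6, FAR = 0.25, CSI = 0.5, and BIAS = 0.8, indicating a model that detects fewer events than were observed.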

Each metric elucidates a distinct aspect of model efficacy. The POD characterizes the model’s sensitivity (its capability to correctly identify observed events), which is paramount for safety-critical warning systems to minimize missed detections. In contrast, the FAR quantifies operational reliability, where lower values indicate a reduction in false alarms, thereby preserving public confidence. However, given the extreme class imbalance inherent in tornado datasets where non-tornado events vastly predominate, relying solely on POD or FAR can be misleading. Therefore, the CSI, which integrates hits, false alarms, and misses while excluding the dominant true negatives, serves as the most robust indicator of overall detection performance and is treated as the primary ranking metric in this study. Complementing these skill scores, the BIAS provides insight into systematic model tendencies; a value of unity indicates perfect frequency consistency, whereas deviations reveal systematic over-forecasting (BIAS > 1) or under-forecasting (BIAS < 1). In this analysis, POD, FAR, and CSI are utilized to evaluate detection accuracy, while BIAS serves as an auxiliary criterion for calibrating optimal probability thresholds [33].

4.1. Evaluation on the U.S. Dataset

For source-domain evaluation, tornado samples from the TorNet test set during 2020–2022 were used, comprising 464 confirmed tornado events, along with an equal number of WRN and NUL samples. This subset comprises the entire available test dataset for the 2020–2022 period. To maintain class balance and avoid evaluation bias, equal-sized WRN and NUL samples were randomly selected from the corresponding available cases during the same period. These data were independently input into the TORP, TORP-XGB, and TDA-CNN models for tornado detection. Each model outputs a tornado probability, and a detection is declared when the probability exceeds a predefined threshold.

To systematically examine model sensitivity to threshold selection, probability thresholds were varied from 0.1 to 0.9 in increments, and the corresponding POD, FAR, CSI, and BIAS values were computed. The resulting performance curves are shown in Figure 6. Based on these curves, optimal probability thresholds were determined for each model. Importantly, these thresholds were subsequently fixed and directly applied to the Chinese dataset, ensuring a consistent evaluation baseline across regions and enhancing the comparability and operational relevance of cross-domain results.
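A threshold sweep of this kind can be sketched as follows. The example works on per-sample probabilities and binary labels rather than on spatiotemporally matched detections, which is a simplification. The selection rule (highest CSI among thresholds whose BIAS lies within a tolerance of unity) and the parameter `max_bias_dev` are hypothetical stand-ins for the joint CSI and BIAS judgment described in the text.

```python
from typing import List, Sequence, Tuple

Row = Tuple[float, float, float]  # (threshold, CSI, BIAS)


def sweep_thresholds(
    probs: Sequence[float], labels: Sequence[int], thresholds: Sequence[float]
) -> List[Row]:
    """Compute CSI and BIAS at each probability threshold."""
    rows: List[Row] = []
    for t in thresholds:
        tp = sum(1 for p, y in zip(probs, labels) if p > t and y == 1)
        fp = sum(1 for p, y in zip(probs, labels) if p > t and y == 0)
        fn = sum(1 for p, y in zip(probs, labels) if p <= t and y == 1)
        csi = tp / (tp + fp + fn) if (tp + fp + fn) else 0.0
        bias = (tp + fp) / (tp + fn) if (tp + fn) else float("nan")
        rows.append((t, csi, bias))
    return rows


def pick_threshold(rows: Sequence[Row], max_bias_dev: float = 0.2) -> float:
    """Highest-CSI threshold among those with BIAS within max_bias_dev of 1.

    Falls back to all thresholds if none satisfies the BIAS constraint.
    """
    eligible = [r for r in rows if abs(r[2] - 1.0) <= max_bias_dev] or list(rows)
    return max(eligible, key=lambda r: r[1])[0]
```

Once chosen on the source-domain data, the returned threshold is simply reused unchanged on the target-domain data, mirroring the fixed-threshold protocol described above.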

Overall, all three models exhibit the characteristic trade-off between detection capability and reliability: as FAR decreases (i.e., reliability increases), POD gradually declines. The general trends of the curves are similar across models. However, TORP and TORP-XGB consistently outperform TDA-CNN on the U.S. dataset. At low to moderate thresholds, TORP and TORP-XGB maintain relatively high POD and CSI values, whereas TDA-CNN achieves comparable performance only at very low thresholds and exhibits a more rapid performance degradation as the threshold increases.

Considering CSI and BIAS jointly—favoring high CSI while maintaining BIAS close to unity—the optimal probability thresholds were determined as 40% for TORP, 40% for TORP-XGB, and 10% for TDA-CNN. These thresholds were held constant in subsequent evaluations on Chinese radar data.

Table 3 summarizes the performance of the three models at their respective optimal thresholds. Among them, TORP achieves the highest POD and CSI, indicating the strongest overall detection performance on the U.S. test dataset, followed closely by TORP-XGB, while TDA-CNN performs relatively less effectively.

4.2. Evaluation on the Chinese Dataset

To comprehensively assess model applicability under different regional and radar-system conditions, the evaluation procedure for the Chinese dataset strictly followed that used for the U.S. dataset. The probability thresholds determined from the U.S. TorNet evaluation were fixed and applied without modification to the Chinese test dataset, ensuring consistency in cross-regional assessment.

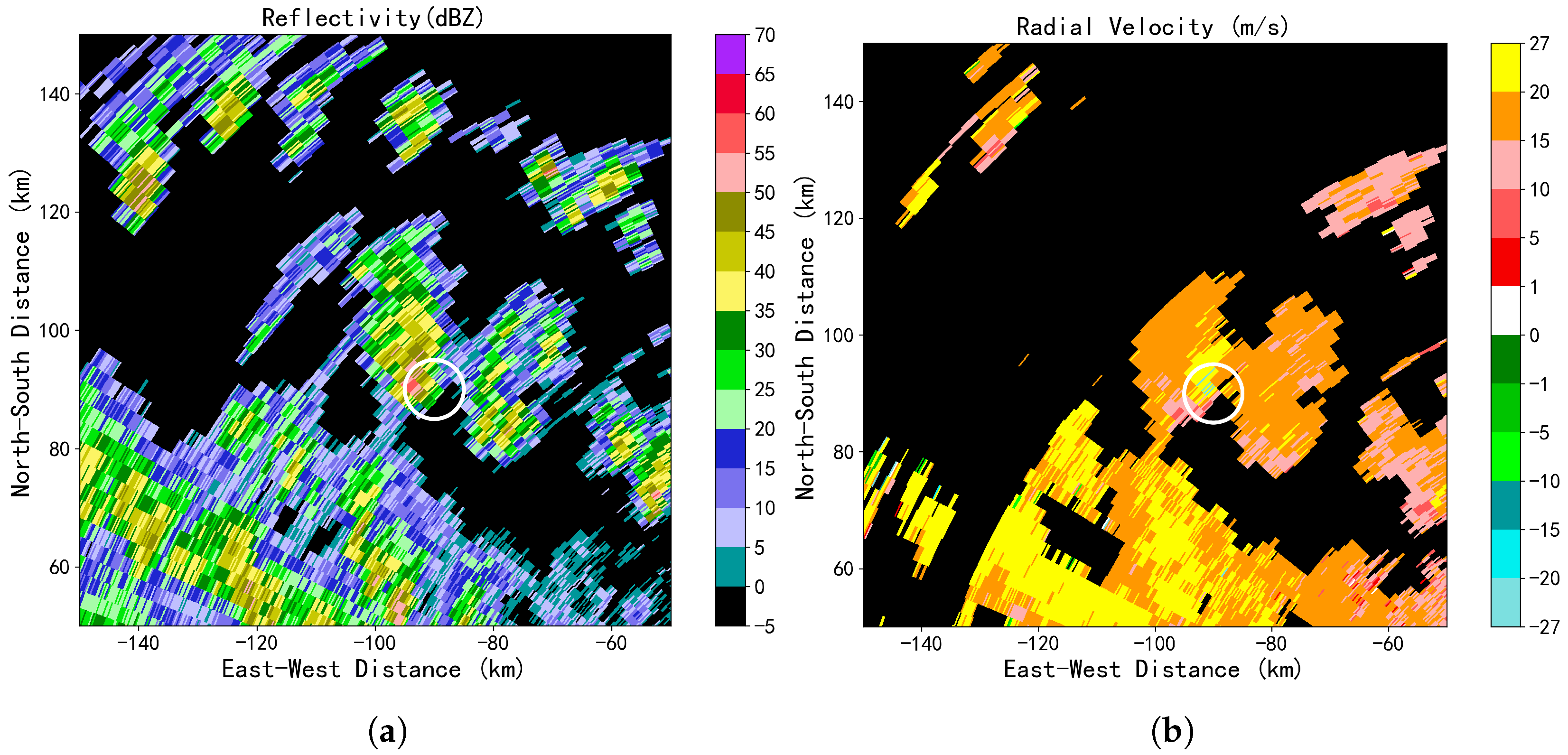

Figure 7 presents a multi-perspective visualization of a correctly detected tornado event in China, which was simultaneously captured by three distinct radar stations. Consistently across these observations, the reflectivity and radial velocity fields exhibit canonical tornadic signatures. In the reflectivity fields, compact hook echo structures are evident near the vortex locations, characterized by intensity values exceeding 40 dBZ. Correspondingly, the radial velocity fields reveal pronounced inbound–outbound velocity couplets with velocity differences surpassing 50 m/s and minimal spatial separation between extrema, indicating intense rotational shear. All three models (TORP, TORP-XGB, and TDA-CNN) successfully identified the tornado target across the scans from all participating radars.

Table 4 provides a comprehensive synthesis of the predictive performance for the three algorithmic paradigms when applied to the target domain (Chinese dataset). A comparative analysis against the source-domain (U.S.) benchmarks reveals a consistent trend of performance attenuation across all models, confirming the challenge posed by domain shifts inherent in differing radar sensing environments. This degradation is quantitatively manifested as a contraction in POD coupled with an inflation in FAR, collectively leading to suppressed CSI scores. However, the magnitude of this skill deterioration varies significantly among the architectures. The TORP model exhibited the most robust stability, suffering the least pronounced drop in detection metrics, followed by the TORP-XGB. Conversely, the TDA-CNN experienced the most substantial decline in performance. This disparity suggests that deep learning models may be more susceptible to overfitting specific sensor characteristics (e.g., NEXRAD data distribution). In contrast, the feature-driven TORP framework demonstrates superior cross-domain generalization capability, indicating that explicit physical features retain higher transferability across heterogeneous radar networks than the latent representations learned by current deep learning architectures.

Figure 8 exemplifies a representative FN scenario, highlighting the limitations of current algorithmic paradigms in identifying weak or disorganized systems. In the horizontal reflectivity field, the convective structure appears notably fragmented, exhibiting relatively attenuated and diffuse echoes. Crucially, the storm lacks the classic supercellular morphology; specifically, there is an absence of a coherent hook echo or a distinct inflow notch, which serves as a primary visual anchor for identifying low-level rotation. Kinematically, the radial velocity signature presents further ambiguity. While a weak inbound–outbound velocity couplet is discernible, the rotational dynamics are ill-defined. The velocity gradients are spatially delocalized rather than exhibiting the sharp, gate-to-gate shear characteristic of a compact mesocyclone or Tornado Vortex Signature. Consequently, this event falls into a “gray zone” for the automated detectors. For the feature-driven models (TORP and TORP-XGB), the calculated physical descriptors—such as rotational velocity and shear intensity—likely fell below the critical decision thresholds required to trigger a positive classification. Similarly, the TDA-CNN failed to resolve the event; the indistinct spatial topology and lack of sharp gradients prevented the convolutional layers from extracting salient high-level feature representations, resulting in the network classifying the pattern as non-tornadic background noise.

A prevalent source of false alarms within the target domain is attributed to radar data quality degradation, specifically contamination from non-meteorological scatterers and signal processing artifacts. Figure 9 illustrates a representative false positive case driven by range folding and ground clutter. In the radial velocity field, these artifacts do not manifest as random noise but rather generate fragmented, alternating inbound–outbound velocity patterns. Due to the aliasing or phase ambiguity inherent in range-folded gates, these discontinuities can inadvertently create spurious azimuthal shear signatures that spatially mimic the velocity couplet of a mesocyclone. Compounding this issue is the reflectivity presentation. The corresponding reflectivity field exhibits localized core values exceeding 30 dBZ. This coincidence creates a “numerical mimicry” in the feature space: the artifacts possess both the shear intensity (from chaotic velocity patterns) and the reflectivity intensity (from clutter or second-trip echoes) required to satisfy the detection criteria. Consequently, during the feature extraction phase, these non-tornadic signatures map to a vector space region that overlaps with the manifold of genuine tornadic vortices. The algorithms, unable to distinguish the texture of biological or terrain clutter from that of hydrometeors, erroneously interpret these high-gradient features as tornadic dynamics, resulting in a high-confidence false alarm.

4.3. Feature Distribution Analysis and Sensitivity Experiment

To elucidate the physical mechanisms driving the observed cross-domain performance attenuation, we conducted a comparative statistical analysis of radar-derived feature distributions between the source (U.S.) and target (China) domains.

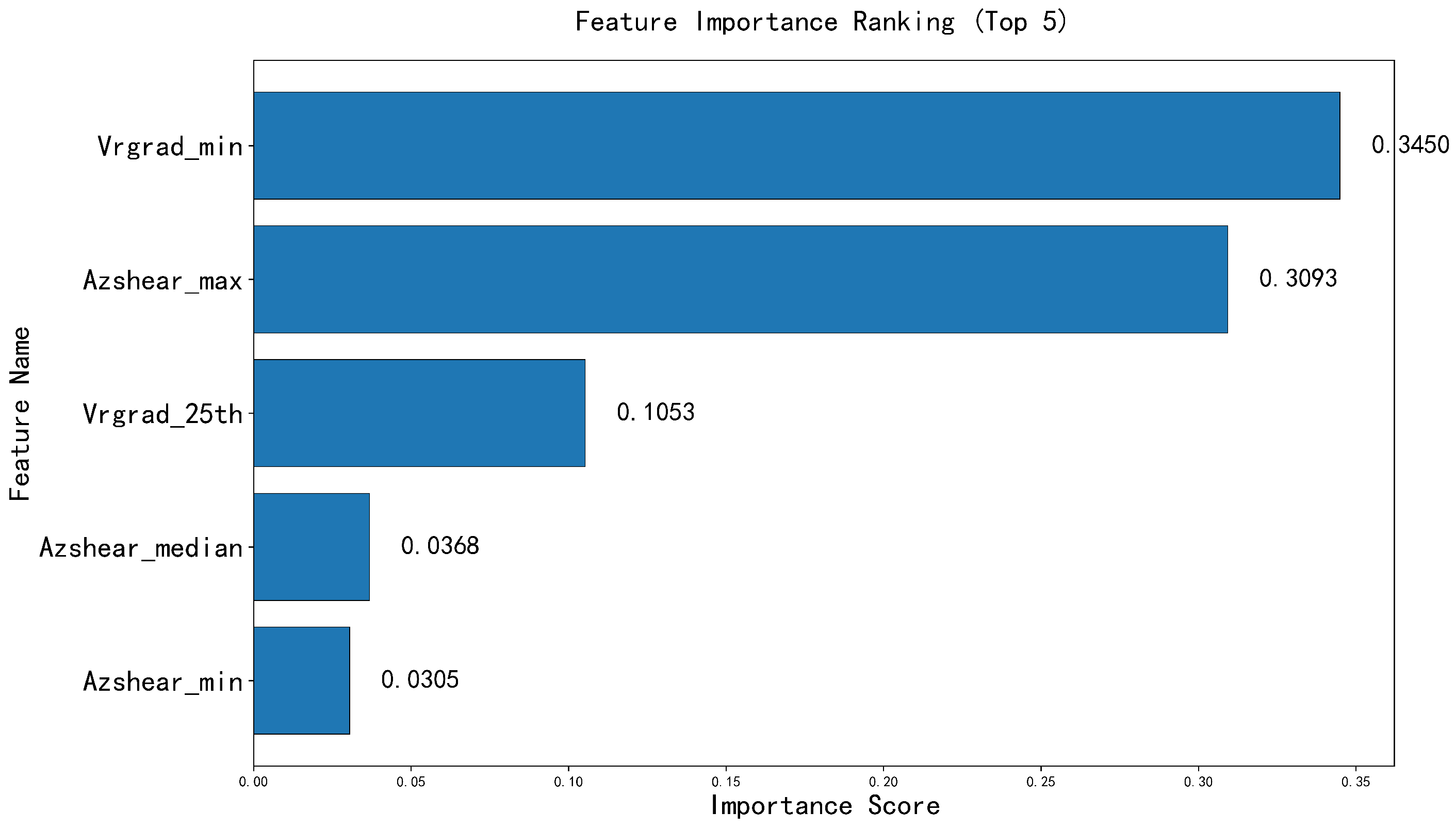

Figure 10 identifies the top-five most significant features based on their Gini importance ranking, derived from the TORP framework during the training phase. These features represent the primary “digital footprints” used by the models to distinguish tornadic vortices from non-tornadic shear. Detailed definitions and physical interpretations of these variables are provided in Table A1 of Appendix A. To ensure statistical validity, the samples used for this characterization were drawn from a held-out validation set, and were strictly independent of the test samples used in previous sections.

The comparative statistics, summarized in Table 5, reveal a systematic “intensity gap” between the two regions. Specifically, radar signatures associated with Chinese tornadoes exhibit significantly attenuated magnitudes compared to their American counterparts across all key metrics. For instance, the median Azshear_max for Chinese samples is 0.0080 s⁻¹, roughly 30% lower than the 0.0115 s⁻¹ observed in U.S. samples (a ratio of about 1.4). Similar reductions are evident in velocity gradients (Vrgrad_min) and median azimuthal shear, suggesting that Chinese tornadic signatures are inherently fainter within the radar reflectivity and velocity fields.

To validate whether this magnitude disparity is the primary driver of performance degradation, we performed a sensitivity experiment using a linear gain adjustment strategy. Based on the statistically derived ratio of feature means, a scalar enhancement factor of 1.35 was applied to the shear-related feature vectors of the Chinese dataset during the inference phase. As presented in Table 6, this recalibration led to a marked improvement in POD for both TORP (0.69) and TORP-XGB (0.54), indicating that aligning the feature manifolds partially mitigates the cross-domain gap.
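The gain adjustment can be sketched on toy data as follows. Only the 1.35 factor comes from the text; the fixed shear threshold is a hypothetical stand-in for a source-trained model, and the sample values and labels are invented for illustration, so the printed scores do not correspond to Table 6.

```python
GAIN = 1.35              # scalar enhancement factor reported in the text
SHEAR_THRESHOLD = 0.010  # hypothetical stand-in for a source-trained decision rule (s^-1)

def detect(azshear_values, gain=1.0, threshold=SHEAR_THRESHOLD):
    """Flag a sample as tornadic when its gain-scaled shear reaches the threshold."""
    return [gain * v >= threshold for v in azshear_values]

def scores(predictions, labels):
    """Return (POD, FAR, CSI) from boolean predictions and labels."""
    hits = sum(p and y for p, y in zip(predictions, labels))
    misses = sum((not p) and y for p, y in zip(predictions, labels))
    false_alarms = sum(p and (not y) for p, y in zip(predictions, labels))
    pod = hits / (hits + misses) if hits + misses else 0.0
    far = false_alarms / (hits + false_alarms) if hits + false_alarms else 0.0
    total = hits + misses + false_alarms
    csi = hits / total if total else 0.0
    return pod, far, csi

# Toy target-domain samples: shear values (s^-1) and tornadic labels.
azshear = [0.0120, 0.0085, 0.0078, 0.0090, 0.0076, 0.0060, 0.0040]
labels = [True, True, True, True, False, False, False]

baseline = scores(detect(azshear), labels)
adjusted = scores(detect(azshear, gain=GAIN), labels)
print("baseline POD/FAR/CSI:", baseline)
print("adjusted POD/FAR/CSI:", adjusted)
```

In this toy case the gain lifts the weaker tornadic samples over the threshold, but it also lifts one non-tornadic sample, so POD and FAR rise together.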

The systematic attenuation of Chinese tornadic signatures compared to U.S. samples can be primarily attributed to distinct climatological storm environments and storm-scale dynamics. Unlike the classic Great Plains supercells in the U.S., which frequently develop in environments with extreme convective available potential energy (CAPE) and produce deep, long-lived, and intense mesocyclones, Chinese tornadoes—particularly those in Jiangsu Province during the Meiyu season—typically occur in environments characterized by lower local instability (lower CAPE) but strong low-level wind shear. Consequently, the parent storms and associated vortices in the Chinese dataset are generally physically smaller in diameter and shallower in vertical extent, and exhibit inherently weaker rotational velocities. These meteorological realities translate directly to the fainter “digital footprints” observed in the target-domain radar feature space.

5. Discussion

The “intensity gap” documented in Section 4.3 introduces a fundamental challenge of covariate shift: the decision boundaries of tree-based models (RF and XGBoost), calibrated on the high-intensity manifold of U.S. supercells, effectively learned a “high activation threshold.” When applied to the systematically weaker Chinese tornado signatures, these source-trained models underestimate event probabilities, which reduces the Probability of Detection (POD). Our linear enhancement experiment (Table 6) serves as a preliminary sensitivity analysis and demonstrates that a 1.35 scalar multiplier can recover a significant portion of the POD. However, this heuristic approach inherently magnifies non-tornadic shear features and environmental noise. The resulting concurrent rise in the FAR yields only a marginal improvement in the overall CSI, demonstrating that simple magnitude scaling cannot fully resolve the cross-domain challenge. Rather, the performance gap is driven by complex physical discrepancies: Chinese mesocyclones are generally smaller in diameter, shallower in vertical extent, and more transient than those of classic U.S. supercells, and they evolve within environments characterized by fundamentally different local instability and vertical shear profiles. Future work should explore more sophisticated, non-linear mapping and feature alignment methods to achieve robust domain adaptation.

The varying degrees of degradation between TORP and TORP-XGB stem from how each ensemble is constructed. TORP, based on a bagging mechanism, aggregates decisions from independent trees, making it more robust to absolute scaling shifts. In contrast, XGBoost’s boosting framework is highly sensitive to the precise split thresholds learned during sequential optimization. When the target-domain feature distributions shift, these learned thresholds become sub-optimal, causing residual errors to accumulate and distorting the decision boundary.

For the CNN-based model (TDA-CNN), the degradation is linked to the activation of convolutional kernels. CNNs learn to detect local spatial textures and gradient structures. Because Chinese tornadoes often manifest as smaller, more transient, and less coherent rotational structures, the deep convolutional layers—trained to recognize the well-organized patterns of U.S. tornadoes—are insufficiently activated. This lack of high-level feature response prevents the final classifier from forming high-confidence decisions, leading to the observed drop in CSI.

Regarding future operational deployment, it is important to emphasize that the performance metrics reported in this study are strictly objective algorithmic outputs. These results are derived using fixed probability thresholds optimized on the U.S. dataset without human intervention. In actual practice at the CMA, these thresholds are not static parameters. To align with public safety priorities and the “over-warning” social preference observed in regions like the U.S., forecasters can dynamically lower the decision thresholds. Such an adjustment would increase the operational POD and reduce missed events, albeit at the cost of a higher operational FAR. This study provides the baseline algorithmic capability, which can be further refined through local adaptation and operational threshold tuning.
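The threshold trade-off described above can be sketched on toy data. The probabilities, labels, and candidate thresholds below are hypothetical and do not come from any model or threshold used in this study.

```python
def pod_far(probabilities, labels, threshold):
    """Return (POD, FAR) when samples with probability >= threshold are warned."""
    pairs = list(zip(probabilities, labels))
    hits = sum(1 for p, y in pairs if p >= threshold and y)
    misses = sum(1 for p, y in pairs if p < threshold and y)
    false_alarms = sum(1 for p, y in pairs if p >= threshold and not y)
    pod = hits / (hits + misses) if hits + misses else 0.0
    far = false_alarms / (hits + false_alarms) if hits + false_alarms else 0.0
    return pod, far

# Hypothetical model probabilities and tornadic labels.
probs = [0.82, 0.55, 0.41, 0.33, 0.47, 0.31, 0.12]
labels = [True, True, True, True, False, False, False]

# Sweep from a stricter to a more permissive decision threshold.
results = {t: pod_far(probs, labels, t) for t in (0.5, 0.4, 0.3)}
for t, (pod, far) in results.items():
    print(f"threshold={t:.1f}  POD={pod:.2f}  FAR={far:.2f}")
```

In this toy example each lowering of the threshold recovers one more tornadic sample and admits one more non-tornadic sample, which is the POD-for-FAR exchange a forecaster would accept when lowering thresholds.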