1. Introduction

Unmanned aerial vehicle (UAV) laser scanning (ULS) has become a core technology for high-resolution three-dimensional geospatial data acquisition [1]. By tightly integrating light detection and ranging (LiDAR) sensors with high-rate global navigation satellite system (GNSS) receivers and inertial measurement units (IMUs), ULS systems enable rapid collection of dense and accurate point clouds under flexible flight conditions [2,3]. Compared with traditional airborne laser scanning (ALS) and terrestrial laser scanning (TLS), ULS offers advantages in deployment flexibility, operational safety, and cost-effectiveness [4], and has therefore been widely applied in topographic mapping [5], infrastructure surveying and inspection [6,7], forestry inventory [8], disaster assessment [9], and digital twin construction [10].

Driven by advances in laser ranging technology, modern ULS systems now operate at pulse repetition rates in the megahertz range [11] and increasingly adopt multi-head configurations [12]. As a result, an individual ULS project routinely generates hundreds of millions to billions of laser points. This dramatic growth in data volume has shifted the efficiency bottleneck of the ULS workflow from field acquisition to office-based data processing. Efficient processing of massive point clouds has thus become a key factor limiting the scalability of ULS applications.

Within the ULS processing chain, direct georeferencing constitutes the first and one of the most data-intensive steps. By using high-frequency GNSS/IMU-derived platform position and attitude (POS trajectory) and system calibration information, direct georeferencing transforms raw laser measurements from the sensor-owned coordinate system (SOCS) directly into a georeferenced map coordinate system, eliminating the need for ground control points and significantly improving operational efficiency [13]. However, rigorous direct georeferencing requires executing a complex sequence of coordinate transformations, interpolations, and geodetic projections for each laser point [14]. When implemented serially, these computations become prohibitively time-consuming for large datasets.

To alleviate such computational bottlenecks, parallel computing has been widely adopted in geospatial data processing [15]. Multicore CPU parallelism using OpenMP and heterogeneous CPU–GPU computing using CUDA have demonstrated significant performance gains in downstream LiDAR tasks such as DEM generation, point cloud segmentation, filtering, and registration. Nevertheless, most existing studies focus on iterative or algorithmically complex analysis stages applied to preprocessed point clouds. In contrast, the direct georeferencing stage, despite being the earliest, most fundamental, and most data-intensive component of the ULS workflow, has received limited systematic investigation regarding its parallelization potential and accuracy-efficiency trade-offs.

Before the widespread adoption of parallel hardware, direct georeferencing of point clouds relied primarily on traditional serial CPU processing. Altuntas et al. [16] performed serial correction of LiDAR point cloud attitude using data from continuously operating reference stations to achieve direct georeferencing. This approach belongs to conventional GNSS post-processing, with its accuracy dependent on serial adjustment of the processing chain, and is mainly applicable to static terrestrial scanning stations. Zhang et al. [17] systematically analyzed the direct georeferencing workflow of airborne LiDAR measurements in national coordinate systems and map projections, identifying multiple geometric distortion factors, including 3D scale, earth curvature, length, and angular distortions. They further proposed both high-precision and approximate map projection correction formulas to compensate for projection distortions within traditional serial point cloud processing pipelines, thereby ensuring point cloud accuracy in national coordinate systems.

While traditional CPU serial computing offers advantages regarding versatility and the handling of complex logic, it faces significant bottlenecks. These include low parallelism inherent to single-core architectures, memory access latency, and inefficiency when processing large-scale datasets.

With the widespread adoption of multi-core CPUs, parallel computing based on shared-memory architectures has become the preferred solution. As an industry standard, OpenMP enables developers to conveniently distribute computationally intensive loops across multiple CPU cores for parallel execution through compiler directives [18]. Owing to its performance portability and ease of use, OpenMP has been widely applied in scientific computing libraries and geospatial data processing tasks [19].

In the LiDAR data processing domain, applications of OpenMP have primarily focused on computationally intensive or algorithmically complex downstream analysis tasks. For the highly time-consuming DEM construction stage, Song et al. [20] proposed a block-based parallel Delaunay triangulation growth algorithm, achieving task-level parallelism in multi-core environments. Experimental results demonstrated significant speedups over serial algorithms when processing large-scale airborne LiDAR point clouds, while maintaining efficient memory usage. To address the clustering demands of massive point cloud datasets, Deng et al. [21] optimized the density-based spatial clustering of applications with noise (DBSCAN) algorithm using OpenMP by parallelizing the neighborhood search of core points, effectively alleviating efficiency bottlenecks in large-scale urban point cloud segmentation. For upstream data transformation in LiDAR processing, Mochurad et al. [22] proposed an OpenMP-based parallel genetic algorithm to determine the current coordinates of LiDAR data.

In recent years, general-purpose computing on graphics processing units (GPGPU) has become a key paradigm shift in high-performance computing [23]. GPUs adopt a high-throughput many-core architecture that integrates thousands of computing units and is specifically designed for large-scale parallel workloads under the single-instruction multiple-thread (SIMT) model [24]. These architectural characteristics have established a typical CPU–GPU heterogeneous computing workflow, in which the CPU is responsible for overall logic control, while the GPU acts as a co-processor to execute computation-intensive kernels [25]. When processing massive and unstructured data such as LiDAR point clouds, this heterogeneous paradigm can significantly overcome the computational limitations of traditional serial algorithms [26].

Similarly, research on GPU parallelization has been largely focused on downstream analysis tasks. In real-time localization, GPU acceleration has been widely applied to point cloud registration algorithms to meet the real-time requirements of autonomous navigation and Simultaneous Localization and Mapping (SLAM) systems. For example, Koide et al. [27] employed a CUDA-optimized parallel normal distribution transform algorithm, achieving speedups ranging from several to tens of times while maintaining accuracy, thereby satisfying the localization demands of high-speed platforms. Methods such as VAN-ICP further reduce the execution time of the ICP algorithm by accelerating approximate nearest neighbor searches through voxel inflation [28]. In preprocessing, GPU acceleration mainly targets fundamental operations such as filtering and ground segmentation to remove noise and separate ground points, providing clean data for subsequent analysis. For instance, Zermas et al. [29] proposed a scanline-based parallel segmentation algorithm that exploits the independent processing of individual scan lines on the GPU, enabling millisecond-level ground extraction. In addition, the application of CPU–GPU heterogeneous computing frameworks in large-scale geospatial point cloud processing further highlights the advantages of GPUs. In DEM generation, interpolation, and analysis of large-scale LiDAR point clouds, heterogeneous parallel algorithms effectively leverage the general-purpose capabilities of multi-core CPUs and the high throughput of GPUs, resulting in substantial performance improvements [30].

Current research on parallel computing for LiDAR data processing is highly concentrated on downstream analysis tasks. These tasks are typically iterative and complex, and are often performed on datasets that have already undergone preprocessing. In contrast, the direct georeferencing process, which is the most fundamental, earliest-stage, and data-intensive component of the entire ULS workflow, has received little systematic investigation and performance evaluation with respect to its parallelization potential.

This study addresses this research gap by conducting a comprehensive evaluation of parallel computing strategies for ULS direct georeferencing. The main contributions are threefold: (1) the detailed formulation and implementation of a rigorous direct georeferencing model and an approximate model incorporating Meridian Convergence Angle Compensation; (2) the design of OpenMP-based multicore CPU and CUDA-based GPU parallel implementations for both models; and (3) a quantitative assessment of accuracy and performance using large-scale real-world ULS datasets, providing practical guidance for engineering applications.

The remainder of this paper is organized as follows. Section 2 presents the theoretical principles and implementation details of the two direct georeferencing models, including the POS data search, interpolation, and parallelization strategies and implementations. Section 3 comparatively analyzes the accuracy and efficiency of serial, OpenMP-based, and CUDA-based parallel implementations using diverse datasets. Section 4 presents the conclusions of this work.

2. Methods

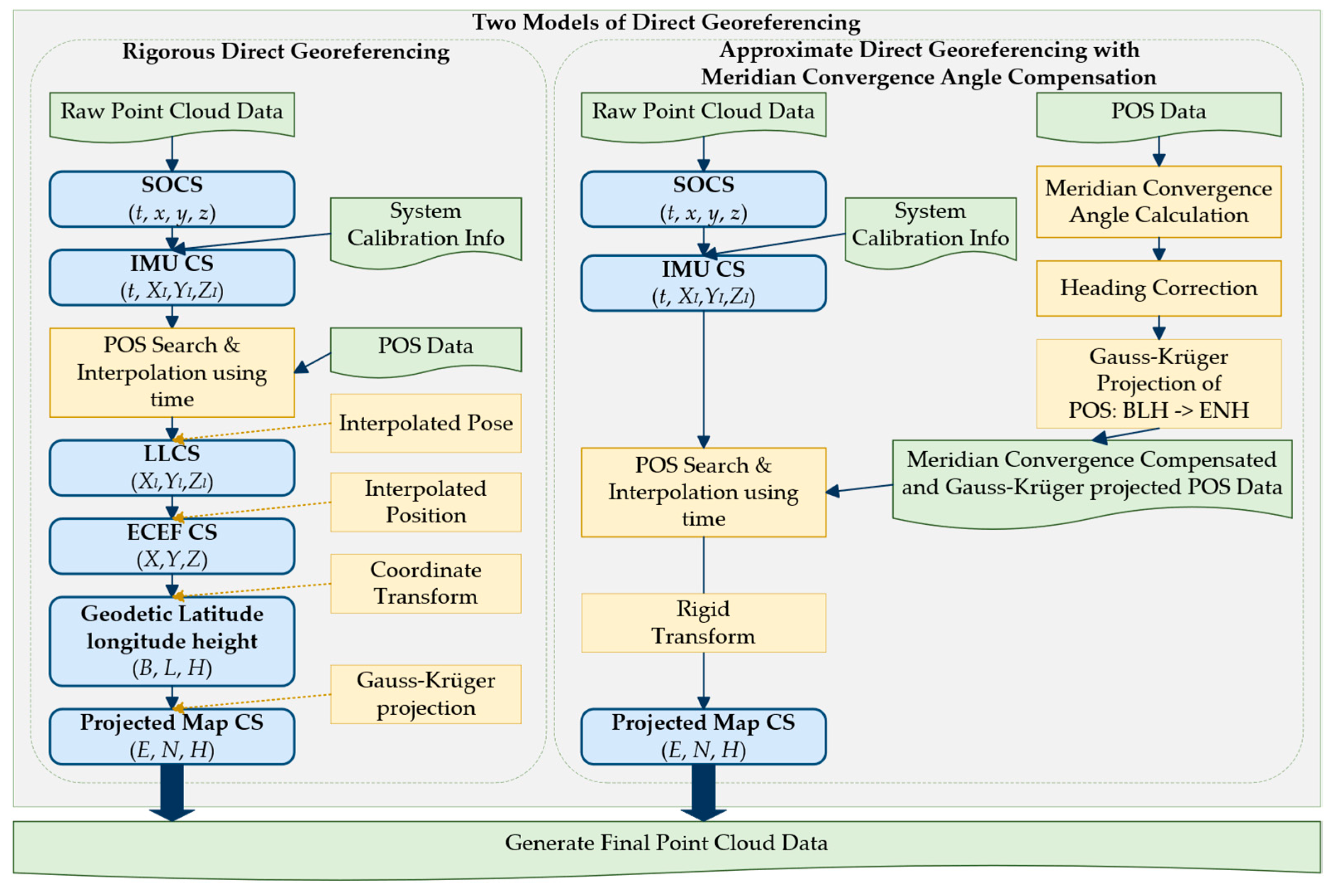

This study implements two models of direct georeferencing: a rigorous model and an approximate model. Furthermore, two parallel computing schemes, OpenMP-based and CUDA-based, are utilized to enhance the data processing efficiency. The overall workflow of the rigorous and approximate direct georeferencing models is illustrated in Figure 1. The left branch corresponds to the rigorous direct georeferencing model, which performs strict point-wise coordinate transformations from the LiDAR SOCS to the projected map coordinate system. The right branch represents the approximate direct georeferencing model, in which meridian convergence angle correction and Gauss–Krüger projection preprocessing are applied to the POS trajectory, simplifying point-wise computations into two rigid-body transformations and thereby enabling efficient processing.

2.1. Rigorous Direct Georeferencing

The rigorous direct georeferencing model strictly follows the complete geodetic transformation chain from the SOCS to the projected map coordinate system, and each laser point undergoes the full sequence of transformations. The raw LiDAR point cloud is recorded in the SOCS; each point carries a timestamp, xyz coordinates, and attributes such as intensity.

To ensure rigorous direct georeferencing accuracy, the trajectory records immediately before and after the precise timestamp of each laser point must be located, and the position and attitude must be interpolated between these two records before any coordinate transformation is applied. The bracketing records are found by binary search [31]. The position $(L, B, H)$ is interpolated by linear interpolation [32], while the attitude is interpolated using spherical linear interpolation [33] to ensure smooth rotational motion. In this way, high-precision attitude angles $(\phi, \theta, \psi)$ and geodetic coordinates $(L, B, H)$ at the laser emission instant are obtained.
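The search and interpolation step can be sketched as follows. This is a minimal illustration in Python rather than the study's implementation; the function names (`bracket`, `lerp`, `slerp`, `interpolate_pos`) and the representation of attitude as unit quaternions `(w, x, y, z)` are assumptions made for the example.

```python
import bisect
import math

def bracket(times, t):
    """Index i with times[i] <= t <= times[i + 1]; times must be sorted ascending."""
    if not times[0] <= t <= times[-1]:
        raise ValueError("timestamp outside trajectory span")
    i = bisect.bisect_right(times, t) - 1   # binary search, O(log M)
    return min(i, len(times) - 2)           # keep i + 1 valid when t == times[-1]

def lerp(a, b, u):
    """Component-wise linear interpolation between two tuples."""
    return tuple(x + u * (y - x) for x, y in zip(a, b))

def slerp(q0, q1, u):
    """Spherical linear interpolation between unit quaternions (w, x, y, z)."""
    dot = sum(a * b for a, b in zip(q0, q1))
    if dot < 0.0:                           # follow the shorter arc
        q1 = tuple(-c for c in q1)
        dot = -dot
    if dot > 0.9995:                        # nearly parallel: normalized lerp is stable
        q = lerp(q0, q1, u)
    else:
        theta = math.acos(dot)
        s0 = math.sin((1.0 - u) * theta) / math.sin(theta)
        s1 = math.sin(u * theta) / math.sin(theta)
        q = tuple(s0 * a + s1 * b for a, b in zip(q0, q1))
    norm = math.sqrt(sum(c * c for c in q))
    return tuple(c / norm for c in q)

def interpolate_pos(times, positions, quats, t):
    """Interpolated position and attitude at laser timestamp t."""
    i = bracket(times, t)
    u = (t - times[i]) / (times[i + 1] - times[i])
    return lerp(positions[i], positions[i + 1], u), slerp(quats[i], quats[i + 1], u)
```

Because the trajectory is sorted once, each lookup costs a logarithmic number of comparisons, and no lookup depends on any other point.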

Using the interpolated navigation parameters, the laser point coordinates are then transformed through a series of coordinate systems. First, the target coordinates in the laser scanner coordinate system are transformed into the IMU coordinate system using the lever-arm offset and the boresight angles between the laser scanner and the IMU. Then, using the interpolated IMU attitude angles (roll, pitch, and heading), the coordinates in the IMU coordinate system are transformed into the Local Level Coordinate System. Finally, based on the latitude, longitude, and ellipsoidal height provided by the GNSS/IMU, the coordinates in the local coordinate system are converted into the WGS84 ECEF coordinate system.

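The formula for transforming a laser point from the laser scanner coordinate system to the WGS84 ECEF coordinate system is as follows:

$$
\mathbf{X}_{\mathrm{ECEF}} = \mathbf{R}_{\mathrm{LL}}^{\mathrm{ECEF}}(L, B)\,\mathbf{R}_{\mathrm{IMU}}^{\mathrm{LL}}(\phi, \theta, \psi)\left[\mathbf{R}_{\mathrm{SOCS}}^{\mathrm{IMU}}(\Delta\phi, \Delta\theta, \Delta\psi)\,\mathbf{X}_{\mathrm{SOCS}} + \mathbf{T}_{\mathrm{SOCS}}^{\mathrm{IMU}}(l_x, l_y, l_z)\right] + \mathbf{T}_{\mathrm{LL}}^{\mathrm{ECEF}}(L, B, H)
$$

where

$\mathbf{X}_{\mathrm{ECEF}}$ is the coordinate of the laser point in the WGS84 ECEF coordinate system;

$\mathbf{X}_{\mathrm{SOCS}}$ is the coordinate of the laser point in the SOCS;

$\mathbf{R}_{\mathrm{SOCS}}^{\mathrm{IMU}}$ is the rotation matrix from the SOCS to the IMU coordinate system;

$\mathbf{T}_{\mathrm{SOCS}}^{\mathrm{IMU}}$ is the translation vector from the SOCS to the IMU coordinate system;

$(\Delta\phi, \Delta\theta, \Delta\psi)$ are the boresight angles between the laser scanner and the IMU system;

$(l_x, l_y, l_z)$ are the lever-arm offsets between the laser scanner and the IMU system;

$\mathbf{R}_{\mathrm{IMU}}^{\mathrm{LL}}$ is the rotation matrix from the IMU coordinate system to the Local Level Coordinate System;

$\mathbf{R}_{\mathrm{LL}}^{\mathrm{ECEF}}$ is the rotation matrix from the Local Level Coordinate System to the WGS84 ECEF coordinate system;

$\mathbf{T}_{\mathrm{LL}}^{\mathrm{ECEF}}$ is the translation vector from the Local Level Coordinate System to the WGS84 ECEF coordinate system;

$(L, B, H)$ are the longitude, latitude, and ellipsoidal height provided by the GNSS/IMU;

$(\phi, \theta, \psi)$ are the attitude angles (roll, pitch, and heading) provided by the IMU.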

To obtain the final planar coordinates of the laser point, the ECEF coordinates $\mathbf{X}_{\mathrm{ECEF}}$ must first be iteratively solved to obtain the geodetic coordinates $(L, B, H)$. Then, the final planar coordinates $(E, N)$ are obtained through the Gauss–Krüger projection.
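The iterative ECEF-to-geodetic step is the reason the rigorous model is costly per point. The sketch below shows one common fixed-point iteration on the WGS84 ellipsoid; the source does not state which iteration scheme the authors use, so this particular scheme, the tolerance, and the function names are assumptions. It is not valid at the poles, where `cos(lat)` vanishes.

```python
import math

A = 6378137.0                 # WGS84 semi-major axis (m)
F = 1.0 / 298.257223563       # WGS84 flattening
E2 = F * (2.0 - F)            # first eccentricity squared

def geodetic_to_ecef(lat, lon, h):
    """Closed-form forward conversion (radians, metres)."""
    n = A / math.sqrt(1.0 - E2 * math.sin(lat) ** 2)
    x = (n + h) * math.cos(lat) * math.cos(lon)
    y = (n + h) * math.cos(lat) * math.sin(lon)
    z = (n * (1.0 - E2) + h) * math.sin(lat)
    return x, y, z

def ecef_to_geodetic(x, y, z, tol=1e-12, max_iter=20):
    """Iterative inverse conversion; returns (lat, lon, h) in radians and metres."""
    lon = math.atan2(y, x)
    p = math.hypot(x, y)
    lat = math.atan2(z, p * (1.0 - E2))          # initial guess assumes h = 0
    for _ in range(max_iter):
        n = A / math.sqrt(1.0 - E2 * math.sin(lat) ** 2)
        h = p / math.cos(lat) - n
        new_lat = math.atan2(z, p * (1.0 - E2 * n / (n + h)))
        converged = abs(new_lat - lat) < tol
        lat = new_lat
        if converged:
            break
    # Recompute the height from the final latitude.
    n = A / math.sqrt(1.0 - E2 * math.sin(lat) ** 2)
    h = p / math.cos(lat) - n
    return lat, lon, h
```

Each point needs several trigonometric evaluations per iteration, which is the per-point cost that the approximate model in Section 2.2 avoids.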

In summary, the rigorous direct georeferencing model requires performing a sequence of strict coordinate transformations for every individual laser foot point. To clearly illustrate this computational process, we summarize the workflow in Algorithm 1. Theoretically, the time complexity of this serial algorithm is $O(N \log M)$, determined by the binary search for each of the $N$ laser points within the $M$ trajectory records, combined with the computationally expensive iterative geodetic transformations. The space complexity is $O(N + M)$, as it primarily requires memory to store the massive point cloud and the trajectory data.

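| Algorithm 1: Rigorous Direct Georeferencing |

Input:

Raw LiDAR Points $P$, where $p_i = (t_i, x_i, y_i, z_i)$, $i = 1, \dots, N$

POS Trajectory $T$, where $s_j = (t_j, L_j, B_j, H_j, \phi_j, \theta_j, \psi_j)$, $j = 1, \dots, M$

Calibration Params: $(\Delta\phi, \Delta\theta, \Delta\psi)$ (Boresight), $(l_x, l_y, l_z)$ (Lever-arm)

Ellipsoid Params: $a$, $f$

Central Meridian: $L_0$

Output:

Georeferenced Points $Q$, where $q_i = (t_i, E_i, N_i, H_i)$

Load $P$ and $T$, and sort by time

for each $p_i$ in $P$ do

// Step 1: SOCS to IMU Coordinate System

X_imu = R_boresight · p_i.xyz + T_leverarm

// Step 2: POS Search and Interpolation

idx = BinarySearch(T, p_i.t)

State_interp = LinearInterpolate(T[idx], T[idx + 1], p_i.t)

Attitude_interp = Slerp(T[idx], T[idx + 1], p_i.t)

// Step 3: IMU to Local Level Coordinate System

R_imu2ll = BuildRotationMatrix(Attitude_interp)

X_ll = R_imu2ll · X_imu

// Step 4: LLCS to ECEF

R_ll2ecef = CalcEarthRotation(State_interp.lat, State_interp.lon)

T_ll2ecef = CalcEarthTranslation(State_interp.lat, State_interp.lon, State_interp.h)

X_ecef = R_ll2ecef · X_ll + T_ll2ecef

// Step 5: ECEF to Geodetic (Iterative)

(lat, lon, h) = ECEFToGeodetic(X_ecef)

// Step 6: Map Projection (Gauss-Krüger)

(E, N) = Gauss-KrügerProjection(lat, lon, $L_0$)

Append (p_i.t, E, N, h) to $Q$

end for

return $Q$ |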

2.2. Approximate Direct Georeferencing with Meridian Convergence Angle Compensation

To reduce the computational cost of processing massive datasets, the approximate model adopts a POS trajectory preprocessing and rigid body transformation strategy. The core idea is to assume that within a local scanning range, the variations in the meridian convergence angle and the Gauss projection scale factor are negligible.

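In this method, the POS trajectory is first preprocessed. The meridian convergence angle $\gamma$ at each trajectory point is computed, and the heading angle is corrected as $\psi' = \psi - \gamma$, where $\psi$ is the heading provided by the IMU. The POS positions are then directly projected into the ENH coordinate system using the Gauss–Krüger projection. Subsequently, the computation of each laser point is simplified to a local rigid body transformation with the POS position taken as the origin:

$$
\mathbf{X}_{\mathrm{map}} = \mathbf{R}_{\mathrm{IMU}}^{\mathrm{map}}(\phi, \theta, \psi')\left[\mathbf{R}_{\mathrm{SOCS}}^{\mathrm{IMU}}\,\mathbf{X}_{\mathrm{SOCS}} + \mathbf{T}_{\mathrm{SOCS}}^{\mathrm{IMU}}\right] + \mathbf{T}_{\mathrm{IMU}}^{\mathrm{map}}(E, N, H)
$$

where

$\mathbf{X}_{\mathrm{map}}$ is the coordinate of the laser point in the map projection coordinate system;

$\mathbf{R}_{\mathrm{SOCS}}^{\mathrm{IMU}}$ and $\mathbf{T}_{\mathrm{SOCS}}^{\mathrm{IMU}}$ are the boresight rotation matrix and lever-arm translation vector from the SOCS to the IMU coordinate system, as in the rigorous model;

$\mathbf{R}_{\mathrm{IMU}}^{\mathrm{map}}$ is the rotation matrix from the IMU coordinate system to the map projection coordinate system;

$\mathbf{T}_{\mathrm{IMU}}^{\mathrm{map}}$ is the translation vector from the IMU coordinate system to the map projection coordinate system;

$(\phi, \theta, \psi')$ are the attitude angles provided by the IMU, representing roll, pitch, and the corrected heading;

$(E, N, H)$ are the POS trajectory coordinates after Gauss projection, corresponding to the easting, northing, and height of the POS position.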
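The heading compensation can be illustrated with a short sketch. It uses the standard series expansion of the Gauss–Krüger meridian convergence truncated after the cubic term, and it assumes the usual sign convention (convergence positive east of the central meridian in the northern hemisphere, grid heading equal to true heading minus convergence). The source does not spell out its formula or sign convention, so both, along with the function names, are assumptions.

```python
import math

E2 = 0.00669437999014          # WGS84 first eccentricity squared
EP2 = E2 / (1.0 - E2)          # second eccentricity squared

def meridian_convergence(lat, lon, lon0):
    """Meridian convergence angle (radians) at (lat, lon) for central meridian lon0."""
    l = lon - lon0
    c2 = math.cos(lat) ** 2
    eta2 = EP2 * c2
    cubic = (l * l * c2 / 3.0) * (1.0 + 3.0 * eta2 + 2.0 * eta2 ** 2)
    return l * math.sin(lat) * (1.0 + cubic)

def grid_heading(true_heading, lat, lon, lon0):
    """Heading relative to grid north, wrapped to [0, 2*pi). Sign convention assumed."""
    return (true_heading - meridian_convergence(lat, lon, lon0)) % (2.0 * math.pi)
```

Since this runs once per trajectory record rather than once per laser point, its cost scales with the trajectory length instead of the point count.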

By incorporating meridian convergence angle compensation and trajectory pre-projection, the approximate model avoids computationally expensive iterative transformations during the point-wise loop. Algorithm 2 details this method, which decouples the process into a ‘Trajectory Preprocessing’ phase and a simplified ‘Point-wise Rigid Transformation’ phase. While the overall time complexity remains $O(N \log M)$, the constant factor is significantly reduced because the heavy projection math is moved to the preprocessing stage (which takes only $O(M)$ time). The space complexity remains $O(N + M)$, with a negligible increase for storing the intermediate projected trajectory.

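| Algorithm 2: Approximate Direct Georeferencing |

Input: Raw LiDAR Points $P$, POS Trajectory $T$, Calibration Params, Ellipsoid Params, Central Meridian $L_0$ (as in Algorithm 1)

Output: Georeferenced Points $Q$

// Phase 1: Preprocessing POS Trajectory (Done once)

Load $T$ of size M

for j = 1 to M do

// Calculate Meridian Convergence Angle

$\gamma_j$ = CalcMeridianConvergence($B_j$, $L_j$, $L_0$)

// Compensate Heading

$\psi'_j = \psi_j - \gamma_j$

// Direct Projection of POS position

($E_j$, $N_j$) = Gauss-KrügerProjection($B_j$, $L_j$, $L_0$)

// Store projected state

T_proj[j] = ($t_j$, $E_j$, $N_j$, $H_j$, $\phi_j$, $\theta_j$, $\psi'_j$)

end for

// Phase 2: Simplified Point Processing

for i = 1 to N do

// Step 1: SOCS to IMU

X_imu = R_boresight · p_i.xyz + T_leverarm

// Step 2: Search and Interpolate in Projected Domain

State_proj = SearchAndInterpolate(T_proj, p_i.t)

// Step 3: Rigid Body Transformation (IMU to Map)

// Construct rotation matrix directly from corrected attitude

R_imu2map = BuildRotationMatrix(State_proj.roll, State_proj.pitch, State_proj.heading)

T_imu2map = (State_proj.E, State_proj.N, State_proj.H)

// Direct transformation without geodetic iteration

X_map = R_imu2map · X_imu + T_imu2map

Append (p_i.t, X_map) to $Q$

end for

return $Q$ |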

2.3. Parallel Direct Georeferencing Architecture and Implementation

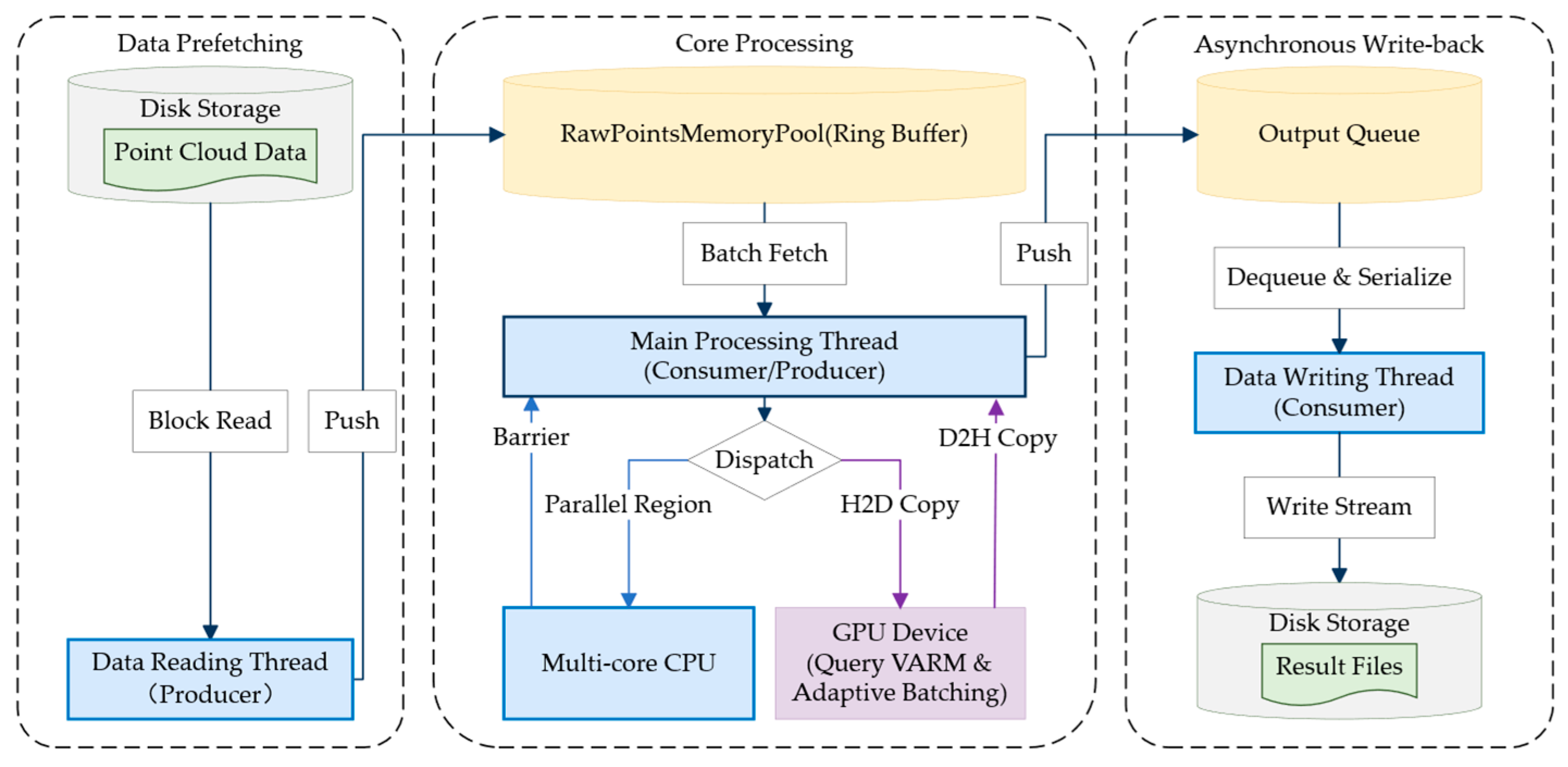

To overcome the I/O wall and memory wall encountered in large-scale ULS data processing, this study designs a direct georeferencing architecture based on a multithreaded pipeline to maximize the overlap between I/O operations and core computation. The overall technical architecture and data flow are shown in Figure 2.

2.3.1. Asynchronous I/O Pipeline Based on Producer-Consumer Model

Direct georeferencing is essentially a dataflow-driven, high-throughput task. In order to solve the speed mismatch problem where the disk I/O speed is far lower than the computing speed, this study constructs a three-stage asynchronous pipeline based on the producer-consumer model.

- (1) Data Prefetching Stage: As the “producer” of the pipeline, a dedicated reading thread continuously loads raw binary point cloud data from disk. To reduce file system I/O overhead, this module adopts a large-block reading strategy, directly filling preallocated memory buffers so that the computational core does not stall while waiting for data input.

- (2) Core Processing Stage: The main thread acts as the “consumer,” retrieving data from the buffers in batches and dispatching coordinate transformation computations to either the CPU (serial or OpenMP) or the GPU (CUDA) according to the settings.

- (3) Asynchronous Write-back Stage: Once georeferencing is completed, the resulting coordinates are pushed into an output queue and written to disk by an independent background thread, thereby achieving temporal overlap among the reading, computation, and writing stages.
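The three stages above can be sketched with bounded queues, which also stand in for the fixed-capacity buffers between stages. This is only an illustration of the producer-consumer structure: the names (`reader`, `compute`, `writer`, `run_pipeline`) are hypothetical, in-memory lists replace disk reads and writes, and the compute stage runs in its own thread here rather than in the main thread.

```python
import queue
import threading

SENTINEL = None  # marks the end of the stream

def reader(blocks, in_q):
    """Stage 1: producer. Iterating `blocks` stands in for large-block disk reads."""
    for block in blocks:
        in_q.put(block)            # blocks while the bounded queue is full
    in_q.put(SENTINEL)

def compute(in_q, out_q, transform):
    """Stage 2: consumer. Applies the per-point transform to each block."""
    while True:
        block = in_q.get()
        if block is SENTINEL:
            out_q.put(SENTINEL)
            return
        out_q.put([transform(p) for p in block])

def writer(out_q, sink):
    """Stage 3: write-back. Extending `sink` stands in for the disk write."""
    while True:
        result = out_q.get()
        if result is SENTINEL:
            return
        sink.extend(result)

def run_pipeline(blocks, transform, depth=4):
    """Run the three stages concurrently and return the written results."""
    in_q = queue.Queue(maxsize=depth)
    out_q = queue.Queue(maxsize=depth)
    sink = []
    threads = [
        threading.Thread(target=reader, args=(blocks, in_q)),
        threading.Thread(target=compute, args=(in_q, out_q, transform)),
        threading.Thread(target=writer, args=(out_q, sink)),
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return sink
```

With one thread per stage and FIFO queues, output order matches input order, and the sentinel gives every stage a clean shutdown path.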

2.3.2. Adaptive Memory Management Strategy

To address potential memory overflow and fragmentation caused by large-scale point cloud data, this study designs an adaptive memory management strategy that coordinates host memory and GPU memory, ensuring system robustness across different hardware configurations.

- (1) Circular memory pool: When processing large, continuous data streams, frequent dynamic memory allocation and deallocation can lead to severe memory fragmentation and high system call overhead. To mitigate this issue, a memory pool mechanism based on a ring buffer is used.

During system initialization, a large contiguous block of memory is preallocated. The data loading and computation modules reuse this memory region in a lock-free cyclic manner by maintaining read and write pointers, thereby fundamentally eliminating the overhead of dynamic memory management.

Parallel memory copying: To overcome memory bandwidth limitations, parallel memory copy techniques are introduced during data filling and extraction operations within the memory pool. By partitioning large data transfer tasks and mapping them to multiple CPU physical cores for concurrent execution, this strategy significantly increases the transfer rate between the “I/O buffer” and the “compute buffer,” effectively reducing data movement latency.

- (2) Adaptive dynamic batching for GPU memory: Unlike CPU memory, GPU global memory is typically limited in capacity and not expandable. To prevent memory overflow caused by loading excessive data at once, this system implements a hardware-aware dynamic batching strategy.

During initialization, the system queries the currently available GPU memory via runtime APIs. Based on the available memory capacity and the GPU memory required to process a single laser point (including the timestamp, raw observations, and computed results), the system dynamically determines the maximum allowable batch size for a single kernel launch. This strategy establishes a general resource mapping model:

$$
N_{\mathrm{batch}} = \left\lfloor \frac{\eta \, M_{\mathrm{free}}}{S_{\mathrm{point}}} \right\rfloor
$$

where $N_{\mathrm{batch}}$ is the maximum number of points per batch, $M_{\mathrm{free}}$ denotes the currently available GPU memory, $S_{\mathrm{point}}$ is the memory consumption of a single point data structure, and $\eta$ is a safety factor reserved to avoid memory exhaustion (0.95 in Algorithm 4). This mechanism endows the algorithm with strong hardware adaptability, allowing it to maintain efficient and stable performance across computing devices with different GPU memory capacities without recompilation.
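As a worked illustration of the batching rule, the sketch below computes the batch size and batch count from a free-memory figure. The function names and the numbers in the usage note are hypothetical; in a real CUDA program the free-memory value would come from a runtime query.

```python
def max_batch_points(free_bytes, bytes_per_point, safety=0.95):
    """Largest number of points that fits in the usable share of free GPU memory."""
    if bytes_per_point <= 0:
        raise ValueError("bytes_per_point must be positive")
    if not 0.0 < safety <= 1.0:
        raise ValueError("safety must be in (0, 1]")
    return int(free_bytes * safety) // bytes_per_point

def num_batches(total_points, batch_points):
    """Number of kernel launches needed to cover all points (ceiling division)."""
    if batch_points <= 0:
        raise ValueError("batch_points must be positive")
    return -(-total_points // batch_points)
```

For example, with 1000 free bytes, 48 bytes per point, and the default safety factor, `max_batch_points` returns 19, and 100 points then need `num_batches(100, 19) == 6` launches.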

2.3.3. Parallel Implementation Details

This study designs two parallelization strategies using multicore CPUs and many-core GPUs: a coarse-grained task-parallel strategy based on OpenMP, and a fine-grained data-parallel strategy based on CUDA. The schematic diagram of the parallel implementation strategy is shown in Figure 3.

Coarse-Grained CPU Parallelism via OpenMP

LiDAR point cloud data exhibit inherent data independence: the georeferencing of any individual laser point depends solely on its own timestamp, raw observations, and the corresponding POS trajectory data, making it highly suitable for data-parallel execution. On multicore CPU platforms, this study employs the OpenMP framework to implement coarse-grained, loop-level parallelism.

To maximize CPU utilization and prevent thread stalling, two specific optimization strategies were incorporated into the OpenMP implementation (as detailed in Algorithm 3):

Dynamic Load Balancing Strategy: In the rigorous direct georeferencing model, the time required to search for the corresponding POS record can vary slightly depending on the temporal distribution of the points and the binary search execution path. If static scheduling were used, threads assigned to slower chunks would finish later, leaving the other threads idle at the synchronization barrier. To overcome this, we use OpenMP’s dynamic scheduling (#pragma omp parallel for schedule(dynamic, chunk_size)). The runtime partitions the millions of loop iterations into fixed-size chunks and hands the next chunk to whichever worker thread becomes free, which largely removes load imbalance.

Thread-Private Memory and False Sharing Avoidance: During the concurrent coordinate transformation, multiple threads need to compute and store intermediate states (such as rotation matrices and interpolated coordinates). To avoid the severe performance penalty of “false sharing” at the CPU L1/L2 cache level, all intermediate variables are strictly declared as thread-private. The computed georeferenced coordinates are directly written into a pre-allocated, conflict-free output array based on their globally unique index, completely avoiding the use of atomic locks or critical sections.
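The two ideas above, dynamic chunk dispatch and lock-free writes to a preallocated output array, can be illustrated with a thread pool. This is an analogy rather than OpenMP itself: the function name `parallel_map_chunks` is hypothetical, and because of Python's global interpreter lock the sketch shows the scheduling pattern, not a real speedup.

```python
from concurrent.futures import ThreadPoolExecutor

def parallel_map_chunks(points, transform, chunk_size=1024, workers=4):
    """Apply `transform` to every point, dispatching fixed-size chunks to idle workers."""
    out = [None] * len(points)              # preallocated: one slot per point

    def work(start):
        stop = min(start + chunk_size, len(points))
        for i in range(start, stop):
            tmp = transform(points[i])      # local variable plays the role of thread-private state
            out[i] = tmp                    # each index is written by exactly one worker, so no lock

    with ThreadPoolExecutor(max_workers=workers) as pool:
        # A worker that finishes early picks up the next pending chunk,
        # analogous to schedule(dynamic, chunk_size).
        list(pool.map(work, range(0, len(points), chunk_size)))
    return out
```

Because every output slot has a single writer, no atomic operation or critical section is needed, which mirrors the conflict-free output array described above.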

| Algorithm 3: OpenMP Coarse-Grained Parallelism |

Input: File List F, Memory Pool Size S

Global: RingBuffer RB, OutputQueue Q

// Thread 1: Data Producer (I/O Reading)

procedure DataProducer()

for file in F do

chunk = ReadBlock(file, S)

while RB.isFull() do sleep() end while

RB.push(chunk)

end for

Set IsFinished = true

end procedure

// Thread 2: Main Compute (OpenMP)

procedure ComputeWorker()

while not (IsFinished and RB.isEmpty()) do

chunk = RB.pop()

if chunk is Empty continue

// Fork: Parallel Execution on CPU Cores

#pragma omp parallel for schedule(dynamic)

for i = 0 to chunk.size − 1 do

// Execute Logic from Algorithm 1 or Algorithm 2

Result[i] = DirectGeoreference(chunk.points[i])

chunk.results[i] = Result[i]

end for

// Join: Implicit Barrier

Q.push(chunk.results)

end while

end procedure

// Thread 3: Data Writer (Asynchronous Write)

procedure DataWriter()

while true do

if not Q.isEmpty() then

data = Q.pop()

WriteToDisk(data)

end if

end while

end procedure |

Fine-Grained GPU Parallelism via CUDA

To process point cloud arrays at the scale of hundreds of millions of points, the proposed system constructs a one-dimensional thread grid comprising tens of thousands of thread blocks. During kernel execution, each GPU thread computes a globally unique logical index based on its block index and intra-block thread index. This logical index is directly mapped to the memory offset of the input point cloud array.
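The index mapping described above can be emulated on the CPU to make the arithmetic concrete. The sketch below is a sequential stand-in for a one-dimensional CUDA launch; the function names and the default of 256 threads per block are assumptions for illustration.

```python
def launch_config(n_points, threads_per_block=256):
    """Number of blocks needed so that blocks * threads_per_block >= n_points."""
    blocks = (n_points + threads_per_block - 1) // threads_per_block
    return blocks, threads_per_block

def global_index(block_idx, block_dim, thread_idx):
    """Same arithmetic as blockIdx.x * blockDim.x + threadIdx.x."""
    return block_idx * block_dim + thread_idx

def emulate_kernel(points, transform, threads_per_block=256):
    """Sequentially visit every (block, thread) pair of a 1-D grid."""
    blocks, tpb = launch_config(len(points), threads_per_block)
    out = [None] * len(points)
    for b in range(blocks):
        for t in range(tpb):
            idx = global_index(b, tpb, t)
            if idx >= len(points):      # guard for the partially filled last block
                continue
            out[idx] = transform(points[idx])
    return out
```

The bounds guard matters because the grid is rounded up to a whole number of blocks, so the last block usually contains threads with no point to process.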

Since the direct georeferencing algorithm has a relatively low arithmetic intensity compared to its massive data throughput, its performance on the GPU is inherently memory-bound. To overcome the “memory wall” and maximize the utilization of the thousands of CUDA cores, three fine-grained memory optimization strategies were designed (as illustrated in Algorithm 4):

Memory Coalescing for Global Memory Access: To maximize the utilization of global memory bandwidth, the input and output point cloud data structures were reorganized from an Array of Structures (AoS) to a Structure of Arrays (SoA). Under this layout, arrays containing timestamps, X, Y, and Z coordinates are stored independently. When a Warp (32 threads) accesses the data, consecutive threads access consecutive memory addresses, ensuring fully coalesced memory transactions and significantly reducing global memory latency.

Constant Memory for Trajectory Caching: The POS trajectory data are read-only and frequently accessed by all threads for binary search and interpolation. We leverage the GPU’s Constant Memory (or Texture/Read-Only Cache, depending on the architecture) to store the currently active segment of the POS trajectory. Because threads within the same Warp typically process temporally adjacent laser points, they access the same or neighboring POS records. Constant memory broadcasts this data to all requesting threads simultaneously, drastically accelerating the trajectory lookup phase.

Shared Memory for Extrinsic Calibration Matrices: The boresight rotation matrices and lever-arm translation vectors constitute global shared data that must be accessed repeatedly for every point transformation. Instead of fetching them from global memory for every calculation, a shared-memory-based loading strategy is introduced. As shown in Algorithm 4, the leader thread of each block pre-loads the calibration parameters from global memory into the on-chip Shared Memory (which operates at L1 cache speeds). After a __syncthreads() barrier, all threads in the block access these parameters directly from shared memory, eliminating redundant global memory reads for matrix multiplications.
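The AoS-to-SoA reorganization from the first strategy can be shown independently of the GPU. In this sketch each point is a `(t, x, y, z)` tuple and each field is moved into its own contiguous array of doubles; the function name and the field names are hypothetical.

```python
from array import array

def aos_to_soa(points):
    """Convert an iterable of (t, x, y, z) tuples into one contiguous array per field."""
    soa = {name: array("d") for name in ("t", "x", "y", "z")}
    for t, x, y, z in points:
        soa["t"].append(t)
        soa["x"].append(x)
        soa["y"].append(y)
        soa["z"].append(z)
    return soa
```

In the SoA layout, element `i` and element `i + 1` of the same field are adjacent in memory, which is the property that lets consecutive GPU threads issue coalesced loads.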

| Algorithm 4: CUDA Fine-Grained Parallelism |

Input: Points (host point array), Calib (calibration parameters), PosData (POS trajectory)

// Host Side: Adaptive Batching & Dispatch

Query GPU Available Memory (Mem_free)

Batch_Size = Floor((Mem_free * 0.95) / SizeOf(PointStruct))

Num_Batches = Ceiling(Total_Points / Batch_Size)

for b = 1 to Num_Batches do

Current_Chunk = Points[(b − 1) * Batch_Size : b * Batch_Size]

// H2D Transfer

MemcpyAsync(d_Points, Current_Chunk, HostToDevice, Stream)

// Kernel Launch: 1 Thread per Point

Blocks = (Current_Chunk.size + ThreadsPerBlock − 1)/ThreadsPerBlock

// Third launch parameter is the dynamic shared memory size (0 here); the stream is the fourth

Kernel_DG<<<Blocks, ThreadsPerBlock, 0, Stream>>>(d_Points, d_Calib, d_PosData, d_Output)

// D2H Transfer

MemcpyAsync(h_Output, d_Output, DeviceToHost, Stream)

end for

// Device Side: Kernel Function (__global__)

function Kernel_DG(Points, Calib, PosData, Output)

// Calculate Global Thread Index

idx = blockIdx.x * blockDim.x + threadIdx.x

// Optimization: Load Calibration to Shared Memory

// Done before the bounds check so that every thread in the block reaches the barrier

__shared__ Shared_Calib [12]

if threadIdx.x == 0 then

Shared_Calib = Calib // Loaded once per block by the leader thread

end if

__syncthreads()

if idx >= Points.size return

// Independent Per-Thread Computation

// No data dependency between threads

p = Points [idx]

// Math logic same as Algorithm 1 or Algorithm 2, executed in parallel

res = DirectGeoreference(p, Shared_Calib, PosData)

Output [idx] = res

end function |

3. Results

3.1. Experimental Setup

The experimental hardware platform was a laptop computer equipped with an AMD Ryzen 7 4800H CPU (8 cores and 16 threads, base frequency 2.9 GHz), 16 GB of DDR4 memory, and an NVIDIA GeForce RTX 2060 GPU (6 GB GDDR6 memory with 1920 CUDA cores). The software environment was based on the Windows 10 64-bit operating system, with Microsoft Visual Studio 2022 Community Version as the development tool. The parallel computing environments employed the OpenMP 2.0 standard for CPU-based multicore parallelism and the CUDA 12.5 Toolkit for GPU heterogeneous parallel computing.

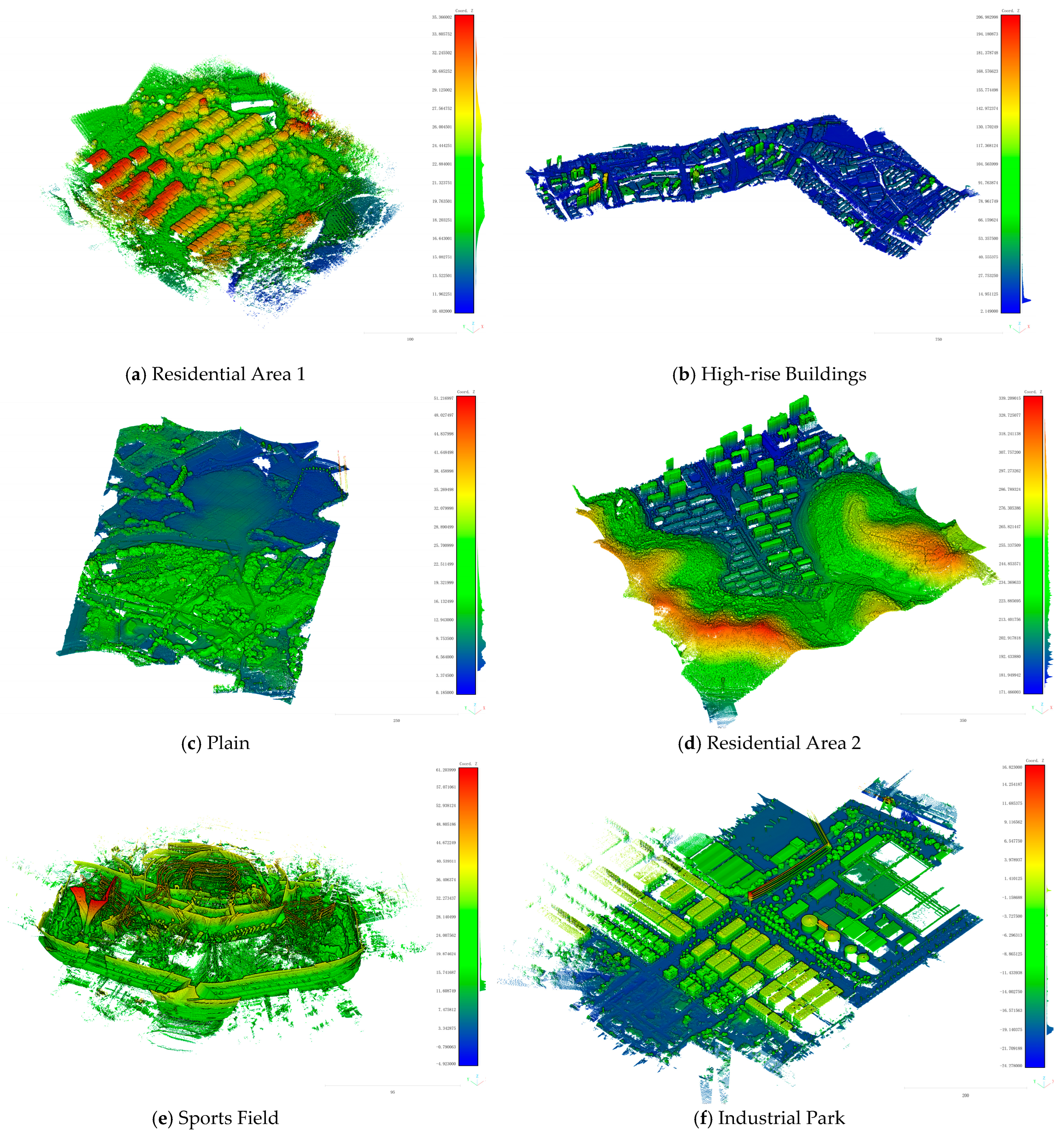

The experimental data consisted of real ULS datasets acquired by the Huace Navigation AA10 airborne LiDAR surveying system (Shanghai Huace Navigation Technology Ltd., Shanghai, China; https://www.huace.cn/pdDetailNew/137, accessed on 20 March 2026) and the AU20 multi-platform laser scanning system (https://www.huace.cn/pdDetailNew/141, accessed on 20 March 2026). To comprehensively evaluate the robustness and efficiency of the proposed algorithms under different terrain characteristics and data scales, six representative datasets were selected. The point cloud sizes ranged from tens of millions to 700 million points, covering diverse scenarios including residential areas, high-rise buildings, plains, sports fields, and large industrial parks. Detailed information on the datasets is provided in Table 1, and visualizations of the point clouds obtained after rigorous direct georeferencing are shown in Figure 4.

To ensure an objective evaluation of computational efficiency, an asynchronous I/O architecture based on the producer–consumer model was adopted. The timing measurements included only the in-memory computation time of the core algorithms, namely POS preprocessing, searching, interpolation, and coordinate transformation, while excluding disk I/O latency.

3.2. Accuracy Evaluation

To verify the reliability of the approximate model in practical engineering applications, this section adopts the results obtained from the rigorous model as the reference ground truth and quantitatively evaluates the model simplification errors introduced by the approximate approach. Specifically, point-to-point differences between the point clouds generated by the approximate model and those produced by the rigorous model were computed. The statistical metrics include the mean error (ME) and the root mean square error (RMSE) in the east (E/X), north (N/Y), and up (H/Z) directions. The detailed statistical results are summarized in Table 2.
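The metrics described above can be computed as in the following sketch, which assumes the two point clouds are in the same order so that points can be differenced index by index. The function name and the sample coordinates in the usage note are hypothetical and are not taken from the study's datasets.

```python
import math

def error_stats(approx, reference):
    """Per-axis mean error, per-axis RMSE, and 3D RMSE of approx minus reference.

    Both inputs are equal-length sequences of (E, N, H) tuples in matching order.
    """
    n = len(reference)
    if n == 0 or n != len(approx):
        raise ValueError("inputs must be non-empty and of equal length")
    diffs = [tuple(a - r for a, r in zip(pa, pr)) for pa, pr in zip(approx, reference)]
    me = tuple(sum(d[k] for d in diffs) / n for k in range(3))
    rmse = tuple(math.sqrt(sum(d[k] ** 2 for d in diffs) / n) for k in range(3))
    rmse_3d = math.sqrt(sum(r * r for r in rmse))   # equals sqrt(mean(dE^2 + dN^2 + dH^2))
    return me, rmse, rmse_3d
```

For instance, two points that differ from the reference by (0.003, 0, 0) and (-0.003, 0.004, 0) metres give a zero mean error in E, because the signed differences cancel, while the E RMSE stays at 0.003 m. This is why the ME and RMSE are reported together.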

The results indicate that the approximate model reproduces the rigorous model at the sub-millimeter to millimeter level across all test scenarios. The 3D RMSE for all datasets ranges from 0.149 mm to 6.744 mm, while the MEs are at the sub-millimeter level. Considering the inherent ranging errors of the laser scanner and the errors in GNSS positioning and IMU measurements of low-cost ULS systems, the approximate model is practical for most ULS systems, except those equipped with ultra-high-precision laser scanners and IMUs. These findings demonstrate that, under typical operating conditions, performing local rigid-body transformations based on preprocessed POS data is a high-fidelity alternative to per-point rigorous projection transformations.

It is noteworthy that the overall error of Data 6 is noticeably higher than that of the other datasets. This difference is primarily driven by the exceptional spatial extent and data scale of Data 6. The approximate model treats the meridian convergence angle as locally constant, and laser points located at the far extremities of such a vast survey area are measured at significantly larger scan ranges, so projection distortions accumulate toward the boundaries. A detailed geometric analysis and spatial interpretation of this error evolution is given in Section 4.1.

3.3. Efficiency Comparison

To comprehensively evaluate the computational efficiency of the proposed parallel algorithms in the ULS direct georeferencing workflow, the core computation time of two direct georeferencing models was recorded under three computing modes: serial execution on a CPU, multicore CPU parallel execution based on OpenMP, and heterogeneous GPU parallel execution based on CUDA.

The experimental results are reported in Table 3 and Table 4. These tables list the execution times of each dataset under different computing modes and present the corresponding speedup factors, including the speedup of OpenMP relative to serial CPU execution, the speedup of GPU execution relative to serial CPU execution, and the speedup of GPU execution relative to OpenMP.

Overall, parallel computing achieves substantial performance gains for both models, with the approximate model exhibiting significantly lower total runtime due to its simplified computational pipeline. For the approximate model, the OpenMP-based parallel scheme attains speedups ranging from 7.0 times to 8.1 times on an 8-core, 16-thread CPU. These improvements primarily result from efficient parallelization of the per-point computation loop and the independent task allocation in the POS preprocessing stage. The speedup increases slightly with data scale, as larger datasets better amortize thread creation and synchronization overheads and distribute the workload more evenly across threads.

In comparison, the GPU-based parallel scheme demonstrates superior performance, with speedups between 11.9 times and 16.7 times, significantly outperforming the OpenMP approach. This advantage arises from the thousands of CUDA cores on the GPU, which can concurrently process tens of thousands of laser-point threads, closely matching the highly data-parallel nature of point-cloud computation. In addition, the batch-processing pipeline effectively alleviates GPU memory capacity constraints, while high-speed access to shared memory further reduces global memory latency.

Because the rigorous model involves a complete coordinate transformation chain, its computational intensity is significantly higher than that of the approximate model, and consequently, it benefits more from parallel acceleration. The OpenMP-based parallel scheme attains speedups ranging from 7.7 times to 8.9 times, comparable to the number of physical CPU cores (eight). In contrast, the GPU-based parallel scheme achieves speedups of 21.2 times to 24.6 times, substantially exceeding the GPU acceleration observed for the approximate model.

This difference arises because the rigorous model includes computationally intensive operations such as Gauss-Krüger projection, ECEF transformations, and extensive trigonometric and matrix computations, resulting in higher arithmetic intensity. With thousands of computing cores, the GPU can more effectively hide global memory access latency when processing such compute-intensive workloads, thereby exploiting its floating-point performance. Conversely, due to the substantially simplified computation pipeline of the approximate model, its performance bottleneck is more likely constrained by memory bandwidth, preventing the GPU’s computational capability from being fully utilized.

It is noteworthy that, for both the low-complexity approximate model and the computationally intensive rigorous model, the speedup factors of the OpenMP-based and GPU-based parallel schemes remain essentially consistent, despite substantial differences in terrain complexity and point-cloud spatial distribution among the datasets. This observation indicates that point-wise direct georeferencing algorithms inherently exhibit strong data decoupling characteristics: their runtime scales approximately linearly with the size of the point cloud and is largely independent of scene geometry or local point density, demonstrating high algorithmic robustness.

3.3.1. Impact of Asynchronous I/O Pipeline

In addition to the core computational acceleration, the end-to-end processing efficiency is critical for massive ULS datasets. The I/O latency often becomes a bottleneck when the computation speed significantly increases. To verify the effectiveness of the proposed three-stage asynchronous pipeline, we conducted a comparative experiment between the “synchronous I/O” mode and the “asynchronous I/O” mode.

In the synchronous I/O mode, data reading, georeferencing computation, and result writing are executed sequentially in a single thread. In contrast, the asynchronous I/O mode employs the producer–consumer architecture described in Section 2.3, where data loading, computation, and writing are handled by independent threads concurrently.
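The structure of such a three-stage pipeline can be sketched as follows. This is an illustrative sketch only, not the authors' implementation: the names `run_pipeline`, `read_batches`, `compute`, and `write_batch` are hypothetical, the queue depth of 4 is an arbitrary assumption, and Python threads are used here merely to show the data flow between stages. The timer wraps only the compute call, mirroring the way the core computation time is reported separately from disk I/O.

```python
import queue
import threading
import time

_DONE = object()  # sentinel that marks the end of the batch stream


def run_pipeline(read_batches, compute, write_batch, depth=4):
    """Run reader -> compute -> writer on three threads.

    Returns the accumulated time spent inside `compute` only, so that
    reading and writing latency is excluded from the measurement.
    """
    q_in = queue.Queue(maxsize=depth)   # reader -> compute (bounded buffer)
    q_out = queue.Queue(maxsize=depth)  # compute -> writer (bounded buffer)
    compute_time = [0.0]

    def reader():
        for batch in read_batches():
            q_in.put(batch)             # blocks while the buffer is full
        q_in.put(_DONE)

    def worker():
        while True:
            batch = q_in.get()
            if batch is _DONE:
                q_out.put(_DONE)        # forward the end-of-stream marker
                return
            t0 = time.perf_counter()
            result = compute(batch)
            compute_time[0] += time.perf_counter() - t0
            q_out.put(result)

    def writer():
        while True:
            result = q_out.get()
            if result is _DONE:
                return
            write_batch(result)

    threads = [threading.Thread(target=f) for f in (reader, worker, writer)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return compute_time[0]
```

The bounded queues cap the number of batches held in memory at once, and with a single compute thread and FIFO queues the output order matches the input order. Error handling is omitted: an exception in any stage would leave the other threads blocked.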

The comparison was performed on the largest dataset, Data 6 (700 million points), using both the GPU-based and OpenMP-based rigorous models. The results are summarized in Table 5.

The results demonstrate that the asynchronous pipeline delivers substantial performance improvements for both parallel implementations.

For the GPU-based model, the total runtime decreases from 247.189 s to 117.996 s, achieving a system-level speedup of 2.1 times. In the synchronous mode, the core calculation takes only 19.962 s, meaning the system spends over 90% of the time waiting for disk I/O. By adopting the asynchronous pipeline, the effective I/O overhead perceived by the system drops to about 98 s. This indicates that the proposed architecture masks a large portion of the data transfer latency by overlapping GPU computation with disk operations, thereby reducing the time the GPU spends idle.

Similarly, for the OpenMP-based model, the asynchronous strategy reduces the total runtime from 282.884 s to 142.897 s, yielding a 2.0 times speedup. Although the OpenMP calculation time is longer than that of the GPU, the I/O overhead remains the dominant factor in the synchronous mode. The asynchronous pipeline effectively reduces this overhead to 84.757 s.

It is worth noting that the “I/O Overhead” in the asynchronous mode is significantly lower than in the synchronous mode. This reduction occurs because the data reading and writing threads operate in parallel with the computation thread; thus, the visible system latency is determined primarily by the slowest stage of the pipeline rather than the sum of all stages. These findings confirm that the proposed three-stage asynchronous architecture effectively breaks the I/O wall in massive point cloud processing, ensuring that the efficiency gains from parallel algorithms translate directly into end-to-end productivity.
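The overhead figures quoted in this subsection follow from simple arithmetic on the reported runtimes. The sketch below assumes that the I/O overhead is defined as total runtime minus core computation time (the definition consistent with the values given here) and that the core computation time is identical in both modes. The OpenMP computation time of 58.140 s is the 16-thread value reported in Section 3.3.2; the helper name `io_overhead` is hypothetical.

```python
def io_overhead(total_s, compute_s):
    """Return (overhead in seconds, overhead as a fraction of the total)."""
    overhead = total_s - compute_s
    return overhead, overhead / total_s


# (total runtime, core computation time) in seconds, Data 6, rigorous model
runs = {
    "GPU sync": (247.189, 19.962),
    "GPU async": (117.996, 19.962),
    "OpenMP sync": (282.884, 58.140),
    "OpenMP async": (142.897, 58.140),
}

for name, (total, compute) in runs.items():
    overhead, share = io_overhead(total, compute)
    print(f"{name:13s} overhead = {overhead:7.3f} s ({share:.1%} of total)")

# System-level speedups of the asynchronous over the synchronous mode
print(f"GPU speedup    = {247.189 / 117.996:.2f}")
print(f"OpenMP speedup = {282.884 / 142.897:.2f}")
```

Even in the asynchronous mode the remaining overhead exceeds the computation time for both implementations, which is consistent with the slowest pipeline stage determining the visible latency.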

3.3.2. OpenMP Scalability Analysis

To evaluate the scalability and parallel efficiency of the proposed OpenMP-based implementation, a strong scaling test was conducted using the rigorous model on Data 6. The number of worker threads was varied from 1 to 16, spanning both the physical cores and the logical threads (via Simultaneous Multithreading, SMT) of the processor, and the absolute speedup relative to the serial baseline execution was recorded. The quantitative results are listed in Table 6, and the speedup trend is illustrated in Figure 5.

As illustrated in Figure 5, the speedup curve exhibits two distinct phases corresponding to the hardware architecture of the test platform (AMD Ryzen 7 4800H).

Physical Core Scaling (1–8 Threads): When the thread count is within the number of physical cores (8 cores), the algorithm demonstrates strong scalability. At 8 threads, the processing time drops from 450.307 s to 77.840 s, achieving a speedup of 5.8 times with a parallel efficiency of 0.72. The high efficiency at low thread counts (0.90 at 4 threads) indicates that the OpenMP dynamic scheduling strategy effectively balances the workload among cores, preventing significant load imbalance even when processing massive unstructured point clouds. The deviation from ideal linear speedup in this phase is primarily attributed to memory bandwidth contention, as eight cores simultaneously issue frequent memory accesses for POS interpolation and coordinate data retrieval.

Logical Core Saturation (12–16 Threads): As the thread count exceeds the number of physical cores, enabling SMT, the performance gains begin to saturate. Increasing threads from 8 to 16 yields only a modest speedup increase from 5.8 times to 7.7 times, while parallel efficiency drops to 0.48. This behavior occurs because the rigorous direct georeferencing model is a compute-intensive task involving heavy double-precision floating-point operations (trigonometric functions and matrix multiplications). The two logical threads of each physical core share that core's floating-point units (FPUs); therefore, SMT provides limited benefits for such dense calculation workloads compared to I/O-bound tasks.

Despite the efficiency drop at high thread counts, the total runtime continues to decrease, reaching a minimum of 58.140 s at 16 threads. This confirms that the proposed parallel implementation makes effective use of the available CPU resources to accelerate the georeferencing process.
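The speedup and parallel efficiency values quoted above can be checked directly from the reported runtimes. The sketch below uses the standard strong-scaling definitions, speedup = T_serial / T_p and efficiency = speedup / p, with the runtimes given in this subsection; the helper name `scaling` is hypothetical.

```python
def scaling(t_serial, t_parallel, threads):
    """Return (speedup, parallel efficiency) for one strong-scaling run."""
    speedup = t_serial / t_parallel
    return speedup, speedup / threads


T_SERIAL = 450.307  # serial baseline in seconds (Data 6, rigorous model)

for threads, t_parallel in [(8, 77.840), (16, 58.140)]:
    s, e = scaling(T_SERIAL, t_parallel, threads)
    print(f"{threads:2d} threads: speedup = {s:.1f}, efficiency = {e:.2f}")
```

The printed values reproduce the figures in the text: 5.8 and 0.72 at 8 threads, 7.7 and 0.48 at 16 threads.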