Investigating Very-High-Resolution Land Cover Mapping in the Pearl River Delta with Remote Sensing Foundation Models and Multi-Source Data Bayesian Fusion

Highlights

- A comprehensive benchmark dataset including 262,436 unlabeled VHR images, 33,342 annotated samples, and 15,000 sample points is constructed for land cover mapping in the Pearl River Delta.

- A remote sensing foundation model pretraining method (SDMAE) with a value-aware masking strategy and Edge-Enhanced Loss for VHR land cover mapping is proposed, effectively preserving critical features in high-value objects and boundary regions.

- A scene-based semantic segmentation network (SBFNet) is developed, specifically designed to capture key scene-level features for VHR land cover mapping of complex heterogeneous landscapes.

- A decision-level Bayesian fusion framework combining statistical and spatial consistency tests with Bayesian probabilistic modeling effectively integrates multi-source remote sensing data, achieving robust land cover mapping.

Abstract

1. Introduction

2. Study Area and Data

2.1. Study Area

2.2. VHR Data

2.3. MR Data

3. Methods

3.1. Segmentation-Driven Mask AutoEncoder (SDMAE)

3.2. Scene-Based Feature Network (SBFNet)

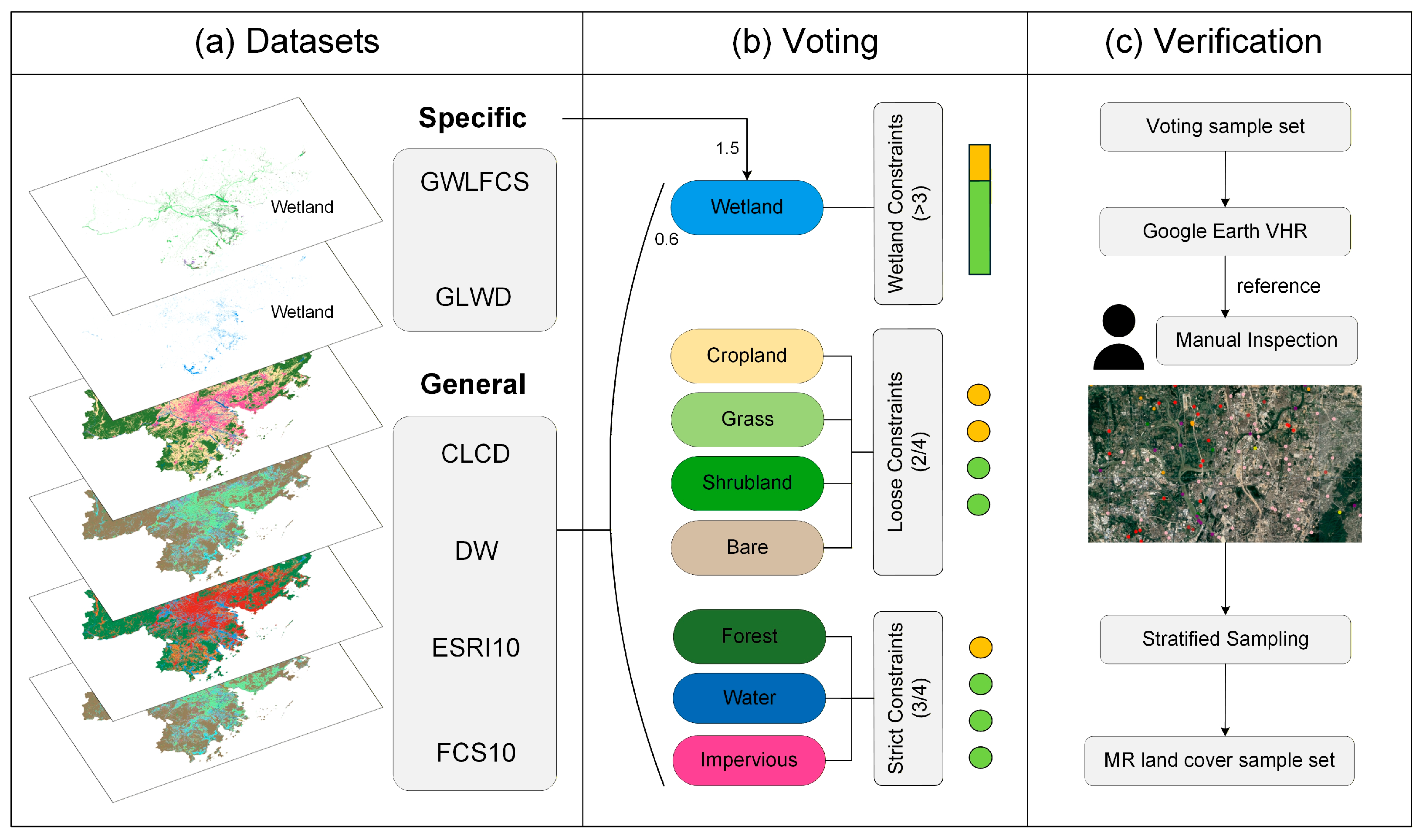

3.3. Random Forest Model for MR Mapping

3.4. Decision-Level Bayesian Fusion

3.5. Evaluation Metrics and Implementation Details

4. Results

4.1. Pretraining Performance of SDMAE

4.2. Evaluation of VHR Mapping

4.3. Ablation Study

4.4. Evaluation of MR Mapping

4.5. Performance of Decision-Level Bayesian Fusion

5. Discussion

5.1. Implications of the Decision-Level Bayesian Fusion

5.2. Impact, Limitation and Future Work

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Appendix A. Detailed Land Cover Classification System of PRDLC

| Class | Definition | Interpretation Symbols |

|---|---|---|

| Cropland | Areas for crop production | Color: Bright green to yellow-brown; Shape: Regular patches with clear boundaries; Texture: Striated or uniform row patterns. |

| Forest | Land with >10% tree canopy cover | Color: Dark green; Shape: Irregular clusters; Texture: Coarse, “popcorn-like” grainy appearance caused by tree crowns. |

| Grass | Herbaceous vegetation, mainly grass | Color: Light or yellowish-green; Shape: Large patches or strips; Texture: Fine, smooth, and uniform, lacking the grainy texture of trees. |

| Shrubland | Perennial woody plants with low canopy closure | Color: Dull green; Shape: Scattered or clumped; Texture: Rougher than grass but smoother than forest, with shorter shadows. |

| Wetland | Areas seasonally or permanently flooded | Color: Dark green to brownish-black; Site: Adjacent to water or low-lying; Texture: Mottled, mixed with water and vegetation. |

| Water | Natural or artificial water bodies (rivers, ponds) | Color: Dark blue to black (clear) or cyan (turbid); Shape: Linear or smooth polygons; Texture: Very smooth. |

| Impervious | Man-made surfaces (buildings, roads, runways) | Color: Gray, white, blue or terracotta; Shape: Geometric, sharp edges, distinct shadows; Texture: Smooth but structured. |

| Bare | Land with <10% vegetation (soil, sand, rock) | Color: Brown, light gray, or white; Shape: Irregular; Texture: Earthy or grainy, often showing signs of human activity (e.g., construction). |

Appendix B

| PRDLC | | Globe230k | | | OpenEarthMap | | | LoveDA | | | WHDLD | | | DLRSD | | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Class | Label | Class | Original Label | Converted Label | Class | Original Label | Converted Label | Class | Original Label | Converted Label | Class | Original Label | Converted Label | Class | Original Label | Converted Label |

| Background | 0 | Background | 0 | 0 | Background | 0 | 0 | Background | 1 | 0 | Building | 1 | 7 | Airplane | 1 | 0 |

| Cropland | 1 | Cropland | 1 | 1 | Bareland | 1 | 8 | Building | 2 | 7 | Road | 2 | 7 | Bare soil | 2 | 8 |

| Forest | 2 | Forest | 2 | 2 | Rangeland | 2 | 3 | Road | 3 | 7 | Pavement | 3 | 7 | Buildings | 3 | 7 |

| Grassland | 3 | Grass | 3 | 3 | Developed space | 3 | 7 | Water | 4 | 6 | Vegetation | 4 | 0 | Cars | 4 | 0 |

| Shrubland | 4 | Shrub | 4 | 4 | Road | 4 | 7 | Barren | 5 | 8 | Bare soil | 5 | 8 | Chaparral | 5 | 4 |

| Wetland | 5 | Wetland | 5 | 5 | Tree | 5 | 2 | Forest | 6 | 2 | Water | 6 | 6 | Court | 6 | 7 |

| Water | 6 | Water | 6 | 6 | Water | 6 | 6 | Agriculture | 7 | 1 | | | | Dock | 7 | 7 |

| Impervious | 7 | Tundra | 7 | 0 | Agriculture land | 7 | 1 | | | | | | | Field | 8 | 1 |

| Bare | 8 | Impervious surface | 8 | 7 | Building | 8 | 7 | | | | | | | Grass | 9 | 3 |

| | | Bareland | 9 | 8 | | | | | | | | | | Mobile home | 10 | 0 |

| | | Ice/snow | 10 | 0 | | | | | | | | | | Pavement | 11 | 7 |

| | | | | | | | | | | | | | | Sand | 12 | 8 |

| | | | | | | | | | | | | | | Sea | 13 | 6 |

| | | | | | | | | | | | | | | Ship | 14 | 0 |

| | | | | | | | | | | | | | | Tanks | 15 | 0 |

| | | | | | | | | | | | | | | Trees | 16 | 2 |

| | | | | | | | | | | | | | | Water | 17 | 6 |

| Sample contribution (number of images) | | | | | | | | | | | | | | | | |

| 1–7370 | | 7370–8810 | | | 8811–9538 | | | 9539–26,302 | | | 26,303–31,242 | | | 31,243–33,342 | | |
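The label conversion in the table above amounts to a per-pixel lookup. The following is a minimal sketch of one way to apply it, using the LoveDA columns as an example. The function names and the choice to send any unlisted original label to Background (0) are assumptions made for illustration, not details taken from the paper.

```python
import numpy as np

# Original -> converted label pairs copied from the LoveDA columns of the table above.
LOVEDA_TO_PRDLC = {1: 0, 2: 7, 3: 7, 4: 6, 5: 8, 6: 2, 7: 1}


def build_lut(mapping, fill=0, size=256):
    """Build a lookup table indexed by original label.

    Labels missing from `mapping` fall back to `fill` (an assumption: Background).
    """
    lut = np.full(size, fill, dtype=np.uint8)
    for src, dst in mapping.items():
        lut[src] = dst
    return lut


def remap_labels(mask, mapping):
    """Return a new mask in which each original label is replaced by its converted label."""
    return build_lut(mapping)[mask]


# Toy 3x3 mask using LoveDA original labels (0 is not listed in the table).
mask = np.array([[1, 2, 3], [4, 5, 6], [7, 7, 0]], dtype=np.uint8)
converted = remap_labels(mask, LOVEDA_TO_PRDLC)
print(converted.tolist())  # [[0, 7, 7], [6, 8, 2], [1, 1, 0]]
```

Indexing a lookup array with the mask converts all pixels in one vectorized step, so the same helper can be reused for the other datasets by swapping in their column pairs.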

| PRDLC-PRO | | SinoLC-1 | | CLCD | | Dynamic World | | Esri 10 | | GLC_FCS10 | | GLWD V2 | | GWL_FCS30 | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Class | ID | Class | ID | Class | ID | Class | ID | Class | ID | Class | ID | Class | ID | Class | ID |

| Cropland | 1 | Traffic route | 1 | Cropland | 1 | Water | 1 | Water areas | 1 | Cropland | 11–20 | Open water bodies | 1–7 | Permanent water | 180 |

| Forest | 2 | Tree cover | 2 | Forest | 2 | Trees | 2 | Trees | 2 | Forest | 51–92 | Lacustrine wetlands | 8–9 | Swamp | 181 |

| Grassland | 3 | Shrubland | 3 | Shrub | 3 | Grass | 3 | Flooded vegetation areas | 4 | Shrubland | 121–122 | Riverine wetlands | 10–19 | Marsh | 182 |

| Shrubland | 4 | Grassland | 4 | Grassland | 4 | Flooded vegetation | 4 | Crops | 5 | Grassland | 130 | Peatlands | 22–27 | Flooded flat | 183 |

| Wetland | 5 | Cropland | 5 | Water | 5 | Crops | 5 | Built areas | 7 | Tundra | 140 | Coastal wetlands | 28–31 | Saline | 184 |

| Water | 6 | Building | 6 | Snow/Ice | 6 | Shrub&scrub | 6 | Bare ground areas | 8 | Wetland | 181–187 | Special wetlands | 32–33 | Mangrove forest | 185 |

| Impervious | 7 | Barren and sparse vegetation | 7 | Barren | 7 | Built area | 7 | Snow/ice | 9 | Impervious surfaces | 191–192 | | | Salt marsh | 186 |

| Bare | 8 | Snow and ice | 8 | Impervious | 8 | Bare ground | 8 | Clouds | 10 | Bare areas | 150–200 | | | Tidal flat | 187 |

| | | Water | 9 | Wetland | 9 | Snow/ice | 9 | Rangeland areas | 11 | Water | 210 | | | | |

| | | Wetland | 10 | | | | | | | Permanent ice and snow | 220 | | | | |

| | | Moss and lichen | 12 | | | | | | | | | | | | |

| Data Source | Features | Calculation Formula |

|---|---|---|

| Sentinel-1 | VV, VH | - |

| | CrossVH | |

| | RVI | |

| | PoL | |

| Sentinel-2 | B2–B5, B8–B9, B11–B12 | - |

| | NDVI | |

| | EVI | |

| | SAVI | |

| | NDWI | |

| | MNDWI | |

| | LSWI | |

| | NDBI | |

| | IBI | |

| | NDBSI | |

| | BSI | |

| NASADEM | DEM | - |

| | Slope | - |
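Several of the Sentinel-2 features listed above are normalized-difference indices. As a rough illustration only, the sketch below computes four of them from their commonly used textbook definitions. The band assignment (B3 green, B4 red, B8 near-infrared, B11 shortwave infrared) and the helper names are assumptions, and the exact formulas used in the paper may differ.

```python
import numpy as np


def normalized_difference(a, b):
    """Compute (a - b) / (a + b) element-wise, returning 0 where a + b == 0."""
    a = np.asarray(a, dtype=np.float64)
    b = np.asarray(b, dtype=np.float64)
    denom = a + b
    out = np.zeros_like(denom)
    np.divide(a - b, denom, out=out, where=denom != 0)
    return out


def spectral_indices(green, red, nir, swir1):
    """Common definitions; inputs are reflectance arrays of equal shape.

    Assumed Sentinel-2 bands: green=B3, red=B4, nir=B8, swir1=B11.
    """
    return {
        "NDVI": normalized_difference(nir, red),
        "NDWI": normalized_difference(green, nir),
        "MNDWI": normalized_difference(green, swir1),
        "NDBI": normalized_difference(swir1, nir),
    }


# Two toy pixels: the first vegetation-like, the second water-like.
green = np.array([0.08, 0.10])
red = np.array([0.05, 0.12])
nir = np.array([0.40, 0.03])
swir1 = np.array([0.20, 0.02])
idx = spectral_indices(green, red, nir, swir1)
print({name: np.round(values, 3).tolist() for name, values in idx.items()})
```

The zero-denominator guard matters in practice because masked or no-data pixels often carry zero reflectance in every band.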


| Class | Samples per Class |

|---|---|

| Cropland | 1898 |

| Forest | 3329 |

| Grass | 1710 |

| Shrubland | 2369 |

| Wetland | 1910 |

| Water | 943 |

| Impervious | 1426 |

| Bare | 1415 |

| Model | OA (%) | mIoU (%) | FLOPs (G) | Params (M) |

|---|---|---|---|---|

| UNet | 68.94 | 50.35 | 124.58 | 13.40 |

| PSPNet | 70.15 | 52.97 | 59.22 | 46.71 |

| DeepLabV3+ | 69.68 | 52.88 | 56.58 | 40.52 |

| SETR | 66.56 | 45.86 | 41.97 | 89.77 |

| SegFormer | 66.40 | 46.01 | 153.89 | 136.66 |

| MAE-SBFNet-0P-20E | 57.29 | 32.78 | 29.18 | 92.71 |

| MAE-SBFNet-0P-100E | 66.66 | 46.17 | 29.18 | 92.71 |

| SDMAE-SBFNet-0P-20E | 60.54 | 38.24 | 29.85 | 93.30 |

| SDMAE-SBFNet-0P-100E | 68.79 | 49.31 | 29.85 | 93.30 |

| MAE-SBFNet-50P-20E | 66.94 | 46.89 | 29.18 | 92.71 |

| MAE-SBFNet-50P-100E | 69.35 | 50.00 | 29.18 | 92.71 |

| MAE-SBFNet-100P-20E | 67.81 | 47.99 | 29.18 | 92.71 |

| MAE-SBFNet-100P-100E | 69.34 | 50.34 | 29.18 | 92.71 |

| SDMAE-SBFNet-50P-20E | 66.78 | 46.33 | 29.85 | 93.30 |

| SDMAE-SBFNet-50P-100E | 68.85 | 50.54 | 29.85 | 93.30 |

| SDMAE-SBFNet-100P-20E | 69.85 | 50.77 | 29.85 | 93.30 |

| SDMAE-SBFNet-100P-100E | 71.53 | 53.21 | 29.85 | 93.30 |
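The OA and mIoU columns in these tables are conventionally derived from a confusion matrix. The sketch below uses the standard definitions and is only an illustration: whether the paper excludes the background class or handles absent classes differently is not stated here, so those choices are assumptions, as are the function names.

```python
import numpy as np


def confusion_matrix(y_true, y_pred, num_classes):
    """Count pixels per (true, predicted) class pair; rows are true classes."""
    y_true = np.asarray(y_true).ravel()
    y_pred = np.asarray(y_pred).ravel()
    flat = y_true * num_classes + y_pred
    counts = np.bincount(flat, minlength=num_classes * num_classes)
    return counts.reshape(num_classes, num_classes)


def overall_accuracy(cm):
    """Fraction of correctly labeled pixels."""
    return np.trace(cm) / cm.sum()


def mean_iou(cm):
    """Mean intersection-over-union across classes that occur at least once.

    Assumption: classes absent from both truth and prediction are skipped.
    """
    tp = np.diag(cm)
    union = cm.sum(axis=0) + cm.sum(axis=1) - tp
    present = union > 0
    return float((tp[present] / union[present]).mean())


y_true = [0, 0, 1, 1, 2, 2]
y_pred = [0, 1, 1, 1, 2, 0]
cm = confusion_matrix(y_true, y_pred, num_classes=3)
print(round(overall_accuracy(cm) * 100, 2), round(mean_iou(cm) * 100, 2))  # 66.67 50.0
```

In the toy example the per-class IoU values are 1/3, 2/3, and 1/2, which average to 0.5, while four of six pixels are correct.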

| Model | OA (%) | mIoU (%) | FLOPs (G) | Params (M) |

|---|---|---|---|---|

| UNet | 70.25 | 50.06 | 124.58 | 13.40 |

| PSPNet | 70.43 | 50.12 | 59.22 | 46.71 |

| DeepLabV3+ | 69.36 | 47.07 | 56.58 | 40.52 |

| SETR | 66.80 | 44.65 | 41.97 | 89.77 |

| SegFormer | 68.88 | 49.14 | 153.89 | 136.66 |

| MAE-SBFNet-0P-20E | 60.46 | 30.48 | 29.18 | 92.71 |

| MAE-SBFNet-0P-100E | 69.72 | 44.24 | 29.18 | 92.71 |

| SDMAE-SBFNet-0P-20E | 50.72 | 24.47 | 29.85 | 93.30 |

| SDMAE-SBFNet-0P-100E | 70.49 | 46.30 | 29.85 | 93.30 |

| MAE-SBFNet-50P-20E | 59.46 | 30.10 | 29.18 | 92.71 |

| MAE-SBFNet-50P-100E | 69.36 | 46.90 | 29.18 | 92.71 |

| MAE-SBFNet-100P-20E | 48.37 | 21.81 | 29.18 | 92.71 |

| MAE-SBFNet-100P-100E | 71.09 | 43.76 | 29.18 | 92.71 |

| SDMAE-SBFNet-50P-20E | 65.83 | 39.00 | 29.85 | 93.30 |

| SDMAE-SBFNet-50P-100E | 69.53 | 46.32 | 29.85 | 93.30 |

| SDMAE-SBFNet-100P-20E | 67.74 | 44.10 | 29.85 | 93.30 |

| SDMAE-SBFNet-100P-100E | 72.22 | 46.86 | 29.85 | 93.30 |

| Model | OA (%) | mIoU (%) | FLOPs (G) | Params (M) |

|---|---|---|---|---|

| UNet | 78.41 | 50.32 | 124.58 | 13.40 |

| PSPNet | 78.13 | 51.53 | 59.22 | 46.71 |

| DeepLabV3+ | 78.01 | 51.67 | 56.58 | 40.52 |

| SETR | 76.39 | 48.53 | 41.97 | 89.77 |

| SegFormer | 77.15 | 49.22 | 153.89 | 136.66 |

| SBFNet-0P-20E | 74.55 | 44.89 | 29.18 | 92.71 |

| SBFNet-0P-100E | 76.15 | 48.37 | 29.18 | 92.71 |

| SDSBFNet-0P-20E | 75.35 | 46.36 | 29.85 | 93.30 |

| SDSBFNet-0P-100E | 75.84 | 47.24 | 29.85 | 93.30 |

| MAE-SBFNet-50P-20E | 77.84 | 50.30 | 29.18 | 92.71 |

| MAE-SBFNet-50P-100E | 77.84 | 50.30 | 29.18 | 92.71 |

| MAE-SBFNet-100P-20E | 76.64 | 48.33 | 29.18 | 92.71 |

| MAE-SBFNet-100P-100E | 78.21 | 50.55 | 29.18 | 92.71 |

| SDMAE-SBFNet-50P-20E | 78.84 | 51.11 | 29.85 | 93.30 |

| SDMAE-SBFNet-50P-100E | 79.22 | 52.28 | 29.85 | 93.30 |

| SDMAE-SBFNet-100P-20E | 76.22 | 48.69 | 29.85 | 93.30 |

| SDMAE-SBFNet-100P-100E | 79.49 | 52.26 | 29.85 | 93.30 |

| Model | OA (%) | mIoU (%) | FLOPs (G) | Params (M) |
|---|---|---|---|---|
| UNet | 89.30 | 63.58 | 124.58 | 13.40 |
| PSPNet | 87.91 | 62.04 | 59.22 | 46.71 |
| DeepLabV3+ | 88.41 | 64.13 | 56.58 | 40.52 |
| SETR | 83.71 | 56.82 | 41.97 | 89.77 |
| SegFormer | 85.14 | 57.98 | 153.89 | 136.66 |
| SBFNet-0P-20E | 73.33 | 35.96 | 29.18 | 92.71 |
| SBFNet-0P-100E | 85.18 | 57.58 | 29.18 | 92.71 |
| SDSBFNet-0P-20E | 76.47 | 42.50 | 29.85 | 93.30 |
| SDSBFNet-0P-100E | 85.68 | 58.83 | 29.85 | 93.30 |
| MAE-SBFNet-50P-20E | 84.74 | 55.17 | 29.18 | 92.71 |
| MAE-SBFNet-50P-100E | 88.69 | 64.77 | 29.18 | 92.71 |
| MAE-SBFNet-100P-20E | 83.16 | 54.57 | 29.18 | 92.71 |
| MAE-SBFNet-100P-100E | 88.42 | 63.44 | 29.18 | 92.71 |
| SDMAE-SBFNet-50P-20E | 83.99 | 51.56 | 29.85 | 93.30 |
| SDMAE-SBFNet-50P-100E | 88.28 | 66.57 | 29.85 | 93.30 |
| SDMAE-SBFNet-100P-20E | 81.99 | 49.88 | 29.85 | 93.30 |
| SDMAE-SBFNet-100P-100E | 87.98 | 66.61 | 29.85 | 93.30 |

| Datasets | VMask Strategy | Edge-Enhanced Loss | OA (%) | mIoU (%) | FLOPs (G) | Params (M) |
|---|---|---|---|---|---|---|
| LoveDA | × | × | 69.34 | 50.34 | 29.18 | 92.71 |
| LoveDA | × | ✓ | 69.35 | 50.76 | 29.18 | 92.71 |
| LoveDA | ✓ | × | 70.86 | 52.07 | 29.85 | 93.30 |
| LoveDA | ✓ | ✓ | 71.53 | 53.21 | 29.85 | 93.30 |
| DLRSD | × | × | 71.09 | 43.76 | 29.18 | 92.71 |
| DLRSD | × | ✓ | 74.50 | 45.94 | 29.18 | 92.71 |
| DLRSD | ✓ | × | 71.47 | 42.77 | 29.85 | 93.30 |
| DLRSD | ✓ | ✓ | 72.22 | 46.86 | 29.85 | 93.30 |
| WHDLD | × | × | 78.21 | 50.55 | 29.18 | 92.71 |
| WHDLD | × | ✓ | 77.04 | 48.84 | 29.18 | 92.71 |
| WHDLD | ✓ | × | 78.93 | 52.29 | 29.85 | 93.30 |
| WHDLD | ✓ | ✓ | 79.49 | 52.56 | 29.85 | 93.30 |
| PRDLC-PRO | × | × | 88.42 | 63.44 | 29.18 | 92.71 |
| PRDLC-PRO | × | ✓ | 86.73 | 63.09 | 29.18 | 92.71 |
| PRDLC-PRO | ✓ | × | 87.82 | 66.47 | 29.85 | 93.30 |
| PRDLC-PRO | ✓ | ✓ | 87.98 | 66.61 | 29.85 | 93.30 |

| Datasets | FBM | SBM | GSBM | OA (%) | mIoU (%) | FLOPs (G) | Params (M) |
|---|---|---|---|---|---|---|---|
| LoveDA | ✓ | × | × | 68.86 | 49.86 | 29.81 | 93.29 |
| LoveDA | ✓ | ✓ | × | 70.38 | 51.95 | 29.82 | 93.29 |
| LoveDA | ✓ | × | ✓ | 71.38 | 52.45 | 29.84 | 93.30 |
| LoveDA | ✓ | ✓ | ✓ | 71.53 | 53.21 | 29.85 | 93.30 |
| DLRSD | ✓ | × | × | 68.47 | 44.39 | 29.81 | 93.29 |
| DLRSD | ✓ | ✓ | × | 69.92 | 46.51 | 29.82 | 93.29 |
| DLRSD | ✓ | × | ✓ | 68.51 | 45.92 | 29.84 | 93.30 |
| DLRSD | ✓ | ✓ | ✓ | 72.22 | 46.86 | 29.85 | 93.30 |
| WHDLD | ✓ | × | × | 77.24 | 50.55 | 29.81 | 93.29 |
| WHDLD | ✓ | ✓ | × | 78.25 | 48.84 | 29.82 | 93.29 |
| WHDLD | ✓ | × | ✓ | 77.94 | 52.29 | 29.84 | 93.30 |
| WHDLD | ✓ | ✓ | ✓ | 79.49 | 52.56 | 29.85 | 93.30 |
| PRDLC-PRO | ✓ | × | × | 87.26 | 62.89 | 29.81 | 93.29 |
| PRDLC-PRO | ✓ | ✓ | × | 87.86 | 65.01 | 29.82 | 93.29 |
| PRDLC-PRO | ✓ | × | ✓ | 87.76 | 64.47 | 29.84 | 93.30 |
| PRDLC-PRO | ✓ | ✓ | ✓ | 87.98 | 66.61 | 29.85 | 93.30 |

| Class | UA (%) | PA (%) |
|---|---|---|
| Cropland | 61.06 | 52.33 |
| Forest | 92.83 | 94.21 |
| Grass | 67.09 | 59.49 |
| Shrubland | 62.33 | 64.96 |
| Wetland | 67.37 | 76.33 |
| Water | 96.29 | 97.52 |
| Impervious | 85.25 | 84.69 |
| Bare | 82.29 | 85.70 |
| OA (%) | 76.54 | |
| Kappa | 0.684 | |
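
The UA, PA, OA and Kappa columns in the table above are all derived from a single confusion matrix. The following is a minimal sketch of that derivation, assuming UA and PA denote user's and producer's accuracy in the usual remote sensing sense. The 3-class matrix is hypothetical and chosen only for illustration; it is not the study's data, and the variable names are ours.

```python
# Hypothetical 3-class confusion matrix, for illustration only.
# Rows: mapped (predicted) class; columns: reference class.
cm = [
    [50, 5, 5],
    [10, 80, 10],
    [0, 5, 35],
]

n = len(cm)
total = sum(sum(row) for row in cm)
row_sums = [sum(row) for row in cm]
col_sums = [sum(cm[i][j] for i in range(n)) for j in range(n)]
correct = [cm[k][k] for k in range(n)]

# User's accuracy: correct samples / all samples mapped to the class.
ua = [correct[k] / row_sums[k] for k in range(n)]
# Producer's accuracy: correct samples / all reference samples of the class.
pa = [correct[k] / col_sums[k] for k in range(n)]
# Overall accuracy: share of all samples on the diagonal.
oa = sum(correct) / total

# Cohen's kappa: agreement beyond what the marginals would give by chance.
pe = sum(r * c for r, c in zip(row_sums, col_sums)) / total**2
kappa = (oa - pe) / (1 - pe)

print("UA (%):", [round(100 * v, 2) for v in ua])
print("PA (%):", [round(100 * v, 2) for v in pa])
print(f"OA (%): {100 * oa:.2f}  Kappa: {kappa:.3f}")
```

Because Kappa discounts chance agreement implied by the class marginals, it is lower than OA whenever the class distribution is uneven, which is consistent with the gap between the OA and Kappa rows reported above.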

| VHR-Only | Cropland | Forest | Grass | Shrubland | Wetland | Water | Impervious | Bare |
|---|---|---|---|---|---|---|---|---|
| Precision | 0.945 | 0.979 | 0.921 | 0.805 | 0.684 | 0.925 | 0.929 | 0.907 |
| Recall | 0.920 | 0.985 | 0.805 | 0.842 | 0.869 | 0.908 | 0.951 | 0.860 |
| F1 | 0.932 | 0.982 | 0.859 | 0.823 | 0.765 | 0.916 | 0.940 | 0.883 |
| IoU (%) | 87.27 | 96.44 | 75.22 | 69.93 | 61.98 | 84.56 | 88.63 | 79.04 |
| OA (%) | 95.37 | | | | | | | |
| mIoU (%) | 80.38 | | | | | | | |

| Fusion | Cropland | Forest | Grass | Shrubland | Wetland | Water | Impervious | Bare |
|---|---|---|---|---|---|---|---|---|
| Precision | 0.892 | 0.981 | 0.922 | 0.802 | 0.721 | 0.969 | 0.941 | 0.898 |
| Recall | 0.927 | 0.988 | 0.816 | 0.845 | 0.861 | 0.933 | 0.939 | 0.866 |
| F1 | 0.909 | 0.984 | 0.866 | 0.823 | 0.785 | 0.95 | 0.940 | 0.882 |
| IoU (%) | 83.34 | 96.93 | 76.34 | 69.93 | 64.56 | 90.54 | 88.63 | 78.81 |
| OA (%) | 95.99 | | | | | | | |
| mIoU (%) | 81.13 | | | | | | | |
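
The rows of the Fusion table above are arithmetically linked: per-class F1 is the harmonic mean of precision and recall, the per-class IoU follows from F1 as IoU = F1 / (2 − F1), and mIoU is the unweighted mean of the per-class IoU values. The sketch below is an illustrative consistency check only. The inputs are the Fusion values copied from the table, the function and variable names are ours, and small differences are expected because the table reports rounded precision and recall.

```python
# Fusion values copied from the table (order: Cropland ... Bare).
classes = ["Cropland", "Forest", "Grass", "Shrubland",
           "Wetland", "Water", "Impervious", "Bare"]
precision = [0.892, 0.981, 0.922, 0.802, 0.721, 0.969, 0.941, 0.898]
recall = [0.927, 0.988, 0.816, 0.845, 0.861, 0.933, 0.939, 0.866]
iou_pct = [83.34, 96.93, 76.34, 69.93, 64.56, 90.54, 88.63, 78.81]


def f1_score(p, r):
    """Harmonic mean of precision and recall."""
    return 2 * p * r / (p + r)


def iou_from_f1(f1_value):
    """IoU implied by F1 for a single class: IoU = F1 / (2 - F1)."""
    return f1_value / (2 - f1_value)


f1 = [f1_score(p, r) for p, r in zip(precision, recall)]
iou_implied = [100 * iou_from_f1(v) for v in f1]

# mIoU is the plain average over the eight classes.
miou = sum(iou_pct) / len(iou_pct)

for name, f, implied, reported in zip(classes, f1, iou_implied, iou_pct):
    print(f"{name:<11} F1={f:.3f}  IoU implied={implied:.2f}  reported={reported:.2f}")
print(f"mIoU from reported per-class IoU: {miou:.2f}")
```

With these inputs, the implied and reported IoU values agree to within about 0.1 percentage points for every class, and the mean of the reported per-class IoU values matches the mIoU row.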

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Luo, J.; Zhao, Y.; Xuan, M.; Zheng, J.; Zhou, Y.; Liu, X. Investigating Very-High-Resolution Land Cover Mapping in the Pearl River Delta with Remote Sensing Foundation Models and Multi-Source Data Bayesian Fusion. Remote Sens. 2026, 18, 897. https://doi.org/10.3390/rs18060897