1. Introduction

Ships are important for maritime transportation, logistics, and marine resource activities, so they are key targets for ocean monitoring [1]. In tasks like waterway management, port security, fisheries enforcement, and maritime search and rescue, robust ship detection is needed for reliable identification, tracking, and behavior analysis and directly affects the speed and reliability of the whole monitoring pipeline [2]. These needs also apply to early warning, offshore rights protection, and national security, because decisions depend on stable perception when conditions change fast.

Of the available observation types, spaceborne optical remote sensing imagery remains a primary data source for maritime monitoring due to its wide coverage, fine ground sampling distance, and rich shape and texture cues [3]. High-resolution optical images can reveal ship outlines and surrounding port context, supporting downstream tasks such as traffic monitoring and security assessment.

Accurate ship detection remains challenging because maritime imagery exhibits dynamic environments, structured clutter, and large appearance variation. In open-ocean scenes, ships often have low contrast and blurred boundaries and can be confused with wave streaks, shadows, reflections, and sensor noise [4,5]. In coastal areas and ports, dense layouts and linear structures such as docks, breakwaters, and shorelines resemble slender hull patterns, leading to false alarms and unstable localization, which are further aggravated by illumination and atmospheric changes across sensors and viewing geometries.

Optical ship detection is now largely driven by deep learning, with convolutional neural networks and Transformers serving as the primary backbones [6,7,8]. Convolutional models emphasize local modeling and yield stable hierarchical features through progressive aggregation and downsampling. Transformers prioritize global dependency modeling via attention and can exploit a broader context, including spatial and semantic relations among ships, shipping lanes, and port facilities. Recent detectors often combine convolutional hierarchies with Transformer-based context interaction, together with pyramid-style feature organization and context enhancement, and strong performance has been reported on benchmarks with dense ship targets [9,10,11,12,13].

However, optical ship detection still faces three structural limitations in remote sensing imagery. First, large variations in scale and orientation cause mismatches for fixed-grid spatial mixing, and slender hulls and wakes are easily mixed with linear coastal structures, making alignment unstable under direction changes. Moreover, global attention can be distracted by repetitive sea-surface textures or sharp background boundaries, and the resulting errors may propagate in crowded ports with occlusions and complex contexts. Finally, high-resolution inputs intensify the accuracy–efficiency trade-off [14], since heavy downsampling or coarse tokenization can erode early spatial details under limited memory and compute budgets.

Maritime monitoring also offers domain priors. Ships are usually located on water, and their heading is often correlated with the direction of the waterway and of the wake. Berths and waterways often follow a regular spatial layout. Contextual cues such as wakes, turbulence patterns, auxiliary vessels, and port structures can provide supporting evidence. Classical methods encode these priors through explicit geometry and texture descriptors, but they are sensitive to threshold selection and generalize poorly across scenes. Deep models provide stronger representations and better generalization, but nautical knowledge is rarely incorporated in an explicit and interpretable way. Under rare configurations, abnormal behaviors, or scene changes, predictions may conflict with operational knowledge and become unreliable. This motivates building structured constraints into feature fusion rather than simply increasing model capacity.

Recent research has addressed these problems through adaptive sampling, directional aggregation, and efficient fusion. Adaptive sampling reduces geometric mismatch by learning offsets or sparse sampling sets and improves localization accuracy without dense global operations. Directional context aggregation, including strip context, axial attention, or directional branching, introduces an inductive bias so that features extend along the main axis while false activations from linear background are suppressed, which is especially important in ports dominated by directional man-made structures. At the same time, efficient multi-scale fusion and background suppression are also advancing to prevent unwanted responses from being spread and amplified across scales [15]. Parallel receptive fields, learnable fusion weights, and compensation mechanisms can improve localization consistency by emphasizing stable structural cues rather than indiscriminately aggregating information.

Based on these observations, we take RT-DETR as the baseline and propose DENet for ship detection on ShipRSImageNet. DENet aims to be more robust in structured maritime scenes by improving anisotropic structural alignment, reducing interference from background patterns, and retaining deployment-friendly efficiency. We focus on two main bottlenecks: unstable feature alignment under strong directional clutter and weak cross-scale fusion under large scale variation. DSConv adds controlled, direction-aware sampling and uses strip aggregation to enhance cues from elongated hulls and reduce clutter amplification. ESF stabilizes cross-scale fusion by balancing local details and low-frequency context with differential compensation. The main contributions are summarized as follows:

Directional Strip Convolution (DSConv) is introduced, which learns feature-dependent offsets and applies horizontal or vertical strip aggregation to emphasize slender ship structures and anisotropic patterns. By aggregating information along the strip and refining the result with a strip enhancement branch, it strengthens the representation of slender hulls while suppressing linear clutter such as docks and wakes in complex maritime scenes.

Efficient Scale Fusion (ESF) is introduced, which uses multi-branch convolution with different kernel sizes, supplemented by a lightweight differential compensation mechanism, to balance local details and broader low-frequency context.

By jointly integrating DSConv and ESF into the RT-DETR architecture, DENet is proposed, which enhances anisotropic feature modeling and multi-scale context aggregation at the same time and achieves more stable performance under challenging conditions such as complex sea states, strong background interference, and large-scale scene changes without sacrificing efficiency. Experimental results show that on ShipRSImageNet, AP increases by 4.4 points (58.8→63.2) and AP$_{50}$ increases by 5.1 points (68.5→73.6), and consistent improvements are also observed on the NWPU VHR-10 dataset, where AP increases by 2.1 and AP$_{50}$ increases by 3.0.

2. Related Work

2.1. Efficient Remote Sensing Ship Detection

Real-time surveillance and edge deployment have kept efficient detection as a long-term focus, encouraging simpler pipelines, less repeated computation, and faster inference [6,16,17]. The YOLO series [18,19,20,21] adopts end-to-end single-stage regression, substantially reducing latency and enabling practical real-time detection. Beyond single-frame detection, YOLO-style pipelines have also been extended to maritime ship tracking in SAR imagery; TFST builds a two-frame tracking framework based on YOLOv12 and feature-based matching [22]. SSD, proposed by Liu et al. [23], extends single-stage detection by leveraging feature maps from different layers for multi-scale prediction [24]. RetinaNet, proposed by Lin et al. [25], employs a single-stage design with Focal Loss, down-weighting easy samples to alleviate class imbalance and improving stability in cluttered scenes. YOLOv4, proposed by Bochkovskiy et al. [26], further improves the speed–accuracy trade-off through backbone design, data augmentation, and training strategies. YOLOX, proposed by Ge et al. [27], uses anchor-free training with a decoupled head, which simplifies optimization and supports more practical deployment.
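The down-weighting of easy samples by Focal Loss can be illustrated with a minimal sketch of its standard binary form, $\mathrm{FL}(p_t) = -\alpha_t (1 - p_t)^{\gamma} \log p_t$. The function name `focal_loss` is illustrative only, and the defaults $\alpha = 0.25$ and $\gamma = 2$ are the values commonly reported for RetinaNet, not settings taken from this paper.

```python
import math


def focal_loss(p, y, alpha=0.25, gamma=2.0):
    """Binary focal loss for a single prediction.

    p: predicted foreground probability in (0, 1); y: label, 1 or 0.
    alpha and gamma are assumed defaults, not values from this paper.
    """
    p_t = p if y == 1 else 1.0 - p              # probability of the true class
    alpha_t = alpha if y == 1 else 1.0 - alpha  # class-balancing weight
    # (1 - p_t) ** gamma shrinks the loss of well-classified samples
    return -alpha_t * (1.0 - p_t) ** gamma * math.log(p_t)


easy = focal_loss(0.95, 1)  # confidently correct foreground sample
hard = focal_loss(0.10, 1)  # badly misclassified foreground sample
```

With $\gamma = 0$ the modulating factor disappears and the expression reduces to $\alpha$-weighted cross-entropy, which makes the effect of $\gamma$ easy to isolate.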

Faster detection heads reduce latency, but they do not remove the high compute cost of large remote sensing images, so compute is often handled with two engineering strategies. The first strategy changes data handling and inference with patching and cascades [3,28,29]. Overlapping patch training and inference split an image into smaller tiles to keep fine details, and the outputs are then merged to reduce boundary errors. Coarse-to-fine cascades first find candidate regions at low resolution and then refine only those regions at high resolution, so they avoid full-scene high-cost processing. COPO is a related step-by-step method that moves from divergence to concentration and from population to individual [30]. Dynamic execution and region-based cropping also cut overhead because they skip areas with mostly background, so they reduce both computation and memory use. The goal is to keep key evidence under a fixed budget and to spend computation on dense targets and hard regions.
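The overlapping-patch strategy can be sketched as follows. This is a minimal illustration, not the tiling used by any cited method: the helper names `tile_starts` and `tile_boxes`, the tile size of 640, and the overlap of 128 pixels are all assumptions chosen for the example.

```python
def tile_starts(length, tile, overlap):
    """Start offsets of tiles that cover [0, length) with at least `overlap` shared pixels."""
    if tile >= length:
        return [0]
    stride = tile - overlap
    if stride <= 0:
        raise ValueError("overlap must be smaller than the tile size")
    starts = list(range(0, length - tile, stride))
    starts.append(length - tile)  # last tile sits flush with the image border
    return starts


def tile_boxes(width, height, tile=640, overlap=128):
    """Tile windows as (x1, y1, x2, y2), clipped to the image extent."""
    return [
        (x, y, min(x + tile, width), min(y + tile, height))
        for y in tile_starts(height, tile, overlap)
        for x in tile_starts(width, tile, overlap)
    ]


boxes = tile_boxes(1000, 1000)
```

Detections from each window would then be shifted back by the window origin and merged, for example with non-maximum suppression, so that objects cut by a tile border are recovered from a neighboring tile.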

The second strategy cuts the cost of each inference pass by using lightweight networks and compression, and lightweight design keeps improving [31,32]. In SAR ship detection, HyperLi-Net, proposed by Zhang et al. [33], is a representative hyper-light design that targets both high accuracy and high-speed inference. MobileNet, proposed by Howard et al. [34], uses separable convolution to cut compute and is a common backbone for compact detectors. ShuffleNet, proposed by Ma et al. [35], uses group convolution and channel shuffle to cut cost and still keeps information exchange. EfficientNet, proposed by Tan et al. [36], uses compound scaling to better use parameters and gives strong baselines for lightweight models. For the neck, simple pyramid fusion and lightweight attention reduce repeated links across scales and reduce the cost of high-level features, so the speed and accuracy balance gets better. Many remote sensing methods use these lightweight parts and add stronger feature enhancement to reduce false alarms in dense and cluttered scenes [37,38]. Robustness and generalization are also a focus, and SSGNet, proposed by Yasir et al. [39], studies single-source generalization for ship detection to reduce performance drops under domain shift.

Compression methods also work with network design. Han et al. [40] used pruning and parameter compression to remove extra channels and connections. Knowledge distillation, introduced by Hinton et al. [41], transfers knowledge from a teacher model to a student model and does not add inference cost. Attention transfer, proposed by Zagoruyko et al. [42], matches spatial response patterns to guide student attention. Quantization reduces bit width to lower storage needs and increase throughput. Methods that combine pruning, quantization, and distillation can reduce accuracy loss, because they remove structure and add knowledge compensation [43,44].

Structured clutter from shorelines and port facilities, dense occlusion, and extreme scale variation can induce systematic false alarms and localization variance. Progress therefore depends not only on acceleration but also on reliable structural alignment and improved feature organization across scales. This view motivates tighter coupling between efficiency-driven methods and structure-guided modeling, so that models remain deployable while delivering stable and credible detection in rough sea states and congested ports.

2.2. Compensation-Aware Feature Fusion for Object Detection

To mitigate geometric mismatch caused by fixed-grid sampling, Dai et al. [45] proposed deformable convolution. It learns offsets, so sampling locations can adapt to object shape and pose changes. Zhu et al. [46] proposed DCNv2. It adds a modulation mechanism to control each sampling point, so the network can suppress weak samples and boost important regions. Zhu et al. [47] proposed Deformable DETR. It replaces dense global attention with a small set of learnable sampling points for sparse aggregation, so efficiency and convergence improve. DETR, proposed by Carion et al. [7], formulates detection as set prediction with Hungarian matching, removing anchors and Non-Maximum Suppression, and simplifying the pipeline [48]. Subsequent studies have improved training efficiency, local modeling, and scalability to high-resolution inputs [49,50,51,52], making Transformer detection more practical for dense scenes with complex backgrounds [28,29,53]. Collectively, these works suggest that adaptive sampling and sparse aggregation can improve alignment while reducing the cost of global interaction.

In remote sensing, arbitrary orientation and dense layouts make direction-sensitive modeling and rotated detection particularly important [54,55,56,57]. Ding et al. [58] proposed RoI Transformer, which learns rotated RoI features and performs rotated box regression to better fit oriented targets. Yang et al. [59] proposed R3Det and used cascaded refinement to progressively improve rotated-box regression, enhancing localization stability in crowded scenes. Han et al. [60] proposed S2ANet and reduced the difficulty of rotated regression through feature alignment and orientation awareness. Oriented R-CNN further improved training stability and localization quality through a more principled rotated-box parameterization and alignment strategy. Polarization-aware fusion with geometric feature embedding provided complementary cues for robust representation [61]. These studies indicate that rotated regression can alleviate mismatch for oriented objects, yet performance still depends strongly on stable feature alignment and effective suppression of background interference.

Beyond rotated parameterization, direction-dependent long-range aggregation provides an efficient way to improve structural continuity. Hou et al. [62] proposed Strip Pooling, which aggregates features in the horizontal or vertical direction for long-range context with low overhead. Wang et al. [63] proposed Axial Attention, which factorizes 2D attention into two 1D attentions along the height and width axes, so it scales better. Recent designs often combine compensatory sampling with directional aggregation. They use learnable offsets or sparse points to get aligned features, and then they aggregate them with directional convolution, strip pooling, or axial attention. Other work expands the receptive field in one direction by using long-kernel convolution or factorized elongated convolution and then fuses cues from different directions with lightweight modules.
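The core of strip pooling can be sketched on a single-channel feature map. This is a simplified illustration under stated assumptions: it keeps only the row-wise and column-wise averaging and the broadcast sum, and it omits the 1D convolutions and the gating that a full strip pooling module applies afterwards. The function name `strip_pool` is hypothetical.

```python
def strip_pool(feature):
    """Sum of horizontal-strip and vertical-strip averages at every position.

    feature: list of H rows, each a list of W floats (one channel).
    """
    h, w = len(feature), len(feature[0])
    row_mean = [sum(row) / w for row in feature]  # one value per horizontal strip
    col_mean = [sum(feature[i][j] for i in range(h)) / h for j in range(w)]  # per vertical strip
    # Broadcast both strip descriptors back to H x W and add them
    return [[row_mean[i] + col_mean[j] for j in range(w)] for i in range(h)]


context = strip_pool([[1.0, 2.0, 3.0],
                      [4.0, 5.0, 6.0]])
```

Each output position sees its entire row and its entire column at a cost linear in the map size, which is the low-overhead long-range context the text refers to.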

In general, compensation improves sampling locations, and directional modeling keeps continuity by using inductive bias. This combination is useful for maritime scenes, but performance still depends on stable alignment and strong background suppression [64]. In ports with anisotropic clutter such as docks, shorelines, and wakes, offset learning and long-range aggregation can be unstable and can amplify background responses along the main directions. Based on this, we propose DSConv. Unlike deformable convolution, DSConv limits adaptive sampling to a directional strip and uses center-accumulated offsets to keep sampling continuous. It aims to make direction-sensitive enhancement more stable. It strengthens responses that follow the object structure along the dominant directions and limits amplification caused by background clutter. As a result, it improves alignment reliability and improves the separation of foreground and background in dense remote sensing imagery with complex orientation patterns.

2.3. Multi-Scale Feature Representation for Object Detection

Multi-scale representation is fundamental to remote sensing object detection because objects within the same class can vary by orders of magnitude within one image. Lin et al. [65] proposed FPN, which fuses features across pyramid levels through a top-down pathway with lateral connections, combining high-level semantics with low-level detail to improve scale consistency. Liu et al. [66] proposed PANet and introduced bottom-up path enhancement to restore low-level localization cues and improve information flow in crowded scenes. Ghiasi et al. [67] proposed NAS-FPN and used neural architecture search to identify more effective fusion topologies. Tan et al. [68] proposed EfficientDet and introduced BiFPN, which performs adaptive integration across scales via learnable weighted fusion and reduces the propagation of ineffective signals. Swarm Learning also introduces a perception–retrieval–localization process to localize ships step by step [69]. These paradigms have been extended with stronger cross-level interactions and more efficient fusion operators, so robustness improves for objects of different sizes.
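The learnable weighted fusion used by BiFPN can be sketched in its fast normalized form, $O = \sum_i \frac{w_i}{\epsilon + \sum_j w_j} I_i$ with $w_i \ge 0$ enforced by a ReLU. The sketch below works on flat lists of floats for brevity; the function name is illustrative, and the inputs are assumed to be already resized to a common resolution.

```python
def fast_normalized_fusion(features, weights, eps=1e-4):
    """Fuse same-shaped feature vectors with non-negative, normalized weights.

    features: list of equally long lists of floats; weights: one scalar per feature.
    """
    w = [max(0.0, x) for x in weights]  # ReLU keeps every weight non-negative
    total = sum(w) + eps                # eps avoids division by zero
    n = len(features[0])
    return [sum(wi * f[k] for wi, f in zip(w, features)) / total for k in range(n)]


fused = fast_normalized_fusion([[1.0, 1.0], [3.0, 3.0]], [1.0, 1.0])
```

A level whose learned weight is driven to zero or below contributes nothing to the output, which is how this scheme limits the propagation of uninformative signals.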

To increase scale coverage within a single stage, Szegedy et al. [70] proposed the Inception architecture, where parallel branches with different kernel sizes provide multiple receptive fields. Chen et al. proposed dilated multi-branch designs such as ASPP, which use parallel dilation rates to enlarge the effective receptive field and aggregate context without reducing spatial resolution [71,72]. For high-resolution remote sensing, large-kernel convolution and factorized long-kernel convolution have also been explored to capture broader scene structure and improve semantic discrimination for large objects and complex layouts. To control computation, these designs are often paired with depthwise separable or factorized convolution or with reparameterization, so that inference remains efficient [73].
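How dilation enlarges the receptive field without adding parameters can be made concrete with the standard relation $k_{\text{eff}} = k + (k - 1)(d - 1)$ for a kernel of size $k$ and dilation rate $d$. The rates 6, 12, and 18 in the example are those commonly used with ASPP and are given here only as an illustration.

```python
def effective_kernel_size(k, dilation):
    """Spatial extent covered by a k-tap kernel with the given dilation rate."""
    return k + (k - 1) * (dilation - 1)


# A 3x3 kernel at several dilation rates: same 9 weights, growing extent
extents = {d: effective_kernel_size(3, d) for d in (1, 6, 12, 18)}
```

The parameter count stays at nine weights per channel pair in every case, so the extra context comes from sparser sampling rather than from a larger kernel.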

Many methods further emphasize structural transitions to improve foreground–background separation under clutter. Yu et al. [74] proposed Central Difference Convolution, which adds a central difference term to convolution responses to increase sensitivity to edges and texture changes, benefiting low-contrast and fine-grained structure recognition. A recurring observation is that enlarging receptive fields alone may amplify background responses, whereas compensation and suppression within fusion encourage features to depend more on stable structural cues.

Crowded scenes also motivate adaptive selection across scales and dynamic fusion. Liu et al. [75] proposed ASFF, which performs spatially adaptive weighted selection across scales and avoids uniform fusion that accumulates noise. Dai et al. [76] proposed dynamic fusion mechanisms such as Dynamic Head, which reweights features across spatial and channel dimensions to improve multi-scale utilization.

Overall, multi-scale fusion has evolved from pyramid aggregation and parallel receptive fields toward adaptive weighting with explicit suppression and compensation. However, ship detection in remote sensing couples structured background and scale variation more tightly than many generic benchmarks. The port background contains hierarchical and directional patterns, and the responses of linear facilities and textured clutter can be repeatedly reinforced across scales, leading to false alarms and localization drift. In such cases, stacking fusion operators alone may not yield stable gains when the dominant error source is structured clutter rather than insufficient context. Recent practice therefore favors joint design of multi-scale aggregation and suppression with lightweight modules that can be inserted into backbone or neck stages. Motivated by this gap, we introduce ESF, which couples multi-scale aggregation with lightweight differential compensation, aiming to retain scale diversity while preventing background-dominated responses from being amplified during fusion in complex maritime scenes.

3. Proposed Method

3.1. Directional Efficient Network (DENet)

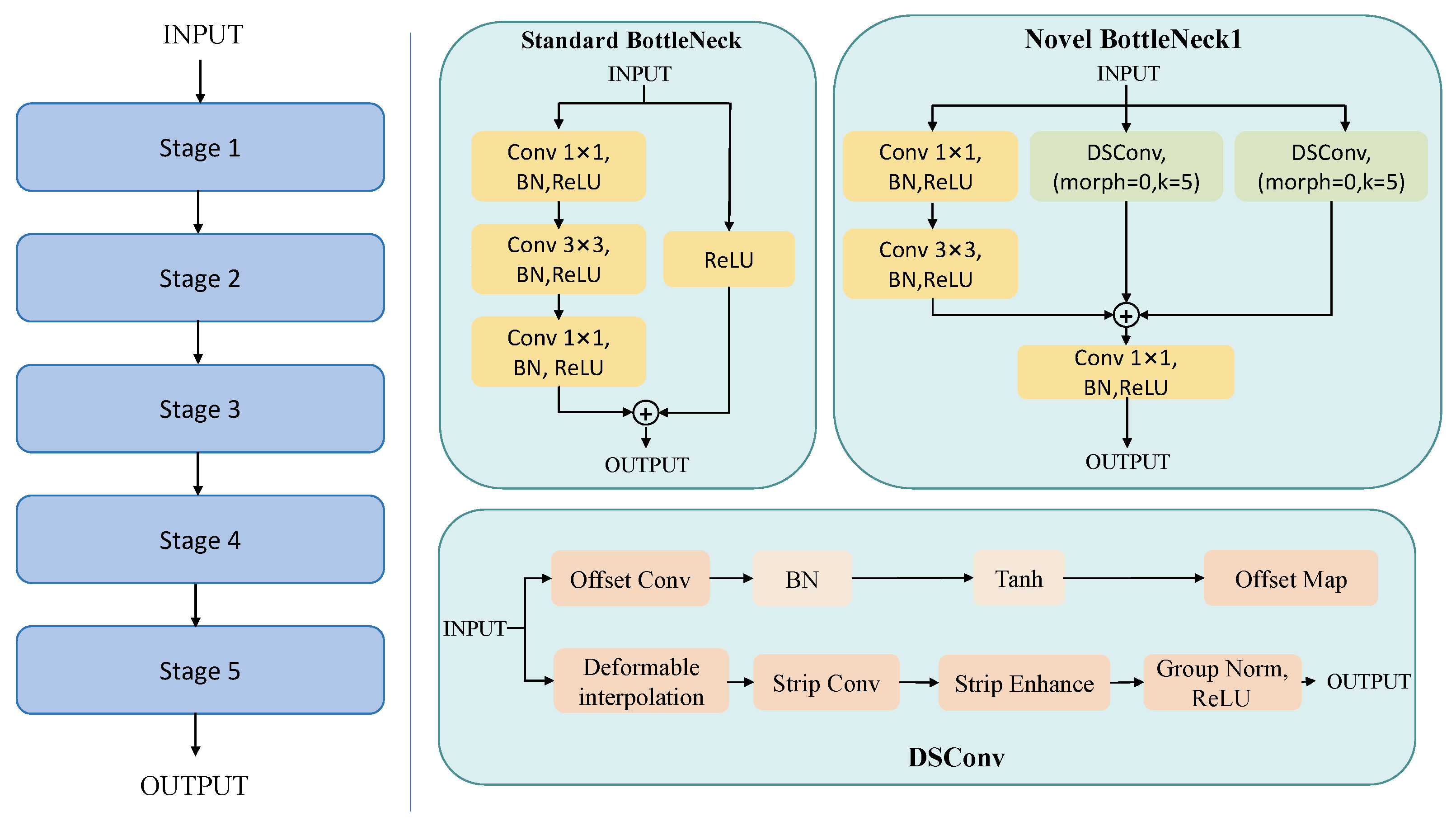

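This section describes the overall design of DENet, shown in Figure 1, which we built on a DETR-style baseline consisting of a ResNet-50 backbone, a Hybrid Encoder, and a Transformer decoder for set prediction.

Given an input image $I$, the backbone produces multi-scale features

$$\{F_l\}_{l=1}^{L} = \mathcal{B}(I), \tag{1}$$

which are aggregated by the Hybrid Encoder to yield encoder representations

$$\{P_l\}_{l=1}^{L} = \mathcal{H}\big(\{F_l\}_{l=1}^{L}\big). \tag{2}$$

The decoder then predicts a fixed-size set using $N$ learnable queries,

$$\{(\hat{p}_i, \hat{b}_i)\}_{i=1}^{N} = \mathcal{D}\big(\{P_l\}_{l=1}^{L}\big), \tag{3}$$

where $\hat{p}_i$ and $\hat{b}_i$ denote class scores and bounding boxes.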

DENet preserves the baseline data flow and interfaces and introduces two localized operator enhancements to improve robustness under clutter. First, for backbone spatial mixing, a fixed-grid $3 \times 3$ convolution within residual transforms can be geometry-agnostic and may sample excessive background for elongated or direction-varying targets. We therefore replace this spatial operator with a Directional Strip Convolution (DSConv) while keeping the shortcut connections and stage strides unchanged. Second, for encoder pyramid fusion, direct concatenation and convolution can be unstable. We augment the CSP-style fusion block by injecting Efficient Scale Fusion (ESF) as an additive correction term, which keeps the fusion interface unchanged while emphasizing structural transitions through multi-receptive field aggregation and differential compensation.

Overall, DENet maintains the baseline training and inference protocol, including one-to-one matching and standard classification and box regression losses, since DSConv and ESF only modify local operators without altering the decoder or the prediction set definition. The detailed formulations of DSConv and ESF are given in the following subsections.

3.2. Directional Strip Convolution (DSConv)

The backbone follows a standard ResNet pipeline with a stem convolution, max pooling, and residual stages that output multi-scale features for detection. As shown in Figure 2, our change is confined to the spatial mixing operator inside the bottleneck. Specifically, we replace the fixed-grid $3 \times 3$ convolution in the residual transform with DSConv, while keeping the shortcut and the surrounding $1 \times 1$ projections unchanged, so the modified bottleneck can be inserted stage-wise without altering the backbone interface.

DSConv consists of an offset branch and a strip aggregation branch. The offset branch predicts a bounded offset map using an offset convolution, normalization, and a tanh activation. This map guides deformable interpolation so that aligned features are sampled. The sampled features are aggregated by strip convolution and refined by strip enhancement. Group normalization and ReLU are then applied to produce the output. This design gives content-adaptive spatial mixing that better matches elongated structures in remote sensing scenes, and it keeps stage strides and shortcut connections unchanged. Let $X^{(s)}$ and $X^{(s+1)}$ denote the input and output of stage $s$. The stage mapping is

$$X^{(s+1)} = \mathcal{T}^{(s)}\big(X^{(s)}\big), \tag{6}$$

and each residual block follows

$$y = \sigma\big(\mathcal{F}(x) + \mathcal{S}(x)\big), \tag{7}$$

where $\sigma$ is a ReLU, and $\mathcal{S}$ is either identity or a projection for resolution or channel alignment. In the baseline, $\mathcal{F}$ contains a fixed-lattice convolution

$$z(p) = \sum_{q \in \mathcal{R}} W(q)\, x(p + q), \tag{8}$$

where $\mathcal{R} = \{-r, \ldots, r\}^2$ with $r = \lfloor k/2 \rfloor$. Here, $k$ denotes the local kernel size used to define the sampling radius $r$, while $K$ denotes the strip length in DSConv when needed. Fixed-grid sampling uses a sampling set that does not depend on local geometry, so it can introduce extra background responses for elongated targets or targets with varying orientations. In our implementation, $k$ (and thus $r$) is set to a fixed default in DSConv as a balanced trade-off between local context support and computational overhead. DSConv handles the mismatch by predicting an offset field and sampling on a strip-shaped support. Given $X$, the output of the offset predictor is

$$\Delta = \mathbb{1}_{\text{off}} \cdot \tanh\big(\mathrm{Norm}(\mathrm{Conv}_{\text{off}}(X))\big) \in \mathbb{R}^{2K \times H \times W}, \tag{9}$$

where $K$ is the length of the strip. $\mathbb{1}_{\text{off}} \in \{0, 1\}$ corresponds to the offset switch: when offsets are disabled, $\Delta = 0$ and DSConv degenerates to a strip operator without geometric deformation. We divide $\Delta$ into $\Delta^{y}$ and $\Delta^{x}$. Let $c = \lfloor K/2 \rfloor$ be the strip center and $\varepsilon$ (i.e., extend_scope) control the deformation amplitude. For the horizontal strip mode, the strip extends along $x$, and the offsets act on $y$. We accumulate offsets from the center to both sides,

$$\tilde{\Delta}^{y}_{c} = 0, \qquad \tilde{\Delta}^{y}_{c+t} = \tilde{\Delta}^{y}_{c+t-1} + \Delta^{y}_{c+t}, \qquad \tilde{\Delta}^{y}_{c-t} = \tilde{\Delta}^{y}_{c-t+1} + \Delta^{y}_{c-t}, \qquad t = 1, \ldots, c. \tag{10}$$

Compared with DCNv2, DSConv does not learn free 2D offsets that are independent at each point on a dense $k \times k$ grid. It limits sampling to a 1D strip and uses the offsets accumulated from the center in Equation (10) to enforce a smooth trajectory constraint. This keeps samples continuous along slender hulls and reduces drift toward linear clutter. Compared with strip pooling, which aggregates on a fixed strip, DSConv shifts strip samples based on features under this smooth trajectory constraint. We then define sampling coordinates relative to the output location $(x_0, y_0)$:

$$x_i = x_0 + (i - c), \qquad y_i = y_0 + \varepsilon\, \tilde{\Delta}^{y}_{i}, \qquad i = 0, \ldots, K - 1. \tag{11}$$

For the vertical strip mode, the strip extends along $y$, and the offsets act on $x$,

$$x_i = x_0 + \varepsilon\, \tilde{\Delta}^{x}_{i}, \qquad y_i = y_0 + (i - c). \tag{12}$$

Since $(x_i, y_i)$ is generally non-integer, DSConv uses a differentiable sampler

$$\hat{X}_i(x_0, y_0) = \mathcal{S}_{\text{bil}}\big(X;\, x_i, y_i\big), \tag{13}$$

which can be implemented by bilinear interpolation. The sampled features are aggregated by a strip convolution

$$Z = \mathrm{StripConv}_K\big(\hat{X}\big), \tag{14}$$

followed by a strip enhancement operator that further strengthens directional continuity before normalization and activation. Denote strip_enhance by $\mathcal{E}_m$ with mode $m$. In our implementation, morph is exactly this mode index $m$, where $m = 0$ and $m = 1$ correspond to horizontal and vertical strip modes, respectively. The enhanced feature is

$$\tilde{Z} = \mathcal{E}_m(Z), \tag{15}$$

and the DSConv output is

$$\mathrm{DSConv}(X) = \sigma\big(\mathrm{GN}(\tilde{Z})\big), \tag{16}$$

where $\mathrm{GN}$ is GroupNorm. We substitute DSConv for the spatial convolution inside $\mathcal{F}$,

$$\mathcal{F}(x) = \mathcal{P}\big(\mathrm{DSConv}(x)\big), \tag{17}$$

where $\mathcal{P}$ denotes the remaining pointwise transforms and channel adaptation in the block. The shortcut $\mathcal{S}$ and stage downsampling policy remain unchanged. DSConv reduces two-dimensional sampling to a strip, aligning computation with elongated structures and limiting background interference. The center-based accumulation in (10) imposes a smooth trajectory prior, improving sampling continuity and stabilizing localization under noisy textures. The strip enhancement in (15) aggregates along the same directional support, so it strengthens long-range directional cues that work with offset-based sampling.
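The horizontal strip mode described above can be sketched for one output location on a single-channel map. This is a minimal illustration under stated assumptions, not the authors' implementation: the raw offsets and strip weights are passed in directly instead of being predicted by a network, the center sample is assumed to have zero offset, sampling coordinates are clamped to the image border, and all function names are hypothetical. Normalization, strip enhancement, and the activation are omitted.

```python
import math


def accumulate_from_center(offsets):
    """Running sums of per-sample offsets, starting from zero at the strip center."""
    k = len(offsets)
    if k % 2 == 0:
        raise ValueError("this sketch assumes an odd strip length")
    c = k // 2
    acc = [0.0] * k  # assumption: the center sample is not displaced
    for t in range(1, c + 1):
        acc[c + t] = acc[c + t - 1] + offsets[c + t]
        acc[c - t] = acc[c - t + 1] + offsets[c - t]
    return acc


def bilinear(img, x, y):
    """Bilinear sample of img (list of rows) at real-valued (x, y), clamped to the border."""
    h, w = len(img), len(img[0])
    x = min(max(x, 0.0), w - 1.0)
    y = min(max(y, 0.0), h - 1.0)
    x0, y0 = int(math.floor(x)), int(math.floor(y))
    x1, y1 = min(x0 + 1, w - 1), min(y0 + 1, h - 1)
    ax, ay = x - x0, y - y0
    top = (1 - ax) * img[y0][x0] + ax * img[y0][x1]
    bottom = (1 - ax) * img[y1][x0] + ax * img[y1][x1]
    return (1 - ay) * top + ay * bottom


def horizontal_strip_response(img, x0, y0, raw_offsets, weights, extend_scope=1.0):
    """Strip extends along x; accumulated, tanh-bounded offsets shift samples along y."""
    if len(raw_offsets) != len(weights):
        raise ValueError("one offset per strip sample is required")
    c = len(weights) // 2
    acc = accumulate_from_center([math.tanh(o) for o in raw_offsets])
    total = 0.0
    for i, w_i in enumerate(weights):
        xs = x0 + (i - c)
        ys = y0 + extend_scope * acc[i]
        total += w_i * bilinear(img, xs, ys)
    return total


# Toy 5x5 map whose value is x + 10 * y, so bilinear samples are easy to check by hand
grid = [[float(x + 10 * y) for x in range(5)] for y in range(5)]
flat = horizontal_strip_response(grid, 2, 2, [0.0, 0.0, 0.0], [1.0, 1.0, 1.0])
bent = horizontal_strip_response(grid, 2, 2, [0.5, 0.0, 0.5], [1.0, 1.0, 1.0])
```

Because each sample inherits the displacement of its inner neighbor, neighboring samples can differ by at most one bounded step, which is the smooth trajectory constraint that distinguishes this scheme from independent 2D offsets.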

3.3. Efficient Scale Fusion (ESF)

Pyramid fusion carries the main multi-scale information exchange in the Hybrid Encoder, as shown in Figure 3.

Direct concatenation and convolution can be unstable in cluttered scenes, because low-level features can be dominated by textures and high-level features can blur boundaries. DENet thus modifies only the fusion block. It adds ESF to the baseline CSPRepLayer, and it keeps the rest of the encoder unchanged. Given an input feature map $X$, the CSP split produces two aligned features,

$$X_1 = \phi_1(X), \qquad X_2 = \phi_2(X), \tag{18}$$

where $\phi_1$ and $\phi_2$ denote a $1 \times 1$ convolution followed by normalization and activation. The transformed branch is

$$\tilde{X}_1 = \mathcal{G}(X_1), \tag{19}$$

where $\mathcal{G}$ is a stack of $m$ RepVGG blocks. The baseline output is

$$Y_{\text{base}} = \phi_3\big(\tilde{X}_1 + X_2\big), \tag{20}$$

where $\phi_3$ is identity when widths match, otherwise a $1 \times 1$ projection. We introduce ESF on the main projected feature $X_1$ and fuse it additively, so that ESF acts as a residual correction that emphasizes structural cues while preserving the original CSP gradient paths. ESF contains four parallel branches with different kernel sizes $k_1, \ldots, k_4$, and each branch applies a normal convolution

$$U_k = W_k * X_1 \tag{22}$$

together with a differential compensation term built from the spatial sum of the kernel,

$$\bar{W}_k = \sum_{u, v} W_k(u, v). \tag{23}$$

The resulting $\bar{W}_k$ can be viewed as an aggregated $1 \times 1$ kernel, which yields the corresponding $1 \times 1$ response

$$V_k = \bar{W}_k * X_1, \tag{24}$$

so that the compensated response is

$$\tilde{U}_k = U_k - \theta\, V_k. \tag{25}$$

To better illustrate the differential compensation mechanism in ESF, Figure 4 shows the computation process in one branch. Specifically, the spatial weights of the $k \times k$ kernel are first aggregated into an equivalent $1 \times 1$ kernel, which produces a compact low-frequency response, and this response is then subtracted from the original convolution output.

The branch output is

$$Y_k = W_k * X_1 - \theta\, \big(\bar{W}_k * X_1\big), \tag{26}$$

where $W_k$ is the convolution kernel, $\bar{W}_k$ forms an aggregated $1 \times 1$ kernel, and $\theta$ controls the compensation strength. The branch outputs are then concatenated and fused by a pointwise layer,

$$\mathrm{ESF}(X_1) = \mathrm{Conv}_{1 \times 1}\big(Y_{k_1} \oplus Y_{k_2} \oplus Y_{k_3} \oplus Y_{k_4}\big), \tag{27}$$

where ⊕ denotes channel concatenation. By combining multiple receptive-field branches with differential compensation, ESF enhances responses to structural transitions and improves fusion robustness under clutter with minimal modification to the baseline encoder.
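The differential compensation in one ESF branch can be sketched on a single-channel map. This is an illustration under stated assumptions rather than the authors' code: the convolution uses zero padding, the kernel weights and the compensation strength are arbitrary example values, only one branch is shown, and the function names are hypothetical.

```python
def conv2d_same(x, w):
    """Single-channel 2D correlation with zero padding and an odd square kernel."""
    h, wd, k = len(x), len(x[0]), len(w)
    r = k // 2
    out = [[0.0] * wd for _ in range(h)]
    for i in range(h):
        for j in range(wd):
            acc = 0.0
            for u in range(k):
                for v in range(k):
                    ii, jj = i + u - r, j + v - r
                    if 0 <= ii < h and 0 <= jj < wd:
                        acc += w[u][v] * x[ii][jj]
            out[i][j] = acc
    return out


def compensated_branch(x, w, theta):
    """Normal convolution minus theta times the response of the spatially summed kernel."""
    full = conv2d_same(x, w)
    w_sum = sum(sum(row) for row in w)  # k x k kernel collapsed into a 1 x 1 kernel
    return [
        [full[i][j] - theta * w_sum * x[i][j] for j in range(len(x[0]))]
        for i in range(len(x))
    ]


ones3 = [[1.0] * 3 for _ in range(3)]
flat_map = [[2.0] * 5 for _ in range(5)]                   # uniform background
edge_map = [[0.0, 0.0, 1.0, 1.0, 1.0] for _ in range(5)]   # vertical step edge

flat_out = compensated_branch(flat_map, ones3, theta=1.0)
edge_out = compensated_branch(edge_map, ones3, theta=1.0)
```

With full compensation, the interior of a uniform region produces a zero response, while positions next to the step edge keep a non-zero response. Smaller values of the strength parameter retain part of the low-frequency content instead of removing it entirely.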

4. Experiments

4.1. Datasets

Considering the characteristics of RSIs, we used ShipRSImageNet, NWPU VHR-10, the Infrared Ship Database in the Raytron Database and VisDrone2019-DET as our experimental datasets. We tuned hyper-parameters on the validation split and report the final numbers on the official test split (the AP reported in Section 4 refers to the test split).

4.1.1. ShipRSImageNet

The ShipRSImageNet dataset contains 3435 images and 17,573 instances, and it is split into 2198 images for training, 550 images for validation, and 687 images for testing. The dataset was created by extracting ship-containing imagery from xView and integrating HRSC2016 and FGSD with corrected annotations and previously missed small-ship targets added, together with additional samples from the Airbus Ship Detection Challenge and Chinese satellites. The ship instances are annotated at four levels, Level 0, Level 1, Level 2, and Level 3, and a higher level corresponds to a finer-grained classification. Level 3 (50 classes) was used in this study for subsequent comparisons and ablations.

4.1.2. NWPU VHR-10

The NWPU VHR-10 subset contains 800 high-resolution images and 3775 annotated instances, covering notable variations in object scale, aspect ratio, and background complexity. We split the dataset into a training set and a test set with an 8:2 ratio for consistent model development and evaluation. The dataset has two image groups: (a) a positive image set of 650 images with at least one target, and (b) a negative image set of 150 images with no targets. The negative set can be used to evaluate false alarms and background suppression. In the positive set, all instances are manually labeled with tight bounding boxes and instance masks as ground truth. The labels include 757 airplanes, 302 ships, 655 storage tanks, 390 baseball diamonds, 524 tennis courts, 159 basketball courts, 163 ground track fields, 224 harbors, 124 bridges, and 477 vehicles.

4.1.3. Infrared Ship Database in Raytron Database

The Infrared Ship Database in the Raytron Database dataset contains 8410 images and 26,455 object instances. We randomly selected 5884 images as the training set, 841 images as the validation set, and 1685 images as the test set.

4.1.4. VisDrone2019-DET

To verify the generalization ability of our proposed model and evaluate its performance on UAV aerial image object detection, we conducted experiments on the VisDrone2019-DET dataset. It comprises 10,209 high-resolution static images. We randomly selected 6471 of these RSIs as the training set, 548 images as the validation set, and 1610 images as the test set.

4.2. Experimental Environment

All experiments were conducted under the same hardware and software environment to make the comparison fair, keep training stable, and make the results easy to reproduce. As shown in Table 1, DENet and RT-DETR were implemented with PaddlePaddle. We used RT-DETR pre-trained on COCO2017 as the initial weights, and we evaluated all methods with COCO-style AP metrics on single-scale input images.

For training, we set the batch size to 4 and trained for 72 epochs. The optimizer was AdamW with gradient clipping at a norm threshold of 0.1, and the base learning rate was 0.0001. The learning rate was warmed up linearly to the base value over the first 2000 steps. After the warm-up, we kept it constant with a piecewise scheduler (milestone at step 100), following the default configuration in [77]. Data augmentation included random {color distort, expand, crop, flip, resize} operations. We also measured inference speed on the same hardware to compare efficiency.
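
The schedule described above (linear warm-up over 2000 steps to a base rate of 0.0001, then a constant rate) can be sketched as a plain function. The name `learning_rate` and the `start_factor` parameter are hypothetical; the starting value of the warm-up is not given in the text, so the default of zero is only an assumption.

```python
def learning_rate(step, base_lr=1e-4, warmup_steps=2000, start_factor=0.0):
    """Linear warm-up to base_lr, followed by a constant rate.

    start_factor is the fraction of base_lr used at step 0. It is an
    assumption: the text only gives the warm-up length and the base rate.
    """
    if step < 0:
        raise ValueError("step must be non-negative")
    if step >= warmup_steps:
        return base_lr
    progress = step / warmup_steps
    factor = start_factor + (1.0 - start_factor) * progress
    return base_lr * factor


# Rates at the start, the middle, and the end of the warm-up, and much later.
schedule_samples = [learning_rate(s) for s in (0, 1000, 2000, 50000)]
```

Because the rate stays constant after the warm-up, the milestone of the piecewise scheduler has no visible effect in this sketch.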

Our models used 640 × 640 inputs, whereas the Transformer-series detectors used a short edge length of 800 and a maximum size of 1333.
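
A short-edge and maximum-size rule of this kind is commonly implemented as an aspect-preserving resize. The sketch below assumes that convention (scale the short edge to 800 unless the long edge would then exceed 1333); the function name and the rounding choice are illustrative and are not taken from the authors' code.

```python
def keep_ratio_size(height, width, short_edge=800, max_size=1333):
    """Return (new_height, new_width) for an aspect-preserving resize."""
    if height <= 0 or width <= 0:
        raise ValueError("image dimensions must be positive")
    # Use the smaller of the two scale factors so that both limits hold.
    scale = min(short_edge / min(height, width), max_size / max(height, width))
    return round(height * scale), round(width * scale)


# A 480 x 640 image is limited by the short edge,
# a 500 x 2000 image by the maximum size.
size_a = keep_ratio_size(480, 640)
size_b = keep_ratio_size(500, 2000)
```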

4.3. Performance Experimental Analysis

To comprehensively evaluate DENet on ShipRSImageNet, we compared it with RT-DETR under a unified protocol and report Average Precision (AP) and its IoU-specific and scale-specific variants, together with Frames Per Second (FPS), Parameters (Params), and GFLOPs.
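
For readers less familiar with COCO-style metrics, the sketch below shows the two ingredients behind the AP variants: box IoU and the object-size buckets. The IoU thresholds (0.50 to 0.95 in steps of 0.05) and the area limits (32² and 96² pixels) follow the public COCO convention; the function names are illustrative, and the half-open bucket boundaries are a simplification.

```python
def box_iou(box_a, box_b):
    """IoU of two axis-aligned boxes given as (x1, y1, x2, y2)."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0


def coco_size_bucket(area):
    """Map a box area in pixels to the COCO small/medium/large bucket."""
    if area < 32 ** 2:
        return "small"
    if area < 96 ** 2:
        return "medium"
    return "large"


# AP averages precision over these thresholds; AP50 and AP75 use a single one.
COCO_IOU_THRESHOLDS = [round(0.50 + 0.05 * i, 2) for i in range(10)]
```

A prediction counts as a match at a given threshold only if its IoU with a ground-truth box reaches that threshold, which is why the 0.75 variant is more sensitive to box tightness than the 0.50 variant.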

Figure 5, Figure 6 and Figure 7 show that DENet surpassed RT-DETR, with detailed data presented in Table 2. DENet showed steady gains on the main accuracy metrics. The gains were larger at looser IoU thresholds and for large objects. For example, $AP_{50}$ increased by about 5.1 points, and $AP_L$ increased by about 4.6 points. In contrast, $AP_M$ increased by only about 0.2 points, so there was less improvement for medium-scale objects. These results suggest that DENet uses structural cues better and makes box regression more stable in cluttered maritime scenes. This helps recall-focused cases and medium-to-large targets.

4.4. Validity Experiment of the Modules

In this section, we investigate the impact of each module’s components on performance.

4.4.1. Effectiveness Experiment of Modules in DSConv

DSConv replaces standard local spatial mixing with structure-aligned strip aggregation. It uses two directional strip branches (horizontal and vertical) to capture long-range context. It predicts offsets from input features to adapt strip sampling to elongated ship structures, so it helps suppress line-shaped background clutter. A strip-enhancement stage further strengthens and fuses directional responses, so the multi-scale representation is more stable. We ran ablation studies on DENet with all other settings fixed.

Table 3 reports detection accuracy and efficiency side by side. Compared with deformable convolution, DSConv does not learn free 2D offsets for all kernel points. Instead, it limits adaptive sampling to 1D strip patterns in the horizontal and vertical directions, so it focuses on long-range structure aggregation for elongated ships and keeps the offset space smaller. This is often more efficient and more stable in cluttered scenes.
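
To make the idea of 1D strip sampling with offsets concrete, the following sketch aggregates a horizontal strip at one position of a single-channel feature map. It is not the authors' implementation: the function names are hypothetical, the offsets are passed in rather than predicted from features, and the sketch assumes that each tap is shifted along the strip axis, which the text does not specify.

```python
import math


def sample_row(row, x):
    """Linearly interpolate a 1D list at fractional position x (zero outside)."""
    x0 = math.floor(x)
    frac = x - x0

    def at(i):
        return row[i] if 0 <= i < len(row) else 0.0

    return (1.0 - frac) * at(x0) + frac * at(x0 + 1)


def horizontal_strip_response(feature, y, x, weights, offsets):
    """Weighted sum over a 1 x K strip centred at (y, x) with per-tap offsets."""
    k = len(weights)
    if k % 2 == 0 or len(offsets) != k:
        raise ValueError("need an odd number of taps and one offset per tap")
    radius = k // 2
    total = 0.0
    for i in range(k):
        # Regular strip position plus a learned 1D shift (assumed along the strip).
        position = x + (i - radius) + offsets[i]
        total += weights[i] * sample_row(feature[y], position)
    return total


feature = [[0.0, 1.0, 2.0, 3.0, 4.0]]
plain = horizontal_strip_response(feature, 0, 2, [1.0, 1.0, 1.0], [0.0, 0.0, 0.0])
shifted = horizontal_strip_response(feature, 0, 2, [1.0, 1.0, 1.0], [0.5, 0.5, 0.5])
```

A vertical strip would apply the same logic along a column. Each tap carries one scalar offset instead of a 2D displacement, which is the sense in which the offset space is smaller than in deformable convolution.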

The full DSConv gave the best overall accuracy in Table 3 while keeping real-time inference.

Removing the vertical strip branch caused the largest drop on the reported accuracy metrics (Table 3). Speed increased, but the accuracy drop shows that vertical long-range aggregation provides key structure-aligned evidence, especially for large ships.

Removing the horizontal strip branch caused a smaller but steady drop on the same metrics (Table 3). This shows that the horizontal branch mainly adds extra context and refinement and is not the main source of gains.

Turning off the strip-enhancement stage also reduced accuracy (Table 3), while efficiency stayed almost the same. This clarifies the role of the enhancement stage: it improves robustness and multi-scale consistency by strengthening and fusing the strip features, not by adding more computation.

4.4.2. Effect of the Compensation Factor

The aggregated kernel is obtained by summing the original branch kernel over its spatial dimensions. Accordingly, convolving the input feature with this aggregated kernel gives the aggregated response of the original branch. Because the spatial summation removes most intra-kernel spatial variation, this response mainly reflects low-frequency intensity bias and coarse background trends rather than fine structural details. Therefore, the compensation factor directly controls the subtraction strength of this low-frequency component in each branch, which helps balance background suppression and semantic preservation.

When the compensation factor is small, the compensation is weak, and ESF behaves similarly to standard multi-scale convolutional fusion, retaining more low-frequency background bias and global intensity information. As the factor increases, the subtraction becomes stronger, making the branch response more sensitive to local structural transitions such as edges and fine textures while suppressing broad background bias. This is helpful in maritime scenes, where docks, shorelines, wake streaks, and repetitive water textures may induce false responses. However, if the factor is excessively large, the subtraction may also suppress useful semantic information and weaken feature discrimination.
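
The effect of the compensation factor can be illustrated with a single-channel sketch of one branch: the ordinary convolution response minus the factor times the response of the spatially summed kernel. The function names and the single-channel, zero-padded setting are assumptions made for illustration, and the exact form used inside ESF may differ.

```python
def conv2d_same(image, kernel):
    """Zero-padded 'same' cross-correlation of a 2D list with an odd-sized kernel."""
    h, w = len(image), len(image[0])
    kh, kw = len(kernel), len(kernel[0])
    ph, pw = kh // 2, kw // 2
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            acc = 0.0
            for i in range(kh):
                for j in range(kw):
                    yy, xx = y + i - ph, x + j - pw
                    if 0 <= yy < h and 0 <= xx < w:
                        acc += kernel[i][j] * image[yy][xx]
            out[y][x] = acc
    return out


def compensated_response(image, kernel, factor):
    """Branch response minus factor times the aggregated-kernel response."""
    # Summing the kernel over its spatial dimensions leaves one scalar,
    # which acts as a 1 x 1 kernel on the input.
    kernel_sum = sum(sum(row) for row in kernel)
    full = conv2d_same(image, kernel)
    return [
        [full[y][x] - factor * kernel_sum * image[y][x] for x in range(len(image[0]))]
        for y in range(len(image))
    ]


flat = [[2.0] * 5 for _ in range(5)]      # constant background patch
ones = [[1.0] * 3 for _ in range(3)]      # toy 3 x 3 kernel
no_comp = compensated_response(flat, ones, 0.0)
half_comp = compensated_response(flat, ones, 0.5)
full_comp = compensated_response(flat, ones, 1.0)
```

On a constant patch, the interior response shrinks as the factor grows and vanishes when the factor is 1, which mirrors the trade-off described above: stronger compensation removes flat background but leaves less low-frequency information.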

The results match this explanation. In Table 4 and Figure 8, $AP$ increases from 59.4 to 62.8 as the compensation factor is raised and peaks at 63.2, before dropping to 62.4 and 62.5 at larger settings. The scale-wise results also show stable behavior across sizes. For small objects, $AP_S$ fluctuates (e.g., 10.1 at one setting) but reaches its best value of 13.2 at a moderate setting, so moderate compensation can help keep and sharpen fine cues for small ships. For medium objects, $AP_M$ increases from 35.5 to 37.4 when the factor goes from 0.3 to 0.4, and then it stays around 37.1–37.2 near the best setting. For large objects, $AP_L$ increases from 64.7 to 69.2 as the compensation is strengthened, so the compensation also helps large ships by reducing background-driven responses and making localization more consistent. When the factor is larger than 0.5, the accuracy drop suggests that too much subtraction starts to remove useful semantics along with background bias, which matches the over-compensation case.

Thus, based on the best overall accuracy and the stable results across scales, we use the best-performing compensation factor from Table 4 as the default in all later experiments.

We additionally compared ESF with several conventional multi-scale fusion techniques on ShipRSImageNet. The compared methods included representative FPN-based and other commonly used multi-scale detectors. As shown in Table 5, the proposed RT-DETR + ESF achieves 60.1 HBB $AP$, which is higher than all conventional FPN-based detectors listed in the table, including Faster R-CNN with FPN, RetinaNet with FPN, and FCOS with FPN. It also slightly surpasses Cascade Mask R-CNN with FPN (59.3), the strongest conventional multi-scale baseline in this comparison. These results indicate that the improvement is not merely due to the use of multi-scale features but is related to the ESF design, which explicitly suppresses low-frequency background bias while preserving discriminative local structures.

4.5. Ablation Study

To test the proposed modules, we ran ablation experiments on ShipRSImageNet with RT-DETR as the baseline. We kept the other settings fixed, and we added ESF, then DSConv, and then both modules together (DENet).

Table 6 reports detection accuracy and efficiency.

Adding ESF improved RT-DETR: $AP$ increased from 58.8 to 60.1, and the gains appeared across scales. The overhead was moderate: parameters increased from 32.40 M to 42.96 M, and the model still ran at real-time speed. Replacing the baseline operator with DSConv increased $AP$ to 61.0, with most of the gain coming from large objects. However, the model size grew substantially, so efficiency worsened.

Using both ESF and DSConv gave the best overall accuracy. DENet reached 63.2 $AP$ and the highest $AP_{50}$ and $AP_{75}$, at 73.6 and 70.5. Parameters increased to 90.82 M, and speed dropped to 46.13 FPS. Even so, the results show that ESF and DSConv are complementary, and using them together gives the best balance between accuracy and efficiency in the final design.

4.6. Comparison with Popular Object Detectors

To evaluate DENet for remote sensing ship detection, we compared it with several common detectors on ShipRSImageNet. The methods included two-stage detectors, one-stage detectors, and recent YOLO-series models.

Table 7 lists the results.

DENet gave the best scores on the main metrics for coverage and box quality. It reached an $AP$ of 63.2 and the best $AP_{50}$ and $AP_{75}$, at 73.6 and 70.5. This means it found more true ships and also predicted tighter boxes at higher IoU.

DENet was also more accurate than the YOLO-series baselines. For example, relative to YOLOv10, $AP_{50}$ rose from 66.8 to 73.6 and $AP_{75}$ rose from 65.0 to 70.5. Relative to YOLOv5, $AP_{50}$ rose from 72.1 to 73.6, $AP_{75}$ rose from 68.8 to 70.5, and $AP$ rose from 60.2 to 63.2.

By object size, DENet worked especially well on large ships, reaching the best $AP_L$ of 69.2. It did not get the highest score on small objects, where the best $AP_S$ came from YOLOv9. Even so, DENet stayed competitive on small ships and still led on the overall metrics. This indicates that DENet is robust across ship sizes and complex backgrounds, and it supports using DENet in real remote sensing tasks.

4.7. Comparison of Public Datasets

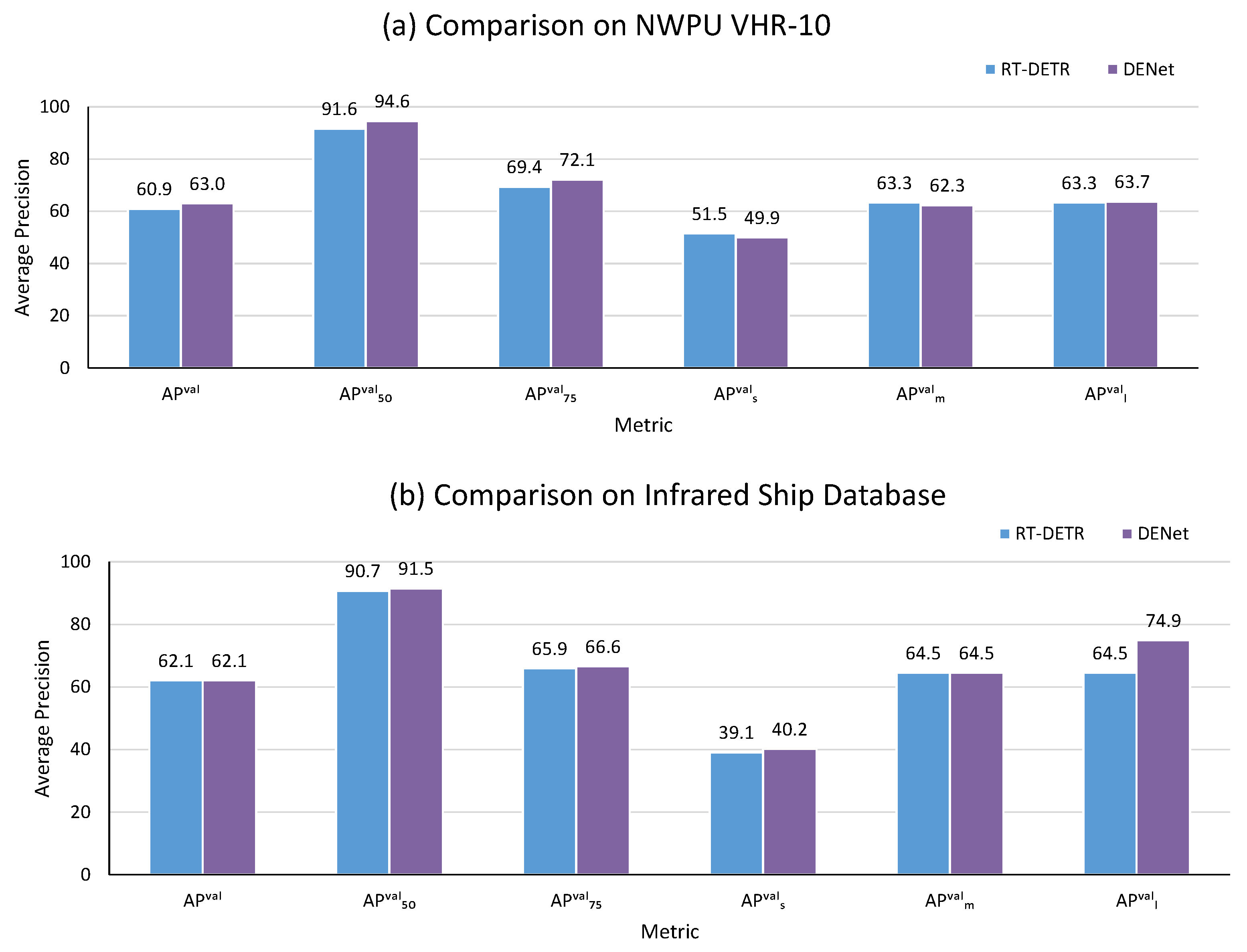

To evaluate the effectiveness of DENet on other data, we used NWPU VHR-10 and the Infrared Ship Database in the Raytron Database, following the setup in Section 4.2 (Experimental Environment) with 640 × 640 inputs and 72 epochs.

Table 8 compares DENet and RT-DETR on these datasets. DENet outperformed RT-DETR on all precision metrics, which underscores its proficiency in detecting remote sensing objects, particularly on NWPU VHR-10. On the Infrared Ship Database, DENet showed slight improvements in $AP^{val}$ over RT-DETR.

Figure 9 compares the detections of RT-DETR and DENet on NWPU VHR-10 and the Infrared Ship Database in the Raytron Database.

To test whether DENet worked beyond ship detection, we evaluated it on VisDrone2019-DET. This benchmark uses UAV images with many small objects, such as pedestrians and vehicles. Our changes were first designed for maritime scenes, but the design is task-agnostic. It aims to improve multi-scale features and localization in complex backgrounds.

Table 9 shows that DENet improves accuracy over RT-DETR on VisDrone2019-DET. $AP$ increases from 21.8 to 22.4, $AP_{50}$ increases from 38.7 to 39.5, and $AP_{75}$ increases from 21.4 to 22.2. The gains also appear on small and medium objects: $AP_S$ increases from 11.8 to 12.0, and $AP_M$ increases from 32.0 to 32.8. In contrast, $AP_L$ decreases from 48.2 to 46.5, so the overall gain mainly comes from small and medium objects, not large ones.

These gains also add cost. Parameters increase from 32.39 M to 90.82 M, and computation increases from 49.99 to 61.93 GFLOPs. Inference speed drops from 57.82 to 43.66 FPS. Despite this overhead, the consistent gains on VisDrone2019-DET verify that the proposed components transfer effectively to UAV aerial detection and provide measurable benefits on real-world small-object scenarios.
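
The size of this overhead is easier to judge in relative terms. The short sketch below computes percentage changes and per-frame latency from the values reported above; the helper name `relative_change` and the dictionary keys are illustrative only.

```python
def relative_change(before, after):
    """Percentage change from `before` to `after` (positive means an increase)."""
    if before == 0:
        raise ValueError("baseline value must be non-zero")
    return 100.0 * (after - before) / before


# Values reported for RT-DETR -> DENet on VisDrone2019-DET.
reported = {
    "params_M": (32.39, 90.82),
    "gflops": (49.99, 61.93),
    "fps": (57.82, 43.66),
}
changes = {name: relative_change(b, a) for name, (b, a) in reported.items()}

# Per-frame latency in milliseconds implied by the reported FPS values.
latency_ms = {"rt_detr": 1000.0 / 57.82, "denet": 1000.0 / 43.66}
```

With these numbers, parameters grow by roughly 180%, computation by about 24%, and throughput falls by roughly a quarter, so the parameter count rises far more than the compute cost.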

5. Discussion

Experiments show that DENet improves remote sensing detection across many scenes and object scales. On ShipRSImageNet, DENet is better than RT-DETR on key accuracy metrics: $AP$ increases from 58.8 to 63.2, and $AP_{75}$ increases from 65.3 to 70.5. This suggests that the directional aggregation and scale-guided fusion produce stronger features and make localization more stable in cluttered maritime backgrounds. The gains also appear on NWPU VHR-10, so the changes work beyond one dataset and can help more remote sensing categories. The code is available at https://github.com/sssj2002/DENet (accessed on 7 March 2026).

There are still challenges. First, the accuracy gain comes with higher model complexity, so deployment can be harder under limited compute. Compared with RT-DETR, DENet has more parameters and higher compute cost, so inference speed is lower. For example, on VisDrone2019-DET, speed drops from 57.82 FPS to 43.66 FPS because stronger spatial mixing and extra fusion add overhead. Even so, the speed remains close to real time in practice (46.13 FPS on ShipRSImageNet). This is often enough for ship detection and maritime monitoring, because very high frame rates are not always needed, and the total delay is often limited by data collection, data transfer, and later processing. In this sense, the accuracy gains can justify the added computational burden in many operational settings. Future work should therefore focus on retaining the benefits of DSConv and ESF while reducing latency and memory footprint, for instance by adopting lighter operators, applying reparameterization during inference, and using compression strategies such as structured pruning and knowledge distillation.

Second, the improvement is not uniform across object sizes and sensing conditions, and performance on small objects remains a clear bottleneck. On ShipRSImageNet, the final model does not yield a clear gain on the small-object metric ($AP_S$) relative to the baseline, suggesting that extremely small ships remain limited by weak foreground evidence and strong background interference. A similar pattern appears on VisDrone2019-DET: $AP$ increases only from 21.8 to 22.4, and the large-object metric $AP_L$ drops from 48.2 to 46.5. This suggests that the balance between background suppression and semantic preservation can change across datasets. We think this is mainly because tiny ships have very weak evidence after backbone downsampling. DSConv is designed for strip aggregation on elongated structures, but for very small, blob-like ships the strip area can include more background than foreground, so weak target responses can be diluted and offset prediction can become noisier. Also, ESF reduces low-frequency background bias by differential compensation, but when tiny targets rely on subtle contrast, this subtraction can also reduce useful signals, so the gains on $AP_S$ can be small or unstable.

Future work should focus on small targets and cross-modality robustness. This can include stronger supervision at higher spatial resolution, scale-adaptive fusion policies, and calibration methods that handle domain shift and changes in object size and sensing modality.

6. Conclusions

Optical remote sensing ship detection is still hard in real maritime scenes because docks, shorelines, and wake streaks create strong background clutter. Ships also show large scale changes and long, thin shapes at many orientations. These factors can cause geometric misalignment, unstable localization, and false alarms, especially in crowded ports and rough sea states.

This work proposed DENet, a structure-aware update based on RT-DETR. It improves robustness in cluttered scenes and keeps the DETR set prediction pipeline. Directional Strip Convolution replaces fixed-grid spatial mixing inside residual blocks. It predicts offsets from features and applies horizontal or vertical strip aggregation. Thus, DSConv aligns computation with slender hull shapes and reduces interference from linear background patterns. Efficient Scale Fusion is also added inside the Hybrid Encoder fusion block as an extra correction. ESF uses parallel receptive fields and a lightweight difference compensation term, so it balances low-frequency context and high-frequency structural changes and makes multi-scale fusion more stable in cluttered scenes. DSConv and ESF work together, so the model has stronger directional structure modeling and better scale-based context aggregation.

Experiments showed the effect of DENet. On ShipRSImageNet, $AP$ increased from 58.8 to 63.2, and $AP_{50}$ increased from 68.5 to 73.6. This means classification and localization are more reliable in hard maritime scenes. The gains also appeared on other benchmarks. DENet improved results on NWPU VHR-10 and VisDrone2019-DET; therefore, structure-based aggregation and compensated fusion can work beyond one dataset and one scene type.

Author Contributions

Conceptualization, J.S. and G.S.; methodology, J.S.; software, J.S.; validation, J.S. and Y.Y.; formal analysis, J.S.; investigation, J.S. and Y.Y.; resources, G.S. and Y.Y.; data curation, J.S. and X.C.; writing—original draft preparation, J.S.; writing—review and editing, J.S., G.S., Y.Y. and X.C.; visualization, J.S. and X.C.; supervision, G.S.; project administration, G.S.; funding acquisition, G.S. All authors have read and agreed to the published version of the manuscript.

Funding

This research was funded by the National Natural Science Foundation of China (Grant Nos. 52571403 and 52101399).

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

The datasets, ShipRSImageNet, NWPU VHR-10, the Infrared Ship Database in the Raytron Database and VisDrone2019-DET, are publicly available from their respective sources.

Conflicts of Interest

The authors declare no conflicts of interest.

Abbreviations

The following abbreviations are used in this manuscript:

| Abbreviation | Definition |
| --- | --- |
| DENet | Directional Efficient Network |
| DSConv | Directional Strip Convolution |
| ESF | Efficient Scale Fusion |
| AP | Average Precision |
| FPS | Frames Per Second |
| IoU | Intersection over Union |
| RoI | Region of Interest |
| ASPP | Atrous Spatial Pyramid Pooling |
| BN | Batch Normalization |
| SSD | Single-Shot MultiBox Detector |
| BiFPN | Bidirectional Feature Pyramid Network |
| CNN | Convolutional Neural Network |
| NMS | Non-Maximum Suppression |
| FPN | Feature Pyramid Network |
| GN | Group Normalization |

References

- Li, B.; Xie, X.; Wei, X.; Tang, W. Ship detection and classification from optical remote sensing images: A survey. Chin. J. Aeronaut. 2021, 34, 145–163.

- Zhao, T.; Wang, Y.; Li, Z.; Gao, Y.; Chen, C.; Feng, H.; Zhao, Z. Ship detection with deep learning in optical remote-sensing images: A survey of challenges and advances. Remote. Sens. 2024, 16, 1145.

- Chen, Z.; Wang, H.; Wu, X.; Wang, J.; Lin, X.; Wang, C.; Gao, K.; Chapman, M.; Li, D. Object detection in aerial images using DOTA dataset: A survey. Int. J. Appl. Earth Obs. Geoinf. 2024, 134, 104208.

- Zhang, Z.; Zheng, H.; Cao, J.; Feng, X.; Xie, G. FRS-Net: An efficient ship detection network for thin-cloud and fog-covered high-resolution optical satellite imagery. IEEE J. Sel. Top. Appl. Earth Obs. Remote. Sens. 2022, 15, 2326–2340.

- Gao, J.; Sun, J.; Wang, Q. Experiments of ocean surface waves and underwater target detection imaging using a slit Streak Tube Imaging Lidar. Optik 2014, 125, 5199–5201.

- Ren, S.; He, K.; Girshick, R.; Sun, J. Faster r-cnn: Towards real-time object detection with region proposal networks. Adv. Neural Inf. Process. Syst. 2015, 28, 1137–1149.

- Carion, N.; Massa, F.; Synnaeve, G.; Usunier, N.; Kirillov, A.; Zagoruyko, S. End-to-end object detection with transformers. In Proceedings of the European Conference on Computer Vision, Glasgow, UK, 23–28 August 2020; pp. 213–229.

- Wang, R.; Ma, L.; He, G.; Johnson, B.A.; Yan, Z.; Chang, M.; Liang, Y. Transformers for remote sensing: A systematic review and analysis. Sensors 2024, 24, 3495.

- Zhang, Z.; Zhang, L.; Wang, Y.; Feng, P.; He, R. ShipRSImageNet: A large-scale fine-grained dataset for ship detection in high-resolution optical remote sensing images. IEEE J. Sel. Top. Appl. Earth Obs. Remote. Sens. 2021, 14, 8458–8472.

- Chen, J.; Chen, K.; Chen, H.; Zou, Z.; Shi, Z. A degraded reconstruction enhancement-based method for tiny ship detection in remote sensing images with a new large-scale dataset. IEEE Trans. Geosci. Remote. Sens. 2022, 60, 5625014.

- Chen, K.; Wu, M.; Liu, J.; Zhang, C. FGSD: A dataset for fine-grained ship detection in high resolution satellite images. arXiv 2020, arXiv:2003.06832.

- Liu, Z.; Yuan, L.; Weng, L.; Yang, Y. A high resolution optical satellite image dataset for ship recognition and some new baselines. In Proceedings of the International Conference on Pattern Recognition Applications and Methods; SciTePress: Setúbal, Portugal, 2017; Volume 2, pp. 324–331.

- Xia, G.S.; Bai, X.; Ding, J.; Zhu, Z.; Belongie, S.; Luo, J.; Datcu, M.; Pelillo, M.; Zhang, L. DOTA: A large-scale dataset for object detection in aerial images. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–22 June 2018; pp. 3974–3983.

- Liang, Y.; Wang, Y.; Xie, X.; Wang, K.; Wang, Y.; Zhang, H.; Li, Z.; Zhou, L.; Zhang, Z.; Shi, Y. Distribution bias embedding tuning of vision transformer for remote sensing object detection. Sci. Rep. 2025, 15, 45257.

- Zhang, T.; Zhang, X.; Liu, C.; Shi, J.; Wei, S.; Ahmad, I.; Zhan, X.; Zhou, Y.; Pan, D.; Li, J.; et al. Balance learning for ship detection from synthetic aperture radar remote sensing imagery. ISPRS J. Photogramm. Remote. Sens. 2021, 182, 190–207.

- Li, X.; Wang, W.; Wu, L.; Chen, S.; Hu, X.; Li, J.; Tang, J.; Yang, J. Generalized focal loss: Learning qualified and distributed bounding boxes for dense object detection. Adv. Neural Inf. Process. Syst. 2020, 33, 21002–21012.

- Zhang, H.; Wang, Y.; Dayoub, F.; Sunderhauf, N. Varifocalnet: An iou-aware dense object detector. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Nashville, TN, USA, 20–25 June 2021; pp. 8514–8523.

- Redmon, J.; Farhadi, A. Yolov3: An incremental improvement. arXiv 2018, arXiv:1804.02767.

- Tian, Y.; Ye, Q.; Doermann, D. Yolov12: Attention-centric real-time object detectors. arXiv 2025, arXiv:2502.12524.

- Wang, C.Y.; Yeh, I.H.; Mark Liao, H.Y. Yolov9: Learning what you want to learn using programmable gradient information. In Proceedings of the European Conference on Computer Vision, Milan, Italy, 29 September–4 October 2024; pp. 1–21.

- Wang, A.; Chen, H.; Liu, L.; Chen, K.; Lin, Z.; Han, J.; Ding, G. Yolov10: Real-time end-to-end object detection. Adv. Neural Inf. Process. Syst. 2024, 37, 107984–108011.

- Yasir, M.; Liu, S.; Xu, M.; Aguilar, F.J.; Wan, J.; Wei, S.; Pirasteh, S.; Fan, H.; Islam, Q.U. TFST: Two-Frame Ship Tracking for SAR Using YOLOv12 and Feature-Based Matching. IEEE J. Sel. Top. Appl. Earth Obs. Remote. Sens. 2025, 19, 3175–3189.

- Liu, W.; Anguelov, D.; Erhan, D.; Szegedy, C.; Reed, S.; Fu, C.Y.; Berg, A.C. Ssd: Single shot multibox detector. In Proceedings of the European Conference on Computer Vision, Amsterdam, The Netherlands, 11–14 October 2016; pp. 21–37.

- Zhang, S.; Chi, C.; Yao, Y.; Lei, Z.; Li, S.Z. Bridging the gap between anchor-based and anchor-free detection via adaptive training sample selection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 13–19 June 2020; pp. 9759–9768.

- Wang, Y.; Wang, C.; Zhang, H.; Dong, Y.; Wei, S. Automatic ship detection based on RetinaNet using multi-resolution Gaofen-3 imagery. Remote. Sens. 2019, 11, 531.

- Bochkovskiy, A.; Wang, C.Y.; Liao, H.Y.M. Yolov4: Optimal speed and accuracy of object detection. arXiv 2020, arXiv:2004.10934.

- Ge, Z.; Liu, S.; Wang, F.; Li, Z.; Sun, J. Yolox: Exceeding yolo series in 2021. arXiv 2021, arXiv:2107.08430.

- Zhang, H.; Ma, Z.; Li, X. Rs-detr: An improved remote sensing object detection model based on rt-detr. Appl. Sci. 2024, 14, 10331.

- Xu, Z.; Wang, C.; Huang, K. BiF-DETR: Remote sensing object detection based on Bidirectional information fusion. Displays 2024, 84, 102802.

- Zhang, T.; Zhang, X.; Gao, G. Divergence to concentration and population to individual: A progressive approaching ship detection paradigm for synthetic aperture radar remote sensing imagery. IEEE Trans. Aerosp. Electron. Syst. 2025, 62, 1325–1338.

- Sandler, M.; Howard, A.; Zhu, M.; Zhmoginov, A.; Chen, L.C. Mobilenetv2: Inverted residuals and linear bottlenecks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–22 June 2018; pp. 4510–4520.

- Howard, A.; Sandler, M.; Chu, G.; Chen, L.C.; Chen, B.; Tan, M.; Wang, W.; Zhu, Y.; Pang, R.; Vasudevan, V.; et al. Searching for mobilenetv3. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Seoul, Republic of Korea, 27 October–2 November 2019; pp. 1314–1324.

- Zhang, T.; Zhang, X.; Shi, J.; Wei, S. HyperLi-Net: A hyper-light deep learning network for high-accurate and high-speed ship detection from synthetic aperture radar imagery. ISPRS J. Photogramm. Remote. Sens. 2020, 167, 123–153.

- Howard, A.G.; Zhu, M.; Chen, B.; Kalenichenko, D.; Wang, W.; Weyand, T.; Andreetto, M.; Adam, H. Mobilenets: Efficient convolutional neural networks for mobile vision applications. arXiv 2017, arXiv:1704.04861.

- Ma, N.; Zhang, X.; Zheng, H.T.; Sun, J. Shufflenet v2: Practical guidelines for efficient cnn architecture design. In Proceedings of the European Conference on Computer Vision (ECCV), Munich, Germany, 8–14 September 2018; pp. 116–131.

- Tan, M.; Le, Q. Efficientnet: Rethinking model scaling for convolutional neural networks. In Proceedings of the International Conference on Machine Learning, PMLR, Long Beach, CA, USA, 9–15 June 2019; pp. 6105–6114.

- Han, S.; Pool, J.; Tran, J.; Dally, W. Learning both weights and connections for efficient neural network. arXiv 2015, arXiv:1506.02626.

- Liu, Z.; Li, J.; Shen, Z.; Huang, G.; Yan, S.; Zhang, C. Learning efficient convolutional networks through network slimming. In Proceedings of the IEEE International Conference on Computer Vision, Venice, Italy, 22–29 October 2017; pp. 2736–2744.

- Yasir, M.; Liu, S.; Xu, M.; Sheng, H.; Aguilar, F.J.; do Lago Rocha, R.; de Figueiredo, F.A.; Colak, A.T.I.; Hossain, M.S. SSGNet: A single-source generalization model for ship detection in UAV imagery under challenging maritime environments. Ocean Eng. 2026, 348, 124120.

- Han, S.; Mao, H.; Dally, W.J. Deep compression: Compressing deep neural networks with pruning, trained quantization and huffman coding. arXiv 2015, arXiv:1510.00149.

- Hinton, G.; Vinyals, O.; Dean, J. Distilling the knowledge in a neural network. arXiv 2015, arXiv:1503.02531.

- Zagoruyko, S.; Komodakis, N. Paying more attention to attention: Improving the performance of convolutional neural networks via attention transfer. arXiv 2016, arXiv:1612.03928.

- Jacob, B.; Kligys, S.; Chen, B.; Zhu, M.; Tang, M.; Howard, A.; Adam, H.; Kalenichenko, D. Quantization and training of neural networks for efficient integer-arithmetic-only inference. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–22 June 2018; pp. 2704–2713.

- Zhao, B.; Cui, Q.; Song, R.; Qiu, Y.; Liang, J. Decoupled knowledge distillation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, New Orleans, LA, USA, 18–24 June 2022; pp. 11953–11962.

- Dai, J.; Qi, H.; Xiong, Y.; Li, Y.; Zhang, G.; Hu, H.; Wei, Y. Deformable convolutional networks. In Proceedings of the IEEE International Conference on Computer Vision, Venice, Italy, 22–29 October 2017; pp. 764–773.

- Zhu, X.; Hu, H.; Lin, S.; Dai, J. Deformable convnets v2: More deformable, better results. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 15–20 June 2019; pp. 9308–9316.

- Zhu, X.; Su, W.; Lu, L.; Li, B.; Wang, X.; Dai, J. Deformable detr: Deformable transformers for end-to-end object detection. arXiv 2020, arXiv:2010.04159.

- Zhang, C.B.; Zhong, Y.; Han, K. Mr. detr: Instructive multi-route training for detection transformers. In Proceedings of the Computer Vision and Pattern Recognition Conference, Nashville, TN, USA, 11–15 June 2025; pp. 9933–9943.

- Meng, D.; Chen, X.; Fan, Z.; Zeng, G.; Li, H.; Yuan, Y.; Sun, L.; Wang, J. Conditional detr for fast training convergence. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Montreal, QC, Canada, 10–17 October 2021; pp. 3651–3660.

- Huang, Y.X.; Liu, H.I.; Shuai, H.H.; Cheng, W.H. Dq-detr: Detr with dynamic query for tiny object detection. In Proceedings of the European Conference on Computer Vision; Springer: Milan, Italy, 2024; pp. 290–305. [Google Scholar]

- Huang, S.; Lu, Z.; Cun, X.; Yu, Y.; Zhou, X.; Shen, X. Deim: Detr with improved matching for fast convergence. In Proceedings of the Computer Vision and Pattern Recognition Conference, Nashville, TN, USA, 11–15 June 2025; pp. 15162–15171. [Google Scholar]

- Ye, X.; Xu, C.; Zhu, H.; Xu, F.; Zhang, H.; Yang, W. Density-Aware DETR with Dynamic Query for End-to-End Tiny Object Detection. IEEE J. Sel. Top. Appl. Earth Obs. Remote. Sens. 2025, 18, 13554–13569. [Google Scholar] [CrossRef]

- Yin, H.; Zhu, Z.; Wang, H. SED-DETR: A Scale-Enhanced Deformable Detection Transformer for Remote Sensing Images. IEEE Trans. Geosci. Remote. Sens. 2025, 63, 5624412. [Google Scholar] [CrossRef]

- Liang, Y.; Feng, J.; Zhang, X.; Zhang, J.; Jiao, L. MidNet: An anchor-and-angle-free detector for oriented ship detection in aerial images. IEEE Trans. Geosci. Remote. Sens. 2023, 61, 5612113. [Google Scholar] [CrossRef]

- Liu, D. TS2Anet: Ship detection network based on transformer. J. Sea Res. 2023, 195, 102415. [Google Scholar] [CrossRef]

- Yang, X.; Sun, H.; Fu, K.; Yang, J.; Sun, X.; Yan, M.; Guo, Z. Automatic ship detection in remote sensing images from google earth of complex scenes based on multiscale rotation dense feature pyramid networks. Remote. Sens. 2018, 10, 132. [Google Scholar] [CrossRef]

- Zhao, J.; Ding, Z.; Zhou, Y.; Zhu, H.; Du, W.L.; Yao, R.; El Saddik, A. OrientedFormer: An end-to-end transformer-based oriented object detector in remote sensing images. IEEE Trans. Geosci. Remote. Sens. 2024, 62, 5640816. [Google Scholar] [CrossRef]

- Ding, J.; Xue, N.; Long, Y.; Xia, G.S.; Lu, Q. Learning RoI transformer for oriented object detection in aerial images. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 15–20 June 2019; pp. 2849–2858. [Google Scholar]

- Yang, X.; Yan, J.; Feng, Z.; He, T. R3det: Refined single-stage detector with feature refinement for rotating object. In Proceedings of the AAAI Conference on Artificial Intelligence, Virtual, 2–9 February 2021; Volume 35, pp. 3163–3171. [Google Scholar]

- Guo, J.; Hao, J.; Mou, L.; Hao, H.; Zhang, J.; Zhao, Y. S2A-Net: Retinal structure segmentation in OCTA images through a spatially self-aware multitask network. Biomed. Signal Process. Control. 2025, 110, 108003. [Google Scholar] [CrossRef]

- Zhang, T.; Zhang, X. A polarization fusion network with geometric feature embedding for SAR ship classification. Pattern Recognit. 2022, 123, 108365. [Google Scholar] [CrossRef]

- Hou, Q.; Zhang, L.; Cheng, M.M.; Feng, J. Strip pooling: Rethinking spatial pooling for scene parsing. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 13–19 June 2020; pp. 4003–4012.

- Wang, H.; Zhu, Y.; Green, B.; Adam, H.; Yuille, A.; Chen, L.C. Axial-DeepLab: Stand-alone axial-attention for panoptic segmentation. In Proceedings of the European Conference on Computer Vision; Springer: Glasgow, UK, 2020; pp. 108–126.

- Pu, Y.; Wang, Y.; Xia, Z.; Han, Y.; Wang, Y.; Gan, W.; Wang, Z.; Song, S.; Huang, G. Adaptive rotated convolution for rotated object detection. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Paris, France, 2–6 October 2023; pp. 6589–6600.

- Lin, T.Y.; Dollár, P.; Girshick, R.; He, K.; Hariharan, B.; Belongie, S. Feature pyramid networks for object detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017; pp. 2117–2125.

- Liu, S.; Qi, L.; Qin, H.; Shi, J.; Jia, J. Path aggregation network for instance segmentation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–22 June 2018; pp. 8759–8768.

- Ghiasi, G.; Lin, T.Y.; Le, Q.V. NAS-FPN: Learning scalable feature pyramid architecture for object detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 15–20 June 2019; pp. 7036–7045.

- Tan, M.; Pang, R.; Le, Q.V. EfficientDet: Scalable and efficient object detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 13–19 June 2020; pp. 10781–10790.

- Zhang, T.; Gao, G.; Ke, X.; Zhang, X. Swarm Learning: Perception-Retrieval-Localization for Ship Detection from Synthetic Aperture Radar Remote Sensing Imagery. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2026, 1–11.

- Liu, Z.; Mao, H.; Wu, C.Y.; Feichtenhofer, C.; Darrell, T.; Xie, S. A ConvNet for the 2020s. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, New Orleans, LA, USA, 18–24 June 2022; pp. 11976–11986.

- Ding, X.; Zhang, X.; Han, J.; Ding, G. Scaling up your kernels to 31x31: Revisiting large kernel design in CNNs. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, New Orleans, LA, USA, 18–24 June 2022; pp. 11963–11975.

- Liu, Z.; Lin, Y.; Cao, Y.; Hu, H.; Wei, Y.; Zhang, Z.; Lin, S.; Guo, B. Swin Transformer: Hierarchical vision transformer using shifted windows. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Montreal, QC, Canada, 10–17 October 2021; pp. 10012–10022.

- Ding, X.; Zhang, X.; Ma, N.; Han, J.; Ding, G.; Sun, J. RepVGG: Making VGG-style ConvNets great again. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Nashville, TN, USA, 20–25 June 2021; pp. 13728–13737.

- Yu, Z.; Zhao, C.; Wang, Z.; Qin, Y.; Su, Z.; Li, X.; Zhou, F.; Zhao, G. Searching central difference convolutional networks for face anti-spoofing. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 13–19 June 2020; pp. 5295–5305.

- Liu, S.; Huang, D.; Wang, Y. Learning spatial fusion for single-shot object detection. arXiv 2019, arXiv:1911.09516.

- Dai, X.; Chen, Y.; Xiao, B.; Chen, D.; Liu, M.; Yuan, L.; Zhang, L. Dynamic head: Unifying object detection heads with attentions. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Nashville, TN, USA, 20–25 June 2021; pp. 7373–7382.

- Zhao, Y.; Lv, W.; Xu, S.; Wei, J.; Wang, G.; Dang, Q.; Liu, Y.; Chen, J. DETRs beat YOLOs on real-time object detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 17–21 June 2024; pp. 16965–16974.

- Pang, J.; Chen, K.; Shi, J.; Feng, H.; Ouyang, W.; Lin, D. Libra R-CNN: Towards balanced learning for object detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 15–20 June 2019; pp. 821–830.

- Tian, Z.; Shen, C.; Chen, H.; He, T. FCOS: Fully convolutional one-stage object detection. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Seoul, Republic of Korea, 27 October–2 November 2019; pp. 9627–9636.

- Jocher, G.; Stoken, A.; Borovec, J.; Changyu, L.; Hogan, A.; Diaconu, L.; Ingham, F.; Poznanski, J.; Fang, J.; Yu, L.; et al. ultralytics/yolov5: v3.1 - Bug fixes and performance improvements. Zenodo 2020, 10.5281.

Figure 1.

Overview of DENet, incorporating DSConv in the backbone and ESF in the Hybrid Encoder.

Figure 2.

DSConv design with offset-guided strip enhancement.

Figure 3.

ESF design with multi-scale convolutions and differential compensation.

Figure 4.

Illustration of the differential compensation in one ESF branch.

Figure 5.

Qualitative comparison of detection results and activation maps in typical maritime scenes. (a) Small and scattered ships in a complex port scene; (b) elongated berthing targets under dock-boundary interference; (c) adjacent large vessels in a crowded harbor.

Figure 6.

Visualization of feature heatmaps on small and densely distributed targets under clutter. (a) Multiple ships aligned with piers in a regular dock layout; (b) dense ships in a cluttered harbor scene; (c) small near-shore vessels under shoreline interference.

Figure 7.

Comparison on ShipRSImageNet.

Figure 8.

Effect of θ in ESF on detection performance.

Figure 9.

Comparison of public ship datasets.

Table 1.

Hardware and software environment.

| Hardware Environment | | Software Environment | |
|---|---|---|---|
| CPU | Intel(R) Xeon(R) Gold 6430 2.10 GHz | OS | Linux |
| RAM | 1.00 TB | CUDA | 11.2 |
| Video memory | 24 GB | Python | 3.8.20 |
| GPU | RTX 4090 | PaddlePaddle | 2.6.2 |
| Server platform | AutoDL | cuDNN | 8.2 |

Table 2.

Comprehensive comparison of DENet and RT-DETR performance.

| Method | Params (M) | GFLOPs | FPS | $AP$ | $AP_{50}$ | $AP_{75}$ | $AP_{S}$ | $AP_{M}$ | $AP_{L}$ |
|---|---|---|---|---|---|---|---|---|---|
| RT-DETR | 32.40 | 40.99 | 69.30 | 58.8 | 68.5 | 65.3 | 13.2 | 37.0 | 64.6 |
| DENet | 90.82 | 72.46 | 46.13 | 63.2 | 73.6 | 70.5 | 13.2 | 37.2 | 69.2 |
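
As a reading aid for Table 2, the minimal sketch below computes the absolute and relative change of DENet with respect to RT-DETR. The numbers are copied from the table. The dictionary keys and the helper names `relative_change` and `compare` are illustrative and do not come from the paper, and `acc_col1` simply denotes the first accuracy column of the table.

```python
# Values copied from Table 2; key and function names are illustrative only.
rt_detr = {"params_m": 32.40, "gflops": 40.99, "fps": 69.30, "acc_col1": 58.8}
denet = {"params_m": 90.82, "gflops": 72.46, "fps": 46.13, "acc_col1": 63.2}


def relative_change(baseline: float, new: float) -> float:
    """Return the change from baseline to new as a percentage of baseline."""
    return (new - baseline) / baseline * 100.0


def compare(baseline: dict, new: dict) -> dict:
    """Map each quantity to (absolute difference, relative change in %)."""
    return {
        key: (
            round(new[key] - baseline[key], 2),
            round(relative_change(baseline[key], new[key]), 1),
        )
        for key in baseline
    }


if __name__ == "__main__":
    for key, (diff, pct) in compare(rt_detr, denet).items():
        print(f"{key:>9}: {diff:+.2f} ({pct:+.1f}%)")
```

With the Table 2 values, the first accuracy column rises by 4.4 points (about 7.5% relative), while the parameter count grows by roughly 180% and FPS drops by roughly 33%.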

Table 3.

Comparison of the effectiveness of components in DSConv.

| Method | Params (M) | GFLOPs | FPS | $AP$ | $AP_{50}$ | $AP_{75}$ | $AP_{S}$ | $AP_{M}$ | $AP_{L}$ |
|---|---|---|---|---|---|---|---|---|---|
| DENet | 90.82 | 72.46 | 46.13 | 63.2 | 73.6 | 70.5 | 13.2 | 37.2 | 69.2 |
| No DSConv x | 88.80 | 67.00 | 49.26 | 61.5 | 71.6 | 68.3 | 16.5 | 37.3 | 67.0 |
| No DSConv y | 88.80 | 67.00 | 52.17 | 59.1 | 69.0 | 66.3 | 12.4 | 36.0 | 64.9 |
| No Strip Enhancement | 89.83 | 72.04 | 47.91 | 59.7 | 69.1 | 66.3 | 14.3 | 37.3 | 64.5 |

Table 4.

Effect of θ in ESF on detection performance.

| Method | $AP$ | $AP_{50}$ | $AP_{75}$ | $AP_{S}$ | $AP_{M}$ | $AP_{L}$ |
|---|---|---|---|---|---|---|
| DENet (θ = 0.3) | 59.4 | 69.0 | 66.0 | 12.4 | 35.5 | 64.7 |
| DENet (θ = 0.4) | 62.8 | 73.4 | 70.0 | 10.1 | 37.4 | 69.2 |
| DENet (θ = 0.5) | 63.2 | 73.6 | 70.5 | 13.2 | 37.2 | 69.2 |
| DENet (θ = 0.6) | 62.4 | 73.4 | 70.2 | 13.0 | 37.1 | 68.2 |
| DENet (θ = 0.7) | 62.5 | 72.5 | 69.4 | 12.1 | 36.4 | 68.3 |

Table 5.

Comparison with conventional multi-scale fusion methods on ShipRSImageNet (PyTorch v1.7.1).

| Model | Backbone | Style | HBB | SBB |
|---|---|---|---|---|
| Faster RCNN with FPN | R-50 | PyTorch | 37.5 | - |
| Faster RCNN with FPN | R-101 | PyTorch | 54.3 | - |
| Mask RCNN with FPN | R-50 | PyTorch | 54.5 | 45.0 |
| Mask RCNN with FPN | R-101 | PyTorch | 56.4 | 47.2 |
| Cascade Mask RCNN with FPN | R-50 | PyTorch | 59.3 | 48.3 |
| RetinaNet with FPN | R-50 | PyTorch | 32.6 | - |
| RetinaNet with FPN | R-101 | PyTorch | 48.3 | - |
| FoveaBox | R-101 | PyTorch | 45.9 | - |
| FCOS with FPN | R-101 | PyTorch | 49.8 | - |
| RT-DETR + ESF (Ours) | R-50 | PyTorch | 60.1 | - |

Table 6.

Ablation experiments of DENet (the optimal values are bold, and the increments are indicated in the lower-right corner of the respective numerical values).

| Method | Params (M) | GFLOPs | FPS | $AP$ | $AP_{50}$ | $AP_{75}$ | $AP_{S}$ | $AP_{M}$ | $AP_{L}$ |
|---|---|---|---|---|---|---|---|---|---|
| RT-DETR | 32.40 | 40.99 | 69.30 | 58.8 | 68.5 | 65.3 | 13.2 | 37.0 | 64.6 |
| ESF | 42.96 | 50.70 | 61.02 | 60.1 | 69.9 | 66.9 | 14.6 | 38.4 | 65.9 |
| DSConv | 84.60 | 60.35 | 58.62 | 61.0 | 70.6 | 67.5 | 12.0 | 35.8 | 67.1 |
| DENet | 90.82 | 61.02 | 46.13 | 63.2 | 73.6 | 70.5 | 13.2 | 37.2 | 69.2 |

Table 7.

Comparison experiments on ShipRSImageNet (the highest values in the table are marked in bold).

| Method | GFLOPs | $AP$ | $AP_{50}$ | $AP_{75}$ | $AP_{S}$ | $AP_{M}$ | $AP_{L}$ |
|---|---|---|---|---|---|---|---|
| Faster RCNN [6] | – | 38.1 | 52.9 | 43.8 | 18.1 | 41.6 | 38.7 |
| Libra RCNN [78] | – | 31.9 | 44.2 | 37.3 | 16.6 | 36.4 | 31.8 |
| SSD [23] | – | 31.9 | 49.0 | 36.5 | 9.4 | 33.0 | 32.9 |
| FCOS [79] | – | 27.3 | 37.7 | 31.7 | 16.7 | 33.6 | 26.5 |
| YOLOv3 [18] | 156.4 | 41.8 | 59.2 | 49.0 | 17.3 | 41.1 | 42.1 |
| YOLOv5 [80] | 16.5 | 60.2 | 72.1 | 68.8 | 23.2 | 59.8 | 61.9 |
| YOLOX [27] | 26.8 | 59.1 | 70.1 | 66.7 | 21.2 | 52.8 | 60.6 |
| YOLOv9 [20] | 102.1 | 43.3 | 52.5 | 49.3 | 24.2 | 52.2 | 42.9 |
| YOLOv10 [21] | 21.6 | 58.4 | 66.8 | 65.0 | 20.3 | 60.3 | 58.4 |
| RT-DETR | 41.0 | 58.8 | 68.5 | 65.3 | 13.2 | 37.0 | 64.6 |
| DENet (Ours) | 61.0 | 63.2 | 73.6 | 70.5 | 13.2 | 37.2 | 69.2 |

Table 8.

Validation on public datasets.

| Datasets | Method | Params (M) | GFLOPs | FPS | $AP$ | $AP_{50}$ | $AP_{75}$ | $AP_{S}$ | $AP_{M}$ | $AP_{L}$ |
|---|---|---|---|---|---|---|---|---|---|---|
| NWPU VHR-10 | RT-DETR | 32.38 | 49.90 | 48.5 | 60.9 | 91.6 | 69.4 | 51.5 | 63.3 | 61.6 |
| | DENet | 90.75 | 60.93 | 35.7 | 63.0 | 94.6 | 72.1 | 49.9 | 64.2 | 63.7 |
| Infrared Ship Database | RT-DETR | 32.57 | 49.90 | 62.3 | 62.1 | 90.7 | 65.9 | 39.1 | 64.5 | 74.9 |
| | DENet | 90.81 | 60.93 | 55.1 | 62.9 | 91.5 | 66.6 | 40.2 | 64.9 | 76.2 |

Table 9.

Validation on VisDrone2019-DET.

| Method | Params (M) | GFLOPs | FPS | $AP$ | $AP_{50}$ | $AP_{75}$ | $AP_{S}$ | $AP_{M}$ | $AP_{L}$ |
|---|---|---|---|---|---|---|---|---|---|
| RT-DETR | 32.39 | 49.99 | 57.82 | 21.8 | 38.7 | 21.4 | 11.8 | 32.0 | 48.2 |
| DENet | 90.82 | 61.93 | 43.66 | 22.4 | 39.5 | 22.2 | 12.0 | 32.8 | 46.5 |
