1. Introduction

Rapid global urbanization and industrial expansion have exacerbated water pollution, transforming it into a critical global challenge. Consequently, the monitoring and governance of floating litter have garnered significant international attention [1]. Floating debris, primarily composed of vegetation, plastic fragments, and anthropogenic waste [2], tends to form high-density accumulations in river channels and coastal zones due to hydrodynamic forces. Non-degradable plastic waste, in particular, disrupts aquatic ecosystems, threatens fisheries and navigation safety, and endangers human health through bioaccumulation [3]. Estimates suggest that 23 million tons of plastic waste entered aquatic ecosystems in 2016 alone, with projections soaring to 53 million tons by 2030 without effective intervention [4]. Traditional management strategies, which rely on manual patrols and vessel-based salvage, respond slowly and are constrained by visual range, weather conditions, and labor costs, preventing high-frequency, all-weather coverage [5].

In this context, Unmanned Aerial Vehicle (UAV) low-altitude remote sensing has emerged as a transformative paradigm. Surpassing the limitations of satellite remote sensing, UAVs offer centimeter-level spatial resolution and deployment flexibility, enabling real-time data acquisition in complex river networks [6]. This technology has been successfully applied to wildlife tracking [7], oil spill monitoring [8], and vegetation classification [9,10]. Deploying deep learning-based object detection algorithms on UAV platforms for automated litter identification has become a focal point in environmental remote sensing [11,12,13].

However, algorithm performance relies heavily on domain-specific datasets. General-purpose datasets (e.g., COCO [14]) fail to capture the unique aerial perspectives and optical characteristics of water scenes. Recent benchmarks such as SeaClear [15] and the River Floating Debris Dataset [16] have begun to address this gap. Furthermore, large-scale datasets like FMPD [17] have enhanced sample diversity and included annotations for complex environmental factors, such as reflections. Parallel to data accumulation, standardizing monitoring protocols is crucial. Research has focused on optimizing flight parameters and imaging configurations [18,19] and balancing coverage with resolution under varying hydrological conditions [20] to ensure data consistency and scientific reproducibility.

Despite the maturity of object detection in terrestrial scenarios [21,22], domain adaptation for water surface floating litter presents unique difficulties. As illustrated in Figure 1, UAV-based detection faces a triad of challenges stemming from target characteristics, environmental interference, and edge deployment constraints [23].

(1) Small Scale and Non-Rigid Deformation: Due to the wide field of view of aerial imagery, floating litter often occupies minimal pixel areas ($< 32 \times 32$ pixels), with scales fluctuating drastically with flight altitude. While feature pyramids and context enhancement strategies have been proposed to mitigate this [24,25], targets also undergo non-rigid deformation caused by water flow, partial submersion, or occlusion. During the downsampling process of deep convolutional neural networks, the fine-grained features of these irregular targets are prone to information loss, leading to significant missed detections.

(2) Complex Environmental Noise: Natural water surfaces are highly dynamic [26]. Interferences such as specular reflections, glints, and shadows of shoreline vegetation create high-frequency structural noise. These visual artifacts are easily confused with targets like white foam or plastic bags, hindering the extraction of discriminative features by traditional networks. Consequently, integrating attention mechanisms or frequency domain analysis to suppress background noise has become a mainstream approach for enhancing robustness [27].

(3) SWaP Constraints on Edge Deployment: UAV inspection tasks necessitate deployment on embedded platforms with strict Size, Weight, and Power (SWaP) constraints, requiring real-time inference in terms of frames per second (FPS) [28]. Existing high-performance models typically incur high computational costs, making it difficult to balance accuracy and speed under low-power conditions, thereby restricting practical engineering implementation.

To address these lacunae, this paper proposes FLD-Net, a lightweight, real-time detection model designed for UAV visual perception. Furthermore, to alleviate the scarcity of high-quality annotated data, we construct UAV-Flow, the first multi-scenario dataset for floating litter from a UAV perspective. The primary contributions of this work are summarized as follows:

(1) We construct UAV-Flow, a benchmark dataset that fills the gap for small and non-rigid targets in water environments. Covering diverse hydrological and lighting conditions, it provides critical data support for researching algorithm robustness.

(2) We propose FLD-Net, a real-time detection framework robust against water surface interference. By integrating three novel mechanisms (DFEM, DCFN, and DANA), the model addresses the technical bottlenecks of deformation adaptation, feature loss, and background noise suppression.

(3) We implement a high-performance edge deployment scheme. Validation on embedded platforms demonstrates the system’s real-time capability and energy efficiency, offering a viable solution for low-cost, automated intelligent water monitoring.

2. Related Work

The automated monitoring of floating litter represents an interdisciplinary synergy of computer vision, remote sensing technology, and environmental engineering. With the rapid advancement of deep learning, research in this domain has witnessed a paradigm shift from traditional image processing methods to intelligent recognition based on Convolutional Neural Networks (CNNs). This section systematically reviews existing literature from two dimensions, data benchmarks and detection algorithms, while analyzing the specific challenges encountered in UAV-based aquatic operations.

2.1. Surface Floating Debris Datasets

High-quality annotated data serves as the cornerstone for driving performance improvements in deep learning models. Although numerous marine and aquatic litter datasets have emerged in recent years, existing public repositories exhibit significant domain discrepancies and scenario limitations when supporting fine-grained detection tasks from a low-altitude UAV perspective. Aquatic litter can be categorized into three distinct types: beach litter, surface floating litter, and benthic debris [29]. This study specifically targets waste that floats or is suspended on open water surfaces (including oceans, lakes, and rivers) and is driven by currents or wind, typically low-density, high-buoyancy items such as plastic bags, bottles, foam, and branches.

The most representative dataset, SeaClear [15], contains 8610 images of shallow water environments and serves as a critical benchmark. However, it primarily focuses on underwater scenarios where image characteristics are dominated by light attenuation and scattering, with background interference stemming largely from benthic organisms or sediment. In contrast, UAV orthophotography is dominated by surface specular reflections, where background noise consists of dynamic wave glint, spray, and shoreline vegetation reflections. Another category, such as the Floater dataset [30], targets inland floating debris but is derived mainly from shore-based surveillance cameras. The horizontal or oblique angles of shore-based monitoring result in severe inter-object occlusion and perspective distortion, failing to simulate the spatial geometric features and scale distributions inherent to the UAV orthographic view.

While some studies have attempted to construct UAV-perspective datasets, they often suffer from limited sample sizes and scenario homogeneity. The River Floating Debris dataset [16] comprises only 840 samples from a single river scene; its small size and lack of annotation for varied flight altitudes fail to capture the scale variations essential for operational UAV missions. Similarly, the HAIDA Trash Dataset [31] focuses on coastal and marine floating litter, covering common items like plastic bottles and fishing nets, yet its limited scale restricts the generalization of deep CNNs under fluctuating illumination and cluttered backgrounds. The recently proposed FMPD dataset [17] expands both data volume and scenario diversity but is largely collected under ideal conditions with favorable lighting and calm water surfaces, exhibiting a benign water bias. Real-world aquatic environments are replete with uncontrollable interference factors; consequently, models trained on such biased data may perform well on test sets but suffer a drastic decline in robustness when deployed in complex lighting and adverse hydrological conditions.

Therefore, constructing a benchmark dataset that encompasses multiple scenarios, multi-scale targets, and rich environmental noise is a prerequisite for addressing the robustness deficiencies of current algorithms. This necessity underpins the motivation for developing the UAV-Flow dataset in this study.

2.2. UAV Object Detection

UAV remote sensing imagery presents severe challenges to traditional object detection algorithms due to its high spatial resolution, wide field of view, and complex backgrounds. Unlike natural scene images, UAV aerial photography is characterized by drastic target scale variations and intricate background textures [21]. Addressing these characteristics, existing research primarily focuses on three core dimensions: small target feature enhancement, complex background suppression, and lightweight edge deployment.

Regarding small target detection, UAV perspectives result in ground targets occupying minimal pixel areas ($< 32 \times 32$ pixels). Although the YOLO series [32,33,34] and its variants [35,36] optimize multi-scale feature fusion via Path Aggregation Networks (PANet), continuous downsampling operations in deep CNNs inevitably lead to feature information loss. To mitigate this, LAR-YOLOv8 [37] and MSD-YOLOn [38] attempt to improve small target recall by expanding receptive fields or reinforcing shallow feature reuse. Their primary strategies include introducing attention mechanisms to enhance weak feature capture and employing multi-scale fusion architectures to integrate cross-level information, thereby preventing small targets from being submerged in deep features. Furthermore, RFLA [39] and NWD [40] introduce label assignment strategies based on Gaussian distribution modeling and Wasserstein distance, respectively. While these approaches alleviate the sensitivity of positive/negative sample matching from a loss function perspective, the loss of spatial information during the feature extraction stage remains a physical bottleneck constraining detection accuracy.
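The matching sensitivity mentioned above can be illustrated numerically. The following is a minimal sketch, not taken from the cited works' code: it compares IoU with a normalized Wasserstein distance computed between boxes modeled as 2D Gaussians, in the form commonly given for NWD. The box values and the normalizing constant `c` are hypothetical and chosen only for illustration.

```python
import math

def iou(box_a, box_b):
    """Intersection over union of two (cx, cy, w, h) boxes."""
    ax0, ax1 = box_a[0] - box_a[2] / 2, box_a[0] + box_a[2] / 2
    ay0, ay1 = box_a[1] - box_a[3] / 2, box_a[1] + box_a[3] / 2
    bx0, bx1 = box_b[0] - box_b[2] / 2, box_b[0] + box_b[2] / 2
    by0, by1 = box_b[1] - box_b[3] / 2, box_b[1] + box_b[3] / 2
    inter_w = max(0.0, min(ax1, bx1) - max(ax0, bx0))
    inter_h = max(0.0, min(ay1, by1) - max(ay0, by0))
    inter = inter_w * inter_h
    union = box_a[2] * box_a[3] + box_b[2] * box_b[3] - inter
    return inter / union

def nwd(box_a, box_b, c=12.8):
    """Normalized Wasserstein distance between Gaussian-modeled boxes.

    Each box is treated as a Gaussian with mean (cx, cy) and standard
    deviations (w/2, h/2); `c` is a dataset-dependent constant (assumed here).
    """
    w2_squared = ((box_a[0] - box_b[0]) ** 2 + (box_a[1] - box_b[1]) ** 2
                  + ((box_a[2] - box_b[2]) / 2) ** 2
                  + ((box_a[3] - box_b[3]) / 2) ** 2)
    return math.exp(-math.sqrt(w2_squared) / c)

# The same 3-pixel horizontal shift applied to a tiny and a large box.
small_pair = ((10, 10, 6, 6), (13, 10, 6, 6))
large_pair = ((100, 100, 60, 60), (103, 100, 60, 60))
small_iou, large_iou = iou(*small_pair), iou(*large_pair)
small_nwd, large_nwd = nwd(*small_pair), nwd(*large_pair)
```

Under these assumed values, the 3-pixel shift drops the IoU of the 6 × 6 box to about one third while the 60 × 60 box stays above 0.9, whereas the Wasserstein-based score is identical for both pairs because it depends only on the center and size differences.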

In terms of noise resistance in complex backgrounds, UAV imagery often includes urban scenes, vegetation cover, or uneven illumination, leading to significant background and occlusion interference. Attention mechanisms are widely regarded as effective means to enhance feature discriminability. FFCA-YOLO [24] utilizes channel attention to amplify salient feature responses while suppressing invalid background channels. However, traditional global attention often relies on statistical information, making it difficult to suppress high-frequency background noise while preserving the structural details of small targets. In comparison, RTD-Net [41] and RT-DETR [42] incorporate Transformer architectures, utilizing self-attention mechanisms to model long-range dependencies and effectively distinguish targets from complex backgrounds. However, their quadratic complexity causes high latency, restricting their use in real-time tasks. Furthermore, recent advances emphasize the necessity of modeling global contexts in complex remote sensing scenes; for instance, Sun et al. [43,44] leveraged consistency reasoning and dual-stream relationship learning to concurrently capture spatial dependencies and mitigate detection ambiguities.

Regarding real-time edge deployment, with the proliferation of edge computing in UAV payloads, balancing inference latency and detection accuracy on resource-constrained devices has become a critical hurdle. Model lightweighting techniques such as pruning, quantization, and knowledge distillation have been extensively explored. For instance, Drone-YOLO [36] maintains high detection accuracy under real-time conditions through a lightweight “sandwich” fusion mechanism, while LUD-YOLO [45] significantly reduces parameters via channel pruning. Nevertheless, a trade-off between accuracy and efficiency persists in practical applications: excessive parameter compression often sacrifices the feature extraction capability for weak and small targets, increasing the miss rate; conversely, high-precision models typically incur massive Floating Point Operations (FLOPs), making it difficult to meet the frame-rate (FPS) requirements of real-time video stream processing within limited power budgets.

In summary, while existing methods have progressed in their respective dimensions, constructing a lightweight model that simultaneously possesses small target perception and noise resistance remains a key scientific problem to be solved in UAV remote sensing. Moreover, the proposed FLD-Net must specifically account for the additional challenges posed by dynamic water surface backgrounds.

3. UAV-Flow Dataset

As elucidated in Section 2.1, existing benchmarks for floating litter detection universally suffer from domain discrepancies, insufficient sample sizes, and scenario homogeneity relative to UAV remote sensing imagery. To bridge this data gap and provide robust training support for detection models, this study constructs UAV-Flow, the first multi-scenario benchmark dataset tailored for the UAV perspective.

3.1. Dataset Construction

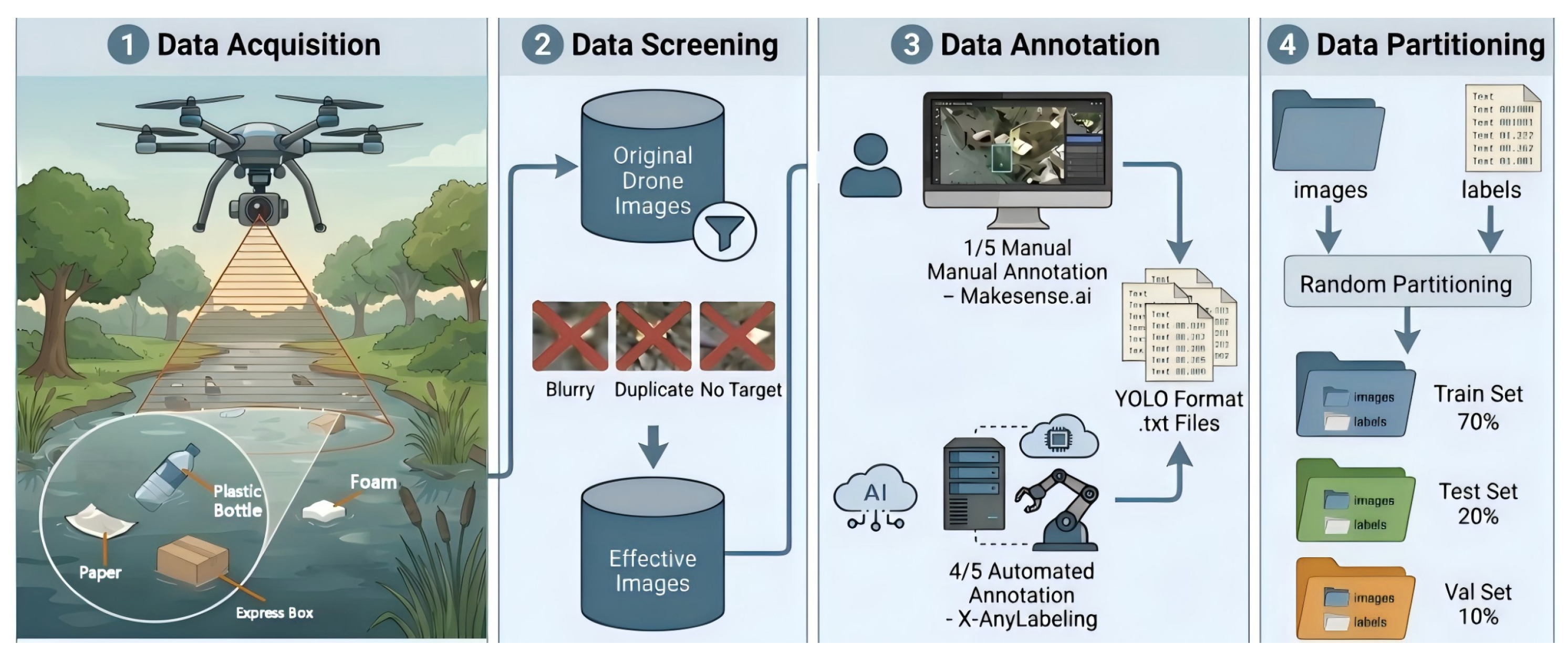

To guarantee data diversity and fidelity, the dataset construction workflow, as delineated in Figure 2, comprises four distinct phases: data acquisition, screening, annotation, and partitioning.

Data acquisition was conducted using the DJI M350 RTK platform. This platform was selected for its centimeter-level positioning accuracy and high stability against wind, ensuring imaging quality under complex meteorological conditions. To build a robust sample library, the acquisition strategy emphasized spatiotemporal heterogeneity. Three typical aquatic environments were selected: urban river channels, natural lakes, and nearshore mudflats. These cover a spectrum of hydrological conditions, from static to dynamic flow and from clear to turbid water. A multi-variable flight strategy was employed, encompassing varying altitudes (15–100 m), multiple viewing angles, and diverse time slots. This approach effectively captured the visual feature variations of floating debris under different illumination intensities, shadow occlusions, and water surface reflection conditions. Over 5000 raw images were acquired. Following rigorous manual screening, images exhibiting motion blur, severe overexposure, or background-only scenes were discarded, resulting in a core dataset of 4593 high-resolution valid images.

To address the sparse distribution and high annotation cost of aquatic debris, we adopted a semi-supervised annotation strategy that balances efficiency and precision. First, approximately 20% of typical samples were manually annotated via the Makesense.ai [46] platform to establish a high-quality seed dataset. Subsequently, an initial detection model was trained on this seed set to generate pseudo-labels for the remaining 80% of the data using the X-AnyLabeling tool [47]. Human experts then verified and fine-tuned the automatically generated labels, focusing on correcting missed minute targets and refining bounding box deviations. Finally, all annotations were standardized to the YOLO format and randomly partitioned into training, validation, and testing sets in a 7:2:1 ratio to ensure unbiased evaluation.
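The random 7:2:1 partition can be sketched as follows. This is a minimal illustration rather than the authors' script: the function name `split_dataset`, the fixed seed, and the rounding rule (truncate the training and validation counts, assign the remainder to testing) are assumptions.

```python
import random

def split_dataset(image_ids, ratios=(0.7, 0.2, 0.1), seed=0):
    """Randomly partition image IDs into train/val/test subsets."""
    ids = list(image_ids)
    random.Random(seed).shuffle(ids)  # seeded so the split is reproducible
    n_train = int(len(ids) * ratios[0])
    n_val = int(len(ids) * ratios[1])
    return {
        "train": ids[:n_train],
        "val": ids[n_train:n_train + n_val],
        "test": ids[n_train + n_val:],  # remainder goes to the test set
    }

# 4593 is the number of valid images reported for the dataset.
splits = split_dataset(range(4593))
sizes = {name: len(items) for name, items in splits.items()}
```

Under this particular rounding rule, 4593 images yield 3215 training, 918 validation, and 460 testing images; the actual subset sizes of the released dataset may differ.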

3.2. Statistical Analysis and Characteristics

The dataset contains 20,618 annotated instances covering five common categories of anthropogenic waste in natural waters: plastic bottles, plastic, express cartons, foam, and pieces of paper. The class distribution is relatively balanced. Specifically, plastic bottles—the most representative surface pollutant—account for approximately 30% of instances, effectively mitigating model bias caused by long-tail distributions.

To quantify the scale characteristics of floating litter in UAV imagery, this study strictly adheres to the definition standards of the MS COCO benchmark [14]. Targets are classified into three scale levels based on the pixel area $A$ of the bounding box: small objects ($A < 32^2$ pixels), medium objects ($32^2 < A < 96^2$ pixels), and large objects ($A > 96^2$ pixels). Statistical analysis of UAV-Flow based on these criteria (Figure 3) reveals significant small-scale characteristics. Small objects constitute 78.9% of all annotated instances. Notably, the proportion of small objects for plastic bottles, pieces of paper, and express cartons exceeds 80%. This scale distribution authentically replicates the visual challenges encountered during high-altitude UAV patrols, where fine-grained feature information is prone to loss during downsampling.
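The scale binning can be sketched for YOLO-format labels, whose box width and height are normalized by the image size. This is a minimal sketch: the function names, the 4000 × 3000 image resolution, and the treatment of boxes lying exactly on a threshold are assumptions made for illustration.

```python
def yolo_box_area_px(w_norm, h_norm, img_w, img_h):
    """Convert a normalized YOLO box size to a pixel area."""
    return (w_norm * img_w) * (h_norm * img_h)

def coco_scale(area_px):
    """Bin a bounding-box pixel area into the COCO small/medium/large levels."""
    if area_px < 32 ** 2:
        return "small"
    if area_px <= 96 ** 2:  # boundary handling is an assumption
        return "medium"
    return "large"

# Hypothetical label: a box covering 0.6% x 0.8% of a 4000 x 3000 image,
# i.e. roughly 24 x 24 pixels.
area = yolo_box_area_px(0.006, 0.008, img_w=4000, img_h=3000)
level = coco_scale(area)
```

Counting `coco_scale` results per class over all labels would give a per-category scale distribution of the kind summarized in Figure 3.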

To validate the cross-domain generalization capability of the model, UAV-Flow maintains high diversity in scenario composition, comprising urban rivers (46%), natural lakes (37%), and nearshore mudflats (17%). This multi-environment coverage ensures the inclusion of diverse interference modes, ranging from static zones with strong specular reflections to dynamic zones with complex background clutter. As presented in Table 1, compared to the single-perspective limitations of Floater and FMPD, or the small sample sizes of River Floating Debris and HAIDA, UAV-Flow achieves an order-of-magnitude improvement in image quantity, small object proportion, and environmental heterogeneity. In summary, UAV-Flow provides a moderately scaled, high-quality, and challenging multi-scenario benchmark for surface floating litter detection, offering solid data support for subsequent algorithmic research and model generalization evaluation.

4. Methodology

To address the challenges of high small-target density, non-rigid geometric deformations, and water surface background noise interference inherent in UAV-based floating litter detection, this article proposes FLD-Net, a lightweight real-time detection framework optimized for edge deployment. To satisfy the stringent requirements for low power consumption and real-time inference on UAV platforms, we select YOLOv11 as the baseline model due to its superior balance between speed and accuracy.

As illustrated in Figure 4, we introduce three enhancement modules into the backbone, neck, and pre-detection stages of the YOLOv11 architecture: the Deformable Feature Extraction Module (DFEM), the Dynamic Cross-Scale Fusion Network (DCFN), and the Dual-Domain Anti-Noise Attention (DANA). These modules form a continuous enhancement chain from low-level feature acquisition to high-level semantic decision-making, preserving deformed small-target information, fusing cross-scale features efficiently, and suppressing background noise.

4.1. Deformable Feature Extraction Module

In UAV remote sensing imagery, floating litter often exhibits highly irregular, non-rigid geometric deformations due to hydrodynamic impact and partial submersion. Standard CNNs rely on fixed geometric grids for feature sampling. When processing floating objects with stochastic morphological variations, this rigid sampling mechanism tends to place sampling points on background regions rather than on the target body, resulting in spatial mismatch during feature extraction and loss of semantic information [48].

To surmount the physical limitations of standard convolution in geometric modeling, Dai et al. [49] first proposed Deformable Convolutional Networks (DCNs), which introduce a set of learnable 2D offsets to the standard sampling grid, endowing the convolution kernel with the ability to adaptively adjust the receptive field shape. Subsequently, Zhu et al. [50] introduced a modulation mechanism in DCNv2, enhancing the model’s ability to suppress irrelevant backgrounds by adding weight masks. Recent studies have demonstrated the advantages of DCN in visual tasks involving complex geometric transformations. Xiao et al. [51] utilized multi-scale deformable convolution alignment to resolve spatial misalignment caused by dynamic motion in satellite video, confirming the robustness of DCN in feature alignment for dynamically drifting targets. Similarly, Liu et al. [52] showed that DCN can adaptively fit the geometric boundaries of complex topological structures such as slender and curved shapes, overcoming the limitations of traditional convolution in modeling irregular targets.

Inspired by these studies and given the non-rigid characteristics of floating debris, this research posits that introducing deformable convolution is critical to resolving feature spatial mismatch and semantic loss. To this end, we designed the DFEM, intended to replace traditional convolution units in the deep layers of the backbone network, enabling the network to actively deform to fit the target’s true geometric contour. DFEM adopts a hierarchical nested architecture as shown in Figure 5. This module follows the topological design principles of Cross Stage Partial (CSP), balancing model expressiveness and inference cost through gradient flow splitting and feature recombination.

As shown in Figure 5a, the input feature flow of DFEM is first divided into a backbone branch and a residual branch via a Split operation. The backbone branch enters the core feature extraction component, designed as a C3_DCN unit depending on network depth. C3_DCN retains the efficiency of the YOLO series’ C3 module but introduces a deformation mechanism within its stacked bottleneck layers. The residual branch connects directly to the end via a $1 \times 1$ convolution. The two branches are finally merged via a Concat operation and fused by a terminal convolution layer. This design not only ensures lossless gradient flow during backpropagation but also reduces GPU memory consumption after introducing complex operators.

The core innovation of DFEM lies in the micro-level Bottleneck_DCN unit, as shown in Figure 5b. This unit reconstructs the standard residual block by replacing the standard convolution with deformable convolution. Let the input feature map be $X$ and the sampling grid of standard convolution be $R$. In Bottleneck_DCN, for each position $p_0$ on the output feature map $Y$, the sampling process is reconstructed as:

$$Y(p_0) = \sum_{p_n \in R} w(p_n) \cdot X(p_0 + p_n + \Delta p_n) \cdot \Delta m_n$$

where $p_n$ denotes the fixed offset within the regular sampling grid $R$, and $w(p_n)$ represents the kernel weight corresponding to the sampling position. The equation introduces two key variables, the learnable offset $\Delta p_n$ and the modulation scalar $\Delta m_n$, which are dynamically predicted by the network from the input feature $X$. Breaking the constraints of a regular grid, the network predicts a 2D offset vector for each sampling point, shifting the actual sampling position to $p_0 + p_n + \Delta p_n$. This allows the convolution kernel to deform, enabling its receptive field to adaptively stretch, rotate, or bend to fit the actual geometric contour of the floating litter. $\Delta m_n$ is a scalar activated by Sigmoid, serving as the weight for each sampling point. If a sampling point $p$ falls into an irrelevant background region even after offsetting, the network can suppress its contribution by setting $\Delta m_n \to 0$. Since the new sampling position $p = p_0 + p_n + \Delta p_n$ generally has non-integer coordinates, the feature value $X(p)$ at this sub-pixel location is computed via bilinear interpolation, ensuring gradient differentiability during backpropagation.

Through this design, Bottleneck_DCN can dynamically adjust the geometric shape of the receptive field according to the actual morphology of the floating litter, breaking the physical constraints of the rigid grid in standard convolution. Ultimately, via CSP structural feature aggregation, DFEM achieves high-fidelity feature extraction for non-rigid targets in deep networks, significantly ameliorating the issue of missed detections caused by geometric mismatch.
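The modulated deformable sampling at a single output position can be sketched in plain Python. This is an illustrative sketch, not the Bottleneck_DCN implementation: the function names are hypothetical, the kernel is assumed to be 3 × 3 on a single-channel map, and the offsets and modulation scalars are passed in directly rather than predicted by a learned layer.

```python
import math

def bilinear(feat, y, x):
    """Bilinearly sample a 2D list at fractional (y, x); zero outside the map."""
    h, w = len(feat), len(feat[0])
    y0, x0 = math.floor(y), math.floor(x)
    value = 0.0
    for yy in (y0, y0 + 1):
        for xx in (x0, x0 + 1):
            if 0 <= yy < h and 0 <= xx < w:
                weight = (1 - abs(y - yy)) * (1 - abs(x - xx))
                value += weight * feat[yy][xx]
    return value

def modulated_deform_sample(feat, p0, weights, offsets, masks):
    """Weighted sum over a 3x3 grid whose points are shifted and re-weighted.

    weights: 9 kernel weights; offsets: 9 (dy, dx) learned shifts;
    masks: 9 modulation scalars in [0, 1] (after Sigmoid in a real network).
    """
    grid = [(dy, dx) for dy in (-1, 0, 1) for dx in (-1, 0, 1)]
    out = 0.0
    for (dy, dx), w, (oy, ox), m in zip(grid, weights, offsets, masks):
        sample = bilinear(feat, p0[0] + dy + oy, p0[1] + dx + ox)
        out += w * sample * m
    return out

# Toy 5x5 feature map with a linear ramp, averaged by a uniform kernel.
feat = [[float(5 * i + j) for j in range(5)] for i in range(5)]
kernel = [1 / 9] * 9
rigid = modulated_deform_sample(feat, (2, 2), kernel, [(0.0, 0.0)] * 9, [1.0] * 9)
shifted = modulated_deform_sample(feat, (2, 2), kernel, [(0.5, 0.5)] * 9, [1.0] * 9)
```

With zero offsets and unit modulation the function reduces to an ordinary 3 × 3 convolution at that position; setting a modulation scalar to zero removes the corresponding sampling point from the sum.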

4.2. Dynamic Cross-Scale Fusion Network

In UAV aquatic remote sensing monitoring, floating litter exhibits cross-scale distribution characteristics due to variations in flight altitude, with a predominance of small targets. In the UAV-Flow dataset, small objects account for as much as 78.9% of instances. Feature Pyramid Networks (FPNs) are the standard paradigm for solving such multi-scale problems [53]. However, applying generic FPN architectures directly to water surface environments faces two core bottlenecks. First, while shallow feature maps retain high-resolution spatial details, they also contain substantial high-frequency background noise composed of water ripples and glints; excessive fusion of shallow features often degrades the Signal-to-Noise Ratio (SNR) of the feature maps. Second, traditional bilinear interpolation is content-agnostic, calculating sampling points based solely on spatial distance [54]. When processing floating objects with weak textures or blurred edges, this interpolation strategy is prone to sub-pixel level feature aliasing, leading to the loss of edge information for minute targets during fusion. Consequently, this paper proposes DCFN, which reconstructs the cross-scale feature fusion path through the synergistic design of asymmetric topology pruning and content-aware upsampling.

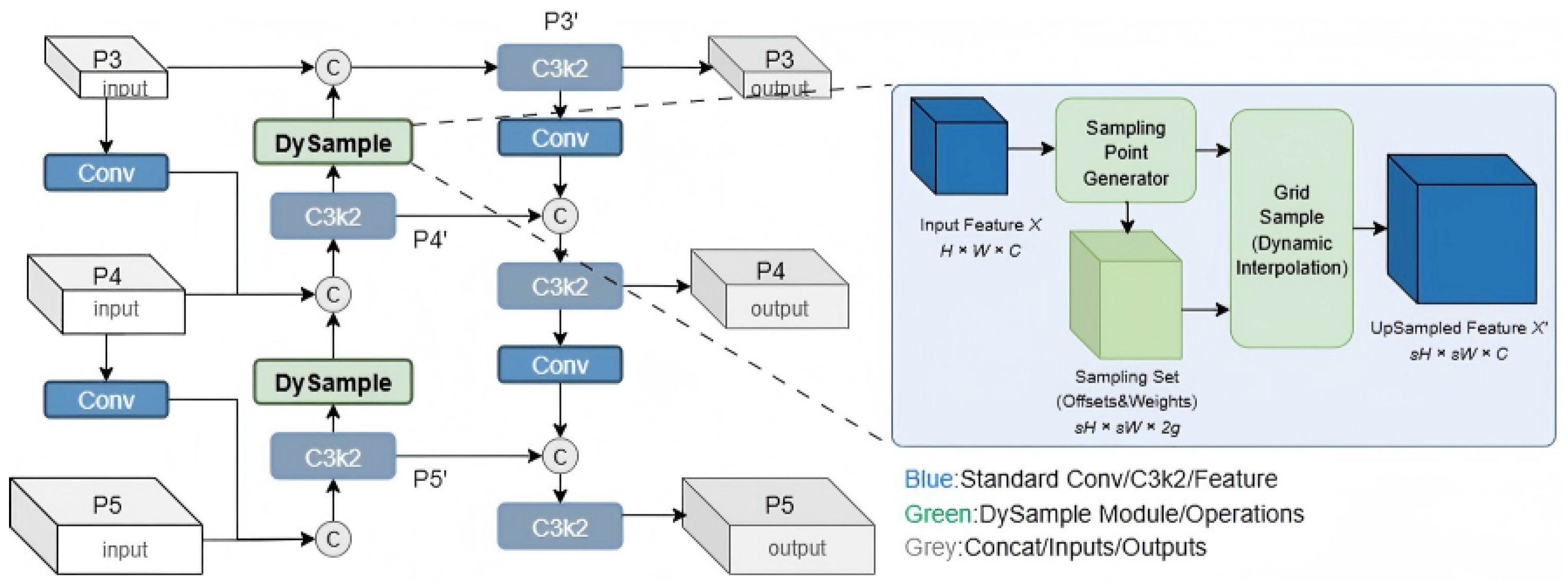

To achieve efficient feature aggregation under limited edge computing budgets, DCFN utilizes the P3, P4, and P5 feature layers of the backbone network as inputs to construct a lightweight asymmetric multi-path fusion structure, as shown in Figure 6. Drawing on the efficient design concepts of GFPN [55] and DAMO-YOLO [56], this structure strategically prunes redundant shallow fusion paths via an asymmetric topology and strengthens the top-down flow of deep information. Using DySample in the top-down fusion path helps prevent semantic information from decaying during transmission. The extra shallow layer (P2) is excluded from fusion because, in aquatic aerial imagery, shallow features retain high-resolution spatial information but are also replete with high-frequency background noise. Excessive shallow injection would introduce significant non-target interference into deep semantics, causing minute targets to be submerged. This design not only adheres to SNR optimization principles in signal processing but also reduces the computational load (FLOPs), as complex operations on high-resolution shallow feature maps consume substantial computing power. Thus, DCFN theoretically achieves a dual optimization of accuracy and efficiency, making it particularly suitable for the resource constraints of edge devices.
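The computational argument for excluding P2 can be made concrete with a back-of-the-envelope count. The sketch below assumes the usual YOLO convention in which P2 to P5 have strides 4, 8, 16, and 32, a hypothetical 640 × 640 input, and, purely for comparison, the same channel width at every level (real networks typically use fewer channels at shallower levels).

```python
def conv_macs(height, width, c_in, c_out, kernel=3):
    """Multiply-accumulate count of one kernel x kernel convolution layer."""
    return height * width * c_in * c_out * kernel * kernel

input_size = 640   # hypothetical input resolution
channels = 64      # hypothetical, identical at every level for comparison
strides = {"P2": 4, "P3": 8, "P4": 16, "P5": 32}

macs = {
    level: conv_macs(input_size // s, input_size // s, channels, channels)
    for level, s in strides.items()
}
p2_over_p3 = macs["P2"] / macs["P3"]
```

Because the cost scales with the number of spatial positions, the same convolution applied at P2 costs four times as much as at P3 under these assumptions, which is why fusion operations on the shallowest level dominate the budget.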

To address the semantic ambiguity of small targets in cross-scale fusion, DCFN introduces the DySample operator to replace standard interpolation. DySample is a lightweight dynamic upsampler based on a point-sampling perspective. According to Wang et al. [57] and Liu et al. [54], standard interpolation kernels possess spatial invariance, using the same weight kernel for the entire image. However, for small targets with minimal pixel occupancy, local texture changes are drastic and sparse. Research [54] indicates that adopting a content-aware reorganization strategy, in which upsampling kernels are generated dynamically from the semantic content of feature maps, is key to recovering minute target details and reducing feature aliasing. Compared to kernel prediction-based methods [57], DySample is theoretically more aligned with the nature of geometric transformation and possesses lower computational complexity.

The core idea of DySample is that upsampling should not be a fixed-grid interpolation but a resampling process dynamically generated from feature content. Let the input feature map be $X$ and the target upsampling factor be $s$, yielding the output feature map $Y$. As shown on the right side of Figure 6, DySample is divided into two stages: sampling set generation and feature resampling. The network first uses a lightweight generator to predict a dense offset map based on the local semantic content of the input feature $X$. The final sampling grid $S$ is defined as the superposition of the original grid $G$ and the offset $O$:

$$S = G + O$$

where $G$ represents the projection coordinates of the target feature map pixels on the original low-resolution map, and $O$ is the adaptive offset learned by the network. Each pixel value on the output feature map $Y$ is obtained by sampling at position $S$ in the input map $X$:

$$Y = \mathrm{GridSample}(X, S)$$

Similarly, the sampling operation here is implemented via bilinear interpolation to ensure differentiability. By learning offsets, DySample attempts to recover lost spatial information, pulling sampling points back to the semantic center or edges of the target. For floating debris, this implies that even if the target is very blurry in high-level features, DySample can focus the upsampling on potential target areas by referencing surrounding contextual information, thereby reconstructing sharp feature boundaries. This mechanism theoretically guarantees semantic alignment during feature fusion, enabling deep semantics to be precisely injected into the texture of small targets in shallow layers.
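The two stages, grid superposition and resampling, can be sketched in plain Python for a single-channel map. This is a simplified illustration, not the DySample operator itself: the offset generator is omitted, so the offsets are supplied as a hypothetical input in units of input pixels, and out-of-range positions are clamped to the border.

```python
import math

def bilinear_clamped(feat, y, x):
    """Bilinear sampling of a 2D list with coordinates clamped to the border."""
    h, w = len(feat), len(feat[0])
    y = min(max(y, 0.0), h - 1.0)
    x = min(max(x, 0.0), w - 1.0)
    y0, x0 = int(math.floor(y)), int(math.floor(x))
    y1, x1 = min(y0 + 1, h - 1), min(x0 + 1, w - 1)
    wy, wx = y - y0, x - x0
    top = (1 - wx) * feat[y0][x0] + wx * feat[y0][x1]
    bottom = (1 - wx) * feat[y1][x0] + wx * feat[y1][x1]
    return (1 - wy) * top + wy * bottom

def dynamic_upsample(feat, offsets, scale=2):
    """Upsample by sampling the input at base grid positions plus offsets."""
    h, w = len(feat), len(feat[0])
    out = []
    for i in range(h * scale):
        row = []
        for j in range(w * scale):
            # Base grid: projection of the output pixel centre onto the input.
            gy = (i + 0.5) / scale - 0.5
            gx = (j + 0.5) / scale - 0.5
            oy, ox = offsets[i][j]  # content-dependent shift (given here)
            row.append(bilinear_clamped(feat, gy + oy, gx + ox))
        out.append(row)
    return out

feat = [[0.0, 1.0], [2.0, 3.0]]
zero_offsets = [[(0.0, 0.0)] * 4 for _ in range(4)]
upsampled = dynamic_upsample(feat, zero_offsets, scale=2)
```

With all offsets set to zero the sketch reduces to ordinary bilinear upsampling; non-zero offsets move individual sampling points, which is the degree of freedom a learned generator would exploit.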

4.3. Dual-Domain Anti-Noise Attention

Although DFEM and DCFN effectively improve the geometric representation and cross-scale semantic alignment of non-rigid targets, significant high-frequency structural noise specific to aquatic environments remains in the deep feature space before entering the detection head. For instance, specular reflections under strong sunlight and bright edges of dynamic ripples often exhibit feature responses highly similar to minute plastic debris. Traditional global attention mechanisms (e.g., SE [58], CBAM [59]) typically recalibrate channel or spatial weights based on statistical information like global average pooling. However, in aquatic scenarios, such blind enhancement strategies face severe inductive bias risks; they tend to enhance all high-response regions, potentially erroneously amplifying bright reflection noise and leading to a surge in false positives.

To this end, we propose the DANA module, as shown in Figure 7. DANA is not merely a feature enhancement module but a discriminative decoupling mechanism. By establishing an interaction constraint mechanism between spatial and channel domains, it utilizes the spatial structural priors of the target to guide the screening of channel semantics, thereby precisely stripping the semantic signal of real floating debris from background clutter.

To precisely localize target boundaries while suppressing water ripple noise, DANA designs a Spatial Orthogonal Context Branch. Unlike standard spatial attention, which incurs a high computational burden from large-kernel convolutions, this branch adopts the philosophy of Coordinate Attention (CA) [60], performing 1D feature aggregation along the horizontal (X) and vertical (Y) directions, respectively. For input feature $F \in \mathbb{R}^{C \times H \times W}$, two direction-aware feature vectors are generated:

$$z_c^h(h) = \frac{1}{W} \sum_{i=1}^{W} F_c(h, i), \qquad z_c^w(w) = \frac{1}{H} \sum_{j=1}^{H} F_c(j, w)$$

where superscripts h and w represent the vertical and horizontal spatial dimensions. Accordingly, $z^h$ and $z^w$ are formulated to capture long-range dependencies of the feature map F along the vertical and horizontal directions. This operation effectively compresses the 2D spatial structure into two 1D feature descriptors. Subsequently, these two are concatenated, transformed via convolution, and passed through nonlinear activation to generate the intermediate feature f:

$$f = \delta\left(\mathrm{Conv}\left([z^h, z^w]\right)\right)$$

where $[\cdot, \cdot]$ denotes concatenation and $\delta$ is the nonlinear activation. Then, f is split and transformed into two spatial attention maps $A^h$ and $A^w$. The final spatial attention map $A_s$ is the broadcast product of the two:

$$A_s = A^h \otimes A^w$$

where ⊗ denotes element-wise broadcast multiplication. This interactive design enables spatial attention to simultaneously consider contextual information in both horizontal and vertical directions, thereby more precisely locating the non-rigid boundaries of floating litter.
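The directional pooling and broadcast product described above can be sketched in a few lines of plain Python. This is a simplified illustration, not the DANA implementation: the concatenation and shared convolution are omitted, and the `gain` and `bias` parameters are hypothetical stand-ins for the learned transforms.

```python
import math

def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))

def directional_pool(fmap):
    """Compress a 2D map into two 1D descriptors: row means and column means."""
    h, w = len(fmap), len(fmap[0])
    row_means = [sum(row) / w for row in fmap]
    col_means = [sum(fmap[i][j] for i in range(h)) / h for j in range(w)]
    return row_means, col_means

def spatial_attention(fmap, gain=4.0, bias=-2.0):
    """Build a 2D attention map as the broadcast product of two 1D gates.

    gain and bias are hypothetical; a trained model would learn this mapping.
    """
    row_means, col_means = directional_pool(fmap)
    gate_h = [sigmoid(gain * v + bias) for v in row_means]   # one gate per row
    gate_w = [sigmoid(gain * v + bias) for v in col_means]   # one gate per column
    # Entry (i, j) is high only if both its row and its column are active.
    return [[gh * gw for gw in gate_w] for gh in gate_h]

# A single bright response in the centre of an otherwise empty map.
fmap = [[0.0, 0.0, 0.0],
        [0.0, 1.0, 0.0],
        [0.0, 0.0, 0.0]]
attention = spatial_attention(fmap)
```

Because each entry is a product of a row gate and a column gate, the map costs only H + W gate evaluations instead of H × W, which is the efficiency argument made for avoiding large-kernel convolutions.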

To avoid the erroneous enhancement of background noise by traditional SE modules, DANA introduces the Spatial-Channel Interaction Strategy. Modulating channel descriptors using intermediate features generated by the spatial branch is the key step for noise resistance. Traditional channel descriptors are computed as $g_c = \frac{1}{HW} \sum_{i=1}^{H} \sum_{j=1}^{W} F_c(i, j)$. In contrast, DANA computes a spatially weighted channel descriptor $\tilde{g}_c = \frac{1}{HW} \sum_{i=1}^{H} \sum_{j=1}^{W} A_s(i, j)\, F_c(i, j)$, where $A_s$ is the spatial attention map produced by the spatial branch. This ensures that if a channel responds spatially only in noise regions, its corresponding spatial weight will be suppressed, thereby lowering the overall weight of that channel via the interaction mechanism. After Sigmoid activation, the final channel attention vector $A_c$ is generated. The Sigmoid function maps weights to the interval $(0, 1)$ rather than binarized $\{0, 1\}$. This means that even faint target signals are merely re-weighted rather than hard-truncated. This soft attention mechanism ensures that gradients can still flow to minute targets during backpropagation, allowing the network to progressively correct its focus on small targets during training. The final feature reconstruction Y is the result of dual weighting:

$$Y = F \otimes A_s \otimes A_c$$

Through this spatial-channel interaction mechanism, DANA achieves discriminative decoupling of target semantics and noise structures. Spatial attention is responsible for suppressing the spatial locations of noise, while channel attention suppresses the semantic channels where noise resides. A high response is obtained only through the dual validation of $A_s$ and $A_c$, effectively avoiding the over-suppression problem caused by judgment errors in a single dimension. After DANA processing, water surface reflections and ripple interference in the feature maps are significantly suppressed, while the feature responses of minute floating debris are focused and enhanced. This provides a high-SNR decision basis for the subsequent detection head, significantly reducing the false detection rate under complex lighting conditions.
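A toy example may help show why a spatially weighted descriptor separates a debris channel from a glint channel when plain global average pooling cannot. The sketch below is illustrative only: the 2 × 2 maps, the attention values, and the scale and shift inside the sigmoid are all made-up assumptions standing in for learned quantities.

```python
import math

def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))

def plain_descriptor(channel):
    """Global average pooling: every pixel counts equally."""
    h, w = len(channel), len(channel[0])
    return sum(sum(row) for row in channel) / (h * w)

def weighted_descriptor(channel, spatial):
    """Average of the channel after per-pixel weighting by the spatial map."""
    h, w = len(channel), len(channel[0])
    total = sum(channel[i][j] * spatial[i][j] for i in range(h) for j in range(w))
    return total / (h * w)

def dual_weight(features, spatial, channel_gates):
    """Y[c][i][j] = F[c][i][j] * spatial[i][j] * channel_gates[c]."""
    return [[[value * spatial[i][j] * gate for j, value in enumerate(row)]
             for i, row in enumerate(channel)]
            for channel, gate in zip(features, channel_gates)]

# Hypothetical spatial attention: high at the debris pixel, low elsewhere.
spatial = [[0.9, 0.1], [0.1, 0.1]]
debris = [[1.0, 0.0], [0.0, 0.0]]   # channel firing on the target
glint = [[0.0, 0.0], [0.0, 1.0]]    # channel firing on a reflection
features = [debris, glint]

plain = [plain_descriptor(c) for c in features]             # cannot tell them apart
weighted = [weighted_descriptor(c, spatial) for c in features]
# Hypothetical scale and shift in place of learned layers before the sigmoid.
gates = [sigmoid(20.0 * d - 2.0) for d in weighted]
output = dual_weight(features, spatial, gates)
```

Both channels have the same plain average, so an SE-style gate would treat them identically. The weighted descriptor is larger for the debris channel, and after dual weighting the glint response is attenuated but remains non-zero, which mirrors the soft re-weighting argument above.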

5. Experiments and Discussion

5.1. Experimental Setup

All model training and validation processes in this study were conducted on a single computational node equipped with an NVIDIA GeForce RTX 4060 Ti GPU (16 GB VRAM). The software environment was established on the PyTorch 2.0.0 deep learning framework, accelerated by CUDA 11.8, using Python 3.10.18. Input images were uniformly resized to a resolution of pixels. A Stochastic Gradient Descent (SGD) optimizer was employed with an initial learning rate of , a momentum factor of 0.937, and a weight decay coefficient of . The batch size was set to 16, and the training process spanned 200 epochs.

To comprehensively evaluate model performance, Precision (P), Recall (R), and Mean Average Precision (mAP) were adopted as core metrics, defined mathematically as follows:

$$P = \frac{TP}{TP + FP}, \qquad R = \frac{TP}{TP + FN}$$

$$AP = \int_{0}^{1} P(R)\, dR, \qquad mAP = \frac{1}{N} \sum_{i=1}^{N} AP_i$$

where $TP$, $FP$, and $FN$ denote the number of True Positives, False Positives, and False Negatives, respectively. $AP$ represents the average precision for a specific class, $N$ is the number of classes, and mAP50 denotes the mean average precision at an Intersection over Union (IoU) threshold of 0.5, reflecting the model’s detection capability. Additionally, Parameters (Params), Floating Point Operations (FLOPs), and Frames Per Second (FPS) were introduced to assess the degree of lightweight design and real-time inference capability.
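For readers who want to reproduce these metrics, the following pure-Python sketch computes IoU, Precision, Recall, and a per-class AP from ranked detections. It is an assumption-laden illustration: the all-point interpolation used for AP is one common convention and may differ from the evaluation toolkit used in this study, and the detection list is made up.

```python
def iou(a, b):
    """IoU of two boxes given as (x1, y1, x2, y2)."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0

def precision_recall(tp, fp, fn):
    """P = TP / (TP + FP), R = TP / (TP + FN)."""
    p = tp / (tp + fp) if tp + fp else 0.0
    r = tp / (tp + fn) if tp + fn else 0.0
    return p, r

def average_precision(scored_hits, num_gt):
    """Area under the precision-recall curve for one class.

    scored_hits: list of (confidence, is_true_positive), where a detection
    counts as a true positive if it matches a ground truth at IoU >= 0.5.
    """
    ranked = sorted(scored_hits, key=lambda s: s[0], reverse=True)
    tp = fp = 0
    recalls, precisions = [], []
    for _, hit in ranked:
        if hit:
            tp += 1
        else:
            fp += 1
        recalls.append(tp / num_gt)
        precisions.append(tp / (tp + fp))
    # Precision envelope: make the curve non-increasing in recall.
    for k in range(len(precisions) - 2, -1, -1):
        precisions[k] = max(precisions[k], precisions[k + 1])
    ap, prev_recall = 0.0, 0.0
    for r, p in zip(recalls, precisions):
        ap += (r - prev_recall) * p
        prev_recall = r
    return ap

# Made-up detections for one class with two ground-truth objects.
hits = [(0.9, True), (0.8, False), (0.7, True)]
ap = average_precision(hits, num_gt=2)
p, r = precision_recall(tp=8, fp=2, fn=4)
overlap = iou((0, 0, 2, 2), (1, 1, 3, 3))
```

Averaging `average_precision` over all classes at the 0.5 IoU threshold gives the mAP50 figure reported in the tables.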

5.2. Ablation Studies

To validate the scientific rigor of the FLD-Net architecture and the efficacy of its core modules, a two-stage ablation analysis was conducted on the UAV-Flow dataset. Prior to finalizing the architecture, granular comparative experiments were performed regarding the insertion position of DFEM and the topology/operator selection for DCFN.

Although DCN adapts to geometric deformations, it incurs computational overhead. To investigate its efficacy at different feature levels, DFEM was deployed at low levels, high levels, and all levels of the backbone. As illustrated in Table 2, applying the DFEM specifically to the deeper P4 and P5 layers yields superior performance compared to its application across all layers, achieving an mAP50 of 73.51%. This phenomenon can be attributed to the hierarchical nature of feature representation. While DFEM excels at modeling non-rigid geometric transformations of floating litter, applying it to shallow layers (P2, P3) may inadvertently amplify low-level background noise, such as surface ripples and glint, leading to unstable gradient flow. In contrast, the high-level semantic features in P4 and P5 provide a more robust global context, allowing DFEM to focus on the inherent structural deformations of targets while suppressing environmental interference. This selective integration ensures an optimal balance between feature adaptability and model parameters.

To verify the necessity of the DCFN design, we conducted a stepwise optimization starting from the standard PANet baseline. As presented in Table 3, directly introducing the GFPN structure increased the mAP50 to 73.58%; however, it resulted in a parameter surge to 13.72 M, violating the lightweight principle for UAV applications. By adopting an asymmetric pruning strategy (GFPN-Scale), the parameter count was significantly reduced to 9.66 M with only a marginal drop in accuracy. The critical breakthrough occurred with the integration of the DySample operator, which elevated the mAP50 to 77.69% with a negligible increase in parameters. This indicates that the performance leap of DCFN stems not from parameter stacking, but from DySample’s pixel-level reconstruction of minute target features, which effectively compensates for the information loss caused by topological pruning. Furthermore, by evaluating a DCFN variant that retains the P2 layer, we explicitly validated the necessity of its removal. Discarding the P2 layer not only reduces computational overhead but also effectively mitigates the interference introduced by shallow-level background noise.

Upon establishing the optimal internal structure, we used YOLOv11s as the baseline to evaluate the effect of the proposed modules on detection performance (Table 4). The baseline exhibits notable limitations in complex aquatic scenarios, where low Recall stems from severe missed detections (False Negatives, FNs) caused by minute target feature loss. Incorporating DFEM bolsters the network’s capacity to model geometric deformations, consequently elevating detection performance. DCFN effectively mitigates these FNs, increasing Recall to 75.04% and highlighting the critical role of dynamic cross-scale fusion in small object detection. Conversely, complex aquatic backgrounds induce a substantial number of false alarms (False Positives, FPs). The application of DANA effectively filters surface reflections and ripple noise via spatial-channel dual-domain filtering, achieving an mAP50 of 78.95%.

The experiments demonstrate significant complementarity among the modules. DFEM ensures precise geometric alignment of deep features within the backbone, while DCFN and DANA specifically address small target omissions in the neck and complex background noise prior to the detection head, respectively. Crucially, their concurrent utilization exhibits a pronounced synergistic effect, yielding improvements that far exceed their individual contributions. Compared to the baseline, the final FLD-Net achieves an 11.66% increase in Recall and an 8.51% improvement in mAP50. These results definitively validate that FLD-Net systematically resolves the core challenges of extreme scale variations and intense background interference in complex aquatic remote sensing.

5.3. Comparative Experiments

To verify the comprehensive performance of FLD-Net, we benchmarked it against mainstream general object detectors, incorporating ultra-lightweight variants (YOLOv5n [61], YOLOv8n [33]) for edge-deployment evaluation, alongside YOLO series [33,34,61] and RT-DETR [42]. We also compared UAV-optimized models (TPH-YOLOv5 [35], Drone-YOLO [36], UAV-DETR [62]) and the noise-resistant FFCA-YOLO [24]. To quantify the perceptual capability for small objects, the small-object average precision ($AP_S$) metric was introduced. All models were evaluated under identical configurations. As shown in Table 5, FLD-Net achieved the best overall performance with an mAP50 of 80.47%, a Recall of 77.84%, and an $AP_S$ of 35.6%. While ultra-lightweight models and the baseline YOLOv11s offer extremely high inference speeds, they are fundamentally limited by their feature extraction capabilities for small objects, struggling to surpass an $AP_S$ of 30%. In contrast, FLD-Net improved the baseline’s mAP50 by 8.51% and $AP_S$ by a substantial 6.7%, maintaining an exceptional efficiency ratio with only a 0.5 M increase in parameters. Although heavy architectures like RT-DETR and UAV-DETR achieved competitive precision ($AP_S$ of 35.0% and 34.9%, respectively) via global attention, their computational costs are prohibitive for real-time airborne applications. FLD-Net not only surpassed UAV-DETR in small object accuracy but did so with only 1/4 of the parameters and less than 1/6 of the computational load, achieving a real-time speed of 156.72 FPS. Furthermore, FLD-Net significantly outperformed UAV-specific models like Drone-YOLO, attributed to its explicit mechanisms for suppressing strong structural noise, which generic UAV models lack.

To evaluate generalization and explicitly assess the model’s cross-domain robustness for small objects, validation was conducted on two external datasets: HAIDA Trash Dataset (complex marine scenes) and FMPD Dataset (dynamic river scenes). As presented in Table 6, FLD-Net achieved the highest overall accuracy on HAIDA Trash with an mAP50 of 73.1%, significantly outperforming all comparison models. Furthermore, it demonstrated a clear advantage in small object perception, securing the highest small-object average precision ($AP_S$) of 17.8%. The model’s superiority is even more pronounced on the FMPD dataset, which is characterized by macroscopic debris and strong ripple interference. Notably, FLD-Net achieved an $AP_S$ of 41.8%, far exceeding the YOLOv11s baseline (12.7%) and RT-DETR (8.2%). In contrast to the drastic decay in recall and small-target precision exhibited by RT-DETR in this dynamic environment, FLD-Net maintained robust adaptability. This substantial leap in $AP_S$ is attributed to the DANA module’s effective suppression of ripple and glare noise, which prevents the weak features of minute floating debris from being submerged in highly dynamic aquatic backgrounds.

5.4. Visualization Analysis

To evaluate perceptual robustness in dynamic aquatic environments, Figure 8 qualitatively compares FLD-Net with mainstream detectors across five challenging scenarios. In the visualizations, red and yellow bounding boxes indicate FNs and FPs, respectively.

Baseline models exhibit significant perceptual deficiencies under complex interference. Specifically, under dynamic water ripples (a), low illumination (b), and turbid water (d), the YOLO series and RT-DETR suffer from severe missed detections of small targets due to inadequate weak feature extraction and high-frequency noise interference. In shoreline shadow occlusion (c), low background contrast causes target features to be submerged, leading to further missed detections. Critically, under strong glare reflections (e), baselines fail to distinguish light spots from debris, generating numerous false positives. In contrast, FLD-Net demonstrates superior environmental adaptability, significantly outperforming mainstream models by effectively suppressing false positives and enhancing recall rates.

To further validate the generalization capability of FLD-Net, Figure 9 illustrates the visualization results of FLD-Net versus mainstream detectors on the FMPD dataset, which is characterized by water flow fluctuations and high-density debris accumulation. The results indicate that the YOLO series models exhibit severe missed detections when confronting densely accumulated and minute floating fragments. This is primarily attributed to the loss of fine-grained features during the downsampling process, making it difficult to decouple minute targets from complex backgrounds. Conversely, although RT-DETR utilizes global attention to capture long-range dependencies, it demonstrates excessive sensitivity when processing dynamic ripple textures, resulting in substantial FPs by misidentifying water surface glints as floating litter. In comparison, FLD-Net exhibits exceptional adaptability on the FMPD dataset, effectively handling domain discrepancies across varying hydrological environments.

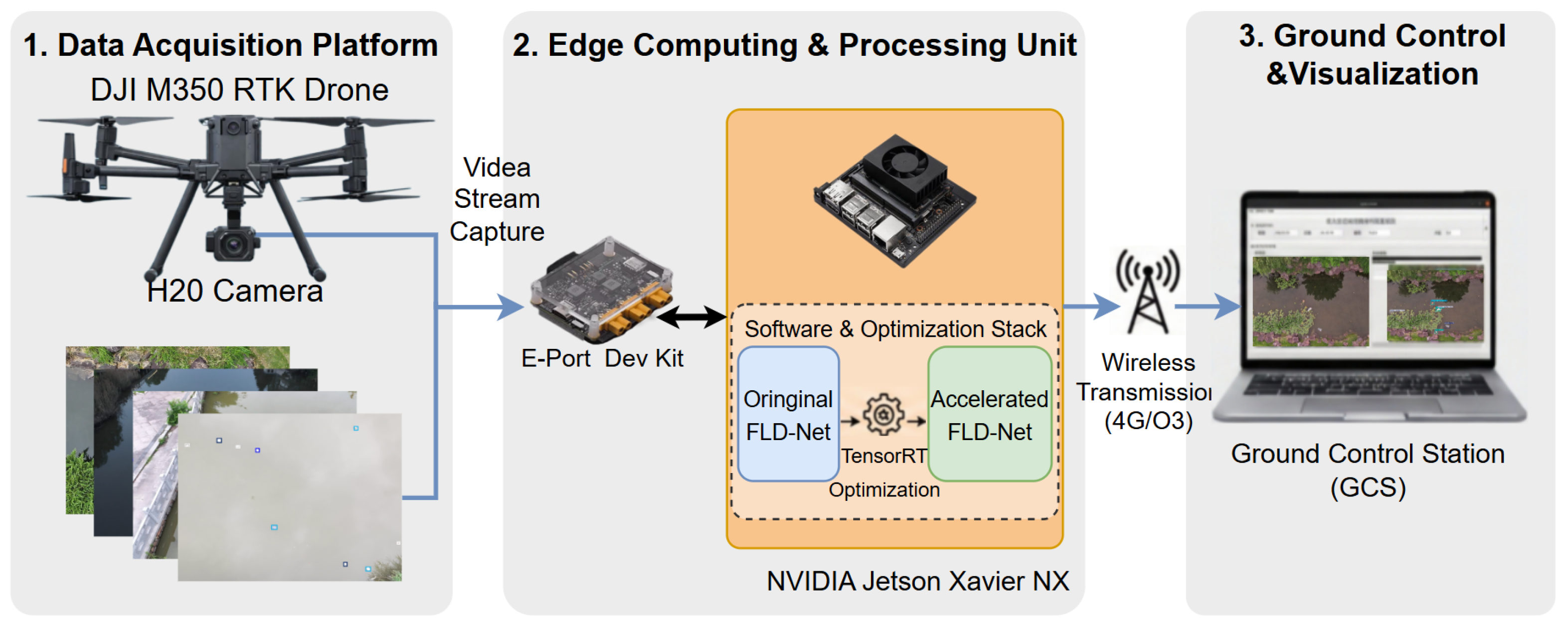

5.5. Real-Time Edge Deployment and Energy Efficiency

To validate the feasibility for practical engineering and explicitly address the computational overhead introduced by advanced operators (e.g., DFEM and DySample), end-to-end deployment testing was conducted on an NVIDIA Jetson Xavier NX (16 GB) embedded platform. The environment utilized the TensorRT 8.5 inference engine with FP16 half-precision quantization.

As Table 5 shows, although FLD-Net incurs a 3.8 G FLOPs increase over the baseline, theoretical FLOPs do not always scale linearly with actual edge latency. As detailed in Table 7, under TensorRT optimization, FLD-Net achieved an inference speed of 69.6 FPS, corresponding to a single-frame latency of approximately 14.4 ms. Despite incorporating three robust enhancement modules, the actual hardware latency increased by merely 2.4 ms compared to the optimized YOLOv11s, while securing an 8.51% improvement in mAP50. Furthermore, the full-load power consumption remained highly stable at 14.5 W, achieving an excellent system energy efficiency of 4.80 FPS/W. These results strongly substantiate our lightweight design premise: FLD-Net successfully balances complex deformation adaptation and noise suppression without imposing a significant computational or power burden on typical UAV flight endurance.
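The latency and energy-efficiency figures above follow directly from the reported throughput and power draw. The short sketch below reproduces that arithmetic; the function names are illustrative, and only the 69.6 FPS and 14.5 W inputs come from the text.

```python
def latency_ms(fps):
    """Single-frame latency in milliseconds for a given throughput."""
    return 1000.0 / fps

def fps_per_watt(fps, watts):
    """Energy efficiency as frames per second per watt."""
    return fps / watts

fld_fps = 69.6      # reported TensorRT FP16 throughput on the Jetson Xavier NX
power_w = 14.5      # reported full-load power consumption

fld_latency = latency_ms(fld_fps)
efficiency = fps_per_watt(fld_fps, power_w)

print(f"latency: {fld_latency:.1f} ms")
print(f"efficiency: {efficiency:.2f} FPS/W")
```

Rounded to one and two decimals respectively, these reproduce the approximately 14.4 ms latency and 4.80 FPS/W efficiency quoted above.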

As illustrated in Figure 10, combined with field tests on the DJI M350 RTK platform, the end-to-end system response time (including camera acquisition, model inference, and 4G transmission) was controlled within 100 ms. This metric fully satisfies the operational requirements for closed-loop control and real-time ground station decision-making, confirming the practical value of FLD-Net as a high-efficiency edge detector.

6. Conclusions

Addressing the challenges of intelligent floating litter detection in complex aquatic environments, this article presents an end-to-end framework encompassing both a novel dataset and a specialized lightweight detector.

At the data level, we constructed UAV-Flow, a multi-scenario benchmark dataset tailored for UAV-based vision. Leveraging a semi-supervised annotation paradigm, this dataset authentically replicates visual challenges such as illumination variations, complex background clutter, and extreme scale fluctuations, providing robust data support for minute and non-rigid target detection. At the algorithmic level, we proposed FLD-Net, a real-time detection architecture. Specifically, the DFEM mitigates geometric misalignment in standard convolutions when modeling non-rigid targets. The DCFN utilizes a dynamic sampling mechanism to resolve feature aliasing and semantic ambiguity during cross-scale fusion. Furthermore, the DANA module precisely suppresses background structural noise, such as surface glint and ripples. Quantitative results demonstrate that FLD-Net achieves an optimal trade-off between accuracy and efficiency, with only 9.9 M parameters, confirming its viability for edge deployment on resource-constrained UAV payloads.

Despite these promising results, this study has certain limitations. First, regarding data distribution and generalization, UAV-Flow primarily focuses on surface-level debris; the model’s feature representation capacity for semi-submerged or heavily occluded objects remains limited due to light attenuation. Additionally, the data acquisition for UAV-Flow was constrained to a limited geographic range, meaning the model’s cross-regional generalization in globally diverse aquatic environments requires further validation. Second, regarding extreme edge deployment, while real-time inference was achieved on the Jetson Xavier NX, deploying the model on ultra-low-power microcontrollers (MCUs) may require further model compression techniques, such as INT8 quantization or structural reparameterization.

Future work will address these limitations by exploring temporal coherence and multi-modal sensing to penetrate surface reflection interference, and by investigating the spatiotemporal dynamics of aquatic pollution to better support ecological monitoring.