GTSegNet: An Island Coastline Segmentation Model Based on Collaborative Perception Strategy

Highlights

- Proposed GTSegNet, a novel collaborative perception framework that effectively tackles boundary blurring and topological discontinuity in complex island environments. It integrates a Graph Contextual modeling module (GCB) to capture global semantic information and a morphological Topology-Aware Refinement Module (TARM) to sharpen boundaries.

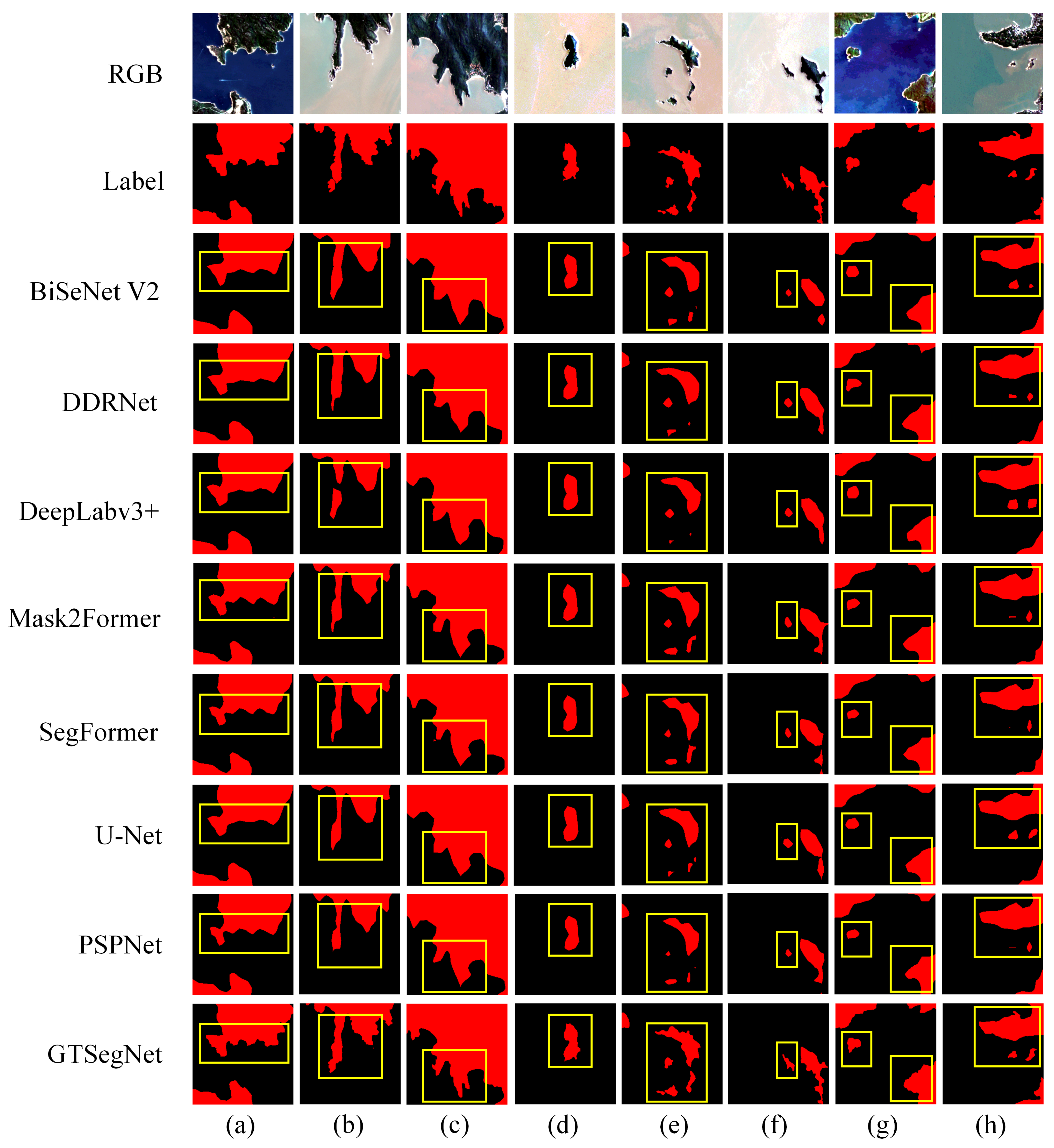

- Achieved state-of-the-art performance with an mIoU of 96.96% and a Recall of 98.54% on the self-constructed S2_China_Islands_2024 dataset. Quantitative and qualitative evaluations demonstrate its significant superiority over mainstream methods like U-Net and Mask2Former in accurately identifying small-scale islands.

- Technically, demonstrated that combining global contextual dependencies with morphological priors is a highly efficient strategy for high-resolution remote sensing tasks. The synergistic interaction between GCB and TARM provides a new paradigm for maintaining topological consistency in challenging maritime segmentation scenarios.

- Practically, provided a robust and automated tool capable of large-scale marine mapping and stable monitoring of coastal dynamics over time. Its excellent generalization ability, validated on both Landsat-8 imagery and multi-temporal datasets, offers critical scientific support for marine resource management and ecological protection.

Abstract

1. Introduction

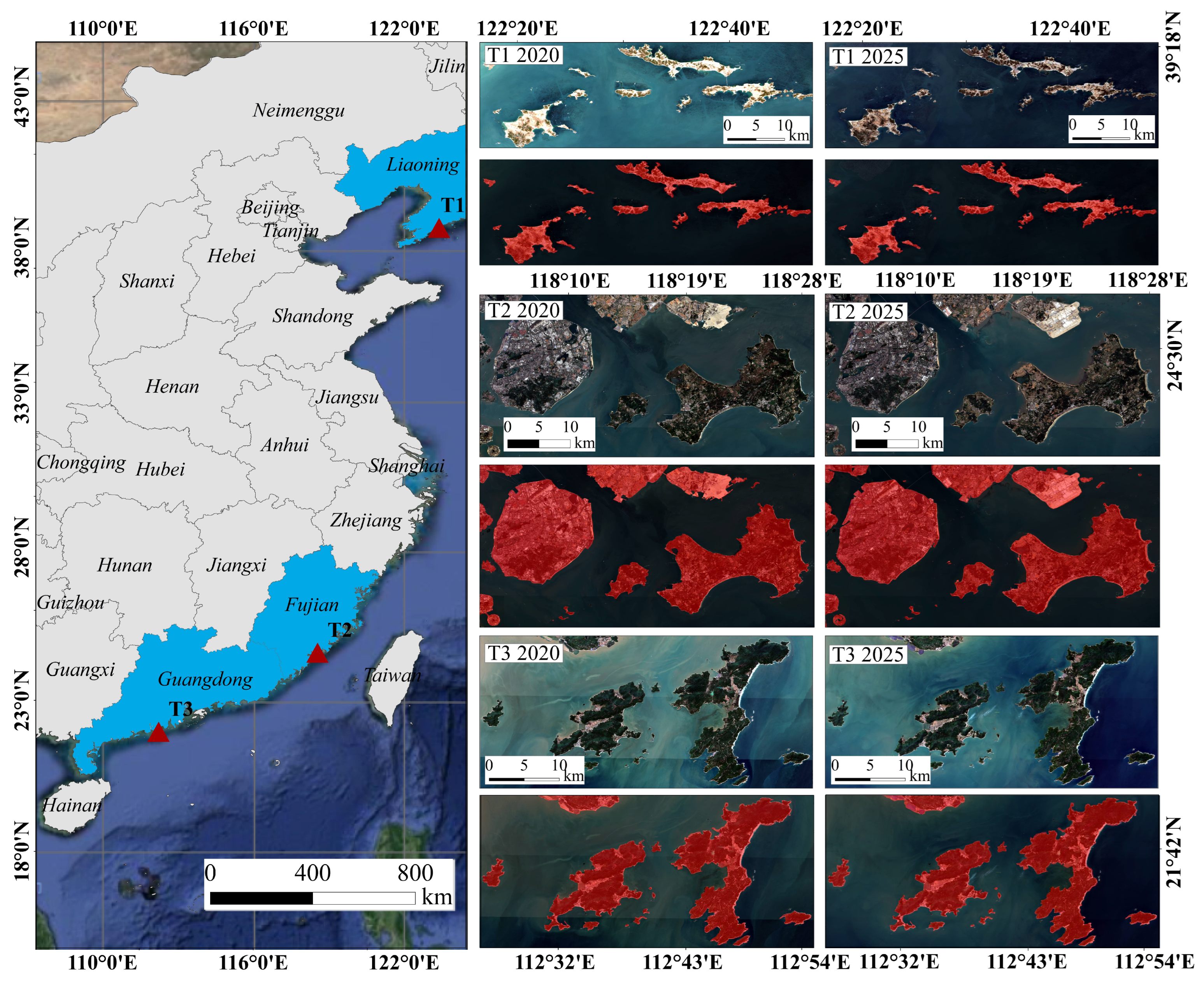

2. Materials

2.1. Study Area

2.2. S2_China_Islands_2024 Dataset and Data Processing Strategy

3. Methodology

3.1. Overall GTSegNet Architecture

3.2. Topology-Aware Refinement Module

- Calculation of the Morphological Gradient: The morphological gradient is a classical operator for highlighting object contours. TARM computes it as the difference between max pooling (dilation) and min pooling (erosion), where min pooling is obtained as the negated max pooling of the negated input. This operation strengthens the model's response along irregular island boundaries while preserving the overall shape of the land mass, which yields sharper boundary predictions.

- Convolution and Gating Mechanism: After the morphological gradient features are enhanced by convolutional layers, they are modulated by a gating mechanism [44]. Through a trainable gating coefficient, this mechanism automatically controls how strongly the morphological gradient is enhanced or suppressed in boundary regions. The gate helps preserve the connectivity of edge structures and keeps the predicted contours aligned with the true boundaries.

- Target Refinement and Connectivity Assurance: In traditional convolutional neural networks, downsampling operations often lead to blurred boundaries, which in turn reduce segmentation accuracy. TARM combines the morphological gradient with convolutional feature extraction to refine target boundaries precisely and to reduce false responses. Especially for small-scale islands and complex coastlines, TARM enhances the feature response in boundary regions, mitigating the boundary blurring caused by downsampling and spatial smoothing. Additionally, TARM helps preserve the connectivity of each island, so that no part of the island is dropped and its boundary remains a single continuous contour. This flow can be denoted as follows:
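
As a hedged illustration of the three steps above (not the authors' exact formulation), the following pure-Python sketch computes a morphological gradient from stride-1 max pooling and then applies a scalar gate. The 3 × 3 window, the edge-replication padding, the `tanh` gate, the residual addition, and the names `max_pool`, `min_pool`, `morphological_gradient`, and `gated_refine` are all assumptions made for illustration; the convolutional enhancement step is omitted.

```python
import math

def max_pool(grid, k=3):
    """Stride-1 max pooling over a k x k window with edge replication (assumed padding)."""
    h, w, r = len(grid), len(grid[0]), k // 2
    return [
        [
            max(
                grid[min(max(i + di, 0), h - 1)][min(max(j + dj, 0), w - 1)]
                for di in range(-r, r + 1)
                for dj in range(-r, r + 1)
            )
            for j in range(w)
        ]
        for i in range(h)
    ]

def min_pool(grid, k=3):
    """Min pooling written as the negated max pooling of the negated input."""
    negated = [[-v for v in row] for row in grid]
    return [[-v for v in row] for row in max_pool(negated, k)]

def morphological_gradient(grid, k=3):
    """Dilation minus erosion: nonzero only where values change inside the window."""
    dilated, eroded = max_pool(grid, k), min_pool(grid, k)
    return [[d - e for d, e in zip(dr, er)] for dr, er in zip(dilated, eroded)]

def gated_refine(grid, alpha, k=3):
    """Add the gradient to the input, scaled by a gate in (-1, 1).

    `alpha` stands in for a trainable gating coefficient (hypothetical form):
    a positive gate enhances boundary responses, a negative gate suppresses them.
    """
    gate = math.tanh(alpha)
    grad = morphological_gradient(grid, k)
    return [[x + gate * g for x, g in zip(xr, gr)] for xr, gr in zip(grid, grad)]

# Toy 7 x 7 land mask with a 3 x 3 island in the middle.
mask = [[1 if 2 <= i <= 4 and 2 <= j <= 4 else 0 for j in range(7)] for i in range(7)]
grad = morphological_gradient(mask)
for row in grad:
    print("".join(str(v) for v in row))
```

On this toy mask the gradient is nonzero only in a band around the island boundary and is zero both in open water and at the island's interior pixel, which is the property the gate then scales up or down.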

3.3. Graph Context Block

- Model Projection and Similarity Calculation: First, the input feature map is linearly projected into a lower-dimensional space. In the lower-dimensional space, the semantic similarity between nodes is calculated, generating a similarity matrix that represents the relationships between pixels. This step involves encoding each pixel in the feature map, allowing the network to capture the similarities between different pixels and represent them within the graph structure.

- Attention Mechanism for Graph Structure: Using the calculated similarity matrix, GCB constructs an attention map for each node (pixel) and applies a Top-K selection mechanism for weighted aggregation [45]. The Top-K selection keeps the graph sparse, avoiding the computational redundancy of fully connected self-attention while retaining the important long-range dependencies needed to aggregate features related to the target region.

- Weighted Aggregation and Contextual Feature Update: After similarity calculation and Top-K selection, GCB fuses neighborhood information with the target pixel's features through weighted aggregation to generate new contextual features. These aggregated features capture long-range dependencies in the image, improving the structural perception and semantic consistency of the target and thereby the extraction of meaningful features in complex scenarios. This flow can be denoted as follows:
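
As a hedged illustration of the projection, Top-K attention, and aggregation steps above (not the authors' exact formulation), the sketch below treats each pixel as a graph node. The dot-product similarity, the softmax over the selected neighbors, the residual fusion, the toy features, and the names `project`, `similarity`, `topk_attention`, and `graph_context` are assumptions made for illustration.

```python
import math

def project(features, weight):
    """Linear projection of each node feature (C dims) to a lower dimension (d dims).

    `weight` holds d rows of C coefficients each.
    """
    return [[sum(f * w for f, w in zip(feat, w_row)) for w_row in weight]
            for feat in features]

def similarity(embeddings):
    """Pairwise dot-product similarity between node embeddings (assumed measure)."""
    return [[sum(a * b for a, b in zip(u, v)) for v in embeddings] for u in embeddings]

def topk_attention(sim_row, k):
    """Keep the k most similar nodes and normalise their scores with a softmax."""
    keep = sorted(range(len(sim_row)), key=lambda j: sim_row[j], reverse=True)[:k]
    peak = max(sim_row[j] for j in keep)
    exps = {j: math.exp(sim_row[j] - peak) for j in keep}
    total = sum(exps.values())
    return {j: e / total for j, e in exps.items()}

def graph_context(features, weight, k):
    """Aggregate Top-K neighbour features and fuse them with each node's own feature."""
    sim = similarity(project(features, weight))
    updated = []
    for i, feat in enumerate(features):
        attn = topk_attention(sim[i], k)
        aggregated = [sum(a * features[j][c] for j, a in attn.items())
                      for c in range(len(feat))]
        # Residual fusion of node feature and aggregated context (assumption).
        updated.append([f + g for f, g in zip(feat, aggregated)])
    return updated

# Four toy nodes: two land-like and two sea-like feature vectors (C = 3).
features = [[1.0, 0.0, 0.5], [0.9, 0.1, 0.5], [0.0, 1.0, 0.5], [0.1, 0.9, 0.5]]
weight = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]  # projects C = 3 down to d = 2
sim = similarity(project(features, weight))
context = graph_context(features, weight, k=2)
print(sorted(topk_attention(sim[0], 2)))  # neighbours kept for node 0
```

With `k=2`, each node attends only to itself and its most similar neighbor, so the land-like nodes aggregate from each other and never from the sea-like nodes. This is the sparsity that Top-K selection is meant to provide relative to dense self-attention.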

3.4. Implementation Details

3.5. Evaluation Metrics

4. Results

4.1. Comparative Experiments

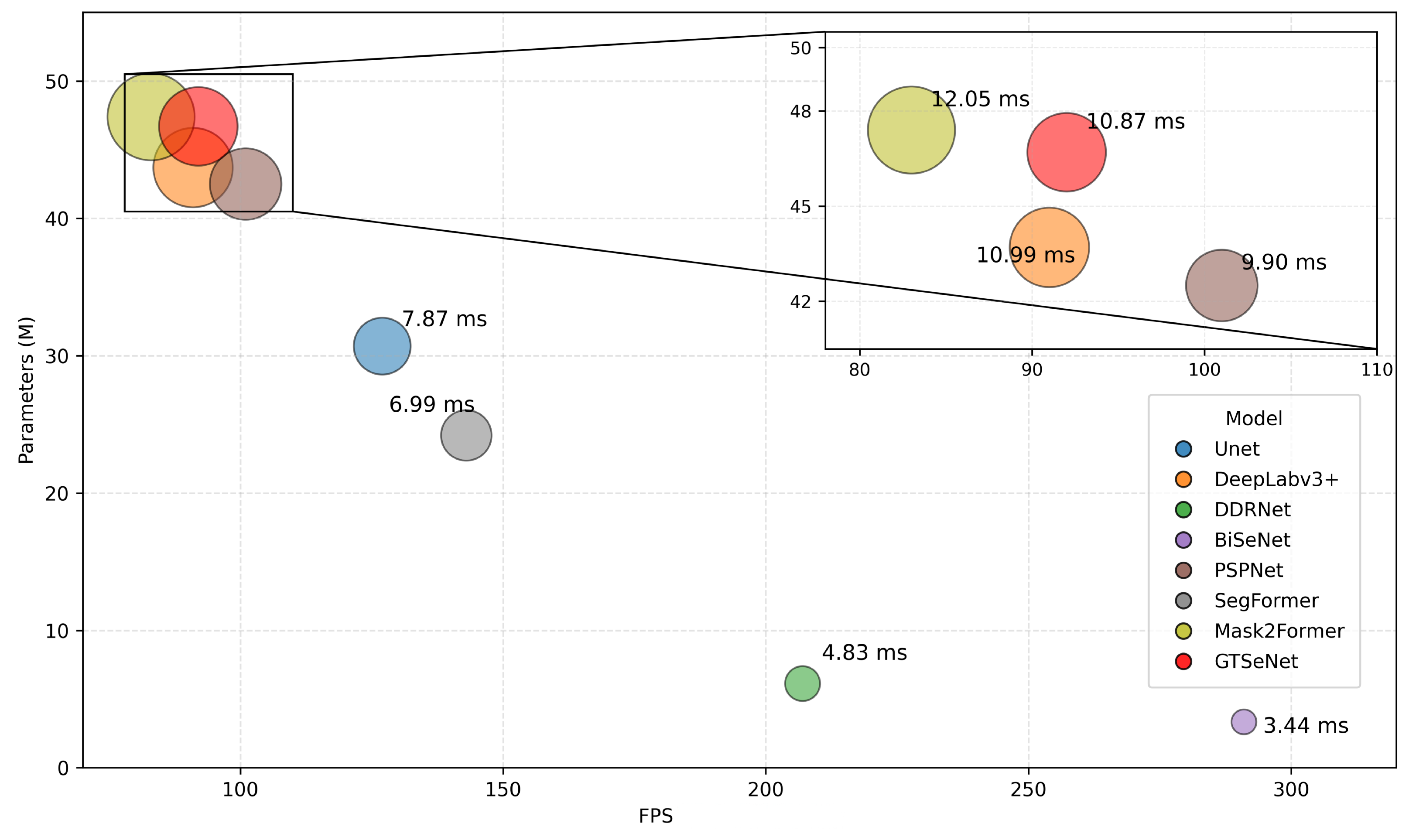

4.2. Model Complexity and Inference Efficiency Analysis

4.3. GTSegNet Training Parameter Analysis and Stability Verification

4.4. Ablation Study

5. Discussion

5.1. Model Applications

5.2. Generalization Ability of GTSegNet

5.3. Potential for Multi-Modal Extension

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Wessel, P.; Smith, W.H. A global, self-consistent, hierarchical, high-resolution shoreline database. J. Geophys. Res. Solid Earth 1996, 101, 8741–8743.

- Derolez, V.; Bec, B.; Munaron, D.; Fiandrino, A.; Pete, R.; Simier, M.; Souchu, P.; Laugier, T.; Aliaume, C.; Malet, N. Recovery trajectories following the reduction of urban nutrient inputs along the eutrophication gradient in French Mediterranean lagoons. Ocean Coast. Manag. 2019, 171, 1–10.

- Genz, A.S.; Fletcher, C.H.; Dunn, R.A.; Frazer, L.N.; Rooney, J.J. The predictive accuracy of shoreline change rate methods and alongshore beach variation on Maui, Hawaii. J. Coast. Res. 2007, 23, 87–105.

- Suárez-de Vivero, J.L.; Mateos, J.C.R.; del Corral, D.F.; Barragán, M.J.; Calado, H.; Kjellevold, M.; Miasik, E.J. Food security and maritime security: A new challenge for the European Union’s ocean policy. Mar. Policy 2019, 108, 103640.

- Brown, C.J.; Smith, S.J.; Lawton, P.; Anderson, J.T. Benthic habitat mapping: A review of progress towards improved understanding of the spatial ecology of the seafloor using acoustic techniques. Estuar. Coast. Shelf Sci. 2011, 92, 502–520.

- Duffy, J.E. Biodiversity and the functioning of seagrass ecosystems. Mar. Ecol. Prog. Ser. 2006, 311, 233–250.

- Sannigrahi, S.; Joshi, P.K.; Keesstra, S.; Paul, S.K.; Sen, S.; Roy, P.; Chakraborti, S.; Bhatt, S. Evaluating landscape capacity to provide spatially explicit valued ecosystem services for sustainable coastal resource management. Ocean Coast. Manag. 2019, 182, 104918.

- McEvoy, S.; Haasnoot, M.; Biesbroek, R. How are European countries planning for sea level rise? Ocean Coast. Manag. 2021, 203, 105512.

- Chavez, P.S., Jr. An improved dark-object subtraction technique for atmospheric scattering correction of multispectral data. Remote Sens. Environ. 1988, 24, 459–479.

- Geng, X.; Jiao, L.; Li, L.; Liu, F.; Liu, X.; Yang, S.; Zhang, X. Multisource joint representation learning fusion classification for remote sensing images. IEEE Trans. Geosci. Remote Sens. 2023, 61, 4406414.

- Wang, J.; Wang, S.; Zou, D.; Chen, H.; Zhong, R.; Li, H.; Zhou, W.; Yan, K. Social network and bibliometric analysis of unmanned aerial vehicle remote sensing applications from 2010 to 2021. Remote Sens. 2021, 13, 2912.

- Kim, J.-I.; Kim, H.-C.; Kim, T. Robust mosaicking of lightweight UAV images using hybrid image transformation modeling. Remote Sens. 2020, 12, 1002.

- Gens, R. Remote sensing of coastlines: Detection, extraction and monitoring. Int. J. Remote Sens. 2010, 31, 1819–1836.

- McFeeters, S.K. The use of the Normalized Difference Water Index (NDWI) in the delineation of open water features. Int. J. Remote Sens. 1996, 17, 1425–1432.

- Alesheikh, A.A.; Ghorbanali, A.; Nouri, N. Coastline change detection using remote sensing. Int. J. Environ. Sci. Technol. 2007, 4, 61–66.

- Kass, M.; Witkin, A.; Terzopoulos, D. Snakes: Active contour models. Int. J. Comput. Vis. 1988, 1, 321–331.

- van der Werff, H.M. Mapping shoreline indicators on a sandy beach with supervised edge detection of soil moisture differences. Int. J. Appl. Earth Obs. Geoinf. 2019, 74, 231–238.

- Zhang, T.; Yang, X.; Hu, S.; Su, F. Extraction of coastline in aquaculture coast from multispectral remote sensing images: Object-based region growing integrating edge detection. Remote Sens. 2013, 5, 4470–4487.

- Sekovski, I.; Stecchi, F.; Mancini, F.; Del Rio, L. Image classification methods applied to shoreline extraction on very high-resolution multispectral imagery. Int. J. Remote Sens. 2014, 35, 3556–3578.

- Long, J.; Shelhamer, E.; Darrell, T. Fully convolutional networks for semantic segmentation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Boston, MA, USA, 7–12 June 2015; pp. 3431–3440.

- Yuan, X.; Shi, J.; Gu, L. A review of deep learning methods for semantic segmentation of remote sensing imagery. Expert Syst. Appl. 2021, 169, 114417.

- Seale, C.; Redfern, T.; Chatfield, P.; Luo, C.; Dempsey, K. Coastline detection in satellite imagery: A deep learning approach on new benchmark data. Remote Sens. Environ. 2022, 278, 113044.

- Aghdami-Nia, M.; Shah-Hosseini, R.; Rostami, A.; Homayouni, S. Automatic coastline extraction through enhanced sea-land segmentation by modifying Standard U-Net. Int. J. Appl. Earth Obs. Geoinf. 2022, 109, 102785.

- Sun, S.; Mu, L.; Feng, R.; Chen, Y.; Han, W. Quadtree decomposition-based Deep learning method for multiscale coastline extraction with high-resolution remote sensing imagery. Sci. Remote Sens. 2024, 9, 100112.

- O’Sullivan, C.; Kashyap, A.; Coveney, S.; Monteys, X.; Dev, S. Enhancing coastal water body segmentation with landsat irish coastal segmentation (lics) dataset. Remote Sens. Appl. Soc. Environ. 2024, 36, 101276.

- Niu, Z.; Zhong, G.; Yu, H. A review on the attention mechanism of deep learning. Neurocomputing 2021, 452, 48–62.

- Elizar, E.; Zulkifley, M.A.; Muharar, R.; Zaman, M.H.M.; Mustaza, S.M. A review on multiscale-deep-learning applications. Sensors 2022, 22, 7384.

- Xu, L.; Ren, J.; Yan, Q.; Liao, R.; Jia, J. Deep edge-aware filters. In Proceedings of the International Conference on Machine Learning, Lille, France, 6–11 July 2015; pp. 1669–1678.

- Fu, J.; Liu, J.; Tian, H.; Li, Y.; Bao, Y.; Fang, Z.; Lu, H. Dual attention network for scene segmentation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 16–20 June 2019; pp. 3146–3154.

- Zhao, H.; Shi, J.; Qi, X.; Wang, X.; Jia, J. Pyramid scene parsing network. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017; pp. 2881–2890.

- Liu, R.; Tao, F.; Liu, X.; Na, J.; Leng, H.; Wu, J.; Zhou, T. RAANet: A residual ASPP with attention framework for semantic segmentation of high-resolution remote sensing images. Remote Sens. 2022, 14, 3109.

- Chen, Y.; Yang, Z.; Zhang, L.; Cai, W. A semi-supervised boundary segmentation network for remote sensing images. Sci. Rep. 2025, 15, 2007.

- Yuan, K.; Meng, G.; Cheng, D.; Bai, J.; Xiang, S.; Pan, C. Efficient cloud detection in remote sensing images using edge-aware segmentation network and easy-to-hard training strategy. In Proceedings of the 2017 IEEE International Conference on Image Processing (ICIP), Beijing, China, 17–20 September 2017; pp. 61–65.

- Koonce, B. ResNet 50. In Convolutional Neural Networks with Swift for TensorFlow; Apress: Berkeley, CA, USA, 2021; pp. 63–72.

- Zhao, Q.; Yu, L.; Li, X.; Peng, D.; Zhang, Y.; Gong, P. Progress and trends in the application of Google Earth and Google Earth Engine. Remote Sens. 2021, 13, 3778.

- Mutanga, O.; Kumar, L. Google earth engine applications. Remote Sens. 2019, 11, 591.

- Hoerber, T.C. The European Space Agency and the European Union: The next step on the road to the stars. J. Contemp. Eur. Res. 2009, 5, 405–414.

- Li, J.; Wu, Z.; Hu, R.; Wang, X. Spatial-Temporal Approach and Dataset for Enhancing Cloud Detection in Sentinel-2 Imagery: A Case Study in China. Remote Sens. 2024, 16, 973.

- He, T.; Liang, S.; Song, D.-X. Spatio-temporal differences in cloud cover of Landsat-8 OLI observations across China during 2013–2016. J. Geogr. Sci. 2018, 28, 429–444.

- Li, J.; Wang, L.; Liu, S.; Peng, B.; Ye, H. An automatic cloud detection model for Sentinel-2 imagery based on Google Earth Engine. Remote Sens. Lett. 2022, 13, 196–206.

- Evans, A.N.; Liu, X.U. A morphological gradient approach to color edge detection. IEEE Trans. Image Process. 2006, 15, 1454–1463.

- Nagi, J.; Ducatelle, F.; Di Caro, G.A.; Cireşan, D.; Meier, U.; Giusti, A.; Nagi, F.; Schmidhuber, J.; Gambardella, L.M. Max-pooling convolutional neural networks for vision-based hand gesture recognition. In Proceedings of the 2011 IEEE International Conference on Signal and Image Processing Applications (ICSIPA), Kuala Lumpur, Malaysia, 16–18 November 2011; pp. 342–347.

- Li, C.; Li, L.; Qi, J. A self-attentive model with gate mechanism for spoken language understanding. In Proceedings of the 2018 Conference on Empirical Methods in Natural Language Processing, Brussels, Belgium, 31 October–4 November 2018; pp. 3824–3833.

- Veličković, P.; Cucurull, G.; Casanova, A.; Romero, A.; Lio, P.; Bengio, Y. Graph attention networks. arXiv 2017, arXiv:1710.10903.

- Fagin, R.; Kumar, R.; Sivakumar, D. Comparing top k lists. SIAM J. Discrete Math. 2003, 17, 134–160.

- Rahman, M.A.; Wang, Y. Optimizing intersection-over-union in deep neural networks for image segmentation. In Proceedings of the International Symposium on Visual Computing, Las Vegas, NV, USA, 12–14 December 2016; pp. 234–244.

- Ye, S.; Pontius, R.G., Jr.; Rakshit, R. A review of accuracy assessment for object-based image analysis: From per-pixel to per-polygon approaches. ISPRS J. Photogramm. Remote Sens. 2018, 141, 137–147.

- Wang, Y.; Yang, L.; Liu, X.; Yan, P. An improved semantic segmentation algorithm for high-resolution remote sensing images based on DeepLabv3+. Sci. Rep. 2024, 14, 9716.

- Cheng, X.; Lei, H. Semantic segmentation of remote sensing imagery based on multiscale deformable CNN and DenseCRF. Remote Sens. 2023, 15, 1229.

- Ronneberger, O.; Fischer, P.; Brox, T. U-net: Convolutional networks for biomedical image segmentation. In Proceedings of the International Conference on Medical Image Computing and Computer-Assisted Intervention, Munich, Germany, 5–9 October 2015; pp. 234–241.

- Chen, L.-C.; Zhu, Y.; Papandreou, G.; Schroff, F.; Adam, H. Encoder-decoder with atrous separable convolution for semantic image segmentation. In Proceedings of the European Conference on Computer Vision (ECCV), Munich, Germany, 8–14 September 2018; pp. 801–818.

- Yan, S.; Wu, C.; Wang, L.; Xu, F.; An, L.; Guo, K.; Liu, Y. Ddrnet: Depth map denoising and refinement for consumer depth cameras using cascaded cnns. In Proceedings of the European Conference on Computer Vision (ECCV), Munich, Germany, 8–14 September 2018; pp. 151–167.

- Yu, C.; Gao, C.; Wang, J.; Yu, G.; Shen, C.; Sang, N. Bisenet v2: Bilateral network with guided aggregation for real-time semantic segmentation. Int. J. Comput. Vis. 2021, 129, 3051–3068.

- Thisanke, H.; Deshan, C.; Chamith, K.; Seneviratne, S.; Vidanaarachchi, R.; Herath, D. Semantic segmentation using vision transformers: A survey. Eng. Appl. Artif. Intell. 2023, 126, 106669.

- Cheng, B.; Misra, I.; Schwing, A.G.; Kirillov, A.; Girdhar, R. Masked-attention mask transformer for universal image segmentation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, New Orleans, LA, USA, 18–24 June 2022; pp. 1290–1299.

- Smith, L.N. Cyclical learning rates for training neural networks. In Proceedings of the 2017 IEEE Winter Conference on Applications of Computer Vision (WACV), Santa Rosa, CA, USA, 24–31 March 2017; pp. 464–472.

- Zhang, Z. Improved adam optimizer for deep neural networks. In Proceedings of the 2018 IEEE/ACM 26th International Symposium on Quality of Service (IWQoS), Banff, AB, Canada, 4–6 June 2018; pp. 1–2.

- Gower, R.M.; Loizou, N.; Qian, X.; Sailanbayev, A.; Shulgin, E.; Richtárik, P. SGD: General analysis and improved rates. In Proceedings of the International Conference on Machine Learning, Long Beach, CA, USA, 9–15 June 2019; pp. 5200–5209.

- Kingma, D.P.; Ba, J. Adam: A method for stochastic optimization. arXiv 2014, arXiv:1412.6980.

- Ward, R.; Wu, X.; Bottou, L. Adagrad stepsizes: Sharp convergence over nonconvex landscapes. J. Mach. Learn. Res. 2020, 21, 1–30.

- Zhou, P.; Xie, X.; Lin, Z.; Yan, S. Towards understanding convergence and generalization of AdamW. IEEE Trans. Pattern Anal. Mach. Intell. 2024, 46, 6486–6493.

- Selvaraju, R.R.; Cogswell, M.; Das, A.; Vedantam, R.; Parikh, D.; Batra, D. Grad-cam: Visual explanations from deep networks via gradient-based localization. In Proceedings of the IEEE International Conference on Image Processing (ICIP), Venice, Italy, 22–29 October 2017; pp. 618–626.

- Gesnouin, J.; Pechberti, S.; Stanciulescu, B.; Moutarde, F. Assessing cross-dataset generalization of pedestrian crossing predictors. In Proceedings of the 2022 IEEE Intelligent Vehicles Symposium (IV), Aachen, Germany, 4–9 June 2022; pp. 419–426.

- Yang, T.; Jiang, S.; Hong, Z.; Zhang, Y.; Han, Y.; Zhou, R.; Wang, J.; Yang, S.; Tong, X.; Kuc, T.-y. Sea-land segmentation using deep learning techniques for landsat-8 OLI imagery. Mar. Geod. 2020, 43, 105–133.

- Feyisa, G.L.; Meilby, H.; Fensholt, R.; Proud, S.R. Automated Water Extraction Index (AWEI): A new technique for surface water mapping using Landsat imagery. Remote Sens. Environ. 2014, 140, 23–35.

- Pardo-Pascual, J.E.; Almonacid-Caballer, J.; Ruiz, L.A.; Palomar-Vázquez, J. Automatic extraction of shorelines from Landsat TM and ETM+ multi-temporal images with subpixel precision. Remote Sens. Environ. 2012, 123, 1–11.

- Ling, J.; Zhang, H.; Lin, Y. Improving Urban Land Cover Classification in Cloud-Prone Areas with Polarimetric SAR Images. Remote Sens. 2021, 13, 4708.

- Gens, R. Oceanographic applications of SAR remote sensing. Giscience Remote Sens. 2008, 45, 275–305.

- Passarello, G.; Filippi, F.; Sorbello, F. Coastline extraction using SAR images and deep learning. In IEEE International Geoscience and Remote Sensing Symposium (IGARSS); IEEE: New York, NY, USA, 2024; pp. 1012–1015.

- Musthafa, S.M.; Dwarakish, G.S. Application of SAR-Optical fusion to extract shoreline position from Cloud-Contaminated satellite images. Remote Sens. Appl. Soc. Environ. 2020, 20, 100416.

- Tajima, Y.; Saruwatari, Y.; Arikawa, S. An automatic shoreline extraction method from SAR imagery using DeepLab-v3+ and its versatility. Coast. Eng. J. 2024, 67, 106–118.

- Yu, H.; Wang, F.; Hou, Y.; Guo, J. MSARG-Net: A Multimodal Offshore Floating Raft Aquaculture Area Extraction Network for Remote Sensing Images Based on Multiscale SAR Guidance. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2024, 17, 18319–18334.

| Method | mIoU (%) | mPA (%) | mPrecision (%) | mRecall (%) |
|---|---|---|---|---|
| U-Net | 92.84 | 96.61 | 95.95 | 96.61 |
| DeepLabv3+ | 93.46 | 96.83 | 96.40 | 96.83 |
| DDRNet | 93.54 | 96.93 | 96.39 | 96.93 |
| BiSeNet | 93.36 | 96.78 | 96.33 | 96.78 |
| PSPNet | 93.70 | 96.87 | 96.60 | 96.87 |
| SegFormer | 93.53 | 96.80 | 96.47 | 96.80 |
| Mask2Former | 93.62 | 96.84 | 96.52 | 96.84 |
| GTSegNet | 96.96 | 98.54 | 98.37 | 98.54 |

| Method | Params (M) | FPS | Inference time (ms) |
|---|---|---|---|
| U-Net | 30.7 | 127 | 7.87 |
| DeepLabv3+ | 43.7 | 91 | 10.99 |
| DDRNet | 6.13 | 207 | 4.83 |
| BiSeNet | 3.34 | 291 | 3.44 |
| PSPNet | 42.5 | 101 | 9.90 |
| SegFormer | 24.2 | 143 | 6.99 |
| Mask2Former | 47.4 | 83 | 12.05 |
| GTSegNet | 46.7 | 92 | 10.87 |

| Learning Rate | mIoU (%) | mPA (%) | mPrecision (%) | mRecall (%) |
|---|---|---|---|---|
| 0.0004 | 95.82 | 97.96 | 97.84 | 97.68 |
| 0.0003 | 96.34 | 98.12 | 98.06 | 98.02 |
| 0.0002 | 96.75 | 98.37 | 98.21 | 98.21 |
| 0.0001 | 96.96 | 98.54 | 98.37 | 98.54 |

| Optimizer | mIoU (%) | mPA (%) | mPrecision (%) | mRecall (%) |
|---|---|---|---|---|
| AdaGrad | 95.48 | 97.36 | 97.18 | 97.22 |
| SGD | 95.91 | 97.84 | 97.63 | 97.79 |
| Adam | 96.42 | 98.12 | 98.03 | 98.10 |
| AdamW | 96.96 | 98.54 | 98.37 | 98.54 |

| Configuration | mIoU (%) | mPA (%) | mPrecision (%) | mRecall (%) |
|---|---|---|---|---|
| Baseline | 93.70 | 96.87 | 96.60 | 96.87 |
| Baseline + TARM | 95.66 | 97.81 | 97.74 | 97.81 |
| Baseline + GCB | 94.95 | 97.56 | 97.25 | 97.56 |
| Baseline + TARM + GCB | 96.96 | 98.54 | 98.37 | 98.54 |

| Method | Accuracy (%) | Recall (%) | mIoU (%) |
|---|---|---|---|
| RefineNet | 99.04 | 99.05 | 92.42 |
| FC-DenseNet | 99.55 | 99.55 | 92.72 |
| DeepLabv3+ | 99.40 | 99.40 | 92.98 |
| PSPNet | 99.50 | 99.51 | 92.63 |
| SegNet | 98.64 | 98.64 | 91.21 |
| U-Net | 99.38 | 99.38 | 92.79 |
| GTSegNet (ours) | 98.91 | 98.35 | 96.66 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Zhu, Y.; Wang, F.; Hou, Y.; Cui, Z.; Yu, H.; Zhang, S.; Liao, Z.; Li, P.; Lu, Y. GTSegNet: An Island Coastline Segmentation Model Based on Collaborative Perception Strategy. Remote Sens. 2026, 18, 607. https://doi.org/10.3390/rs18040607
