PC-YOLO: Moving Target Detection in Video SAR via YOLO on Principal Components

Highlights

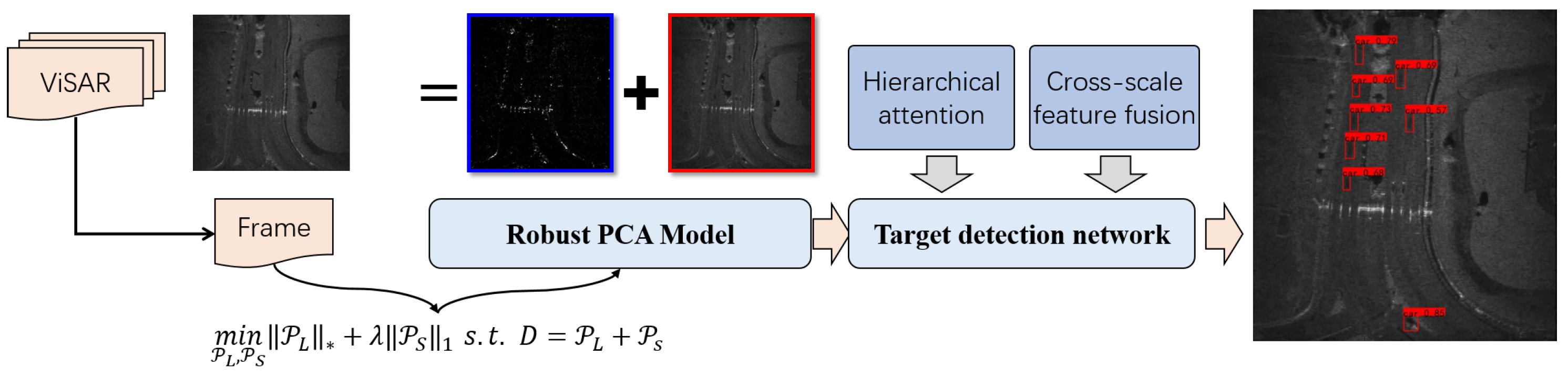

- We reduced the heavy clutter in ViSAR using a principal component decomposition learning technique, which improves the SNR of the SAR image and plays an important role in the subsequent task of moving target detection. A hierarchical learning mechanism composed of self-attention, spatial attention, and channel attention was presented, so that more effective features for target detection can be learned.

- A cross-scale feature fusion strategy composed of temporal fusion and spatial fusion was presented within the look once detection framework, so that moving targets with variable scales can be located accurately. Multiple rounds of experiments were performed. The results demonstrated the advantage of the proposed method over the classical methods as well as state-of-the-art detection algorithms.

- A new clutter removal strategy specific to the task of moving target detection. Different from preceding works, we regarded the complicated imaging scenario as a typical combinatorial scene, where the moving targets contribute to the sparse components, while the background clutter contributes to the low-rank components. To the best of our knowledge, this assumption for ViSAR is first presented in this paper.

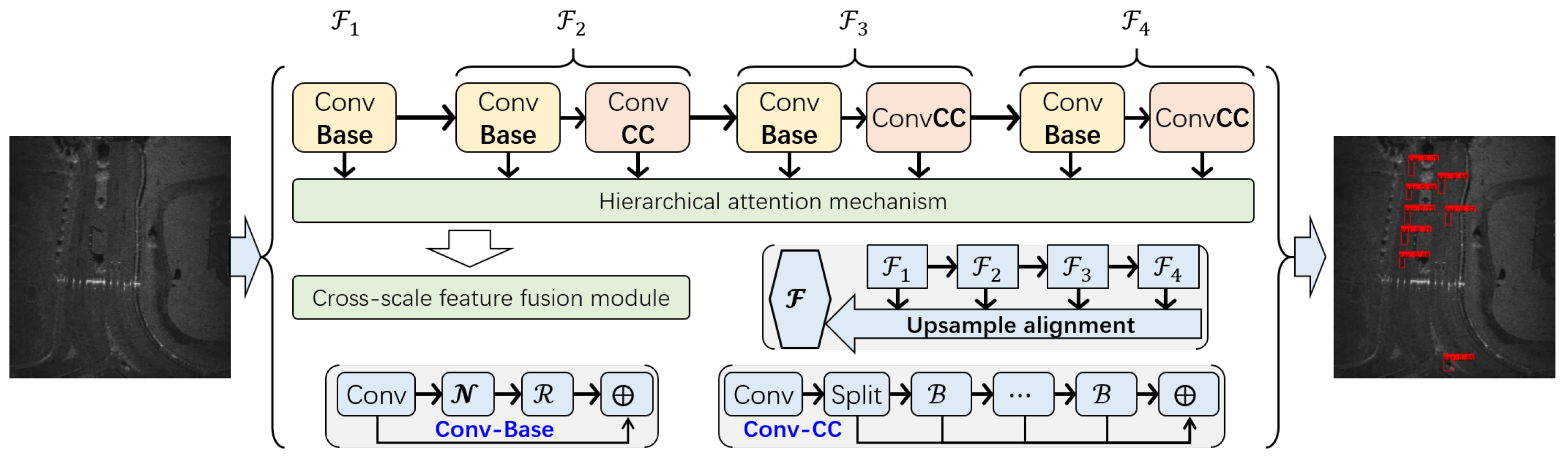

- A new detection framework for shadow extraction. Different from generic methods, a hierarchical attention mechanism and cross-scale feature fusion were presented, so that targets at various scales can be located accurately.

Abstract

1. Introduction

1.1. Moving Target Detection via SAR Images

- The DPCA technique leverages the identical scattering characteristics of stationary clutter across channels. By subtracting the channel echo signals, stationary clutter can be filtered out, whereas moving targets, due to their distinct motion characteristics, are not canceled by this subtraction, enabling their detection.

- The ATI technique operates on the principle that stationary targets have a consistent echo phase between channels, whereas moving targets exhibit a phase variation. By comparing this inter-channel phase information, moving targets can be detected, and these phase data can also be used to estimate the target’s radial velocity.
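The two principles above can be illustrated with a minimal two-channel sketch. It is a toy simulation, not a radar processing chain: the cell count, the target amplitude, and the inter-channel phase shift are assumed values, and the channels are taken to be perfectly co-registered.

```python
import numpy as np

rng = np.random.default_rng(0)
n_cells = 256  # hypothetical number of image cells

# Stationary clutter: identical complex response in both channels.
clutter = rng.normal(size=n_cells) + 1j * rng.normal(size=n_cells)

# One moving target: same amplitude in both channels, but with an
# inter-channel phase shift (assumed value, tied to radial velocity).
target_cell = 100
target_amp = 5.0
phase_shift = 0.8  # radians

ch1 = clutter.copy()
ch2 = clutter.copy()
ch1[target_cell] += target_amp
ch2[target_cell] += target_amp * np.exp(1j * phase_shift)

# DPCA: channel subtraction cancels the clutter; the mover survives.
dpca = ch1 - ch2
dpca_detection = int(np.argmax(np.abs(dpca)))

# ATI: interferometric phase is zero for stationary cells and
# non-zero where the echo phase differs between channels.
ati_phase = np.angle(ch1 * np.conj(ch2))
ati_detection = int(np.argmax(np.abs(ati_phase)))

print(dpca_detection, ati_detection)
```

In this idealized setting both detectors point at the target cell. With real data, channel imbalance and registration errors leave residual clutter, which is one reason adaptive techniques are used in practice.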

1.2. Moving Target Detection via Video SAR

- Signal processing techniques. Many signal processing techniques have been proposed for target detection in video SAR. These methods identify moving targets through the statistical variations in radar echo amplitude. Because the scattering signatures of moving targets change significantly, the amplitude, phase, and frequency of the received echoes are altered accordingly. A natural idea is therefore to extract motion information by comparing the amplitude, phase, and frequency of radar echoes, which enables target detection and tracking. Fan et al. proposed an echo-driven motion compensation framework to improve THz-ViSAR images [17]. Airborne motion errors were modeled as both radial and vibration errors and were then estimated from the echoes. Yan et al. derived the mathematical model of moving target echoes in a video SAR system [18], with which typical system parameters for motion trajectory estimation can be simulated. Li et al. developed a curvilinear moving target refocusing method for ViSAR images [19]. A localized phase-gradient autofocus technique was used to compensate for Doppler chirp-rate inconsistencies, while additional spatial-domain information was used for geometric deformation correction. Gou et al. proposed a circular-track video SAR detection method using sub-aperture segmentation [20]. This method achieved a high detection probability in high-frame-rate scenes. However, methods based on signal processing are sensitive to ground clutter and multipath interference, which reduces accuracy in complex environments.

- Shadow discernment. Moving targets exhibit a Doppler shift in video SAR, and the energy leaking across range cells defocuses the image. As a result, the target's image is displaced along the azimuth direction with a certain angular spread, while the target's true position cannot fully reflect the radar waves and forms a distinct shadow. These shadow features can be used to approximate the true position and state information of targets, so another idea is to detect targets from their shadows. Early methods include the inter-frame difference method and the background difference method. Luo et al. applied image binary operations after differencing the obtained background model [21]. Morphological processing was then imposed on the resulting binary image to extract moving target shadows and suppress false alarms. Zhang et al. combined the background difference model with the three-frame difference method for shadow extraction [22]. Zhang et al. proposed extracting the target by subtracting three sequential frames [23]. The extracted images were then processed using mathematical morphology. Wang et al. performed several rounds of simulation experiments on low Radar Cross Section (RCS) moving target signals [24]. The simulated signals were then transferred into real Ka-band airborne video SAR images, and the detection method was effective even for stealth targets. He et al. introduced a ViSAR generation method based on fast factorization back-projection to address the problem of speed limitations [25]. It was applicable to both video SAR generation and moving target detection. Yin et al. presented a decomposition framework based on a low-rank sparse decomposition model [26]. It was combined with trajectory area extraction to achieve fast and accurate detection in specific regions.
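As an illustration of the inter-frame difference idea mentioned above, the following is a minimal sketch of a generic three-frame difference on synthetic frames. It is not the implementation of any cited work; the frame size, shadow size, displacement, and threshold are assumed values, and the frames are taken to be already registered.

```python
import numpy as np

def three_frame_difference(prev_frame, curr_frame, next_frame, threshold):
    """Keep pixels that change in both consecutive frame pairs."""
    changed_before = np.abs(curr_frame - prev_frame) > threshold
    changed_after = np.abs(next_frame - curr_frame) > threshold
    return changed_before & changed_after

def make_frame(shadow_col):
    """Bright uniform background with a dark 3x3 shadow (synthetic)."""
    frame = np.ones((16, 32))
    frame[6:9, shadow_col:shadow_col + 3] = 0.0
    return frame

# The shadow moves 4 pixels per frame, so positions do not overlap.
frames = [make_frame(col) for col in (4, 8, 12)]
mask = three_frame_difference(*frames, threshold=0.5)

print(int(mask.sum()))
```

The logical AND keeps only the shadow position in the middle frame, because the previous and next positions each appear in only one of the two differences. In real ViSAR data the background itself changes with the viewing angle, so registration and de-speckling are needed before such differencing is meaningful.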

1.3. Our Solution

- Manual operations for feature extraction. Traditional methods, such as the inter-frame difference and the background difference, rely on many manual engineering operations, such as frame registration, de-speckling, and background estimation. These intermediate operations have a significant impact on the final detection performance.

- Strong clutter environments. Unlike common optical images, a ViSAR frame is formed by coherent imaging, in which both diffuse reflection and specular reflection are present. Therefore, the signal-to-noise ratio (SNR) of the SAR image is much lower. Low-SNR environments make shadow extraction much more difficult, and how to address the clutter remains an open problem.

- Variable target scales. In radar images, shadows form because electromagnetic echoes are occluded by the target itself, so the scale of the shadow depends on the size of the target, the incident angle, and the resolution. These factors make the scale of shadows variable in ViSAR. Another problem is therefore how to extract shadows of various scales, especially those that cover only a few resolution cells.
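The dependence of shadow scale on target size, incident angle, and resolution can be made concrete with a small worked example. This sketch assumes flat terrain, a stationary occluding object, and an incidence angle measured from the vertical, so that the ground-range shadow length is the object height times the tangent of the incidence angle. The function names, the object height, and the range resolution are illustrative assumptions; the shadow of a moving target additionally depends on its speed and the aperture time, which is not modeled here.

```python
import math

def shadow_length_m(target_height_m, incidence_deg):
    """Ground-range shadow length on flat terrain: h * tan(incidence)."""
    return target_height_m * math.tan(math.radians(incidence_deg))

def shadow_cells(target_height_m, incidence_deg, range_resolution_m):
    """Number of range resolution cells covered by the shadow."""
    return shadow_length_m(target_height_m, incidence_deg) / range_resolution_m

# Assumed example: a 1.5 m tall vehicle, 0.1 m range resolution.
for incidence in (30.0, 45.0, 65.0):
    length = shadow_length_m(1.5, incidence)
    cells = shadow_cells(1.5, incidence, 0.1)
    print(f"incidence {incidence:.0f} deg: {length:.2f} m, {cells:.1f} cells")
```

Under these assumptions the same object casts a shadow several times longer at a steep incidence angle than at a shallow one, which is why a detector has to handle a wide range of shadow scales.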

2. The Signal Model

2.1. Range Compression

2.2. Azimuth Resolution

2.3. The Generation of ViSAR

2.4. The Formation of Shadow

3. The Proposed Method

3.1. Method Overview and Motivation

- Clutter removal. To suppress the clutter, the imaging scenario is viewed as the combination of low-rank and sparse components. The former models the components with strong correlations, while the latter represents the motion modes. Each ViSAR frame is then decomposed into a sparse component and a low-rank component, so that the background clutter is suppressed while the targets are enhanced.

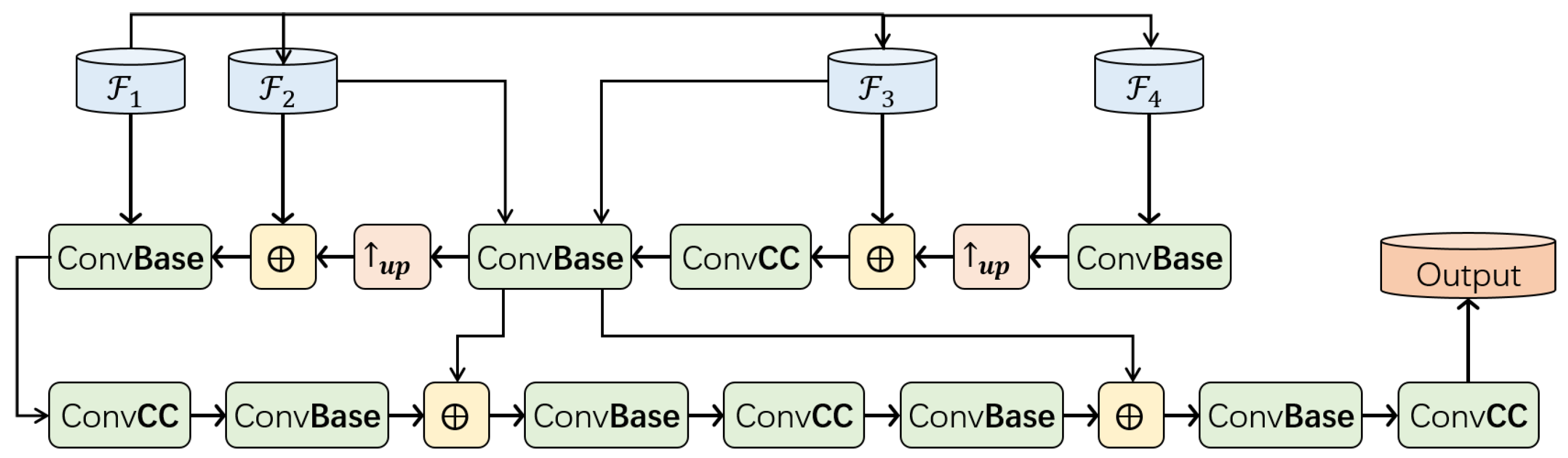

- Shadow extraction. To extract shadows of various sizes, a new detection algorithm is proposed, in which the cross-scale feature fusion strategy and the hierarchical attention mechanism are deployed. Sequential representations of the multi-scale features generated by the backbone are formed to obtain features at different levels of detail. They are further combined to enhance the perception of multi-scale targets, so that the detection performance can be improved accordingly.
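The clutter removal step can be sketched as a split of a frame stack into a low-rank part and a residual. The sketch below uses a plain truncated SVD with an energy threshold as a stand-in; it is not the decomposition model of this paper. The function name, the 0.95 default, and the synthetic scene (a static textured background with one moving dark patch) are assumptions made for illustration.

```python
import numpy as np

def split_low_rank_residual(frames, energy=0.95):
    """Split a (T, H, W) stack into a low-rank part and a residual.

    The low-rank part keeps the leading singular components whose
    squared singular values reach the given fraction of the total.
    """
    t, h, w = frames.shape
    data = frames.reshape(t, h * w).T  # one vectorized frame per column
    u, s, vt = np.linalg.svd(data, full_matrices=False)
    cumulative = np.cumsum(s ** 2) / np.sum(s ** 2)
    rank = int(np.searchsorted(cumulative, energy)) + 1
    low_rank = (u[:, :rank] * s[:rank]) @ vt[:rank]
    residual = data - low_rank
    return low_rank.T.reshape(t, h, w), residual.T.reshape(t, h, w), rank

# Synthetic scene: static texture plus a dark 3x3 patch moving right.
rng = np.random.default_rng(0)
background = rng.uniform(0.5, 1.0, size=(16, 32))
frames = np.stack([background.copy() for _ in range(8)])
for k in range(8):
    frames[k, 5:8, 2 + 3 * k:5 + 3 * k] = 0.0

low_rank, residual, rank = split_low_rank_residual(frames)
peak = np.unravel_index(np.argmax(np.abs(residual[0])), residual[0].shape)
print(rank, peak)
```

In this toy case the static background is captured by the low-rank part, and the largest residual magnitude in each frame falls on the moving patch. A robust decomposition would treat the sparse part explicitly instead of taking it as a simple residual.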

3.2. The Sparse and Low-Rank Decomposition Model

3.3. The Look Once Detection Network

3.3.1. Hierarchical Attention Mechanism

3.3.2. Cross-Scale Feature Fusion

4. Experiments and Discussion

4.1. Experimental Settings

4.1.1. The Imaging Setting

4.1.2. The Algorithm Setting

4.1.3. The Evaluation Metrics

4.2. The Ablation Study

- “Raw” refers to the performance of the original YOLO algorithm. The detection performance was very poor, with an mAP of 0.3562, due to the high level of clutter. The problem of moving-target detection in ViSAR could not be solved by applying generic methods directly.

- “Det (PCs)” means applying the generic detection algorithm to the principal components. The performance improved clearly, with the mAP rising from 0.3562 to 0.7239. The results indicate that the principal component decomposition suppresses the complex background clutter effectively and simultaneously enhances the saliency of motion-induced shadows, which benefits the subsequent feature extraction and detection. However, the recall of 0.5163 is not satisfactory, which shows that many targets are still missed.

- The “Det (F)” denotes the improved target detection algorithm. It improves the mAP from 0.3562 to 0.7504 using a combination of a hierarchical attention mechanism and cross-scale feature fusion. Thus, shadows at various scales can be located.
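The F1 scores of the ablation study follow from the reported precision and recall through the harmonic mean, F1 = 2PR / (P + R). The short sketch below recomputes them from the precision and recall values given for each configuration; the dictionary layout and function name are illustrative.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    return 2.0 * precision * recall / (precision + recall)

# (precision, recall) pairs as reported in the ablation study.
ablation = {
    "Raw": (0.3967, 0.4451),
    "Det (PCs)": (0.7587, 0.5163),
    "Det (F)": (0.8328, 0.6972),
    "Our (PCs + F)": (0.8280, 0.7156),
}

f1 = {name: round(f1_score(p, r), 4) for name, (p, r) in ablation.items()}
for name, value in f1.items():
    print(f"{name}: F1 = {value:.4f}")
```

Because F1 is a harmonic mean, it always lies between the precision and the recall, which gives a quick sanity check on any reported row.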

4.3. The Verification of Principal Component Decomposition

4.3.1. The Visualization of Principal Components

- “Raw” refers to the original image in the video SAR. We found that the heavy clutter is widespread in the imaging plane due to the coherent imaging mechanism. The low-SNR image makes target observations much more difficult.

- “bkDIFF” is the background difference method, which is typically used to reduce clutter. Here, the current ViSAR frame is subtracted from the previous one to cancel the clutter and noise. As can be seen, the difference results are poor because the viewing angle changes between frames.

- “bkMEAN” subtracts the average background from the current ViSAR frame to achieve clutter cancellation. It is also widely used in previous work. The results are not satisfactory: the moving targets remain mixed with the background clutter.

- “PCA-L” and “PCA-S” represent the image reconstructed from the components holding 95% of the eigenvalue energy and the corresponding residual, respectively. PCA prioritizes the directions along which the data vary the most, because more variation usually indicates more useful information.
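Why the two difference baselines struggle when the view changes can be illustrated with a toy example. In the sketch below, a one-pixel horizontal drift of a random texture per frame is used as a crude, hypothetical stand-in for the change of view in ViSAR; the function names and the texture are assumptions, and no moving target is included so that only the clutter leakage is measured.

```python
import numpy as np

def bk_diff(frames, index):
    """Frame differencing: previous frame minus current frame."""
    return frames[index - 1] - frames[index]

def bk_mean(frames, index):
    """Mean-background subtraction: current frame minus temporal mean."""
    return frames[index] - frames.mean(axis=0)

rng = np.random.default_rng(1)
texture = rng.uniform(0.5, 1.0, size=(16, 32))  # hypothetical clutter

# Static view: every frame sees the same clutter.
static = np.stack([texture] * 6)
# Changing view (stand-in): the clutter drifts one pixel per frame.
drifting = np.stack([np.roll(texture, shift=k, axis=1) for k in range(6)])

static_leak = float(np.abs(bk_diff(static, 3)).mean())
drift_leak_diff = float(np.abs(bk_diff(drifting, 3)).mean())
drift_leak_mean = float(np.abs(bk_mean(drifting, 3)).mean())

print(static_leak, drift_leak_diff, drift_leak_mean)
```

With a static view the difference cancels the clutter completely, whereas with a drifting view both baselines leave substantial clutter residue, which is consistent with the qualitative observations listed above.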

4.3.2. Quantitative Experiments

4.4. The Comparative Studies

4.4.1. Quantitative Experiments

4.4.2. The Visualization of Detection Results

- In Figure 7b,c, many false alarms, denoted by the green rectangles, are produced.

- In Figure 7d,e,g, some moving targets (shadows) have been missed.

- Although all of the targets have been located in Figure 7f,h, the confidence values for (f) are much lower than for (h).

5. Conclusions

- It is not effective to apply the generic methods to video SAR data for moving target detection.

- The clutter removal plays an important role in the proposed method, because the clutter in the SAR image makes the detection task difficult.

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| SAR | Synthetic Aperture Radar |
| ViSAR | Video Synthetic Aperture Radar |
| GMTI | Ground Moving Target Indication |
| DPCA | Displaced Phase Center Antenna |
| ATI | Along Track Interferometry |
| STAP | Space-Time Adaptive Processing |
| PCA | Principal Component Analysis |
| RPCA | Robust Principal Component Analysis |
| mAP | mean Average Precision |

References

1. Moreira, A.; Prats-Iraola, P.; Younis, M.; Krieger, G.; Hajnsek, I.; Papathanassiou, K.P. A tutorial on synthetic aperture radar. IEEE Geosci. Remote Sens. Mag. 2013, 1, 6–43.

2. Li, S.; Dong, G.; Liu, H. ImagingNet: A New Learnable SAR Imaging Method via Hierarchical U-Shaped Network. IEEE Trans. Circuits Syst. Video Technol. 2025, 35, 12007–12022.

3. Li, J.; Xu, C.; Xu, C.; Su, H.; Gao, L.; Wang, T. Deep Learning for SAR Ship Detection: Past, Present and Future. Remote Sens. 2022, 14, 2712.

4. Li, S.; Wang, Y.; Dong, G.; Wang, P.; Liu, H. SAR Missing Echo Imaging via Hierarchical Learning Deployed on Hybrid Network. IEEE Trans. Aerosp. Electron. Syst. 2025, 61, 18833–18849.

5. Cerutti-Maori, D.; Sikaneta, I. A generalization of DPCA processing for multichannel SAR/GMTI radars. IEEE Trans. Geosci. Remote Sens. 2012, 51, 560–572.

6. Carande, R.E. Dual baseline and frequency along-track interferometry. In IGARSS’92: Proceedings of the 12th Annual International Geoscience and Remote Sensing Symposium, Houston, TX, USA, 26–29 May 1992; Institute of Electrical and Electronics Engineers, Inc.: Piscataway, NJ, USA, 1992; Volume 2, pp. 20–43.

7. Chen, J.; Miao, X.; Wan, Y.; Zhang, J.; Miao, H. Simulation Study of the Effect of Multi-Angle ATI-SAR on Sea Surface Current Retrieval Accuracy. Remote Sens. 2025, 17, 3383.

8. Brennan, L.E.; Reed, L. Theory of adaptive radar. IEEE Trans. Aerosp. Electron. Syst. 1973, AES-9, 237–252.

9. Deming, R.W.; MacIntosh, S.; Best, M. Three-channel processing for improved geo-location performance in SAR-based GMTI interferometry. In Proceedings of the Algorithms for Synthetic Aperture Radar Imagery XIX, Baltimore, MD, USA, 7 May 2012; SPIE: Bellingham, WA, USA, 2012; Volume 8394, pp. 100–116.

10. Deming, R.; Best, M.; Farrell, S. Simultaneous SAR and GMTI using ATI/DPCA. In Proceedings of the Algorithms for Synthetic Aperture Radar Imagery XXI, Baltimore, MD, USA, 13 June 2014; Zelnio, E., Garber, F.D., Eds.; International Society for Optics and Photonics, SPIE: Bellingham, WA, USA, 2014; Volume 9093, p. 90930U.

11. Ward, J. Space-time adaptive processing for airborne radar. In Proceedings of the IEE Colloquium on Space-Time Adaptive Processing, London, UK, 6 April 1998; IET: Stevenage, UK, 1998.

12. Yang, D.; Yang, X.; Liao, G.; Zhu, S. Strong Clutter Suppression via RPCA in Multichannel SAR/GMTI System. IEEE Geosci. Remote Sens. Lett. 2015, 12, 2237–2241.

13. Guo, Y.; Liao, G.; Li, J.; Chen, X. A Novel Moving Target Detection Method Based on RPCA for SAR Systems. IEEE Trans. Geosci. Remote Sens. 2020, 58, 6677–6690.

14. Li, J.; Huang, Y.; Liao, G.; Xu, J. Moving Target Detection via Efficient ATI-GoDec Approach for Multichannel SAR System. IEEE Geosci. Remote Sens. Lett. 2016, 13, 1320–1324.

15. Ramos, L.P.; Alves, D.I.; Duarte, L.T.; Pettersson, M.I.; Machado, R. Robust Principal Component Analysis Techniques for Ground Scene Estimation in SAR Imagery. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2023, 16, 9697–9710.

16. Liu, K.; He, X.; Liao, G.; Zhu, S.; Zeng, C.; Lan, L. Multichannel Ground Moving Target Detection Based on the Block Space-Time RPCA Method. IEEE Trans. Geosci. Remote Sens. 2025, 63, 5217415.

17. Fan, L.; Wang, H.; Yang, Q.; Deng, B. High-Quality Airborne Terahertz Video SAR Imaging Based on Echo-Driven Robust Motion Compensation. IEEE Trans. Geosci. Remote Sens. 2024, 62, 2001817.

18. Yan, H.; Zhang, J.; Mao, X.; Zhu, D.; Gao, W. Moving target echo simulation of Video Synthetic Aperture Radar (ViSAR). In Proceedings of the 2017 International Symposium on Antennas and Propagation (ISAP), Phuket, Thailand, 30 October–2 November 2017; IEEE: Piscataway, NJ, USA, 2017; pp. 1–2.

19. Li, Z.; Luo, C.; Wang, H.; Yang, Q.; Zhang, H.; Liang, C. Subspectrum Division-Based Imaging Method for Curvilinear Moving Target in Terahertz SAR. IEEE Geosci. Remote Sens. Lett. 2025, 22, 4011905.

20. Gou, L.; Zhu, D.; Li, Y. A novel moving target detection method for VideoSAR. In Proceedings of the 2019 International Applied Computational Electromagnetics Society Symposium-China (ACES), Miami, FL, USA, 14–18 April 2019; IEEE: Piscataway, NJ, USA, 2019; Volume 1, pp. 1–2.

21. Luo, X. Videosar Moving Target Detection from Geometric Distortion Image Sequence. In Proceedings of the IGARSS 2022 - 2022 IEEE International Geoscience and Remote Sensing Symposium, Kuala Lumpur, Malaysia, 17–22 July 2022; IEEE: Piscataway, NJ, USA, 2022; pp. 2825–2828.

22. Zhang, Y.; Zhu, D.; Yu, X.; Mao, X. Approach to Moving Targets Shadow Detection for VideoSAR. J. Electron. Inf. Technol. 2017, 39, 2197–2202.

23. Zhang, Y.; Wang, X.; Qu, B. Three-Frame Difference Algorithm Research Based on Mathematical Morphology. Procedia Eng. 2012, 29, 2705–2709.

24. Hui, W.; Chen, Z.; Zheng, S. Preliminary Research of Low-RCS Moving Target Detection Based on Ka-Band Video SAR. IEEE Geosci. Remote Sens. Lett. 2017, 14, 811–815.

25. He, Z.; Chen, X.; Yi, T.; He, F.; Dong, Z.; Zhang, Y. Moving target shadow analysis and detection for ViSAR imagery. Remote Sens. 2021, 13, 3012.

26. Yin, Z.; Zheng, M.; Ren, Y. A ViSAR shadow-detection algorithm based on LRSD combined trajectory region extraction. Remote Sens. 2023, 15, 1542.

27. Zhang, Y.; Yang, S.; Li, H.; Xu, Z. Shadow Tracking of Moving Target Based on CNN for Video SAR System. In Proceedings of the 2018 IEEE International Geoscience and Remote Sensing Symposium (IGARSS), Valencia, Spain, 22–27 July 2018; IEEE: Piscataway, NJ, USA, 2018; pp. 4399–4402.

28. Ding, J.; Wen, L.; Zhong, C.; Loffeld, O. Video SAR Moving Target Indication Using Deep Neural Network. IEEE Trans. Geosci. Remote Sens. 2020, 58, 7194–7204.

29. Yan, S.; Zhang, F.; Fu, Y.; Zhang, W.; Yang, W.; Yu, R. A Deep Learning-Based Moving Target Detection Method by Combining Spatiotemporal Information for ViSAR. IEEE Geosci. Remote Sens. Lett. 2023, 20, 4014005.

30. Yang, X.; Shi, J.; Chen, T.; Hu, Y.; Zhou, Y.; Zhang, X.; Wei, S.; Wu, J. Fast Multi-Shadow Tracking for Video-SAR Using Triplet Attention Mechanism. IEEE Trans. Geosci. Remote Sens. 2022, 60, 5224212.

31. Wen, L.; Ding, J.; Loffeld, O. Video SAR Moving Target Detection Using Dual Faster R-CNN. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2021, 14, 2984–2994.

32. Kim, S.H.; Fan, R.; Dominski, F. ViSAR: A 235 GHz radar for airborne applications. In Proceedings of the 2018 IEEE Radar Conference (RadarConf18), Oklahoma City, OK, USA, 23–27 April 2018; IEEE: Piscataway, NJ, USA, 2018; pp. 1549–1554.

33. Yan, S.; Fu, Y.; Yu, R.; Luo, C.; Zhang, W.; Yang, W. High-Precision Moving Target Shadow Detection Algorithm for ViSAR Based on Information Geometry. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2025, 18, 12728–12739.

34. Fang, H.; Liao, G.; Liu, Y.; Zeng, C. Shadow-Assisted Moving Target Tracking Based on Multi-discriminant Correlation Filters Network in Video SAR. IEEE Geosci. Remote Sens. Lett. 2023, 20, 4006205.

35. Wells, L.; Sorensen, K.; Doerry, A.; Remund, B. Developments in SAR and IFSAR systems and technologies at Sandia National Laboratories. In Proceedings of the 2003 IEEE Aerospace Conference Proceedings, Big Sky, MT, USA, 8–15 March 2003; IEEE: Piscataway, NJ, USA, 2003; Volume 2, pp. 1085–1095.

36. He, Z.; Chen, X.; Yu, C.; Li, Z.; Yu, A.; Dong, Z. A Robust Moving Target Shadow Detection and Tracking Method for VideoSAR. J. Electron. Inf. Technol. 2022, 44, 3882.

| the shortest slant range from scene center to aircraft | |

| the start, terminate time of observation | |

| the center time of the full aperture | |

| T | the synthetic aperture duration |

| the rotation angle of the aircraft relative to the scene center | |

| the angle between flight direction and line-of-sight at |

| Parameter | Value |
|---|---|
| Mode | Spotlight |
| Center Frequency | 16.7 GHz (Ku band) |
| Wavelength | 1.8 cm |
| Incidence Angle | 65° |
| Platform Height | 2 km |
| Platform Speed | 245 km/h |
| Cross-Range Resolution | 0.1 m |
| Total Rotation Angle | 200° |
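The imaging parameters above are related by a few standard formulas, which the following sketch evaluates. It is a back-of-the-envelope check under stated assumptions, not part of the experimental setup: flat terrain, an incidence angle measured from the vertical, the small-angle spotlight relation (cross-range resolution equals wavelength divided by twice the aperture angle), and a locally straight flight path. The variable names are illustrative.

```python
import math

C = 299_792_458.0  # speed of light in m/s

# Values taken from the parameter table.
center_frequency_hz = 16.7e9
incidence_deg = 65.0
platform_height_m = 2000.0
platform_speed_mps = 245.0 / 3.6  # 245 km/h in m/s
cross_range_resolution_m = 0.1

# Wavelength from the center frequency.
wavelength_m = C / center_frequency_hz

# Slant range to the scene center (flat terrain, assumed geometry).
slant_range_m = platform_height_m / math.cos(math.radians(incidence_deg))

# Aperture angle needed for the stated cross-range resolution
# (small-angle spotlight approximation, assumed).
aperture_angle_rad = wavelength_m / (2.0 * cross_range_resolution_m)

# Path length and flight time of one such aperture.
aperture_length_m = slant_range_m * aperture_angle_rad
aperture_time_s = aperture_length_m / platform_speed_mps

print(f"wavelength: {wavelength_m * 100:.2f} cm")
print(f"slant range: {slant_range_m:.0f} m")
print(f"aperture angle: {math.degrees(aperture_angle_rad):.2f} deg")
print(f"aperture time: {aperture_time_s:.1f} s")
```

Under these assumptions one full-resolution frame needs an aperture of roughly five degrees, so a video stream at a useful frame rate would rely on heavily overlapping sub-apertures.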

| Truth | Prediction: Yes | Prediction: No | Total | |
|---|---|---|---|---|
| Yes | TP | FN | Positive (T) | Missing alarm |
| No | FP | TN | Negative (T) | |
| Total | False alarm | | | |
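The entries of the confusion matrix map to the usual detection rates as sketched below. The definitions are the standard ones (precision, recall, missing alarm rate as the complement of recall, and false alarm rate as the fraction of true negatives flagged as targets); the function name and the counts in the example are hypothetical and are not results from this paper.

```python
def detection_rates(tp, fp, fn, tn):
    """Standard rates derived from confusion-matrix counts."""
    return {
        "precision": tp / (tp + fp),
        "recall": tp / (tp + fn),
        "missing_alarm_rate": fn / (tp + fn),
        "false_alarm_rate": fp / (fp + tn),
    }

# Hypothetical counts, for illustration only.
rates = detection_rates(tp=80, fp=20, fn=20, tn=880)
for name, value in rates.items():
    print(f"{name}: {value:.4f}")
```

Recall and the missing alarm rate always sum to one, so a low recall directly indicates missed targets, while false alarms are reflected in the precision and the false alarm rate.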

| Method | Det | PCs | Fusion | Precision | Recall | F1 | mAP |
|---|---|---|---|---|---|---|---|
| Raw | ✓ | - | - | 0.3967 | 0.4451 | 0.4195 | 0.3562 |
| Det (PCs) | ✓ | ✓ | - | 0.7587 | 0.5163 | 0.6145 | 0.7239 |
| Det (F) | ✓ | - | ✓ | 0.8328 | 0.6972 | 0.7590 | 0.7504 |
| Our (PCs + F) | ✓ | ✓ | ✓ | 0.8280 | 0.7156 | 0.7677 | 0.7652 |

| Method | Precision | Recall | F1 | mAP |
|---|---|---|---|---|
| bkMEAN | 0.0937 | 0.0567 | 0.0706 | 0.0436 |
| bkDIFF | 0.0831 | 0.0736 | 0.0781 | 0.0352 |
| PCA | 0.7863 | 0.5586 | 0.6532 | 0.6622 |
| Proposed | 0.8280 | 0.7156 | 0.7677 | 0.7652 |

| Method | Precision | Recall | F1 | mAP |
|---|---|---|---|---|
| Faster-RCNN | 0.3219 | 0.2917 | 0.3617 | 0.2846 |
| YOLO5L | 0.4367 | 0.3951 | 0.4149 | 0.3770 |
| YOLO5X | 0.5458 | 0.4951 | 0.5192 | 0.4432 |
| YOLO7L | 0.4936 | 0.3676 | 0.4214 | 0.4130 |
| YOLO7X | 0.7987 | 0.5586 | 0.6574 | 0.7172 |
| PC-YOLO | 0.8280 | 0.7156 | 0.7677 | 0.7652 |
| | 3.02% | 13.86% | 14.00% | 4.80% |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Han, Y.; Wang, X.; Jiang, J.; Xue, C.; Qin, R.; Dong, G. PC-YOLO: Moving Target Detection in Video SAR via YOLO on Principal Components. Remote Sens. 2026, 18, 510. https://doi.org/10.3390/rs18030510
