1. Introduction

Unmanned aerial vehicles (UAVs), or drones, have rapidly become an essential tool for maritime safety, security, and search-and-rescue (SAR) operations because they can cover large sea areas quickly and at relatively low cost. Yet, from a computer vision perspective, maritime UAV scenes are among the most difficult environments: targets are tiny, the background is dynamic (waves, wakes, and reflections), and weather and lighting conditions can change dramatically within minutes. The SeaDronesSee benchmark was created precisely to address this gap. It provides over 54,000 annotated frames of humans and vessels in open water, with dedicated tracks for detection and tracking, and shows that even state-of-the-art detectors currently reach only around 36% mAP on its detection track, highlighting how far we still are from reliable automated SAR at sea [1,2].

Before deep learning, maritime video analytics relied heavily on classical motion-based methods such as background subtraction and optical flow. A comprehensive survey on video detection and tracking of maritime vessels documents how these traditional approaches struggle with sea clutter, camera motion, and changing illumination and how they often require careful tuning for each scenario. A more focused experimental study on object detection in a maritime environment systematically evaluated more than twenty background subtraction algorithms and found that none could cope robustly with the combined challenges of waves, wakes, and platform motion. Together, these works make a strong case that motion information alone is not sufficient for dependable maritime anomaly detection; robust appearance modeling is needed as well [3,4].

At the other end of the spatial scale, spaceborne optical imagery plays a crucial role in maritime domain awareness. A detailed survey on vessel detection and classification from spaceborne optical images reviews decades of research on detecting ships from satellites, across multispectral, synthetic aperture radar, and high-resolution optical sensors. It shows a clear evolution from hand-crafted features to convolutional neural networks, but it also emphasizes persistent difficulties in detecting small vessels, dealing with cloud cover, and discriminating ships from look-alike coastal structures. These satellite-scale challenges mirror, at a different resolution, the small-object and clutter problems encountered in UAV imagery [5].

In parallel, the broader maritime awareness community has been integrating AI methods into operational systems. A review of AI methods for maritime awareness systems discusses how machine learning has been used for detection, classification, anomaly detection, and route prediction across heterogeneous sensors such as the Automatic Identification System (AIS), radar, and imagery. That review points out not only the promise of deep learning but also unresolved issues: data scarcity in rare but critical events, the need for multi-sensor fusion, and the challenge of making AI-based decisions interpretable to human operators. This broader context underlines that object detection from images or video is only one component of a complete maritime anomaly-detection pipeline, but it is a foundational one [6].

With the rise of deep learning, YOLO-style one-stage detectors have become a de facto standard for real-time vision on constrained platforms. In the context of UAV maritime surveillance, Cheng et al., in “Deep learning based efficient ship detection from drone-captured images for maritime surveillance”, proposed YOLOv5-ODConvNeXt, a tailored architecture that augments YOLOv5s with omni-dimensional convolution and ConvNeXt-like modules to better capture multi-scale ship features while remaining lightweight enough for drone deployment [7]. Their experiments on a dedicated drone-captured ship dataset demonstrate that such optimized architectures can significantly improve both Accuracy and inference speed over vanilla YOLOv5, showing the potential of UAV-borne detectors for operational maritime surveillance.

Similarly, YOLOv7-Sea adapts YOLOv7 specifically to maritime UAV images by introducing microscale object heads, attention mechanisms, and data-augmentation strategies tuned to sea scenes [8]. On SeaDronesSee and related datasets, YOLOv7-Sea achieves higher detection performance than the original YOLOv7, especially on small targets such as distant swimmers or small boats. This provides further evidence that domain-specific architectural enhancements can partially close the performance gap on challenging maritime UAV benchmarks.

Beyond individual platforms, there is an increasing interest in using YOLO models for large-scale traffic mapping. In “Mapping recreational marine traffic from Sentinel-2 imagery using YOLO object detection models”, Mäyrä et al. construct a ship-detection pipeline for Sentinel-2 satellite data and apply it to quantify recreational marine traffic patterns across wide coastal regions [9]. Their work illustrates how YOLO-based detectors can be deployed at scale for strategic maritime monitoring, even at moderate resolution, and provides an example of how detection outputs can feed into higher-level analyses and policy questions.

Within the search-and-rescue domain, researchers have also started to design YOLO variants specifically for search-and-rescue (SAR) imagery. MES-YOLO, an “efficient lightweight maritime search and rescue object detection algorithm with improved feature fusion pyramid network,” builds on a YOLOv8 backbone but introduces a Multi-Asymptotic Feature Pyramid Network and other architectural changes tailored to small SAR targets and complex sea states [10]. Evaluations on SAR-oriented datasets show that MES-YOLO achieves higher Accuracy than standard YOLO baselines while remaining lightweight enough for UAV or edge deployment, reinforcing the trend toward task- and domain-specific YOLO variants in maritime applications.

Taken together, the existing literature indicates two complementary conclusions. First, classical motion-centric approaches highlight that temporal information is valuable but fragile in isolation under sea clutter and platform motion. Second, appearance-based deep detectors—even when optimized—still struggle with small targets and complex maritime backgrounds, and they may produce intermittent detections that degrade tracking continuity in video. This combination motivates approaches that explicitly integrate appearance cues from deep detectors with temporal cues derived from video dynamics.

In this work, we investigate a fusion-based, deployment-oriented pipeline for UAV maritime anomaly detection that combines YOLO-based appearance detection with motion/stillness assistance modules to improve video-level stability. Rather than proposing a new detector architecture, our focus is on the system-level hypothesis that complementary temporal cues can (i) reduce wave-induced false alarms, (ii) mitigate short-term detection dropouts, and (iii) support more consistent tracking in challenging UAV maritime videos. By framing the problem around video reliability and operational robustness, our study aims to contribute evidence and analysis toward more dependable maritime UAV surveillance and SAR-support systems.

2. Literature Review

Several related studies and projects on maritime surveillance and detection are summarized in Table 1.

Prior work on YOLO for maritime monitoring can be grouped into three closely related lines. First, sensor-specific studies demonstrate that deep detectors can recover small vessels under challenging conditions: radar/time–frequency representations enable detection when echoes are embedded in sea clutter, while infrared pipelines combine carefully designed appearance cues to suppress sun-glint and background noise [11,13,16,19]. Second, a large body of work focuses on engineering lighter and more robust YOLO variants for deployment on constrained maritime or edge platforms, improving multi-scale detection and small-target sensitivity through backbone redesigns and loss-function refinements [12,15,18,20,24]. Third, UAV- and aerial-platform studies show that drone-borne imagery has become a central modality for maritime observation, with many reports achieving strong frame-level detection Accuracy across diverse scenes [17,21,23].

Despite this progress, most existing methods remain detection-centric: they largely operate on single frames (or short local fluctuation measures in infrared) and output bounding boxes and class labels, with limited use of temporal reasoning. Even recent efforts addressing data scarcity (e.g., semi-supervised learning) and benchmarking across sea states typically frame the task as “ship detection” rather than anomaly-oriented monitoring [21,22]. As a result, there is a clear gap in explicitly fusing appearance detections with motion and trajectory patterns over time to infer anomaly-like behaviors, such as small agile objects, atypical approach paths, or non-cooperative craft moving inconsistently with normal traffic.

Our work addresses this gap by shifting from frame-level detection to track-level reasoning. We build on YOLO’s proven appearance modeling and introduce a lightweight fusion layer that integrates (i) YOLO detections, (ii) motion cues from background subtraction and frame-differencing, and (iii) appearance-based reidentification against stored templates. This design supports anomaly-centric decision-making in drone video, enabling the system to distinguish ambiguous small movers from benign vessels and bridging the gap between object detection outputs and higher-level maritime situation awareness.

4. Methodology

4.1. YOLOv12

In this study, we employ YOLOv12 as the primary state-of-the-art detector to mitigate false positives arising from background dynamics (e.g., wave or cloud motion). YOLO is a single-stage architecture that performs direct regression from images to bounding boxes and class probabilities in a single forward pass, without an intermediate region-proposal stage. A backbone extracts features, and a detection head predicts box coordinates, an objectness score, and class probabilities at each spatial location/anchor. This design enables real-time inference and helps maintain focus on target objects while suppressing environmental noise [27]. Specifically, YOLOv12, the latest iteration in the series (released on 18 February 2025 [28]), retains the canonical backbone → neck → head layout, emphasizes attention with compute-efficient refinements, and stabilizes deep feature aggregation via R-ELAN [29]. The overall architecture is depicted in Figure 3a,b, adapted from [30]. The key innovations of YOLOv12 can be summarized as follows:

1. Area Attention (A²) with FlashAttention [29,31,32]

YOLOv12 reshapes the feature map into spatial “areas” and applies attention locally per area and then fuses the results, as shown in Equation (1).

$$\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\!\left(\frac{Q K^{\top}}{\sqrt{d_k}}\right) V \tag{1}$$

$Q, K, V$: Query, key, and value matrices (tokens projected from feature maps), respectively.

$d_k$: Key dimensionality used for the scaling in attention.

$\mathrm{Attention}(\cdot)$: Scaled dot-product attention.

Instead of global attention over all $N = H \times W$ tokens ($H$ and $W$: spatial height and width), the feature map is partitioned into $A$ areas ($A$: number of spatial areas/windows); attention is computed per area and then fused. This reduces the dominant cost from $\mathcal{O}(N^2 d_k)$ to $\mathcal{O}(N^2 d_k / A)$ while preserving a large receptive field. FlashAttention further accelerates the computation by tiling to minimize GPU High-Bandwidth Memory (HBM) reads/writes (IO-aware exact attention), which makes attention usable at real-time resolutions (e.g., 640).

2. R-ELAN: Residual Efficient Layer Aggregation Networks [29,33,34]

Because the original ELAN/GELAN design stacks deep aggregation paths that can create gradient bottlenecks in attention-centric, larger backbones, R-ELAN adds block-level residuals with scaling and retools aggregation to stabilize training and improve fusion, as shown in Equation (2) [35].

$$y = x + \alpha\, F(x) \tag{2}$$

$x$: Input tensor to an R-ELAN block.

$F(x)$: ELAN-style internal transform (multi-branch conv/attention + concatenation/merge inside the block).

$\alpha$: Residual scaling factor (small constant, e.g., 0.01) that stabilizes training.

$y$: Scaled residual connection output of the block.

With this design, the model is easier to optimize (especially for large models), has fewer parameters than naively deep stacks, and achieves better feature reuse/fusion in the backbone.
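As an illustration of the two ideas listed above, the following minimal NumPy sketch computes scaled dot-product attention, softmax(QKᵀ/√d_k)V, once globally and once independently inside equal, contiguous groups of tokens, and also shows a scaled residual of the form x + αF(x). It is a sketch under stated assumptions, not the YOLOv12 implementation: the function names, the contiguous token grouping, and the fusion by simple concatenation are assumptions made here, and learned projections and FlashAttention are omitted.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # stabilise the exponentials
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def attention(q, k, v):
    """Scaled dot-product attention for (tokens, dim) arrays."""
    d_k = q.shape[-1]
    return softmax(q @ k.T / np.sqrt(d_k)) @ v

def area_attention(q, k, v, num_areas):
    """Attend only within each of num_areas equal, contiguous token groups.

    Assumption: areas are contiguous token ranges and the per-area outputs
    are fused by concatenation.
    """
    n = q.shape[0]
    if n % num_areas != 0:
        raise ValueError("token count must be divisible by num_areas")
    size = n // num_areas
    parts = [attention(q[i:i + size], k[i:i + size], v[i:i + size])
             for i in range(0, n, size)]
    return np.concatenate(parts, axis=0)

def scaled_residual(x, transform, alpha=0.01):
    """Block output as input plus a down-weighted internal transform."""
    return x + alpha * transform(x)

rng = np.random.default_rng(0)
tokens = rng.standard_normal((16, 8))  # 16 tokens with 8 channels each
out_global = attention(tokens, tokens, tokens)
out_area = area_attention(tokens, tokens, tokens, num_areas=4)
```

With 16 tokens and 4 areas, each attention matrix is 4 × 4 instead of 16 × 16, so the total number of pairwise scores drops from 256 to 64, a reduction by the number of areas.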

4.2. Motion Assistance

Following YOLOv12-based detection, we maintain confirmed anomaly tracks during temporary detector dropouts by incorporating motion cues. Specifically, we apply background subtraction and frame-differencing to extract motion blobs and then associate these blobs with existing tracks using distance, IoU, and path-tortuosity gating. This fusion allows tracks to persist through brief appearance changes, pose/shape deformations, or partial occlusions that may otherwise cause missed detections and premature track termination.
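The association step described above can be sketched as three gates applied to each candidate motion blob. This is a hedged illustration only: the thresholds (`max_dist`, `min_iou`, `max_tort`), the requirement that all three gates pass, and the definition of tortuosity as path length divided by start-to-end displacement are assumptions made for this example and are not taken from the paper.

```python
import math

def iou(a, b):
    """IoU of two boxes given as (x1, y1, x2, y2)."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0

def centre(box):
    return ((box[0] + box[2]) / 2, (box[1] + box[3]) / 2)

def tortuosity(path):
    """Travelled length divided by start-to-end distance (1.0 = straight)."""
    length = sum(math.dist(p, q) for p, q in zip(path, path[1:]))
    chord = math.dist(path[0], path[-1])
    return length / chord if chord > 0 else float("inf")

def passes_gates(track_box, track_path, blob_box,
                 max_dist=50.0, min_iou=0.1, max_tort=3.0):
    """Accept a blob for a track only if all three (hypothetical) gates pass.

    track_path is the non-empty list of past track centres.
    """
    c = centre(blob_box)
    if math.dist(centre(track_box), c) > max_dist:
        return False  # too far from the last known position
    if iou(track_box, blob_box) < min_iou:
        return False  # too little overlap with the last box
    return tortuosity(track_path + [c]) <= max_tort  # path stays plausible

track_box = (0, 0, 10, 10)
track_path = [(0, 5), (5, 5)]
near_blob = (2, 0, 12, 10)
far_blob = (100, 100, 110, 110)
```

In this toy case `near_blob` overlaps the track and extends a straight path, so it is accepted, while `far_blob` fails the distance gate.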

1. Background subtraction

Our background-subtraction step builds on the pixel-wise Gaussian Mixture Model (GMM) introduced by [36], which models each pixel’s recent history as a mixture of Gaussians and classifies a pixel as foreground when the current sample does not match the dominant “background” components, as shown in Equation (3). Subsequently, refs. [37,38] proposed adaptive, recursive updates that (i) automatically select the number of Gaussians per pixel and (ii) update the mixture parameters online, improving robustness to illumination changes and scene dynamics; these works are the basis of OpenCV’s MOG2 implementation [39,40].

$$P\big(x_t(p)\big) = \sum_{k=1}^{K} w_k(p)\, \mathcal{N}\big(x_t(p);\ \mu_k(p),\ \Sigma_k(p)\big) \tag{3}$$

$x_t(p)$: Pixel value at location $p$ in frame $t$ (intensity or 3-vector).

$K$: Number of Gaussian components in the pixel’s mixture model.

$w_k(p)$: Weight of mixture component $k$ at pixel $p$.

$\mu_k(p)$: Mean (expected pixel value) of component $k$ at $p$.

$\Sigma_k(p)$: Covariance (often diagonal/scalar variance) of component $k$ at $p$.

$\mathcal{N}(\cdot)$: Gaussian density.

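2. Frame differencing [41,42]

We compute a lightweight motion mask by thresholding the absolute inter-frame grayscale difference, as shown in Equation (4).

$$M_t(p) = \mathbf{1}\big(\lvert I_t(p) - I_{t-1}(p) \rvert > \tau_d\big) \tag{4}$$

$I_t(p)$: Grayscale value of pixel $p$ in frame $t$.

$M_t$: Binary motion mask for frame $t$ (1 = moving, 0 = static).

$\tau_d$: Gray-level threshold for differencing (pixels with change above this are motion).

$\mathbf{1}(\cdot)$: Indicator function.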
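The frame-differencing cue can be illustrated with a few lines of NumPy: a pixel is marked as moving when the absolute gray-level change between consecutive frames exceeds a threshold. The function name and the threshold value of 25 are assumptions chosen for this sketch; the paper's own setting is not restated here. In an OpenCV pipeline the same operation is commonly written with `cv2.absdiff` followed by `cv2.threshold`.

```python
import numpy as np

def frame_difference_mask(prev_gray, curr_gray, threshold=25):
    """Return a 0/1 mask of pixels whose gray level changed by more than threshold."""
    # Cast to a signed type first so that uint8 subtraction cannot wrap around.
    diff = np.abs(curr_gray.astype(np.int16) - prev_gray.astype(np.int16))
    return (diff > threshold).astype(np.uint8)

# Toy example: a static dark frame in which a 2x2 bright patch appears.
prev = np.zeros((4, 4), dtype=np.uint8)
curr = prev.copy()
curr[1:3, 1:3] = 200
mask = frame_difference_mask(prev, curr)
```

The signed cast matters in practice: subtracting `uint8` arrays directly would turn a small negative change into a large positive one and produce spurious motion pixels.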

Figure 3. (a) Architectural structure of YOLOv12. (b) R-ELAN architecture. The illustrations were inspired by [30].


4.3. Stillness (Appearance) Assistance

In cases where detected anomalies lose association with YOLO and become sufficiently slow or stationary such that motion-based support is ineffective, we revert to appearance-based reidentification against a stored template. We evaluate up to three appearance cues and consider the object present if any cue satisfies the acceptance criterion.

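1. Template matching via normalized cross-correlation (NCC) [43,44]

This can capture structural similarity under linear brightness changes.

$$\mathrm{NCC}(T, R) = \frac{\sum_{i} \big(T(i) - \bar{T}\big)\big(R(i) - \bar{R}\big)}{\sqrt{\sum_{i} \big(T(i) - \bar{T}\big)^{2}\ \sum_{i} \big(R(i) - \bar{R}\big)^{2}}}$$

$T$: Stored grayscale template patch of the target (fixed size).

$R$: Current grayscale ROI resized to the same size as $T$.

$\bar{T}, \bar{R}$: Mean intensities of template and ROI, respectively.

$\mathrm{NCC}(T, R)$: Normalized cross-correlation score.

This is accepted if $\mathrm{NCC}(T, R) \ge \tau_{\mathrm{NCC}}$, where $\tau_{\mathrm{NCC}}$ is the acceptance threshold for NCC (e.g., 0.72).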

2. Oriented FAST and Rotated BRIEF (ORB) keypoint matching (binary features + Hamming) [45,46]

This is rotation-aware, robust to modest viewpoint/illumination changes, and very fast. This is accepted if

$$q_{\mathrm{ORB}} \ge \tau_{\mathrm{ORB}}$$

ORB keypoints: Salient points detected in $T$ and $R$.

Binary descriptors: Bitstrings describing patches around keypoints.

Hamming distance: Number of differing bits between two descriptors.

$q_{\mathrm{ORB}}$: Match quality.

$\tau_{\mathrm{ORB}}$: Minimal ratio to accept (e.g., 0.20).

This is a practical match-coverage heuristic akin to the inlier ratio used in RANSAC-style geometric verification [47,48].

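3. Hue, saturation, and value (HSV) histogram correlation (color cue) [49,50]

We compute 2D HSV histograms, $H_T$ and $H_R$, for the template and ROI. This cue is helpful because it retains targets with a consistent color distribution despite small pose/scale changes when texture is weak. We accept the match if

$$\rho(H_T, H_R) = \frac{\sum_{b} \big(H_T(b) - \bar{H}_T\big)\big(H_R(b) - \bar{H}_R\big)}{\sqrt{\sum_{b} \big(H_T(b) - \bar{H}_T\big)^{2}\ \sum_{b} \big(H_R(b) - \bar{H}_R\big)^{2}}} \ge \tau_{\mathrm{HSV}}$$

$H_T, H_R$: 2D color histograms for template and ROI.

$\bar{H}_T, \bar{H}_R$: Mean bin values of each histogram.

$\rho(H_T, H_R)$: Pearson correlation between histograms (−1 to 1).

$\tau_{\mathrm{HSV}}$: Minimal histogram correlation to accept (e.g., 0.90).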
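Both the NCC cue and the histogram-correlation cue listed above are zero-mean normalized correlations, applied to pixel intensities in one case and to histogram bins in the other. The sketch below implements that shared statistic in NumPy and then applies the any-cue acceptance rule from the start of this subsection. The function names are hypothetical, the use of ≥ for the comparison is an assumption, and the default thresholds simply reuse the example values quoted in the text (0.72, 0.20, 0.90). In an OpenCV pipeline, `cv2.matchTemplate` with `cv2.TM_CCOEFF_NORMED` and `cv2.compareHist` with `cv2.HISTCMP_CORREL` compute the same kind of score.

```python
import numpy as np

def pearson(a, b):
    """Zero-mean normalised correlation of two equally shaped arrays."""
    a = np.asarray(a, dtype=np.float64).ravel()
    b = np.asarray(b, dtype=np.float64).ravel()
    a = a - a.mean()
    b = b - b.mean()
    denom = np.sqrt((a * a).sum() * (b * b).sum())
    return float((a * b).sum() / denom) if denom > 0 else 0.0

def still_present(ncc, orb_quality, hist_corr,
                  t_ncc=0.72, t_orb=0.20, t_hist=0.90):
    """Any-cue rule: keep the object if at least one cue reaches its threshold."""
    return ncc >= t_ncc or orb_quality >= t_orb or hist_corr >= t_hist

template = np.arange(16, dtype=np.float64).reshape(4, 4)
brighter_roi = 2.0 * template + 10.0   # linear brightness/contrast change
score = pearson(template, brighter_roi)
```

Because the statistic subtracts the mean and divides by the spread, a purely linear brightness change leaves the score at its maximum, which is the property the NCC cue relies on.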

We next integrate the foregoing components into a unified maritime-anomaly surveillance workflow (Figure 4). Input video frames of the maritime scene are processed by our trained YOLOv12 detector, which classifies objects (e.g., sky, ships, towers) and flags instances of the anomaly class. Because operational data on genuine maritime anomalies (e.g., unlawful vessels, remotely operated craft) are restricted, we designate windsurf targets as a proxy anomaly: they are small, visually distinct from transport ships, and exhibit irregular trajectories suitable for stress-testing detection and tracking. Objects classified as non-anomalies are tracked until exit, and their metadata are logged by class. For anomalies, we maintain track continuity using two complementary modules: (i) motion assistance (M), which exploits background subtraction and frame-differencing to associate motion blobs when YOLO momentarily misses the target, and (ii) stillness assistance (S), which applies appearance-based reidentification against stored templates when the target is slow or stationary and motion cues are unreliable. The system switches between M and S as object dynamics change, while YOLO re-acquires detections whenever possible. The pipeline runs to completion over each video, producing an annotated output video and CSV logs for metrics and audit trails.
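The switching behavior in this workflow can be sketched as a simple per-frame priority rule: prefer a YOLO detection, fall back to motion assistance (M), then to stillness assistance (S). This is a simplified illustration under assumptions, not the authors' code: the function name, the boolean inputs, and the `"LOST"` label are hypothetical, and real track management (for example, how long a track may stay unsupported) is left out.

```python
def choose_source(yolo_hit, motion_hit, appearance_hit):
    """Pick which module maintains an anomaly track in the current frame."""
    if yolo_hit:
        return "YOLO"   # the detector has (re-)acquired the target
    if motion_hit:
        return "M"      # motion blob associated with the track
    if appearance_hit:
        return "S"      # template re-identification succeeded
    return "LOST"       # no cue supports the track in this frame

# One tuple per frame: (yolo_hit, motion_hit, appearance_hit)
frames = [
    (True, True, True),
    (False, True, True),
    (False, False, True),
    (False, False, False),
    (True, False, False),
]
log = [choose_source(*f) for f in frames]
```

Logging the chosen source per frame, as in `log`, is one way to obtain the kind of per-module activation counts that a CSV audit trail would contain.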

4.4. Performance Metrics

When training YOLOv12 to recognize target classes (e.g., ships, windsurfs, land; see Table 2, Section 3), rigorous evaluation criteria are required to determine deployment readiness. For object detection, three core metrics, namely Precision (P), Recall (R), and mean Average Precision (mAP), with particular emphasis on mAP50, are standard and form the basis of our model assessment [51,52]. We first define the requisite terms as follows: let a binary detector produce predicted labels $\hat{y} \in \{0, 1\}$ for ground truth $y \in \{0, 1\}$. Then,

TP (true positives): $\hat{y} = 1$ and $y = 1$.

FP (false positives): $\hat{y} = 1$ and $y = 0$.

TN (true negatives): $\hat{y} = 0$ and $y = 0$.

FN (false negatives): $\hat{y} = 0$ and $y = 1$.

1. Precision (P)

Fraction of predicted positives that are actually positive:

$$P = \frac{TP}{TP + FP}$$

High Precision means few false alarms (low FP).

2. Recall (R)

Fraction of actual positives that are detected:

$$R = \frac{TP}{TP + FN}$$

High Recall means few misses (low FN).

3. Average Precision (AP) and Mean Average Precision (mAP) [53,54,55]

We first introduce Intersection over Union (IoU), as it underpins the subsequent detection metrics.

Intersection over Union (IoU)

For object detection, a predicted box, $B_p$, matches a ground-truth box, $B_{gt}$, if

$$\mathrm{IoU}(B_p, B_{gt}) = \frac{\lvert B_p \cap B_{gt} \rvert}{\lvert B_p \cup B_{gt} \rvert} \ge \tau,$$

where $\tau$ is an IoU threshold (e.g., 0.50).

Average Precision (AP) is the area under the Precision–Recall curve (with standard interpolation) [53,56,57]:

$$AP = \int_{0}^{1} p_{\mathrm{interp}}(r)\, dr \approx \sum_{n} (r_{n+1} - r_{n})\, p_{\mathrm{interp}}(r_{n+1}), \qquad p_{\mathrm{interp}}(r) = \max_{\tilde{r} \ge r} p(\tilde{r})$$

$r$: Recall level on the Precision–Recall (PR) curve; $r \in [0, 1]$.

$p(r)$: Precision measured at Recall level, $r$, after sorting detections by confidence.

$p_{\mathrm{interp}}(r)$: Interpolated Precision (ensures a non-increasing PR curve for integration).

$n$ is an index over the Recall steps.

Mean Average Precision (mAP) is the mean of AP over all classes. In modern practice, mAP@0.50 (mAP50) is the AP computed at a single IoU threshold, $\tau = 0.50$, and then averaged across classes (VOC-style).

Following offline evaluation using Precision, Recall, and mAP, we assess deployed performance on full-length videos using Accuracy (in place of mAP). Specifically, we compute object-level Accuracy against human-annotated ground-truth anomalies to quantify the proportion of correctly identified anomalies relative to all annotated instances, providing an overall measure of end-to-end detection effectiveness in operational conditions.

4. Accuracy

Overall proportion of correct predictions, useful as a general score:

$$\mathrm{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN}$$
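The metrics in this subsection can be made concrete with a short, dependency-free sketch. It computes Precision, Recall, and Accuracy from confusion counts, the IoU of two axis-aligned boxes, and an all-point interpolated AP from confidence-ranked detections. The function names and the toy inputs are assumptions for illustration, and evaluation toolkits differ in interpolation details (for example, sampling the curve at fixed recall points), so values from a specific library may deviate slightly.

```python
def precision_recall_accuracy(tp, fp, tn, fn):
    """Precision, Recall and Accuracy from confusion counts (0.0 if undefined)."""
    total = tp + fp + tn + fn
    p = tp / (tp + fp) if tp + fp else 0.0
    r = tp / (tp + fn) if tp + fn else 0.0
    acc = (tp + tn) / total if total else 0.0
    return p, r, acc

def box_iou(a, b):
    """IoU of two boxes given as (x1, y1, x2, y2)."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0

def average_precision(scored_hits, num_gt):
    """All-point interpolated AP.

    scored_hits: list of (confidence, is_true_positive) for one class, where
    is_true_positive already reflects IoU matching at the chosen threshold.
    """
    hits = sorted(scored_hits, key=lambda s: s[0], reverse=True)
    tp = fp = 0
    recalls, precisions = [], []
    for _, is_tp in hits:
        if is_tp:
            tp += 1
        else:
            fp += 1
        recalls.append(tp / num_gt)
        precisions.append(tp / (tp + fp))
    # Interpolate: make precision non-increasing as recall grows.
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])
    ap, prev_r = 0.0, 0.0
    for r, p in zip(recalls, precisions):
        ap += (r - prev_r) * p   # rectangle under the interpolated curve
        prev_r = r
    return ap

p, r, acc = precision_recall_accuracy(tp=8, fp=2, tn=85, fn=5)
ap = average_precision([(0.9, True), (0.8, False), (0.7, True)], num_gt=2)
```

In the toy ranking (one true positive, one false alarm, one true positive for two ground-truth objects), the interpolated curve contributes 0.5 × 1.0 plus 0.5 × 2/3, giving AP = 5/6 ≈ 0.833. mAP50 would then be the mean of such per-class values at an IoU threshold of 0.50.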

5. Experimental Results and Discussion

We trained our model, which is based on the YOLOv12 structure, with the datasets described in Section 3. The training of the model was performed on a laptop equipped with an NVIDIA GeForce RTX 3050 Laptop GPU and 32 GB of DDR4 system memory. We selected YOLOv12n based on a performance–efficiency trade-off observed in our preliminary benchmarking. Specifically, we trained and evaluated several YOLO-family detectors available in Ultralytics [58] for 500 epochs on our dataset. Among the tested variants, YOLOv12 consistently achieved the strongest overall performance while maintaining a moderate parameter count, which kept GPU memory usage and training time within our computational constraints (Table 3). In contrast, transformer-based or two-stage detectors such as RT-DETR, DETR, and Faster R-CNN [23] typically contain more than 30 million parameters, resulting in substantially higher training cost under the same hardware conditions. For instance, in our RT-DETR trial (≈33 M parameters), a single training epoch required approximately 7 min, implying roughly 58 h (500 × 7 min ≈ 3500 min) for a full 500-epoch run. This is markedly longer than the training time of the lightweight YOLO models evaluated in our study, which required approximately 1 h per model for the complete training schedule. Given its competitive detection Accuracy and substantially lower computational burden, YOLOv12n was, therefore, chosen as the base detector for integration into our maritime anomaly-detection fusion pipeline.

On the test set (Table 4), the YOLOv12 model attains Precision = 98.27%, Recall = 96.76%, and mAP50 = 98.77% for overall detection among all classes, indicating strong detection quality. For the designated anomaly class (windsurfs), Precision = 100% and Recall = 91.20%. This reflects the typical Precision–Recall trade-off: while false positives for windsurfs are effectively eliminated (100% Precision), a small fraction of true windsurf instances are missed (the class Recall is below the overall Recall). A Recall of 91.20% implies that, on average, approximately 9 of 10 windsurf instances are detected.

To evaluate the robustness and stability of the trained YOLOv12 model, we generated five randomized train/validation/test partitions of our dataset (327 images) using a 70:20:10 split ratio, consistent with the protocol in Table 4. As summarized in Table 5, the mean test set Precision, Recall, and mAP50 across the five split versions all exceed 90%, and the results follow the same overall trend reported in Table 4. Moreover, the variability across splits is low, with standard deviations below 1%. Collectively, these findings suggest that, despite the limited dataset size, the model exhibits stable generalization performance and shows no clear evidence of overfitting or underfitting under the evaluated split conditions.
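A repeated-split protocol of this kind can be sketched with the Python standard library. The helper below shuffles image indices with a fixed seed and cuts them at 70% and 90%; the function name, the seeds 0 to 4, and the rounding rule (truncation, with the remainder assigned to the test set) are assumptions for illustration and may differ from the procedure actually used.

```python
import random

def split_indices(n_items, ratios=(0.7, 0.2, 0.1), seed=0):
    """Return (train, val, test) index lists for one randomized partition."""
    idx = list(range(n_items))
    random.Random(seed).shuffle(idx)        # reproducible shuffle per seed
    n_train = int(n_items * ratios[0])      # truncate; remainder goes to test
    n_val = int(n_items * ratios[1])
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]

# Five partitions of a 327-image dataset, one per (hypothetical) seed.
splits = [split_indices(327, seed=s) for s in range(5)]
sizes = [tuple(len(part) for part in s) for s in splits]
```

Under these rounding assumptions each partition contains 228 training, 65 validation, and 34 test images; the per-split metrics would then be averaged and their standard deviation reported.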

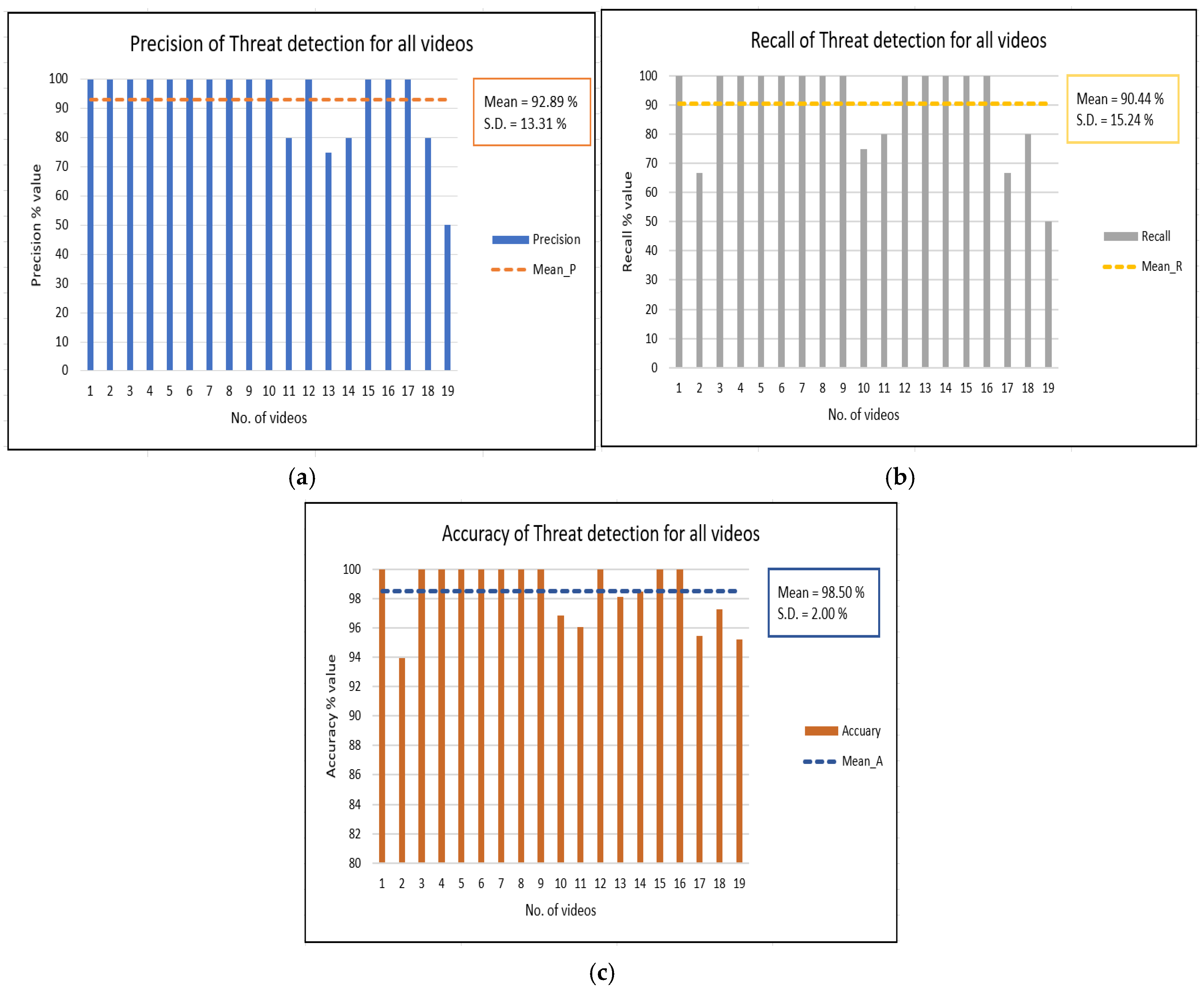

We then deployed and evaluated the trained model on 19 maritime videos captured near Bayshore MRT Station (TE29), Singapore, by a DJI Mini 2 drone, using the parameter settings listed in Table 6. Across videos, the mean Precision is 92.89% with a standard deviation (SD) of 13.31%, as shown in Figure 5a, and the mean Recall is 90.44% (SD 15.24%), as shown in Figure 5b, evidencing variability consistent with changing environmental conditions (e.g., sea state, lighting, and scale). Despite this variance, the end-to-end Accuracy averages 98.50% (SD 2.00%), as shown in Figure 5c, suggesting robust operational performance.

When the trained model was deployed on the drone-based maritime footage, YOLOv12 served as the primary detector for identifying anomalies (windsurfs). Using its learned weight parameters, YOLOv12 first localized objects in the scene and assigned class labels; instances classified as windsurfs were treated as anomalies for the purpose of this study, as illustrated in Figure 6, which shows three detected anomalies (windsurfs). When a YOLOv12-detected anomaly continued to move, but the detector temporarily lost track of it, the system activated the motion assistance module. This module relied on background subtraction and frame differencing to estimate positional changes and maintain the track until YOLOv12 re-acquired the target, as shown in Figure 7, where two anomalies are detected by YOLOv12 and one additional anomaly is maintained solely by motion assistance as a continuation of a detection from Figure 6.

In scenarios where detected anomalies gradually slowed down and became nearly stationary, leading YOLOv12 to lose track, the system first attempted to rely on motion assistance. If motion cues were insufficient, the stillness (appearance) assistance module was engaged. This module preserved the track by comparing the visual similarity of candidate regions to the last YOLOv12 detection, thereby bridging periods without reliable motion or YOLOv12 outputs. Figure 8 demonstrates this behavior, with three anomalies detected by YOLOv12 and one maintained by stillness (appearance) assistance. Finally, all three detection and tracking components, namely YOLOv12, motion assistance, and stillness (appearance) assistance, operate jointly to minimize missed detections and improve overall tracking Accuracy. This combined behavior is illustrated in Figure 9, where three anomalies are detected by YOLOv12 (including one that was previously maintained only by stillness assistance in Figure 8 and has since been re-acquired by YOLOv12), one anomaly is maintained by motion assistance (previously detected by YOLOv12 in Figure 8), and one additional anomaly is tracked exclusively by stillness (appearance) assistance, which was not detected in the earlier frame shown in Figure 8.

As summarized in Table 7, both auxiliary modules, motion assistance and stillness (appearance) assistance, provide measurable support to the baseline YOLOv12 detector during video processing. In particular, among the 19 evaluated videos, 14 contained at least one interval in which the motion assistance module was activated to sustain continuity for a YOLOv12-detected real anomaly. When counted at the event level, this corresponds to 20 of 60 true-positive anomaly instances (33.33%) benefiting from motion assistance at least once, primarily by reducing short-term tracking interruptions and helping the system recover from transient detection dropouts.

Similarly, the stillness (appearance) assistance module was triggered less frequently but still contributed to detection stability. Specifically, 4 of 19 videos (or 6 of 60 true-positive instances, 10.00%) relied on stillness assistance to preserve track continuity. This pattern is consistent with the intended design: appearance-based support is most beneficial under conditions where motion cues are weak or ambiguous (e.g., low relative motion, brief stationary behavior, or subtle object displacement), whereas motion assistance is more broadly applicable in dynamic scenes.

Although the absolute proportions are not dominant, these results are important for two reasons. First, they indicate that the proposed fusion pipeline provides robustness gains rather than headline improvements in detector Accuracy. In practical maritime surveillance, operational failures can arise not only from persistent misdetections but also from intermittent instability—for example, momentary misses due to waves, glare, compression artifacts, partial occlusion, or rapid viewpoint changes. Even occasional activation of assistance modules can, therefore, reduce fragmented tracks, stabilize temporal reasoning, and improve downstream analytics (e.g., duration, trajectory, and event-level reporting).

Second, the observed contribution supports the role of our fusion approach as a supplementary reliability layer rather than a competing detection architecture. The assistance modules are designed to “bridge” short detection gaps and prevent track loss, enabling YOLOv12 to operate more consistently when deployed in real-world maritime environments where appearance and motion conditions vary substantially across time and locations. Consequently, the fusion model primarily enhances deployment readiness (improving continuity and operational stability) rather than replacing the underlying detector. Additional details of the ablation study are provided in Table A1 in Appendix A.

Taken together, the results support that the integrated pipeline—YOLOv12 with motion assistance and stillness (appearance) assistance—performs reliably on real maritime footage, with high overall Accuracy and acceptable variability across scenes.

Generalization

Given the relatively limited size of our in-house dataset, we augmented it by integrating the drone-based maritime AFO dataset [59], which was also adopted in [23]. This dataset includes a “wind/sup-board” category that is closely aligned with our “windsurf” class, enabling a more consistent representation of the target anomaly category across sources. In addition, the AFO dataset provides finer-grained maritime object labels (such as humans, boats, buoys, sailboats, and kayaks), whereas our original annotations primarily consolidated non-target vessels into a single “ship” category, reflecting the generally larger object scale and reduced class diversity observed in our footage. We merged the two datasets and repartitioned the combined corpus into training, validation, and test sets using a 60:10:30 split, as shown in Table 8. The relatively large test proportion was selected intentionally to evaluate model robustness and generalization under a more diverse data distribution and a stricter held-out evaluation setting. The corresponding performance results on this combined dataset are reported in Table 9.

After training on the merged (our dataset + AFO) corpus, we evaluated the resulting model on an external set of drone-recorded maritime videos from a surveillance-and-rescue dataset [61], which was also used in [23]. This MOBDrone dataset was selected as a test case because its label taxonomy aligns well with the class definitions in our combined training set. Specifically, it includes person (corresponding to the human class), boat, wood, and life buoys, as well as surfboards, which serve as the closest counterpart to our windsurf anomaly category. The example results of this deployment study are presented in Figure 10.

On the tested device (our laptop), the serialized multi-module pipeline did not achieve full real-time operation on a 30 FPS input stream. For example, on the 16.27 s clip (488 frames, 30.00 FPS) in Figure 10, the system achieved 5.44 FPS (0.18× real-time), with 179.93 ms/frame mean latency (p95 203.03 ms). Runtime profiling shows that the overhead is distributed across modules, with YOLO inference at 71.05 ms/frame and motion assistance at 73.08 ms/frame, and the remaining 35.80 ms/frame attributed to tracking/visualization/I/O. Power telemetry (nvidia-smi) reported a mean run-phase power of 11.59 W (run-phase energy 0.2894 Wh), with an idle baseline of 10.18 W for this run. Based on the measured throughput, a practical real-time deployment on the same device can be achieved via frame skipping, with an empirically recommended processing rate of approximately 4.8 FPS (proc_fps ≈ 4–6 for 30 FPS inputs), which preserves continuous monitoring while keeping computation bounded. If full-rate processing closer to the input FPS is required, the same serialized architecture can be deployed on an edge platform with higher GPU compute and memory bandwidth (or hardware-accelerated decoding), which is expected to raise end-to-end throughput and better support continuous real-time operation in practical engineering settings.
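The runtime figures quoted above can be cross-checked with simple arithmetic, and the same arithmetic suggests a frame-skipping stride. The helper names below are hypothetical and the stride rule (the smallest integer stride whose processing rate does not exceed the measured throughput) is an assumption made for this sketch rather than the authors' scheduling policy.

```python
import math

def realtime_factor(proc_fps, input_fps):
    """Fraction of real time achieved (1.0 means the pipeline keeps up)."""
    return proc_fps / input_fps

def frame_stride(input_fps, proc_fps):
    """Smallest k such that processing every k-th frame keeps up with the input."""
    return math.ceil(input_fps / proc_fps)

# Per-frame stage timings reported in the text (ms/frame).
yolo_ms, motion_ms, other_ms = 71.05, 73.08, 35.80
mean_latency_ms = yolo_ms + motion_ms + other_ms

factor = realtime_factor(5.44, 30.0)   # measured throughput vs. input rate
stride = frame_stride(30.0, 5.44)      # frames to advance per processed frame
effective_fps = 30.0 / stride          # resulting processing rate
```

The three stage timings sum to the reported 179.93 ms/frame, the real-time factor rounds to 0.18, and a stride of 6 yields a processing rate of 5 FPS, which lies inside the 4 to 6 FPS range recommended in the text.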

Overall, the cross-dataset evaluation across training, testing, and deployment suggests that the proposed YOLO-fusion model is well suited as a supplementary module rather than a replacement detector. In particular, it can be integrated with larger and more diverse datasets and combined with existing maritime object-detection pipelines that primarily optimize per-frame Accuracy, providing additional benefits for video-based operation by improving tracking continuity and reducing intermittent detection loss during deployment.

6. Conclusions

Maritime surveillance and security are critical for maintaining safe and efficient sea logistics, port operations, and coastal activities in the presence of anomalies such as unlawful maritime activities, security-related incidents, and anomalous events (e.g., extreme waves or aggressive marine wildlife). In this study, we proposed a hybrid drone-based maritime monitoring framework that integrates YOLOv12 with a motion assistance module for moving targets and a stillness (appearance) assistance module for slow or stationary targets. The system was trained on a custom dataset collected using a DJI Mini 2 drone around the port area near Bayshore MRT Station (TE29), Singapore, where windsurfers were used as proxy (dummy) anomalies due to the unavailability of real anomaly footage, which is restricted for security reasons.

The experimental results show that the trained model achieves more than 90% Precision, Recall, and mAP50 across all classes on the detection test set. When deployed on real maritime video sequences, the pipeline attains a mean Precision of 92.89% (SD 13.31%), a mean Recall of 90.44% (SD 15.24%), and a mean Accuracy of 98.50% (SD 2.00%). These results suggest that the proposed framework is robust and shows strong potential for real-world maritime anomaly detection, despite some variation in performance across scenarios.

However, this work also has several limitations that open avenues for further research. First, the use of windsurfers as proxy anomalies should be replaced or complemented with genuine anomaly footage obtained from relevant authorities to better reflect operational conditions and anomaly behavior. Second, the current dataset is limited to a single geographic area and sensor platform; future studies should evaluate the model across multiple ports, environmental conditions (e.g., adverse weather, nighttime), and different UAV platforms to assess generalization. Third, the framework could be extended to incorporate additional sensors (such as thermal cameras or radar), more diverse anomaly classes, and advanced temporal or multi-object tracking algorithms to further enhance robustness under heavy clutter and occlusion. Finally, real-time deployment tests, human-in-the-loop evaluation, and integration with existing maritime command-and-control systems would be valuable steps toward transitioning this proof-of-concept into an operational maritime and coastal security tool.