4.1. Dataset Description

The TIB UAV dataset [35] consists of 2850 image samples covering various types of UAVs, primarily multi-rotor and fixed-wing categories. Representative example images are shown in Figure 7a. The dataset includes image samples captured under various illumination conditions and contains challenging cases such as extremely small targets, motion blur, and complex background environments. It is divided into three subsets: a training set with 1994 samples, a validation set with 428 samples, and a test set with 428 samples.
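Only the subset sizes are reported, not how the images were assigned to them. As an illustration only, the following sketch produces a reproducible, disjoint three-way split with the TIB subset sizes (1994/428/428). The seeded random shuffle, the helper name `split_dataset`, and the placeholder file names are assumptions, not the authors' documented procedure.

```python
import random
from typing import List, Sequence, Tuple


def split_dataset(items: Sequence[str], n_val: int, n_test: int,
                  seed: int = 0) -> Tuple[List[str], List[str], List[str]]:
    """Hypothetical helper: shuffle once with a fixed seed, then cut into train/val/test."""
    if n_val + n_test > len(items):
        raise ValueError("validation + test sizes exceed the dataset size")
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)  # local RNG keeps the split reproducible
    val = shuffled[:n_val]
    test = shuffled[n_val:n_val + n_test]
    train = shuffled[n_val + n_test:]  # everything left over goes to training
    return train, val, test


# Usage with the TIB subset sizes and placeholder file names.
images = [f"tib_{i:04d}.jpg" for i in range(2850)]
train, val, test = split_dataset(images, n_val=428, n_test=428)
print(len(train), len(val), len(test))  # 1994 428 428
```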

The Drone-vs-Bird UAV dataset [36] contains 77 video sequences covering seven types of UAVs; sample frames are shown in Figure 7b. The dataset includes footage captured under diverse environmental conditions, including sky and vegetation backgrounds, varying weather, and complex illumination with direct sunlight and glare, as well as data recorded with different camera parameter settings. To reduce computational overhead, we systematically extracted 2168 representative frames from the original videos and divided them into three subsets: 1517 images for training, 325 images for validation, and 325 images for testing. This provides sufficient data for subsequent algorithm validation and performance evaluation.
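The frame-extraction procedure is described only as systematic. A minimal sketch, assuming uniform temporal sampling (evenly spaced frame indices within each video, which the text does not confirm), might look like this; `sample_frame_indices` is a hypothetical helper.

```python
from typing import List


def sample_frame_indices(num_frames: int, num_samples: int) -> List[int]:
    """Hypothetical helper: evenly spaced frame indices from the first to the last frame."""
    if num_frames <= 0 or num_samples <= 0:
        return []
    if num_samples >= num_frames:
        return list(range(num_frames))  # short clip: keep every frame
    if num_samples == 1:
        return [num_frames // 2]  # single sample: take the middle frame
    # Integer arithmetic avoids floating-point rounding surprises.
    span = num_frames - 1
    return [i * span // (num_samples - 1) for i in range(num_samples)]


print(sample_frame_indices(100, 5))  # [0, 24, 49, 74, 99]
```

Because the spacing is always greater than one frame when fewer samples than frames are requested, the returned indices are strictly increasing and never repeat a frame.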

In this study, the aforementioned datasets are used to validate the effectiveness and generalization capability of the proposed improved model. As shown in Figure 8, the vast majority of UAV targets in both datasets occupy regions smaller than 25 × 25 pixels. According to the MS COCO definition [37], objects with an area smaller than 32 × 32 pixels are categorized as small objects, so nearly all targets here are small objects, which places high demands on the model's recognition and localization capabilities. These datasets are therefore well suited to evaluating the proposed method's performance in UAV small-object detection.
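The COCO size rule can be written as a one-line area test. The sketch below applies the standard COCO thresholds (small: area < 32², medium: area < 96², large: otherwise). COCO itself measures the annotated object area; using the bounding-box area width × height as a proxy for box-only annotations is an assumption made here, and `coco_size_category` is a hypothetical name.

```python
def coco_size_category(width: float, height: float) -> str:
    """Classify a box by area using the MS COCO small/medium/large thresholds."""
    area = width * height  # box area used as a proxy for the object area
    if area < 32 ** 2:
        return "small"
    if area < 96 ** 2:
        return "medium"
    return "large"


print(coco_size_category(25, 25))  # small  (625 px² < 1024 px²)
print(coco_size_category(40, 30))  # medium (1200 px²)
```

Under this rule, a typical 25 × 25 UAV box from Figure 8 falls well inside the small-object range.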

Figure 7. Sample images from different scenarios in the TIB and Drone-vs-Bird datasets. (a) TIB; (b) Drone-vs-Bird.

Figure 8. Pixel-size distribution of UAV targets in the TIB and Drone-vs-Bird datasets (darker colors indicate higher counts). (a) TIB; (b) Drone-vs-Bird.

4.2. Experimental Setup and Evaluation Metrics

All experiments were conducted on a system equipped with an NVIDIA GeForce RTX 3060 GPU and an Intel Core i7-12700 CPU. All models were trained from scratch, and the other key training parameters, kept constant across experiments, are listed in Table 1.

To evaluate the effectiveness of the EFPNet model, several standard metrics commonly used in object detection tasks were adopted for comparative analysis. Precision ($P$) represents the ratio of correctly predicted targets to all detected targets, and recall ($R$) denotes the ratio of correctly detected targets to all actual targets present in the dataset. They are calculated as follows:

$$P = \frac{TP}{TP + FP}, \qquad R = \frac{TP}{TP + FN}$$

where $TP$ denotes true positives (correct detections), $FP$ denotes false positives (incorrect detections), and $FN$ represents false negatives (missed detections).
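The two ratios translate directly into code. In this sketch, the counts are hypothetical, and returning 0.0 when a denominator is zero is a convention chosen here rather than something the text specifies.

```python
def precision_recall(tp: int, fp: int, fn: int) -> tuple[float, float]:
    """P = TP / (TP + FP) and R = TP / (TP + FN); 0.0 when a denominator is zero."""
    precision = tp / (tp + fp) if tp + fp > 0 else 0.0
    recall = tp / (tp + fn) if tp + fn > 0 else 0.0
    return precision, recall


# Hypothetical counts: 90 correct boxes, 10 spurious boxes, 5 missed UAVs.
p, r = precision_recall(tp=90, fp=10, fn=5)
print(f"P = {p:.3f}, R = {r:.3f}")  # P = 0.900, R = 0.947
```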

The Average Precision ($AP$) refers to the area under the precision–recall curve, and the mean Average Precision ($mAP$) is the average of the $AP$ values across all categories:

$$AP = \int_{0}^{1} P(R)\,\mathrm{d}R, \qquad mAP = \frac{1}{k}\sum_{i=1}^{k} AP_i$$

where $k$ denotes the number of evaluated categories. Two mAP metrics are employed in this paper: mAP50 (computed at an IoU threshold of 0.5) and mAP50:95 (averaged across IoU thresholds ranging from 0.5 to 0.95).
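To make the two definitions concrete, the sketch computes box IoU, AP as the area under an interpolated precision–recall curve, and mAP as the mean over categories. It uses all-point interpolation (PASCAL VOC style); COCO-style evaluators sample 101 recall points instead, so values can differ slightly. The (x1, y1, x2, y2) box format, the helper names, and the 0.05 threshold step (the usual COCO convention for mAP50:95) are assumptions, not details taken from the text.

```python
import numpy as np


def box_iou(a, b) -> float:
    """IoU of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def average_precision(recall, precision) -> float:
    """Area under the PR curve with all-point interpolation.

    `recall` must be non-decreasing (detections ranked by descending confidence).
    """
    r = np.concatenate(([0.0], np.asarray(recall, dtype=float), [1.0]))
    p = np.concatenate(([0.0], np.asarray(precision, dtype=float), [0.0]))
    # Precision envelope: each p[i] becomes the max precision at any recall >= r[i].
    p = np.flip(np.maximum.accumulate(np.flip(p)))
    # Sum rectangle areas wherever recall changes.
    idx = np.where(r[1:] != r[:-1])[0]
    return float(np.sum((r[idx + 1] - r[idx]) * p[idx + 1]))


def mean_ap(ap_per_class) -> float:
    """mAP = (1/k) * sum of AP_i over the k evaluated categories."""
    return float(np.mean(ap_per_class))


# mAP50 uses IoU >= 0.5 only; mAP50:95 averages mAP over these ten thresholds.
IOU_THRESHOLDS = np.linspace(0.5, 0.95, 10)

print(box_iou((0, 0, 10, 10), (5, 0, 15, 10)))   # ≈ 0.333
print(average_precision([0.5, 1.0], [1.0, 0.5]))  # 0.75
```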

GFLOPs (billions of floating-point operations per forward pass) and Params (the number of learnable parameters) are used to assess model complexity, while FPS (frames per second) is used to evaluate detection speed.
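The text does not state how FPS was timed (batch size, whether pre- and post-processing were included, numeric precision). A minimal sketch, assuming per-image timing after a short warm-up, is shown below; `measure_fps` is a hypothetical helper. For a PyTorch model on the GPU, kernels run asynchronously, so `torch.cuda.synchronize()` should be called before reading the timer, and Params can be counted as `sum(p.numel() for p in model.parameters())`.

```python
import time
from typing import Any, Callable, Sequence


def measure_fps(infer: Callable[[Any], Any], frames: Sequence[Any], warmup: int = 10) -> float:
    """Average frames per second of `infer` over `frames`, after untimed warm-up runs."""
    if not frames:
        raise ValueError("need at least one frame to time")
    for frame in frames[:warmup]:
        infer(frame)  # warm-up: caches, lazy initialisation, etc. are not timed
    start = time.perf_counter()
    for frame in frames:
        infer(frame)
    elapsed = time.perf_counter() - start
    return len(frames) / max(elapsed, 1e-12)


# Usage with a dummy workload standing in for a detector.
fps = measure_fps(lambda n: sum(i * i for i in range(n)), [1000] * 50)
print(f"{fps:.1f} FPS")
```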

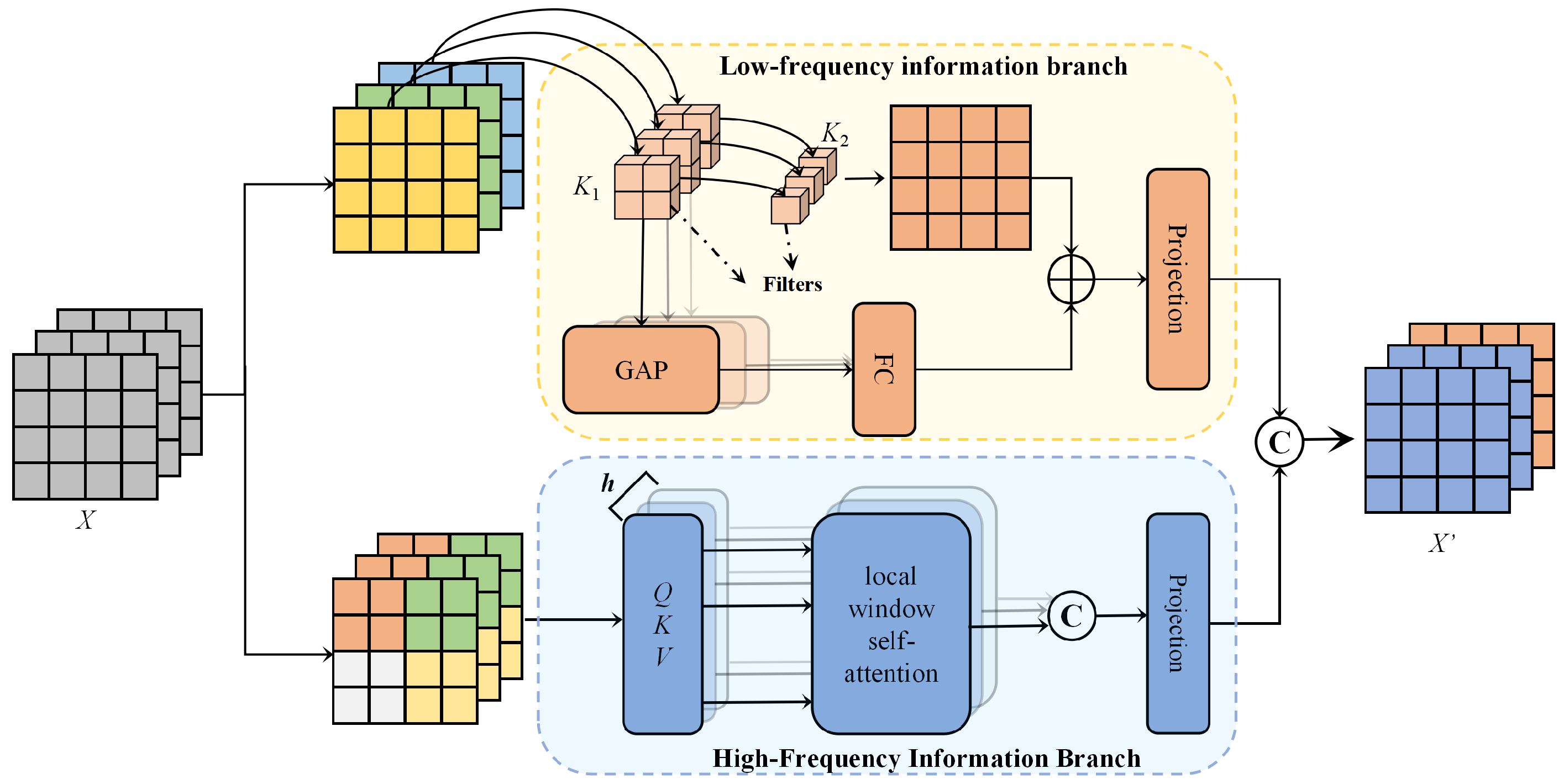

4.5. Ablation Experiment

To investigate the impact of each improvement on model performance, computational complexity, and parameter size, ablation experiments were conducted on the TIB UAV dataset using the EFPNet model. The model’s accuracy on the test set was recorded under non-pretrained conditions. The experiments examined three factors: (A) replacing the AIFI module in RT-DETR with the proposed high–low frequency interaction HCM-Attn module; (B) introducing the DySample dynamic upsampler; and (C) incorporating the PSFT-Net cross-scale feature fusion structure. The results are presented in Table 4 and Figure 9a.

The results indicate that the baseline model achieved a precision of 92.0%, a recall of 91.8%, an mAP50 of 90.9%, an mAP50:95 of 40.1%, 57.3 GFLOPs, 19,974,480 parameters, and 84.7 FPS. In Experiments 2, 3, and 4, where a single module was added, precision, recall, and mAP50 all improved over the baseline, supporting the effectiveness of each proposed component; however, adding module C alone reduced the speed to 45.8 FPS. Among the two-module combinations, B + C achieved the highest precision (94.6%), recall (94.8%), and mAP50 (93.9%); A + C obtained the best mAP50:95 (43.3%); and A + B reached the highest speed (86.1 FPS). When all three modules were integrated, the model achieved the best precision, recall, and mAP50 of all configurations. Compared with the baseline, P, R, mAP50, and mAP50:95 improved by 2.8, 3.1, 3.2, and 3.0 percentage points, respectively, while GFLOPs decreased by 1.4 and the number of parameters was reduced by approximately 0.16 M; the speed decreased from 84.7 to 62.5 FPS. Notably, the joint deployment of modules A and B slightly increased GFLOPs (57.5 vs. 57.3). This is primarily attributed to the feature-dimension mismatch between the output of HCM-Attn and the input of DySample, which required additional convolutional layers at the implementation level for channel alignment and feature mapping.
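The improvements above are absolute differences (percentage points), not relative gains. The sketch recomputes them from the Test 1 and Test 8 rows of Table 4 and contrasts them with a relative change, here for FPS. The dictionary layout and the helper names `point_gains` and `relative_change` are illustrative choices, not part of the original work.

```python
# Test 1 (baseline) and Test 8 (A + B + C) copied from Table 4 (TIB).
BASELINE = {"P": 92.0, "R": 91.8, "mAP50": 90.9, "mAP50:95": 40.1,
            "GFLOPs": 57.3, "Params": 19_974_480, "FPS": 84.7}
FULL = {"P": 94.8, "R": 94.9, "mAP50": 94.1, "mAP50:95": 43.1,
        "GFLOPs": 55.9, "Params": 19_818_608, "FPS": 62.5}


def point_gains(new: dict, old: dict, keys) -> dict:
    """Absolute differences, i.e. percentage points for metrics already given in %."""
    return {k: round(new[k] - old[k], 1) for k in keys}


def relative_change(new: float, old: float) -> float:
    """Relative change, in percent of the old value."""
    return (new - old) / old * 100.0


gains = point_gains(FULL, BASELINE, ["P", "R", "mAP50", "mAP50:95", "GFLOPs"])
print(gains)  # {'P': 2.8, 'R': 3.1, 'mAP50': 3.2, 'mAP50:95': 3.0, 'GFLOPs': -1.4}
print(BASELINE["Params"] - FULL["Params"])  # 155872 fewer parameters (~0.16 M)
print(round(relative_change(FULL["FPS"], BASELINE["FPS"]), 1))  # -26.2
```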

Supplementary experiments were conducted on the Drone-vs-Bird UAV dataset; the results are shown in Table 5 and Figure 9b. The overall trend is consistent with that on TIB: the full model attains the highest precision (98.3%) and ties with A + B for the highest mAP50 (98.1%), although B + C gives a slightly higher recall (97.8%) and C alone a slightly higher mAP50:95 (58.7%). These results further support the robustness of the proposed method and indicate that the HCM-Attn, DySample, and PSFT-Net modules are complementary. When detecting UAV targets, they help integrate deep and shallow features while suppressing interference from complex backgrounds, thereby yielding more accurate detection results.
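The per-metric comparison can be checked mechanically. This sketch copies the accuracy columns of Table 5 and lists, for each metric, every test that reaches the column maximum, keeping ties. The helper name `best_tests` is hypothetical.

```python
# Accuracy columns of Table 5 (Drone-vs-Bird): test number -> (P, R, mAP50, mAP50:95).
TABLE5 = {
    1: (97.3, 96.6, 96.2, 55.3),
    2: (97.6, 96.3, 97.3, 56.1),
    3: (97.3, 96.5, 97.2, 55.5),
    4: (97.0, 96.3, 97.5, 58.7),
    5: (97.9, 97.0, 98.1, 56.4),
    6: (97.5, 97.0, 97.6, 57.9),
    7: (97.3, 97.8, 97.9, 57.3),
    8: (98.3, 97.6, 98.1, 58.3),
}
METRICS = ("P", "R", "mAP50", "mAP50:95")


def best_tests(table: dict) -> dict:
    """For each metric, list every test whose value equals the column maximum (ties kept)."""
    best = {}
    for col, name in enumerate(METRICS):
        top = max(row[col] for row in table.values())
        best[name] = [test for test, row in table.items() if row[col] == top]
    return best


print(best_tests(TABLE5))
# {'P': [8], 'R': [7], 'mAP50': [5, 8], 'mAP50:95': [4]}
```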

Table 4. Results of TIB dataset ablation experiments.

| Test No. | A | B | C | P (%) | R (%) | mAP50 (%) | mAP50:95 (%) | GFLOPs | Params | FPS |
|---|---|---|---|---|---|---|---|---|---|---|
| 1 | × | × | × | 92.0 | 91.8 | 90.9 | 40.1 | 57.3 | 19,974,480 | 84.7 |
| 2 | ✓ | × | × | 92.5 | 92.3 | 91.0 | 40.0 | 57.1 | 19,940,944 | 87.4 |
| 3 | × | ✓ | × | 93.2 | 93.2 | 91.4 | 41.6 | 57.3 | 19,990,928 | 85.0 |
| 4 | × | × | ✓ | 93.5 | 93.4 | 92.1 | 41.6 | 55.6 | 19,852,144 | 45.8 |
| 5 | ✓ | ✓ | × | 93.2 | 92.2 | 90.8 | 40.2 | 57.5 | 19,957,392 | 86.1 |
| 6 | ✓ | × | ✓ | 94.1 | 94.0 | 92.5 | 43.3 | 56.2 | 19,900,656 | 62.6 |
| 7 | × | ✓ | ✓ | 94.6 | 94.8 | 93.9 | 43.0 | 56.1 | 19,950,640 | 61.5 |
| 8 | ✓ | ✓ | ✓ | 94.8 | 94.9 | 94.1 | 43.1 | 55.9 | 19,818,608 | 62.5 |

Table 5. Results of Drone-vs-Bird dataset ablation experiments.

| Test No. | A | B | C | P (%) | R (%) | mAP50 (%) | mAP50:95 (%) | GFLOPs | Params | FPS |
|---|---|---|---|---|---|---|---|---|---|---|
| 1 | × | × | × | 97.3 | 96.6 | 96.2 | 55.3 | 57.3 | 19,974,480 | 85.9 |
| 2 | ✓ | × | × | 97.6 | 96.3 | 97.3 | 56.1 | 57.1 | 19,940,944 | 87.8 |
| 3 | × | ✓ | × | 97.3 | 96.5 | 97.2 | 55.5 | 57.3 | 19,990,928 | 85.3 |
| 4 | × | × | ✓ | 97.0 | 96.3 | 97.5 | 58.7 | 55.6 | 19,852,144 | 47.5 |
| 5 | ✓ | ✓ | × | 97.9 | 97.0 | 98.1 | 56.4 | 57.5 | 19,957,392 | 80.8 |
| 6 | ✓ | × | ✓ | 97.5 | 97.0 | 97.6 | 57.9 | 56.2 | 19,900,656 | 57.5 |
| 7 | × | ✓ | ✓ | 97.3 | 97.8 | 97.9 | 57.3 | 56.1 | 19,950,640 | 59.9 |
| 8 | ✓ | ✓ | ✓ | 98.3 | 97.6 | 98.1 | 58.3 | 55.9 | 19,818,608 | 60.1 |

Figure 9. Visualization of selected metrics from the ablation experiments. (a) TIB; (b) Drone-vs-Bird.

To comprehensively evaluate the performance of EFPNet in various UAV small-object-detection scenarios, HiResCAM [40] was employed to generate and compare heatmaps before and after applying the proposed mechanism. Three representative scenarios were selected for experimentation: (1) scenes with complex background interference, (2) scenes involving confusion with similar targets, and (3) scenes containing multiple detection targets. The comparative visualization results are presented in Figure 10.
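For readers unfamiliar with HiResCAM, the difference from Grad-CAM fits in a few lines. HiResCAM multiplies the gradient of the target score element-wise with the feature map and sums over channels. Grad-CAM first averages each channel's gradient over space. The sketch assumes the (C, H, W) activations and gradients of some chosen layer are already available; the text does not say which layer or target score was used. Clipping negatives and normalizing to [0, 1] for display is a choice made here, and the function names are hypothetical.

```python
import numpy as np


def _to_heatmap(cam: np.ndarray) -> np.ndarray:
    """Keep positive evidence and scale to [0, 1] for display."""
    cam = np.maximum(cam, 0.0)
    peak = cam.max()
    return cam / peak if peak > 0 else cam


def hirescam(activations: np.ndarray, gradients: np.ndarray) -> np.ndarray:
    """HiResCAM: element-wise gradient * activation, summed over the channel axis."""
    if activations.shape != gradients.shape:
        raise ValueError("activations and gradients must both be (C, H, W)")
    return _to_heatmap((gradients * activations).sum(axis=0))


def gradcam(activations: np.ndarray, gradients: np.ndarray) -> np.ndarray:
    """Grad-CAM, for comparison: one weight per channel = spatially averaged gradient."""
    weights = gradients.mean(axis=(1, 2), keepdims=True)
    return _to_heatmap((weights * activations).sum(axis=0))


# Toy example: positive gradient at the top-left cell, negative at the top-right cell.
A = np.ones((1, 2, 2))
G = np.array([[[1.0, -1.0], [0.0, 0.0]]])
print(hirescam(A, G))  # highlights only the top-left cell
print(gradcam(A, G))   # all zeros: the averaged gradient cancels out
```

The toy case shows why HiResCAM is preferred for small targets. Spatial averaging can cancel localized evidence, whereas the element-wise product keeps it at the position where it occurs.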

In Scenario 1, the highly complex background and the strong texture similarity between UAV targets and their surroundings made it difficult for the baseline model to extract discriminative features. Its attention therefore spread over irrelevant background regions, often producing false detections in non-target areas (see Figure 10d). In Scenario 2, objects whose texture closely resembles that of UAVs (e.g., birds) were present, and the baseline model, lacking sufficient discriminative capacity, mistakenly identified them as UAVs (see Figure 10e). In Scenario 3, which contains multiple UAV targets, the spatial separation of the targets made the baseline model's multi-head attention relatively diffuse. Consequently, it struggled to distinguish multiple target regions within the same feature map, resulting in overlapping or missed detection boxes (see Figure 10f). With EFPNet, the improved attention mechanism and the detail-oriented feature fusion structure enabled the model to suppress background interference and become more sensitive to small targets and local details, yielding higher detection recall and better target differentiation. As shown in Figure 10g–i, the model focuses more precisely on target regions within complex images and largely ignores the background and pseudo-targets (e.g., birds). This reduces false and missed detections and thereby improves overall detection accuracy.

Figure 10. Visualization of heatmaps generated by ablation experiments. (a–c) Original images; (d–f) Heatmap visualization before applying EFPNet; (g–i) Heatmap visualization after applying EFPNet.

4.6. Comparison of Different Detectors

To evaluate the performance of the proposed EFPNet, we compared it against a series of classical and state-of-the-art detectors on the TIB and Drone-vs-Bird datasets. The models used in the experiments are Faster R-CNN [15], YOLOv5-m [41], YOLOv8-m [42], YOLOv11-m [43], Deformable DETR [44], UAV-DETR [45], and the proposed EFPNet, labeled as models A–G, respectively. Each model was evaluated using the aforementioned metrics, and the results are presented in Table 6 and Table 7.

On the TIB dataset, model G (EFPNet) demonstrated the best overall performance, achieving a precision of 94.8%, a recall of 94.9%, an mAP50 of 94.1%, and an mAP50:95 of 43.1%, the highest values among all tested models. Compared with the next-best model, F (UAV-DETR), EFPNet improved mAP50 and mAP50:95 by 0.4 and 2.2 percentage points, respectively, while reducing GFLOPs and parameter count by 23.2% and 7.2%. The classical YOLO-series models performed well in terms of FPS, with model B (YOLOv5-m) reaching the highest speed of 102.9 FPS; however, they showed lower detection accuracy and higher GFLOPs and parameter counts. These results demonstrate that EFPNet effectively improves UAV-target detection performance.

On the Drone-vs-Bird dataset, EFPNet again outperformed all other networks on every accuracy metric, achieving an mAP50 of 98.1% and an mAP50:95 of 58.3%. This indicates that EFPNet maintains high detection accuracy and robustness across different UAV scenarios, with strong precision and recall.

Figure 11 illustrates the detection results of the seven algorithms in typical complex scenarios, including urban architecture, strong illumination, low-light conditions, vegetation interference, and cloudy weather. Whereas the other algorithms exhibit missed detections or false positives in some of these scenarios, EFPNet extracts effective features and detects the targets accurately in all of them, while maintaining high confidence scores under identical conditions.

Overall, the experimental results demonstrate that EFPNet achieves superior detection accuracy while maintaining a processing speed of over 60 FPS on the test hardware (Tables 4 and 5). These findings support the effectiveness and applicability of EFPNet for UAV-target detection in complex background environments, where it strikes a good balance between accuracy and efficiency and delivers competitive overall results on both datasets.

Figure 11. Comparison of detection results across models.
