1. Introduction

Aircraft detection in remote sensing imagery is crucial in a range of applications. In civil aviation and the aerospace industry, it helps identify other aircraft, drones, or obstacles around an aircraft to prevent collisions and enhance aviation safety. It also aids the real-time monitoring and tracking of civil aviation flights, cargo planes, and private aircraft to confirm their flight paths and status. In the military domain, it is used to identify and track enemy aircraft, perform aerial reconnaissance, gather intelligence, and support aerial strikes and combat. In emergencies such as an aircraft disappearing or deviating from its course, it assists search and rescue operations in locating the aircraft and its passengers.

The breadth of application domains in remote sensing object detection is matched by the complexity of its data sources. For remote sensing targets in particular, detection effectiveness depends not only on the reliability of the algorithm but also on that of the data source. From acquiring raw data to feeding them into the network, considerations extend beyond complex scenes to factors such as imaging conditions, resolution, and storage processes. During imaging, sensor size, atmospheric conditions, observation time, and lighting all affect the result. For instance, adverse weather such as rain and fog significantly degrades imaging quality, necessitating image dehazing during processing. Image denoising is likewise crucial in preprocessing because of noise introduced by signal transmission, dark current, and random effects.

On the algorithm side, training requires attention to sample quantity, scene types, target categories, and annotation quality so that high-quality datasets are selected. Improving recognition involves designing new network structures, adjusting training strategies, and selecting parameters. Since algorithms are often deployed on hardware with low computing power and limited memory, compressing network volume and parameter size is vital. Given these considerations, selecting a suitable algorithm framework and dataset is paramount.

Currently, remote sensing object detection faces several challenges. First, it relies on a single viewing perspective, so the useful information is limited to what an overhead view provides. Second, remote sensing targets tend to be small, with minimal differences between types, which makes fine-grained identification difficult. Most significantly, the large spatial extent of remote sensing images leads to substantial computing power consumption and inefficient detection. To address these challenges, various high-performing algorithms have been applied to remote sensing object detection, such as the R-CNN series [1,2,3], the YOLO series [4,5,6,7,8], and DETR [9]. Nonetheless, deploying these algorithms on mobile hardware platforms with constrained computing and memory resources is difficult because of their large network size and complex structures. In high-altitude environments where real-time imaging and object detection are crucial, available memory and computational resources are severely limited. In terms of memory usage, after these algorithms are trained on the same dataset, the resulting model files typically range from a few megabytes to hundreds of megabytes, and some are larger still, which is impractical for such platforms. From a computational perspective, existing remote sensing aircraft detection networks prioritize performance metrics, which often leads to increased network depth and width. While this may improve performance, it also substantially increases memory and computation costs. For instance, the FLOPs of YOLOv5n can reach as high as 4.3 G, which makes existing algorithms impractical for deployment on some mobile hardware platforms.
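To make the link between parameter count and model file size concrete, the following minimal sketch estimates weight storage as the number of parameters times the bytes used per parameter. The parameter counts are hypothetical round numbers chosen only for illustration, not measurements of any network cited here, and real checkpoint files may additionally hold metadata or optimizer state.

```python
def model_size_mb(num_params: int, bytes_per_param: int = 4) -> float:
    """Approximate weight storage in megabytes (1 MB = 10**6 bytes).

    bytes_per_param is 4 for float32 weights and 2 for float16 weights.
    """
    return num_params * bytes_per_param / 1e6


# Hypothetical parameter counts, for illustration only.
examples = {"small detector": 2_000_000, "large detector": 60_000_000}

for name, n in examples.items():
    fp32 = model_size_mb(n)
    fp16 = model_size_mb(n, bytes_per_param=2)
    print(f"{name}: {fp32:.1f} MB (float32), {fp16:.1f} MB (float16)")
```

Under these assumptions, storage grows linearly with parameter count, which is why reducing parameters is the most direct way to shrink a model file.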

In response, several lightweight networks have been proposed. Examples include the MobileNet series [10,11,12], the GhostNet series [13], the PP-LCNet series [14], and the Shufflenet series [15,16]. However, each of these has shortcomings in detection performance, network size, or resource consumption, and none strikes a satisfactory balance between detection performance and memory and computational cost. For example, GhostNet and MobileNet have excessively large parameter counts, while PP-LCNet exhibits slightly inferior detection performance. This makes it difficult to meet practical application requirements. Therefore, while keeping detection performance unchanged or only slightly reduced, the focus should be on significantly reducing network parameters and computational load to achieve a better balance between detection performance and resource consumption.

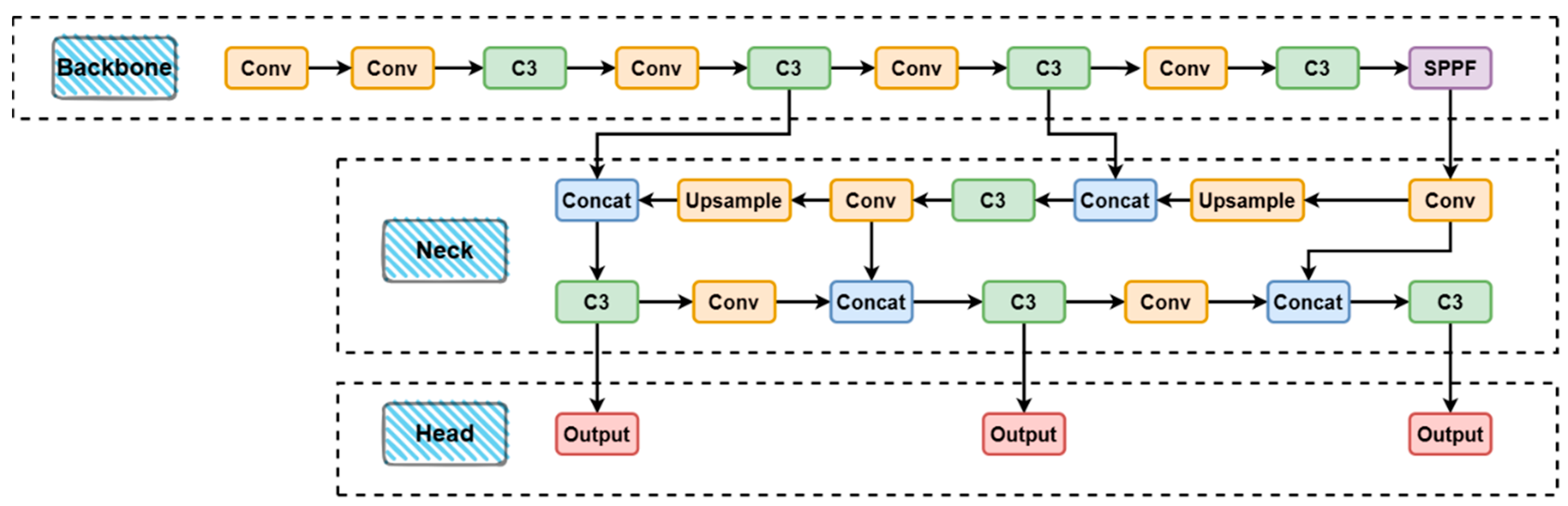

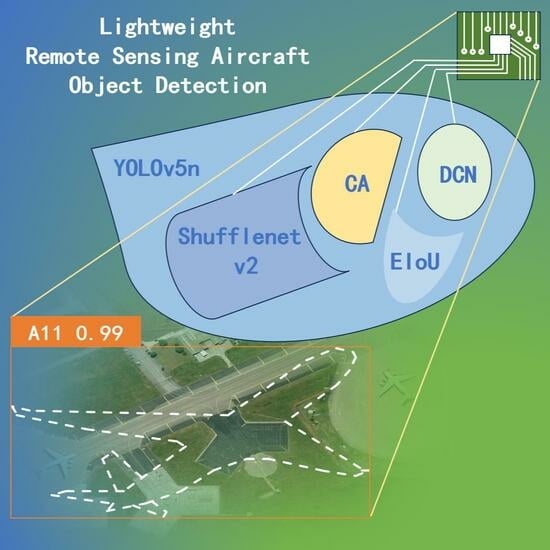

To achieve real-time remote sensing object detection while keeping the network lightweight, we chose YOLOv5n as our baseline network. The You Only Look Once (YOLO) series [4,5,6,7,8] is a prominent family of object detection algorithms, and YOLOv5 is one of its most mature members, striking a good balance between speed and accuracy. YOLOv5n, the smallest model in the YOLOv5 family, is optimized for detection speed. Even so, the growing real-time demands of remote sensing object detection systems remain hard to meet, because the complexity and size of the network model and its parameters make it difficult to run on hardware with low power consumption and limited computing power. Hence, making the network lightweight becomes imperative.

For lightweight processing of the YOLO series, two main technical approaches are prevalent. One involves replacing the backbone network with a lightweight alternative, while the other entails adjusting the convolution structure, such as modifying the size of convolution kernels and the total number of kernels, which determine the width of the network.

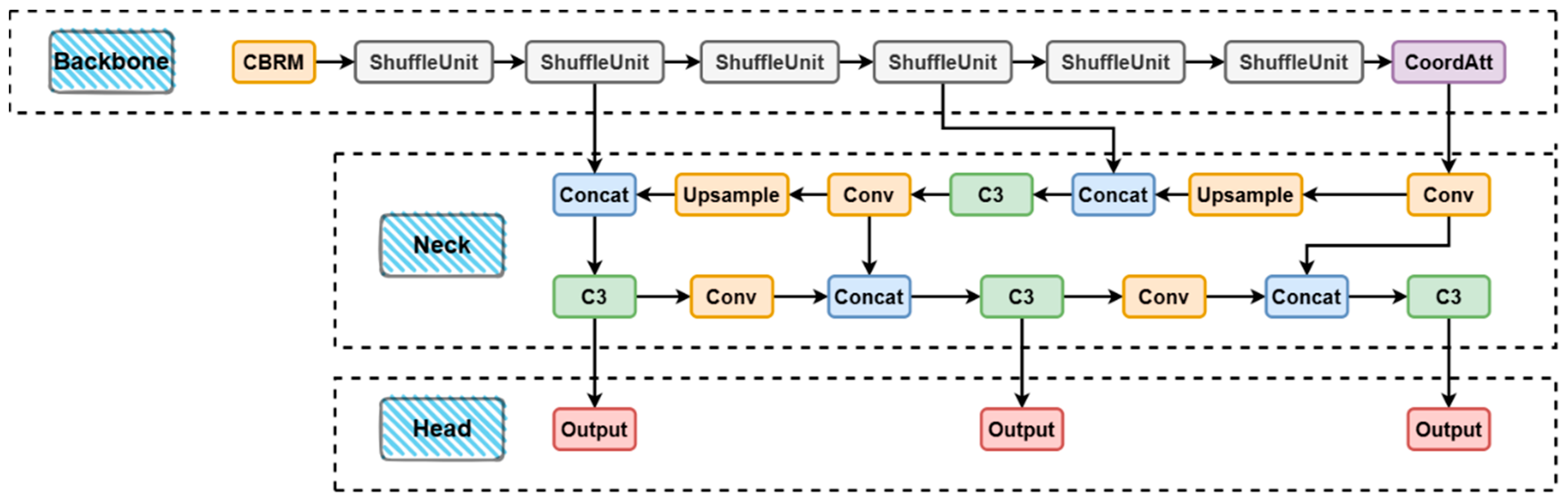

To keep detection accuracy close to that of the original network, we tested numerous hypotheses experimentally with the aim of minimizing the size of the network model. The Backbone, a critical and parameter-intensive component of YOLO series algorithms, is primarily responsible for extracting features from input remote sensing images and passing them to the Neck. To improve the trade-off between parameter count and performance, we drew inspiration from Shufflenet v2. Using group convolution and channel shuffling, we adopted the ShuffleUnit as the basic unit of the feature extraction network. The ShuffleUnit performs depthwise convolution and realizes group convolution by concatenating two branches and reordering their channels, which enhances the network’s non-linear expressive capability while reducing computational cost. Additionally, we implemented the forward pass as channel splitting, branch computation, and channel reordering. Unlike many similar lightweight networks, we departed from the alternating connection pattern of the C3 module and lightweight module in the original YOLOv5 network. Instead, we connected six ShuffleUnits in series to make the design as lightweight as possible. To further enhance network performance, we embedded the Coordinate Attention (CA) mechanism at the end of the backbone. Moreover, we replaced the original Complete Intersection over Union (CIoU) loss function with the more advanced Efficient Intersection over Union (EIoU). Finally, to better handle deformations in irregular regions and targets in remote sensing images, we replaced a portion of the traditional convolutions with deformable convolutions, which also reduced the overall parameter count.
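The channel splitting, branch computation, and channel reordering steps can be illustrated with a small sketch. This is not the authors' implementation: channels are represented by plain labels rather than feature maps, and `branch` is a hypothetical stand-in for the convolutional branch of the unit.

```python
from typing import Callable, List, Sequence, Tuple


def channel_split(channels: Sequence, ratio: float = 0.5) -> Tuple[list, list]:
    """Split the channel list into two parts; the first is passed through unchanged."""
    k = int(len(channels) * ratio)
    return list(channels[:k]), list(channels[k:])


def channel_shuffle(channels: Sequence, groups: int = 2) -> list:
    """Interleave channels across groups.

    Equivalent to reshaping to (groups, n // groups), transposing, and flattening.
    """
    n = len(channels)
    if n % groups != 0:
        raise ValueError("channel count must be divisible by groups")
    per_group = n // groups
    return [channels[g * per_group + i] for i in range(per_group) for g in range(groups)]


def shuffle_unit_forward(channels: Sequence, branch: Callable) -> List:
    """Forward pass sketch: split, transform one branch, concatenate, shuffle."""
    left, right = channel_split(channels)
    right = [branch(c) for c in right]  # stand-in for the convolutional branch
    return channel_shuffle(left + right, groups=2)


print(channel_shuffle(list(range(8)), groups=2))
print(shuffle_unit_forward(["a", "b", "c", "d"], str.upper))
```

The shuffle interleaves untouched and transformed channels, so that the next unit's split sees a mix of both, which is what lets information flow between the two branches.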

The subsequent sections of this paper are organized as follows. Section 1 provides an extensive overview of remote sensing object detection algorithms and attention mechanisms, particularly organizing algorithms based on YOLOv5. Section 2 outlines the overall framework of our proposed network and elaborates on the improvements made. In Section 3, we utilize YOLOv5 as the baseline network and employ various popular lightweight networks as replacements for the backbone, conducting comprehensive experimental comparisons across detection accuracy, parameter count, model size, and floating-point operations. These experiments convincingly demonstrate the superiority of our network. Furthermore, Section 4 includes ablation experiments to validate the feasibility of our design modules. Finally, in Section 5, we offer a comprehensive summary of the research and articulate future prospects.

2. Related Works

2.1. Remote Sensing Object Detection Based on Deep Learning

Around 2014, deep learning-based approaches began to dominate the field of object detection and subsequently extended their influence to remote sensing object detection as a whole. Object detection algorithms based on deep learning are typically categorized into two types: single-stage and two-stage algorithms [17]. Two-stage algorithms mainly include Region-CNN (R-CNN) [1], Fast R-CNN [2], Faster R-CNN [3], and others. They typically start by using a Region Proposal Network (RPN) [3] to generate candidate boxes based on the texture, color, and size features associated with the objects. These candidate boxes are filtered to reduce their number before being sent to a deep learning network, typically a Convolutional Neural Network (CNN) [18], for feature extraction. The obtained feature vectors are compared with predefined target categories to confirm the presence of a target and classify it. Simultaneously, each candidate box undergoes position regression to obtain its coordinates. Owing to their excellent detection speed and relatively small resource consumption, single-stage networks have gained dominance in recent years. Single-stage algorithms mainly include the YOLO series and the Single Shot MultiBox Detector (SSD) [19] series. The most prevalent of these is the YOLO series, known for its fast detection speed and high accuracy and particularly suitable for real-time scenarios. Single-stage algorithms generally pass the entire image to the CNN and skip the candidate box generation step, so the CNN directly outputs the category and location of each target. They typically use predefined anchor boxes with different scales and aspect ratios, judging whether a target is present in each anchor box and predicting its location and category. With ongoing research, the detection accuracy of single-stage networks continues to improve. Combined with their superior detection speed, this has led them to gradually replace two-stage algorithms in many engineering settings and become the mainstream in practical applications.
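As a rough illustration of predefined anchor boxes with different scales and aspect ratios, the sketch below generates anchors around one location and scores them against a box with Intersection over Union (IoU). The scale and ratio values are assumptions made for the example; this is the generic scheme, not the specific anchor set of YOLOv5 or SSD.

```python
import math
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)


def make_anchors(cx: float, cy: float,
                 scales: Sequence[float], ratios: Sequence[float]) -> List[Box]:
    """Anchors centred at (cx, cy); each has area scale**2 and width/height = ratio."""
    anchors = []
    for s in scales:
        for r in ratios:
            w = s * math.sqrt(r)
            h = s / math.sqrt(r)
            anchors.append((cx - w / 2, cy - h / 2, cx + w / 2, cy + h / 2))
    return anchors


def iou(a: Box, b: Box) -> float:
    """Intersection over Union of two axis-aligned boxes."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


# Assumed example values: two scales and three aspect ratios give six anchors.
anchors = make_anchors(100.0, 100.0, scales=[32, 64], ratios=[0.5, 1.0, 2.0])
target = (80.0, 85.0, 125.0, 115.0)  # hypothetical ground-truth box
best = max(anchors, key=lambda a: iou(a, target))
print(len(anchors), best)
```

During training, anchors with high IoU against a ground-truth box are typically treated as responsible for predicting it.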

Traditional methods for object detection in remote sensing images have limited representation power. Recently, many deep learning-based networks designed specifically for remote sensing images have emerged. Reference [20] proposed a method that redesigns the feature extractor, uses a multi-scale object proposal network (MS-OPN) to generate object-like regions, and employs an accurate object detection network (AODN) for object detection based on fused feature maps [21]. Reference [22] introduced the CSand-Glass module to replace the residual module in the backbone feature extraction network of YOLOv5, achieving higher accuracy and speed on remote sensing images. Liu et al. proposed the YOLO-extract algorithm [23], which optimizes the model structure of YOLOv5 in two main ways. First, it integrates a new feature extractor with stronger feature extraction ability. Second, it incorporates Coordinate Attention into the network.

In recent years, the Transformer [24] has had a profound impact on deep learning. In the field of object detection, Facebook introduced the end-to-end object detection network DEtection Transformer (DETR) [9], which is based on the Transformer architecture. DETR can be viewed as a transformation from an image sequence to a set of predictions, which follows from the Transformer being a sequence-to-sequence model. DETR flattens the spatial positions of the backbone's output feature map into a one-dimensional sequence, so that the number of positions becomes the sequence length, while the batch and channel dimensions keep their meaning. Consequently, DETR can compute the correlation between each position of the feature map and all other positions, whereas a CNN relates positions only within its receptive field. The Transformer can therefore capture a larger perceptual range than CNNs.
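The flattening step and the all-pairs correlation can be sketched in a few lines. This is a simplified illustration, not DETR itself: it uses nested lists for a single image, omits positional encodings, and uses identity query, key, and value projections where a real Transformer layer would use learned ones.

```python
import math
from typing import List


def flatten_feature_map(fmap: List[List[List[float]]]) -> List[List[float]]:
    """Turn fmap[c][h][w] into H*W tokens, each holding the C channel values."""
    C, H, W = len(fmap), len(fmap[0]), len(fmap[0][0])
    return [[fmap[c][h][w] for c in range(C)] for h in range(H) for w in range(W)]


def softmax(xs: List[float]) -> List[float]:
    m = max(xs)  # subtract the maximum for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens: List[List[float]]) -> List[List[float]]:
    """Scaled dot-product self-attention with identity projections (a simplification)."""
    d = len(tokens[0])
    out = []
    for q in tokens:
        # every token is scored against every other token, regardless of distance
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in tokens]
        weights = softmax(scores)
        out.append([sum(w * v[j] for w, v in zip(weights, tokens)) for j in range(d)])
    return out


# A toy feature map with C=2 channels and a 2x3 spatial grid.
fmap = [[[1, 2, 3], [4, 5, 6]],
        [[7, 8, 9], [10, 11, 12]]]
tokens = flatten_feature_map(fmap)
attended = self_attention(tokens)
print(len(tokens), len(tokens[0]))
```

The sequence length here is 2 × 3 = 6, and each output token is a weighted mixture of all six input tokens, which is the global interaction the text contrasts with a CNN's local receptive field.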

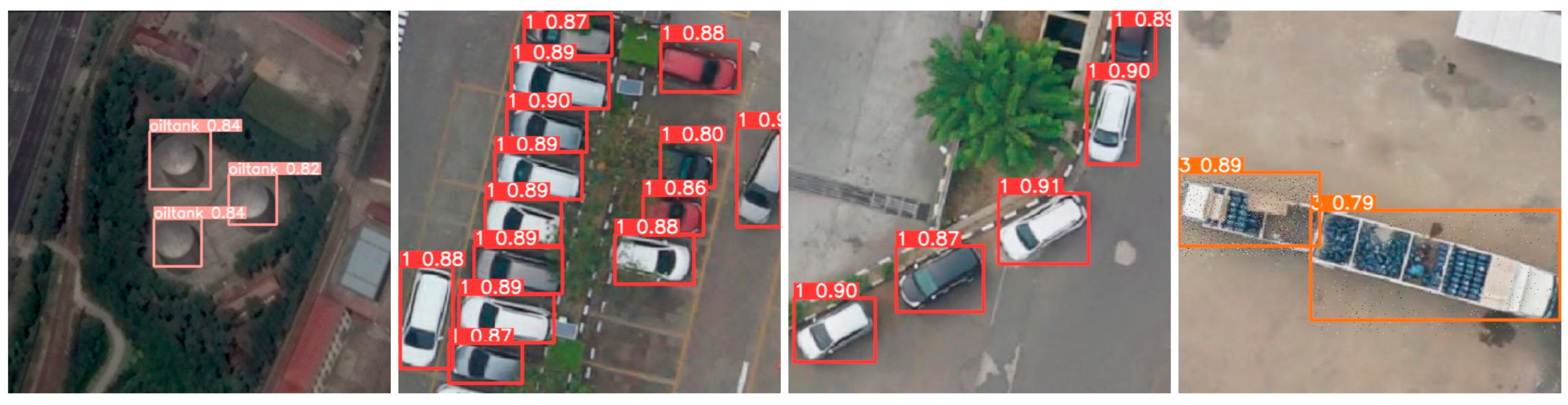

Beyond the methods above, arbitrary-oriented remote sensing object detection deserves particular mention. From a training perspective, the primary distinction between arbitrary-oriented and regular object detection lies in how object bounding boxes are represented in the dataset. Convolutional neural networks struggle to capture variations in the scale and orientation of objects in remote sensing images, because convolutional operations generalize poorly to target rotation and scale changes. As a result, their detection performance tends to decrease, especially for densely packed objects and remote sensing targets with centrally symmetric features.
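To illustrate the difference in box representation, the sketch below converts an oriented box into its four corner points. The (cx, cy, w, h, angle) parameterization with the angle in radians is an assumption made for the example; it is one common convention, and datasets and detectors differ in how they define the angle.

```python
import math
from typing import List, Tuple


def obb_to_corners(cx: float, cy: float, w: float, h: float,
                   angle_rad: float) -> List[Tuple[float, float]]:
    """Corners of a box of size (w, h) centred at (cx, cy) and rotated by angle_rad.

    The corner order follows the unrotated box: (-w/2, -h/2), (w/2, -h/2),
    (w/2, h/2), (-w/2, h/2), each rotated about the centre.
    """
    c, s = math.cos(angle_rad), math.sin(angle_rad)
    offsets = [(-w / 2, -h / 2), (w / 2, -h / 2), (w / 2, h / 2), (-w / 2, h / 2)]
    return [(cx + dx * c - dy * s, cy + dx * s + dy * c) for dx, dy in offsets]


# A horizontal box needs only (cx, cy, w, h); an oriented box adds the angle.
print(obb_to_corners(0.0, 0.0, 4.0, 2.0, 0.0))
print(obb_to_corners(0.0, 0.0, 4.0, 2.0, math.pi / 6))
```

With an angle of zero the result is an ordinary axis-aligned box, so the horizontal representation is a special case of the oriented one.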

Optimizing the loss function for representing object bounding boxes is currently a focus of research. The early work of DRBox [25] significantly advanced arbitrary-oriented remote sensing object detection. DRBox identified various challenges in this field and introduced three models, with parameters tailored to cars, ships, and aircraft, covering a wide range of target sizes and distinguishing the heads and tails of targets. R3Det [26] adopts single-stage object detection and re-encodes the position information of refined bounding boxes into the corresponding feature points, reconstructing the entire feature map to achieve feature alignment. The RoI Transformer [27] introduces a module of the same name, which detects oriented and dense objects using supervised rotated RoI (RRoI) learning and position-sensitive alignment-based feature extraction within a two-stage framework. P-RSDet [28] applies polar coordinates to reduce parameter volume and introduces a novel loss function, Polar Ring Area Loss, leading to enhanced detection performance.

2.2. Lightweight Methods for Object Detection Networks Based on Deep Learning

Deep learning methods have demonstrated significant advancements in remote sensing object detection in recent years. As previously mentioned, the wide scope of object detection in remote sensing images leads to substantial computational overhead and significantly lowers detector efficiency. Consequently, lightweighting network models has become a focal point in current research. The primary goal of lightweighting network models is to reduce model complexity and decrease the number of model parameters. Four primary technical approaches exist for lightweighting network models: compressing pre-trained large models, redesigning lightweight models, accelerating numerical operations, and hardware acceleration.

In current practice, the first three approaches are widely employed. Knowledge distillation and model pruning are both well-established methods for compressing models. Knowledge distillation reduces model volume and parameter count by transferring the knowledge contained in a trained, complex “teacher” model to a lightweight “student” model. Model pruning reduces model size by removing unimportant weights or neurons, and it can be categorized into structured and unstructured pruning. Furthermore, adjusting the number and size of the network’s convolution kernels can make a model lighter. Generally, a larger convolution kernel can enhance feature extraction. However, the literature indicates that large convolution kernels increase computational requirements and the number of parameters and can lead to unstable gradients. Therefore, multiple small convolution kernels are often used in place of a single large one to compress models, as in the Visual Geometry Group (VGG) network proposed in [29].
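The saving from stacking small kernels can be checked with simple arithmetic. The sketch below counts convolution weights (bias omitted) and the receptive field of stacked stride-1 layers; the channel width of 64 is an assumed example value.

```python
def conv_params(k: int, c_in: int, c_out: int) -> int:
    """Weights in a k x k convolution layer, ignoring bias terms."""
    return k * k * c_in * c_out


def stacked_receptive_field(k: int, layers: int) -> int:
    """Receptive field of `layers` stacked k x k convolutions with stride 1."""
    return 1 + layers * (k - 1)


C = 64  # assumed channel width, for illustration only

one_5x5 = conv_params(5, C, C)
two_3x3 = 2 * conv_params(3, C, C)
print(stacked_receptive_field(3, 2), one_5x5, two_3x3)

one_7x7 = conv_params(7, C, C)
three_3x3 = 3 * conv_params(3, C, C)
print(stacked_receptive_field(3, 3), one_7x7, three_3x3)
```

Two stacked 3 × 3 layers cover the same 5 × 5 receptive field with 18C² weights instead of 25C², and three cover 7 × 7 with 27C² instead of 49C², while also adding extra non-linearities between the layers.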

Lightweight networks find extensive applications in remote sensing image processing. The design of lightweight network architectures originated with SqueezeNet [30] in 2016 and MobileNet [10,11,12] in 2017. Subsequently, new and improved networks, such as SqueezeNext [31] and MobileNetV2 [11], emerged. MobileNetV1 can essentially be viewed as replacing the standard convolutional layers in VGG with depthwise separable convolutions [32]. MobileNetV2 [11] introduces shortcut connections and replaces part of the ReLU activations with a linear activation function. It employs pointwise convolution to increase the dimension before the depthwise convolution, extracts features through the depthwise convolution, reduces the dimension, and adds the input to the output to form the residual structure. SqueezeNet proposed the Fire module, which replaces 3 × 3 convolution kernels with 1 × 1 convolution kernels. By adjusting the number of 1 × 1 convolutions, the number of channels in each layer can be flexibly controlled, thereby reducing the amount of computation. The Shufflenet v1 [15] network, proposed in the same period, is one of the relatively mature lightweight networks. This article uses the improved Shufflenet v2 [16] as the first step toward a lightweight network. In addition to network simplification, numerical computation has become a new focus area, with quantization being a typical representative. Quantization is the process of converting a model’s weights and activations from floating-point numbers to lower bit-width integers or fixed-point numbers, which reduces the model’s memory footprint and computational requirements. The literature indicates that model parameters are the primary memory access objects in CNNs; thus, parameter quantization is an effective means of reducing memory access and power consumption. Hardware acceleration uses specialized hardware accelerators to speed up the inference of deep learning models, thereby easing the computational burden of running the model.
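As a minimal illustration of quantization, the sketch below maps floating-point weights to 8-bit integers and back. It assumes symmetric per-tensor quantization with a single scale factor, which is one common scheme among several; the weight values are made up for the example.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: List[int], scale: float) -> List[float]:
    """Recover approximate floating-point values from the integers."""
    return [v * scale for v in q]


weights = [-1.0, -0.42, 0.0, 0.25, 1.0]  # made-up example weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(q, scale, max_error)
```

Each weight now needs one byte instead of four, at the cost of a rounding error of at most half the scale factor per weight.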

In summary, for remote sensing object detection, the most effective approach to network lightweighting is to initiate redesign from the feature extraction segment, aiming to restructure the architecture into a lightweight framework. Consequently, we primarily adopt this methodology, selecting YOLOv5n and Shufflenet v2 as the benchmark network and lightweight architecture, respectively. With additional enhancements in various aspects, we achieved further improvements in model compactness and network performance.
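To show why redesigning the feature extractor around depthwise separable convolution is effective, the sketch below compares its weight count with that of a standard convolution. The kernel size and channel widths are assumed example values, and bias terms are ignored.

```python
def standard_conv_params(k: int, c_in: int, c_out: int) -> int:
    """Weights in a standard k x k convolution."""
    return k * k * c_in * c_out


def depthwise_separable_params(k: int, c_in: int, c_out: int) -> int:
    """One k x k filter per input channel, followed by a 1 x 1 pointwise convolution."""
    depthwise = k * k * c_in
    pointwise = c_in * c_out
    return depthwise + pointwise


k, c_in, c_out = 3, 128, 128  # assumed example layer
std = standard_conv_params(k, c_in, c_out)
sep = depthwise_separable_params(k, c_in, c_out)
print(std, sep, sep / std)
```

The ratio equals 1/c_out + 1/k², so for a 3 × 3 kernel and wide layers the separable form needs a little over one-ninth of the weights.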

2.3. Attention Mechanism

The attention mechanism, originating from the study of human visual cognition characteristics, represents a significant breakthrough in neural network development. In human visual information processing, due to input information characteristics and human brain processing limitations, it is essential to selectively focus on certain information while ignoring redundant data. For example, in images, focus is on vibrant colors and distinct textures, while in text, attention is on sentence beginnings, endings, and specific keywords. In remote sensing, the attention mechanism adjusts the network’s focus on different targets. Moreover, addressing the scarcity of remote sensing datasets, the attention mechanism enriches data, aiding the network in learning more valuable information. Additionally, for multi-source remote sensing images, it integrates diverse information efficiently, enhancing network performance. Therefore, attention mechanisms prioritize which input information to focus on and optimize resource allocation for information processing.

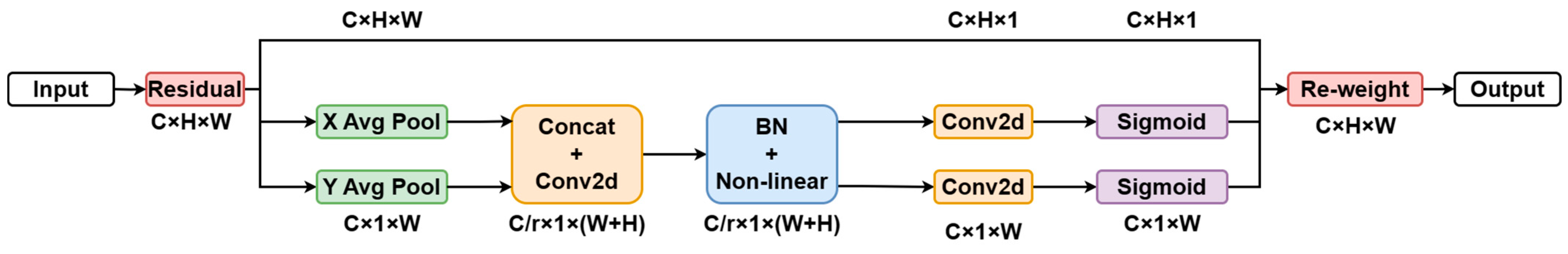

In existing object detection algorithms, the Squeeze-and-Excitation (SE) [33] attention mechanism is widely used in mobile networks. With SE modules, the network evaluates relationships between feature channels and learns the importance of each channel. It then enhances the features that matter for the current task while suppressing less important ones. However, this approach considers only inter-channel information and disregards positional information. Subsequent efforts, such as BAM [34] and CBAM [35], aim to incorporate positional information, but convolutional operations capture only local relationships and fail to model the long-distance dependencies that are crucial for visual tasks. To address these limitations and integrate information across multiple dimensions, attention mechanisms such as Coordinate Attention (CA) and Efficient Channel Attention (ECA) [36] have emerged. Besides focusing on channel and positional information, researchers have explored new approaches, such as evaluating neuron weights and then suppressing or emphasizing neurons based on the results, thus achieving more efficient computation. Representative examples include NAM Attention [37] and SimAM [38]. Furthermore, efficient attention algorithms continue to emerge, including Sequential Attention [39], which emphasizes logical attention, Co-attention [40], which focuses on spatial attention, and the prevalent Transformer based on self-attention.
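The channel reweighting performed by an SE module can be sketched as squeeze, excitation, and scale. This is an illustrative sketch with hand-picked weights rather than learned ones, written with nested lists for a single feature map; the helper names are hypothetical.

```python
import math
from typing import List

FeatureMap = List[List[List[float]]]  # indexed as fmap[c][h][w]


def squeeze(fmap: FeatureMap) -> List[float]:
    """Global average pooling: one descriptor per channel."""
    return [sum(sum(row) for row in ch) / (len(ch) * len(ch[0])) for ch in fmap]


def dense(x: List[float], weights: List[List[float]]) -> List[float]:
    """Fully connected layer without bias; weights[out][in]."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def excitation(z: List[float], w1: List[List[float]], w2: List[List[float]]) -> List[float]:
    """Bottleneck of two dense layers: ReLU, then sigmoid gates in (0, 1)."""
    hidden = [max(0.0, v) for v in dense(z, w1)]
    return [1.0 / (1.0 + math.exp(-v)) for v in dense(hidden, w2)]


def se_block(fmap: FeatureMap, w1: List[List[float]], w2: List[List[float]]) -> FeatureMap:
    """Scale every channel by its gate; spatial positions are all treated alike."""
    gates = excitation(squeeze(fmap), w1, w2)
    return [[[v * g for v in row] for row in ch] for ch, g in zip(fmap, gates)]


fmap = [[[1.0, 1.0], [1.0, 1.0]],
        [[2.0, 2.0], [2.0, 2.0]]]
w1 = [[1.0, 0.0]]       # hand-picked: 2 channels reduced to 1 hidden unit
w2 = [[0.0], [0.0]]     # hand-picked: zero weights give a gate of 0.5 per channel
print(se_block(fmap, w1, w2))
```

Because the squeeze step averages over all spatial positions, the gate is the same everywhere within a channel, which is the loss of positional information that the later mechanisms try to address.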

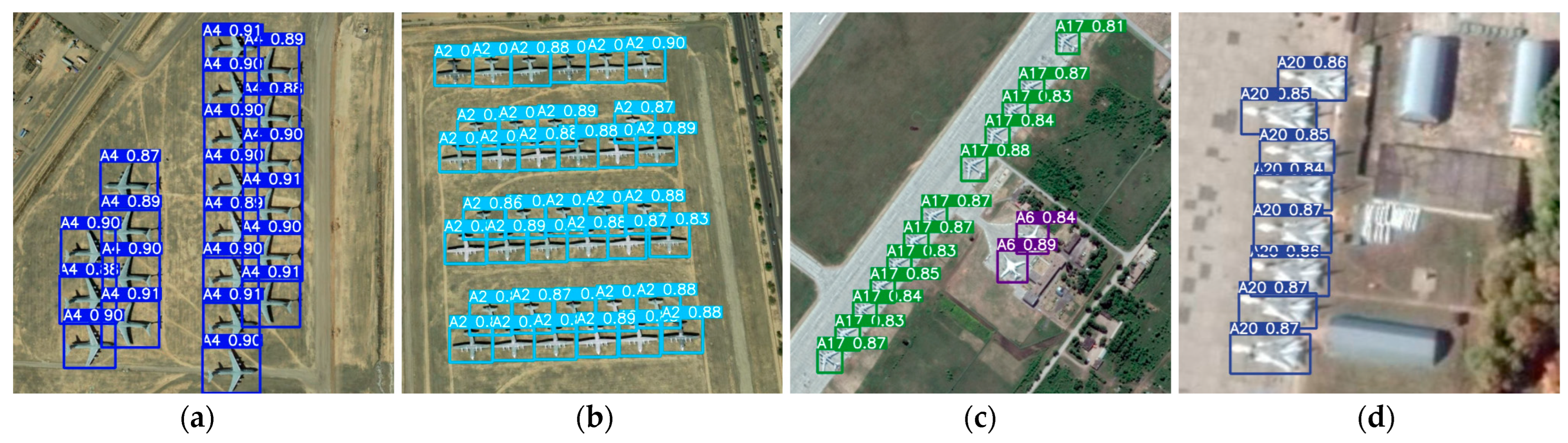

5. Conclusions

This article introduces an ultra-lightweight remote sensing aircraft object detection network based on YOLOv5n. To balance detection accuracy and speed, we draw inspiration from Shufflenet v2. Using grouped convolution and channel shuffling, we constructed the ShuffleUnit as the basic unit of the feature extraction network. The ShuffleUnit performs depthwise convolution and realizes group convolution by concatenating two branches and rearranging their channels, thereby improving the nonlinear expressive ability of the network and reducing the amount of computation. Additionally, we implemented forward propagation as channel splitting, branch computation, and channel rearrangement. In contrast to many similar lightweight networks, we opted for an extremely lightweight design by abandoning the alternating connection of C3 modules and lightweight modules in the original YOLOv5 network and instead connecting six ShuffleUnits in series. To further enhance the network’s performance, we embedded the CA attention mechanism at the end of the backbone. CA is an attention mechanism that efficiently captures inter-channel information and positional information at the same time. The CA module encodes channel relationships and long-range dependencies with precise location information [21], thereby helping the network locate objects of interest more accurately. We also replaced the CIoU loss in the original network with the more advanced EIoU, which refines the aspect ratio term by penalizing the width and height differences between the predicted and ground-truth boxes relative to the smallest enclosing box. Finally, to better handle deformation in irregular areas and targets, we replaced some traditional convolutions with deformable convolutions, which also reduced the number of parameters.
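For reference, the EIoU loss can be written for a single pair of axis-aligned boxes as follows. This is a sketch of the commonly published form of the loss (one minus IoU, plus centre-distance, width, and height penalties normalized by the smallest enclosing box), not the training code used in this work; the `eps` term is an assumption added to avoid division by zero.

```python
from typing import Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)


def eiou_loss(pred: Box, target: Box, eps: float = 1e-9) -> float:
    """EIoU loss for one predicted box and one ground-truth box."""
    px1, py1, px2, py2 = pred
    tx1, ty1, tx2, ty2 = target
    pw, ph = px2 - px1, py2 - py1
    tw, th = tx2 - tx1, ty2 - ty1

    # IoU term
    iw = max(0.0, min(px2, tx2) - max(px1, tx1))
    ih = max(0.0, min(py2, ty2) - max(py1, ty1))
    inter = iw * ih
    union = pw * ph + tw * th - inter
    iou = inter / (union + eps)

    # width and height of the smallest box enclosing both boxes
    cw = max(px2, tx2) - min(px1, tx1)
    ch = max(py2, ty2) - min(py1, ty1)

    # squared centre distance, normalized by the enclosing box diagonal
    dx = (px1 + px2) / 2 - (tx1 + tx2) / 2
    dy = (py1 + py2) / 2 - (ty1 + ty2) / 2
    centre_term = (dx * dx + dy * dy) / (cw * cw + ch * ch + eps)

    # width and height differences, normalized separately
    width_term = (pw - tw) ** 2 / (cw * cw + eps)
    height_term = (ph - th) ** 2 / (ch * ch + eps)

    return 1.0 - iou + centre_term + width_term + height_term


print(eiou_loss((0, 0, 2, 2), (0, 0, 2, 2)))
print(eiou_loss((0, 0, 2, 2), (1, 1, 3, 3)))
```

Unlike CIoU, which penalizes a mismatch in aspect ratio, this form penalizes width and height errors directly, so a box with the right shape but the wrong size is still pushed toward the target.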

Experimental results on the public dataset MAR20 demonstrate that our lightweight network has approximately one-fifth of the parameter count of the original YOLOv5n network and achieves a 6.5% increase in Frames Per Second (FPS) with only a 2.7% decrease in mAP@0.5. Moreover, its model file is only 1.13 MB. After deployment on an embedded device, the network achieved an FPS of 22.8 even with relatively limited computing power, enabling real-time object detection. In addition, using YOLOv5n as the base, we ran experiments with lightweight networks such as Shufflenet v2, GhostNet, PP-LCNet, MobileNetV3, and MobileNetV3s as the backbone, comparing detection accuracy, model size, and detection speed. Our network achieved the best balance among these, confirming its feasibility and superiority. Furthermore, a series of ablation experiments on the proposed network validated the effectiveness of our innovations: each added component produces a measurable effect relative to the original network. According to the experimental results, our network accurately captured the position of each aircraft target in the position regression part of object detection. For the fine-grained classification of aircraft with similar characteristics, such as A11, A18, and A20, the probability of misjudgment increases, and this will be a primary focus of our future improvement efforts.