Ontology-Enhanced Educational Annotation Activities

Abstract

1. Introduction

- One relevant concern regarding the educational use of digital annotations is how they compare with conventional, handwritten ones. A representative work in this line is [5], which presents two experiments analyzing the differences between the annotations students made on paper and those they made online. These experiments focused on aspects such as the types of annotations, their purpose, their quality, and the difficulties in creating and using them (search strategies, time spent searching). The results showed that the annotations on paper were longer and conceptually richer than those made online, although the latter were more oriented toward sharing, commenting on, or retrieving the annotated resource. In a related line, [6,7] showed that students using a digital annotation tool outperformed those who annotated the text with paper and pencil.

- Another relevant concern is how digital annotations can contribute to improving reading comprehension. For instance, in [8], two groups of students read the same text, one with annotations and the other without, and were subsequently evaluated on its content. The students who had read the annotated text performed better. In [9], an experiment was set up in which one group of readers was exposed to all the annotations in the text, while another group was exposed only to high-level annotations. Reading comprehension was tested, and the readers who had seen only high-level annotations achieved much better comprehension than those exposed to all the annotations.

- Beyond supporting reading, digital annotation tools realize their full educational potential when students actively participate in the annotation process. In this regard, in [10] an experiment was conducted in which one group of students read a text, while another group read and annotated it. The students who annotated the text achieved better results and were more motivated. In [11], an experiment was set up in which students in one group annotated a text individually, while students in another group worked in pairs, with one acting as annotator and the other reviewing the annotator's work. The results showed that when students work in pairs to annotate the same document, redundancy is reduced and the topics addressed are discussed in greater depth.

- Finally, another relevant topic is support for collaborative annotation activities, in which students jointly annotate texts. In [11], a study of multimedia annotations of web-based content showed that collaborative annotation can outperform individual annotation. In [12], several experiments conducted in an English course are described; in addition to the effects of annotation systems on reading comprehension, they analyzed critical thinking and metacognitive competences. For this purpose, one group of students read and annotated the assigned texts individually, while another group carried out the activity collaboratively. Students who worked on the texts collaboratively improved their metacognitive competences and their reading comprehension; however, there was no difference in critical thinking. A similar study was conducted in [13], in which the results showed that, although the collaborative annotation strategy negatively affected the students’ initial performance, it had clearly positive effects on tests taken one month after the experience, compared with students who followed a non-collaborative annotation strategy. In [14,15], two experiments based on the collaborative annotation of online documents by pairs of students are described. The highest-quality annotations were made by the students who were most motivated to use the tool, and these students also achieved the highest grades. In [16], the effect of annotations in collaborative environments was investigated by comparing the performance of students using a conventional discussion board system with that of students using a collaborative annotation tool. The results showed that an annotation system can increase learning achievement in collaborative learning environments.
In [17], a collaborative annotation system was compared with a recommender-supported system. Although both approaches outperformed individual annotation, no statistically significant differences were observed between them.

2. Related Work

- Lack of mechanisms for classifying annotations. This category groups tools that prioritize aspects other than the organization of annotations, such as sophisticated ways of interacting with documents.

- Predefined annotation modes. Tools that follow this approach provide different ways of annotating a document (underlining or highlighting fragments and adding comments), which induce an implicit categorization of the annotations.

- Pre-established semantic categories. This approach is adopted by tools that introduce a predefined set of semantic tags to classify annotations.

- Folksonomies. This approach classifies annotations by means of tags that users create, thus forming a folksonomy. Users can reuse previously created tags (their own or those of other participants in the annotation activity) or create new tags that are better suited to particular classification needs.

- Ontologies. Tools that adhere to this approach enable loading specific ontologies for each annotation activity. Students can use these ontologies to make the semantics of annotations explicit (e.g., by associating one or more concepts in the ontologies to the annotations).

- Tools that lack mechanisms for classifying annotations and tools based on predefined annotation modes imitate conventional paper-and-pencil annotation, perhaps with a wider repertoire of presentation styles. Therefore, they provide no mechanism to guide students.

- Concerning tools based on predefined repertoires of semantic categories, although their tag lists allow annotations to be classified according to certain semantic criteria, their pre-established nature yields generic classification systems, typically consisting of small, general-purpose sets of universal categories (4 in PAMS 2.0 or in MyNote, 7 in CRAS-RAID, 9 in Tafannote or in MADCOW, etc.), which may not fit the specific characteristics of every annotation activity.

- Folksonomy-based tools enable lists of semantic tags that are specifically adapted to each annotation activity. However, most of these tools delegate the collaborative design of these tag vocabularies to the students. From the instructor's perspective, this practice does not guarantee that the resulting folksonomies adequately capture the objectives of the annotation activity, because designing them requires considerable expert knowledge that students lack. Although some folksonomy-based tools (e.g., Annotation Studio) also support tag repertoires provided by instructors, folksonomies lack any structure beyond that of simple tag lists, which can be inconvenient for in-depth annotation.

- Ontology-based tools provide appropriate vehicles (ontologies) for capturing specific knowledge about annotation activities, which enables the tools to adapt to the semantic particularities of each activity and provides a high degree of contextualization in that activity. In addition, the structural richness of ontologies overcomes the lack of structure of plain lists of semantic tags.

- The complexity of ontology definition for instructors must be carefully considered. Of the tools analyzed, only Loomp addresses this aspect, through a two-level organization scheme based on vocabularies that cluster atomic concepts. This approach is too simple for conceptual organization purposes. The other tools adopt standard semantic web technologies (such as RDFS, the Resource Description Framework Schema, or OWL, the Web Ontology Language) and do not introduce mechanisms to help instructors provide the ontologies.

- All the ontology-based tools analyzed differentiate between semantic annotations and other types of annotations. This is evident, for example, in DLNotes, which explicitly distinguishes between semantic annotations and free-text annotations. The other tools focus on the process of semantic annotation, understood as the semantic tagging of document fragments. From the perspective of in-depth annotation, however, providing free-text content for annotations is essential to reflect the student's particular, subjective reading of the content.

3. Materials and Methods

- Following the guidelines of design-based research methods, and to address the aforementioned shortcomings of ontology-based annotation tools, we designed and developed our own annotation tool, @note, which fully implements our annotation paradigm. This tool is detailed in Section 3.1.

- To assess the educational utility of @note, we undertook a pilot experiment in a learning domain that requires substantial domain knowledge and skilled annotation capabilities: critical literary annotation. For the quantitative analysis in this experiment, we opted for a within-subject design [60], since it suits the small groups of students typical of advanced university-level literature courses. The pilot design is detailed in Section 3.2.

3.1. @note Annotation Tool

- @note places a strong emphasis on integrating free-text and semantic approaches in a single annotation paradigm.

- @note is equipped with user-friendly ontology-editing mechanisms suitable for end users with no specific background in computer science or knowledge engineering, which allow instructors to design reasonably complex ontologies. These ontologies are based on taxonomic arrangements of concepts and therefore go beyond the simpler schemes of other tools that address this aspect (e.g., the two-level organization of vocabularies in Loomp).

3.1.1. Annotation Activities

- The document to annotate. When designing an annotation activity, the instructor must choose the document to be annotated by the students. In the current version of @note, these documents can be obtained from Google's collection of digitized books (books.google.com) or loaded directly into the tool (Figure 1a).

- The annotation group. This set of students will be in charge of performing the activity (Figure 1b).

- The annotation ontology. This ontology will guide the students throughout the annotation process (Figure 1c).

3.1.2. Annotation Ontologies

- Intermediate concepts can be refined in terms of other simpler sub-concepts. For instance, in Figure 1c, concepts such as Analysis, Structure, Structure_type or Criticism are intermediate concepts, and Structure_type, Plot, and Setting are direct sub-concepts of Structure.

- Final concepts are concepts that the students can use to classify annotations. These concepts correspond to the taxonomy leaves. In Figure 1c, Narration, Use_Of_frames, Plot, Setting, Bibliographical, Psychological, and Cultural are final concepts that students can use to classify their annotations.
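To make the distinction between intermediate and final concepts concrete, the following minimal Python sketch encodes the Figure 1c taxonomy as a mapping from each concept to its direct sub-concepts and derives both kinds of concepts from it. The encoding is an assumption for illustration, not @note's internal representation; in particular, placing Structure and Criticism under Analysis, and Bibliographical, Psychological, and Cultural under Criticism, is inferred from the text rather than read from the figure.

```python
# Assumed encoding of the Figure 1c taxonomy: each intermediate concept maps to
# its direct sub-concepts; concepts without an entry are leaves.
TAXONOMY = {
    "Analysis": ["Structure", "Criticism"],
    "Structure": ["Structure_type", "Plot", "Setting"],
    "Structure_type": ["Narration", "Use_Of_frames"],
    "Criticism": ["Bibliographical", "Psychological", "Cultural"],
}


def all_concepts(taxonomy):
    """Every concept that appears as a parent or as a sub-concept."""
    concepts = set(taxonomy)
    for children in taxonomy.values():
        concepts.update(children)
    return concepts


def intermediate_concepts(taxonomy):
    """Concepts refined into at least one sub-concept."""
    return {concept for concept, children in taxonomy.items() if children}


def final_concepts(taxonomy):
    """Taxonomy leaves: the only concepts students can use to classify."""
    return all_concepts(taxonomy) - intermediate_concepts(taxonomy)


print(sorted(final_concepts(TAXONOMY)))
# ['Bibliographical', 'Cultural', 'Narration', 'Plot', 'Psychological', 'Setting', 'Use_Of_frames']
```

Deriving final concepts as leaves, rather than listing them separately, keeps the two roles consistent whenever the instructor edits the taxonomy.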

3.1.3. Making Annotations

- An anchor. The anchor is an area of the digital document associated with the activity, which students can delimit with a mouse.

- Content. The content is an unconstrained description provided by the student. In @note, annotation content can include text as well as substantially richer multimedia content (images, video, and audio), links to other resources, and any type of HTML5-compliant information.

- A classification. The classification is a set of final concepts taken from the annotation ontology; each annotation must have at least one final concept associated with it. To classify their annotations, students use the representation of the annotation ontology and select the concepts they consider relevant (Figure 2b). The hierarchical organization of concepts and its bracketed representation facilitate this task, helping students identify the most suitable concepts and offering a complete visual snapshot of the ontology.
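The three components of an annotation can be sketched as a small data structure that enforces the classification rule stated above (at least one final concept, drawn from the ontology). This is a hypothetical Python illustration: the class name `Annotation`, the representation of the anchor as character offsets, and the hard-coded set of final concepts (those of Figure 1c) are assumptions, not details of @note's implementation.

```python
from dataclasses import dataclass

# Assumed final concepts: the leaves of the Figure 1c taxonomy.
FINAL_CONCEPTS = frozenset({
    "Narration", "Use_Of_frames", "Plot", "Setting",
    "Bibliographical", "Psychological", "Cultural",
})


@dataclass
class Annotation:
    """Hypothetical annotation record: anchor, free content, classification."""
    anchor: tuple          # assumed (start, end) character offsets of the fragment
    content: str           # unconstrained content, e.g. an HTML5 fragment
    classification: frozenset

    def __post_init__(self):
        start, end = self.anchor
        if not 0 <= start < end:
            raise ValueError("the anchor must delimit a non-empty fragment")
        self.classification = frozenset(self.classification)
        if not self.classification:
            raise ValueError("an annotation needs at least one final concept")
        unknown = self.classification - FINAL_CONCEPTS
        if unknown:
            raise ValueError(f"not final concepts: {sorted(unknown)}")


note = Annotation((120, 185), "<p>The letter frames the narration.</p>",
                  {"Narration", "Use_Of_frames"})
```

Rejecting intermediate concepts such as Structure at construction time mirrors the rule that only final concepts may classify an annotation.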

3.1.4. Assessment of Annotation Activities

- The instructor can select all annotations tagged with a set of concepts, which may be intermediate or final. The selected annotations are those tagged with every final concept chosen by the instructor and with at least one final sub-concept of each intermediate concept chosen. Figure 3a illustrates this mechanism: the selected annotations are those tagged as Cultural and with a final sub-concept of Structure_Type (i.e., with Narration or Use_Of_Frames).

- The instructor can also use a more sophisticated search engine based on arbitrary Boolean queries in disjunctive normal form. Concepts can be asserted or denied and grouped together to express conjunctions, each of which is referred to as a criterion. Final queries are formulated as disjunctions of criteria. For example, Figure 3b illustrates the editing and application of a query with two criteria: the first criterion, named Narrative, selects annotations that are tagged with the Narration concept but not with the Plot concept (asserted concepts are marked with ‘+’, while denied concepts are marked with ‘-’); the second criterion, named Criticism, selects annotations that are tagged with a sub-concept of Criticism but not with any sub-concept of Structure.
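Both selection mechanisms can be sketched in a few lines of Python. In this hypothetical illustration, an annotation matches a concept when it is tagged with that concept or, for an intermediate concept, with at least one of its final sub-concepts (here taken to mean any leaf below it). The concept-based selection requires all chosen concepts to match, and a query is evaluated in disjunctive normal form as a set of named criteria, each a conjunction of asserted or denied concepts. The taxonomy, the annotation records, and all function names are assumptions made for illustration.

```python
# Assumed encoding of the Figure 1c taxonomy (concept -> direct sub-concepts).
TAXONOMY = {
    "Analysis": ["Structure", "Criticism"],
    "Structure": ["Structure_type", "Plot", "Setting"],
    "Structure_type": ["Narration", "Use_Of_frames"],
    "Criticism": ["Bibliographical", "Psychological", "Cultural"],
}


def leaves_under(concept):
    """Final concepts at or below `concept`; a final concept yields itself."""
    children = TAXONOMY.get(concept, [])
    if not children:
        return {concept}
    leaves = set()
    for child in children:
        leaves |= leaves_under(child)
    return leaves


def matches(tags, concept):
    """True if `tags` contains `concept` or one of its final sub-concepts."""
    return bool(set(tags) & leaves_under(concept))


def select(annotations, concepts):
    """Concept-based selection (Figure 3a): every chosen concept must match."""
    return [a for a in annotations if all(matches(a["tags"], c) for c in concepts)]


def evaluate(query, tags):
    """DNF query (Figure 3b): some criterion must hold; a criterion holds when
    each asserted concept matches and each denied concept does not."""
    return any(
        all(matches(tags, concept) == asserted for concept, asserted in criterion)
        for criterion in query.values()
    )


# Hypothetical annotations, identified by number and tagged with final concepts.
notes = [
    {"id": 1, "tags": {"Cultural", "Narration"}},
    {"id": 2, "tags": {"Cultural", "Plot"}},
    {"id": 3, "tags": {"Narration"}},
    {"id": 4, "tags": {"Psychological"}},
    {"id": 5, "tags": {"Narration", "Plot"}},
]

# '+' concepts are encoded as True, '-' concepts as False.
query = {
    "Narrative": [("Narration", True), ("Plot", False)],
    "Criticism": [("Criticism", True), ("Structure", False)],
}

print([a["id"] for a in select(notes, ["Cultural", "Structure_type"])])  # [1]
print([a["id"] for a in notes if evaluate(query, a["tags"])])           # [1, 3, 4]
```

With these sample annotations, the Figure 3a selection returns only annotation 1, while the Figure 3b query returns annotations 1, 3, and 4: annotation 5 fails Narrative because it is also tagged with Plot, and annotation 2 fails Criticism because Plot is a sub-concept of Structure.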

3.2. Pilot Experiment

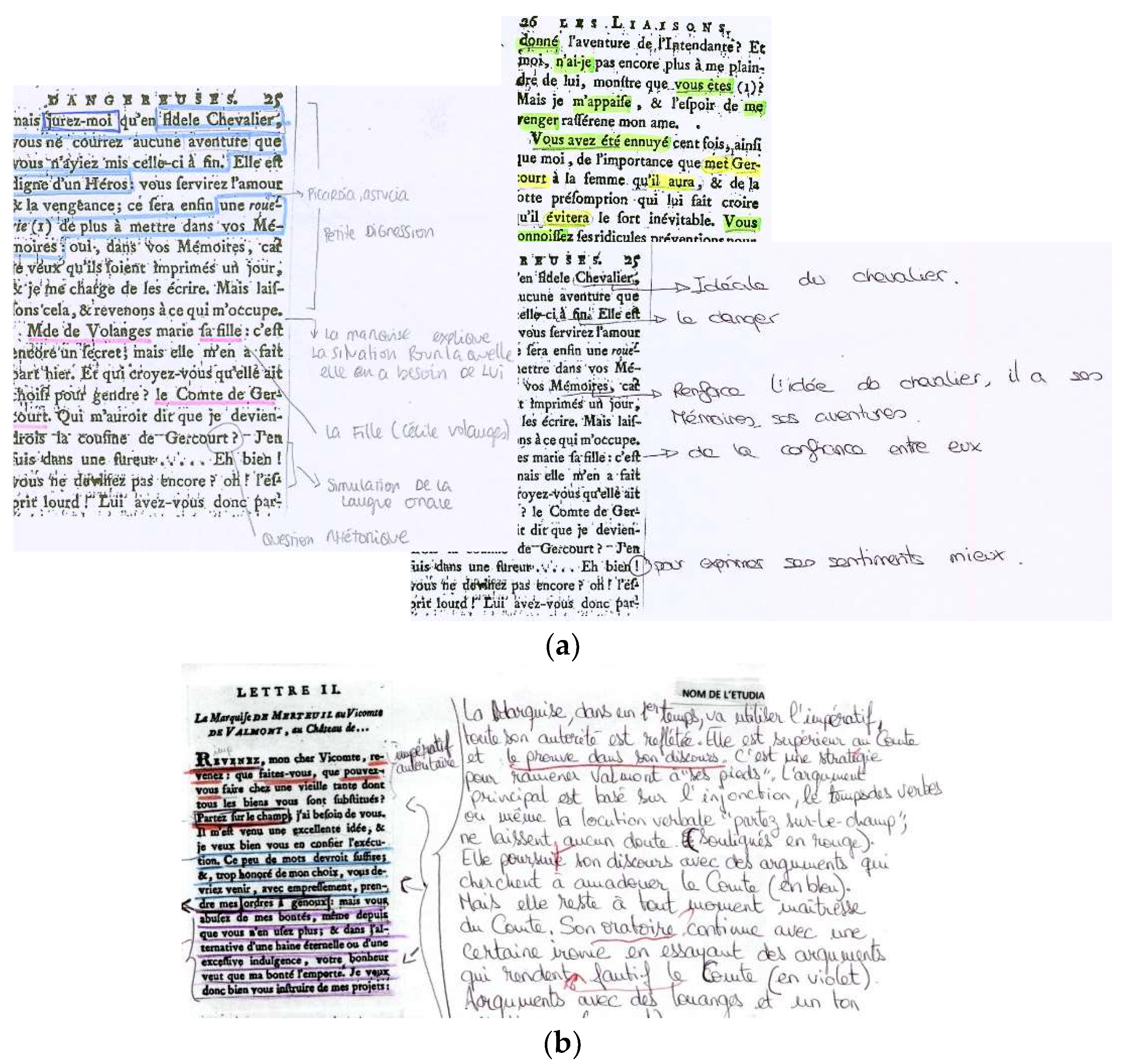

- After reviewing the key principles of structural and thematic narratology for the analysis of narrative texts, the students were instructed in the conventional practice of annotation with paper and pencil [62] to initiate a narrative-type analysis.

- Then, they were asked to annotate a first text obtained from Les Liaisons Dangereuses by eighteenth-century French author Choderlos de Laclos (Amsterdam, Durand, 1784). This activity was employed as a baseline in the within-subject design. Twenty-six of the 28 students participated in this activity (two absences were recorded). Students worked during a one-hour class session and were given the option to finalize the activity during the following week.

- In parallel, an annotation activity was designed in @note for a second text, also taken from Les Liaisons Dangereuses and of similar complexity to the baseline text. Figure 4 outlines the annotation ontology provided by the instructor. As the ontology is aimed at students of French Studies, the instructor used French to name the concepts. This ontology includes and structures basic concepts related to the analysis of a narrative text according to narratological criteria. Its first-level intermediate concepts capture the main aspects addressed in narratology [63]: sociocultural aspects of the text (Contexte socio-culturel), aspects related to the space (Espace) of the narration (i.e., the frame or place where the events occur and the characters are situated), temporal aspects of the narrative (Temps), the actors who drive the action (Actants), aspects related to the author (Auteur), aspects related to the narrator (Narrateur), and aspects related to discourse analysis (Discours). These aspects are refined into more elementary narratological concepts, as outlined in Figure 4. In this way, the ontology guides students through the analysis of a narrative text according to well-defined narratological criteria. The ontology is also applicable and reusable in other contexts, as reflected in the average number of sub-concepts per intermediate concept (4.43), a relatively high value that denotes the horizontal nature of the ontology [64].

- One week after completing the paper-and-pencil baseline annotation activity, a one-hour session was dedicated to instructing students in the critical annotation of texts with @note. In the next session, students undertook the @note activity and worked in class for an additional hour, in which questions about the use of the tool were answered. Similar to the paper-and-pencil baseline activity, the students had a week to complete the critical annotation activity with @note. A total of 27 students participated in this activity: 25 of the 26 students who participated in the previous (paper-and-pencil-based) activity and 2 other students who had not attended this previous activity.
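The horizontality measure cited above, the average number of sub-concepts per intermediate concept, can be computed directly from a taxonomy. The following Python sketch assumes that only direct sub-concepts are counted and, since the full Figure 4 ontology is not reproduced here, applies the measure to the assumed Figure 1c taxonomy instead (4 intermediate concepts with 10 direct sub-concepts in total).

```python
# Assumed Figure 1c taxonomy (concept -> direct sub-concepts), used as a stand-in
# because the complete Figure 4 ontology is not listed in the text.
TAXONOMY = {
    "Analysis": ["Structure", "Criticism"],
    "Structure": ["Structure_type", "Plot", "Setting"],
    "Structure_type": ["Narration", "Use_Of_frames"],
    "Criticism": ["Bibliographical", "Psychological", "Cultural"],
}


def mean_sub_concepts(taxonomy):
    """Average number of direct sub-concepts per intermediate concept."""
    counts = [len(children) for children in taxonomy.values() if children]
    return sum(counts) / len(counts)


print(mean_sub_concepts(TAXONOMY))  # 2.5
```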

4. Results

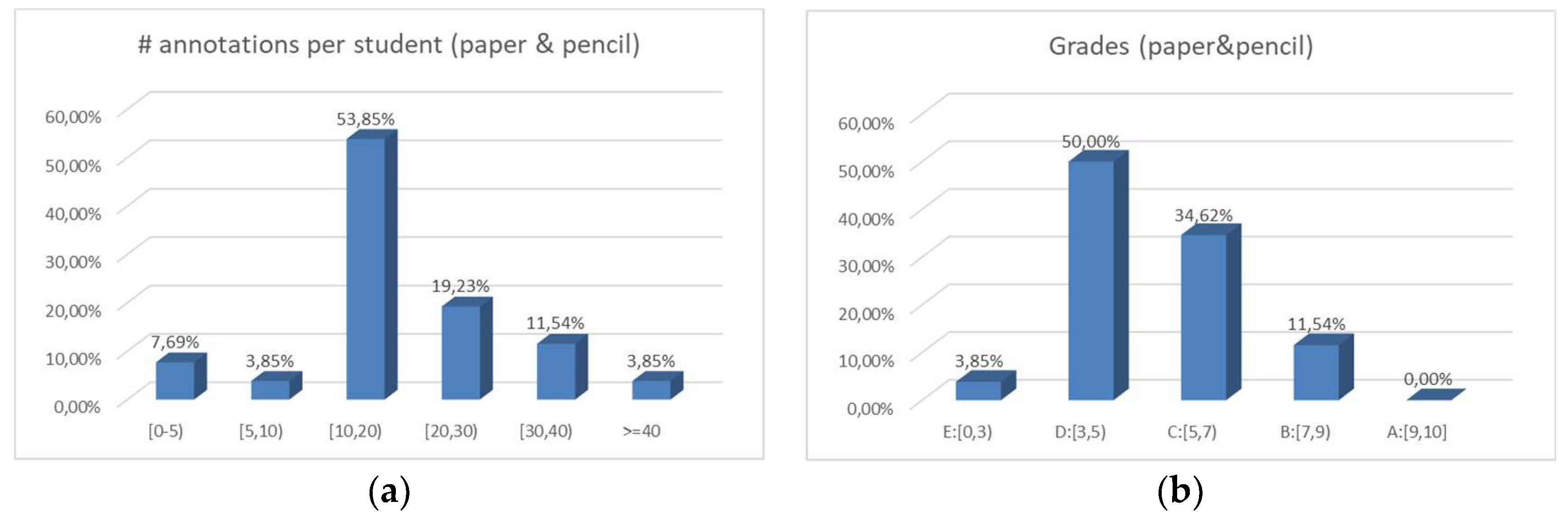

4.1. Paper-and-Pencil-Based Annotation

- As shown in Figure 6, students adopted many different annotation styles, which indicates a lack of systematicity during annotation.

- As also reflected in Figure 6, superfluous annotations minimally related to narratological principles were frequently observed, while many critical aspects were not considered.

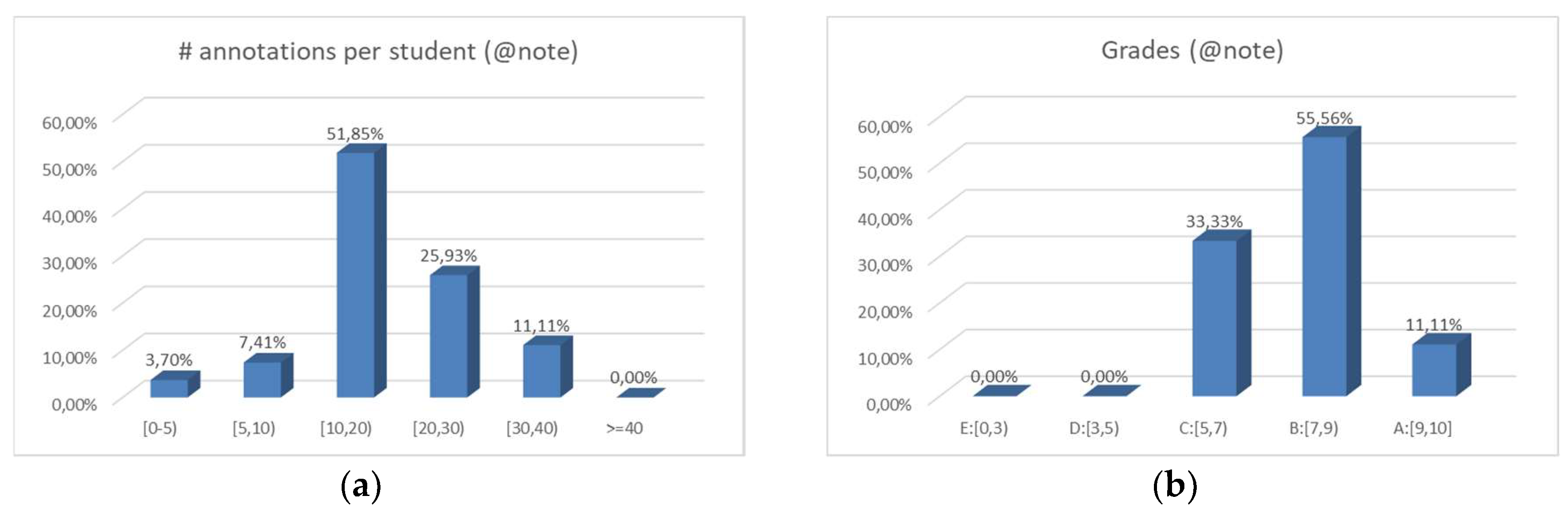

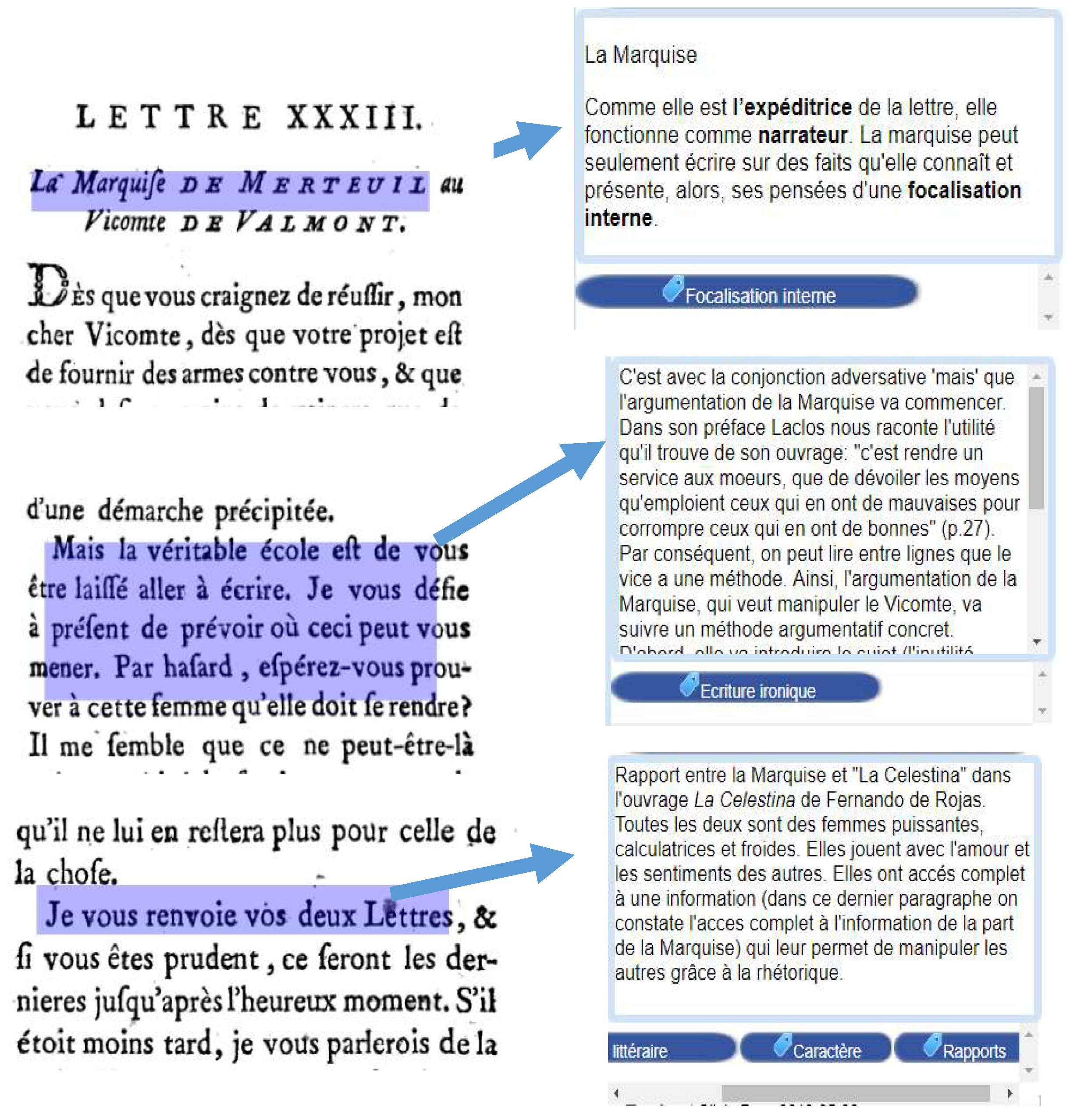

4.2. Annotation with @note

- The instructor observed a greater homogenization in the annotation process due to the annotation discipline introduced by the ontology-guided approach and imposed by the tool. The heterogeneity in the annotation modes disappeared in favor of a single annotation format based on a semantic tagging of the annotations and an elaboration in the form of an enriched free text (Figure 9).

- The instructor observed a considerably more systematic critical annotation process: students were required to tag their annotations with concepts carrying a strong semantic charge and to include strong arguments supporting the tagging, which contributed to a decrease in superfluous annotations.

4.3. Comparison

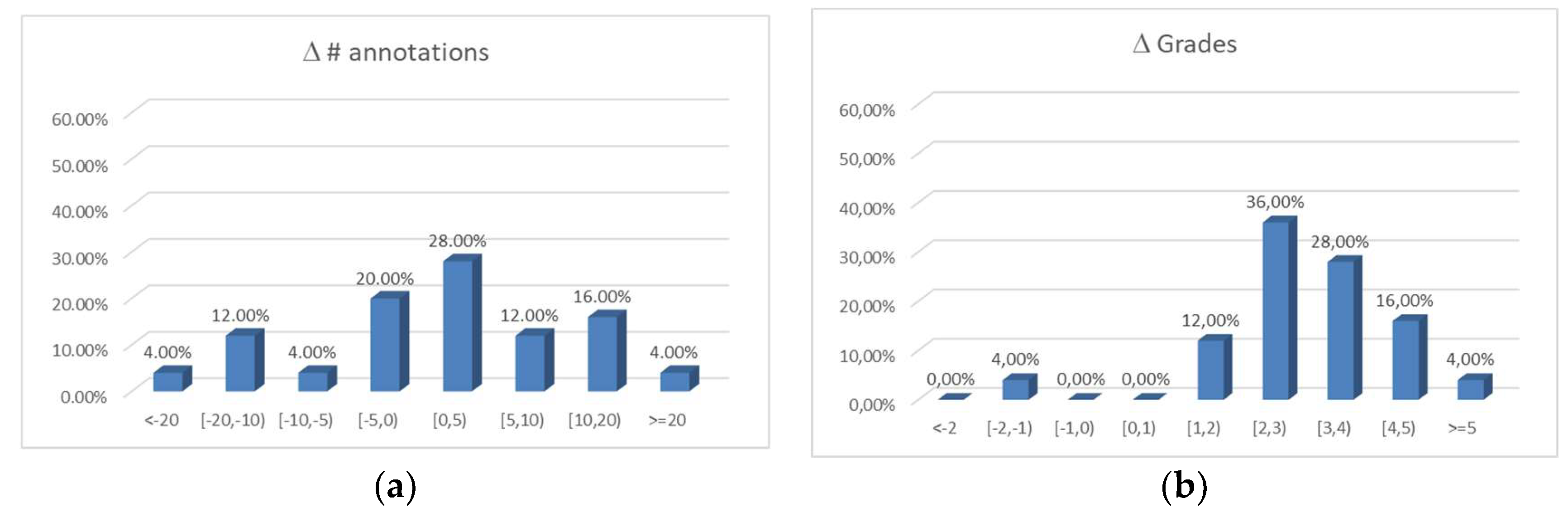

- Figure 10a shows the distribution of the difference between the number of annotations produced with @note and the number produced with paper and pencil for the 25 students who participated in both activities. Although there is a tendency toward positive differences (40% negative versus 60% positive), this trend is not overwhelming. Accordingly, the average difference in the number of annotations is 0.44 (95% CI [−3.7, 4.6]), and there is no statistically significant evidence of a non-zero median for the distribution (Wilcoxon signed-rank test on the number of annotations per student in both activities: W = 150, p = 0.715).

- The average score with @note is almost 2.5 points above the average score in the paper-and-pencil activity. The improvement is statistically significant (Mann–Whitney U test on the scores in both activities: U = 639, p < 0.001).

- This improvement also appears when the analysis focuses on the improvement obtained by each individual student. Taking into account the 25 participants who engaged in both activities, Figure 10b shows the distribution of the grade differences between the @note activity and the paper-and-pencil activity. Most students (84%) increased their grade by more than 2 points. The average individual improvement (2.44 points, 95% CI [1.87, 3.01]) is close to the difference between the activity averages reported above. The corresponding Wilcoxon signed-rank test yields statistically significant evidence (W = 316.5, p < 0.001) in favor of the improvement (i.e., the median of the difference distribution is positive).
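The paired and unpaired comparisons above rest on two rank statistics. The following minimal pure-Python sketch shows how they are computed on hypothetical data; the function names are illustrative, ties receive average ranks, zero differences are dropped from the signed-rank test, and p-values are not computed (libraries such as SciPy provide `scipy.stats.wilcoxon` and `scipy.stats.mannwhitneyu` for that). Conventions for which statistic is reported vary between packages, so this sketch is not claimed to reproduce the exact values above.

```python
def average_ranks(values):
    """Rank values from 1..n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # sorted positions i..j hold ranks i+1..j+1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def wilcoxon_w_plus(before, after):
    """Signed-rank statistic: sum of ranks of positive paired differences."""
    diffs = [b - a for a, b in zip(before, after) if b != a]  # drop zero differences
    ranks = average_ranks([abs(d) for d in diffs])
    return sum(r for r, d in zip(ranks, diffs) if d > 0)


def mann_whitney_u(x, y):
    """U for sample x: pairs in which x exceeds y, counting ties as one half."""
    return sum(1.0 if xi > yi else 0.5 if xi == yi else 0.0 for xi in x for yi in y)


# Hypothetical paired grades for five students (baseline, then tool).
print(wilcoxon_w_plus([5, 6, 7, 8, 9], [6, 4, 10, 8, 13]))  # 8.0
```

In this example the zero difference is dropped and the positive differences 1, 3, and 4 receive ranks 1, 3, and 4, so W+ = 8. For the unpaired test, the two U values computed from each sample's perspective always add up to the product of the sample sizes.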

5. Discussion

- Fatigue was avoided because the two annotation activities (the paper-and-pencil baseline activity and the @note activity) were performed in separate sessions, and in both cases students had sufficient time (one week) to complete the work calmly.

- Potential carryover effects due to practice were minimized. Because the baseline activity was based on paper and pencil rather than on another annotation tool, students explicitly gained no prior practice with other tools for critical annotation. In addition, the text in the @note activity was different from the text in the baseline activity, and the two activities followed radically different annotation paradigms: unguided, free-style annotation in the baseline activity vs. ontology-guided annotation in the @note activity. Therefore, we estimated the probability that the critical annotation experience gained by the students during the baseline activity had significantly influenced their performance in the @note activity to be negligible.

6. Conclusions and Future Research

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

- Colglazier, W. Sustainable development agenda: 2030. Science 2015, 349, 1048–1050.

- Wu, J.; Guo, S.; Huang, H.; Liu, W.; Xiang, Y. Information and Communications Technologies for Sustainable Development Goals: State-of-the-Art, Needs and Perspectives. IEEE Commun. Surv. Tutor. 2018, 20, 2389–2406.

- Daniela, L.; Visvizi, A.; Gutiérrez-Braojos, C.; Lytras, M.D. Sustainable Higher Education and Technology-Enhanced Learning (TEL). Sustainability 2018, 10, 3883.

- Mayes, J.; Morrison, D.; Mellar, H.; Bullen, P.; Oliver, M. Transforming Higher Education through Technology-Enhanced Learning; Higher Education Academy: York, UK, 2009; ISBN 978-1-907207-11-2.

- Kawase, R.; Herder, E.; Nejdl, W. A Comparison of Paper-Based and Online Annotations in the Workplace. In Learning in the Synergy of Multiple Disciplines, Proceedings of the 4th European Conference on Technology Enhanced Learning, Nice, France, 29 September–2 October 2009; Cress, U., Dimitrova, V., Specht, M., Eds.; Springer: Berlin/Heidelberg, Germany, 2009; pp. 240–253.

- Chen, C.-M.; Chen, F.-Y. Enhancing digital reading performance with a collaborative reading annotation system. Comput. Educ. 2014, 77, 67–81.

- Sung, H.-Y.; Hwang, G.-J.; Liu, S.-Y.; Chiu, I.-H. A prompt-based annotation approach to conducting mobile learning activities for architecture design courses. Comput. Educ. 2014, 76, 80–90.

- Kawasaki, Y.; Sasaki, H.; Yamaguchi, H.; Yamaguchi, Y. Effectiveness of highlighting as a prompt in text reading on a computer monitor. In Proceedings of the 8th WSEAS International Conference on Multimedia systems and signal processing (WSEAS), Hangzhou, China, 6–8 August 2008; pp. 311–315.

- Jan, J.-C.; Chen, C.-M.; Huang, P.-H. Enhancement of digital reading performance by using a novel web-based collaborative reading annotation system with two quality annotation filtering mechanisms. Int. J. Human-Comput. Stud. 2016, 86, 81–93.

- Mendenhall, A.; Johnson, T.E. Fostering the development of critical thinking skills, and reading comprehension of undergraduates using a Web 2.0 tool coupled with a learning system. Interact. Learn. Environ. 2010, 18, 263–276.

- Hwang, W.-Y.; Wang, C.-Y.; Sharples, M. A study of multimedia annotation of Web-based materials. Comput. Educ. 2007, 48, 680–699.

- Johnson, T.E.; Archibald, T.N.; Tenenbaum, G. Individual and team annotation effects on students’ reading comprehension, critical thinking, and meta-cognitive skills. Comput. Human Behav. 2010, 26, 1496–1507.

- Archibald, T.N. The Effect of the Integration of Social Annotation Technology, First Principles of Instruction, and Team-Based Learning on Students’ Reading Comprehension, Critical Thinking, and Meta-Cognitive Skills. Ph.D. Thesis, The Florida State University, Tallahassee, FL, USA, 2010.

- Nokelainen, P.; Kurhila, J.; Miettinen, M.; Floreen, P.; Tirri, H. Evaluating the role of a shared document-based annotation tool in learner-centered collaborative learning. In Proceedings of the 3rd IEEE International Conference on Advanced Learning Technologies, Athens, Greece, 9–11 July 2003; pp. 200–203.

- Nokelainen, P.; Miettinen, M.; Kurhila, J.; Floréen, P.; Tirri, H. A shared document-based annotation tool to support learner-centred collaborative learning. Br. J. Educ. Technol. 2005, 36, 757–770.

- Su, A.Y.S.; Yang, S.J.H.; Hwang, W.-Y.; Zhang, J. A Web 2.0-based collaborative annotation system for enhancing knowledge sharing in collaborative learning environments. Comput. Educ. 2010, 55, 752–766.

- Hsu, C.-K.; Hwang, G.-J.; Chang, C.-K. A personalized recommendation-based mobile learning approach to improving the reading performance of EFL students. Comput. Educ. 2013, 63, 327–336.

- Bauer, M.; Zirker, A. Explanatory Annotation of Literary Texts and the Reader: Seven Types of Problems. Int. J. Humanit. Arts Comput. 2017, 11, 212–232.

- Kalboussi, A.; Mazhoud, O.; Kacem, A.H. Comparative study of web annotation systems used by learners to enhance educational practices: features and services. Int. J. Technol. Enhanc. Learn. 2016, 8, 129–150.

- Cigarrán-Recuero, J.; Gayoso-Cabada, J.; Rodríguez-Artacho, M.; Romero-López, M.-D.; Sarasa-Cabezuelo, A.; Sierra, J.-L. Assessing semantic annotation activities with formal concept analysis. Expert Syst. Appl. 2014, 41, 5495–5508.

- Gayoso-Cabada, J.; Ruiz, C.; Pablo-Nuñez, L.; Cabezuelo, A.S.; Goicoechea-de-Jorge, M.; Sanz-Cabrerizo, A.; Sierra-Rodríguez, J.L. A flexible model for the collaborative annotation of digitized literary works. In Proceedings of the Digital Humanities 2012, DH 2012, Conference Abstracts, Hamburg, Germany, 16–22 July 2012; pp. 195–197.

- Gayoso-Cabada, J.; Sanz-Cabrerizo, A.; Sierra, J.-L. @Note: An Electronic Tool for Academic Readings. In Proceedings of the 1st International Workshop on Collaborative Annotations in Shared Environment: Metadata, Vocabularies and Techniques in the Digital Humanities, Florence, Italy, 10 September 2013; pp. 1–4.

- Gayoso-Cabada, J.; Sarasa-Cabezuelo, A.; Sierra, J.-L. Document Annotation Tools: Annotation Classification Mechanisms. In Proceedings of the 6th International Conference on Technological Ecosystems for Enhancing Multiculturality, Salamanca, Spain, 24–26 October 2018; pp. 889–895.

- Pearson, J.; Buchanan, G.; Thimbleby, H.; Jones, M. The Digital Reading Desk: A lightweight approach to digital note-taking. Interact. Comput. 2012, 24, 327–338.

- Kam, M.; Wang, J.; Iles, A.; Tse, E.; Chiu, J.; Glaser, D.; Tarshish, O.; Canny, J. Livenotes: A System for Cooperative and Augmented Note-taking in Lectures. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, Portland, OR, USA, 2–7 April 2005; pp. 531–540.

- Tront, J.G.; Eligeti, V.; Prey, J. Classroom Presentations Using Tablet PCs and WriteOn. In Proceedings of the 36th Annual Conference on Frontiers in Education (IEEE), San Diego, CA, USA, 27–31 October 2006; pp. 1–5.

- Liao, C.; Guimbretière, F.; Anderson, R.; Linnell, N.; Prince, C.; Razmov, V. PaperCP: Exploring the Integration of Physical and Digital Affordances for Active Learning. In Human-Computer Interaction–INTERACT 2007, Proceedings of the 11th IFIP Conference on Human-Computer Interaction Part II, Rio de Janeiro, Brazil, 10–14 September 2007; Baranauskas, C., Palanque, P., Abascal, J., Barbosa, S.D.J., Eds.; Springer: Berlin/Heidelberg, Germany, 2007; pp. 15–28.

- Chatti, M.A.; Sodhi, T.; Specht, M.; Klamma, R.; Klemke, R. u-Annotate: An Application for User-Driven Freeform Digital Ink Annotation of E-Learning Content. In Proceedings of the 6th IEEE International Conference on Advanced Learning Technologies (IEEE), Kerkrade, The Netherlands, 5–7 July 2006; pp. 1039–1043.

- Lu, J.; Deng, L. Examining students’ use of online annotation tools in support of argumentative reading. Australas. J. Educ. Technol. 2013, 29, 161–171.

- Kahan, J.; Koivunen, M.-R.; Prud’Hommeaux, E.; Swick, R.R. Annotea: An open RDF infrastructure for shared Web annotations. Comput. Netw. 2002, 39, 589–608.

- Glover, I.; Hardaker, G.; Xu, Z. Collaborative annotation system environment (CASE) for online learning. Campus-Wide Inform. Syst. 2004, 21, 72–80.

- Lin, S.S.J.; Chen, H.Y.; Chiang, Y.T.; Luo, G.H.; Yuan, S.M. Supporting Online Reading of Science Expository with iRuns Annotation Strategy. In Proceedings of the 7th International Conference on Ubi-Media Computing (IEEE), Ulaanbaatar, Mongolia, 12–14 July 2014; pp. 309–312.

- Chang, M.; Kuo, R.; Chang, M.; Kinshuk; Kung, H. Online annotation system and student clustering platform. In Proceedings of the 8th International Conference on Ubi-Media Computing (IEEE), Colombo, Sri Lanka, 24–26 August 2015; pp. 202–207.

- Asai, H.; Yamana, H. Intelligent Ink Annotation Framework That Uses User’s Intention in Electronic Document Annotation. In Proceedings of the 9th ACM International Conference on Interactive Tabletops and Surfaces, Dresden, Germany, 16–19 November 2014; pp. 333–338.

- Glover, I.; Xu, Z.; Hardaker, G. Online annotation–Research and practices. Comput. Educ. 2007, 49, 1308–1320.

- Pereira Nunes, B.; Kawase, R.; Dietze, S.; Bernardino de Campos, G.H.; Nejdl, W. Annotation Tool for Enhancing E-Learning Courses. In Advances in Web-Based Learning-ICWL 2012, Proceedings of the 11th International Conference on Web Based Learning, Sinaia, Romania, 2–4 September 2012; Popescu, E., Li, Q., Klamma, R., Leung, H., Specht, M., Eds.; Springer: Berlin/Heidelberg, Germany, 2012; pp. 51–60.

- Chen, Y.-C.; Hwang, R.-H.; Wang, C.-Y. Development and evaluation of a Web 2.0 annotation system as a learning tool in an e-learning environment. Comput. Educ. 2012, 58, 1094–1105.

- Cabanac, G.; Chevalier, M.; Chrisment, C.; Julien, C. A Social Validation of Collaborative Annotations on Digital Documents. In Proceedings of the International Workshop on Annotation for Collaboration, Paris, France, 23–24 November 2005; pp. 31–40.

- Bonifazi, F.; Levialdi, S.; Rizzo, P.; Trinchese, R. A Web-based Annotation Tool Supporting e-Learning. In Proceedings of the Working Conference on Advanced Visual Interfaces, Trento, Italy, 22–24 May 2002; pp. 123–128.

- Bottoni, P.; Civica, R.; Levialdi, S.; Orso, L.; Panizzi, E.; Trinchese, R. MADCOW: A Multimedia Digital Annotation System. In Proceedings of the Working Conference on Advanced Visual Interfaces, Gallipoli, Italy, 25–28 May 2004; pp. 55–62.

- Lebow, D.G.; Lick, D.W. HyLighter: An effective interactive annotation innovation for distance education. In Proceedings of the 20th Annual Conference on Distance Teaching and Learning, Madison, WI, USA, 4–6 August 2004; pp. 1–5.

- Kennedy, M. Open Annotation and Close Reading the Victorian Text: Using Hypothes.is with Students. J. Vic. Cult. 2016, 21, 550–558.

- Perkel, J.M. Annotating the scholarly web. Nat. News 2015, 528, 153–154.

- Paradis, J.; Fendt, K. Annotation Studio-Digital Annotation as an Educational Approach in the Humanities and Arts (Tech. Report); MIT White Paper: Cambridge, MA, USA, 2016.

- Anagnostopoulou, C.; Howell, F. Collaborative Online Annotation of Musical Scores for eLearning using A.nnotate.com. In Proceedings of the 3rd International Technology, Education and Development Conference (IATED), Valencia, Spain, 2–4 March 2009; pp. 4071–4079.

- Tseng, S.-S.; Yeh, H.-C.; Yang, S. Promoting different reading comprehension levels through online annotations. Comput. Assist. Lang. Learn. 2015, 28, 41–57.

- Kim, J.-K.; Sohn, W.-S.; Hur, K.; Lee, Y. Increasing Learning Effect by Tag Cloud Interface with Annotation Similarity. Int. J. Adv. Media Commun. 2014, 5, 135–148.

- Bateman, S.; Brooks, C.; McCalla, G.; Brusilovsky, P. Applying Collaborative Tagging to E-Learning. In Proceedings of the Workshop on Tagging and Metadata for Social Information Organization, 16th International World Wide Web Conference, Banff, AB, Canada, 8–12 May 2007; pp. 1–7.

- Bateman, S.; Farzan, R.; Brusilovsky, P.; McCalla, G. OATS: The Open Annotation and Tagging System. In Proceedings of the Conference of the LORNET Research Network (I2LOR’2006), Montreal, QC, Canada, 8–10 November 2006; pp. 1–10.

- Kawase, R.; Herder, E.; Nejdl, W. Annotations and Hypertrails With Spread Crumbs–An Easy Way to Annotate, Refind and Share. In Proceedings of the 6th International Conference on Web Information Systems and Technologies (INSTICC), Valencia, Spain, 7–10 April 2010.

- Silvestre, F.; Vidal, P.; Broisin, J. Tsaap-Notes–An Open Micro-blogging Tool for Collaborative Notetaking during Face-to-Face Lectures. In Proceedings of the 14th IEEE International Conference on Advanced Learning Technologies (IEEE), Athens, Greece, 7–10 July 2014; pp. 39–43.

- Hinze, A.; Heese, R.; Schlegel, A.; Luczak-Rösch, M. User-Defined Semantic Enrichment of Full-Text Documents: Experiences and Lessons Learned. In Proceedings of the Theory and Practice of Digital Libraries, Paphos, Cyprus, 23–27 September 2012; Zaphiris, P., Buchanan, G., Rasmussen, E., Loizides, F., Eds.; Springer: Berlin/Heidelberg, Germany, 2012; pp. 209–214.

- Luczak-Rösch, M.; Heese, R. Linked Data Authoring for Non-Experts. In Proceedings of the WWW2009 Workshop on Linked Data on the Web, Madrid, Spain, 20 April 2009; pp. 1–5.

- Da Rocha, T.R.; Willrich, R.; Fileto, R.; Tazi, S. Supporting Collaborative Learning Activities with a Digital Library and Annotations. In Proceedings of the Education and Technology for a Better World, Bento Gonçalves, Brazil, 27–31 July 2009; Tatnall, A., Jones, A., Eds.; Springer: Berlin/Heidelberg, Germany, 2009; pp. 349–358.

- Azouaou, F.; Desmoulins, C. Teachers’ document annotating: models for a digital memory tool. Int. J. Contin. Eng. Educ. Life Long Learn. 2006, 16, 18–34.

- Azouaou, F.; Desmoulins, C. MemoNote, a context-aware annotation tool for teachers. In Proceedings of the 7th International Conference on Information Technology Based Higher Education and Training (IEEE), Sydney, Australia, 10–13 July 2006; pp. 621–628.

- Azouaou, F.; Mokeddem, H.; Berkani, L.; Ouadah, A.; Mostefai, B. WebAnnot: a learner’s dedicated web-based annotation tool. Int. J. Technol. Enhanc. Learn. 2013, 5, 56–84.

- Kalboussi, A.; Omheni, N.; Mazhoud, O.; Kacem, A.H. An Interactive Annotation System to Support the Learner with Web Services Assistance. In Proceedings of the 15th IEEE International Conference on Advanced Learning Technologies, Hualien, Taiwan, 6–9 July 2015; pp. 409–410.

- Barab, S.; Squire, K. Design-Based Research: Putting a Stake in the Ground. J. Learn. Sci. 2004, 13, 1–14.

- Greenwald, A.G. Within-subjects designs: To use or not to use? Psychol. Bull. 1976, 83, 314–320.

- Bennett, A.J.; Royle, N. An Introduction to Literature, Criticism and Theory: Key Critical Concepts; Prentice Hall/Harvester Wheatsheaf: Upper Saddle River, NJ, USA; London, UK, 1995; ISBN 0-13-355215-2.

- Bold, M.R.; Wagstaff, K.L. Marginalia in the digital age: Are digital reading devices meeting the needs of today’s readers? Libr. Inf. Sci. Res. 2017, 39, 16–22.

- Bal, M. Narratology: Introduction to the Theory of Narrative, 4th ed.; University of Toronto Press: Toronto, ON, Canada, 2017.

- Tartir, S.; Arpinar, I.-B.; Moore, M.; Sheth, A.-P.; Aleman-Meza, B. OntoQA: Metric-Based Ontology Quality Analysis. In Proceedings of the IEEE Workshop on Knowledge Acquisition from Distributed, Autonomous, Semantically Heterogeneous Data and Knowledge Sources, Houston, TX, USA, 27 November 2005.

- Lysaker, J.; Hopper, E. A Kindergartner’s Emergent Strategy Use During Wordless Picture Book Reading. Read. Teach. 2015, 68, 649–657.

- Gómez-Albarrán, M. The Teaching and Learning of Programming: A Survey of Supporting Software Tools. Comput. J. 2005, 48, 130–144.

- Paakki, J. Attribute Grammar Paradigms–A High-level Methodology in Language Implementation. ACM Comput. Surv. 1995, 27, 196–255.

- Rodríguez-Cerezo, D.; Sarasa-Cabezuelo, A.; Gómez-Albarrán, M.; Sierra, J.-L. Serious games in tertiary education: A case study concerning the comprehension of basic concepts in computer language implementation courses. Comput. Human Behav. 2014, 31, 558–570.

- Gayoso-Cabada, J.; Gómez-Albarrán, M.; Sierra, J.-L. Query-Based Versus Resource-Based Cache Strategies in Tag-Based Browsing Systems. In Maturity and Innovation in Digital Libraries, Proceedings of the 20th International Conference on Asia-Pacific Digital Libraries, Hamilton, New Zealand, 19–22 November 2018; Dobreva, M., Hinze, A., Žumer, M., Eds.; Springer International Publishing: Cham, Switzerland, 2018; pp. 41–54.

- Gayoso-Cabada, J.; Rodríguez-Cerezo, D.; Sierra, J.-L. Multilevel Browsing of Folksonomy-Based Digital Collections. In Web Information Systems Engineering–WISE 2016, Proceedings of the 17th International Conference, Shanghai, China, 8–10 November 2016; Cellary, W., Mokbel, M.F., Wang, J., Wang, H., Zhou, R., Zhang, Y., Eds.; Springer International Publishing: Cham, Switzerland, 2016; pp. 43–51.

- Gayoso-Cabada, J.; Rodríguez-Cerezo, D.; Sierra, J.-L. Browsing Digital Collections with Reconfigurable Faceted Thesauri. In Complexity in Information Systems Development, Proceedings of the 25th International Conference on Information Systems Development; Goluchowski, J., Pankowska, M., Linger, H., Barry, C., Lang, M., Schneider, C., Eds.; Springer International Publishing: Cham, Switzerland, 2017; pp. 69–86.

- Buendía, F.; Gayoso-Cabada, J.; Sierra, J.-L. From Digital Medical Collections to Radiology Training E-Learning Courses. In Proceedings of the 6th International Conference on Technological Ecosystems for Enhancing Multiculturality, Salamanca, Spain, 24–26 October 2018; pp. 488–494.

- Buendía, F.; Gayoso-Cabada, J.; Sierra, J.-L. Using Digital Medical Collections to Support Radiology Training in E-learning Platforms. In Lifelong Technology-Enhanced Learning, Proceedings of the 13th European Conference on Technology Enhanced Learning, EC-TEL 2018, Leeds, UK, 3–5 September 2018; Pammer-Schindler, V., Pérez-Sanagustín, M., Drachsler, H., Elferink, R., Scheffel, M., Eds.; Springer International Publishing: Cham, Switzerland, 2018; pp. 566–569.

- Buendía, F.; Gayoso-Cabada, J.; Sierra, J.-L. Generation of Standardized E-Learning Content from Digital Medical Collections. J. Med. Syst. 2019, 43, 188.

| Annotation Classification Approach | Examples of Tools |
|---|---|
| Absence of classification mechanisms | Digital Reading Desk [24], Livenotes [25], WriteOn [26], PaperCP [27], u-Annotate [28] |
| Predefined annotation modes | Adobe Reader (acrobat.adobe.com), PDF Annotator (www.pdfannotator.com), Diigo’s (www.diigo.com) annotation tool [29], Amaya’s annotation tool [30], Annozilla (annozilla.mozdev.org), CASE [31], CON2ANNO [32], Online annotation system [33], VPen [11], IIAF [34] |
| Pre-established semantic categories | eLAWS and Annoty [35], Highlight [36], PAMS 2.0 [16], MyNote [37], Tafannote [38], WCRAS-TQAFM [9], CRAS-RAID [6], UCAT [39], MADCOW [40] |
| Folksonomies | HyLighter (www.hylighter.com) [41], Hypothes.is (web.hypothes.is) [42,43], Annotation Studio [44], A.nnotate [45,46], Note-taking [47], OATS [48,49], SpreadCrumbs [5,50], Tsaap-Notes [51] |
| Ontologies | Loomp [52,53], DLNotes [54], MemoNote [55,56], WebAnnot [57], New-WebAnnot [58] |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Gayoso-Cabada, J.; Goicoechea-de-Jorge, M.; Gómez-Albarrán, M.; Sanz-Cabrerizo, A.; Sarasa-Cabezuelo, A.; Sierra, J.-L. Ontology-Enhanced Educational Annotation Activities. Sustainability 2019, 11, 4455. https://doi.org/10.3390/su11164455
