1. Introduction

Traditional aerial target detection methods [1], such as hand-crafted feature extractors and sliding-window strategies, have made considerable progress but often suffer from limited computational efficiency, weak feature expression, and insufficient robustness. Driven by advances in deep learning, one-stage and two-stage detectors have become the prevailing architectures in modern object detection research. One-stage frameworks such as SSD [2], RetinaNet [3], and the YOLO series [4,5] achieve end-to-end detection by learning discriminative features directly from images and predicting results without generating region proposals in advance. Relying on densely distributed preset anchors in the input image, these models quickly output object categories and bounding box positions, enabling efficient target detection. In contrast, two-stage detectors represented by Faster R-CNN [6], Mask R-CNN [7], and other improved variants [8,9,10] adopt a hierarchical detection strategy. A Region Proposal Network first locates and extracts potential object-containing Regions of Interest (RoIs) from the image. The detection head then processes the features of these RoIs to refine bounding-box regression and classify objects. Although such methods have markedly improved feature representation, they seldom fully account for the adaptive matching between bounding boxes and diverse object shapes during regression. Consequently, overall detection performance is constrained to some extent.

In recent years, refining bounding box representations has become a core research direction in remote sensing object detection [11,12,13]. Ding et al. [14] proposed transforming horizontal regions of interest (HRoIs) into rotated regions of interest (RRoIs), which markedly reduces the number of predefined anchors relative to conventional anchor-based pipelines. This not only boosts computational efficiency but also strengthens the model’s adaptability to targets with arbitrary orientations. The Gliding Vertex method [15] represents objects as quadrilaterals, supporting a more accurate depiction of target contours. Approaches including PAA [16] and IQDet [17] rely on a Gaussian Mixture Model (GMM) to model the distribution of targets. By dynamically optimizing the selection of positive and negative samples, these schemes allow bounding box regression to make better use of critical information in ground-truth annotations. Although representative works such as [18,19,20] have achieved remarkable progress in bounding box optimization and improved detection accuracy and robustness, considerable challenges remain.

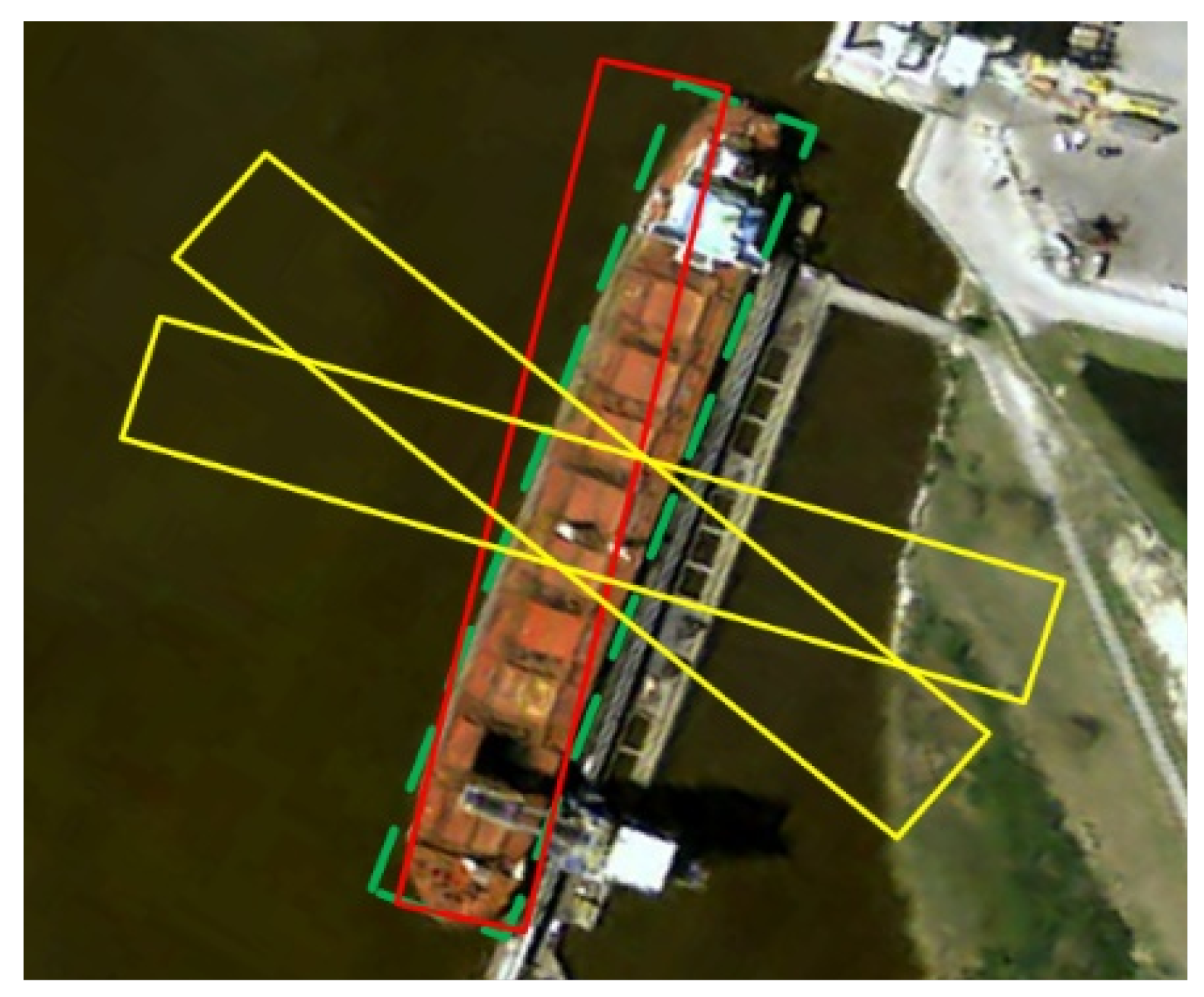

For targets with large aspect ratios, including slender bridges and ships where the aspect ratio exceeds a certain value, a slight angular deviation may result in a significant decline in IoU, as shown in Figure 1. The capability of bounding boxes to capture the features of large-aspect-ratio targets efficiently and precisely relies on three core procedures [21,22]: (1) the representativeness of the learned samples; (2) the accuracy and efficacy of the matching strategy; (3) the optimality of the designed objective function. These three aspects jointly determine the efficiency and precision of bounding box representation within the model. Taking these procedures into account, we have identified two key challenges in accurately capturing the features of elongated targets with bounding boxes.

To begin with, in the sample selection phase, assignment strategies based on fixed IoU thresholds are unable to cover elongated targets. Second, during the regression and loss function design stages, the bounding box regression task for elongated targets is more intricate compared to that for ordinary targets. The drastic fluctuations in the gradients further contribute to unstable model training. In the subsequent sections, we elaborate on these two challenges in detail.

(1) Missing out on high-quality sample anchors:

Object detection tasks face distinctive difficulties when identifying elongated targets such as ships and bridges. Assessing predicted anchors exclusively with the Intersection over Union (IoU) metric often fails to capture the key characteristics of the target, as shown in Figure 2. This problem is further exacerbated by the high aspect ratio of slender targets. A slight localization deviation can lead to a considerable drop in IoU, as demonstrated in Figure 3. As a result, anchor boxes (marked in red) that contain key point information might be ignored, which prevents the model from learning the essential features. Such biases not only undermine the model’s ability to accurately locate the bounding boxes of elongated targets but may also reduce overall detection precision.
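The sensitivity described above can be checked numerically. The following minimal sketch, which is an illustration rather than part of the proposed method, computes the IoU between a box and a copy of itself rotated by a small angle, for boxes of equal area but different aspect ratios. The function names, the fixed area of 900 pixels, and the 5° deviation are assumptions chosen for illustration; the intersection is obtained with standard Sutherland-Hodgman polygon clipping.

```python
import math

def rect_corners(cx, cy, w, h, angle_deg):
    """Corners of a rotated rectangle, in counter-clockwise order."""
    a = math.radians(angle_deg)
    c, s = math.cos(a), math.sin(a)
    half = [(-w / 2, -h / 2), (w / 2, -h / 2), (w / 2, h / 2), (-w / 2, h / 2)]
    return [(cx + x * c - y * s, cy + x * s + y * c) for x, y in half]

def polygon_area(poly):
    """Absolute polygon area via the shoelace formula."""
    total = 0.0
    for i in range(len(poly)):
        x1, y1 = poly[i]
        x2, y2 = poly[(i + 1) % len(poly)]
        total += x1 * y2 - x2 * y1
    return abs(total) / 2

def clip(subject, clipper):
    """Sutherland-Hodgman: clip `subject` against a convex CCW `clipper`."""
    output = subject
    for i in range(len(clipper)):
        if not output:
            break
        ax, ay = clipper[i]
        bx, by = clipper[(i + 1) % len(clipper)]

        def side(p):
            # > 0 when p lies to the left of edge a->b (inside for CCW order)
            return (bx - ax) * (p[1] - ay) - (by - ay) * (p[0] - ax)

        inp, output = output, []
        for j in range(len(inp)):
            p, q = inp[j], inp[(j + 1) % len(inp)]
            sp, sq = side(p), side(q)
            if sp >= 0:
                output.append(p)
            if (sp > 0 and sq < 0) or (sp < 0 and sq > 0):
                t = sp / (sp - sq)  # crossing point of segment p->q with the edge
                output.append((p[0] + t * (q[0] - p[0]), p[1] + t * (q[1] - p[1])))
    return output

def rotated_iou(box_a, box_b):
    """IoU of two rotated boxes given as (cx, cy, w, h, angle_deg)."""
    inter_poly = clip(rect_corners(*box_a), rect_corners(*box_b))
    inter = polygon_area(inter_poly) if len(inter_poly) >= 3 else 0.0
    union = box_a[2] * box_a[3] + box_b[2] * box_b[3] - inter
    return inter / union

def iou_under_rotation(aspect_ratio, angle_deg=5.0, area=900.0):
    """IoU between a box and the same box rotated by angle_deg about its center."""
    h = math.sqrt(area / aspect_ratio)
    w = aspect_ratio * h
    return rotated_iou((0.0, 0.0, w, h, 0.0), (0.0, 0.0, w, h, angle_deg))

for ar in (1, 3, 10):
    print(f"aspect ratio {ar}:1 -> IoU {iou_under_rotation(ar):.3f}")
```

Under these assumptions the IoU falls as the aspect ratio grows, even though the angular error is identical for every box.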

(2) Unstable training caused by drastic changes in the gradient of the regression function:

Models typically prioritize anchors that generate larger loss gradients to refine predictions more accurately. However, the boundaries of elongated targets introduce unique challenges. First, as illustrated in Figure 1, even minor coordinate errors can trigger a sharp surge in loss gradients, so the loss function must be sensitive to such subtle variations. Many existing loss functions are not adapted to this characteristic: they fail to prompt the model to pay sufficient attention to these small errors, which hinders precise localization of slender targets during training.

Furthermore, as illustrated in Figure 3, anchors that have minimal overlap with the ground truth (the yellow anchors in the figure) can also generate large loss gradients during coordinate regression. Such low-quality samples can mislead model training, often resulting in unstable backpropagation and a subsequent decline in model performance.
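To make this gradient behaviour concrete, the following sketch compares the gradients of two standard regression losses, L2 and smooth L1, as the coordinate error grows. It is a generic illustration and not the GDE-Loss proposed in this paper; the error values and the transition point `beta` are assumed for illustration.

```python
def l2_grad(err):
    """Gradient of the L2 loss 0.5 * err**2 with respect to err."""
    return err

def smooth_l1_grad(err, beta=1.0):
    """Gradient of smooth L1: quadratic below beta, linear above it."""
    if abs(err) < beta:
        return err / beta
    return 1.0 if err > 0 else -1.0

# Small errors stand for nearly correct anchors, large errors for poorly overlapping ones
for err in (0.05, 0.5, 5.0, 50.0):
    print(f"error {err:>5}: L2 grad {l2_grad(err):>6.2f}, "
          f"smooth-L1 grad {smooth_l1_grad(err):.2f}")
```

With L2, the poorly overlapping sample (error 50) contributes a gradient 1000 times larger than the nearly correct one (error 0.05). Smooth L1 caps the large gradient but still assigns the small error a proportionally small gradient, so neither loss emphasizes the subtle errors that matter for slender targets.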

Tackling these issues requires more advanced regression strategies and loss functions that allow bounding boxes to adapt to the characteristics of slender targets. We argue that an optimal bounding box representation ought to have the following attributes: (1) Precise sampling strategy: it should supply adequately representative samples for targets with varied orientations and shapes, especially elongated ones, so that the training set contains key information. (2) Efficient regression evaluation criteria: evaluation metrics and loss functions need to reflect the performance of bounding box regression precisely, particularly its accuracy and robustness on elongated targets. (3) Efficient deployment capability: the bounding box representation should minimize the computational burden on the detection head while maintaining accuracy, which demands simplified algorithms that support rapid deployment and real-time processing in practical applications. To this end, we propose the following methods:

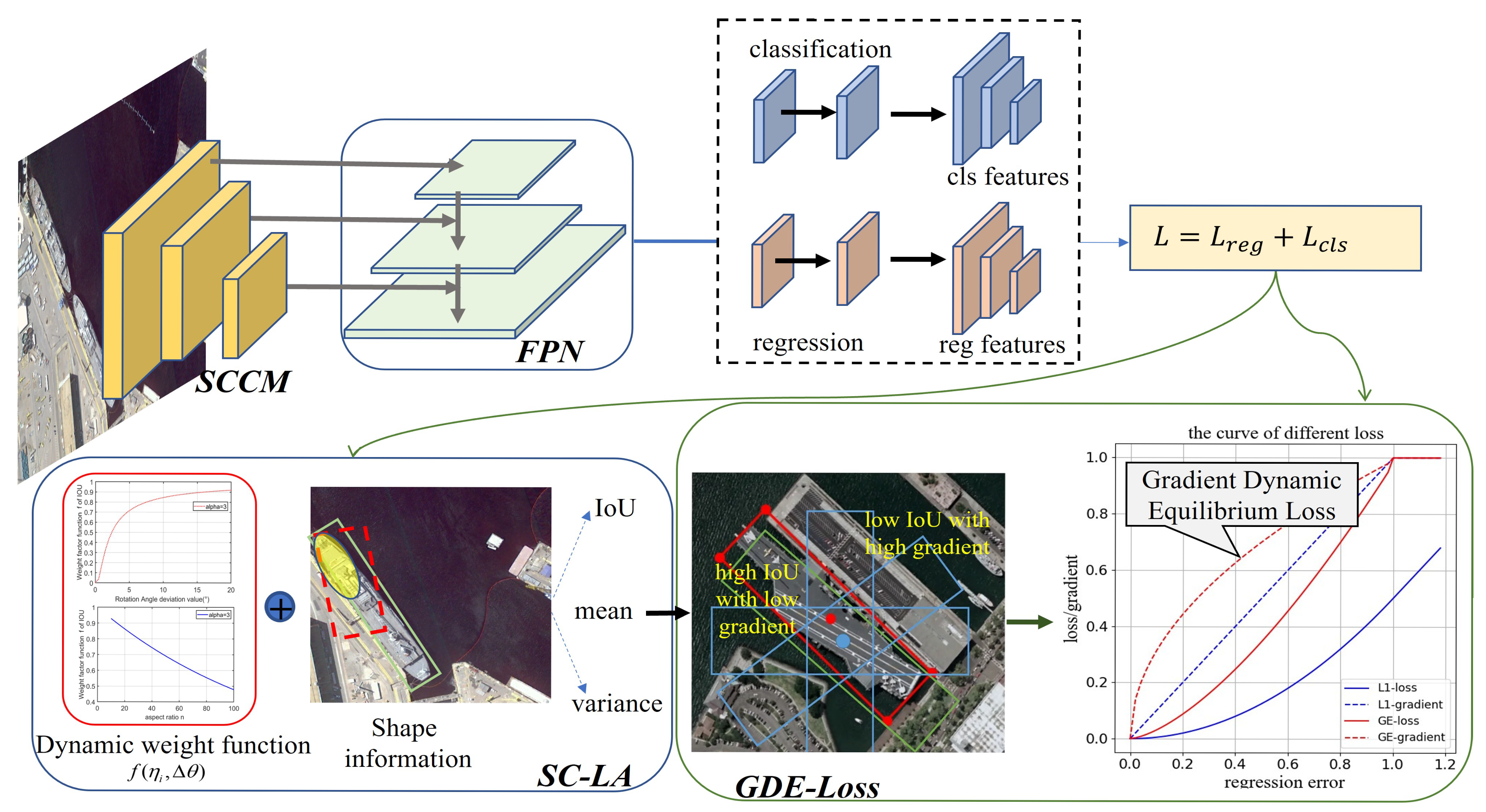

(1) Backbone: By employing a multi-branch parallel framework to integrate local contextual features and specific directional characteristics captured by square convolution and large-kernel strip convolution, we weaken the interference of background noise and realize accurate extraction of key features for targets with varying aspect ratios.

(2) Label assignment strategy: To address the issue of high-quality sample anchor omission caused by slender targets, this paper proposes a label assignment strategy named SC-LA. On the basis of the IoU metric, this strategy incorporates two additional evaluation indices: angular difference and aspect ratio. Specifically, when a target has a high aspect ratio and the angular deviation between samples and ground truths is small, the IoU threshold is dynamically reduced. This ensures that high-quality samples containing key information are assigned as positive samples, enabling the model to more thoroughly learn the characteristics of these samples and thereby improve detection accuracy.

(3) Loss function: To enhance localization capability for slender targets, we put forward the GDE-Loss function. Through fine-grained adjustment of the loss function gradient, our GDE-Loss can effectively mitigate gradient instability during the regression of large-aspect-ratio targets, promoting better model convergence.
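The idea behind the SC-LA label assignment in item (2) can be sketched as follows. The exact weighting function is not specified in this section, so the functional form, the names `dynamic_iou_threshold` and `is_positive`, and all constants (`base_thr`, `min_thr`, `angle_gain`) are hypothetical; they only illustrate a positive-sample threshold that drops for slender, well-aligned samples.

```python
import math

def dynamic_iou_threshold(aspect_ratio, angle_diff_deg,
                          base_thr=0.5, min_thr=0.3, angle_gain=5.0):
    """Hypothetical shape-aware IoU threshold (not the paper's exact formula)."""
    # Elongation in [0, 1): 0 for a square, approaching 1 for very slender targets
    elongation = 1.0 - 1.0 / max(aspect_ratio, 1.0)
    # Alignment in (0, 1]: 1 when the angles agree, decaying as the gap grows;
    # a larger angle_gain makes the angle gap suppress the reduction more strongly
    alignment = math.exp(-angle_gain * math.radians(abs(angle_diff_deg)))
    return base_thr - (base_thr - min_thr) * elongation * alignment

def is_positive(iou, aspect_ratio, angle_diff_deg):
    """Assign a sample as positive if its IoU clears the shape-aware threshold."""
    return iou >= dynamic_iou_threshold(aspect_ratio, angle_diff_deg)

# Same IoU and angle gap: accepted for a slender target, rejected for a square one
print(is_positive(0.4, aspect_ratio=10.0, angle_diff_deg=2.0))
print(is_positive(0.4, aspect_ratio=1.0, angle_diff_deg=2.0))
```

In this sketch a square target always keeps the base threshold, while a 10:1 target with a small angular gap is accepted at a lower IoU; a large angular gap pushes the threshold back toward the base value.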

Experimental results indicate that our detection head achieves superior performance compared to other state-of-the-art approaches on the benchmark datasets DOTA, UCAS-AOD, and HRSC2016. This outstanding outcome demonstrates the considerable potential of the proposed method in the domains of object detection and remote sensing image analysis.

4. Experiments

Experiments were conducted on typical public datasets, i.e., DOTA, HRSC2016, and UCAS-AOD. Detailed information about the datasets, method implementation, and experimental results is presented in the following subsections.

4.1. Datasets

DOTA [33] is a large-scale aerial dataset consisting of 2806 high-resolution images of varying dimensions collected from various remote sensing platforms (e.g., satellites and unmanned aerial vehicles (UAVs)). The height and width of these images range from 800 to 4000 pixels. Annotated with rotated bounding boxes, the dataset contains 188,282 target instances that exhibit significant scale differences and dense distribution. These annotated instances fall into 15 categories, including roundabouts (RA), harbours (HA), swimming pools (SP), helicopters (HC), planes (PL), and others.

The HRSC2016 [34] dataset is a high-resolution satellite imagery dataset. It comprises 436 training images, 181 validation images for performance evaluation, and 444 test images. The images range in size from 300 × 300 pixels to 1500 × 900 pixels, thus covering a broad spectrum of ship scales. Additionally, the dataset includes targets with diverse distributions (ships of different types, sizes, and colors) set against complex backgrounds (e.g., clouds and coastlines).

UCAS-AOD [35] is a benchmark for aerial object detection that focuses specifically on cars and aircraft. Its images capture various types of cars and aircraft under different environmental conditions, including variations in weather and season. For this research, 1057 images were randomly selected for model training, while the remaining 302 images were used as the test set. The dataset offers rich target diversity: it includes cars and aircraft of different models and colors, distributed across background environments such as airport runways, parking lots, and urban streets.

To clarify the data distribution for reproducibility, we state the train/val/test splits for all datasets used in this study, with percentages computed from the image counts: for the DOTA dataset, we adopt a split of 1964 training images (70%), 281 validation images (10%), and 561 test images (20%); the HRSC2016 dataset is divided into 436 training images (41%), 181 validation images (17%), and 444 test images (42%); the UCAS-AOD dataset consists of 1057 training images (78%) and 302 test images (22%) without an independent validation set.
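The split percentages follow directly from the image counts stated above. A minimal sketch for recomputing them is given below; the helper name `split_percentages` and the dictionary layout are illustrative assumptions.

```python
def split_percentages(counts):
    """Return each split's share of the total image count, in percent."""
    total = sum(counts.values())
    return {name: round(100 * n / total, 1) for name, n in counts.items()}

# Image counts as stated in the text
splits = {
    "DOTA": {"train": 1964, "val": 281, "test": 561},
    "HRSC2016": {"train": 436, "val": 181, "test": 444},
    "UCAS-AOD": {"train": 1057, "test": 302},
}

for name, counts in splits.items():
    print(name, sum(counts.values()), split_percentages(counts))
```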

4.2. Implementation Details

In the present study, two baseline models were established: an anchor-based framework adopting ResNet-101 and an anchor-free architecture based on RepPoints. Both models use a backbone for feature extraction and integrate two detection heads to refine predictive outputs. During training, the stochastic gradient descent (SGD) optimizer was employed, with its initial learning rate, momentum, and weight decay set to 0.012, 0.9, and 0.0001, respectively.

To evaluate the performance of the models, training was conducted on HRSC2016 and UCAS-AOD for 100 and 120 epochs, respectively. In the anchor-free RepPoints model, the number of point sets was set to 12. The weighting parameter was tuned empirically on both datasets. Experiments were conducted using the MMDetection-1.1, PyTorch-1.3, and MMRotate frameworks, with hardware consisting of 11 GB of RAM and six GPUs with a total memory of 62 GB. Data augmentation strategies included random flipping and random rotation. For a fair comparison with other methods, the results for the baseline models and our proposed method, presented in Table 1 and Table 2, were obtained with multi-scale training and data augmentation.
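For reference, the training settings stated in this subsection can be collected in an MMDetection-style configuration fragment. The `optimizer` dictionary follows the usual MMDetection config convention; the variable names `max_epochs` and `num_points`, and the mapping of epochs to datasets in the order they are listed, are assumptions made for illustration rather than the authors' actual configuration file.

```python
# Optimizer settings as stated in the text (MMDetection-style dict config)
optimizer = dict(type="SGD", lr=0.012, momentum=0.9, weight_decay=0.0001)

# Training length per dataset (hypothetical key names; values from the text)
max_epochs = {"HRSC2016": 100, "UCAS-AOD": 120}

# Number of points per point set in the anchor-free RepPoints baseline
num_points = 12

print(optimizer, max_epochs, num_points)
```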

4.3. Ablation Studies

Evaluation of Each Proposed Component. To validate the effectiveness of the proposed modules, we performed corresponding ablation experiments.

Table 1 presents the experimental results of the anchor-free RepPoints method on the two datasets. The baseline model achieves mAP scores of only 85.63% and 86.0%, because it tends to ignore key features of targets with large aspect ratios. When integrating the SC-LA strategy with GDE-Loss, the detector’s performance is improved by 3.09%. This enhancement indicates that the dynamic weighting function in the SC-LA strategy can adaptively lower the IoU threshold according to target shapes. This mechanism lets the model learn from more informative samples, so that it captures the key features of targets and detection accuracy improves.

Furthermore, we introduced the GDE-Loss function, which enhances the model’s sensitivity to samples with minor errors through gradient fine-tuning while alleviating the excessive focus on hard samples. This strategy effectively stabilizes the model’s gradient during the regression process, leading to further performance improvements—specifically, mAP increases by 1.08% and 3.32% on the two datasets, respectively.

Additionally, we carried out experiments on the anchor-based ResNet-101 model, and similar performance improvements were observed on both datasets (Table 2). These results confirm that the proposed strategy is effective in enhancing target detection performance, particularly for challenging large-aspect-ratio targets.

Compared with using a single module, a network architecture that combines multiple modules yields superior performance. Notably, the integration of the SC-LA and GDE-Loss modules enriches the training process with samples containing critical features and provides efficient, precise regression guidance, all without introducing excessive computational complexity. This enhancement directly contributes to improved regression and classification outcomes. With the anchor-based ResNet-101 as the backbone, the model attains its best performance, with mean average precision (mAP) values of 90.02% and 90.17%.

Evaluation of the parameters within the module: On HRSC2016, using the anchor-based ResNet-101 backbone with only SC-LA included, sensitivity experiments were conducted to test the effect of the SC-LA parameter (Table 3).

As observed from the table, when the SC-LA parameter is less than 3, the lower its value, the lower the mAP. In this range the angular difference has a weaker impact on the weight function. For high-aspect-ratio targets, the corresponding IoU threshold is then lowered excessively, which may lead to some low-quality samples being misclassified as positive and thus to redundant information in the positive set. Conversely, when the parameter exceeds 3, the higher its value, the lower the mAP. An excessively large value over-restrains the reduction of the IoU threshold; as a result, even some samples that contain key target features may be erroneously excluded from the positive set. Such over-restraint limits the model’s ability to learn key target features, thereby impairing detection performance.

When the parameter equals 3, the highest mAP of 91.029% is achieved. Under this condition, the SC-LA strategy adapts well to target shapes and effectively learns target features: it neither excessively suppresses high-quality samples with angular differences nor misclassifies low-quality samples as positive.

Furthermore, with the SC-LA strategy adopted (parameter value 3), we conducted sensitivity experiments on the GDE-Loss parameter to verify the impact of the GDE-Loss.

It can be observed that when the GDE-Loss parameter is less than 15, the mAP declines as a result of the characteristics of elongated ship targets (Table 4). Specifically, at values of 0.5, 1, 5, and 10, the loss function fails to capture the sharp change that coordinate errors cause for high-aspect-ratio targets. This setting may cause the model to concentrate excessively on unimportant samples, thereby limiting detection accuracy. When the parameter equals 15, the mAP reaches a peak of 91.29%, indicating that the gradient settings achieve an appropriate balance on the HRSC2016 dataset. Under this condition, the model avoids excessive attention to unimportant samples and thus detects elongated ship targets accurately.

Complexity analysis of SOBA-Net: Table 5 compares the number of parameters, FLOPs, and inference time of SOBA-Net with those of the baseline models in the same hardware environment (NVIDIA Tesla V100 GPU). With the addition of the three core modules, SOBA-Net decreases the number of parameters by 10%, FLOPs by 50%, and inference time by 4%, maintaining computational efficiency comparable to the baseline models and verifying the practicality of the method.

4.4. Comparative Experiments

A comprehensive comparison between SOBA-Net and other approaches was conducted on the DOTA dataset (Table 6). As the table shows, our proposed SOBA-Net attains the highest AP scores for the bridge (BR), ship (SH), soccer-ball field (SBF), roundabout (RA), swimming pool (SP), and helicopter (HC) categories, with respective values of 63.54, 89.35, 72.41, 73.04, 83.24, and 81.26. Moreover, it achieves the best average performance across all categories, with an mAP of 81.74. R3Det enables more precise alignment between feature representations and the positions of predicted boxes, thereby enhancing detection accuracy. However, this method fails to fully capture the global features of objects, leading to slightly lower accuracy than our approach when detecting high-aspect-ratio targets such as bridges and ships.

LSKNet can more accurately model the differing contextual information demands of various object types by dynamically adjusting the network’s large spatial receptive field. Nevertheless, its adoption of dilated convolution can make key features sparse, leading to a 0.53% gap in mAP compared to our proposed SOBA-Net. In contrast, SOBA-Net achieves superior accuracy by extracting long-range contextual features via SCCM. Additionally, it realizes precise prediction of rotated anchor parameters via a decoupled network guided by a dynamic progressive activation mask.

Visualized detection results from selected DOTA samples are presented in Figure 7. Through decoupled parameter prediction, the proposed SOBA-Net precisely delineates the boundaries of diverse targets, thereby achieving accurate detection of densely packed small objects. For targets with arbitrary orientations (e.g., aircraft), the network accurately captures their spatial pose, enabling adaptation to rotations across all angular ranges. Additionally, SOBA-Net yields detection results that are more closely aligned with the ground-truth annotations of these objects. Even where foreground-background contrast is low (e.g., the rightmost column of images), our method successfully detects bridges and ships with vague textural details, underscoring its strong generalization capability. Notably, SOBA-Net reduces missed detections and false positives, adapts effectively to substantial variations in object scale and aspect ratio, and generates rotated bounding boxes that better conform to target contours. These findings indicate that SOBA-Net can robustly capture the spatial location and shape characteristics of objects, facilitating high-precision prediction of object orientations.

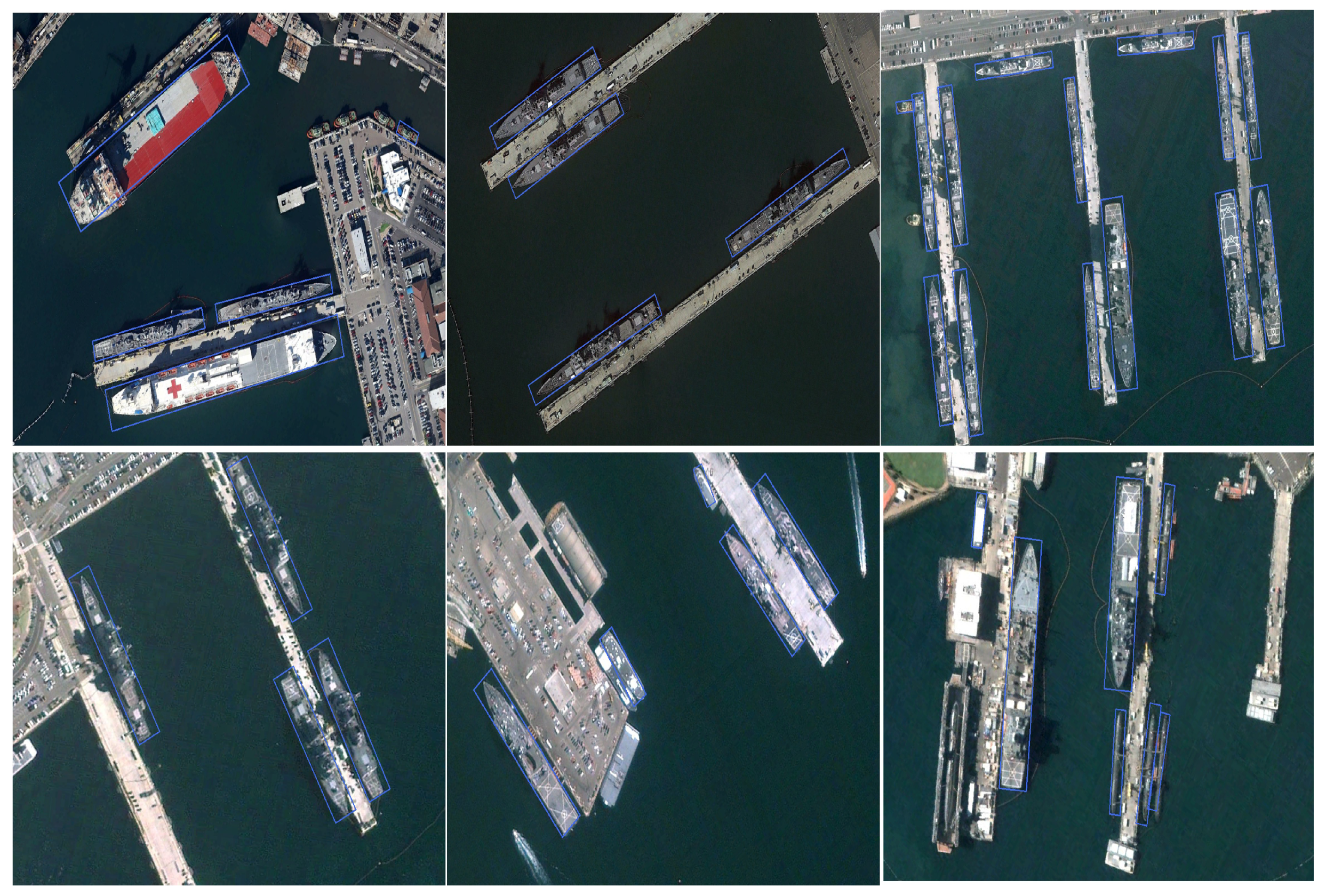

Results on HRSC2016

This dataset comprises various ship types, anchored in harbors and on the open seas, offering abundant test scenarios for validating effectiveness. Through the integration of the proposed modules, our method achieves an mAP of 91.29%, surpassing all other detectors listed in Table 7. Of particular note, our method outperforms S2ANet (90.10%), a dedicated ship detector, by 1.19 percentage points in mAP. This result underscores the efficiency of our method, which features fewer parameters and lower computational complexity while delivering better performance.

Visualized detection results, presented in Figure 8, illustrate the performance of our model on slender ships with varied angular orientations. This is especially evident in complex environments adjacent to piers or harbors, as well as in dense side-by-side berthing scenarios. Our detection head handles these cases by adopting the GDE-Loss regression training strategy with gradient fine-tuning, which substantially enhances the model’s localization accuracy for long, thin targets.

The images in the third row further validate the adaptability of our model in handling targets with vastly differing scales. In these images, the ships exhibit substantial length variations, with some differing in length by as much as 10-fold. By leveraging the SC-LA (Sample-Specific) strategy, our model comprehensively captures the key features of the ships and can adaptively recognize and precisely locate these vessels of vastly varying sizes.

Results on UCAS-AOD

For the target detection task on the UCAS-AOD dataset, our model surpasses all compared two-stage and one-stage detectors, achieving an mAP of 91.34% (Table 8). The visualized detection outcomes, presented in Figure 9 and Figure 10, further validate the detection capability of our model in handling targets with varying aspect ratios. Within this dataset, the majority of targets, including vehicles and aircraft, have aspect ratios of 1 or 1.5, yet our model still maintains precise localization for these objects. This performance is credited to the SC-LA (Sample-Specific) strategy, which dynamically captures target features through its shape-adaptive weight function, demonstrating adaptability and generalization ability for targets with different aspect ratios.

Of particular significance is the dense side-by-side aircraft parking scenario illustrated in Figure 9 and Figure 10: even when the noses of aircraft are intertwined with the fuselages of adjacent ones, our model can still localize each individual target. This stems from the SC-LA strategy’s ability to accurately capture key target information, such as the nose and tail of aircraft. Meanwhile, for vehicles and aircraft distributed in arbitrary directions, traditional detection methods often struggle to achieve effective localization due to unstable training gradients. In contrast, our GDE-Loss regression strategy provides the model with more stable and precise parameter update directions during training, which substantially enhances localization accuracy.

Analysis of Failure Cases

We identify several representative failure scenarios, with visual examples and underlying causes (Figure 11). First, for severely occluded targets (occlusion rate ≥ 50%), such as densely moored ships in harbors where adjacent hulls overlap extensively or bridges partially blocked by vegetation, the SC-LA strategy struggles to screen effective positive samples; key features (e.g., ship bows/sterns or bridge piers) are obscured by overlapping regions, leading to missed detections or imprecise bounding boxes. Second, extremely small high-aspect-ratio targets (pixel size < 30 × 3), such as slender components of power lines or narrow waterways in remote sensing images, are not fully covered by the receptive field of the SCCM module, resulting in incomplete feature extraction and unstable regression. Third, low-contrast environments (grayscale difference between target and background < 10%), e.g., ships on foggy seas or bridges against cloudy skies, lead to a low signal-to-noise ratio for features; although GDE-Loss mitigates gradient instability, it cannot fully offset the interference from background noise, causing bounding box deviation.

These failures primarily stem from the limitations of the current modules in handling extreme spatial constraints and environmental interference: the SC-LA’s key feature screening relies on partial overlap, which is ineffective under heavy occlusion; the SCCM’s multi-scale strip convolution cannot adapt to targets smaller than the minimum receptive field; and the GDE-Loss lacks adaptive adjustment to feature quality. Visualizations of these cases (Figure 11) show that occluded targets are misclassified as background, tiny targets are omitted, and low-contrast targets have bounding boxes deviating from the ground truth. Future improvements will integrate attention mechanisms to enhance feature extraction in occluded regions, optimize the SCCM’s receptive field adaptation for ultra-small targets, and fuse multi-modal features to improve robustness in low-contrast scenarios.