We evaluate HRPM–IRC on real and synthetic datasets against strong baselines, using multi-criterion metrics and rigorous statistical testing. Figures are provided as .png files for portability and are referenced directly in the text below.

6.1. Datasets

The empirical evaluation is based on an anonymized snapshot of tertiary hospitals in Beirut, Lebanon, complemented by a family of synthetic test beds calibrated to the real data. All records were de-identified prior to analysis and approved under institutional data-use and IRB guidelines (ref. MU-2025-HH01). The variables span patient attributes (age band, diagnosis group, comorbidity index, isolation flag, expected length of stay), room attributes (capacity, ward label, ventilation class), and staff structure (nursing teams covering disjoint room sets with workload weights).

Although the empirical evaluation is based on a single tertiary hospital network, the modeling framework itself is institution-agnostic. All input components (room capacities, isolation flags, ventilation classes, workload weights, and infection propensities) correspond to standard hospital information system variables that are routinely available across healthcare facilities. The optimization structure does not rely on Lebanon-specific regulatory assumptions and can be recalibrated using local data from other institutions.

For infection modeling, pairwise propensities were estimated using a logistic regression model trained on historical co-location and pathogen transmission logs. The dependent variable indicates whether a secondary infection was observed following co-location of patients i and j within the same ward block.

The feature set includes (i) temporal overlap duration (hours of shared stay), (ii) pathogen class compatibility indicators, (iii) immunosuppression or high-risk clinical flags, (iv) ward-level contact intensity metrics, and (v) shared staff coverage indicators. Continuous variables were standardized prior to fitting.

Model coefficients were estimated using maximum likelihood, with empirical Bayes shrinkage toward ward-level priors to mitigate small-sample bias in low-incidence wards. Calibration quality was evaluated using fivefold cross-validation, reporting mean AUC, Brier score, and calibration slope. The resulting predicted probabilities were clipped to a bounded interval and used as the pairwise infection propensity parameters.
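The clipping step and the Brier score used in the calibration check can be sketched as follows. This is a minimal illustration, not the authors' pipeline: the function names and the default clipping bounds (0.01 and 0.99) are assumptions introduced here, since the bounds actually used are not recoverable from the text.

```python
from typing import Sequence


def clip_probability(p: float, lo: float = 0.01, hi: float = 0.99) -> float:
    """Clip a predicted probability into [lo, hi].

    The default bounds are hypothetical placeholders, not the paper's values.
    """
    return max(lo, min(hi, p))


def brier_score(y_true: Sequence[int], y_prob: Sequence[float]) -> float:
    """Mean squared difference between predicted probability and 0/1 outcome."""
    if len(y_true) != len(y_prob) or len(y_true) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum((p - y) ** 2 for y, p in zip(y_true, y_prob)) / len(y_true)


# Example: two co-location pairs, one with an observed secondary infection.
propensities = [clip_probability(p) for p in (1.2, 0.2)]
score = brier_score([1, 0], [0.8, 0.2])
```

A lower Brier score indicates better-calibrated probabilities; clipping keeps extreme predictions away from 0 and 1 before they enter the optimization model.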

Room multipliers encode environmental risk modifiers that capture room-level infection transmission conditions. In practical hospital settings, these values can be determined from measurable infrastructure and infection-control attributes, including ventilation performance (air changes per hour), presence of negative-pressure systems, HEPA filtration availability, single versus double occupancy configuration, and isolation capability.

In the Lebanese dataset, the room multipliers were derived from facilities audit reports and ventilation classifications. Rooms were grouped into three environmental risk classes (low, medium, high) based on infection-control infrastructure. These classes were then mapped to normalized multipliers within a bounded range, preserving relative risk differences while ensuring numerical stability in optimization. Spatial or specialty mismatch costs combine geometric proximity to the required specialty unit and penalties for off-service placement; distances are computed from floor plans (Manhattan metric across ward blocks) and rescaled to a normalized range. Nurse workload weights were derived from acuity scoring (nurse-to-patient ratios per ward), z-scored within wards, and clipped to ensure numerical stability.
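The three preprocessing steps described above (class-to-multiplier mapping, Manhattan distances with rescaling, and within-ward z-scoring with clipping) can be sketched as follows. The multiplier values, the [0, 1] target range, and the clipping bound are illustrative assumptions; the original ranges are not recoverable from the text.

```python
from statistics import mean, pstdev

# Hypothetical multipliers per environmental risk class (not the paper's values).
CLASS_MULTIPLIER = {"low": 0.8, "medium": 1.0, "high": 1.2}


def manhattan(a, b):
    """Manhattan distance between two (x, y) ward-block coordinates."""
    return abs(a[0] - b[0]) + abs(a[1] - b[1])


def rescale_unit(values):
    """Min-max rescale to [0, 1]; a constant list maps to zeros."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def zscore_clip(values, bound=3.0):
    """Z-score within one ward, then clip to [-bound, bound] (bound is assumed)."""
    mu, sd = mean(values), pstdev(values)
    if sd == 0:
        return [0.0 for _ in values]
    return [max(-bound, min(bound, (v - mu) / sd)) for v in values]


# Example: distances from three rooms to a specialty unit located at (0, 0).
raw = [manhattan((0, 0), room) for room in [(1, 1), (2, 2), (3, 3)]]
scaled = rescale_unit(raw)
```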

Data cleaning removed impossible timestamps and resolved overlapping admissions using earliest-start precedence; missing covariates (<3%) were imputed using ward-conditional medians for continuous fields and the most frequent category for categorical fields. To assess generalization, we split instances into development (60%), validation (20%), and holdout evaluation (20%) sets at the admission-episode level, maintaining ward and isolation stratification. All algorithms receive only the evaluation slice during final reporting.
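A stratified 60/20/20 split at the admission-episode level could look like the sketch below. The record layout (`ward`, `iso` fields), the function name, and the rounding rule are assumptions made for illustration only.

```python
import random
from collections import defaultdict


def stratified_split(episodes, key, fractions=(0.6, 0.2, 0.2), seed=0):
    """Split episodes into (development, validation, holdout) within each stratum.

    `key` maps an episode to its stratum, e.g. (ward, isolation flag).
    Stratum keys must be sortable so the result is reproducible.
    """
    rng = random.Random(seed)
    strata = defaultdict(list)
    for ep in episodes:
        strata[key(ep)].append(ep)

    dev, val, hold = [], [], []
    for k in sorted(strata):
        group = strata[k]
        rng.shuffle(group)
        n_dev = round(fractions[0] * len(group))
        n_val = round(fractions[1] * len(group))
        dev += group[:n_dev]
        val += group[n_dev:n_dev + n_val]
        hold += group[n_dev + n_val:]  # remainder goes to the holdout set
    return dev, val, hold


# Example with 20 synthetic episodes across two wards and two isolation states.
episodes = [{"id": i, "ward": "A" if i < 10 else "B", "iso": i % 2} for i in range(20)]
dev, val, hold = stratified_split(episodes, key=lambda e: (e["ward"], e["iso"]))
```

Splitting inside each stratum keeps the ward and isolation proportions approximately equal across the three sets.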

Synthetic test beds expand the operating envelope while preserving key epidemiological and operational regularities. We vary instance scale (the numbers of patients and rooms) and modulate infection density from 5% to 30%. Pairwise risks are sampled from a ward-blocked Beta mixture calibrated to the Lebanese posterior means and variances, yielding community-like clusters (higher within-ward risk, lower between-ward risk). We induce correlation between isolation flags and high-risk edges by increasing the Beta concentration for isolation-flagged cases. Ventilation multipliers follow the empirical room-class histogram; capacities mirror the real ratio of single to double rooms. Distances are generated on a synthetic grid consistent with the ward map, then scaled to a normalized range; workload weights follow truncated normal distributions centered at ward means.
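The ward-blocked sampling of pairwise risks can be illustrated with a two-component Beta scheme. This is a simplified sketch: the Beta parameters below are hypothetical (means of roughly 0.20 within a ward and 0.05 between wards), and neither the infection-density control nor the isolation-flag correlation is modeled here.

```python
import random


def sample_pairwise_risk(wards, within=(2.0, 8.0), between=(1.0, 19.0), seed=0):
    """Sample a risk for every patient pair i < j.

    wards[i] is the ward label of patient i. Pairs in the same ward draw
    from Beta(*within); pairs in different wards draw from Beta(*between).
    Both parameter pairs are illustrative assumptions.
    """
    rng = random.Random(seed)
    risk = {}
    for i in range(len(wards)):
        for j in range(i + 1, len(wards)):
            a, b = within if wards[i] == wards[j] else between
            risk[(i, j)] = rng.betavariate(a, b)
    return risk


# Example: 30 patients in each of two wards.
wards = ["A"] * 30 + ["B"] * 30
risk = sample_pairwise_risk(wards, seed=1)
```

Using a fixed seed makes each generated instance reproducible, in line with the seeding policy described for the released generators.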

Arrival uncertainty for scenario analysis is simulated via an inhomogeneous Poisson process with time-of-day intensity fitted from the real timestamps; lengths of stay are drawn from a ward-specific log-normal distribution. To stress robustness, we add zero-mean Gaussian noise to the pairwise propensities (kept within their valid bounds) and occasionally perturb the room multipliers within class bounds to emulate ventilation outages. All random generators are seeded for reproducibility and released with the code.
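An inhomogeneous Poisson arrival stream can be simulated by thinning (the Lewis-Shedler method), with log-normal lengths of stay drawn alongside. The sketch below is an assumption-laden illustration: the sinusoidal intensity, its peak rate, and the log-normal parameters are invented for the example and are not fitted values from the paper.

```python
import math
import random


def daily_intensity(t):
    """Hypothetical time-of-day arrival rate (arrivals per hour), period 24 h."""
    return 2.0 + 1.5 * math.sin(2.0 * math.pi * (t % 24.0) / 24.0)


def simulate_arrivals(intensity, horizon_hours, lam_max, seed=0):
    """Thinning: propose events at rate lam_max, keep each with prob intensity(t)/lam_max.

    Requires intensity(t) <= lam_max for all t in [0, horizon_hours).
    """
    rng = random.Random(seed)
    t, arrivals = 0.0, []
    while True:
        t += rng.expovariate(lam_max)
        if t >= horizon_hours:
            break
        if rng.random() < intensity(t) / lam_max:
            arrivals.append(t)
    return arrivals


def sample_los(mu, sigma, rng):
    """Length of stay from a log-normal; mu and sigma would be ward-specific."""
    return rng.lognormvariate(mu, sigma)


arrivals = simulate_arrivals(daily_intensity, horizon_hours=48.0, lam_max=3.5, seed=1)
```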

Owing to patient privacy regulations and IRB restrictions, the real hospital dataset cannot be publicly shared. To ensure reproducibility despite these constraints, we provide a complete data dictionary (variable definitions, units, and ranges), documented preprocessing procedures, synthetic instance generators statistically calibrated to the empirical dataset, fixed random seeds, and parameter configuration files.

The synthetic test beds are constructed to preserve key structural characteristics observed in the real hospital (infection density, ward clustering patterns, capacity ratios, and ventilation class distributions), while systematically varying scale and epidemiological intensity. This design enables independent replication of experiments and supports external validity assessment beyond the single empirical site. The dataset summary (real and synthetic) is shown in Table 1.

6.2. Baseline Methods

We benchmark HRPM–IRC against five standard baselines under matched time budgets and identical feasibility rules (capacity, isolation, incompatibilities). All methods operate on the same solution encoding (patient-to-room vector) and minimize the aggregated normalized multi-objective function in Equation (1), ensuring consistent multi-objective evaluation across methods. The baselines and their main (pilot-tuned) settings are shown in Table 2.

The greedy construction (GRD) orders patients (e.g., isolation cases first, then in descending priority order) and assigns each to the feasible room with the minimum marginal objective increase, breaking ties by lower risk and then shorter travel, as illustrated in Algorithm 2.

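| Algorithm 2 GRD: Greedy Feasible Assignment |
| --- |
| Require: patients $P$ (ordered), rooms $R$, feasibility set $\mathcal{X}$ |
| 1: $x \leftarrow$ empty assignment |
| 2: for $i \in P$ do |
| 3: $C_i \leftarrow \{r \in R : x \cup \{i \to r\} \in \mathcal{X}\}$ |
| 4: choose $r^{*} \in \arg\min_{r \in C_i} \Delta F(i, r)$ |
| 5: assign $i \to r^{*}$ in $x$ |
| 6: end for |
| 7: return $x$ |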
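The greedy construction can be sketched in a few lines of Python. The interface below (`feasible` and `marginal_cost` callbacks, a `capacity` dictionary) is a hypothetical one chosen for illustration; returning a tuple such as `(delta, risk, travel)` from `marginal_cost` would reproduce the tie-breaking order described in the text.

```python
def greedy_assign(patients, capacity, feasible, marginal_cost):
    """Assign each patient, in the given order, to its cheapest feasible room.

    patients:      ordered list of patient ids
    capacity:      dict room -> number of beds
    feasible:      callable (patient, room, assignment) -> bool
    marginal_cost: callable (patient, room, assignment) -> sortable cost
    """
    assignment = {}
    load = {room: 0 for room in capacity}
    for i in patients:
        candidates = [
            r for r in capacity
            if load[r] < capacity[r] and feasible(i, r, assignment)
        ]
        if not candidates:
            raise ValueError(f"no feasible room for patient {i!r}")
        best = min(candidates, key=lambda r: marginal_cost(i, r, assignment))
        assignment[i] = best
        load[best] += 1
    return assignment


# Toy example: room "A" is cheaper but holds only one patient.
room_cost = {"A": 0.0, "B": 1.0}
x = greedy_assign(
    patients=[0, 1, 2],
    capacity={"A": 1, "B": 2},
    feasible=lambda i, r, a: True,
    marginal_cost=lambda i, r, a: room_cost[r],
)
```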

Simulated annealing (SA), as illustrated in Algorithm 3, starts from GRD and explores a swap/move neighborhood with geometric cooling; a candidate $x'$ is accepted with probability $\min\{1, \exp(-\Delta/\tau)\}$, where $\Delta = F(x') - F(x)$ is the change in the aggregated objective and $\tau$ is the current temperature.

The genetic algorithm (GA) maintains a population of 60 solutions and uses one-point crossover (probability $p_c$), feasible mutation (probability $p_m$), and elitism (top 2). Infeasible offspring are repaired by projecting onto the feasible set via capacity-aware reassignments that prioritize isolation, as presented in Algorithm 4.

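| Algorithm 3 SA: Swap/Move with Geometric Cooling |
| --- |
| Require: initial $x \leftarrow$ GRD; temperature $\tau \leftarrow \tau_0$, cooling rate $\alpha \in (0,1)$, minimum temperature $\tau_{\min}$; time limit $t_{\max}$ |
| 1: while elapsed_time() $< t_{\max}$ and $\tau > \tau_{\min}$ do |
| 2: $x' \leftarrow$ sample_neighbor($x$) ▹ random move or pairwise swap respecting $\mathcal{X}$ |
| 3: $\Delta \leftarrow F(x') - F(x)$ |
| 4: if $\Delta \le 0$ or rand() $< \exp(-\Delta/\tau)$ then |
| 5: $x \leftarrow x'$ |
| 6: end if |
| 7: $\tau \leftarrow \alpha\,\tau$ |
| 8: end while |
| 9: return best solution seen |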
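A generic version of the annealing loop is sketched below. The parameter names and default values (`tau0`, `alpha`, `tau_min`) are assumptions for illustration, not the pilot-tuned settings; `cost` and `sample_neighbor` stand in for the aggregated objective and the feasibility-preserving neighborhood.

```python
import math
import random
import time


def simulated_annealing(x0, cost, sample_neighbor, tau0=1.0, alpha=0.95,
                        tau_min=1e-3, time_limit=1.0, seed=0):
    """Metropolis acceptance with geometric cooling; returns (best, best_cost)."""
    rng = random.Random(seed)
    x, fx = x0, cost(x0)
    best, fbest = x, fx
    tau = tau0
    start = time.monotonic()
    while time.monotonic() - start < time_limit and tau > tau_min:
        y = sample_neighbor(x, rng)
        fy = cost(y)
        delta = fy - fx
        # Always accept improvements; accept worsening moves with prob exp(-delta/tau).
        if delta <= 0 or rng.random() < math.exp(-delta / tau):
            x, fx = y, fy
            if fx < fbest:
                best, fbest = x, fx
        tau *= alpha  # geometric cooling
    return best, fbest


# Toy example on the integers: minimize (v - 3)^2 starting from v = 10.
toy_cost = lambda v: (v - 3) ** 2
toy_neighbor = lambda v, rng: v + rng.choice([-1, 1])
best, fbest = simulated_annealing(10, toy_cost, toy_neighbor, tau0=10.0, alpha=0.99)
```

Tracking the best solution separately from the current one matters because the walk may accept worsening moves late in the run.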

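| Algorithm 4 GA: One-Point Crossover + Feasible Mutation |
| --- |
| Require: pop size $N$, crossover probability $p_c$, mutation probability $p_m$, time limit $t_{\max}$ |
| 1: initialize population $\mathrm{Pop}$ with GRD and random feasible variants |
| 2: while elapsed_time() $< t_{\max}$ do |
| 3: select parents by tournament; with prob $p_c$ apply one-point crossover |
| 4: mutate with prob $p_m$ by reassigning a random $i$ to a random feasible $r$ |
| 5: repair any infeasibility by capacity-aware reassignment (projection onto $\mathcal{X}$) |
| 6: evaluate $F$; form next generation with elitism |
| 7: end while |
| 8: return best in $\mathrm{Pop}$ |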
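Two of the GA building blocks, one-point crossover on the patient-to-room vector and a capacity-aware repair that lets isolation patients keep their rooms first, can be sketched as follows. The repair shown here handles capacity only and is a simplified assumption; the full repair in the paper also addresses isolation and incompatibility rules.

```python
import random


def one_point_crossover(a, b, rng):
    """Exchange the tails of two equal-length patient-to-room vectors."""
    cut = rng.randrange(1, len(a))  # cut point in 1 .. len(a) - 1
    return a[:cut] + b[cut:], b[:cut] + a[cut:]


def repair_capacity(x, capacity, isolation):
    """Return a copy of x in which no room exceeds its capacity.

    Isolation patients are processed first so they keep their assigned
    rooms; displaced patients go to the first room with spare beds.
    """
    load = {room: 0 for room in capacity}
    repaired = list(x)
    order = sorted(range(len(x)), key=lambda i: not isolation[i])
    displaced = []
    for i in order:
        room = repaired[i]
        if load[room] < capacity[room]:
            load[room] += 1
        else:
            displaced.append(i)
    for i in displaced:
        room = next((r for r in capacity if load[r] < capacity[r]), None)
        if room is None:
            raise ValueError("total capacity is insufficient")
        repaired[i] = room
        load[room] += 1
    return repaired


child1, child2 = one_point_crossover([1, 1, 1, 1], [2, 2, 2, 2], random.Random(0))
fixed = repair_capacity(["A", "A", "A"], {"A": 1, "B": 2}, [False, False, True])
```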

Tabu search (TS), illustrated in Algorithm 5, explores swap/insertion moves with tabu tenure 10 and aspiration by best; the neighborhood is filtered to maintain feasibility and to prioritize high-risk or overloaded wards.

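| Algorithm 5 TS: Swap/Insertion with Short-Term Memory |
| --- |
| Require: initial $x \leftarrow$ GRD; tabu list $L \leftarrow \emptyset$; tenure $= 10$; maxIter |
| 1: for $k = 1$ to maxIter do |
| 2: $\mathcal{N} \leftarrow$ feasible swap/insertion moves not in $L$ |
| 3: choose $m^{*} \in \arg\min_{m \in \mathcal{N}} F(x \oplus m)$ ▹ aspiration allows tabu if globally best |
| 4: update $L$ with reverse move; drop expired entries |
| 5: $x \leftarrow x \oplus m^{*}$; update best if improved |
| 6: end for |
| 7: return best solution seen |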
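The short-term memory mechanism can be illustrated with a fixed-length deque as the tabu list. The callback interface (`moves`, `apply_move`, `reverse`) is hypothetical and chosen to keep the sketch generic; only the tenure of 10 comes from the text.

```python
from collections import deque


def tabu_search(x0, cost, moves, apply_move, reverse, tenure=10, max_iter=100):
    """Best-improvement tabu search with aspiration by global best."""
    x, fx = x0, cost(x0)
    best, fbest = x, fx
    tabu = deque(maxlen=tenure)  # oldest entries expire automatically
    for _ in range(max_iter):
        candidates = []
        for m in moves(x):
            y = apply_move(x, m)
            fy = cost(y)
            # A tabu move is admitted only if it beats the global best.
            if m not in tabu or fy < fbest:
                candidates.append((fy, m, y))
        if not candidates:
            break
        fy, m, y = min(candidates, key=lambda c: c[0])
        tabu.append(reverse(m))  # forbid undoing this move for `tenure` steps
        x, fx = y, fy
        if fx < fbest:
            best, fbest = x, fx
    return best, fbest


# Toy example on the integers: minimize (v - 3)^2 with unit steps.
result = tabu_search(
    x0=0,
    cost=lambda v: (v - 3) ** 2,
    moves=lambda v: [1, -1],
    apply_move=lambda v, m: v + m,
    reverse=lambda m: -m,
    tenure=10,
    max_iter=20,
)
```

In the toy run the search walks past the optimum because the reverse step is tabu, which shows why the best solution must be stored separately from the current one.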

The exact MILP solves the full model in Section 3 using Gurobi Optimizer version 10.0.3 under a 300 s time cap; we report the final objective value and MIPGap. All experiments were conducted on a workstation equipped with an Intel Core i7-12700 CPU @ 2.10 GHz and 32 GB RAM, running Ubuntu 22.04 LTS. Medium instances use GRD warm starts to tighten root bounds, as illustrated in Algorithm 6.

| Algorithm 6 MILP: Exact Solving with Time/Gap Limit |
| --- |
| Require: model $\min F(x)$ s.t. constraints (2)–(14) |
| 1: set MIPGap $\leftarrow \varepsilon$; TimeLimit $\leftarrow 300$ s; warm start ← GRD |
| 2: solve with branch-and-bound + cuts; keep incumbent and bound |
| 3: return incumbent solution, best bound, and reported gap |
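For readers interpreting the reported gap, the relative MIP gap as defined in the Gurobi documentation compares the incumbent objective with the best bound. The symbols $z^{\mathrm{inc}}$ and $z^{\mathrm{bnd}}$ below are notation introduced here for illustration.

```latex
\[
  \mathrm{MIPGap}
  \;=\;
  \frac{\bigl| z^{\mathrm{bnd}} - z^{\mathrm{inc}} \bigr|}{\bigl| z^{\mathrm{inc}} \bigr|},
\]
% z^{inc}: objective value of the incumbent (best feasible) solution
% z^{bnd}: best lower bound proven by branch-and-bound (minimization)
```

A gap of zero certifies optimality; when the 300 s cap is reached first, the gap bounds how far the incumbent may be from the optimum.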

All local methods (SA, TS, GA-mutation/repair) share a feasibility-preserving neighborhood: (i) a swap of two patients across rooms; (ii) a move of one patient to another room with capacity/isolation checks; (iii) a two-swap targeting risk-gradient improvement or nurse-balance relief. Repairs always prioritize isolation feasibility, then capacity, then incompatibilities.

6.3. Performance Metrics

To evaluate the performance of HRPM–IRC and the comparative baselines, five complementary metrics are used to capture the infection control efficiency, operational cost, workload fairness, computational efficiency, and temporal stability of allocations. All metrics are reported as averages across experimental instances, and lower values generally indicate better performance.

It is important to clarify that all optimization procedures (HRPM–IRC and baselines) minimize the aggregated normalized multi-objective function defined in Equation (1). The individual objective values $R$, $T$, and $W$ reported below are presented solely for analytical interpretation of trade-offs and performance decomposition. They do not correspond to independent single-objective optimizations.

The first metric, infection risk $R$, quantifies expected cross-infection exposure and corresponds to the risk term of the aggregated objective in Equation (1).

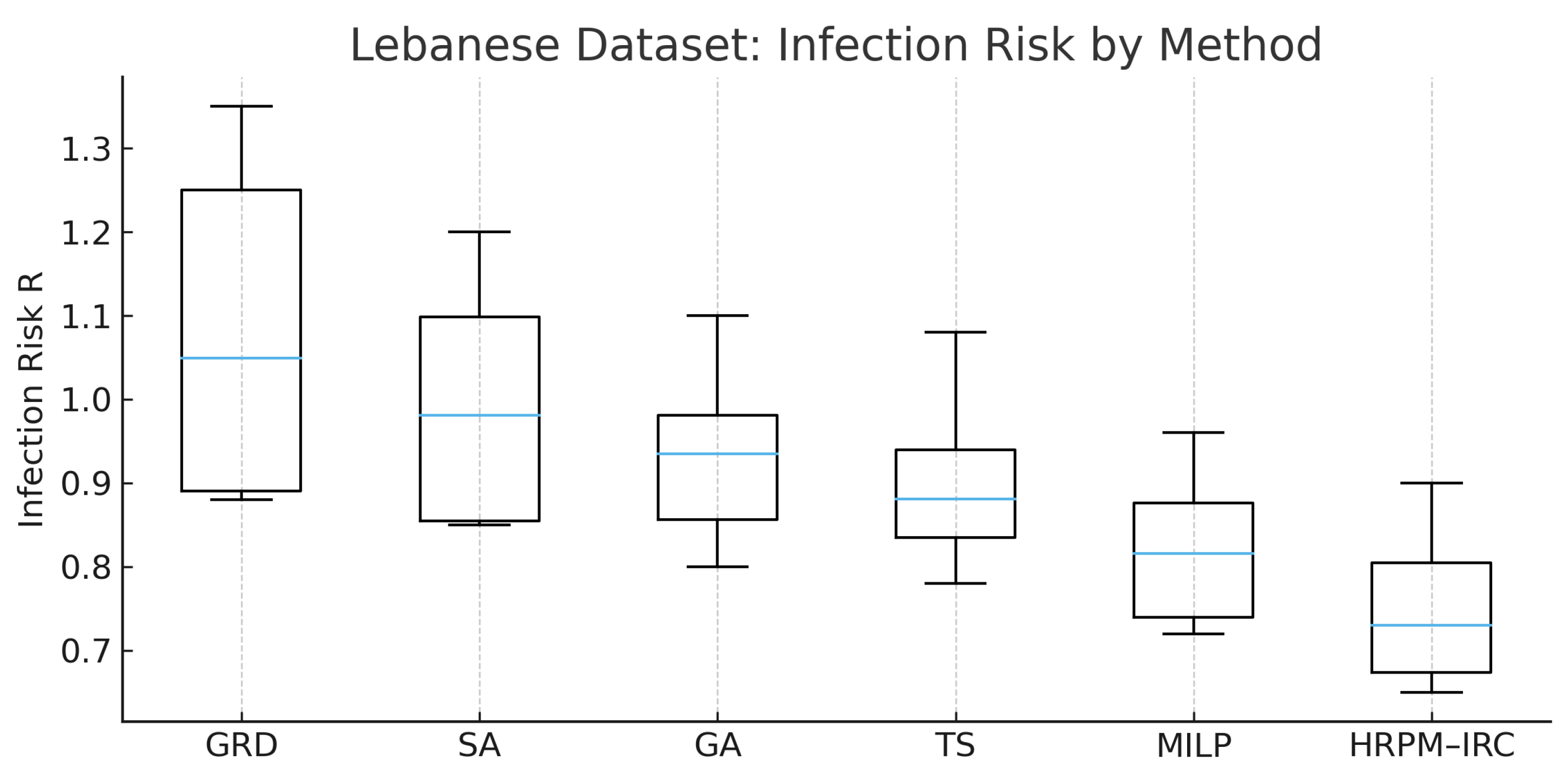

Figure 1 shows the distribution of $R$ for all methods on the Lebanese dataset; HRPM–IRC reduces the median risk notably versus GRD and GA.

Spatial/logistical efficiency is measured by the travel cost $T$, and workload fairness is tracked through the imbalance $W$; both correspond to the respective terms of the aggregated objective in Equation (1).

Runtime (seconds) indicates practical deployability; Figure 2 compares runtimes on the medium synthetic set, showing that HRPM–IRC remains within operational windows while the exact MILP is capped at its time limit.

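Temporal stability $S$ measures reallocation volatility via the normalized Hamming distance between consecutive allocations, i.e., the fraction of patients whose assigned room changes from one allocation to the next. Figure 3 (outbreak scenario) indicates faster stabilization for HRPM–IRC than for GA.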
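The normalized Hamming distance between two patient-to-room vectors is simple to compute. The sketch assumes both vectors cover the same patients in the same order; how arrivals and discharges between allocations are handled is not specified in the text.

```python
def normalized_hamming(prev, curr):
    """Fraction of positions (patients) whose room differs between two allocations."""
    if len(prev) != len(curr) or len(prev) == 0:
        raise ValueError("allocations must be non-empty and of equal length")
    return sum(a != b for a, b in zip(prev, curr)) / len(prev)


# Two of four patients change rooms between consecutive allocations.
s = normalized_hamming(["A", "B", "C", "D"], ["A", "C", "C", "A"])
```

A value of 0 means no patient was moved, while 1 means every patient was reassigned.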

For each metric, significance is evaluated using non-parametric tests (Wilcoxon, Friedman with Nemenyi), with effect sizes expressed as Cliff's $\delta$. For ablation-only ranking, we form a normalized composite score of the metrics.
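Cliff's delta compares two samples by counting how often a value from one exceeds a value from the other. A direct quadratic-time sketch, with a function name chosen here for illustration:

```python
def cliffs_delta(xs, ys):
    """Cliff's delta: (#pairs with x > y minus #pairs with x < y) / (len(xs) * len(ys)).

    Ranges from -1 (every x below every y) to +1 (every x above every y).
    """
    if not xs or not ys:
        raise ValueError("both samples must be non-empty")
    greater = sum(x > y for x in xs for y in ys)
    less = sum(x < y for x in xs for y in ys)
    return (greater - less) / (len(xs) * len(ys))


# Example: every value in the first sample is below every value in the second.
delta = cliffs_delta([1, 2, 3], [4, 5, 6])
```

For a metric where lower is better, a negative delta for one method against a baseline indicates that the method tends to produce lower values.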

6.4. Sensitivity Analysis on Objective Weights

To evaluate the robustness of the proposed multi-objective framework with respect to managerial preference variations, multiple weight configurations were systematically tested. Importantly, in all experiments, the optimization was performed using the aggregated normalized objective defined in Equation (1), ensuring simultaneous optimization of infection risk, cost, and workload fairness. All weights sum to one, preserving interpretability as relative policy priorities. Four representative regimes were tested: an infection-priority regime, a cost-priority regime, a workload-priority regime, and a balanced regime.

In addition to these representative regimes, a grid-based sensitivity sweep was conducted over clinically plausible ranges, where each weight varied between 0.2 and 0.7 (in increments of 0.1) while the remaining weights were adjusted proportionally to maintain normalization. This finer-grained exploration allowed assessment of stability beyond discrete policy scenarios.
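The proportional adjustment in the sweep can be sketched as follows. The balanced base vector and the ordering (risk, travel, workload) are assumptions made for the example; the function sets one weight to each grid value and rescales the others so that the vector still sums to one.

```python
def sweep_weight(base, index, values):
    """Set base[index] to each value in turn, rescaling the other weights proportionally."""
    others = sum(w for k, w in enumerate(base) if k != index)
    if others <= 0:
        raise ValueError("remaining weights must have positive total")
    configs = []
    for v in values:
        scale = (1.0 - v) / others
        configs.append(tuple(v if k == index else w * scale
                             for k, w in enumerate(base)))
    return configs


# Sweep the first weight from 0.2 to 0.7 in steps of 0.1, starting from a balanced base.
base = (1 / 3, 1 / 3, 1 / 3)
grid = [0.2, 0.3, 0.4, 0.5, 0.6, 0.7]
configs = sweep_weight(base, index=0, values=grid)
```

Repeating the call with `index=1` and `index=2` would cover the other two weights over the same range.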

The results demonstrate clear and consistent trade-off behavior: increasing the infection-risk weight leads to measurable reductions in infection exposure, with moderate increases in spatial cost; increasing the travel-cost weight improves logistical compactness with limited epidemiological degradation; and increasing the workload weight reduces workload imbalance without destabilizing infection-control performance. The observed response curves were monotonic and smooth, with no abrupt performance shifts across tested ranges.

These observations confirm that the proposed weighted-sum structure captures meaningful Pareto-efficient trade-offs, even though a full Pareto-front enumeration is not explicitly generated. The stability of solution patterns across regimes and across continuous weight variations indicates that the framework is robust to moderate and even substantial weight perturbations.

Furthermore, computational times remained nearly unchanged across all weight configurations, confirming that the multi-objective aggregation does not compromise real-time applicability.

Overall, this sensitivity study validates that the HRPM–IRC model does not treat objectives independently; rather, it enables policy-driven trade-off navigation within a computationally efficient optimization structure suitable for operational deployment. Hospitals can therefore adjust weights according to outbreak severity, capacity stress, or staffing constraints without risking optimization instability.