1. Introduction

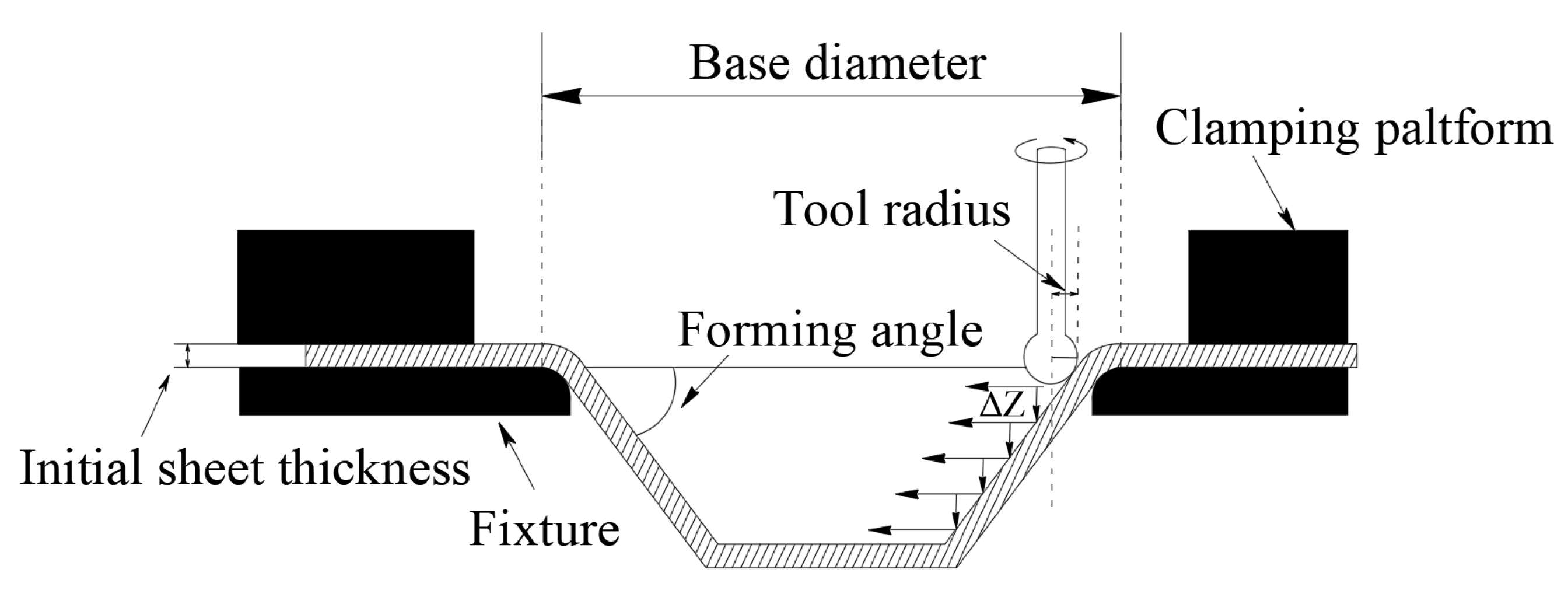

Single point incremental forming (SPIF) [1] is an eco-friendly, flexible sheet metal forming technique derived from the layer-by-layer deposition principle of additive manufacturing. This process employs a numerical control system to precisely guide the forming tool along a predefined trajectory, inducing localized plastic deformation of the sheet material point by point and layer by layer. This cumulative deformation ultimately achieves the efficient fabrication of complex curved components. As it requires no dedicated molds or only simple backing molds during forming, this technology significantly reduces tooling costs and shortens product development cycles. Consequently, it demonstrates unique advantages for manufacturing complex curved components and small-batch customized products, such as personalized medical implants. However, its engineering applications remain severely constrained by limitations including excessive geometric deviation, suboptimal surface quality, and pronounced local thinning of the sheet material [2].

To enhance the effectiveness of SPIF applications, researchers have proposed several improvement measures, including multi-stage forming [3], thermo-assisted processing [4], process variations [5], and process parameter optimization [6]. Among these, multi-stage forming reduces spring-back by applying forming processes with a hardening effect in stages, though it significantly prolongs manufacturing time. Thermally assisted SPIF also reduces spring-back, but requires higher equipment specifications and is typically only applicable to materials with poor formability at ambient temperatures. Process modifications improve forming quality by reinforcing forming regions (e.g., using counter-supports or partial dies), though this increases equipment costs. In contrast, process parameter optimization enhances forming accuracy and surface quality directly and efficiently by minimizing local thinning and spring-back through optimizing critical forming parameters such as feed rate, tool trajectory, forming angle, and initial sheet thickness. Because it requires neither additional forming stages nor additional equipment, this approach is a highly effective solution.

In practical SPIF applications, various performance indicators, such as thickness reduction, geometric deviation, texture and microstructure [7], are utilized to assess the outcome. However, geometric deviation and thickness reduction are two key indicators that directly determine part usability and structural integrity. Geometric deviation reflects the difference between the formed part and the target geometry, which is a critical quality requirement in industrial forming processes. Thickness reduction, on the other hand, is closely associated with localized necking and fracture risk, representing the primary constraint for preventing failure. The macroscopic performance of both indicators is fundamentally governed by microstructural evolution, particularly the development of deformation texture. More importantly, these two objectives are often in conflict: aggressive parameters aimed at minimizing deviation frequently trigger excessive thinning, whereas conservative settings to preserve thickness may lead to significant spring-back and poor accuracy. Therefore, these two objectives represent product quality and forming safety, respectively, and define the critical trade-off in optimizing the SPIF process.

Extensive work has been conducted on optimizing process parameters for these objectives. For instance, Habeeb et al. [8] experimentally and statistically demonstrated that increasing step depth appropriately reduces thickness reduction while enhancing forming quality. Samad et al. [9] achieved an accuracy of 92% in predicting the thickness distribution using a random forest model. Yang et al. [10] utilized deep learning models to predict spring-back and reduce geometric deviation. Najm et al. [11] achieved rapid prediction and optimization of geometric deviation through artificial neural networks (ANNs). However, multiple process parameters in SPIF interact to influence forming quality, so single-objective optimization typically compromises other performance metrics. For instance, optimizing the thinning rate to enhance material utilization may compromise the geometric accuracy of the formed part. Coordinating multiple process parameters to optimize metrics such as thinning rate and geometric deviation simultaneously constitutes a complex multi-objective optimization problem. Traditional optimization methods, such as the genetic algorithm (GA), are inefficient when addressing such multi-objective problems, as they struggle to locate global optima within reasonable timeframes. To achieve rapid convergence towards global optima, Moses et al. [12] proposed an enhanced squirrel search algorithm. This approach optimized seven SPIF process parameters, effectively reducing geometric deviations and enhancing forming accuracy. Experimental results demonstrate that truncated cones formed using the optimized SPIF process exhibit root mean square errors of 2.4 mm² for roundness deviation and 3.2 mm² for positional deviation. This highlights the importance of developing more efficient optimization algorithms for enhancing both SPIF multi-objective optimization and overall forming performance.

Multi-objective grey wolf optimization (MOGWO) [13], proposed by Mirjalili et al. in 2016, is a meta-heuristic optimization method that simulates the hunting behavior of grey wolf packs in nature. This algorithm mathematically models the social hierarchy and behaviors of wolves, such as encircling, pursuing, and attacking prey. It combines global exploration with local exploitation, has few parameters, and has a straightforward structure. Its leader-follower guided search strategy helps prevent premature convergence, enhances convergence speed, and strengthens the algorithm’s directionality towards finding optimal solutions, thereby accelerating the discovery of the global optimum [14]. Existing research confirms that MOGWO significantly outperforms traditional algorithms such as NSGA-II and multi-objective particle swarm optimization in terms of convergence and solution set distribution quality, demonstrating particular advantages in complex optimization problems [15]. Although MOGWO has been extensively applied in various fields, including energy system optimization [16], logistics route optimization [17], and parameter tuning [18], its utilization in SPIF parameter optimization is scarcely documented. Consequently, applying MOGWO to SPIF parameter optimization is a promising avenue for further research.

The application of MOGWO for SPIF parameter optimization requires a suitable objective function [19]. Due to its robust nonlinear modeling and multivariable coupling capabilities, the neural network has become a common choice for constructing SPIF parameter optimization objective functions [20]. Unlike traditional statistical methods, neural networks do not rely on low-order polynomial assumptions, significantly enhancing prediction accuracy and model generalization performance. For instance, Ajay et al. [21] achieved a correlation coefficient of 0.99992 by using an ANN to optimize wall angles and surface roughness for Ti-Grade 5 material. Kumar et al. [22] proposed a hybrid artificial neural network to estimate the maximum forming load for AA7075-O sheet, attaining a prediction accuracy of 99.80%. Regarding multi-objective co-optimization, Xiao et al. [23] proposed an AA5052 SPIF forming optimization method based on a back propagation neural network and GA, achieving both maximized forming angle and minimized thinning rate while obtaining a Pareto optimal solution. Taherkhani et al. [24] proposed a synergistic optimization method for SPIF geometric accuracy and surface quality based on the group method of data handling and GA. It simultaneously enhances geometric accuracy and surface quality, achieving a minimum geometric deviation of 0.79 mm and a surface roughness of 0.57 mm. Consequently, developing a neural network surrogate model for SPIF multi-objective optimization can enhance optimization accuracy.

Multi-objective parameter optimization problems are characterized by strong parameter coupling, narrow feasible regions, and conflicting quality objectives, which make conventional multi-objective metaheuristic algorithms prone to premature convergence and poorly distributed Pareto fronts. Existing studies often focus on improving a single aspect of the optimization process, such as population diversity, convergence speed, or leader update strategies [25,26,27,28]. In particular, dynamic ensemble-learning models have incorporated GWO variants for complex risk assessment and high-level predictive modeling [25], primarily for structural reliability analysis and dynamical weighting optimization. Nevertheless, in complex practical problems such as SPIF parameter optimization, the search space is continuous, highly coupled, and severely constrained. Under these conditions, existing variants of metaheuristic algorithms often struggle to maintain solution diversity within narrow feasible regions and fail to simultaneously ensure effective global exploration capability, convergence stability, and a well-distributed Pareto front.

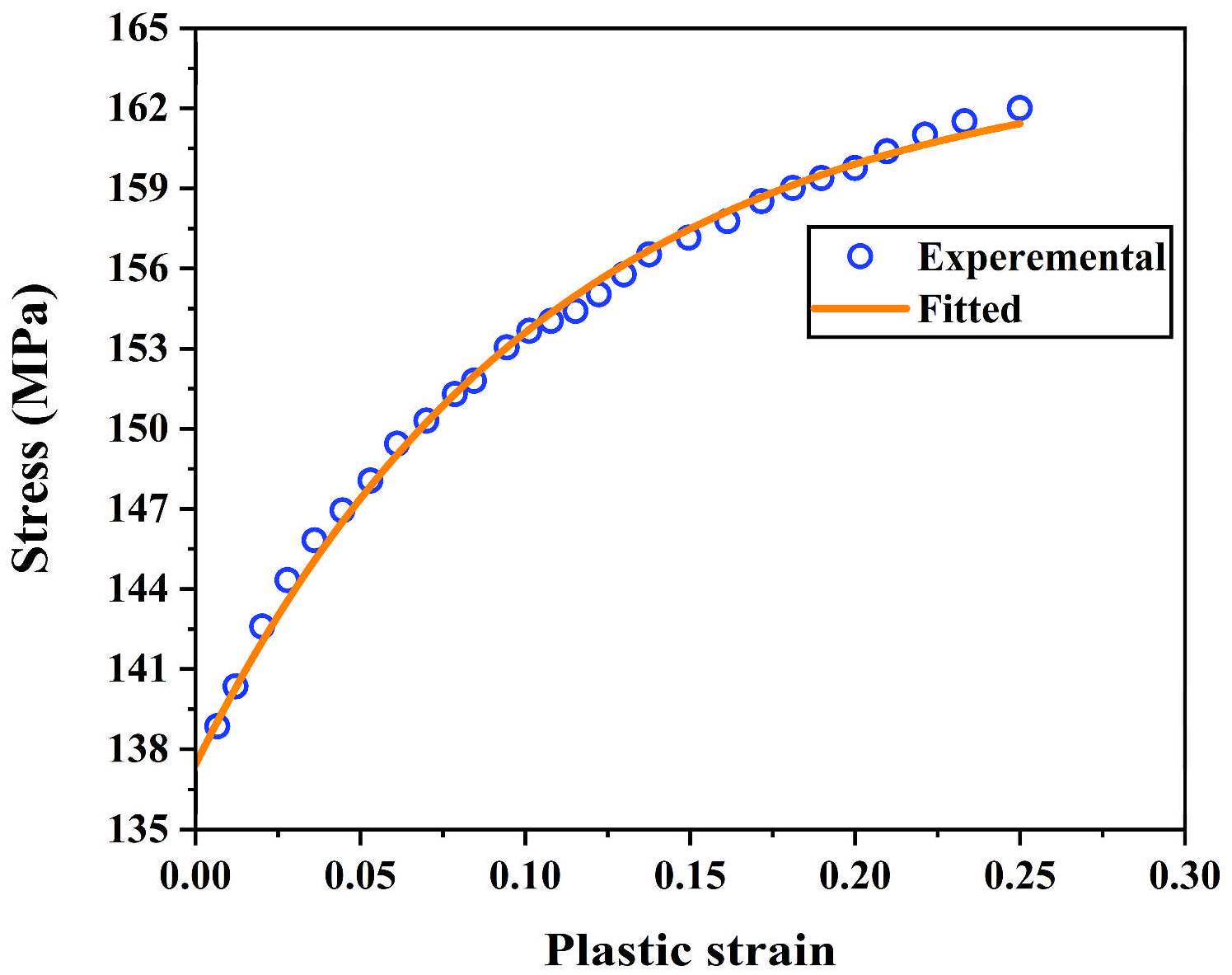

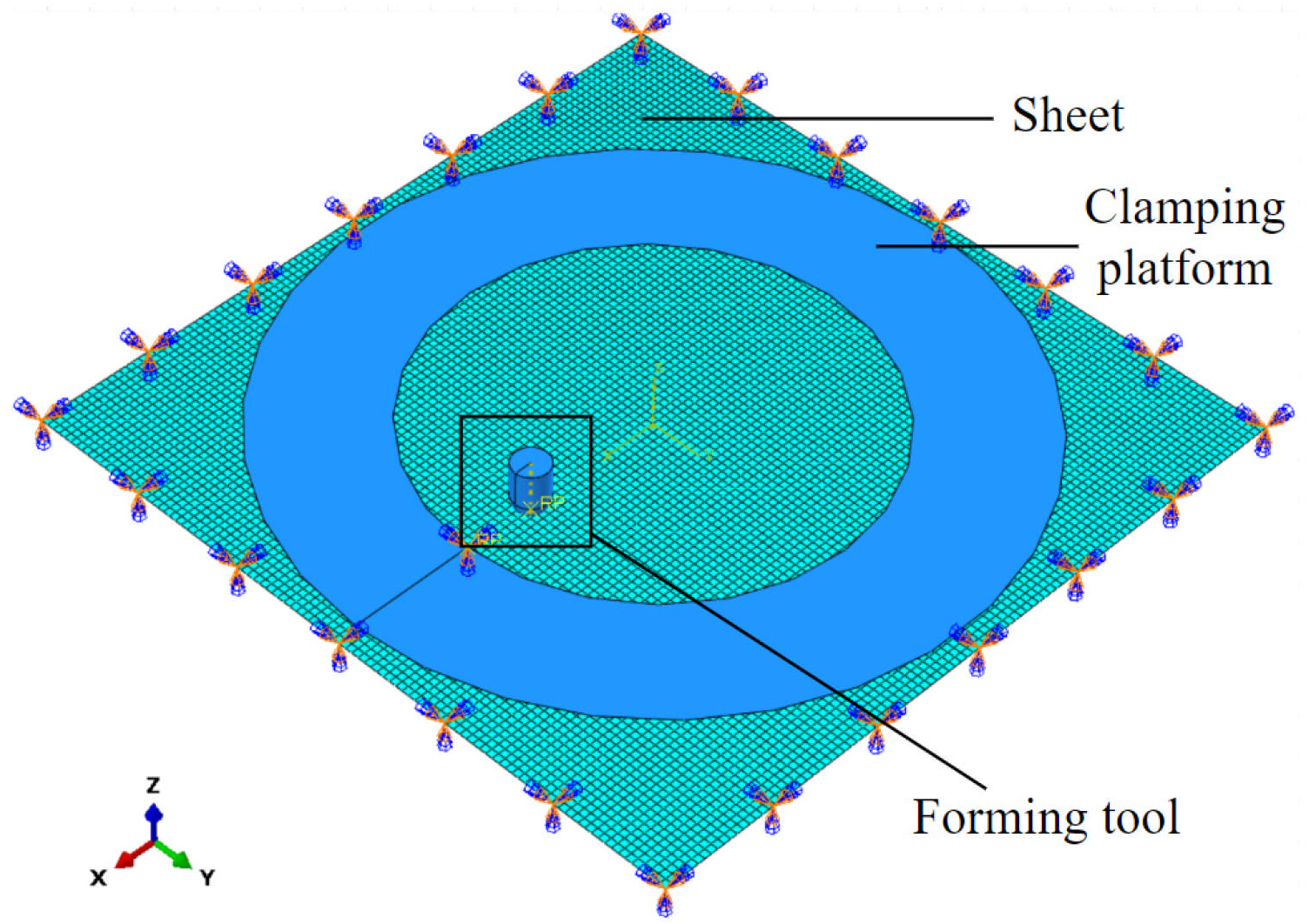

To achieve synergistic optimization of the SPIF thinning rate and geometric deviation, this paper proposes a dual-objective optimization method combining a multilayer perceptron (MLP) and improved multi-objective grey wolf optimization (IMOGWO). First, a sample dataset was constructed using finite element simulations, and an MLP neural network was established and trained to create a nonlinear mapping model of thinning rate and geometric deviation with respect to forming process parameters. Subsequently, an IMOGWO variant was proposed to enhance the diversity of algorithmic initialization and the exchange of information within grey wolf populations, thereby improving convergence efficiency and global optimization capability. This was achieved through chaotic mapping initialization, an improved convergence coefficient updating mechanism, and a cooperative learning mechanism. Finally, the Pareto optimal solution set was screened for optimal process parameters using the entropy-weighted TOPSIS method. The finite element simulation and experimental results of the truncated cones made from Al 1060 sheet metal are consistent, thus verifying the effectiveness of the proposed IMOGWO method.

4. SPIF Multi-Objective Optimization Based on IMOGWO

The GWO models the parameter optimization process as the hunting behavior of grey wolves [33]. In this algorithm, each grey wolf represents a potential solution to the parameter optimization problem. The three wolves with the highest fitness are designated as the Alpha, Beta, and Delta wolves, forming the pack of head wolves. The head wolves then guide the pack’s search behavior, steering it towards the optimal solution.

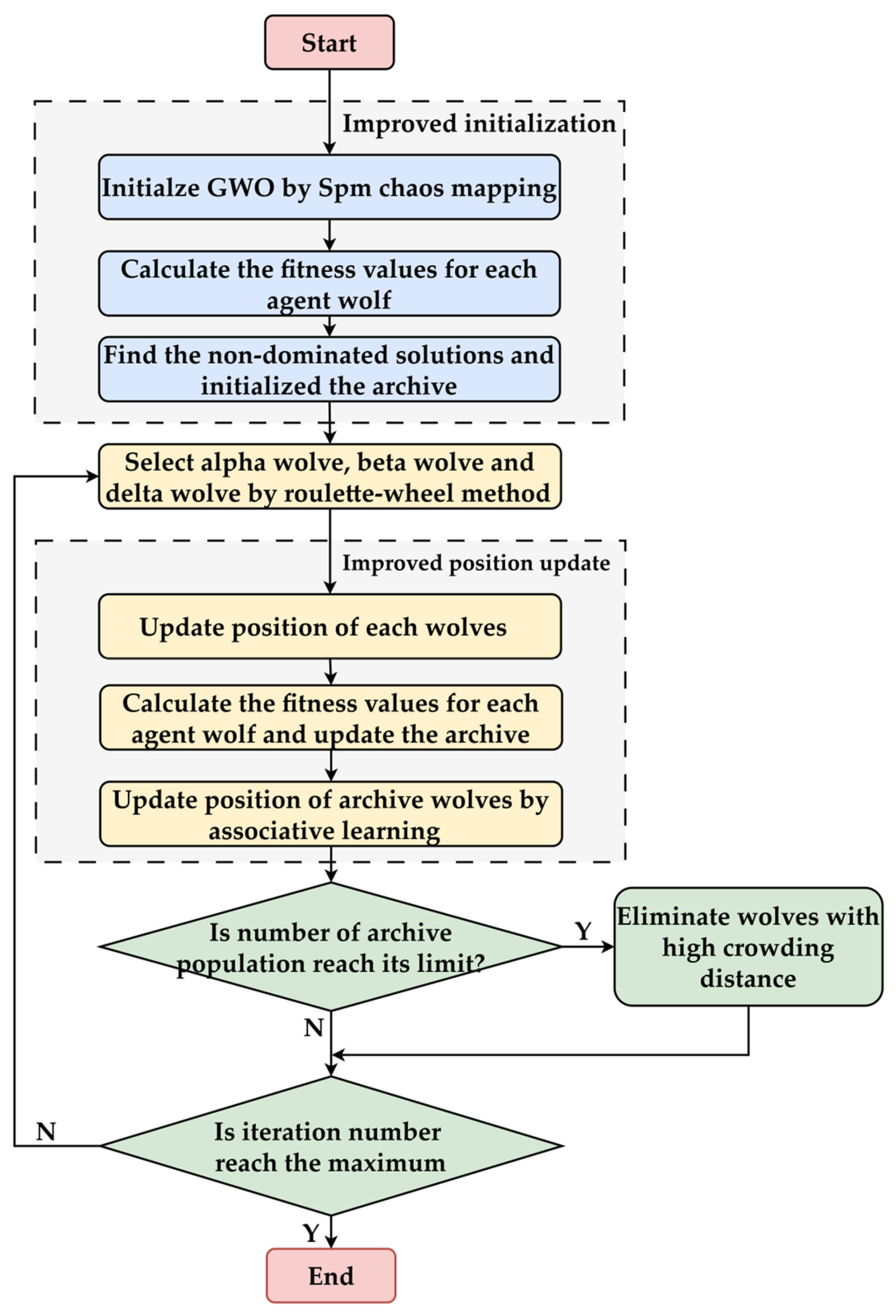

To apply GWO to multi-objective optimization problems, Mirjalili et al. [13] introduced the Pareto front to record optimal solutions and employed a roulette wheel selection method for selecting head wolves, thereby constructing the MOGWO algorithm. However, traditional MOGWO still suffers from issues such as getting stuck in local optima and slow convergence. Therefore, an IMOGWO algorithm is presented and investigated to achieve the co-optimization of the SPIF thinning rate and geometric deviation, using the MLP model established in Section 3 as the cost function. By incorporating the Spm chaotic initialization, an improved convergence coefficient, and an enhanced wolf pack associative learning mechanism, MOGWO was refined to address its susceptibility to local optima and slow convergence. This improves the accuracy and speed of the SPIF parameter optimization process [34]. Unlike existing MOGWO variants that enhance a single optimization aspect, the proposed IMOGWO adopts a coordinated and stage-aware design to address the coupled, constrained, and conflicting nature of SPIF multi-objective optimization.

4.1. Spm Chaos Mapping Initialization

Traditional MOGWO typically employs random population initialization methods, which can readily lead to uneven initial population distributions, causing the algorithm to converge to local optima. To address this, this paper introduces Spm chaotic mapping initialization [35], which enables the initial distribution of the wolf pack to exhibit superior exploration and randomness. This enhances the algorithm’s ability to explore unknown spaces and improves its global performance. Concurrently, Spm chaotic mapping initialization positions the initial population closer to optimal solutions, accelerating the convergence of the IMOGWO algorithm.

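In the SPIF parameter optimization problem, the continuous parameter variables can be initialized using the Spm chaotic mapping:

$$X_i = X_{\mathrm{lb}} + s_i \left( X_{\mathrm{ub}} - X_{\mathrm{lb}} \right)$$

where $X_i$ is the initialized parameters for the $i$-th grey wolf within the IMOGWO, and $X_{\mathrm{lb}}$ and $X_{\mathrm{ub}}$ represent the lower and upper bounds, respectively, for the values of the parameters to be optimized. The Spm chaos mapping initialization factor, $s_i$, can be given as follows:

$$s_{i+1} = \begin{cases} \operatorname{mod}\left( \dfrac{s_i}{\eta} + \mu \sin(\pi s_i) + r,\ 1 \right), & 0 \le s_i < \eta \\[2ex] \operatorname{mod}\left( \dfrac{s_i/\eta}{0.5 - \eta} + \mu \sin(\pi s_i) + r,\ 1 \right), & \eta \le s_i < 0.5 \\[2ex] \operatorname{mod}\left( \dfrac{(1 - s_i)/\eta}{0.5 - \eta} + \mu \sin\left(\pi (1 - s_i)\right) + r,\ 1 \right), & 0.5 \le s_i < 1 - \eta \\[2ex] \operatorname{mod}\left( \dfrac{1 - s_i}{\eta} + \mu \sin\left(\pi (1 - s_i)\right) + r,\ 1 \right), & 1 - \eta \le s_i < 1 \end{cases}$$

where $r$ is a random number uniformly distributed within the interval (0, 1), which serves as the initial seed for the chaotic iterations. $\operatorname{mod}$ is the modulo operator, ensuring the generated values remain within the predetermined range. $\eta$ and $\mu$ are coefficients controlling the dynamic properties of the mapping, with values between 0 and 1. Here, $\eta$ = 0.4 and $\mu$ = 0.3 are used to achieve a relatively uniform and random initial distribution [36].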
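As a rough illustration of the initialization described above, the following Python sketch generates chaotic factors with a piecewise Spm-type map and scales them into the parameter bounds. The map form is the one commonly reported for the Spm map and is an assumption here, as are the function names `spm_sequence` and `init_population`, the redrawing of the random term at every step, and the example bounds. Only the coefficients 0.4 and 0.3 are taken from the text.

```python
import math
import random


def spm_sequence(n, eta=0.4, mu=0.3, seed=None):
    """Return n chaotic factors in [0, 1) from an Spm-type piecewise map.

    The piecewise form is an assumed, commonly reported variant; it is not
    confirmed to be the exact expression used in this paper.
    """
    rng = random.Random(seed)
    x = rng.random()  # initial state of the chaotic iteration
    factors = []
    for _ in range(n):
        r = rng.random()  # random term, redrawn each step (assumption)
        if x < eta:
            y = x / eta + mu * math.sin(math.pi * x) + r
        elif x < 0.5:
            y = (x / eta) / (0.5 - eta) + mu * math.sin(math.pi * x) + r
        elif x < 1.0 - eta:
            y = ((1.0 - x) / eta) / (0.5 - eta) + mu * math.sin(math.pi * (1.0 - x)) + r
        else:
            y = (1.0 - x) / eta + mu * math.sin(math.pi * (1.0 - x)) + r
        x = y % 1.0  # modulo keeps the factor inside [0, 1)
        factors.append(x)
    return factors


def init_population(n_wolves, lower, upper, seed=None):
    """Scale chaotic factors into [lower, upper] for every wolf and parameter."""
    dim = len(lower)
    factors = spm_sequence(n_wolves * dim, seed=seed)
    return [
        [lower[d] + factors[i * dim + d] * (upper[d] - lower[d]) for d in range(dim)]
        for i in range(n_wolves)
    ]


# Hypothetical bounds for two continuous parameters (illustrative values only).
population = init_population(500, lower=[4.0, 0.5], upper=[8.0, 1.5], seed=1)
```

Fixing `seed` makes the initial population reproducible, which is convenient when comparing algorithm variants over repeated runs.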

When the number of grey wolf agents in IMOGWO reaches 500, the Spm chaotic mapping initialization factors are shown in Figure 8. As can be seen from the figure, the population initialization of the Spm chaotic mapping exhibits good uniformity, effectively enhancing the ability to explore unknown spaces.

4.2. Improved Convergence Coefficient

In the MOGWO algorithm, the process of finding the optimal solution to a problem is analogous to a pack of grey wolves searching for or pursuing prey. During the iterations of the algorithm, the behavior of the wolves is influenced by the head wolves, as shown in Equation (8):

$$X_i(k+1) = \frac{X_1 + X_2 + X_3}{3} \tag{8}$$

where $X_i(k+1)$ denotes the potential parameter solution for the $i$-th grey wolf after the $(k+1)$-th iteration. $X_1$, $X_2$ and $X_3$ represent the updated parameter solutions for the $i$-th grey wolf, influenced by the head wolves $X_\alpha$, $X_\beta$ and $X_\delta$, respectively, which can be computed using Equation (9).

$$\begin{aligned} X_1 &= X_\alpha(k) - A_1 \left| C_1 X_\alpha(k) - X_i(k) \right| \\ X_2 &= X_\beta(k) - A_2 \left| C_2 X_\beta(k) - X_i(k) \right| \\ X_3 &= X_\delta(k) - A_3 \left| C_3 X_\delta(k) - X_i(k) \right| \\ A_j &= 2 a(k)\, r_1 - a(k), \qquad C_j = 2 r_2, \qquad j = 1, 2, 3 \end{aligned} \tag{9}$$

where $X_\alpha(k)$, $X_\beta(k)$ and $X_\delta(k)$ represent the potential parameter solutions indicated by the head wolves after the $k$-th iteration in the IMOGWO. $r_1$ and $r_2$ are random numbers following a uniform distribution within the interval (0, 1). $a(k)$ is the convergence coefficient for the $k$-th iteration in the algorithm. It decreases linearly with the iteration number during the search for the optimal solution, controlling the wolves’ actions by influencing $A_j$. When $|A_j| > 1$, the wolves perform a global search, whereas when $|A_j| < 1$, they deploy a coordinated pursuit and converge toward the optimal solution.
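The leader-guided update described above can be sketched in a few lines of Python. This is a minimal sketch of the standard GWO position update for a single wolf, not the authors' implementation. The function name `gwo_update` and its argument layout are hypothetical, and bound handling is omitted.

```python
import random


def gwo_update(position, leaders, a, rng=random):
    """Standard GWO update of one wolf, guided by the three head wolves.

    position: current parameter vector of the wolf (list of floats)
    leaders:  [alpha, beta, delta] parameter vectors
    a:        convergence coefficient for the current iteration
    """
    new_position = []
    for d in range(len(position)):
        candidates = []
        for leader in leaders:
            r1, r2 = rng.random(), rng.random()
            big_a = 2.0 * a * r1 - a  # lies in [-a, a]; |A| > 1 favours exploration
            big_c = 2.0 * r2          # lies in [0, 2]; random weight on the leader
            distance = abs(big_c * leader[d] - position[d])
            candidates.append(leader[d] - big_a * distance)
        # The new coordinate is the mean of the three leader-guided candidates.
        new_position.append(sum(candidates) / len(candidates))
    return new_position


# Hypothetical two-parameter example; with a = 0 the wolf moves to the leaders' mean.
alpha, beta, delta = [1.0, 1.0], [2.0, 2.0], [3.0, 3.0]
moved = gwo_update([0.0, 0.0], [alpha, beta, delta], a=0.0)
```

With a large coefficient the candidates can overshoot the leaders, which corresponds to the global search phase. As the coefficient approaches zero the wolf contracts onto the mean of the head wolves.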

To enhance the global search capability of the IMOGWO, the traditional linear descent was replaced with a cosine iteration scheme that constructs the convergence coefficient [37], as shown in Equation (10), which is parameterized by the maximum number of iterations. The comparison of the convergence coefficient before and after improvement over the number of iterations is shown in Figure 9. It can be observed that the improved convergence coefficient decreases slowly during the initial iteration phase and then declines rapidly as the number of iterations increases. Therefore, based on the improved convergence coefficient, IMOGWO maintains global search behavior in the early stages, thereby enhancing the algorithm’s capability for global optimization.
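To make the qualitative difference between the two schedules concrete, the sketch below compares the linear descent with one possible cosine schedule. The quarter-period cosine used here is an assumption chosen only because it reproduces the described behavior (slow decay at first, rapid decay later). It is not necessarily the expression in Equation (10), and the function names and the initial value of 2 are likewise illustrative.

```python
import math


def linear_coefficient(k, k_max, a0=2.0):
    """Conventional GWO schedule: decreases linearly from a0 to 0."""
    return a0 * (1.0 - k / k_max)


def cosine_coefficient(k, k_max, a0=2.0):
    """Assumed cosine schedule: flat near k = 0, steep near k = k_max."""
    return a0 * math.cos(math.pi * k / (2.0 * k_max))


# Side-by-side values at a few iterations for a 100-iteration run.
for k in (0, 25, 50, 75, 100):
    print(k, round(linear_coefficient(k, 100), 3), round(cosine_coefficient(k, 100), 3))
```

Under this assumed form the cosine coefficient stays above the linear one throughout the run, so the condition for global search holds for a larger share of the iterations.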

4.3. Associative Learning-Based Update Mechanism

In the process of seeking optimal solutions using the traditional MOGWO algorithm, each iterative update is solely influenced by the head wolves. Consequently, the algorithm may fail if the head wolves are trapped in a local optimum. To enhance the global search capability of the algorithm, an associative learning mechanism [26] was introduced to the IMOGWO. The grey wolves on the Pareto front, excluding the alpha wolf, are updated using Equation (11). This update combines a bounded random perturbation, whose magnitude is adaptively limited by the distances from the current solution to the lower and upper bounds; two random numbers uniformly distributed within the interval (0, 1); and the parameters of a random grey wolf from the current Pareto front, excluding the alpha wolf. Equation (11) shows that the update process based on the associative learning mechanism is influenced by other grey wolves in the Pareto front, as well as the alpha wolf. This indicates associative learning behavior among grey wolves. The two convergence factors that weight these influences can be calculated using Equation (12).

As can be seen from Equation (12), as the iteration number, $k$, increases, the factor weighting the random Pareto-front solution decreases while the factor weighting the alpha wolf increases. Consequently, the update process of the grey wolf becomes less influenced by random solutions within the Pareto front and more influenced by the alpha wolf. During the initial phase of the algorithm, therefore, the wolves within the Pareto front tend to interact with each other, preventing the algorithm from becoming trapped in local optima. In the later phase, the process converges toward the alpha wolf, ensuring the convergence accuracy of the algorithm.

Figure 10 illustrates the flowchart of the proposed IMOGWO, which incorporates the Spm chaotic mapping initialization, an improved convergence coefficient, and an associative learning mechanism.

4.4. Modeling and Analysis of the IMOGWO

The aim of this paper is to minimize the maximum thinning rate and the maximum geometric deviation during forming. The design variables are the tool radius, tool path strategy, initial sheet thickness, forming angle, and step depth, all of which must remain within specified ranges. According to Table 1 and the established MLP model for predicting the SPIF thinning rate and geometric deviation, the mathematical optimization model for the IMOGWO can be formulated as Equation (13), where $\mathbb{R}$ is the set of real numbers.

The wolf population size, maximum iterations, and Pareto archive capacity for IMOGWO were all set to 100. A key merit of the proposed IMOGWO lies in its minimal dependence on hyperparameter tuning. By restricting primary parameters to population size and iterations, the algorithm mitigates the performance fluctuations frequently observed in more parameter-intensive metaheuristics.

The comparison of the convergence processes of IMOGWO and MOGWO is illustrated in Figure 11. As can be seen, IMOGWO converged at the 45th iteration, whereas MOGWO required 80 iterations, which demonstrates the superior convergence speed of IMOGWO. Upon convergence, IMOGWO maintained a maximum thinning rate of approximately 19.3% and a maximum geometric deviation of around 2.065 mm. This demonstrates that the proposed IMOGWO significantly reduces sheet thinning while preserving high forming performance.

Figure 12 shows the obtained Pareto front, which includes the 47 identified optimal parameter solutions (indicated by red dots). The target values of the Pareto optimal solutions are tabulated in Table 5.
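For readers unfamiliar with how such a front is extracted, the following sketch shows the usual dominance test for two minimization objectives. It is a generic illustration rather than the archive update used in IMOGWO. The helper names are hypothetical, and apart from the first point, which echoes the approximate converged values reported above, the sample points are invented for demonstration.

```python
def dominates(u, v):
    """True if u is no worse than v in every objective and strictly better in one."""
    return all(a <= b for a, b in zip(u, v)) and any(a < b for a, b in zip(u, v))


def pareto_front(points):
    """Keep only the points that no other point dominates (minimization)."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


# (maximum thinning rate in %, maximum geometric deviation in mm)
candidates = [(19.3, 2.065), (18.0, 2.5), (20.0, 2.2), (21.0, 2.0)]
front = pareto_front(candidates)  # (20.0, 2.2) is dominated by (19.3, 2.065)
```

Each retained point improves one objective only at the expense of the other, which is why a separate decision step is needed to pick a single parameter set.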

To validate its effectiveness, the proposed IMOGWO was evaluated against MOGWO, NSGA-II, and the dynamical ensemble machine-learning-based MOGWO (DEML-MOGWO) [25]. To ensure a fair comparison, all algorithms employed the same population size (100) and maximum number of iterations (100). NSGA-II used a crossover probability of 0.9 and a mutation probability of 0.1. MOGWO and IMOGWO followed the standard parameter settings suggested in the original literature.

The performance of the various multi-objective optimization algorithms was evaluated quantitatively using the hypervolume (HV) and spacing metrics. HV measures the volume of the objective space dominated by the obtained Pareto front with respect to a reference point, defined here as 1.1 times the maximum objective values found in the front; it reflects both convergence and solution diversity, and larger values are better. Spacing evaluates the uniformity of the distribution of solutions along the Pareto front, and smaller values indicate a more even distribution.
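The two metrics can be sketched for the bi-objective minimization case as follows. The hypervolume routine is the standard sweep over a front sorted by the first objective, and the spacing routine follows Schott's common definition based on nearest-neighbor Manhattan distances. Whether the paper uses exactly these variants is an assumption, and the function names and sample front are illustrative.

```python
import math


def reference_point(front, scale=1.1):
    """Reference point at `scale` times the worst value of each objective."""
    return tuple(scale * max(p[m] for p in front) for m in range(2))


def hypervolume_2d(front, ref):
    """Area dominated by a two-objective minimization front, bounded by ref."""
    area = 0.0
    prev_f2 = ref[1]
    for f1, f2 in sorted(front):  # ascending in the first objective
        if f2 < prev_f2:          # points that add no new area are skipped
            area += (ref[0] - f1) * (prev_f2 - f2)
            prev_f2 = f2
    return area


def spacing(front):
    """Schott's spacing: spread of nearest-neighbor distances (0 = perfectly even)."""
    n = len(front)
    nearest = [
        min(
            sum(abs(a - b) for a, b in zip(front[i], front[j]))
            for j in range(n) if j != i
        )
        for i in range(n)
    ]
    mean = sum(nearest) / n
    return math.sqrt(sum((mean - d) ** 2 for d in nearest) / (n - 1))


# Invented, evenly spaced front used only to exercise the functions.
front = [(1.0, 3.0), (2.0, 2.0), (3.0, 1.0)]
hv = hypervolume_2d(front, reference_point(front))
sp = spacing(front)
```

Because the reference point is derived from each front's own maxima, HV values are only comparable across algorithms when the objectives share the same scaling, as they do when all algorithms optimize the same surrogate model.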

Table 6 summarizes the mean and standard deviation of the HV and spacing metrics, calculated based on the final Pareto fronts from 30 independent runs. The proposed IMOGWO exhibited superior performance. It achieved the highest mean HV (7.50 × 10⁻³), surpassing NSGA-II (7.20 × 10⁻³), DEML-MOGWO (7.23 × 10⁻³) and the original MOGWO (7.02 × 10⁻³). Furthermore, the mean spacing of IMOGWO (0.19 × 10⁻³) is notably lower than that of MOGWO (0.50 × 10⁻³) and NSGA-II (0.55 × 10⁻³). This substantial reduction in spacing indicates that the non-dominated solutions obtained by IMOGWO are much more uniformly distributed along the Pareto front. Meanwhile, the spacing values for IMOGWO (0.19 × 10⁻³) and DEML-MOGWO (0.18 × 10⁻³) were found to be highly comparable. This suggests that both frameworks have an equivalent capability to maintain a highly uniform distribution along the Pareto front. However, the fact that IMOGWO achieves this level of uniformity through its refined search mechanism, without the additional computational complexity of a dynamic ensemble surrogate, further highlights the efficiency and structural robustness of the proposed IMOGWO. These results indicate that IMOGWO provides superior convergence quality and a more uniform Pareto front distribution than MOGWO and NSGA-II, with uniformity on par with DEML-MOGWO.

4.5. Optimal Solution Selection Based on Entropy-Weighted TOPSIS

Given the conflicting and constrained nature of the two optimization objectives of maximum thinning rate and maximum geometric deviation, the solutions on the Pareto optimal front are non-dominated trade-offs that cannot be compared directly. Consequently, the entropy-weighted TOPSIS method was employed to evaluate and rank the 47 identified Pareto optimal solutions. TOPSIS is one of the most widely employed multi-criteria decision-making approaches [38], ranking solutions based on their proximity to positive and negative ideal solutions. The optimal solution is the one that is closest to the positive ideal solution and furthest from the negative ideal solution. The entropy-weighted TOPSIS decision method improves decision accuracy by calculating the information entropy of different attributes to determine the weighting of process parameter evaluation indicators.

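The IMOGWO yields a Pareto front comprising two cost-type indicators, the maximum SPIF thinning rate and the maximum geometric deviation, for both of which lower values are preferable. Furthermore, given the differing magnitudes and units of these attributes, normalization was required to prevent computational errors and distortion of the results. Consequently, a standardized decision matrix, $Q$, can be constructed and expressed as Equation (14).

$$Q = \begin{bmatrix} q_{11} & q_{12} & \cdots & q_{1n} \\ q_{21} & q_{22} & \cdots & q_{2n} \\ \vdots & \vdots & \ddots & \vdots \\ q_{m1} & q_{m2} & \cdots & q_{mn} \end{bmatrix} \tag{14}$$

where $m$ and $n$ represent the number of the Pareto optimal solutions and cost-type indicators (optimization objectives) within the Pareto front, respectively. $q_{ij}$ indicates the $j$-th indicator of the $i$-th solution on the Pareto optimal front, with $i = 1, 2, \ldots, m$ and $j = 1, 2, \ldots, n$.

The entropy value of the $j$-th indicator, $E_j$, can be calculated as follows:

$$E_j = -\frac{1}{\ln m} \sum_{i=1}^{m} p_{ij} \ln p_{ij} \tag{15}$$

where $p_{ij}$ represents the normalized weight of the $j$-th indicator under the $i$-th solution, which is calculated as the ratio of its value to the sum of that indicator's values over all solutions:

$$p_{ij} = \frac{q_{ij}}{\sum_{l=1}^{m} q_{lj}} \tag{16}$$

The entropy weight for the $j$-th indicator can be calculated as follows:

$$w_j = \frac{1 - E_j}{\sum_{l=1}^{n} \left( 1 - E_l \right)} \tag{17}$$

Combining Equations (14) and (17), the weighted normalized decision matrix, $V$, can be given as:

$$V = \left[ v_{ij} \right]_{m \times n} = \left[ w_j q_{ij} \right]_{m \times n} \tag{18}$$

Considering that both the geometric deviation and the thinning rate should be minimized in SPIF processing, the positive and negative ideal solutions $V^{+}$ and $V^{-}$ can be expressed as:

$$V^{+} = \left\{ v_1^{+}, \ldots, v_n^{+} \right\} = \left\{ \min_{i} v_{ij} \right\}, \qquad V^{-} = \left\{ v_1^{-}, \ldots, v_n^{-} \right\} = \left\{ \max_{i} v_{ij} \right\} \tag{19}$$

where $i = 1, 2, \ldots, m$, $j = 1, 2, \ldots, n$.

Combining Equations (18) and (19), the Euclidean distances $D_i^{+}$ and $D_i^{-}$ of the $i$-th solution to the positive and negative ideal solutions, respectively, along with the resulting relative closeness, $C_i$, of the $i$-th solution to the positive ideal solution can be calculated as follows:

$$D_i^{+} = \sqrt{\sum_{j=1}^{n} \left( v_{ij} - v_j^{+} \right)^2}, \qquad D_i^{-} = \sqrt{\sum_{j=1}^{n} \left( v_{ij} - v_j^{-} \right)^2}, \qquad C_i = \frac{D_i^{-}}{D_i^{+} + D_i^{-}} \tag{20}$$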

The relative closeness values of the Pareto optimal solutions are listed in Table 7. It can be seen that the optimal process parameters for SPIF were found to be those of solution 42, which exhibited the highest closeness (corresponding to a value of 0.995, highlighted in bold in the table). These parameters are: a tool radius of 4.00871 mm, an initial sheet thickness of 1.07692 mm, an axial step depth of 0.18797 mm, and a forming angle of 30°, with a contour path.
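The ranking procedure of this section can be sketched compactly in Python. The sketch follows the textbook entropy-weighted TOPSIS steps for cost-type indicators. The vector normalization of the decision matrix is an assumption, since other normalizations are also common, and the function name `entropy_topsis` and the sample objective values are hypothetical rather than taken from Table 5 or Table 7.

```python
import math


def entropy_topsis(rows):
    """Rank solutions whose indicators are all cost-type (lower is better).

    rows: one list of positive indicator values per Pareto solution.
    Returns (entropy weights, relative closeness of each solution).
    Assumes at least two solutions that are not all identical.
    """
    m, n = len(rows), len(rows[0])
    # Vector normalization of each indicator column (assumed choice).
    norms = [math.sqrt(sum(r[j] ** 2 for r in rows)) for j in range(n)]
    q = [[r[j] / norms[j] for j in range(n)] for r in rows]
    # Share of each solution within an indicator column.
    totals = [sum(r[j] for r in q) for j in range(n)]
    p = [[r[j] / totals[j] for j in range(n)] for r in q]
    # Information entropy of each indicator, scaled to [0, 1] by ln(m).
    entropy = [
        -sum(r[j] * math.log(r[j]) for r in p if r[j] > 0) / math.log(m)
        for j in range(n)
    ]
    spread = [1.0 - e for e in entropy]
    weights = [s / sum(spread) for s in spread]
    # Weighted matrix and ideal points: min is ideal for cost-type indicators.
    v = [[weights[j] * r[j] for j in range(n)] for r in q]
    best = [min(r[j] for r in v) for j in range(n)]
    worst = [max(r[j] for r in v) for j in range(n)]
    closeness = []
    for r in v:
        d_pos = math.sqrt(sum((r[j] - best[j]) ** 2 for j in range(n)))
        d_neg = math.sqrt(sum((r[j] - worst[j]) ** 2 for j in range(n)))
        closeness.append(d_neg / (d_pos + d_neg))
    return weights, closeness


# (maximum thinning rate in %, maximum geometric deviation in mm), invented values
weights, closeness = entropy_topsis([[18.0, 2.5], [19.3, 2.065], [21.0, 2.0]])
best_index = max(range(len(closeness)), key=closeness.__getitem__)
```

An indicator whose values vary more across the front has lower entropy and therefore receives a larger weight, so the final ranking favors solutions that perform well on the objective that discriminates most strongly between candidates.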

Physically, the optimized parameters reflect coupled trade-offs in SPIF mechanics. Step depth and tool radius jointly balance strain localization, thinning suppression, and geometric deviation, leading to interior optimal values. A larger initial sheet thickness increases load-bearing capacity and delays necking, but also raises forming forces and contact stresses. In contrast, forming angle exhibits a largely monotonic influence: a smaller angle reduces material stretching, membrane strain, and through-thickness thinning while improving geometric deviation. Consequently, the optimization naturally drives the forming angle to its lower bound, whereas the other parameters converge to interior values within the feasible design space.