Robust Enough? Exploring Temperature-Constrained Energy Transition Pathways under Climate Uncertainty

Abstract

1. Introduction

2. General Approach for Robustifying Optimization-Based IAMs

2.1. Integrated Assessment Modelling—A Stylised Description in the Optimization Framework

2.2. The Robust IAM for Climate Parameters

Algorithm 1: Constraint generation algorithm.
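The idea behind a constraint-generation scheme such as Algorithm 1 can be shown on a deliberately tiny problem. The sketch below is not the authors' implementation and not the TIAM-World climate module. The response function `temperature`, the constant `E_REF`, the "uptake factor" `u` and all helper names are hypothetical; only the climate-sensitivity nominal value and range are borrowed from the bounds table in the appendices. The master problem keeps a finite list of parameter scenarios, and a worst-case oracle searches the box uncertainty set for the most violated scenario, which is then added as a new constraint until no violation remains.

```python
import itertools
import math

# Toy setting (hypothetical, NOT the TIAM-World climate module):
# warming T(e; S, u) = S * log2(1 + e / (E_REF * u)) for an emission level e,
# a climate sensitivity S and a made-up carbon-uptake factor u.
# T increases with e and S and decreases with u.
E_REF = 10.0  # hypothetical reference emission level
T_MAX = 2.0   # temperature target in degrees C


def temperature(e, theta):
    s, u = theta
    return s * math.log2(1.0 + e / (E_REF * u))


def solve_master(scenarios):
    """Largest emission level meeting T <= T_MAX in every scenario generated so far.

    Closed form because T is increasing in e; a real IAM would re-solve an LP here.
    """
    return min(E_REF * u * (2.0 ** (T_MAX / s) - 1.0) for s, u in scenarios)


def worst_case_oracle(e, bounds):
    """Return the vertex of the box with the highest temperature.

    Vertex enumeration suffices here because T is monotone in each parameter,
    so its maximum over the box is attained at a vertex.
    """
    return max(itertools.product(*bounds), key=lambda theta: temperature(e, theta))


def constraint_generation(nominal, bounds, tol=1e-9, max_iter=50):
    scenarios = [nominal]  # start from the nominal parameters only
    for _ in range(max_iter):
        e = solve_master(scenarios)           # relaxed problem over a finite scenario set
        theta = worst_case_oracle(e, bounds)  # most violated scenario for this decision
        if temperature(e, theta) <= T_MAX + tol:
            return e, scenarios               # feasible for the whole box: stop
        scenarios.append(theta)               # add the violated scenario as a new constraint
    raise RuntimeError("constraint generation did not converge")


if __name__ == "__main__":
    nominal = (2.9, 1.0)               # (climate sensitivity, uptake factor)
    bounds = [(1.3, 4.5), (0.9, 1.1)]  # box uncertainty set
    e_robust, used = constraint_generation(nominal, bounds)
    print(f"robust emission level: {e_robust:.3f}")
    print(f"scenarios generated: {used}")
```

On this toy instance the loop adds a single scenario (highest sensitivity, lowest uptake) before stopping, because the oracle always picks each parameter's bound in its adverse direction. In a full IAM the master is a large LP and the oracle is itself an optimization problem, so several rounds are typically needed.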

2.3. Obtaining Uncertainty Ranges for Climate Parameters

3. Application to TIAM-World

3.1. Model Overview

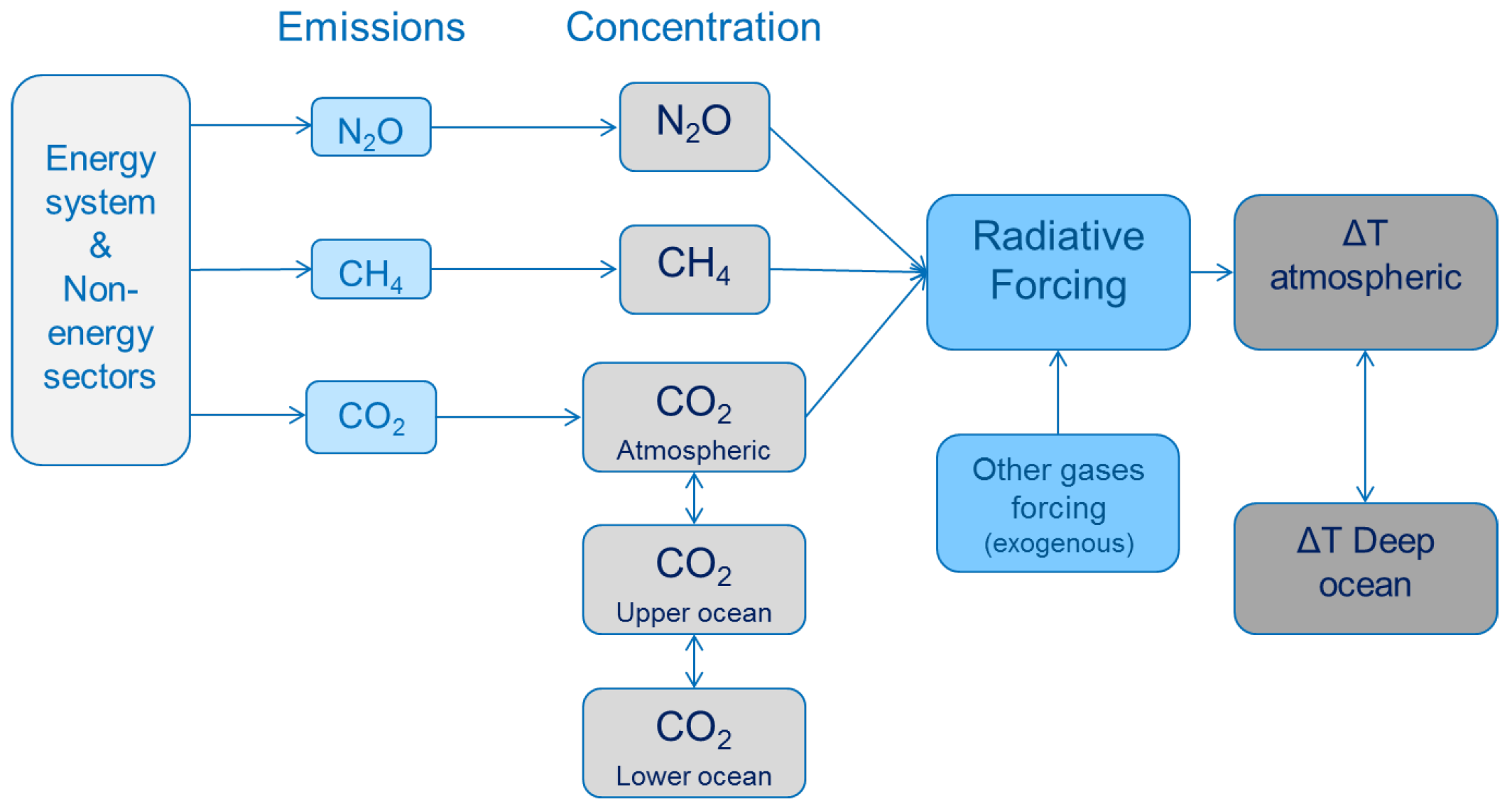

3.2. The Climate Module and the Uncertainty Sets of Climate Parameters

3.3. Robust Formulation of the Climate Problem

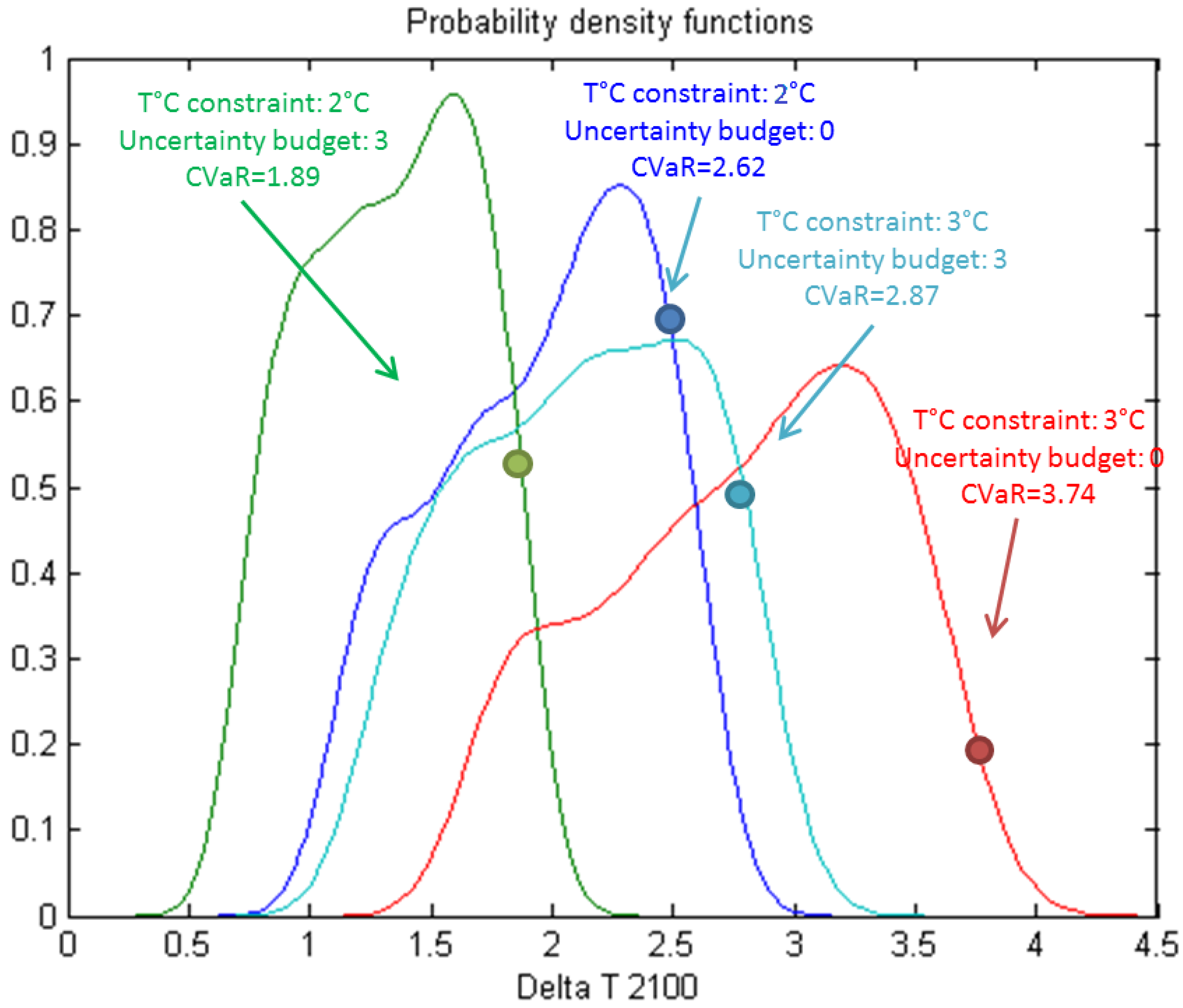

4. Results and Discussion

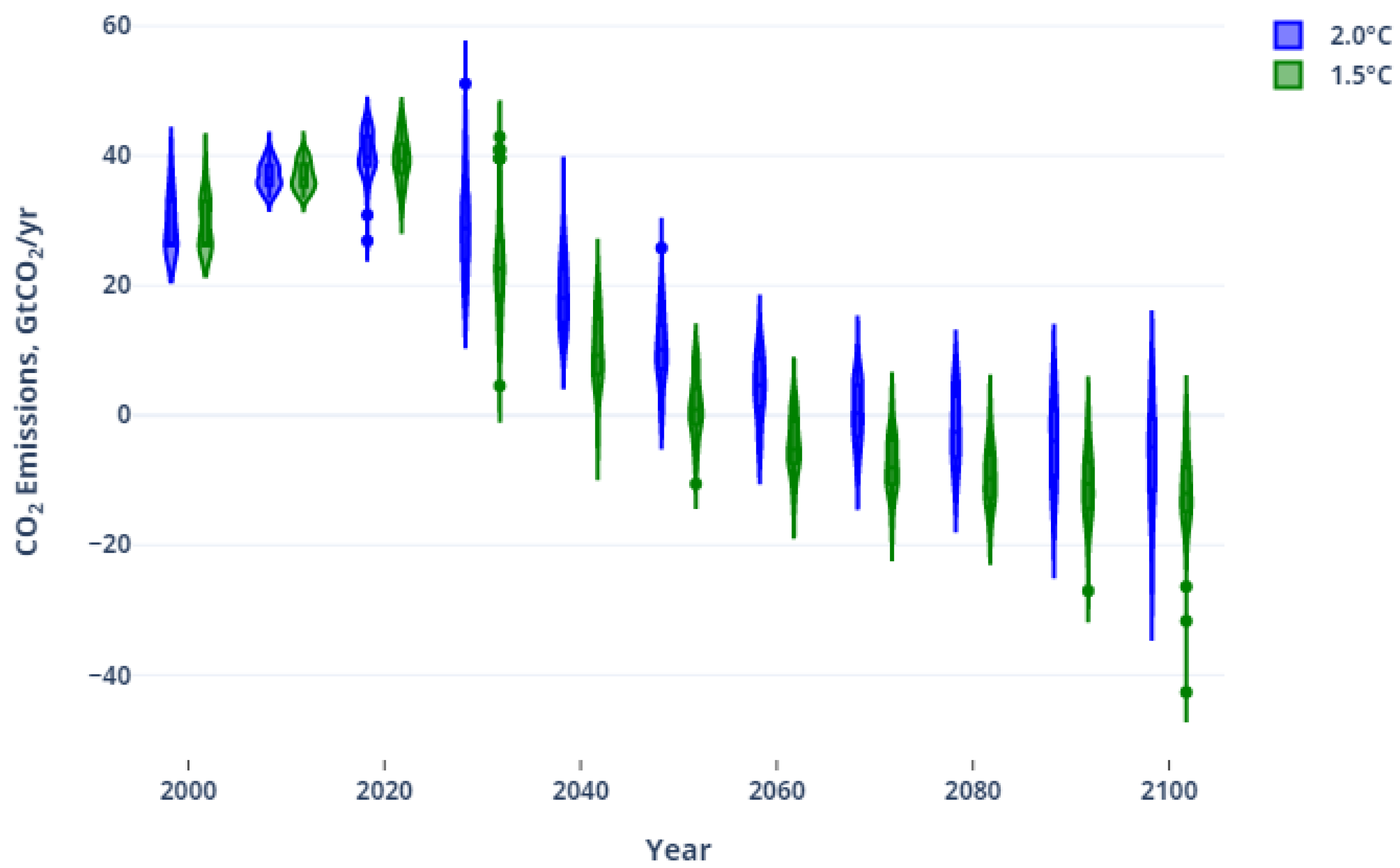

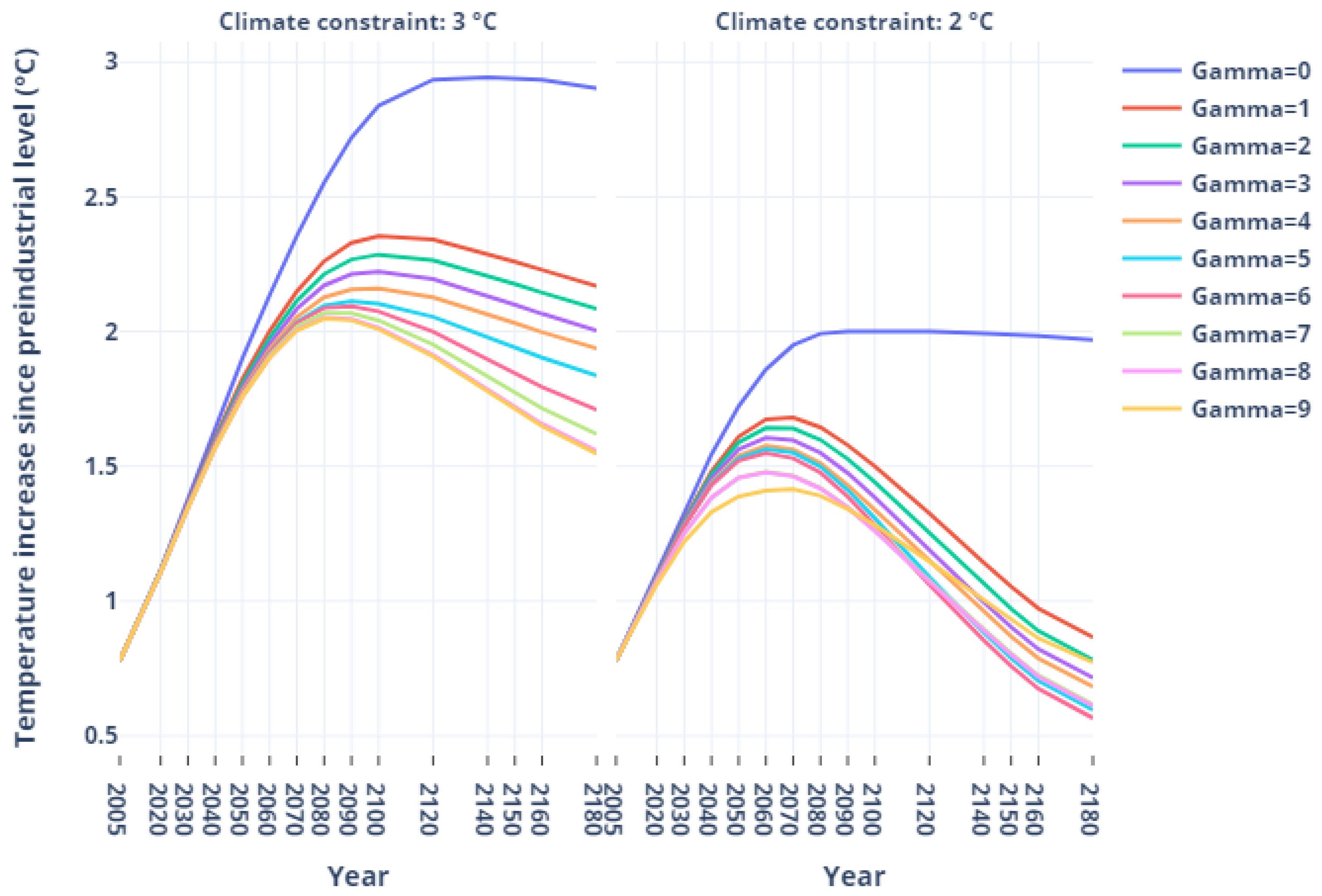

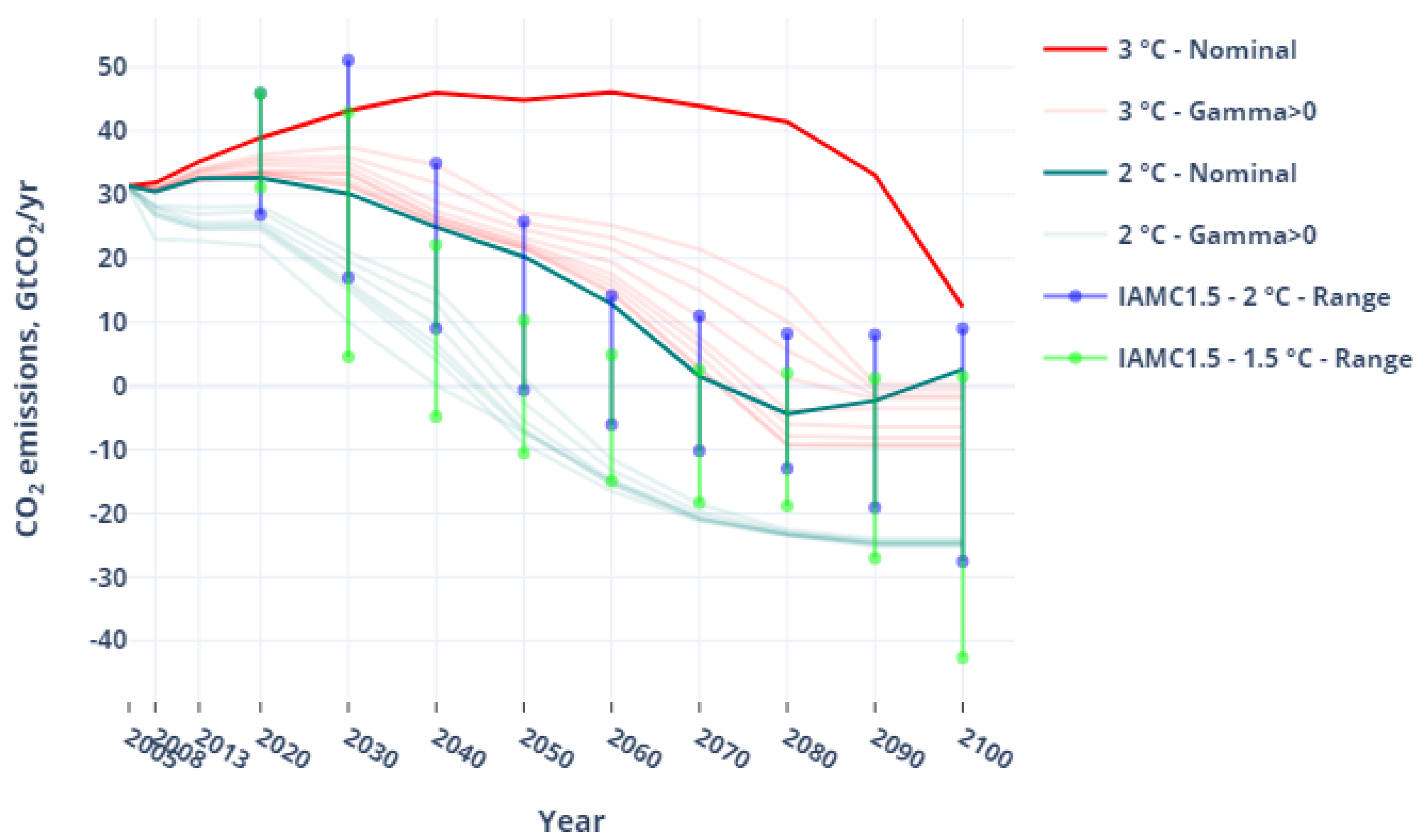

4.1. Temperature and Emission Trajectories

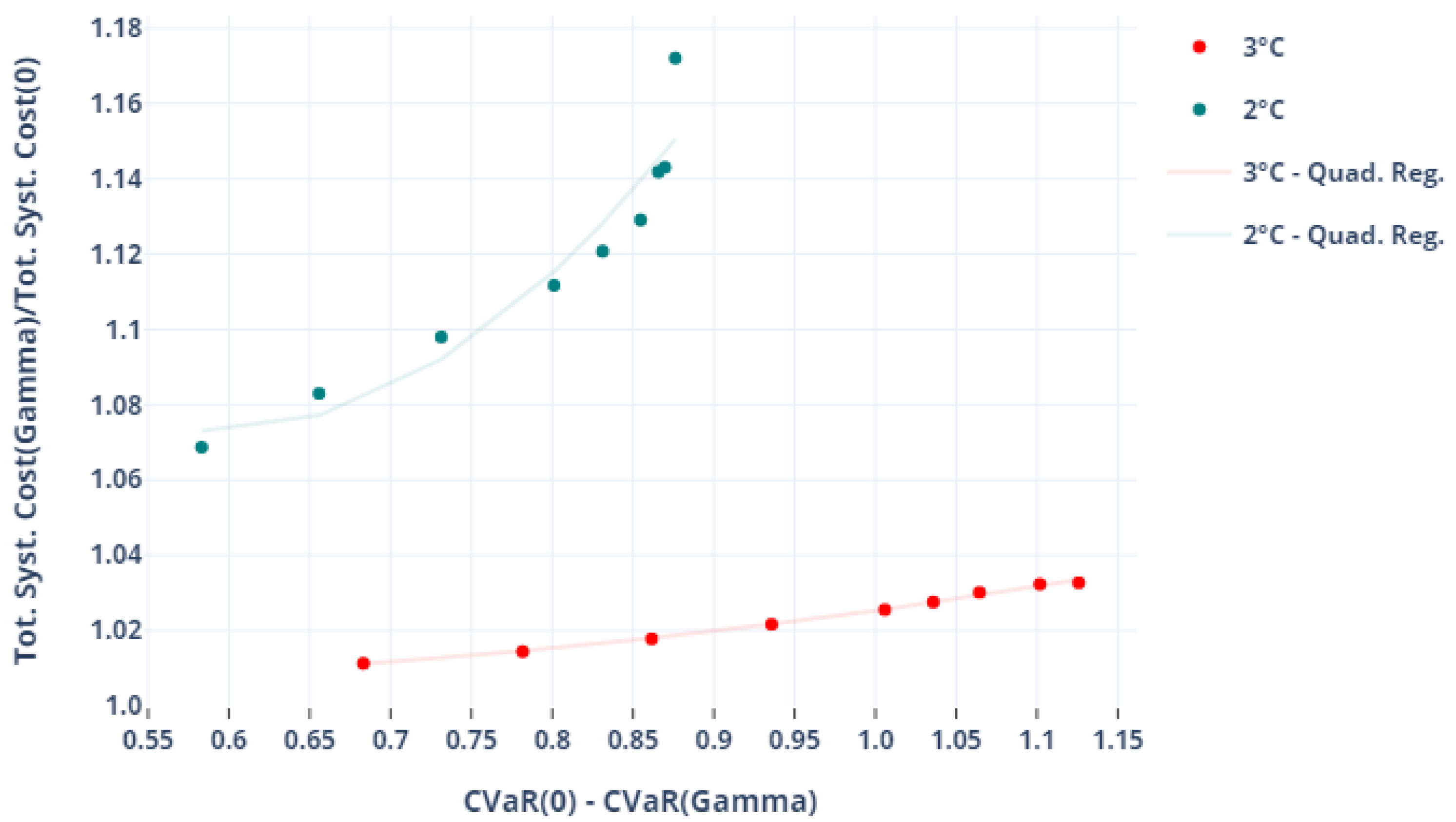

4.2. Robustness Cost

4.3. Robust Energy Transition Pathways

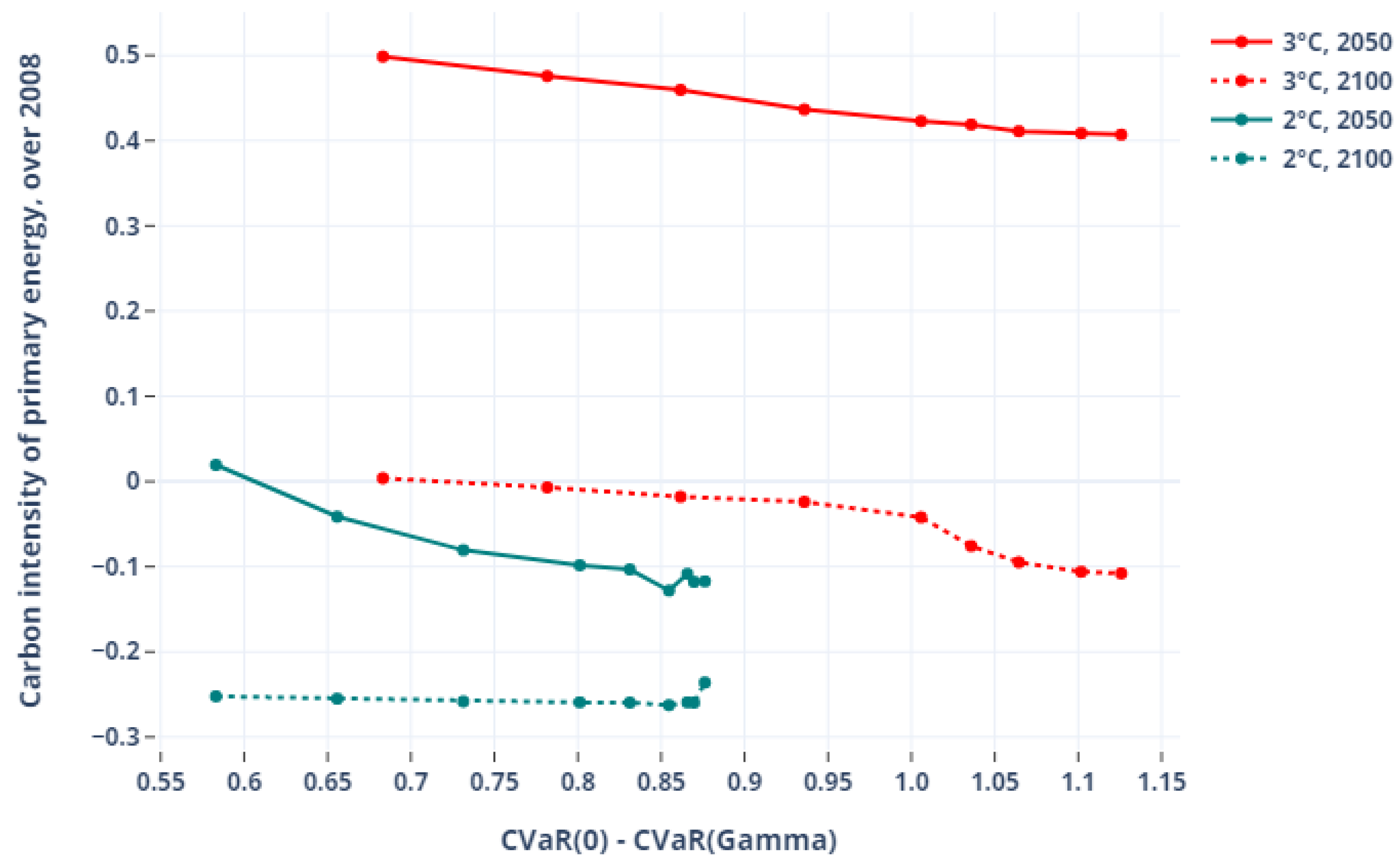

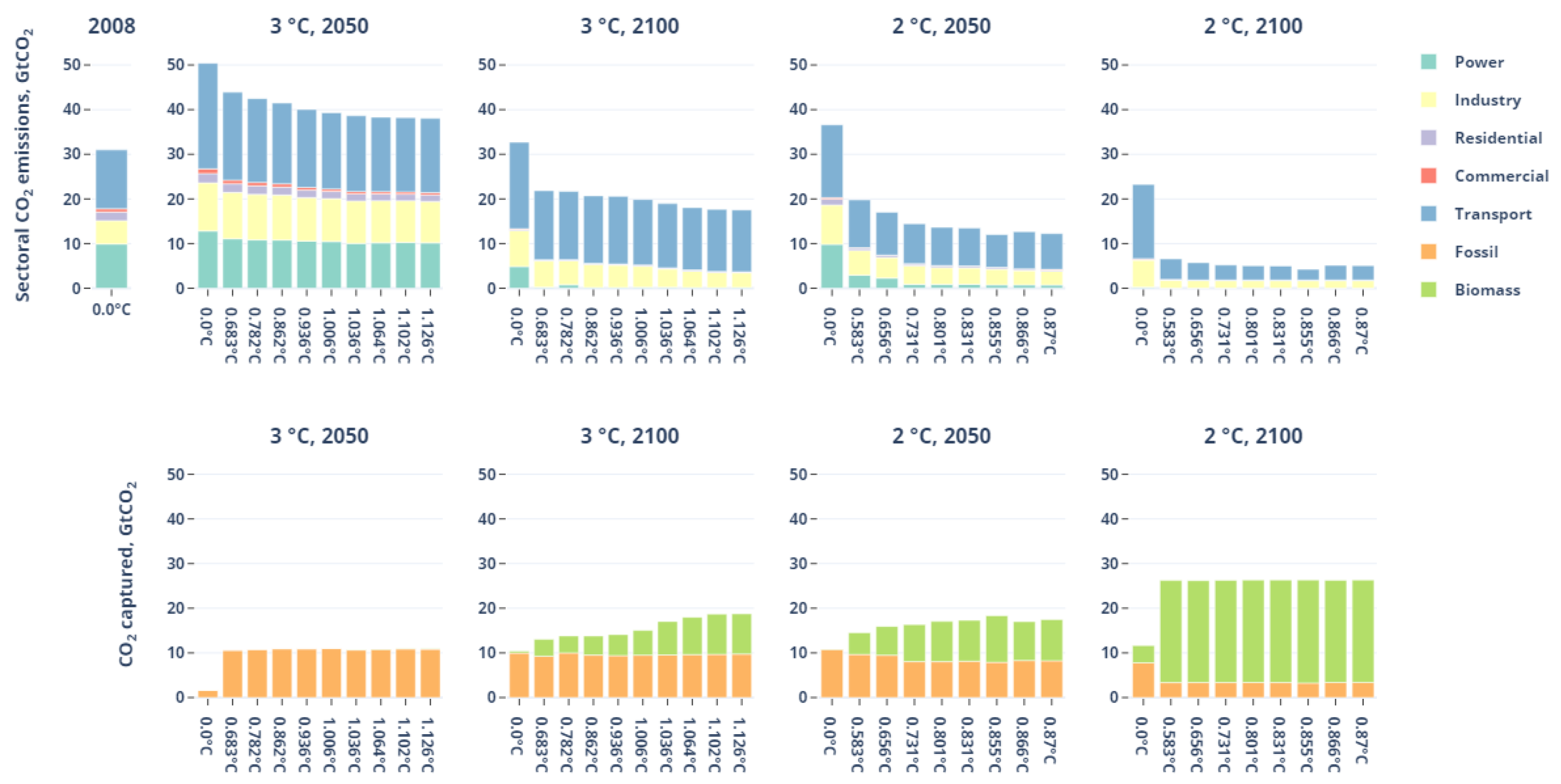

4.3.1. Robust Decarbonization Challenges: A Mesoscopic View

4.3.2. Robust Energy Portfolios

4.3.3. A Sectoral View: The ‘Backstop’ Negative Emissions Pathways against Low-Elastic Transport

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| BECCS | Bioenergy with carbon capture and storage |

| CCS | Carbon capture and storage |

| EMIC | Earth System Models of Intermediate Complexity |

| GHG | Greenhouse gas |

| IA | Integrated Assessment |

| IAM | Integrated Assessment Model |

| IPCC | Intergovernmental Panel on Climate Change |

| RCP | Representative Concentration Pathway |

| RO | Robust optimization |

| SCM | Simple Climate Model |

| TSC | Total System Cost |

Appendix A. Model Overview

Appendix B. TIAM-World Climate Module

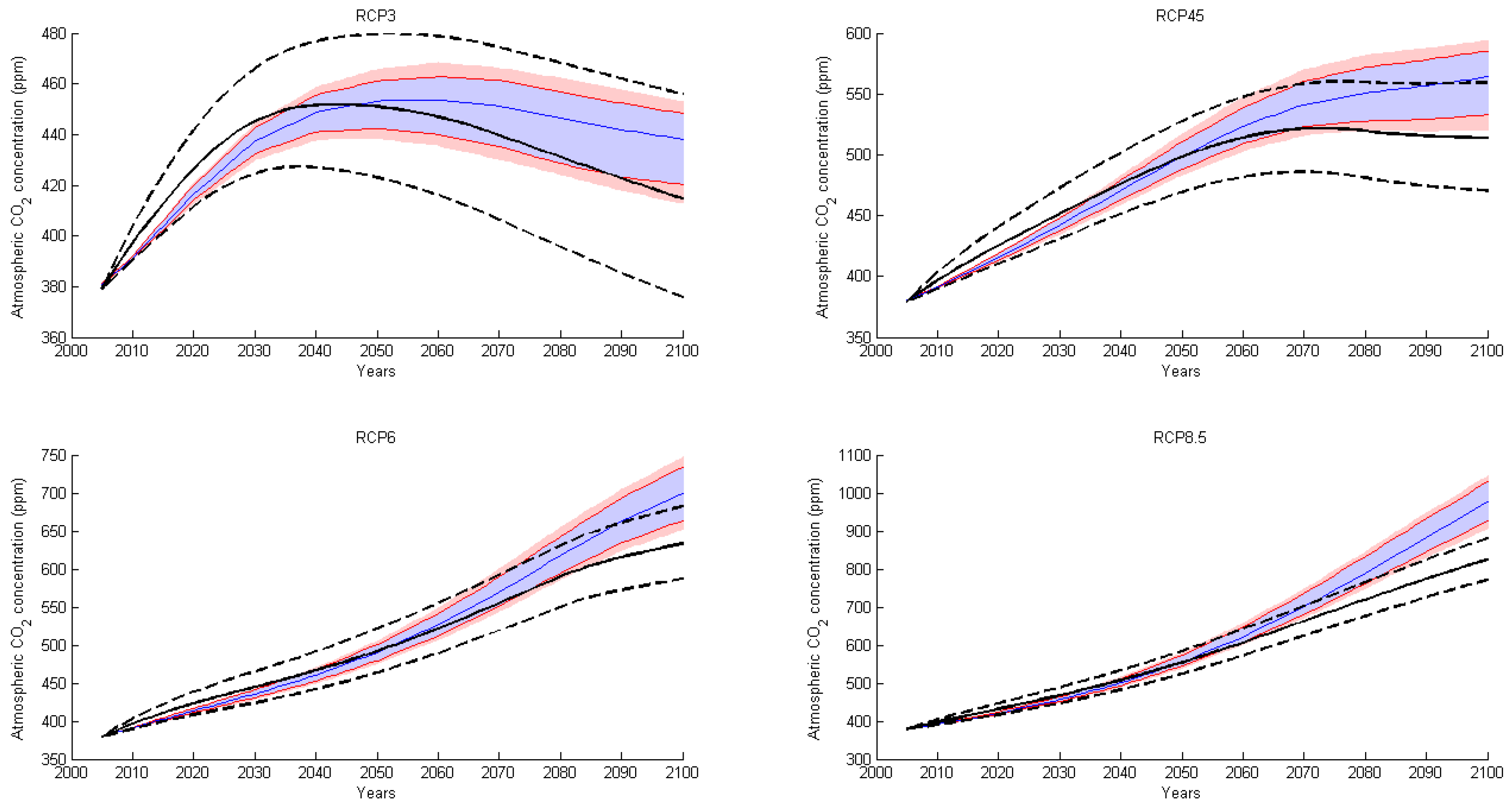

Appendix C. Estimation of Lower/Upper Bounds for Climate Parameters

- The nominal values of the parameters in the climate module of TIAM-World were left as described in [74];

- The upper bounds of the inter-box transfer coefficients were estimated so as to come close to the 83rd percentile of the MAGICC6 inter-model simulations for the four RCP scenarios. This was done by changing the parameters by identical relative amounts and computing a simple distance measure (the sum of squared annual relative differences between the TIAM-climate simulation and the MAGICC6-RCP benchmark).
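The calibration step described in the second item can be sketched as a one-dimensional grid search over a common relative change δ. This is only an illustration under stated assumptions: the three-reservoir carbon box model, initial stocks, emissions and helper names are hypothetical, the comparison is made on atmospheric carbon rather than temperature for brevity, and the benchmark is a synthetic path generated by the same toy model because the MAGICC6 percentile paths are not reproduced here. The sign pattern (decrease, increase, decrease, increase) is read off the bounds listed in the table below, which correspond to a 4.5% change of each nominal coefficient.

```python
# Hypothetical three-reservoir carbon box model (atmosphere, upper layer,
# lower layer) with annual transfer coefficients. Illustrative only.
NOMINAL = (0.046, 0.0453, 0.0146, 0.00053)  # atm->up, up->atm, up->lo, lo->up
# Direction of the relative change per coefficient, read off the bounds table.
DIRECTIONS = (-1.0, 1.0, -1.0, 1.0)


def perturb(coeffs, delta):
    """Change every coefficient by the same relative amount delta."""
    return tuple(c * (1.0 + d * delta) for c, d in zip(coeffs, DIRECTIONS))


def simulate_atm_carbon(coeffs, emissions, stocks):
    """Annual update of the three carbon stocks; returns the atmospheric path."""
    a_u, u_a, u_l, l_u = coeffs
    m_atm, m_up, m_lo = stocks
    path = []
    for e in emissions:
        m_atm, m_up, m_lo = (
            e + (1.0 - a_u) * m_atm + u_a * m_up,
            a_u * m_atm + (1.0 - u_a - u_l) * m_up + l_u * m_lo,
            u_l * m_up + (1.0 - l_u) * m_lo,
        )
        path.append(m_atm)
    return path


def relative_sq_distance(sim, bench):
    """Sum over years of squared relative differences to the benchmark."""
    return sum(((s - b) / b) ** 2 for s, b in zip(sim, bench))


def calibrate_delta(bench, emissions, stocks, grid):
    """Pick the common relative change that brings the simulation closest to bench."""
    return min(
        grid,
        key=lambda d: relative_sq_distance(
            simulate_atm_carbon(perturb(NOMINAL, d), emissions, stocks), bench
        ),
    )


if __name__ == "__main__":
    emissions = [10.0] * 100           # hypothetical constant emissions
    stocks = (830.0, 1500.0, 10000.0)  # hypothetical initial stocks
    # Synthetic benchmark standing in for a MAGICC6 percentile path.
    bench = simulate_atm_carbon(perturb(NOMINAL, 0.045), emissions, stocks)
    grid = [round(0.005 * i, 3) for i in range(21)]  # 0.000 ... 0.100
    best = calibrate_delta(bench, emissions, stocks, grid)
    print(best, perturb(NOMINAL, best))
```

With a benchmark built at a 4.5% change, the grid search recovers that value, and applying it with the sign pattern above gives 0.04393, 0.0473385, 0.013943 and 0.00055385, the bound values tabulated for the four transfer coefficients.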

| Parameter | Nominal Value (This Paper) | Lower/Upper Bound (This Paper) | Nominal Value (Butler) | Lower/Upper Bound (Butler) |
|---|---|---|---|---|
| Atmosphere to upper layer carbon transfer coefficient (annual) | 0.046 | 0.04393 | 0.189288 | 0.223288 |
| Upper layer to atmosphere carbon transfer coefficient (annual) | 0.0453 | 0.0473385 | 0.097213 | derived |
| Upper to lower layer carbon transfer coefficient (annual) | 0.0146 | 0.013943 | 0.05 | 0.025 |
| Lower to upper layer carbon transfer coefficient (annual) | 0.00053 | 0.00055385 | 0.003119 | derived |
| Radiative forcing from doubling of CO2 (W/m²) | 3.7 | 4.5 | 3.8 | 3.9 |
| Climate sensitivity from doubling of CO2 (°C) | 2.9 | 4.5 | 3 | 8 |
| Adjustment speed of atmospheric temperature | 0.024 | 0.0264 | 0.22 | 0.24 |
| Heat loss from atmosphere to deep ocean | 0.44 | 0.396 | 0.3 | 0.27 |
| Heat gain by deep ocean | 0.002 | 0.0018 | 0.05 | 0.045 |

Appendix D. Implementation Details for the Worst-Case Oracle in TIAM-World Model

Appendix E. Monte-Carlo Simulations of the Temperature

References

- IPCC. Summary for Policymakers. In Climate Change 2021: The Physical Science Basis. Contribution of Working Group I to the Sixth Assessment Report of the Intergovernmental Panel on Climate Change; Masson-Delmotte, V., Zhai, P., Pirani, A., Connors, S.L., Péan, C., Berger, S., Caud, N., Chen, Y., Goldfarb, L., Gomis, M.I., et al., Eds.; Cambridge University Press: Cambridge, UK; New York, NY, USA, 2021.

- IPCC. Summary for Policymakers. In Climate Change 2014: Impacts, Adaptation, and Vulnerability. Part A: Global and Sectoral Aspects. Contribution of Working Group II to the Fifth Assessment Report of the Intergovernmental Panel on Climate Change; Field, C.B., Barros, V.R., Dokken, D.J., Mach, K.J., Mastrandrea, M.D., Bilir, T.E., Chatterjee, M., Ebi, K.L., Estrada, Y.O., Genova, R.C., Eds.; Cambridge University Press: Cambridge, UK; New York, NY, USA, 2014.

- IPCC. Global Warming of 1.5 °C: An IPCC Special Report on the Impacts of Global Warming of 1.5 °C above Pre-Industrial Levels and Related Global Greenhouse Gas Emission Pathways, in the Context of Strengthening the Global Response to the Threat of Climate Change; Masson-Delmotte, V., Zhai, P., Pörtner, H.-O., Roberts, D., Skea, J., Shukla, P.R., Pirani, A., Moufouma-Okia, W., Péan, C., Pidcock, R., et al., Eds.; Technical Report; Cambridge University Press: Cambridge, UK; New York, NY, USA, 2018.

- Bahn, O.; Haurie, A.; Malhamé, R. A stochastic control model for optimal timing of climate policies. Automatica 2008, 44, 1545–1558.

- Bahn, O.; Chesney, M.; Gheyssens, J. The effect of proactive adaptation on green investment. Environ. Sci. Policy 2012, 18, 9–24.

- Bahn, O.; Chesney, M.; Gheyssens, J.; Knutti, R.; Pana, A. Is there room for geoengineering in the optimal climate policy mix? Environ. Sci. Policy 2015, 48, 67–76.

- Nordhaus, W.D. Estimates of the Social Cost of Carbon: Concepts and Results from the DICE-2013R Model and Alternative Approaches. J. Assoc. Environ. Resour. Econ. 2014, 1, 273–312.

- Anthoff, D.; Tol, R.S.J. The uncertainty about the social cost of carbon: A decomposition analysis using FUND. Clim. Chang. 2013, 117, 515–530.

- Manne, A.; Mendelsohn, R.; Richels, R. MERGE: A model for evaluating regional and global effects of GHG reduction policies. Energy Policy 1995, 23, 17–34.

- Hope, C.W. The Marginal Impact of CO2 from PAGE2002: An Integrated Assessment Model Incorporating the IPCC’s Five Reasons for Concern. Integr. Assess. J. 2006, 6, 19–56.

- Loulou, R.; Labriet, M. ETSAP-TIAM: The TIMES integrated assessment model Part I: Model structure. Comput. Manag. Sci. 2008, 5, 7–40.

- Loulou, R.; Goldstein, G. Documentation for the TIMES Model PART II; Energy Technology Systems Analysis Programme: Paris, France, 2005.

- Stern, N. The Economics of Climate Change: The Stern Review; Cambridge University Press: Cambridge, UK, 2007; p. 692.

- Nordhaus, W.D. A Question of Balance: Weighing the Options on Global Warming Policies; Yale University Press: New Haven, CT, USA, 2008; Volume 87, pp. 166–167.

- Rogelj, J.; Shindell, D.; Jiang, K.; Fifita, S.; Forster, P.; Ginzburg, V.; Handa, C.; Kheshgi, H.; Kobayashi, S.; Kriegler, E.; et al. Mitigation pathways compatible with 1.5 °C in the context of sustainable development. In Special Report on the Impacts of Global Warming of 1.5 °C; Intergovernmental Panel on Climate Change: Geneva, Switzerland, 2018.

- Huppmann, D.; Kriegler, E.; Krey, V.; Riahi, K.; Rogelj, J.; Rose, S.K.; Weyant, J.; Bauer, N.; Bertram, C.; Bosetti, V.; et al. IAMC 1.5 °C Scenario Explorer and Data Hosted by IIASA; Integrated Assessment Modeling Consortium & International Institute for Applied Systems Analysis: Laxenburg, Austria, 2018.

- Pindyck, R.S. Climate Change Policy: What Do the Models Tell Us? J. Econ. Lit. 2013, 51, 860–872.

- Stern, N. The Structure of Economic Modeling of the Potential Impacts of Climate Change: Grafting Gross Underestimation of Risk onto Already Narrow Science Models. J. Econ. Lit. 2013, 51, 838–859.

- Pindyck, R.S. The Use and Misuse of Models for Climate Policy. Rev. Environ. Econ. Policy 2017, 11, 100–114.

- Van Asselt, M.; Rotmans, J. Uncertainty in integrated assessment modelling: From positivism to pluralism. Clim. Chang. 2002, 54, 75–105.

- Rotmans, J.; van Asselt, M.B. Uncertainty Management in Integrated Assessment Modeling: Towards a Pluralistic Approach. Environ. Monit. Assess. 2001, 69, 101–130.

- Enserink, B.; Kwakkel, J.H.; Veenman, S. Coping with uncertainty in climate policy making: (Mis)understanding scenario studies. Futures 2013, 53, 1–12.

- Kanudia, A.; Loulou, R. Robust responses to climate change via stochastic MARKAL: The case of Québec. Eur. J. Oper. Res. 1998, 106, 15–30.

- Jensen, S.; Traeger, C.P. Optimal climate change mitigation under long-term growth uncertainty: Stochastic integrated assessment and analytic findings. Eur. Econ. Rev. 2014, 69, 104–125.

- Golub, A.; Narita, D.; Schmidt, M.G. Uncertainty in Integrated Assessment Models of Climate Change: Alternative Analytical Approaches. Environ. Model. Assess. 2014, 19, 99–109.

- Kunreuther, H.; Gupta, S.; Bosetti, V.; Cooke, R.; Dutt, V.; Duong, M.H.; Held, H.; Llanes-Regueiro, J.; Patt, A.; Shittu, E.; et al. Integrated Risk and Uncertainty Assessment of Climate Change Response Policies. In Climate Change 2014: Mitigation of Climate Change, Contribution of Working Group III to the IPCC Fifth Assessment Report; Edenhofer, O., Pichs-Madruga, R., Sokona, Y., Farahani, E., Kadner, S., Seyboth, K., Adler, A., Baum, I., Brunner, S., Eickemeier, P., et al., Eds.; Cambridge University Press: Cambridge, UK; New York, NY, USA, 2014; pp. 151–206.

- Seneviratne, S.I.; Rogelj, J.; Séférian, R.; Wartenburger, R.; Allen, M.R.; Cain, M.; Millar, R.J.; Ebi, K.L.; Ellis, N.; Hoegh-Guldberg, O.; et al. The many possible climates from the Paris Agreement’s aim of 1.5 °C warming. Nature 2018, 558, 41–49.

- Arino, Y.; Akimoto, K.; Sano, F.; Homma, T.; Oda, J.; Tomoda, T. Estimating option values of solar radiation management assuming that climate sensitivity is uncertain. Proc. Natl. Acad. Sci. USA 2016, 113, 5886–5891.

- Hwang, I.C.; Reynès, F.; Tol, R.S.J. Climate Policy Under Fat-Tailed Risk: An Application of DICE. Environ. Resour. Econ. 2013, 56, 415–436.

- Lemoine, D.; Rudik, I. Managing Climate Change Under Uncertainty: Recursive Integrated Assessment at an Inflection Point. Annu. Rev. Resour. Econ. 2017, 9, 117–142.

- Hassler, J.; Krusell, P.; Olovsson, C. The Consequences of Uncertainty: Climate Sensitivity and Economic Sensitivity to the Climate. Annu. Rev. Econ. 2018, 10, 189–205.

- Nordhaus, W. Projections and Uncertainties about Climate Change in an Era of Minimal Climate Policies. Am. Econ. J. Econ. Policy 2018, 10, 333–360.

- Crost, B.; Traeger, C.P. Optimal climate policy: Uncertainty versus Monte Carlo. Econ. Lett. 2013, 120, 552–558.

- Stoerk, T.; Wagner, G.; Ward, R.E.T. Policy Brief—Recommendations for Improving the Treatment of Risk and Uncertainty in Economic Estimates of Climate Impacts in the Sixth Intergovernmental Panel on Climate Change Assessment Report. Rev. Environ. Econ. Policy 2018, 12, 371–376.

- Soyster, L.A. Convex Programming with Set-Inclusive Constraints and Applications to Inexact Linear Programming. Oper. Res. 1973, 21, 1154–1157.

- Ben-Tal, A.; Nemirovski, A. Robust solutions of Linear Programming problems contaminated with uncertain data. Math. Program. 2000, 88, 411–424.

- Ben-Tal, A.; Nemirovski, A. Robust Optimization—Methodology and Applications. Math. Program. 2002, 92, 453–480.

- El Ghaoui, L.; Oustry, F.; Lebret, H. Robust solutions to uncertain semidefinite programs. SIAM J. Optim. 1998, 9, 33–52.

- Bertsimas, D.; Sim, M. The Price of Robustness. Oper. Res. 2004, 52, 35–53.

- Ben-Tal, A.; den Hertog, D.; Vial, J. Deriving robust counterparts of nonlinear uncertain inequalities. Math. Program. 2015, 149, 265–299.

- Bertsimas, D.; Brown, D.; Caramanis, C. Theory and applications of Robust Optimization. SIAM Rev. 2010, 53, 464–501.

- Yue, X.; Pye, S.; DeCarolis, J.; Li, F.G.; Rogan, F.; Gallachóir, B.Ó. A review of approaches to uncertainty assessment in energy system optimization models. Energy Strategy Rev. 2018, 21, 204–217.

- Babonneau, F.; Kanudia, A.; Labriet, M.; Loulou, R.; Vial, J. Energy Security: A Robust Optimization Approach to Design a Robust European Energy Supply via TIAM-WORLD. Environ. Model. Assess. 2011, 17, 19–37.

- Andrey, C.; Babonneau, F.; Haurie, A.; Labriet, M. Modélisation stochastique et robuste de l’atténuation et de l’adaptation dans un système énergétique régional. Application à la région Midi-Pyrénées. Nat. Sci. Soc. 2015, 23, 133–149.

- Ekholm, T. Hedging the climate sensitivity risks of a temperature target. Clim. Chang. 2014, 127, 153–167.

- Funke, M.; Paetz, M. Environmental policy under model uncertainty: A robust optimal control approach. Clim. Chang. 2011, 107, 225–239.

- Loulou, R. ETSAP-TIAM: The TIMES integrated assessment model. part II: Mathematical formulation. Comput. Manag. Sci. 2008, 5, 41–66.

- Van Vuuren, D.P.; Lowe, J.; Stehfest, E.; Gohar, L.; Hof, A.F.; Hope, C.; Warren, R.; Meinshausen, M.; Plattner, G.K. How well do integrated assessment models simulate climate change? Clim. Chang. 2009, 104, 255–285.

- Meinshausen, M.; Raper, S.C.B.; Wigley, T.M.L. Emulating coupled atmosphere-ocean and carbon cycle models with a simpler model, MAGICC6—Part 1: Model description and calibration. Atmos. Chem. Phys. 2011, 11, 1417–1456.

- Syri, S.; Lehtila, A.; Ekholm, T.; Savolainen, I.; Holttinen, H.; Peltola, E. Global energy and emissions scenarios for effective climate change mitigation—Deterministic and stochastic scenarios with the TIAM model. Int. J. Greenh. Gas Control 2008, 2, 274–285.

- Labriet, M.; Nicolas, C.; Tchung-Ming, S.; Kanudia, A.; Loulou, R. Energy decisions in an uncertain climate and technology outlook: How stochastic and robust analyses can assist policy-makers. In Informing Energy and Climate Policies Using Energy Systems Models; Giannakidis, G., Labriet, M., Ó’Gallachóir, B., Tosato, G., Eds.; Springer: Cham, Switzerland, 2015; Chapter 3.

- Schneider, S. Integrated assessment modeling of global climate change: Transparent rational tool for policy making or opaque screen hiding value laden assumptions? Environ. Model. Assess. 1997, 2, 229.

- Sokolov, A.P.; Schlosser, C.A.; Paltsev, S.D.; Kicklighter, D.; Jacoby, H.; Prinn, R.; Forest, C.; Reilly, J.; Wang, C.; Felzer, B.S. The MIT Integrated Global System Model (IGSM) Version 2: Model Description and Baseline Evaluation; Technical Report 124, MIT Joint Program; MIT: Cambridge, MA, USA, 2005.

- Crassous, R.; Sassi, O.; Hourcade, J. Endogenous Structural Change and Climate Targets Modeling Experiments with Imaclim-R. Energy J. 2006, SI1, 259–276.

- Huppmann, D.; Gidden, M.; Fricko, O.; Kolp, P.; Orthofer, C.; Pimmer, M.; Kushin, N.; Vinca, A.; Mastrucci, A.; Riahi, K.; et al. The MESSAGEix Integrated Assessment Model and the ix modeling platform (ixmp): An open framework for integrated and cross-cutting analysis of energy, climate, the environment, and sustainable development. Environ. Model. Softw. 2019, 112, 143–156.

- Bosetti, V.; Massetti, E.; Tavoni, M. The WITCH Model: Structure, Baseline, Solutions. FEEM Work. Pap. 2007, 1, 1–49.

- Edwards, N.; Marsh, R. Uncertainties due to transport-parameter sensitivity in an efficient 3-D ocean-climate model. Clim. Dyn. 2005, 24, 415–433.

- Boville, B.; Kiehl, J.; Rasch, P.; Bryan, F. Improvements to the NCAR CSM-1 for Transient Climate Simulations. J. Clim. 2001, 14, 164–179.

- Smith, M.J.; Vanderwel, M.C.; Lyutsarev, V.; Emmott, S.; Purves, D.W. The climate dependence of the terrestrial carbon cycle; including parameter and structural uncertainties. Biogeosci. Discuss. 2012, 9, 13439–13496.

- Joos, F.; Roth, R.; Fuglestvedt, J.S.; Peters, G.P.; Enting, I.G.; Von Bloh, W.; Brovkin, V.; Burke, E.J.; Eby, M.; Edwards, N.R.; et al. Carbon dioxide and climate impulse response functions for the computation of greenhouse gas metrics: A multi-model analysis. Atmos. Chem. Phys. 2013, 13, 2793–2825.

- Ben-Tal, A.; El Ghaoui, L.; Nemirovski, A. Robust Optimization; Princeton Series in Applied Mathematics; Princeton University Press: Princeton, NJ, USA, 2009.

- Hof, A.; Hope, C.; Lowe, J.; Mastrandrea, M.; Meinshausen, M.; van Vuuren, D. The benefits of climate change mitigation in integrated assessment models: The role of the carbon cycle and climate component. Clim. Chang. 2012, 113, 897–917.

- Hu, Z.; Cao, J.; Hong, L.J. Robust Simulation of Global Warming Policies Using the DICE Model. Manag. Sci. 2012, 58, 1295–1305.

- Butler, M.; Reed, P.; Fisher-Vanden, K.; Keller, K.; Wagener, T. Identifying parametric controls and dependencies in integrated assessment models using global sensitivity analysis. Environ. Model. Softw. 2014, 59, 10–29.

- Knutti, R.; Hegerl, G. The equilibrium sensitivity of the Earth’s temperature to radiation changes. Nat. Geosci. 2008, 1, 735–743.

- Nordhaus, W.; Sztorc, P. DICE 2013R: Introduction and User’s Manual; PCHES: University Park, PA, USA, 2013; pp. 1–102.

- Cao, L.; Bala, G.; Caldeira, K.; Nemani, R.; Ban-Weiss, G. Importance of carbon dioxide physiological forcing to future climate change. Proc. Natl. Acad. Sci. USA 2010, 107, 9513–9518.

- Schmidt, H.; Alterskjær, K.; Bou Karam, D.; Boucher, O.; Jones, A.; Kristjánsson, J.E.; Niemeier, U.; Schulz, M.; Aaheim, A.; et al. Solar irradiance reduction to counteract radiative forcing from a quadrupling of CO2: Climate responses simulated by four earth system models. Earth Syst. Dyn. 2012, 3, 63–78.

- Block, K.; Mauritsen, T. Forcing and feedback in the MPI-ESM-LR coupled model under abruptly quadrupled CO2. J. Adv. Model. Earth Syst. 2013, 5, 676–691.

- IPCC. Summary for Policymakers. In Climate Change 2013: The Physical Science Basis. Contribution of Working Group I to the Fifth Assessment Report of the Intergovernmental Panel on Climate Change; Stocker, T.F., Qin, D., Plattner, G.-K., Tignor, M., Allen, S.K., Boschung, J., Nauels, A., Xia, Y., Bex, V., Midgley, P.M., Eds.; Cambridge University Press: Cambridge, UK; New York, NY, USA, 2013.

- Zhang, M.; Huang, Y. Radiative Forcing of Quadrupling CO2. J. Clim. 2014, 27, 2496–2508.

- Labriet, M.; Kanudia, A.; Loulou, R. Climate mitigation under an uncertain technology future: A TIAM-World analysis. Energy Econ. 2012, 34, S366–S377.

- Nordhaus, W.D.; Boyer, J. Warming the World; The MIT Press: Cambridge, MA, USA, 1999; pp. 1–229.

- Loulou, R.; Lehtila, A.; Labriet, M. TIMES Climate Module (November 2010). In TIMES Version 2.0 User Note; Energy Technology Systems Analysis Programme: Paris, France, 2010.

- van der Zwaan, B.; Gerlagh, R. Climate sensitivity uncertainty and the necessity to transform global energy supply. Energy 2006, 31, 2571–2587.

- Labriet, M.; Loulou, R.; Kanudia, A. Uncertainty and Environmental Decision Making. In International Series in Operations Research & Management Science; Springer: Boston, MA, USA, 2010; Volume 138, pp. 51–77.

- Van Dender, K.; Crist, P. Policy Instruments to Limit Negative Environmental Impacts from Increased International Transport; Technical Report; Joint Transport Research Centre of the OECD and the International Transport Forum: Paris, France, 2008.

- IPCC. Summary for Policymakers. In Contribution of Working Group I to the Fourth Assessment Report of the Intergovernmental Panel on Climate Change; Solomon, S., Qin, D., Manning, M., Chen, Z., Marquis, M., Averyt, K.B., Tignor, M., Miller, H.L., Eds.; Cambridge University Press: Cambridge, UK; New York, NY, USA, 2007.

| Parameter | Nominal Value | Lower Bound | Upper Bound |
|---|---|---|---|
| Atmosphere to upper layer carbon transfer coefficient (annual) | 0.046 | 0.04393 | 0.04807 |
| Upper layer to atmosphere carbon transfer coefficient (annual) | 0.0453 | 0.04326 | 0.0473 |
| Upper to lower layer carbon transfer coefficient (annual) | 0.0146 | 0.0139 | 0.01526 |
| Lower to upper layer carbon transfer coefficient (annual) | 0.00053 | 0.00051 | 0.00055 |
| Radiative forcing from doubling of CO2 (W/m²) | 3.7 | 2.9 | 4.5 |
| Climate sensitivity from doubling of CO2 (°C) | 2.9 | 1.3 | 4.5 |
| Adjustment speed of atmospheric temperature | 0.024 | 0.0216 | 0.0264 |
| Heat loss from atmosphere to deep ocean | 0.44 | 0.396 | 0.484 |
| Heat gain by deep ocean | 0.002 | 0.0018 | 0.0022 |

| Parameters | | | | | | | | | |
|---|---|---|---|---|---|---|---|---|---|
| Order 3 °C | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 |
| Order 2 °C | 1 | 2 | 3 | 4 | 9 | 7 | 5 | 6 | 8 |

| EJ/yr | Case | Natural Gas | Crude Oil | Coal | Uranium | Biomass | Solar | Wind | Non Renewable | Renewable | Total |
|---|---|---|---|---|---|---|---|---|---|---|---|
| 3 °C Target | | | | | | | | | | | |
| 2050 | Deterministic | 189 | 62 | 237 | 54 | 97 | 16 | 26 | 542 | 139 | 681 |
| 2050 | Lowest Protection level (0.68 °C) | 163 | 63 | 185 | 91 | 116 | 17 | 27 | 502 | 160 | 663 |
| 2050 | Highest Protection level (1.13 °C) | 151 | 63 | 153 | 91 | 139 | 18 | 26 | 458 | 184 | 642 |
| 2100 | Deterministic | 135 | 55 | 100 | 191 | 304 | 97 | 52 | 482 | 453 | 935 |
| 2100 | Lowest Protection level (0.68 °C) | 93 | 84 | 47 | 177 | 444 | 110 | 55 | 401 | 608 | 1009 |
| 2100 | Highest Protection level (1.13 °C) | 34 | 89 | 36 | 164 | 580 | 98 | 58 | 322 | 735 | 1057 |
| 2 °C Target | | | | | | | | | | | |
| 2050 | Deterministic | 49 | 140 | 90 | 176 | 154 | 20 | 26 | 455 | 200 | 655 |
| 2050 | Lowest Protection level (0.58 °C) | 12 | 91 | 24 | 164 | 376 | 25 | 30 | 292 | 431 | 723 |
| 2050 | Highest Protection level (0.88 °C) | 3 | 95 | 22 | 165 | 521 | 27 | 32 | 286 | 580 | 866 |
| 2100 | Deterministic | 34 | 131 | 58 | 133 | 409 | 105 | 60 | 356 | 574 | 930 |
| 2100 | Lowest Protection level (0.58 °C) | 3 | 97 | 25 | 134 | 647 | 168 | 79 | 259 | 894 | 1153 |
| 2100 | Highest Protection level (0.88 °C) | 3 | 96 | 21 | 134 | 649 | 179 | 77 | 254 | 905 | 1159 |
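Judging from the numbers, the Non Renewable column of the primary-energy table appears to be the sum of natural gas, crude oil, coal and uranium, Renewable the sum of biomass, solar and wind, and Total the sum of the two. The short check below illustrates that reading; the data are transcribed by hand from the table, and the row labels are abbreviated for convenience. On these rows every gap is at most 1 EJ/yr, which is consistent with each entry being rounded independently.

```python
# Rows transcribed by hand from the primary-energy table (EJ/yr). Column order:
# natural gas, crude oil, coal, uranium, biomass, solar, wind,
# non-renewable, renewable, total.
ROWS = {
    ("3 °C", 2050, "Deterministic"): (189, 62, 237, 54, 97, 16, 26, 542, 139, 681),
    ("3 °C", 2050, "Lowest (0.68 °C)"): (163, 63, 185, 91, 116, 17, 27, 502, 160, 663),
    ("3 °C", 2050, "Highest (1.13 °C)"): (151, 63, 153, 91, 139, 18, 26, 458, 184, 642),
    ("3 °C", 2100, "Deterministic"): (135, 55, 100, 191, 304, 97, 52, 482, 453, 935),
    ("3 °C", 2100, "Lowest (0.68 °C)"): (93, 84, 47, 177, 444, 110, 55, 401, 608, 1009),
    ("3 °C", 2100, "Highest (1.13 °C)"): (34, 89, 36, 164, 580, 98, 58, 322, 735, 1057),
    ("2 °C", 2050, "Deterministic"): (49, 140, 90, 176, 154, 20, 26, 455, 200, 655),
    ("2 °C", 2050, "Lowest (0.58 °C)"): (12, 91, 24, 164, 376, 25, 30, 292, 431, 723),
    ("2 °C", 2050, "Highest (0.88 °C)"): (3, 95, 22, 165, 521, 27, 32, 286, 580, 866),
    ("2 °C", 2100, "Deterministic"): (34, 131, 58, 133, 409, 105, 60, 356, 574, 930),
    ("2 °C", 2100, "Lowest (0.58 °C)"): (3, 97, 25, 134, 647, 168, 79, 259, 894, 1153),
    ("2 °C", 2100, "Highest (0.88 °C)"): (3, 96, 21, 134, 649, 179, 77, 254, 905, 1159),
}


def aggregate_gaps(row):
    """Absolute gaps between the tabulated aggregates and the recomputed sums."""
    gas, oil, coal, uranium, biomass, solar, wind, non_ren, ren, total = row
    return (
        abs(gas + oil + coal + uranium - non_ren),
        abs(biomass + solar + wind - ren),
        abs(non_ren + ren - total),
    )


if __name__ == "__main__":
    # Report only the rows whose aggregates differ from the recomputed sums.
    for key, row in ROWS.items():
        gaps = aggregate_gaps(row)
        if any(gaps):
            print(key, gaps)
```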

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Nicolas, C.; Tchung-Ming, S.; Bahn, O.; Delage, E. Robust Enough? Exploring Temperature-Constrained Energy Transition Pathways under Climate Uncertainty. Energies 2021, 14, 8595. https://doi.org/10.3390/en14248595