Uncertainty Characterisation of Mobile Robot Localisation Techniques using Optical Surveying Grade Instruments

Abstract

1. Introduction

1.1. Ultra-Wideband Localisation Systems

1.2. Robotic Total Stations

2. State Estimation Formulation

2.1. Problem Formulation

2.1.1. State Transition Model

2.1.2. Measurement Model

| Algorithm 1: Range-based EKF Localisation |
|---|
| Prediction: |
| Correction: |
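
The two steps named in Algorithm 1 can be illustrated with a minimal, dependency-free sketch. This is not the authors' implementation. It assumes a planar state `[x, y, theta]`, an odometry increment `(d, dtheta)` as the control input, and one scalar correction per anchor range. The anchor coordinates, the initial covariance and the process noise `Q` are hypothetical values chosen for illustration. The range variance reuses the combined standard deviation of 0.1323 m from the range error table.

```python
import math

def mat_mul(A, B):
    """Multiply two matrices stored as nested lists."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

def predict(x, P, d, dtheta, Q):
    """Prediction: propagate [x, y, theta] by an odometry increment (d, dtheta)."""
    px, py, th = x
    x_pred = [px + d * math.cos(th), py + d * math.sin(th), th + dtheta]
    # Jacobian of the assumed motion model with respect to the state.
    F = [[1.0, 0.0, -d * math.sin(th)],
         [0.0, 1.0, d * math.cos(th)],
         [0.0, 0.0, 1.0]]
    Ft = [list(col) for col in zip(*F)]
    FPFt = mat_mul(mat_mul(F, P), Ft)
    P_pred = [[FPFt[i][j] + Q[i][j] for j in range(3)] for i in range(3)]
    return x_pred, P_pred

def correct(x, P, z, anchor, r_var):
    """Correction: fuse one range z (m) to an anchor at a known (ax, ay)."""
    dx, dy = x[0] - anchor[0], x[1] - anchor[1]
    r_hat = math.hypot(dx, dy)              # predicted range
    if r_hat < 1e-9:                        # Jacobian undefined on top of the anchor
        return x, P
    H = [dx / r_hat, dy / r_hat, 0.0]       # range Jacobian (1 x 3)
    PHt = [sum(P[i][j] * H[j] for j in range(3)) for i in range(3)]
    HP = [sum(H[i] * P[i][j] for i in range(3)) for j in range(3)]
    S = sum(H[i] * PHt[i] for i in range(3)) + r_var   # innovation variance
    K = [v / S for v in PHt]                            # Kalman gain (3 x 1)
    x_new = [x[i] + K[i] * (z - r_hat) for i in range(3)]
    P_new = [[P[i][j] - K[i] * HP[j] for j in range(3)] for i in range(3)]  # (I - KH)P
    return x_new, P_new

# Hypothetical setup: three anchors, a stationary robot, noise-free ranges.
anchors = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0)]
true_xy = (3.0, 4.0)
x_est = [2.0, 5.0, 0.0]
P_est = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 0.1]]
Q = [[1e-4, 0.0, 0.0], [0.0, 1e-4, 0.0], [0.0, 0.0, 1e-4]]
r_var = 0.1323 ** 2   # combined range standard deviation from the results table

for _ in range(20):
    x_est, P_est = predict(x_est, P_est, 0.0, 0.0, Q)
    for a in anchors:
        z = math.hypot(true_xy[0] - a[0], true_xy[1] - a[1])
        x_est, P_est = correct(x_est, P_est, z, a, r_var)

print("estimate:", [round(v, 3) for v in x_est[:2]])
```

Processing each range as a scalar update avoids a matrix inversion. A fuller implementation would also wrap the heading angle and reject outlier ranges, both omitted here.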

3. Methodology

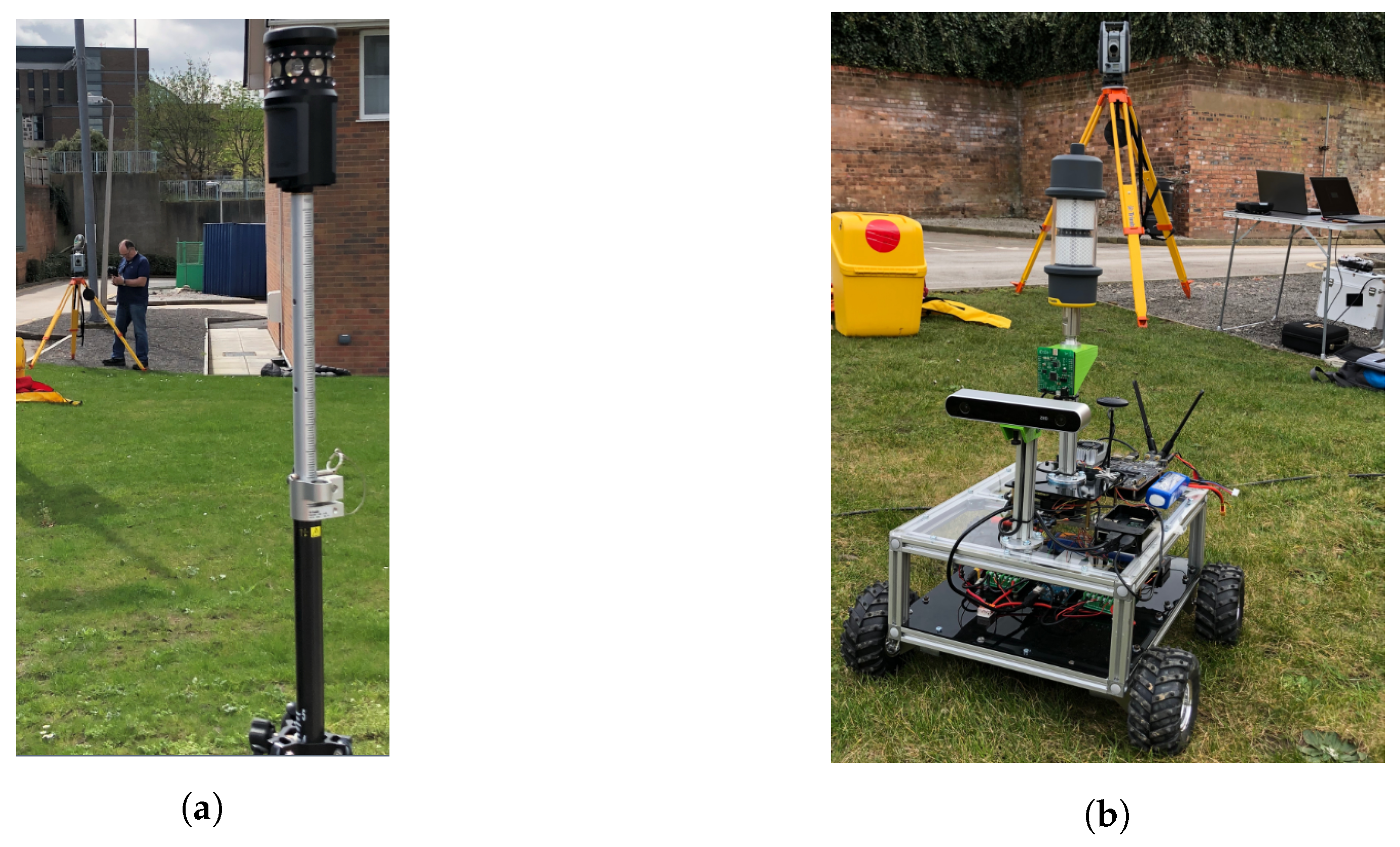

3.1. Robotic Testing Platform

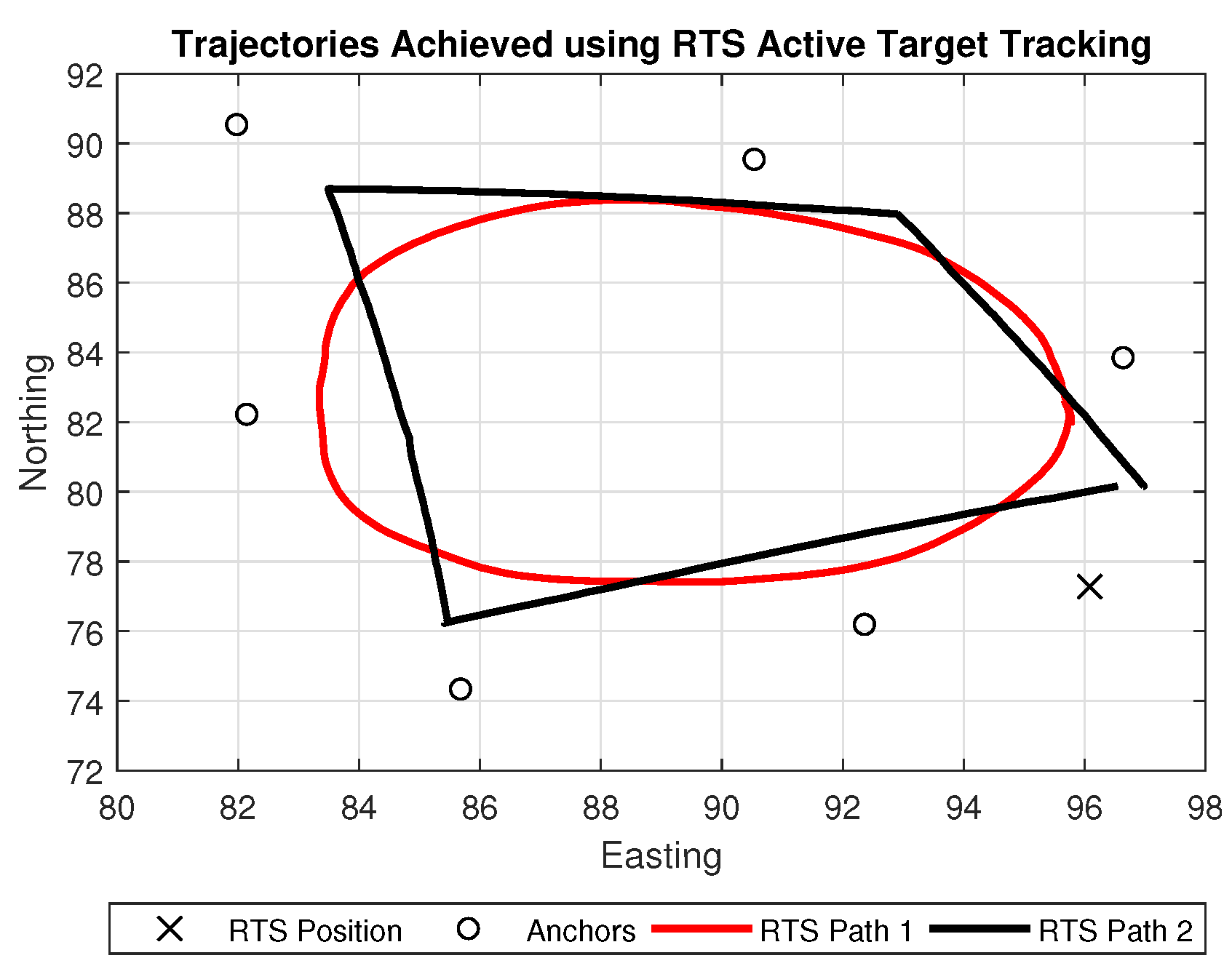

3.2. Robotic Total Station Configuration

3.3. UWB System

- Channel—5,

- Bitrate—110 kbit/s,

- PRF—64 MHz,

- Preamble Length—1024.

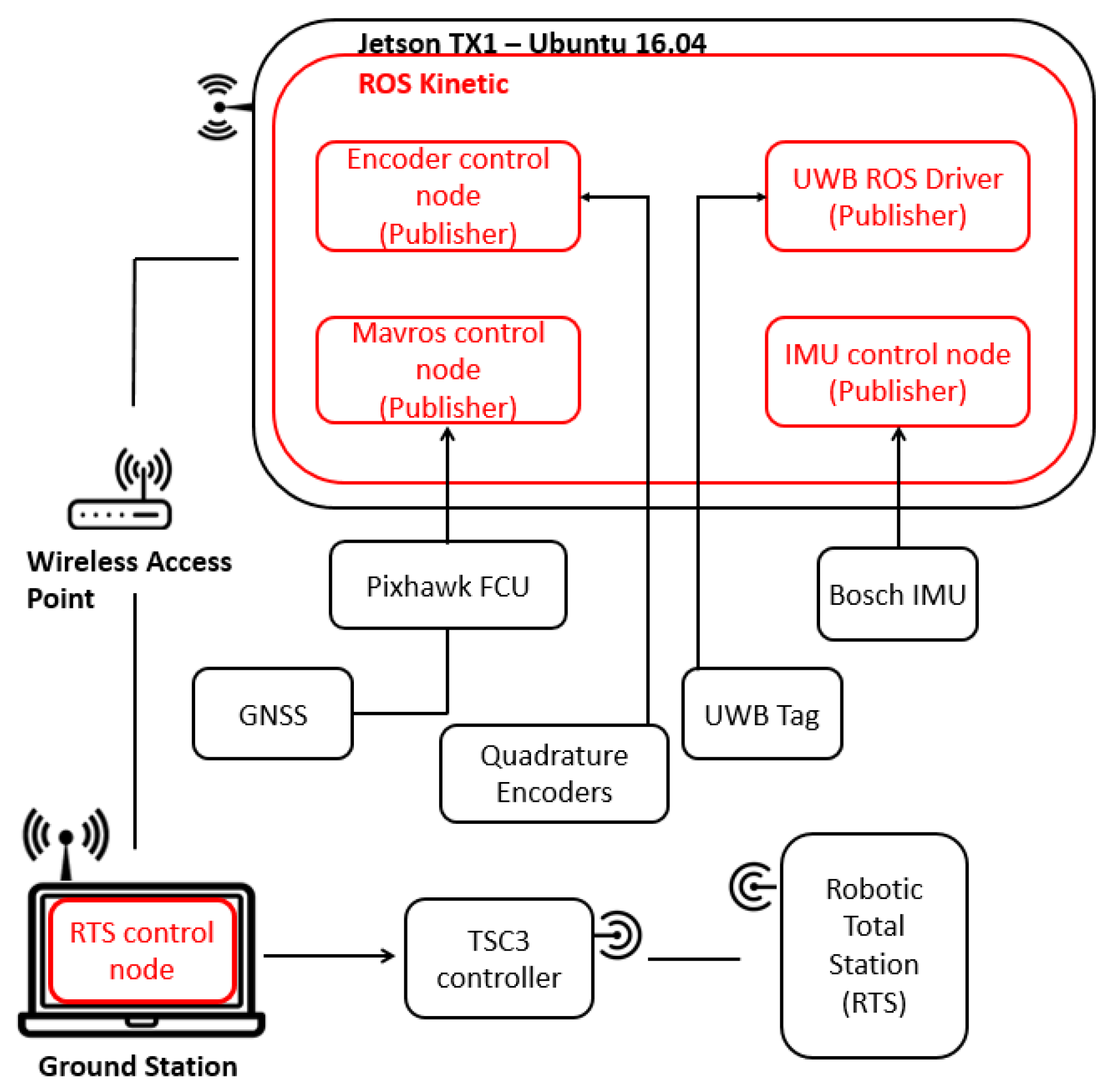

3.4. System Level Architecture

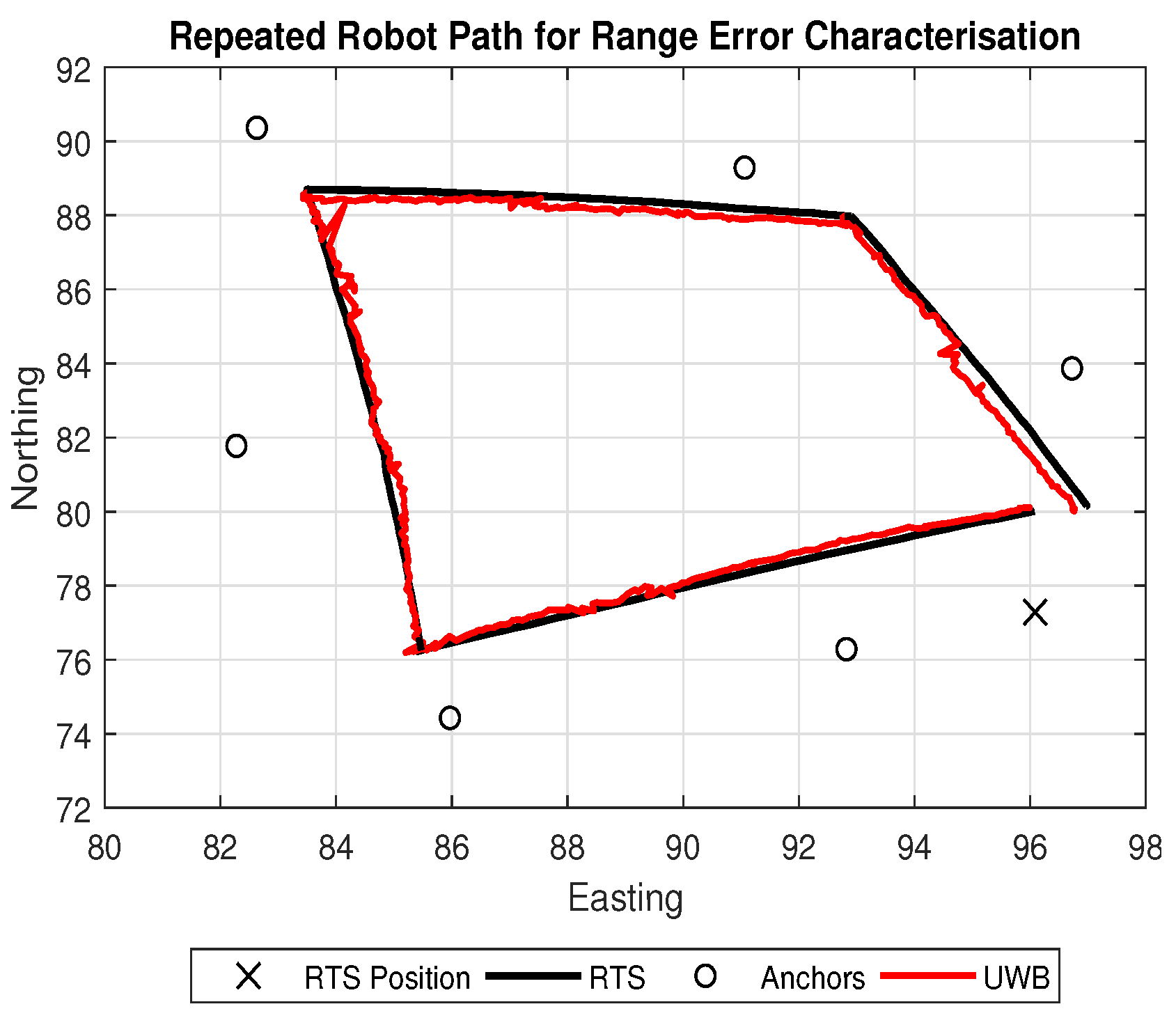

3.5. Range Error Characterisation

3.6. Encoder Error Characterisation

3.7. Localisation

4. Results and Analysis

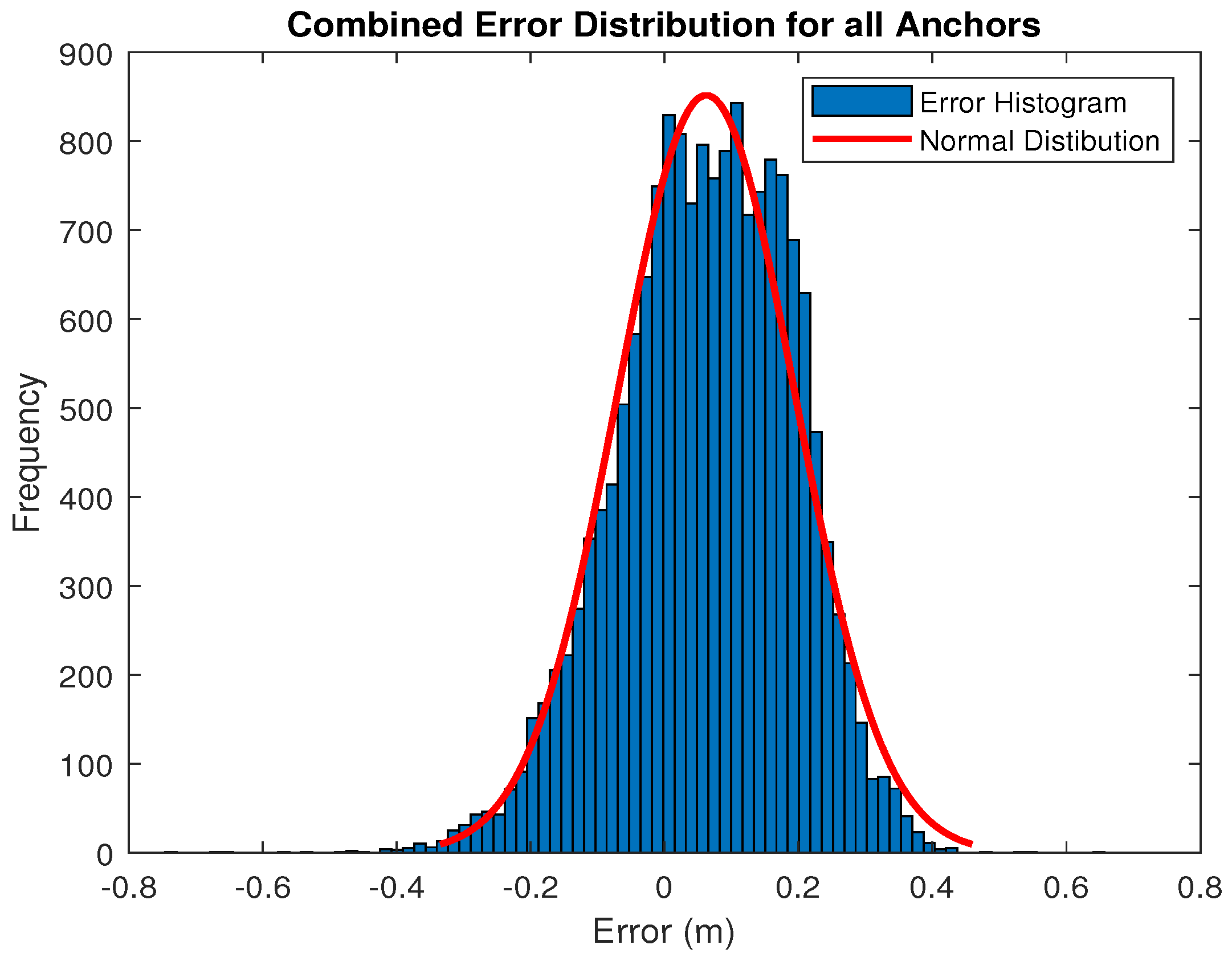

4.1. Range Error Characterisation Results

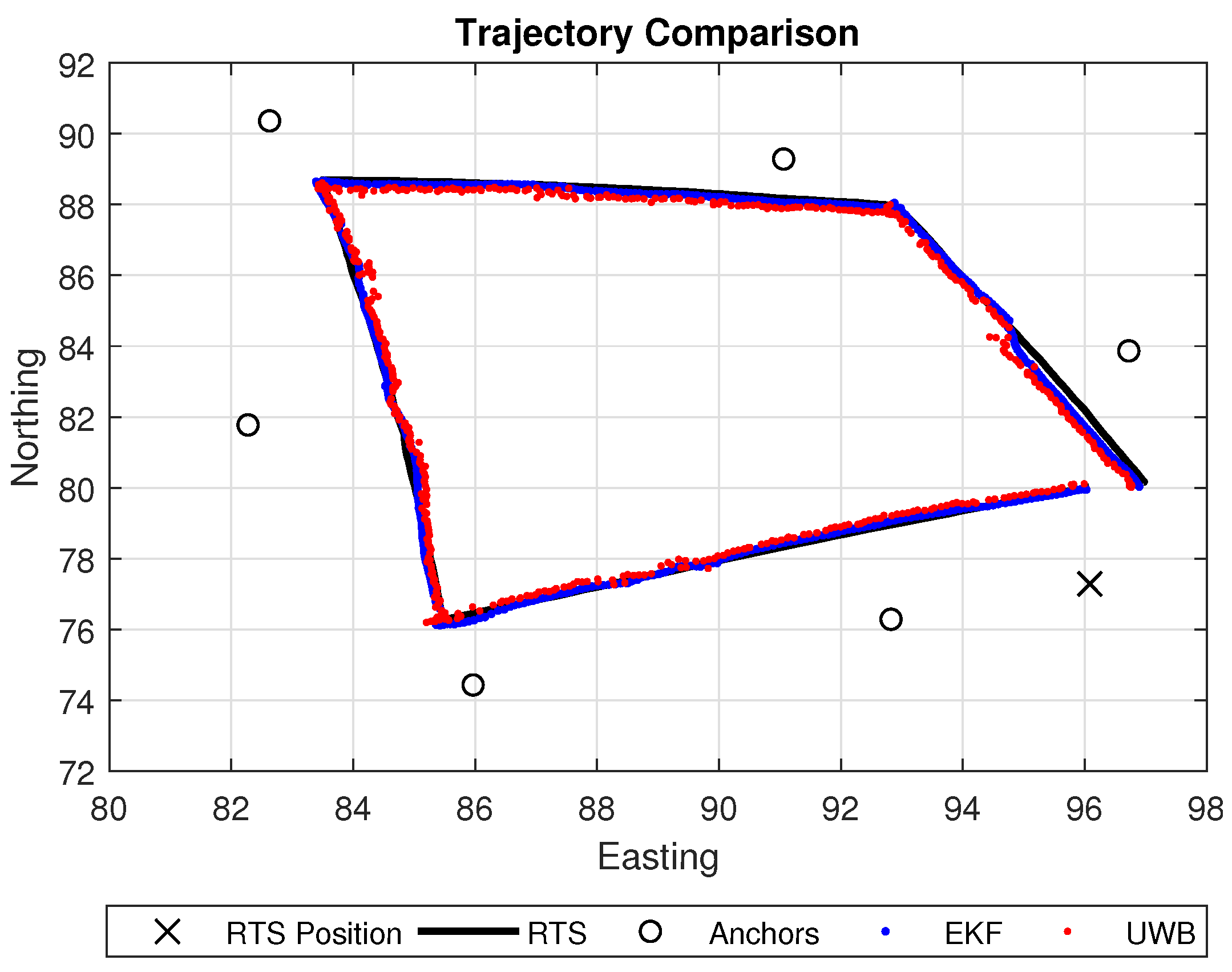

4.2. Localisation Techniques Assessment

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| EKF | Extended Kalman Filter |
| TS | Total Station |
| RTS | Robotic Total Station |
| GNSS | Global Navigation Satellite System |
| IMU | Inertial Measurement Unit |
| INS | Inertial Navigation System |
| LiDAR | Light Detection and Ranging |
| UWB | Ultra-Wideband |
| EDM | Electronic Distance Measurement |
| RFID | Radio Frequency Identification |
| TOA | Time of Arrival |
| TOF | Time of Flight |
| BNG | British National Grid |
| ROS | Robot Operating System |


| Anchor | Mean Error (m) | Standard Deviation of Error (m) |
|---|---|---|
| Anchor 1 | 0.0301 | 0.1216 |
| Anchor 2 | 0.0235 | 0.1336 |
| Anchor 3 | 0.0237 | 0.1287 |
| Anchor 4 | 0.1014 | 0.1325 |
| Anchor 5 | 0.1081 | 0.1194 |
| Anchor 6 | 0.0867 | 0.1256 |
| Combined | 0.0622 | 0.1323 |
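
As a quick consistency check on the table above, the combined mean error can be recomputed from the per-anchor rows. The sketch assumes every anchor contributed the same number of range samples, which the table does not state, so the combined mean is taken as a plain average. The variable names are illustrative.

```python
# Per-anchor mean range errors in metres, copied from the table above.
anchor_mean_error_m = {
    "Anchor 1": 0.0301,
    "Anchor 2": 0.0235,
    "Anchor 3": 0.0237,
    "Anchor 4": 0.1014,
    "Anchor 5": 0.1081,
    "Anchor 6": 0.0867,
}

# Assumption: equal sample counts per anchor, so an unweighted average applies.
combined_mean_m = sum(anchor_mean_error_m.values()) / len(anchor_mean_error_m)

print(f"combined mean error ~ {combined_mean_m:.3f} m")
```

The result is close to the 0.0622 m reported in the Combined row. The combined standard deviation cannot be recovered in the same way, since pooling spreads also depends on how far each anchor's mean sits from the overall mean.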

| Axis | Mean Error (m) | Standard Deviation of Error (m) |
|---|---|---|
| UWB (x) | 0.0621 | 0.1478 |
| UWB (y) | 0.0718 | 0.1510 |
| EKF (x) | 0.0167 | 0.1611 |
| EKF (y) | 0.0071 | 0.1326 |
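
One way to read the table above is to express the EKF rows as a relative change against the raw UWB rows. The short sketch below does only that arithmetic on the tabulated values. The dictionary layout and the `relative_change` helper are illustrative names, not part of the original work.

```python
# (mean error, standard deviation) in metres, copied from the table above.
errors_m = {
    "x": {"UWB": (0.0621, 0.1478), "EKF": (0.0167, 0.1611)},
    "y": {"UWB": (0.0718, 0.1510), "EKF": (0.0071, 0.1326)},
}

def relative_change(before, after):
    """Fractional change from `before` to `after` (negative means a reduction)."""
    return (after - before) / before

change = {}
for axis, rows in errors_m.items():
    (uwb_mean, uwb_std), (ekf_mean, ekf_std) = rows["UWB"], rows["EKF"]
    change[axis] = (relative_change(uwb_mean, ekf_mean),
                    relative_change(uwb_std, ekf_std))
    print(f"{axis}: mean {change[axis][0]:+.1%}, std {change[axis][1]:+.1%}")
```

On these figures the mean error falls on both axes, while the standard deviation rises slightly in x and falls in y. The filter's main effect in this data set therefore appears to be on bias rather than on spread.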

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


McLoughlin, B.J.; Pointon, H.A.G.; McLoughlin, J.P.; Shaw, A.; Bezombes, F.A. Uncertainty Characterisation of Mobile Robot Localisation Techniques using Optical Surveying Grade Instruments. Sensors 2018, 18, 2274. https://doi.org/10.3390/s18072274
