Abstract

The classical information-theoretic measures such as the entropy and the mutual information (MI) are widely applicable to many areas in science and engineering. Csiszar generalized the entropy and the MI by using convex functions. Recently, we proposed the grid occupancy (GO) and the quasientropy (QE) as measures of independence. The QE explicitly includes a convex function in its definition, while the expectation of GO is a subclass of QE. In this paper, we study the effect of different convex functions on GO, QE, and Csiszar’s generalized mutual information (GMI). A quality factor (QF) is proposed to quantify the sharpness of their minima. Using the QF, it is shown that these measures can have sharper minima than the classical MI. In addition, a recursive algorithm for computing GMI, which is a generalization of Fraser and Swinney’s algorithm for computing MI, is proposed. Moreover, we apply GO, QE, and GMI to chaotic time series analysis. It is shown that these measures are good criteria for determining the optimum delay in strange attractor reconstruction.

Keywords:

entropy; mutual information; convex function; quality factor; strange attractor; delay-coordinate

PACS Codes:

89.70.cf; 05.45.Pq

1. Introduction

The advent of information theory was hallmarked by Shannon’s seminal paper [1]. In that paper, some fundamental measures of information were established, among which is the entropy. The entropy is a concept that originally resided in thermodynamics and statistical physics as a measure of the degree of disorder [2]. For a different purpose, Shannon used the entropy to measure the amount of information. He also proposed the prototype of mutual information (MI) to measure the amount of information transmitted through a communication channel. The Shannon MI can be viewed as the Kullback divergence (also known as the relative entropy) between the joint probability density function (PDF) and the product of marginal PDFs. It reaches its minimum, zero, if and only if the variables are independent. Hence MI can be viewed as a measure of independence. Since the 1960s, generalizations of the Shannon entropy and MI have attracted the attention of many researchers, yielding various forms of non-Shannon information-theoretic measures [2,3,4,5,6,7,8,9]. For example, Renyi suggested some properties that the entropy should satisfy, such as additivity, and proposed a class of entropies with a parameter. The Shannon entropy is the limit of these entropies as the parameter approaches 1 [3]. Havrda and Charvat proposed a generalization of the Shannon entropy that differs from Renyi’s entropy and is called the structural α-entropy [4]. Using convex functions, Csiszar proposed the f-divergence [5], which is a generalization of the relative entropy. A generalized version of Shannon MI, which is referred to as generalized mutual information (GMI) in this paper, can be readily derived from the f-divergence [5]. Tsallis postulated a generalized form of entropy [6]. Kapur also gave many definitions of entropies [7]. More recently, we proposed the grid occupancy (GO) [8] and the quasientropy (QE) [9] as measures of independence. The QE, which explicitly includes a convex function, is a definition general enough to include the α-entropy, the Tsallis entropy, and some of Kapur’s entropies as special cases. For the GO, it can be shown that its expectation is a subclass of QE [8].

As we can see, many information-theoretic measures involve convex functions. It is therefore natural to ask how different convex functions affect the behavior of the related measures. Previous studies were usually restricted to a particular type of convex function. For example, Tsallis studied the power function [6]. In this paper, however, we study the effect of arbitrary convex functions. We propose a quality factor (QF) to quantify the sharpness of the minima of GO, QE, and GMI. We show that the order of QF with respect to l, the number of quantization levels, correctly predicts the sharpness of minima. In particular, some convex functions yield QFs of higher order than that of the minus Shannon entropy and MI, which implies that the related measures have sharper minima than the minus Shannon entropy and MI do.

Nowadays, applications of entropy and information theory have extended, far beyond the original scope of communication theory, to a wide variety of fields such as statistical inference, non-parametric density estimation, time series analysis, pattern recognition, biological, ecological and medical modeling and so on [10,11,12,13,14,15]. In this paper, besides the above theoretical results, we also deal with a specific application of the independence measures to chaotic time series analysis, the background of which is described as follows. In many cases, the commonly seen one-dimensional scalar signals or time series are observations of a certain variable of a multivariate dynamical system. An interesting property of dynamical systems is that their multivariate behavior can be approximately “reconstructed” from observations of just one variable by a method called state space (phase portrait) reconstruction. For chaotic systems, this method is also termed strange attractor reconstruction, which has become a fundamental approach in chaotic signal analysis. Basic reconstruction can be done using delay coordinates [16]. Namely, we take the current observed value of the time series and the values after equally spaced time lags τ, 2τ, 3τ… to obtain “observations” of several reconstructed variables where τ is the delay. If τ is not appropriate, the effect of reconstruction might not be good. So we should consider how to choose a proper delay. Since reconstruction aims at releasing the information of the whole system “condensed” in one variable, generally the reconstructed variables should be as independent as possible. Thus, a measure of independence can be used as a criterion for choosing τ. For example, Fraser and Swinney used the first minimum of the Shannon MI for choosing delay according to Shaw’s suggestion. 
They proposed a recursive algorithm for computing Shannon MI, and they showed that the MI is more advantageous than the correlation function, which only takes into account second-order dependence [17]. In this paper, we develop a recursive algorithm for computing GMI, which is a generalization of Fraser and Swinney’s algorithm for computing Shannon MI. In addition, we show that GO, QE, and GMI are even better criteria for choosing τ than the Shannon MI. It should be noted that this paper is by no means a thorough treatment of all measures of independence; many are not covered, such as the Hilbert-Schmidt independence criterion proposed by Gretton et al. [18] and the distance correlation proposed by Szekely et al. [19].

The rest of the paper is organized as follows. In Section 2, we first give the definition of quasientropy (QE), and then derive the quality factor (QF) of QE. In Section 3, we illustrate the principle of grid occupancy (GO), introduce the relation between GO and QE, and define the QF of GO based on the QF of QE. Section 4 reveals the relation between QE and the generalized mutual information (GMI), and deduces the QF of GMI, also from the QF of QE. The recursive algorithm for computing GMI is mentioned in Section 4, while the details are placed in the Appendix for better organization of the paper. Section 5 is devoted to a study of the order of QF with respect to l, the number of quantization levels, elucidating the cases when this order is higher than, equal to, and lower than that of the MI. These theoretical results are verified by the numerical experiments on delay reconstruction of the Rössler and the Lorenz attractors presented in Section 6. Finally, Section 7 concludes the paper. In the following, the word “entropy,” when it appears alone, refers to the Shannon entropy, and the phrase “MI,” when it appears alone, refers to the Shannon MI, as is the common usage.

2. Quality Factor (QF) of Quasientropy (QE)

2.1. Quasientropy

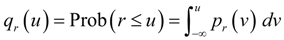

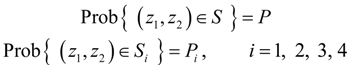

Let us consider the problem of how to measure the independence of several variables. We denote by qr(·) the cumulative distribution function (CDF) of variable r:

$$q_r(x) = \mathrm{Prob}(r \le x) = \int_{-\infty}^{x} p_r(u)\,du,$$

where Prob(A) denotes the probability that event A occurs, and pr(·) is the probability density function (PDF) of r. Without loss of generality, consider two continuous variables r1 and r2. In the past one or two decades, the study of copulas has become a flourishing field of statistical research [20]. A copula is the joint CDF of the variables transformed by their respective CDFs. The rationale of the study of copulas is that, to study the relation between two or more variables, we should nullify the effect of their marginal distributions and concentrate on their joint distribution. Based on this principle, we transform r1 and r2 by their respective CDFs as follows:

$$z_1 = q_{r_1}(r_1), \qquad z_2 = q_{r_2}(r_2), \tag{1}$$

then, (z1, z2) ∈ [0, 1] × [0, 1], and we have [8,9]:

Lemma 1.

r1 and r2 are independent if and only if (z1, z2) is uniformly distributed in [0, 1] × [0, 1].

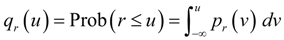

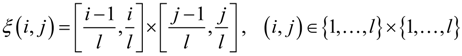

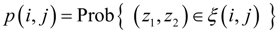

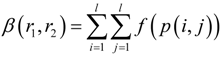

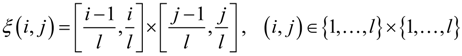

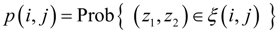

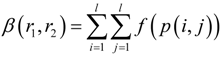

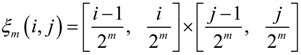

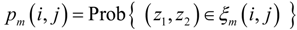

Lemma 1 shows that we can measure the independence of r1 and r2 by measuring the uniformity of the distribution of (z1, z2) in [0, 1] × [0, 1]. To this end, let us partition the region [0, 1] × [0, 1] into an l × l uniform grid, and denote by ξ(i, j) the (i, j)th square in the grid:

$$\xi(i,j) = \left\{ (z_1, z_2) : \frac{i-1}{l} \le z_1 < \frac{i}{l},\ \frac{j-1}{l} \le z_2 < \frac{j}{l} \right\}, \qquad i, j = 1, \ldots, l. \tag{2}$$

Denote by p(i, j) the probability that (z1, z2) belongs to ξ(i, j), i.e.,

$$p(i,j) = \mathrm{Prob}\big((z_1, z_2) \in \xi(i,j)\big), \tag{3}$$

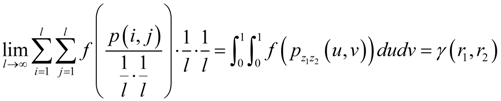

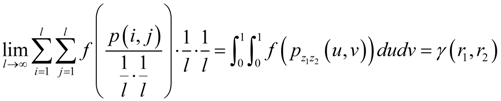

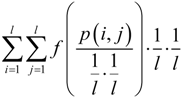

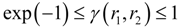

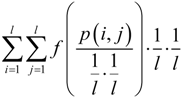

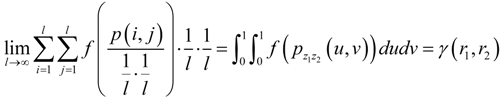

then, the QE for measuring the independence of r1 and r2 is defined as [9]:

$$\beta(r_1, r_2) = \sum_{i=1}^{l} \sum_{j=1}^{l} f\big(p(i,j)\big), \tag{4}$$

where f(·) is a differentiable strictly convex function on [0, 1/l]. Clearly, when f(u) = u log u, QE is nothing but the entropy of p(i, j) up to a minus sign. When $f(u) = (1/l^2 - u^q)/(1 - q)$, QE becomes, up to a minus sign, the Tsallis entropy [6] of p(i, j).

By Jensen’s inequality, we have [9]:

$$\beta(r_1, r_2) \ge l^2 f(1/l^2), \tag{5}$$

with equality if and only if p(i, j) is uniform in {1,…, l} × {1,…, l}.

If r1 and r2 are independent, then (z1, z2) is uniform in [0, 1] × [0, 1] and, thus, p(i, j) is uniform in {1,…, l} × {1,…, l}. Then the equality in (5) holds and β(r1, r2) reaches its minimum $l^2 f(1/l^2)$. Conversely, if r1 and r2 are not independent, then there exists l0 such that for any l > l0, β(r1, r2) cannot reach its minimum [9]. Thus, for a large enough l, a minimal β(r1, r2) implies that r1 and r2 are independent.
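The construction of p(i, j) and QE described in this subsection can be sketched in Python as follows. This is a minimal illustration rather than the implementation used in [9]: the function names are hypothetical, the unknown CDFs are replaced by empirical ranks (an assumption), and f(u) = u log u is used with the convention 0 log 0 = 0.

```python
import numpy as np

def copula_transform(r):
    """Map samples into (0, 1) with the empirical CDF (ranks), standing in for q_r."""
    ranks = np.argsort(np.argsort(r))            # ranks 0 .. N-1
    return (ranks + 0.5) / len(r)

def cell_probabilities(r1, r2, l):
    """Estimate p(i, j) on an l-by-l uniform grid over the unit square."""
    z1, z2 = copula_transform(r1), copula_transform(r2)
    i = np.minimum((z1 * l).astype(int), l - 1)
    j = np.minimum((z2 * l).astype(int), l - 1)
    counts = np.zeros((l, l))
    np.add.at(counts, (i, j), 1)                 # accumulate repeated (i, j) pairs
    return counts / len(r1)

def xlogx(u):
    """f(u) = u log u, with f(0) = 0."""
    u = np.asarray(u, dtype=float)
    safe = np.where(u > 0, u, 1.0)               # log(1) = 0 where u == 0
    return u * np.log(safe)

def quasientropy(p, f):
    """QE: the sum of f over all cell probabilities."""
    return float(np.sum(f(p)))

rng = np.random.default_rng(0)
l = 10
x = rng.normal(size=20000)
y_indep = rng.normal(size=20000)                 # independent of x
y_dep = x + 0.3 * rng.normal(size=20000)         # strongly dependent on x

qe_indep = quasientropy(cell_probabilities(x, y_indep, l), xlogx)
qe_dep = quasientropy(cell_probabilities(x, y_dep, l), xlogx)
qe_min = l**2 * float(xlogx(1.0 / l**2))         # value at the uniform distribution
print(qe_min, qe_indep, qe_dep)
```

In this sketch the independent pair should give a QE close to its lower bound, while the dependent pair should give a clearly larger value.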

2.2. Quality Factor (QF) of QE

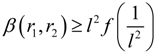

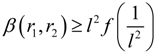

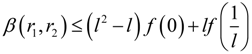

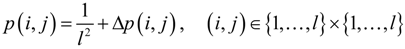

There are infinitely many strictly convex functions. Let us consider the effect of different convex functions on the performance of QE. In [9], we proved that:

$$\beta(r_1, r_2) \le l\, f(1/l) + l(l-1)\, f(0). \tag{6}$$

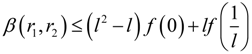

In order to better differentiate between independence and “near” independence, it is preferable that QE be highly sensitive, around its minimum, to deviations of the probability distribution from uniformity. Thus, we write p(i, j) in the following form:

$$p(i,j) = \frac{1}{l^2} + \left( p(i,j) - \frac{1}{l^2} \right). \tag{7}$$

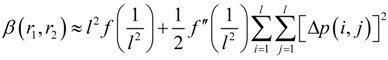

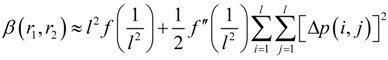

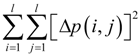

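Then, the Taylor expansion of (4) gives [9]:

$$\beta(r_1, r_2) = l^2 f(1/l^2) + \frac{1}{2} f''(1/l^2) \sum_{i=1}^{l} \sum_{j=1}^{l} \left( p(i,j) - \frac{1}{l^2} \right)^2 + \cdots \tag{8}$$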

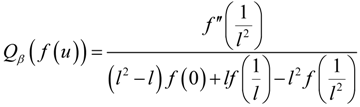

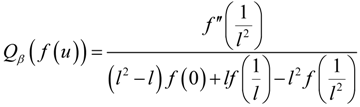

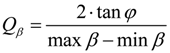

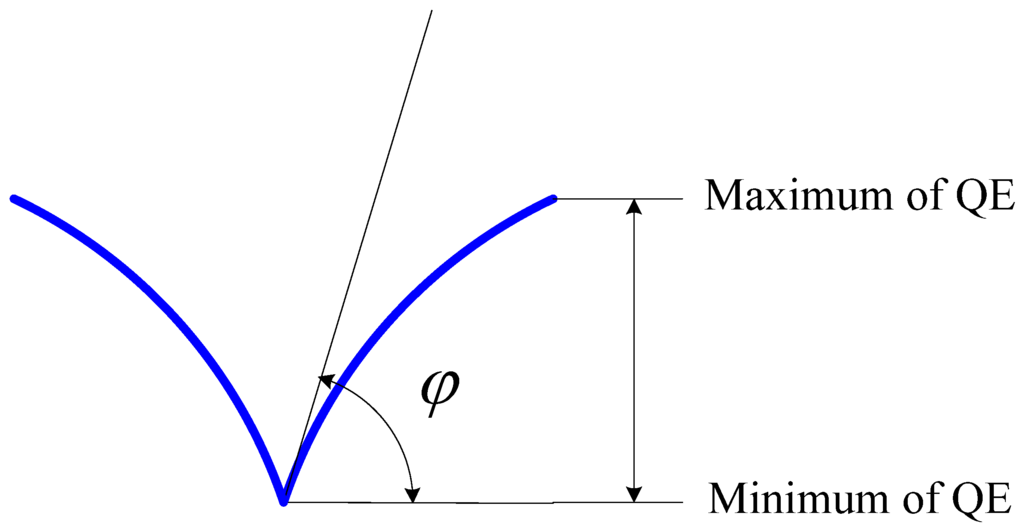

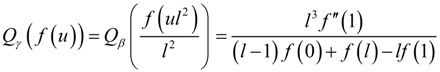

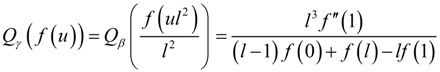

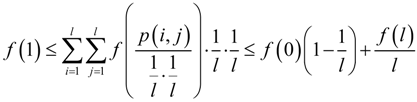

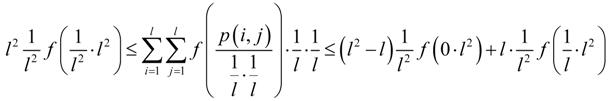

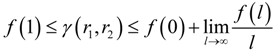

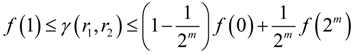

Based on (5), (6), and (8), we can define the quality factor (QF) of QE as:

$$Q_\beta = \frac{f''(1/l^2)}{l\, f(1/l) + l(l-1)\, f(0) - l^2 f(1/l^2)}, \tag{9}$$

where the numerator is twice the amplification rate of the variance between p(i, j) and the uniform probability distribution in (8), and the denominator is the maximum dynamic range of β(r1, r2) derived from (6) and (5). The greater the QF, the more sensitive QE is to changes in the uniformity of the probability distribution around its minimum and, thus, the sharper the minimum of QE.
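As a worked instance of this definition, take f(u) = u log u with the natural logarithm and 0 log 0 = 0. The derivation below reads the QF as f''(1/l²) divided by the dynamic range, with the minimum l² f(1/l²) from (5) and the maximum taken to be l f(1/l) + l(l − 1) f(0). The latter is our assumption about the upper bound (6), chosen because it reproduces the orders quoted in Section 5.

```latex
\begin{align*}
f''(u) &= \frac{1}{u}
  \quad\Longrightarrow\quad f''(1/l^2) = l^2, \\
\beta_{\max} - \beta_{\min}
  &= \underbrace{l \cdot \tfrac{1}{l} \ln \tfrac{1}{l}}_{-\ln l}
   + \underbrace{l(l-1) \cdot 0}_{0}
   - \underbrace{l^2 \cdot \tfrac{1}{l^2} \ln \tfrac{1}{l^2}}_{-2 \ln l}
   = \ln l, \\
Q_\beta &= \frac{l^2}{\ln l}.
\end{align*}
```

Under this reading, the QF of the minus entropy grows as l²/ln l, which agrees with the order stated for the minus entropy and the MI in Section 5.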

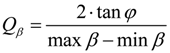

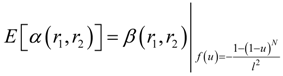

An illustration of the QF of QE is shown in Figure 1. Suppose that QE is plotted versus the variance $\sum_{i,j} (p(i,j) - 1/l^2)^2$, and φ is the angle between the horizontal axis and the tangent to the curve of QE at the minimum of QE; then:

$$Q_\beta = \frac{2 \tan\varphi}{\beta_{\max} - \beta_{\min}}, \tag{10}$$

where $\beta_{\max} - \beta_{\min}$ is the dynamic range of QE. Clearly, when Qβ is large (small), QE is sharp (blunt) around its minimum.

Figure 1.

Illustration of quality factor (QF) of QE.

3. QF of Grid Occupancy (GO)

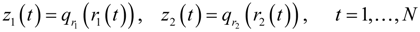

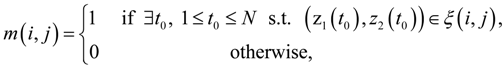

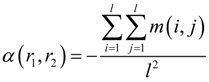

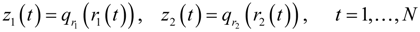

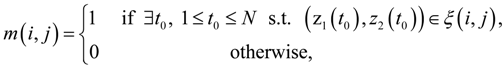

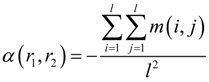

In [8], we described an interesting phenomenon that independence can be measured by simply counting the number of occupied squares: Given N realizations (observed points) of (r1, r2) denoted by (r1(t), r2(t)), t = 1,..., N, where N > 1, let:

and let:

and let:

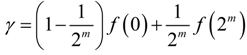

where ξ (i, j) is as defined in (2), and (i, j) ∈ {1,…, l} × {1,…, l}. Then, the independence measure GO is defined as:

where ξ (i, j) is as defined in (2), and (i, j) ∈ {1,…, l} × {1,…, l}. Then, the independence measure GO is defined as:

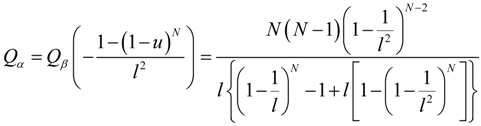

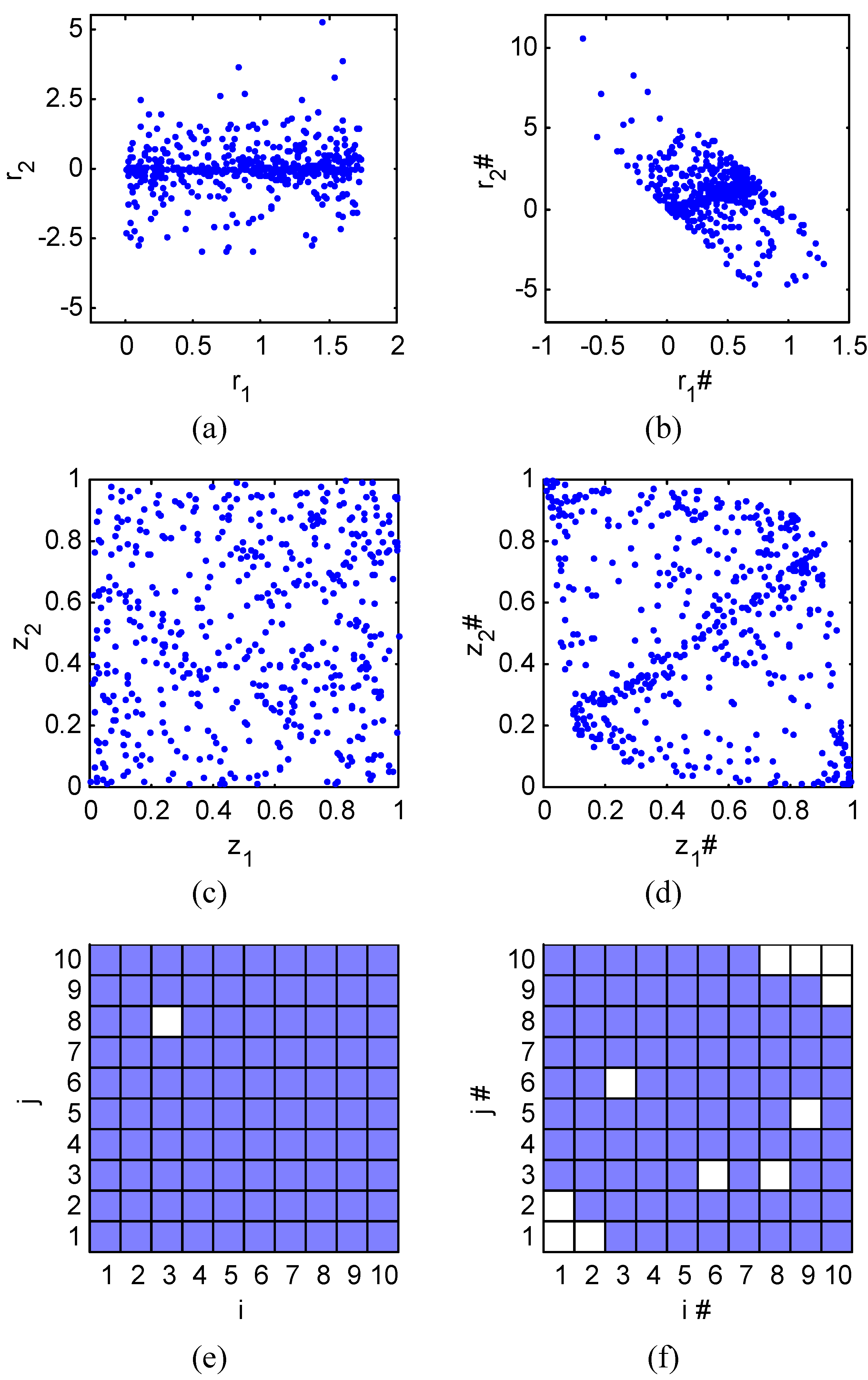

A visual illustration of GO is depicted in Figure 2. Figure 2a and Figure 2b plot 500 observed points of two independent variables (r1, r2) and two dependent variables (r1#, r2#), respectively. Figure 2c and Figure 2d plot 500 observed points of (z1, z2) and (z1#, z2#), respectively, where z1 ≡ qr1 (r1), z2 ≡ qr2 (r2), z1# ≡ qr1# (r1#), and z2# ≡ qr2# (r2#). It is easy to see that the points in Figure 2c are uniformly distributed whereas those in Figure 2d are not. Partition the region [0, 1] × [0, 1] in Figure 2c and Figure 2d into a grid of l × l, say, 10 × 10, same-sized squares. A square is said to be occupied if there is at least one point in it. Then, being uniform is the most efficient way to occupy maximum number of squares, and this is confirmed in Figure 2e and Figure 2f where the situations of Figure 2c and Figure 2d are shown, respectively. (The occupied squares are shaded.) The grid occupancy (GO) defined in (13) is exactly minus the ratio of occupied squares. As Figure 2e and Figure 2f clearly show, α(r1, r2) = −0.99 < α(r1#, r2#) = −0.89. Therefore, r1 and r2 are more independent than r1# and r2#.
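The counting procedure illustrated in Figure 2 can be sketched as follows. This is a minimal sketch under stated assumptions, not the implementation of [8]: the function names are hypothetical, and empirical ranks stand in for the true CDFs.

```python
import numpy as np

def copula_transform(r):
    """Empirical-CDF (rank) transform into (0, 1); an assumed stand-in for q_r."""
    ranks = np.argsort(np.argsort(r))
    return (ranks + 0.5) / len(r)

def grid_occupancy(r1, r2, l):
    """GO: minus the fraction of the l*l squares that contain at least one point."""
    i = np.minimum((copula_transform(r1) * l).astype(int), l - 1)
    j = np.minimum((copula_transform(r2) * l).astype(int), l - 1)
    occupied = np.zeros((l, l), dtype=bool)
    occupied[i, j] = True                      # mark every square that holds a point
    return -occupied.sum() / l**2

rng = np.random.default_rng(1)
r1 = rng.normal(size=500)
go_indep = grid_occupancy(r1, rng.normal(size=500), 10)   # independent pair
go_same = grid_occupancy(r1, r1, 10)                      # r1 paired with itself
print(go_indep, go_same)
```

With r1 paired with itself, only the 10 diagonal squares are occupied, so GO equals −0.1; an independent pair occupies nearly all squares and yields a value close to −1.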

Indeed, we can prove that the expectation of GO is a subclass of QE [8]:

$$E[\alpha(r_1, r_2)] = \frac{1}{l^2} \sum_{i=1}^{l} \sum_{j=1}^{l} \big(1 - p(i,j)\big)^N - 1, \tag{14}$$

where E[·] denotes the mathematical expectation. Therefore, we can define the QF of GO as:

$$Q_\alpha = Q_\beta \Big|_{f(u) = (1-u)^N}. \tag{15}$$

Figure 2.

Illustration of grid occupancy (GO). (a) r1 and r2 are independent. (b) r1# and r2# are dependent. (c) (z1,z2) transformed from (r1,r2) is uniform. (d) (z1#,z2#) transformed from (r1#,r2#) is not uniform. (e) α(r1,r2) = −0.99. (f) α(r1#,r2#) = −0.89.

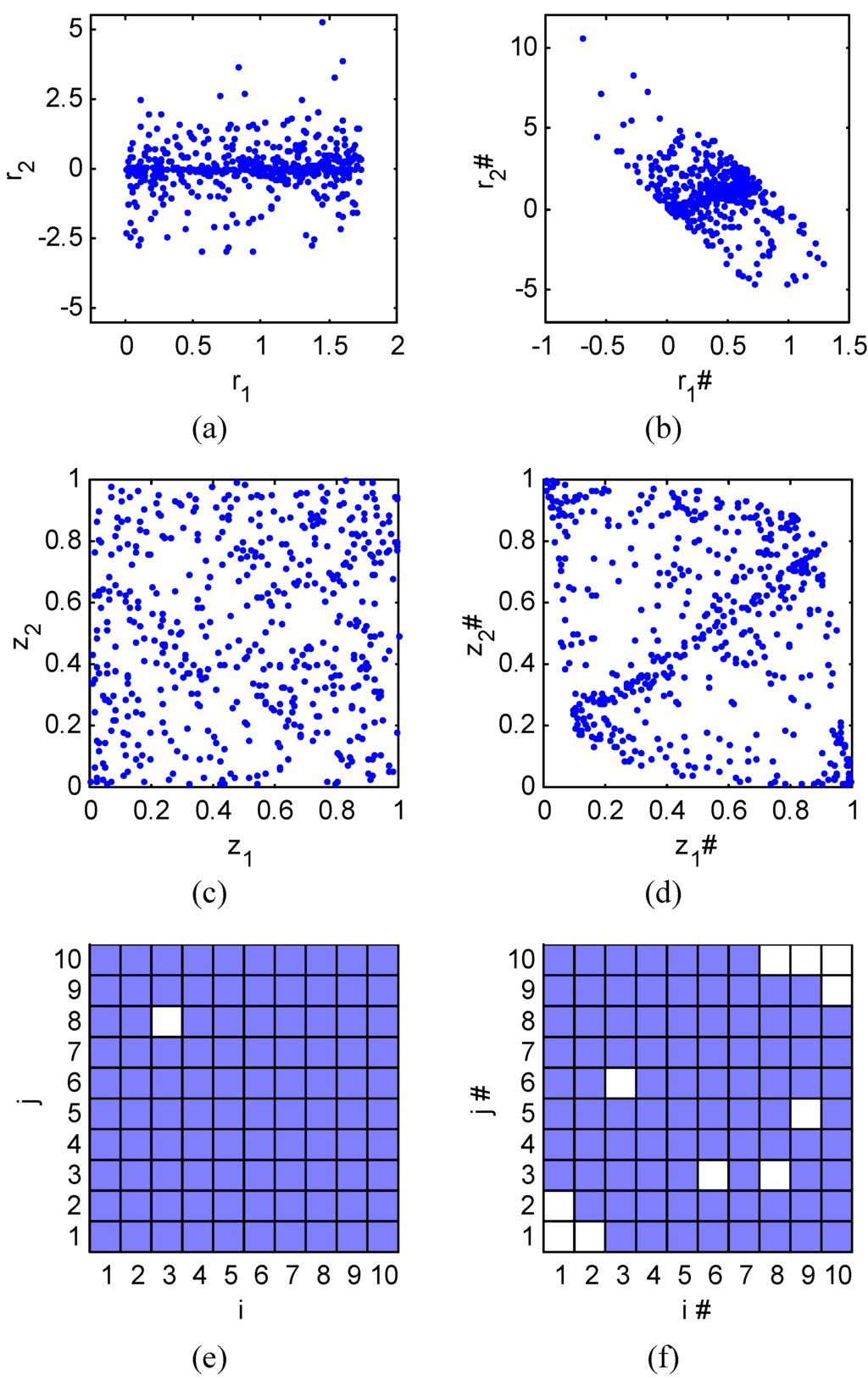

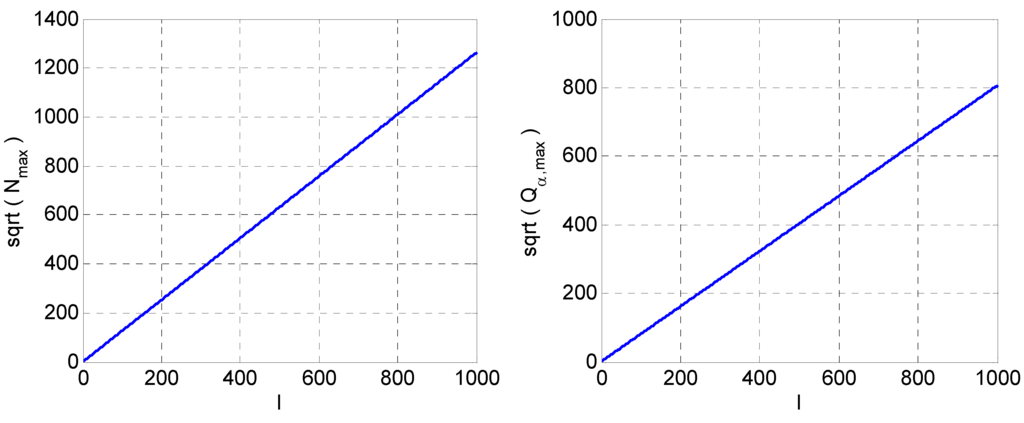

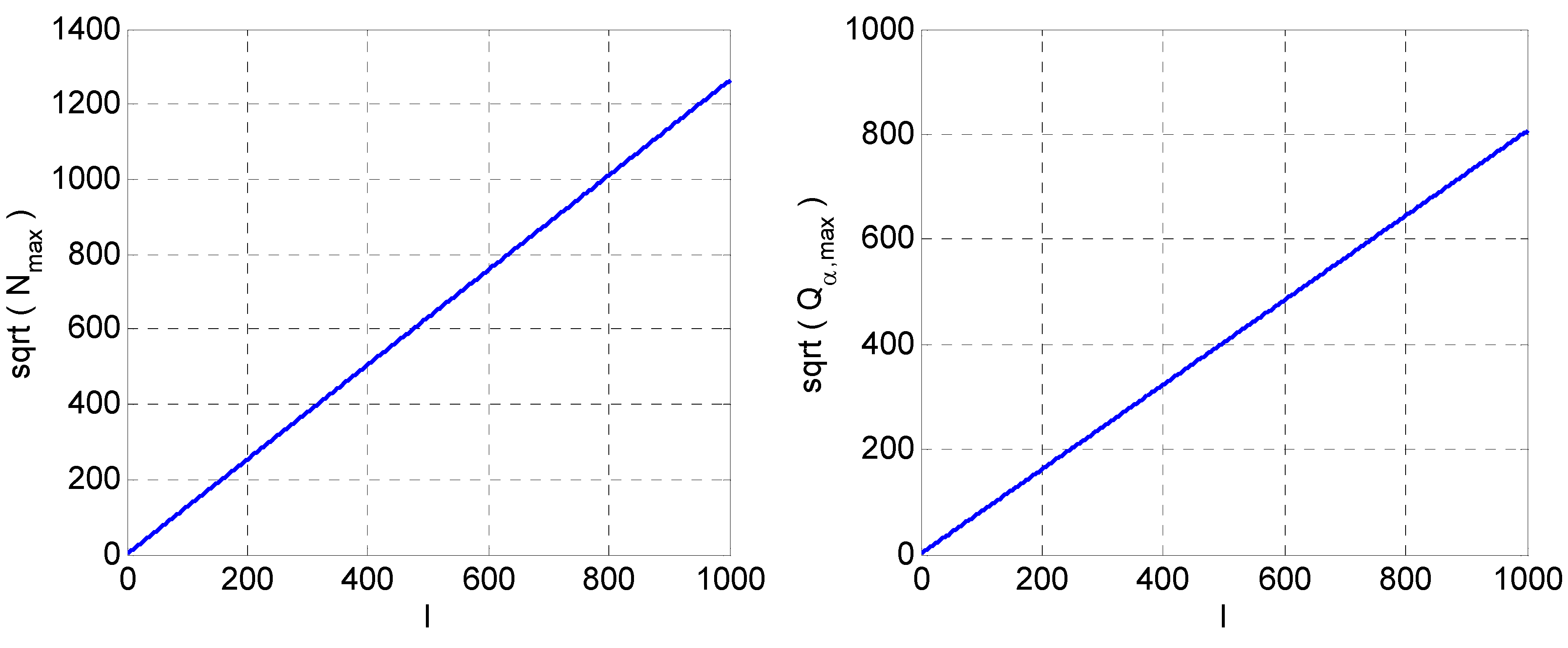

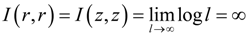

Since Qα is not easy to analyze, we study it numerically, as shown in Figure 3. We vary l from 2 to 1,000. For each l, we find the N, denoted Nmax, that maximizes Qα; the maximum is denoted Qα,max. In Figure 3, we can find:

$$Q_{\alpha,\max} = O(l^2). \tag{16}$$

Figure 3.

Numerical study of QF of GO. The left and right panels are each plotted versus l.
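The numerical study described above can be approximated with the sketch below. It assumes that the QF of GO is the QF of QE evaluated with f(u) = (1 − u)^N, which follows from treating each square as occupied with probability 1 − (1 − p(i, j))^N for N independent points. This reading, the function names, and the scan range 2 ≤ N ≤ 4l² are our assumptions rather than details taken from the text.

```python
import numpy as np

def q_alpha(l, N):
    """QF of GO, assuming f(u) = (1 - u)**N in the QF of QE."""
    N = np.asarray(N, dtype=float)
    num = N * (N - 1) * (1 - 1 / l**2) ** (N - 2)            # f''(1/l^2)
    den = (l * (1 - 1 / l) ** N                              # l f(1/l)
           + l * (l - 1)                                     # l (l - 1) f(0)
           - l**2 * (1 - 1 / l**2) ** N)                     # l^2 f(1/l^2)
    return num / den

def best_n(l):
    """Scan integer N in [2, 4 l^2] and return (N_max, Q_alpha_max)."""
    N = np.arange(2, 4 * l * l + 1)
    q = q_alpha(l, N)
    k = int(np.argmax(q))
    return int(N[k]), float(q[k])

for l in (10, 20, 50):
    n_max, q_max = best_n(l)
    # Both ratios should settle towards constants if Q_alpha_max grows like l^2.
    print(l, n_max / l**2, q_max / l**2)
```

If the assumption holds, both printed ratios level off as l grows, which is consistent with a QF of order l² for GO.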

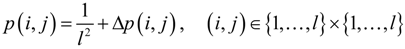

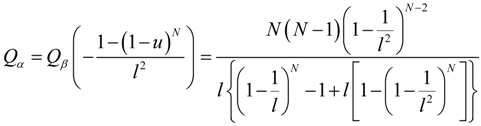

4. QF of Generalized Mutual Information (GMI)

4.1. QF of GMI

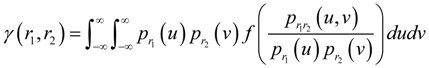

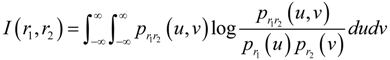

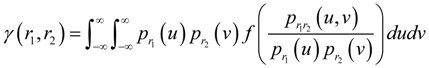

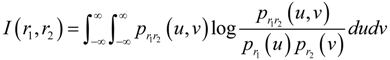

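Let us now consider the generalized mutual information (GMI) proposed by Csiszar [5]:

$$\gamma(r_1, r_2) = \iint p_{r_1}(x)\, p_{r_2}(y)\, f\!\left( \frac{p_{r_1 r_2}(x, y)}{p_{r_1}(x)\, p_{r_2}(y)} \right) dx\, dy, \tag{17}$$

where f(·) is strictly convex on [0, ∞). Clearly, when f(u) = u log u, GMI reduces to the classical MI:

$$I(r_1, r_2) = \iint p_{r_1 r_2}(x, y) \log \frac{p_{r_1 r_2}(x, y)}{p_{r_1}(x)\, p_{r_2}(y)}\, dx\, dy. \tag{18}$$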

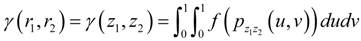

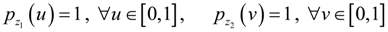

Like MI, GMI is invariant under componentwise invertible transformations [21]. Therefore:

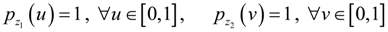

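$$\gamma(r_1, r_2) = \gamma(z_1, z_2) = \int_0^1 \!\! \int_0^1 f\big(p_{z_1 z_2}(u, v)\big)\, du\, dv, \tag{19}$$

where z1 and z2 are as defined in (1), and we have utilized the following facts [9]:

$$p_{z_1}(u) = 1, \qquad p_{z_2}(v) = 1, \qquad u, v \in [0, 1]. \tag{20}$$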

It is easy to verify that:

$$\gamma(r_1, r_2) = \lim_{l \to \infty} \frac{1}{l^2} \sum_{i=1}^{l} \sum_{j=1}^{l} f\big(l^2 p(i,j)\big), \tag{21}$$

where p(i,j) is as defined in (3). As we are mainly concerned with the situation when l is fairly large, according to (21), we can define the QF of GMI as:

$$Q_\gamma = Q_\beta \Big|_{f(u) \to f(l^2 u)/l^2} = \frac{l^2 f''(1)}{f(l)/l + (1 - 1/l)\, f(0) - f(1)}. \tag{22}$$

We have developed a recursive algorithm for computing GMI. The algorithm is described in the Appendix, where some related issues are also addressed.
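For orientation only, a plain fixed-grid approximation of GMI can be written in a few lines. This is not the recursive algorithm of the Appendix: it simply evaluates (1/l²) Σ f(l² p(i, j)) on a given l × l probability table, which is our reading of the discretized form of GMI, and the function name `gmi_grid` is hypothetical.

```python
import numpy as np

def gmi_grid(p, f):
    """Fixed-grid approximation (1/l^2) * sum f(l^2 p(i, j)) for an l-by-l table p."""
    l = p.shape[0]
    return float(np.sum(f(l**2 * p)) / l**2)

l = 8
p_uniform = np.full((l, l), 1.0 / l**2)   # independent case: uniform table
p_diag = np.eye(l) / l                    # z1 identical to z2: mass on the diagonal only
f = lambda u: np.exp(-u)                  # the convex function exp(-u)

g_indep = gmi_grid(p_uniform, f)          # evaluates to f(1)
g_same = gmi_grid(p_diag, f)              # evaluates to f(l)/l + (1 - 1/l) f(0)
print(g_indep, g_same)
```

The two tables correspond to the least and most dependent cases, so the two values bracket what this approximation can return for f(u) = exp(−u) at the chosen l.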

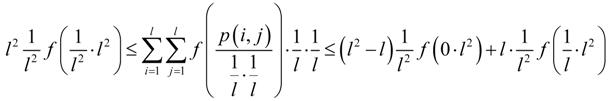

4.2. Existence of GMI

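From (19), f(pz1z2(u, v)) being continuous on [0,1] × [0,1] is a sufficient condition to ensure the existence of GMI. Sometimes, however, f(pz1z2(u,v)) might not be continuous, so let us investigate the existence of GMI in more depth. As already done in (22), the sum $\frac{1}{l^2} \sum_{i,j} f(l^2 p(i,j))$ in (21) can be treated as the QE with convex function $f(l^2 u)/l^2$. Thus, (5) and (6) can be applied to this QE, which yields:

$$l^2 \cdot \frac{f(1)}{l^2} \le \frac{1}{l^2} \sum_{i=1}^{l} \sum_{j=1}^{l} f\big(l^2 p(i,j)\big) \le l \cdot \frac{f(l)}{l^2} + l(l-1) \cdot \frac{f(0)}{l^2}. \tag{23}$$

Namely,

$$f(1) \le \frac{1}{l^2} \sum_{i=1}^{l} \sum_{j=1}^{l} f\big(l^2 p(i,j)\big) \le \frac{f(l)}{l} + \left(1 - \frac{1}{l}\right) f(0). \tag{24}$$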

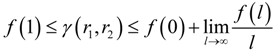

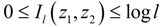

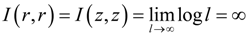

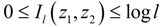

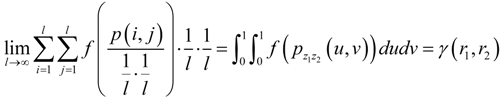

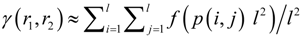

Taking the limit as l → ∞ and applying (21), we get:

$$f(1) \le \gamma(r_1, r_2) \le f(0) + \lim_{l \to \infty} \frac{f(l)}{l}. \tag{25}$$

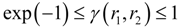

Equation (25) gives the lower and upper bounds of GMI. The lower bound is reached when r1 and r2 are independent [5]. The upper bound is reached when, for example, r1 ≡ r2, which is one of the most dependent cases. When f(u) = u log u, (24) gives the lower and upper bounds of the Shannon MI of l-level uniformly quantized versions of z1 and z2, denoted Il(z1,z2), as follows:

$$0 \le I_l(z_1, z_2) \le \log l. \tag{26}$$

When z1 ≡ z2 ≡ z, Il(z,z) = log l, which reaches the upper bound shown in (26). The MI of continuous variables is the limit of the MI of their quantized versions as the number of quantization levels goes to infinity [9]. Therefore, when r1 ≡ r2 ≡ r and thus z1 ≡ z2 ≡ z,

$$I(r, r) = I(z, z) = \lim_{l \to \infty} I_l(z, z) = \lim_{l \to \infty} \log l = \infty. \tag{27}$$

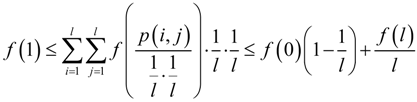

We see that the MI between a continuous variable and itself is infinite. This is because pzz(u, v) is infinite along the diagonal u = v, which causes the integral in (19) to diverge. Compared with the Shannon MI, there are more stable forms of GMI. For example, when f(u) = exp(−u), (25) becomes:

$$\exp(-1) \le \gamma(r_1, r_2) \le 1. \tag{28}$$

This shows that the GMI with f(u) = exp(−u) is lower bounded by exp(−1) (about 0.3679) and upper bounded by 1, and never diverges. It takes the finite value 1 even for the most dependent case.
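The divergence of the Shannon MI in the fully dependent case can be checked numerically on quantized tables. The sketch below is an illustration under our own naming (`quantized_mi` is hypothetical); it computes the MI, in nats, of a joint probability table and evaluates it on the diagonal table that corresponds to z1 ≡ z2.

```python
import numpy as np

def quantized_mi(p):
    """Shannon MI (in nats) of a joint probability table p."""
    p1 = p.sum(axis=1, keepdims=True)          # marginal of the first variable
    p2 = p.sum(axis=0, keepdims=True)          # marginal of the second variable
    mask = p > 0                               # skip empty cells (0 log 0 = 0)
    return float(np.sum(p[mask] * np.log(p[mask] / (p1 * p2)[mask])))

for l in (4, 16, 64):
    p_diag = np.eye(l) / l                     # all mass on the diagonal
    # The quantized MI matches log l, so it grows without bound as l increases.
    print(l, quantized_mi(p_diag), np.log(l))
```

The printed MI tracks log l exactly, whereas a bounded convex function such as exp(−u) keeps the corresponding GMI finite.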

5. Orders of QFs with Respect to l

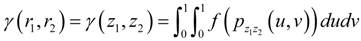

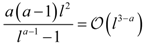

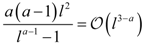

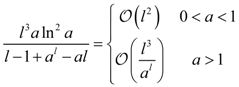

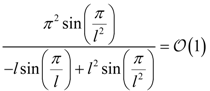

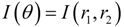

Table 1 shows the Qβ’s and Qγ’s of some convex functions and their orders with respect to l as l → ∞. The function −sin πu, which appears in the last row, is not convex on [0, ∞), so it cannot be used in GMI and its Qγ does not exist. However, it is convex on [0,1], so it can be used in QE and its Qβ can be calculated. Note the Qβ and Qγ of $-u^a$ (0 < a < 1), and the Qγ of $a^u$ (0 < a < 1). Their orders are all $O(l^2)$, which is higher than $O(l^2/\ln l)$, the order of the minus entropy and the MI. For the case of GO, (16) already shows that it can have a QF of $O(l^2)$. This means that, when l is large enough, these measures will have sharper minima than the minus entropy and the MI.

Table 1.

QFs of QE and GMI.

| f(u) | Qβ(f(u)) | Qγ(f(u)) |
| --- | --- | --- |
| $-u^a$ (0 < a < 1) |  |  |
| u log u |  |  |
| $u^a$ (a > 1) |  |  |
| $a^u$ (a > 0, a ≠ 1) |  |  |
| −sin πu |  | does not exist |
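The orders discussed in this section can be checked numerically. The sketch below assumes a particular reading of the QFs, namely f''(1/l²) divided by l f(1/l) + l(l − 1) f(0) − l² f(1/l²) for QE, and the same ratio applied to u ↦ f(l²u)/l² for GMI. These formulas, the function names, and the choice of l = 100 and l = 1000 for the slope estimate are our assumptions.

```python
import math

def q_beta(f, d2f, l):
    """QF of QE, read as f''(1/l^2) over the dynamic range of the QE."""
    span = l * f(1 / l) + l * (l - 1) * f(0.0) - l**2 * f(1 / l**2)
    return d2f(1 / l**2) / span

def q_gamma(f, d2f, l):
    """QF of GMI: the same ratio applied to u -> f(l^2 u) / l^2."""
    span = f(l) / l + (1 - 1 / l) * f(0.0) - f(1.0)
    return l**2 * d2f(1.0) / span

def order(q, l1=100, l2=1000):
    """Log-log slope of q between l1 and l2: a rough estimate of the exponent of l."""
    return math.log(q(l2) / q(l1)) / math.log(l2 / l1)

xlogx = lambda u: u * math.log(u) if u > 0 else 0.0   # with 0 log 0 = 0

# Each entry: (f, second derivative of f).
cases = {
    "-u^0.5": (lambda u: -u**0.5, lambda u: 0.25 * u**-1.5),
    "u log u": (xlogx, lambda u: 1.0 / u),
    "u^2": (lambda u: u**2, lambda u: 2.0),
    "-sin(pi u)": (lambda u: -math.sin(math.pi * u),
                   lambda u: math.pi**2 * math.sin(math.pi * u)),
}

slopes = {name: order(lambda l, f=f, d=d: q_beta(f, d, l))
          for name, (f, d) in cases.items()}
for name, s in slopes.items():
    print(f"{name:12s} estimated order of Q_beta: l^{s:.2f}")
```

Under these assumptions the estimated exponents come out near 2 for −u^0.5, slightly below 2 for u log u (the 1/ln l factor), near 1 for u², and near 0 for −sin πu, in line with the ordering described in the text.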

6. Numerical Experiments

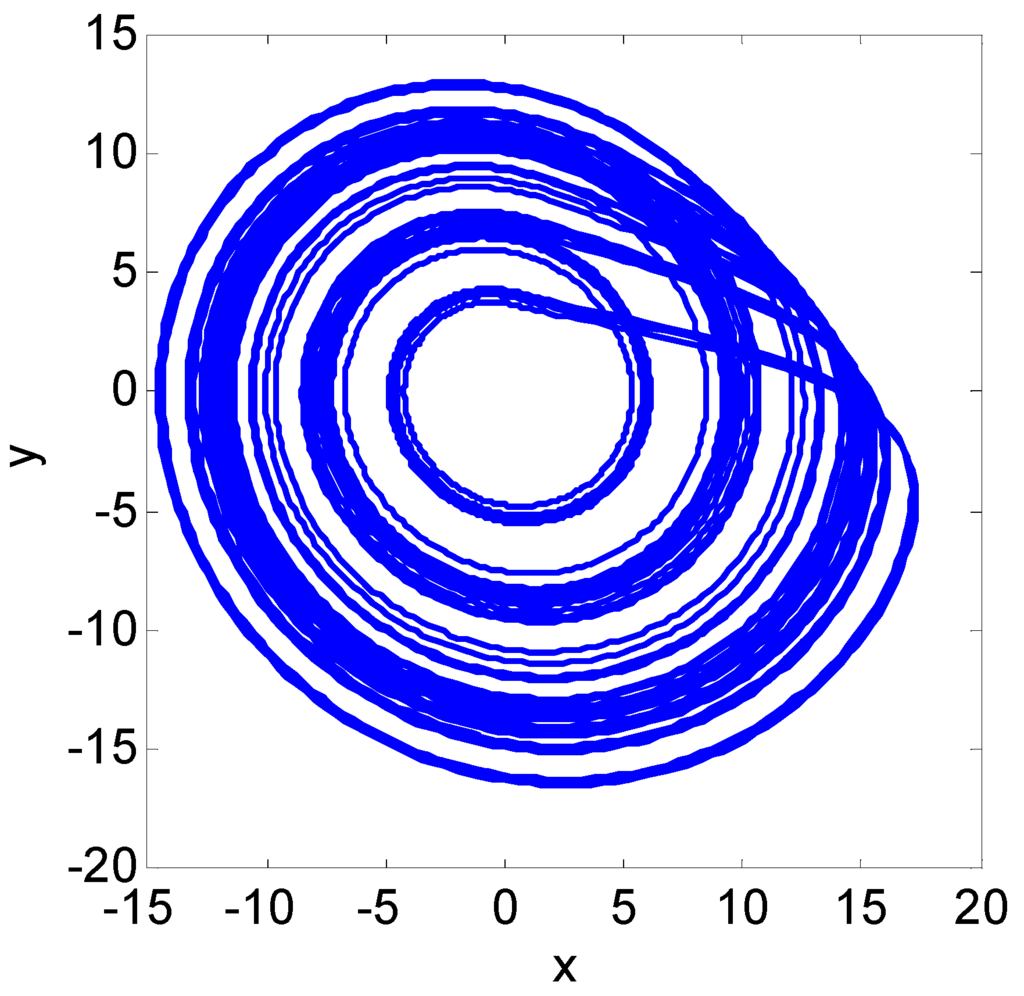

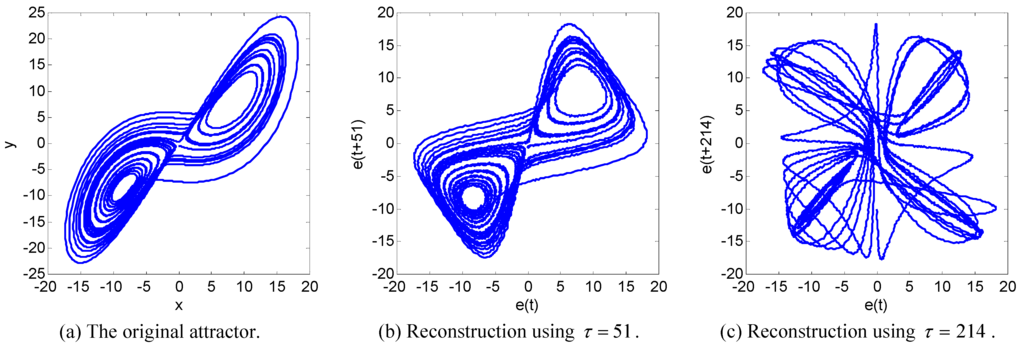

In this section, we show some numerical results of applying GO, QE, and GMI to delay reconstruction of strange attractors. Figure 4 shows the time evolution (x(t), y(t)) of the Rössler chaotic system [20], where t is the sampling number. The sampling time interval is π/100. The observed sequence e(t) is obtained by adding white noise uniformly distributed in [−0.1, 0.1] to x(t). We use (e(t), e(t + τ)) to reconstruct the original chaotic attractor, where τ is the delay to be determined. We examine the curves of α(e(t), e(t + τ)), β(e(t), e(t + τ)), and γ(e(t), e(t + τ)) versus τ. The delay τ can be chosen at the minima of these curves, so we want these curves to exhibit clear minima.
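The delay-selection procedure described above can be sketched as follows, using GO as the independence measure. This is an illustration under stated assumptions: the signal is a noisy sinusoid standing in for the observed sequence (it is not the Rössler system), empirical ranks replace the CDFs, and all function names are hypothetical.

```python
import numpy as np

def grid_occupancy(a, b, l):
    """GO of paired samples: minus the fraction of occupied squares after a rank transform."""
    za = (np.argsort(np.argsort(a)) + 0.5) / len(a)
    zb = (np.argsort(np.argsort(b)) + 0.5) / len(b)
    occupied = np.zeros((l, l), dtype=bool)
    occupied[(za * l).astype(int), (zb * l).astype(int)] = True
    return -occupied.sum() / l**2

def go_versus_delay(e, taus, l):
    """Evaluate GO of (e(t), e(t + tau)) for each candidate delay tau."""
    return np.array([grid_occupancy(e[: len(e) - tau], e[tau:], l) for tau in taus])

def first_local_minimum(values):
    """Index of the first interior point not larger than both neighbours, or None."""
    for k in range(1, len(values) - 1):
        if values[k] <= values[k - 1] and values[k] <= values[k + 1]:
            return k
    return None

# Stand-in signal (NOT the Rössler system): a noisy sinusoid with a period of 200 samples.
rng = np.random.default_rng(0)
t = np.arange(20000)
e = np.sin(2 * np.pi * t / 200) + rng.uniform(-0.1, 0.1, size=t.size)

taus = np.arange(0, 101, 5)
curve = go_versus_delay(e, taus, l=20)
k = first_local_minimum(curve)
print("GO at tau = 0:", curve[0])
print("first local minimum at tau =", None if k is None else int(taus[k]))
```

At τ = 0 the two coordinates coincide, so only the diagonal squares are occupied and GO takes its largest possible value; a useful delay is one at which the curve dips well below that level.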

Figure 4.

The Rössler attractor.

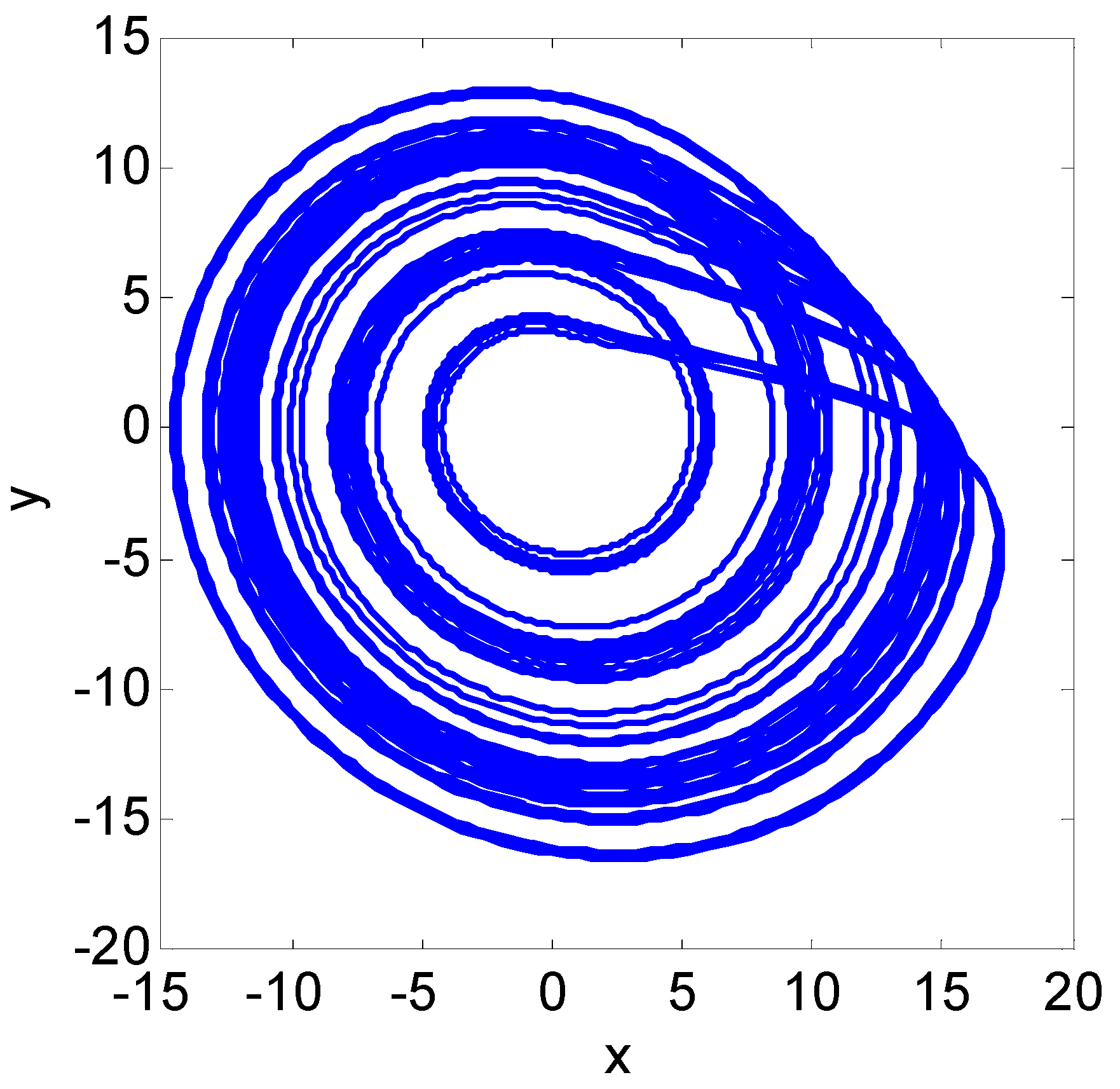

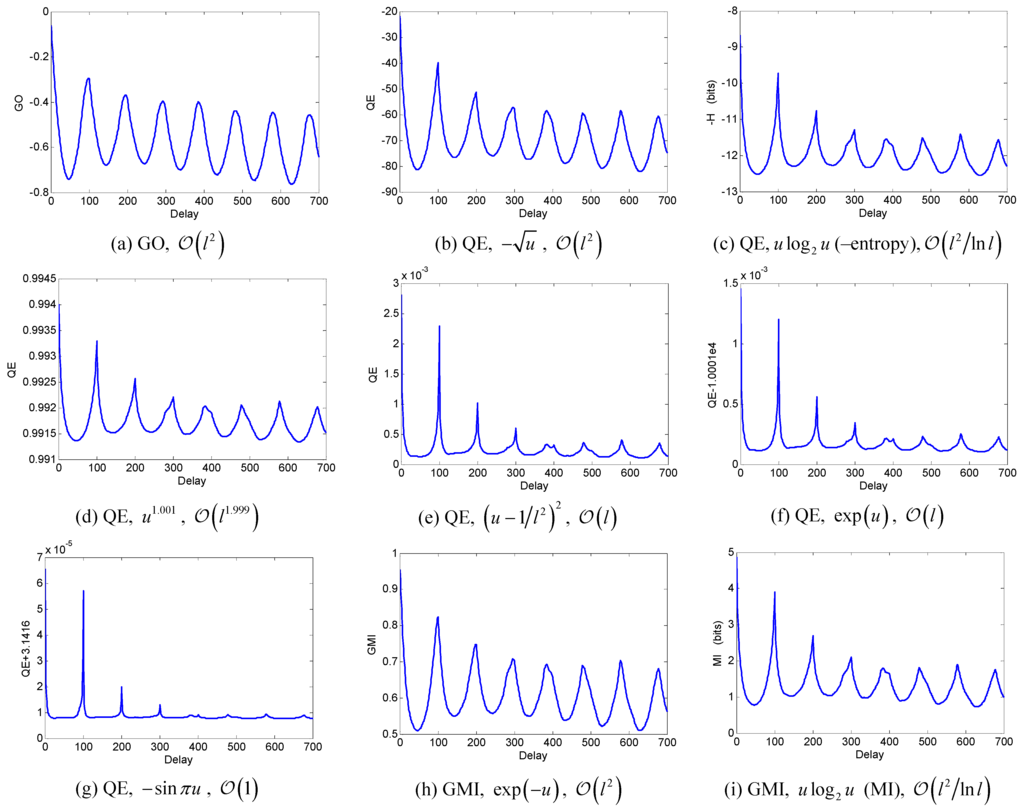

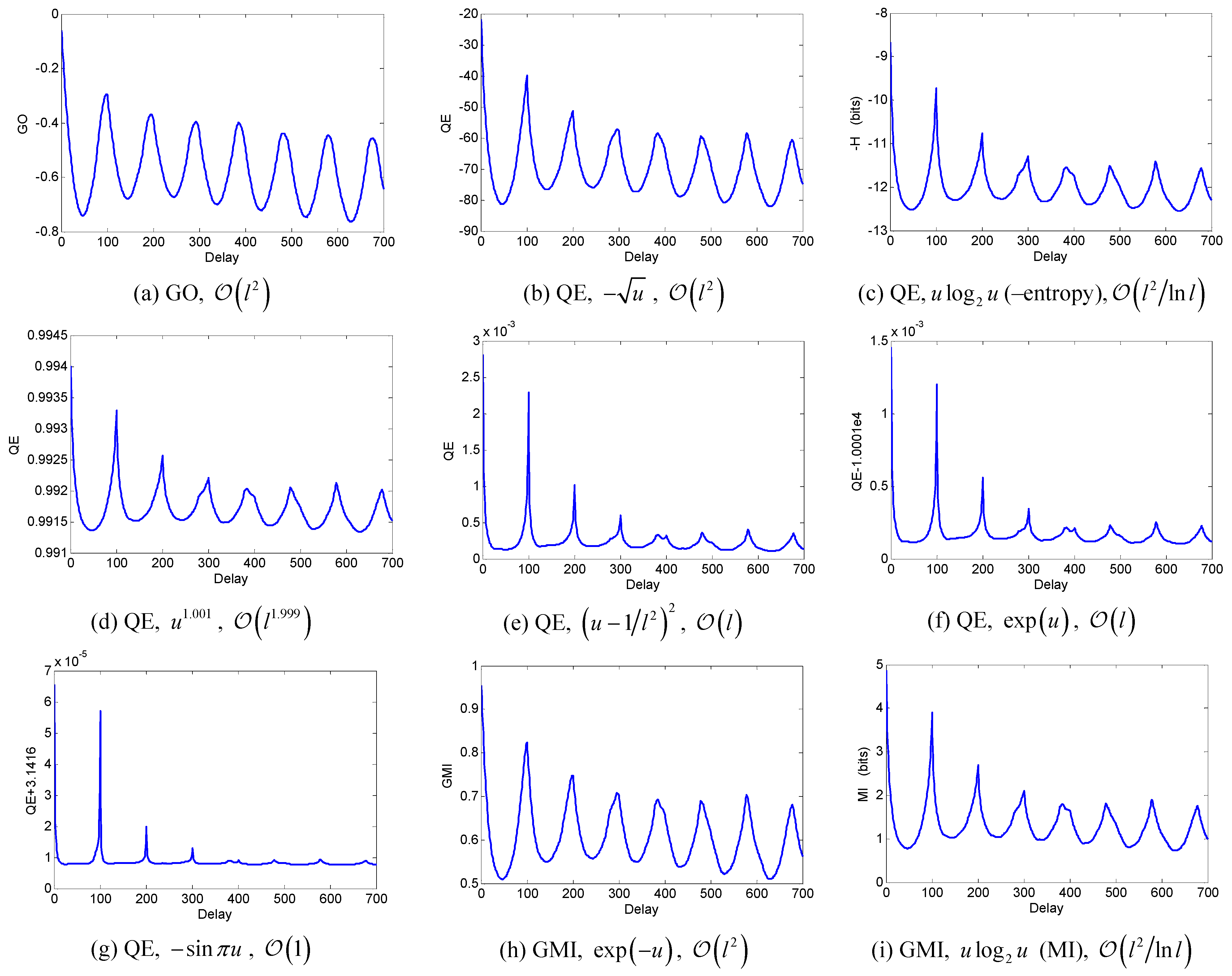

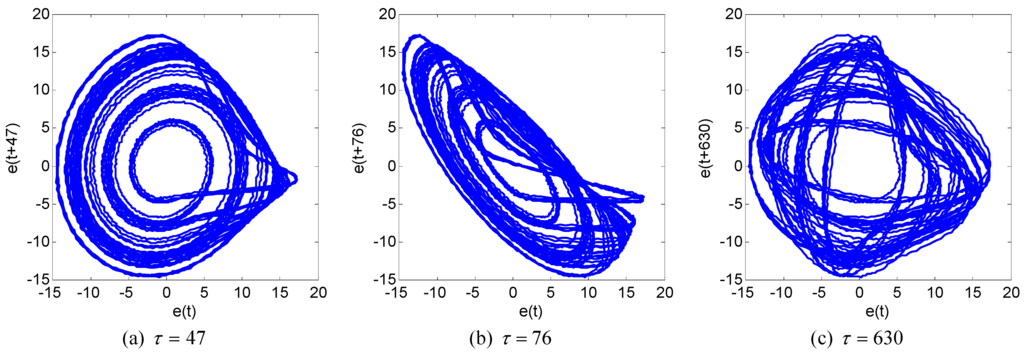

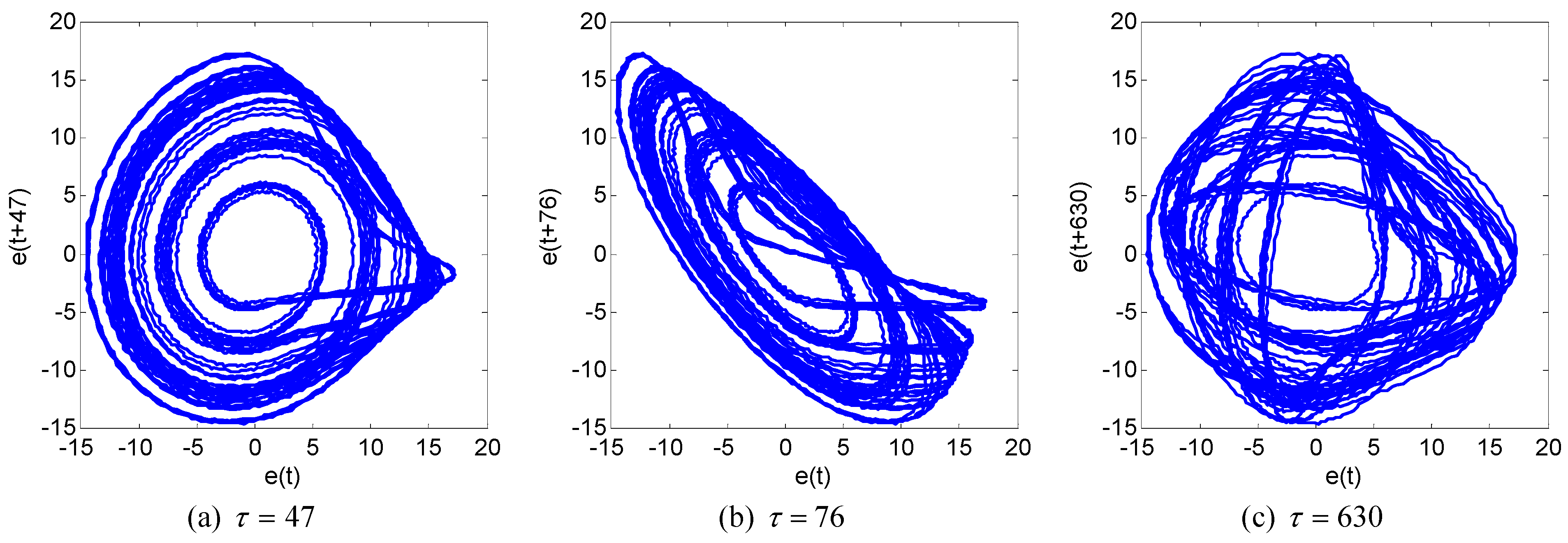

Figure 5 shows these curves where several different convex functions are used for QE and GMI. We can see that the order of QF correctly predicts the shape of minima: the higher (lower) the order, the sharper (blunter) the minima. Figure 5b–g are plots of QE, arranged so that their orders of QFs decrease from high to low. Observing Figure 5b–g, we can see the minima of these QE curves change from sharp to blunt, and finally become very vague. In [9], it is shown that the order of QF should be at least $O(l)$ to ensure that β keeps a well-defined minimum as l increases. The orders of QFs of Figure 5b–d are all higher than $O(l)$. The minima in these plots are all very prominent. Figure 5c is minus the entropy of p(i,j) (labeled −H in the plot). Adding $2 \log_2 l = 2 \log_2 100 = 13.29$ bits to its ordinate approximately leads to the plot of MI shown in Figure 5i. The QFs of Figure 5e and Figure 5f are both $O(l)$. Hence the shapes of their minima are basically identical. The order $O(l)$ reaches the theoretical lower bound proposed in [9]. However, we can see that the neighboring areas of the minima are too flat and the positions of minima are not easy to locate. This is because e(t) and e(t + τ) cannot be completely independent. Therefore, generally we should choose convex functions whose orders of QFs are higher than $O(l)$. Figure 5e is indeed the variance between p(i,j) and the uniform probability distribution. Figure 5e shows that such a form of variance, though easily conceived of, is obviously not a good measure of independence. The shape of the QE plot of $f(u) = u^2$ is identical to that of Figure 5e. According to Table 1, under the precondition a > 1, the smaller a is, the higher the order of QF of $u^a$. Thus the closer a is to 1, the better the effect of QE. For instance, Figure 5d and Figure 5e show that the effect of $u^{1.001}$ is better than that of $u^2$.

Tsallis proved that the limit of his entropy is the Shannon entropy when the parameter q in his entropy, which corresponds to the a in $f(u) = u^a$ here, approaches 1 [6]. Figure 5c and Figure 5d are good manifestations of that statement: the shapes of the curves in Figure 5c and Figure 5d look very close. What is more, that statement is corroborated by the orders of QFs. The order of QF of $u^a$ where a > 1, $O(l^{3-a})$, is lower than but approaches the order of QF of u log u, $O(l^2/\ln l)$, as a approaches 1. We have used in Figure 5g the sine function −sin πu. This can be traced back to Kapur, who used trigonometric functions to create entropies [7]. The QF of −sin πu is of constant order $O(1)$. Therefore, the neighborhoods of the minima in Figure 5g are even flatter than those in Figure 5e and Figure 5f, and the positions of minima are hard to locate. Finally, we can see that the minima in Figure 5a, Figure 5b, and Figure 5h, whose order is $O(l^2)$, are sharper, and their positions are more definite than those in Figure 5c and Figure 5i, whose order is $O(l^2/\ln l)$. Namely, these measures outperform the minus entropy and the MI in the prominence of minima. Of course, the minima in Figure 5a–d, Figure 5h, and Figure 5i are all distinct enough, and their positions coincide. The first (leftmost) minimum, τ = 47, is a good choice of the delay for reconstruction. To verify this point, Figure 6a, Figure 6b, and Figure 6c show the delay portraits using the first (leftmost) minimum, a non-minimum, and the seventh minimum, respectively. Compared with Figure 4, it is clear that Figure 6a reproduces well the folding structure of the original Rössler attractor.

Figure 5.

GO, QE, and GMI of delay-coordinate variables of Rössler chaotic system versus delay τ. All plots are calculated using 65,536 sample points. l = 100 is used for GO and QE. Delay is measured in sampling numbers. The convex functions f (u)’s used in (b)–(i) and the orders of QFs of (a)–(i) are labeled in their captions.

Figure 6.

Delay reconstruction portraits of the Rössler attractor using time delays corresponding to (a) the first minimum, (b) a non-minimum, and (c) the seventh minimum, respectively, of the curves in Figure 5a–d, Figure 5h, and Figure 5i.

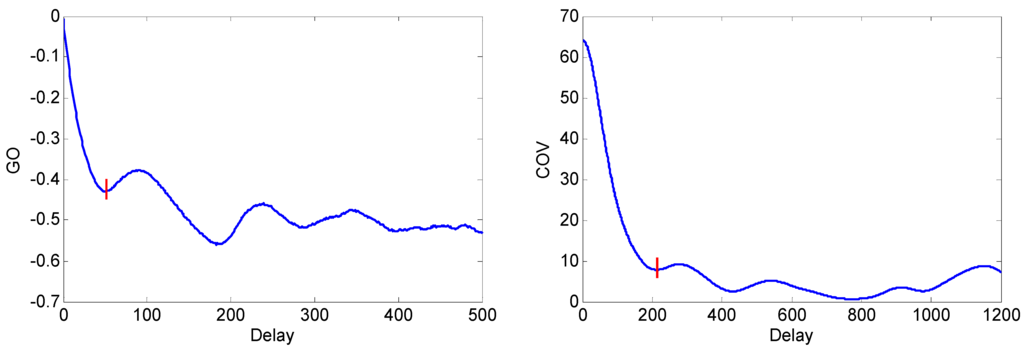

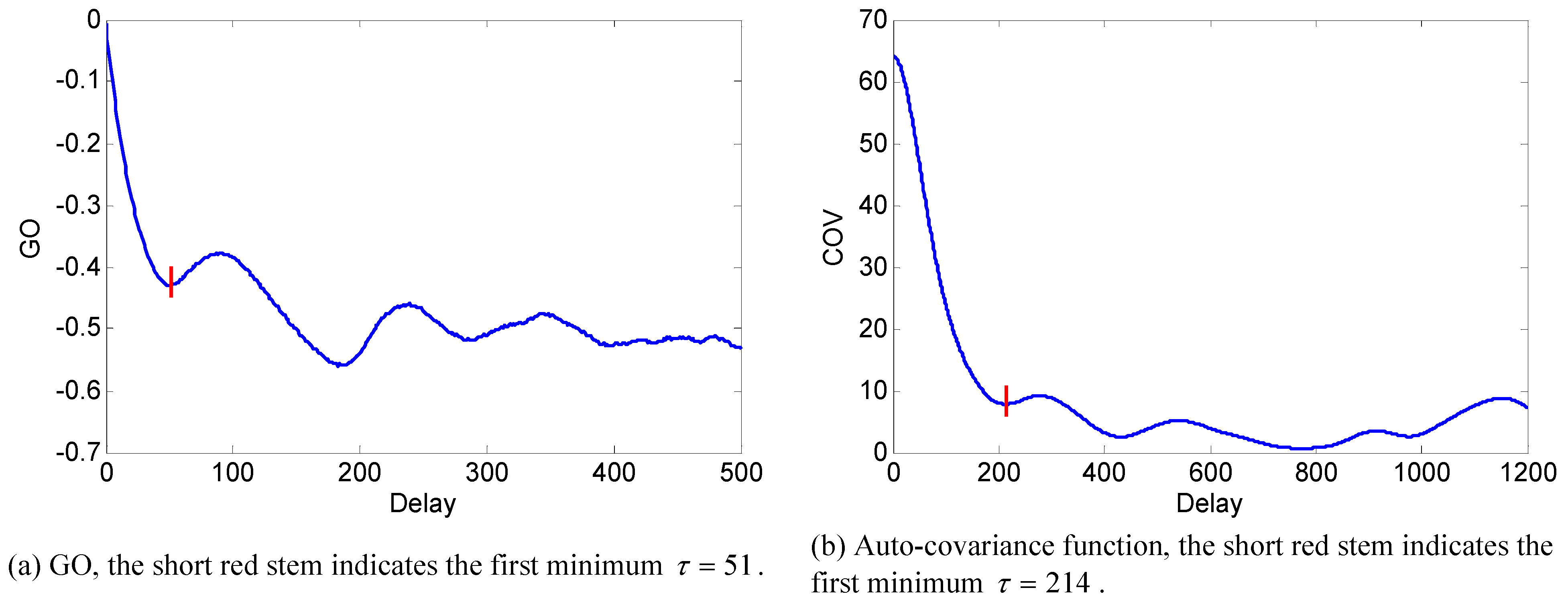

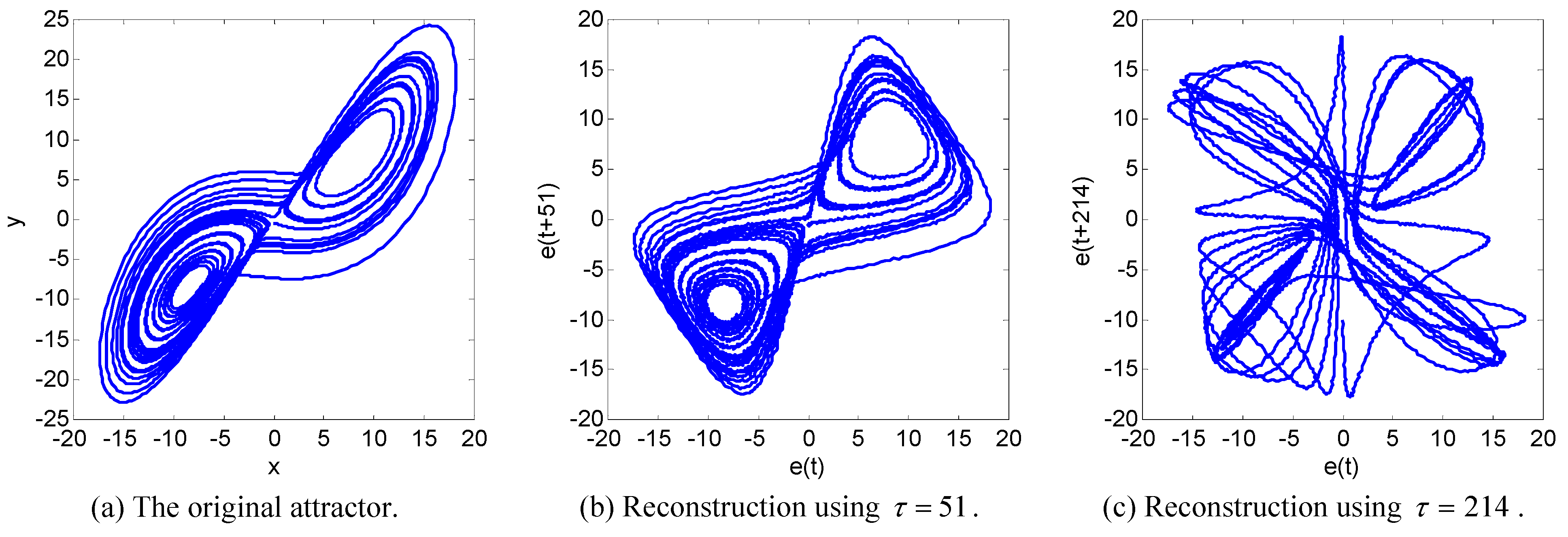

The covariance function reflects the correlation between signals and sometimes can also be used for choosing τ [23]. However, generally speaking, the independence measures GO, QE, and GMI, like MI, are better criteria than the covariance. To illustrate this point, let us see the second example, which is on the Lorenz system [22,24]. The sampling time interval is π/1000. The observed sequence e (t) is also obtained by adding noise to x(t). Figure 7a and Figure 7b plot the curves of α(e(t), e(t + τ)) and the auto-covariance function cov (e(t), e(t + τ)) versus τ. Figure 8 shows that the reconstruction portrait with the first minimum of GO reproduces the double wing structure of the Lorenz attractor fairly well. The results are similar when using the QE or GMI. By contrast, none of the minima of the covariance function in Figure 7b are good choices. For instance, Figure 8c is the delay portrait using the first minimum of covariance function. It looks much more complex than Figure 8a and Figure 8b. Actually, the curve of covariance function misses the best choice of τ, which appears earlier than its first minimum.

Figure 7.

GO and auto-covariance function of reconstructed variables of the Lorenz attractor versus τ. N = 50000 sample points are used in both. l = 200 in GO.


Figure 8.

Comparison of reconstruction effects of the Lorenz attractor. The reconstruction delays of (b) and (c) are the first minima of GO and auto-covariance function in Figure 7, respectively.


Note that GO and QE are much more efficient to compute than MI and GMI, so they work well in real-time applications such as independent component analysis [8] and blind source separation [9]. MI and GMI, however, can give a more accurate estimate of the degree of independence.

7. Conclusions

We have studied several convex-function-based measures of independence. Essentially, the independence of variables is equivalent to the uniformity of the joint PDF of the variables obtained by transforming the original variables by their respective CDFs. The MI simply uses the convex function u log u to penalize the nonuniformity of this joint PDF, and thus measures independence. Similarly, the QE uses an arbitrary convex function to penalize the nonuniformity of the discretized version of this PDF. Since any convex function can appear in it, the QE is a definition broad enough to include quite a few existing definitions of entropy. In this paper, we have focused on how different choices of convex function affect the performance of the corresponding independence measure. When the QE is plotted versus the variance from the uniform distribution, the QF of QE is twice the slope of QE at its minimum over the dynamic range of QE and, therefore, indicates the sharpness of QE around its minimum. The expectation of GO is a subclass of QE, and Csiszar’s GMI is the limit of a special form of QE as the number of quantization levels approaches infinity. Based on these two facts, the definition of QF can be generalized so that it also applies to GO and GMI. The order of QF with respect to the number of quantization levels predicts the sharpness of the minima of these measures of independence well. Furthermore, it indicates that GO, QE, and GMI can have more prominent minima than the minus entropy and the MI.

Besides the theoretical study, we have also applied the convex-function-based measures of independence to chaotic signal processing. Since its introduction by Fraser and Swinney, the MI has become the most widely used criterion for choosing the delay in strange attractor reconstruction. However, there is no evidence that it is the optimum criterion. Indeed, GO, QE, and GMI are good alternatives, and sometimes even better criteria, for choosing the delay. We have proposed a recursive algorithm for computing GMI that generalizes Fraser and Swinney’s algorithm for computing MI. In addition, we have conducted numerical experiments on chaotic systems that exemplify the theory of QF that we have developed.

Finally, we note that whether a measure of information is good or not is application dependent. In some applications, a sharp minimum might not be crucial. We shall describe such an application in a forthcoming paper. Future work also includes applying the convex-function-based measures to real-world problems such as EEG analysis. To conclude, the results presented in this paper are instructive for understanding the behavior of convex-function-based information measures and for selecting the most suitable measures for various applications.

Acknowledgements

The authors thank H. Shimokawa for his valuable comments. This work was partly supported by the National Natural Science Foundation of China under Grant 60872074 and the Scientific Research Foundation for the Returned Overseas Chinese Scholars, State Education Ministry. The authors also thank the anonymous reviewers for their valuable comments and suggestions. This work is an invited contribution to the special issue of Entropy on advances in information theory.

References

- Shannon, C.E. A mathematical theory of communication. Bell Syst. Tech. J. 1948, 27, 379–423, 623–656.

- Zemansky, M.W. Heat and Thermodynamics; McGraw-Hill: New York, NY, USA, 1968.

- Renyi, A. On measures of entropy and information. In Proceedings of the Fourth Berkeley Symposium on Mathematical Statistics and Probability, Berkeley, CA, USA, 20 June–30 July 1960; University of California Press: Berkeley, CA, USA, 1961; Volume 1, pp. 547–561.

- Havrda, J.; Charvat, F. Quantification method of classification processes. Kybernetika 1967, 1, 30–34.

- Csiszar, I. Information-type measures of difference of probability distributions and indirect observations. Stud. Sci. Math. Hung. 1967, 2, 299–318.

- Tsallis, C. Possible generalization of Boltzmann-Gibbs statistics. J. Stat. Phys. 1988, 52, 479–487.

- Kapur, J.N. Measures of Information and Their Applications; John Wiley & Sons: New York, NY, USA, 1994.

- Chen, Y. A novel grid occupancy criterion for independent component analysis. IEICE Trans. Fund. Electron. Comm. Comput. Sci. 2009, E92-A, 1874–1882.

- Chen, Y. Blind separation using convex functions. IEEE Trans. Signal Process. 2005, 53, 2027–2035.

- Kapur, J.N. Maximum-Entropy Models in Science and Engineering; John Wiley & Sons: New York, NY, USA, 1989.

- Xu, J.; Liu, Z.; Liu, R.; Yang, Q. Information transmission in human cerebral cortex. Physica D 1997, 106, 363–374.

- Kolarczyk, B. Representing entropy with dispersion sets. Entropy 2010, 12, 420–433.

- Takata, Y.; Tagashira, H.; Hyono, A.; Ohshima, H. Effect of counterion and configurational entropy on the surface tension of aqueous solutions of ionic surfactant and electrolyte mixtures. Entropy 2010, 12, 983–995.

- Zupanovic, P.; Kuic, D.; Losic, Z.B.; Petrov, D.; Juretic, D.; Brumen, M. The maximum entropy production principle and linear irreversible processes. Entropy 2010, 12, 996–1005.

- Van Dijck, G.; Van Hulle, M.M. Increasing and decreasing returns and losses in mutual information feature subset selection. Entropy 2010, 12, 2144–2170.

- Takens, F. Detecting strange attractors in turbulence. In Warwick 1980 Lecture Notes in Mathematics; Springer-Verlag: Berlin, Germany, 1981; Volume 898, pp. 366–381.

- Fraser, A.M.; Swinney, H.L. Independent coordinates for strange attractors from mutual information. Phys. Rev. A 1986, 33, 1134–1140.

- Gretton, A.; Bousquet, O.; Smola, A.; Scholkopf, B. Measuring statistical dependence with Hilbert-Schmidt norms. In Proceedings of the 16th International Conference on Algorithmic Learning Theory, Singapore, October 2005; pp. 63–77.

- Szekely, G.J.; Rizzo, M.L.; Bakirov, N.K. Measuring and testing dependence by correlation of distances. Ann. Stat. 2007, 35, 2769–2794.

- Nelsen, R.B. An Introduction to Copulas, Lecture Notes in Statistics 139; Springer-Verlag: New York, NY, USA, 1999.

- Chen, Y. On Theory and Methods for Blind Information Extraction. Ph.D. dissertation, Southeast University, Nanjing, China, 2001.

- Rössler, O.E. An equation for continuous chaos. Phys. Lett. A 1976, 57, 397–398.

- Longstaff, M.G.; Heath, R.A. A nonlinear analysis of the temporal characteristics of handwriting. Hum. Movement Sci. 1999, 18, 485–524.

- Lorenz, E.N. Deterministic nonperiodic flow. J. Atmos. Sci. 1963, 20, 130–141.

Appendix: Recursive Algorithm for Computing GMI and Related Issues

A. Recursive Algorithm for Computing GMI

Recall (21) and rewrite it as follows:

where p(i,j) is as defined in (3). Thus, when l is fairly large,

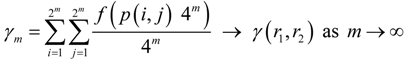

Alternatively, a more delicate approach can be taken: different numbers of quantization levels are used according to the bumpiness of p_{z1z2} in different local regions. A larger l should be used in more strongly fluctuating regions to keep the estimated γ from being too small, whereas a smaller l should be used in fairly flat regions to keep the estimated γ from being too large because of the limited sample size. Specifically, let l = 2^m, m = 0, 1, 2, ..., then:

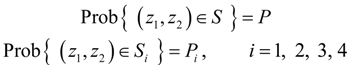

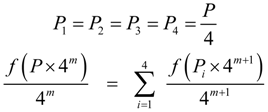

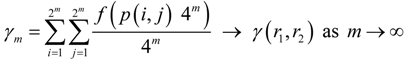

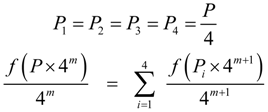

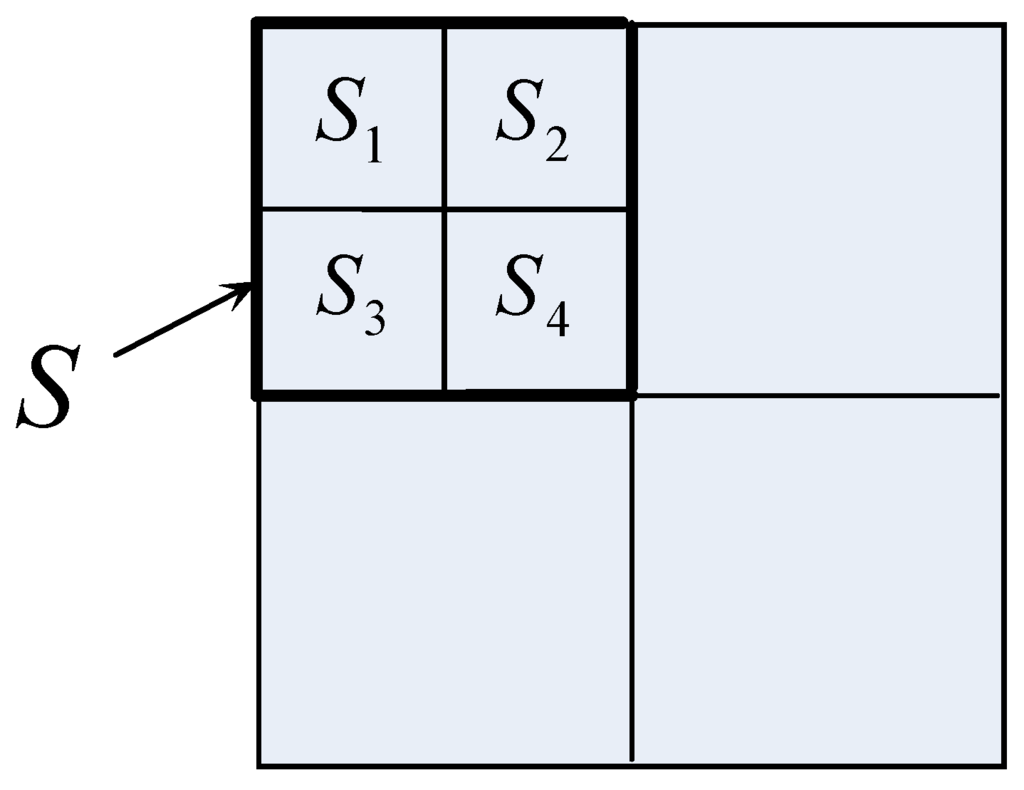

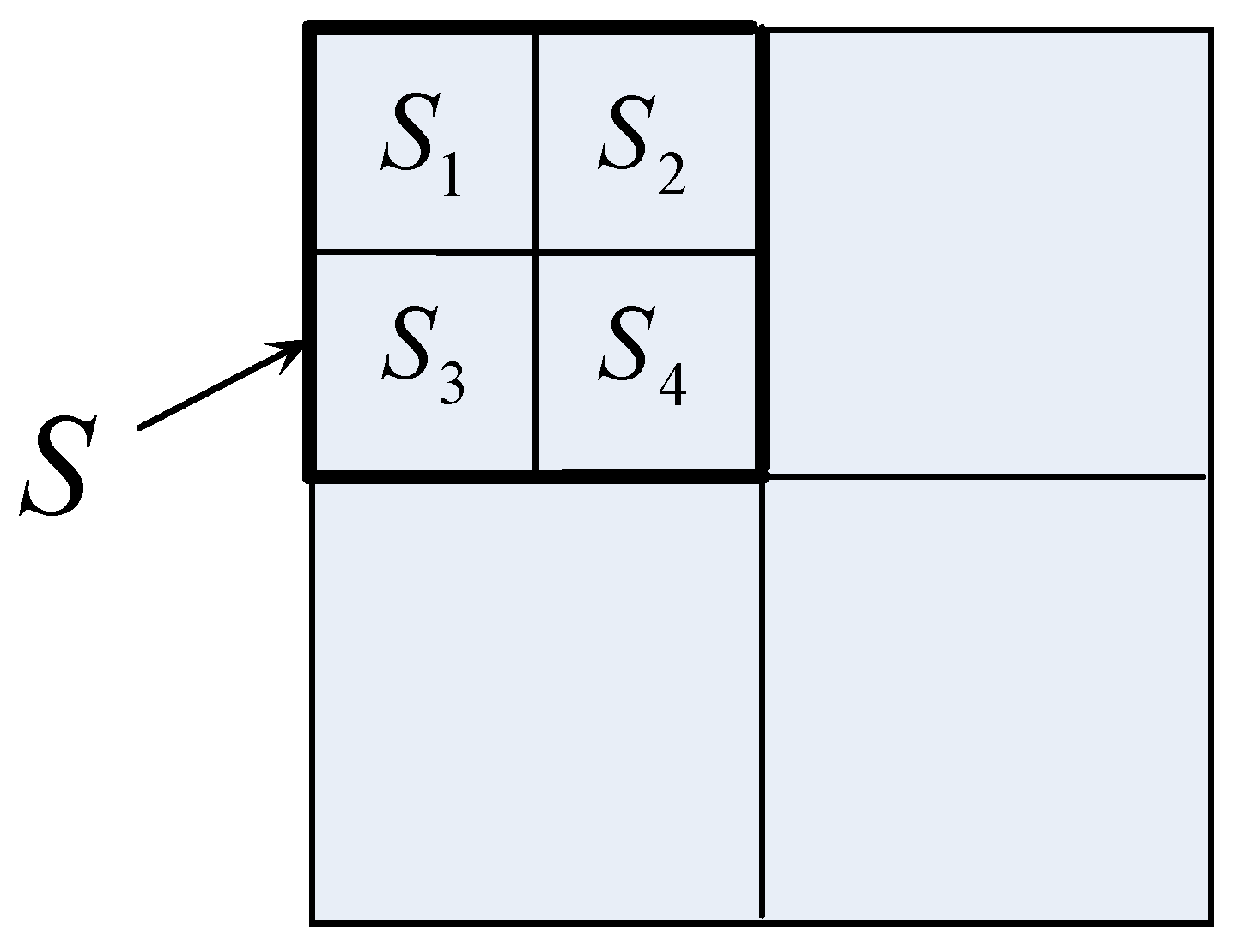

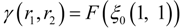

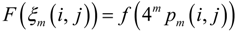

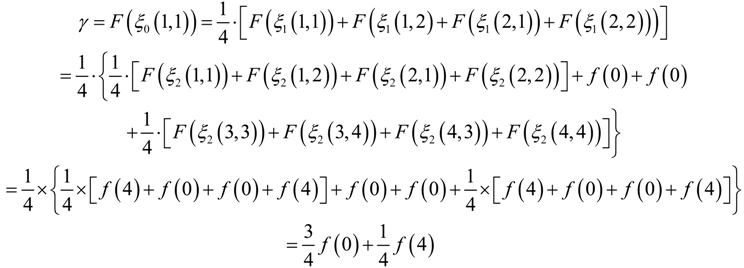

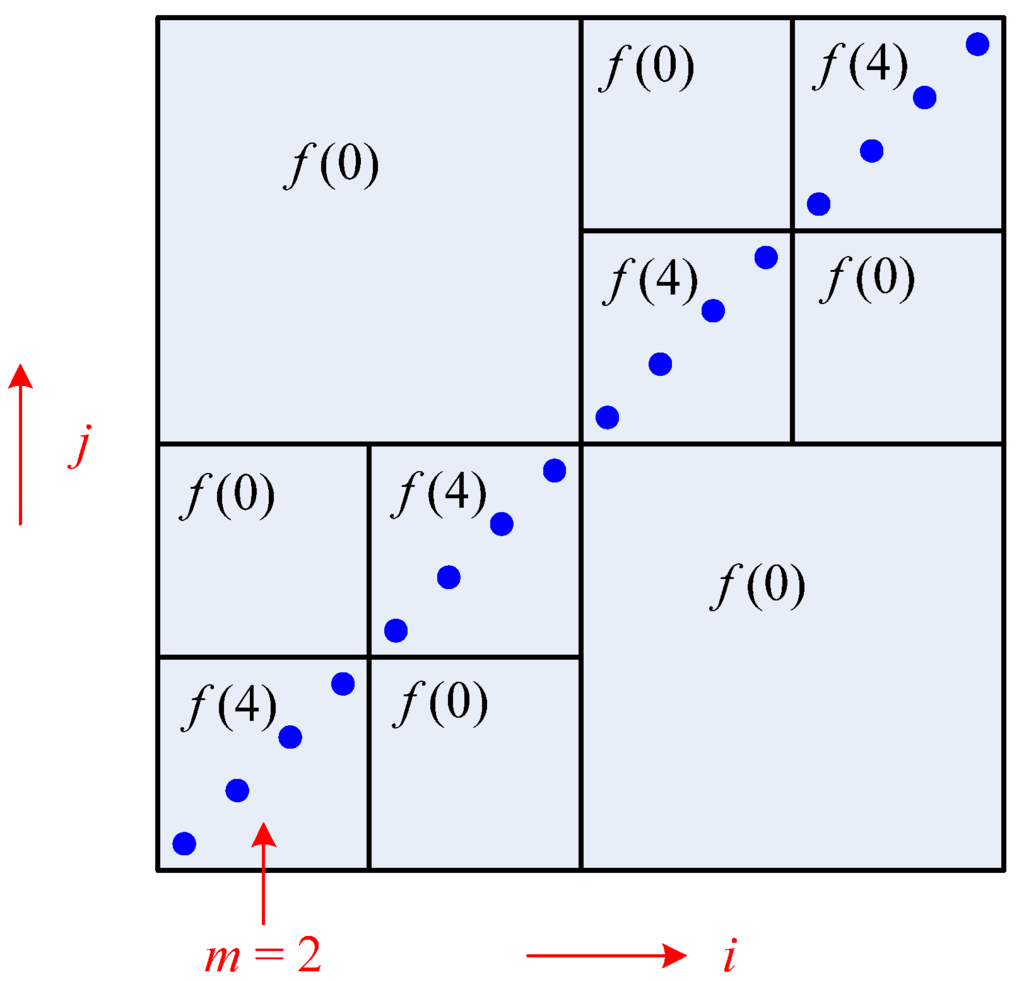

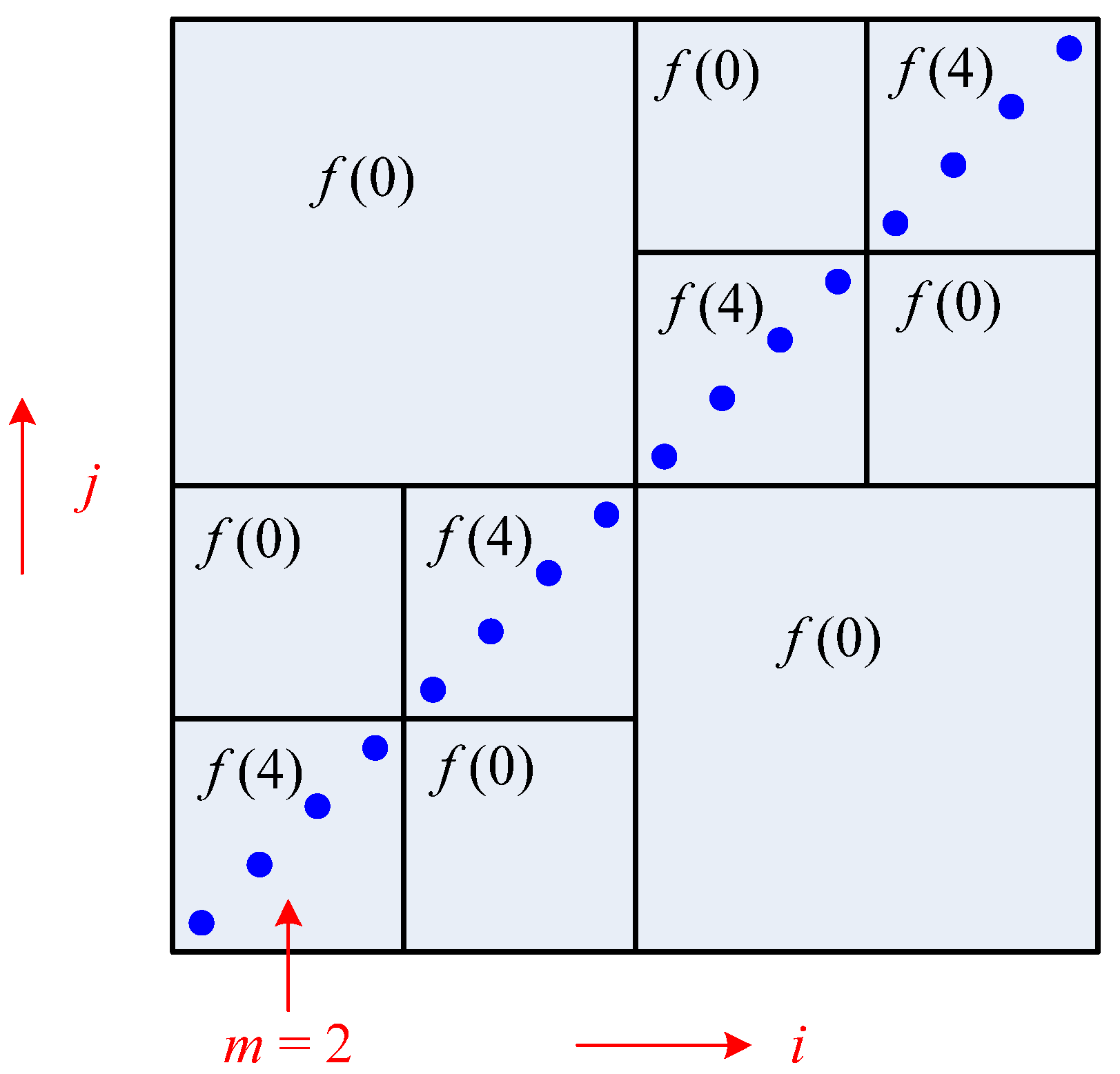

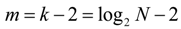

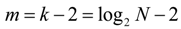

As shown in Figure 9, each square in the 2^m × 2^m grid consists of four squares in the 2^{m+1} × 2^{m+1} grid. Let us denote a certain square in the 2^m × 2^m grid by S and denote the four squares in the 2^{m+1} × 2^{m+1} grid that constitute S by S1, S2, S3, and S4. Also, let:

If (z1,z2) is uniformly distributed over S, then:

Namely,

Component of γ_m on S = Component of γ_{m+1} on S

Hence there is no need to further divide S into S1, S2, S3, and S4. Thus, the following recursive algorithm for computing γ(r1,r2) is obtained.
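The equality of the two components can be checked directly under one assumption about notation that this appendix does not restate: that the level-m approximation has the form γ_m = 4^{-m} Σ_{i,j} f(4^m p_m(i,j)), i.e., each square contributes its area times f evaluated at the estimated density. This form is consistent with the minimal output f(1) quoted in Section D, but it is a reconstruction, not the authors' displayed equation. Writing P(S) for the probability mass of S (also an assumed symbol), uniformity over S gives P(S_k) = P(S)/4 for k = 1, ..., 4, so that:

```latex
\begin{aligned}
\text{component of } \gamma_m \text{ on } S
  &= 4^{-m}\, f\bigl(4^{m} P(S)\bigr), \\
\text{component of } \gamma_{m+1} \text{ on } S
  &= \sum_{k=1}^{4} 4^{-(m+1)}\, f\bigl(4^{m+1} P(S_k)\bigr)
   = 4 \cdot 4^{-(m+1)}\, f\Bigl(4^{m+1}\,\tfrac{P(S)}{4}\Bigr)
   = 4^{-m}\, f\bigl(4^{m} P(S)\bigr).
\end{aligned}
```

Under this assumed form, refining a uniformly filled square leaves its contribution unchanged for any f, which is why the recursion can stop there.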

Figure 9.

Each square in the 2^m × 2^m grid is composed of four squares in the 2^{m+1} × 2^{m+1} grid.

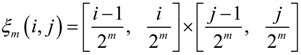

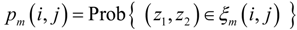

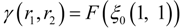

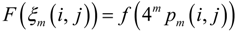

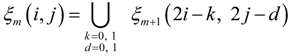

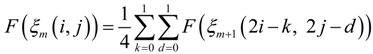

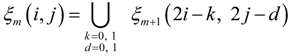

Let ξm(i,j) denote the square in the ith column and the jth row of the 2m × 2m uniform grid over [0,1] × [0,1], i.e.,

and let:

and let:

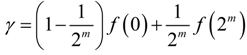

Then,

Then,

where, if pz1z2 is uniform in ξm (i, j), then

where, if pz1z2 is uniform in ξm (i, j), then

If pz1z2 is not uniform in ξm (i,j), then we further divide ξm (i,j) into four squares in the 2m+1 × 2m+1 grid, noting that:

If pz1z2 is not uniform in ξm (i,j), then we further divide ξm (i,j) into four squares in the 2m+1 × 2m+1 grid, noting that:

yielding:

yielding:

It is easy to verify that, when f(u) = ulogu, the above algorithm reduces to Fraser and Swinney’s recursive algorithm for computing MI [17].
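The recursion described above can be sketched in Python with NumPy as follows. Several parts are assumptions, not the authors' implementation: the contribution of a square is taken to be its area times f of the estimated density (the form 4^{-m} f(4^m p_m(i,j))); the uniformity test is a simplified χ² test on the four sub-squares with 3 degrees of freedom, not the test of [17]; and the names `rank_to_unit`, `gmi_recursive`, and `max_depth` are hypothetical.

```python
import numpy as np

# Upper 5% point of the chi-square distribution with 3 degrees of freedom.
CHI2_CRIT = 7.815

def rank_to_unit(r):
    """Empirical-CDF transform: map each sample to (rank + 0.5) / N in (0, 1)."""
    r = np.asarray(r)
    ranks = np.argsort(np.argsort(r, kind="stable"), kind="stable")
    return (ranks + 0.5) / r.size

def gmi_recursive(r1, r2, f, max_depth=16):
    """Recursive GMI estimate for a convex function f (simplified sketch)."""
    z1, z2 = rank_to_unit(r1), rank_to_unit(r2)
    n = z1.size

    def component(z1, z2, x0, y0, m):
        side = 0.5 ** m
        area = side * side
        count = z1.size
        # Empty squares and squares at the depth limit are not refined.
        if count == 0 or m == max_depth:
            return area * f(count / (n * area))
        half = side / 2
        right = z1 >= x0 + half
        upper = z2 >= y0 + half
        masks = [~right & ~upper, right & ~upper, ~right & upper, right & upper]
        counts = np.array([mk.sum() for mk in masks])
        expected = count / 4
        chi2 = np.sum((counts - expected) ** 2) / expected
        if chi2 < CHI2_CRIT:
            # Treated as uniform: contribute area * f(estimated density).
            return area * f(count / (n * area))
        # Otherwise split into four sub-squares and sum their components.
        offsets = [(0.0, 0.0), (half, 0.0), (0.0, half), (half, half)]
        return sum(component(z1[mk], z2[mk], x0 + dx, y0 + dy, m + 1)
                   for mk, (dx, dy) in zip(masks, offsets))

    return component(z1, z2, 0.0, 0.0, 0)

# Usage with f(u) = u^2 on two identical variables (the most dependent case).
x = np.arange(16.0)
gamma_identical = gmi_recursive(x, x, lambda u: u * u)
```

For f(u) = u log u the caller would need to define f(0) = 0 explicitly, since empty squares evaluate f at zero.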

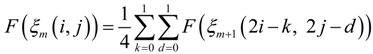

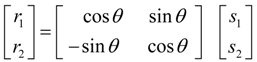

B. Uniformity Test

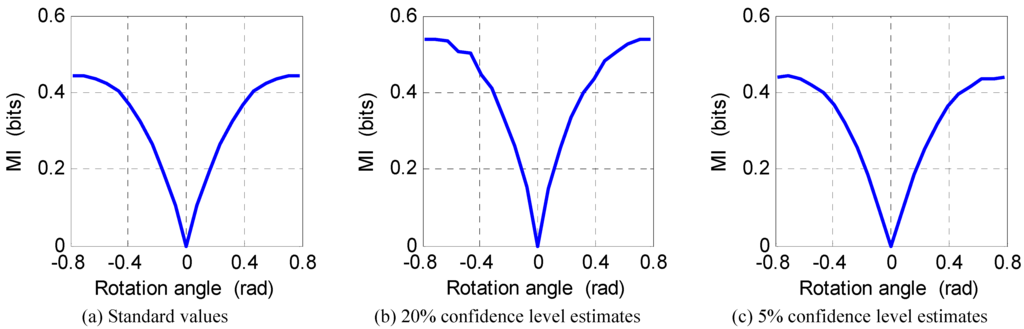

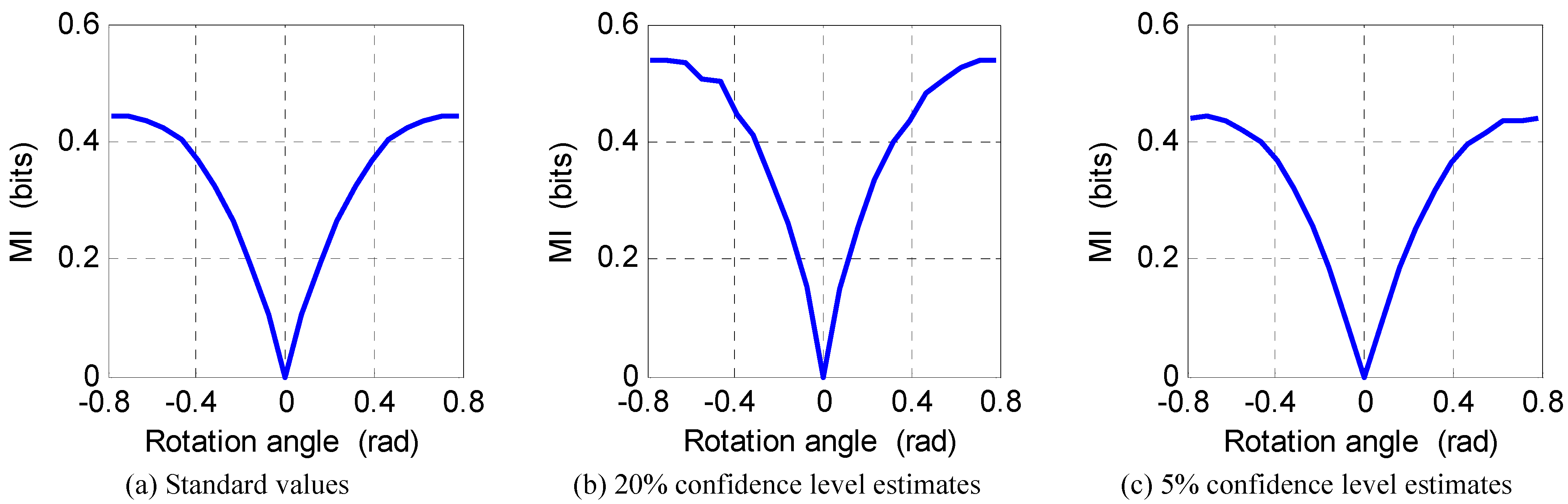

In the above recursive algorithm for computing GMI, we need to judge whether p_{z1z2} is uniform in ξ_m(i,j). This can be done using the χ² test proposed in [17]. However, the 20% confidence level in [17] was chosen arbitrarily. Experiments show that the uniformity test with the 20% confidence level may be too stringent and cause the GMI estimate to be too large. To solve this problem, we propose here a simple method for choosing the confidence level. We mix two independent variables, s1 and s2, with identical distributions by a 2 × 2 rotation matrix with rotation angle θ to get two variables, r1 and r2, i.e.,

The MI of r1 and r2 is then a function of θ, i.e.,


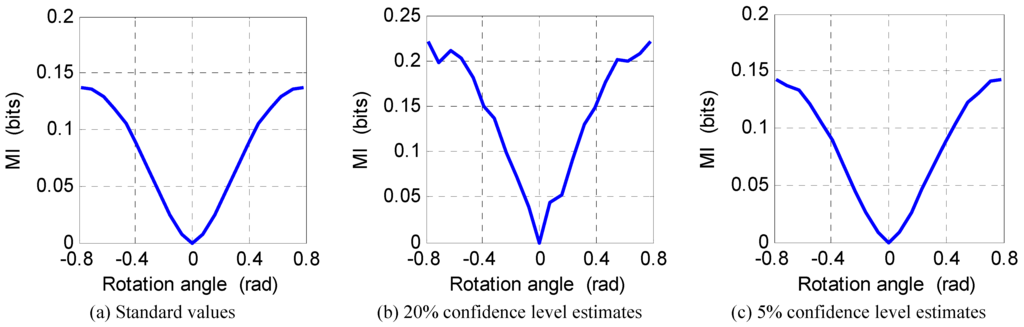

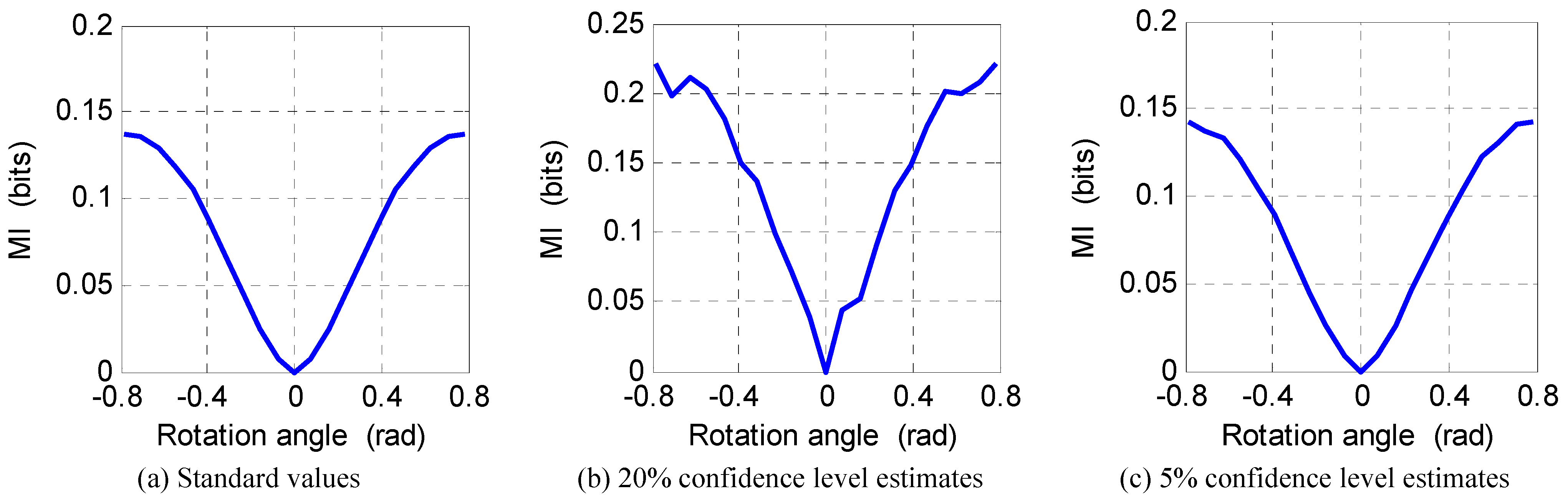

Referring to the χ² distribution table, we choose five confidence levels, 20, 10, 5, 2, and 1%, to estimate I(θ). If s1 and s2 have standard distributions, then standard values of I(θ) are easy to obtain. Examining in turn the two cases where s1 and s2 are both uniform variables and both Laplacian variables, we find that the 5% confidence level produces the minimum estimation error in both cases. Comparing the estimates obtained with the 20% and 5% confidence levels against the standard curves, we see that the results with the 20% confidence level are clearly too large, whereas those with the 5% confidence level are quite accurate, as shown in Figure 10 and Figure 11. Therefore, 5% is a better choice for the confidence level.
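The test signals used in this calibration can be generated with a few lines of Python. This is only a sketch of the mixing step: the counter-clockwise sign convention of the rotation, the range of the uniform sources, the sample size, and the name `rotate_mix` are all assumptions, since the rotation equation itself is not reproduced here. Estimating I(θ) for each mixture would then be done with the recursive algorithm at each candidate confidence level.

```python
import numpy as np

def rotate_mix(s1, s2, theta):
    """Mix two source signals with a 2 x 2 rotation matrix of angle theta."""
    c, s = np.cos(theta), np.sin(theta)
    r1 = c * s1 - s * s2   # first row of the rotation matrix: (cos, -sin)
    r2 = s * s1 + c * s2   # second row of the rotation matrix: (sin, cos)
    return r1, r2

rng = np.random.default_rng(0)
s1 = rng.uniform(-1.0, 1.0, size=10_000)   # independent, identically distributed
s2 = rng.uniform(-1.0, 1.0, size=10_000)

# One mixture per rotation angle between 0 and pi/2.
thetas = np.linspace(0.0, np.pi / 2, 19)
mixtures = [rotate_mix(s1, s2, th) for th in thetas]
```

Laplacian sources could be drawn in the same way with `rng.laplace`.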

Figure 10.

MI of rotational mixtures of two independent identical uniform variables versus rotation angle.


Figure 11.

MI of rotational mixtures of two independent identical Laplacian variables versus rotation angle.


C. Practical Implementation

In computing GO, QE, and GMI, the original variables should be transformed by their CDFs. In practice, the analytical forms of the CDFs are usually unknown. However, the lack of analytical CDFs does not impede the computation of these measures. Using the definition of the CDF, if we sort the N observations of variable r, denoted by r(1), ..., r(N), in ascending order and r(t) is in the ith place among them, then i/N is an unbiased estimate of q_r(r(t)). Indeed, when computing GO and QE, we may directly map the sample points of (r1,r2) to the indices of the squares that the corresponding sample points of (z1,z2) belong to [8,9]. When computing GMI, a sample point of (r1,r2) is first mapped to an ordered pair (i,j). Then the square that (i,j) belongs to is determined according to the current partition of the grid. These operations (mainly sorting) do not involve floating-point operations and can be carried out very efficiently. The p(i,j) (p_m(i,j)) in QE (GMI) is estimated by the ratio of the number of sample points in the square ξ(i,j) (ξ_m(i,j)) to the total number of sample points.
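The rank-based mapping described above can be sketched in Python with NumPy. The function names `cell_indices` and `grid_probabilities` are hypothetical, the cell indices are 0-based here (the text counts from 1), and ties are broken by order of appearance, which is an assumption the text does not address. The mapping from rank to cell uses integer arithmetic only; the single division at the end produces the estimate of p(i,j).

```python
import numpy as np

def cell_indices(r, l):
    """Map each observation to the index (0..l-1) of its cell after the
    empirical-CDF transform, using ranks and integer arithmetic only."""
    r = np.asarray(r)
    n = r.size
    ranks = np.argsort(np.argsort(r, kind="stable"), kind="stable")  # 0..n-1
    return (ranks * l) // n

def grid_probabilities(r1, r2, l):
    """Estimate p(i, j) as the fraction of sample points in each grid square."""
    i = cell_indices(r1, l)
    j = cell_indices(r2, l)
    counts = np.zeros((l, l), dtype=np.int64)
    np.add.at(counts, (i, j), 1)   # accumulate one count per sample point
    return counts / i.size

# Usage: eight observations of two identical variables on a 4 x 4 grid.
r = np.array([3.0, 1.0, 4.0, 1.5, 9.0, 2.6, 5.0, 3.5])
p = grid_probabilities(r, r, l=4)
```

A convex function can then be applied to `p` element-wise and summed to obtain a QE-type quantity; the exact normalization follows the definition given earlier in the paper.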

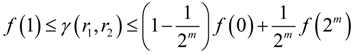

D. Output of the Recursive Algorithm of GMI

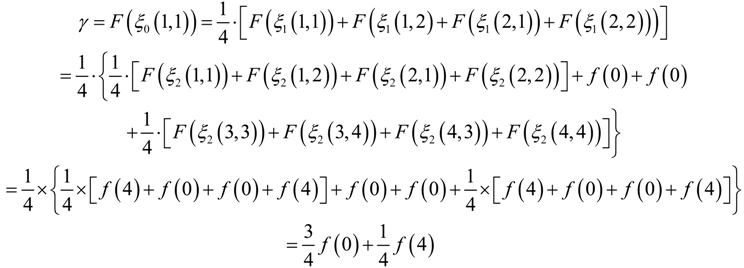

If r1 and r2 are independent, (z1,z2) will be uniform in [0,1] × [0,1]. The recursive algorithm for computing GMI will terminate at the m = 0 level of the hierarchy and produce the minimal output f(1). The maximal output is generated for the most dependent case, e.g., r1 ≡ r2. Assume that we use N = 2^k sample points of two identical variables r1 ≡ r2 to compute GMI. The corresponding sample points of (z1,z2) will line up along the diagonal. The χ² uniformity tests, whether using the 20, 10, or 5% confidence level, all lead to the same final partition, in which every four consecutive sample points of (z1,z2) fall in one square. For example, Figure 12 shows the case of N = 16 sample points. In this case, the computation of GMI proceeds as follows:

Figure 12.

Partition of N = 16 sample points of (z1,z2) of two identical variables.

The result of (42) can be generalized to the case of N = 2^k sample points:

where:


Thus, we obtain the lower and upper bounds of GMI computed using the recursive algorithm as follows.


We can check whether overflow might occur with (45) when a convex function such as f(u) = a^u (a > 1) is used.
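As a hedged illustration of the size of the quantities involved, the bounds can be worked out under the same assumption used for the recursion, namely that a square of area A holding probability mass P contributes A f(P/A) when it is not refined further. This is a reconstruction from the description of the final partition, and it may differ in notation from (42)–(45). For N = 2^k identical samples, the recursion stops at level m = k − 2 with 2^m diagonal squares, each of area 4^{-m} and mass 4/N = 2^{-m}; the empty off-diagonal squares have total area 1 − 2^{-m}:

```latex
\begin{aligned}
\gamma_{\max}
  &= 2^{m} \cdot 4^{-m}\, f\bigl(4^{m} \cdot 2^{-m}\bigr) + \bigl(1 - 2^{-m}\bigr) f(0)
   = \frac{4}{N}\, f\!\Bigl(\frac{N}{4}\Bigr) + \Bigl(1 - \frac{4}{N}\Bigr) f(0), \\
f(1) &\le \gamma(r_1, r_2) \le \frac{4}{N}\, f\!\Bigl(\frac{N}{4}\Bigr) + \Bigl(1 - \frac{4}{N}\Bigr) f(0).
\end{aligned}
```

For N = 16 and f(u) = u^2 this gives 4, and for f(u) = a^u the dominant term is a^{N/4}. With a = 2 and N = 65,536, for example, that term is 2^{16384}, far beyond the largest finite double-precision value (just under 2^{1024}), so the sum would have to be evaluated in the logarithmic domain or with a smaller base a.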

© 2011 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution license (http://creativecommons.org/licenses/by/3.0/).