Abstract

In the present paper, we propose large-sample asymptotic approximations for the sampling and posterior distributions of differential entropy when the sample is composed of independent and identically distributed realizations of a multivariate normal distribution.

1. Introduction

Entropy has been an active topic of research for over 50 years and much has been published about this measure in various contexts. In statistics, recent developments have investigated how to estimate entropy from data, either in a parametric [1,2,3] or nonparametric framework [4,5], as well as the reliability and convergence properties of these estimators [6,7].

By contrast, relatively little is known about the statistical distribution of entropy, even in the simple case of a multivariate normal distribution. For instance, the differential entropy of a D-dimensional random variable X that is normally distributed with mean μ and covariance matrix Σ is given by

$$h(\Sigma) = \frac{D}{2}\left(1 + \ln 2\pi\right) + \frac{1}{2}\ln|\Sigma|. \tag{1}$$

If x_1, …, x_N are N independent and identically distributed realizations of X and S is the corresponding sum-of-squares matrix, then the sample differential entropy h(S/N) is used as the so-called plug-in estimator for h(Σ). However, h(S/N) is also a random variable whose sampling distribution could be studied. Ahmed and Gokhale provided the exact expressions for the mean and variance of this variable [1]. Similarly, in a Bayesian framework, given x_1, …, x_N, what are the probable values of h(Σ)? We are not aware of any study in this direction for multivariate normal distributions (but see, e.g., [8,9] for the posterior moments of entropy in the case of multinomial distributions). In the present paper, we provide an asymptotic approximation for both the sampling distribution of h(S/N) and, in a Bayesian framework, the posterior distribution of h(Σ) given x_1, …, x_N. To this aim, we first calculate the moments of |S| when S is Wishart distributed with ν degrees of freedom. We then use this result to provide a closed-form expression for the cumulant-generating function of U = ln|Σ| − ln|S|, from which we derive closed-form expressions for the cumulants, together with asymptotic expansions when ν → ∞. Using the characteristic function of U, we then provide an asymptotic normal approximation for the distribution of this variable. We finally apply these results to the sample and posterior entropy of multivariate normal distributions.

2. General Result

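Assume that S is distributed according to a Wishart distribution with ν degrees of freedom and scale matrix Σ, i.e., S ∼ W_D(ν, Σ) [10] (Chapter 7), with density

$$p(S \mid \nu, \Sigma) = \frac{1}{Z_{\nu,D}}\,|\Sigma|^{-\nu/2}\,|S|^{(\nu-D-1)/2}\exp\left[-\frac{1}{2}\operatorname{tr}\left(\Sigma^{-1}S\right)\right],$$

where Z_{ν,D} is the normalizing constant,

$$Z_{\nu,D} = 2^{\nu D/2}\,\pi^{D(D-1)/4}\prod_{d=1}^{D}\Gamma\left(\frac{\nu+1-d}{2}\right). \tag{2}$$

Direct calculation shows that, for real t,

$$E\left(|S|^t\right) = \int |S|^t\,p(S \mid \nu, \Sigma)\,dS = \frac{Z_{\nu+2t,D}}{Z_{\nu,D}}\,|\Sigma|^{t} = 2^{Dt}\,|\Sigma|^{t}\prod_{d=1}^{D}\frac{\Gamma\left(\frac{\nu+1-d}{2}+t\right)}{\Gamma\left(\frac{\nu+1-d}{2}\right)}, \tag{3}$$

provided that the integral of p(S | ν + 2t, Σ) sums to one, i.e., ν + 2t > D − 1 or, equivalently, t > −(ν − D + 1)/2.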
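As a quick numerical sanity check of Equation (3), the sketch below (Python standard library only; the function names are our own) evaluates the closed form through `math.lgamma` and compares it with a Monte Carlo estimate for Σ = I. The Monte Carlo part assumes Bartlett's decomposition, under which |S| is distributed as a product of independent chi-square variables with ν, ν − 1, …, ν − D + 1 degrees of freedom. This is a standard result, but it is not used in the text.

```python
import math
import random


def log_moment_det(t, nu, D, logdet_sigma=0.0):
    """log E(|S|^t) for S ~ W_D(nu, Sigma), following Equation (3)."""
    if nu + 2 * t <= D - 1:
        raise ValueError("E(|S|^t) is infinite unless nu + 2t > D - 1")
    total = D * t * math.log(2.0) + t * logdet_sigma
    for d in range(1, D + 1):
        x = (nu + 1 - d) / 2.0
        total += math.lgamma(x + t) - math.lgamma(x)
    return total


def mc_moment_det(t, nu, D, n_draws=100_000, seed=0):
    """Monte Carlo estimate of E(|S|^t) for Sigma = I (assumes Bartlett's decomposition).

    |S| is drawn as a product of independent chi-square variables with
    nu, nu - 1, ..., nu - D + 1 degrees of freedom.
    """
    rng = random.Random(seed)
    acc = 0.0
    for _ in range(n_draws):
        det = 1.0
        for d in range(1, D + 1):
            k = nu + 1 - d
            det *= rng.gammavariate(k / 2.0, 2.0)  # chi2_k = Gamma(shape k/2, scale 2)
        acc += det ** t
    return acc / n_draws
```

For instance, with ν = 10, D = 3 and t = 1, the closed form gives E(|S|) = 10 × 9 × 8 = 720 for Σ = I. With t = 0.5, the Monte Carlo estimate should typically agree with the closed form to within a fraction of a percent.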

2.1. Cumulant-Generating Function, Cumulants, and Central Moments of U

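Cumulant-generating function. Let U be the variable defined in the introduction, i.e.,

$$U = \ln|\Sigma| - \ln|S|, \tag{4}$$

and Λ(t) = ln E(e^{tU}) its cumulant-generating function. Since e^{tU} = |Σ|^t |S|^{−t}, Λ(t) is the log of the quantity calculated in Equation (3), taken at −t and multiplied by |Σ|^t,

$$\Lambda(t) = t\ln|\Sigma| + \ln E\left(|S|^{-t}\right), \qquad t < \frac{\nu - D + 1}{2}, \tag{5}$$

and can be expressed using Equation (2), leading to

$$\Lambda(t) = -Dt\ln 2 + \sum_{d=1}^{D}\left[\ln\Gamma\left(\frac{\nu+1-d}{2} - t\right) - \ln\Gamma\left(\frac{\nu+1-d}{2}\right)\right]. \tag{6}$$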

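Cumulants. By construction, the nth cumulant of U is given by κ_n = Λ^{(n)}(0), the nth derivative of Λ at 0. In the present case, κ_n can be obtained by direct differentiation of Equation (6), yielding for the cumulants

$$\kappa_1 = -D\ln 2 - \sum_{d=1}^{D}\psi\left(\frac{\nu+1-d}{2}\right) \tag{7}$$

and

$$\kappa_n = (-1)^n\sum_{d=1}^{D}\psi^{(n-1)}\left(\frac{\nu+1-d}{2}\right) \tag{8}$$

for n ≥ 2, where ψ is the digamma function, i.e., ψ(x) = d ln Γ(x)/dx, and ψ^{(n)} its nth derivative [11] (pp. 258–260). For any n ≥ 2, κ_n is always strictly positive. It is an increasing function of D and a decreasing function of ν, and it tends to 0 when ν tends to infinity. For a proof of these properties, see the Appendix.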

Central moments. Cumulants and central moments are related as follows: if we denote by μ, σ², γ, and γ_2 the mean, variance, skewness, and excess kurtosis of U, respectively, we have μ = κ_1, σ² = κ_2, γ = κ_3/κ_2^{3/2}, and γ_2 = κ_4/κ_2². Note that, by definition, μ is equal to the expression of Equation (7) and σ² to that of Equation (8) with n = 2.
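The exact cumulants (7) and (8) only involve polygamma functions. The Python standard library has no polygamma routine, so the sketch below implements one. This `polygamma` helper is our own, not a library API. It relies on the recurrence ψ^{(m)}(x + 1) = ψ^{(m)}(x) + (−1)^m m! x^{−m−1} and on the asymptotic series of [11]. `scipy.special.polygamma` could be used instead if SciPy is available. The sketch then returns μ, σ², γ and γ_2 from the relations above.

```python
import math

# Bernoulli numbers B_2, B_4, ..., B_12 used in the asymptotic series.
_B2K = [1 / 6, -1 / 30, 1 / 42, -1 / 30, 5 / 66, -691 / 2730]


def polygamma(m, x):
    """psi^{(m)}(x) for integer m >= 0 and real x > 0 (stdlib-only sketch)."""
    if x <= 0:
        raise ValueError("x must be positive")
    shift = 0.0
    # Recurrence: psi^{(m)}(x) = psi^{(m)}(x + 1) - (-1)^m m! / x^(m + 1)
    while x < 15.0:
        shift -= (-1) ** m * math.factorial(m) / x ** (m + 1)
        x += 1.0
    # Asymptotic series for large x (Abramowitz and Stegun 6.3.18 and 6.4.11)
    if m == 0:
        s = math.log(x) - 1 / (2 * x)
        for k, b in enumerate(_B2K, start=1):
            s -= b / (2 * k * x ** (2 * k))
    else:
        s = math.factorial(m - 1) / x ** m + math.factorial(m) / (2 * x ** (m + 1))
        for k, b in enumerate(_B2K, start=1):
            s += b * math.factorial(2 * k + m - 1) / (math.factorial(2 * k) * x ** (2 * k + m))
        s *= (-1) ** (m + 1)
    return shift + s


def cumulant(n, nu, D):
    """Exact n-th cumulant of U = ln|Sigma| - ln|S|, Equations (7) and (8)."""
    if nu <= D - 1:
        raise ValueError("need nu > D - 1")
    xs = [(nu + 1 - d) / 2 for d in range(1, D + 1)]
    if n == 1:
        return -D * math.log(2) - sum(polygamma(0, x) for x in xs)
    return (-1) ** n * sum(polygamma(n - 1, x) for x in xs)


def central_moments(nu, D):
    """Mean, variance, skewness and excess kurtosis of U from its cumulants."""
    k1, k2, k3, k4 = (cumulant(n, nu, D) for n in range(1, 5))
    return k1, k2, k3 / k2 ** 1.5, k4 / k2 ** 2
```

For example, with D = 1 and ν = 2, `cumulant(1, 2, 1)` equals 0.5772… − ln 2 ≈ −0.1159 (0.5772… being Euler's constant), and `cumulant(2, 2, 1)` equals π²/6.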

2.2. Asymptotic Expansion

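When ν is large, ψ can be approximated using the following asymptotic expansion [11] (p. 260):

$$\psi(x) = \ln x - \frac{1}{2x} - \frac{1}{12x^2} + O\left(x^{-4}\right),$$

where O refers to Landau notation and O(x^{−k}) stands for any function f for which there exists x_0 such that x^k f(x) is bounded for x ≥ x_0. This leads to

$$\psi\left(\frac{\nu+1-d}{2}\right) = \ln\frac{\nu}{2} - \frac{d}{\nu} - \frac{3d^2-1}{6\nu^2} + O\left(\nu^{-3}\right).$$

Incorporating this expansion in Equation (7) yields for the first cumulant or, equivalently, the mean μ

$$\mu = -D\ln\nu + \frac{D(D+1)}{2\nu} + \frac{D(2D^2+3D-1)}{12\nu^2} + O\left(\nu^{-3}\right). \tag{9}$$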
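To see how fast Equation (9) converges, the following sketch compares the exact mean (7), written for U = ln|Σ| − ln|S|, with the expansion truncated after the ν^{−1} term and after the ν^{−2} term. The `digamma` helper is our own (upward recurrence plus the asymptotic series), not a library function.

```python
import math


def digamma(x):
    """psi(x): upward recurrence, then ln x - 1/(2x) - 1/(12x^2) + 1/(120x^4) - 1/(252x^6)."""
    acc = 0.0
    while x < 10.0:
        acc -= 1.0 / x  # psi(x) = psi(x + 1) - 1/x
        x += 1.0
    inv2 = 1.0 / (x * x)
    return acc + math.log(x) - 0.5 / x - inv2 * (1 / 12 - inv2 * (1 / 120 - inv2 / 252))


def mean_exact(nu, D):
    """kappa_1 of Equation (7)."""
    return -D * math.log(2) - sum(digamma((nu + 1 - d) / 2) for d in range(1, D + 1))


def mean_asymptotic(nu, D, order=2):
    """Equation (9), truncated after the nu^-1 (order=1) or nu^-2 (order=2) term."""
    mu = -D * math.log(nu) + D * (D + 1) / (2 * nu)
    if order >= 2:
        mu += D * (2 * D ** 2 + 3 * D - 1) / (12 * nu ** 2)
    return mu
```

For D = 3, the error of the first truncation behaves like D(2D² + 3D − 1)/(12ν²) = 6.5/ν², while the second truncation leaves an error of order ν^{−3}.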

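For the cumulants and central moments of order 2 and higher, we use the following approximation [11] (p. 260), valid for m ≥ 1:

$$\psi^{(m)}(x) = (-1)^{m+1}\left[\frac{(m-1)!}{x^m} + \frac{m!}{2x^{m+1}} + O\left(x^{-m-2}\right)\right].$$

Each term in the sum of Equation (8) can therefore be approximated as

$$(-1)^n\psi^{(n-1)}\left(\frac{\nu+1-d}{2}\right) = \left(\frac{2}{\nu}\right)^{n-1}\left[(n-2)! + \frac{(n-1)!\,d}{\nu}\right] + O\left(\nu^{-n-1}\right),$$

leading to an approximation of κ_n of the form

$$\kappa_n = \left(\frac{2}{\nu}\right)^{n-1}\left[(n-2)!\,D + \frac{(n-1)!\,D(D+1)}{2\nu}\right] + O\left(\nu^{-n-1}\right).$$

Taking n equal to 2, 3, and 4 yields for the cumulants of order 2, 3, and 4, respectively,

$$\kappa_2 = \frac{2D}{\nu} + \frac{D(D+1)}{\nu^2} + O\left(\nu^{-3}\right), \tag{12}$$

$$\kappa_3 = \frac{4D}{\nu^2} + \frac{4D(D+1)}{\nu^3} + O\left(\nu^{-4}\right), \tag{13}$$

$$\kappa_4 = \frac{16D}{\nu^3} + \frac{24D(D+1)}{\nu^4} + O\left(\nu^{-5}\right).$$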

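We can now provide asymptotic approximations for the corresponding central moments. The variance is given by Equation (12). An approximation for the skewness can be obtained from Equations (12) and (13) as

$$\gamma = \sqrt{\frac{2}{D\nu}} + \frac{D+1}{2\sqrt{2D}}\,\nu^{-3/2} + O\left(\nu^{-5/2}\right).$$

Since γ is asymptotically positive, the distribution is skewed to the right. Finally, the approximation for γ_2 can be expressed as

$$\gamma_2 = \frac{4}{D\nu} + \frac{2(D+1)}{D\nu^2} + O\left(\nu^{-3}\right),$$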

which is asymptotically positive, corresponding to a leptokurtic distribution.
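A Monte Carlo check of the variance, skewness and excess-kurtosis expansions can be sketched as follows. The sketch assumes Bartlett's decomposition, so that U = −∑_d ln χ²_{ν+1−d} independently of Σ. It uses only the Python standard library, and the function names are illustrative.

```python
import math
import random


def simulate_u(nu, D, n_draws, seed=0):
    """Draws of U = ln|Sigma| - ln|S| for S ~ W_D(nu, Sigma).

    Assumes Bartlett's decomposition: ln|S| - ln|Sigma| is a sum of independent
    ln chi2_{nu+1-d}, d = 1, ..., D, so Sigma does not need to be specified.
    """
    rng = random.Random(seed)
    return [
        -sum(math.log(rng.gammavariate((nu + 1 - d) / 2, 2.0)) for d in range(1, D + 1))
        for _ in range(n_draws)
    ]


def sample_shape(xs):
    """Sample variance, skewness and excess kurtosis (moment estimators)."""
    n = len(xs)
    m = sum(xs) / n
    m2 = sum((x - m) ** 2 for x in xs) / n
    m3 = sum((x - m) ** 3 for x in xs) / n
    m4 = sum((x - m) ** 4 for x in xs) / n
    return m2, m3 / m2 ** 1.5, m4 / m2 ** 2 - 3.0


def asymptotic_shape(nu, D):
    """Variance, skewness and excess kurtosis from the expansions of Section 2.2."""
    var = 2 * D / nu + D * (D + 1) / nu ** 2
    skew = math.sqrt(2 / (D * nu)) + (D + 1) / (2 * math.sqrt(2 * D)) * nu ** -1.5
    kurt = 4 / (D * nu) + 2 * (D + 1) / (D * nu ** 2)
    return var, skew, kurt
```

With D = 2, ν = 20 and 10⁵ draws, the simulated skewness and excess kurtosis should come out positive and near the predicted values of about 0.23 and 0.11. Both signs match a right-skewed, leptokurtic distribution.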

2.3. Asymptotic Distribution of U

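We now use the previous results to prove that U is asymptotically normally distributed with mean κ_1 and variance κ_2. To this aim, set

$$\tilde{U} = \frac{U - a}{b},$$

with a = κ_1 and b = √κ_2. The logarithm of the characteristic function of Ũ reads

$$\ln\varphi_{\tilde{U}}(\omega) = \ln E\left(e^{i\omega\tilde{U}}\right) = -\frac{i\omega a}{b} + \ln\varphi_U\left(\frac{\omega}{b}\right),$$

where φ_U(ω) = E(e^{iωU}) = |Σ|^{iω} E(|S|^{−iω}) is the characteristic function of U. We proved Equation (3) as an identity for real t > −(ν − D + 1)/2. Since both sides are analytic functions of t in the half-plane Re(t) > −(ν − D + 1)/2, the identity remains valid for complex t in that half-plane. We can thus obtain an expression for φ_U by replacing t by −iω/b in Equation (3), leading to

$$\ln\varphi_{\tilde{U}}(\omega) = -\frac{i\omega}{b}\left(a + D\ln 2\right) + \sum_{d=1}^{D}\left[\ln\Gamma\left(x_d - \frac{i\omega}{b}\right) - \ln\Gamma\left(x_d\right)\right], \qquad x_d = \frac{\nu+1-d}{2}. \tag{16}$$

We then use Stirling's approximation [11] (p. 257)

$$\ln\Gamma(z) = \left(z - \frac{1}{2}\right)\ln z - z + \frac{1}{2}\ln 2\pi + O\left(z^{-1}\right)$$

to approximate each term of the sum in the second term of the right-hand side of Equation (16) when ν is large. Since b² = κ_2 = 2D/ν + O(ν^{−2}), the shift iω/b is of order ν^{1/2}, whereas x_d is of order ν, and we obtain

$$\ln\Gamma\left(x_d - \frac{i\omega}{b}\right) - \ln\Gamma\left(x_d\right) = -\frac{i\omega}{b}\ln x_d - \frac{\omega^2}{2b^2x_d} + O\left(\nu^{-1/2}\right).$$

Using a + D ln 2 = −∑_d ψ(x_d) (Equation (7)), ψ(x_d) − ln x_d = O(ν^{−1}), and ∑_d 1/x_d = 2D/ν + O(ν^{−2}), we consequently have for the characteristic function of Ũ

$$\ln\varphi_{\tilde{U}}(\omega) = \frac{i\omega}{b}\sum_{d=1}^{D}\left[\psi(x_d) - \ln x_d\right] - \frac{\omega^2}{2b^2}\sum_{d=1}^{D}\frac{1}{x_d} + O\left(\nu^{-1/2}\right) = -\frac{\omega^2}{2} + O\left(\nu^{-1/2}\right).$$

As ν tends to infinity, φ_Ũ(ω) converges pointwise to e^{−ω²/2}, which is continuous at ω = 0. According to Lévy's continuity theorem, Ũ therefore converges in distribution to the standard normal distribution N(0, 1).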
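The normal approximation of Section 2.3 can be illustrated by simulation. The sketch below standardizes simulated values of U with the asymptotic mean (9) and variance (12). It then compares the empirical distribution function with the standard normal one, computed with `math.erf`. Again, the sketch assumes Bartlett's decomposition to draw U, and the helper names are ours.

```python
import bisect
import math
import random


def standardized_u(nu, D, n_draws, seed=0):
    """Simulated (U - mu) / sigma, with mu from Equation (9) and sigma^2 from Equation (12).

    U = ln|Sigma| - ln|S| is drawn via Bartlett's decomposition as -sum_d ln chi2_{nu+1-d}.
    """
    mu = (-D * math.log(nu) + D * (D + 1) / (2 * nu)
          + D * (2 * D ** 2 + 3 * D - 1) / (12 * nu ** 2))
    sigma = math.sqrt(2 * D / nu + D * (D + 1) / nu ** 2)
    rng = random.Random(seed)
    out = []
    for _ in range(n_draws):
        u = -sum(math.log(rng.gammavariate((nu + 1 - d) / 2, 2.0)) for d in range(1, D + 1))
        out.append((u - mu) / sigma)
    return out


def max_cdf_gap(zs, grid=None):
    """Largest |empirical CDF - standard normal CDF| over a grid of points."""
    grid = grid or [k / 4 for k in range(-12, 13)]
    zs = sorted(zs)
    n = len(zs)
    gap = 0.0
    for z in grid:
        empirical = bisect.bisect_right(zs, z) / n
        normal = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
        gap = max(gap, abs(empirical - normal))
    return gap
```

With D = 3, the largest gap on the grid should shrink as ν grows from 10 to 200. This is consistent with the skewness of U decreasing like ν^{−1/2}.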

3. Application to Differential Entropy

We can use the results of the previous section to obtain the exact and asymptotic cumulants of the sample and posterior entropy when the data are multivariate normal.

3.1. Sampling Distribution

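The differential entropy of a D-dimensional random variable X that is normally distributed with (known) mean μ and (unknown) covariance matrix Σ is given by Equation (1). Let x_1, …, x_N be N independent and identically distributed realizations of X. Set S the sum of squares, i.e.,

$$S = \sum_{k=1}^{N}\left(x_k - \mu\right)\left(x_k - \mu\right)^{\mathsf{T}}. \tag{17}$$

S follows a Wishart distribution with N degrees of freedom and scale matrix Σ [12] (Th. 7.2.2). Define the sample differential entropy corresponding to the N realizations as h(S/N). Using the fact that |S/N| = N^{−D}|S|, we obtain that h(S/N) = h(Σ) − U/2 − (D/2) ln N, where U was defined in Equation (4) with ν = N. The mean and variance of h(S/N) can therefore be expressed as functions of the corresponding central moments of U, i.e., E[h(S/N)] = h(Σ) − κ_1/2 − (D/2) ln N [Equations (7) and (9)] and Var[h(S/N)] = κ_2/4 [Equations (8) and (12)], leading to the following closed-form expressions and approximations

$$E\left[h\left(\frac{S}{N}\right)\right] = h(\Sigma) + \frac{D}{2}\ln\frac{2}{N} + \frac{1}{2}\sum_{d=1}^{D}\psi\left(\frac{N+1-d}{2}\right) \tag{18}$$

$$E\left[h\left(\frac{S}{N}\right)\right] = h(\Sigma) - \frac{D(D+1)}{4N} - \frac{D(2D^2+3D-1)}{24N^2} + O\left(N^{-3}\right) \tag{19}$$

and

$$\operatorname{Var}\left[h\left(\frac{S}{N}\right)\right] = \frac{1}{4}\sum_{d=1}^{D}\psi'\left(\frac{N+1-d}{2}\right) \tag{20}$$

$$\operatorname{Var}\left[h\left(\frac{S}{N}\right)\right] = \frac{D}{2N} + \frac{D(D+1)}{4N^2} + O\left(N^{-3}\right).$$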

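Furthermore, the results of Section 2.3 show that, given N and Σ, h(S/N) is asymptotically normally distributed with mean h(Σ) − D(D+1)/(4N) and variance D/(2N). If μ is unknown, we replace μ by the sample mean m in Equation (17). S is then still Wishart distributed with scale matrix Σ, but with N − 1 degrees of freedom [12] (Cor. 7.2.2). The exact expectation and variance of h(S/N) are therefore given by Equations (18) and (20), respectively, where N is replaced by N − 1 in the arguments of ψ and ψ′. Performing an asymptotic expansion of these expressions leads to

$$E\left[h\left(\frac{S}{N}\right)\right] = h(\Sigma) - \frac{D(D+3)}{4N} + O\left(N^{-2}\right)$$

and

$$\operatorname{Var}\left[h\left(\frac{S}{N}\right)\right] = \frac{D}{2N} + \frac{D(D+3)}{4N^2} + O\left(N^{-3}\right).$$

Furthermore, since the leading-order terms of the mean and variance are the same whether μ is known or unknown, both versions of h(S/N) have the same asymptotic distribution.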
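The bias formulas of this section can be checked by simulating Gaussian data directly. The sketch below averages the plug-in estimator h(S/N) over repeated samples, with S computed either around the known mean or around the sample mean m. It uses the Python standard library only, and the Cholesky and entropy helpers are our own.

```python
import math
import random


def cholesky(a):
    """Lower-triangular Cholesky factor of a symmetric positive definite matrix."""
    n = len(a)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = a[i][j] - sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(s) if i == j else s / L[j][j]
    return L


def entropy(cov):
    """Equation (1), with ln|cov| computed as twice the sum of ln of the Cholesky diagonal."""
    D = len(cov)
    L = cholesky(cov)
    logdet = 2.0 * sum(math.log(L[i][i]) for i in range(D))
    return 0.5 * D * (1.0 + math.log(2.0 * math.pi)) + 0.5 * logdet


def mean_plugin_entropy(sigma, N, reps, known_mean=True, seed=0):
    """Monte Carlo average of h(S/N) over `reps` samples of size N from N(0, sigma)."""
    rng = random.Random(seed)
    D = len(sigma)
    L = cholesky(sigma)
    total = 0.0
    for _ in range(reps):
        xs = []
        for _ in range(N):
            z = [rng.gauss(0.0, 1.0) for _ in range(D)]
            xs.append([sum(L[i][k] * z[k] for k in range(i + 1)) for i in range(D)])
        # Centre on the true mean (0) or on the sample mean m.
        m = [0.0] * D if known_mean else [sum(x[i] for x in xs) / N for i in range(D)]
        S = [[sum((x[i] - m[i]) * (x[j] - m[j]) for x in xs) for j in range(D)]
             for i in range(D)]
        total += entropy([[s / N for s in row] for row in S])
    return total / reps
```

Consider Σ with entries 2, 0.6 and 1 and N = 20. The first average should then be close to h(Σ) − 0.075 − 0.003 ≈ h(Σ) − 0.078, as given by Equation (19). The second should be lower by roughly a further 0.05, in line with the expansion for unknown μ.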

3.2. Posterior Distribution

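With the same assumptions as above, and assuming a non-informative Jeffreys prior for Σ, i.e.,

$$p(\Sigma) \propto |\Sigma|^{-(D+1)/2},$$

the posterior distribution for Σ given the N realizations of X is inverse Wishart with n degrees of freedom and scale matrix S [13], where n = N when μ is known (and n = N − 1 when μ is unknown and S is computed around m). This implies that Σ^{−1}, the concentration matrix, is Wishart distributed with n degrees of freedom and scale matrix S^{−1}. The results of Section 3.1 therefore apply to Σ^{−1}. But, since |A^{−1}| = |A|^{−1} for any invertible matrix A, we have that ln|Σ| − ln|S| is equal to ln|S^{−1}| − ln|Σ^{−1}| or, equivalently, to a variable distributed as U in Equation (4) with ν = n. As a consequence, h(Σ) = h(S/N) + (D/2) ln N + U/2, and

$$E\left[h(\Sigma) \mid x_1, \ldots, x_N\right] = h\left(\frac{S}{N}\right) + \frac{D}{2}\ln\frac{N}{2} - \frac{1}{2}\sum_{d=1}^{D}\psi\left(\frac{n+1-d}{2}\right)$$

$$E\left[h(\Sigma) \mid x_1, \ldots, x_N\right] = h\left(\frac{S}{N}\right) + \frac{D}{2}\ln\frac{N}{n} + \frac{D(D+1)}{4n} + \frac{D(2D^2+3D-1)}{24n^2} + O\left(n^{-3}\right) \tag{23}$$

and

$$\operatorname{Var}\left[h(\Sigma) \mid x_1, \ldots, x_N\right] = \frac{1}{4}\sum_{d=1}^{D}\psi'\left(\frac{n+1-d}{2}\right) = \frac{D}{2n} + \frac{D(D+1)}{4n^2} + O\left(n^{-3}\right).$$

Also, the posterior distribution of h(Σ) is asymptotically normal with mean h(S/N) + (D/2) ln(N/n) + D(D+1)/(4n) and variance D/(2n).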
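Posterior draws of h(Σ) follow from Bartlett's decomposition applied to Σ^{−1} ∼ W_D(n, S^{−1}), which gives ln|Σ| = ln|S| − ∑_d ln χ²_{n+1−d}. The sketch below only needs the plug-in value h(S/N) and uses this identity to compare the Monte Carlo posterior mean and variance with Equation (23) and the variance expansion above. The function names and the use of Bartlett's decomposition are our own choices.

```python
import math
import random


def posterior_entropy_draws(h_plugin, N, n, D, n_draws, seed=0):
    """Posterior draws of h(Sigma) given the plug-in value h(S/N).

    Assumes Sigma^{-1} ~ W_D(n, S^{-1}) and Bartlett's decomposition, so that
    ln|Sigma| = ln|S| - sum_d ln chi2_{n+1-d} and therefore
    h(Sigma) = h(S/N) + (D/2) ln N - (1/2) sum_d ln chi2_{n+1-d}.
    """
    rng = random.Random(seed)
    draws = []
    for _ in range(n_draws):
        s = sum(math.log(rng.gammavariate((n + 1 - d) / 2, 2.0)) for d in range(1, D + 1))
        draws.append(h_plugin + 0.5 * D * math.log(N) - 0.5 * s)
    return draws


def posterior_mean_asymptotic(h_plugin, N, n, D):
    """Equation (23), keeping terms up to order n^-2."""
    return (h_plugin + 0.5 * D * math.log(N / n) + D * (D + 1) / (4 * n)
            + D * (2 * D ** 2 + 3 * D - 1) / (24 * n ** 2))


def posterior_var_asymptotic(n, D):
    """Posterior variance D/(2n) + D(D+1)/(4n^2)."""
    return D / (2 * n) + D * (D + 1) / (4 * n ** 2)
```

With D = 3, N = n = 50 and h(S/N) = 1, Equation (23) gives a posterior mean of about 1.061. In other words, the plug-in value is shifted upward by roughly D(D+1)/(4n) = 0.06.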

4. Application to Mutual Information and Multiinformation

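Similar results can also be derived for the first cumulant (i.e., the mean) of mutual information and of multiinformation, its generalization to more than two variables. The mutual information between two sets of variables X_1 (of dimension D_1) and X_2 (of dimension D_2), with D_1 + D_2 = D, is defined as

$$i(X_1, X_2) = h(X_1) + h(X_2) - h(X_1, X_2).$$

For multivariate normal variables, we have

$$i(\Sigma) = h(\Sigma_{11}) + h(\Sigma_{22}) - h(\Sigma) = \frac{1}{2}\ln\frac{|\Sigma_{11}|\,|\Sigma_{22}|}{|\Sigma|}, \tag{24}$$

where Σ_11 and Σ_22 are the two diagonal blocks of Σ and where h was defined in Equation (1).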

4.1. Sampling Mean

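Define the sample mutual information as i(S/N). Using Equation (24), direct calculation shows that

$$E\left[i\left(\frac{S}{N}\right)\right] = E\left[h\left(\frac{S_{11}}{N}\right)\right] + E\left[h\left(\frac{S_{22}}{N}\right)\right] - E\left[h\left(\frac{S}{N}\right)\right],$$

where S_11 and S_22 are the diagonal blocks of S corresponding to X_1 and X_2.

An asymptotic approximation for E[h(S/N)] can be obtained by direct use of Equation (19). For E[h(S_11/N)] and E[h(S_22/N)], we proceed as follows. If S is Wishart distributed with N degrees of freedom and scale matrix Σ, then S_kk (k = 1, 2) is also Wishart distributed with N degrees of freedom and scale matrix Σ_kk [12] (Th. 7.3.4). Equation (19) can therefore be applied to matrix S_kk with the proper scale matrix, yielding

$$E\left[h\left(\frac{S_{kk}}{N}\right)\right] = h(\Sigma_{kk}) - \frac{D_k(D_k+1)}{4N} - \frac{D_k(2D_k^2+3D_k-1)}{24N^2} + O\left(N^{-3}\right).$$

E[i(S/N)] consequently reads

$$E\left[i\left(\frac{S}{N}\right)\right] = i(\Sigma) + \frac{D_1D_2}{2N} + \frac{D_1D_2(D+1)}{4N^2} + O\left(N^{-3}\right).$$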

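A similar result can be obtained for the generalization of i to K sets of variables X_1, …, X_K (of size D_k, with ∑_k D_k = D), a measure called total correlation [14], multivariate constraint [15], δ [16], or multiinformation [17], defined as i(Σ) = ∑_{k=1}^{K} h(Σ_kk) − h(Σ). In that case, we have

$$E\left[i\left(\frac{S}{N}\right)\right] = i(\Sigma) + \frac{D^2 - \sum_{k=1}^{K}D_k^2}{4N} + O\left(N^{-2}\right)$$

and, in the particular case where each X_k is one-dimensional (i.e., D_k = 1 and K = D),

$$E\left[i\left(\frac{S}{N}\right)\right] = i(\Sigma) + \frac{D(D-1)}{4N} + O\left(N^{-2}\right).$$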
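The first-order bias (D² − ∑_k D_k²)/(4N) is easy to tabulate. For two independent scalar variables, it can also be checked by simulation. With D_1 = D_2 = 1, Σ = I and a known zero mean, i(S/N) reduces to −½ ln(1 − r²), where r is the uncentred sample correlation. The helper names below are illustrative, and the sketch uses the Python standard library only.

```python
import math
import random


def multiinfo_bias_first_order(block_sizes, N):
    """First-order bias (D^2 - sum_k D_k^2) / (4N) of the sample multiinformation."""
    D = sum(block_sizes)
    return (D * D - sum(dk * dk for dk in block_sizes)) / (4 * N)


def mean_sample_mi_independent_pair(N, reps, seed=0):
    """Monte Carlo mean of i(S/N) for two independent standard normal scalars (i(Sigma) = 0).

    For D_1 = D_2 = 1 and known zero mean, i(S/N) = -ln(1 - r^2)/2, where r is
    the uncentred sample correlation S_12 / sqrt(S_11 S_22).
    """
    rng = random.Random(seed)
    total = 0.0
    for _ in range(reps):
        s11 = s22 = s12 = 0.0
        for _ in range(N):
            x, y = rng.gauss(0.0, 1.0), rng.gauss(0.0, 1.0)
            s11 += x * x
            s22 += y * y
            s12 += x * y
        r2 = s12 * s12 / (s11 * s22)
        total += -0.5 * math.log(1.0 - r2)
    return total / reps
```

With N = 30, the simulated mean should be close to 1/60 ≈ 0.017, the value D_1D_2/(2N) given above. The second-order term adds about 0.0008.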

4.2. Posterior Mean

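A similar argument can be applied to the Bayesian posterior mean of i. Using Equation (24) again, we have

$$E\left[i(\Sigma) \mid x_1,\ldots,x_N\right] = E\left[h(\Sigma_{11}) \mid x_1,\ldots,x_N\right] + E\left[h(\Sigma_{22}) \mid x_1,\ldots,x_N\right] - E\left[h(\Sigma) \mid x_1,\ldots,x_N\right].$$

An asymptotic approximation for E[h(Σ) | x_1, …, x_N] can be obtained by direct use of Equation (23). Now, if Σ is inverse Wishart distributed with n degrees of freedom and scale matrix S, then Σ_kk (k = 1, 2) is also inverse Wishart distributed with n − D + D_k degrees of freedom and scale matrix S_kk [18]. Application of Equation (23) with the proper degrees of freedom and scale matrix leads to

$$E\left[h(\Sigma_{kk}) \mid x_1,\ldots,x_N\right] = h\left(\frac{S_{kk}}{N}\right) + \frac{D_k}{2}\ln\frac{N}{n - D + D_k} + \frac{D_k(D_k+1)}{4n} + O\left(n^{-2}\right),$$

where we only retained the expansion terms of order up to O(n^{−1}) for the sake of simplicity. E[i(Σ) | x_1, …, x_N] consequently reads

$$E\left[i(\Sigma) \mid x_1,\ldots,x_N\right] = i\left(\frac{S}{N}\right) + \frac{D_1D_2}{2n} + O\left(n^{-2}\right).$$

For posterior multiinformation, we have

$$E\left[i(\Sigma) \mid x_1,\ldots,x_N\right] = i\left(\frac{S}{N}\right) + \frac{D^2 - \sum_{k=1}^{K}D_k^2}{4n} + O\left(n^{-2}\right)$$

and, in the particular case where each X_k is one-dimensional (i.e., D_k = 1 and K = D),

$$E\left[i(\Sigma) \mid x_1,\ldots,x_N\right] = i\left(\frac{S}{N}\right) + \frac{D(D-1)}{4n} + O\left(n^{-2}\right).$$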

5. Simulation Study

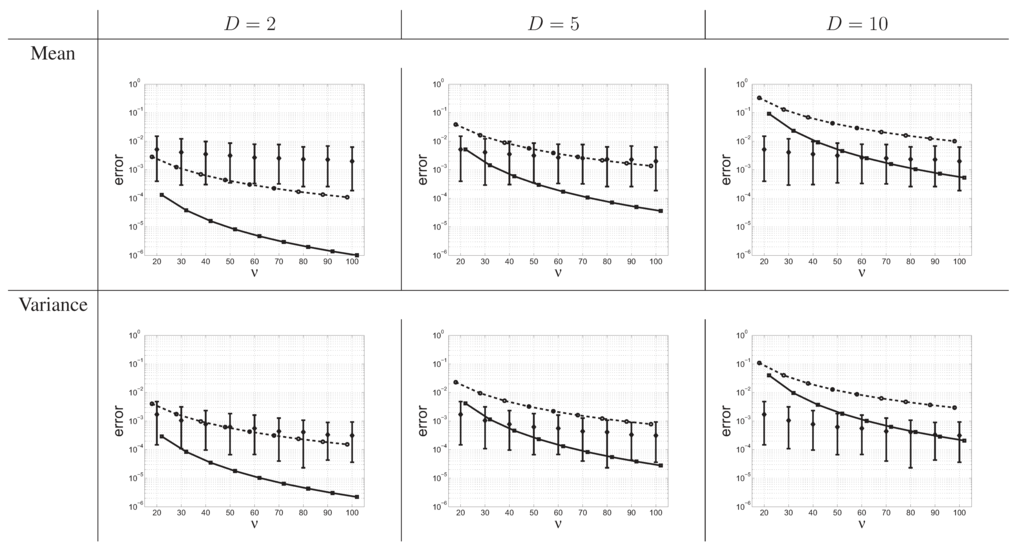

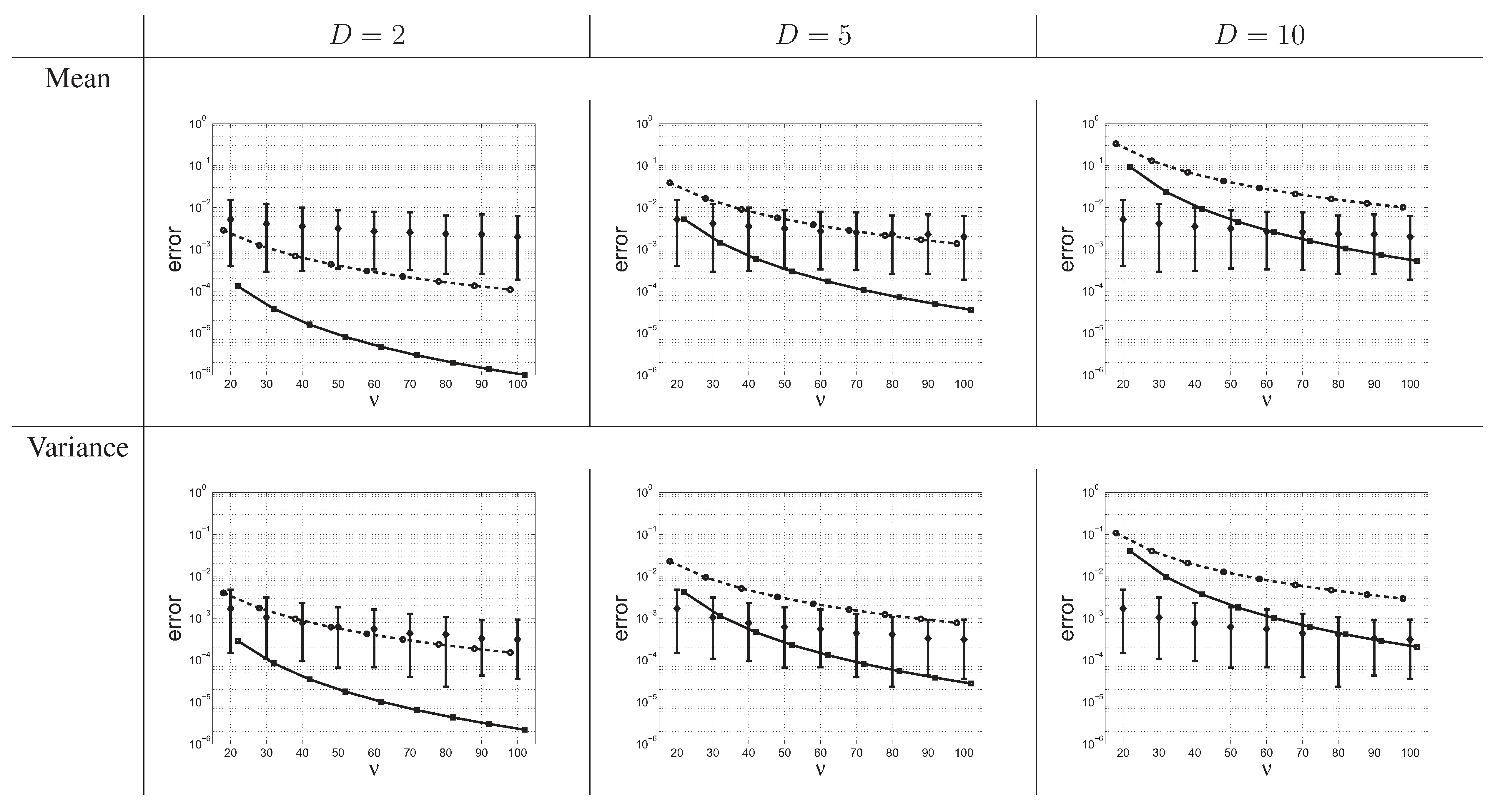

We conducted the following computations for the values of D shown in Figure 1. To assess the accuracy of the asymptotic expansion of the cumulants of sample entropy, we calculated, as a function of ν, the error made by the first- and second-order approximations of the first two moments (i.e., the mean and variance of the distribution) compared to their exact values. For comparison, we computed the same quantities by sampling for 500 different homogeneous positive definite matrices Σ (i.e., with all off-diagonal elements equal to the same value ρ, generated uniformly). For each value of Σ and ν, we generated 1,000 samples of S, computed the corresponding values of sample entropy, and approximated the moments by the corresponding sample moments. The results are reported in Figure 1.
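The exact mean offset E[h(S/ν)] − h(Σ) and the exact variance (Equations (18) and (20), with N = ν) do not depend on Σ. The error curves of the approximations can therefore be computed without sampling, as in the sketch below. We take the first-order approximations to be −D(D+1)/(4ν) for the mean offset and D/(2ν) for the variance. The second-order approximations add the ν^{−2} terms of the corresponding expansions. This reading of "first" and "second order" is our assumption. The sampling arm of the study (500 matrices Σ, 1,000 draws each) is not reproduced, and the `digamma` and `trigamma` helpers are our own.

```python
import math


def digamma(x):
    """psi(x): upward recurrence, then ln x - 1/(2x) - 1/(12x^2) + 1/(120x^4) - 1/(252x^6)."""
    acc = 0.0
    while x < 10.0:
        acc -= 1.0 / x
        x += 1.0
    inv2 = 1.0 / (x * x)
    return acc + math.log(x) - 0.5 / x - inv2 * (1 / 12 - inv2 * (1 / 120 - inv2 / 252))


def trigamma(x):
    """psi'(x): upward recurrence, then 1/x + 1/(2x^2) + 1/(6x^3) - 1/(30x^5) + 1/(42x^7)."""
    acc = 0.0
    while x < 10.0:
        acc += 1.0 / (x * x)  # psi'(x) = psi'(x + 1) + 1/x^2
        x += 1.0
    inv = 1.0 / x
    inv2 = inv * inv
    return acc + inv + 0.5 * inv2 + inv * inv2 * (1 / 6 - inv2 * (1 / 30 - inv2 / 42))


def entropy_moment_errors(nu, D):
    """Errors of the approximations of E[h(S/nu)] - h(Sigma) and Var[h(S/nu)].

    Returns (mean error 1st order, mean error 2nd order,
             variance error 1st order, variance error 2nd order).
    """
    xs = [(nu + 1 - d) / 2 for d in range(1, D + 1)]
    bias = 0.5 * D * math.log(2 / nu) + 0.5 * sum(digamma(x) for x in xs)  # Eq. (18), N = nu
    var = 0.25 * sum(trigamma(x) for x in xs)                               # Eq. (20), N = nu
    bias1 = -D * (D + 1) / (4 * nu)
    bias2 = bias1 - D * (2 * D ** 2 + 3 * D - 1) / (24 * nu ** 2)
    var1 = D / (2 * nu)
    var2 = var1 + D * (D + 1) / (4 * nu ** 2)
    return abs(bias - bias1), abs(bias - bias2), abs(var - var1), abs(var - var2)
```

For D = 3 and ν = 50, the first-order error on the mean is about 1.3 × 10⁻³ and the second-order error about 4 × 10⁻⁵.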

6. Discussion

In this work, we calculated both the moments of |S| and the cumulant-generating function of U = ln|Σ| − ln|S| when S is Wishart distributed with ν degrees of freedom and scale matrix Σ. From there, we provided asymptotic approximations of the first four central moments of U. We also proved that U is asymptotically normally distributed. We then assessed the quality of the asymptotic approximations of the mean and variance by comparison with simulations. We finally applied these results to the multivariate normal distribution to provide asymptotic approximations of the sampling and posterior distributions of differential entropy, as well as asymptotic approximations of the sampling and posterior means of multiinformation.

Interestingly, the moments of |S|/|Σ| and, as a consequence, the cumulant-generating function of U depend on the distribution of S only through the matrix dimension D and the number of degrees of freedom ν, but not through Σ. This means that the exact distribution of U is also independent of Σ and could be tabulated as a function of these two integer parameters.

As mentioned in the introduction, the sample differential entropy h(S/N), obtained by plugging S/N into Equation (1), is the plug-in estimator for differential entropy. In the case of multivariate normal samples, the present work quantifies the well-known negative bias of this estimator [7]. Equation (18) also shows that removing this bias exactly leads to the uniformly minimum variance unbiased (UMVU) estimator [1].

The posterior derivation that we presented here is a particular case of the Bayesian posterior estimate obtained by [3], with, in our case, the prior distribution for Σ taken as the Jeffreys prior (i.e., with the prior hyperparameters of [3] set accordingly). The same analysis as in [3] could have been performed, but it would essentially lead to the same result. We only consider the asymptotic case, where the sample is large and the prior distribution is expected to have very little influence, provided that it does not contradict the data. The present study also reveals an interesting feature of Bayesian estimation with respect to the above-mentioned negative bias. The sample differential entropy tends to underestimate h(Σ) by approximately D(D+1)/(4N). Taking the posterior mean as the Bayesian estimate of h(Σ) compensates for this negative bias with a term of the same magnitude and opposite sign (for n = N).

Figure 1.

Error on the mean (top row) and variance (bottom row) of sample entropy for various values of D and ν when using the first-order approximation (circles), the second-order approximation (squares), or the sampling scheme (diamonds). The error was calculated as the absolute value of the difference between the approximation and the true value. For the sampling scheme, the median and the symmetric 90% probability interval of the error are shown. The y-axis scale is logarithmic.


We were also able to obtain asymptotic approximations of the sampling and posterior expectations of mutual information and multiinformation. Contrary to the general argument developed by [7], we proved that, for multivariate normal distributions, the negative bias for differential entropy does entail a positive bias for mutual information. This result agrees with the behavior of 2N i(S/N) under the null hypothesis of a block-diagonal Σ (Σ_12 = 0), corresponding to i(Σ) = 0. Under this hypothesis, 2N i(S/N) is asymptotically chi-square distributed with D_1D_2 degrees of freedom and, hence, has an expectation asymptotically equal to that value [19] (pp. 306–307). Surprisingly, and unlike what was said for entropy, the positive bias of the sample multiinformation was not corrected by the Bayesian approach. A naive correction, subtracting the positive bias from the sample multiinformation, could lead to negative values, which is impossible by construction of multiinformation. Note that, using the present results alone, we were not able to obtain an asymptotic approximation for the variance of these measures.

In the present paper, we used loose versions of the inequalities proposed in [20] to prove the monotonicity and sign of the cumulants of U (see Section 2.1 and Appendix). Note that, using the same inequalities, it seems that it would also be possible to obtain lower and upper bounds for these quantities, instead of asymptotic approximations. These bounds would be useful complements to the approximations provided in the present manuscript.

Acknowledgements

The authors are grateful to Pierre Bellec for helpful discussions.

References

- Ahmed, N.A.; Gokhale, D.V. Entropy expressions and their estimators for multivariate distributions. IEEE Trans. Inform. Theory 1989, 35, 688–692.

- Misra, N.; Singh, H.; Demchuk, E. Estimation of the entropy of a multivariate normal distribution. J. Multivariate Anal. 2005, 92, 324–342.

- Gupta, M.; Srivastava, S. Parametric Bayesian estimation of differential entropy and relative entropy. Entropy 2010, 12, 818–843.

- Beirlant, J.; Dudewicz, E.J.; Györfi, L.; van der Meulen, E.C. Nonparametric entropy estimation: An overview. Int. J. Math. Statist. Sci. 1997, 6, 17–39.

- Strong, S.P.; Koberle, R.; de Ruyter van Steveninck, R.R.; Bialek, W. Entropy and information in neural spike trains. Phys. Rev. Lett. 1998, 80, 197–200.

- Antos, A.; Kontoyiannis, I. Convergence properties of functional estimates for discrete distributions. Random Struct. Algor. 2001, 19, 163–193.

- Paninski, L. Estimation of entropy and mutual information. Neural Comput. 2003, 15, 1191–1253.

- Wolpert, D.H.; Wolf, D.R. Estimating functions of probability distributions from a finite set of samples. Phys. Rev. E 1995, 52, 6841–6854.

- Wolpert, D.H.; Wolf, D.R. Erratum: Estimating functions of probability distributions from a finite set of samples. Phys. Rev. E 1996, 54, 6973.

- Anderson, T.W. An Introduction to Multivariate Statistical Analysis; John Wiley and Sons: New York, NY, USA, 1958.

- Abramowitz, M.; Stegun, I.A. Handbook of Mathematical Functions; Applied Mathematics Series 55; National Bureau of Standards: Washington, DC, USA, 1972.

- Anderson, T.W. An Introduction to Multivariate Statistical Analysis, 3rd ed.; Series in Probability and Mathematical Statistics; John Wiley and Sons: New York, NY, USA, 2003.

- Gelman, A.; Carlin, J.B.; Stern, H.S.; Rubin, D.B. Bayesian Data Analysis; Texts in Statistical Science; Chapman & Hall: London, UK, 1998.

- Watanabe, S. Information theoretical analysis of multivariate correlation. IBM J. Res. Dev. 1960, 4, 66–82.

- Garner, W.R. Uncertainty and Structure as Psychological Concepts; John Wiley & Sons: New York, NY, USA, 1962.

- Joe, H. Relative entropy measures of multivariate dependence. J. Am. Statist. Assoc. 1989, 84, 157–164.

- Studený, M.; Vejnarová, J. The multiinformation function as a tool for measuring stochastic dependence. In Proceedings of the NATO Advanced Study Institute on Learning in Graphical Models; Jordan, M.I., Ed.; MIT Press: Cambridge, MA, USA, 1998; pp. 261–298.

- Press, S.J. Applied Multivariate Analysis. Using Bayesian and Frequentist Methods of Inference, 2nd ed.; Dover: Mineola, NY, USA, 2005.

- Kullback, S. Information Theory and Statistics; Dover: Mineola, NY, USA, 1968.

- Chen, C.P. Inequalities for the polygamma functions with application. Gener. Math. 2005, 13, 65–72.

Appendix

Results Regarding the Cumulants

The proofs differ for n = 2 and n ≥ 3. Throughout, x_d = (ν + 1 − d)/2.

1. Results for n = 2

For n = 2, set f = κ_2 = ∑_{d=1}^{D} ψ′(x_d), as defined in Equation (8).

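Result 1: f is a decreasing function of ν. Differentiation of f with respect to ν leads to

$$\frac{\partial f}{\partial\nu} = \frac{1}{2}\sum_{d=1}^{D}\psi''(x_d). \tag{25}$$

We use the following inequality [20], valid for x > 0 and k ≥ 1:

$$\frac{(k-1)!}{x^k} + \frac{k!}{2x^{k+1}} < (-1)^{k+1}\psi^{(k)}(x) < \frac{(k-1)!}{x^k} + \frac{k!}{x^{k+1}}.$$

For k = 2, this implies that

$$\psi''(x) < -\frac{1}{x^2} - \frac{1}{x^3}.$$

For x > 0, we have 1/x² + 1/x³ > 0. Consequently, each term in the sum of Equation (25) is strictly negative, and so is ∂f/∂ν. f is therefore a strictly decreasing function of ν.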

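Result 2: f is an increasing function of D. We have

$$f(D+1) - f(D) = \psi'\left(\frac{\nu - D}{2}\right).$$

Using the following inequality [20]

$$\frac{1}{x} + \frac{1}{2x^2} < \psi'(x) < \frac{1}{x} + \frac{1}{x^2},$$

we obtain that ψ′(x) > 1/x + 1/(2x²), leading to

$$\psi'\left(\frac{\nu-D}{2}\right) > \frac{2}{\nu-D} + \frac{2}{(\nu-D)^2}.$$

Since ν > D (a necessary condition for the Wishart distribution in dimension D + 1), we have

$$\frac{2}{\nu-D} + \frac{2}{(\nu-D)^2} > 0$$

and, therefore, f(D + 1) > f(D).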

Result 3: f is positive. f is the sum of the terms ψ′(x_d), which are strictly positive (cf. previous paragraph); it is thus strictly positive.

Result 4: f tends to infinity as D increases.

From the proof of Result 2, we have

$$f > \sum_{d=1}^{D}\frac{2}{\nu+1-d},$$

which tends to infinity when D tends to infinity (e.g., with ν − D held fixed).

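Result 5: f tends to 0 as ν increases. We use the following inequality [20]

$$\psi'(x) < \frac{1}{x} + \frac{1}{x^2}.$$

This implies that

$$\psi'(x_d) < \frac{2}{\nu+1-d} + \frac{4}{(\nu+1-d)^2}.$$

Since ν + 1 − d ≥ ν + 1 − D > 0 for d ≤ D, we have

$$\psi'(x_d) < \frac{2}{\nu+1-D} + \frac{4}{(\nu+1-D)^2}.$$

Summing over d yields

$$0 < f < \frac{2D}{\nu+1-D} + \frac{4D}{(\nu+1-D)^2},$$

which tends to 0 when ν increases.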

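2. Results for n ≥ 3

Define κ_n as in Equation (8). The function (−1)^n ψ^{(n−1)} is completely monotonic on (0, ∞); in particular, its derivative is negative. As a consequence, κ_n is a decreasing function of ν. We also use the following inequality [20], valid for x > 0:

$$\frac{(n-2)!}{x^{n-1}} + \frac{(n-1)!}{2x^n} < (-1)^n\psi^{(n-1)}(x) < \frac{(n-2)!}{x^{n-1}} + \frac{(n-1)!}{x^n}.$$

This implies that each term (−1)^n ψ^{(n−1)}(x_d) of κ_n is strictly positive and, as a consequence, that κ_n is an increasing function of D. It also implies that κ_n tends to 0 as ν tends to infinity.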

© 2011 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution license (http://creativecommons.org/licenses/by/3.0/).