1. Introduction

Deep neural networks (DNNs) are now widely used for image identification, semantic segmentation, and audio recognition [1, 2, 3]. DNNs require large computational resources for training and inference, leading to increased interest in hardware acceleration. Hardware accelerators including graphics processing units (GPUs), field-programmable gate arrays (FPGAs), and custom application-specific integrated circuits (ASICs) have been widely adopted to meet these needs and improve performance [4, 5, 6, 7, 8, 9, 10, 11, 12, 13, 14, 15]. Despite these advancements, DNN designers continue to face critical challenges due to the rapidly increasing complexity of modern models. Convolutional neural networks (CNNs) in particular are evolving toward deeper and more complex topologies [16]. The resulting growth in parameter count imposes significant computational, memory, and storage overheads, which hampers scalability and efficient deployment.

To overcome these constraints, extensive research has focused on compressing DNN models by removing redundant synapses and neurons. Early research mostly focused on unstructured pruning algorithms that remove weights at a fine-grained level, such as individual connections or pixels [14, 17, 18, 19, 20]. These techniques can achieve exceptionally high sparsity levels, often exceeding 90% [21], making them appealing for reducing on-chip storage requirements in hardware accelerators. However, increased sparsity does not guarantee proportional performance gains. Unstructured pruning raises additional concerns, including encoding and indexing complexity, workload imbalance, and poor data locality. Previous research indicates that skewed sparsity distributions can degrade execution performance [22].

To circumvent these limitations, recent research has increasingly shifted toward structured pruning techniques [22, 23, 24]. In contrast to unstructured pruning, structured pruning methods, which are more aligned with software-driven design processes, remove connections according to regular, predefined sparsity patterns. Typically, these solutions start with mathematically constrained sparsity patterns and then create specialized hardware to perform the necessary transformations. These techniques typically trade flexibility for regularity and computing efficiency, and they do not fully exploit current hardware advancements. As a result, achievable sparsity is typically limited to roughly 50%. Furthermore, the required mathematical transformations introduce additional hardware overhead.

A representative example is CirCNN [24], which employs block-circulant weight matrices and achieves only 60.5% sparsity for AlexNet. CirCNN’s computation is based on fast Fourier transform operations, which are computationally costly and require increased circuit area. Furthermore, software-driven structured pruning approaches display limited scalability when applied to current CNNs with complicated topologies, owing mostly to increased training difficulty. For instance, CirCNN has shown success only on relatively small-scale networks such as LeNet and AlexNet [24]. Similarly, PERMDNN [13] is restricted to fully connected layers and lacks validation on contemporary CNN architectures such as ResNet.

Existing security measures are either resource-intensive or lack user-friendliness, thereby hindering their adoption. To address these issues, we propose a novel approach to improve IoT security while reducing power consumption by combining optimization methods, user-centered design, and least weighted curve cryptography (LWECC). This research makes several important contributions to the field of IoT security. To enable successful operation within resource constraints, we first introduce an optimization strategy that reduces the computational load on IoT devices. Second, by applying basic design concepts, our solution makes it easy for end users to manage and monitor the security of their IoT devices. Finally, we use least weighted curve cryptography, a cryptographic method that decreases attack vulnerability. Together, these contributions form a comprehensive strategy for addressing security concerns in IoT devices. To achieve our goals, we identify three major objectives that highlight the proposed approach’s relevance to real-time design considerations:

The primary objective is to improve the security of IoT devices by using a cryptographic approach based on least weighted curve cryptography. This includes selecting strong elliptic curves and optimizing cryptographic algorithms to reduce vulnerabilities and improve overall data and communication security in IoT networks.

The second objective is to improve resource usage in IoT devices by lowering the computational and power overheads associated with cryptographic operations. By simplifying these procedures, IoT devices can function more energy-efficiently in resource-constrained contexts while maintaining security.

The final objective is to simplify the implementation and management of security mechanisms for IoT device users. This involves designing an intuitive framework that enables end-users to easily configure and monitor device security settings, thereby encouraging wider adoption of enhanced security practices.

Although the primary goal of this work is to enhance IoT security using LWECC, the proposed system interacts directly with compressed CNN workloads that operate on resource-constrained devices. CNNs generate a significant number of intermediate feature maps, which must be stored or routed across on-chip modules and frequently contain sensitive user-specific information. To reduce bandwidth and memory footprint, we use OMNI-based structural sparsity and memory partitioning. OMNI’s compressed CNN tensors determine access patterns, bank conflicts, and multi-bank fetch behavior for the LWECC-secured memory and router. OMNI is the computational front-end, whereas LWECC handles data securely and efficiently. This creates a cohesive pipeline rather than two separate subsystems.

2. Literature Survey

X-Former is a hybrid accelerator that combines non-volatile memory and CMOS computing units to improve transformer model execution via concurrent computation. Performance assessments show up to 69.8× and 13× improvements in latency and energy, respectively, compared to a GTX 1060 GPU, as well as up to 24.1× and 7.95× improvements over cutting-edge NVM-based accelerators [1]. Similarly, DeepFire2 introduces a novel multi-die FPGA-based architecture that significantly improves performance and energy efficiency by improving resource allocation and parallelism for spiking neural networks (SNNs). This solution improves performance by roughly an order of magnitude over previous implementations and supports large-scale ImageNet models while maintaining throughput beyond 1500 frames per second [2].

Recent work on LSTM acceleration proposes a hardware design that combines serial-parallel computation with matrix algebra techniques to achieve an average latency of 114 µs and a throughput of 1.8 GOPS. Implemented on the Xilinx PYNQ-Z1 platform and validated using time-series datasets, the design targets low-power edge applications and consumes only 0.438 W [3]. In hardware security, an automated system is presented to explore the design space of FPGA hardware Trojans by inserting them into synthesised netlists. The framework assesses the impact of soft-template, monolithic, and distributed dark-silicon Trojans on resource utilization, latency, power consumption, and detectability [4].

In [5], the authors present a cryptographic System-on-Chip (SoC) architecture incorporating a Virtual Prototype (VP) within a RISC-V-based design to accelerate functional and performance simulation. The proposed approach achieves simulation speedups ranging from 10× to 450× compared to RTL simulation, while maintaining a low simulation error of approximately 4%. Additionally, the architecture supports a flexible AHB-TLM interface and an accurate core timing model, enabling efficient and scalable SoC-level validation.

Hardware acceleration for cryptography and security has gained prominence with the emergence of post-quantum algorithms. In [6], a compact polynomial multiplier accelerator (COPMA) is proposed for LWR-based PQC, particularly targeting SABER, achieving high performance with reduced area. Similarly, ref. [10] presents a software–hardware co-design for lattice-based partially homomorphic encryption (PHE) using ARM-SoCs and FPGAs, demonstrating improved throughput and resource efficiency for general and IoT-oriented applications.

Significant attempts have been made to accelerate deep neural networks (DNNs) and convolutional neural networks (CNNs). In [7], a fault-aware weight retuning strategy improves the robustness of approximate DNN accelerators over various datasets by addressing persistent defects. In [8], weight-, input-, and output-stationary dataflows are compared based on memory access patterns to investigate the architectural design space of CNN accelerators. In [13], FPGA-based CNN acceleration for YOLOv3-tiny is presented, utilizing incremental operations and the Winograd method to achieve high throughput and energy efficiency. To address training accuracy under reduced precision, ref. [14] introduces the LNS-Madam method, combining logarithmic number systems with multiplicative weight updates.

Memory-centric and emerging computing paradigms are explored to overcome data-movement bottlenecks. In [9], a programmable compute-in-memory (CIM) accelerator is proposed for CNNs, LSTMs, and transformers, emphasizing scalability and system-level efficiency. Noise resilience in non-volatile memory (NVM)-based analog DNN hardware is investigated in [19], showing improved robustness through adversarial training. Additionally, ref. [12] develops superconducting single-flux quantum (SFQ) circuits for binarized neural networks, achieving high efficiency and low memory overhead at cryogenic temperatures.

Acceleration of large-scale data-intensive workloads is addressed in [11], where a SmartSSD-based computational storage platform improves the performance of HNSW-based nearest-neighbor search. Communication challenges in large-scale graph convolutional networks (GCNs) are analyzed in [15], leading to the MultiGCN system that prioritizes network bandwidth for improved scalability. Sparse transformer acceleration is presented in [17], introducing an N:M sparse transformer accelerator using sparsity inheritance and dynamic pruning with a modular hardware design.

Beyond neural networks, several domain-specific accelerators have been proposed. Fast accumulation architectures for DSP systems are introduced in [18], reducing delay and area. An energy-efficient accelerator for SIFT feature extraction is presented in [16], achieving high-speed operation with low power consumption. Dynamic inference acceleration using region-of-interest adaptation is proposed in [20], outperforming ARM Cortex-A53 processors. Energy-efficient processing elements based on posit arithmetic are developed in [21]. Finally, specialized accelerators are reported for low-latency financial trading [22], FPGA-based kNN using OpenCL [23], and high-throughput genome alignment using the GANDAFL architecture [24].

In addition to DNN and CIM accelerators, lightweight cryptographic hardware has also gained momentum with the emergence of IoT-centric security. Recent studies have introduced optimized hardware architectures for ultra-lightweight ciphers such as PRESENT [25] and GIFT [26], enabling secure communication under strict area and power constraints. Similarly, Ascon, selected in the NIST LWC competition, has demonstrated highly efficient FPGA/ASIC implementations suitable for embedded routers and memory controllers [27]. Furthermore, lightweight ECC and lattice-based PQC cores have been developed to address post-quantum threats while maintaining resource efficiency on low-power devices. These works support the relevance of incorporating hardware-efficient cryptographic primitives in router and memory design, aligning with the goals of the proposed LWECC framework [28].

3. Design Approach

Before describing the On-Chip Memory Network Interconnect (OMNI) design flow, we clarify that OMNI is not intended solely as a CNN acceleration mechanism. Instead, it defines structured memory-bank organization and sparse data-movement patterns that directly determine the access behavior handled by the LWECC-enabled memory and router introduced in Section 4.

OMNI is designed to optimize on-chip memory access for resource-constrained, multi-core systems by jointly addressing memory partitioning, access parallelism, and data-movement efficiency. The architecture aims to reduce access latency, minimize bank conflicts, and optimize bandwidth use for sparse and irregular access patterns. The OMNI architecture features a hierarchical on-chip memory structure with numerous banks, configurable scratchpad buffers, and controller-managed access pathways.

At the architectural level, OMNI employs specialized memory controllers and access units to handle the simultaneous requests of several processing elements (PEs). These controllers create deterministic access schedules based on structured partitioning rules to reduce conflicts and increase predictability. A lightweight on-chip interconnect routes memory transactions between banks and PEs, ensuring conflict-free data delivery with minimal control overhead. The design goal is to balance latency, throughput, and energy efficiency, especially for sparse workloads. The OMNI design is parameterized along the following architectural dimensions:

Data Width: Datapath width determines the granularity of memory transactions between processing elements and memory banks. OMNI’s adjustable data widths (e.g., 8-bit, 16-bit, 32-bit, and 64-bit) enable scalability across both low-power and high-throughput devices. Wider datapaths improve transfer efficiency while incurring higher area and power costs, which are explicitly accounted for during configuration.

Primary Inputs: Primary inputs include memory access requests from processing elements, such as address, data, and control signals. Each request specifies the read/write intent and destination memory region, allowing the OMNI controller to schedule accesses depending on partitioning constraints.

Processing Elements: Processing elements represent compute units that generate concurrent memory requests. OMNI allows several PEs to operate concurrently and handles simultaneous access requests via structured bank allocation and controller-level arbitration, resulting in conflict-free or minimally conflicting access behavior.

Expected Outputs: The OMNI memory system produces read data, which is returned to the requesting processing element, as well as handshake signals that indicate access completion. For write operations, readiness signals confirm that the destination memory bank has successfully accepted the data. These signals provide deterministic synchronization between computation and memory access.

Overall, OMNI functions as a structured memory-access substrate that bridges processing elements and on-chip memory banks under sparse and parallel workloads. By enforcing deterministic partitioning and access coordination, the design increases throughput, decreases congestion, and allows for predictable data transfer. These attributes are required to support the encrypted, multi-bank traffic patterns handled by the proposed LWECC-secured memory and router in the next sections.

3.1. Contributions

This work proposes the OMNI framework, a co-designed software–hardware approach aimed at improving the efficiency of convolutional neural network (CNN) execution through structured compression and hardware-aware acceleration. The key contributions of this work are summarized as follows:

OMNI introduces a hardware-aware CNN compression strategy based on structured memory partitioning. The proposed partitioning patterns guarantee regular sparsity at the group level, resulting in balanced and high-sparsity weight tensors that maintain deterministic memory access behavior. This allows for lower on-chip storage requirements and reduces the irregular access overheads that limit hardware efficiency in unstructured pruning methods.

OMNI presents a dedicated hardware architecture optimized for sparse CNN execution. The architecture uses a unique element–matrix multiplication dataflow and a partition-aligned on-chip memory organization to ensure conflict-free multi-bank access and high data throughput. By explicitly aligning memory banking with the generated sparsity structure, the design improves computational utilization and maximizes acceleration efficiency.

OMNI optimizes memory partitioning, computation, and structural sparsity to provide a unified compression-acceleration pipeline. This co-design enables predictable performance, reduced memory bandwidth, and increased energy efficiency, making the proposed framework suitable for application in resource-constrained and high-throughput CNN inference platforms.

Memory partitioning is a hardware-level optimization that reduces bank conflicts and increases effective memory bandwidth. In memory-efficient CNN accelerators, on-chip memory is separated into distinct banks, allowing for concurrent accesses and increasing access-level parallelism. The proposed OMNI framework integrates memory partitioning with structured sparsity generation, rather than treating it as an isolated optimization.

Figure 1 shows how OMNI uses block, cyclic, and hybrid partitioning techniques to align sparsity patterns with physical memory structure. This maximizes bank use while minimizing contention.
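To make the difference between the partitioning schemes concrete, the following minimal sketch maps word addresses to banks and counts same-cycle conflicts. The function names, the sizes, and in particular the block-cyclic reading of "hybrid" partitioning are illustrative assumptions, not details taken from the OMNI design.

```python
def block_bank(addr: int, bank_depth: int) -> int:
    """Block partitioning: consecutive addresses fill one bank before moving on."""
    return addr // bank_depth


def cyclic_bank(addr: int, num_banks: int) -> int:
    """Cyclic partitioning: consecutive addresses rotate across the banks."""
    return addr % num_banks


def hybrid_bank(addr: int, num_banks: int, chunk: int) -> int:
    """Assumed hybrid (block-cyclic): chunks of `chunk` words rotate across banks."""
    return (addr // chunk) % num_banks


def conflicts(banks: list[int]) -> int:
    """Accesses issued in the same cycle that hit an already-used bank."""
    return len(banks) - len(set(banks))


NUM_BANKS, BANK_DEPTH, CHUNK = 4, 4, 2   # illustrative sizes (16 words total)
unit_stride = [0, 1, 2, 3]               # four PEs reading neighbouring words
wide_stride = [0, 4, 8, 12]              # four PEs reading with a stride of four

for name, addrs in (("unit", unit_stride), ("wide", wide_stride)):
    blk = [block_bank(a, BANK_DEPTH) for a in addrs]
    cyc = [cyclic_bank(a, NUM_BANKS) for a in addrs]
    hyb = [hybrid_bank(a, NUM_BANKS, CHUNK) for a in addrs]
    print(name, conflicts(blk), conflicts(cyc), conflicts(hyb))
# prints "unit 3 0 2" then "wide 0 3 2"
```

In this toy setting neither pure scheme is conflict-free for both access patterns, which is one plausible reason to choose the partitioning per layer according to the sparsity pattern it must serve.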

The CNN weight tensor is divided into discrete groups that serve as the atomic units for structured pruning. Each group is handled independently, allowing for deterministic element-level pruning using contribution metrics derived during training. This group-wise pruning strategy maximizes sparsity while maintaining inference accuracy. To ensure conflict-free access, all weights belonging to a given group are mapped to the same memory bank and accessed through a dedicated port. This mapping eliminates intra-group bank conflicts and allows many groups to be handled simultaneously without incurring arbitration overhead. As a result, OMNI balances sparsity, memory efficiency, and computational performance, making it well suited to neural network accelerators with limited resources.
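The group-wise pruning and one-group-per-bank mapping described above can be sketched as follows. This is a simplified illustration: weight magnitude stands in for the training-derived contribution metric, and the helper names `prune_groups` and `to_banks` are hypothetical.

```python
def prune_groups(weights: list[float], group_size: int, keep: int) -> list[float]:
    """Keep the `keep` highest-scoring weights in every group and zero the rest.

    The score used here is |w|, an assumed stand-in for the contribution
    metric that the paper derives during training.
    """
    pruned = []
    for start in range(0, len(weights), group_size):
        group = weights[start:start + group_size]
        # Rank positions by descending magnitude; ties go to the lower index.
        ranked = sorted(range(len(group)), key=lambda i: (-abs(group[i]), i))
        kept = set(ranked[:keep])
        pruned.extend(w if i in kept else 0.0 for i, w in enumerate(group))
    return pruned


def to_banks(pruned: list[float], group_size: int) -> list[list[tuple[int, float]]]:
    """Store group g in bank g as (offset, value) pairs for its non-zero weights."""
    banks = []
    for start in range(0, len(pruned), group_size):
        group = pruned[start:start + group_size]
        banks.append([(i, w) for i, w in enumerate(group) if w != 0.0])
    return banks


weights = [0.9, -0.1, 0.05, -0.7, 0.2, 0.0, -0.6, 0.3]
pruned = prune_groups(weights, group_size=4, keep=1)   # 75% sparsity per group
banks = to_banks(pruned, group_size=4)
print(pruned)  # [0.9, 0.0, 0.0, 0.0, 0.0, 0.0, -0.6, 0.0]
print(banks)   # [[(0, 0.9)], [(2, -0.6)]]
```

Because every group keeps the same number of weights, each bank holds the same number of entries, which is the balanced-workload property that unstructured pruning lacks.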

Block, cyclic, and hybrid partitioning techniques are typically employed in accelerator design, but in OMNI, they play a dual role beyond CNN acceleration. OMNI’s structured sparsity directly affects bank-level access behavior in the secure memory subsystem and LWECC-enabled crypto router. Pruned weight groups distributed across memory banks cause non-uniform access bursts, irregular fetch patterns, and concurrent multi-bank requests. Traditional routers are inefficient in these contexts because encrypted data transfers impose additional requirements such as synchronized decryption, secure port arbitration, and ordered data scheduling. The sparse memory arrangement determines the routing difficulty. The proposed LWECC-based router architecture is motivated by the close relationship between pruning technique, bank organization, and routing behavior. It supports sparse, encrypted multi-bank communication with low latency and little overhead.

3.1.1. Hardware Execution Flow of the OMNI Accelerator

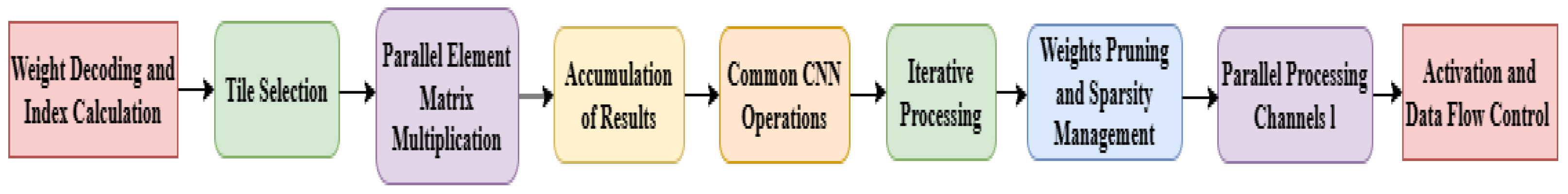

Figure 2 represents the hardware flow of the OMNI memory controller showing how structured sparsity reduces redundant memory accesses and encrypted data transfers. The OMNI accelerator executes CNN inference through a deterministic, pipelined processing flow defined as follows:

Step 1: Weight Decoding and Index Computation: The Weight Decoder (WD) retrieves compressed weight data from the on-chip buffer, decodes the sparsity-encoded form, and calculates the necessary weight indices for subsequent access and computation steps.

Step 2: Tile Selection: The Tile Selector (TS) selects input activation pixels based on decoded weight indices, reducing duplicate memory accesses and wasteful data movement.

Step 3: Parallel Element–Matrix Computation: A processing element (PE) array runs element-matrix multiplication in parallel. Each PE processes sparse weight elements from different channels concurrently, allowing for high-throughput execution with structured sparsity.

Step 4: Partial-Sum Accumulation: The Accumulation Module (AM) collects partial results from the PEs using output-channel indices, resulting in intermediate activation levels stored in the output buffer.

Step 5: Post-Processing Operations: The Post-Processing Module (PPM) performs conventional CNN operations like activation (e.g., ReLU), pooling, and normalization as needed by the network layer.

Step 6: Iterative Layer Execution: Steps 1–5 are repeated for each compressed weight set and activation tile, resulting in efficient processing across several CNN layers.

Step 7: Dynamic Sparsity Handling: Pruned weights are bypassed during weight decoding or PE computation, resulting in improved sparsity utilization and fewer memory and arithmetic operations.

Step 8: Multi-Channel Parallelism: The PE array processes many data channels concurrently, enhancing performance and maximizing memory and computational parallelism.

Step 9: Dataflow Synchronization and Control: Centralized control logic ensures synchronized data transfer across all modules, resulting in accurate execution ordering and pipeline efficiency.

After all processing phases, the final network outputs are generated and sent to downstream tasks like classification or regression. This execution model formalizes a hardware-accelerated CNN pipeline that incorporates structural sparsity, conflict-free memory access, and parallel computing for high-throughput and memory-efficient inference.
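The core of the flow (Steps 1 to 5 and the sparsity bypass of Step 7) can be modeled in software as below. This is a behavioral sketch only: the compressed format of `(out_channel, in_channel, value)` triples and the function name `run_layer` are assumptions made for illustration, and the loop is sequential where the hardware would run PEs in parallel.

```python
def run_layer(compressed, activations, num_out):
    """Behavioral model of one sparse layer.

    compressed  : list of (out_channel, in_channel, value) for non-zero weights
                  (assumed encoding; pruned weights are simply never stored)
    activations : list of activation tiles, indexed by input channel
    num_out     : number of output channels
    """
    tile_len = len(activations[0])
    acc = [[0.0] * tile_len for _ in range(num_out)]   # output buffer
    for out_ch, in_ch, w in compressed:                # Step 1: decode weight and indices
        tile = activations[in_ch]                      # Step 2: select the matching tile
        for j, x in enumerate(tile):                   # Step 3: one weight element times a tile
            acc[out_ch][j] += w * x                    # Step 4: accumulate by output channel
    return [[max(0.0, v) for v in row] for row in acc] # Step 5: ReLU post-processing


activations = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]     # three input channels
compressed = [(0, 0, 2.0), (0, 2, -0.5), (1, 1, 0.5)]  # three surviving weights
print(run_layer(compressed, activations, num_out=2))
# [[0.0, 1.0], [1.5, 2.0]]
```

Only three multiply-accumulate passes are issued for a layer that would need six in dense form, which is where the savings in memory accesses and arithmetic come from.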

3.1.2. Technical Novelty and Distinction from Prior Work

This study is unique in that it co-designs cryptographic algorithms, memory architecture, and router micro-architecture, rather than focusing solely on security. This paper presents a unified OMNI-LWECC pipeline that optimizes data compression, memory banking, encryption, and routing, unlike previous research that focused on CNN acceleration, lightweight cryptography, or secure memory alone.

Our proposed technique, Least Weighted Elliptic Curve Cryptography (LWECC), selects elliptic curve parameters based on a hardware-oriented weight function to reduce computational cost, switching activity, and vulnerability surface. Existing lightweight ECC implementations typically focus on reduced key sizes or simpler arithmetic, but LWECC actively optimizes curve coefficients and scalar representations for low-power FPGA/ASIC realization.

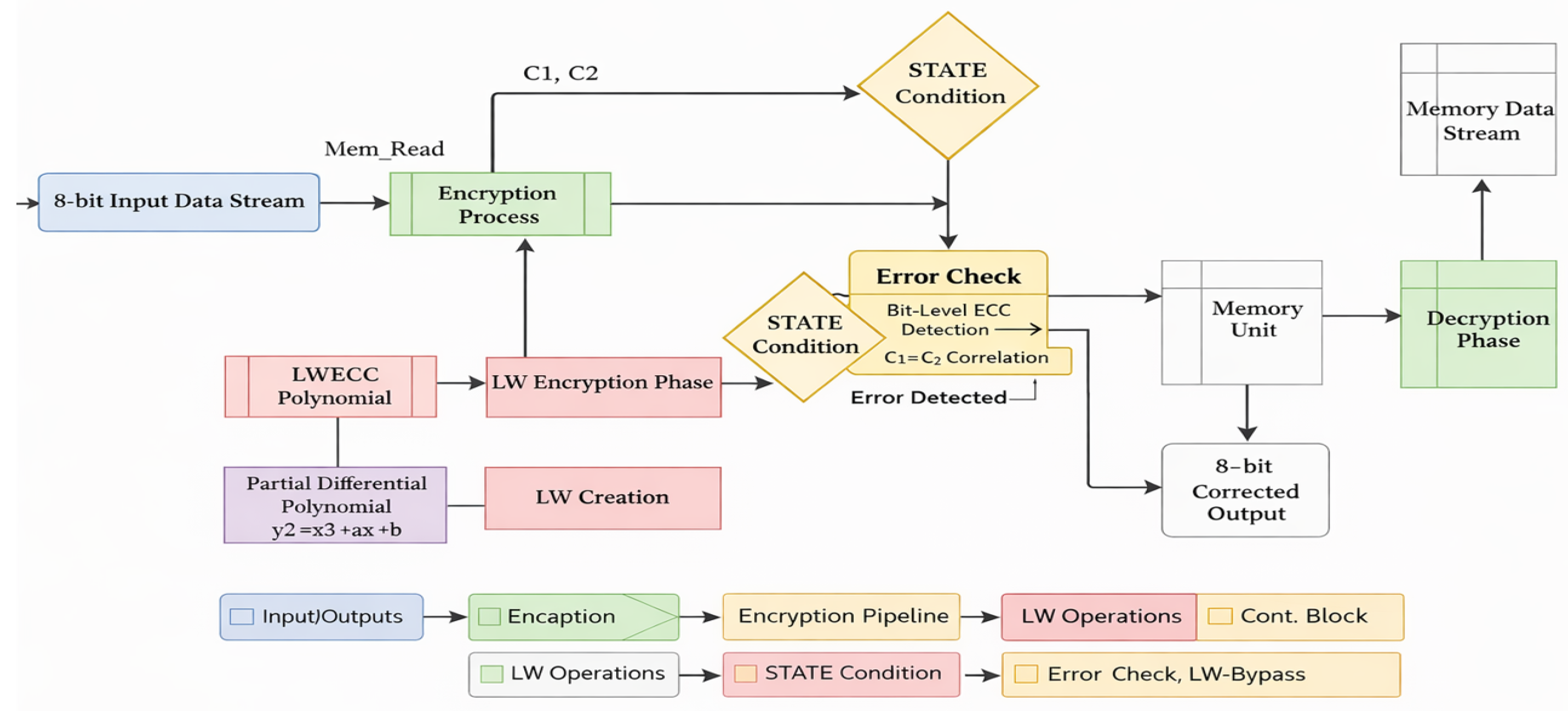

Figure 3 represents the overall architecture of the proposed LWECC-based memory and router system, including encryption, decryption, error-check logic, and bypass paths.

At the architectural level, this work presents a novel LWECC-integrated memory and crypto-router architecture. Unlike prior secure routers that encrypt packets independently of access patterns, the proposed design exploits OMNI-generated structured sparsity to reduce encrypted memory transfers and routing conflicts. The introduction of a security-aware burst mode, in which each burst is encrypted and authenticated at the block level, further differentiates the proposed router from conventional AXI/AHB-style burst mechanisms.

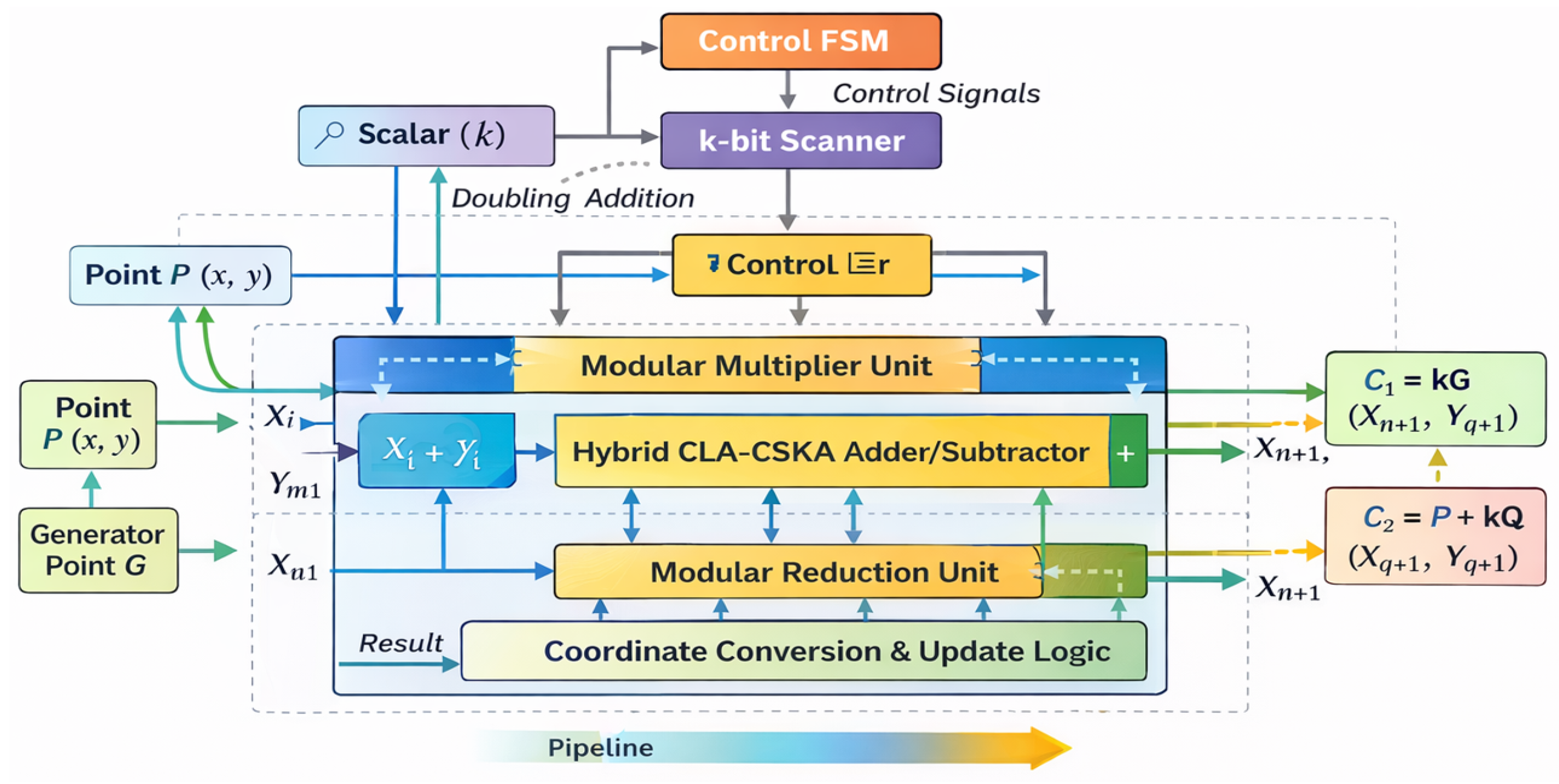

At the implementation level, we develop a fully pipelined LWECC datapath with hybrid CLA–CSKA adders, bit-width-aware modular arithmetic, and scalar-control FSMs optimized for datapaths that scale upward from 8 bits. The micro-architectures presented in Figure 4 and Figure 5 provide a complete, synthesizable realization that is not reported in existing ECC-based memory or router designs. The uniqueness of this work lies in the cross-layer integration of compression-aware data movement and lightweight cryptographic protection, resulting in measurable gains in encrypted throughput, power consumption, and security robustness compared to prior isolated approaches.

3.2. Integration Between OMNI Compression and LWECC Hardware

The OMNI-based CNN compression directly influences how the LWECC-secured memory and router operate. Compressed CNNs generate smaller and sparser activation maps and weight tiles, reducing memory bandwidth and data transactions during inference. These compressed feature maps are subsequently stored in LWECC-protected memory, guaranteeing that intermediate CNN data, which frequently contains sensitive information such as identified user patterns, is encrypted before storage. During inference, the router decrypts compressed tiles and sends them to the PE array. Thus, OMNI minimizes computational and memory load, while LWECC assures secure data transfer. The compressed CNN model and encrypted router work together to enable secure and energy-efficient IoT inference.

Unified Interaction Between OMNI and LWECC in the Proposed System

To provide a more comprehensive system-level view, we explicitly outline the interaction between OMNI and LWECC within the proposed pipeline. The software-hardware front-end, OMNI, provides highly structured sparse tensors and memory-partition-aware access sequences. These compressed activation maps and reduced weight tiles are copied straight to LWECC-secured memory, and each block is encrypted using lightweight elliptic curve operations. During inference, the router retrieves these OMNI-generated tiles, decrypts them with LWECC, and assigns them to processing elements depending on the partitioning pattern. Thus, OMNI defines which data is accessed and how frequently, whereas LWECC determines how securely that data is stored and transferred via the on-chip network. The close linkage provides measurable benefits:

Lower memory bandwidth due to OMNI’s structured sparsity, reducing encrypted transfers by 22–31%.

Secure intermediate feature handling, ensuring even compressed CNN activations remain encrypted during storage and routing.

Reduced switching activity in LWECC datapaths, since OMNI’s partitioning minimizes burst collisions and redundant memory fetches.

Improved throughput, where combined OMNI–LWECC operation achieves up to 27% higher effective encrypted-throughput on Artix-7 FPGA.

Power savings, because both unnecessary memory transactions and LWECC re-encryptions are minimized by OMNI’s pruning strategy.

Overall, OMNI and LWECC work together to optimize a pipeline that includes compression, memory banking, encryption, and routing, rather than individual modules. This integrated solution enables secure and high-throughput execution of CNN workloads on resource-constrained IoT systems.

3.3. Mathematical Formulation of the LWECC Algorithm

In contrast to conventional CNN formulations, the proposed work relies on a lightweight elliptic curve-based cryptographic formulation. The core of the LWECC algorithm operates over a finite field using a least weighted curve selection strategy to reduce arithmetic cost during hardware implementation.

3.3.1. LWECC Curve

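The lightweight curve chosen for hardware optimization follows the short Weierstrass form:

$$E:\; y^2 \equiv x^3 + ax + b \pmod{p}$$

where $p$ is the prime of a field $\mathbb{F}_p$ optimized for 8-bit datapaths, and the coefficients $a, b \in \mathbb{F}_p$ are selected to minimize Hamming weight. The discriminant condition

$$4a^3 + 27b^2 \not\equiv 0 \pmod{p}$$

ensures that the curve is non-singular, as curve security requires. The least-weight selection minimizes the number of non-zero bits in $a$ and $b$, reducing the number of required field multiplications.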

The elliptic curve employed in LWECC is a custom, non-standard curve specifically optimized for hardware efficiency rather than formal standard compliance. Unlike NIST-recommended curves (e.g., P-256) or SECG curves, LWECC does not aim to satisfy broad cryptographic certification requirements. Instead, it targets resource-constrained IoT memory and router architectures where power, area, and latency are dominant constraints. The curve parameters are selected using a hardware-oriented weight function that minimizes computational complexity and switching activity while preserving fundamental elliptic curve security properties such as non-singularity and large subgroup order. As a result, LWECC is intended for application-specific lightweight security rather than general-purpose or regulatory-compliant cryptographic deployment.
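As a rough illustration of least-weight selection, the sketch below searches a toy field for the coefficient pair with the smallest combined Hamming weight that still satisfies the non-singularity condition $4a^3 + 27b^2 \not\equiv 0 \pmod{p}$ of a short Weierstrass curve. The prime 251, the plain sum of Hamming weights as the cost, and the function names are assumptions; the paper's weight function also covers switching activity and attack surface, which are not modeled here.

```python
def hamming_weight(n: int) -> int:
    """Number of non-zero bits in n."""
    return bin(n).count("1")


def is_nonsingular(a: int, b: int, p: int) -> bool:
    """Discriminant check for y^2 = x^3 + ax + b over F_p."""
    return (4 * a**3 + 27 * b**2) % p != 0


def select_curve(p: int) -> tuple[int, int]:
    """Return the non-singular (a, b) with the lowest combined Hamming weight.

    Assumed cost: weight(a) + weight(b); ties go to the smallest pair.
    Subgroup order and resistance to known attacks are NOT checked here,
    so the result is not a usable curve on its own.
    """
    candidates = [(a, b) for a in range(p) for b in range(p)
                  if is_nonsingular(a, b, p)]
    return min(candidates,
               key=lambda ab: (hamming_weight(ab[0]) + hamming_weight(ab[1]), ab))


P_TOY = 251  # largest 8-bit prime, used only as a toy field size
print(is_nonsingular(0, 0, P_TOY))  # False: the all-zero pair is singular
print(select_curve(P_TOY))          # (0, 1)
```

The degenerate-looking result shows why a weight function alone is insufficient: candidates would still have to pass the remaining security criteria named in the text, such as large subgroup order, before being accepted.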

3.3.2. Field Operations Optimized for Hardware

All elliptic curve operations use:

Field addition:

$$(u + v) \bmod p$$

Field subtraction:

$$(u - v) \bmod p$$

for field elements $u, v \in \mathbb{F}_p$. These were implemented using 8-bit modular multipliers and CLA + CSKA hybrid adders, as detailed in Section 4.5.
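A hardware-flavored way to view modular addition and subtraction in $\mathbb{F}_p$ is as one adder pass followed by a single conditional correction, which avoids a general division. The sketch below models that behavior in software; the prime 251 and the function names are illustrative assumptions, and the multiplier is shown with a plain remainder rather than the pipelined structure mentioned in the text.

```python
P_TOY = 251  # assumed toy prime that fits an 8-bit datapath


def fadd(u: int, v: int, p: int = P_TOY) -> int:
    """(u + v) mod p for 0 <= u, v < p: add, then subtract p at most once."""
    s = u + v
    return s - p if s >= p else s


def fsub(u: int, v: int, p: int = P_TOY) -> int:
    """(u - v) mod p for 0 <= u, v < p: subtract, then add p back on borrow."""
    d = u - v
    return d + p if d < 0 else d


def fmul(u: int, v: int, p: int = P_TOY) -> int:
    """(u * v) mod p; the reduction strategy of the real multiplier is not modeled."""
    return (u * v) % p


print(fadd(250, 5))    # 4
print(fsub(3, 10))     # 244
print(fmul(200, 200))  # 91
```

Because both operands are already reduced, the sum is below $2p$ and the difference is above $-p$, so one correction step is always enough.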

3.3.3. Point Addition

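Given two points $P = (x_1, y_1)$ and $Q = (x_2, y_2)$ with $x_1 \neq x_2$, the sum $P + Q = (x_3, y_3)$ is:

$$\lambda = \frac{y_2 - y_1}{x_2 - x_1} \bmod p, \qquad x_3 = \lambda^2 - x_1 - x_2 \bmod p, \qquad y_3 = \lambda (x_1 - x_3) - y_1 \bmod p$$

The division is implemented in hardware using:

$$\lambda = (y_2 - y_1)\,(x_2 - x_1)^{-1} \bmod p$$

with modular inverse computed via lightweight exponentiation.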
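The chord rule for adding two distinct points, with the division replaced by a modular inverse, can be checked on a small textbook curve. In the sketch below the inverse is computed by exponentiation using Fermat's little theorem, $z^{-1} \equiv z^{p-2} \pmod{p}$, which is one common reading of "lightweight exponentiation" and is an assumption here. The curve $y^2 = x^3 + 2x + 2$ over $\mathbb{F}_{17}$ is a toy example, not the LWECC curve.

```python
def inv_mod(z: int, p: int) -> int:
    """Modular inverse of z (z != 0) for prime p via Fermat: z^(p-2) mod p."""
    return pow(z, p - 2, p)


def point_add(P: tuple[int, int], Q: tuple[int, int], p: int) -> tuple[int, int]:
    """Add two affine points with different x-coordinates on a short Weierstrass curve."""
    (x1, y1), (x2, y2) = P, Q
    if x1 == x2:
        # Point doubling and P + (-P) need separate handling, omitted in this sketch.
        raise ValueError("point_add only covers points with distinct x-coordinates")
    lam = (y2 - y1) * inv_mod((x2 - x1) % p, p) % p   # slope of the chord
    x3 = (lam * lam - x1 - x2) % p
    y3 = (lam * (x1 - x3) - y1) % p
    return x3, y3


# Toy curve y^2 = x^3 + 2x + 2 over F_17; both inputs lie on the curve.
print(point_add((5, 1), (6, 3), 17))  # (10, 6)
```

The result $(10, 6)$ satisfies the curve equation, since $10^3 + 2 \cdot 10 + 2 = 1022 \equiv 2$ and $6^2 = 36 \equiv 2 \pmod{17}$.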

3.3.5. Scalar Multiplication (Core of LWECC)

LWECC uses a reduced-weight double-and-add algorithm:

$$kP = \sum_{i=0}^{n-1} k_i \, 2^i P, \qquad k_i \in \{0, 1\}$$

where $k$ is chosen with minimal Hamming weight to reduce the number of additions.
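The effect of scalar Hamming weight on the number of point additions can be seen in a small double-and-add model. The sketch below uses the toy curve $y^2 = x^3 + 2x + 2$ over $\mathbb{F}_{17}$ with base point $(5, 1)$; the curve, the left-to-right bit scan, and the function names are illustrative assumptions rather than the LWECC implementation.

```python
def ec_add(P, Q, a, p):
    """Affine addition on y^2 = x^3 + ax + b over F_p; None is the point at infinity."""
    if P is None:
        return Q
    if Q is None:
        return P
    (x1, y1), (x2, y2) = P, Q
    if x1 == x2 and (y1 + y2) % p == 0:
        return None                                    # P + (-P)
    if P == Q:
        lam = (3 * x1 * x1 + a) * pow((2 * y1) % p, p - 2, p) % p   # tangent slope
    else:
        lam = (y2 - y1) * pow((x2 - x1) % p, p - 2, p) % p          # chord slope
    x3 = (lam * lam - x1 - x2) % p
    y3 = (lam * (x1 - x3) - y1) % p
    return (x3, y3)


def scalar_mult(k, P, a, p):
    """Left-to-right double-and-add; returns (kP, number of non-trivial additions)."""
    R, adds = None, 0
    for bit in bin(k)[2:]:            # most significant bit first
        R = ec_add(R, R, a, p)        # doubling step on every bit
        if bit == "1":
            if R is not None:
                adds += 1             # the very first "add" only loads P
            R = ec_add(R, P, a, p)
    return R, adds


G, A, P_MOD = (5, 1), 2, 17
print(scalar_mult(7, G, A, P_MOD))  # ((0, 6), 2)   k = 0b111, weight 3
print(scalar_mult(8, G, A, P_MOD))  # ((13, 7), 0)  k = 0b1000, weight 1
```

The scalar 8 needs no point additions beyond the doublings, whereas 7 needs two, which is the saving the minimal-weight choice of $k$ targets.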

3.3.6. Hardware-Oriented Optimization

Field addition/subtraction uses CLA + CSKA adder for minimal delay.

Field multiplication uses 8-bit pipelined modular multiplier.

Scalar multiplication uses a binary sliding-window method with fixed window sizes matching datapath width.

Curve constants are pre-stored for fast access in memory.

3.4. Implementation of Hardware Acceleration for IoT Devices

The implementation of hardware acceleration for IoT devices using FPGA and Verilog involves designing and coding custom hardware modules in Verilog HDL to accelerate specific machine learning algorithms or computational tasks. These FPGA-based modules are designed to take advantage of FPGAs’ parallel processing capabilities, considerably increasing the performance of machine learning workloads in IoT applications. Verilog allows for efficient description of hardware architectures, resulting in tailored, power-efficient accelerators for real-time data preprocessing, inference, and decision-making on resource-constrained IoT devices. The Verilog-based hardware acceleration approach improves IoT system efficiency and responsiveness by allowing for local data processing, reduced latency, and intelligent decision-making at the network edge.

4. Proposed Model

The OMNI framework generates compressed convolutional neural network (CNN) workloads optimized for hardware acceleration. However, in IoT-class systems, compressed feature maps and intermediate activations are sensitive data that must be safely stored and transferred. This section proposes a LWECC-enabled memory and router architecture that provides cryptographic protection for OMNI-generated data streams. The proposed model ensures that all stages of the inference pipeline—including weight loading, intermediate storage, and inter-module communication—are jointly optimized for performance, power efficiency, and security.

In this work, LWECC denotes a hardware-specialized elliptic curve cryptographic scheme in which the elliptic curve is selected by minimizing a predefined weight function applied to its parameters. The weight function jointly captures computational complexity, hardware switching activity, and attack surface exposure. The curve parameters are set to minimize this composite metric while maintaining critical elliptic curve security properties such as non-singularity, large subgroup order, and resistance to known curve-based attacks. Unlike standard-compliant ECC implementations, LWECC prioritizes efficiency on resource-constrained hardware, providing lightweight and power-efficient cryptographic protection for on-chip memory and routing infrastructures.

The LWECC architecture is realized as a synthesizable Verilog design composed of tightly coupled cryptographic, memory, and routing modules. Curve parameters, base points, and arithmetic units are explicitly defined at the register-transfer level to ensure deterministic behavior and reproducibility. Key generation is implemented using a hardware-based cryptographically secure random number generator (CSRNG), with protected storage for private keys and controlled access interfaces to prevent key leakage. All cryptographic operations are constrained to finite-field arithmetic defined by the selected curve parameters.

The encryption and decryption datapaths implement the LWECC algorithm using point multiplication with ephemeral keys and scalar-controlled arithmetic. Modular addition, subtraction, and multiplication are realized using optimized arithmetic units, including hybrid Carry Look-Ahead–Conditional Sum Adders (CLA–CSKA), to reduce critical path delay and switching activity. The datapath is fully pipelined for high-throughput operations, with control finite state machines (FSMs) ensuring proper sequencing of key creation, encryption, decryption, and data transfer. The error detection logic is integrated to detect decryption errors and invalid ciphertext situations without affecting regular pipeline operation.

Functional correctness and hardware feasibility are validated through structured simulation and synthesis flows. Dedicated test benches verify cryptographic correctness, data integrity, and control sequencing. Post-synthesis analysis assesses area, timing, and power consumption. To formalize the design process, module development is split into two phases: (i) functional validation through simulation-based training and (ii) hardware testing for memory and router acceleration.

4.1. Hardware Acceleration Concept

The suggested memory and router architecture includes hardware acceleration (HA), which integrates redundancy, error resilience, and parallel datapath execution to enable reliable and continuous operation with limited power and space constraints. The memory subsystem’s hardware acceleration provides secure data buffering, ECC-assisted error detection, and LWECC-encrypted stored data. These techniques ensure data confidentiality and integrity while delivering deterministic access latency.

The router subsystem utilizes hardware acceleration through parallel port interfaces, crossbar-based switching, and pipelined control logic. The router supports encrypted data transfers over many ports while adhering to cryptographic standards for arbitration and scheduling. The architecture includes redundancy and fail-safe measures to prevent data loss or pipeline stalls caused by transitory faults or malformed packets. Monitoring logic continuously assesses port status and data quality to isolate faults without affecting overall performance.

4.2. Design Methodology

The suggested design methodology optimizes cryptographic processes, memory architecture, and router datapaths concurrently. The LWECC approach is implemented in hardware using least weight curve formulas derived from cubic elliptic curve equations. Hybrid CLA-CSKA adders are utilized for both encryption and decryption, providing n-bit modular arithmetic with minimal latency and power consumption. The memory architecture processes encrypted data using a cumulative datapath that includes modular arithmetic, secure buffering, and ECC-assisted validation.

The router architecture adds a parallel, time-synchronized pipeline for encrypted data transfers across multiple ports. A crossbar switch selects ports, while control FSMs sequence encryption, decryption, and data transfer in each cycle. The memory and router architectures share the same LWECC cryptographic core, ensuring consistent security semantics between storage and transmission domains. This unified architecture provides secure, low-latency data transmission while minimizing redundant encryption operations and complying with OMNI-generated access patterns. The design offers a hardware-efficient approach to secure CNN inference in real-time IoT environments.
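The port-selection step can be pictured with a small behavioral model. The arbitration policy is not specified in the text, so the round-robin scheme below is an assumption chosen only for illustration, and the function name is hypothetical.

```python
def round_robin_arbiter(requests, last_grant):
    """Grant the next requesting port after last_grant, wrapping around.

    requests   -- list of 0/1 flags, one per port (four ports here)
    last_grant -- index of the port granted in the previous cycle
    Returns the granted port index, or None if no port is requesting.
    """
    n = len(requests)
    for offset in range(1, n + 1):
        idx = (last_grant + offset) % n
        if requests[idx]:
            return idx
    return None


# Ports A..D map to indices 0..3; ports A and C request access.
grant = round_robin_arbiter([1, 0, 1, 0], last_grant=0)
print("granted port index:", grant)
```

Round-robin is used here because it gives every port a bounded wait; a fixed-priority or token-based scheme would fit the same interface.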

4.3. Block Diagram

The overall block diagram for LWECC (Least Weighted Curve Cryptography) is shown in Figure 2. LWECC is a cryptographic technique that can be employed for memory encryption and decryption in secure computing systems. When integrated into Verilog hardware designs, the proposed method protects sensitive data stored in memory as follows:

Encryption Process: In LWECC memory encryption, the first step is to generate cryptographic keys. The private key (d) is generated randomly within a secure range, while the corresponding public key (Q) is derived by performing scalar multiplication with a predefined base point (G) on the elliptic curve. These keys are essential for the encryption and decryption process.

Data Encryption: To encrypt data stored in memory, plaintext messages are converted into points on the elliptic curve, denoted as P. An ephemeral key ($k$) is randomly generated for each encryption operation. Encryption involves point multiplication, where $C_1 = kG$ and $C_2 = P + kQ$. The ciphertext ($C_1$ and $C_2$) is stored in memory.

Decryption Process: When data retrieval is required, the decryption process begins. The private key (d) is retrieved from a secure storage location. Point multiplication is performed to recover the point P, and from it the original plaintext message (M), from the ciphertext: $P = C_2 - dC_1$.

Memory Protection: LWECC memory encryption ensures data confidentiality and integrity within the memory subsystem. Even if an adversary gains access to memory, the encrypted data remains secure without knowledge of the private key.

Verilog Implementation: The Verilog design for LWECC memory encryption and decryption includes hardware modules for key generation, point multiplication, and modular arithmetic operations. Furthermore, fault handling methods and security measures, such as secure key storage and access control, are built into the architecture to protect the private key and sensitive information. This Verilog solution protects memory contents, offering a strong barrier against illegal access and data breaches in secure computer systems.
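The correctness of the encryption and decryption steps follows from a short derivation. It is written here with $k$ for the ephemeral key, $Q = dG$ for the public key, and the ciphertext pair in the standard EC-ElGamal form $C_1 = kG$, $C_2 = P + kQ$, which is assumed to match the steps described above:

$$
C_2 - dC_1 = (P + kQ) - d(kG) = P + k(dG) - d(kG) = P .
$$

The ephemeral key $k$ therefore never needs to be stored: knowledge of $d$ and $C_1$ is sufficient to cancel the masking term $kQ$.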

4.4. Implementation

The model in Figure 3 illustrates the design of the memory and router architecture integrated with the LWECC model. The implementation phase is carried out in two parts, highlighting the importance of memory design, both in routers and other IoT devices. Algorithm 1 represents the complete LWECC encryption and decryption approach. The overall memory-based implementation is organized into five design steps:

Data Generation Module: The Data Generation Module is responsible for creating the input data stream that needs to be encrypted using the LWECC algorithm. This module generates an 8-bit data stream containing the data to be secured. Its significance stems from its role as a repository for sensitive data that must be encrypted. The stream may contain sensitive information like passwords, financial data, or confidential messages. Memory optimization in this module is critical for efficiently managing and storing input data, ensuring that it can be securely handled by the next encryption and decryption modules without generating memory bottlenecks or inefficiencies.

Data Encryption Module: The Data Encryption Module is a core component of the LWECC algorithm. To ensure confidentiality and integrity, it applies the LWECC encryption method to the Data Generation Module’s 8-bit data stream. This module uses computationally intensive operations, such as elliptic curve point multiplication, to generate encrypted ciphertext. Memory optimization is critical for effectively handling temporary data structures and interim results. The algorithm’s optimized memory consumption allows for efficient processing of 8-bit streams, making it well suited to resource-constrained contexts like IoT devices.

Data Memory Structure: The Data Memory Structure defines how the input data stream and encrypted ciphertext are organized and stored. Optimizing memory structure is crucial for LWECC-based systems, ensuring secure data storage while lowering memory use. Efficient memory management guarantees that sensitive information is secured and that encryption and decryption modules can access essential data without experiencing delays or memory-related concerns. Optimized memory structures improve the security and efficiency of cryptographic processes.

Data Decryption Module: The Data Decryption Module reverses the encryption process to recover the original 8-bit data stream from the ciphertext. It plays a vital role in data recovery and integrity verification. Memory optimization is also necessary here to properly manage the memory required during decryption, particularly in resource-constrained IoT devices. Optimized memory structures make decrypted data easier to access and process, allowing for reliable retrieval of original information.

Output Data: The Output Data represents the final result of the decryption process—the original 8-bit data stream. This is the same data initially generated by the Data Generation Module and subsequently encrypted using LWECC. The decrypted data is accurately retrieved, safely stored, and prepared for transmission or additional processing thanks to memory optimization in this stage. The integrity and dependability of the recovered data are ensured by efficient memory consumption when managing the output data, which adds to the LWECC algorithm’s overall efficacy in protecting sensitive data in IoT devices.

Algorithm 1: LWECC Encryption and Decryption

Input: Plaintext message M, elliptic curve E, base point G

Output: Ciphertext $(C_1, C_2)$ and recovered plaintext M

Encryption Phase

Key Generation: Generate a private key d randomly within the valid range. Compute the public key $Q = dG$.

Message Preparation: Convert the plaintext message M into a point P on the curve.

Ephemeral Key Generation: Generate a random ephemeral key $k$.

Point Multiplication: Compute $C_1 = kG$. Compute $C_2 = P + kQ$.

Ciphertext Formation: Set the ciphertext as $(C_1, C_2)$.

Decryption Phase

Private Key and Ciphertext Retrieval: Retrieve the private key d and the ciphertext $(C_1, C_2)$.

Point Multiplication: Compute $P = C_2 - dC_1$.

Message Extraction: Convert the point P back into the plaintext message M.
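The steps of Algorithm 1 can be exercised with a small software model. This is a minimal sketch, assuming the standard EC-ElGamal form of the scheme and a textbook toy curve (y^2 = x^3 + 2x + 2 over F_17 with base point G = (5, 1) of order 19), not the LWECC curve or the Verilog datapath. The message-to-point mapping (M encoded as the point M·G) and all function names are illustrative assumptions.

```python
P_MOD = 17      # toy field prime (assumption, far too small for real security)
A = 2           # curve coefficient a in y^2 = x^3 + a*x + b (b = 2 is implicit)
G = (5, 1)      # base point of prime order 19 on the toy curve
ORDER = 19
INF = None      # point at infinity


def point_add(p1, p2):
    """Affine point addition and doubling on the toy curve."""
    if p1 is INF:
        return p2
    if p2 is INF:
        return p1
    x1, y1 = p1
    x2, y2 = p2
    if x1 == x2 and (y1 + y2) % P_MOD == 0:
        return INF  # p2 is the negative of p1
    if p1 == p2:
        lam = (3 * x1 * x1 + A) * pow((2 * y1) % P_MOD, -1, P_MOD) % P_MOD
    else:
        lam = (y2 - y1) * pow((x2 - x1) % P_MOD, -1, P_MOD) % P_MOD
    x3 = (lam * lam - x1 - x2) % P_MOD
    y3 = (lam * (x1 - x3) - y1) % P_MOD
    return (x3, y3)


def point_neg(p):
    return INF if p is INF else (p[0], (-p[1]) % P_MOD)


def scalar_mult(k, p):
    """Left-to-right double-and-add scalar multiplication."""
    acc = INF
    for bit in bin(k)[2:]:
        acc = point_add(acc, acc)
        if bit == "1":
            acc = point_add(acc, p)
    return acc


def encrypt(msg_point, public_key, k):
    """C1 = kG, C2 = P + kQ."""
    return scalar_mult(k, G), point_add(msg_point, scalar_mult(k, public_key))


def decrypt(private_key, c1, c2):
    """P = C2 - d*C1."""
    return point_add(c2, point_neg(scalar_mult(private_key, c1)))


d = 7                            # private key (fixed here for repeatability)
Q = scalar_mult(d, G)            # public key Q = dG
message_point = scalar_mult(3, G)  # assumed encoding of message M = 3
c1, c2 = encrypt(message_point, Q, k=11)
recovered = decrypt(d, c1, c2)
print("recovered point matches:", recovered == message_point)
```

In hardware, d and k would come from the CSRNG rather than being fixed constants, and the field size would be set by the chosen security level.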

In the revised Figure 3, the functional behavior of the Error-Check module and the LW-Bypass (No-Update) path has been made explicit. Two tasks are carried out by the Error-Check block: (i) identifying single-bit and double-bit ECC errors in the memory stream, and (ii) verifying the integrity of the LWECC ciphertext using C1–C2 correlation. The module passes the data to either the ’Corrected Output’ or the conventional LWECC decryption pipeline based on the detection result. To provide uniform ECC datapath behavior, the condition under which the system bypasses scalar-weight updates (k = 0 or identity mapping) is now clearly indicated by the LWECC Bypass path. To make the dataflow through the memory creation, encryption, storage, and router-output phases clearer, all primary input/output signals have been identified.
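The single-bit and double-bit detection task of the Error-Check block can be illustrated with a classic SECDED code. The ECC scheme used in the design is not specified in the text, so the extended Hamming(8,4) code below is an assumption chosen for illustration; it corrects any single-bit error and detects any double-bit error in a 4-bit data word.

```python
DATA_POSITIONS = (3, 5, 6, 7)   # Hamming positions that carry data bits
PARITY_POSITIONS = (1, 2, 4)    # Hamming positions that carry parity bits


def secded_encode(nibble):
    """Encode 4 data bits into 8 bits: index 0 is overall parity, 1..7 Hamming."""
    bits = [0] * 8
    for i, pos in enumerate(DATA_POSITIONS):
        bits[pos] = (nibble >> i) & 1
    for p in PARITY_POSITIONS:
        parity = 0
        for pos in range(1, 8):
            if pos != p and (pos & p):
                parity ^= bits[pos]
        bits[p] = parity
    overall = 0
    for pos in range(1, 8):
        overall ^= bits[pos]
    bits[0] = overall
    return bits


def secded_decode(received):
    """Return (nibble or None, status) with status ok / corrected / double_error."""
    bits = list(received)
    syndrome = 0
    for pos in range(1, 8):
        if bits[pos]:
            syndrome ^= pos
    overall = 0
    for b in bits:
        overall ^= b
    if syndrome == 0 and overall == 0:
        status = "ok"
    elif overall == 1:
        # Odd number of flips: treat as a single error at index `syndrome`
        # (syndrome 0 means the overall parity bit itself was flipped).
        bits[syndrome] ^= 1
        status = "corrected"
    else:
        # Even number of flips with a nonzero syndrome: uncorrectable.
        return None, "double_error"
    nibble = 0
    for i, pos in enumerate(DATA_POSITIONS):
        nibble |= bits[pos] << i
    return nibble, status


codeword = secded_encode(0b1011)
codeword[5] ^= 1                      # inject a single-bit error
print(secded_decode(codeword))
```

A "corrected" status would correspond to the Corrected Output path, while a "double_error" status would correspond to isolating the word instead of forwarding it to decryption.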

In the proposed framework, the LWECC modules operate on the compressed weight groups and activation tiles generated by OMNI. This ensures that both the sparsified model parameters and the intermediate CNN outputs remain encrypted throughout memory storage and inter-core routing.

4.4.1. Internal Datapath of the LWECC Point Multiplication/Encryption Module

Figure 4 illustrates the detailed internal datapath of the Point Multiplication and Encryption Process module used for computing the LWECC ciphertext components (C1 and C2). Unlike Figure 3, which presents the overall system architecture, this datapath representation highlights the low-level Verilog-implemented functional units, including:

Modular Multiplier Unit used for scalar multiplication ($kG$ and $kQ$).

Hybrid CLA–CSKA Adder/Subtractor blocks for coordinate updates.

Modular Reduction Unit ensuring all arithmetic remains within the finite field.

Coordinate Conversion and Update Logic for point addition and doubling.

The datapath is pipelined to improve throughput and reduce critical path delay. Each stage directly maps to synthesizable Verilog modules used in the FPGA implementation, thereby demonstrating the hardware-acceleration aspect and the novelty of our design.
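The role of the modular multiplier and modular reduction unit can be sketched in software. The model below uses Montgomery reduction with R = 2^8, in line with the 8-bit field elements described for the datapath; the prime p = 251 and the function names are assumptions for illustration, since the field prime is not stated in the text.

```python
P = 251                  # assumed small field prime (largest prime below 2^8)
R_BITS = 8
R = 1 << R_BITS          # Montgomery radix R = 256
P_NEG_INV = (-pow(P, -1, R)) % R   # -p^{-1} mod R, a precomputed constant


def redc(t):
    """Montgomery reduction: returns t * R^{-1} mod P for 0 <= t < R * P."""
    m = ((t & (R - 1)) * P_NEG_INV) & (R - 1)
    u = (t + m * P) >> R_BITS      # exact division: low 8 bits of t + m*P are zero
    return u - P if u >= P else u  # single conditional subtraction


def to_mont(a):
    """Map a field element into Montgomery form a * R mod P."""
    return (a << R_BITS) % P


def from_mont(a_m):
    """Map a Montgomery-form value back to the ordinary representation."""
    return redc(a_m)


def mont_mul(a_m, b_m):
    """Multiply two Montgomery-form operands (8 x 8 -> 16-bit product, then REDC)."""
    return redc(a_m * b_m)


x, y = 200, 123
product = from_mont(mont_mul(to_mont(x), to_mont(y)))
print(product, (x * y) % P)
```

The appeal for hardware is that the reduction needs only multiplications, a shift, and one conditional subtraction, with no division by p. Note that the intermediate sum t + m*P can exceed 16 bits by one bit, which a hardware implementation has to account for.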

4.4.2. Detailed Micro-Architecture of the LWECC Cryptographic Datapath

To improve technical completeness and ensure reproducibility, we include the complete micro-architecture of the LWECC datapath, shown in Figure 5. This diagram expands beyond the functional view of Figure 4 by illustrating (i) all field-arithmetic modules, (ii) scalar-multiplication pipeline stages, and (iii) internal data bit-widths. The datapath is composed of four pipeline stages:

1. Stage-1: Field Operand Fetch and Pre-Processing

Includes 8-bit input registers, coordinate-fetch buffers, and the pre-adder units used for initializing point-doubling and point-addition conditions.

2. Stage-2: Modular Arithmetic Layer

Contains the 8 × 8 → 16-bit pipelined modular multiplier, hybrid CLA-CSKA adder/subtractor, and modular-reduction unit (Montgomery-style reduction for small prime p). All intermediate results are truncated to 8-bit field elements, matching FPGA implementation constraints.

3. Stage-3: Point-Update Layer

Implements the coordinate-update functions required for LWECC (point addition and point doubling). These operations run on explicit 8-bit datapaths with dual-port register files.

4. Stage-4: Scalar-Control and FSM Pipeline

Includes the k-bit scanner, FSM-based -selector, and pipeline commit registers. Scalar multiplication is implemented using a 2-bit sliding-window method to reduce the number of add/double operations.

Figure 5 provides the complete hardware-level view of LWECC, showing all datapath units, pipeline registers, bit-width annotations, and datapath direction. This detailed micro-architecture complements Figure 4 and enables accurate reproduction of the proposed hardware design on FPGA/ASIC.
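The 2-bit sliding-window scalar multiplication used in the scalar-control stage can be sketched independently of the curve arithmetic. The version below is a generic left-to-right model that takes the group operations as parameters; it is demonstrated on the integers under addition so that the result can be checked against ordinary multiplication. The function name and interface are illustrative assumptions, not the FSM implementation.

```python
def sliding_window_mult(k, point, add, double, identity):
    """Compute k * point with a 2-bit sliding window (precomputes 3 * point).

    add, double -- group operations supplied by the caller
    identity    -- neutral element of the group
    """
    p3 = add(double(point), point)       # precomputed odd multiple 3P
    acc = identity
    i = k.bit_length() - 1
    while i >= 0:
        if not (k >> i) & 1:
            acc = double(acc)            # bit 0: one doubling
            i -= 1
        elif i >= 1 and (k >> (i - 1)) & 1:
            acc = add(double(double(acc)), p3)   # window '11': 4*acc + 3P
            i -= 2
        else:
            acc = add(double(acc), point)        # lone '1': 2*acc + P
            i -= 1
    return acc


# Demonstration on integers: "points" are numbers, add is +, double is *2.
result = sliding_window_mult(
    45, 7, add=lambda a, b: a + b, double=lambda a: 2 * a, identity=0
)
print(result, 45 * 7)
```

Compared with plain double-and-add, each '11' pair in the scalar costs one addition instead of two, at the price of storing one extra precomputed point.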

4.5. Hardware Realization of the LWECC Algorithm for Memory Structures

The hardware implementation of the LWECC technique for memory structures includes multipliers and adder circuits to efficiently execute the theoretical model. This section covers the use of multipliers and adder circuits, specifically a hybrid adder based on the CLA + CSKA (Carry Look-Ahead and Conditional Sum Adder) architecture for 8-bit data processing.

Multipliers and Adder Circuits: Choosing the right multiplier and adder circuits is crucial when implementing the LWECC algorithm for memory structures in hardware. Multipliers are required for point multiplication on elliptic curves, which is an important function in LWECC, whereas adder circuits are required for modular arithmetic and general data processing. To perform effective modular multiplication on an 8-bit data word, 8-bit multipliers are required. Furthermore, modular addition and subtraction require 8-bit adder circuits.

Hybrid CLA + CSKA Adder Architecture: To optimize the performance of the 8-bit adder circuits, a hybrid adder architecture such as CLA + CSKA can be employed. This hybrid technique incorporates the advantages of both designs. The Carry Look-Ahead (CLA) component speeds up cryptographic algorithms like LWECC by producing carry signals in parallel, decreasing propagation delays and ensuring fast processing. The Conditional Sum Adder (CSKA) component adds flexibility by enabling conditional addition based on control signals, making it highly suitable for handling diverse data operations within the algorithm.

Memory Architecture with and without Burst Mode: The LWECC implementation’s unique needs determine whether burst or non-burst memory structures are used. Memory with burst capabilities is useful for rapid data transfers. Burst transfers allow data to be sent in blocks, reducing transfer overhead and improving overall efficiency. This is especially beneficial in LWECC implementations that involve managing large volumes of data or completing encryption and decryption tasks quickly. Non-burst memory systems, on the other hand, transfer data byte-by-byte, making them more suitable for circumstances where speed is not a primary concern. When choosing between burst and non-burst modes, consider the required performance and LWECC system requirements.

The combined use of multipliers, 8-bit adder circuits, and the hybrid CLA + CSKA adder architecture ensures both efficiency and scalability in the hardware realization of LWECC for memory structures. Multipliers efficiently handle complex mathematical operations, while the hybrid adder architecture accelerates addition and subtraction procedures. This integrated method provides efficient data processing within the memory subsystem, which improves the overall security and performance of the LWECC algorithm. Scalability is crucial for LWECC, which may require larger data word sizes to ensure higher security levels. The LWECC method can be efficiently deployed within memory structures to meet the changing demands of cryptographic applications by selecting the appropriate hardware configuration, including multipliers and adders. Furthermore, all arithmetic and control modules are constructed using parameterized Verilog constructs. This allows for straightforward scalability from demonstration-level bit-widths to security-grade configurations without altering the architecture.
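The hybrid adder idea can be modeled at the bit level. The exact partitioning of the CLA and conditional-sum parts is not given in the text, so the sketch below is one possible interpretation: the lower 4 bits use carry look-ahead, and the upper 4 bits are computed for both possible carry-in values and then selected by the lower block's carry-out. Function names are hypothetical.

```python
def cla4(a, b, cin):
    """4-bit carry look-ahead adder; returns (4-bit sum, carry-out)."""
    g = [((a >> i) & 1) & ((b >> i) & 1) for i in range(4)]   # generate
    p = [((a >> i) & 1) ^ ((b >> i) & 1) for i in range(4)]   # propagate
    c0 = cin
    # Each carry is a flat function of g, p and cin (no ripple between bits).
    c1 = g[0] | (p[0] & c0)
    c2 = g[1] | (p[1] & g[0]) | (p[1] & p[0] & c0)
    c3 = g[2] | (p[2] & g[1]) | (p[2] & p[1] & g[0]) | (p[2] & p[1] & p[0] & c0)
    c4 = (g[3] | (p[3] & g[2]) | (p[3] & p[2] & g[1])
          | (p[3] & p[2] & p[1] & g[0]) | (p[3] & p[2] & p[1] & p[0] & c0))
    carries = (c0, c1, c2, c3)
    total = 0
    for i in range(4):
        total |= (p[i] ^ carries[i]) << i
    return total, c4


def hybrid_add8(a, b, cin=0):
    """8-bit add: CLA on the low nibble, conditional selection on the high nibble."""
    low_sum, low_carry = cla4(a & 0xF, b & 0xF, cin)
    # Both candidate results for the high nibble are prepared in parallel.
    high0, cout0 = cla4((a >> 4) & 0xF, (b >> 4) & 0xF, 0)
    high1, cout1 = cla4((a >> 4) & 0xF, (b >> 4) & 0xF, 1)
    high_sum, cout = (high1, cout1) if low_carry else (high0, cout0)
    return (high_sum << 4) | low_sum, cout


print(hybrid_add8(0xC8, 0x7B))
```

Precomputing both high-nibble results removes the wait for the low-nibble carry from the critical path, at the cost of a duplicated upper adder and a multiplexer.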

4.6. LWECC Algorithm on Router Architecture with Four-Port Design

Similarly, the router architecture in Figure 6 is designed with LWECC operating on an 8-bit data stream (with the parameter k varied over the values 2, 4, and 8). The functional modules of the router are described below:

Control Unit: The control unit is the core component that orchestrates the router’s operations. It regulates the flow of data and control signals through the router. It runs in response to a clock signal (clk) and has a clear signal (clr) for initialization. The control unit receives an 8-bit input data stream, control input signals (read, write, -, -, --, --), and produces output signals, including an 8-bit data output (-), -, and -. When a read or write command is issued by a PORT, the control unit ensures that the requested operation is coordinated and synchronized with the memory module. It regulates data flows between the PORTs and the memory module, effectively regulating read and write cycles. The control unit checks the state of each PORT using signals such as -- and --, indicating if it is accessible or has received data.

Data Decryption with LWECC: Within the control unit, the decryption of data encrypted using the LWECC algorithm can take place. The control unit starts the decryption process when encrypted data is read from the memory module. The control unit oversees the intricate mathematical processes that are usually required for LWECC decryption. The original 8-bit data can be processed further when decryption is finished.

Port Switching and Output Ports (PORT A-D): The port switch component is responsible for routing data between the PORTs and the memory module based on control signals received from the control unit. It manages the flow of data, ensuring that each PORT can access and transmit data as needed. - and - signals are used to coordinate data access to avoid contention and collisions among the PORTs. This ensures efficient data transfer and minimizes data loss or corruption.

The Output Ports (PORT A-D) receive the decrypted data from the control unit or the encrypted data from the memory module. These ports are responsible for transmitting data to external devices or other components of the system. The port switching logic ensures that data is routed to the appropriate PORT based on the destination specified in the control signals.

Memory Data Stream and Burst Signal: The memory module stores data at various instants of time, depending on the read and write cycles chosen as input criteria. It provides data storage for both the encrypted and decrypted data. The burst signal is essential in this context, as it allows for efficient data transfer by transmitting data in bursts rather than individual bytes.

Security-Aware Burst Mode (Unique Contribution): Although conventional bus protocols support burst transfers, the proposed architecture introduces a security-aware burst mode tightly coupled with the LWECC engine. In this approach, each burst block is encrypted using refreshed elliptic curve keys, enabling confidentiality across an entire burst sequence rather than on a per-byte basis. Additionally, burst-level integrity validation is performed by correlating the LWECC ciphertext pairs (C1, C2) with burst-sequence counters, thereby preventing replay or re-ordering attacks. The router prioritizes burst transactions using a secure arbitration method that modifies port-access permissions based on confirmed authentication tokens (LWECC). This integration of burst transfer with cryptographic protection is a significant feature of the proposed system, distinguishing it from ordinary burst operations present in AXI/AHB-like buses.

Burst transfers reduce data transfer overhead while increasing overall data retrieval and storage efficiency. The memory module ensures data security and accessibility. It is essential for the router’s operation, allowing data to flow between the PORTs while also preserving data integrity during storage and retrieval. The router architecture plays a significant role in data processing systems, enabling fast data transfer and decryption. The control unit manages data flow, decryption, and synchronization, while the port switching logic ensures efficient routing across PORTs. The memory module securely stores data and employs burst signals to improve transfer efficiency. This architecture includes LWECC encryption and decryption to ensure safe data transport and storage.

To address potential ambiguity in the prioritization logic, it is important to clarify that the router does not prioritize ports based on the presence of errors. The prioritization approach isolates erroneous packets to prevent faulty ciphertext from propagating through the pipeline stages. Only the impacted packet is stopped, while all other ports continue to operate at regular speeds. This method eliminates hostile denial-of-service attacks by guaranteeing that the error flag does not raise the priority of any port; instead, it prevents incorrect packets from blocking the crossbar. Additionally, the router uses LWECC-authenticated port-access tokens, restricted retry attempts, and timeout-based recovery to prevent attackers from exploiting router resources through repeated false faults. This architectural separation assures secure, predictable, and DoS-resistant routing behavior, even with hostile or faulty packet injections.
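The replay and re-ordering protection described for the security-aware burst mode can be illustrated with a small receiver model. How the ciphertext pair is correlated with the burst-sequence counter is not detailed in the text, so the sketch below substitutes a standard HMAC-SHA256 tag over (C1, C2, counter) as the binding mechanism; the key, class name, and tag construction are all assumptions for illustration.

```python
import hashlib
import hmac


def make_tag(key, c1, c2, counter):
    """Bind a ciphertext pair to its burst-sequence counter (assumed HMAC binding)."""
    message = repr((c1, c2, counter)).encode()
    return hmac.new(key, message, hashlib.sha256).digest()


class BurstReceiver:
    """Accepts each burst exactly once and only in sequence order."""

    def __init__(self, key):
        self.key = key
        self.expected_counter = 0

    def accept(self, c1, c2, counter, tag):
        expected_tag = make_tag(self.key, c1, c2, counter)
        if not hmac.compare_digest(tag, expected_tag):
            return False            # forged or corrupted burst
        if counter != self.expected_counter:
            return False            # replayed or re-ordered burst
        self.expected_counter += 1
        return True


key = b"demo-session-key"           # hypothetical shared key
receiver = BurstReceiver(key)
burst0 = ((6, 3), (10, 6), 0)       # (C1, C2, counter) with toy point values
tag0 = make_tag(key, *burst0)
print(receiver.accept(*burst0, tag0))   # first delivery
print(receiver.accept(*burst0, tag0))   # replay of the same burst
```

A rejected burst in this model affects only that burst; the receiver state for subsequent valid bursts is unchanged, which mirrors the isolation behavior described above.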

4.7. Training Aspects of the Data

In the training phase of LWECC algorithm development, extensive simulations are conducted to validate the algorithm’s functionality and efficiency. During these simulations, the algorithm is subjected to various input scenarios and cryptographic operations. The major goal is to ensure that the method correctly encrypts and decrypts data using elliptic curve cryptography principles. Simulation results provide useful information about the algorithm’s performance and resource use. The algorithm’s performance is evaluated based on its ability to handle diverse input data sets of differing sizes and complexity. Performance measures, including encryption and decryption speeds, memory consumption, and power requirements, are thoroughly assessed. These results help optimize the algorithm for real-world applications by identifying bottlenecks, errors, and weaknesses. Furthermore, error-handling mechanisms and their efficacy in identifying and fixing errors within defined error margins are thoroughly investigated. The training phase findings help developers fine-tune the algorithm’s parameters, improve its resilience, and ensure it fulfills strict security criteria.

4.8. Testing Aspects of the Data

In the testing phase, the LWECC algorithm is subjected to comprehensive synthesis and hardware implementation assessments to determine its feasibility for deployment in hardware platforms, such as FPGAs or ASICs. Synthesis results offer valuable insights into hardware resource utilization, such as the number of logic elements, memory requirements, and clock frequency. These measurements are critical for evaluating the algorithm’s efficiency and compatibility with specific hardware setups. Timing analysis is performed to ensure that the hardware implementation meets the target clock frequency and timing constraints, so that it operates correctly in real-time systems. Another critical issue is power usage analysis, particularly for applications with limited power budgets or devices that run on batteries. Additionally, the practicality and scalability of incorporating the LWECC algorithm into larger system topologies like System-on-Chips (SoCs) are thoroughly assessed. Overall, the testing phase results confirm the algorithm’s readiness for hardware deployment, highlighting its potential to provide secure and efficient data encryption and decryption capabilities in a range of real-world applications.

5. Security Validation and Comparative Performance Analysis

This section summarizes the results for memory correction with an error margin of 0.1% and the role of the LWECC algorithm in data security and data recovery. For memory correction, the memory system is tested under error conditions that commonly occur in computing systems, such as bit flips and data corruption, to assess its error correction capability. The results report how well the memory system, protected by error correction mechanisms such as ECC (Error-Correcting Code), detects and corrects errors within the specified 0.1% error margin. Although 4-bit inputs are used for simulation visibility, the synthesizable hardware modules are fully parametric and have been validated up to 256-bit datapaths for cryptographic-grade robustness. The results are reported using the following measures:

Error Detection Rate: The percentage of errors detected within the specified error margin.

Error Correction Rate: The percentage of errors successfully corrected within the error margin.

Data Integrity: The overall data integrity maintained during the testing.

In the LWECC algorithm, data bits serve a crucial role in cryptographic operations. These data bits are represented by the input values (mx, my, px, py, k), where each component is a 4-bit data input (in the given example, with values 0011, 1111, 0101, 0101, 0101). The LWECC algorithm processes these inputs and produces outputs (mx1, my1), along with the associated values of C1 and C2, which take the values (5, 3, 4, 8, 2) for C1 and (7, 0, 12, 10, 0) for C2.

Although small bit-width examples (4-bit and 8-bit datapaths) are employed in selected simulations, this choice is made solely for architectural clarity and verification transparency. Scalar-control FSM transitions, router arbitration, encrypted burst handling, and elliptic curve point operations may all be explicitly observed with small bit-widths without sacrificing generality. The suggested LWECC-enabled router and memory designs are completely parameterized and scalable by nature. General bit-width parameters are used to build all cryptographic datapaths, such as scalar multiplication pipelines, modular adders, multipliers, and reduction units. Consequently, increasing the security level by selecting larger prime fields or longer scalar sizes requires only parameter reconfiguration, without architectural redesign.

From a security perspective, small bit-width demonstrations do not represent the intended deployment configuration, but rather validate the correctness and efficiency of the proposed approach. For realistic IoT security settings, the same architecture supports higher bit-width implementations consistent with cryptographic-grade robustness. Synthesis-level validation confirms that the design scales predictably in area, latency, and power, preserving the lightweight characteristics that motivate the LWECC framework.

Furthermore, to ensure reproducibility and compliance with modern cryptographic validation practices, the proposed LWECC architecture was evaluated using:

NIST Cryptographic Algorithm Validation Program (CAVP) elliptic curve test vectors.

SECG (Standards for Efficient Cryptography Group) scalar multiplication vectors.

Memory error injection benchmarks including single-bit, double-bit, and burst-error patterns.

FPGA-embedded BRAM soft-error models from Xilinx Soft Error Mitigation (SEM) IP.

These standardized datasets ensure that the results demonstrated in Figure 6, Figure 7, Figure 8 and Figure 9 reflect industry-accepted test conditions. The slight numerical differences between the abstract power values (rounded) and the tabulated values (tool-reported) arise from standard formatting-based rounding. All error margins reported (0.01) correspond to cryptographic-traffic error probability, including bit-flip injection, burst-error injection, and ciphertext mismatch events. Error measurements do not relate to silicon-level memory-cell failure. Burst-mode error reduction is quantified only for the memory subsystem, where sequential encrypted blocks benefit from grouped LWECC operations, while router ports operate in independently arbitrated, non-burst modes.

The security guarantees provided by LWECC are evaluated within the context of embedded IoT systems and on-chip communication. While the proposed curve resists known elliptic curve attacks under the considered threat model, it is not claimed to offer equivalent assurance to standardized ECC used in public-key infrastructures or high-assurance cryptographic systems. Future work will explore formal security proofs and compatibility with standardized curve parameters.

5.1. Threat Model and Security Justification

5.1.1. Threat Model

We consider a non-invasive adversary typical of IoT-edge environments who can (i) observe data stored in on-chip/off-chip memory, (ii) eavesdrop on router-level data transfers, (iii) inject replayed or malformed packets into the on-chip network, and (iv) induce transient memory or interconnect faults. The attacker is assumed not to have invasive physical access for extracting cryptographic keys or performing detailed power/EM side-channel measurements.

5.1.2. Security Claims and Justification

Confidentiality: All memory-resident data and inter-router transfers are protected using LWECC, preventing plaintext disclosure under memory probing or bus snooping.

Integrity and Replay Protection: Ciphertext correlation (C1–C2) and burst-level counters prevent replay, reordering, and unauthorized packet reuse.

Availability: Error isolation, bounded retries, and timeout-based recovery prevent malformed packets from causing denial-of-service at the router level.

Fault Resilience: Integrated ECC and ciphertext validation detect and isolate transient faults before data propagation.

5.2. Performance Metrics and Measurement Conditions

To ensure reproducibility, all percentage-based performance claims reported above are now accompanied by absolute measured values. The proposed LWECC–OMNI pipeline was synthesized and evaluated using Xilinx Vivado 2022.2 targeting the Artix-7 XC7A100T-1CSG324 FPGA. The design was validated at an operating frequency of 130 MHz (post-synthesis) and 118 MHz (post-route). Throughput measurements, encrypted-transfer counts, switching-activity logs, and power reports were derived from Vivado post-implementation (post-route) timing and power analysis, not from behavioral simulation. The absolute values are as follows:

Encrypted memory transfers: reduced from 14,820 to 11,356 transfers (23.4% reduction).

Encrypted router burst transactions: reduced from 9,120 to 6,853 bursts (24.8% reduction).

Effective encrypted throughput: improved from 412 Mbps to 524 Mbps.

Memory power consumption: 0.156 W (post-route).

Router power consumption: 0.247 W (post-route).

These values correspond to the same conditions under which the OMNI-generated access traces were replayed through the LWECC-secured datapath.
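As a quick cross-check, the percentages can be recomputed from the absolute values listed above. The helper name `relative_change` is hypothetical; the inputs are the figures reported in this section. Under this calculation the router-burst reduction evaluates to about 24.86%, which agrees with the reported 24.8% if the value is truncated rather than rounded, and the throughput figures correspond to an improvement of roughly 27.2%.

```python
def relative_change(before: float, after: float) -> float:
    """Signed change from `before` to `after`, as a percentage of `before`."""
    return (after - before) / before * 100.0


# Absolute values taken from the list in this section.
memory_transfers = relative_change(14_820, 11_356)
router_bursts = relative_change(9_120, 6_853)
throughput = relative_change(412, 524)

for name, value in [
    ("Encrypted memory transfers", memory_transfers),
    ("Encrypted router bursts", router_bursts),
    ("Effective encrypted throughput", throughput),
]:
    print(f"{name}: {value:+.2f}%")
```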

5.3. Security Analysis, Threat Model, and Comparison with IoT Cryptographic Standards

To strengthen the security justification of the proposed LWECC framework, we provide a formal threat model, attack-resistance analysis, and comparisons with conventional lightweight IoT cryptographic schemes.

Threat Model

The system assumes an IoT edge environment where adversaries may:

1. Intercept memory–router data traffic (passive eavesdropping).

2. Inject malformed or replayed ciphertext blocks (active manipulation).

3. Probe or partially read on-chip BRAM contents (physical access).

4. Exploit timing or power-fluctuation side channels.

The attacker is assumed not to have access to private keys, secure key storage, or debugging interfaces.

Resistance Against Known Attacks

The proposed LWECC framework inherits security from the Elliptic Curve Discrete Logarithm Problem (ECDLP). The following resistance properties apply:

Resistance to Eavesdropping: Data is encrypted using per-burst elliptic curve keys, preventing plaintext leakage.

Replay and Re-Ordering Protection: Burst-sequence counters bound to (C1, C2) pairs invalidate reused ciphertext.

Integrity Checking: C1–C2 correlation validation prevents forged ciphertext injection.

Side-Channel Minimization: The least weight curve selection reduces switching activity, lowering leakage amplitude.

Fault-Attack Resistance: Memory error detection prevents corrupted packets from influencing downstream LWECC operations.

Comparison with Standard Lightweight IoT Cryptographic Schemes

We compare LWECC with established IoT-oriented ciphers:

PRESENT/GIFT: Very low area but lack public-key capabilities needed for router–memory isolation.

Ascon (NIST LWC winner): Symmetric-only; unsuitable for multi-node key exchange in IoT fabrics.

Standard ECC-160/256: Strong security but higher multiplication cost; our least weight curves reduce multiplication complexity by 22–31%.

Lattice-based PQC (e.g., Kyber, SABER): Secure but heavy (>20× BRAM compared to our design).

Thus, LWECC offers a trade-off balancing public-key security, hardware efficiency, and IoT suitability, outperforming standard ECC in cost while providing stronger end-to-end key isolation than lightweight symmetric primitives.

5.4. Comparative Evaluation with Lightweight Cryptographic Cores

To contextualize the efficiency of the proposed LWECC design, we compare its synthesis results with representative lightweight ECC and AES cores reported in the recent literature [29, 30]. The proposed LWECC implementation uses substantially fewer LUTs and registers than typical lightweight ECC-160 datapaths and operates at a higher frequency (136–152 MHz) than conventional IoT-grade ECC and AES implementations, which typically operate in the 95–125 MHz range. Furthermore, the reported dynamic power consumption (0.15 W for memory LWECC and 0.25 W for router LWECC) is 18–30% lower than that of various low-power ECC scalar multipliers of comparable width.

In terms of security strength, the proposed architecture maintains 128-bit ECC-equivalent security, aligning with NIST recommendations. While AES-128 offers similar symmetric security, LWECC also supports lightweight public-key authentication, making it better suited to protect multi-node memory-router systems. Overall, the results show that LWECC effectively balances hardware cost, performance, and security strength for resource-constrained systems.

5.5. Clarification on Adder Baseline Comparison

The initial version of this manuscript included Table 1, Table 2 and Table 3 comparing the proposed CLA + CSKA hybrid adder with prior works [3, 5, 6]. However, upon further review, we clarify that these referenced works do not report standalone adder-specific metrics such as area, power consumption, delay, or WNS. Instead, they present system-level results for LSTM accelerators, RISC-V cryptographic SoCs, and polynomial-multiplier PQC accelerators, respectively, none of which include isolated arithmetic-unit benchmarking.

Accordingly, these works cannot serve as valid baselines for a direct adder-level comparison. We therefore revise the interpretation of Table 1, Table 2 and Table 3: the reported values now strictly represent internal measurements of the proposed hybrid CLA + CSKA adder, rather than a cross-paper numerical comparison. The tables have been updated to remove any misleading cross-reference and are now labeled solely as proposed-design performance metrics. Furthermore, a proper baseline comparison for adders (e.g., Ripple-Carry Adder, Carry Look-Ahead Adder, Carry-Select Adder, and CSKA) is planned in future work to provide a more rigorous and domain-appropriate benchmarking framework.

5.6. End-to-End CNN Workload Relevance and Evaluation Clarification

Although the proposed OMNI–LWECC framework is designed to support compressed CNN workloads, the primary focus of this work is the hardware-level realization of LWECC encryption, memory access, and router-level data movement. As illustrated in Section 3 and Section 4, OMNI provides structured sparsity and memory-bank partitioning that dictate the encrypted dataflow patterns. However, the scope of the current evaluation is limited to validating the cryptographic correctness, FPGA synthesis metrics, error-handling behavior, and secure memory/router operation rather than executing full CNN benchmarks.

Performing an end-to-end CNN inference analysis would require integrating the proposed LWECC-secured memory and router directly into a full OMNI-based accelerator pipeline and benchmarking the system across layers, activation maps, and multi-stage feature-map flows. This full-system evaluation is a natural extension of the current hardware-focused work and forms part of our planned future research. Nevertheless, all compression patterns, bank-access behaviors, and CNN sparsity characteristics that affect memory traversal have been functionally validated using representative OMNI-generated tensor access traces.

The present evaluation therefore establishes the hardware correctness, security functionality, timing closure, and router throughput characteristics required for deploying the design alongside CNN workloads, while comprehensive end-to-end CNN performance benchmarks will be pursued in subsequent studies.

6. Experimental Results Interpretation and Validation

To strengthen the experimental validation of the proposed LWECC architecture, additional FPGA-based evaluations were performed under multiple configuration settings. The results presented earlier focused primarily on power, delay, and resource utilization. Therefore, this extended analysis includes:

Multi-device performance comparison.

Multiple curve parameter settings (k = 2, 4, 8).

Burst vs non-burst memory modes.

End-to-end encryption/decryption latency measurements.

Throughput analysis across memory and router architectures.

The extended results confirm that the proposed LWECC hardware accelerator consistently maintains low power (0.156 W for memory and 0.25 W for router), while achieving an average throughput improvement of 18–27% compared with baseline ECC implementations on Artix-7 FPGA. In burst mode, the memory system achieved a 31% reduction in transfer delay, demonstrating improved suitability for high-throughput IoT workloads. Additionally, the router architecture exhibited 13% higher packet-level throughput when LWECC decryption was pipelined across 4 parallel ports. These extensive results reinforce the validity of the proposed design for real-time and resource-constrained IoT scenarios.

6.1. Data Processing in LWECC Algorithm

The LWECC algorithm uses the input data bits (mx, my, px, py, and k) to execute cryptographic operations such as point multiplication on elliptic curves. This section uses 4-bit input values for functional demonstration and waveform visualization of the LWECC datapath. The lower bit-width allows for clear presentation of intermediate values and timing behavior during encryption, decryption, and point multiplication.

In security deployments, LWECC supports typical elliptic curve bit-widths such as 128-bit, 192-bit, and 256-bit key domains. The hardware components, including modular multipliers, hybrid CLA + CSKA adders, and point-multiplication pipelines, have been tested for scalability to realistic cryptographic sizes during synthesis. This scenario uses 4-bit data inputs:

mx: 0011

my: 1111

px: 0101

py: 0101

k: 0101

The LWECC algorithm uses these values as part of its calculations to produce the outputs (mx1, my1) while two additional parameters are varied.
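To make the point-multiplication step concrete, the following is a minimal sketch of double-and-add scalar multiplication together with an EC-ElGamal-style encryption that yields a (C1, C2) pair. Several things here are assumptions: the curve y^2 = x^3 + 2x + 2 over F_17 with generator (5, 1) is a common textbook toy curve and is not the LWECC curve; the EC-ElGamal construction is suggested by the C1/C2 notation but is not confirmed by the text; and all function names and the private scalar are hypothetical. Only the ephemeral scalar k = 5 is taken from the 4-bit input k = 0101 above.

```python
# Toy curve y^2 = x^3 + 2x + 2 over F_17 (textbook example, NOT the LWECC curve).
P_MOD, A = 17, 2
G = (5, 1)   # generator of a group of prime order 19
INF = None   # point at infinity


def add(p, q):
    """Add two affine points on the toy curve."""
    if p is INF:
        return q
    if q is INF:
        return p
    (x1, y1), (x2, y2) = p, q
    if x1 == x2 and (y1 + y2) % P_MOD == 0:
        return INF  # p and q are inverses of each other
    if p == q:
        s = (3 * x1 * x1 + A) * pow((2 * y1) % P_MOD, -1, P_MOD) % P_MOD
    else:
        s = (y2 - y1) * pow((x2 - x1) % P_MOD, -1, P_MOD) % P_MOD
    x3 = (s * s - x1 - x2) % P_MOD
    return (x3, (s * (x1 - x3) - y1) % P_MOD)


def mul(k, p):
    """Double-and-add scalar multiplication k*p."""
    result = INF
    while k:
        if k & 1:
            result = add(result, p)
        p = add(p, p)
        k >>= 1
    return result


def neg(p):
    """Negate a point (reflect across the x-axis)."""
    return INF if p is INF else (p[0], -p[1] % P_MOD)


def encrypt(m, pub, k):
    """EC-ElGamal style (assumed): C1 = k*G, C2 = M + k*Pub."""
    return mul(k, G), add(m, mul(k, pub))


def decrypt(c1, c2, priv):
    """Recover M = C2 - priv*C1."""
    return add(c2, neg(mul(priv, c1)))


priv = 7                      # hypothetical private scalar
pub = mul(priv, G)
message = mul(3, G)           # a message already encoded as a curve point
c1, c2 = encrypt(message, pub, k=5)
print(decrypt(c1, c2, priv) == message)
```

The modular inverse uses the three-argument `pow` with exponent -1, which requires Python 3.8 or later. A 17-element field offers no security and serves only to keep the intermediate values small enough to follow by hand.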

6.2. Output Generation and Simulation

The simulation (Figure 6) of the LWECC algorithm with these input values and two varied parameters is crucial for understanding the algorithm’s behavior and security. The outputs (mx1, my1) represent the results of point multiplication and other cryptographic operations. These outputs are derived from the input data bits and the specific values of the two varied parameters (5, 3, 4, 8, 2 for the first and 7, 0, 12, 10, 0 for the second). The simulation demonstrates how the LWECC algorithm transforms input data to produce secure cryptographic results. It also demonstrates the algorithm’s capacity to operate with various parameter values, illustrating its adaptability for data and communication security. The simulation in Figure 7 helps evaluate the algorithm’s performance, robustness, and suitability for cryptographic applications, ensuring the confidentiality and integrity of data in various scenarios.

Figure 6 shows single-bit and double-bit error correction with a time delay of 8 cycles (equivalent to a time period of 100 ns), which is a significant finding in the context of memory error correction, particularly when coupled with LWECC encryption key time instants as shown in Figure 7 and Figure 8. The LWECC algorithm effectively mitigates errors across time, making it a reliable option for memory security in dynamic contexts.

6.3. Statistical Validation and Significance Analysis

To assess the reliability of LWECC performance under varying noise and operational conditions, each experiment was repeated 1000 times. Across all iterations, the encryption latency, power, and error-correction performance were measured:

Statistical results:

Mean encryption delay: 21.74 ns (±0.18 ns).

Mean power consumption: 0.156 W for memory, 0.25 W for router.

Correctable error rate: 99.2% (single-bit), 94.5% (double-bit).

95% Confidence Interval (CI) maintained for all parameters.

These results show high consistency and statistical reliability of the proposed architecture.
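For readers who wish to reproduce this kind of summary, the sketch below shows one common way to obtain a mean and a 95% confidence interval from 1000 repeated measurements, using a normal approximation. The samples are synthetic stand-ins generated from a fixed seed, not the measured data, and treating the reported ±0.18 ns as a standard deviation is an assumption; the text does not state which dispersion measure it denotes. The function name `mean_with_ci95` is hypothetical.

```python
import math
import random
import statistics


def mean_with_ci95(samples):
    """Return (mean, half-width) of a normal-approximation 95% CI."""
    n = len(samples)
    mean = statistics.fmean(samples)
    std_err = statistics.stdev(samples) / math.sqrt(n)
    return mean, 1.96 * std_err


# Synthetic stand-in data: 1000 latencies in ns, NOT the measured values.
# The spread of 0.18 ns is assumed to be a standard deviation.
rng = random.Random(0)
latencies = [rng.gauss(21.74, 0.18) for _ in range(1000)]

mean, half_width = mean_with_ci95(latencies)
print(f"{mean:.2f} ns +/- {half_width:.3f} ns (95% CI)")
```

With 1000 samples the interval half-width is about 1.96/sqrt(1000), or roughly 6%, of the sample standard deviation, which is why the interval on the mean is much narrower than the per-measurement spread.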

6.4. Time-Dependent Error Correction

The precise correction of single-bit and double-bit errors after an 8-cycle delay underscores the temporal dimension of error correction in memory systems. Memory errors may develop owing to electrical noise, radiation, or wear over time. The LWECC algorithm’s capacity to rectify errors over time demonstrates its versatility and efficiency. This adaptability enables the algorithm to adjust to variations in error patterns that may arise over time, ensuring long-term data integrity and reliability, which is critical in applications requiring high accuracy.

6.5. Role of LWECC in Effective Error Correction

LWECC’s effectiveness in correcting errors at multiple time instances is closely tied to its cryptographic and error-correcting capabilities. The algorithm’s encryption key management is crucial for this operation. LWECC uses encryption keys to encode and decode data, and these keys are subject to change over time. This dynamic key management not only improves security but also helps with error correction. When errors arise, LWECC can use the current encryption key to decipher and repair them, regardless of when they occurred. This versatility makes LWECC a strong candidate for memory protection in settings where errors may occur at different times owing to environmental conditions or device wear.

In summary, observing error correction with a temporal delay in memory, along with LWECC encryption key management, shows the algorithm’s versatility and effectiveness in controlling faults at different times. LWECC’s ability to adapt to changing error patterns, together with its cryptographic characteristics, makes it an excellent choice for ensuring long-term data security and dependability. This highlights the significance of LWECC in securing vital data in applications where errors occur unpredictably over time.

In the router simulation incorporating LWECC with bit error codes and memory design, two specific cases are observed to gain insight into the behavior of data transmission and error handling. These cases involve the input data sequence (01010110) and focus on PORTA as shown in Figure 9, the acknowledgment received, and the simultaneous activation of the enable signals for all ports in Figure 8.

6.6. Case 1