A New Algorithm for Finding Initial Basic Feasible Solutions of Transportation Problems

Abstract

1. Introduction

Research Gap and Contribution

2. Literature Review

3. Methodology: Finding Initial Basic Feasible Solutions

3.1. Notation

- Stopping criterion: Continue allocating until all supplies and demands are satisfied.

- Degeneracy safeguard: For a balanced problem with $m$ sources and $n$ destinations, an IBFS must contain exactly $m + n - 1$ basic cells. If a row and a column are both exhausted by the same allocation, cross out one line and leave the other active with a zero residual, or place a tiny bookkeeping allocation in a zero-cost eligible cell (without altering the totals) to maintain $m + n - 1$ basic variables.

- Tie-breaking (deterministic): When ties occur, apply the following rules in order: (i) prefer a row over a column when a method requires choosing a line; (ii) within a line, choose the lowest cost; if still tied, choose the leftmost cell (smallest column index); if still tied, choose the topmost cell (smallest row index). Using a fixed rule ensures reproducibility.

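- Quality measure: The IBFS cost is

  $$Z = \sum_{i=1}^{m} \sum_{j=1}^{n} c_{ij}\, x_{ij} \tag{1}$$

  where $c_{ij}$ is the unit transportation cost from source $i$ to destination $j$ and $x_{ij}$ is the quantity allocated to cell $(i, j)$.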
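As a small illustration of the quality measure, the sketch below sums cost times allocation over all cells. The function name `ibfs_cost` and the 2 × 2 data are hypothetical and chosen only to make the arithmetic easy to follow.

```python
def ibfs_cost(costs, alloc):
    """Total transportation cost: sum of c_ij * x_ij over every cell."""
    return sum(
        c * x
        for cost_row, alloc_row in zip(costs, alloc)
        for c, x in zip(cost_row, alloc_row)
    )


# Hypothetical 2 x 2 instance (not taken from the paper).
costs = [[4, 6],
         [5, 3]]
alloc = [[10, 0],
         [5, 15]]

total = ibfs_cost(costs, alloc)  # 4*10 + 6*0 + 5*5 + 3*15
print(total)
```

Non-basic cells carry a zero allocation, so they contribute nothing to the sum.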

3.2. General Steps for Finding IBFS

1. Balance the problem. If total supply and total demand differ, add a zero-cost dummy row (when demand exceeds supply) or dummy column (when supply exceeds demand) so that the totals match.

2. Initialize. Mark all rows and columns as active, with their remaining supply/demand.

3. Choose a line or cell (selection rule). Apply one of the rules below to decide where to allocate next.

4. Allocate. In the chosen cell $(i, j)$, set $x_{ij} = \min(s_i, d_j)$, where $s_i$ is the remaining supply of row $i$ and $d_j$ is the remaining demand of column $j$.

5. Update the rim. Reduce the row’s remaining supply and the column’s remaining demand by $x_{ij}$.

6. Cross out satisfied lines. Any row/column that reaches zero becomes inactive.

7. Handle degeneracy. If a single allocation makes both a row and a column reach zero, cross out one and keep the other active with zero balance (or place a formal bookkeeping allocation in an eligible cell) so that the final IBFS has exactly $m + n - 1$ basic cells.

8. Repeat. Recompute the quantities required by the selection rule on the reduced tableau and return to Step 3 until all supplies and demands are satisfied.

9. Stop. When all rim requirements are met, the current allocations $x_{ij}$ constitute an IBFS.

10. Compute the cost. The IBFS cost is computed using Equation (1).
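The general steps above can be sketched as a single loop with a pluggable selection rule. This is a minimal illustration, not the authors' MATLAB implementation: the names `balance`, `north_west`, and `find_ibfs` are hypothetical, integer data are assumed (so that comparisons with zero are exact), and the North–West Corner rule is used as the default selector only because it is the simplest one.

```python
def balance(costs, supply, demand):
    """Step 1: add a zero-cost dummy row or column so totals match."""
    costs = [row[:] for row in costs]
    supply, demand = list(supply), list(demand)
    gap = sum(supply) - sum(demand)
    if gap < 0:                      # demand exceeds supply: dummy row
        costs.append([0] * len(demand))
        supply.append(-gap)
    elif gap > 0:                    # supply exceeds demand: dummy column
        for row in costs:
            row.append(0)
        demand.append(gap)
    return costs, supply, demand


def north_west(costs, rows, cols):
    """Selection rule: top-left cell of the active (reduced) tableau."""
    return rows[0], cols[0]


def find_ibfs(costs, supply, demand, select=north_west):
    costs, s, d = balance(costs, supply, demand)
    rows, cols = list(range(len(s))), list(range(len(d)))   # Step 2
    alloc = [[0] * len(d) for _ in s]
    basis = []
    while True:
        i, j = select(costs, rows, cols)                    # Step 3
        q = min(s[i], d[j])                                 # Step 4
        alloc[i][j] = q
        basis.append((i, j))
        s[i] -= q                                           # Step 5
        d[j] -= q
        if len(rows) == 1 and len(cols) == 1:               # Step 9
            break
        # Steps 6-7: cross out exactly one line per allocation, so a
        # simultaneous zero leaves the other line active with zero balance.
        if s[i] == 0 and len(rows) > 1:
            rows.remove(i)
        else:
            cols.remove(j)
    total = sum(costs[i][j] * alloc[i][j] for i, j in basis)  # Step 10
    return alloc, basis, total


alloc, basis, total = find_ibfs(
    [[7, 3, 8, 2], [5, 6, 11, 12], [10, 4, 7, 6]],
    [100, 200, 300],
    [80, 170, 190, 160],
)
print(total, len(basis))
```

With the Example 4 data used here, the sketch yields a cost of 4010 with 6 basic cells ($3 + 4 - 1$), and with the Example 30 data it yields 730; both agree with the NWCM column of the comparison table. Because only one line is crossed out per allocation, a degenerate step shows up as a later basic cell with a zero allocation.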

Selection Rules (To Use in Step 3)

- North–West Corner Method (NWCM): Start at the top-left active corner, allocate, then move right when a column is satisfied and down when a row is satisfied.

- Least Cost Method (LCM): Choose the globally cheapest active cell.

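- Vogel’s Approximation Method (VAM): For each active line, the penalty is $p = c_{(2)} - c_{(1)}$, where $c_{(1)}$ and $c_{(2)}$ are the smallest and second smallest costs in that line. The line with the largest penalty is selected, and the allocation is made in its cheapest active cell.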

- Mean Minima Method: For each active line, compute the line's mean value and select the line with the smallest mean.
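Two of the selection rules above can be written as small functions that return the next cell to allocate. The sketch below is illustrative only: the function names and the 3 × 3 cost matrix are hypothetical, `rows` and `cols` are assumed to be sorted lists of active indices, and the convention of using the single remaining cost as the penalty of a one-cell line is an assumption, since conventions differ between textbooks.

```python
def least_cost_cell(costs, rows, cols):
    """LCM: globally cheapest active cell; ties go leftmost, then topmost."""
    return min(
        ((i, j) for i in rows for j in cols),
        key=lambda ij: (costs[ij[0]][ij[1]], ij[1], ij[0]),
    )


def vam_penalty(line_costs):
    """VAM penalty: second-smallest cost minus smallest cost in a line."""
    if len(line_costs) == 1:
        return line_costs[0]        # assumed convention for a one-cell line
    smallest, second = sorted(line_costs)[:2]
    return second - smallest


def vam_cell(costs, rows, cols):
    """VAM: cheapest cell of the active line with the largest penalty."""
    lines = [("row", i, [costs[i][j] for j in cols]) for i in rows]
    lines += [("col", j, [costs[i][j] for i in rows]) for j in cols]
    # max() keeps the first maximal item, so rows win ties over columns.
    kind, idx, _ = max(lines, key=lambda line: vam_penalty(line[2]))
    if kind == "row":
        return idx, min(cols, key=lambda j: (costs[idx][j], j))
    return min(rows, key=lambda i: (costs[i][idx], i)), idx


# Hypothetical cost matrix on which the two rules disagree.
costs = [[1, 2, 9],
         [6, 8, 20],
         [7, 9, 30]]
active_rows, active_cols = [0, 1, 2], [0, 1, 2]
print(least_cost_cell(costs, active_rows, active_cols))
print(vam_cell(costs, active_rows, active_cols))
```

On this matrix LCM picks the cheapest cell (0, 0), whereas VAM picks (0, 2): the third column has the largest penalty (20 − 9 = 11), so VAM allocates there first to avoid being forced into its expensive cells later.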

3.3. Computational Complexity Analysis

4. Results and Discussions

4.1. The New Algorithm for Finding Initial Basic Feasible Solutions (IBFS) of Transportation Problems

4.1.1. Step 1: Balance Check

4.1.2. Step 2: Row and Column Fractional Penalties

4.1.3. Step 3: Selection and Allocation

4.1.4. Step 4: Iteration

4.1.5. Step 5: IBFS Cost

4.2. Monotonicity Property of the Fractional Penalty

4.3. Demonstration of the New Algorithm

4.3.1. Iteration 1

4.3.2. Iteration 2

4.3.3. Iteration 3

4.3.4. Iteration 4

4.3.5. Iteration 5

4.3.6. Iteration 6

4.3.7. Iteration 7

4.3.8. Iteration 8

4.3.9. Iteration 9

Final Initial Basic Feasible Solution

IBFS Cost

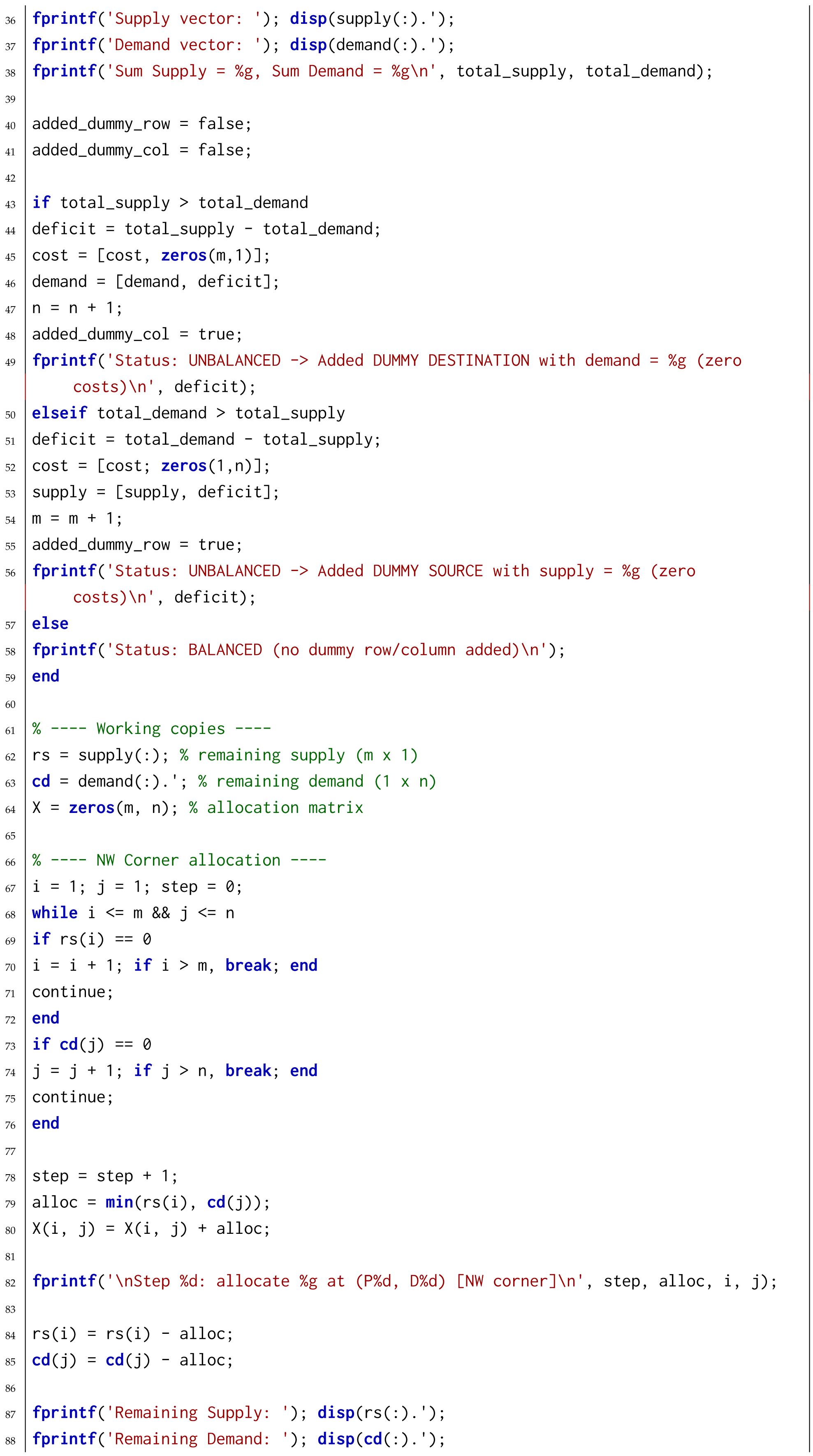

4.4. Optimality Gap Analysis

4.5. Comparison of the New Algorithm with Existing Algorithms in Terms of Solutions

4.6. Statistical Validation of Comparative Results

Reference Optimal Values

4.7. Interpretation of Aggregated Optimality Gap Statistics

4.8. Wilcoxon Signed-Rank Test Formulation

4.9. Paired t-Test Formulation

4.10. Graphical Comparison of Optimality Gaps

4.11. Benchmark Characteristics

- Uniformly distributed costs,

- Highly skewed cost distributions,

- Clustered near-equal costs,

- Instances containing zero or near-zero costs.

4.12. Comparison of the Computational Speed of the New Algorithm with VAM

5. Theoretical Justification of the Fractional Penalty

5.1. Scale Invariance Property

5.2. Structural Relationship to VAM

5.3. Degeneracy Handling

5.4. Dominance Considerations: Additive vs. Multiplicative Ordering

5.5. Discussions

5.6. Sensitivity and Boundary Conditions

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Appendix A. MATLAB Codes for the New Algorithm

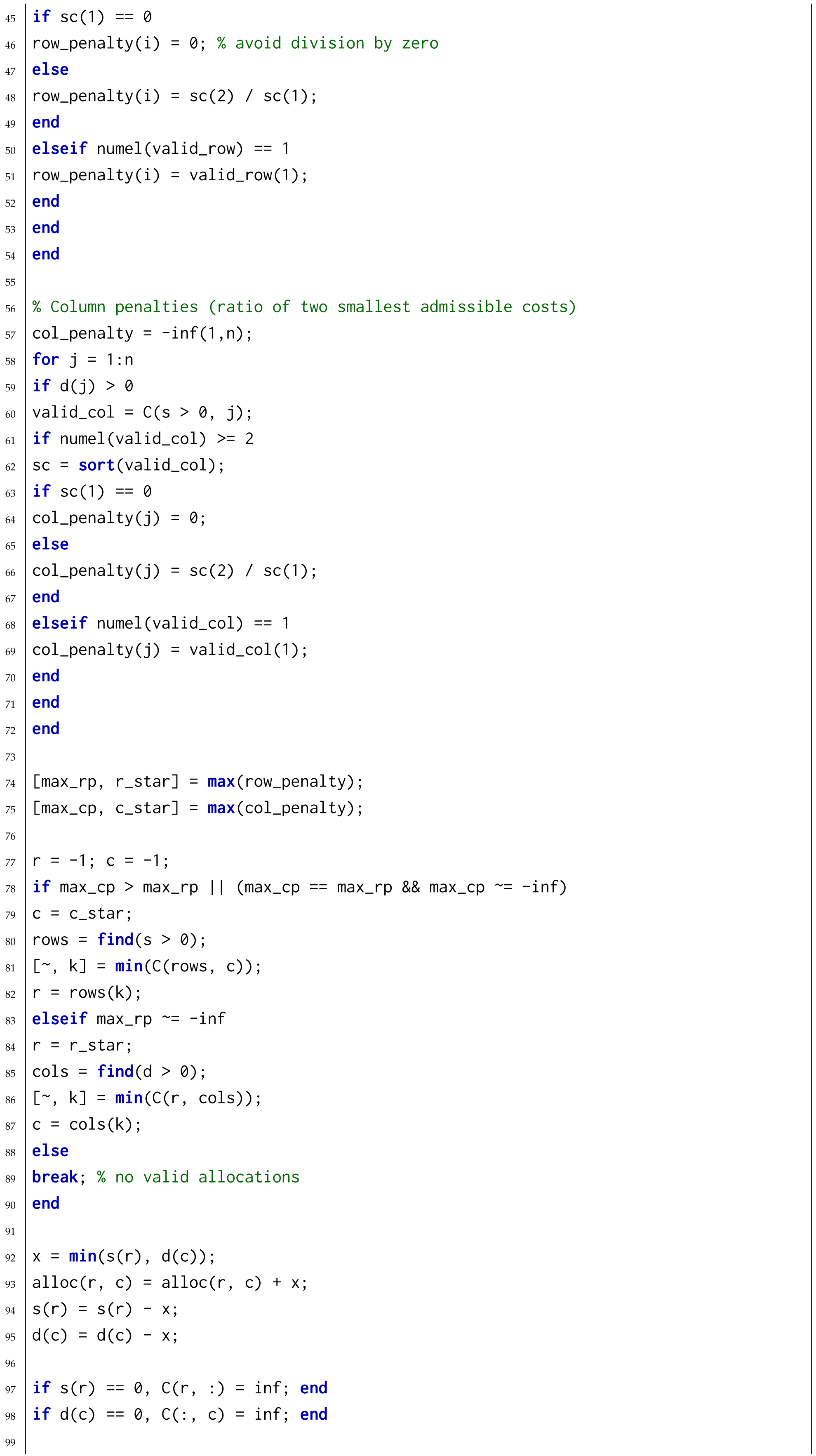

Appendix B. MATLAB Codes for the Vogel’s Approximation Method (VAM)

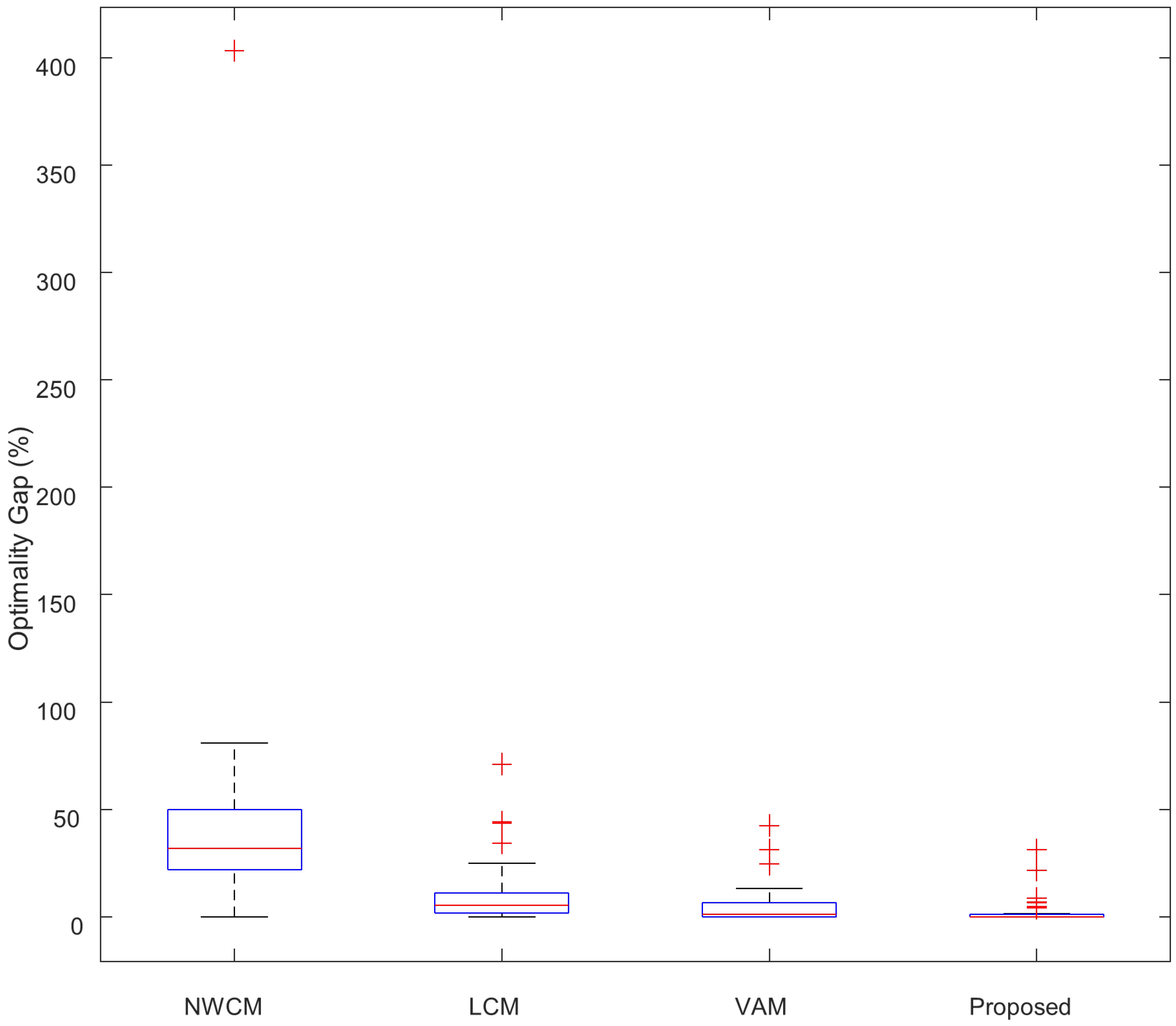

Appendix C. MATLAB Codes for the Least Cost Method (LCM)

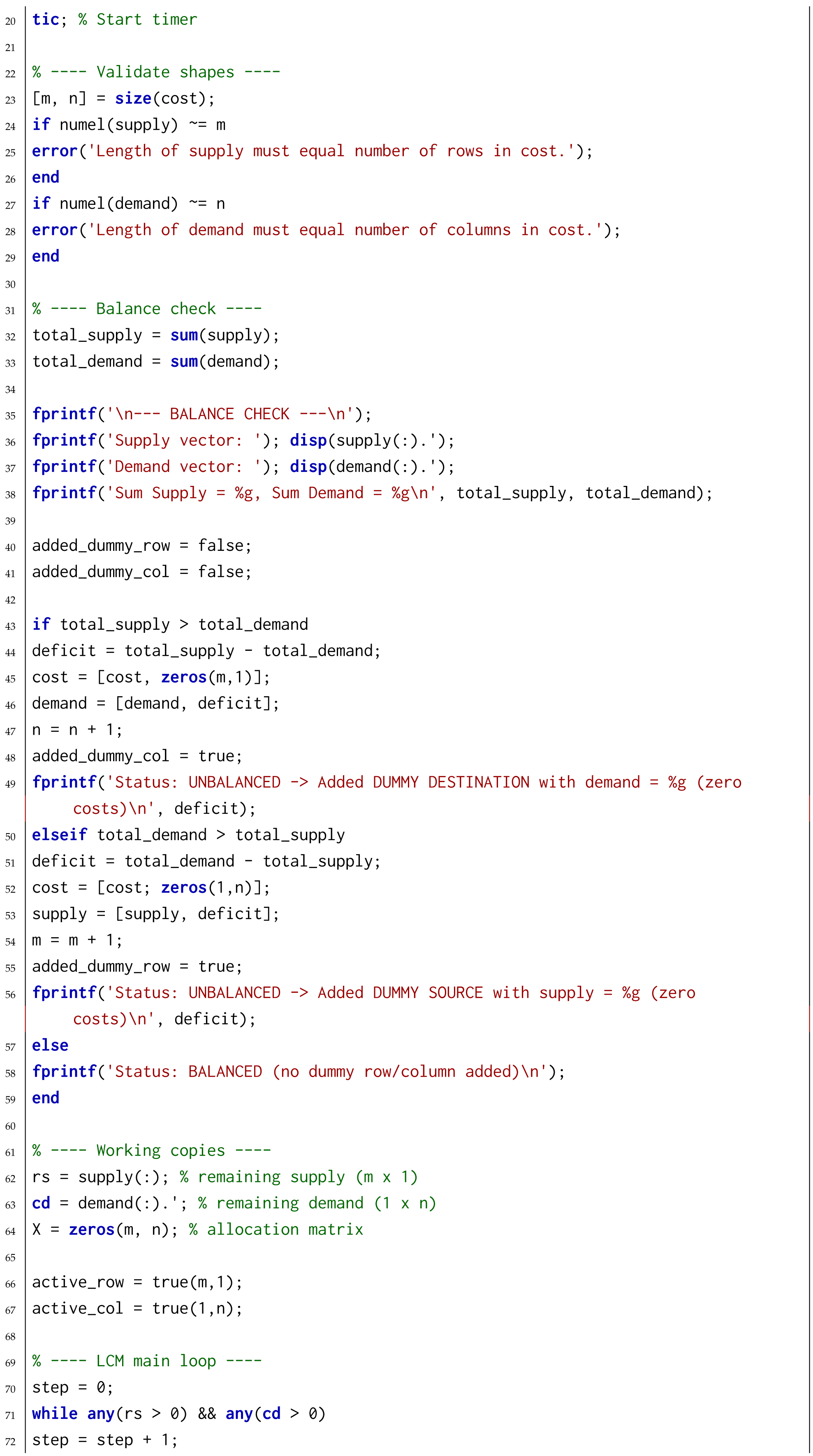

Appendix D. MATLAB Codes for the North-West Corner Method (NWCM)

References

1. Hitchcock, F.L. The distribution of a product from several sources to numerous localities. J. Math. Phys. 1941, 20, 224–230.

2. Dantzig, G.B. Linear Programming and Extensions; Princeton University Press: Princeton, NJ, USA, 1951.

3. Charnes, A.; Cooper, W.W. The stepping-stone method of explaining linear programming calculations in transportation problems. Manag. Sci. 1954, 1, 49–69.

4. Vogel, W.R. A method for finding initial solutions of the transportation problem. Northwestern Univ. Ind. Eng. Bull. 1958, 3, 1–15.

5. Russell, R.A. An effective heuristic for the transportation problem. Oper. Res. 1969, 17, 187–191.

6. Wireko, F.A.; Mensah, I.D.K.; Aborhey, E.N.A.; Appiah, S.A.; Sebil, C.; Ackora-Prah, J. The maximum range method for finding IBFS for transportation problems. Results Control Optim. 2025, 19, 100551.

7. Abdelati, M. A new approach for finding an initial basic feasible solution. J. Adv. Eng. Trends 2024, 43, 77–85.

8. Amreen, M.; Venkateswarlu, B. A new strategy using exponential distribution for IBFS. Baghdad Sci. J. 2025, 22, 23.

9. Ekanayake, D.; Ekanayake, U. A novel approach algorithm for determining the initial basic feasible solution. Indones. J. Innov. Appl. Sci. 2022, 2, 234–246.

10. Winston, W.L.; Goldberg, J.B. Operations Research: Applications and Algorithms, 5th ed.; Cengage: Boston, MA, USA, 2022.

11. Taha, H.A. Operations Research: An Introduction, 10th ed.; Pearson: London, UK, 2017.

12. Hillier, F.S.; Lieberman, G.J. Introduction to Operations Research, 10th ed.; McGraw-Hill: Columbus, OH, USA, 2015.

13. Hasan, M. On initial feasible solutions for the transportation problem. Int. J. Math. Oper. Res. 2012, 4, 357–369.

14. Shaikh, A.; Pathan, R.; Khan, S. Hybrid initial solution strategies for the transportation problem. J. Appl. Res. Ind. Eng. 2018, 5, 274–284.

15. Sabir, M. Optimal solutions to transportation problems: A case-based analysis. Int. J. Logist. Syst. 2009, 4, 211–224.

16. Sen, D.; Pal, S.; Roy, S. Large-scale cost matrices and industrial logistics planning. Int. J. Ind. Eng. Comput. 2020, 11, 423–440.

17. Dnyaneshwar, D.; Shinde, P.; Patil, S. Exact and heuristic approaches for transportation problems: A survey. Int. J. Oper. Res. 2016, 13, 77–88.

18. Bazaraa, M.S.; Jarvis, J.J.; Sherali, H.D. Linear Programming and Network Flows, 4th ed.; Wiley: Hoboken, NJ, USA, 2010.

19. Rezaul, K.M.; Rahman, M.; Islam, S. On improved initial solutions and optimality tests in TP. J. Appl. Math. Comput. 2021, 67, 201–222.

20. Murugesan, G.; Esakkiammal, C. On efficient optimality checks for the transportation problem. Int. J. Sci. Technol. Res. 2019, 8, 1202–1208.

21. Sanaullah, M.; Fatichah, C.; Erma, S. Total opportunity cost matrix: A new approach to determine IBFS. Egypt. Inform. J. 2019, 20, 131–141.

22. Sharma, J.K. Operations Research: Theory and Applications, 5th ed.; Macmillan: New York, NY, USA, 2014.

23. Pandian, P.; Natarajan, G. A new method for finding an optimal solution for transportation problems. Int. J. Math. Arch. 2012, 3, 1831–1834.

24. Balakrishnan, N. Modified Vogel’s approximation method for transportation problems. Appl. Math. Lett. 1990, 3, 1–3.

25. Aliyu, M.L.; Usman, U.; Babayaro, Z.; Aminu, M. Minimization of Transportation Cost in a Multi-Source Network. Am. J. Oper. Res. 2019, 9, 1–7.

26. Rardin, R.L. Optimization in Operations Research; Prentice Hall: Upper Saddle River, NJ, USA, 1998.

27. Koruloğlu, S.; Ballı, S. Large-scale transportation problem instances and solution characteristics. Comput. Ind. Eng. 2011, 61, 330–340.

28. Al-Sabawi, A.; Hayawi, K. A comparative study of initial solution methods for the transportation problem. J. Oper. Res. 2002, 5, 123–131.

29. Al-Badri, K.; Saleh, H. Efficient approaches for solving transportation problems. Appl. Math. Comput. 2007, 185, 123–132.

30. Hakim, A. Variants of the MODI method for faster optimality in transportation problems. Appl. Math. Sci. 2012, 6, 3621–3632.

31. Aramuthakanan, R. An empirical investigation of solution times in small transportation problems. Asian J. Math. Stat. 2013, 6, 89–96.

32. Sridhar, A.; Pitchai, R. Performance evaluation of optimality methods for large transportation instances. Int. J. Pure Appl. Math. 2018, 118, 3567–3584.

| | D1 | D2 | D3 | D4 | D5 | D6 | Supply |
|---|---|---|---|---|---|---|---|
| P1 | 25 | 30 | 20 | 40 | 45 | 37 | 37 |
| P2 | 30 | 25 | 20 | 30 | 40 | 20 | 22 |
| P3 | 40 | 20 | 40 | 35 | 45 | 22 | 32 |
| P4 | 25 | 24 | 50 | 27 | 30 | 25 | 14 |
| Demand | 15 | 20 | 15 | 25 | 20 | 10 | 105 |

| Line | Smallest cost | Second-smallest cost | Penalty |
|---|---|---|---|
| Row P1 | 20 | 25 | |
| Row P2 | 20 | 20 | |
| Row P3 | 20 | 22 | |
| Row P4 | 24 | 25 | |
| Col D1 | 25 | 25 | |
| Col D2 | 20 | 24 | |
| Col D3 | 20 | 20 | |
| Col D4 | 27 | 30 | |
| Col D5 | 30 | 40 | |
| Col D6 | 20 | 22 | |
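The two numeric columns of the table above can be checked against the demonstration tableau: for every row and column they match the smallest and second-smallest cost in that line. The sketch below recomputes them; the helper name `two_smallest` and the dictionary layout are illustrative assumptions, and the penalty itself is deliberately not computed because its values did not survive in the table.

```python
# Cost matrix of the 4 x 6 demonstration problem (rows P1-P4, columns D1-D6).
costs = [
    [25, 30, 20, 40, 45, 37],
    [30, 25, 20, 30, 40, 20],
    [40, 20, 40, 35, 45, 22],
    [25, 24, 50, 27, 30, 25],
]


def two_smallest(line):
    """Return (smallest, second smallest) cost of a row or column."""
    smallest, second = sorted(line)[:2]
    return smallest, second


row_minima = {f"Row P{i + 1}": two_smallest(row) for i, row in enumerate(costs)}
col_minima = {f"Col D{j + 1}": two_smallest(col) for j, col in enumerate(zip(*costs))}

for name, pair in {**row_minima, **col_minima}.items():
    print(name, pair)
```

Sorting each line is adequate at this size; for large tableaux a single pass that tracks the two smallest values avoids the sort.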

| Example | Problem Size | Sources and Problems | VAM | NWCM | LCM | New Algorithm |
|---|---|---|---|---|---|---|
| 1 | 5 × 5 | [28] Costs = [4 3 1 2 6; 5 2 3 4 5; 3 5 6 3 2; 2 4 4 5 3; 4 3 6 5 1]; Supply = [65 50 40 20 25]; Demand = [60 60 30 40 10] | 420 | 760 | 420 | 420 |
| 2 | 4 × 4 | [29] Costs = [20 16 14 20; 9 15 16 10; 8 13 5 9; 9 6 5 11]; Supply = [9 8 7 5]; Demand = [5 10 5 9] | 308 | 392 | 308 | 308 |
| 3 | 6 × 6 | [29] Costs = [5 1 2 3 4 7; 7 2 3 1 5 6; 9 1 9 5 2 3; 6 5 8 4 1 4; 8 7 11 6 4 5; 2 5 7 5 2 1]; Supply = [400 500 300 150 600 350]; Demand = [300 500 700 300 250 250] | 8400 | 9600 | 8600 | 8400 |
| 4 | 3 × 4 | [30] Costs = [7 3 8 2; 5 6 11 12; 10 4 7 6]; Supply = [100 200 300]; Demand = [80 170 190 160] | 3210 | 4010 | 4010 | 3210 |
| 5 | 5 × 5 | [31] Costs = [46 74 9 28 99; 12 75 6 36 48; 35 199 4 5 71; 61 81 44 88 9; 85 60 14 25 79]; Supply = [461 277 356 488 393]; Demand = [278 60 461 116 1060] | 64,499 | 68,969 | 72,174 | 64,499 |
| 6 | 3 × 3 | [32] Costs = [6 8 10; 7 11 11; 4 5 12]; Supply = [150 175 275]; Demand = [200 100 300] | 5125 | 5925 | 4550 | 4525 |
| 7 | 4 × 6 | [25] Costs = [9 12 9 6 9 10; 7 3 7 7 5 5; 6 5 9 11 3 11; 6 8 11 2 2 10]; Supply = [5 6 2 9]; Demand = [4 4 6 2 4 2] | 112 | 139 | 114 | 112 |
| 8 | 4 × 5 | [26] Costs = [4 3 1 2 6; 5 2 3 4 5; 3 5 6 3 2; 2 4 4 5 3]; Supply = [80 60 40 20]; Demand = [60 60 30 40 10] | 450 | 670 | 420 | 420 |
| 9 | 4 × 4 | [11] Costs = [10 30 25 15; 20 15 20 10; 10 30 20 20; 30 40 35 45]; Supply = [14 10 15 12]; Demand = [10 15 12 15] | 1005 | 1220 | 1075 | 1000 |
| 10 | 4 × 6 | [30] Costs = [1 2 1 4 5 2; 3 3 2 1 4 3; 4 2 5 9 6 2; 3 1 7 3 4 6]; Supply = [30 50 75 20]; Demand = [20 40 30 10 50 25] | 450 | 740 | 450 | 450 |
| 11 | 3 × 3 | [31] Costs = [11 9 6; 12 14 11; 10 8 10]; Supply = [40 50 40]; Demand = [55 45 30] | 1200 | 1490 | 1200 | 1200 |
| 12 | 4 × 5 | [7] Costs = [30 50 40 60 35; 65 35 45 30 25; 35 40 60 40 30; 20 30 50 45 35]; Supply = [20 15 25 20]; Demand = [15 18 10 17 20] | 2600 | 3105 | 2675 | 2600 |
| 13 | 3 × 4 | [17] Costs = [15 12 10 8; 17 18 21 14; 14 15 10 21]; Supply = [24 8 18]; Demand = [11 9 21 9] | 571 | 760 | 595 | 571 |
| 14 | 3 × 5 | [32] Costs = [5 8 6 6 3; 4 7 7 6 5; 8 4 6 6 4]; Supply = [800 500 900]; Demand = [400 400 500 400 800] | 9800 | 13,100 | 10,200 | 9200 |
| 15 | 3 × 4 | [32] Costs = [3 1 7 4; 2 6 5 9; 8 3 3 2]; Supply = [300 400 500]; Demand = [250 350 400 200] | 2850 | 4400 | 2900 | 2850 |
| 16 | 3 × 5 | [26] Costs = [4 1 2 4 4; 2 3 2 2 2; 3 5 2 4 4]; Supply = [60 35 40]; Demand = [22 45 20 18 30] | 275 | 363 | 305 | 273 |
| 17 | 2 × 7 | [12] Costs = [5 19 12 70 66 74 283; 103 89 81 26 23 62 97]; Supply = [4000 47,700]; Demand = [21,600 15,600 15,600 19,500 16,800 10,500 8100] | 2,332,700 | 2,336,000 | 2,348,300 | 1,992,700 |
| 18 | 3 × 4 | [23] Costs = [3 48 14 2; 4 2 30 10; 36 8 12 12]; Supply = [24 24 2]; Demand = [6 12 3 44] | 224 | 906 | 308 | 180 |
| 19 | 4 × 6 | [21] Costs = [9 12 9 6 9 10; 7 3 7 7 5 5; 6 5 9 11 3 11; 6 8 11 2 2 10]; Supply = [5 6 2 9]; Demand = [4 4 6 2 4 2] | 112 | 119 | 114 | 112 |
| 20 | 3 × 4 | [21] Costs = [3 5 7 6; 2 5 8 2; 3 6 9 2]; Supply = [50 75 25]; Demand = [20 20 50 60] | 650 | 670 | 650 | 650 |
| 21 | 4 × 5 | [20] Costs = [10 8 9 5 13; 7 9 8 10 4; 9 3 7 10 6; 11 4 8 3 9]; Supply = [100 80 70 90]; Demand = [60 40 100 50 90] | 2130 | 3010 | 2070 | 2070 |
| 22 | 4 × 6 | [8] Costs = [9 12 9 6 9 10; 7 3 7 7 5 5; 6 5 9 11 3 11; 6 8 11 2 2 10]; Supply = [2 5 6 9]; Demand = [2 2 4 4 4 6] | 109 | 167 | 122 | 109 |
| 23 | 4 × 3 | [22] Costs = [3 4 6; 7 3 8; 6 4 5; 7 5 2]; Supply = [100 80 90 120]; Demand = [110 110 60] | 840 | 1010 | 1210 | 840 |
| 24 | 5 × 4 | [16] Costs = [60 120 75 180; 58 100 60 165; 62 110 65 170; 65 115 80 175; 70 135 85 195]; Supply = [8000 9200 6250 4900 6100]; Demand = [5000 2000 10,000 6000] | 2,164,000 | 2,398,000 | 2,383,000 | 2,160,000 |
| 25 | 3 × 5 | [19] Costs = [4 1 3 4 4; 2 3 2 2 3; 3 5 2 4 4]; Supply = [60 35 40]; Demand = [22 45 20 18 30] | 305 | 363 | 307 | 290 |
| 26 | 3 × 3 | [19] Costs = [6 4 1; 3 8 7; 4 4 2]; Supply = [50 40 60]; Demand = [20 95 35] | 555 | 710 | 555 | 555 |
| 27 | 5 × 5 | [24] Costs = [73 40 9 79 20; 62 93 96 8 13; 96 65 80 50 65; 57 58 29 12 87; 56 23 87 18 12]; Supply = [8 7 9 3 5]; Demand = [6 8 10 4 4] | 1128 | 1994 | 1123 | 1104 |
| 28 | 4 × 6 | [26] Costs = [25 30 20 40 45 37; 30 25 20 30 40 20; 40 20 40 35 45 22; 25 24 50 27 30 25]; Supply = [37 22 32 14]; Demand = [15 20 15 25 20 10] | 2850 | 3195 | 2878 | 2785 |
| 29 | 3 × 4 | [10] Costs = [8 6 10 9; 9 12 13 7; 14 9 16 5]; Supply = [35 50 40]; Demand = [45 20 30 30] | 1020 | 1180 | 1080 | 1020 |
| 30 | 2 × 3 | [6] Costs = [7 8 10; 9 7 8]; Supply = [50 50]; Demand = [40 40 40] | 730 | 730 | 800 | 730 |
| 31 | 5 × 10 | Self. Costs = [6 9 5 7 8 6 4 9 3 7; 7 5 8 6 9 7 5 8 6 4; 8 6 9 7 5 8 6 9 7 5; 5 8 6 9 7 5 8 6 9 7; 9 7 5 8 6 9 7 5 8 6]; Supply = [35 50 45 40 30]; Demand = [15 20 25 10 30 18 12 22 20 28] | 973 | 1434 | 973 | 973 |
| 32 | 10 × 10 | Self. Costs = [4 6 9 7 8 5 7 6 8 9; 7 4 6 9 7 8 5 7 6 8; 8 7 4 6 9 7 8 5 7 6; 6 8 7 4 6 9 7 8 5 7; 5 6 8 7 4 6 9 7 8 5; 9 5 6 8 7 4 6 9 7 8; 7 9 5 6 8 7 4 6 9 7; 6 7 9 5 6 8 7 4 6 9; 8 6 7 9 5 6 8 7 4 6; 6 8 6 7 9 5 6 8 7 4]; Supply = [30 25 40 35 20 50 25 30 20 25]; Demand = [35 20 25 30 15 40 25 35 30 45] | 1285 | 1590 | 1285 | 1285 |
| 33 | 10 × 15 | Self. Costs = [4 7 5 8 6 4 7 5 8 6 4 7 5 8 6; 6 4 7 5 8 6 4 7 5 8 6 4 7 5 8; 8 6 4 7 5 8 6 4 7 5 8 6 4 7 5; 5 8 6 4 7 5 8 6 4 7 5 8 6 4 7; 7 5 8 6 4 7 5 8 6 4 7 5 8 6 4; 9 7 5 8 6 9 7 5 8 6 9 7 5 8 6; 6 9 7 5 8 6 9 7 5 8 6 9 7 5 8; 8 6 9 7 5 8 6 9 7 5 8 6 9 7 5; 5 8 6 9 7 5 8 6 9 7 5 8 6 9 7; 7 5 8 6 9 7 5 8 6 9 7 5 8 6 9]; Supply = [28 42 33 47 22 36 28 50 44 50]; Demand = [22 27 32 36 18 42 30 24 28 20 26 38 34 28 15] | 1819 | 2383 | 1819 | 1763 |
| 34 | 10 × 20 | Self. Costs = [6 9 4 7 5 8 6 5 7 9 4 8 6 7 5 9 6 4 8 5; 7 6 8 5 9 4 7 6 8 5 9 6 5 8 7 4 6 9 5 7; 5 8 6 9 4 7 5 8 6 7 5 9 4 6 8 5 7 4 6 9; 8 5 7 6 9 5 8 4 6 9 7 5 8 6 4 7 5 8 6 7; 9 4 6 8 5 7 3 6 9 5 8 4 7 6 5 8 5 8 6 7; 4 7 5 8 6 9 7 5 8 6 9 7 4 6 9 5 8 7 5 6; 6 5 9 4 7 6 8 5 7 4 6 8 7 5 9 6 4 7 5 8; 5 7 4 6 9 7 5 7 4 6 9 7 5 7 4 6 9 7 5 7; 7 4 6 9 5 8 6 9 5 7 6 4 8 5 7 6 9 5 8 6; 6 8 5 7 4 6 9 7 5 7 4 6 9 7 5 7 4 6 9 7]; Supply = [48 37 55 62 41 59 46 68 44 50]; Demand = [22 22 24 28 26 20 34 25 21 27 23 29 35 23 24 26 28 24 24 25] | 2146 | 3434 | 2235 | 2130 |

| Method | Mean Gap (%) | Median Gap (%) | Std. Dev. (%) | Max Gap (%) |
|---|---|---|---|---|
| NWCM | 45.78 | 24.89 | 73.05 | 403.33 |
| LCM | 14.97 | 2.57 | 29.07 | 71.11 |
| VAM | 5.22 | 0.00 | 7.91 | 42.50 |
| Proposed Variant | 2.78 | 0.00 | 5.89 | 31.25 |
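The aggregated statistics above can be reproduced from per-instance gaps along the following lines. This is a sketch under stated assumptions: the gap is taken to be the relative excess of the IBFS cost over the reference optimum, the sample standard deviation is used (the surviving text does not say whether the sample or population form was applied), and the names `gap_percent` and `summarize` as well as the three-value gap list are hypothetical.

```python
from statistics import mean, median, stdev


def gap_percent(ibfs_cost, optimal_cost):
    """Relative optimality gap of an IBFS, in percent."""
    return 100 * (ibfs_cost - optimal_cost) / optimal_cost


def summarize(gaps):
    """Mean, median, sample standard deviation and maximum of the gaps."""
    return {
        "mean": mean(gaps),
        "median": median(gaps),
        "std": stdev(gaps),      # assumption: sample (n - 1) form
        "max": max(gaps),
    }


# Example 18: NWCM cost 906, assuming the reference optimum equals 180.
print(round(gap_percent(906, 180), 2))

# Hypothetical gap values, used only to show the summary layout.
print(summarize([0.0, 10.0, 20.0]))
```

Under the assumption that the reference optimum for Example 18 is 180, the NWCM cost of 906 gives a gap of 403.33% and the LCM cost of 308 gives 71.11%, which coincide with the maximum gaps reported for those two methods.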

| Example | Problem Size | Sources and Problems | VAM (Time in Seconds) | New Algorithm (Time in Seconds) |
|---|---|---|---|---|
| 1 | 5 × 5 | [28] Costs = [4 3 1 2 6; 5 2 3 4 5; 3 5 6 3 2; 2 4 4 5 3; 4 3 6 5 1]; Supply = [65 50 40 20 25]; Demand = [60 60 30 40 10] | 0.040992 | 0.038122 |
| 2 | 4 × 4 | [29] Costs = [20 16 14 20; 9 15 16 10; 8 13 5 9; 9 6 5 11]; Supply = [9 8 7 5]; Demand = [5 10 5 9] | 0.060489 | 0.055432 |
| 3 | 6 × 6 | [29] Costs = [5 1 2 3 4 7; 7 2 3 1 5 6; 9 1 9 5 2 3; 6 5 8 4 1 4; 8 7 11 6 4 5; 2 5 7 5 2 1]; Supply = [400 500 300 150 600 350]; Demand = [300 500 700 300 250 250] | 0.071573 | 0.051505 |
| 4 | 3 × 4 | [30] Costs = [7 3 8 2; 5 6 11 12; 10 4 7 6]; Supply = [100 200 300]; Demand = [80 170 190 160] | 0.048811 | 0.045997 |
| 5 | 5 × 5 | [31] Costs = [46 74 9 28 99; 12 75 6 36 48; 35 199 4 5 71; 61 81 44 88 9; 85 60 14 25 79]; Supply = [461 277 356 488 393]; Demand = [278 60 461 116 1060] | 0.072355 | 0.054465 |
| 6 | 3 × 3 | [32] Costs = [6 8 10; 7 11 11; 4 5 12]; Supply = [150 175 275]; Demand = [200 100 300] | 0.066016 | 0.051732 |
| 7 | 4 × 6 | [25] Costs = [9 12 9 6 9 10; 7 3 7 7 5 5; 6 5 9 11 3 11; 6 8 11 2 2 10]; Supply = [5 6 2 9]; Demand = [4 4 6 2 4 2] | 0.053182 | 0.048898 |
| 8 | 4 × 5 | [26] Costs = [4 3 1 2 6; 5 2 3 4 5; 3 5 6 3 2; 2 4 4 5 3]; Supply = [80 60 40 20]; Demand = [60 60 30 40 10] | 0.045334 | 0.041321 |
| 9 | 4 × 4 | [11] Costs = [10 30 25 15; 20 15 20 10; 10 30 20 20; 30 40 35 45]; Supply = [14 10 15 12]; Demand = [10 15 12 15] | 0.063444 | 0.057456 |
| 10 | 4 × 6 | [30] Costs = [1 2 1 4 5 2; 3 3 2 1 4 3; 4 2 5 9 6 2; 3 1 7 3 4 6]; Supply = [30 50 75 20]; Demand = [20 40 30 10 50 25] | 0.052848 | 0.050397 |
| 11 | 3 × 3 | [31] Costs = [11 9 6; 12 14 11; 10 8 10]; Supply = [40 50 40]; Demand = [55 45 30] | 0.055022 | 0.052754 |
| 12 | 4 × 5 | [7] Costs = [30 50 40 60 35; 65 35 45 30 25; 35 40 60 40 30; 20 30 50 45 35]; Supply = [20 15 25 20]; Demand = [15 18 10 17 20] | 0.066100 | 0.066043 |
| 13 | 3 × 4 | [17] Costs = [15 12 10 8; 17 18 21 14; 14 15 10 21]; Supply = [24 8 18]; Demand = [11 9 21 9] | 0.061222 | 0.060881 |
| 14 | 3 × 5 | [32] Costs = [5 8 6 6 3; 4 7 7 6 5; 8 4 6 6 4]; Supply = [800 500 900]; Demand = [400 400 500 400 800] | 0.071123 | 0.068456 |
| 15 | 3 × 4 | [32] Costs = [3 1 7 4; 2 6 5 9; 8 3 3 2]; Supply = [300 400 500]; Demand = [250 350 400 200] | 0.028123 | 0.020764 |
| 16 | 3 × 5 | [26] Costs = [4 1 2 4 4; 2 3 2 2 2; 3 5 2 4 4]; Supply = [60 35 40]; Demand = [22 45 20 18 30] | 0.049365 | 0.049004 |
| 17 | 2 × 7 | [12] Costs = [5 19 12 70 66 74 283; 103 89 81 26 23 62 97]; Supply = [4000 47,700]; Demand = [21,600 15,600 15,600 19,500 16,800 10,500 8100] | 0.059800 | 0.055632 |
| 18 | 3 × 4 | [23] Costs = [3 48 14 2; 4 2 30 10; 36 8 12 12]; Supply = [24 24 2]; Demand = [6 12 3 44] | 0.067088 | 0.051764 |
| 19 | 4 × 6 | [21] Costs = [9 12 9 6 9 10; 7 3 7 7 5 5; 6 5 9 11 3 11; 6 8 11 2 2 10]; Supply = [5 6 2 9]; Demand = [4 4 6 2 4 2] | 0.053237 | 0.055003 |
| 20 | 3 × 4 | [21] Costs = [3 5 7 6; 2 5 8 2; 3 6 9 2]; Supply = [50 75 25]; Demand = [20 20 50 60] | 0.048811 | 0.045997 |
| 21 | 4 × 5 | [20] Costs = [10 8 9 5 13; 7 9 8 10 4; 9 3 7 10 6; 11 4 8 3 9]; Supply = [100 80 70 90]; Demand = [60 40 100 50 90] | 0.047493 | 0.046144 |
| 22 | 4 × 6 | [8] Costs = [9 12 9 6 9 10; 7 3 7 7 5 5; 6 5 9 11 3 11; 6 8 11 2 2 10]; Supply = [2 5 6 9]; Demand = [2 2 4 4 4 6] | 0.054543 | 0.054323 |
| 23 | 4 × 3 | [22] Costs = [3 4 6; 7 3 8; 6 4 5; 7 5 2]; Supply = [100 80 90 120]; Demand = [110 110 60] | 0.051605 | 0.038987 |
| 24 | 5 × 4 | [16] Costs = [60 120 75 180; 58 100 60 165; 62 110 65 170; 65 115 80 175; 70 135 85 195]; Supply = [8000 9200 6250 4900 6100]; Demand = [5000 2000 10,000 6000] | 0.051246 | 0.048190 |
| 25 | 3 × 5 | [19] Costs = [4 1 3 4 4; 2 3 2 2 3; 3 5 2 4 4]; Supply = [60 35 40]; Demand = [22 45 20 18 30] | 0.056765 | 0.054321 |
| 26 | 3 × 3 | [19] Costs = [6 4 1; 3 8 7; 4 4 2]; Supply = [50 40 60]; Demand = [20 95 35] | 0.045671 | 0.0430081 |
| 27 | 5 × 5 | [24] Costs = [73 40 9 79 20; 62 93 96 8 13; 96 65 80 50 65; 57 58 29 12 87; 56 23 87 18 12]; Supply = [8 7 9 3 5]; Demand = [6 8 10 4 4] | 0.050447 | 0.048615 |
| 28 | 4 × 6 | [26] Costs = [25 30 20 40 45 37; 30 25 20 30 40 20; 40 20 40 35 45 22; 25 24 50 27 30 25]; Supply = [37 22 32 14]; Demand = [15 20 15 25 20 10] | 0.052754 | 0.048678 |
| 29 | 3 × 4 | [10] Costs = [8 6 10 9; 9 12 13 7; 14 9 16 5]; Supply = [35 50 40]; Demand = [45 20 30 30] | 0.052999 | 0.047592 |
| 30 | 2 × 3 | [10] Costs = [7 8 10; 9 7 8]; Supply = [50 50]; Demand = [40 40 40] | 0.054106 | 0.050773 |
| 31 | 5 × 10 | Self. Costs = [6 9 5 7 8 6 4 9 3 7; 7 5 8 6 9 7 5 8 6 4; 8 6 9 7 5 8 6 9 7 5; 5 8 6 9 7 5 8 6 9 7; 9 7 5 8 6 9 7 5 8 6]; Supply = [35 50 45 40 30]; Demand = [15 20 25 10 30 18 12 22 20 28] | 0.079673 | 0.064325 |
| 32 | 10 × 10 | Self. Costs = [4 6 9 7 8 5 7 6 8 9; 7 4 6 9 7 8 5 7 6 8; 8 7 4 6 9 7 8 5 7 6; 6 8 7 4 6 9 7 8 5 7; 5 6 8 7 4 6 9 7 8 5; 9 5 6 8 7 4 6 9 7 8; 7 9 5 6 8 7 4 6 9 7; 6 7 9 5 6 8 7 4 6 9; 8 6 7 9 5 6 8 7 4 6; 6 8 6 7 9 5 6 8 7 4]; Supply = [30 25 40 35 20 50 25 30 20 25]; Demand = [35 20 25 30 15 40 25 35 30 45] | 0.042330 | 0.037643 |
| 33 | 10 × 15 | Self. Costs = [4 7 5 8 6 4 7 5 8 6 4 7 5 8 6; 6 4 7 5 8 6 4 7 5 8 6 4 7 5 8; 8 6 4 7 5 8 6 4 7 5 8 6 4 7 5; 5 8 6 4 7 5 8 6 4 7 5 8 6 4 7; 7 5 8 6 4 7 5 8 6 4 7 5 8 6 4; 9 7 5 8 6 9 7 5 8 6 9 7 5 8 6; 6 9 7 5 8 6 9 7 5 8 6 9 7 5 8; 8 6 9 7 5 8 6 9 7 5 8 6 9 7 5; 5 8 6 9 7 5 8 6 9 7 5 8 6 9 7; 7 5 8 6 9 7 5 8 6 9 7 5 8 6 9]; Supply = [28 42 33 47 22 36 28 50 44 50]; Demand = [22 27 32 36 18 42 30 24 28 20 26 38 34 28 15] | 0.034432 | 0.032304 |
| 34 | 10 × 20 | Self. Costs = [6 9 4 7 5 8 6 5 7 9 4 8 6 7 5 9 6 4 8 5; 7 6 8 5 9 4 7 6 8 5 9 6 5 8 7 4 6 9 5 7; 5 8 6 9 4 7 5 8 6 7 5 9 4 6 8 5 7 4 6 9; 8 5 7 6 9 5 8 4 6 9 7 5 8 6 4 7 5 8 6 7; 9 4 6 8 5 7 3 6 9 5 8 4 7 6 5 8 5 8 6 7; 4 7 5 8 6 9 7 5 8 6 9 7 4 6 9 5 8 7 5 6; 6 5 9 4 7 6 8 5 7 4 6 8 7 5 9 6 4 7 5 8; 5 7 4 6 9 7 5 7 4 6 9 7 5 7 4 6 9 7 5 7; 7 4 6 9 5 8 6 9 5 7 6 4 8 5 7 6 9 5 8 6; 6 8 5 7 4 6 9 7 5 7 4 6 9 7 5 7 4 6 9 7]; Supply = [48 37 55 62 41 59 46 68 44 50]; Demand = [22 22 24 28 26 20 34 25 21 27 23 29 35 23 24 26 28 24 24 25] | 0.064502 | 0.057820 |


© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Boah, D.K.; Fiele, S.A.; Etwire, C.J. A New Algorithm for Finding Initial Basic Feasible Solutions of Transportation Problems. AppliedMath 2026, 6, 58. https://doi.org/10.3390/appliedmath6040058
