1. Introduction

Metal materials are extensively utilised in critical industries such as aerospace, automotive, and energy equipment. Their service safety and fatigue life directly determine the reliability and total life-cycle cost of major engineering projects [1]. Actual structures often operate under multiaxial conditions such as coupled tension-torsion loading and variable-amplitude fatigue [2]. Crack initiation and propagation exhibit distinct nonlinearity, stage-dependent behaviour, and path dependency: the combined effects of loading history, nonproportional effects, and material parameters render the fatigue evolution complex and challenging to model explicitly. Traditional methods like the Palmgren-Miner linear cumulative damage model struggle to capture load sequence and interactions [3], often yielding systematic biases under sequential effects or non-proportional loading [4]. Empirical regression models, while convenient for engineering applications, exhibit limited generalisation when transferring to new operating conditions [5]. Meanwhile, multi-axis fatigue testing is costly and has limited coverage, constraining model validation and generalisation.

Over the past decades, multiaxial fatigue has been predominantly treated by critical-plane and stress-invariant criteria, such as the classical approaches proposed by Dang Van, Findley, McDiarmid and Matake. These methods project the local stress or strain tensor onto candidate planes and relate suitable combinations of shear and normal components to fatigue strength. They have been successfully applied to a wide range of metallic components under proportional and non-proportional loading, and remain the backbone of many design codes. However, most of these criteria rely on relatively low-dimensional descriptors (for example, equivalent shear stress ranges and mean normal stresses) and simple linear or bilinear damage laws. As a result, their accuracy may degrade when dealing with complex variable-amplitude histories, strong mean-stress effects or geometrically intricate components, especially when only limited experimental data are available to calibrate material parameters.

Recent studies have further demonstrated the practical importance and limitations of traditional multiaxial schemes in engineering applications. For example, Lee et al. analysed the multiaxial fatigue behaviour of additively manufactured Ti-6Al-4V and showed that surface texture and manufacturing-induced defects strongly affect life under complex loading paths, even when conventional criteria are employed for modelling [6]. Venturini et al. combined modal analysis and surrogate meta-models to assess the fatigue strength of automotive steel wheels subjected to service-like multiaxial loads, highlighting the need to couple structural dynamics with fatigue criteria in vehicle components [7]. Liu et al. investigated high-cycle multiaxial fatigue of 30CrMnSiA steel with combined mean tensile and shear stresses, and discussed the capability of critical-plane models to reproduce the observed failure envelopes [8]. These works underscore that, while classical criteria remain valuable for design and interpretation, there is increasing demand for models that can directly exploit rich experimental databases and capture highly nonlinear interactions among loading history, surface condition and material properties.

Against this background, neural-network (NN) approaches can be seen as a data-driven complement to classical multiaxial criteria. Instead of prescribing a specific combination of stress or strain components, deep models learn mappings directly from high-dimensional inputs (such as full loading histories, strain-rate signals and extended sets of material descriptors) to fatigue life. In principle, this enables the network to infer complex, nonlinear interactions that are difficult to encode in closed-form criteria, while still allowing classical models to provide baseline predictions, sanity checks or additional engineered features. The present work follows this complementary philosophy by using multiaxial fatigue data both to build a learning-based predictor and to interpret its behaviour in the light of established fatigue mechanisms [9].

Data-driven approaches offer a novel technical pathway for fatigue life prediction [10,11]. Convolutional networks excel at local/spatial feature extraction, while gated recurrent networks can capture sequence dependencies over extended time scales, demonstrating potential in life-related tasks. However, a single model struggles to simultaneously address the dual demands of “time-series cumulative damage” and “static feature coupling” [12]: time-series models alone inadequately express higher-order relationships in static parameters like stress amplitude and load frequency, while spatial models alone struggle to preserve cyclic history information. Simply blending or concatenating multi-source features often leads to information competition and weight imbalance. Attention mechanisms [13] driven solely by data lack explicit correspondence with critical fatigue mechanism stages (e.g., damage jump phase, rapid stiffness decay phase), compromising interpretability and stability.

To overcome these limitations, hybrid models have emerged as a research focus. Compared to Long Short-Term Memory (LSTM), Gated Recurrent Unit (GRU) structures are simpler with fewer parameters, theoretically offering convergence and generalisation advantages in low-sample-size tasks [14]. This paper adopts the GRU partly on this rationale but does not conduct additional low-sample experiments to validate it. Attention mechanisms can focus on critical damage stages like crack propagation by computing feature weights, thereby amplifying the influence of core information on prediction outcomes [15]. Deep Neural Networks (DNNs) excel at capturing higher-order coupling relationships in static features such as stress amplitude and load frequency through multi-layer nonlinear mappings [16,17]. Based on this, this paper constructs a feature-optimised Hybrid GRU-Attention-DNN fusion model. The overall approach involves decoupling dynamic and static information for modelling, followed by deep interaction in the fusion layer: the dynamic branch employs GRU to represent cyclic history while introducing attention to weight potentially critical damage stages; the static branch utilises multilayer perceptrons to capture high-order nonlinear couplings of static features like stress amplitude and load frequency [18,19]. The fusion layer achieves complementary integration of both information types within a unified representation space. To enhance consistency with the underlying mechanism, the attention design incorporates a lightweight prior bias constructed from the rate of change in strain amplitude. The strength of this bias is a learnable parameter, enabling end-to-end training while guiding the model to focus on phase signals with engineering significance. To reduce redundancy and improve training stability, core features are pre-selected using Pearson correlation coefficients to eliminate noise and invalid inputs [20,21].

Compared with previously reported hybrid deep-learning architectures for fatigue and remaining-life prediction, such as LSTM–attention–Multilayer Perceptron (MLP) models, Convolutional Neural Network (CNN)–Recurrent Neural Network (RNN) cascades and Transformer–GRU schemes, the present framework is designed with three distinctive characteristics that are particularly tailored to multiaxial fatigue data [22]. First, it explicitly decouples static material descriptors from dynamic multiaxial loading histories: scalar mechanical properties and loading parameters are processed by a dedicated DNN branch, whereas the cyclic histories are encoded by a GRU branch. Fusion is only performed at a high-level representation stage, which helps disentangle “material-related” and “path-related” influences on life and avoids the feature competition that may occur when all variables are fed into a single backbone. Second, the attention mechanism is attached to the entire GRU hidden-state sequence and is biased by a physics-inspired prior built from the rate of change in strain amplitude, so that life stages associated with rapid stiffness loss or strain jumps receive systematically higher weights; by contrast, existing LSTM–attention–MLP designs usually apply purely data-driven attention without an explicit link to damage-evolution stages. Third, the static branch is realised as a compact DNN that operates only on a feature-optimised subset of inputs instead of the full metadata table, which reduces redundancy and improves robustness under the moderate sample size of the Materials Cloud dataset. This specific combination of GRU, physics-guided attention and feature-optimised DNN was chosen to balance expressiveness, interpretability and parameter efficiency, and the experiments in Section 3 show that it consistently outperforms single-branch CNN/GRU/LSTM baselines in both accuracy and robustness.

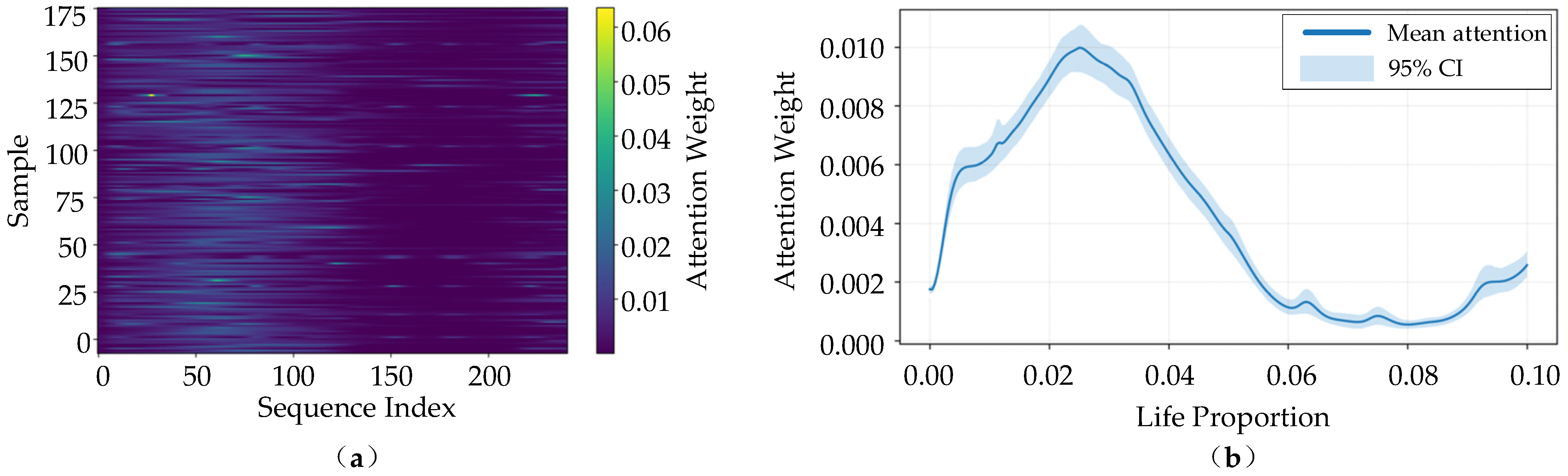

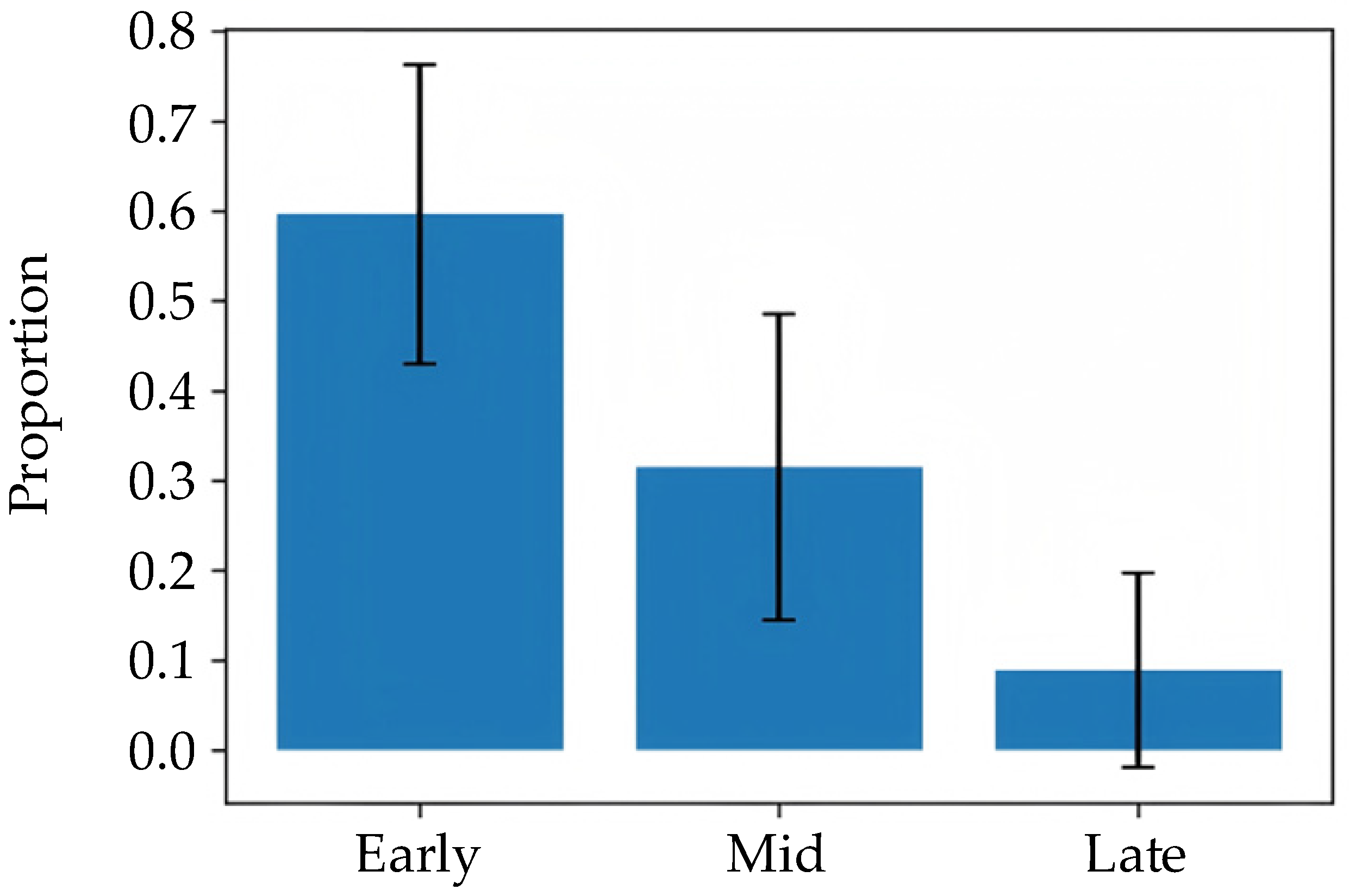

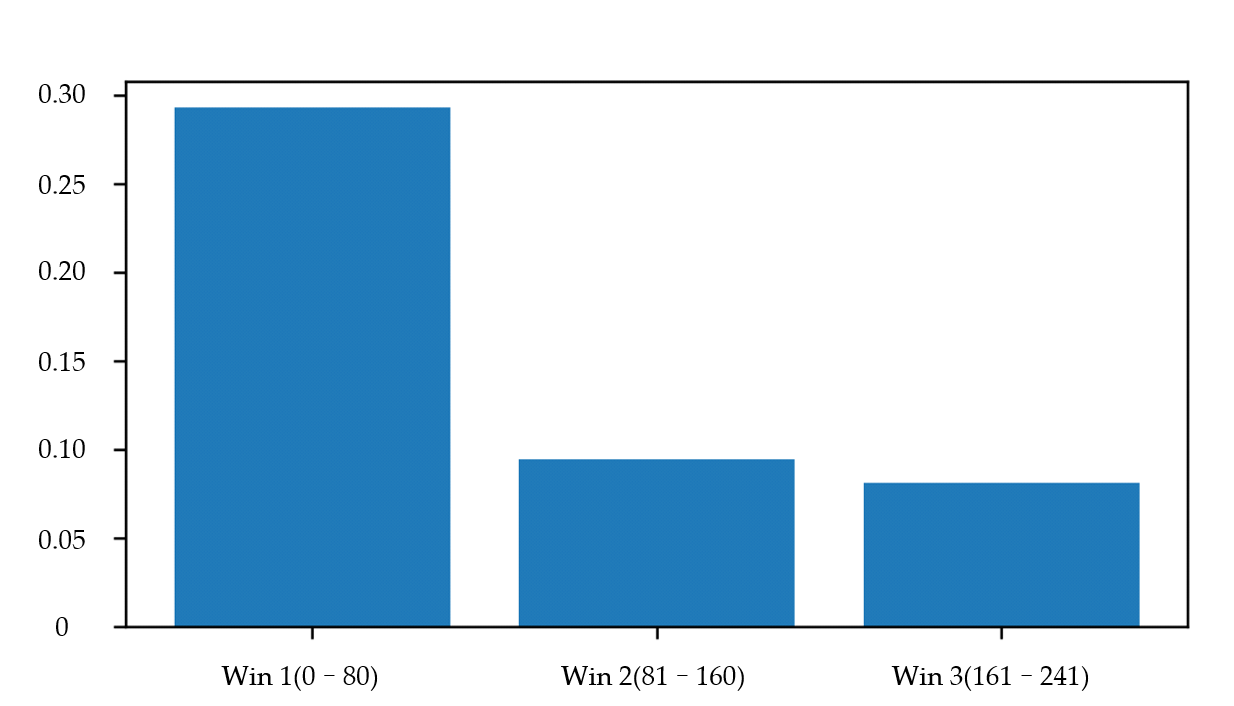

The main contributions of this work can be summarised as follows: (1) A feature-optimised Hybrid GRU–Attention–DNN architecture is proposed for multiaxial fatigue-life prediction, in which dynamic loading histories and static material descriptors are explicitly decoupled and processed by specialised branches before being fused in a joint representation space. (2) A physics-guided attention mechanism biased by strain-rate changes is introduced to highlight critical damage stages, providing improved robustness and enhanced interpretability compared with purely data-driven attention schemes. (3) A comprehensive experimental study is conducted on the Materials Cloud multiaxial fatigue dataset, including comparisons with CNN/GRU/LSTM baselines and bootstrap-based confidence-interval analysis, as well as attention visualisation and time-window masking experiments that jointly reveal the dominant role of early-life dynamic responses in fatigue-life prediction.

The subsequent sections of this paper are structured as follows: Section 2 details the data sources, preprocessing methods, feature selection workflow, and hybrid model construction; Section 3 presents experimental results, including feature selection outcomes, model performance comparisons, and analysis; Section 4 summarises research conclusions and outlines future improvement directions.

2. Data and Methods

2.1. Data Sources and Preprocessing

The data used in this study are derived from the public dataset “A deep learning dataset for metal multiaxial fatigue life prediction” published on Materials Cloud. The original dataset contains 1167 fatigue samples from 40 metallic materials under 48 multiaxial loading paths. Each sample consists of a summary record storing mechanical properties and fatigue life, and a separate CSV file storing the corresponding multiaxial loading path as a two-channel time series. In this work, we select the strain-controlled subset with complete mechanical properties and loading-path files, and obtain 914 samples from 40 materials after basic cleaning, which are further split into 731 training samples and 183 test samples. According to the data descriptor in [23], the experiments were performed on a servo-hydraulic multiaxial fatigue testing machine.

During each test, axial stress and strain responses were recorded by the machine’s data acquisition system and exported as comma-separated value (CSV) files. These CSV files, together with the associated test metadata, are curated by the dataset providers into a structured digital database on the Materials Cloud platform. In the present work, we directly used this processed digital dataset as released by its authors: the metadata table and the loading-path CSV files were downloaded and imported into Python 3.9, and the scalar metadata were merged with the two-channel loading paths to form input–output pairs for subsequent feature construction, normalisation and model training.

Table 1 summarises all fields available in the raw database used in this work, including both metadata and per-test time-series quantities, together with their types, units and roles in the proposed model.

Before modelling, the metadata were checked for quality. An extra empty column that contained only missing entries was removed. For the remaining quantities listed in Table 1, no missing values were detected. To enhance robustness against potential missing data in future applications, a median-based imputation step was retained in the preprocessing pipeline, although it did not modify any samples in the current dataset.

To assess whether any input variables were nearly constant and thus uninformative, we performed a simple variance analysis on the scalar features. For each of the four mechanical properties listed in Table 1, the sample variance was computed over all training specimens. The results show that all four quantities exhibit a clear spread across the dataset, and none of them behaves as a near-constant variable. Therefore, no feature was discarded on the basis of low variance, and all scalar inputs in Table 1 were retained for subsequent modelling.

In addition, Pearson correlation analysis was carried out among the four scalar input features and the fatigue-life target. For each pair of variables, the linear correlation coefficient was computed on the training data to quantify their mutual dependence. The resulting correlation matrix indicates that some mechanical properties (for example, ultimate tensile strength and yield strength) are moderately correlated, whereas their correlations with fatigue life remain at a moderate level and no pair of variables exhibits near-perfect linear dependence. Consequently, none of the features listed in Table 1 was removed on the basis of excessive correlation, and all scalar inputs were retained in the subsequent modelling.

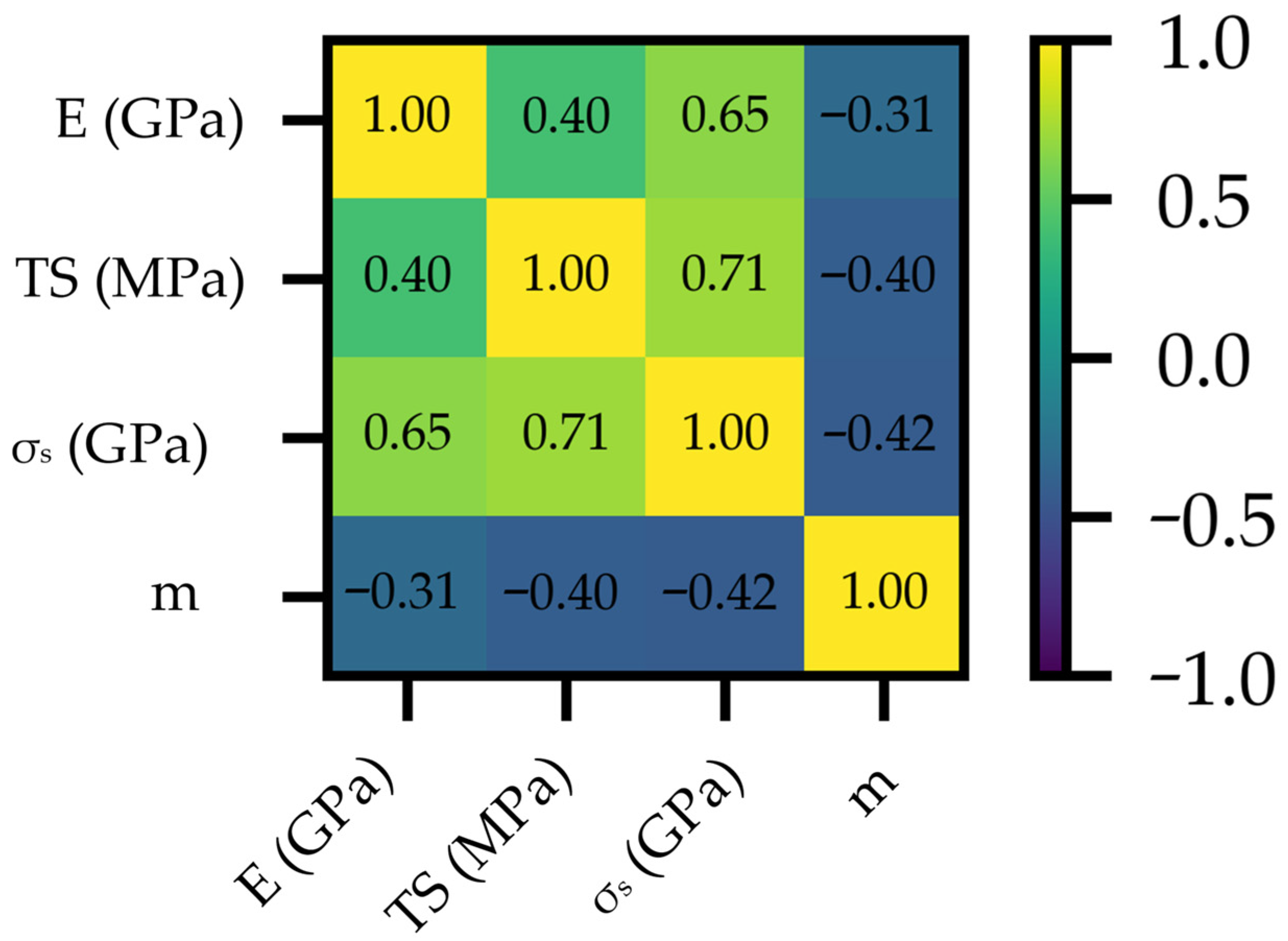

The pairwise Pearson correlations among the four scalar input features, which quantify their linear interdependency, are shown in Figure 1. A strong positive correlation is observed between TS and the yield strength, indicating a high degree of linear dependence between these two strength-related descriptors. E exhibits a moderate correlation with the yield strength and a weaker correlation with TS. In contrast, the hardening exponent m shows negative correlations with the three other mechanical properties (E, TS and the yield strength). Although TS and the yield strength are strongly correlated, all four descriptors were retained because they capture complementary mechanical aspects and are jointly used as inputs in the subsequent modelling.
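The variance check and the Pearson screening described above can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions: the function name `screen_features`, the tolerance `var_tol` and the synthetic data are hypothetical and are not taken from the dataset or from the authors' code.

```python
import numpy as np

def screen_features(X, names, var_tol=1e-8):
    """Return per-feature sample variance, the Pearson matrix and near-constant names."""
    X = np.asarray(X, dtype=float)
    variances = X.var(axis=0, ddof=1)        # sample variance of each column
    corr = np.corrcoef(X, rowvar=False)      # Pearson r between every pair of columns
    near_constant = [n for n, v in zip(names, variances) if v < var_tol]
    return variances, corr, near_constant

# Synthetic stand-in data (NOT the Materials Cloud values).
rng = np.random.default_rng(0)
ts = rng.normal(600.0, 80.0, size=200)             # tensile strength, MPa
ys = 0.8 * ts + rng.normal(0.0, 20.0, size=200)    # yield strength, built to follow TS
e = rng.normal(200.0, 10.0, size=200)              # elastic modulus, GPa
m = rng.normal(0.15, 0.03, size=200)               # hardening exponent
names = ["E", "TS", "YS", "m"]
X = np.column_stack([e, ts, ys, m])

variances, corr, near_constant = screen_features(X, names)
```

In this sketch a feature would be flagged only if its variance falls below `var_tol`; a strongly correlated pair such as TS and yield strength shows up as a large off-diagonal entry of `corr` but is not removed automatically, mirroring the decision to retain all four descriptors.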

All continuous variables were subsequently standardised to make them comparable in scale. For each scalar feature and for the fatigue-life target, the mean $\mu$ and standard deviation $\sigma$ were first computed on the training set, and every value $x$ was then transformed according to $x' = (x - \mu)/\sigma$. The same statistics were used to transform the test set, so that no information from the test data leaked into training. This z-score normalisation was implemented by the StandardScaler routine provided in the scikit-learn library. For the two-channel loading-path time series, the same standardisation procedure was applied separately to each channel before they were fed into the neural network.
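The leakage-free standardisation described above amounts to fitting the statistics on the training data only and reusing them for the test data. The sketch below uses plain NumPy instead of scikit-learn to stay dependency-light; the helper names `fit_standardiser` and `standardise` and the toy arrays are hypothetical.

```python
import numpy as np

def fit_standardiser(train):
    """Compute per-column mean and standard deviation on the training set only."""
    mu = train.mean(axis=0)
    sigma = train.std(axis=0)                     # population std (ddof=0)
    sigma = np.where(sigma == 0.0, 1.0, sigma)    # guard against constant columns
    return mu, sigma

def standardise(x, mu, sigma):
    """Apply the z-score transform with previously fitted statistics."""
    return (x - mu) / sigma

# Toy data with two features on very different scales.
train = np.array([[1.0, 10.0], [2.0, 20.0], [3.0, 30.0], [4.0, 40.0]])
test = np.array([[2.5, 25.0], [5.0, 50.0]])

mu, sigma = fit_standardiser(train)
train_z = standardise(train, mu, sigma)
test_z = standardise(test, mu, sigma)   # reuses training statistics, no refit
```

Because the test set is transformed with the training statistics, its standardised mean and spread are generally not exactly 0 and 1, which is expected.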

After preprocessing, the specimens were randomly divided at the specimen level into a training set and a test set, with 80% of the samples used for training and 20% held out for testing (fixed random seed). As a consequence, different specimens of the same material may appear in both subsets. This setting matches the intended application scenario, where basic mechanical properties of a material are already available from previous tests and the model is used to predict fatigue life under new multiaxial loading paths for materials that have been characterised before. Generalisation to completely unseen materials is beyond the scope of the present study and will be considered in future work using material-wise partitioning.
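A specimen-level random split of this kind can be sketched as follows. The seed value 42 and the function name `specimen_split` are assumptions made for illustration; the text states only that a fixed seed was used.

```python
import numpy as np

def specimen_split(n_specimens, train_fraction=0.8, seed=42):
    """Shuffle specimen indices once and cut them into train and test parts."""
    rng = np.random.default_rng(seed)            # fixed seed for reproducibility
    order = rng.permutation(n_specimens)
    n_train = int(train_fraction * n_specimens)  # floor of the training share
    return order[:n_train], order[n_train:]

train_idx, test_idx = specimen_split(914)
```

With 914 specimens, flooring the 80% share gives 731 training and 183 test samples, which matches the counts reported for the dataset. A material-wise partition would instead shuffle material labels and assign all specimens of a material to the same subset.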

To ensure data quality, outliers were first processed using the $3\sigma$ rule [24]: the mean ($\mu$) and standard deviation ($\sigma$) of each feature were calculated, and data points satisfying Formula (1) were identified as outliers.

$$|x - \mu| > 3\sigma \tag{1}$$

Subsequently, all features were standardised using Z-score normalisation [25], with the formula being:

$$x' = \frac{x - \mu}{\sigma} \tag{2}$$

This transformation maps the feature values to a distribution with a mean of 0 and a standard deviation of 1, thereby eliminating interference from dimensional differences in parameters such as the number of cycles (unit: cycles), stress amplitude (unit: MPa), and load frequency (unit: Hz) during model training.
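The outlier screening step can be illustrated with a short sketch, assuming that the rule referred to above is the common three-sigma criterion (a point is flagged when it lies more than three standard deviations from the feature mean). The function name `three_sigma_mask` and the sample values are hypothetical.

```python
import numpy as np

def three_sigma_mask(x, k=3.0):
    """Boolean mask that is True where |x - mean| > k * std."""
    x = np.asarray(x, dtype=float)
    mu, sigma = x.mean(), x.std()
    return np.abs(x - mu) > k * sigma

# Fifty-two ordinary readings plus one gross outlier at the end.
values = np.concatenate([np.full(50, 10.0), [10.5, 9.5, 100.0]])
mask = three_sigma_mask(values)
cleaned = values[~mask]
```

Note that a single extreme value inflates both the mean and the standard deviation, so on small samples this criterion can miss moderate outliers; robust alternatives based on the median are sometimes preferred.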

2.2. Fusion Model Construction

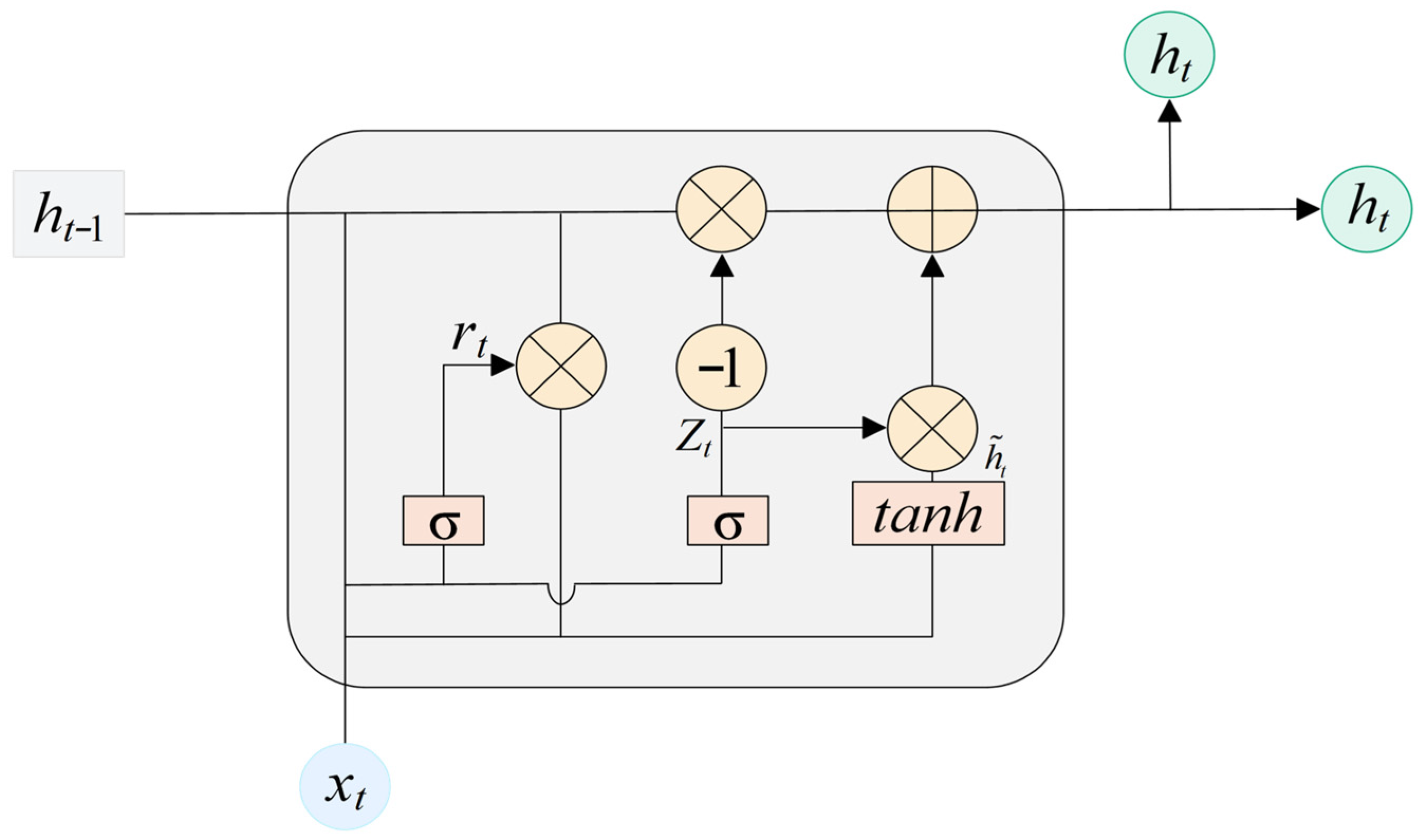

2.2.1. GRU Model

The GRU is a simplified variant of the LSTM network [26,27]. GRU merges the input and forget gates into a single update gate, and uses a reset gate to control how past information is combined with the current input. Like LSTM, GRU retains relevant past information while selectively incorporating new inputs, thereby providing effective memory and mitigating vanishing gradients [28]. The key difference lies in GRU’s removal of the memory control mechanism present in LSTM, thereby simplifying computational complexity, as illustrated in Figure 2.

Owing to its simplified gating, GRU is more compact. The update and reset gates jointly determine how the hidden state is updated at time step $t$. The hidden state is driven by both the current input and the hidden state from the previous time step [29]. These two gates are introduced below.

The update gate determines which information from the previous hidden state should be retained and carried forward to the current time step, as expressed by the formula:

$$z_t = \sigma\left(W_z x_t + U_z h_{t-1} + b_z\right) \tag{3}$$

where $\sigma$ is the sigmoid function, $W_z$ is the input parameter matrix of the update gate, $U_z$ is the connection weight from the hidden state to the update gate, $b_z$ is the bias term, $x_t$ is the input vector at this time step, and $h_{t-1}$ is the hidden state from the previous time step.

The reset gate determines which hidden states from the previous time step should be ignored:

$$r_t = \sigma\left(W_r x_t + U_r h_{t-1} + b_r\right) \tag{4}$$

where $W_r$ is the input weight matrix of the reset gate, $U_r$ is the parameter matrix from the hidden state to the reset gate, and $b_r$ is the offset.

The candidate hidden state is calculated from the current input and the previous hidden state adjusted by the reset gate, and serves as a candidate for updating the hidden state:

$$\tilde{h}_t = \tanh\left(W_h x_t + U_h\left(r_t \odot h_{t-1}\right) + b_h\right) \tag{5}$$

where $\tanh$ is the hyperbolic tangent function, $\odot$ denotes element-wise multiplication, $W_h$ and $U_h$ are the parameter matrices applied to the input and to the reset-gated hidden state, respectively, and $b_h$ is the bias parameter.

The current hidden state is obtained by weighting and combining the previous hidden state and the candidate hidden state through the update gate:

$$h_t = z_t \odot h_{t-1} + \left(1 - z_t\right) \odot \tilde{h}_t \tag{6}$$

In this paper, the temporal inputs are organised by cycle index $t$, where each time step contains two types of dynamic features: “cycle count” and “strain rate”. A single-layer GRU maps sequences of length $T$ to hidden state sequences $\{h_1, \ldots, h_T\}$, where $h_t$ represents a compressed representation of cumulative damage evolution up to the $t$-th cycle pair. Intuitively, if a sudden change in strain magnitude or accelerated damage occurs at a certain stage, the $h_t$ corresponding to adjacent segments will exhibit significant differences, providing discriminative power for subsequent attention weighting.
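To make the recurrence concrete, the following NumPy sketch runs a standard GRU update (update gate, reset gate, candidate state, gated combination) over a two-channel sequence. It is a sketch under stated assumptions, not the authors' implementation: the parameter names, the random initialisation and the convention that the update gate weights the previous state are all illustrative choices, and some references swap the roles of $z_t$ and $1 - z_t$.

```python
import numpy as np

def sigmoid(a):
    return 1.0 / (1.0 + np.exp(-a))

def gru_step(x_t, h_prev, p):
    """One GRU update for a single time step."""
    z = sigmoid(p["Wz"] @ x_t + p["Uz"] @ h_prev + p["bz"])           # update gate
    r = sigmoid(p["Wr"] @ x_t + p["Ur"] @ h_prev + p["br"])           # reset gate
    h_cand = np.tanh(p["Wh"] @ x_t + p["Uh"] @ (r * h_prev) + p["bh"])  # candidate
    return z * h_prev + (1.0 - z) * h_cand                             # new hidden state

def gru_forward(X, p, hidden):
    """Run the cell over a (T, n_in) sequence and return all hidden states (T, hidden)."""
    h = np.zeros(hidden)
    states = []
    for x_t in X:
        h = gru_step(x_t, h, p)
        states.append(h)
    return np.stack(states)

def init_params(n_in, hidden, seed=0):
    """Small random weights, for illustration only (untrained)."""
    rng = np.random.default_rng(seed)
    p = {}
    for g in "zrh":
        p["W" + g] = rng.normal(0.0, 0.1, (hidden, n_in))
        p["U" + g] = rng.normal(0.0, 0.1, (hidden, hidden))
        p["b" + g] = np.zeros(hidden)
    return p

T, n_in, hidden = 241, 2, 64           # sequence length, channels, hidden units
X = np.random.default_rng(1).normal(size=(T, n_in))
H = gru_forward(X, init_params(n_in, hidden), hidden)
```

The returned array `H` holds one 64-dimensional hidden state per time step, which is the form of input expected by an attention layer that pools over the whole sequence.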

In practice, the temporal branch uses a single-layer GRU with 64 hidden units. These hyperparameters were determined by a small grid search (hidden size ∈ {32, 64, 128}, number of layers ∈ {1, 2}) on the validation set. Larger configurations improved the validation accuracy by less than one percentage point but almost doubled the training time and memory consumption. Because the dataset is of moderate size and the model has to be retrained many times for ablation and interpretability studies, running a full Bayesian or evolutionary hyper-parameter optimisation, which would require tens to hundreds of additional full trainings, was not feasible within our computational budget and would not substantially change the conclusions. Therefore, we adopt a limited grid/hand-tuning strategy guided by validation performance and domain knowledge.

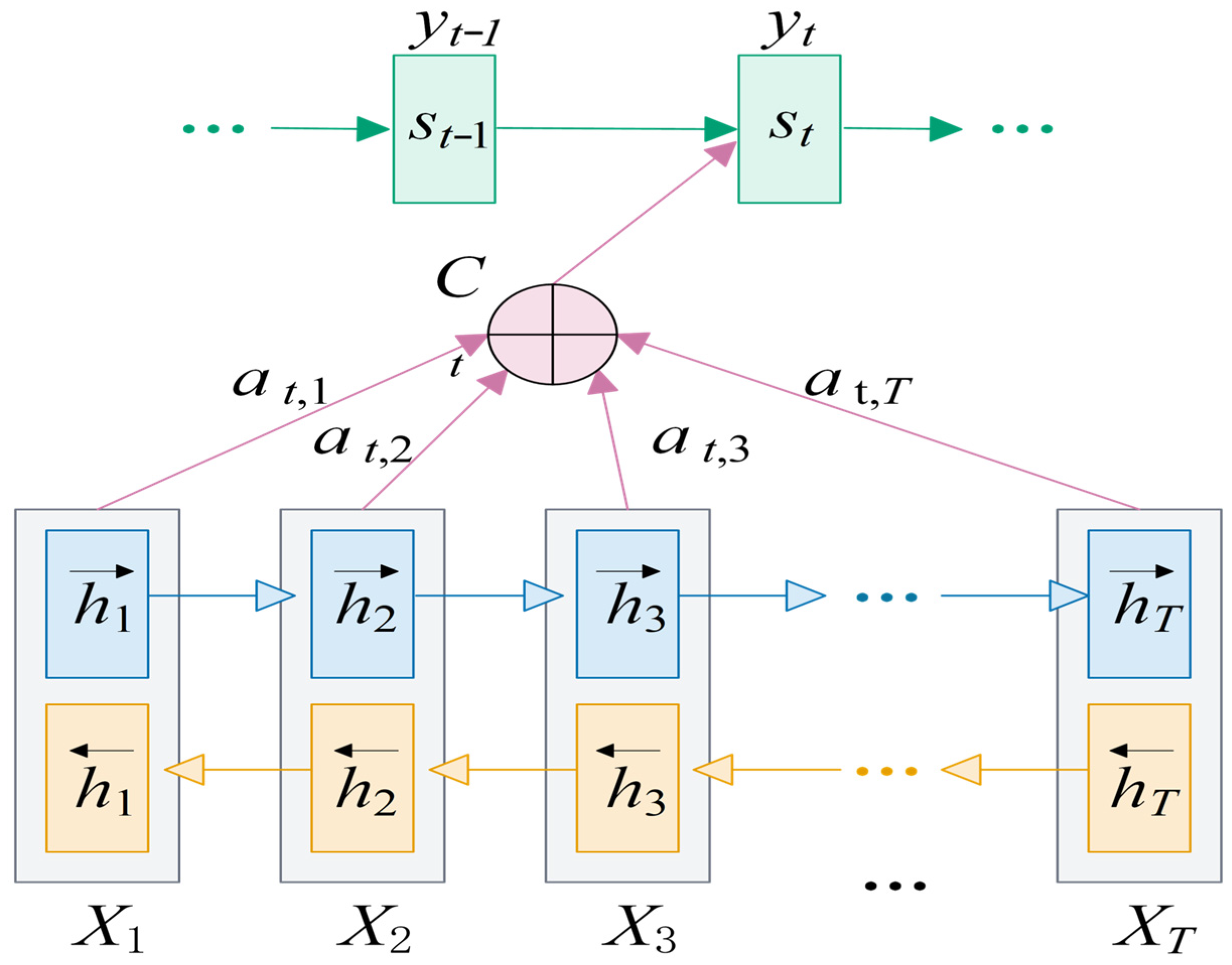

2.2.2. Attention Mechanism

The attention mechanism is a model that simulates the human brain’s attention system. It can be viewed as a composite function that calculates the probability distribution of attention to emphasise the influence of a key input on the output [30].

The Encoder–Decoder model maps a variable-length input $X = (x_1, \ldots, x_T)$ to a variable-length output $Y = (y_1, \ldots, y_{T'})$. Specifically, the Encoder transforms the variable-length input sequence $X$ into an intermediate semantic representation $C = F(x_1, \ldots, x_T)$ through a nonlinear transformation. The Decoder’s task is to predict and generate the output $y_i$ at time step $i$ based on the intermediate semantic representation $C$ of the input sequence $X$ and the previously generated $y_1, \ldots, y_{i-1}$, that is, $y_i = G(C, y_1, \ldots, y_{i-1})$, where both $F$ and $G$ are nonlinear transformation functions. Because the traditional Encoder–Decoder framework lacks discriminative power over the elements of the input sequence $X$, the attention mechanism was introduced to address this issue [31]. The resulting model architecture is illustrated in Figure 3.

Here, $s_t$ represents the hidden state at time step $t$ on the decoder side, $y_t$ denotes the target word, and $c_t$ signifies the context vector. The hidden state at time step $t$ is then calculated as shown in Equation (7), where $f$ is a nonlinear function.

$$s_t = f\left(s_{t-1}, y_{t-1}, c_t\right) \tag{7}$$

$c_t$ depends on the hidden layer representation of the input sequence at the encoding end, which can be expressed as shown in Equation (8) after weighted processing.

$$c_t = \sum_{j=1}^{T} \alpha_{tj} h_j \tag{8}$$

Here, $h_j$ represents the latent vector of the $j$-th word on the Encoder side. It contains information about the entire input sequence but focuses primarily on the vicinity of the $j$-th word. $T$ denotes the input sequence length. $\alpha_{tj}$ represents the attention weight assigned by the $j$-th word in the encoder to the $t$-th word in the decoder, where the sum of all $\alpha_{tj}$ probability values over $j$ equals 1. The calculation formula for $\alpha_{tj}$ is shown in Equation (9).

$$\alpha_{tj} = \frac{\exp\left(e_{tj}\right)}{\sum_{k=1}^{T} \exp\left(e_{tk}\right)} \tag{9}$$

Here, $e_{tj} = a\left(s_{t-1}, h_j\right)$ represents an alignment model used to measure the degree of alignment of a word at position $j$ on the encoder side relative to a word at position $t$ on the decoder side.

This paper uses attention to identify “critical stages” of fatigue. The hidden state obtained by the GRU at each cycle is used as a temporal slice representation, and its similarity with the global sequence summary is first calculated as a base weight. Simultaneously, the normalised absolute value of the “strain rate” extracted from the dynamic features is superimposed on the base weight as a prior bias whose strength is learnable. This naturally amplifies attention intensity during phases of sudden strain changes or rapid stiffness decay. Subsequently, the softmax function yields attention weights for each time step, which are then used to weight and aggregate the hidden states into a temporal feature summary [32].
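The prior-biased pooling described above can be sketched as follows. Several details are assumptions made for illustration: the global sequence summary is taken as the mean hidden state, the strain-rate magnitude is normalised by its maximum, the bias strength `lam` is a plain scalar that would be a trainable parameter in practice, and learned query, key and value projections are omitted for brevity.

```python
import numpy as np

def softmax(a):
    a = a - a.max()                    # shift for numerical stability
    e = np.exp(a)
    return e / e.sum()

def prior_biased_attention(H, strain_rate, lam=1.0):
    """Pool hidden states H (T, d) with attention biased by |strain rate|."""
    T, d = H.shape
    query = H.mean(axis=0)                         # global sequence summary (assumed)
    base = H @ query / np.sqrt(d)                  # scaled dot-product similarity
    prior = np.abs(strain_rate)
    prior = prior / (prior.max() + 1e-12)          # normalise the prior to [0, 1]
    weights = softmax(base + lam * prior)          # one weight per time step
    context = weights @ H                          # weighted temporal summary (d,)
    return context, weights

rng = np.random.default_rng(0)
H = rng.normal(size=(241, 64))         # stand-in for GRU hidden states
rate = np.zeros(241)
rate[100] = 5.0                        # a single strain-rate jump at step 100

ctx_plain, w_plain = prior_biased_attention(H, rate, lam=0.0)
ctx_prior, w_prior = prior_biased_attention(H, rate, lam=2.0)
```

Comparing `w_prior[100]` with `w_plain[100]` shows the intended effect: raising `lam` increases the weight of the time step where the strain rate jumps, while the weights still sum to one.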

The scaled dot-product attention module in this work is deliberately kept lightweight: it shares the same hidden dimension as the GRU output (64), and uses single-head projections for queries, keys and values followed by softmax pooling. In preliminary trials we compared mean pooling, max pooling and the proposed single-head attention, and found that attention consistently improved the prediction accuracy while introducing only a small number of additional parameters. More complex variants with multi-head attention or larger projection dimensions brought only marginal gains (less than one percentage point in validation accuracy) but nearly doubled the computational cost and tended to overfit due to the limited dataset size. For this reason we did not perform a separate large-scale Bayesian search for the attention hyperparameters, and instead fixed this minimal configuration based on the above validation experiments.

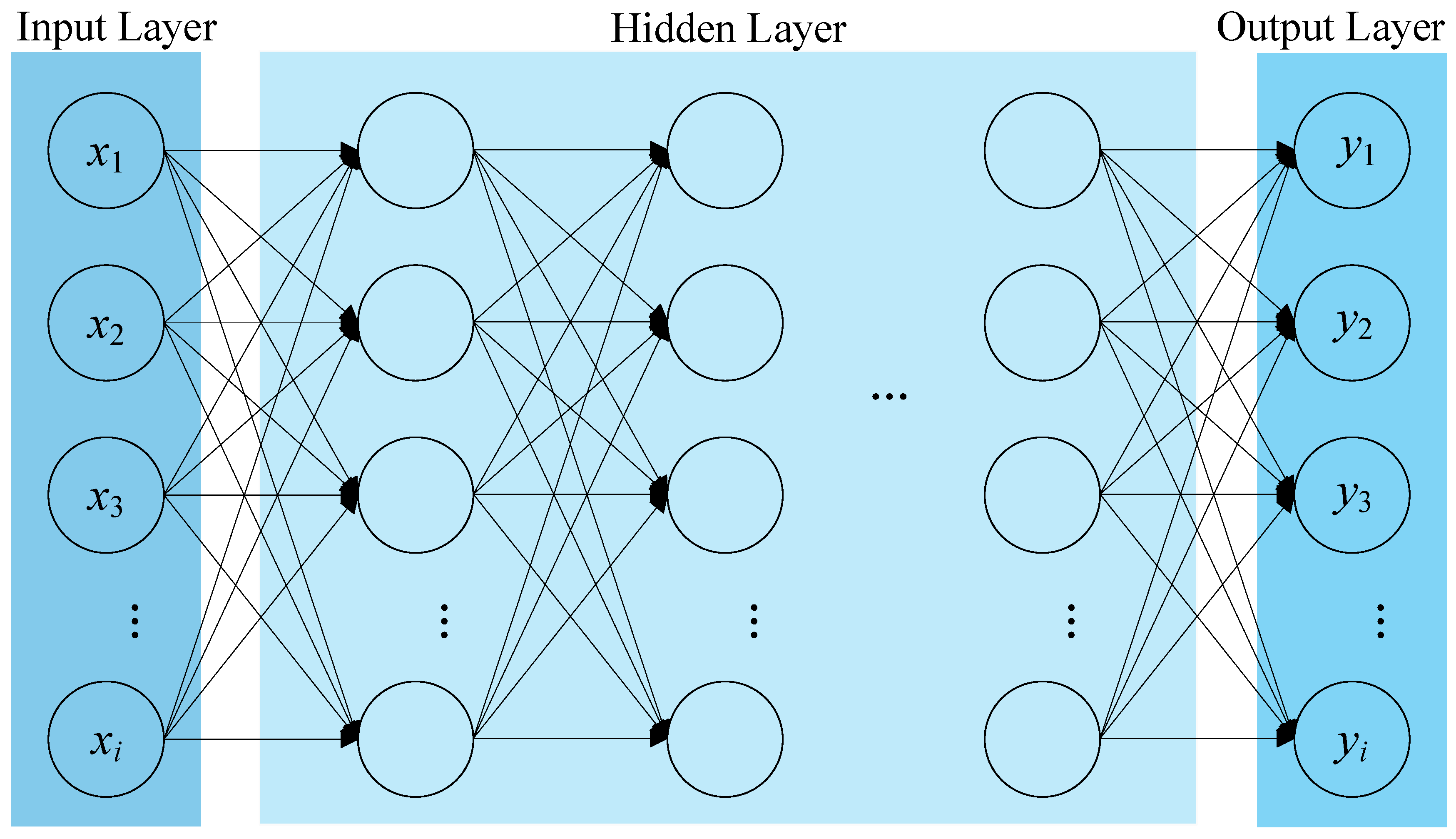

2.2.3. Deep Neural Network Model

A DNN consists of multiple layers of fully connected neurons. Through nonlinear activation functions and multi-layer mapping, it can approximate complex nonlinear functions. In fatigue life prediction problems, DNNs are primarily used for high-order nonlinear modelling of static features such as stress amplitude and load frequency [33].

As shown in Figure 4, a deep neural network can be divided into an input layer, hidden layers, and an output layer. All these layers are fully connected, meaning that any neuron in layer $l$ is connected to all neurons in layer $l+1$. When performing forward propagation in a deep neural network, the calculation formula is as follows:

$$z = \sum_{i=1}^{n} w_i x_i, \qquad y = f\left(z - \theta\right) \tag{10}$$

Here, $x_i$ denotes the $i$-th input variable, $w_i$ represents the weight connected to the $i$-th input variable, $z$ is the weighted sum of all input variables, $\theta$ is the threshold, $f$ is the activation function, and $y$ is the output of the neural network. Common activation functions include ReLU, sigmoid, and tanh. ReLU is frequently employed to mitigate the vanishing gradient problem during backpropagation. This paper utilises ReLU activation in both the input layer and hidden layers. The expression is as follows:

$$f(x) = \max(0, x) \tag{11}$$

In this paper, the DNN module adopts a three-layer architecture (64-32-16 neurons) to progressively extract and compress static features. These are then combined with the temporal features output by the GRU-Attention module for lifespan prediction, thereby achieving collaborative modelling of dynamic and static features.

The fully connected sub-network that processes the static material properties consists of three hidden layers with 64, 32 and 16 neurons, respectively. Each hidden layer uses a linear transformation followed by a ReLU activation, batch normalisation and a dropout layer with a rate of 0.2 to reduce overfitting. The output of this DNN branch is a 16-dimensional feature vector, which is later concatenated with the temporal representation learned by the GRU–attention branch for final fatigue life prediction.
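A forward pass through such a 64-32-16 static branch can be sketched in NumPy as shown here. The sketch is deliberately simplified and rests on assumptions: weights are random and untrained, the input width of 4 is a placeholder for the number of static descriptors, and batch normalisation and dropout are omitted (dropout is inactive at inference, and batch normalisation reduces to a fixed affine map).

```python
import numpy as np

def relu(a):
    return np.maximum(a, 0.0)

def init_mlp(sizes, seed=0):
    """Create (weight, bias) pairs for consecutive layer sizes (He-style scaling)."""
    rng = np.random.default_rng(seed)
    return [
        (rng.normal(0.0, np.sqrt(2.0 / n_in), (n_in, n_out)), np.zeros(n_out))
        for n_in, n_out in zip(sizes[:-1], sizes[1:])
    ]

def static_branch(x, layers):
    """Apply linear + ReLU for each hidden layer; returns the last hidden output."""
    h = x
    for W, b in layers:
        h = relu(h @ W + b)
    return h

n_static = 4                                     # placeholder input width (assumption)
layers = init_mlp([n_static, 64, 32, 16])
x_static = np.random.default_rng(1).normal(size=(8, n_static))   # batch of 8 specimens
features = static_branch(x_static, layers)       # shape (8, 16)
```

The 16-dimensional output per specimen is the static representation that is later concatenated with the temporal summary.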

The sizes of the hidden layers were selected by a small grid and hand-tuning procedure on the validation set. We tested one- to three-layer configurations with hidden widths in the range of 32–128 units and observed that the three-layer setting with 64-32-16 units provided a good balance between prediction accuracy and model complexity. The whole hybrid model was trained end-to-end using the AdamW optimiser with an initial learning rate of $1 \times 10^{-3}$ and a weight decay of $1 \times 10^{-5}$. The batch size was set to 32 and the maximum number of training epochs was 1000, with a learning-rate scheduler that reduces the learning rate when the validation mean squared error stops improving. The model parameters used for testing correspond to the epoch with the lowest validation error.

2.2.4. Integration Method

In complex fatigue life prediction tasks, a single model often struggles to simultaneously capture long-term dependencies in time-series features and nonlinear expressions of static structural characteristics. To overcome this limitation, this paper proposes a Hybrid GRU-Attention-DNN fusion model. Through modular design, this model enables collaborative modelling of multi-source features: GRU captures temporal dependencies during cyclic loading, Attention enhances key stage features, and DNN models nonlinear effects of static parameters like stress amplitude and load frequency. Finally, the fusion layer achieves unified representation and outputs life prediction values.

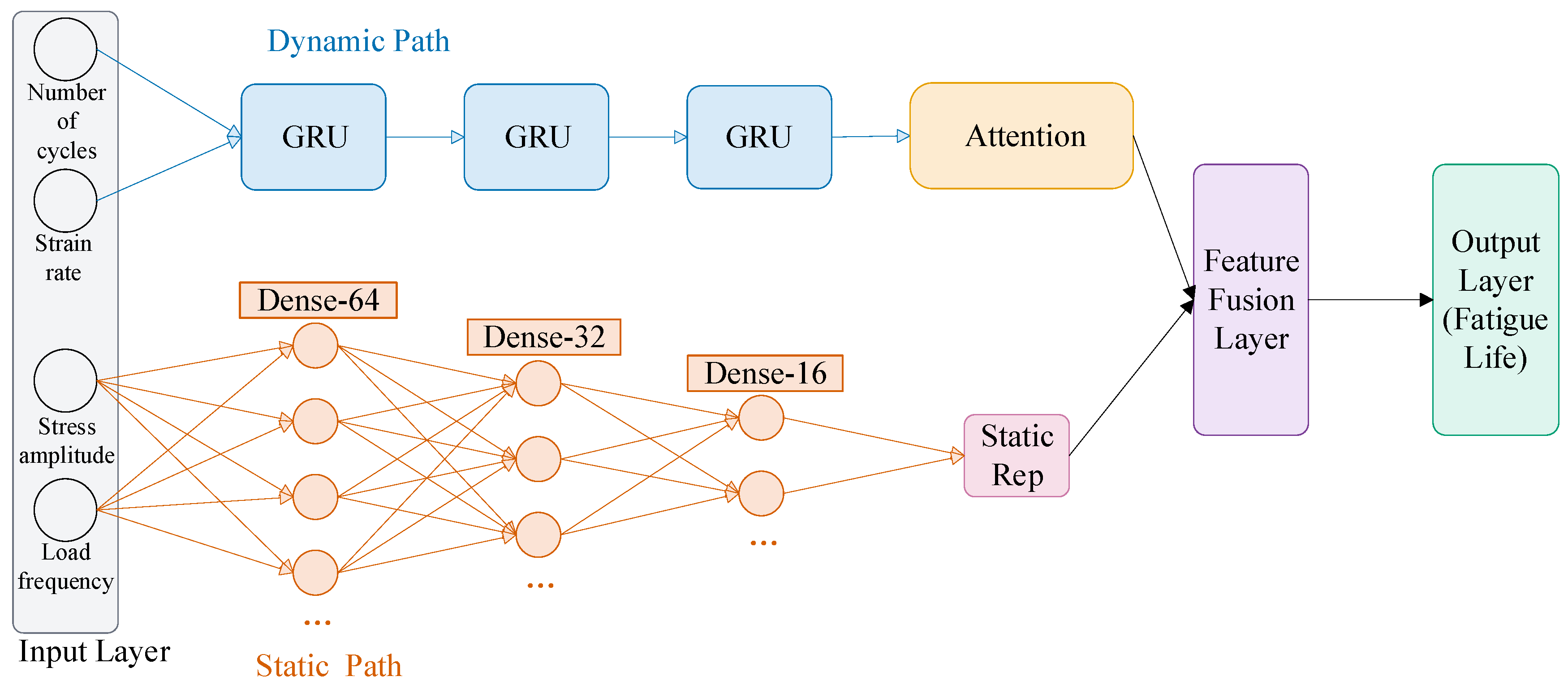

The overall architecture of the Hybrid GRU-Attention-DNN model is illustrated in Figure 5. It comprises six distinct yet seamlessly integrated components: an input layer, a temporal feature extraction module, an attention-weighted module, a static feature modelling module, a feature fusion layer, and an output layer. The input layer normalises raw features and distinguishes between dynamic and static information; the GRU module focuses on capturing fatigue evolution patterns over extended time scales; the Attention layer highlights critical damage stages; the DNN module extracts higher-order relationships among static features; the fusion layer merges both feature types into a unified representation; and the output layer ultimately maps these to fatigue life prediction results.

This integration strategy differs from conventional “sequence encoder + MLP” schemes, where all variables are concatenated and processed by a single backbone. By decoupling static material properties from dynamic loading histories and combining them only at the fusion layer, the proposed model can assign specialised sub-networks to learn long-term temporal patterns and higher-order static relationships, respectively. The attention mechanism then adaptively weighs the temporal hidden states before fusion, so that the final prediction is mainly driven by those life stages that are most critical for damage accumulation. This modular design improves both prediction accuracy and interpretability compared with monolithic architectures.

In the input layer, the original multiaxial fatigue database provides both numerical and categorical descriptors, such as material label, specimen identifier, loading type and specimen geometry, in addition to several fatigue-related variables. To build a compact and physically meaningful input space, this study focuses on four core fatigue features: number of cycles, strain rate, stress amplitude and load frequency. The number of cycles and strain rate are recorded along the loading path and are therefore treated as dynamic temporal features, while the stress amplitude and load frequency are used as static features. Other numerical or categorical fields either contain a large fraction of missing or inconsistent entries, or mainly encode information that is already reflected in these four variables and in the loading histories. Including them would substantially increase the input dimensionality and the risk of overfitting for the given dataset size. To avoid training interference caused by scale differences, all selected features are further processed by Z-score normalisation and batch normalisation.

For each specimen, the dynamic input is constructed from the multiaxial strain-controlled loading history provided in the Materials Cloud database. The history is represented as a two-channel time series consisting of the (normalised) number of cycles and the strain rate. One representative loading block covering the whole fatigue life is extracted for every specimen and uniformly sampled along the life axis to obtain a fixed length of T = 241 points. In this way, all sequences share the same number of time steps and can be stacked into an array of shape (N, T, 2) for N specimens. At each time step, the two channels are standardised by subtracting the mean and dividing by the standard deviation computed from the training set. No additional numerical integration or moving-average smoothing is applied; instead, the GRU–attention branch directly learns temporal patterns such as changes in strain amplitude, load reversals and damage-accumulation stages from the original normalised histories.
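The fixed-length resampling can be illustrated with linear interpolation on a normalised life axis. Linear interpolation via `numpy.interp` is an assumption here; the text specifies only that each history is uniformly sampled to T = 241 points. The function name `resample_history` and the synthetic histories are hypothetical.

```python
import numpy as np

def resample_history(history, n_points=241):
    """Resample a (n_raw, n_channels) history to n_points uniformly spaced samples."""
    history = np.asarray(history, dtype=float)
    n_raw, n_channels = history.shape
    src = np.linspace(0.0, 1.0, n_raw)       # original positions on the life axis
    dst = np.linspace(0.0, 1.0, n_points)    # target positions on the life axis
    return np.column_stack(
        [np.interp(dst, src, history[:, c]) for c in range(n_channels)]
    )

# Two synthetic histories of different raw length (not real test data).
raw_a = np.column_stack([np.linspace(0, 1, 1000), np.sin(np.linspace(0, 6.28, 1000))])
raw_b = np.column_stack([np.linspace(0, 1, 377), np.cos(np.linspace(0, 6.28, 377))])

batch = np.stack([resample_history(r) for r in (raw_a, raw_b)])   # shape (N, T, 2)
```

After resampling, every specimen contributes an array of shape (241, 2), so the histories can be stacked into a single (N, T, 2) tensor and standardised channel by channel with training-set statistics.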

The temporal feature extraction module employs a GRU network. Through dynamic adjustment via update and reset gates, the GRU effectively preserves early damage effects within million-cycle load sequences and propagates them to later stages [34], thereby revealing latent correlations between “low-amplitude cycling” and “high-amplitude cycling” phases. The GRU outputs hidden state vectors embodying temporal evolution patterns.

The attention module then reweights the GRU outputs. It computes the similarity between queries and keys to generate a weight matrix, which is applied to the value vectors [35]. This amplifies the influence of damage transition points (e.g., sudden stiffness-drop phases) on life prediction, enhancing the model’s sensitivity to critical stages.
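The query-key-value weighting can be sketched in NumPy as single-head scaled dot-product self-attention over a sequence of hidden states. This is an illustration under stated assumptions rather than the paper's code: the random projection matrices `w_q`, `w_k`, `w_v`, the hidden size of 64 (chosen only to match the 64-D attention output reported for the fusion layer) and the mean pooling over time are all hypothetical choices.

```python
import numpy as np


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract the max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def scaled_dot_product_attention(h, w_q, w_k, w_v):
    """Single-head self-attention over hidden states h of shape (T, d)."""
    q, k, v = h @ w_q, h @ w_k, h @ w_v
    scores = q @ k.T / np.sqrt(k.shape[-1])  # (T, T) query-key similarities
    weights = softmax(scores, axis=-1)       # each row sums to 1
    context = weights @ v                    # (T, d) weighted value vectors
    return context, weights


rng = np.random.default_rng(0)
T, d = 241, 64
h = rng.normal(size=(T, d))                  # stand-in for GRU hidden states
w_q, w_k, w_v = (rng.normal(scale=d ** -0.5, size=(d, d)) for _ in range(3))
context, weights = scaled_dot_product_attention(h, w_q, w_k, w_v)
pooled = context.mean(axis=0)                # mean pooling is an assumption
```

Averaging the columns of `weights` gives one attention value per time step, which is one possible way to obtain the kind of attention curve used for interpretability analysis.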

The static feature modelling module employs a three-layer DNN. Stress amplitude and load frequency undergo sequential nonlinear mapping to progressively extract their higher-order combinatorial features [36]. This module identifies the coupled effect of “high stress inducing stress concentration + high load accelerating damage accumulation”, outputting a compact static feature representation. To mitigate overfitting, each DNN layer incorporates dropout and batch normalisation [37].

The fusion layer concatenates the attention output (64-D) and the DNN output (16-D) into an 80-D vector, which is fed to a fully connected layer with ReLU to promote interaction between dynamic and static information.
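The shapes involved in the fusion step can be checked with a short NumPy sketch. The 64-D and 16-D branch outputs follow the text; the batch size, the width of the fully connected layer (`hidden_units`) and the weight initialisation are hypothetical, since they are not specified here.

```python
import numpy as np

rng = np.random.default_rng(0)
batch = 4
attn_out = rng.normal(size=(batch, 64))  # dynamic branch output (64-D, from the text)
dnn_out = rng.normal(size=(batch, 16))   # static branch output (16-D, from the text)

# Concatenate along the feature axis to obtain the 80-D fused vector.
fused = np.concatenate([attn_out, dnn_out], axis=1)

hidden_units = 32                        # hypothetical width, not given in the paper
w = rng.normal(scale=np.sqrt(2.0 / 80), size=(80, hidden_units))  # He-style init
b = np.zeros(hidden_units)
joint = np.maximum(fused @ w + b, 0.0)   # fully connected layer followed by ReLU
```

Because every unit of the fully connected layer receives all 80 inputs, each output mixes dynamic and static information, which is the interaction the fusion layer is meant to promote.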

It is worth emphasising that this integration strategy differs from previously reported hybrid architectures that combine LSTM units with attention and MLP heads or embed GRU cells inside Transformer-style temporal encoders. In LSTM–attention–MLP fatigue models, static and dynamic variables are often concatenated and processed by the same recurrent backbone, and attention is applied purely on the hidden states. In contrast, the present Hybrid GRU–Attention–DNN assigns separate, specialised branches to dynamic and static information, and uses attention that is explicitly guided by strain-rate changes, which enhances both physical interpretability and numerical stability. Compared with Transformer–GRU hybrids, which introduce multi-head self-attention layers with a relatively large number of parameters, the proposed design deliberately adopts a lightweight single-layer GRU with single-head scaled dot-product attention. This keeps the parameter count and computational cost commensurate with the available dataset size, reducing the risk of overfitting while still capturing the key temporal patterns that drive fatigue damage accumulation.

The output layer uses a single linear neuron to produce the final fatigue-life prediction, consistent with a regression objective. The mean squared error (MSE) loss function balances sensitivity to overall error and extreme values.

2.3. Verification Plan

To assess the proposed Hybrid GRU-Attention-DNN for multiaxial fatigue-life prediction, we design a systematic validation protocol. The protocol comprises four components: dataset partitioning, training/testing procedures, metric selection, and comparative experiments.

First, after preprocessing, all samples were split at the specimen level into a training set and a held-out test set with an 80/20 ratio using a fixed random seed. From the training set, a validation subset was further split off for model selection and early stopping, while the test set was kept completely untouched until the final evaluation. Because the split is specimen-level rather than material-wise, different specimens of the same material may appear in both training and test sets. Therefore, the reported performance mainly reflects generalisation to unseen loading paths of already characterised materials, rather than to completely unseen materials.
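A specimen-level partition of this kind can be sketched as follows. The 80/20 ratio and the fixed seed follow the description above; the function name `specimen_level_split`, the seed value, the validation fraction of 10% and the specimen count of 100 are assumptions for illustration only.

```python
import numpy as np


def specimen_level_split(n_specimens, test_frac=0.2, val_frac=0.1, seed=42):
    """Shuffle specimen indices once, then carve out test, validation and training sets."""
    rng = np.random.default_rng(seed)         # fixed seed for a reproducible split
    idx = rng.permutation(n_specimens)
    n_test = int(round(test_frac * n_specimens))
    test_idx, rest = idx[:n_test], idx[n_test:]
    n_val = int(round(val_frac * len(rest)))  # validation comes from the training part only
    val_idx, train_idx = rest[:n_val], rest[n_val:]
    return train_idx, val_idx, test_idx


train_idx, val_idx, test_idx = specimen_level_split(100)
```

A material-wise variant would permute material labels instead of specimen indices, so that all specimens of one material fall on the same side of the split.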

Second, the training subset was used for parameter updates, while the validation subset monitored performance during training to guide model selection and early stopping and thereby limit overfitting. Training employed mini-batch gradient descent with the Adam optimizer [38]. The learning rate was selected via a small grid search on the validation subset to balance convergence speed and stability. Other hyperparameters, such as the GRU hidden size, the widths of the DNN layers, the batch size and the dropout rate, were adjusted through several exploratory runs on the validation data. We monitored the training and validation curves under different settings, chose the configuration that achieved a good trade-off between fitting accuracy, generalisation on the validation subset and training time, and kept it fixed when comparing different architectures.
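The early-stopping decision based on the validation subset can be illustrated with a small framework-independent helper. The class `EarlyStopping`, its `patience` and `min_delta` parameters and the validation curve below are hypothetical; the stopping rule actually used in the study is not specified.

```python
class EarlyStopping:
    """Stop when the validation loss has not improved for `patience` epochs."""

    def __init__(self, patience=20, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.best_epoch = -1
        self.bad_epochs = 0

    def step(self, epoch, val_loss):
        """Record one epoch; return True if training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best, self.best_epoch, self.bad_epochs = val_loss, epoch, 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


# Usage with a made-up validation curve standing in for real training.
stopper = EarlyStopping(patience=3)
val_curve = [1.0, 0.8, 0.7, 0.72, 0.71, 0.75, 0.9]
stopped_at = None
for epoch, loss in enumerate(val_curve):
    if stopper.step(epoch, loss):
        stopped_at = epoch
        break
```

In practice the model weights from `best_epoch` would be restored before evaluating on the test set.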

During testing, the model outputs the logarithmic fatigue life. Both predictions and ground truths are transformed back to the cycle-life scale by inverting the logarithmic transform, and all metrics in Equations (13)–(16) are computed on the original life scale.

Regarding evaluation metrics, this paper adopts four complementary indicators: the coefficient of determination (R²), root mean squared error (RMSE), mean absolute error (MAE), and root mean squared logarithmic error (RMSLE). As stated above, the predicted and true fatigue lives are first mapped back from the logarithmic outputs to the original number-of-cycles scale, and all four metrics are then computed on this scale. MAE reflects the model’s ability to control absolute deviations between predicted and true fatigue lives. RMSE penalises larger errors more strongly than MAE and therefore highlights occasional large deviations. RMSLE evaluates the discrepancy between predicted and true values on a logarithmic scale, which is suitable when fatigue life spans several orders of magnitude and engineering practice often cares about relative rather than purely absolute errors. R² measures the goodness of fit (with values closer to 1 indicating better agreement). Combining these four metrics provides a comprehensive assessment of both absolute accuracy and relative stability across the entire life range. Their respective calculation formulas are:

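$$
R^2 = 1 - \frac{\sum_{i=1}^{n} \left( y_i - \hat{y}_i \right)^2}{\sum_{i=1}^{n} \left( y_i - \bar{y} \right)^2}
$$

$$
\mathrm{RMSE} = \sqrt{\frac{1}{n} \sum_{i=1}^{n} \left( y_i - \hat{y}_i \right)^2}
$$

$$
\mathrm{MAE} = \frac{1}{n} \sum_{i=1}^{n} \left| y_i - \hat{y}_i \right|
$$

$$
\mathrm{RMSLE} = \sqrt{\frac{1}{n} \sum_{i=1}^{n} \left[ \ln\left( 1 + \hat{y}_i \right) - \ln\left( 1 + y_i \right) \right]^2}
$$

where $y_i$ and $\hat{y}_i$ denote the ground-truth and predicted fatigue lives of the $i$-th sample on the original cycle-life scale, respectively; $n$ is the number of test samples; $\bar{y}$ is the mean of the ground-truth values; and $\ln$ denotes the natural logarithm. The $\ln(1 + \cdot)$ transform in RMSLE is used to evaluate relative discrepancies and to avoid taking the logarithm of zero.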
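For reference, the four metrics can be computed with a few lines of NumPy. This is a sketch using the standard definitions described above, not the authors' evaluation script; the helper names `to_cycles` and `fatigue_metrics` are hypothetical, and the base-10 logarithm in the back-transform is an assumption, since the base of the logarithmic life is not restated here.

```python
import numpy as np


def to_cycles(log_life, base=10.0):
    """Invert the logarithmic life transform (base 10 is an assumption)."""
    return np.power(base, np.asarray(log_life, dtype=float))


def fatigue_metrics(y_true, y_pred):
    """R2, RMSE, MAE and RMSLE on the original cycle-life scale."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    err = y_pred - y_true
    ss_res = np.sum(err ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    # log1p(x) = ln(1 + x), which stays finite when a life value is zero.
    log_err = np.log1p(y_pred) - np.log1p(y_true)
    return {
        "R2": 1.0 - ss_res / ss_tot,
        "RMSE": np.sqrt(np.mean(err ** 2)),
        "MAE": np.mean(np.abs(err)),
        "RMSLE": np.sqrt(np.mean(log_err ** 2)),
    }


# Toy values chosen so the results can be checked by hand.
metrics = fatigue_metrics([1.0, 2.0, 3.0], [1.0, 2.0, 4.0])
lives = to_cycles([3.0, 4.0])
```

For the toy values, the only error is one cycle on the third sample, so MAE is 1/3, RMSE is the square root of 1/3, and R² is 0.5 because the residual sum of squares (1) is half the total sum of squares (2).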

4. Conclusions

This study proposed a feature-optimised Hybrid GRU–Attention–DNN model for predicting the multiaxial fatigue life of metallic materials. By assigning specialised branches to dynamic loading histories and static descriptors (stress amplitude and load frequency) and fusing them in a joint representation space, the model simultaneously captures temporal damage evolution and higher-order couplings between the static features and the loading paths.

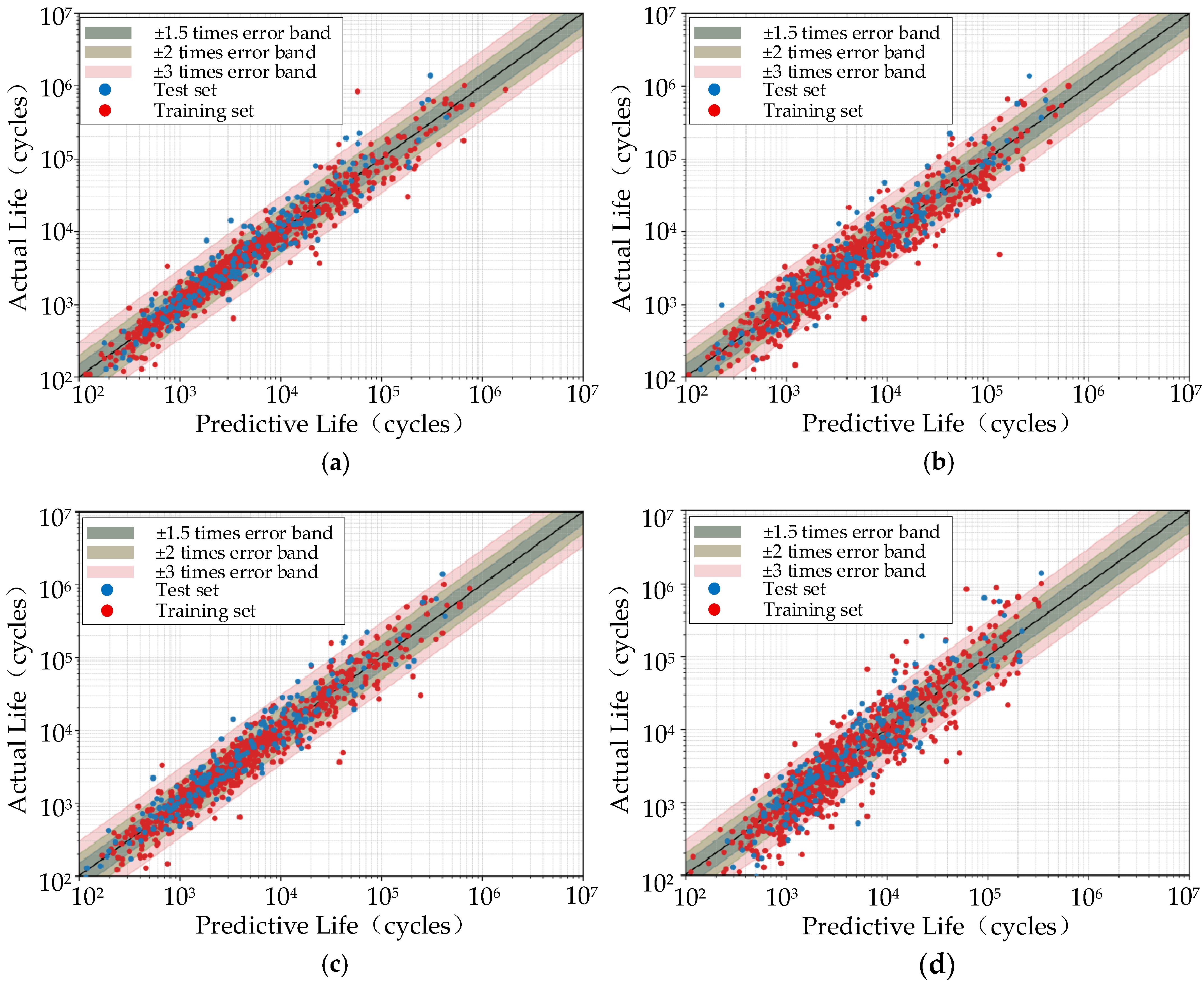

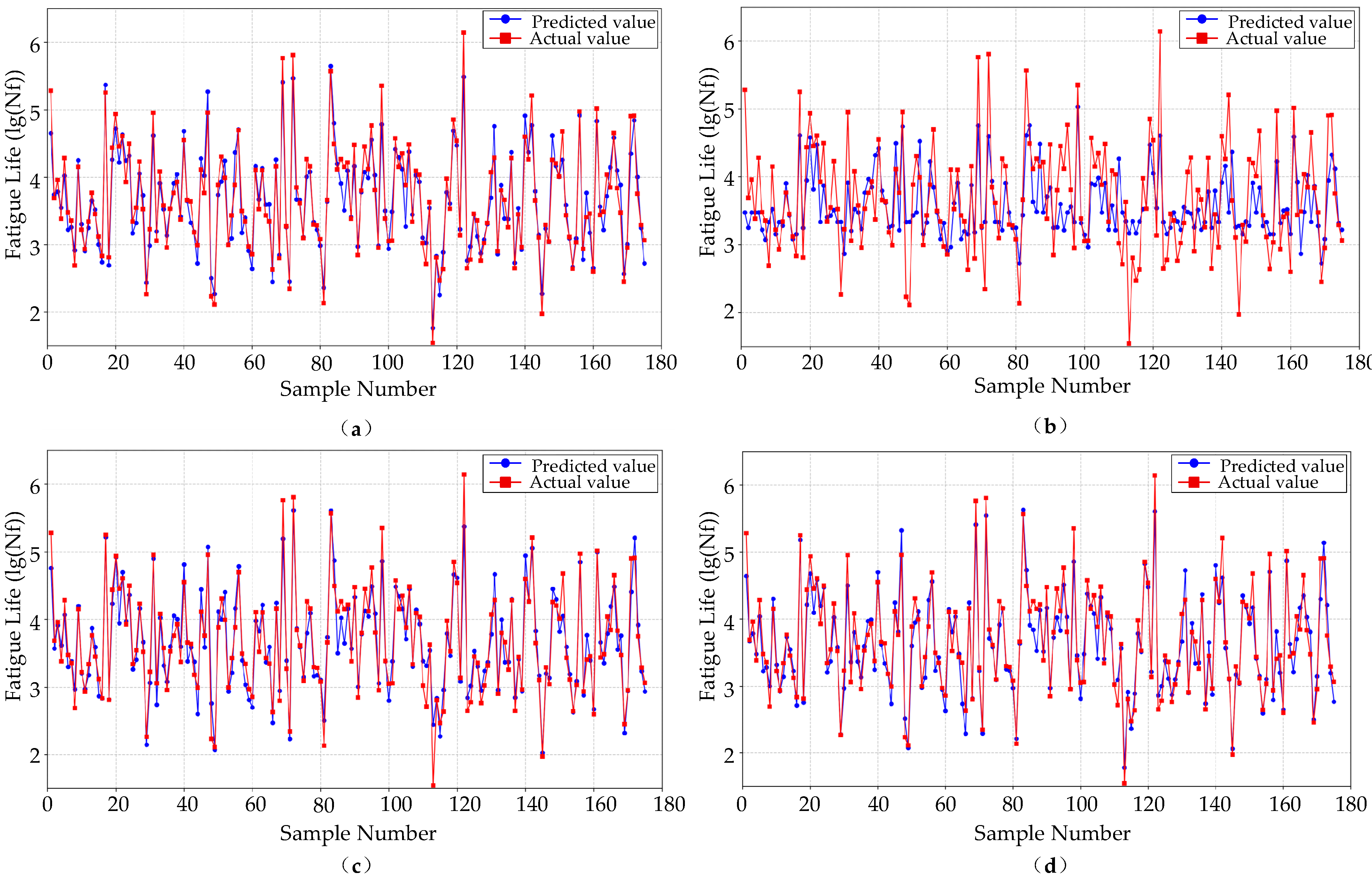

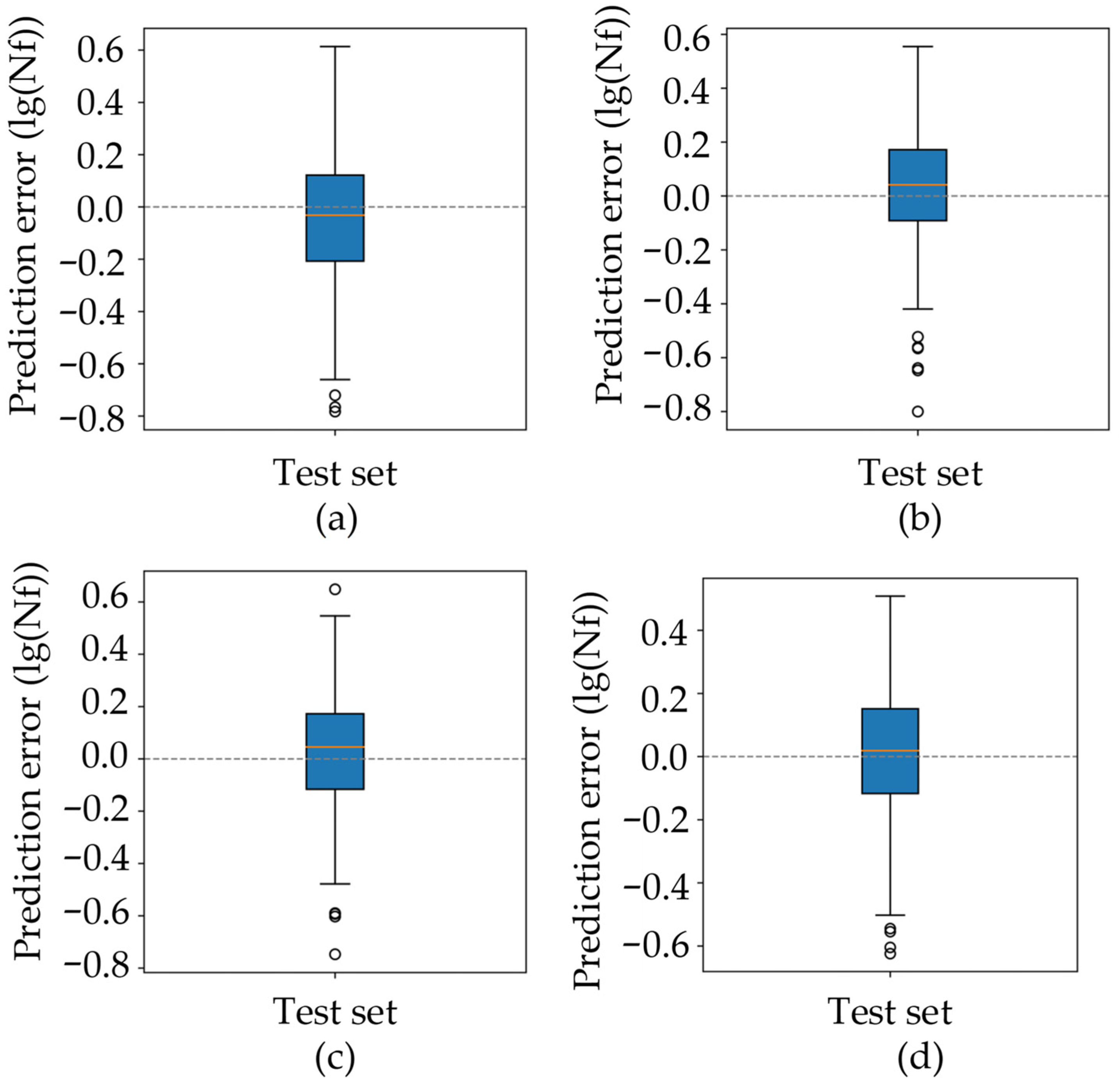

On the Materials Cloud multiaxial fatigue dataset, the proposed Hybrid GRU–Attention–DNN consistently outperforms the baseline CNN, GRU and LSTM models. On the held-out test set, it achieves the highest R² (0.9156) and the lowest MAE, RMSE and RMSLE among all candidates, indicating both improved global fitting quality and reduced absolute as well as relative errors. Error-band plots show that more than 90% of the predictions fall within the ±1.5× band, with markedly fewer outliers than the baselines, which demonstrates the overall superiority of the proposed architecture.

Analysis of residual boxplots and bootstrap-based confidence intervals further reveals that the performance gain is statistically robust rather than driven by a few favourable samples. The Hybrid model exhibits the narrowest interquartile range and the smallest extreme residuals, and its 95% confidence intervals of R², RMSE, MAE and RMSLE are consistently shifted towards better values compared with CNN and LSTM, while being comparable to or slightly better than GRU. These results suggest that the proposed fusion strategy effectively enhances robustness against variability in loading paths and material properties, which is crucial for practical fatigue assessment.

The interpretability analysis provides additional insight into the prediction mechanism. Attention visualisation reveals a stable “early-life attention band”, where the average attention curve peaks in the early stage of the loading history and the Early/Mid/Late attention proportions follow an approximate 0.59/0.32/0.09 pattern. Time-window masking experiments show that removing the Early segment causes the largest increase in RMSLE, whereas masking the Mid or Late segments leads to only minor degradation. These two independent lines of evidence consistently indicate that early dynamic responses carry the most informative and irreplaceable cues for life prediction, which agrees with the physical understanding that initial stiffness evolution and early damage accumulation strongly influence subsequent fatigue life.

From an engineering perspective, the proposed model provides a practical tool for multiaxial fatigue assessment when only a moderate number of tests are available. It can be used to rapidly screen candidate loading paths or component geometries and to support design decisions by quantifying the influence of both loading histories and static material parameters. Nevertheless, the present evaluation protocol has some limitations. The train–test split is performed at the specimen level, so different specimens of the same material may appear in both sets; consequently, the reported performance mainly reflects generalisation to unseen loading paths of already characterised materials rather than to completely new alloys. In addition, the dataset covers a finite set of materials and loading conditions, and the current model does not explicitly incorporate microstructural information or crack-growth mechanics. Future work will consider material-wise partitioning or leave-one-material-out evaluation to more exhaustively assess generalisation to unseen materials. Extending the framework to other fatigue-critical components and to variable-temperature or environmental loading conditions is also an important direction.