A Review of Robots, Perception, and Tasks in Precision Agriculture

Abstract

1. Introduction

- cost reductions,

- optimisation of yields and quality in relation to the productive capacity of each site,

- better management of the resources, and

- protection of the environment.

Sustainable Development Goals in Agriculture

- Adopting sustainable agricultural techniques that boost productivity and production, support ecosystem sustainability, strengthen the capacity to respond to climate change, extreme weather, droughts, floods, and other calamities, and progressively improve land and soil quality is critical to reaching the SDG2 goal of Zero Hunger by 2030 [24]. Farmers and agricultural organisations that use PA gain access to technologies that help them increase yields, check product quality, enhance crop management, and minimise resource usage costs, all of which help to assure global food security and alleviate hunger.

- By 2030, global water consumption is expected to have increased by more than 50%, with agriculture alone requiring more than can be provided. PA solutions help farmers manage agrochemicals and minimise the overuse or unnecessary application of fertilisers and pesticides, protecting water and soil quality in line with SDG6: Clean Water and Sanitation [25], which encourages access to and sustainable management of water and sanitation.

- As part of SDG12: Responsible Consumption and Production [26], the UN aims to achieve the sustainable management and efficient use of natural resources. Through active innovation in product development, the PA approach helps farmers manage phytosanitary products and other waste safely and avoid harmful substances where possible, reducing their negative effects on soil, water, and air, and thus on human health.

- SDG13: Climate Action [27] calls for immediate measures to address climate change and its implications. PA methods provide users with tools and technology that not only assure sustainability and increase output and profit, but also allow them to monitor the climate and take appropriate preventative steps to safeguard both fields and the environment.

- Soil degradation is a severe and growing threat, driven by unsustainable land use and management practices as well as catastrophic climatic events, and it introduces a variety of social, economic, and governance challenges. PA contributes to the SDG15: Life on Land [28] targets by encouraging measures that promote land and soil restoration, degradation prevention, and sustainable use through planned and appropriate crop and field interventions.

2. Review Methodology

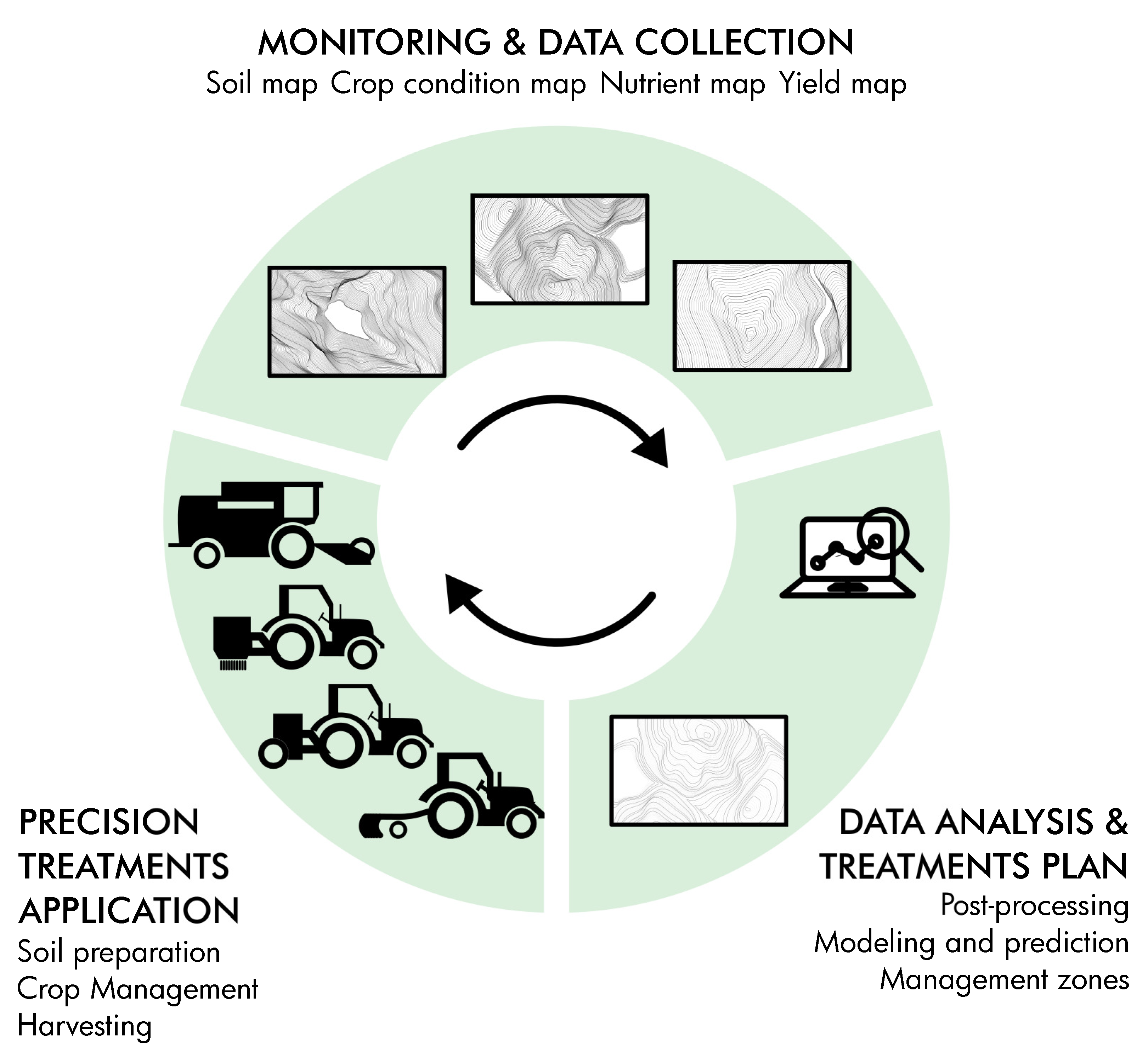

3. Enabling Technologies in Precision Agriculture

- Navigation: The vehicle must move in the field independently, following a predetermined course through key waypoints while avoiding obstacles and collisions.

- Sensing: The PA vehicle must be capable of detecting, measuring, and sampling anything that might be relevant in planning crop and soil management operations.

- Mapping: Sensing activities generate a large amount of data, which is generally processed in Geographic Information Systems (GIS) to produce maps that collect all essential field features.

- Action: Mapping divides the field into management zones (MZs), and the necessary treatment is then carried out in each MZ. PA vehicles must therefore be equipped to carry out such operations autonomously.
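The sensing, mapping, and action steps listed above can be sketched as a small pipeline. This is only an illustrative sketch, not a method taken from the reviewed works: the function names `delineate_zones` and `plan_treatment`, the threshold values, the sample readings, and the application rates are all hypothetical, and real zone delineation typically relies on richer data and clustering methods.

```python
from statistics import mean


def delineate_zones(samples, thresholds):
    """Group (x, y, value) samples into zones using ascending thresholds.

    Zone 0 holds values below the first threshold, zone 1 the next band, etc.
    """
    zones = {}
    for x, y, value in samples:
        zone = sum(value >= t for t in thresholds)  # count thresholds reached
        zones.setdefault(zone, []).append((x, y, value))
    return zones


def plan_treatment(zones, rates):
    """Attach a (hypothetical) application rate to each zone."""
    plan = {}
    for zone, points in sorted(zones.items()):
        plan[zone] = {
            "mean_value": mean(v for _, _, v in points),
            "rate": rates[zone],
            "cells": [(x, y) for x, y, _ in points],
        }
    return plan


# Sensing: made-up vigour readings on a 2 x 2 grid.
samples = [(0, 0, 0.2), (0, 1, 0.5), (1, 0, 0.8), (1, 1, 0.9)]
# Mapping: two illustrative thresholds give three management zones.
zones = delineate_zones(samples, thresholds=[0.4, 0.7])
# Action: illustrative rates, higher where the reading is lower.
plan = plan_treatment(zones, rates=[30.0, 20.0, 10.0])
for zone, entry in plan.items():
    print(zone, entry["rate"], entry["cells"])
```

Keeping delineation and treatment planning as separate functions mirrors the separation between the mapping and action capabilities: the map can be recomputed from new sensor data without changing how treatments are assigned.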

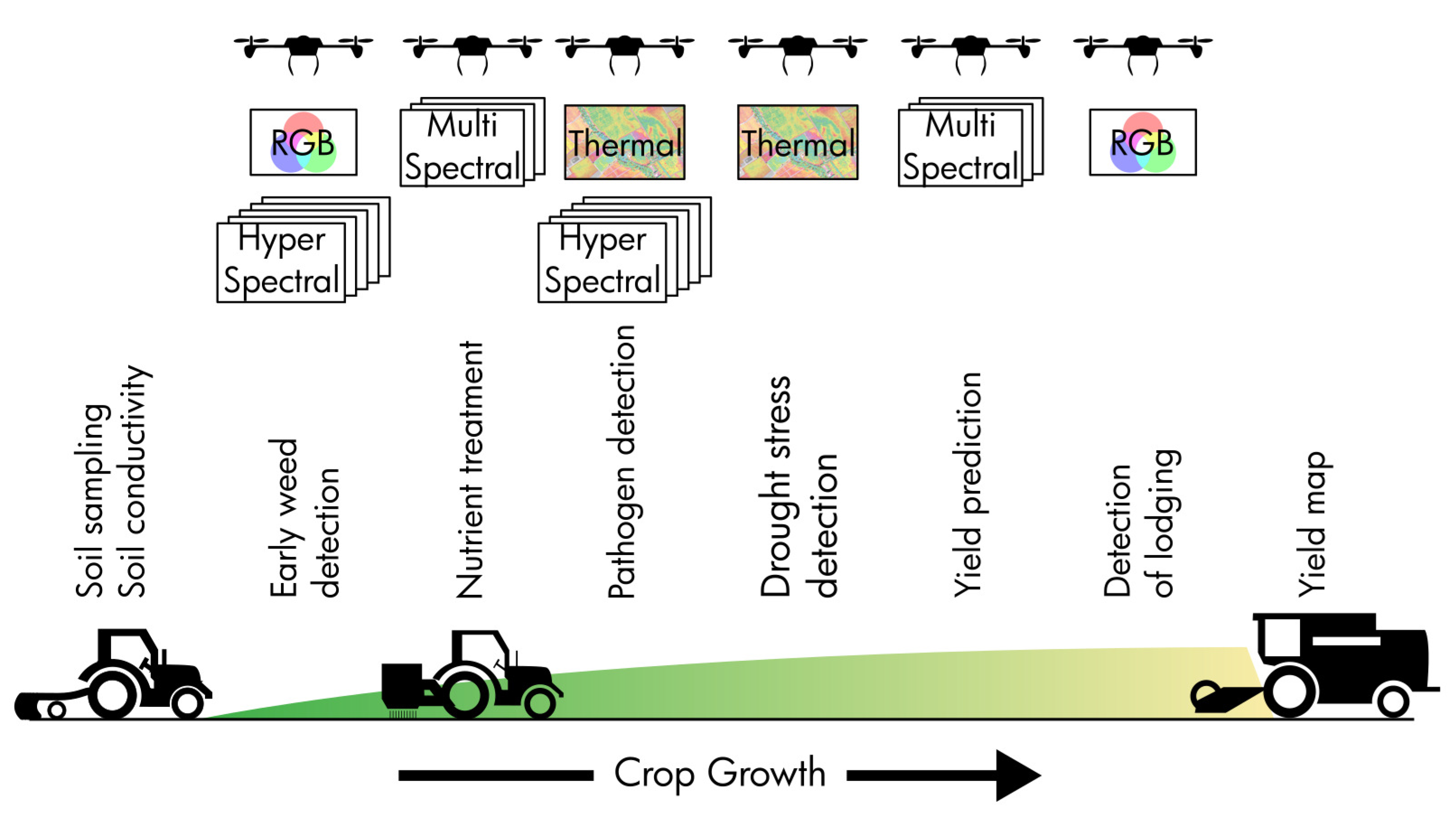

3.1. Remote Sensing

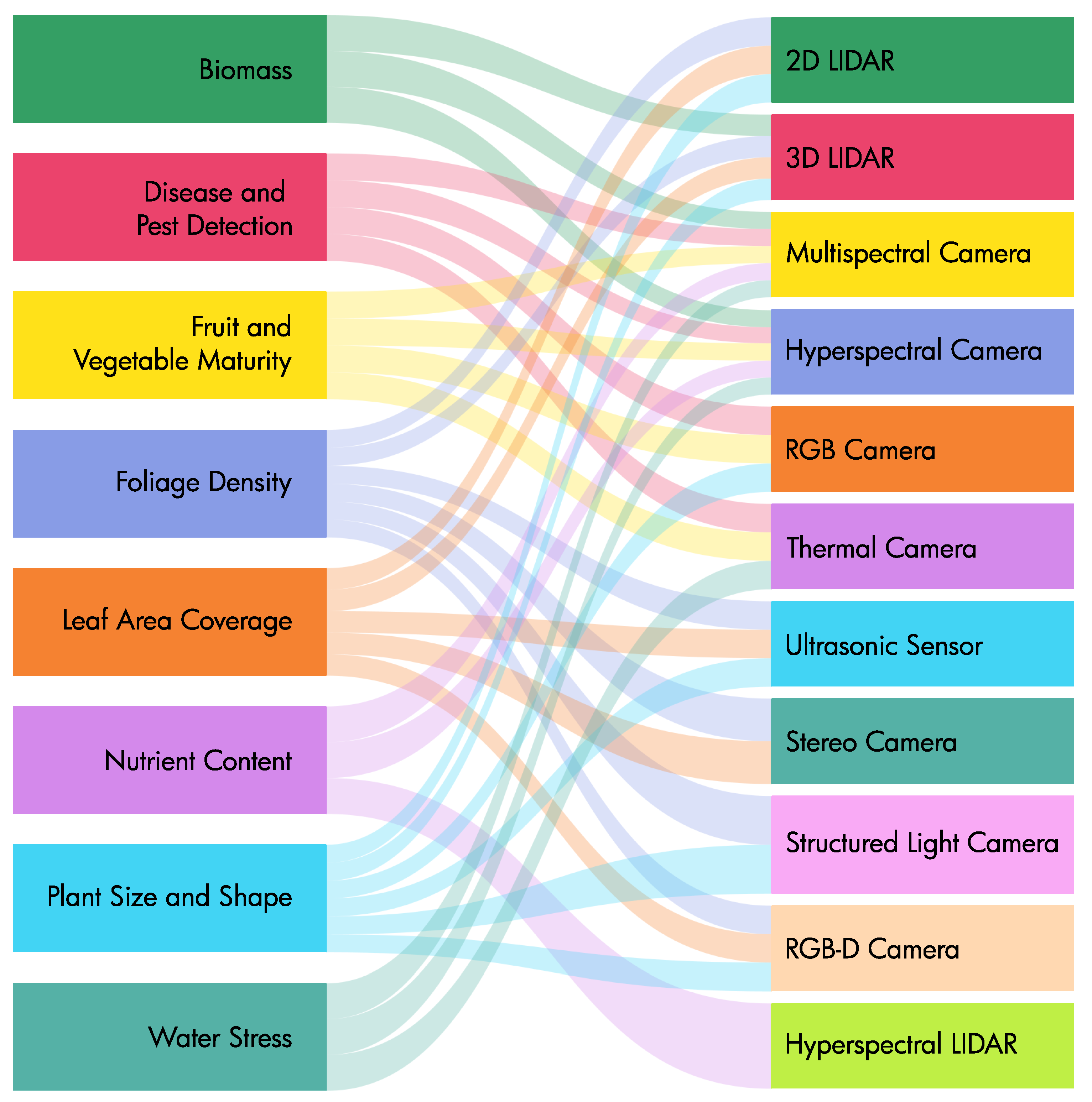

- Structural characterisation: Estimating characteristics such as canopy volume, plant height, leaf area coverage, and biomass allows farmers to make better decisions. Several researchers [40,41] used canopy volume data to improve pesticide and fertiliser spraying on fruit trees, reducing input use and environmental costs. Mora et al. [42] used leaf area coverage for crop-growth monitoring and production prediction, since it reflects various physiological processes in plants. Biomass mapping and monitoring also enable the identification of changes in plantation conditions due to storms, drought, or diseases [43,44]. Finally, because bio-energy from certain crops has become one of the most extensively used energy sources, Kankare et al. [45] used crop biomass as a productivity criterion.

- Plant/Fruit detection: Automated actions such as pruning, harvesting, and sowing are effective only if objects of interest in the environment are detected precisely. To this end, researchers have used a variety of plant and fruit features, including colour, shape, and temperature. In robotic fruit picking and crop harvesting, colour may be used to identify the fruit inside the canopy [46,47] or in the agricultural field [48]. According to Karkee et al. [49], in most cases the morphology of the stems is the property that provides the cutting directions for automated robotic pruning.

- Physiology assessment: The canopy’s interaction with sunlight produces different spectral patterns that reveal information about the plant’s physiological health. As a result, a number of indices [50,51] based on crop spectral responses have been developed to assess parameters such as nitrogen deficiency, chlorophyll content, water stress, and insect infestation. In addition, numerous sensing tools (for example, infrared gas analysers) enable the direct assessment of several physiological characteristics in plants. Many of them need direct contact with the crop, which yields more exact readings; however, each measurement must be taken individually, which makes this technique time-consuming in most cases [52].
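As an illustration of an index built on crop spectral responses, the sketch below computes the Normalised Difference Vegetation Index, NDVI = (NIR - Red) / (NIR + Red). NDVI is a widely used index chosen here as an example; it is not named in the text above, and the reflectance values, the helper names, and the 0.4 threshold are hypothetical assumptions rather than values from the cited studies.

```python
def ndvi(nir, red):
    """NDVI for one pixel; returns 0.0 when both reflectances are zero."""
    total = nir + red
    if total == 0:
        return 0.0
    return (nir - red) / total


def ndvi_map(nir_band, red_band):
    """Apply NDVI pixel by pixel to two equally sized 2D reflectance grids."""
    return [
        [ndvi(n, r) for n, r in zip(nir_row, red_row)]
        for nir_row, red_row in zip(nir_band, red_band)
    ]


def low_vigour_cells(index_map, threshold):
    """Return (row, column) positions whose index falls below the threshold."""
    return [
        (i, j)
        for i, row in enumerate(index_map)
        for j, value in enumerate(row)
        if value < threshold
    ]


# Made-up near-infrared and red reflectances for a 2 x 2 patch.
nir_band = [[0.50, 0.45], [0.30, 0.60]]
red_band = [[0.10, 0.15], [0.25, 0.08]]

index_map = ndvi_map(nir_band, red_band)
# The 0.4 cut-off is an illustrative assumption, not an agronomic rule.
stressed = low_vigour_cells(index_map, threshold=0.4)
print(stressed)
```

In practice, the threshold would need calibration for the crop, growth stage, and sensor in use.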

3.1.1. Vision-Based Sensors

3.1.2. Range Sensors

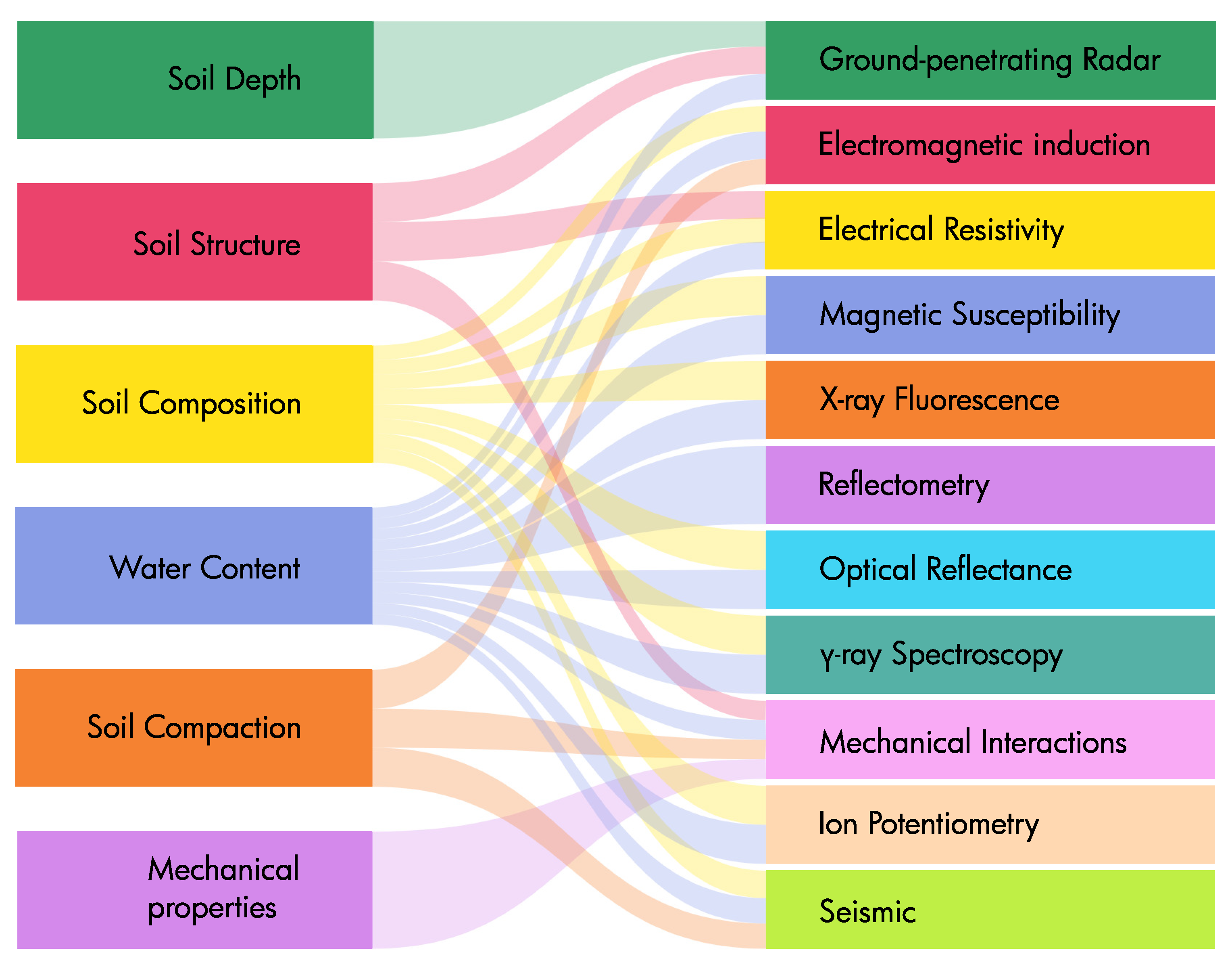

3.2. Proximal Sensing

4. Robotics and Agriculture

- The cost of employing robots is lower than the cost of employing any other method.

- Using robots in agriculture improves agricultural production capacities, yields, profits, and survival while also boosting product quality and uniformity.

- The use of robots in growth and production processes minimises uncertainty and volatility.

- In comparison to the conventional method, the introduction of robots allows the farmer to make higher-resolution judgements and/or increase the quality of the output, allowing for growth and production phase optimisation.

- The robot is capable of doing tasks that are either dangerous or impossible to execute manually.

4.1. Flying Drones

4.2. Land-Based Robots

4.2.1. Agricultural Robot Main Functions Taxonomy

4.2.2. Agricultural Robots and Vineyards

4.2.3. Agricultural Robots Classified by Size

4.2.4. Agricultural Robots by Mobility Layout Configurations

5. Collaboration of Multiple Robots in Precision Agriculture

6. Case Study: Agri.Q

7. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Sylvester, G. E-Agriculture in Action: Drones for Agriculture; FAO: Bangkok, Thailand, 2018. [Google Scholar]

- Crist, E.; Mora, C.; Engelman, R. The interaction of human population, food production, and biodiversity protection. Science 2017, 356, 260–264. [Google Scholar] [CrossRef]

- Ramankutty, N.; Mehrabi, Z.; Waha, K.; Jarvis, L.; Kremen, C.; Herrero, M.; Rieseberg, L.H. Trends in Global Agricultural Land Use: Implications for Environmental Health and Food Security. Annu. Rev. Plant Biol. 2018, 69, 789–815. [Google Scholar] [CrossRef]

- Srinivasan, A. Handbook of Precision Agriculture: Principles and Applications; CRC Press: Boca Raton, FL, USA, 2006. [Google Scholar] [CrossRef]

- Corti, M.; Marino Gallina, P.; Cavalli, D.; Ortuani, B.; Cabassi, G.; Cola, G.; Vigoni, A.; Degano, L.; Bregaglio, S. Evaluation of In-Season Management Zones from High-Resolution Soil and Plant Sensors. Agronomy 2020, 10, 1124. [Google Scholar] [CrossRef]

- Griffin, T.W.; Yeager, E.A. Adoption of precision agriculture technology: A duration analysis. In Proceedings of the 14th International Conference on Precision Agriculture; International Society of Precision Agriculture: Monticello, IL, USA, 2018. [Google Scholar]

- Barrientos, A.; Colorado, J.; Cerro, J.d.; Martinez, A.; Rossi, C.; Sanz, D.; Valente, J. Aerial remote sensing in agriculture: A practical approach to area coverage and path planning for fleets of mini aerial robots. J. Field Robot. 2011, 28, 667–689. [Google Scholar] [CrossRef]

- Beloev, I.; Kinaneva, D.; Georgiev, G.; Hristov, G.; Zahariev, P. Artificial Intelligence-Driven Autonomous Robot for Precision Agriculture. Acta Technol. Agric. 2021, 24, 48–54. [Google Scholar] [CrossRef]

- Pierce, F.J.; Nowak, P. Aspects of Precision Agriculture. In Advances in Agronomy; Academic Press: Cambridge, MA, USA, 1999; Volume 67, pp. 1–85. [Google Scholar] [CrossRef]

- Zhang, N.; Wang, M.; Wang, N. Precision agriculture—A worldwide overview. Comput. Electron. Agric. 2002, 36, 113–132. [Google Scholar] [CrossRef]

- Davoodi, M.; Mohammadpour Velni, J.; Li, C. Coverage control with multiple ground robots for precision agriculture. Mech. Eng. 2018, 140, S4–S8. [Google Scholar] [CrossRef]

- Tokekar, P.; Hook, J.V.; Mulla, D.; Isler, V. Sensor Planning for a Symbiotic UAV and UGV System for Precision Agriculture. IEEE Trans. Robot. 2016, 32, 1498–1511. [Google Scholar] [CrossRef]

- Lowenberg-DeBoer, J.; Huang, I.Y.; Grigoriadis, V.; Blackmore, S. Economics of robots and automation in field crop production. Precis. Agric. 2020, 21, 278–299. [Google Scholar] [CrossRef]

- Vecchio, Y.; Agnusdei, G.P.; Miglietta, P.P.; Capitanio, F. Adoption of precision farming tools: The case of Italian farmers. Int. J. Environ. Res. Public Health 2020, 17, 869. [Google Scholar] [CrossRef]

- Batte, M.T.; Arnholt, M.W. Precision farming adoption and use in Ohio: Case studies of six leading-edge adopters. Comput. Electron. Agric. 2003, 38, 125–139. [Google Scholar] [CrossRef]

- Pierce, F.J.; Elliott, T.V. Regional and on-farm wireless sensor networks for agricultural systems in Eastern Washington. Comput. Electron. Agric. 2007, 61, 32–43. [Google Scholar] [CrossRef]

- Swinton, S.M.; Lowenberg-DeBoer, J. Evaluating the Profitability of Site-Specific Farming. J. Prod. Agric. 1998, 11, 439–446. [Google Scholar] [CrossRef]

- Fountas, S.; Blackmore, S.; Ess, D.; Hawkins, S.; Blumhoff, G.; Lowenberg-Deboer, J.; Sorensen, C.G. Farmer Experience with Precision Agriculture in Denmark and the US Eastern Corn Belt. Precis. Agric. 2005, 6, 121–141. [Google Scholar] [CrossRef]

- Ellis, K.; Baugher, T.A.; Lewis, K. Results from Survey Instruments Used to Assess Technology Adoption for Tree Fruit Production. HortTechnology 2010, 20, 1043–1048. [Google Scholar] [CrossRef]

- Lamb, D.W.; Frazier, P.; Adams, P. Improving pathways to adoption: Putting the right P’s in precision agriculture. Comput. Electron. Agric. 2008, 61, 4–9. [Google Scholar] [CrossRef]

- United Nations. Sustainable Development Goals. 2016. Available online: https://www.un.org/development/desa/publications/sustainable-development-goals-report-2016.html (accessed on 13 April 2022).

- Gil, J.D.B.; Reidsma, P.; Giller, K.; Todman, L.; Whitmore, A.; van Ittersum, M. Sustainable development goal 2: Improved targets and indicators for agriculture and food security. Ambio 2019, 48, 685–698. [Google Scholar] [CrossRef]

- Vecchio, Y.; De Rosa, M.; Adinolfi, F.; Bartoli, L.; Masi, M. Adoption of precision farming tools: A context-related analysis. Land Use Policy 2020, 94, 104481. [Google Scholar] [CrossRef]

- United Nations. SDG 2: Zero Hunger. 2016. Available online: https://www.un.org/sustainabledevelopment/hunger/ (accessed on 13 April 2022).

- United Nations. SDG 6: Clean Water and Sanitation. 2016. Available online: https://ec.europa.eu/eurostat/statistics-explained/index.php?title=SDG_6_-_Clean_water_and_sanitation (accessed on 13 April 2022).

- United Nations. SDG 12: Responsible Consumption and Production. 2016. Available online: https://ec.europa.eu/eurostat/statistics-explained/index.php?title=SDG_12_-_Responsible_consumption_and_production_(statistical_annex)&oldid=363908 (accessed on 13 April 2022).

- United Nations. SDG 13: Climate Action. 2016. Available online: https://www.un.org/sustainabledevelopment/climate-action/ (accessed on 13 April 2022).

- United Nations. SDG 15: Life on Land. 2016. Available online: https://ec.europa.eu/eurostat/statistics-explained/index.php?title=SDG_15_-_Life_on_land (accessed on 13 April 2022).

- Long, D.S.; Carlson, G.R.; DeGloria, S.D. Quality of Field Management Maps. In Site-Specific Management for Agricultural Systems; John Wiley & Sons, Ltd.: Hoboken, NJ, USA, 1995; pp. 251–271. [Google Scholar] [CrossRef]

- Nawar, S.; Corstanje, R.; Halcro, G.; Mulla, D.; Mouazen, A.M. Chapter Four—Delineation of Soil Management Zones for Variable-Rate Fertilization: A Review. In Advances in Agronomy; Academic Press: Cambridge, MA, USA, 2017; Volume 143, pp. 175–245. [Google Scholar] [CrossRef]

- Auat Cheein, F.A.; Carelli, R. Agricultural Robotics: Unmanned Robotic Service Units in Agricultural Tasks. IEEE Ind. Electron. Mag. 2013, 7, 48–58. [Google Scholar] [CrossRef]

- Maes, W.H.; Steppe, K. Perspectives for Remote Sensing with Unmanned Aerial Vehicles in Precision Agriculture. Trends Plant Sci. 2019, 24, 152–164. [Google Scholar] [CrossRef]

- Radoglou-Grammatikis, P.; Sarigiannidis, P.; Lagkas, T.; Moscholios, I. A compilation of UAV applications for precision agriculture. Comput. Netw. 2020, 172, 107148. [Google Scholar] [CrossRef]

- Rosell, J.R.; Sanz, R. A review of methods and applications of the geometric characterization of tree crops in agricultural activities. Comput. Electron. Agric. 2012, 81, 124–141. [Google Scholar] [CrossRef]

- Bac, C.W.; van Henten, E.J.; Hemming, J.; Edan, Y. Harvesting Robots for High-value Crops: State-of-the-art Review and Challenges Ahead. J. Field Robot. 2014, 31, 888–911. [Google Scholar] [CrossRef]

- Gongal, A.; Amatya, S.; Karkee, M.; Zhang, Q.; Lewis, K. Sensors and systems for fruit detection and localization: A review. Comput. Electron. Agric. 2015, 116, 8–19. [Google Scholar] [CrossRef]

- Vázquez-Arellano, M.; Griepentrog, H.W.; Reiser, D.; Paraforos, D.S. 3-D Imaging Systems for Agricultural Applications—A Review. Sensors 2016, 16, 618. [Google Scholar] [CrossRef]

- Yandun Narvaez, F.; Reina, G.; Torres-Torriti, M.; Kantor, G.; Cheein, F.A. A Survey of Ranging and Imaging Techniques for Precision Agriculture Phenotyping. IEEE/ASME Trans. Mechatronics 2017, 22, 2428–2439. [Google Scholar] [CrossRef]

- Tsouros, D.C.; Bibi, S.; Sarigiannidis, P.G. A review on UAV-based applications for precision agriculture. Information 2019, 10, 349. [Google Scholar] [CrossRef]

- Chen, Y.; Zhu, H.; Ozkan, H.E. Development of variable-rate sprayer with laser scanning sensor to synchronize spray outputs to tree structures. Trans. ASABE 2012, 55, 773–781. [Google Scholar] [CrossRef]

- Escolà, A.; Rosell-Polo, J.R.; Planas, S.; Gil, E.; Pomar, J.; Camp, F.; Llorens, J.; Solanelles, F. Variable rate sprayer. Part 1—Orchard prototype: Design, implementation and validation. Comput. Electron. Agric. 2013, 95, 122–135. [Google Scholar] [CrossRef]

- Mora, M.; Avila, F.; Carrasco-Benavides, M.; Maldonado, G.; Olguín-Cáceres, J.; Fuentes, S. Automated computation of leaf area index from fruit trees using improved image processing algorithms applied to canopy cover digital photograpies. Comput. Electron. Agric. 2016, 123, 195–202. [Google Scholar] [CrossRef]

- Eitel, J.U.H.; Magney, T.S.; Vierling, L.A.; Brown, T.T.; Huggins, D.R. LiDAR based biomass and crop nitrogen estimates for rapid, non-destructive assessment of wheat nitrogen status. Field Crop. Res. 2014, 159, 21–32. [Google Scholar] [CrossRef]

- Li, W.; Niu, Z.; Chen, H.; Li, D.; Wu, M.; Zhao, W. Remote estimation of canopy height and aboveground biomass of maize using high-resolution stereo images from a low-cost unmanned aerial vehicle system. Ecol. Indic. 2016, 67, 637–648. [Google Scholar] [CrossRef]

- Kankare, V.; Holopainen, M.; Vastaranta, M.; Puttonen, E.; Yu, X.; Hyyppä, J.; Vaaja, M.; Hyyppä, H.; Alho, P. Individual tree biomass estimation using terrestrial laser scanning. ISPRS J. Photogramm. Remote Sens. 2013, 75, 64–75. [Google Scholar] [CrossRef]

- Ji, W.; Zhao, D.; Cheng, F.; Xu, B.; Zhang, Y.; Wang, J. Automatic recognition vision system guided for apple harvesting robot. Comput. Electr. Eng. 2012, 38, 1186–1195. [Google Scholar] [CrossRef]

- Gongal, A.; Silwal, A.; Amatya, S.; Karkee, M.; Zhang, Q.; Lewis, K. Apple crop-load estimation with over-the-row machine vision system. Comput. Electron. Agric. 2016, 120, 26–35. [Google Scholar] [CrossRef]

- Foglia, M.M.; Reina, G. Agricultural robot for radicchio harvesting. J. Field Robot. 2006, 23, 363–377. [Google Scholar] [CrossRef]

- Karkee, M.; Adhikari, B.; Amatya, S.; Zhang, Q. Identification of pruning branches in tall spindle apple trees for automated pruning. Comput. Electron. Agric. 2014, 103, 127–135. [Google Scholar] [CrossRef]

- Jones, H.G.; Serraj, R.; Loveys, B.R.; Xiong, L.; Wheaton, A.; Price, A.H. Thermal infrared imaging of crop canopies for the remote diagnosis and quantification of plant responses to water stress in the field. Funct. Plant Biol. 2009, 36, 978–989. [Google Scholar] [CrossRef]

- Du, L.; Gong, W.; Shi, S.; Yang, J.; Sun, J.; Zhu, B.; Song, S. Estimation of rice leaf nitrogen contents based on hyperspectral LIDAR. Int. J. Appl. Earth Obs. Geoinf. 2016, 44, 136–143. [Google Scholar] [CrossRef]

- Weerakkody, W.A.P.; Suriyagoda, L.D.B. Estimation of leaf and canopy photosynthesis of pot chrysanthemum and its implication on intensive canopy management. Sci. Hortic. 2015, 192, 237–243. [Google Scholar] [CrossRef]

- Zhao, C.; Lee, W.S.; He, D. Immature green citrus detection based on colour feature and sum of absolute transformed difference (SATD) using colour images in the citrus grove. Comput. Electron. Agric. 2016, 124, 243–253. [Google Scholar] [CrossRef]

- Nuske, S.; Wilshusen, K.; Achar, S.; Yoder, L.; Narasimhan, S.; Singh, S. Automated Visual Yield Estimation in Vineyards. J. Field Robot. 2014, 31, 837–860. [Google Scholar] [CrossRef]

- Barbedo, J.G.A.; Koenigkan, L.V.; Santos, T.T. Identifying multiple plant diseases using digital image processing. Biosyst. Eng. 2016, 147, 104–116. [Google Scholar] [CrossRef]

- Nandi, C.S.; Tudu, B.; Koley, C. A Machine Vision-Based Maturity Prediction System for Sorting of Harvested Mangoes. IEEE Trans. Instrum. Meas. 2014, 63, 1722–1730. [Google Scholar] [CrossRef]

- Wang, Q.; Nuske, S.; Bergerman, M.; Singh, S. Automated Crop Yield Estimation for Apple Orchards. In Experimental Robotics: The 13th International Symposium on Experimental Robotics, Quebec, Canada, 18–21 June 2012; Springer Tracts in Advanced Robotics; Springer International Publishing: Berlin/Heidelberg, Germany, 2013; pp. 745–758. [Google Scholar] [CrossRef]

- Baluja, J.; Diago, M.P.; Balda, P.; Zorer, R.; Meggio, F.; Morales, F.; Tardaguila, J. Assessment of vineyard water status variability by thermal and multispectral imagery using an unmanned aerial vehicle (UAV). Irrig. Sci. 2012, 30, 511–522. [Google Scholar] [CrossRef]

- Wachs, J.P.; Stern, H.I.; Burks, T.; Alchanatis, V. Low and high-level visual feature-based apple detection from multi-modal images. Precis. Agric. 2010, 11, 717–735. [Google Scholar] [CrossRef]

- Reina, G.; Milella, A.; Rouveure, R.; Nielsen, M.; Worst, R.; Blas, M.R. Ambient awareness for agricultural robotic vehicles. Biosyst. Eng. 2016, 146, 114–132. [Google Scholar] [CrossRef]

- Li, D.; Xu, L.; Tan, C.; Goodman, E.D.; Fu, D.; Xin, L. Digitization and Visualization of Greenhouse Tomato Plants in Indoor Environments. Sensors 2015, 15, 4019–4051. [Google Scholar] [CrossRef]

- Chéné, Y.; Rousseau, D.; Lucidarme, P.; Bertheloot, J.; Caffier, V.; Morel, P. On the use of depth camera for 3D phenotyping of entire plants. Comput. Electron. Agric. 2012, 82, 122–127. [Google Scholar] [CrossRef]

- Wang, W.; Li, C. Size estimation of sweet onions using consumer-grade RGB-depth sensor. J. Food Eng. 2014, 142, 153–162. [Google Scholar] [CrossRef]

- Andújar, D.; Ribeiro, A.; Fernández-Quintanilla, C.; Dorado, J. Using depth cameras to extract structural parameters to assess the growth state and yield of cauliflower crops. Comput. Electron. Agric. 2016, 122, 67–73. [Google Scholar] [CrossRef]

- Mulla, D.J. Twenty five years of remote sensing in precision agriculture: Key advances and remaining knowledge gaps. Biosyst. Eng. 2013, 114, 358–371. [Google Scholar] [CrossRef]

- Hillnhütter, C.; Mahlein, A.K.; Sikora, R.A.; Oerke, E.C. Remote sensing to detect plant stress induced by Heterodera schachtii and Rhizoctonia solani in sugar beet fields. Field Crop. Res. 2011, 122, 70–77. [Google Scholar] [CrossRef]

- Zarco-Tejada, P.J.; González-Dugo, V.; Berni, J.A.J. Fluorescence, temperature and narrow-band indices acquired from a UAV platform for water stress detection using a micro-hyperspectral imager and a thermal camera. Remote Sens. Environ. 2012, 117, 322–337. [Google Scholar] [CrossRef]

- Torres-Sánchez, J.; López-Granados, F.; Castro, A.I.D.; Peña-Barragán, J.M. Configuration and Specifications of an Unmanned Aerial Vehicle (UAV) for Early Site Specific Weed Management. PLoS ONE 2013, 8, e58210. [Google Scholar] [CrossRef]

- Zaman-Allah, M.; Vergara, O.; Araus, J.L.; Tarekegne, A.; Magorokosho, C.; Zarco-Tejada, P.J.; Hornero, A.; Albà, A.H.; Das, B.; Craufurd, P.; et al. Unmanned aerial platform-based multi-spectral imaging for field phenotyping of maize. Plant Methods 2015, 11, 35. [Google Scholar] [CrossRef]

- AghaKouchak, A.; Farahmand, A.; Melton, F.S.; Teixeira, J.; Anderson, M.C.; Wardlow, B.D.; Hain, C.R. Remote sensing of drought: Progress, challenges and opportunities. Rev. Geophys. 2015, 53, 452–480. [Google Scholar] [CrossRef]

- Palleja, T.; Landers, A.J. Real time canopy density estimation using ultrasonic envelope signals in the orchard and vineyard. Comput. Electron. Agric. 2015, 115, 108–117. [Google Scholar] [CrossRef]

- Escolà, A.; Planas, S.; Rosell, J.R.; Pomar, J.; Camp, F.; Solanelles, F.; Gracia, F.; Llorens, J.; Gil, E. Performance of an Ultrasonic Ranging Sensor in Apple Tree Canopies. Sensors 2011, 11, 2459–2477. [Google Scholar] [CrossRef]

- Alenyà, G.; Dellen, B.; Torras, C. 3D modelling of leaves from color and ToF data for robotized plant measuring. In Proceedings of the 2011 IEEE International Conference on Robotics and Automation, Shanghai, China, 9–13 May 2011; pp. 3408–3414. [Google Scholar] [CrossRef]

- Chaivivatrakul, S.; Tang, L.; Dailey, M.N.; Nakarmi, A.D. Automatic morphological trait characterization for corn plants via 3D holographic reconstruction. Comput. Electron. Agric. 2014, 109, 109–123. [Google Scholar] [CrossRef]

- Kazmi, W.; Foix, S.; Alenyà, G. Plant leaf imaging using time of flight camera under sunlight, shadow and room conditions. In Proceedings of the 2012 IEEE International Symposium on Robotic and Sensors Environments Proceedings, Magdeburg, Germany, 16–18 November 2012; pp. 192–197. [Google Scholar] [CrossRef]

- Vitzrabin, E.; Edan, Y. Adaptive thresholding with fusion using a RGBD sensor for red sweet-pepper detection. Biosyst. Eng. 2016, 146, 45–56. [Google Scholar] [CrossRef]

- Elfiky, N.M.; Akbar, S.A.; Sun, J.; Park, J.; Kak, A. Automation of Dormant Pruning in Specialty Crop Production: An Adaptive Framework for Automatic Reconstruction and Modeling of Apple Trees. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition Workshops, Boston, MA, USA, 7–12 June 2015; pp. 65–73. [Google Scholar]

- Sanz-Cortiella, R.; Llorens-Calveras, J.; Escolà, A.; Arnó-Satorra, J.; Ribes-Dasi, M.; Masip-Vilalta, J.; Camp, F.; Gràcia-Aguilá, F.; Solanelles-Batlle, F.; Planas-DeMartí, S.; et al. Innovative LIDAR 3D Dynamic Measurement System to Estimate Fruit-Tree Leaf Area. Sensors 2011, 11, 5769–5791. [Google Scholar] [CrossRef] [PubMed]

- Pueschel, P.; Newnham, G.; Hill, J. Retrieval of Gap Fraction and Effective Plant Area Index from Phase-Shift Terrestrial Laser Scans. Remote Sens. 2014, 6, 2601–2627. [Google Scholar] [CrossRef]

- Koenig, K.; Höfle, B.; Hämmerle, M.; Jarmer, T.; Siegmann, B.; Lilienthal, H. Comparative classification analysis of post-harvest growth detection from terrestrial LiDAR point clouds in precision agriculture. ISPRS J. Photogramm. Remote Sens. 2015, 104, 112–125. [Google Scholar] [CrossRef]

- Fieber, K.D.; Davenport, I.J.; Ferryman, J.M.; Gurney, R.J.; Walker, J.P.; Hacker, J.M. Analysis of full-waveform LiDAR data for classification of an orange orchard scene. ISPRS J. Photogramm. Remote Sens. 2013, 82, 63–82. [Google Scholar] [CrossRef]

- Allouis, T.; Durrieu, S.; Véga, C.; Couteron, P. Stem Volume and Above-Ground Biomass Estimation of Individual Pine Trees From LiDAR Data: Contribution of Full-Waveform Signals. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2013, 6, 924–934. [Google Scholar] [CrossRef]

- Auat Cheein, F.A.; Guivant, J.; Sanz, R.; Escolà, A.; Yandún, F.; Torres-Torriti, M.; Rosell-Polo, J.R. Real-time approaches for characterization of fully and partially scanned canopies in groves. Comput. Electron. Agric. 2015, 118, 361–371. [Google Scholar] [CrossRef]

- Lin, Y. LiDAR: An important tool for next-generation phenotyping technology of high potential for plant phenomics? Comput. Electron. Agric. 2015, 119, 61–73. [Google Scholar] [CrossRef]

- Livny, Y.; Yan, F.; Olson, M.; Chen, B.; Zhang, H.; El-Sana, J. Automatic reconstruction of tree skeletal structures from point clouds. In Proceedings of the ACM SIGGRAPH Asia 2010, Seoul, Korea, 15–18 December 2010; Association for Computing Machinery: New York, NY, USA, 2010. SIGGRAPH ASIA’10. pp. 1–8. [Google Scholar] [CrossRef]

- Rossel, R.V.; Adamchuk, V.; Sudduth, K.; McKenzie, N.; Lobsey, C. Proximal soil sensing: An effective approach for soil measurements in space and time. Adv. Agron. 2011, 113, 243–291. [Google Scholar]

- Allred, B.; Daniels, J.J.; Ehsani, M.R. Handbook of Agricultural Geophysics; CRC Press: Boca Raton, FL, USA, 2008. [Google Scholar]

- Zajícová, K.; Chuman, T. Application of ground penetrating radar methods in soil studies: A review. Geoderma 2019, 343, 116–129. [Google Scholar] [CrossRef]

- Klotzsche, A.; Jonard, F.; Looms, M.C.; van der Kruk, J.; Huisman, J.A. Measuring soil water content with ground penetrating radar: A decade of progress. Vadose Zone J. 2018, 17, 1–9. [Google Scholar] [CrossRef]

- Doolittle, J.A.; Brevik, E.C. The use of electromagnetic induction techniques in soils studies. Geoderma 2014, 223, 33–45. [Google Scholar] [CrossRef]

- Samouëlian, A.; Cousin, I.; Tabbagh, A.; Bruand, A.; Richard, G. Electrical resistivity survey in soil science: A review. Soil Tillage Res. 2005, 83, 173–193. [Google Scholar] [CrossRef]

- Adamchuk, V.; Allred, B.; Doolittle, J.; Grote, K.; Rossel, R.; Ditzler, C.; West, L. Tools for proximal soil sensing. In Soil Survey Manual. Natural Resources Conservation Service. US Department of Agriculture Handbook; USDA: Washington, DC, USA, 2015. [Google Scholar]

- Nof, S.Y. Springer Handbook of Automation; Springer: Berlin/Heidelberg, Germany, 2009. [Google Scholar]

- Zhang, Q. Opportunity of robotics in specialty crop production. IFAC Proc. Vol. 2013, 46, 38–39. [Google Scholar] [CrossRef]

- Schueller, J.K. CIGR handbook of agricultural engineering. Inf. Technol. 2006, 5, 330. [Google Scholar]

- Nagasaka, Y.; Umeda, N.; Kanetai, Y.; Taniwaki, K.; Sasaki, Y. Autonomous guidance for rice transplanting using global positioning and gyroscopes. Comput. Electron. Agric. 2004, 43, 223–234. [Google Scholar] [CrossRef]

- Xia, C.; Wang, L.; Chung, B.K.; Lee, J.M. In Situ 3D Segmentation of Individual Plant Leaves Using a RGB-D Camera for Agricultural Automation. Sensors 2015, 15, 20463–20479. [Google Scholar] [CrossRef]

- Blackmore, S. Towards robotic agriculture. In Proceedings of the Autonomous Air and Ground Sensing Systems for Agricultural Optimization and Phenotyping, Baltimore, MD, USA, 18–19 April 2016; Volume 9866, pp. 8–15. [Google Scholar] [CrossRef]

- Cariou, C.; Lenain, R.; Thuilot, B.; Berducat, M. Automatic guidance of a four-wheel-steering mobile robot for accurate field operations. J. Field Robot. 2009, 26, 504–518. [Google Scholar] [CrossRef]

- Bergerman, M.; Billingsley, J.; Reid, J.; van Henten, E. Robotics in Agriculture and Forestry. In Springer Handbook of Robotics; Springer Handbooks; Springer International Publishing: Cham, Switzerland, 2016; pp. 1463–1492. [Google Scholar] [CrossRef]

- Tremblay, N.; Fallon, E.; Ziadi, N. Sensing of Crop Nitrogen Status: Opportunities, Tools, Limitations, and Supporting Information Requirements. HortTechnology 2011, 21, 274–281. [Google Scholar] [CrossRef]

- Tremblay, N.; Bouroubi, Y.M.; Bélec, C.; Mullen, R.W.; Kitchen, N.R.; Thomason, W.E.; Ebelhar, S.; Mengel, D.B.; Raun, W.R.; Francis, D.D.; et al. Corn Response to Nitrogen is Influenced by Soil Texture and Weather. Agron. J. 2012, 104, 1658–1671. [Google Scholar] [CrossRef]

- Gonzalez-de Soto, M.; Emmi, L.; Garcia, I.; Gonzalez-de Santos, P. Reducing fuel consumption in weed and pest control using robotic tractors. Comput. Electron. Agric. 2015, 114, 96–113. [Google Scholar] [CrossRef]

- Gonzalez-de Soto, M.; Emmi, L.; Benavides, C.; Garcia, I.; Gonzalez-de Santos, P. Reducing air pollution with hybrid-powered robotic tractors for precision agriculture. Biosyst. Eng. 2016, 143, 79–94. [Google Scholar] [CrossRef]

- Wilson, J.N. Guidance of agricultural vehicles—A historical perspective. Comput. Electron. Agric. 2000, 25, 3–9. [Google Scholar] [CrossRef]

- Choi, K.H.; Han, S.K.; Han, S.H.; Park, K.H.; Kim, K.S.; Kim, S. Morphology-based guidance line extraction for an autonomous weeding robot in paddy fields. Comput. Electron. Agric. 2015, 113, 266–274. [Google Scholar] [CrossRef]

- Conesa-Muñoz, J.; Gonzalez-de Soto, M.; Gonzalez-de Santos, P.; Ribeiro, A. Distributed Multi-Level Supervision to Effectively Monitor the Operations of a Fleet of Autonomous Vehicles in Agricultural Tasks. Sensors 2015, 15, 5402–5428. [Google Scholar] [CrossRef]

- Burks, T.; Villegas, F.; Hannan, M.; Flood, S.; Sivaraman, B.; Subramanian, V.; Sikes, J. Engineering and Horticultural Aspects of Robotic Fruit Harvesting: Opportunities and Constraints. HortTechnology 2005, 15, 79–87. [Google Scholar] [CrossRef]

- Xiang, R.; Jiang, H.; Ying, Y. Recognition of clustered tomatoes based on binocular stereo vision. Comput. Electron. Agric. 2014, 106, 75–90. [Google Scholar] [CrossRef]

- Billingsley, J.; Visala, A.; Dunn, M. Robotics in Agriculture and Forestry. In Springer Handbook of Robotics; Springer: Berlin/Heidelberg, Germany, 2008; pp. 1065–1077. [Google Scholar] [CrossRef]

- Bechar, A.; Vigneault, C. Agricultural robots for field operations: Concepts and components. Biosyst. Eng. 2016, 149, 94–111. [Google Scholar] [CrossRef]

- Bechar, A.; Vigneault, C. Agricultural robots for field operations. Part 2: Operations and systems. Biosyst. Eng. 2017, 153, 110–128. [Google Scholar] [CrossRef]

- Ortuani, B.; Sona, G.; Ronchetti, G.; Mayer, A.; Facchi, A. Integrating geophysical and multispectral data to delineate homogeneous management zones within a vineyard in Northern Italy. Sensors 2019, 19, 3974. [Google Scholar] [CrossRef]

- Belcore, E.; Angeli, S.; Colucci, E.; Musci, M.A.; Aicardi, I. Precision Agriculture Workflow, from Data Collection to Data Management Using FOSS Tools: An Application in Northern Italy Vineyard. ISPRS Int. J. Geo Inf. 2021, 10, 236. [Google Scholar] [CrossRef]

- Schor, N.; Bechar, A.; Ignat, T.; Dombrovsky, A.; Elad, Y.; Berman, S. Robotic Disease Detection in Greenhouses: Combined Detection of Powdery Mildew and Tomato Spotted Wilt Virus. IEEE Robot. Autom. Lett. 2016, 1, 354–360. [Google Scholar] [CrossRef]

- Ceres, R.; Pons, J.; Jiménez, A.; Martín, J.; Calderón, L. Design and implementation of an aided fruit-harvesting robot (Agribot). Ind. Robot 1998, 25, 337–346. [Google Scholar] [CrossRef]

- Nguyen, T.T.; Kayacan, E.; De Baerdemaeker, J.; Saeys, W. Task and Motion Planning for Apple Harvesting Robot. IFAC Proc. Vol. 2013, 46, 247–252. [Google Scholar] [CrossRef]

- Hellström, T.; Ringdahl, O. A software framework for agricultural and forestry robots. Ind. Robot. 2013, 40, 20–26. [Google Scholar] [CrossRef][Green Version]

- Tazzari, R.; Mengoli, D.; Marconi, L. Design Concept and Modelling of a Tracked UGV for Orchard Precision Agriculture. In Proceedings of the 2020 IEEE International Workshop on Metrology for Agriculture and Forestry (MetroAgriFor), Online, 4–6 November 2020; pp. 207–212. [Google Scholar] [CrossRef]

- Cuesta, F.; Gomez-Bravo, F.; Ollero, A. Parking maneuvers of industrial-like electrical vehicles with and without trailer. IEEE Trans. Ind. Electron. 2004, 51, 257–269. [Google Scholar] [CrossRef]

- Low, C.B.; Wang, D. GPS-Based Path Following Control for a Car-Like Wheeled Mobile Robot with Skidding and Slipping. IEEE Trans. Control. Syst. Technol. 2008, 16, 340–347. [Google Scholar] [CrossRef]

- Underwood, J.; Hung, C.; Whelan, B.; Sukkarieh, S. Mapping almond orchard canopy volume, flowers, fruit and yield using lidar and vision sensors. Comput. Electron. Agric. 2016, 130, 83–96. [Google Scholar] [CrossRef]

- Mueller-Sim, T.; Jenkins, M.; Abel, J.; Kantor, G. The Robotanist: A ground-based agricultural robot for high-throughput crop phenotyping. In Proceedings of the 2017 IEEE International Conference on Robotics and Automation (ICRA), Singapore, 29 May–3 June 2017; pp. 3634–3639. [Google Scholar] [CrossRef]

- Virlet, N.; Sabermanesh, K.; Sadeghi-Tehran, P.; Hawkesford, M. Field Scanalyzer: An automated robotic field phenotyping platform for detailed crop monitoring. Funct. Plant Biol. 2017, 44, 143–153. [Google Scholar] [CrossRef]

- Cubero, S.; Marco-Noales, E.; Aleixos, N.; Barbé, S.; Blasco, J. Robhortic: A field robot to detect pests and diseases in horticultural crops by proximal sensing. Agriculture 2020, 10, 276. [Google Scholar] [CrossRef]

- Rey, B.; Aleixos, N.; Cubero, S.; Blasco, J. XF-ROVIM. A field robot to detect olive trees infected by Xylella fastidiosa using proximal sensing. Remote Sens. 2019, 11, 221. [Google Scholar] [CrossRef]

- Barbosa, W.S.; Oliveira, A.I.S.; Barbosa, G.B.P.; Leite, A.C.; Figueiredo, K.T.; Vellasco, M.M.B.R.; Caarls, W. Design and Development of an Autonomous Mobile Robot for Inspection of Soy and Cotton Crops. In Proceedings of the 2019 12th International Conference on Developments in eSystems Engineering (DeSE), Kazan, Russia, 7–10 October 2019; pp. 557–562. [Google Scholar] [CrossRef]

- Menendez-Aponte, P.; Kong, X.; Xu, Y. An approximated, control affine model for a strawberry field scouting robot considering wheel-terrain interaction. Robotica 2019, 37, 1545–1561. [Google Scholar] [CrossRef]

- Bietresato, M.; Carabin, G.; Vidoni, R.; Gasparetto, A.; Mazzetto, F. Evaluation of a LiDAR-based 3D-stereoscopic vision system for crop-monitoring applications. Comput. Electron. Agric. 2016, 124, 1–13. [Google Scholar] [CrossRef]

- Bietresato, M.; Carabin, G.; D’Auria, D.; Gallo, R.; Ristorto, G.; Mazzetto, F.; Vidoni, R.; Gasparetto, A.; Scalera, L. A tracked mobile robotic lab for monitoring the plants volume and health. In Proceedings of the 2016 12th IEEE/ASME International Conference on Mechatronic and Embedded Systems and Applications (MESA), Auckland, New Zealand, 29–31 August 2016; pp. 1–6. [Google Scholar] [CrossRef]

- Ristorto, G.; Gallo, R.; Gasparetto, A.; Scalera, L.; Vidoni, R.; Mazzetto, F. A Mobile Laboratory for Orchard Health Status Monitoring in Precision Farming. Chem. Eng. Trans. 2017, 58, 661–666. [Google Scholar] [CrossRef]

- Vidoni, R.; Gallo, R.; Ristorto, G.; Carabin, G.; Mazzetto, F.; Scalera, L.; Gasparetto, A. ByeLab: An agricultural mobile robot prototype for proximal sensing and precision farming. In Proceedings of the ASME International Mechanical Engineering Congress and Exposition, Tampa, FL, USA, 3–9 November 2017; American Society of Mechanical Engineers: New York, NY, USA, 2017; Volume 58370, p. V04AT05A057. [Google Scholar]

- EarthSense. Terra Sentia by EarthSense. 2019. Available online: https://researchpark.illinois.edu/article/earthsense-terrasentia-featured-in-successful-farming/ (accessed on 13 April 2022).

- Small Robot Company. Tom Robot by Small Robot Company. 2021. Available online: https://www.smallrobotcompany.com (accessed on 13 April 2022).

- Bakker, T.; Asselt, K.; Bontsema, J.; Müller, J.; Straten, G. Systematic design of an autonomous platform for robotic weeding. J. Terramech. 2010, 47, 63–73. [Google Scholar] [CrossRef]

- Bawden, O.; Kulk, J.; Russell, R.; McCool, C.; English, A.; Dayoub, F.; Lehnert, C.; Perez, T. Robot for weed species plant-specific management. J. Field Robot. 2017, 34, 1179–1199. [Google Scholar] [CrossRef]

- Utstumo, T.; Urdal, F.; Brevik, A.; Dørum, J.; Netland, J.; Overskeid, O.; Berge, T.; Gravdahl, J. Robotic in-row weed control in vegetables. Comput. Electron. Agric. 2018, 154, 36–45. [Google Scholar] [CrossRef]

- Naio Technologies. Autonomous Oz Weeding Robot. 2016. Available online: https://www.naio-technologies.com/en/oz/ (accessed on 13 April 2022).

- Naio Technologies. DINO Vegetable Weeding Robot for Large-Scale Vegetable Farms. 2016. Available online: https://www.naio-technologies.com/en/dino/ (accessed on 13 April 2022).

- Naio Technologies. TED, the Vineyard Weeding Robot. 2016. Available online: https://www.naio-technologies.com/en/ted/ (accessed on 13 April 2022).

- CARRÉ. ANATIS by CARRÉ. 2019. Available online: https://www.carre.fr/entretien-des-cultures-et-prairies/anatis/?lang=en (accessed on 13 April 2022).

- Ecorobotix. AVO The Autonomous Robot Weeder from Ecorobotix. 2020. Available online: https://platform.innoseta.eu/product/400 (accessed on 13 April 2022).

- Haibo, L.; Shuliang, D.; Zunmin, L.; Chuijie, Y. Study and Experiment on a Wheat Precision Seeding Robot. J. Robot. 2015, 2015, 696301. [Google Scholar] [CrossRef]

- Ruangurai, P.; Ekpanyapong, M.; Pruetong, C.; Watewai, T. Automated three-wheel rice seeding robot operating in dry paddy fields. Maejo Int. J. Sci. Technol. 2015, 9, 403–412. [Google Scholar]

- Hassan, M.U.; Ullah, M.; Iqbal, J. Towards autonomy in agriculture: Design and prototyping of a robotic vehicle with seed selector. In Proceedings of the 2016 2nd International Conference on Robotics and Artificial Intelligence (ICRAI), Islamabad, Pakistan, 20–22 April 2016; pp. 37–44. [Google Scholar] [CrossRef]

- Srinivasan, N.; Prabhu, P.; Smruthi, S.S.; Sivaraman, N.V.; Gladwin, S.J.; Rajavel, R.; Natarajan, A.R. Design of an autonomous seed planting robot. In Proceedings of the 2016 IEEE Region 10 Humanitarian Technology Conference (R10-HTC), Agra, India, 21–23 December 2016; pp. 1–4. [Google Scholar] [CrossRef]

- Jayakrishna, P.V.S.; Reddy, M.S.; Sai, N.J.; Susheel, N.; Peeyush, K.P. Autonomous Seed Sowing Agricultural Robot. In Proceedings of the 2018 International Conference on Advances in Computing, Communications and Informatics (ICACCI), Bangalore, India, 19–22 September 2018; pp. 2332–2336. [Google Scholar] [CrossRef]

- Ali, A.A.; Zohaib, M.; Mehdi, S.A. An Autonomous Seeder for Maize Crop. In Proceedings of the 2019 5th International Conference on Robotics and Artificial Intelligence, Singapore, 22–24 November 2019; Association for Computing Machinery: New York, NY, USA, 2019. ICRAI’19. pp. 42–47. [Google Scholar] [CrossRef]

- Pramod, A.S.; Jithinmon, T.V. Development of mobile dual PR arm agricultural robot. J. Phys. Conf. Ser. 2019, 1240, 012034. [Google Scholar] [CrossRef]

- Jose, C.M.; Sudheer, A.P.; Narayanan, M.D. Modelling and Analysis of Seeding Robot for Row Crops. In Proceedings of the Innovative Product Design and Intelligent Manufacturing Systems, Rourkela, India, 2–3 December 2020; Lecture Notes in Mechanical Engineering. Springer: Singapore, 2020; pp. 1003–1014. [Google Scholar] [CrossRef]

- Azmi, H.N.; Hajjaj, S.S.H.; Gsangaya, K.R.; Sultan, M.T.H.; Mail, M.F.; Hua, L.S. Design and fabrication of an agricultural robot for crop seeding. Mater. Today Proc. 2021. [Google Scholar] [CrossRef]

- Kumar, P.; Ashok, G. Design and fabrication of smart seed sowing robot. Mater. Today Proc. 2021, 39, 354–358. [Google Scholar] [CrossRef]

- Mohammed, I.I.; Jassim, A.R.A.L. Design and Testing of an Agricultural Robot to Operate a Combined Seeding Machine. Ann. Rom. Soc. Cell Biol. 2021, 25, 92–106. [Google Scholar]

- Li, S.; Li, S.; Jin, L. The Design and Physical Implementation of Seeding Robots in Deserts. In Proceedings of the 2020 39th Chinese Control Conference (CCC), Online, 27–29 July 2020; pp. 3892–3897. [Google Scholar] [CrossRef]

- Iqbal, M.Z.; Islam, M.N.; Ali, M.; Kabir, M.S.N.; Park, T.; Kang, T.G.; Park, K.S.; Chung, S.O. Kinematic analysis of a hopper-type dibbling mechanism for a 2.6 kW two-row pepper transplanter. J. Mech. Sci. Technol. 2021, 35, 2605–2614. [Google Scholar] [CrossRef]

- Liu, Z.; Wang, X.; Zheng, W.; Lv, Z.; Zhang, W. Design of a sweet potato transplanter based on a robot arm. Appl. Sci. 2021, 11, 9349. [Google Scholar] [CrossRef]

- Rowbot. Rowbot. 2020. Available online: https://www.rowbot.com/ (accessed on 13 April 2022).

- Yaguchi, H.; Nagahama, K.; Hasegawa, T.; Inaba, M. Development of an autonomous tomato harvesting robot with rotational plucking gripper. In Proceedings of the 2016 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Daejeon, Korea, 9–14 October 2016; pp. 652–657. [Google Scholar] [CrossRef]

- Feng, Q.; Zou, W.; Fan, P.; Zhang, C.; Wang, X. Design and test of robotic harvesting system for cherry tomato. Int. J. Agric. Biol. Eng. 2018, 11, 96–100. [Google Scholar] [CrossRef]

- Agrobot. Robotic Harvesters|Agrobot. 2018. Available online: https://www.agrobot.com/e-series (accessed on 13 April 2022).

- Ge, Y.; Xiong, Y.; Tenorio, G.L.; From, P.J. Fruit Localization and Environment Perception for Strawberry Harvesting Robots. IEEE Access 2019, 7, 147642–147652. [Google Scholar] [CrossRef]

- Xiong, Y.; Ge, Y.; Grimstad, L.; From, P.J. An autonomous strawberry-harvesting robot: Design, development, integration, and field evaluation. J. Field Robot. 2020, 37. [Google Scholar] [CrossRef]

- Bac, C.; Hemming, J.; van Tuijl, B.; Barth, R.; Wais, E.; van Henten, E. Performance Evaluation of a Harvesting Robot for Sweet Pepper. J. Field Robot. 2017, 34, 1123–1139. [Google Scholar] [CrossRef]

- Arad, B.; Balendonck, J.; Barth, R.; Ben-Shahar, O.; Edan, Y.; Hellström, T.; Hemming, J.; Kurtser, P.; Ringdahl, O.; Tielen, T.; et al. Development of a sweet pepper harvesting robot. J. Field Robot. 2020, 37, 1027–1039. [Google Scholar] [CrossRef]

- Lehnert, C.; McCool, C.; Sa, I.; Perez, T. Performance improvements of a sweet pepper harvesting robot in protected cropping environments. J. Field Robot. 2020, 37. [Google Scholar] [CrossRef]

- Raja, V.; Bhaskaran, B.; Nagaraj, K.; Sampathkumar, J.; Senthilkumar, S. Agricultural harvesting using integrated robot system. Indones. J. Electr. Eng. Comput. Sci. 2022, 25, 152–158. [Google Scholar] [CrossRef]

- Birrell, S.; Hughes, J.; Cai, J.Y.; Iida, F. A field-tested robotic harvesting system for iceberg lettuce. J. Field Robot. 2020, 37, 225–245. [Google Scholar] [CrossRef]

- Leu, A.; Razavi, M.; Langstädtler, L.; Ristić-Durrant, D.; Raffel, H.; Schenck, C.; Gräser, A.; Kuhfuss, B. Robotic Green Asparagus Selective Harvesting. IEEE/ASME Trans. Mechatronics 2017, 22, 2401–2410. [Google Scholar] [CrossRef]

- Kang, H.; Zhou, H.; Chen, C. Visual Perception and Modeling for Autonomous Apple Harvesting. IEEE Access 2020, 8, 62151–62163. [Google Scholar] [CrossRef]

- Bogue, R. Fruit picking robots: Has their time come? Ind. Robot. 2020, 47, 141–145. [Google Scholar] [CrossRef]

- Cantelli, L.; Bonaccorso, F.; Longo, D.; Melita, C.D.; Schillaci, G.; Muscato, G. A Small Versatile Electrical Robot for Autonomous Spraying in Agriculture. AgriEngineering 2019, 1, 391–402. [Google Scholar] [CrossRef]

- Danton, A.; Roux, J.C.; Dance, B.; Cariou, C.; Lenain, R. Development of a spraying robot for precision agriculture: An edge following approach. In Proceedings of the 2020 IEEE Conference on Control Technology and Applications (CCTA), Montreal, QC, Canada, 24–26 August 2020; pp. 267–272. [Google Scholar] [CrossRef]

- Terra, F.; Nascimento, G.; Duarte, G.; Drews, P., Jr. Autonomous Agricultural Sprayer using Machine Vision and Nozzle Control. J. Intell. Robot. Syst. Theory Appl. 2021, 102. [Google Scholar] [CrossRef]

- Amrita, S.A.; Abirami, E.; Ankita, A.; Praveena, R.; Srimeena, R. Agricultural Robot for automatic ploughing and seeding. In Proceedings of the 2015 IEEE Technological Innovation in ICT for Agriculture and Rural Development (TIAR), Chennai, India, 10–12 July 2015; pp. 17–23. [Google Scholar] [CrossRef]

- Grimstad, L.; From, P.J. The Thorvald II Agricultural Robotic System. Robotics 2017, 6, 24. [Google Scholar] [CrossRef]

- CASE IH. Case IH Autonomous Concept Vehicle. 2017. Available online: https://www.caseih.com/anz/en-au/innovations/autonomous-farming (accessed on 13 April 2022).

- Sitia. TREKTOR. 2020. Available online: https://www.sitia.fr/en/innovation-2/trektor/ (accessed on 13 April 2022).

- John Deere. John Deere CES® 2022. Available online: https://ces2022.deere.com/ (accessed on 13 April 2022).

- Agrobot. Bug Vacuum. 2020. Available online: https://www.agrobot.com/bugvac (accessed on 13 April 2022).

- Williams, H.; Nejati, M.; Hussein, S.; Penhall, N.; Lim, J.Y.; Jones, M.H.; Bell, J.; Ahn, H.S.; Bradley, S.; Schaare, P.; et al. Autonomous pollination of individual kiwifruit flowers: Toward a robotic kiwifruit pollinator. J. Field Robot. 2020, 37, 246–262. [Google Scholar] [CrossRef]

- Galati, R.; Mantriota, G.; Reina, G. Design and Development of a Tracked Robot to Increase Bulk Density of Flax Fibers. J. Mech. Robot. 2021, 13. [Google Scholar] [CrossRef]

- Loukatos, D.; Petrongonas, E.; Manes, K.; Kyrtopoulos, I.V.; Dimou, V.; Arvanitis, K. A synergy of innovative technologies towards implementing an autonomous diy electric vehicle for harvester-assisting purposes. Machines 2021, 9, 82. [Google Scholar] [CrossRef]

- Oberti, R.; Marchi, M.; Tirelli, P.; Calcante, A.; Iriti, M.; Tona, E.; Hočevar, M.; Baur, J.; Pfaff, J.; Schütz, C.; et al. Selective spraying of grapevines for disease control using a modular agricultural robot. Biosyst. Eng. 2016, 146, 203–215. [Google Scholar] [CrossRef]

- Adamides, G.; Katsanos, C.; Constantinou, I.; Christou, G.; Xenos, M.; Hadzilacos, T.; Edan, Y. Design and development of a semi-autonomous agricultural vineyard sprayer: Human–robot interaction aspects. J. Field Robot. 2017, 34, 1407–1426. [Google Scholar] [CrossRef]

- Azienda Agricola Pantano. Rovitis 4.0 by Azienda Agricola Pantano. 2018. Available online: https://www.aziendapantano.it/rovitis40.html (accessed on 13 April 2022).

- Shafiekhani, A.; Kadam, S.; Fritschi, F.; Desouza, G. Vinobot and vinoculer: Two robotic platforms for high-throughput field phenotyping. Sensors 2017, 17, 214. [Google Scholar] [CrossRef]

- Reiser, D.; Sehsah, E.S.; Bumann, O.; Morhard, J.; Griepentrog, H. Development of an autonomous electric robot implement for intra-row weeding in vineyards. Agriculture 2019, 9, 18. [Google Scholar] [CrossRef]

- Botterill, T.; Paulin, S.; Green, R.; Williams, S.; Lin, J.; Saxton, V.; Mills, S.; Chen, X.; Corbett-Davies, S. A Robot System for Pruning Grape Vines. J. Field Robot. 2017, 34, 1100–1122. [Google Scholar] [CrossRef]

- Majeed, Y.; Karkee, M.; Zhang, Q.; Fu, L.; Whiting, M.D. Development and performance evaluation of a machine vision system and an integrated prototype for automated green shoot thinning in vineyards. J. Field Robot. 2021, 38, 898–916. [Google Scholar] [CrossRef]

- VitiBot. Bakus S by VitiBot. 2018. Available online: https://vitibot.fr/vineyards-robots-bakus/vineyard-robot-bakus-s/?lang=en (accessed on 13 April 2022).

- Thuilot, B.; Cariou, C.; Martinet, P.; Berducat, M. Automatic Guidance of a Farm Tractor Relying on a Single CP-DGPS. Auton. Robot. 2002, 13, 53–71. [Google Scholar] [CrossRef]

- Fountas, S.; Mylonas, N.; Malounas, I.; Rodias, E.; Hellmann Santos, C.; Pekkeriet, E. Agricultural Robotics for Field Operations. Sensors 2020, 20, 2672. [Google Scholar] [CrossRef]

- Fue, K.G.; Porter, W.M.; Barnes, E.M.; Rains, G.C. An Extensive Review of Mobile Agricultural Robotics for Field Operations: Focus on Cotton Harvesting. AgriEngineering 2020, 2, 150–174. [Google Scholar] [CrossRef]

- Oliveira, L.F.P.; Moreira, A.P.; Silva, M.F. Advances in Agriculture Robotics: A State-of-the-Art Review and Challenges Ahead. Robotics 2021, 10, 52. [Google Scholar] [CrossRef]

- Vidoni, R.; Bietresato, M.; Gasparetto, A.; Mazzetto, F. Evaluation and stability comparison of different vehicle configurations for robotic agricultural operations on side-slopes. Biosyst. Eng. 2015, 129, 197–211. [Google Scholar] [CrossRef]

- Braunack, M.V. Changes in physical properties of two dry soils during tracked vehicle passage. J. Terramech. 1986, 23, 141–151. [Google Scholar] [CrossRef]

- Braunack, M.V. The residual effects of tracked vehicles on soil surface properties. J. Terramech. 1986, 23, 37–50. [Google Scholar] [CrossRef]

- Braunack, M.V.; Williams, B.G. The effect of initial soil water content and vegetative cover on surface soil disturbance by tracked vehicles. J. Terramech. 1993, 30, 299–311. [Google Scholar] [CrossRef]

- Ayers, P.D. Environmental damage from tracked vehicle operation. J. Terramech. 1994, 31, 173–183. [Google Scholar] [CrossRef]

- Prosser, C.W.; Sedivec, K.K.; Barker, W.T. Tracked Vehicle Effects on Vegetation and Soil Characteristics. J. Range Manag. 2000, 53, 666–670. [Google Scholar] [CrossRef]

- Li, Q.; Ayers, P.D.; Anderson, A.B. Modeling of terrain impact caused by tracked vehicles. J. Terramech. 2007, 44, 395–410. [Google Scholar] [CrossRef]

- Molari, G.; Bellentani, L.; Guarnieri, A.; Walker, M.; Sedoni, E. Performance of an agricultural tractor fitted with rubber tracks. Biosyst. Eng. 2012, 111, 57–63. [Google Scholar] [CrossRef]

- Vu, Q.; Raković, M.; Delic, V.; Ronzhin, A. Trends in Development of UAV-UGV Cooperation Approaches in Precision Agriculture. In Proceedings of the Interactive Collaborative Robotics, Leipzig, Germany, 18–22 September 2018; Springer International Publishing: Cham, Switzerland, 2018. Lecture Notes in Computer Science. pp. 213–221. [Google Scholar] [CrossRef]

- Zoto, J.; Musci, M.A.; Khaliq, A.; Chiaberge, M.; Aicardi, I. Automatic path planning for unmanned ground vehicle using UAV imagery. In Proceedings of the International Conference on Robotics in Alpe-Adria Danube Region, Kaiserslautern, Germany, 19–21 June 2019; pp. 223–230. [Google Scholar]

- Cinat, P.; Di Gennaro, S.F.; Berton, A.; Matese, A. Comparison of unsupervised algorithms for Vineyard Canopy segmentation from UAV multispectral images. Remote Sens. 2019, 11, 1023. [Google Scholar] [CrossRef]

- Ribeiro, A.; Conesa-Muñoz, J. Multi-robot Systems for Precision Agriculture. In Innovation in Agricultural Robotics for Precision Agriculture: A Roadmap for Integrating Robots in Precision Agriculture; Progress in Precision Agriculture; Springer International Publishing: Cham, Switzerland, 2021; pp. 151–175. [Google Scholar] [CrossRef]

- Galceran, E.; Carreras, M. A survey on coverage path planning for robotics. Robot. Auton. Syst. 2013, 61, 1258–1276. [Google Scholar] [CrossRef]

- Zurich, E. Aerial Data Collection and Analysis, and Automated Ground Intervention for Precision Farming|Flourish Project|Fact Sheet|H2020|CORDIS|European Commission; Flourish Project: Payneham, Australia, 2018. [Google Scholar]

- Bhandari, S.; Raheja, A.; Green, R.L.; Do, D. Towards collaboration between unmanned aerial and ground vehicles for precision agriculture. In Proceedings of the Autonomous Air and Ground Sensing Systems for Agricultural Optimization and Phenotyping II, Anaheim, CA, USA, 9–13 April 2017; Volume 10218, pp. 26–39. [Google Scholar] [CrossRef]

- Gonzalez-de Santos, P.; Ribeiro, A.; Fernandez-Quintanilla, C.; Lopez-Granados, F.; Brandstoetter, M.; Tomic, S.; Pedrazzi, S.; Peruzzi, A.; Pajares, G.; Kaplanis, G.; et al. Fleets of robots for environmentally-safe pest control in agriculture. Precis. Agric. 2017, 18, 574–614. [Google Scholar] [CrossRef]

- Roldán, J.J.; Garcia-Aunon, P.; Garzón, M.; De León, J.; Del Cerro, J.; Barrientos, A. Heterogeneous Multi-Robot System for Mapping Environmental Variables of Greenhouses. Sensors 2016, 16, 1018. [Google Scholar] [CrossRef] [PubMed]

- Vitali, G.; Cardillo, C.; Albertazzi, S.; Della Chiara, M.; Baldoni, G.; Signorotti, C.; Trisorio, A.; Canavari, M. Classification of Italian Farms in the FADN Database Combining Climate and Structural Information. Cartographica 2012, 47, 228–236. [Google Scholar] [CrossRef]

- Kaufmann, H.; Blanke, M. Chilling requirements of Mediterranean fruit crops in a changing climate. In Proceedings of the III International Symposium on Horticulture in Europe-SHE2016, Chania, Greece, 17–21 October 2016; pp. 275–280. [Google Scholar]

- Pessina, D.; Facchinetti, D. A survey on fatal accidents for overturning of agricultural tractors in Italy. Chem. Eng. Trans. 2017, 58, 79–84. [Google Scholar]

- Quaglia, G.; Visconte, C.; Carbonari, L.; Botta, A.; Cavallone, P. Agri.q: A Sustainable Rover for Precision Agriculture. In Solar Energy Conversion in Communities; Springer: Berlin/Heidelberg, Germany, 2020; pp. 81–91. [Google Scholar]

- Cavallone, P.; Visconte, C.; Carbonari, L.; Botta, A.; Quaglia, G. Design of the Mobile Robot Agri.q. In Proceedings of the Symposium on Robot Design, Dynamics and Control, Sapporo, Japan, 20–24 September 2020; pp. 288–296. [Google Scholar]

- Visconte, C.; Cavallone, P.; Carbonari, L.; Botta, A.; Quaglia, G. Design of a Mechanism with Embedded Suspension to Reconfigure the Agri_q Locomotion Layout. Robotics 2021, 10, 15. [Google Scholar] [CrossRef]

- Cavallone, P.; Botta, A.; Carbonari, L.; Visconte, C.; Quaglia, G. The Agri.q Mobile Robot: Preliminary Experimental Tests. In Proceedings of the Advances in Italian Mechanism Science; Niola, V., Gasparetto, A., Eds.; Springer International Publishing: Cham, Switzerland, 2021; pp. 524–532. [Google Scholar] [CrossRef]

- Botta, A.; Cavallone, P. Robotics Applied to Precision Agriculture: The Sustainable Agri.q Rover Case Study. In Proceedings of the I4SDG Workshop 2021, Online, 25–26 November 2021; Mechanisms and Machine Science. Springer International Publishing: Cham, Switzerland, 2022; pp. 41–50. [Google Scholar] [CrossRef]

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Botta, A.; Cavallone, P.; Baglieri, L.; Colucci, G.; Tagliavini, L.; Quaglia, G. A Review of Robots, Perception, and Tasks in Precision Agriculture. Appl. Mech. 2022, 3, 830–854. https://doi.org/10.3390/applmech3030049
