Development of Surface EMG Game Control Interface for Persons with Upper Limb Functional Impairments

Abstract

1. Introduction

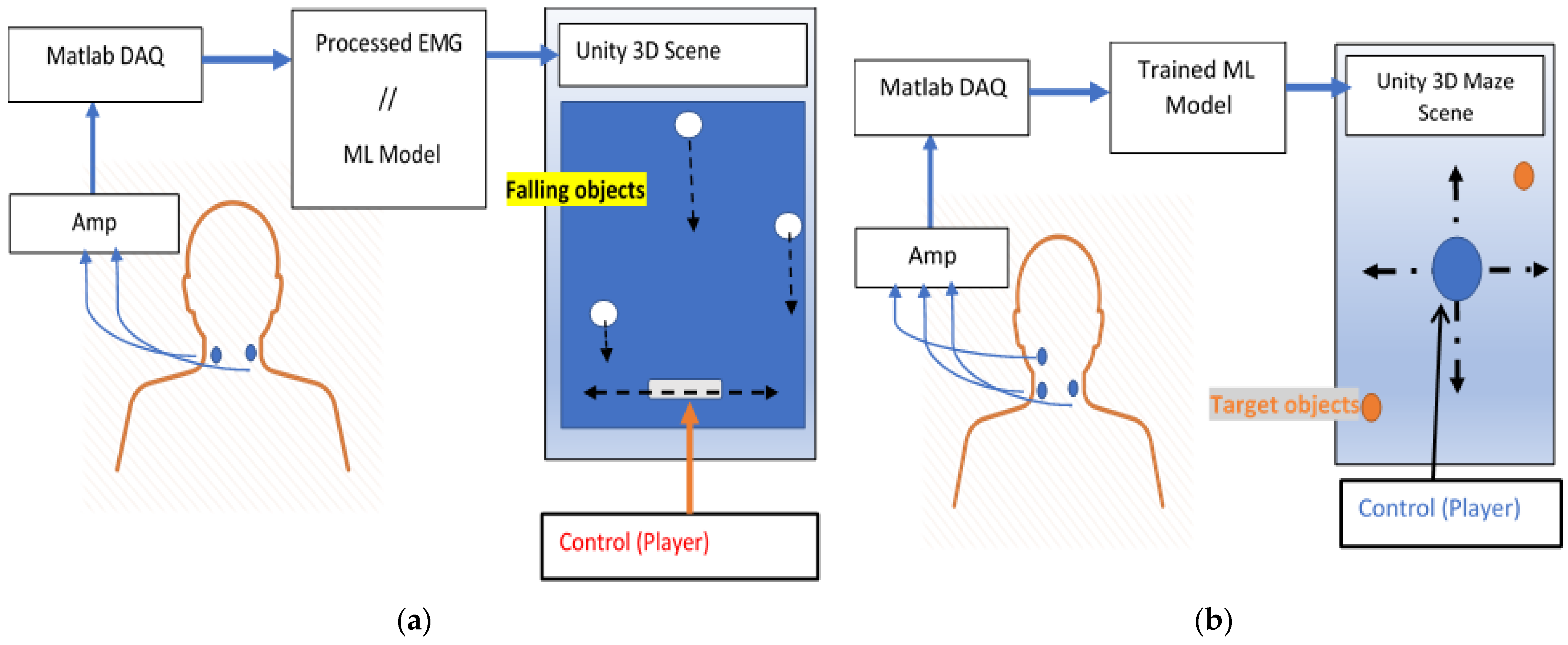

2. Materials and Methods

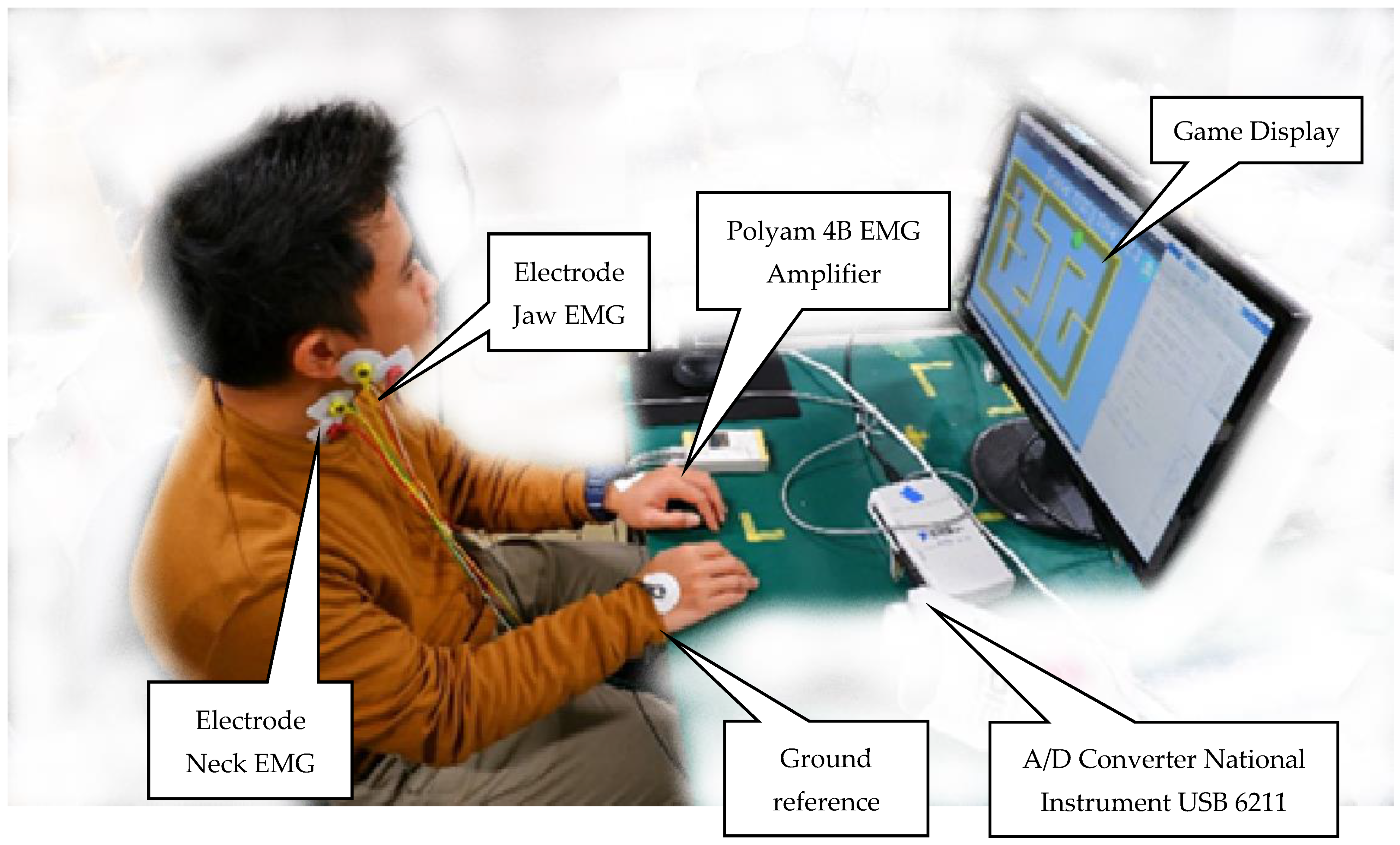

2.1. Setup and Data Acquisition

2.2. Unity3D Engine Game Development

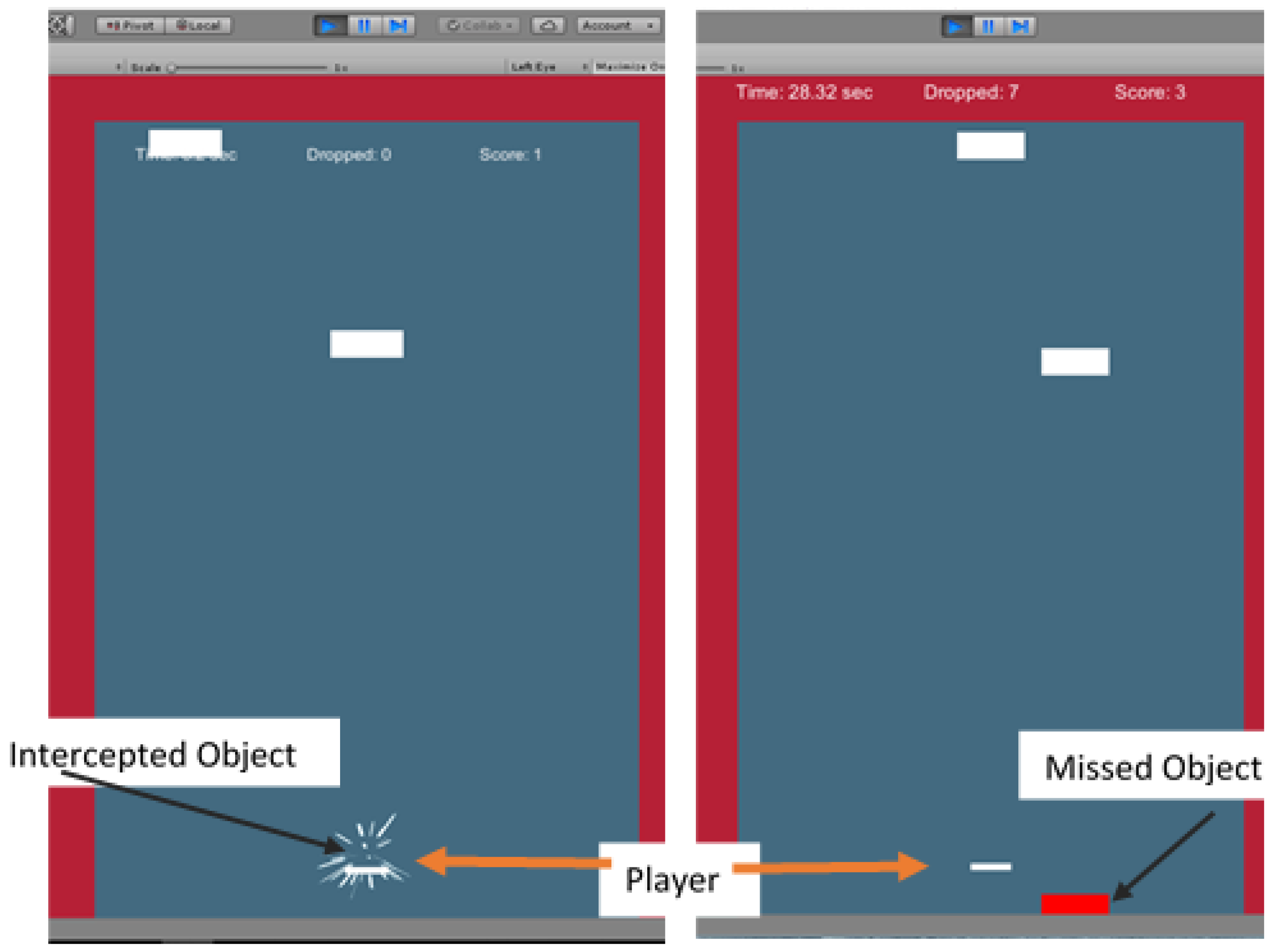

2.2.1. Experiment One: Intercepting Game

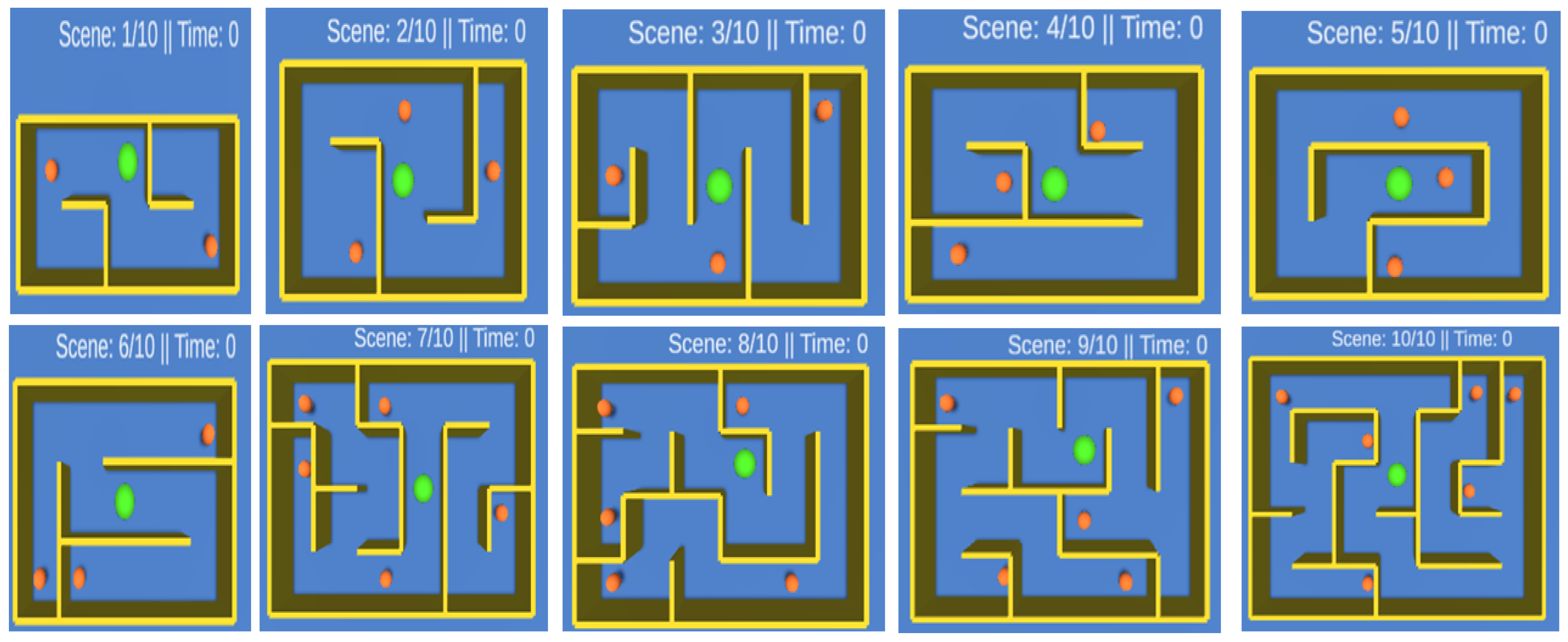

2.2.2. Experiment Two: Maze Game

2.3. Head Rotation Estimation

2.3.1. Equation Estimation

2.3.2. Machine Learning (ML) Estimation

2.4. Participants

3. Performance Verification and Results

3.1. Performance Verification

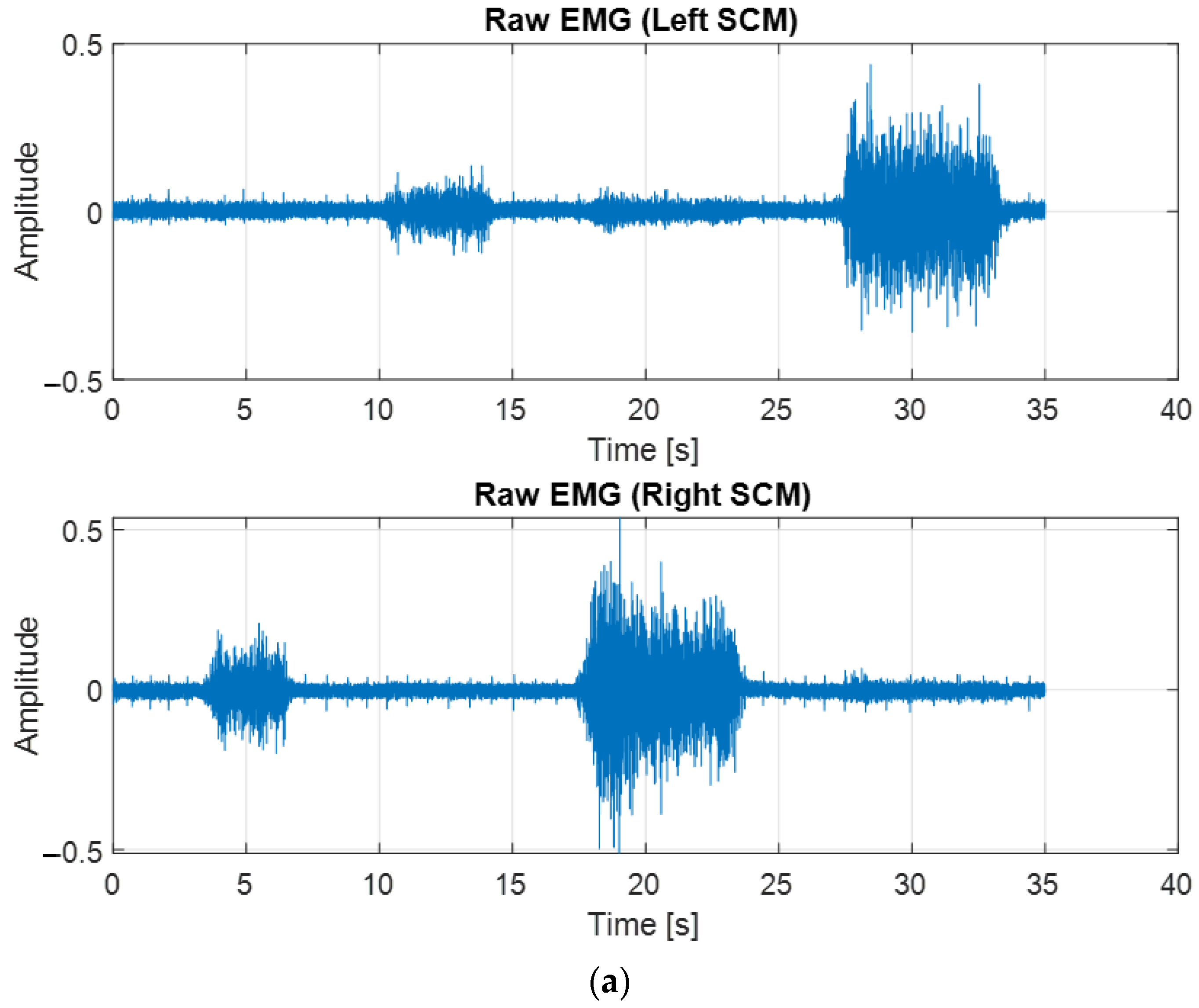

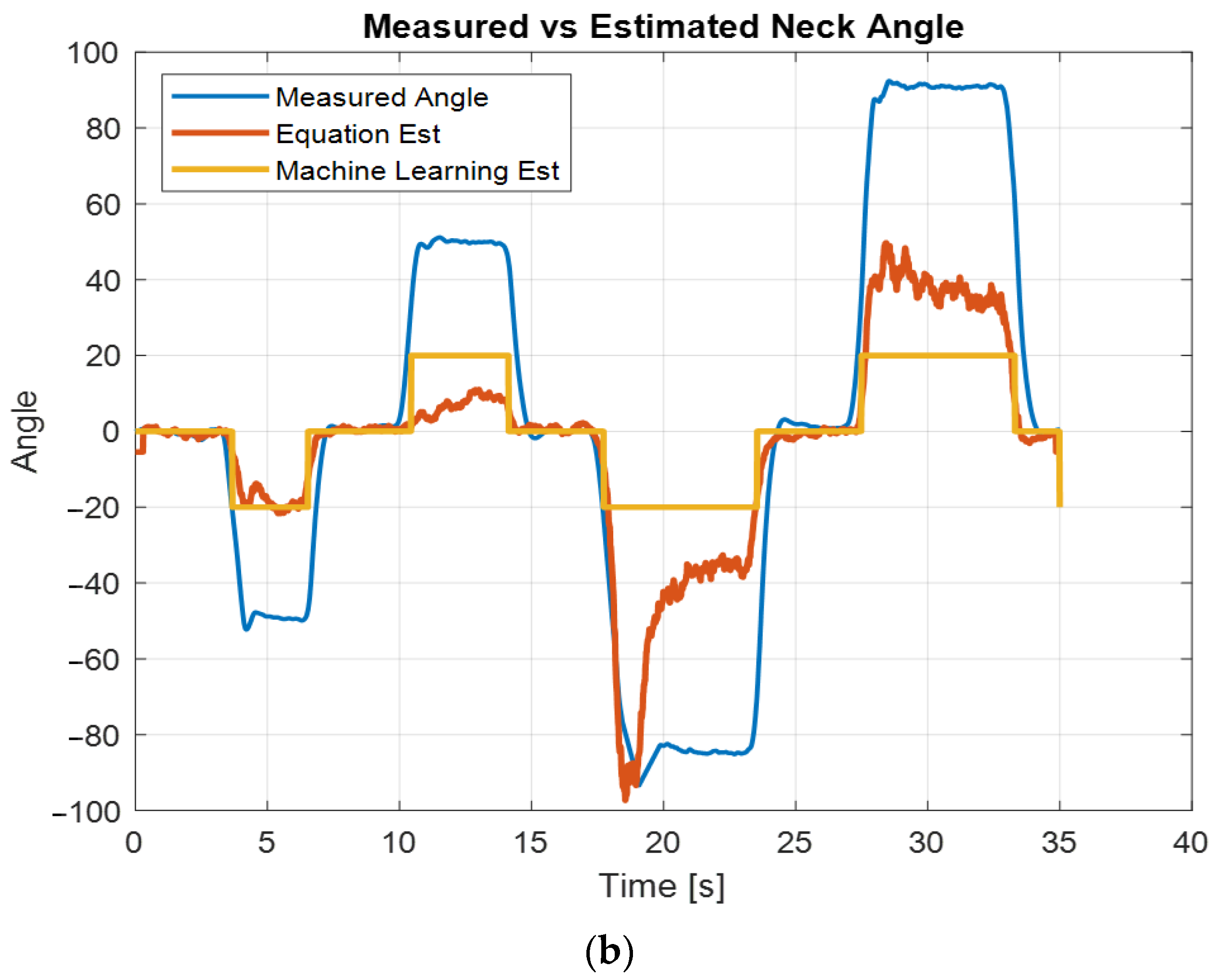

3.1.1. Head Rotation Validation

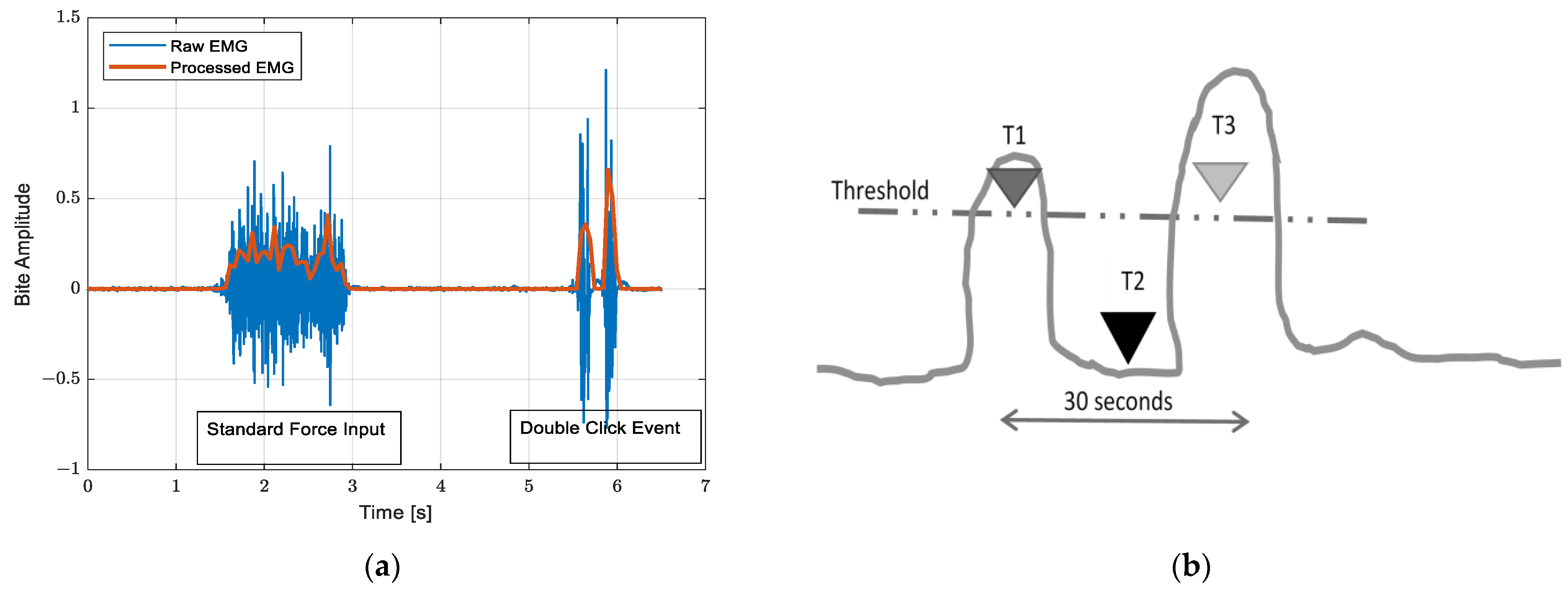

3.1.2. Bite EMG

3.2. Results

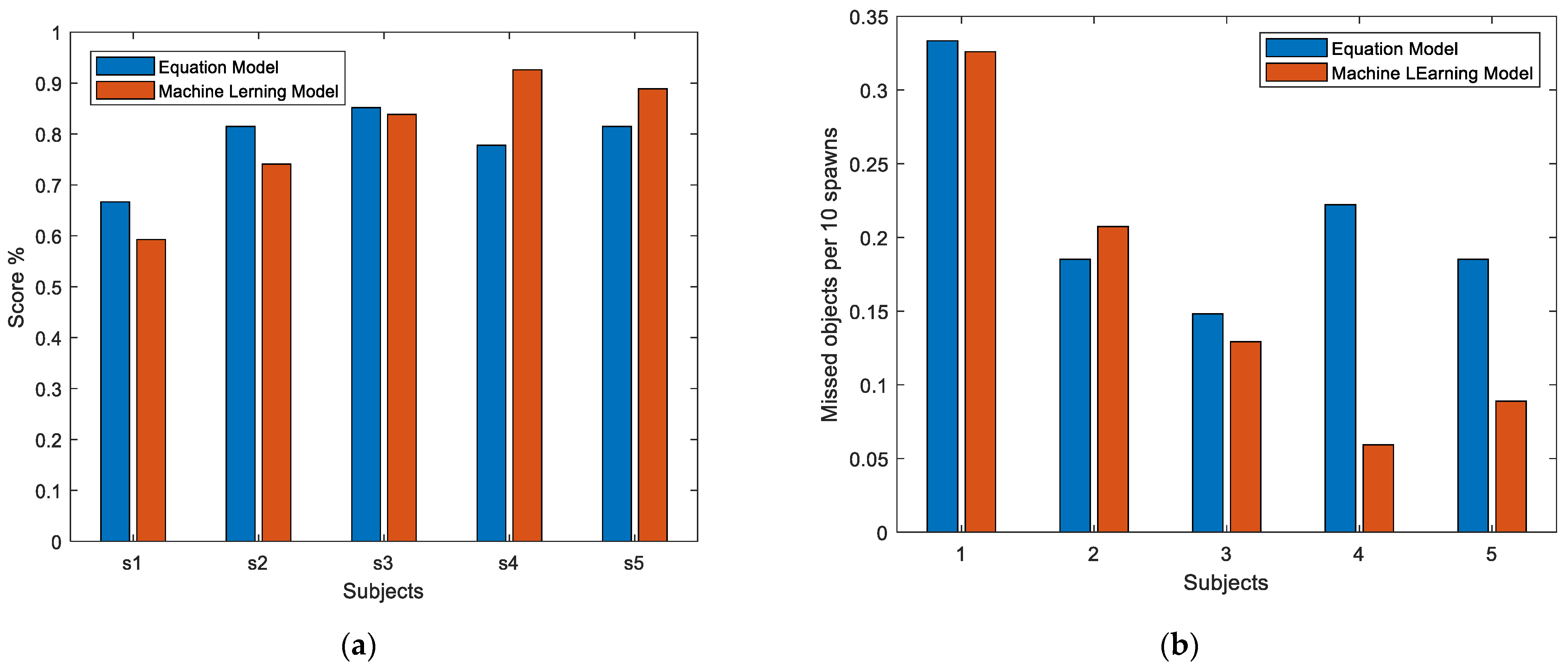

3.2.1. Experiment One

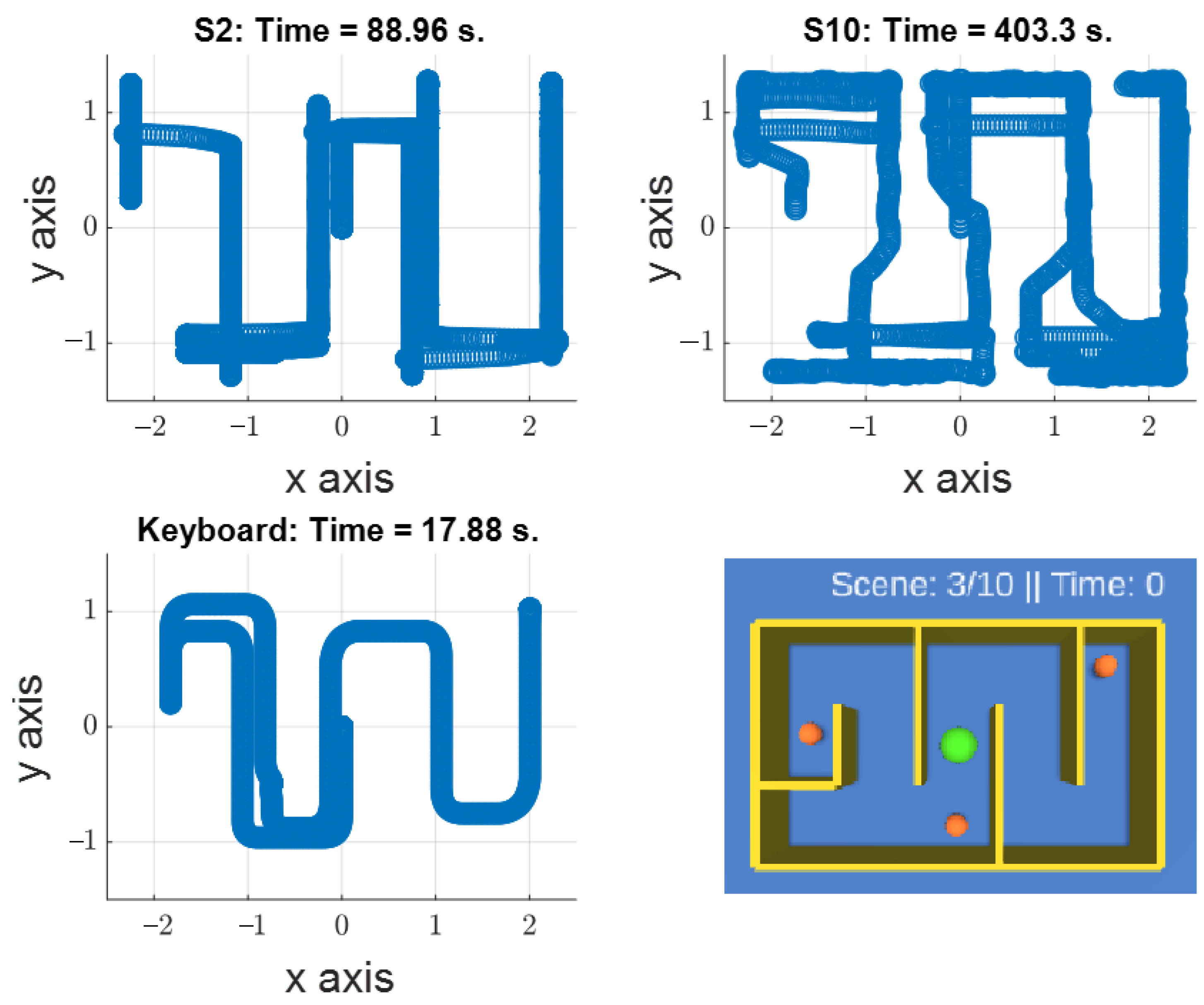

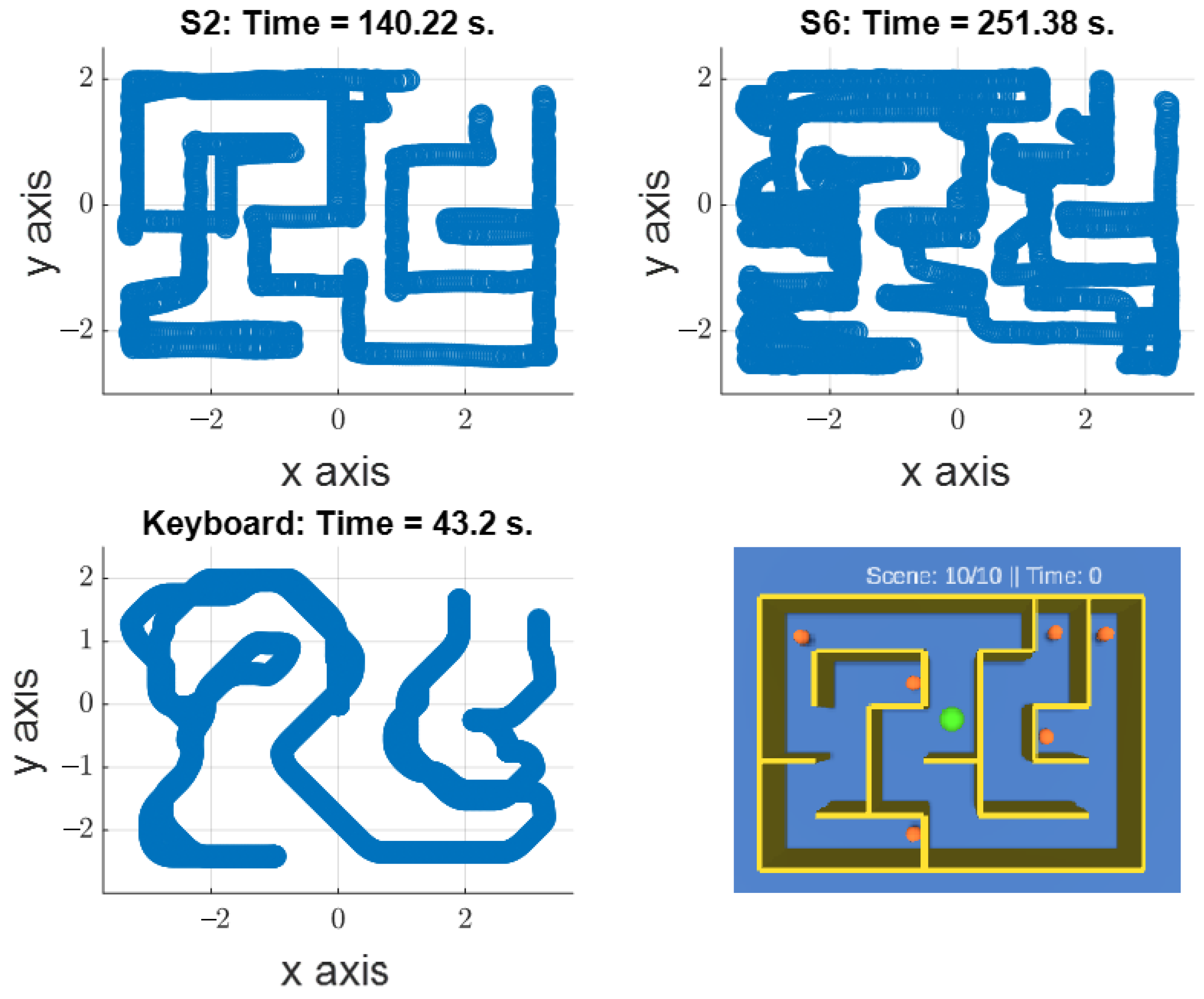

3.2.2. Experiment Two

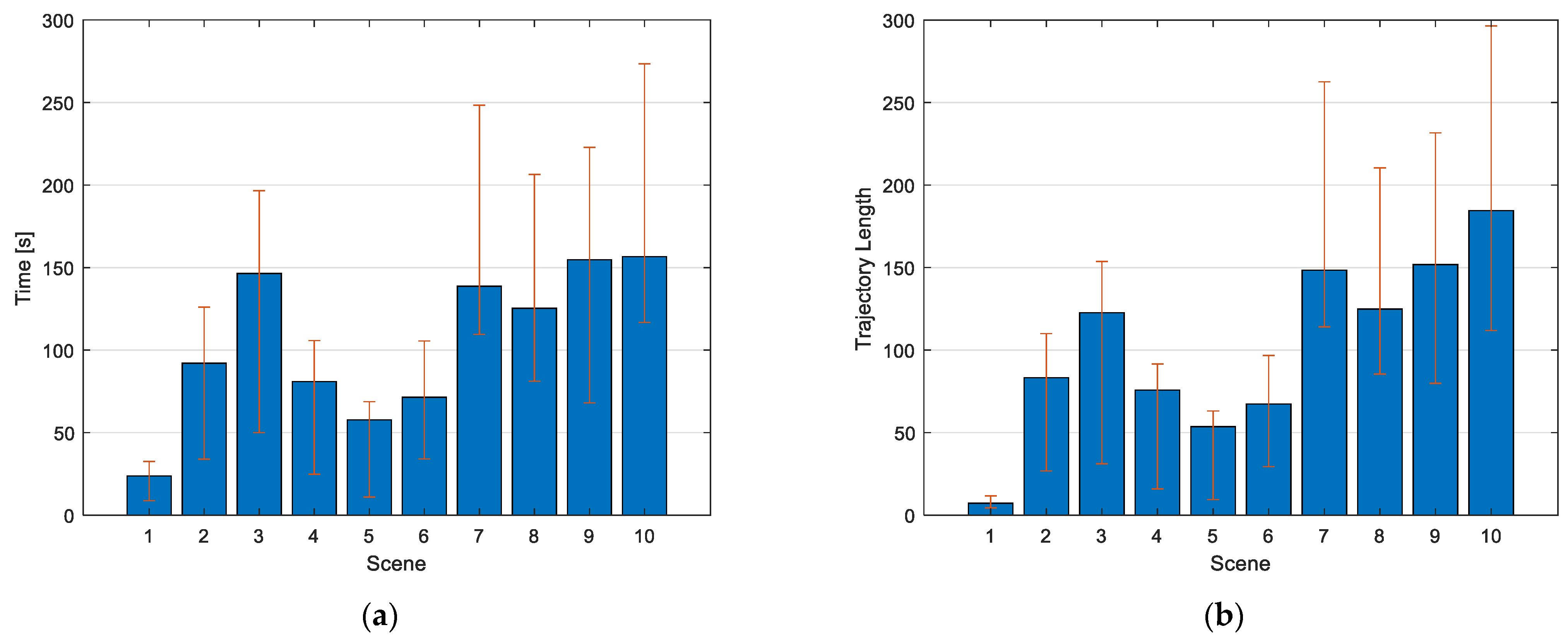

Completion Time

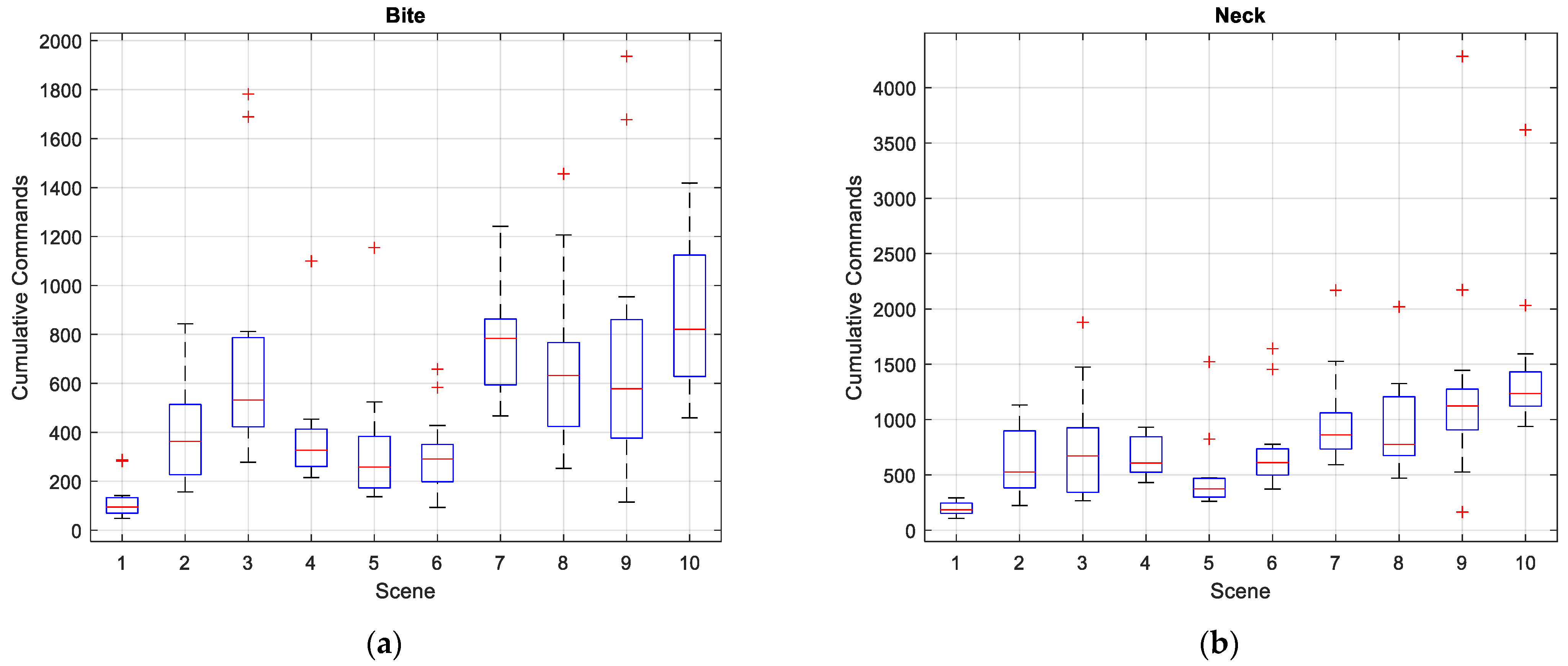

Input Command

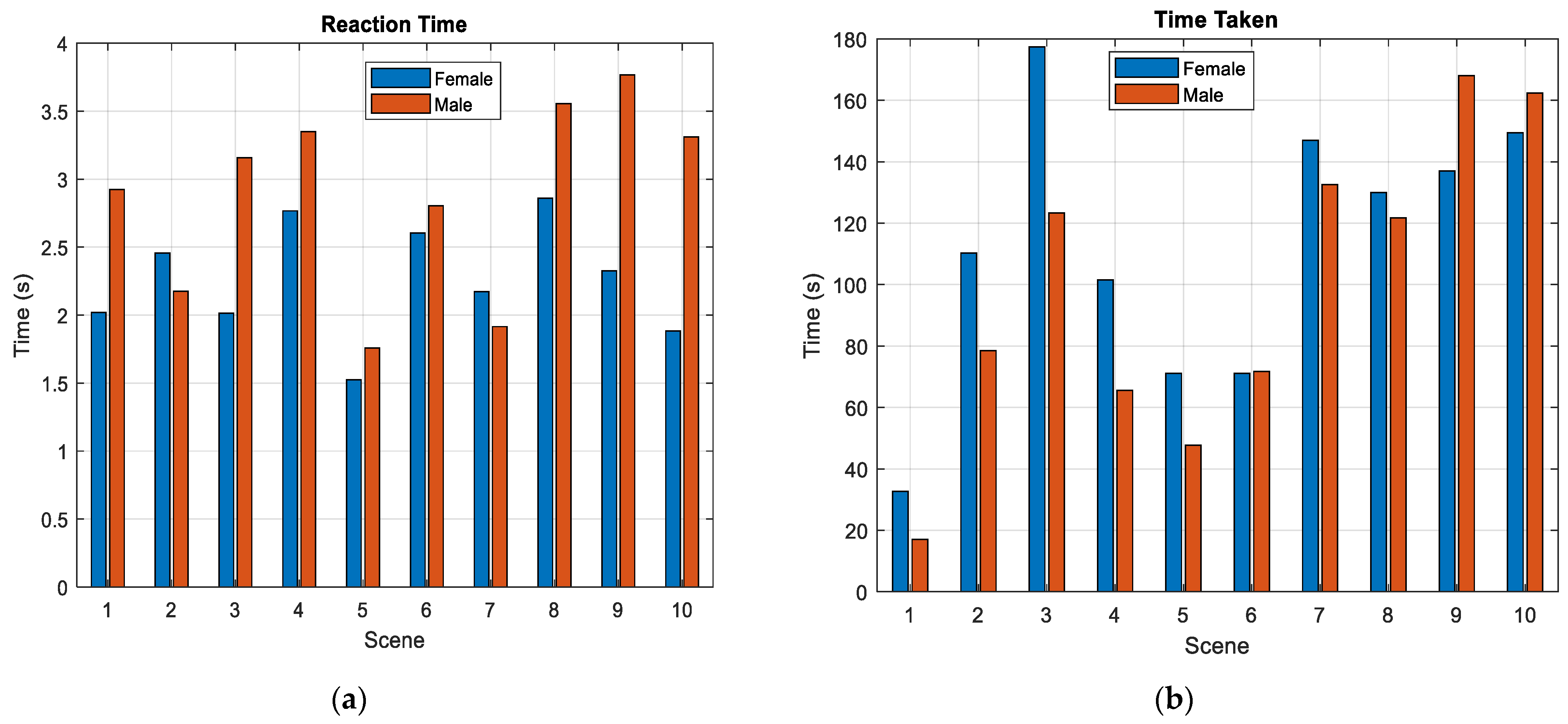

Group Comparison

Overall Performance

4. Discussion

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

| Classifier Type | Accuracy | Prediction Time (s) | Training Time (s) |
|---|---|---|---|
| Ensemble (RUS-Boosted Trees) | 0.939 | 0.03 | 74.93 |
| Ensemble (Bagged Trees) | 0.934 | 0.05 | 65.53 |
| KNN (Cubic) | 0.938 | 0.02 | 57.44 |
| KNN (Cosine) | 0.936 | 0.03 | 53.00 |
| SVM (Fine Gaussian) | 0.938 | 0.01 | 38.67 |
| SVM (Linear) | 0.934 | 0.04 | 49.07 |
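
The classifier labels in the table above (RUS-Boosted Trees, Cubic KNN, Cosine KNN, Fine Gaussian SVM) resemble the presets of MATLAB's Classification Learner app, where the Cubic and Cosine KNN presets use k = 10 neighbours with a Minkowski (exponent 3) or a cosine distance, respectively. Assuming that correspondence, the following is a minimal, dependency-free Python sketch of k-nearest-neighbour classification with both metrics. The two-channel feature vectors and the `left`/`right`/`center` labels are hypothetical illustrations, not the study's feature set.

```python
import math
from collections import Counter
from typing import Callable, Hashable, List, Sequence

Vector = Sequence[float]


def minkowski3(a: Vector, b: Vector) -> float:
    """Minkowski distance with exponent 3 (the 'cubic' metric)."""
    return sum(abs(x - y) ** 3 for x, y in zip(a, b)) ** (1.0 / 3.0)


def cosine_distance(a: Vector, b: Vector) -> float:
    """1 - cosine similarity; assumes neither vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


def knn_predict(
    train_x: List[Vector],
    train_y: List[Hashable],
    query: Vector,
    k: int = 10,
    distance: Callable[[Vector, Vector], float] = minkowski3,
) -> Hashable:
    """Majority vote among the k training samples closest to `query`."""
    # Sort training samples by distance to the query (nearest first).
    ranked = sorted(zip(train_x, train_y), key=lambda pair: distance(pair[0], query))
    # Counter keeps first-seen order, so most_common() breaks ties in favour
    # of the class whose member is nearest to the query.
    votes = Counter(label for _, label in ranked[:k])
    return votes.most_common(1)[0][0]


# Hypothetical two-channel features and head-rotation labels (illustration only).
train_x = [(0.90, 0.10), (0.80, 0.20), (0.85, 0.15),
           (0.10, 0.90), (0.20, 0.80), (0.15, 0.85),
           (0.50, 0.50), (0.45, 0.55), (0.55, 0.45)]
train_y = ["left"] * 3 + ["right"] * 3 + ["center"] * 3

# k is reduced to 3 because this toy set has only nine samples.
print(knn_predict(train_x, train_y, (0.88, 0.12), k=3))  # left
print(knn_predict(train_x, train_y, (0.12, 0.88), k=3, distance=cosine_distance))  # right
```

Sorting the whole training set is O(n log n) per query, which is adequate for a sketch; a real-time controller would more likely use a precomputed index or a library implementation.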

| Measure | Male | Female | ANOVA (p-Value) |
|---|---|---|---|
| Reaction Time (s) | 2.87 ± 0.70 | 2.26 ± 0.42 | 0.0299 * |
| Completion Time (s) | 98.84 ± 50.16 | 112.75 ± 44.21 | 0.519 |
| SCM EMG | 492.30 ± 262.48 | 573.46 ± 240.20 | 0.480 |
| Masseter EMG | 831.08 ± 446.76 | 778.68 ± 290.82 | 0.7595 |
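
The table above compares male and female participants with a one-way ANOVA. As a hedged sketch of that test (not the authors' analysis script), the function below computes the F statistic and its degrees of freedom from per-participant values in pure Python. With two groups, F equals the square of the pooled-variance Student's t statistic, and the p-value is the upper-tail probability of the F(df_between, df_within) distribution, which `scipy.stats.f.sf(F, df_between, df_within)` returns; `scipy.stats.f_oneway` performs the whole test in one call. The sample values are toy numbers, not study data.

```python
from statistics import mean
from typing import Sequence, Tuple


def one_way_anova_f(*groups: Sequence[float]) -> Tuple[float, int, int]:
    """Return (F, df_between, df_within) for a one-way ANOVA over `groups`."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    group_means = [mean(g) for g in groups]
    grand_mean = mean(x for g in groups for x in g)
    # Between-group sum of squares: spread of the group means around the grand mean.
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, group_means))
    # Within-group sum of squares: spread of each value around its own group mean.
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, group_means) for x in g)
    df_between = k - 1
    df_within = n_total - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Toy example with two groups of three values each.
print(one_way_anova_f([1.0, 2.0, 3.0], [4.0, 5.0, 6.0]))  # (13.5, 1, 4)
```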

| Approx. Turns (h) | Approx. Turns (v) | Reaction Time (s) | Reaction Time std | Completion Time (s) | Completion Time std | SCM Input (Sum) | SCM Input std | Masseter Input (Sum) | Masseter Input std | Trajectory/Path Length (Sum) | Trajectory/Path Length std | Total Objects |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 3 | 1 | 2.54 | 1.30 | 23.74 | 14.90 | 119.09 | 75.75 | 198.79 | 56.46 | 722.54 | 283.49 | 2 |
| 3 | 6 | 2.30 | 0.75 | 92.08 | 58.11 | 405.34 | 203.46 | 598.90 | 286.29 | 8329.42 | 5655.32 | 3 |
| 6 | 7 | 2.67 | 1.96 | 146.50 | 96.43 | 707.53 | 464.89 | 748.29 | 479.92 | 12263.92 | 9157.81 | 3 |
| 8 | 5 | 3.10 | 1.89 | 80.94 | 56.13 | 380.11 | 221.90 | 671.95 | 172.68 | 7579.69 | 5995.52 | 3 |
| 5 | 3 | 1.66 | 0.53 | 57.76 | 46.76 | 333.12 | 263.26 | 477.12 | 332.44 | 5359.19 | 4410.02 | 3 |
| 6 | 4 | 2.72 | 0.95 | 71.45 | 37.37 | 310.37 | 158.64 | 725.07 | 369.32 | 6735.52 | 3802.65 | 3 |
| 13 | 13 | 2.03 | 1.26 | 138.77 | 29.20 | 768.13 | 216.44 | 975.86 | 417.63 | 14840.91 | 3427.49 | 5 |
| 15 | 11 | 3.26 | 2.00 | 125.29 | 44.17 | 671.08 | 330.30 | 960.57 | 414.29 | 12489.97 | 3938.40 | 5 |
| 13 | 11 | 3.15 | 1.85 | 154.70 | 86.62 | 725.67 | 513.16 | 1298.74 | 971.09 | 15180.07 | 7197.53 | 5 |
| 16 | 16 | 2.70 | 1.90 | 156.81 | 39.77 | 850.41 | 287.54 | 1430.92 | 692.21 | 19860.39 | 5027.02 | 6 |
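
The path-length columns in the table above summarise how far the controlled object travelled in each maze configuration. The sketch below shows one plausible way to compute such a value, plus a mean and standard deviation across participants, assuming (hypothetically) that the game logs the object's 2-D position once per frame and that `std` denotes the sample standard deviation. The logging format, coordinate units, and the `trial` data are illustrative assumptions.

```python
import math
from statistics import mean, stdev
from typing import Sequence, Tuple

Point = Tuple[float, float]


def path_length(trajectory: Sequence[Point]) -> float:
    """Sum of Euclidean distances between consecutive logged positions."""
    return sum(math.dist(p, q) for p, q in zip(trajectory, trajectory[1:]))


def summarize(values: Sequence[float]) -> Tuple[float, float]:
    """Mean and sample standard deviation (needs at least two values)."""
    return mean(values), stdev(values)


# Hypothetical logged positions for one participant on one maze.
trial = [(0.0, 0.0), (3.0, 4.0), (3.0, 10.0)]
print(path_length(trial))  # 11.0
```

Per-participant path lengths for a given maze would then be passed to `summarize` to obtain one (Sum, std) pair per row.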

| | H-Index | V-Index | Reaction Time | Completion Time | SCM EMG | Masseter EMG |
|---|---|---|---|---|---|---|
| H-Index | 1 | | | | | |
| V-Index | 0.911 | 1 | | | | |
| Reaction Time | 0.415 | 0.239 | 1 | | | |
| Completion Time | 0.760 * | 0.892 * | 0.316 | 1 | | |
| SCM EMG | 0.826 * | 0.938 * | 0.228 | 0.980 * | 1 | |
| Masseter EMG | 0.886 * | 0.925 * | 0.394 | 0.891 * | 0.888 * | 1 |
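
The correlation matrix above appears to have been computed across the ten maze configurations listed in the preceding table: applying Pearson's r to that table's h and v turn columns reproduces the H-Index/V-Index entry of 0.911. The dependency-free sketch below computes Pearson's r and a lower-triangular matrix in the same layout. It reports coefficients only; the significance test behind the asterisks is not reproduced.

```python
import math
from typing import Dict, List, Sequence


def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson product-moment correlation of two equal-length samples."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    s_xy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    s_xx = sum((a - mean_x) ** 2 for a in x)
    s_yy = sum((b - mean_y) ** 2 for b in y)
    return s_xy / math.sqrt(s_xx * s_yy)


def lower_triangle(columns: Dict[str, Sequence[float]]) -> List[List[float]]:
    """Row i holds r(column_i, column_j) for j <= i, as in the matrix above."""
    names = list(columns)
    return [
        [pearson_r(columns[names[i]], columns[names[j]]) for j in range(i + 1)]
        for i in range(len(names))
    ]


# h and v columns (approximate turns) of the ten maze configurations.
h = [3, 3, 6, 8, 5, 6, 13, 15, 13, 16]
v = [1, 6, 7, 5, 3, 4, 13, 11, 11, 16]
print(round(pearson_r(h, v), 3))  # 0.911
```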

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Muguro, J.K.; Laksono, P.W.; Rahmaniar, W.; Njeri, W.; Sasatake, Y.; Suhaimi, M.S.A.b.; Matsushita, K.; Sasaki, M.; Sulowicz, M.; Caesarendra, W. Development of Surface EMG Game Control Interface for Persons with Upper Limb Functional Impairments. Signals 2021, 2, 834-851. https://doi.org/10.3390/signals2040048
