1. Introduction

Pre-election polls are a tool that helps candidates win political campaigns at every level, from national elections down to local ones [1]. Pre-election polls, along with exit polls [2], are also used for different purposes by the media, academic researchers, and the public. Regardless of the user, the main reason to use these election-focused surveys is the desire for accurate information under uncertainty. To this end, the science of polling has developed over the past century to minimize the uncertainty in election forecasts by obtaining more statistically significant results [3].

In trying to predict the results of upcoming elections as precisely as possible, pollsters must cope with three kinds of problems [4]: methodological problems (such as coverage, sampling, non-response, weighting, adjustment, or treatment of non-disclosers); socio-political problems (such as characteristics of the campaign, of the parties, or the electoral system); and sociological problems (such as characteristics of the society, its cleavages, or traditions).

Nonetheless, errors occasionally happen, fueling criticism of mispredicted outcomes [5]. Examples can be found around the world, such as in past US presidential elections, with the underestimation of Ronald Reagan’s victory in 1980 and the overestimation of Bill Clinton’s victory in 1996 [6], or Hungary’s “Black Sunday” in 2002 [7]. Despite these and other errors, pre-election and exit polls have over the years established a significant role in media and campaigns and have become more reliable as a tool to predict election results, regardless of the country, its political structure, or electoral system [8]. However, elections held in 2015–2016 in several countries added to the number of mispredicted results. Some examples are shown in Appendix Table A1; they represent outliers when set against the outcomes of hundreds of other campaigns in which pre-election polls were accurate [9]. Nevertheless, examining this phenomenon is the focus of this study. The examples in this research represent democracies with a variety of political structures and different electoral systems: parliamentary democracy in Israel and the UK, presidential democracy in the US, and semi-presidential democracy in Poland.

In May 2015, pre-election presidential polls in Poland predicted a lead for the then president, Mr. Komorowski, over other candidates, such as Mr. Duda, and, given the margin of error, even suggested that Mr. Komorowski might win in the first round; for example, a pre-election poll from 8 May 2015 showed 39% to 31% [10]. The exit polls showed a different expected result: a near tie with a slight lead for Mr. Duda over Mr. Komorowski (34.8% to 32.2%) [11], which was close to the actual first-round vote (34.8% to 33.77%). Eventually, Mr. Duda was declared president after winning the second round of the elections.

A similar situation arose in the same month during the national legislative elections in Britain. Pre-election polls predicted a close contest between the Conservative Party and the Labour Party (for example, 219 to 219 seats) [12]. However, the exit polls showed a different expected result: a lead for the Conservative Party (316 to 239 seats) [13]. In the end, the election was not as competitive as the pre-election polls had predicted. The Conservative Party gained a majority in parliament (330 to 232 seats), even larger than the exit polls had predicted. Neither the pre-election polls nor the exit polls in the UK served as an accurate predictor of the results. Thus, as in Poland, they failed to serve their goal.

A much more disturbing situation arose in the 2015 Israeli elections, which have been called “The Black Tuesday of pollsters in Israel.” The pre-election polls predicted a lead for the Zionist Union party over the Likud party (for example, 25 to 21 seats, out of 120 seats). The pre-election national polls also predicted a slight lead for the bloc of left-center parties over the bloc of right-religious parties (64 to 56 seats). As in the UK case, the exit polls showed a different picture from the pre-election polls: the Likud party was predicted to lead the Zionist Union (27 to 26 seats). However, the lead of the left-center parties over the right-religious parties was maintained in the exit polls (65 to 55 seats) [14]. In contrast to the UK and Polish cases, the election results in Israel were accurately predicted by neither the pre-election polls nor the exit polls. The Likud party eventually became the largest party in the Knesset, well ahead of the Zionist Union (30 to 24 seats).

A year later, in June 2016, a week before the Brexit referendum, some pollsters predicted that the “Remain” side was more likely to win narrowly over the “Leave” side (by as little as 1% or by as much as 10%). It is worth noting, however, that several online polls predicted a “Leave” win. In the end, “Leave” won the referendum by 51.9% to 48.1%, raising further questions about the accuracy of polling methodologies [15].

Pre-election polls in all of the above examples were not able to serve their primary goal: to give an accurate estimation of the outcome on election day. However, these examples are different in many ways. They differ in the election methods, the polling methods, the depth of the mistake (i.e., mispredicted pre-election polls, mispredicted exit polls, or both), and the source of the mistakes. These differences apply to the US presidential elections as well. The US political system is a presidential democracy, in which the president is the head of state and leads the executive branch, which is separate from the legislative and judicial branches [16]. The election system in the US is based on the Electoral College: the President is elected by an absolute majority within a body of 538 electors. Each state is assigned a number of electors equal to the combined total of its members in the House of Representatives and the Senate [17]. Every state chooses those electors, in most cases by a majority of votes in the state [18].

The 2016 US Presidential Elections have been labeled “unprecedented” because of the triumph of non-establishment candidates during the primaries [19], the unpopularity of the final candidates [20], and the growing importance of gender, race, religion, and ethnicity [21]. Given that, and the errors in predicting the vote in recent national elections in some democratic countries, it was essential to examine the various methods of predicting the vote in the 2016 US presidential election. That election ultimately ended the same way as the examples above: with a failure to predict the winner. In the following pages, we assess the accuracy of some leading methods. Before doing so, it is worth noting some atypical methods that were accurate in this cycle, such as “The Keys to the White House” and “The Primary Model”.

“The Keys to the White House” [22] is a method based on a set of 13 true/false statements. If six or more of them are false, the incumbent party loses the presidency and the challenging party wins. It is worth noting, however, that assessing each statement as true or false is subjective and may change over the campaign. Following this method, Lichtman predicted that the “Democrats would not be able to hold on to the White House.” On the basis of this assessment, and without giving specifics on how it was made, the method predicted the change of power in the presidency from Democrats to Republicans.
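The decision rule described above can be sketched in a few lines of Python. This is only an illustration of the threshold logic as stated in the text (13 statements, incumbent party loses when six or more are false); the function name and the example answers are hypothetical and do not reproduce Lichtman's actual 2016 assessments.

```python
from typing import Sequence


def incumbent_party_loses(statements: Sequence[bool], threshold: int = 6) -> bool:
    """Apply the threshold rule: the incumbent party is predicted to lose
    when at least `threshold` of the true/false statements are false."""
    if len(statements) != 13:
        raise ValueError("the method is described as using 13 statements")
    false_count = sum(1 for answer in statements if not answer)
    return false_count >= threshold


# Hypothetical answers, for illustration only: 7 true, 6 false.
example_answers = [True] * 7 + [False] * 6
print(incumbent_party_loses(example_answers))
```

Because each true/false judgment is subjective, a single changed answer near the threshold flips the forecast, which is the sensitivity the text points out.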

“The Primary Model” [23] is a method that relies on presidential primaries as a predictor of the vote in the general election; it also makes use of the swing of the electoral pendulum. Like “The Keys to the White House”, “The Primary Model” predicted the victory of Donald Trump [24]. However, it predicted that Trump would win the popular vote, which turned out to be inaccurate.

In contrast to the first two methods, the following methods that we examined did not accurately predict the Trump win. “National newspaper endorsements” is a method that indicates possible media influence on voters rather than serving as an accurate estimator of the results [25]. In this cycle, the Democratic candidate Hillary Clinton gained the support of a long list of editorial boards, with a total of more than 500 endorsements [26], while her Republican rival Trump received fewer than 30. Moreover, most of the newspapers that endorsed Trump were less well regarded and had lower circulation (the most circulated, the Las Vegas Review-Journal, is ranked 57th in circulation).

The next method examined was the “Mock Presidential Election” by Western Illinois University [27]. This method was promoted as having accurately predicted the next president since 1975. The “Mock Presidential Election” conducted in November 2015 predicted a win for Sanders with more than 400 Electoral College votes [28]. However, Sanders was not on the Democratic ticket, nor did the Democratic nominee, Clinton, come close to this number of electors.

The last non-public-opinion-polling method we examined was “Market Predictions.” Prediction markets have been examined and found useful in recent years in sports and politics as well as in other areas [29]. Other researchers showed that poll-based forecasts outperform “Market Predictions” [30]. In any case, as of the night before election day of the 2016 US campaign, leading prediction-market companies were accepting investments with much higher success probabilities for Clinton than for Trump (for example, “Predictwise” with 88.9% for Clinton) [31]. A recent study raised doubts about using “Market Predictions,” suggesting the possibility of bias such as market manipulation or misunderstanding by participants, even when they invest their own money [32].

Following this discussion, this research examined the accuracy of several methods used in the 2016 US Presidential Elections. Despite the growing importance of non-public-opinion methods, as of 2016, public opinion polling remains the leading tool for predicting the results of elections worldwide. Pre-election polls themselves use various methods, including telephone interviews using a landline, mobile, or a combination of the two; internet surveys of a recruited group of people or of voluntary participants; face-to-face interviews; and mail surveys [33]. The research questions are: What is the accuracy of the different methods in predicting votes? Why do pre-election polls mispredict results? What improvements are needed in the pre-election polling methodology?

The current literature is split on this matter. Some acknowledge an existing problem and try to suggest new methods. Prosser and Mellon [34] describe the failure to predict the results of the recent elections in the UK and the US. So do Lauderdale et al. [35], who suggest a new method to improve accuracy following those elections: applying multilevel regression and post-stratification to pre-election polling. Others have joined them, such as Cowley and Kavanagh [36], Moon [37], and Toff [38]. The Report of the Inquiry into the 2015 British general election opinion polls [39] stated that the error is not new but suggested that the primary cause of the error in 2015 was unrepresentative samples.

Others do not acknowledge any problem, such as Jennings and Wlezien [9], who claim that the recent performance of polls has not been outside the ordinary. This claim is similar to Jennings’ recent claim [40]. Even a report by the American Association for Public Opinion Research (AAPOR) [41] stated that the problem lies only in some state-level polls and predicted that the errors “are perhaps unlikely to repeat.” Tourangeau [42] acknowledges the challenges but does not accept that 2016 was a “failure” (quotation marks as in the source). Panagopoulos et al. [43] examined the state-wide polls in the US 2016 elections and found no significant bias.

2. Materials and Methods

To examine the accuracy of the pre-election polls, the root-mean-square error (RMSE) was employed, as shown in Equation (1), to determine how accurate each poll was compared to the actual results [44]. It is the square root of the average of the squared errors, where each error is the difference between the estimate ($\hat{M}_{it}$) and the actual result for each candidate ($M_{it}$) [45].

$$\mathrm{RMSE}_t = \sqrt{\frac{1}{n}\sum_{i=1}^{n}\left(\hat{M}_{it} - M_{it}\right)^{2}} \qquad (1)$$

$\hat{M}_{it}$ is the vector of the predictions of the results of the n candidates in the elections of a specific poll in a specific time frame t. $M_{it}$ is the vector of the actual results of the elections for the n candidates. The advantage of using RMSE is that it has the same units as the quantity being estimated; for an unbiased estimator, the RMSE is the square root of the variance, known as the standard deviation. The lower the RMSE, the closer the poll is to the actual results.
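As a minimal sketch of the RMSE calculation described above, the Python snippet below compares one poll's predicted shares with the actual shares. The actual popular-vote figures (48.2%, 46.1%, 5.7%) are the ones reported in this article; the poll figures are invented for illustration and do not correspond to any poll in the sample.

```python
import math
from typing import Sequence


def rmse(predicted: Sequence[float], actual: Sequence[float]) -> float:
    """Root-mean-square error between predicted and actual vote shares,
    taken over the n candidates (same units as the inputs)."""
    if len(predicted) != len(actual) or len(predicted) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    squared_errors = [(p - a) ** 2 for p, a in zip(predicted, actual)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


# Actual 2016 popular vote as reported in the article: Clinton, Trump, others.
actual_shares = [48.2, 46.1, 5.7]
# Hypothetical poll (assumption, for illustration only).
hypothetical_poll = [46.0, 43.0, 11.0]

print(round(rmse(hypothetical_poll, actual_shares), 2))
```

Note that a poll can call the leader correctly and still have a sizable RMSE when it overstates the share of other candidates, as in this made-up example.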

The framework of the correlation is:

This research hypothesizes that demographic characteristics explain the mispredicted results. To examine that, this study relies on the following independent factors. For race and ethnicity, we used the percentage of the Hispanic population, the percentage of the White population, the percentage of the Black population [46], and the Diversity Index [47]. For religion, we used the percentages of Evangelical Protestant, Mainline Protestant, Historically Black Protestant, Catholic, Mormon, Other Christian, Total Christian, Non-Christian, and Unaffiliated, as well as the level of religiosity [48]. The dependent factors are the RMSE and a binary factor indicating whether the winner was predicted correctly (1 for true, 0 for false).

To examine the research questions, we focused on the correlation between the abovementioned dependent and independent variables by using two different methods. For the correlation between the RMSE and all the independent factors, we used the Pearson correlation coefficient [49]. A positive Pearson correlation means that the higher the value of the relevant factor, the higher the RMSE of the prediction, which in turn means a less accurate prediction. A negative Pearson correlation means that the higher the value of the relevant factor, the lower the RMSE of the prediction, which in turn means a more accurate prediction. Given that the true/false prediction of the winner is a dichotomous factor, we examined its correlation with the independent variables using the Biserial correlation coefficient [50]. A positive Biserial correlation coefficient means that the higher the value of the relevant factor, the higher the chances of a correct prediction of the winner.
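The two correlation measures can be sketched as follows. This is an illustration under stated assumptions, not the authors' computation: the state-level numbers are invented, and the second function computes the point-biserial coefficient (Pearson's r with a 0/1 variable), which is a related but not identical statistic to the biserial coefficient named in the text.

```python
import math
from typing import Sequence


def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equal-length series."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two series of equal length with at least 2 points")
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / math.sqrt(var_x * var_y)


def point_biserial_r(binary: Sequence[int], values: Sequence[float]) -> float:
    """Point-biserial correlation: Pearson's r where one variable is 0/1."""
    return pearson_r([float(b) for b in binary], values)


# Hypothetical data for five states (assumption, for illustration only).
diversity_index = [0.2, 0.3, 0.5, 0.6, 0.7]
state_rmse = [6.0, 5.0, 4.0, 3.5, 2.0]
winner_called = [0, 0, 1, 1, 1]  # 1 = winner predicted correctly

print(pearson_r(diversity_index, state_rmse))          # negative: more diverse, lower RMSE
print(point_biserial_r(winner_called, diversity_index))  # positive: more diverse, more correct calls
```

With real data, the sign is read exactly as in the paragraph above: a negative r against RMSE indicates better accuracy as the factor increases.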

3. Results

3.1. Popular Vote

Table 1 presents the predictions of leading pollsters in the last week before election day, compared to the final results of the popular vote: Clinton 48.2%, Trump 46.1%, others 5.7% [51] (for full details on these polls see Table A2 in the Appendix). All but two polls predicted a Clinton lead in the popular vote. More than 60% of these last-week polls were accurate, with an RMSE smaller than 5%. Most polls fell outside their declared margin of error.

According to this examination, the United Press International/CVoter International poll was the most accurate. It is worth noting that their method was based on internet interviews in which participants self-selected to participate.

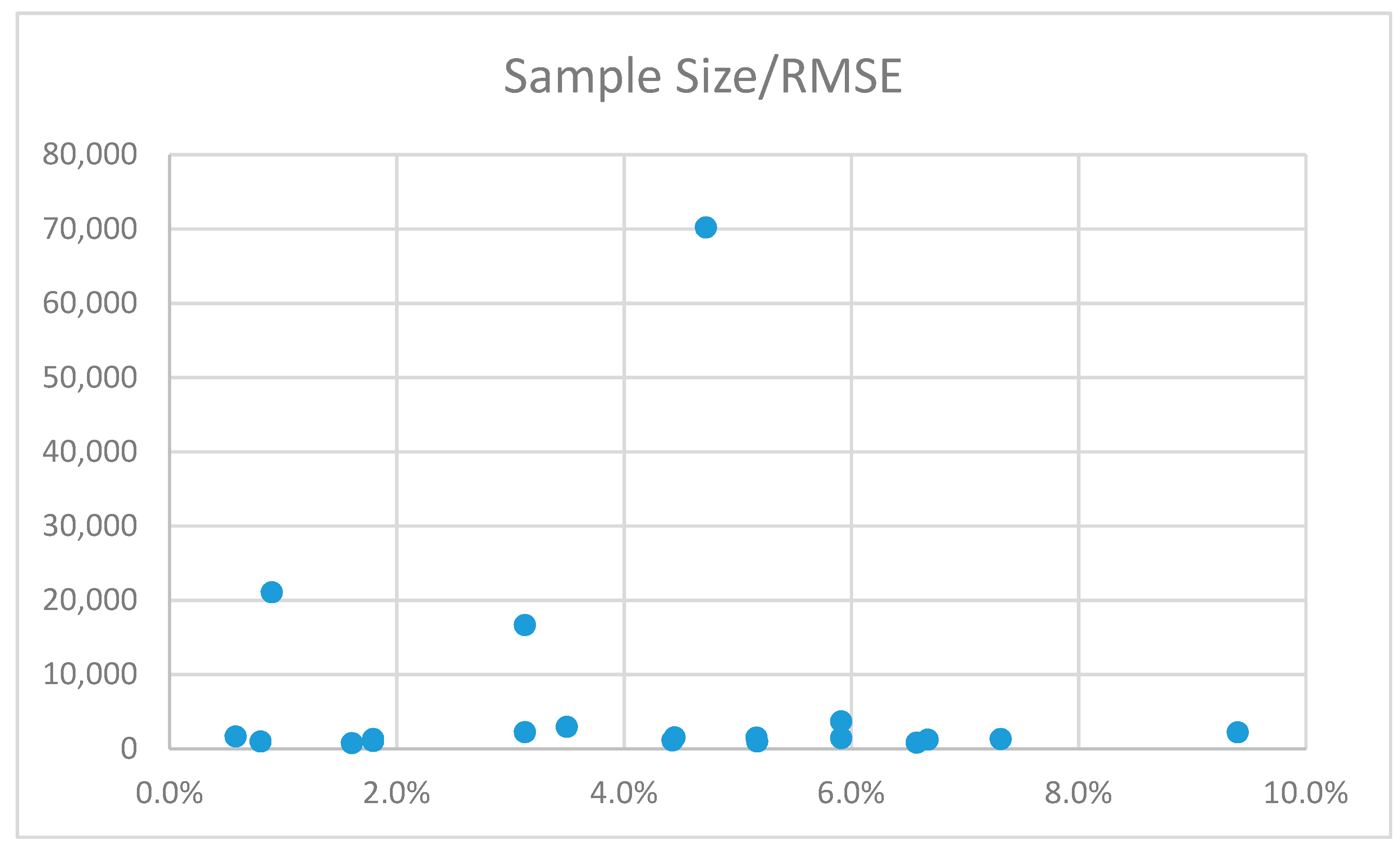

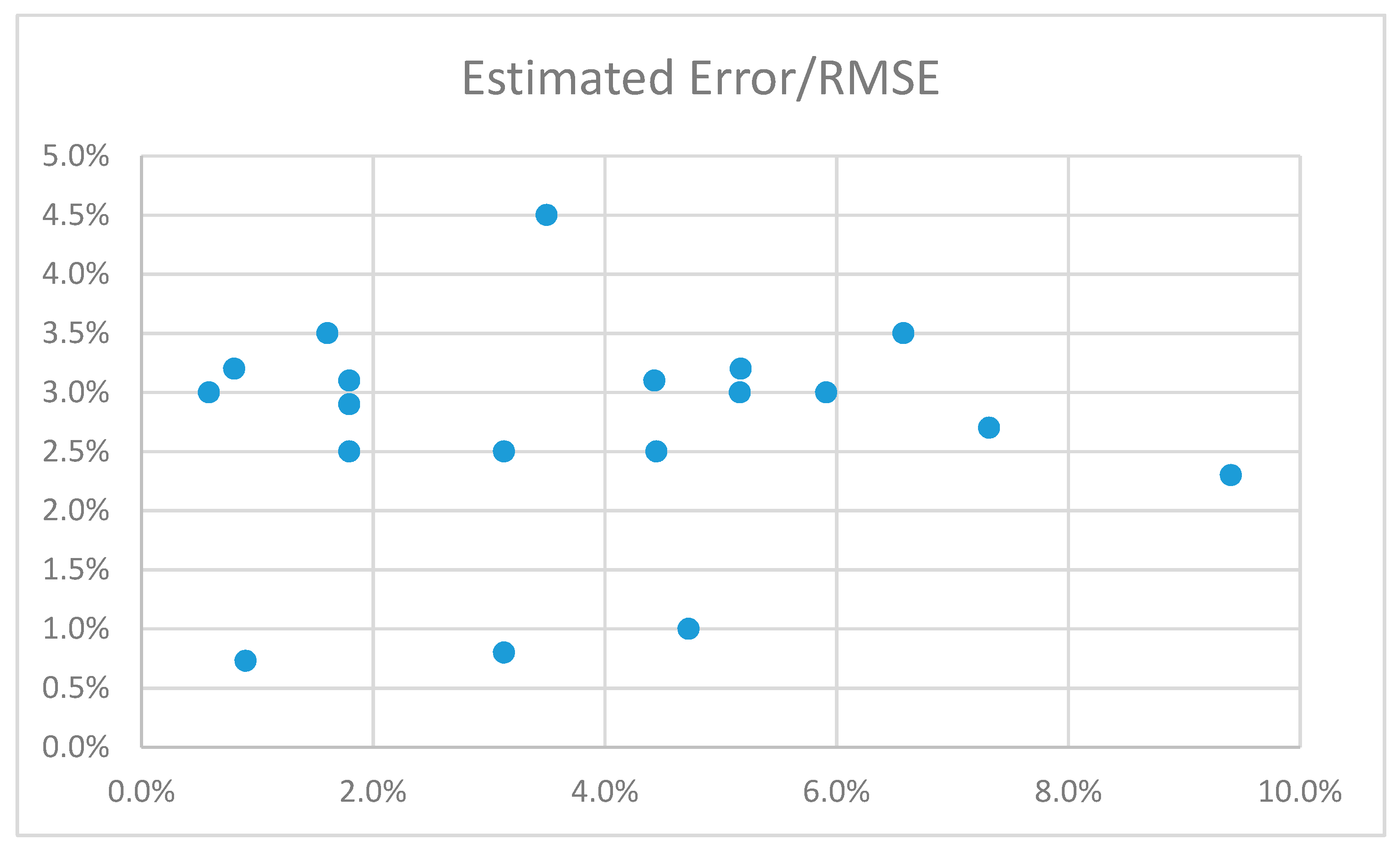

However, when the various methods used during the 2016 campaign were examined, little difference was found in their accuracy in predicting the results, as presented in Figure A1 and Figure A2 in the Appendix. Comparing internet interviews with phone interviews reveals that internet interviews had slightly better accuracy. Mixed methods were used in only two polls of our sample; thus, we were not able to conclude whether they performed better. Applying the same comparison to polls that weighted for social and demographic stratifications (such as race/ethnicity, age, gender, region, partisanship, education, annual income, and marital status) and polls that did not shows better accuracy for polls with no weighting.

3.2. Poll-Aggregators

Based on those last-week polls, all major poll-aggregators predicted a Clinton lead in the popular vote and had a relatively high level of accuracy, as shown in Table 2. Among them, RealClearPolitics was the most accurate. The accuracy of the top three aggregators was better than the average and the median (4.4%) of the last-week polls (as reported in Table 1). Only the FiveThirtyEight prediction had lower accuracy.

Based on these predictions, most poll aggregators formulated a projection of “the chance of winning.” All the aggregators mentioned above, like others, projected a Clinton win: Princeton Election Consortium, 99% Clinton; The Huffington Post, 98% Clinton; The New York Times, 85% Clinton; FiveThirtyEight, 71% Clinton. Some of these projections gave Trump a slight chance of winning, after analyzing the various paths to the presidency. As FiveThirtyEight’s editor-in-chief stated on the eve of Election Day [52]: “…there’s a wide range of outcomes, and most of them come up with Clinton.”

3.3. State Polls and the Electoral College

Instead of focusing on predicting the popular vote, predictions based on pre-election polls in the US presidential elections need to be based on predicting the winner of the electors in every state, as already mentioned by other scholars who examined the US 2016 elections [53]. However, examining the performance of pollsters at the state level during the 2016 presidential election also reveals a problem. There were many differences between pollsters, yet their conclusions were similar: a Clinton win. For example, the polls published by the New York Times (NYT) predicted the results accurately in 44 states and Washington DC [54]. This is considered a high level of accuracy. The problem, however, is that these polls mispredicted the winner in “only” six states, as shown in Table 3. Those six states have 108 electors altogether, all of which went to Trump. This number of electors made all the difference between losing and winning for Clinton and Trump, respectively, and made all the difference between predicting the outcome of the presidential elections accurately or incorrectly.

Based on such state polls, poll aggregators gave Clinton a lead in the Electoral College (as shown in Table 4), while the final results were Trump 304 to Clinton 227 (and seven write-ins/other). The accuracy of these predictions was very low, even lower than that of the least accurate poll of the popular vote.
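The weight of the six mispredicted states can be checked with simple arithmetic, using only figures quoted in this section (538 electors in total, a final tally of 304, 227, and 7, and 108 electors in the six states). The counterfactual below is a rough sketch that assumes, hypothetically, that those 108 electors move from Trump to Clinton and that nothing else changes.

```python
TOTAL_ELECTORS = 538
MAJORITY = TOTAL_ELECTORS // 2 + 1  # absolute majority of the Electoral College

# Final tally as reported in the article.
trump_final, clinton_final, other_final = 304, 227, 7
assert trump_final + clinton_final + other_final == TOTAL_ELECTORS

# Electors of the six states where the NYT polls called the wrong winner.
mispredicted_electors = 108


def counterfactual_tally(trump: int, clinton: int, moved: int) -> tuple:
    """Hypothetical tally if `moved` electors had gone to Clinton instead of Trump."""
    return trump - moved, clinton + moved


trump_cf, clinton_cf = counterfactual_tally(trump_final, clinton_final, mispredicted_electors)
print(MAJORITY, trump_cf, clinton_cf)
print("Clinton above majority in counterfactual:", clinton_cf >= MAJORITY)
```

Under this assumption the six states alone decide which candidate clears the majority threshold, which is why a 44-state hit rate still produced a wrong call.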

Therefore, a more thorough investigation of the state-level pre-election polls was required. The results, as shown in Table 5, suggest the following. Only one of the demographic characteristics by state was correlated with correctly predicting the winner of the state’s popular vote: the higher the Diversity Index in a state, the higher the chances of predicting the winner in that state (r = 0.39, r = 0.38, and r = 0.38). This suggests that the methods currently used by pollsters are better suited to diverse populations but are not able to predict the winner accurately in less diverse states.

This is also supported by the Pearson value of the correlation between the Diversity Index and the RMSE of all the three predictions examined. It shows that the higher the Diversity Index value is, the lower the RMSE is (r = −0.53, r = −0.50, and r = −0.50), meaning the higher the accuracy of the prediction. In other words, the higher the diversity is, the higher the accuracy of the prediction.

When the correlation between the other demographic factors and the RMSE of the NYT predictions was examined, the trends were similar to those of the Huff-Post predictions. However, both differed from the FiveThirtyEight predictions. In fact, for the FiveThirtyEight predictions, we found significant correlations with only two factors: Black population and Historically Black Protestant. In both cases, the RMSE of the FiveThirtyEight predictions was positively correlated with the factor (r = 0.37 and r = 0.29, respectively). This suggests that the higher the Black population in a state, the higher the RMSE of the FiveThirtyEight predictions. The same holds for Historically Black Protestant, which supports the same conclusion. It means that their models are less useful in predicting trends among Black communities or members of Historically Black Protestant churches.

Despite some differences between the NYT and the Huff-Post predictions, we can still conclude the following for both. Accurate predictions are more likely in states with a higher Diversity Index (r = −0.53 or r = −0.50) and a higher percentage of people religiously affiliated as Catholic (r = −0.40 or r = −0.48), “Other Christians” (r = −0.40 or r = −0.44), Non-Christians (r = −0.52 or r = −0.54), or Unaffiliated (r = −0.42 or r = −0.43). Accurate predictions are also more likely in states with a higher percentage of people living in an urban rather than a rural setting (r = −0.54 or r = −0.59).

Less accurate predictions are more likely in states with: higher religiosity (r = 0.46 or r = 0.49), higher percentage of Christians (r = 0.51 or r = 0.53)—especially higher percentages of Mainline Protestant (r = 0.38 or r = 0.42) and Evangelical Protestants (r = 0.45 or r = 0.51). It was also evident that it is more likely to have less accurate predictions in states with a higher percentage of White populations (r = 0.40 or r = 0.48).

These findings suggest that the NYT and the Huff-Post predictions were based on models that can cope with states that have highly urbanized populations and a more diverse society regarding race, ethnicity, and religion. However, those models proved less accurate in predicting the vote in states that were less urbanized, with a homogeneous population and a less diverse society regarding race, ethnicity, and religion. The current models are not able to predict the vote of White people who are Christian, mostly Protestant (Evangelical or Mainline), highly religious, and living in rural areas.

3.4. Explanations of the Errors

None of the public opinion polling methods in the 2016 US presidential elections were accurate to a level that was useful to predict the elected president. Polls that predicted the popular vote were relatively very accurate; however, this did not lead to accurately calling the winner of the elections. Moreover, polls which were supposed to predict the distribution of the Electoral College, hence leading to the winner, provided a wrong Clinton win prediction.

Identifying the sources of the failure will require the understanding that such failure exists and a thorough examination. Explanations may include for example sampling errors, last minute changes, or “The Shy Theory.”

Sampling errors happen when pollsters fail to achieve a representative sample [55]. The sources of such a failure vary and may include the following: the lack of an accurate phone number database; refusals to participate, for various reasons; and the assumption by pollsters that people who did not vote in the 2012 election would not vote in 2016, so that these people were excluded from the sample or given less weight. Some of these people turned out to be Trump supporters, and they did cast their ballots. There was also a financial aspect that led to fewer state-level polls being conducted. In addition, it is claimed that many people, usually younger people, have only a mobile phone, while many pollsters rely mainly on landline phones, which are used more by older people [56]. However, all of the sample polls we examined used a combination of landline and mobile phones.

Last-minute changes in voters’ choices, or last-minute decisions not to cast a ballot, went unnoticed by pollsters. These may have had a variety of causes: FBI director James Comey’s late decision to review additional Clinton emails might have swayed some voters without being noticed by the pollsters [57], and the Get Out the Vote [58] campaign may have been much more effective for one candidate than the other, resulting in more supporters of that candidate casting their ballots than initially expected.

“The Shy Theory” (or “lying” phenomenon) describes a situation in which respondents do not give candid answers, or an effect of the “Spiral of Silence” [59], in which voters become reluctant to express their preferences to pollsters. The proposed explanation is that Clinton supporters were more likely than Trump supporters to admit their preference to pollsters. Moreover, voters were said to be sheepish about admitting to a human pollster that they were backing Trump. There is even a claim that Trump supporters considered pollsters and the media to be biased against Trump and therefore lied about their preferred choice in order to make the polls wrong. Research conducted on this claim found “no evidence in support of the “Shy Trump Supporter” hypothesis” [60].

Despite the errors, the findings from the 2016 US presidential election indicate that pre-election polls are still an accurate tool for predicting the results. This is evident in the data shown above concerning the popular vote and most of the state-level pre-election polls. This fact alone should eliminate almost all of the explanations of the failure. For example, one cannot claim that significant sampling errors, significant last-minute changes, or a significant number of interviewees who lied to or misled pollsters occurred in only a few states while being insignificant in the majority of states.

Hence, we suggest that the way to explain the failure and to fix the problem should not be based on those claims: sampling errors (i.e., database or misrepresentation of non-voters in 2012), or last-minute changes, or the “Shy Theory.” Instead, it needs to be based on this research’s findings—that the sampling methods are not robust enough for the various social structures.

4. Discussion

The importance of popular vote predictions is well established in the literature [61]. Such predictions are most suitable in an electoral system that is not divided into constituencies, such as in Israel [62]. However, this research focused on the US elections, in which the president is decided by winning the Electoral College, with each state serving as a presidential constituency. The findings of this research question the use of the popular vote to project the winner of the presidential elections in the US. Given that this is the second time in five election cycles (2000 and 2016) that the candidate who won the Electoral College, and became president, lost the popular vote [63], this study argues that predicting the popular vote is becoming less relevant.

Instead, predictions of the US presidential elections need to be based on predicting the winner of the Electoral College in every state. However, this research showed that predicting the vote in the constituencies—the states—is less accurate than the popular vote in some cases. Therefore, an improvement is required in the method of predicting the vote at the state level, in order to secure the highest level of accuracy. Accurate prediction of the outcome of elections in all states independently will produce an accurate prediction of the elected president.

Furthermore, this study suggests that the primary failure in predicting the US 2016 presidential election was due to the non-adjustment of the methods to social structures and their social groups. Among the reasons for the failure to predict the winner in the 2016 campaign is that pollsters underestimated Trump’s support among voters who are White, Christian, mostly Protestant (Evangelical or Mainline), highly religious, and who live in rural areas.

The mispredicted 2016 US election is one of a series of failures of pre-election polls around the world in the past two years (in Poland, the UK, and Israel) in which polls mainly failed to predict a win by a more conservative party or candidate. Hence, it may be a sign of a change happening in democratic societies, regardless of the type of political system or electoral method. However, further research in those countries and others is required to confirm this claim. From our perspective in this paper, focusing on the US, the polling approach and methods were not yet able to reflect this change. For that, polls need to adapt their methodology. Otherwise, they risk becoming irrelevant in some of the election cycles ahead and in more countries.

Our conclusion that a problem exists and needs to be examined and solved is in line with the findings of other scholars such as Prosser and Mellon [34], Cowley and Kavanagh [36], Moon [37], and Toff [38]. All agree that current methods need to be re-examined to improve the accuracy of pre-election polls. The Report of the Inquiry into the 2015 British general election opinion polls [39] and Lauderdale et al. [35] mainly focus on unrepresentative samples, as this research suggests.

However, our findings contradict those of other scholars, mainly Jennings and Wlezien [9], Jennings [40], the AAPOR report [41], and Tourangeau [42]. Our findings also contradict those of Panagopoulos et al. [43], since we identify a significant bias in some of the state-level pre-election polls. In this study, we show that there is a problem in sampling that is not representative of all social groups. The main argument of these scholars is that the failures are “outliers,” confined to a few state-level cases, and are unlikely to repeat. However, we showed in this research that errors of this kind can sway the prediction toward the wrong outcome. This could happen in any country, regardless of its political structure or electoral system. To minimize such failures, improvement is needed.

Based on the outcome of this research, in order to improve pre-election polls, it is suggested to adopt “Cleavage Sampling.” It can better represent the expected turnout of specific social groups, such as some of Trump’s supporters. This is in line with the post-stratification methods suggested by Lauderdale et al. [35]. Polls are about minimizing the uncertainty in the forecast of election results by obtaining more statistically significant results. Sampling is concerned with selecting a subset of individuals from a statistical population in order to estimate characteristics of the whole population. To do so, the sample needs to reflect the population better, which would resolve some of the sampling errors.

Following the mispredicted election results of 2015–2016, the main contribution of this article is that higher accuracy will be achieved by better reflecting actual trends of turnout and choice among the social cleavages in the states: by region, race, religion, and ethnicity. In this study, we found that polls that weighted for social and demographic stratifications (such as race/ethnicity, age, gender, region, partisanship, education, annual income, and marital status) gave better accuracy compared to polls with no weighting. The argument is that societies in Western countries are becoming more politically divided, which emphasizes once again the differences between social cleavages in democratic states. The findings suggest that the current models proved less able to accurately predict the vote in states that are less urbanized, with a homogeneous population and a less diverse society regarding race, ethnicity, and religion. The voters whom the current models fail to predict can be characterized as White people who are Christian, mostly Protestant (Evangelical or Mainline), and highly religious. As such, it is suggested that, among the various sampling methods (e.g., simple random sampling, cluster sampling, systematic sampling, multiple sampling), stratified sampling, here called “Cleavage Sampling,” is expected to be the most suitable for predicting the results. “Cleavage Sampling” should produce more accurate estimates of the population than a simple random sample of the whole population [64]. It works better when the population is split into reasonably homogeneous groups. Pollsters need to focus on specific states, and every state needs to be sampled according to social cleavages: by region (urban vs. rural), ethnicity, race, religion, and religiosity.
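To illustrate why sampling by strata can matter, the sketch below contrasts a pooled (unweighted) estimate with a generic stratified estimate that weights each stratum by its population share. It is a textbook stratified estimator under invented numbers, not an implementation of the proposed “Cleavage Sampling”; the strata, shares, sample sizes, and support levels are all hypothetical.

```python
from typing import Dict, List


def pooled_estimate(strata: List[Dict[str, float]]) -> float:
    """Support for a candidate when all respondents are simply pooled."""
    total_n = sum(s["sample_size"] for s in strata)
    return sum(s["sample_size"] * s["sample_support"] for s in strata) / total_n


def stratified_estimate(strata: List[Dict[str, float]]) -> float:
    """Support estimate that weights each stratum by its population share."""
    total_share = sum(s["population_share"] for s in strata)
    if abs(total_share - 1.0) > 1e-9:
        raise ValueError("population shares must sum to 1")
    return sum(s["population_share"] * s["sample_support"] for s in strata)


# Hypothetical state: rural voters are 40% of the population but only 20% of the sample.
strata = [
    {"name": "urban", "population_share": 0.6, "sample_size": 800, "sample_support": 0.40},
    {"name": "rural", "population_share": 0.4, "sample_size": 200, "sample_support": 0.65},
]

print(round(pooled_estimate(strata), 3))      # understates support concentrated in rural areas
print(round(stratified_estimate(strata), 3))  # corrects for the under-sampled stratum
```

In this made-up case the pooled figure is five points lower than the stratified one, purely because one homogeneous group is under-represented in the sample.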

Other suggestions also need to be examined, such as the use of multiple methods and a “Cross-section” distribution of the undecided.

Pollsters have already concluded that, to understand trends among voters better, polls need to be conducted repeatedly over the campaign period. Moreover, others have already shown that the use of multiple methods improves the accuracy of the prediction [29,65,66,67]. This is a potential solution to last-minute changes. In this study, we found no significant difference between the different methods, including the more traditional phone interviews and internet interviews. We were not able to examine the use of multiple methods due to the small sample (few surveys used multiple methods); nevertheless, we suggest that it be examined. The multiple-methods approach includes the more traditional methods of collecting data (landline and mobile phone surveys) along with internet polling. It also includes the use of social media and search trends, such as Twitter and Google searches [68]. Recent research tested a new method of collecting data in the field by placing a mobile polling station in various places within a constituency [64]. Although this was a one-time experiment, the method should be tested further, as it proved to be much more accurate than traditional phone interviews or internet surveys because it reached groups of people who are not usually represented in traditional samples.

In addition, the distribution of the undecided is one of the significant problems that pollsters face. Many pollsters assume a proportional distribution of the undecided, and many polls therefore fall short of predicting the results because the undecided are distributed wrongly. In such a changing world, it is suggested to examine the “Cross-section” distribution of the undecided. In this approach, more of the undecided are allocated to the challenger if there is an incumbent [69], or to the challenger of a candidate from the incumbent’s party. This is in line with the basic approach of “The Keys to the White House” and “The Primary Model,” both of which turned out to be more accurate in predicting the results of the 2016 US presidential election. It is a potential solution to the “Spiral of Silence.” Accordingly, in a two-candidate election, the challenger receives the higher share of the undecided, similar to the percentage of the leading candidate, and the leading candidate receives the lower share, similar to the percentage of the challenger.
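One possible reading of the two allocation rules can be sketched as follows. The interpretation of the “Cross-section” rule (swapping the proportional shares so that the trailing challenger receives the leader's proportion of the undecided) is an assumption drawn from the description above, and the poll numbers are hypothetical.

```python
from typing import Tuple


def allocate_proportional(leader: float, challenger: float, undecided: float) -> Tuple[float, float]:
    """Split the undecided in proportion to each candidate's decided support."""
    decided = leader + challenger
    if decided <= 0:
        raise ValueError("decided support must be positive")
    return (leader + undecided * leader / decided,
            challenger + undecided * challenger / decided)


def allocate_cross_section(leader: float, challenger: float, undecided: float) -> Tuple[float, float]:
    """Swap the proportions: the challenger gets the leader's proportional
    share of the undecided, and the leader gets the challenger's."""
    decided = leader + challenger
    if decided <= 0:
        raise ValueError("decided support must be positive")
    return (leader + undecided * challenger / decided,
            challenger + undecided * leader / decided)


# Hypothetical two-candidate poll: leader 48%, challenger 42%, undecided 10%.
print(allocate_proportional(48.0, 42.0, 10.0))
print(allocate_cross_section(48.0, 42.0, 10.0))
```

Both rules allocate the full undecided share; the cross-section variant simply narrows the gap between the two candidates instead of widening it.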