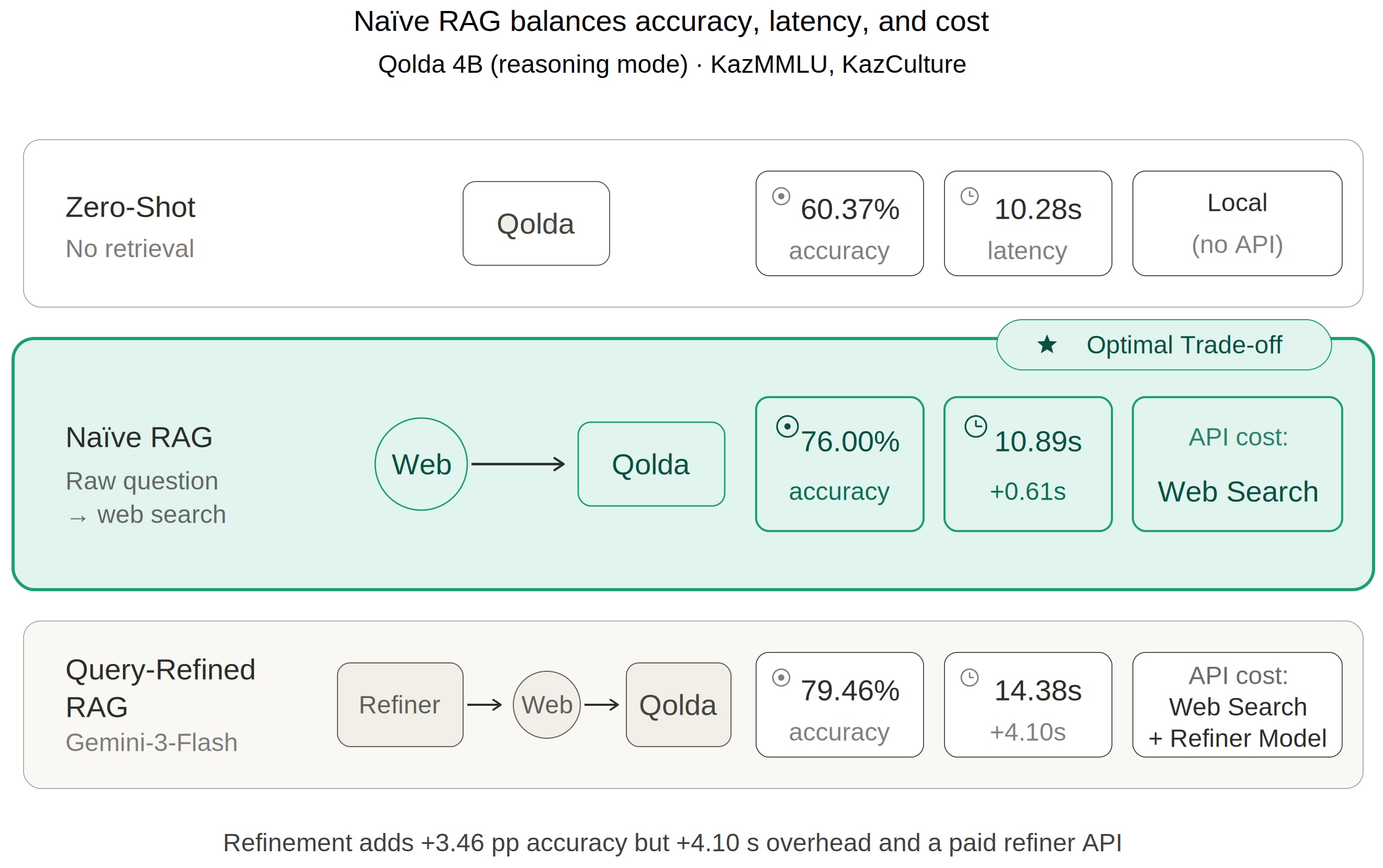

Web Search-Enhanced Small Language Models: A Case Study for a Kazakh-Centric Language Model

Abstract

Share and Cite

Maxutov, A.; Medeu, N.; Varol, H.A. Web Search-Enhanced Small Language Models: A Case Study for a Kazakh-Centric Language Model. Mach. Learn. Knowl. Extr. 2026, 8, 128. https://doi.org/10.3390/make8050128

Maxutov A, Medeu N, Varol HA. Web Search-Enhanced Small Language Models: A Case Study for a Kazakh-Centric Language Model. Machine Learning and Knowledge Extraction. 2026; 8(5):128. https://doi.org/10.3390/make8050128

Chicago/Turabian Style: Maxutov, Akylbek, Nūrali Medeu, and Huseyin Atakan Varol. 2026. "Web Search-Enhanced Small Language Models: A Case Study for a Kazakh-Centric Language Model." Machine Learning and Knowledge Extraction 8, no. 5: 128. https://doi.org/10.3390/make8050128

APA Style: Maxutov, A., Medeu, N., & Varol, H. A. (2026). Web Search-Enhanced Small Language Models: A Case Study for a Kazakh-Centric Language Model. Machine Learning and Knowledge Extraction, 8(5), 128. https://doi.org/10.3390/make8050128