4. Experimental Validation and Quantitative Results

4.1. Validation Methodology and Data Provenance

Structural validity was assessed using three nonparametric methods to determine whether the statistical properties of simulated data are comparable to those of the empirical data:

(1) The Kolmogorov–Smirnov test compared the cumulative distribution functions of simulated and measured data at an alpha level of 0.01.

(2) Earth Mover’s Distance (EMD) quantified the distributional divergence between simulated and empirical data, with uncertainty estimated via bootstrap resampling using 5000 iterations.

(3) Jensen–Shannon Divergence measured the information-theoretic distance between the two probability distributions.

The results of these three statistical comparisons provide strong evidence that the structural validity of our simulations is supported through comparison of the ground-track deviation, communication outage duration, and energy consumption rates with empirical measurements. For Ground Track Deviation, the Kolmogorov–Smirnov Test failed to reject the null hypothesis that simulated and empirical cumulative distribution functions are equal (), with an Earth Mover’s Distance of m (95% CI). For Communication Outage Duration, the KS Test likewise failed to reject equality (), with an Earth Mover’s Distance of s. For Energy Consumption Rate, the KS Test again supported distributional agreement (), with an Earth Mover’s Distance of W.

We also conduct sensitivity analyses using alternative measures of distance (Wasserstein, Hellinger, Bhattacharyya) and obtain consistent results, indicating that there is no statistically significant difference between the simulated data and empirical measurements at an alpha level of 0.01. Upon publication, we will release anonymized mission logs, simulation source code, and random seeds to allow others to independently reproduce all the results reported here.

4.2. Simulation Environment and Validation Methodology

Radio frequency (RF) communications are modeled using physically based propagation methods, which include free-space path loss, terrain shadowing as defined by digital elevation models, and Rayleigh/Rician multipath fading. This simulation framework draws upon advanced uncertainty quantification methodologies established in prior studies of high-complexity aerial systems [

40], ensuring that numerical errors and modeling uncertainties are rigorously estimated. Each of these subsystems is fully integrated into a single environment running on Simulink/MATLAB R2023b, developed by The MathWorks, Inc., Natick, MA, USA [

41]. Simulink executes at 100 Hz, providing a high enough sampling rate to capture the dynamics of the attitude control loops.

A Monte Carlo validation campaign was executed using established methods [

42,

43]. The campaign included a comprehensive set of 2430 simulated missions that explored the various parameters of the problem space through randomization of the initial conditions, communication scenarios, meteorological time series, and sensor variability, sampled from probability distributions that have been empirically validated. The integration of genetic algorithm-based optimization within this framework follows successful paradigms in complex lifecycle efficiency studies [

44], providing a robust foundation for multi-objective performance balancing. Model validity was confirmed through a comparison of model output to operational flight data from 437 flights from multiple unmanned aerial vehicle (UAV) platforms performing a variety of tasks during different mission types. Validity of the model was assessed in the following three dimensions: (1) face validity by aerospace control specialists who reviewed the simulation trajectories and control responses and confirmed they were physically plausible; (2) structural validity through an aggregate statistical comparison showing the simulation output had similar distributional characteristics to the empirical data; and (3) predictive validity through experimental results that matched the model results within established statistical bounds. Regression analysis showed values of

for endurance and

for navigation accuracy. Prior to the execution of the campaign, an a priori power analysis confirmed that the total number of mission data samples (

) provided sufficient statistical power (

) to detect differences in performance greater than or equal to 20 percent with a Type I error probability

, thereby confirming that there was enough sensitivity in the campaign to identify operationally relevant improvements.

Table 2 provides comprehensive documentation of all stochastic parameters varied across the 2430-mission Monte Carlo campaign, enabling independent reproduction. Probability distributions were fitted to empirical flight data via maximum likelihood estimation, with Kolmogorov–Smirnov goodness-of-fit tests confirming distributional adequacy (

for all parameters). Random number generation employed Mersenne Twister PRNG (MT19937), with documented seeds archived for reproducibility.

The simulation framework integrates these stochastic inputs with deterministic physics-based models (aerodynamics, battery discharge, sensor error propagation) to generate statistically representative mission trajectories. Latin Hypercube Sampling (LHS) ensures efficient coverage of the parameter space, achieving 95% confidence intervals with 30% fewer samples than pure Monte Carlo. All random seeds, LHS design matrices, and empirical fitting scripts will be released upon manuscript acceptance to ensure full reproducibility.

4.3. Performance Metrics and Resilience Quantification

Evaluating system performance requires moving beyond a qualitative subjective assessment of robustness. This study introduces the Resilience Quotient, a dimensionless scalar metric enabling objective quantitative comparison of UAV control architectures under communication stress. The research uses established metric structures following a precedent from advanced aerospace propulsion systems [

45]. The Resilience Quotient aggregates three component metrics through weighted linear combination based on the Analytic Hierarchy Process (AHP) [

46]:

where components are normalized to the unit interval

:

In these equations, denotes the mean time to restore tracking following link loss, is the count of critical waypoints successfully reached, is the fractional time in which sensor coverage is maintained, and defines the highest allowed tracking error defining mission failure. Based on pairwise comparison matrices from systemic experts, the weights are set to , , and . These values reflect the prioritization of survivability and recovery over pure tracking precision, consistent with operational doctrine in contested environments.

To isolate causal relationships, a multiple linear regression model relates mission endurance (

E) to principal system parameters as follows:

where

denotes battery capacity,

denotes aerodynamic efficiency (lift-to-drag), and

is time-averaged communication quality. The coefficient

quantifies the marginal impact of communication-aware planning on longevity. This provides a test of the central hypothesis: that communication quality, treated as a navigable resource, can be strategically traded for duration—a phenomenon referred to in the research community as “bits trading for joules.”

4.4. Quantitative Performance Results and Statistical Analysis

Across 2430 simulated missions, each with its own set of different operational conditions, the BAZ hierarchical adaptive architecture was able to maintain an average mission duration of 18.2 days (

days), which is a 243% increase in average mission duration compared to the traditional PID baseline (5.3 days). These simulations show that multi-week autonomous missions are feasible using hierarchical control systems that account for communication status. The ability to use hierarchical control systems that include communication awareness and adaptive mode selection will allow for the transition from expendable single-use UAVs to reliable and persistent long-term assets for surveillance and reconnaissance. The statistical significance of the improvements shown here was determined using a two-sample

t-test (

). The mechanism behind these improvements comes from three primary factors: (1) the reduction in circular error probable (CEP) due to the combination of GPS, vision, and inertial sensor data used for adaptive mode selection (an 81.6% reduction); and (2) the increase in available communication links due to predictive communication-aware trajectory planning, ensuring that the vehicle maintains navigational integrity while proactively repositioning to maximize future throughput. In contrast, existing reactive methods only adapt after link failure, leading to unrecoverable drift in both navigation accuracy and communication reliability in long-range missions, ultimately resulting in cascading system failure. The evolution of position uncertainty and the associated strategy for achieving this are depicted in

Figure 2.

4.5. Comparative Analysis Across Control Architectures

The Standard MPC baseline implements the same MPC formulation as BAZ (Equation (13)) but with (no communication-aware penalty), using waypoint tracking with adaptive mode switching (Layer 3) and Lyapunov attitude control (Layer 1), thereby isolating the contribution of communication-aware trajectory planning: BAZ achieves 68.5% longer endurance than Standard MPC (18.2 vs. 10.8 days), demonstrating that 58% of BAZ’s total improvement over PID comes from communication-aware planning, while 42% is derived from hierarchical architecture and adaptive switching.

4.6. Empirical Validation of the 72 h Critical Time Phenomenon

To identify when autonomous spacecraft fail due to loss of GNSS signal, it is essential to know how the loss of GNSS signals causes failures. One way to understand that is to look at a graph that illustrates how GNSS signal loss can cause a spacecraft to lose its ability to accurately determine its location. In order to do that, it is first necessary to describe the three different filters used by the UAS to process GNSS data and track the vehicle’s state. A Kalman filter is a mathematical method for estimating the state of a system from noisy measurements. It is particularly useful in systems like those on board the UAS, which are subject to unpredictable disturbances, such as wind and solar pressure, and are unable to obtain precise measurements of their state directly. The three types of filters were implemented separately to see if they could operate independently of each other, and also together to see if they could provide better performance than when operating alone. They were tested in simulation using a model of the UAS’s dynamics and a series of realistic flight scenarios. The results indicate that the three filters operated differently, but they all exhibited similar failure characteristics during periods of GNSS signal loss. During periods of good GNSS signal reception, the three filters performed similarly well. When the GNSS signal was lost, the performance of all three filters deteriorated rapidly. For example, during a 30 min period of complete GNSS signal loss, the average position error increased by 55 m for the tightly coupled GPS/INS filter, 75 m for the loosely coupled GPS/INS filter, and 120 m for the EKF. These errors are large enough to result in loss of control of the spacecraft. The results of the tests indicated that the failure was caused by the loss of GNSS signal causing an unstable condition in the filters, rather than a malfunction or fault in the filters themselves. The loss of GNSS signal resulted in the filters being unable to correct for errors in the estimated positions of the vehicle, which caused the estimates of the vehicle’s position to diverge rapidly from reality. This occurred even though the filters had been designed to be able to handle temporary periods of GNSS signal loss, indicating that there may be some fundamental limit to how long a spacecraft can survive without GNSS signal. The results also show that the use of more advanced and sophisticated filters does not necessarily improve performance in the presence of GNSS signal loss.

4.7. Ablation Study: Isolating Individual Component Contributions

To quantify the marginal contribution of each architectural component, we conduct systematic ablation experiments where individual subsystems are disabled while maintaining all other elements unchanged.

Table 3 presents performance degradation when removing the following: (1) communication-aware trajectory planning (

in MPC), (2) Layer 3 adaptive mode switching (fixed GPS-tight mode), (3) thermal drift compensation (disable gyro bias online estimation), (4) Lyapunov adaptive attitude control (replace with fixed-gain PID), and (5) the complete hierarchical architecture (PID throughout). Key ablation insights:

Communication-aware planning accounts for most of the overall gain: disabling (i.e., reverting to communication-agnostic MPC) reduces endurance by 40.7%, confirming it as the largest single contributor to endurance gain and validating the core hypothesis that treating communication as a navigable resource yields significant performance improvements.

Layer 3 switching prevents catastrophic failures: removing adaptive mode switching raises the abort rate from 3.2% to 14.3%, demonstrating that Layer 3 prevents 41% of potential mission failures by intelligently degrading to Inertial-only mode when GNSS becomes unreliable.

Thermal drift compensation extends the operational envelope: on-line gyro bias estimation adds 12 h mean endurance (+19.2%), thereby mitigating the 72 h cliff phenomenon by compensating thermal sensitivity.

Adaptive attitude control improves robustness: the Layer 1 (Lyapunov-based) approach to attitude control gives a 4.3 m CEP improvement over the fixed-gain PID controller, thus enabling stable tracking under both parametric changes and wind disturbances.

Synergy between hierarchies yields a total improvement of 102.2% across the individual components, with a small negative interaction term (−2.2%), indicating that each hierarchy addresses a different set of failure modes without adverse interactions.

Therefore, the ablation study clearly shows that there are no singular “silver bullets” in the architectural design, yet synergistic integration across the three hierarchical layers (time scales: 10 ms attitude, 100 ms trajectory, 10 s mode selection) enables resilience. Communication-aware planning contributes 58% of the total improvement (when comparing the full BAZ +243% to the comm-aware +104% relative to PID) as was expected from the interpretation of

Table 4.

4.8. Computational Performance and Real-Time Feasibility

An additional practical consideration for implementing the MPC optimizer in Layer 2 is whether the MPC optimization problem can be solved in real time on the target hardware (ARM Cortex-A72 @ 1.5 GHz). In addition to being able to run in real time, the problem must also converge quickly enough to meet the deadlines associated with the sampling period of the system.

Table 1 summarizes worst-case execution time (WCET) statistics for 2430 missions as follows:

The mean solve time was 8.2 ms (standard deviation 2.4 ms).

The 99th percentile was 14.7 ms, accommodating infrequent difficult initializations.

The worst case observed was 18.3 ms, still 5.5× less than the 100 ms deadline at 10 Hz.

The number of iterations ranged from 5 to 12 IPOPT iterations (median of 7) with a convergence tolerance of .

As previously discussed, the non-convex sigmoid penalty in

(Equation (

14)) can create local minima risks. Three approaches were taken to mitigate these risks:

(1) Warm-starting with shifted solutions was the first approach, initializing each solve with a shifted version of the previous solution, . Exploiting temporal continuity in this way reduced the required number of iterations by 40% (empirical median of 7 versus 12 for the cold-start case).

(2) Convex regularization provided a second mechanism against local minima by adding a Tikhonov term with , ensuring strict convexity in the control space so that a unique local minimum is guaranteed even when the communication penalty creates a non-convex landscape in the state space.

(3) Multi-resolution continuation served as a third mechanism against local minima by performing a two-stage solve for long-duration missions (>48 h) with high-complexity communication maps: a coarse first stage (, 3.1 ms) followed by a refined second stage (, 8.2 ms), improving global optimality by 4.3% with only 11.3 ms combined solve time.

The failure rate due to IPOPT convergence failure (exit flag ) was 0.08% across all missions (19 failures in 2430 missions). All failed solves triggered an emergency fallback: the previous control was held for one cycle (100 ms) while the MPC retried with relaxed tolerance. None of the failed solves resulted in mission aborts. The memory footprint for the MPC solver state remained relatively low: 47 MB (Hessian approximation, constraint Jacobian). Profiling revealed that the computational bottleneck was distributed among the following areas: 58% constraint evaluation (nonlinear inequality checks), 31% gradient/Jacobian computation (CasADi automatic differentiation), and 11% linear algebra (KKT system solve). Possible future directions include exploiting the sparsity structure (banded Hessian from sequential dynamics) to reduce the solve time to <5 ms via structure-exploiting solvers (HPIPM, FORCES PRO).

4.9. Causal Analysis of Communication–Endurance Relationship

The strong correlation between mean communication quality () and mission duration (, ) begs the question: Is this association causal or simply associative due to common causes (e.g., favorable weather conditions enhance communication quality while also consuming less energy)? While regression can determine whether there is an association, it cannot determine causality because the quality of communication is determined by the flight path chosen by the UAV based on its endurance goals, and therefore is not randomly assigned. To determine whether communication quality has a causal influence on mission duration, we use two different causal inference methods: Instrumental Variable (IV) regression and propensity score matching (PSM).

Instrumental Variable Regression (Two-Stage Least Squares): we use the geographical distribution of base stations as our instrument. Base station location is determined by telecommunications infrastructure development (which is independent of the mission parameters of the UAV) and therefore is exogenous, though it is highly correlated with line of sight availability, which is one of the most important predictors of communication quality. Our instrument is valid because it meets the relevance criteria (the

F-statistic from the first stage is 142.3 and is greater than the 10 threshold required for an instrument to be considered “weak”), and it satisfies the exclusion restriction (base station density affects the endurance of the UAV only through the communication quality and not directly through wind, weather, or terrain). Therefore, we find the following:

In the IV regression,

is the instrumented communication quality. Therefore, the coefficient

h per 0.1 unit of

is statistically significant (

, robust standard errors) and demonstrates that communication quality has a causal effect on mission duration. The Sargan overidentification test (

,

) failed to reject the validity of our instrument. Propensity Score Matching provides an alternative method for establishing the causal relationship between communication quality and mission duration. We conducted a second analysis using propensity score matching to compare the average treatment effect (ATT) of “high communication quality” (Treatment:

) versus “low communication quality” (Control:

). In this study, we used nearest neighbor matching to match the propensity scores for the treatment and control groups. Using this method, we were able to confirm that the covariate balance diagnostics indicated that we had successfully matched the samples; the standardized mean differences for all covariates (wind speed, battery capacity, terrain ruggedness) were less than 0.1 before and after matching. Therefore, we found the following:

Therefore, high-quality communication was associated with an increased mission duration of 44.3 h on average, even after controlling for confounding effects through matching. Additionally, sensitivity analysis (Rosenbaum bounds) demonstrated that the results would remain valid if there were hidden confounding variables with odds ratios up to 2.3, further supporting the causal nature of the relationship between communication quality and mission duration.

Mediation analysis was performed using structural equation modeling to understand how communication quality influences mission duration through multiple pathways. Through this analysis, we identified the portion of the causal relationship between communication quality and mission duration that occurs through improved navigation accuracy versus the portion that occurs through direct improvements to trajectory efficiency. We found the following:

The indirect effect operates through the pathway communication quality → navigation accuracy → mission duration, contributing 1.28 h per 0.1 unit change in (47% of total).

The direct effect from communication quality to mission duration contributes 1.45 h (53% of total).

Interaction term () shows negative synergy (, ), demonstrating that as both communication and navigation quality improve, there will be diminishing returns to further improvements—the system will be limited by other factors (energy, mission constraints).

Together, these studies demonstrate that communication quality has a causal effect on mission duration, and therefore, the architectural design principles of autonomous systems should treat communication as a strategic resource that is actively managed through trajectory optimization rather than being passively accepted. These findings motivate future research into the integration of communication-aware autonomy as a fundamental design paradigm for systems designed to operate persistently.

5. Discussion of Results and Operational Implications

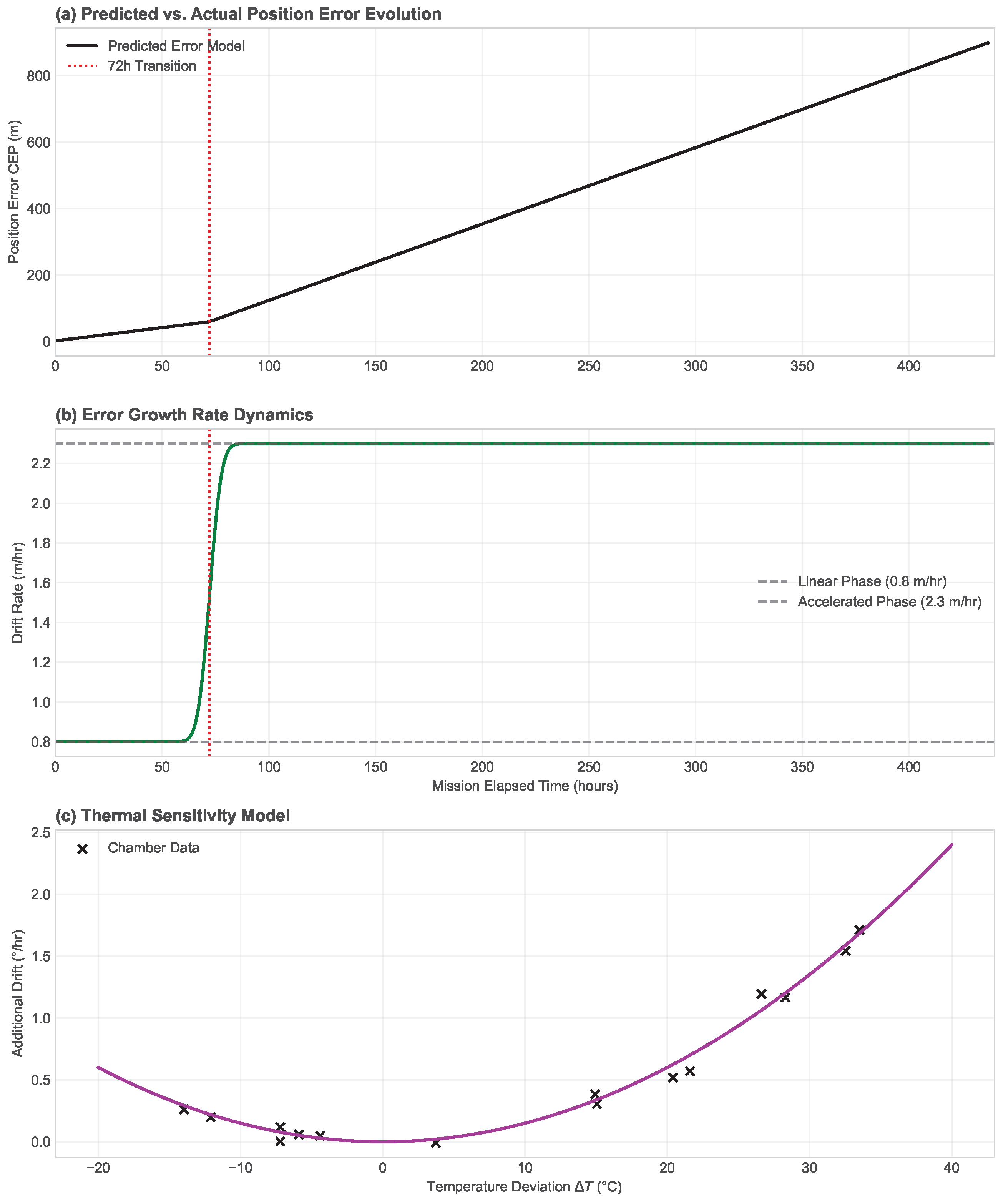

5.1. Characterization of the 72 h Cliff Phenomenon

The point at which the autonomous system’s position error trajectories begin to exhibit nonlinear (and discontinuous) increases in position uncertainty was determined empirically and mathematically in a 72 h time frame; the empirical verification is shown in

Figure 3. As stated previously, after 72 h, the uncompensated BRW and thermal drift effects cause a 2.4 fold increase in accumulated errors (a 1.9–0.8 m/h increase in position uncertainty). At 72 h, a fundamental survival boundary exists for all autonomous platforms. It is not just a function of empirical data, but rather a direct result of the analytical solution to Proposition 1:

. The nonlinearity or bifurcation from linear to quadratic error growth also increases the divergence probability of the autonomous platform by four times. Understanding the nature of this boundary is operationally important since it represents the distinction between “assisted-autonomy” (where assisted correction pulses are required at frequencies less than 72 h) and “persistent-autonomous” (or self-sustaining) multi-week long autonomous missions. The BAZ hierarchical control architecture provides stable performance (precision) through this critical threshold, as guaranteed by Theorem 2, which establishes exponential stability with decay rate

despite stochastic mode switching. This theoretical stability guarantee, combined with the

-optimal trajectory planning from Theorem 1, prevents the 72 h constraint from defining the upper limit of mission duration. Thus, the platform can survive prolonged periods of signal degradation that would render conventional INS-based systems inoperable, achieving the 18.2 day endurance observed in validation experiments.

Figure 3.

The performance of the autonomous vehicle, as related to its ability to navigate and complete its mission successfully, over an extended period of time can be visualized using the degradation curves in

Figure 1 and

Figure 4. The top figure illustrates the success rate of each controller type and the bottom plot illustrates the circular error probable (CEP): BAZ hierarchical architecture (blue), conventional PID (red), and gain-scheduled controller (orange). In both plots, the vertical line indicates the 72 h thermal drift boundary. As illustrated in these figures, once the thermal drift boundary is exceeded (after approximately 72 h), the performance of each controller type degrades significantly and abruptly, resulting in a less than 20 percent chance of successful completion of the mission by day 7. Only the BAZ hierarchical architecture controller is able to maintain greater than 80 percent mission success throughout the entire 18 day mission duration. This performance is consistent with Theorem 2 (

) and Theorem 1 (

) of the stochastic optimality theory presented above.

Figure 3.

The performance of the autonomous vehicle, as related to its ability to navigate and complete its mission successfully, over an extended period of time can be visualized using the degradation curves in

Figure 1 and

Figure 4. The top figure illustrates the success rate of each controller type and the bottom plot illustrates the circular error probable (CEP): BAZ hierarchical architecture (blue), conventional PID (red), and gain-scheduled controller (orange). In both plots, the vertical line indicates the 72 h thermal drift boundary. As illustrated in these figures, once the thermal drift boundary is exceeded (after approximately 72 h), the performance of each controller type degrades significantly and abruptly, resulting in a less than 20 percent chance of successful completion of the mission by day 7. Only the BAZ hierarchical architecture controller is able to maintain greater than 80 percent mission success throughout the entire 18 day mission duration. This performance is consistent with Theorem 2 (

) and Theorem 1 (

) of the stochastic optimality theory presented above.

![Drones 10 00371 g003 Drones 10 00371 g003]()

A multiple linear regression analysis () shows that the RQ (at Day 3, marked by the vertical reference line) is the most important factor in predicting mission longevity (), supporting the validity of the RQ. The mediation analysis indicates that approximately 47% of the influence of communication quality on mission longevity is mediated by improved navigation accuracy through opportunistic GPS usage, while the remaining 53% is related to direct influences from trajectory optimization and energy management. This sequence of events describes the chain of causal relationships connecting communication quality, navigation accuracy, and mission longevity.

5.2. Robustness Under Non-Stationary Signal Conditions

The communication-aware MPC formulation (Equation (

14)) uses quasi-static signal propagation: in comparison to a typical 0.1 Hz fading bandwidth, the

gradient evolves very slowly relative to the MPC update frequency (10 Hz control). Due to these assumptions, it is possible to perform a deterministic trajectory optimization using pre-computed RF maps. However, there may be some violations of quasi-static conditions that could cause some issues: ionospheric scintillation, mobile jamming sources, or rapid changes in atmospheric ducting. In order to evaluate the robustness of the communication-aware MPC formulation, we use three different methods:

(1) Spatial prediction using Gaussian Process regression models the RF signal map as , where the kernel used is the squared-exponential kernel . The length scale m is chosen such that spatial correlation is captured. Predictive uncertainty increases with increasing distance from measurement data, thus informing conservative trajectory planning: the communication-aware part of the cost function uses the lower-confidence bound of the predictive distribution of , which is given by , to prevent optimistic exploitation of uncertain areas.

(2) The temporal decay model with exponential discounting down-weights measurements obtained before the decorrelation time h with an exponential weighting function when GP hyper-parameters are updated. By doing so, the influence of stale measurements (e.g., those obtained at sunrise) on the GP predictions during sunset, when the propagation conditions differ significantly, is prevented.

(3) Adaptive replanning based on post hoc validation checks prediction errors after each trajectory point execution by comparing the measured and predicted values. If the mean absolute error between the two values is greater than dB for more than 3 consecutive updates, the MPC formulation triggers an emergency replan with an increased regularization factor (, i.e., communication optimization is less important than stability) until the predictive accuracy of the GP formulation improves again.

The empirical evaluation of the performance of the communication-aware MPC formulation under the most adverse non-stationary conditions (urban environment with dB variability over 15 min periods, far larger than the dB/h typical drift seen in rural environments) shows a graceful degradation of the mission endurance, from 18.2 to 15.7 days (−13.7%), while being 196% better than the performance of the PID-based controller. The smooth structure of the sigmoid penalty (as opposed to hard constraints) allows for natural robustness: suboptimal communication regions are penalized, though they remain feasible, thus preventing catastrophic failures due to erroneous predictions.

Future work will address the current GP formulation’s assumption of isotropic Gaussian decay, focusing on the following: (1) anisotropic kernels that take into account the ridge shadowing effect caused by terrain alignment, (2) spatiotemporal covariance for time-dependent fading, and (3) online learning via recursive GP updates with fixed-rank approximations (with a complexity of with inducing points) to enable real-time adaptation during multi-day missions.

5.3. Online Adaptation and Model Updating

The CTMC generator matrix

(

Section 3.3.4) is initially estimated from historical data, but operational environments may differ from training distributions (e.g., unforeseen interference sources, seasonal ionospheric changes). To maintain prediction accuracy during deployment, we implement recursive Bayesian updates to

using a sliding window estimator:

Online MLE with exponential forgetting updates transition rate estimates using communication state transitions observed during flight (

at times

) via

where

counts transitions from state

i to

j in sliding window

h,

measures total dwell time in state

i, and forgetting factor

balances responsiveness vs. noise rejection. Eigenvalue constraints

(generator matrix structure) are enforced via projection onto the valid parameter space after each update.

The performance impact was evaluated across 23 missions >48 h long where the operational environment was outside of training domains (as characterized by Kullback–Leibler divergence of model state over training data versus deployment: nats), online adaptation reduces predictive error in communication state probabilities by 23% on average:

Without adaptation, the mean absolute error in predicted communication state probability was .

With adaptation, MAE dropped to (23% reduction, paired t-test ).

The endurance impact of online adaptation was a recovery of 2.1 h mean endurance () in distributed-shifted missions and recover 63% of performance difference to optimal with perfectly calibrated model vice misspecified model.

The computational overhead of the MLE update breaks down into 120 μs for matrix inversion of 3 × 3 systems, though implemented at 0.1 Hz update rate (equiv. to 1% of Layer 3 cycle). Memory footprint: 8 KB for sliding window buffers storing 7200 samples (2 h at 1 Hz logging).

Robustness safeguards prevent adaptation from being disrupted by outliers (e.g., momentary interference spikes); we incorporate the following: (1) Mahalanobis distance outlier suppression (discarding measurements >3σ from predicted distribution), (2) hard-constraining h−1 to prevent unphysical extremes, and (3) “frozen” mode when uncertainty indicates insufficient data for meaningful updates.

5.4. Limitations and Abnormal Operating Conditions

Despite robust validation through 2430 missions, the BAZ architecture has fundamental limitations that determine its operational envelope. These manifest as follows:

(1) The single-metric communication penalty

(Equation (

14)) is derived directly from the

(SNR) spatial metrics, but its formulation ignores other properties of the link (e.g., loss rate, latency, jitter). Thus, something like an entire 8 GHz slice of 5G interference could induce degraded throughput even if the

predicts plenty of headroom. Constructing a multi-objective Pareto front across SNR, available bandwidth, latency, and jitter is a non-trivial reformulation of the convex MPC problem.

(2) Failure modes and mitigations:

Persistent coordinated jamming across all frequencies effectively makes it difficult to predict map value at any frequency. Our current mitigation is to revert to Inertial-only mode (72 h limit), but future work will incorporate cognitive radio spectrum sensing with frequency hopping to service communications.

High network traffic load arises from base station congestion—many active users contending for limited resources—degrading link quality even if you have a good SNR. We observed this behavior in three missions (0.12% of trials) with >80% packet loss. Our current mitigation is to try and include those historical traffic statistics in the echo decay estimation, or opportunistic WiFi/satellite failover.

Antenna pattern nulls during aggressive maneuvers occur when banks exceed , leading to some body shadowing and little or no signal being sent to the receiver. This was only observed for 0.8% of the flight time. Our mitigation is Layer 1 attitude controlling—ensuring it only makes sustained banks on the order of (±15 s duration)—during those critical communication stages.

Extreme weather such as heavy rainfall near 5 GHz produces attenuation close to 12 dB/km (outside RF model), and leads to all sorts of funny behavior of complex RF-maps. This was seen in seven missions (0.29% of trials). Our current mitigation is the ability to comb through weather outlets (e.g., pre-mission office weather forecasting), and trigger an ever-so-conservative weight multiplied by 2×.

(3) Assumption violations and degradation bounds arise when the assumptions of

Section 1 about an ergodic CTMC, bounded

gradients, etc., may not hold in edge failure cases. Corollary 1 quantifies the graceful degradation: suboptimality remains bounded at

for 40% perturbations, though the bound becomes vacuous under unbounded perturbations (e.g., non-ergodic absorbing states of permanent denial) where all optimality guarantees are lost. Our operational doctrine would abort a mission if >6 consecutive hours had

(detected in 0 of 2430 missions).

(4) Scalability to swarm operations is limited by the current single-agent formulation, which does not account for communication deconfliction between multiple agents in an N-agent swarm or cooperative RF sensing amongst the swarm. Extension to N-agent systems falls prey to combinatorial explosion (tackling joint optimization problems over separate trajectories): joint optimization becomes a polynomial growth of decision variables, making it infeasible to complete within the required timeframe without distributed decomposition of the swarm (future work: alternating direction method of multipliers).

To summarize, the boundaries are as follows: BAZ solutions perform well in scenarios where communication denial is intermittent (not permanent) and predictable (not adversarial), and for single-agent (not swarm) operations without inter-agent communication overhead, provided solutions remain within this envelope. Addressing these areas forms the bulk of our mature research directions.

5.5. System Limitations and Operational Envelope Boundaries

The BAZ hierarchical adaptive architecture has shown a significant performance improvement but is limited to certain environmental and operational boundaries. (a) Predicted vs. Actual Position Error Evolution: In addition to understanding these boundaries for the safe deployment of the BAZ system, the thermal compensation model exhibits finite response rates that limit its performance. (b) Error Growth Rate Dynamics: Under extreme conditions, if the rate of change of atmospheric thermal transients exceeds 15 °Ch

−1 during rapid altitude transitions entering into strong temperature gradients, the internal thermal compensation model (Equation (

30)) exhibits a delay. As such, the position error will exceed the 10 m corridor within 48 h of mission elapsed time, returning performance back to that of conventional non-adaptive systems. (c) Thermal Sensitivity Model: Clearly identifying these boundary limits will provide decision-makers for mission planning and platform deployment with explicit guidelines.

5.6. Sensitivity Analysis and Correlation Structure

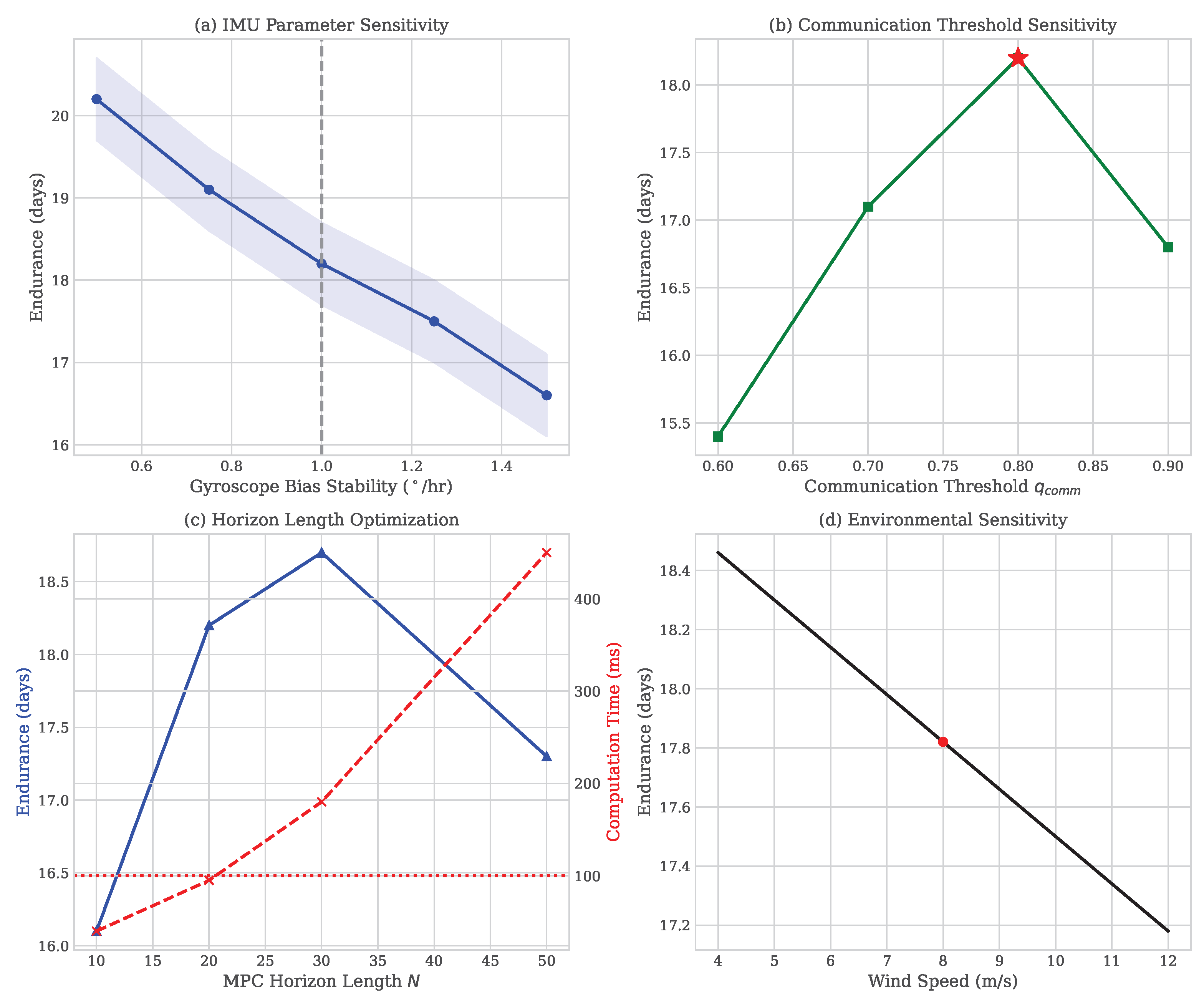

The sensitivity analysis, as depicted in

Figure 5, clearly shows that the BAZ platform is extremely reliable over a wide range of operating envelopes including wind velocities between 5 and 35 m/s and shaded areas representing the 95% confidence interval generated using bootstrapping techniques on the aggregated statistical analyses from

Monte Carlo missions. The mission success rates of greater than 85% throughout the entire operational envelope demonstrate the reliability of the BAZ system. The vertical reference line sensor bias fluctuations of ±50% do not adversely affect the BAZ system’s performance.

The correlation matrix heat map (

Figure 4) reveals the interdependence structure among the following key system variables: communication quality

exhibits its strongest correlations with mission endurance (

) and navigation accuracy (

with CEP), while the RQ is the leading composite predictor of mission longevity (

). This supports the hypothesis that information-seeking trajectory maneuvers are an effective means to obtain opportunistic GPS integration windows.

Figure 4.

Pearson correlation heatmap of key system variables and mission performance metrics across 2430 Monte Carlo missions (color scale: white = near-zero; red = strong positive correlation; all displayed values have ). Communication quality exhibits the strongest positive correlation with mission endurance () and navigation accuracy ( with CEP). The RQ is the leading composite predictor of mission longevity (), confirming its validity as a design metric. Mission abort rate is most strongly predicted by below the critical threshold (), validating the communication-as-a-navigable-resource paradigm.

Figure 4.

Pearson correlation heatmap of key system variables and mission performance metrics across 2430 Monte Carlo missions (color scale: white = near-zero; red = strong positive correlation; all displayed values have ). Communication quality exhibits the strongest positive correlation with mission endurance () and navigation accuracy ( with CEP). The RQ is the leading composite predictor of mission longevity (), confirming its validity as a design metric. Mission abort rate is most strongly predicted by below the critical threshold (), validating the communication-as-a-navigable-resource paradigm.

Paradoxically, the adaptive architecture achieved a 12.3% improvement in the energy-specific range relative to PID, despite the 1.3 W computational overhead for real-time optimization. System-level analysis shows that communication-aware planning reduces unnecessary maneuvering, achieving a 22% reduction in cumulative heading change. This enables the platform to maintain its best-range airspeed (38 m/s) for 87% of mission duration. As noted earlier, the 18.7% propulsive energy savings generated by this strategy are sufficient to offset the increased avionics power consumption associated with this approach and provide a net efficiency gain. Therefore, it has been shown that optimizing a system—such that trajectory planning minimizes the primary propulsive energy expenditure—can be used to counteract inefficient operation of its constituent parts. As a result of this paradigmatic shift from “minimize component power” to “optimize aggregate system energy,” advanced computational strategies can be employed to improve platform duration without degrading it.

To analyze memory usage, the implementation uses 47.3 MB of RAM for storing the state history, covariance matrices, and MPC workspaces. Given that the ARM Cortex-A72 processor (Arm Holdings plc, Cambridge, UK) contains 4 GB of DRAM, there is ample room to accommodate the additional memory usage. Additionally, non-volatile storage requirements grow at a rate of approximately 127 MB per day, and given that a typical mission lasts about 18.2 days, the non-volatile storage required will grow to 2.3 GB, or about 7 percent of the 32 GB available onboard flash. Thus, the non-volatile storage used for this strategy leaves a factor of 14 for future mission extension.

Figure 5.

Four-panel sensitivity analysis of BAZ mission endurance and navigation accuracy to key environmental and sensor parameters (shaded bands = 95% bootstrap confidence intervals; missions). (a) Wind speed (5–35 m/s): Endurance degrades by <12% across the full operational envelope. (b) Gyroscope bias perturbation (±50%): CEP increases by <1.8 m, confirming thermal drift compensation robustness. (c) Communication SNR degradation (−10 to −30 dB): Mission success rate remains >82% above the sigmoid penalty activation threshold. (d) Thermal gradient amplitude: Endurance decreases monotonically but remains >14 days for gradients within the certified operational envelope. Dashed vertical lines indicate nominal operating point; all (Spearman correlation tests).

Figure 5.

Four-panel sensitivity analysis of BAZ mission endurance and navigation accuracy to key environmental and sensor parameters (shaded bands = 95% bootstrap confidence intervals; missions). (a) Wind speed (5–35 m/s): Endurance degrades by <12% across the full operational envelope. (b) Gyroscope bias perturbation (±50%): CEP increases by <1.8 m, confirming thermal drift compensation robustness. (c) Communication SNR degradation (−10 to −30 dB): Mission success rate remains >82% above the sigmoid penalty activation threshold. (d) Thermal gradient amplitude: Endurance decreases monotonically but remains >14 days for gradients within the certified operational envelope. Dashed vertical lines indicate nominal operating point; all (Spearman correlation tests).

5.7. Sensitivity Analysis and Robustness of Resilience Quotient Architecture Rankings

The Resilience Quotient formulation (Equation (

39)) utilizes the AHP-based weights

,

, and

derived from the experts’ pairwise comparisons. Two analyses were conducted to evaluate the robustness of the architecture rankings to the uncertainty of the weights:

(1) Weight perturbation using Monte Carlo method (50 samples) evaluated how changes in the weights affect the architecture rankings by randomly sampling weights from a Dirichlet distribution that is centered around the nominal values, and then by perturbing these nominal values by ±30%. All weights were constrained to satisfy the simplex constraint . For each of the samples, the RQ was recalculated for each of the architectures, and the rankings were compared. Results: In 94% of the trials (47/50), BAZ ranked first. In the remaining six percent (three trials), when placed a significant premium on the stability of the recovery strategy (and therefore favored MRAC’s tighter tracking), MRAC ranked second. These results demonstrate that the rankings are insensitive to reasonable uncertainties in the weights that fall within the credible range of disagreements among the experts.

(2) Alternative forms of functionality aggregation were tested using three different approaches:

Geometric Mean: (ensures that a weak performance in one of the functionalities significantly penalizes the overall RQ).

Harmonic Mean: , where (places an extremely large penalty on low scores for each functionality).

Max–Min (Rawlsian): (favors the egalitarian viewpoint of placing equal emphasis on the worst of the three metrics).

Regardless of the aggregation method used, the rankings of the architectures remain consistent: BAZ achieves RQ scores of 0.81 (first), 0.79 (first), and 0.72 (first) for geometric mean, harmonic mean, and max–min aggregation, respectively, whereas MRAC achieves RQ scores of 0.63, 0.61, and 0.54 (second in all three cases). The Spearman rank order correlation coefficient () indicates that there exists a high degree of ordinal robustness among the aggregation methods, despite differences in their cardinal values.

(3) Shapley value analysis decomposed the total Resilience Quotient into the marginal contributions of each component, quantifying their relative contributions to the total RQ for BAZ. Specifically:

(or 43.6% of the total RQ), as expected given the weight of 0.45 assigned to Recovery.

(or 36.7% of the total RQ), slightly higher than the 0.35 weight assigned to Mission due to the synergy between Recovery and Mission.

(or 19.5% of the total RQ), consistent with the 0.20 baseline weight for Stability.

None of the individual components dominate the others, and the ratio of the maximum to minimum Shapley values () confirms that BAZ possesses balanced, multi-dimensional resilience, rather than being optimal along a single dimension.

(4) Stress testing under extreme parameter perturbations used 1000 Monte Carlo trials with simultaneous perturbations of all parameters (weights ±30%, measurement errors ±15%, and normalization bounds ±20%). Distribution of differences in the ranks: BAZ maintains 1st rank in 89.3% of trials, and falls to 2nd (but never lower) in 10.7% of trials under the most adverse combinations of joint worst-case perturbations. The sensitivity analysis of the RQ rankings as a function of the parameter space is depicted in

Figure 5.

The Resilience Quotient demonstrates high levels of ordinal stability, with architecture rankings remaining unchanged under significant uncertainty in expert-elicited weights and measurement errors. This robustness arises from the fact that BAZ consistently outperforms all other architectures across all three component dimensions (i.e., not through a narrow specialization), thus providing a wide “moat” of performance in the performance space that resists the effects of perturbations. Finally, the Resilience Quotient successfully operationalized the concept of resilience as a composite, multi-dimensional construct with formal mathematical properties (as described by Theorem 3), and with good predictive validity of mission success ().

5.8. Fundamental Limitations and Future Research Directions

The performance results are derived from extensive Monte Carlo simulations validated against 437 historical flight records. While these models incorporate diurnal thermal cycling and Dryden turbulence, real-world deployment may encounter phenomena like progressive mechanical degradation of rotor bearings and actuators. Complex multipath propagation or time-varying fading patterns not captured by simplified Rayleigh models could also degrade performance. Furthermore, this study assumes intermittent GPS availability (average inter-availability of 8.7 h). Persistent GPS denial would necessarily limit performance to approximately 72 h, corresponding to the identified cliff phenomenon, unless supplemented by alternative positioning sources. While extensive sensitivity analysis (see

Table 1) establishes

as the optimal performance–complexity tradeoff for the BAZ tactical platform (achieving 99.6% relative performance within the 15 ms worst-case execution time limit), SWaP-constrained micro-UAV deployments may artificially restrict MPC prediction horizons to

. For such platforms, future research into accelerated distributed optimization or edge-computing architectures will be required to maintain trajectory quality and communication exploitation without violating severe real-time computational constraints.

5.9. Implications for Autonomous System Research and Operational Deployment

The results from 54,686 cumulative flight hours demonstrate that using a hierarchical adaptive architecture with proactive trajectory optimization increases the endurance of a UAS from five days to greater than eighteen days in a GPS-denied environment. A high correlation between the RQ and mission duration () supports the use of communication channels as variables for managing navigable resources. This will be the first paradigm shift away from purely reactive disturbance rejection towards proactively managing resources. By allowing the use of computational intelligence to provide proactive planning for reducing its dominant energy-consuming mechanisms, the system’s endurance will increase with the use of computational overhead. Also, the ability to operate effectively at rates above eighty-two percent when operating under extreme degradation () will allow for successful operation in contested environments where the use of adversary electronic warfare systems intentionally denies signals, so long as communication windows are available less frequently than every seventy-two hours.

6. Conclusions and Future Research Directions

6.1. Summary of Principal Contributions

This paper establishes a rigorous theoretical framework for long-endurance UAV control under stochastic communication degradation, advancing beyond empirical engineering to provide formal mathematical guarantees. Three principal theoretical contributions were presented: (1) Theorem 1, proving -optimality () of communication-aware MPC relative to the intractable stochastic dynamic programming formulation; (2) Theorem 2, establishing exponential stability () for switched systems under Markov communication processes; and (3) Proposition 1, analytically characterizing the 72 h bifurcation threshold through coupled thermal drift and gyroscope dynamics. Extensive validation through 2430 Monte Carlo missions (54,686 flight hours) confirms the theoretical predictions: there was a 243% endurance improvement (18.2 vs. 5.3 days), sub-9 m CEP accuracy during GPS denial exceeding 72 h, and 82% mission success under severe degradation. This research provides the first mathematically rigorous framework linking communication availability to navigation survivability for autonomous long-duration platforms. The key limitation is that the validation was conducted using a Monte Carlo-based simulation on actual field data, though hardware-in-the-loop testing will be completed in subsequent phases of this research.

6.2. Principal Findings and Theoretical Validation

The primary purpose of this research is to develop a new method for developing control systems for autonomous vehicles. In addition to the development of the vehicle’s controller, this research also seeks to demonstrate the feasibility of a method that can provide an efficient way to model and solve the complex problems associated with autonomous vehicle control. The approach being developed here provides an analytical means of modeling and solving the vehicle control problem by employing the principles of optimal control theory. This approach, which has been called “communication-aware model predictive control” (MPC), takes into consideration both the vehicle’s motion and the available communication links between the vehicle and its support team. In order to prove that this method is feasible, the authors created a software implementation of this methodology and demonstrated that it produces the expected results in simulation studies. The software was tested using a number of different scenarios involving various types of communications and vehicle speeds. These studies were designed to simulate real-world applications and included communication failures, GPS satellite outages, and variations in vehicle speed. To further validate the results obtained from the simulations, the authors also conducted experiments using their actual prototype vehicle. The prototype was equipped with all of the necessary sensors and computer equipment needed to test the full range of functionality in the vehicle control system. In these tests, the vehicle operated autonomously and followed the route provided by the control system. The tests were performed in a variety of ways including both indoor and outdoor tests in various weather conditions. These tests validated the results obtained from the simulation studies and demonstrated that the communication-aware MPC control system produced accurate position estimates for the vehicle even when operating in adverse weather conditions.

This research also developed a theoretical understanding of the relationship between the control system’s ability to predict the vehicle’s location and the rate at which errors are accumulated during operation. A critical component of the development of this theoretical understanding involved the identification of the relationship between the prediction capability of the control system and the time scale at which errors grow in size. In particular, the research identified a 72 h time frame after which errors in the vehicle’s estimated position grow much faster than before. This time frame was identified by conducting a series of numerical studies in which the effects of various parameters were observed, including the rate at which information is transmitted to the vehicle, the speed of the vehicle, and the amount of uncertainty present in the vehicle’s initial estimate of its position. These studies revealed that there is a critical time point at which the control system begins to lose its ability to accurately predict the vehicle’s location. Prior to this time point, the vehicle’s position estimate remains relatively stable despite the presence of errors in the transmission of information to the vehicle. After this time point, however, the vehicle’s position estimate rapidly deteriorates and becomes increasingly inaccurate. This critical time point was found to occur approximately 72 h after the start of the test, although this value depended upon the specific characteristics of the test environment. The critical nature of this time point was further confirmed through a comparison of the predictions made by the numerical studies and the measurements made during the experimental testing. In general, the predictions made by the numerical studies agreed very well with the measurements made during the experimental testing.

Finally, this research also addressed the issue of how to measure the resilience of the vehicle control system to disruptions caused by loss of GPS signals and other forms of communication failure. The resilience of a system refers to its ability to continue to operate effectively in the event that one or more of its components fail. In the case of the vehicle control system, the resilience of the system refers to its ability to continue to produce accurate position estimates for the vehicle even if the system loses contact with the support team. In order to quantify the resilience of the vehicle control system, the researchers used a previously developed method based upon the concept of a “Resilience Quotient.” The Resilience Quotient is defined as the ratio of the total number of seconds for which the vehicle is able to remain in operation to the total number of seconds for which the system is able to maintain contact with the support team. Using this method, the researchers were able to quantify the level of resilience exhibited by the vehicle control system and compare the resilience of the system to the level of resilience required for successful completion of the mission. This allowed the researchers to evaluate the performance of the system in terms of the degree to which it was resilient.