A Learning Analytics Conceptual Framework for Augmented Reality-Supported Educational Case Studies

Abstract

1. Introduction

1.1. Augmented Reality in Education

1.2. Integration of Learning Analytics in Education

- Micro-level: data gathered by recording a specific module or learning activity in class.

- Intermediate level: data gathered by recording a complete training program or unit.

- Macro-level: data gathered by recording a set of educational programs or modules.
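The three levels differ mainly in how far the recorded data are aggregated. The following is a minimal Python sketch that assumes a hypothetical interaction log in which every record already carries program, unit and activity labels. The `Event` fields, the `total_time_by_level` helper and the sample values are invented for illustration. It sums interaction time per activity (micro), per unit (intermediate) and per program across the recorded set (macro):

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    """One interaction record from a hypothetical AR or e-learning log."""
    student: str
    program: str    # educational program (grouping key at the macro level)
    unit: str       # training unit within a program (intermediate level)
    activity: str   # single learning activity within a unit (micro level)
    seconds: float  # time spent on the interaction


# Grouping keys for each level: coarser levels drop the finer labels.
LEVEL_KEYS = {
    "micro": lambda e: (e.program, e.unit, e.activity),
    "intermediate": lambda e: (e.program, e.unit),
    "macro": lambda e: (e.program,),
}


def total_time_by_level(events, level):
    """Sum interaction time per group at the requested level."""
    if level not in LEVEL_KEYS:
        raise ValueError(f"unknown level: {level!r}")
    key = LEVEL_KEYS[level]
    totals = defaultdict(float)
    for event in events:
        totals[key(event)] += event.seconds
    return dict(totals)


# Invented sample data for illustration only.
log = [
    Event("s1", "STEM", "optics", "lens-lab", 120.0),
    Event("s2", "STEM", "optics", "lens-lab", 90.0),
    Event("s1", "STEM", "optics", "prism-lab", 60.0),
    Event("s1", "STEM", "mechanics", "lever-lab", 45.0),
    Event("s3", "Humanities", "history", "map-tour", 30.0),
]

for level in ("micro", "intermediate", "macro"):
    print(level, total_time_by_level(log, level))
```

The same raw records serve all three levels here; only the grouping key changes, which is one simple way to keep analyses at different levels consistent with each other.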

- Descriptive analytics, centering on what has already happened and discovering patterns by aggregating students’ data.

- Predictive analytics, focusing on what is going to happen and attempting to predict trends in students’ future progress.

- Regulatory analytics, identifying which needs are important and which factors affect student learning performance, and proposing recommendations for future activities.

- Management analytics, focusing on the financial cost of the operational/technical equipment, attempting to predict the future use of present resources, and supporting decisions that ensure the quality of educational units and/or modules.
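To make the difference between the first two types concrete, the sketch below uses invented weekly quiz scores. `describe` aggregates the class mean for each week (descriptive: what has happened), while `predict_next` fits a least-squares line to one student's scores and extrapolates one week ahead (predictive: what is likely to happen). Both function names are hypothetical, and the linear trend is a deliberately simple stand-in chosen for illustration, not a method the framework prescribes; practical predictive analytics would normally rely on richer models and validated features.

```python
from statistics import mean

# Invented weekly quiz scores (0-100), one list per student.
scores = {
    "s1": [55, 60, 68, 72],
    "s2": [80, 78, 82, 85],
    "s3": [40, 50, 52, 61],
}


def describe(scores_by_student):
    """Descriptive: the class mean for each week (what has happened)."""
    weeks = zip(*scores_by_student.values())  # regroup scores by week
    return [mean(week) for week in weeks]


def predict_next(series):
    """Predictive (illustrative): least-squares linear trend, one week ahead.

    Requires at least two scores, otherwise the slope is undefined.
    """
    xs = range(len(series))
    x_bar, y_bar = mean(xs), mean(series)
    slope = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, series)) / sum(
        (x - x_bar) ** 2 for x in xs
    )
    intercept = y_bar - slope * x_bar
    return intercept + slope * len(series)


print("weekly class means:", [round(m, 1) for m in describe(scores)])
for student, series in scores.items():
    print(student, "predicted next score:", round(predict_next(series), 1))
```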

2. Rationale and Purpose

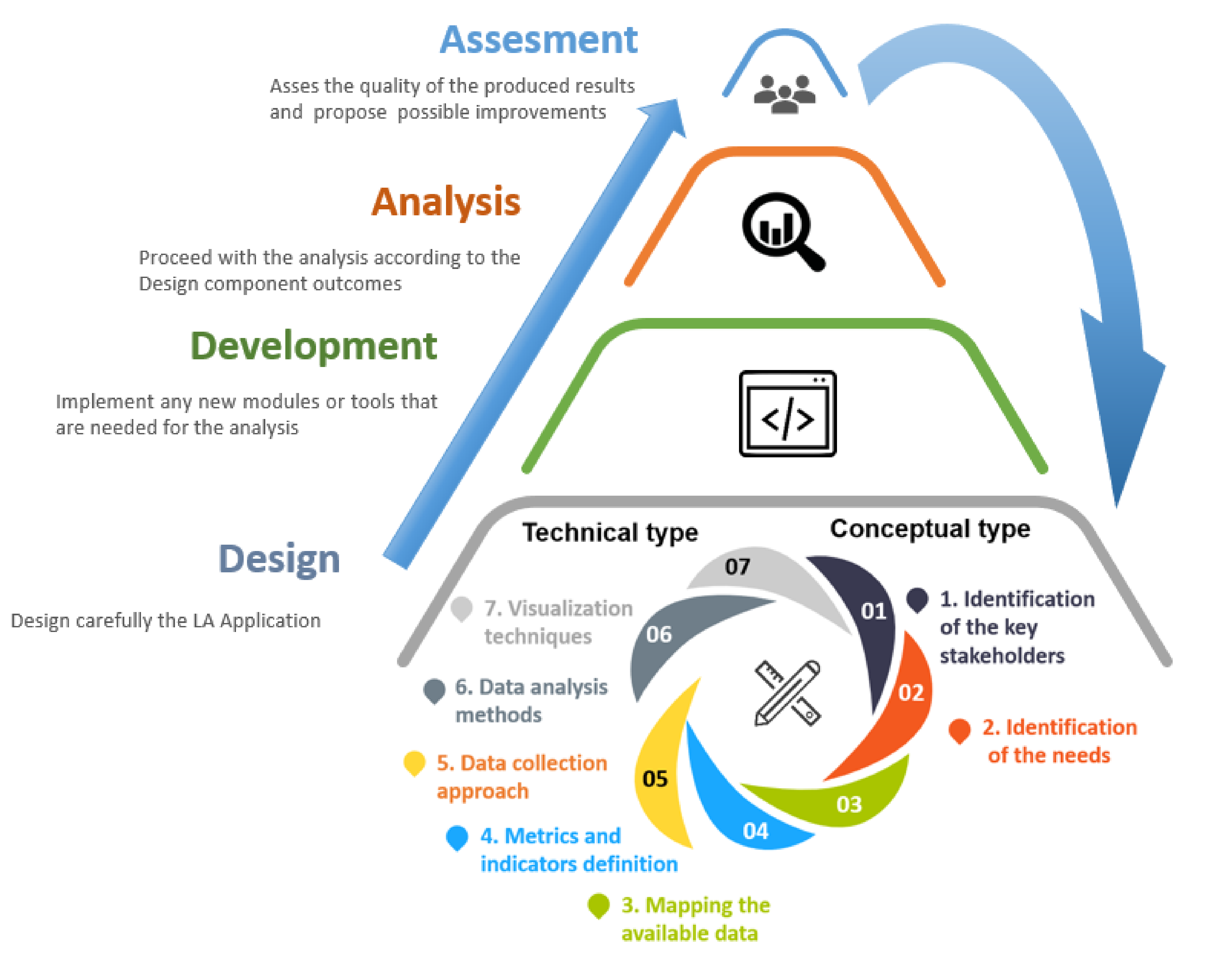

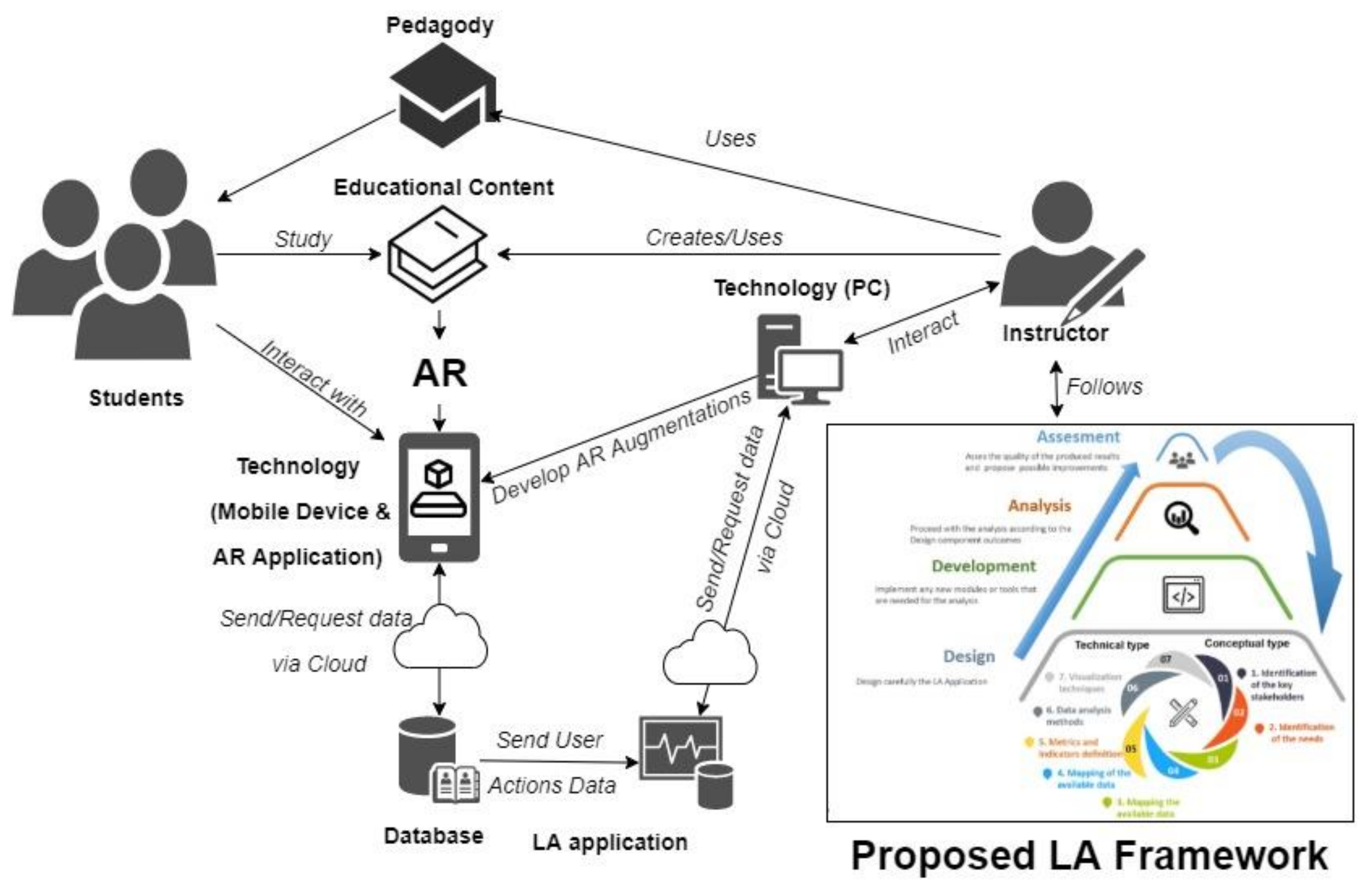

3. Framework Overview

3.1. Design

3.1.1. Identification of the Key Stakeholders

3.1.2. Identification of Needs

Identification of Concepts for Analysis

Educational Content

Learner Profiles and Behavior/Activity

Technology Utilization Context in the Educational Process

Identification of Data Shortages

3.1.3. Mapping the Available Data

- Studying the literature for available indices and metrics and their relationship to LA concepts: Many researchers have proposed indices and metrics for studying various concepts related to e-learning, and these can often be adapted to a specific LA process. When the required data are not yet recorded, researchers can suggest changes to the e-learning platform or the online course so that the data needed for the subsequent analysis are captured. For example, if a system records only the login/logout times of its users, researchers may ask for it to also record the time users spend on specific online resources of the course.

- Using appropriate existing indices and metrics: If researchers find that existing indices and metrics can be used in the LA process, they may still need to adapt them to the specific needs and characteristics of the learners. For example, [60] applies indices and metrics that Laudon and Traver [61] proposed for e-commerce and business analytics to LA processes.

- Proposal of new indices: Researchers can propose new indices for studying specific concepts. They must review the literature carefully and decide whether the newly proposed indices can be linked to specific concepts. In most cases, this requires a validation step that demonstrates a correlation between the indices and the research concepts.
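The login/logout example and the validation requirement for new indices can be sketched together. The code below assumes a hypothetical event log that contains `view:<resource>` entries in addition to login and logout events. It derives a time-on-resource metric by crediting each view with the time until the same student's next logged event (a common but approximate heuristic), and then computes a Pearson correlation between that metric and invented final scores. All names and data are illustrative assumptions, and a correlation computed on four students only demonstrates the shape of the check; it is not evidence that an index is valid.

```python
from collections import defaultdict
from math import sqrt

# Hypothetical log: (student, timestamp in seconds, event label). The
# "view:<resource>" entries are the extra data a login/logout-only platform lacks.
LOG = [
    ("s1", 0, "login"), ("s1", 10, "view:ar-model"),
    ("s1", 310, "view:quiz"), ("s1", 400, "logout"),
    ("s2", 0, "login"), ("s2", 5, "view:ar-model"),
    ("s2", 125, "view:quiz"), ("s2", 200, "logout"),
    ("s3", 0, "login"), ("s3", 20, "view:ar-model"),
    ("s3", 470, "view:quiz"), ("s3", 520, "logout"),
    ("s4", 0, "login"), ("s4", 15, "view:ar-model"), ("s4", 75, "logout"),
]

# Invented final scores used as the outcome for the validation check.
SCORES = {"s1": 60, "s2": 65, "s3": 85, "s4": 50}


def time_on_resources(log):
    """Seconds per (student, resource), crediting each view with the time
    until that student's next logged event (an approximate heuristic)."""
    by_student = defaultdict(list)
    for student, ts, event in log:
        by_student[student].append((ts, event))
    totals = defaultdict(float)
    for student, events in by_student.items():
        events.sort()  # chronological order per student
        for (ts, event), (next_ts, _) in zip(events, events[1:]):
            if event.startswith("view:"):
                resource = event.split(":", 1)[1]
                totals[(student, resource)] += next_ts - ts
    return dict(totals)


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length samples."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


times = time_on_resources(LOG)
students = sorted(SCORES)
x = [times.get((s, "ar-model"), 0.0) for s in students]
y = [SCORES[s] for s in students]
print(f"r(time on AR model, final score) = {pearson(x, y):.2f}")
```

In a real study, the correlation would be computed on a much larger sample and complemented by a significance test or another validation method appropriate to the research design.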

3.1.4. Data Collection Approach

3.1.5. Data Analysis Methods

3.1.6. Visualization Techniques

3.2. Development

3.3. Analysis

3.4. Assessment

4. Framework Implementation for AR-supported Interventions

5. Discussion

6. Implications

- Instructional designers should be trained in how to use appropriate software and hardware related to AR technology.

- Application developers and learning technologists should explore design solutions related to the use of AR technology in “hands-on” learning practices.

- Policymakers should not neglect the socio-cognitive and cultural effects of using interactive AR applications combined with LA to inform trainees and practitioners about their performance and outcomes.

7. Conclusions and Future Work

Author Contributions

Funding

Conflicts of Interest

References

1. Pellas, N.; Kazanidis, I.; Konstantinou, N.; Georgiou, G. Exploring the educational potential of three-dimensional multi-user virtual worlds for STEM education: A mixed-method systematic literature review. Educ. Inf. Technol. 2017, 22, 2235–2279.

2. STEM Education Data. Available online: https://nsf.gov/nsb/sei/edTool/explore.html (accessed on 21 November 2020).

3. Pellas, N.; Fotaris, P.; Kazanidis, I.; Wells, D. Augmenting the learning experience in primary and secondary school education: A systematic review of recent trends in augmented reality game-based learning. Virtual Real. 2019, 23, 329–346.

4. Potkonjak, V.; Gardner, M.; Callaghan, V.; Mattila, P.; Guetl, C.; Petrović, V.M.; Jovanović, K. Virtual laboratories for education in science, technology, and engineering: A review. Comput. Educ. 2016, 95, 309–327.

5. Pellas, N.; Dengel, A.; Christopoulos, A. A Scoping Review of Immersive Virtual Reality in STEM Education. IEEE Trans. Learn. Technol. 2020, 13, 748–761.

6. Crescenzi-Lanna, L. Multimodal Learning Analytics research with young children: A systematic review. Br. J. Educ. Technol. 2020, 51, 1485–1504.

7. Christopoulos, A.; Pellas, N. Theoretical foundations of Virtual and Augmented reality-supported learning analytics. In Proceedings of the 11th International Conference on Information, Intelligence, Systems and Applications (IISA), Piraeus, Greece, 15–17 July 2020; IEEE: Piscataway, NJ, USA, 2020.

8. Yılmaz, R. Enhancing community of inquiry and reflective thinking skills of undergraduates through using learning analytics-based process feedback. J. Comput. Assist. Learn. 2020, 36, 909–921.

9. Bach, C. Learning Analytics: Targeting Instruction, Curricula and Student Support; Office of the Provost, Drexel University: Philadelphia, PA, USA, 2010.

10. Dillenbourg, P. Orchestration Graphs: Modelling Scalable Education; EPFL Press: Lausanne, Switzerland, 2015.

11. Gašević, D.; Kovanović, V.; Joksimović, S.; Siemens, G. Where is research on massive open online courses headed? A data analysis of the MOOC Research Initiative. Int. Rev. Res. Open Dist. Learn. 2014, 15, 134–176.

12. Moissa, B.; Gasparini, I.; Kemczinski, A. A systematic mapping on the learning analytics field and its analysis in the massive open online courses context. IJDET 2015, 13, 1–24.

13. Dyckhoff, A.L.; Zielke, D.; Bültmann, M.; Chatti, M.A.; Schroeder, U. Design and Implementation of a Learning Analytics Toolkit for Teachers. Educ. Technol. Soc. 2012, 15, 58–76.

14. Di Mitri, D.; Schneider, J.; Specht, M.; Drachsler, H. From signals to knowledge: A conceptual model for multimodal learning analytics. J. Comput. Assist. Learn. 2018, 34, 338–349.

15. Azuma, R.T. A Survey of Augmented Reality. Presence Teleoperators Virtual Environ. 1997, 6, 355–385.

16. Wu, H.K.; Lee, S.W.Y.; Chang, H.Y.; Liang, J.C. Current status, opportunities and challenges of augmented reality in education. Comput. Educ. 2013, 62, 41–49.

17. Ibáñez, M.B.; Delgado-Kloos, C. Augmented reality for STEM learning: A systematic review. Comput. Educ. 2018, 123, 109–123.

18. Cheng, K.H.; Tsai, C.C. Affordances of Augmented Reality in Science Learning: Suggestions for Future Research. J. Sci. Educ. Technol. 2013, 22, 449–462.

19. Akçayır, M.; Akçayır, G.; Pektaş, H.M.; Ocak, M.A. Augmented reality in science laboratories: The effects of augmented reality on university students’ laboratory skills and attitudes toward science laboratories. Comput. Hum. Behav. 2016, 57, 334–342.

20. Thees, M.; Kapp, S.; Strzys, M.P.; Beil, F.; Lukowicz, P.; Kuhn, J. Effects of augmented reality on learning and cognitive load in university physics laboratory courses. Comput. Hum. Behav. 2020, 108, 1–11.

21. Lin, P.; Chen, S. Design and Evaluation of a Deep Learning Recommendation Based Augmented Reality System for Teaching Programming and Computational Thinking. IEEE Access 2020, 8, 45689–45699.

22. Mahmoudi, M.T.; Mojtahedi, S.; Shams, S. AR-based value-added visualization of infographic for enhancing learning performance. Comput. Appl. Eng. Educ. 2017, 25, 1038–1052.

23. Kazanidis, I.; Pellas, N. Developing and Assessing Augmented Reality Applications for Mathematics with Trainee Instructional Media Designers: An Exploratory Study on User Experience. J. Univ. Comput. Sci. 2019, 25, 489–514.

24. Akdeniz, C. Instructional Strategies. In Instructional Process and Concepts in Theory and Practice, 1st ed.; Akdeniz, C., Ed.; Springer: Singapore, 2016; pp. 57–105.

25. Yeh, S.C.; Hwang, W.Y.; Wang, J.L. Study of co-located and distant collaboration with symbolic support via a haptics-enhanced virtual reality task. Interact. Learn. Environ. 2013, 21, 184–198.

26. Moore, K.D. Classroom Teaching Skills, 6th ed.; McGraw-Hill: Boston, MA, USA, 2007; pp. 1–369.

27. Secretan, J.; Wild, F.; Guest, W. Learning Analytics in Augmented Reality: Blueprint for an AR/xAPI Framework. In Proceedings of the 2019 IEEE International Conference on Engineering, Technology and Education (TALE), Yogyakarta, Indonesia, 10–13 December 2019.

28. Christopoulos, A.; Pellas, N.; Laakso, M.J. A learning analytics theoretical framework for STEM education Virtual Reality applications. Special issue: “New Research and Trends in Higher Education”. Educ. Sci. 2020, 10, 317.

29. Siemens, G.; Gasevic, D. Guest Editorial—Learning and Knowledge Analytics. J. Educ. Technol. Soc. 2012, 15, 1–2. Available online: https://www.jstor.org/stable/jeductechsoci.15.3.1 (accessed on 23 October 2020).

30. Buckingham Shum, S.; Ferguson, R. Social learning analytics. J. Educ. Technol. Soc. 2012, 15, 3–26.

31. Daniel, B. Big Data and analytics in higher education: Opportunities and challenges. Br. J. Educ. Technol. 2015, 46, 904–920.

32. Sønderlund, A.L.; Hughes, E.; Smith, J. The efficacy of learning analytics interventions in higher education: A systematic review. Br. J. Educ. Technol. 2019, 50, 2594–2618.

33. Christopoulos, A.; Kajasilta, H.; Salakoski, T.; Laakso, M.-J. Limits and Virtues of Educational Technology in Elementary School Mathematics. J. Educ. Technol. Syst. 2020, 49, 59–81.

34. Tempelaar, D.T.; Rienties, B.; Giesbers, B. In search for the most informative data for feedback generation: Learning analytics in a data-rich context. Comput. Hum. Behav. 2015, 47, 157–167.

35. Viberg, O.; Hatakka, M.; Bälter, O.; Mavroudi, A. The current landscape of learning analytics in higher education. Comput. Hum. Behav. 2018, 89, 98–110.

36. Hasan, R.; Palaniappan, S.; Mahmood, S.; Abbas, A.; Sarker, K.U.; Sattar, M.U. Predicting student performance in higher educational institutions using video learning analytics and data mining techniques. Appl. Sci. 2020, 10, 3894.

37. Sciarrone, F.; Temperini, M. Learning analytics models: A brief review. In Proceedings of the 2019 23rd International Conference Information Visualisation (IV), Paris, France, 2–5 July 2019; IEEE: Piscataway, NJ, USA, 2019; pp. 287–291.

38. Campbell, J.P.; DeBlois, P.B.; Oblinger, D.G. Academic analytics: A new tool for a new era. Educ. Rev. 2007, 42, 40.

39. Clow, D. The learning analytics cycle: Closing the loop effectively. In Proceedings of the 2nd International Conference on Learning Analytics and Knowledge (LAK ’12), Vancouver, BC, Canada, 29 April–2 May 2012; ACM: New York, NY, USA, 2012; pp. 134–138.

40. Greller, W.; Drachsler, H. Translating Learning into Numbers: A Generic Framework for Learning Analytics. Educ. Technol. Soc. 2012, 15, 42–57.

41. Chatti, M.A.; Dyckhoff, A.L.; Schroeder, U.; Thüs, H. A reference model for learning analytics. Int. J. Technol. Enhanc. Learn. 2012, 4, 318–331.

42. Siemens, G. Learning Analytics: The Emergence of a Discipline. Am. Behav. Sci. 2013, 57, 1380–1400.

43. Sergis, S.; Sampson, D. Teaching and learning analytics to support teacher inquiry: A systematic literature review. In Learning Analytics: From Research to Practice; Peña-Ayala, A., Ed.; Springer: Berlin/Heidelberg, Germany, 2017; pp. 25–63.

44. Clow, D. An overview of learning analytics. Teach. High. Educ. 2013, 18, 683–695.

45. Ferguson, R. Learning analytics: Drivers, developments and challenges. IJTEL 2012, 4, 304–317.

46. Pishtari, G.; Rodríguez-Triana, M.J.; Sarmiento-Márquez, E.M.; Pérez-Sanagustín, M.; Ruiz-Calleja, A.; Santos, P.; Prieto, L.P.; Serrano-Iglesias, S.; Väljataga, T. Learning design and learning analytics in mobile and ubiquitous learning: A systematic review. Br. J. Educ. Technol. 2020, 51, 1078–1100.

47. Chan, N.N.; Walker, C.; Gleaves, A. An exploration of students’ lived experiences of using smartphones in diverse learning contexts using a hermeneutic phenomenological approach. Comput. Educ. 2015, 82, 96–106.

48. Huang, W.H.D.; Oh, E. Retaining disciplinary talents as informal learning outcomes in the digital age: An exploratory framework to engage undergraduate students with career decision-making processes. In Handbook of Research on Learning Outcomes and Opportunities in the Digital Age; Wang, V.C.X., Ed.; IGI Global: Hershey, PA, USA, 2016; pp. 402–420.

49. Eichmann, B.; Greiff, S.; Naumann, J.; Brandhuber, L.; Goldhammer, F. Exploring behavioural patterns during complex problem-solving. J. Comput. Assist. Learn. 2020, 36, 933–956.

50. Frensch, P.; Funke, J. Definitions, traditions, and a general framework for understanding complex problem solving. In Complex Problem Solving: The European Perspective; Frensch, P.A., Funke, J., Eds.; Lawrence Erlbaum Associates: Hillsdale, NJ, USA, 1995; pp. 3–25.

51. Livieris, I.E.; Tampakas, V.; Kiriakidou, N.; Mikropoulos, T.; Pintelas, P. Forecasting Students’ Performance Using an Ensemble SSL Algorithm. In Communications in Computer and Information Science, Proceedings of the 1st Int. Conf. on Technology and Innovation in Learning, Teaching and Education, Thessaloniki, Greece, 20–22 June 2018; Tsitouridou, M.A., Diniz, J., Mikropoulos, T., Eds.; Springer Nature: Cham, Switzerland, 2019.

52. Kazanidis, I.; Satratzemi, M. Modeling User Progress and Visualizing Feedback—The Case of Proper. In Proceedings of the 2nd International Conference on Computer Supported Education (CSEDU 2010), Valencia, Spain, 7–10 April 2010; SCITEPRESS: Setúbal, Portugal, 2010.

53. Valsamidis, S.; Kontogiannis, S.; Kazanidis, I.; Theodosiou, T.; Karakos, A. A Clustering Methodology of Web Log Data for Learning Management Systems. J. Educ. Technol. Soc. 2012, 15, 154–167.

54. Kazanidis, I.; Valsamidis, S.; Gounopoulos, E.; Kontogiannis, S. Proposed S-Algo+ data mining algorithm for web platforms course content and usage evaluation. Soft Comput. 2020, 24, 14861–14883.

55. Valsamidis, S.; Kazanidis, I.; Kontogiannis, S.; Karakos, A. Course ranking and automated suggestions through web mining. In Proceedings of the 10th IEEE International Conference on Advanced Learning Technologies (ICALT ’10), Sousse, Tunisia, 5–7 July 2010; IEEE Press: Piscataway, NJ, USA, 2010.

56. Valsamidis, S.; Kazanidis, I.; Kontogiannis, S.; Karakos, A. An approach for LMS assessment. IJTEL 2012, 4, 265–283.

57. Kim, M.J. A framework for context immersion in mobile augmented reality. Autom. Constr. 2013, 33, 79–85.

58. Pellas, N. The influence of computer self-efficacy, metacognitive self-regulation and self-esteem on student engagement in online learning programs: Evidence from the virtual world of Second Life. Comput. Hum. Behav. 2014, 35, 157–170.

59. Thomas, M.S.C.; Ansari, D.; Knowland, V.C.P. Annual Research Review: Educational neuroscience: Progress and prospects. J. Child Psychol. Psychiatry 2019, 60, 477–492.

60. Gounopoulos, E.; Valsamidis, S.; Kazanidis, I.; Kontogiannis, S. Mapping and identifying features of e-learning technology through indexes and metrics. In Proceedings of the 9th International Conference on Computer Supported Education (CSEDU 2017), Special Session on Analytics in Educational Environments, Porto, Portugal, 21–23 April 2017; SCITEPRESS: Setúbal, Portugal, 2017.

61. Laudon, K.C.; Traver, C.G. E-commerce, 10th ed.; Pearson: New York, NY, USA, 2013; pp. 1–912.

62. Kazanidis, I.; Valsamidis, S.; Theodosiou, T.; Kontogiannis, S. Proposed framework for data mining in e-learning: The case of open e-class. In Proceedings of the IADIS International Conference Applied Computing 2009, Rome, Italy, 19–21 November 2009; pp. 254–258.

63. Valsamidis, S.; Kazanidis, I.; Kontogiannis, S.; Karakos, A. Homogeneity and Enrichment: Two Metrics for Web Applications Assessment. In Proceedings of the 14th Panhellenic Conference on Informatics (PCI 2010), Tripoli, Greece, 10–12 September 2010; IEEE Press: Piscataway, NJ, USA, 2010.

64. Christopoulos, A.; Conrad, M.; Shukla, M. Interaction with Educational Games in Hybrid Virtual Worlds. J. Educ. Technol. Syst. 2018, 46, 385–413.

65. Christopoulos, A.; Conrad, M.; Shukla, M. Increasing student engagement through virtual interactions: How? Virtual Real. 2018, 22, 353–369.

66. Christopoulos, A.; Conrad, M.; Shukla, M. The Added Value of the Hybrid Virtual Learning Approach: Using Virtual Environments in the Real Classroom. In Integrating Multi-User Virtual Environments in Modern Classrooms; Qian, Y., Ed.; IGI Global: Hershey, PA, USA, 2018; pp. 259–279.

67. Peña-Ayala, A. Learning analytics: A glance of evolution, status, and trends according to a proposed taxonomy. WIREs Data Min. Knowl. Discov. 2018, 8, 1–29.

68. Sciarrone, F. Machine Learning and Learning Analytics: Integrating Data with Learning. In Proceedings of the 17th International Conference on Information Technology Based Higher Education and Training (ITHET), Olhao, Portugal, 26–28 April 2018; Curran Associates: Red Hook, NY, USA, 2018.

69. Slade, S.; Prinsloo, P. Learning Analytics Ethical Issues and Dilemmas. Am. Behav. Sci. 2013, 57, 1510–1529.

| No | Stage |
|---|---|
| 1 | Definition of metrics and indicators |
| 2 | Data collection approach |
| 3 | Data analysis methods |
| 4 | Visualization techniques |

| No | Stage | Aim | Type |
|---|---|---|---|
| 1 | Identification of the key stakeholders | Identify all possible key stakeholders | Conceptual |
| 2 | Identification of needs | Provide guidelines for identifying needs based on the previous stage | Conceptual |
| 3 | Mapping the available data | Study the available data and the possible ways in which they can be analyzed | Technical |
| 4 | Definition of metrics and indicators | Choose or create the metrics and indices to be used in the LA process | Technical |
| 5 | Data collection approach | Adopt an adequate data collection approach | Technical |
| 6 | Data analysis methods | Decide on the data analysis methods to be used | Technical |
| 7 | Visualization techniques | Decide on the visualization techniques | Technical |
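Teams working through the design stages in the table above may find it convenient to encode them as an ordered checklist. The following is a hypothetical sketch: the `Stage` and `StageType` names and the `next_stage` helper are illustrative, and the assumption that stages are completed strictly in numerical order is a simplification made here rather than a rule stated by the framework.

```python
from dataclasses import dataclass
from enum import Enum


class StageType(Enum):
    CONCEPTUAL = "Conceptual"
    TECHNICAL = "Technical"


@dataclass
class Stage:
    number: int
    name: str
    stage_type: StageType
    done: bool = False


def design_stages():
    """Fresh list of the seven design stages and their types, as in the table."""
    c, t = StageType.CONCEPTUAL, StageType.TECHNICAL
    return [
        Stage(1, "Identification of the key stakeholders", c),
        Stage(2, "Identification of needs", c),
        Stage(3, "Mapping the available data", t),
        Stage(4, "Definition of metrics and indicators", t),
        Stage(5, "Data collection approach", t),
        Stage(6, "Data analysis methods", t),
        Stage(7, "Visualization techniques", t),
    ]


def next_stage(stages):
    """Return the lowest-numbered unfinished stage, or None when all are done.

    Assumes (for illustration) that stages are completed in numerical order.
    """
    pending = [s for s in stages if not s.done]
    return min(pending, key=lambda s: s.number) if pending else None


stages = design_stages()
for stage in stages[:3]:  # pretend the first three stages are finished
    stage.done = True
upcoming = next_stage(stages)
print(upcoming.number, upcoming.name, upcoming.stage_type.value)
```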

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Kazanidis, I.; Pellas, N.; Christopoulos, A. A Learning Analytics Conceptual Framework for Augmented Reality-Supported Educational Case Studies. Multimodal Technol. Interact. 2021, 5, 9. https://doi.org/10.3390/mti5030009