1. Introduction

We use eye movements to solve many problems, such as avoiding obstacles or finding an item of importance. The ability to direct our vision is invaluable. In some situations, humans appear able to use eye movements to direct the visual system in an optimal manner [1]. In other situations, people do not make eye movements that are consistent with an optimal strategy [2,3]. Najemnik and Geisler [1] derived an “ideal observer” model of eye movements in visual search, which minimises the number of fixations needed to find a target by deploying fixations that reduce overall uncertainty of information in the search space. To do this, the model accounts for fixation history, as well as differences in acuity across the retina, to select fixations that provide the most new information. They found that humans matched the ideal model in terms of the number of fixations needed to find the target, suggesting that we make decisions about where to fixate based on maximising information gain. However, calculating expected information gain for all possible fixations is a resource-intensive process, considering we tend to select new fixations 2–3 times each second during search. With this in mind, Clarke et al. [4] suggested a simpler alternative: human performance in a visual search task can be characterised as a stochastic process. The stochastic model randomly selects each eye movement during search from a population of saccade vectors executed from that region of the search array, until the target is “found” (that is, until the model’s fixation lands close enough to the target that it would be detected, given empirical estimates of target visibility over eccentricity). Though the population from which the saccades were selected may have been shaped by factors such as the target visibility and search history, the model itself did not use this information in selecting each new fixation location. Similar to Najemnik and Geisler [1], Clarke and Hunt [2] also found that their model matches human observers in terms of the number of fixations needed to find the target, despite the selection process being random instead of driven by expected information gain.
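The stochastic searcher described above can be sketched in a few lines. This is a minimal illustration rather than the published model: the unit-square display, the coarse grid in `region_of`, the fixed `detect_radius` (standing in for empirical visibility-over-eccentricity estimates), and the contents of `pools` are all assumptions made for the example.

```python
import math
import random


def region_of(x, y, n_cells=2):
    """Map a position in the unit square to a coarse grid cell (hypothetical binning)."""
    col = min(int(x * n_cells), n_cells - 1)
    row = min(int(y * n_cells), n_cells - 1)
    return row, col


def stochastic_search(target, start, pools, detect_radius, rng, max_fixations=10_000):
    """Return the number of fixations until the target is 'found', or None.

    pools maps each grid cell to a list of (dx, dy) saccade vectors; each new
    saccade is drawn at random from the pool of the currently fixated region.
    The searcher never uses target visibility or fixation history directly.
    """
    x, y = start
    for n_fix in range(1, max_fixations + 1):
        # Assumption: the target is detected once fixation lands within a fixed radius.
        if math.hypot(x - target[0], y - target[1]) <= detect_radius:
            return n_fix
        dx, dy = rng.choice(pools[region_of(x, y)])
        # Keep the fixation inside the display.
        x = min(max(x + dx, 0.0), 1.0)
        y = min(max(y + dy, 0.0), 1.0)
    return None


if __name__ == "__main__":
    rng = random.Random(1)
    # Made-up saccade pools: the same small set of vectors in every region.
    vectors = [(0.2, 0.0), (-0.2, 0.0), (0.0, 0.2), (0.0, -0.2), (0.15, 0.15)]
    pools = {(r, c): vectors for r in range(2) for c in range(2)}
    print(stochastic_search((0.8, 0.8), (0.5, 0.5), pools, 0.1, rng))
```

Averaging the returned fixation count over many simulated trials gives the kind of quantity that is compared with human search in the text.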

This leaves us with a puzzle: two models with very different underlying mechanisms both describe human search equally well. On the one hand, humans may make each fixation based upon current scene statistics and fixation history, and, on the other hand, their fixation selection may be based on a more generic set of perceptual and motor biases. The assumption that differentiates these two models is whether fixations are selected based on a principle of expected information gain.

Experiments by Nowakowska et al. [5] directly test this assumption and suggest both models may be correct. They presented participants with search arrays that had two halves: on one half, the target would pop out from the distractors and could be easily seen using peripheral vision, and on the other half, the distractors were more variable and the target would be harder to spot. An optimal searcher would direct all fixations to the more difficult side, because no new information would be gained by fixating on the easy side. The results show large individual differences. A few participants conformed to an optimal strategy, but a wide range of other strategies were observed. These strategy differences were closely correlated with the efficiency of search performance, and Clarke et al. [6] showed they were also stable over time (see also [7]). These studies suggest that no single model can describe fixation selection during search: variability driven by contexts and individuals will need to be accounted for.

Another paradigm that addresses the extent to which eye movements are driven by expected information gain was devised by Morvan and Maloney [

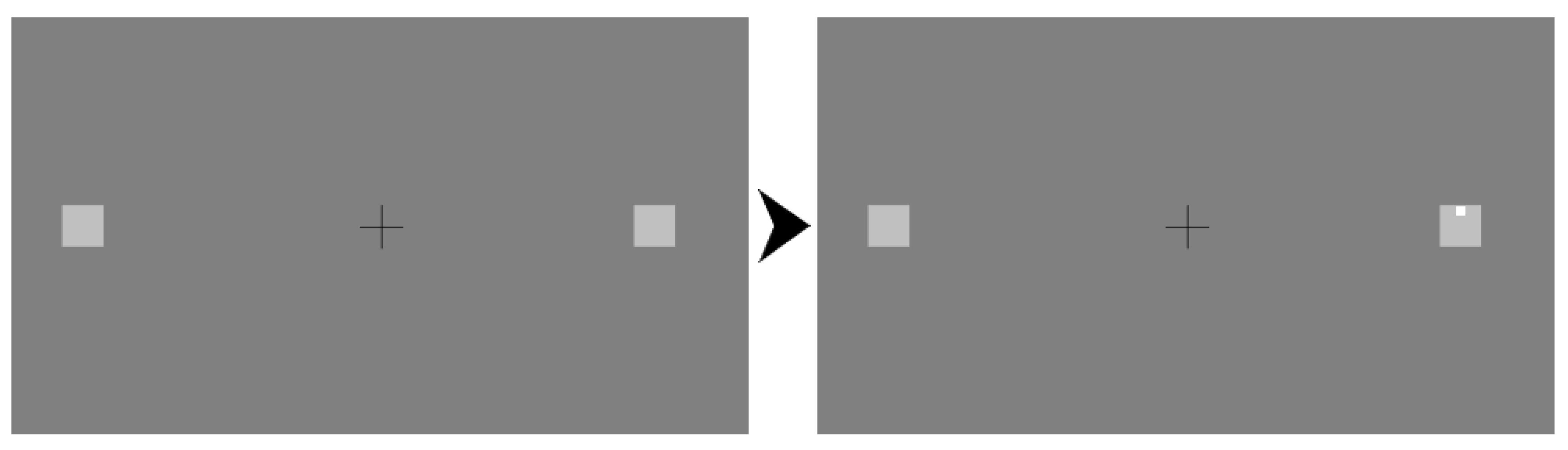

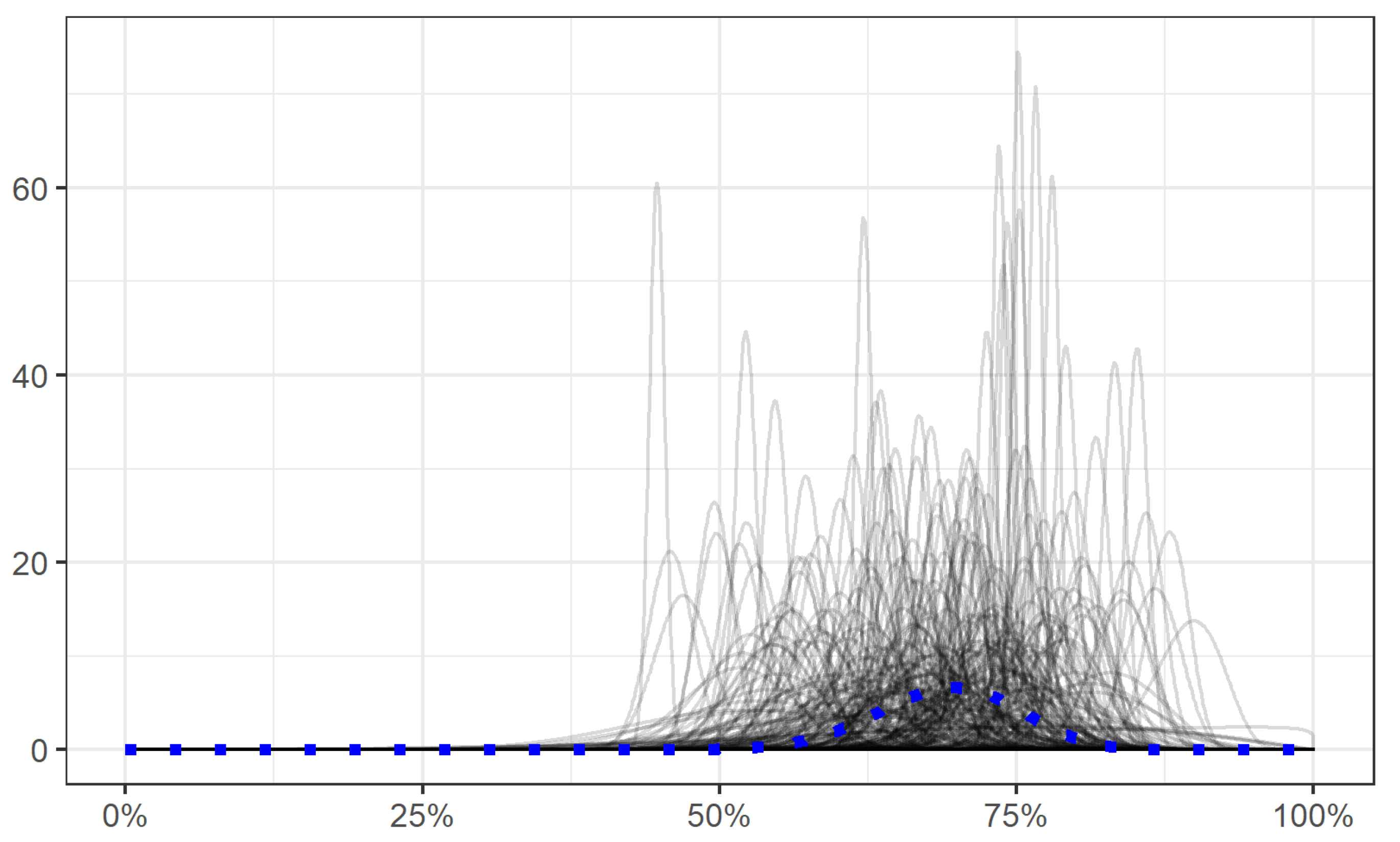

3]. In their task, participants had to make a single eye movement in order to discriminate a small target dot that could appear in one of two locations. In the beginning of each trial, participants were presented with three boxes: one in the centre, with two boxes either side. Participants were instructed to fixate one of these boxes and then the target would appear in either of the two side boxes. Throughout the experiment, these two side boxes would appear at different eccentricities, sometimes being placed very close to the central box, and at other times a large distance away. When the boxes were closely spaced, the optimal strategy would be to fixate the central box, using peripheral vision to detect the target when it appears to the left or right of fixation. However, as the distance between the boxes gets larger, performance from a central fixation position begins to decrease towards chance (50%). Therefore, for a big enough distance, the optimal strategy is to fixate either of the two side boxes, as this would achieve an expected accuracy of around 75% (100% for targets appearing in the fixated box, and 50% for targets appearing in the other box). If participants were to select fixations according to expected information gain, given the limitations of their own visual acuity, they would fixate the centre box when expected accuracy given the distance between the boxes is greater than 75%, and one of the side boxes when expected accuracy from the centre is less than 75%. The results of Morvan and Maloney’s experiment clearly demonstrate that people failed to select fixations according to this optimal strategy. This failure went well beyond not quite switching at the optimal point, or not consistently carrying out the optimal strategy. In fact, none of the participants in their study modified their choice of which box to fixate according to the distance between the boxes.
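The 75% rule described above can be made concrete with a short sketch. The logistic form of `central_accuracy` and its `threshold` and `slope` values are hypothetical placeholders for a participant's measured acuity, and the target is assumed to be equally likely to appear in either side box.

```python
import math

# Fixating a side box: 100% correct when the target appears there, 50% (chance)
# when it appears in the other box, with the two locations assumed equally likely.
SIDE_ACCURACY = 0.5 * 1.0 + 0.5 * 0.5  # = 0.75


def central_accuracy(eccentricity, threshold=6.0, slope=1.5):
    """Hypothetical accuracy from central fixation, falling from near 1.0 to chance (0.5)."""
    return 0.5 + 0.5 / (1.0 + math.exp((eccentricity - threshold) / slope))


def optimal_fixation(eccentricity, threshold=6.0, slope=1.5):
    """Fixate the centre only while it is expected to beat the 75% side strategy."""
    if central_accuracy(eccentricity, threshold, slope) > SIDE_ACCURACY:
        return "centre"
    return "side"


def switch_point(lo=0.0, hi=30.0, tol=1e-6, threshold=6.0, slope=1.5):
    """Bisection search for the eccentricity at which central accuracy drops to 75%."""
    while hi - lo > tol:
        mid = (lo + hi) / 2.0
        if central_accuracy(mid, threshold, slope) > SIDE_ACCURACY:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2.0


if __name__ == "__main__":
    for ecc in (2.0, 6.0, 12.0):
        print(ecc, round(central_accuracy(ecc), 3), optimal_fixation(ecc))
    print("switch point:", round(switch_point(), 3))
```

An observer following this rule would change their choice of box as the separation crosses the switch point, which is the pattern the participants did not show.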

This failure to fixate in a way that optimises information gain during search does not appear to be a limitation that is specific to eye movements and visual search. Clarke and Hunt [2] demonstrated the same striking decision failure across a range of different tasks (like choosing where to stand to throw objects at targets, or choosing whether to try to memorise one or two digit strings for later report). They characterised the decision failure as an inability to use an expectation of task difficulty to guide decisions about whether to attempt to accomplish multiple goals or to focus resources on a single task.

Sub-optimal behaviour is prevalent in many other tasks [8]. Potential explanations for the observed patterns were discussed by Kahneman and Tversky [9], as well as Gigerenzer and Gaissmaier [10]. One important consideration in the decision dilemma posed in the current study is that participants might not have an accurate representation of their own ability, and, hence, are unable to adopt the optimal strategy, which requires them to use that information to decide whether to pursue one goal or two. This would be an example of Bounded Rationality [11], where human behaviour is argued to be optimal given some constraints. The constraints in this case would come from imprecise information about one’s own capabilities, or a lack of confidence in the accuracy of that information. This explanation seems unlikely, because participants in these experiments first complete a session in which their ability to perform the task is recorded (e.g., throwing, memorising or detecting), without the need to make a decision. The purpose of this session is to calculate their switch point in the second session, and to calibrate the second session’s difficulty level to match the capability of the participant, but a secondary benefit of this session is that the participant gains experience doing the task and observing their own chances of success at different levels of task difficulty. To check if participants successfully learned this information, James et al. [12] asked participants to provide estimates of their chances of successfully hitting a target at a range of different distances. They found that participants’ estimates were very close to their actual accuracy in performing the task. This suggests that participants do have the information necessary to perform optimally, but do not make use of this when making decisions.

Other explanations for suboptimal decisions in tasks like this could be based on other classic cognitive biases, such as risk/loss aversion [9] or achievement motivation [13]. However, there is a great deal of variation in the decision behaviour of individual participants, with some consistently trying to accomplish both goals, and others consistently focusing on one, and most showing a blend of both strategies (but rarely in a way that varies systematically with task difficulty). As such, one single bias or heuristic might be able to explain the behaviour of some individuals on some trials, but does not provide a satisfying explanation for sub-optimal performance in the experiment overall.

The potential explanation for sub-optimal performance that is addressed in the current experiment is a lack of motivation. The idiosyncratic and variable decisions the participants tend to make in this paradigm may be due to participants prioritising something other than accuracy in the task when making their decisions. Some other studies that added a financial incentive have shown that participants begin to make use of more optimal strategies [14,15]. A clear motivation for success in the form of a financial gain can lead participants to move from strategies that attempt to be successful for all outcomes towards strategies that focus on the more likely outcomes. It is important to note that the participants in the experiments of Morvan and Maloney [3] (N = 4 in Experiment 1, N = 2 in Experiment 2) were financially rewarded for correct responses, up to a total of $20. However, the monetary gain was spread out over thousands of trials, which may have diluted its effects. In Clarke and Hunt [2], there were more participants and the experiments were much shorter, but participants were offered no monetary reward for being successful, so the experiments relied instead on the value placed on success by each participant (which may in some cases have been non-existent). Therefore, it is possible that at least some of the participants were simply not motivated to succeed.

Even with an added financial incentive, the goals of a participant may still be misaligned with the desires of the experimenter. For example, even though in Morvan and Maloney [

3] participants were rewarded for success, they may have prioritised completing the task as quickly as possible rather than gaining the largest sum of money at the end of the experiment. This could be the result of participants wanting to maximise the rate of gain over time as opposed to solely focussing on the total gain. Miranda and Palmer [

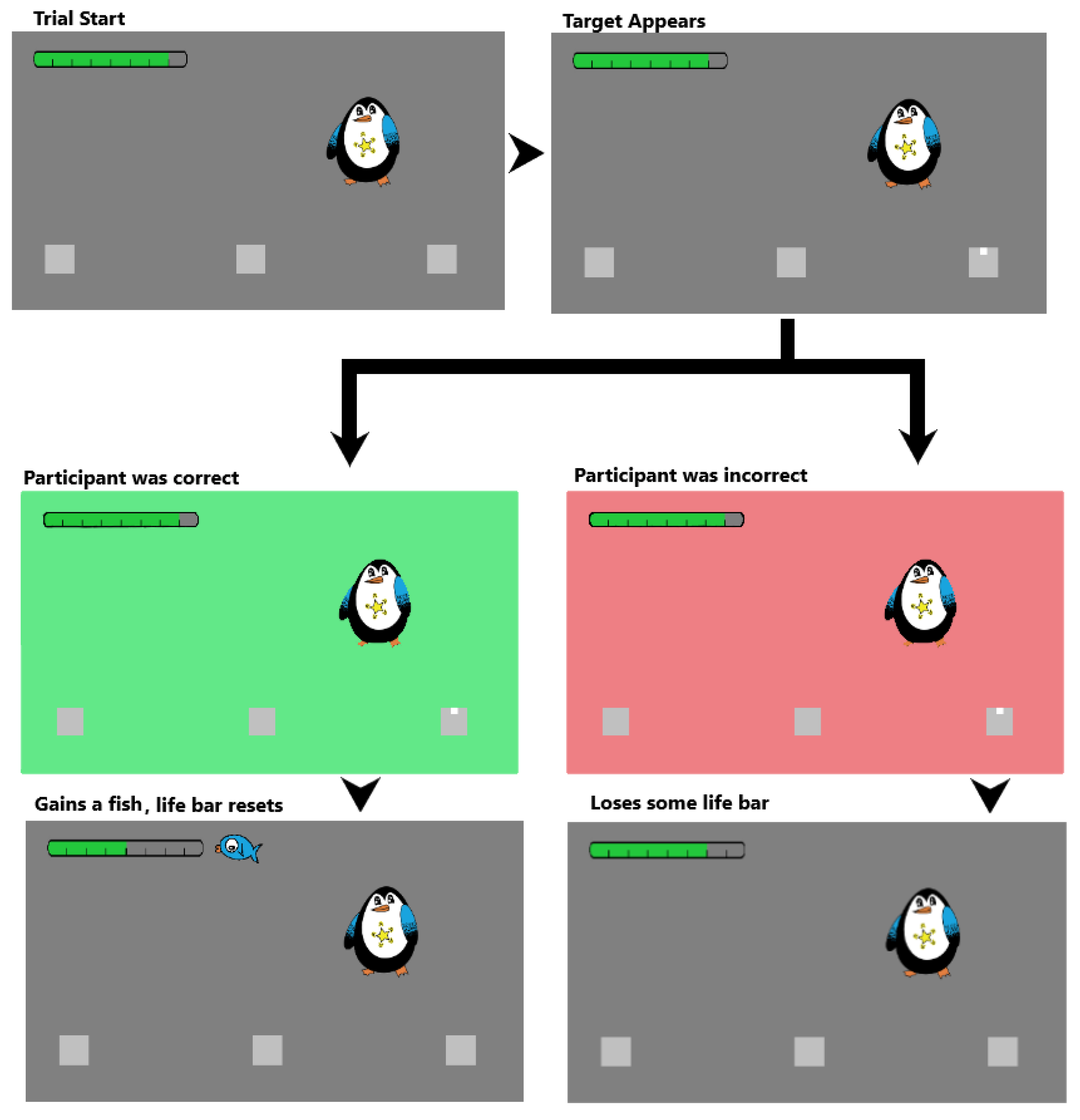

16] suggested that game-like experiments can encourage participants to prioritise success rather than speed of completion. They posited that enjoyable experiments intrinsically motivate participants to focus on the task as opposed to simply performing the task to gain something external (such as money or course credit). In effect, this could align the goals of the participant and experimenter as participants would find the task more enjoyable and be less likely to rush through it or be negligent.

In the present study, we gamified the decision problem with the aim of motivating participants to adopt a more success-oriented attitude. We hypothesise that participants taking part in a more engaging version of the task would be more likely to use a more optimal strategy, resulting in a greater rate of success.

4. Discussion

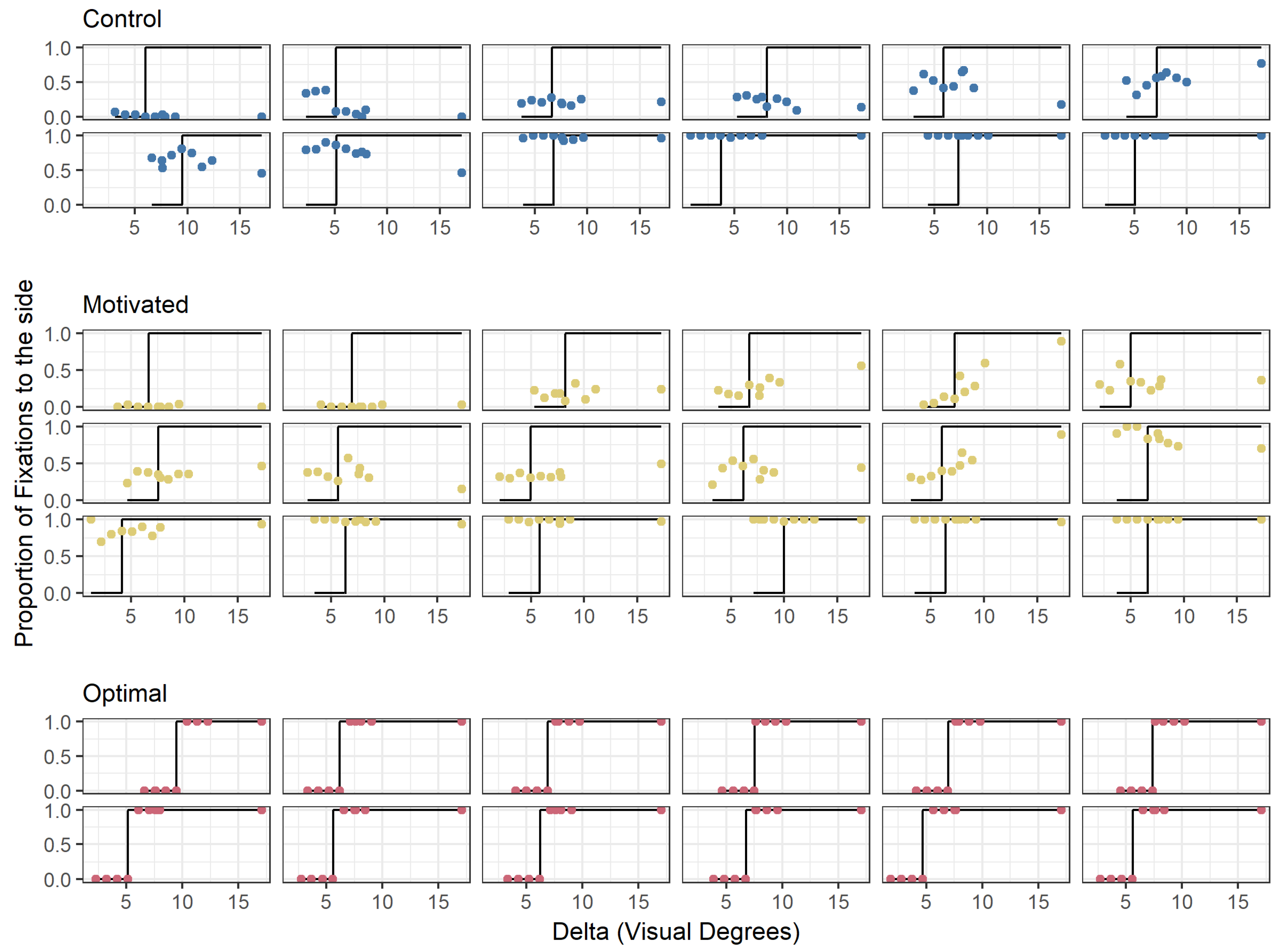

The results from this experiment are consistent with previous findings in that participants are highly variable and suboptimal in their fixation decisions in this task [

2,

3]. Despite the presence of a strong motivation to be optimal, which demonstrably increased participants’ efforts to do well in the task, none of the participants adopted the optimal strategy. The novel contribution from the current experiment is that the decisions about where to fixate (

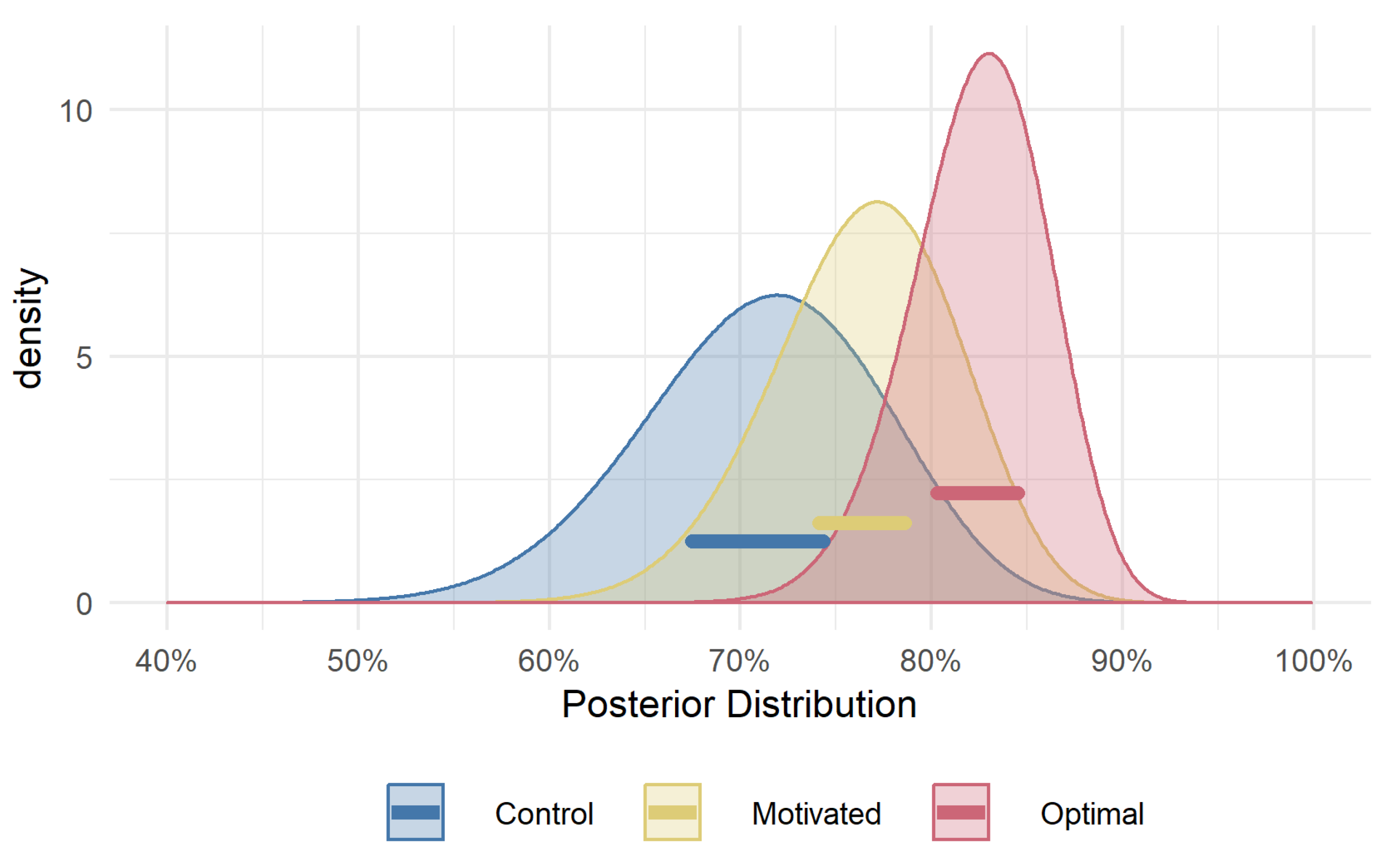

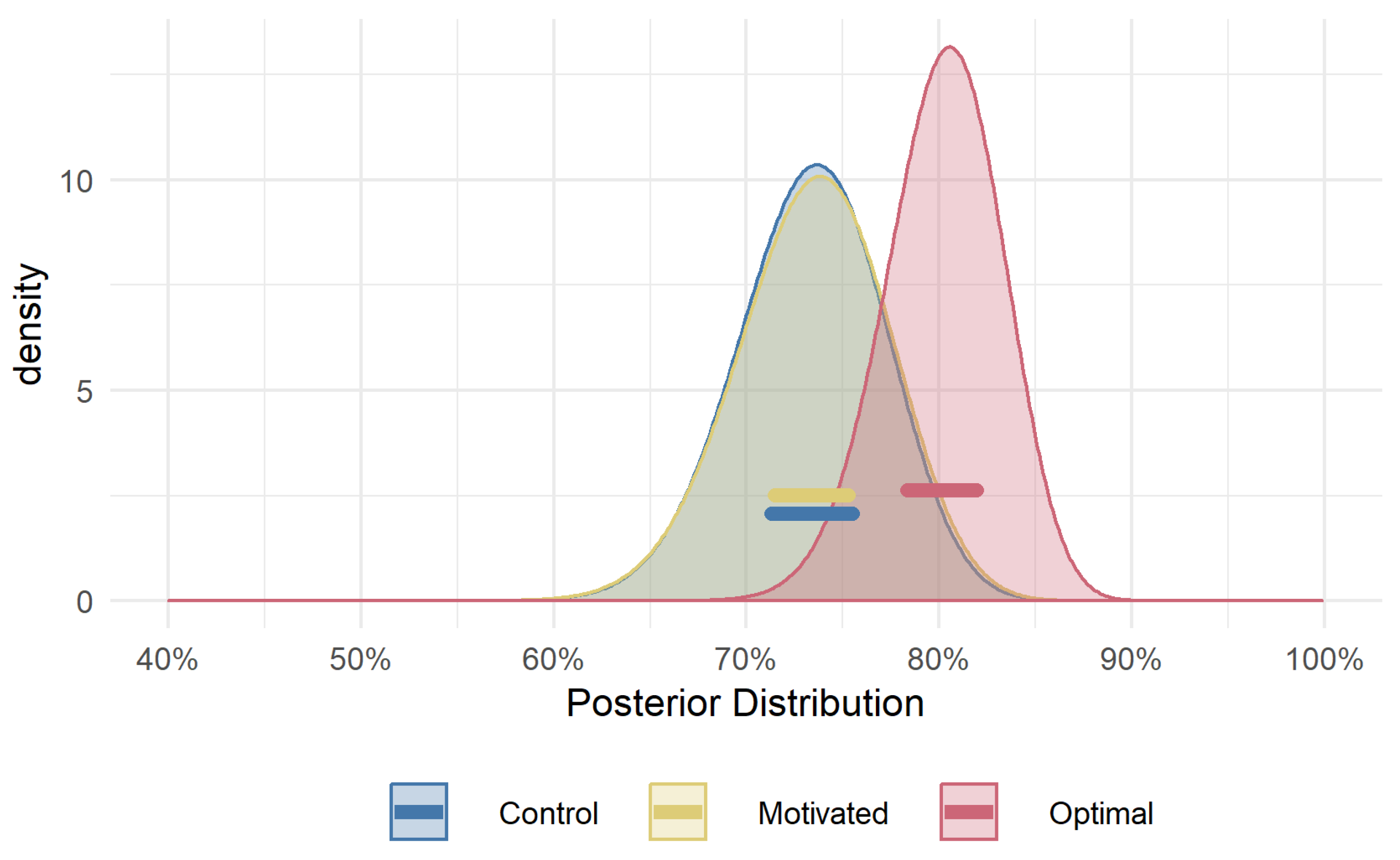

Figure 4) show no discernible difference in strategy between the

Motivated and the

Control group. This would suggest that having a clear motivation for success does not cause people to use more optimal strategies. This result rules out a failure to adequately motivate participants as a likely explanation for suboptimal decisions.

The Motivated group did have higher overall accuracy in the task than the Control group, demonstrating that the motivation manipulation was effective. The boost in accuracy in the absence of an improved strategy suggests participants were expending more effort to detect the target and respond correctly. This result is consistent with several studies demonstrating that visual sensitivity, attention, and effort are all affected by the presence of rewards [16,25,26]. Participants in the Motivated group may have made fewer erroneous button presses (that is, they more often pressed the key they intended to press) than the Control group as a result of more sustained attention and lower lapse rates [27]. They also may have made more of an effort to detect the target during the decision phase, in which the “game” was introduced, and therefore were better able to detect the target than would be expected given their initially measured visual acuity [16].

This finding rules out a general lack of motivation as an explanation for why participants fail to discern or follow an easily implemented decision rule that would increase their accuracy. This can be added to a growing list of other simple explanations that have been ruled out. As noted in the Introduction, James et al. [12] ruled out a lack of self-awareness of ability as a viable explanation. Another possible explanation is that the optimal strategy may in fact be more difficult to implement than it initially seems. Hunt et al. [17] ruled this out as well by asking non-naive participants to perform both the Throwing and Detection tasks from Clarke and Hunt [2]. The results from these participants were very close to optimal. This demonstrates that it is possible to implement the strategy when it is known. However, calculating optimal fixations in the context of visual search can be quite effortful (as demonstrated by the ideal search model of Najemnik and Geisler [1]), and does not always result in large gains in terms of utility or efficiency relative to making “random” fixations [4]. This may go some way towards explaining why participants do not employ the optimal strategy in the simpler context of the current experiment. That is, participants may not know a priori that the strategy in this particular case is simple and will benefit performance, but they may know that the gains that can usually be achieved by figuring out an optimal strategy would not outweigh the cost of figuring out the strategy in the first place. This could be considered an example of satisficing, in that participants reached a point at which their strategy met their own moderate standards [11]. Here, the participants of the Motivated group might have set themselves a standard for performance that they were able to meet without changing their fixation strategy: their goal was simply to get as many fish as they could. Indeed, all of them completed the experiment with a net positive number of fish, which may have been sufficient for them to feel that they did their best. The presence of a motivating element presumably raised their standard above that of the Control group, and with it their performance. However, had they explicitly recognised the additional gains they could have made with better fixation strategies, they might have re-examined their fixation strategies and made more efficient decisions.

A striking contrast exists between the results from this task, in which participants are highly variable and sub-optimal, and examples from visually-guided reaching and eye movements for tasks in which participants appear able to execute near-optimal movement plans (e.g., [28,29]). In these studies, participants are able to take into account their own variability in movement production to target locations that balance risk and reward to optimise expected gain. The key difference between those experiments and the one presented here (and in [2,3,12,17]) is the directness of the relationship between the strategy and the rewarded outcome. In the current task, participants need to select a place to fixate that optimises their accuracy in the detection task, but it is performance on the detection task that is ultimately rewarded. This highlights an interesting distinction between optimal decisions about how to prepare for an upcoming task, relative to optimal decisions about how to execute the task when the time comes. Rather than the extent of motivation, it might be the directness of the link between motivation and (optimal) strategy that needs to be higher for the strategy to be adopted. In the current study, the execution of the detection aspect of the task responded to the motivation manipulation and showed clear improvements, but the decision about where to look in preparation for the task was unchanged. A similar dissociation is reported in [30], where participants performed rapid reaches towards pairs of lights, one of which was only revealed to be the target after the movement had begun. In these experiments, participants were able to weigh up possible movement plans and select those which minimised mechanical effort. However, they completely failed to flexibly adapt their reaching strategies to maximise expected success, similar to the decision failure reported in the current experiment.

Reinforcement learning is likely to play an important role in shaping optimal behaviour in the execution component of decision-making scenarios. An interesting question for future research is why this reinforcement does not improve the strategic preparation aspects of these decisions.