On the Aperture Problem of Binocular 3D Motion Perception

Abstract

1. Introduction

1.1. The Inverse Problem and Geometric Defaults

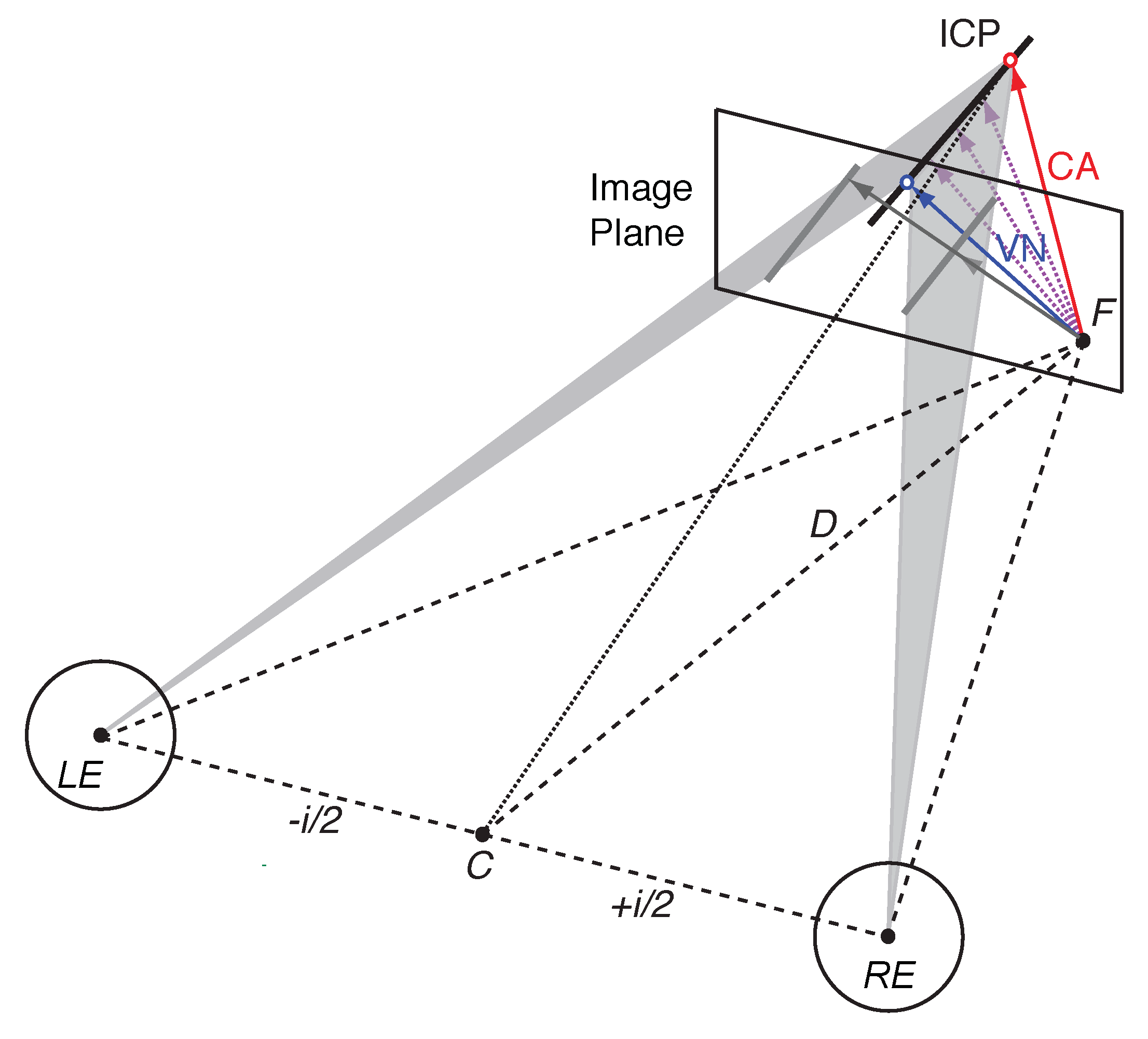

1.2. Vector Normal and Cyclopean Average

1.3. Bayesian Inference

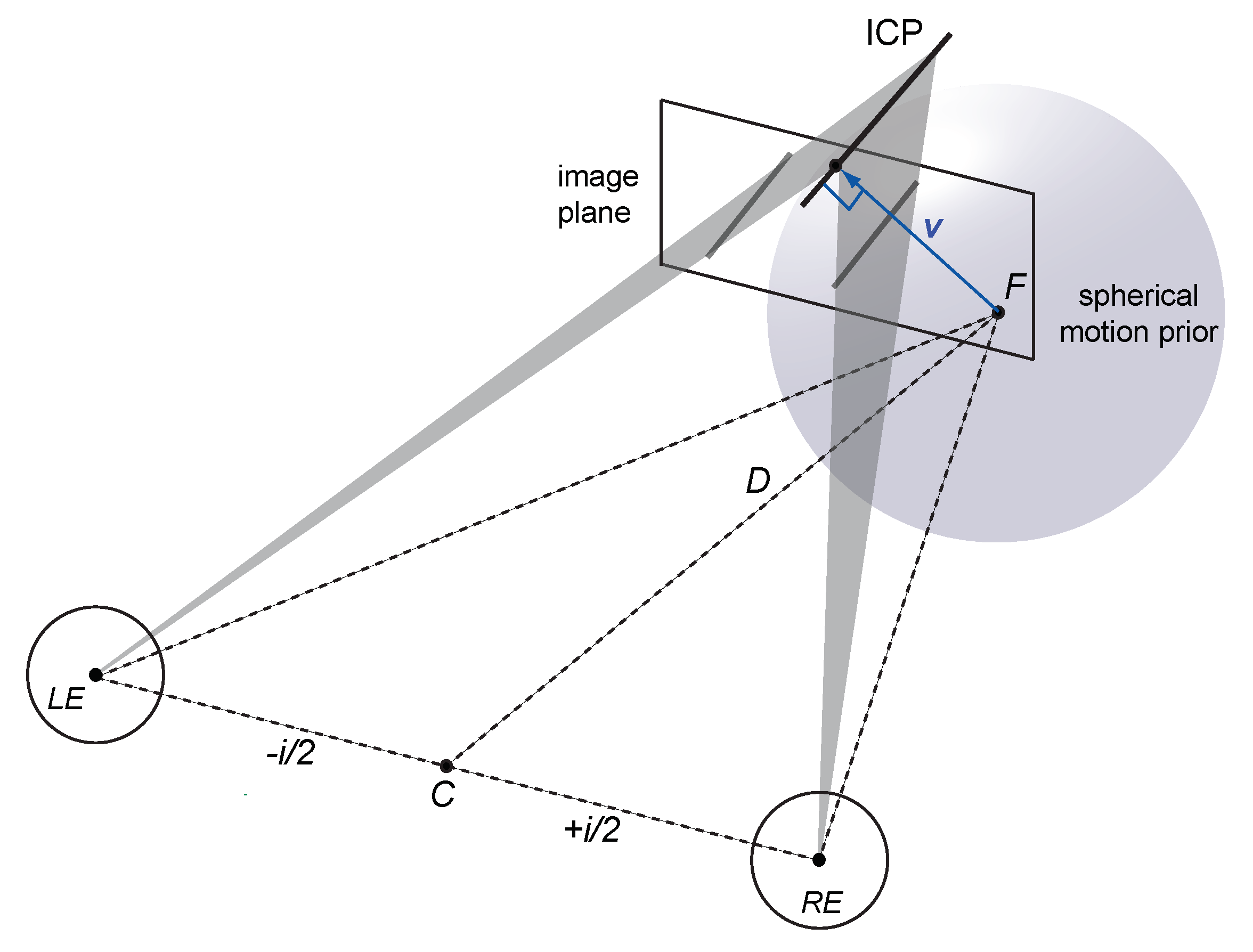

1.4. Spherical Motion Prior

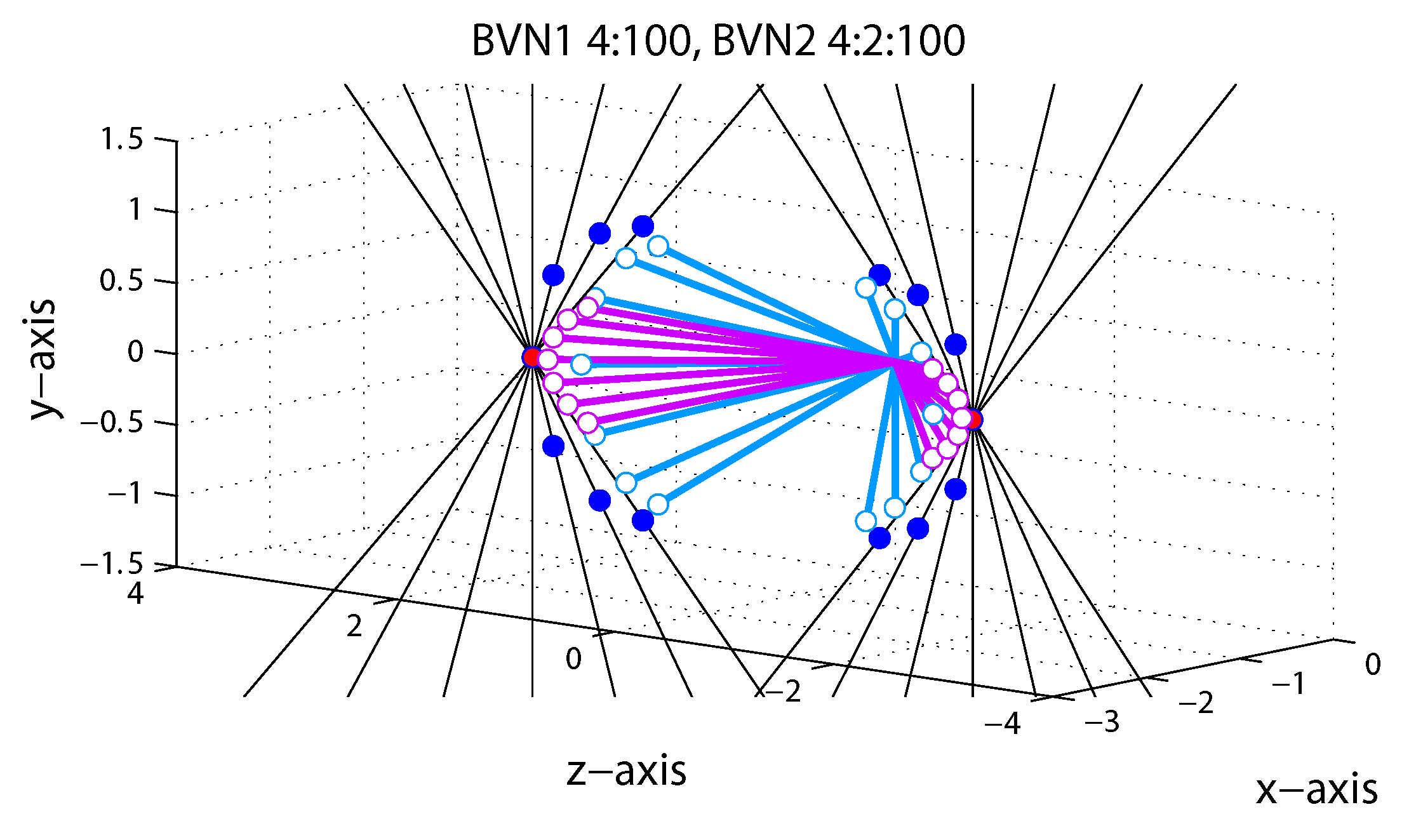

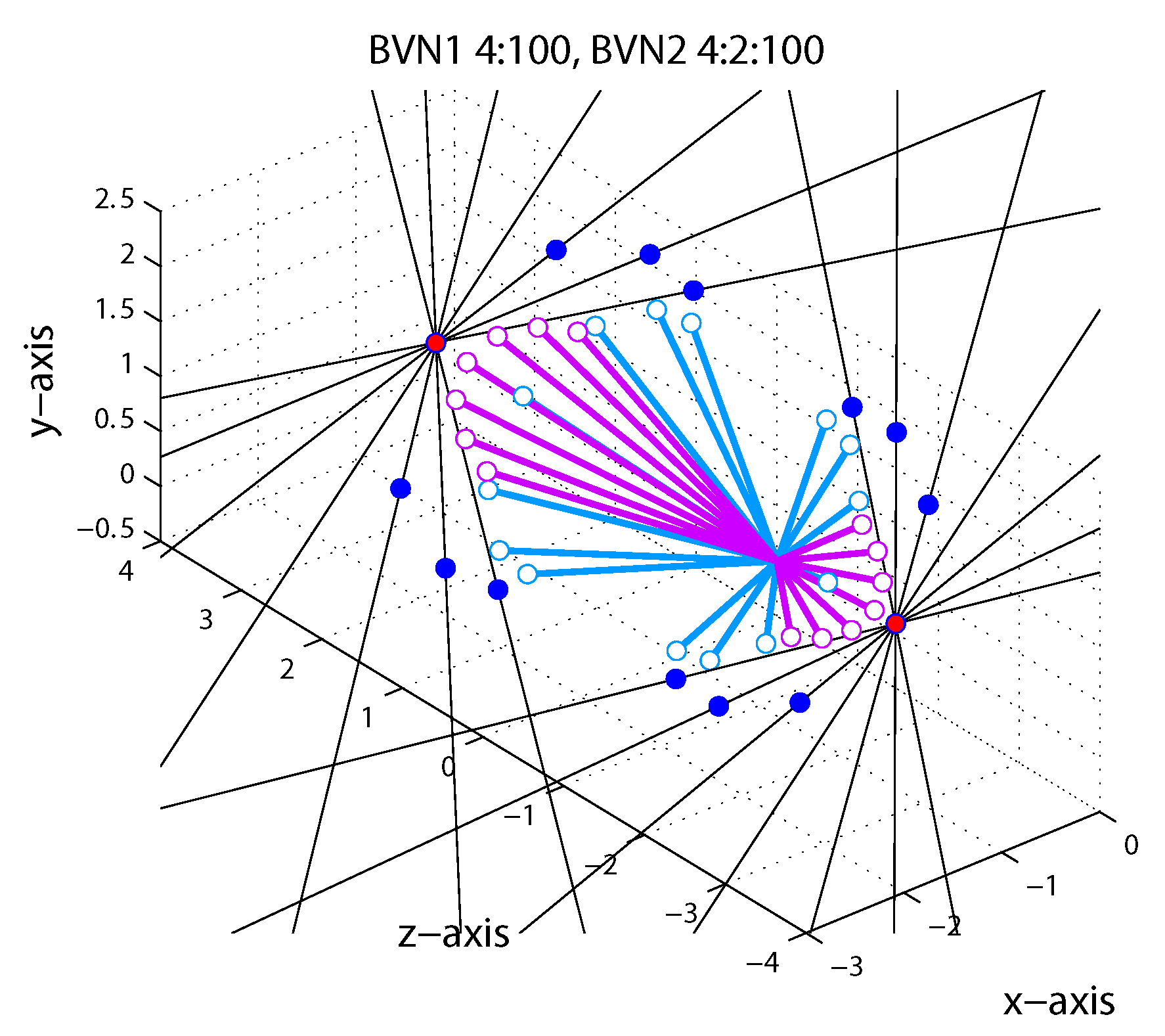

1.5. Bayesian Vector Normal

2. General Methods

2.1. Participants

2.2. Apparatus

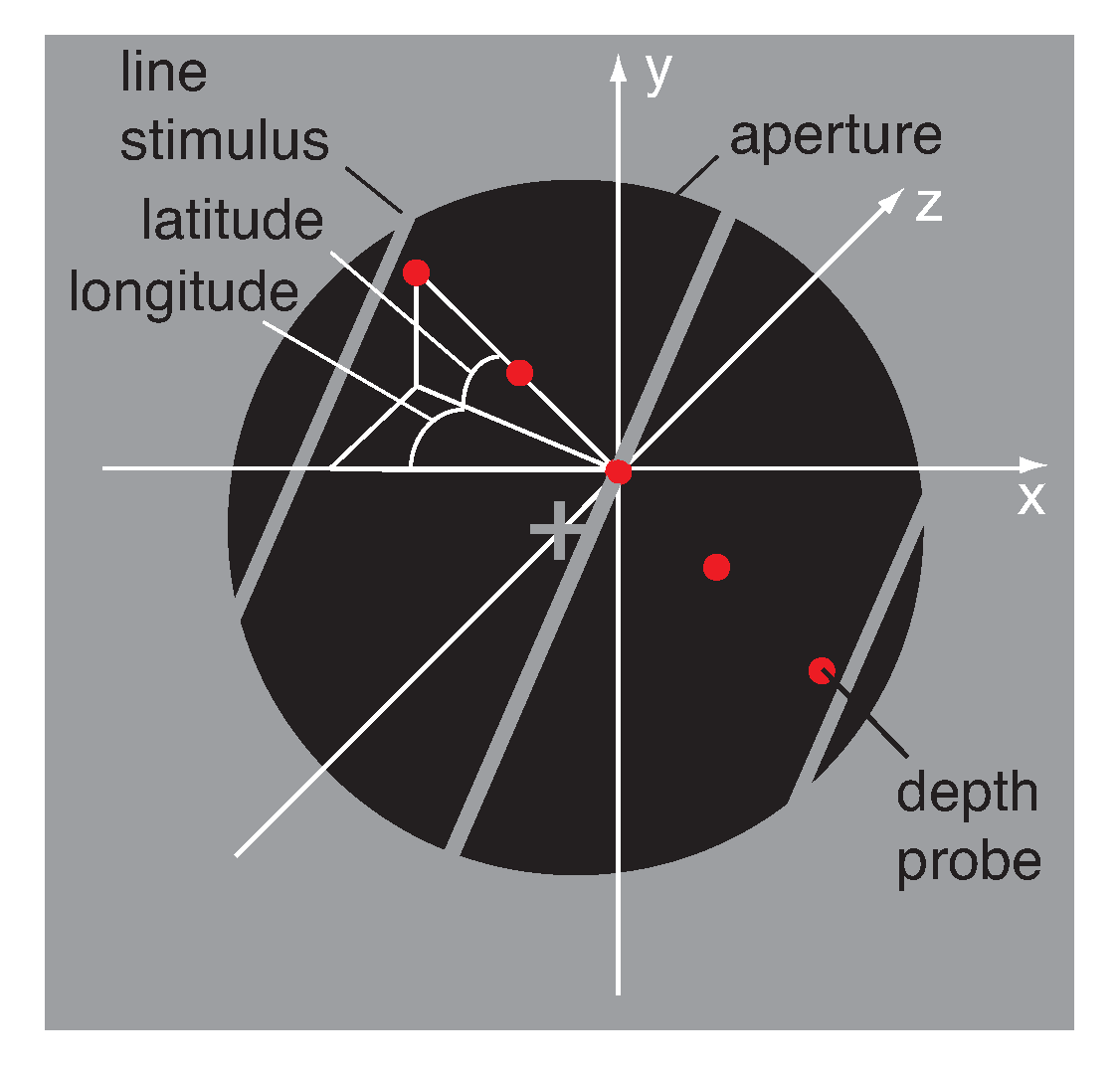

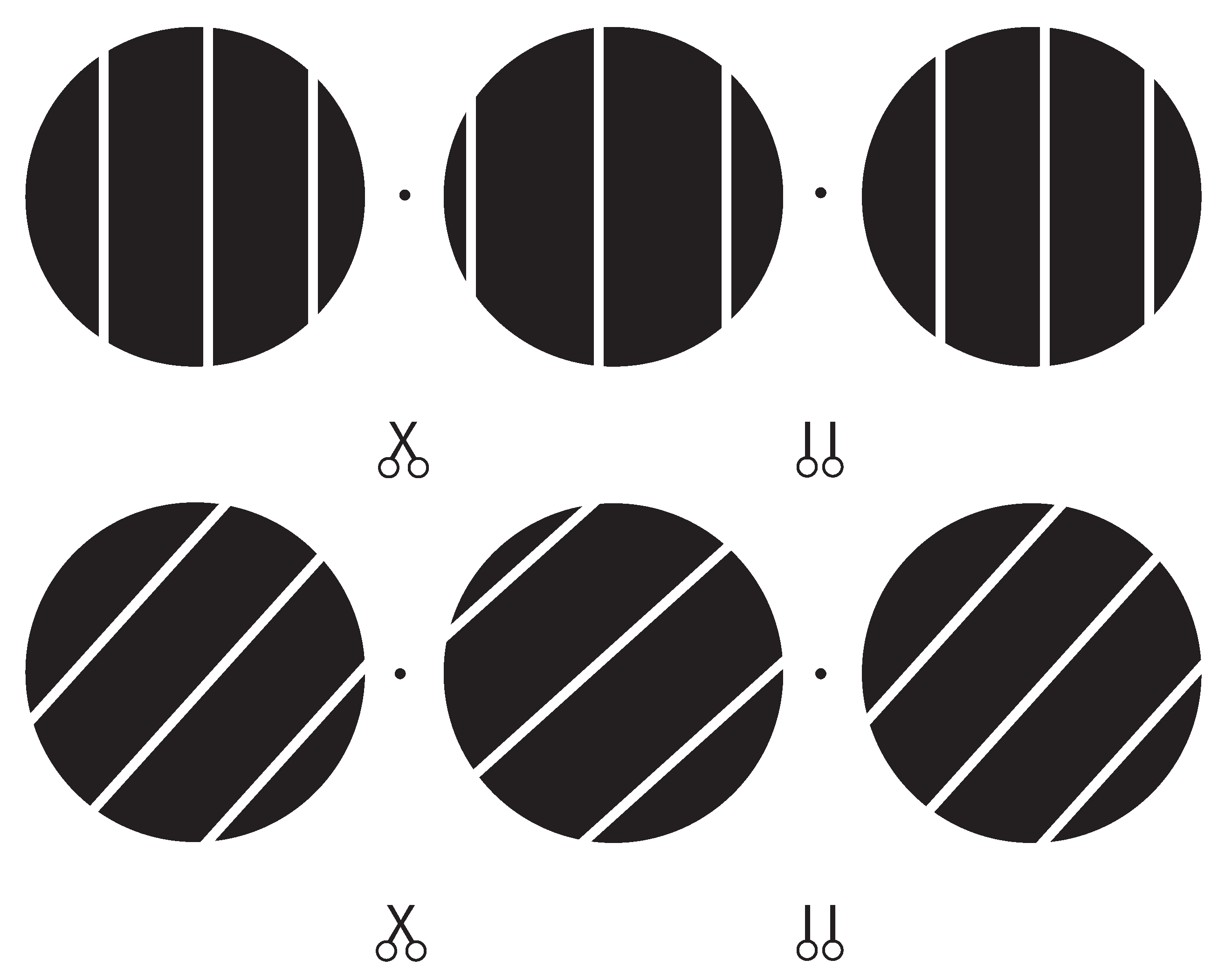

2.3. Stimulus

2.4. Procedure

3. Results

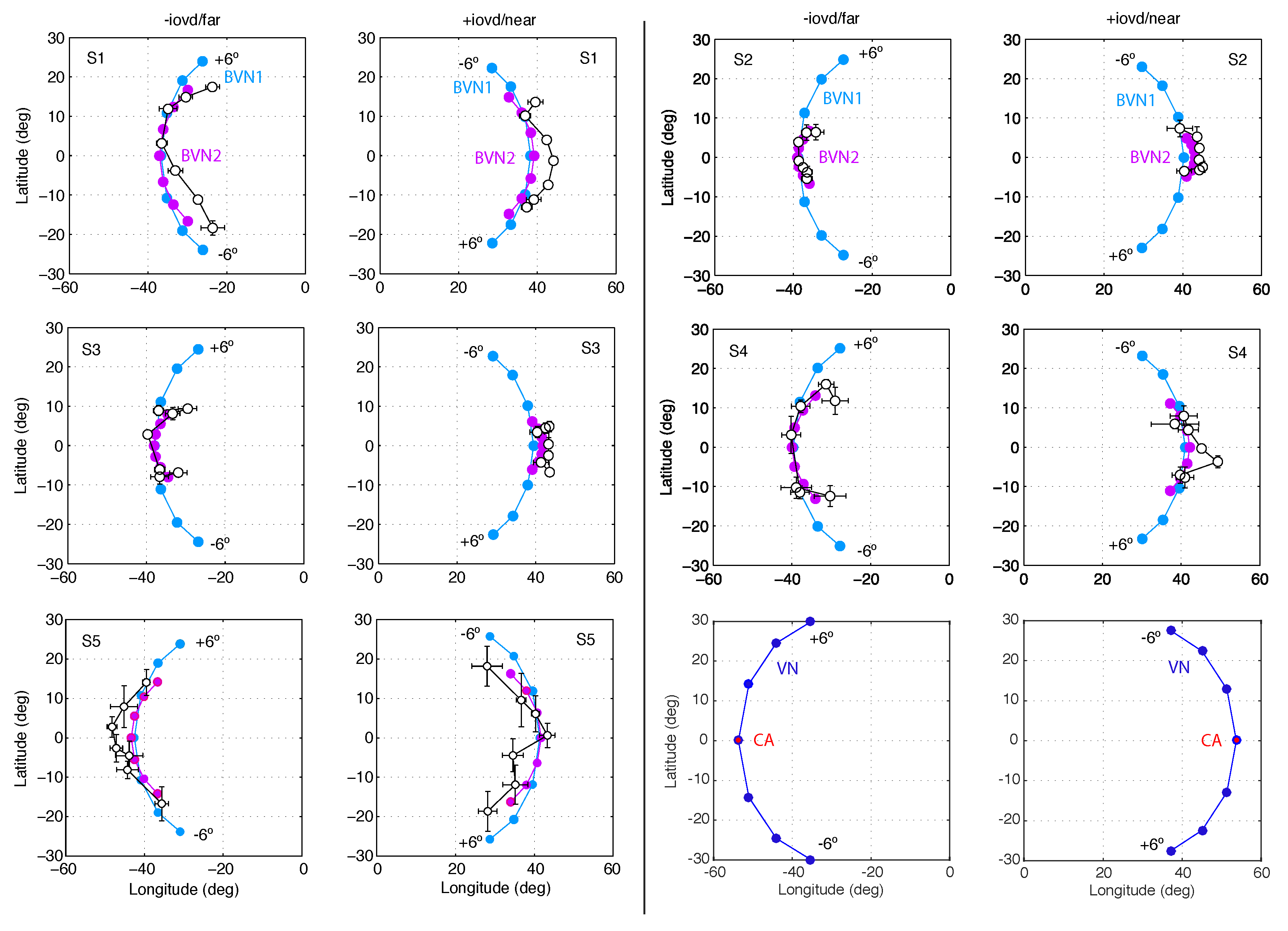

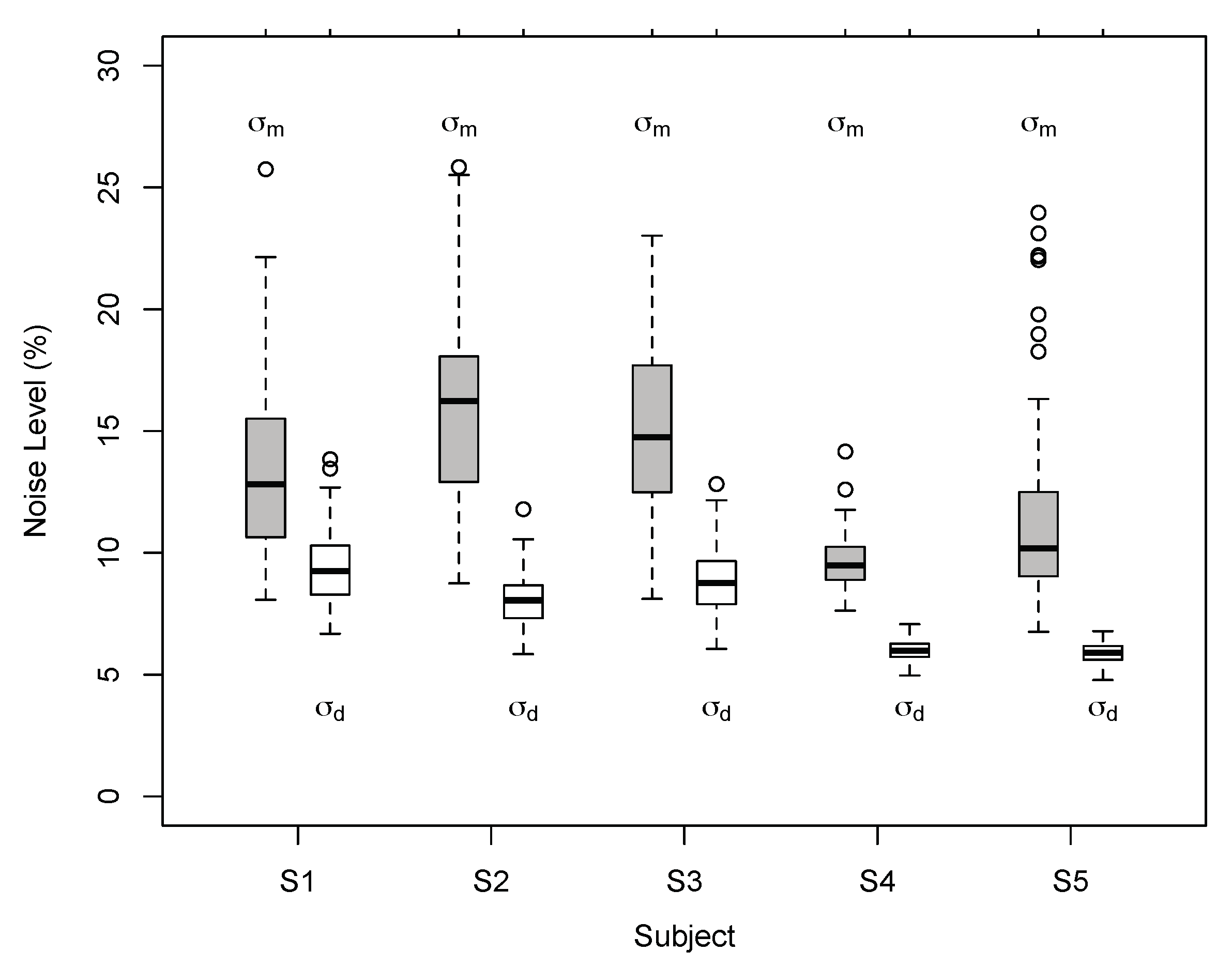

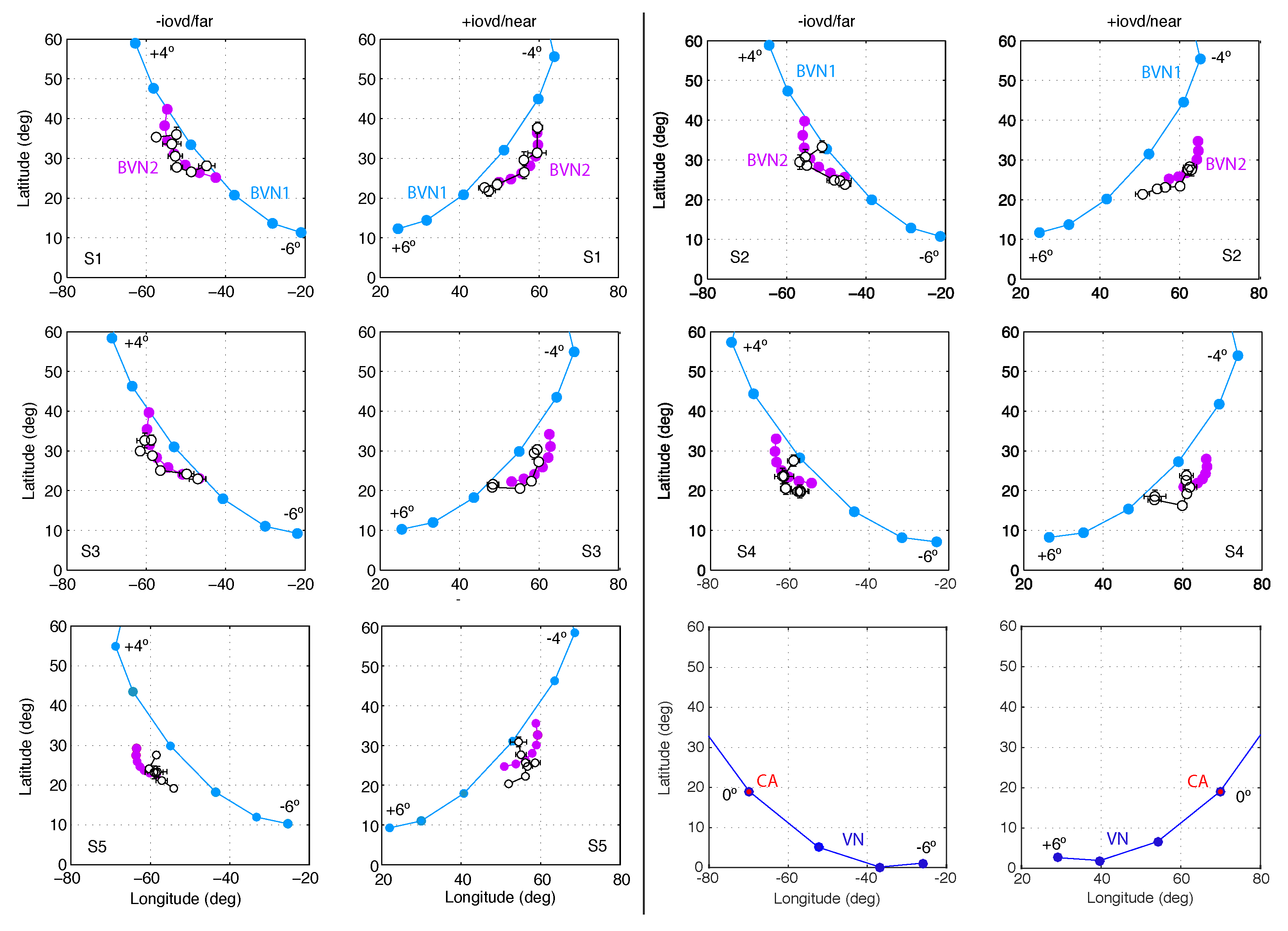

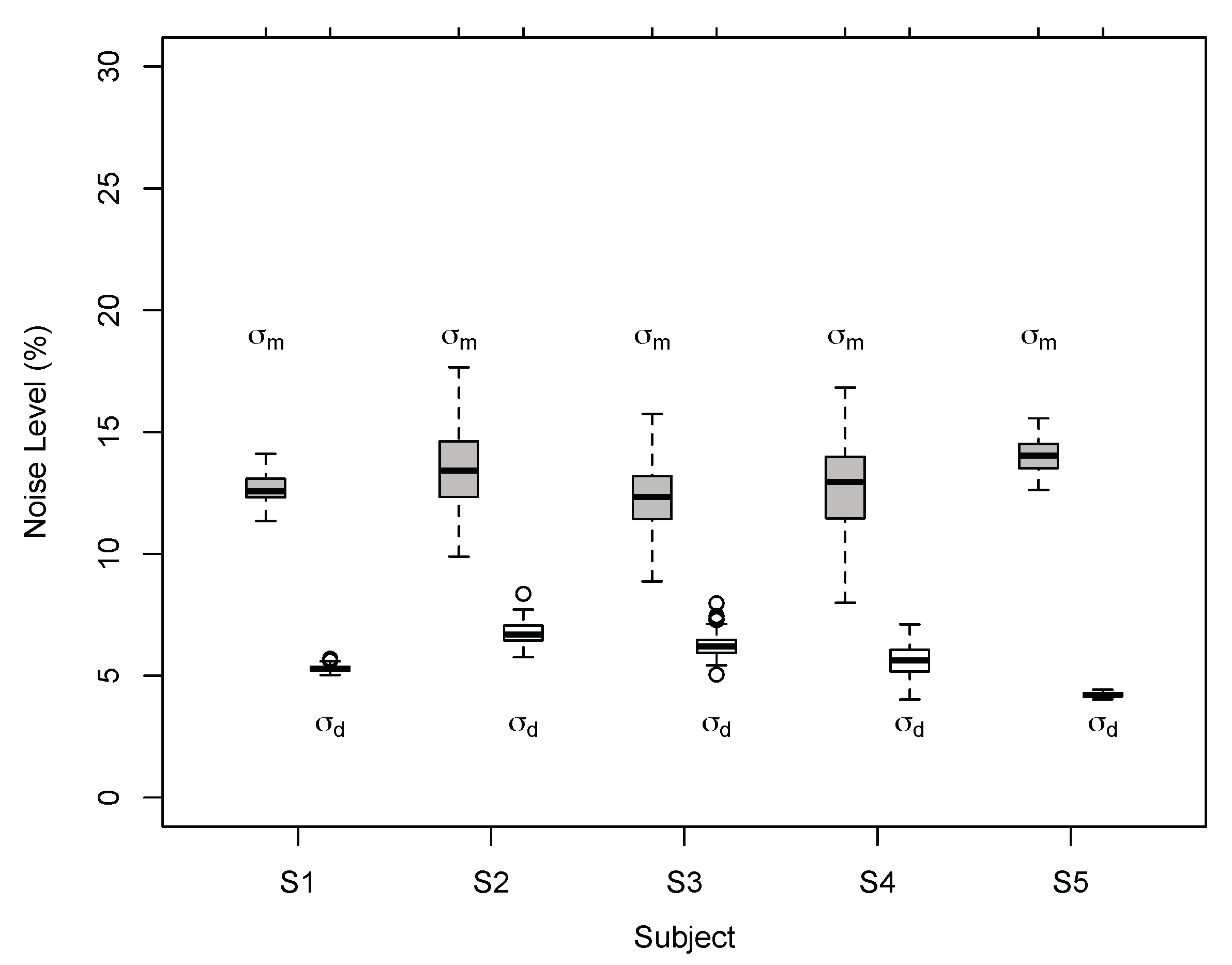

3.1. Results of Experiment 1

3.2. Results of Experiment 2

4. Discussion

Limitations and Implications

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Appendix A

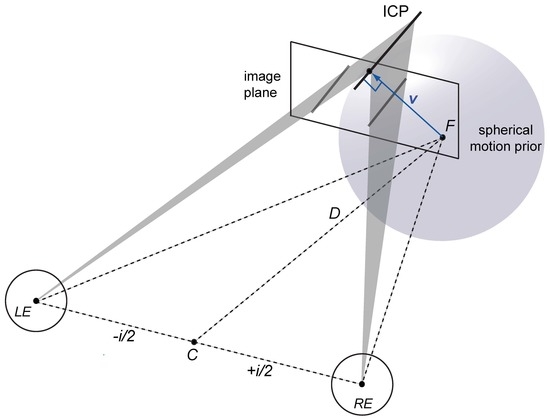

Appendix A.1. Viewing Geometry

Appendix A.2. Velocity Constraints

Appendix A.3. Brightness and Velocity Constraints

Appendix A.4. Bayesian Vector Normal Model

Appendix A.5. Model Fit and Model Comparison

| Obs. | BVN1 | BVN2 | Mod. Comp. | | | | |
|---|---|---|---|---|---|---|---|
| Exp. 1 | : | : | : | LR | | | |
| S1 | 7.03 | 93.0 | 8.97 | 6.85 | 21.7 | 1.89 | 0.69 |
| S2 | 6.42 | 227.4 | 14.48 | 6.03 | 4.32 | 8.27 | 16.67 |
| S3 | 6.68 | 182.2 | 13.53 | 6.35 | 12.0 | 5.08 | 3.39 |
| S4 | 6.20 | 54.7 | 9.55 | 6.00 | 7.01 | 3.28 | 1.38 |
| S5 | 6.19 | 24.96 | 10.22 | 5.95 | 7.63 | 3.37 | 1.44 |
| Exp. 2 | : | : | : | LR | | | |
| S1 | 6.41 | 486.3 | 12.71 | 5.31 | 15.2 | 6.65 | 7.43 |
| S2 | 6.18 | 570.8 | 13.77 | 5.00 | 29.3 | 5.81 | 4.89 |
| S3 | 5.58 | 859.1 | 11.20 | 4.48 | 24.9.0 | 6.62 | 7.33 |
| S4 | 4.67 | 632.9 | 11.01 | 3.36 | 27.8 | 6.06 | 5.54 |
| S5 | 5.59 | 1741 | 13.99 | 4.21 | 46.6 | 6.92 | 8.52 |


© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Lages, M.; Heron, S. On the Aperture Problem of Binocular 3D Motion Perception. Vision 2019, 3, 64. https://doi.org/10.3390/vision3040064
