Horsing Around—A Dataset Comprising Horse Movement

Abstract

1. Summary

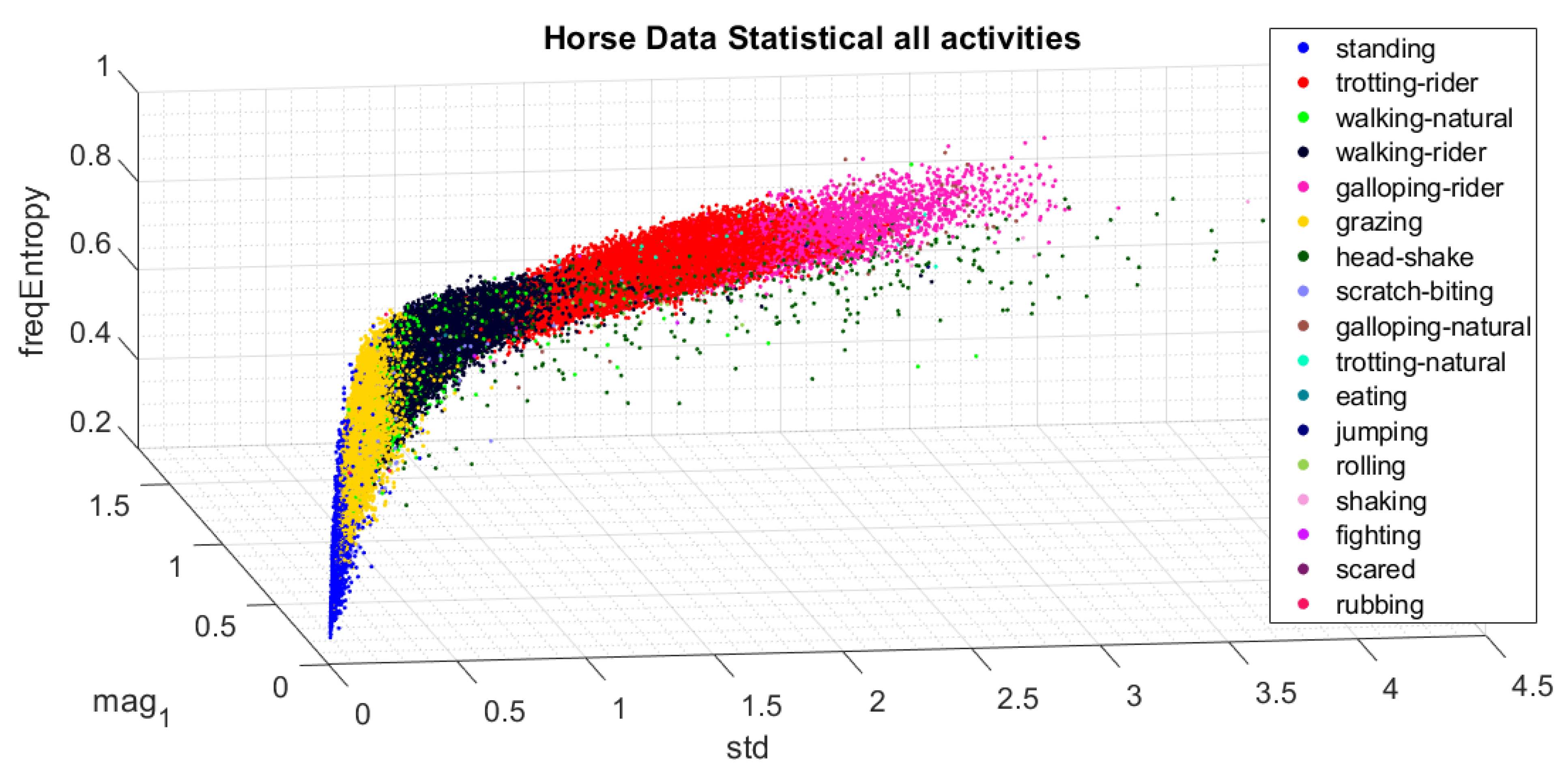

2. Data Description

File Structure

- /matlab
  - Folder that contains the datasets in Matlab format, organized per subject number (%ID) and name (%NAME) as subject_%ID_%NAME.mat. The columns of the tables are described in Table 1. Each row in the tables denotes one raw data sample.
- /csv
  - Folder that contains the datasets in .csv format. Each .csv file has a maximum number of rows, so each dataset is split into multiple .csv parts (denoted by %PART in the filename). Files are named as follows: subject_%ID_%NAME_part_%PART.csv.
- subject_mapping[ .xlsx, .csv ]
  - A table that maps the name of each subject to an integer subject identifier.
- activity_distribution[ .xlsx, .csv ]
  - A table containing the number of data samples per activity for each subject (Table 2).
- settings[ .xlsx, .csv ]
  - A table that lists the settings used to organize the dataset and the activity_distribution table.
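
As a minimal sketch of how the split .csv files could be read back in order, the snippet below parses the part number from each filename and reads the parts numerically (so that part 10 follows part 2 rather than part 1). It assumes the naming pattern described above with a `.csv` extension, integer %PART values, and a header row in every part; the helper names `part_number` and `load_subject_rows` are hypothetical and not part of the dataset tooling.

```python
import csv
import re
from pathlib import Path

# Assumed filename pattern: subject_%ID_%NAME_part_%PART.csv
PART_RE = re.compile(r"^subject_(\d+)_(.+)_part_(\d+)\.csv$")


def part_number(path):
    """Return the integer %PART of a file name, or None if it does not match."""
    match = PART_RE.match(Path(path).name)
    return int(match.group(3)) if match else None


def load_subject_rows(csv_dir, subject_id):
    """Yield the rows (as dicts) of one subject, reading its parts in numeric order."""
    candidates = Path(csv_dir).glob(f"subject_{subject_id}_*_part_*.csv")
    parts = sorted(
        (p for p in candidates if part_number(p) is not None),
        key=part_number,
    )
    for part in parts:
        # Assumption: every part starts with the same header row.
        with open(part, newline="") as handle:
            yield from csv.DictReader(handle)
```

Because the rows are yielded lazily, a caller can stream a whole subject without holding every part in memory, for example `for row in load_subject_rows("csv", 3): ...`.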

3. Methods

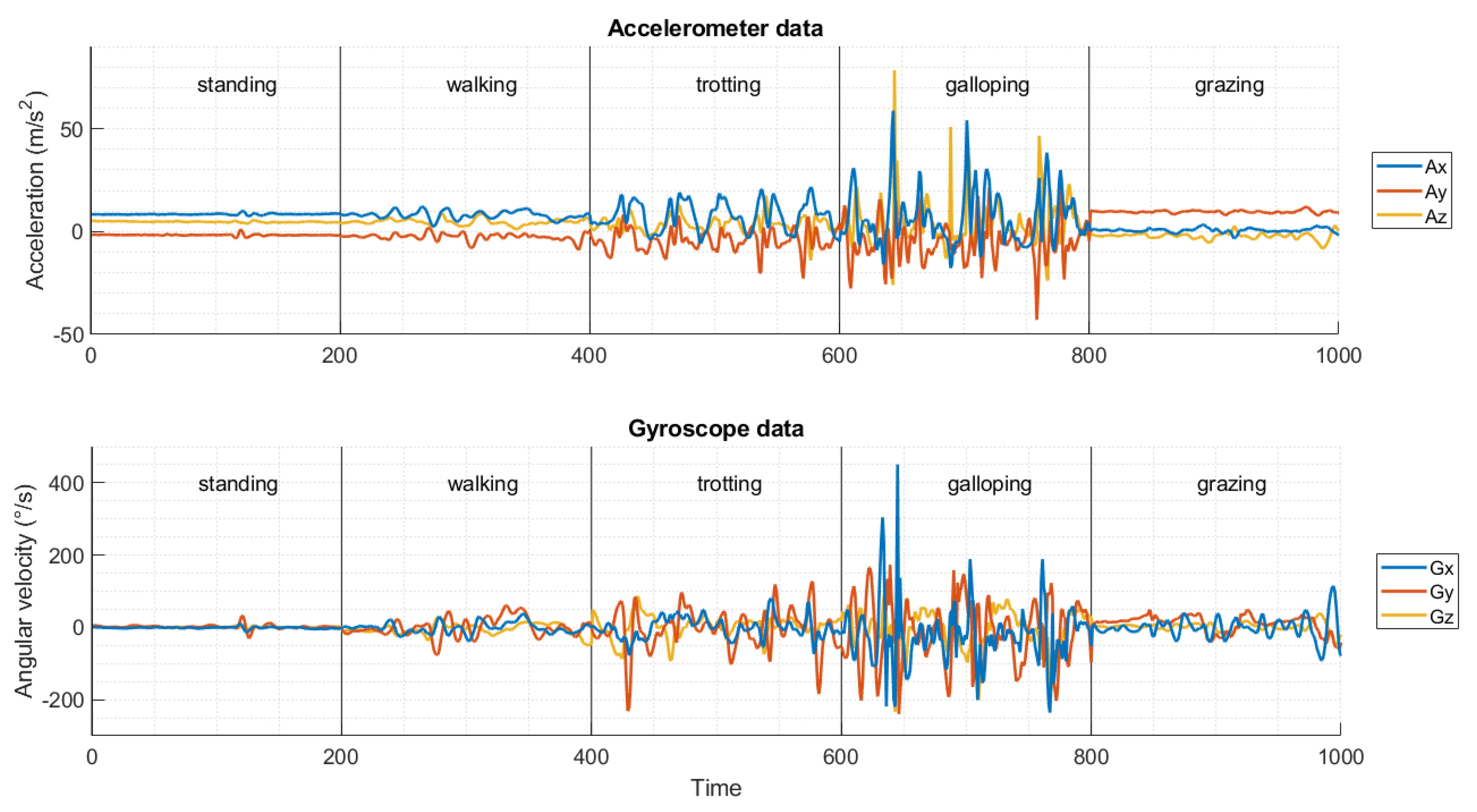

3.1. Data Acquisition

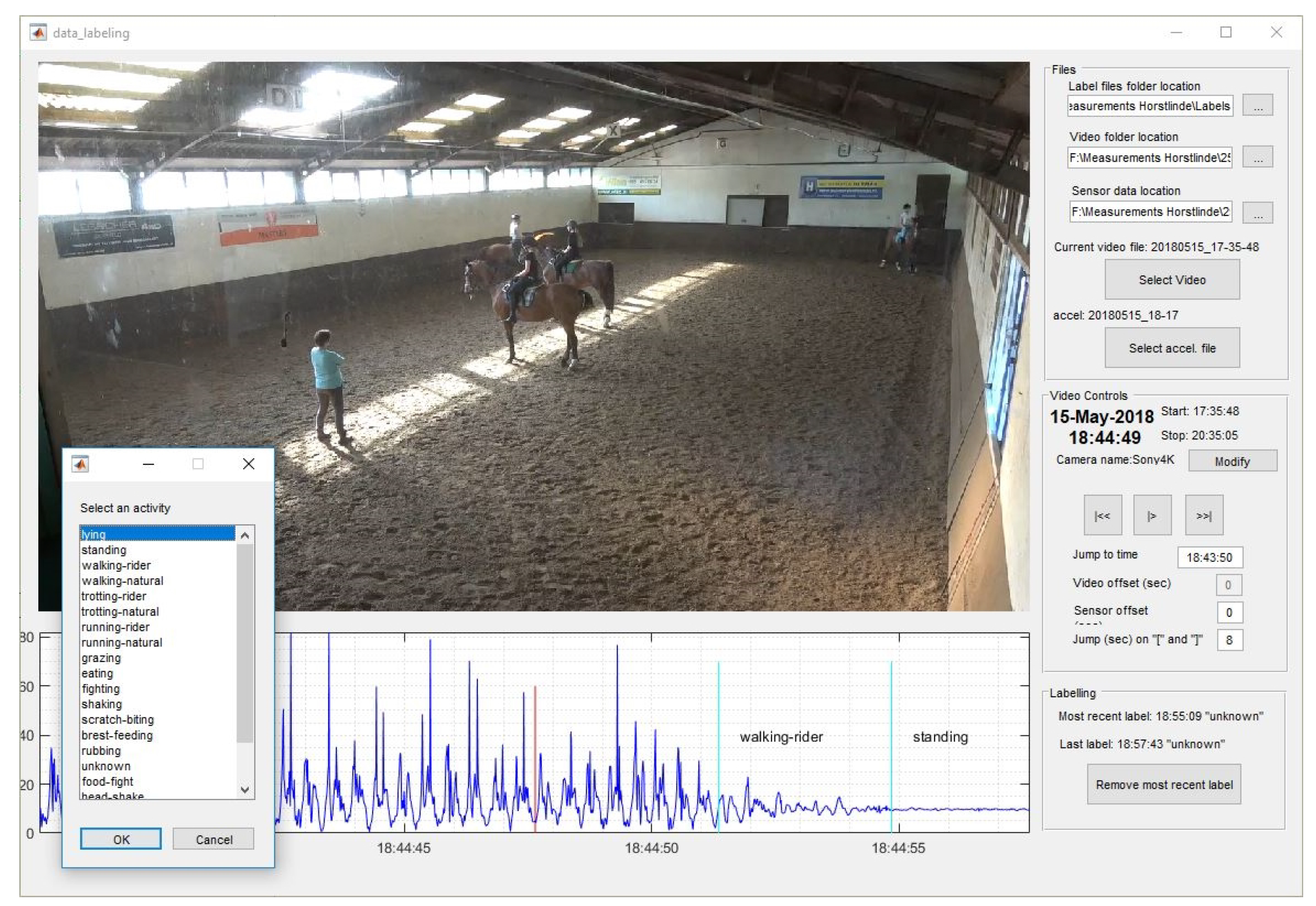

3.2. Data Labeling

Author Contributions

Funding

Acknowledgments

Conflicts of Interest


Table 1. Description of the columns in the dataset tables.

| Column Name | Description |
|---|---|
| Ax | Raw data from accelerometer x-axis |
| Ay | Raw data from accelerometer y-axis |
| Az | Raw data from accelerometer z-axis |
| Gx | Raw data from gyroscope x-axis |
| Gy | Raw data from gyroscope y-axis |
| Gz | Raw data from gyroscope z-axis |
| Mx | Raw data from compass (magnetometer) x-axis |
| My | Raw data from compass (magnetometer) y-axis |
| Mz | Raw data from compass (magnetometer) z-axis |
| A3D | l2-norm (3D vector) of accelerometer axes |
| G3D | l2-norm (3D vector) of gyroscope axes |
| M3D | l2-norm (3D vector) of compass axes |
| label | Label that belongs to each row's data |
| segment | Each activity has been segmented with a maximum length of 10 s. Data within one segment is continuous. Segments have been numbered incrementally. |
| subject | Subject identifier |
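
The A3D, G3D, and M3D columns can be illustrated with a short sketch. It assumes that each of them is the plain Euclidean norm of the three raw axes, for example A3D = sqrt(Ax² + Ay² + Az²), as the column descriptions suggest; the function names `l2_norm` and `add_norm_columns` are hypothetical.

```python
import math


def l2_norm(x, y, z):
    """Euclidean (l2) norm of one three-axis sample."""
    return math.sqrt(x * x + y * y + z * z)


def add_norm_columns(row):
    """Return a copy of a row dict with A3D, G3D and M3D recomputed from the axes."""
    out = dict(row)
    for prefix in ("A", "G", "M"):
        # Values read from a .csv file arrive as strings, hence the float() calls.
        out[prefix + "3D"] = l2_norm(
            float(row[prefix + "x"]),
            float(row[prefix + "y"]),
            float(row[prefix + "z"]),
        )
    return out
```

Recomputing the norms this way is one possible sanity check on a loaded file: the recomputed values should agree with the stored A3D, G3D, and M3D columns if the assumption above holds.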

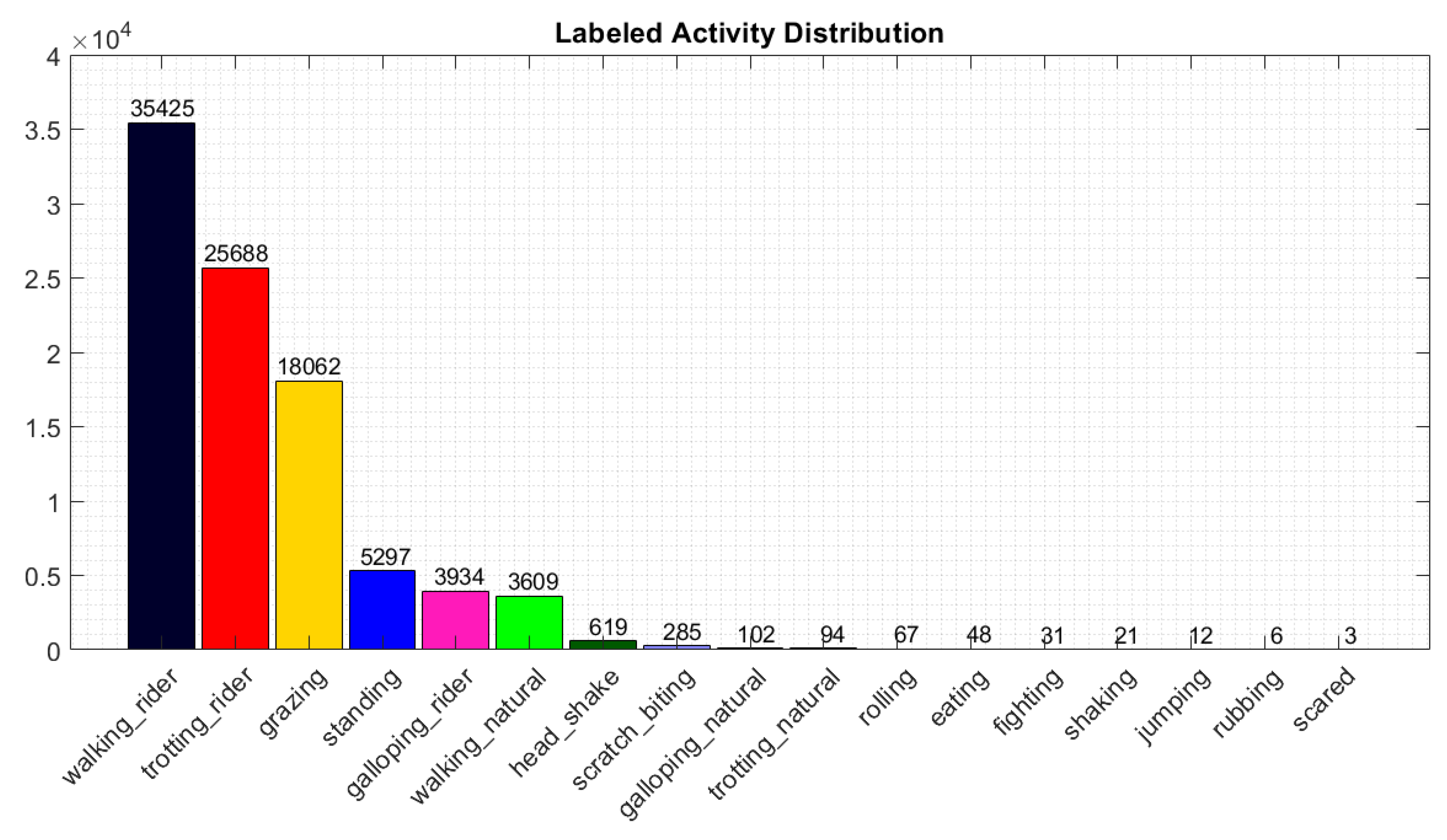

Table 2. Number of data samples per activity for each subject.

| Name/Activity | Null | Unknown | Walking_Rider | Trotting_Rider | Grazing | Standing | Galloping_Rider | Walking_Natural | Head_Shake | Scratch_Biting | Galloping_Natural | Trotting_Natural | Rolling | Eating | Fighting | Shaking | Jumping | Rubbing | Scared | Total |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Galoway | 62,155 | 23,264 | 9653 | 6374 | 4315 | 1750 | 1030 | 1402 | 59 | 170 | 13 | 49 | 13 | 16 | 25 | 4 | 110,292 | |||
| Bacardi | 92,775 | 9850 | 1317 | 1981 | 1116 | 245 | 288 | 360 | 22 | 40 | 13 | 6 | 108,013 | |||||||
| Driekus | 85,468 | 11,271 | 4024 | 2670 | 2465 | 341 | 310 | 270 | 55 | 14 | 13 | 3 | 23 | 31 | 4 | 106,962 | ||||
| Patron | 78,536 | 15,156 | 5150 | 3385 | 1951 | 1244 | 709 | 388 | 37 | 5 | 17 | 31 | 106,609 | |||||||
| Happy | 68,468 | 13,606 | 8896 | 7032 | 5062 | 1186 | 689 | 746 | 238 | 8 | 7 | 6 | 1 | 105,945 | ||||||
| Zonnerante | 90,431 | | | | | | | | | | | | | | | | | | | 90,431 |
| Duke | 81,885 | | | | | | | | | | | | | | | | | | | 81,885 |
| Viva | 69,441 | 4413 | 1066 | 700 | 58 | 82 | 79 | 5 | 4 | 1 | 75,849 | |||||||||
| Flower | 75,741 | | | | | | | | | | | | | | | | | | | 75,741 |
| Pan | 68,628 | 1575 | 241 | 36 | 44 | 70,524 | ||||||||||||||
| Porthos | 67,080 | | | | | | | | | | | | | | | | | | | 67,080 |
| Barino | 66,517 | | | | | | | | | | | | | | | | | | | 66,517 |
| Zafir | 38,424 | 10,349 | 5078 | 3546 | 1091 | 347 | 826 | 161 | 105 | 23 | 9 | 13 | 12 | 59,984 | ||||||
| Niro | 43,563 | 2740 | 85 | 20 | 2 | 46,410 | ||||||||||||||
| Sense | 38,823 | 1569 | 1977 | 39 | 157 | 120 | 44 | 15 | 6 | 6 | 2 | 42,758 | ||||||||
| Blondy | 31,579 | | | | | | | | | | | | | | | | | | | 31,579 |
| Noortje | 17,777 | 2878 | 31 | 20,686 | ||||||||||||||||
| Clever | 17,696 | | | | | | | | | | | | | | | | | | | 17,696 |
| total | 1,094,987 | 96,671 | 35,425 | 25,688 | 18,062 | 5297 | 3934 | 3609 | 619 | 285 | 102 | 94 | 67 | 48 | 31 | 21 | 12 | 6 | 3 | 1,284,961 |
| fraction | 85.22% | 7.52% | 2.76% | 2.00% | 1.41% | 0.41% | 0.31% | 0.28% | 0.05% | 0.02% | 0.01% | 0.01% | 0.005% | 0.004% | 0.002% | 0.002% | 0.001% | 0.000% | 0.000% | 100.00% |
| fraction of labeled | | | 37.97% | 27.53% | 19.36% | 5.68% | 4.22% | 3.87% | 0.66% | 0.31% | 0.11% | 0.10% | 0.072% | 0.051% | 0.033% | 0.023% | 0.013% | 0.006% | 0.003% | |
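
The two fraction rows can be reproduced from the "total" row with a few lines of arithmetic. The sketch below assumes that "fraction" divides each activity total by the total number of samples, and that "fraction of labeled" divides by the number of samples remaining after the Null and Unknown classes are removed; this assumption matches the percentages listed in the table. The function names are hypothetical.

```python
# Totals per activity, copied from the "total" row of the table.
totals = {
    "Null": 1_094_987, "Unknown": 96_671, "Walking_Rider": 35_425,
    "Trotting_Rider": 25_688, "Grazing": 18_062, "Standing": 5_297,
    "Galloping_Rider": 3_934, "Walking_Natural": 3_609, "Head_Shake": 619,
    "Scratch_Biting": 285, "Galloping_Natural": 102, "Trotting_Natural": 94,
    "Rolling": 67, "Eating": 48, "Fighting": 31, "Shaking": 21,
    "Jumping": 12, "Rubbing": 6, "Scared": 3,
}

all_samples = sum(totals.values())  # 1,284,961 in the table
# Assumption: "labeled" means every sample that is neither Null nor Unknown.
labeled = all_samples - totals["Null"] - totals["Unknown"]


def fraction(activity):
    """Share of an activity among all samples, in percent."""
    return 100.0 * totals[activity] / all_samples


def fraction_of_labeled(activity):
    """Share of an activity among the labeled samples only, in percent."""
    return 100.0 * totals[activity] / labeled
```

For example, `round(fraction("Null"), 2)` gives 85.22 and `round(fraction_of_labeled("Walking_Rider"), 2)` gives 37.97, in line with the table. The labeled portion amounts to 93,303 samples, which shows how strongly the dataset is dominated by the Null class.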

| Name | Type |
|---|---|
| Viva | horse |
| Driekus | horse |
| Galoway | horse |
| Barino | horse |
| Zonnerante | horse |
| Patron | horse |
| Duke | horse |
| Porthos | horse |
| Bacardi | horse |
| Happy | horse |
| Clever | horse |
| Zafier | horse |
| Noortje | pony |
| Blondy | pony |
| Flower | pony |
| Peter Pan | pony |
| Niro | horse |
| Sense | horse |

| Activity | Description |
|---|---|
| Standing | Horse standing still on four legs, no movement of the head |
| Walking natural | No rider on horse; the horse puts each hoof down one at a time, creating a four-beat rhythm |
| Walking rider | Rider on horse; the horse puts each hoof down one at a time, creating a four-beat rhythm |
| Trotting natural | No rider on horse; one front hoof and its opposite hind hoof come down at the same time, creating a two-beat rhythm. Different speeds are possible, but the gait is always two-beat |
| Trotting rider | Rider on horse; one front hoof and its opposite hind hoof come down at the same time, creating a two-beat rhythm. Different speeds are possible, but the gait is always two-beat |
| Galloping natural | No rider on horse; one hind leg strikes the ground first, then the other hind leg and one foreleg come down together, then the other foreleg strikes the ground. This movement creates a three-beat rhythm |
| Galloping rider | Rider on horse; can be right or left leaning. One hind leg strikes the ground first, then the other hind leg and one foreleg come down together, then the other foreleg strikes the ground. This movement creates a three-beat rhythm |
| Jumping | All legs off the ground, going over an obstacle |
| Grazing | Head down in the grass, eating and slowly moving to reach new patches of grass |
| Eating | Head is up, chewing and eating food, usually hay or long grass |
| Head shake | Shaking the head alone, no body shake, with the head either up or down |
| Shaking | Shaking the whole body, including the head |
| Scratch biting | Horse uses its head/mouth to scratch, mostly its front legs |
| Rubbing | Rubbing its body against an object to scratch itself |
| Fighting | Horses try to bite and kick each other |
| Rolling | Horse lying down on the ground, rolling on its back from one side to the other; not always a full roll |
| Scared | Quick, sudden movement; the horse is startled |

| Parameter | Accelerometer | Gyroscope | Magnetometer |
|---|---|---|---|
| Unit | m/s² | °/s | μT |
| Sampling rate (Hz) | 100 | 100 | 12 |
| Full scale range | 78.45 m/s² (8 g) | 2000 °/s | 1200 μT |
| Sensitivity | 9.8 m/s² (1 g) | 1 °/s | 1 μT |
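
Two of these figures can be cross-checked with simple arithmetic. The sketch below assumes the standard gravity constant of 9.80665 m/s² for converting g to m/s², and combines the sampling rates with the 10 s maximum segment length from the segment column description to bound the number of sensor readings per segment. The function names are hypothetical, and the snippet makes no claim about how the 12 Hz magnetometer readings are aligned with the 100 Hz rows.

```python
STANDARD_GRAVITY = 9.80665  # m/s^2 per g (standard gravity, an assumed constant)


def g_to_ms2(value_g):
    """Convert an acceleration expressed in g to m/s^2."""
    return value_g * STANDARD_GRAVITY


def max_samples_per_segment(sampling_rate_hz, segment_seconds=10):
    """Upper bound on readings in one segment of at most `segment_seconds`."""
    return int(sampling_rate_hz * segment_seconds)
```

With these helpers, `round(g_to_ms2(8), 2)` reproduces the 78.45 m/s² full scale range, and `max_samples_per_segment(100)` gives 1000 accelerometer or gyroscope samples for a full 10 s segment, against 120 readings for the magnetometer at 12 Hz.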

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Kamminga, J.W.; Janßen, L.M.; Meratnia, N.; Havinga, P.J.M. Horsing Around—A Dataset Comprising Horse Movement. Data 2019, 4, 131. https://doi.org/10.3390/data4040131
