1. Introduction

The advent and wide use of digital technologies for science publishing have triggered a fast-moving avalanche of scholarly communications. Papers are piling up at an exponential rate as young investigators toil to meet the high demands of an academic system that is increasingly led by numbers rather than by judgement [1, 2]. In such a scenario, an ever-increasing number of scientists compete with one another for available funding, as well as for limited seats in the ranks of academia. And they all know that the impact of their science is the single most highly regarded factor in boosting their chances of success in the competitive race [3].

It is thus not surprising that so much importance is attached to quantitative measures of scientific impact based on the counting of papers’ citations. A key factor here is the h-index, which was first proposed by Jorge Hirsch in 2005 and has since taken the scientific community by storm, forever popularizing citation counting for individual researchers [4, 5, 6]. The metric is now widely embraced as a key to success: recruiters routinely ask candidates for their h-index values, some universities pay money on the basis of the number, and web tools make it easy to track [7, 8, 9].

Evaluating in this way has the obvious advantage of applying the same standards to all researchers within the same area. Objective measures such as the h-index are expected to create a level playing field for assessing the quality of researchers in each academic discipline. This, in turn, allows fairer and less biased assessment of the work of individuals, research groups, and departments, thus countering favoritism and nepotism in hiring decisions and resource allocations. However, abuse of such indicators by excessive self-citing can generate perverse and unintended effects on the direction of research [10]. Preventing this requires that self-citation data become more transparent, explainable, and accountable.

2. Bibliometrics and Peer Judgment

The appeal to numbers as aids to human judgment is a very common narrative in modern science. This is so not only because numbers promise precision, but also because they can bring about a kind of disinterested assessment. As Theodore Porter points out, “[a] decision made by the numbers […] has at least the appearance of being fair and impersonal” [11]. However, assessing science only by the numbers has its disadvantages as well.

For one thing, there are attributes that matter to recruitment and funding agencies that quantitative measures can hardly capture. Reliability, dedication, fairness to co-workers, availability to students and colleagues, project management skills, originality, curiosity, independence, and so on do not lend themselves easily to objective measurement and quantification, and certainly current bibliometric indices have little to reveal about them. What is more, excessive emphasis on citation counts could drive research efforts towards the safest topics, that is, those that guarantee more immediate dividends in terms of visibility and citations. This could channel disproportionate amounts of effort and money towards the latest, most fashionable areas of research, while leaving other important areas underfunded. As a consequence, scientifically riskier or less conventional paths may be discouraged by a system that primarily rewards how quickly one’s research gets picked up by others.

In light of these downsides, the current obsession with quantitative assessment may seem disproportionate. Yet, if regarded properly, such measures, which are under constant development and scrutiny, can still serve their purposes. In particular, paying attention to the ways in which manipulation of objective measurements can lead to distortions in the evaluation of scientific impact and productivity is critical. Here, we briefly consider how excessive self-citation may alter the reliability of the h-index and call for enhanced transparency, not as a long-term solution, but rather as a means for scientists across the various disciplines to better understand, define, and address appropriate citation behavior within their respective fields.

3. The Dangers of Excessive Self-Citation

Self-citing is permissible when scholarly work is used in subsequent research; however, many see it as a slippery slope to abuse for personal gratification and self-promotion. Excessiveness is incentivized by a recent trend in scientific management decision-making to rely heavily on bibliometrics to hire, promote, and fund. Not surprisingly, much time is now spent by scientists, as one group of authors recently expressed, taking “professional selfies” and hoping that the likes (i.e., cites) will soon follow [12]. The resulting image can look so fake and unnatural that it fails to capture the essence of the research at all [13]. Furthermore, the pressure to “publish or perish” may lead some scientists to strategically publish a series of short papers to amplify self-cites instead of having each paper tell a significant story.

For sure, people working in academia generally share the intuition that including unnecessary self-citations is a bad habit. However, it is not clear whether and in which circumstances self-citing is ethically wrong and deserves moral sanction. For one thing, all can do it. This entails that including unneeded self-citations in a manuscript, albeit being somewhat dishonorable, is not in and of itself unfair. Since it does not prevent others from doing the same, self-citing does not give rise to an unfair advantage. One can still argue, however, that since researchers at more advanced stages of their careers have more authored papers to self-cite, they enjoy a systematic competitive advantage over their younger colleagues. It follows from this consideration that citation counts could be adjusted to academic age, but not necessarily that the practice of superfluous self-citation is unethical, at least as far as individual agency is concerned.

Questioning the good faith of scientists seems exaggerated. Therefore, we had better concede the presumption of ethical innocence to researchers who self-cite, unless we want to sanction a behavior that, in most cases, is likely to be perfectly acceptable. Yet, as we have shown, the abuse of self-citation is in a certain sense deplorable and leads to undesirable effects. Ultimately, excessive self-citation will undermine the very aim of assessing scientific productivity by boosting individual careers artificially and driving both taxpayers’ money and private investment off the path of truly valuable research.

Of utmost concern, excessive self-citing can change the overall patterns of papers’ citations, thus producing considerable impact on career trajectories [10]. For example, one report has shown that each self-cite yields roughly an additional three cites from others over a five-year period [14]. This, combined with consistently self-boosting citations across papers (particularly the less popular ones) over many years, can lead to significant differences in researcher profiles.

Excessive self-citing is especially troubling news for women, given that men cite their own papers 56% more on average according to an analysis of 1.5 million studies published between 1779 and 2011 [15]. The study, which considered articles across disciplines in the digital scholarly library JSTOR, found that men’s self-citation rate had risen to 70% more than women’s in the last two decades despite an increase of women in academia in recent years [15]. Here, self-citation, when used excessively, can only exacerbate the already existing disadvantage that affects women scientists in terms of visibility and recognition in scientific fields. In this respect, self-citing can jeopardize the very ideals of fairness that quantitative measures such as the h-index are meant to embody.

4. Call for Self-Citation Data Transparency

4.1. Current Limitations on Reporting

Action should be taken to ensure that excessive self-citing does not interfere with the process of recognizing and rewarding good science. To this end, the citation tracking databases Web of Science and Scopus have made it possible to exclude self-citations from performance reports. Removing self-citation points from scorecards goes a long way in stopping deliberate attempts to boost citation counts; however, it does nothing to address the domino effect (cite yourself and others will follow [14]). A more aggressive strategy, which some favor, involves penalizing, but in practice this would likely trigger scientifically unjustifiable levels of self-censorship. Furthermore, policing would provoke endless debates over what qualifies as bad behavior and may criminalize warranted self-citations that are a result of coordinated, sustained, and productive research efforts [16]. Penalizing is either technically insufficient or excessively stigmatizing and does not take into account that each disciplinary community will have its own standards of appropriateness with respect to the use of self-citations. Simply put, attempts to exclude or penalize self-citing at best provide modest improvements to how we measure productivity and impact, but ultimately fall short of promoting good citation habits.

4.2. The Self-Citation Index

We propose additional metrics based on transparency rather than top-down criteria and sanctions. For example, a self-citation index (referred to here as an s-index), calculated similarly to the h-index, provides details regarding the proportion and placement of self-citations within the total number of citations that a scientist has received over the course of his or her career. The expectation in sharing such information is that it will raise awareness and subsequently direct more careful attention towards our existing citation practices and problems. In the long run, each disciplinary community will adjust of its own accord to its perceived standard of what is to be considered an acceptable use of self-citations.

In this way, we can continue to reap the benefits of objective assessment, correct some limits of existing indices, and, importantly, avoid sanctioning individual behavior directly through fixed criteria. Rather, an s-index aims at bringing about change through transparency. By providing a quantitative measure of how much a given author has resorted to self-citation, such an index promotes self-correction through visibility in a community of peers.

Introducing a self-citation index and reporting the number of self-citations over one’s career, as well as at the manuscript level, should help dampen the incentive to excessively self-cite, not to mention aid in identifying high-quality research. Tallying self-citations is arguably the easiest and most powerful aid to help address career-driven, strategic self-promotion. The tools are already in place and with a few simple adjustments we have the following:

s-index: A scientist has a self-citation index s if s of the papers that he or she has published have each received at least s self-citations.
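The definition above can be sketched in a few lines of Python. This is a minimal illustration rather than a reference implementation: the function name `hirsch_style_index` and the per-paper counts are hypothetical, and the sketch assumes that, as with the h-index, the largest qualifying value of s is the one reported.

```python
from typing import Iterable


def hirsch_style_index(counts: Iterable[int]) -> int:
    """Return the largest n such that n papers each have a count of at least n.

    Fed with self-citation counts per paper, this gives the s-index;
    fed with total citation counts per paper, it gives the h-index.
    """
    ranked = sorted(counts, reverse=True)
    index = 0
    for rank, count in enumerate(ranked, start=1):
        if count >= rank:
            index = rank
        else:
            break
    return index


# Hypothetical self-citation counts for one researcher's seven papers.
self_citations_per_paper = [9, 6, 4, 4, 2, 1, 0]
print(hirsch_style_index(self_citations_per_paper))  # prints 4
```

In this made-up profile, four papers have each received at least four self-citations, but there are not five papers with at least five, so s = 4.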

4.3. Example of Implementation

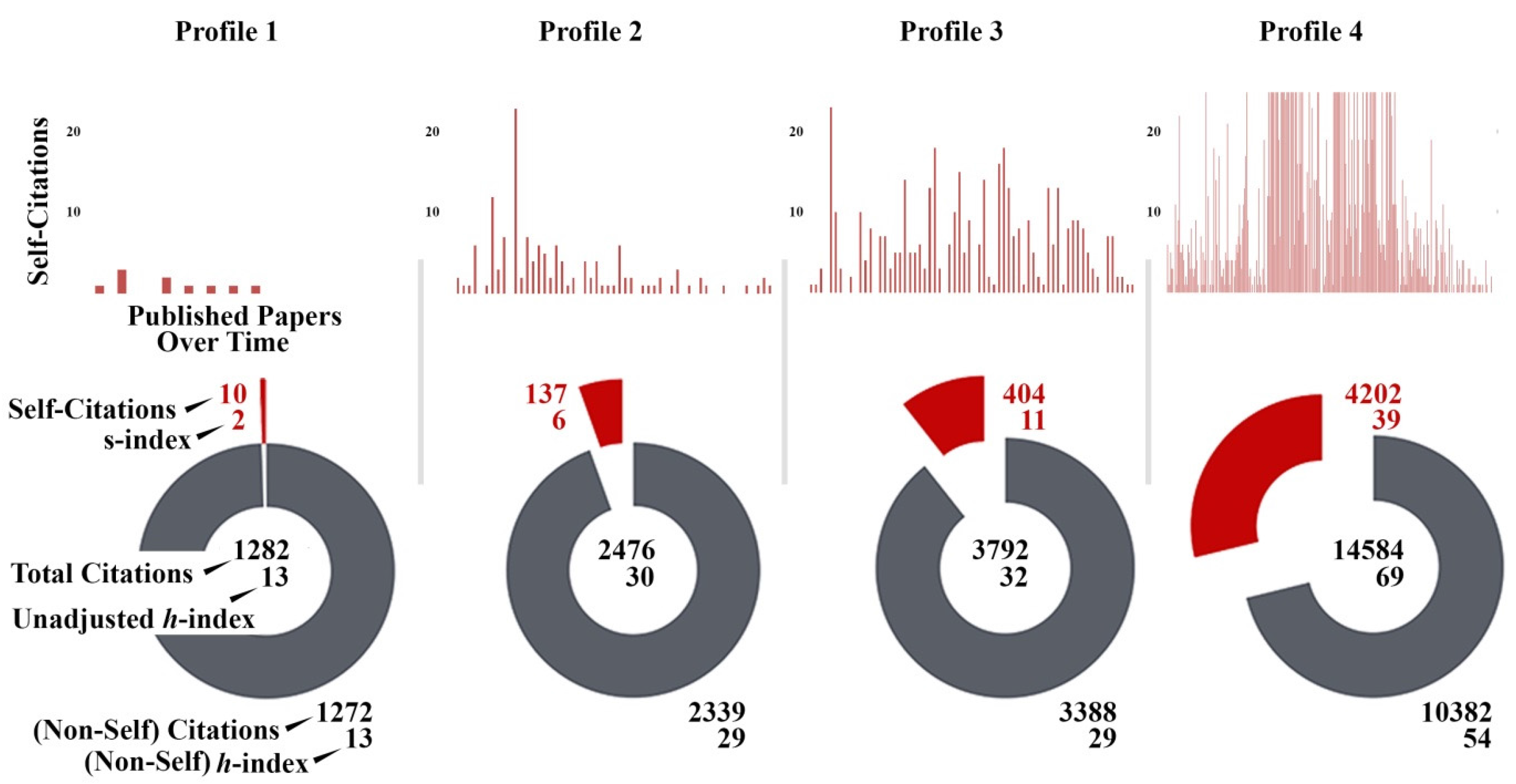

Figure 1 shows one of many possible ways of reporting self-citation behavior. In brief, profiles were generated for four biomedical researchers from the same field of research using papers deposited in PubMed, which is the full-text archive of biomedical and life sciences literature stored at the National Institutes of Health’s National Library of Medicine. Citations for each publication were sorted and counted based on whether or not the researcher in question was included on the author list of the paper doing the citing. We define self-citation in this manner so that researchers receive s-index scores that reflect only the papers where they were directly involved in deciding what gets cited in the reference list. Aside from focusing on individual author citation behavior, one could also consider cumulative self-promotion at the paper level by counting each instance where anyone from the author list cites the work against the total number of cites from the surrounding community.
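The two counting rules described above (author level and paper level) can be illustrated with a short Python sketch. Everything in it is assumed for illustration: the `Paper` record, the function names, and the author names are hypothetical, and matching authors by an exact name string sidesteps the real problem of author disambiguation.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Tuple


@dataclass(frozen=True)
class Paper:
    """Hypothetical minimal record: an identifier plus the author list."""
    paper_id: str
    authors: FrozenSet[str]


def author_level_counts(researcher: str, citing: List[Paper]) -> Tuple[int, int]:
    """Return (self-cites, cites from others) for one researcher.

    A citation is a self-cite only if the researcher in question
    appears on the author list of the citing paper.
    """
    self_cites = sum(1 for p in citing if researcher in p.authors)
    return self_cites, len(citing) - self_cites


def paper_level_counts(cited: Paper, citing: List[Paper]) -> Tuple[int, int]:
    """Return (self-cites, cites from others) for one paper.

    A citation is a self-cite if any author of the cited paper
    appears on the author list of the citing paper.
    """
    self_cites = sum(1 for p in citing if cited.authors & p.authors)
    return self_cites, len(citing) - self_cites


# Made-up example: one cited paper and the four papers that cite it.
cited = Paper("P0", frozenset({"A. Rossi", "B. Chen"}))
citing = [
    Paper("P1", frozenset({"A. Rossi", "C. Diaz"})),
    Paper("P2", frozenset({"B. Chen"})),
    Paper("P3", frozenset({"D. Okafor"})),
    Paper("P4", frozenset({"A. Rossi"})),
]

print(author_level_counts("A. Rossi", citing))  # prints (2, 2)
print(paper_level_counts(cited, citing))        # prints (3, 1)
```

The same four citations yield two self-cites for the individual researcher but three at the paper level, which shows why the choice of definition needs to be reported alongside any self-citation figure.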

Clearly, there are differences in self-citing behavior between the four scientists. For example, the researchers in profiles 2 and 3 are at a similar stage in their careers with a similar number of publications; however, the scientist represented by profile 3 has cited themselves 267 more times, distributing self-citations more evenly across all published articles. This increases the h-index value reported for profile 3 over profile 2 in a way that will become dramatic in time. Interestingly, adjusting the h-index so that self-cites are not counted puts profiles 2 and 3 on more equal footing, except that profile 3 will still have more citations from others, perhaps in part due to the so-called domino effect of self-citing [14].
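The adjustment mentioned above, an h-index computed with self-cites left out, can be sketched as follows. The per-paper numbers are invented for illustration and are not taken from Figure 1; the function names are likewise hypothetical.

```python
from typing import List, Tuple


def h_index(counts: List[int]) -> int:
    """Largest h such that h papers each have at least h citations."""
    h = 0
    for rank, count in enumerate(sorted(counts, reverse=True), start=1):
        if count >= rank:
            h = rank
        else:
            break
    return h


def h_with_and_without_self(papers: List[Tuple[int, int]]) -> Tuple[int, int]:
    """Return (raw h-index, h-index excluding self-citations).

    Each entry in `papers` is (total citations, self-citations),
    with self-citations already included in the total.
    """
    totals = [total for total, _ in papers]
    from_others = [total - self_cites for total, self_cites in papers]
    return h_index(totals), h_index(from_others)


# Invented profile: (total citations, of which self-citations) per paper.
profile = [(20, 2), (12, 5), (9, 6), (7, 5), (6, 5), (5, 4), (3, 3)]
print(h_with_and_without_self(profile))  # prints (5, 3)
```

In this invented profile, self-citations spread across mid-ranked papers lift the reported h-index from 3 to 5, the kind of gap that reporting both values side by side would make visible.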

Fully understanding self-citing differences requires that we first be forthcoming about such basic data as self-citation counts. Above all, citation tracking tool makers must recognize that transparency is a move in the right direction. Our hope in showing data represented as in Figure 1 is to encourage developers of tracking tools to report self-citation data, as well as to stimulate more formal studies on far larger data sets across databases. One obstacle is that self-citation information is currently not freely accessible, making large-scale studies problematic. The Initiative for Open Citations (https://i4oc.org) is working to allow unrestricted availability of citation data to scholars. As more thorough studies are conducted, the resulting deeper knowledge of self-citation behavior will greatly enhance discussions on what is acceptable versus what is excessive.

5. Conclusions

An s-index will increase the usefulness of the metric upon which it is based, the h-index, by allowing us to look at citation counts from a different, one could say truer, angle. Researchers will be less likely to blatantly boost their own scores while others are watching. Instead, we expect that showing the data will encourage authors, reviewers, and editors alike to give more thoughtful attention to the citation process, which should ultimately enhance the informative character of future manuscripts. Likewise, funding agencies and academic institutions, in light of the new self-citing data, and especially as outstanding studies become easier to identify, can improve evaluation procedures.

Citing is a serious matter as it serves to link together ideas, technologies, and advances in today’s packed and perplexing world of published science, and yet often young researchers receive no formal training in how to appropriately select manuscript references, nor do they explore the ethics of citation.

If this does not change, we risk diminishing the connectivity and usefulness of scientific communications, especially in the face of publication overload.

The confusing glare reflected from excessive self-citing threatens to dramatically alter the rate and direction of science. New results are oft-times fitted to text that showcases the researcher rather than the research. Doing so regularly over an extended period of time provides a clear advantage in the prevailing academic publishing game where no one can afford to go underrecognized. Principal investigators running larger, well-established labs consisting of many postdocs can do it best by publishing at breakneck speeds, whereas young aspiring scientists, who find themselves in smaller laboratory settings, will struggle to keep up. In this way, strategic self-citing directly influences the flow of ideas and pressures early-career researchers to be more risk-averse, choosing “safer” projects over those with greater potential for major gain in an effort to contend for precious research money. Until we reconsider the criteria for judging and rewarding performance, many scholars will remain inclined to embrace excessiveness with respect to self-citing and “safe” thinking with regard to projects, which can be counter-productive to rapid dissemination of truly groundbreaking advances.

Without doubt, writers and reviewers play a key role in ensuring citation quality, but as bibliometrics are here to stay for the foreseeable future, a metrics-based solution is essential. An s-index will help curb excessive use of self-citation, thus providing an important contribution to the fair and objective assessment of scientific impact and productivity.