Improving Sports Outcome Prediction Process Using Integrating Adaptive Weighted Features and Machine Learning Techniques

Abstract

1. Introduction

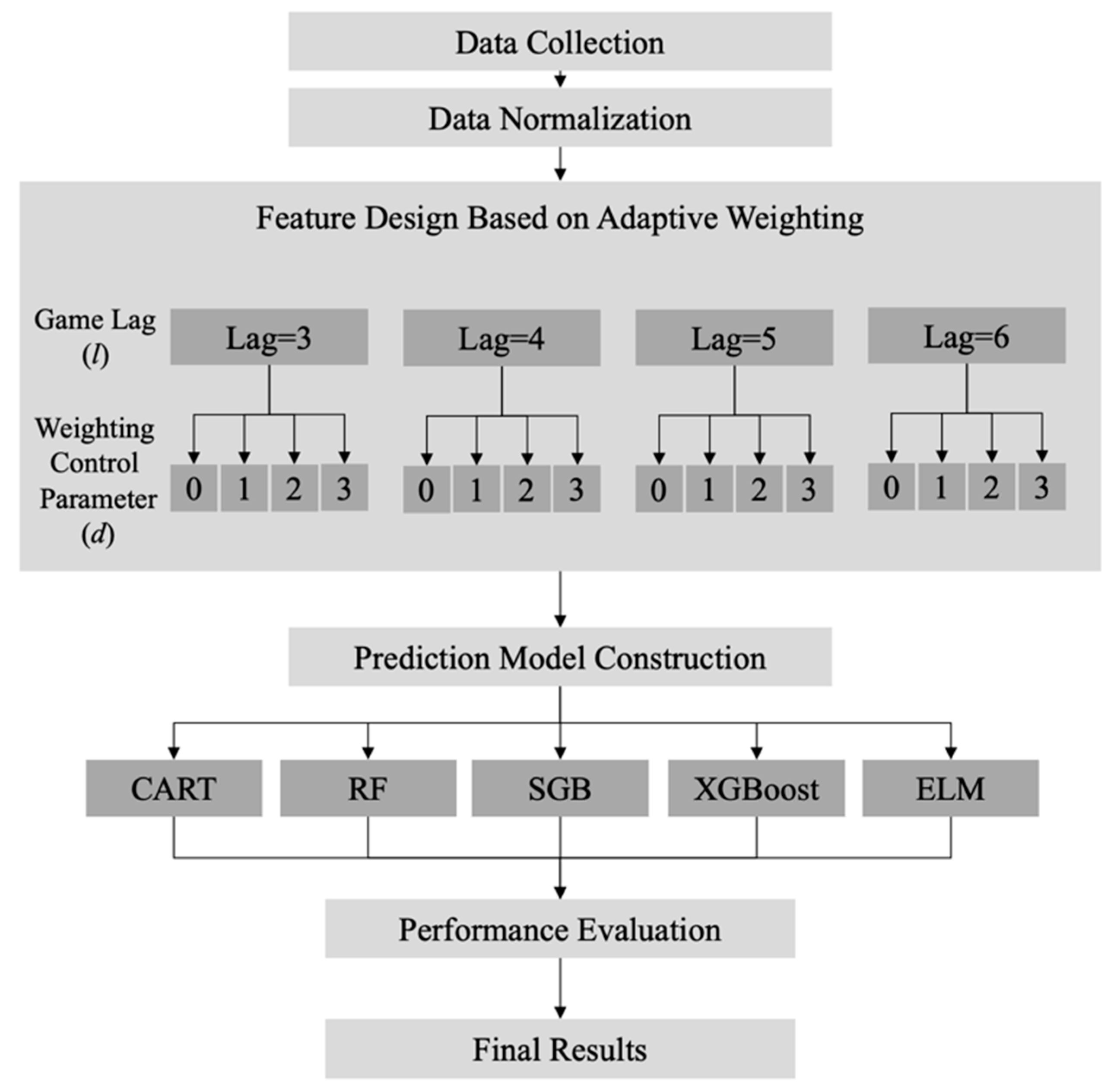

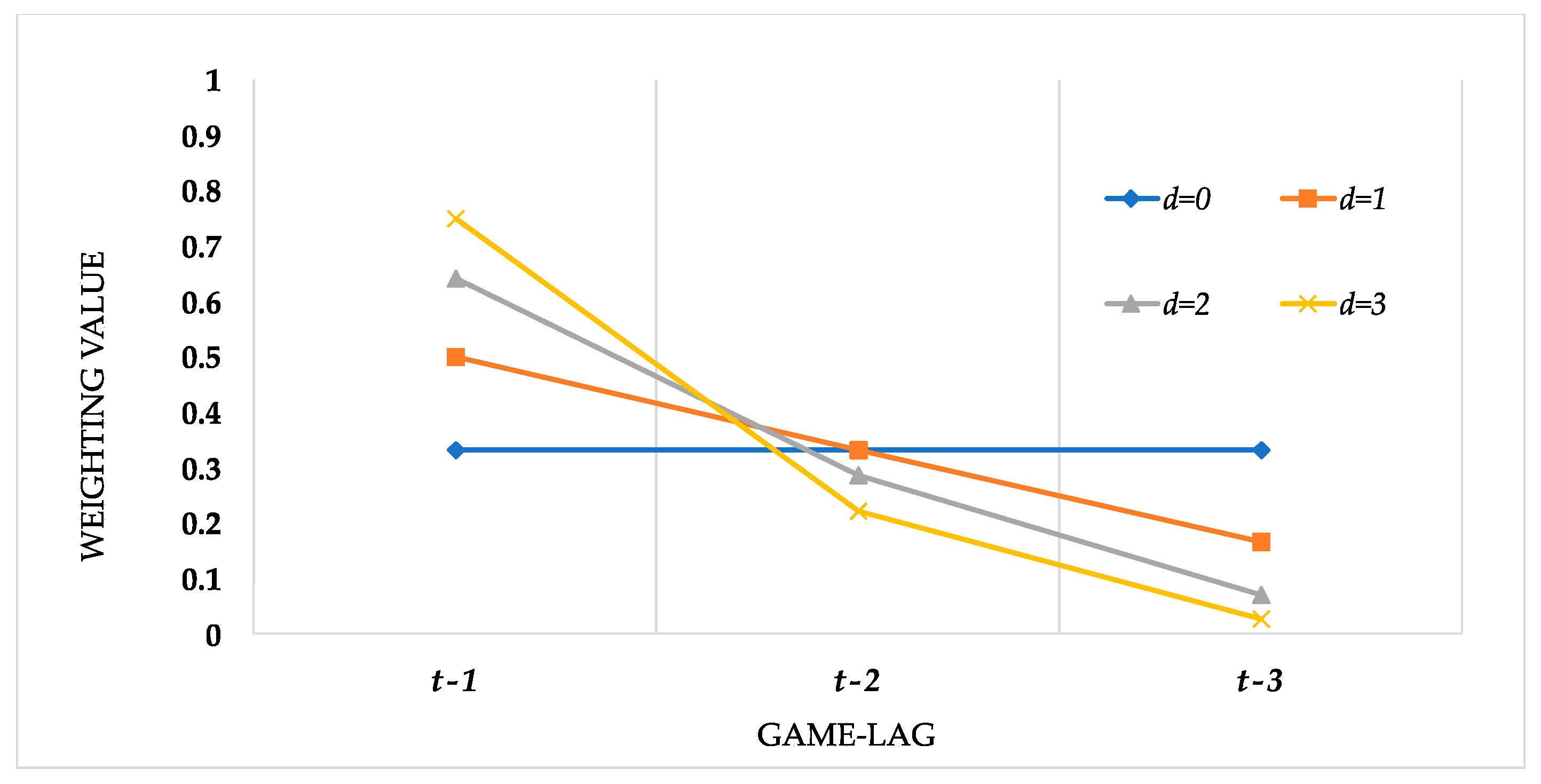

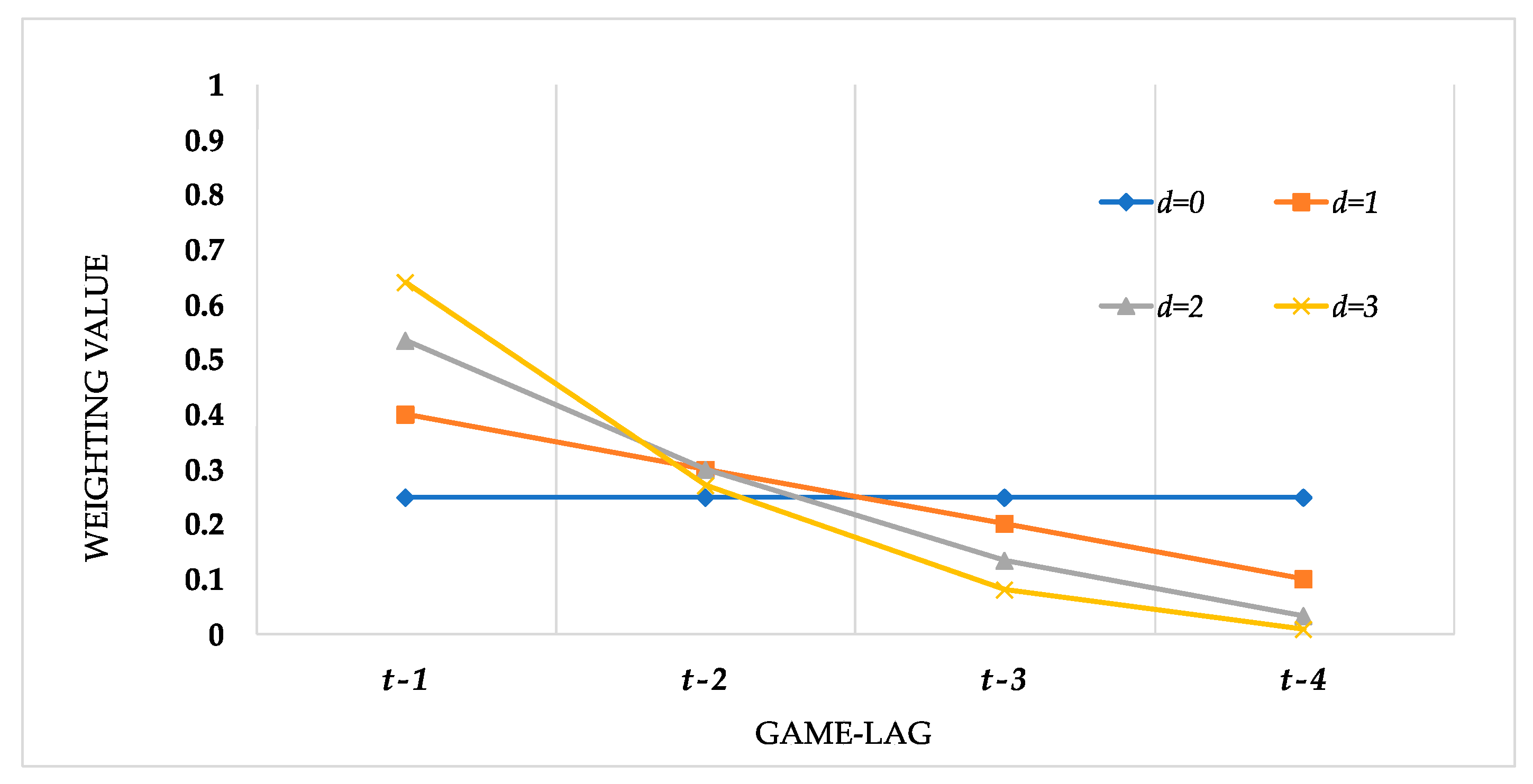

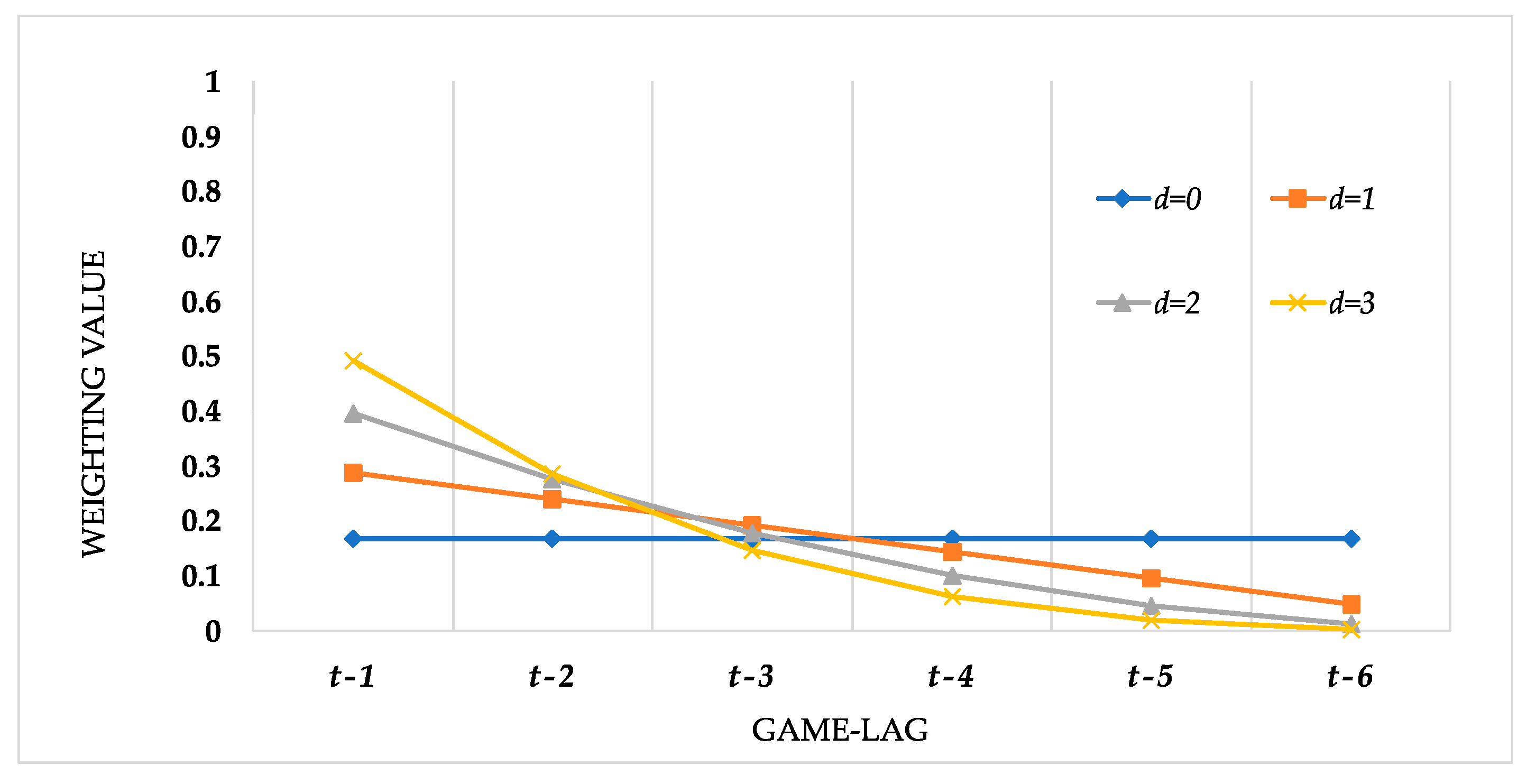

2. Methodology

3. Proposed Sports Outcome Prediction Process

4. Empirical Results

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

| Variable | Description |
|---|---|
| 2PA | 2-Point Field Goal Attempts of a team in a game |
| 2P% | 2-Point Field Goal Percentage of a team in a game |
| 3PA | 3-Point Field Goal Attempts of a team in a game |
| 3P% | 3-Point Field Goal Percentage of a team in a game |
| FTA | Free Throw Attempts of a team in a game |
| FT% | Free Throw Percentage of a team in a game |
| ORB | Offensive Rebounds of a team in a game |
| DRB | Defensive Rebounds of a team in a game |
| AST | Assists of a team in a game |
| STL | Steals of a team in a game |
| BLK | Blocks of a team in a game |
| TOV | Turnovers of a team in a game |
| PF | Personal Fouls of a team in a game |
| H/A | Home or away game for a team |
| Score | Score of a team in a game |
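
The variables above are per-game team statistics, and the results tables in this section vary a game lag (3 to 6 previous games) and a weighting control parameter d (0 to 3). The text that defines the weighting is not part of this section, so the following Python sketch rests on an assumption: an inverse distance weighting (IDW) style scheme in which the game k games back receives a weight proportional to 1/k^d, normalized to sum to 1. Under that assumption, d = 0 gives a plain average of the last `lag` games and larger d puts more weight on recent games. The function names `idw_weights` and `weighted_lag_feature` are hypothetical and are not taken from the paper.

```python
import math
from typing import List, Sequence


def idw_weights(lag: int, d: float) -> List[float]:
    """Hypothetical IDW-style weights for the previous `lag` games.

    Position 0 is the most recent previous game (distance 1) and position
    lag - 1 is the oldest (distance lag). Weights are proportional to
    1 / distance**d and are normalized to sum to 1.
    """
    raw = [1.0 / (k ** d) for k in range(1, lag + 1)]
    total = sum(raw)
    return [r / total for r in raw]


def weighted_lag_feature(history: Sequence[float], lag: int, d: float) -> List[float]:
    """Weighted average of one statistic (e.g. AST) over the previous `lag` games.

    `history` holds a team's per-game values in chronological order. The
    result is aligned with `history`; the first `lag` entries are NaN because
    fewer than `lag` earlier games exist. The current game is never included.
    """
    weights = idw_weights(lag, d)
    out: List[float] = []
    for t in range(len(history)):
        if t < lag:
            out.append(math.nan)
            continue
        previous = list(history[t - lag:t])[::-1]  # most recent game first
        out.append(sum(w * x for w, x in zip(weights, previous)))
    return out
```

For example, `weighted_lag_feature([100, 104, 98, 110, 95], lag=3, d=1)` leaves the first three entries as NaN and gives 100 (up to floating-point rounding) for the fourth game, because the weights for lag = 3 and d = 1 are 6/11, 3/11 and 2/11.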

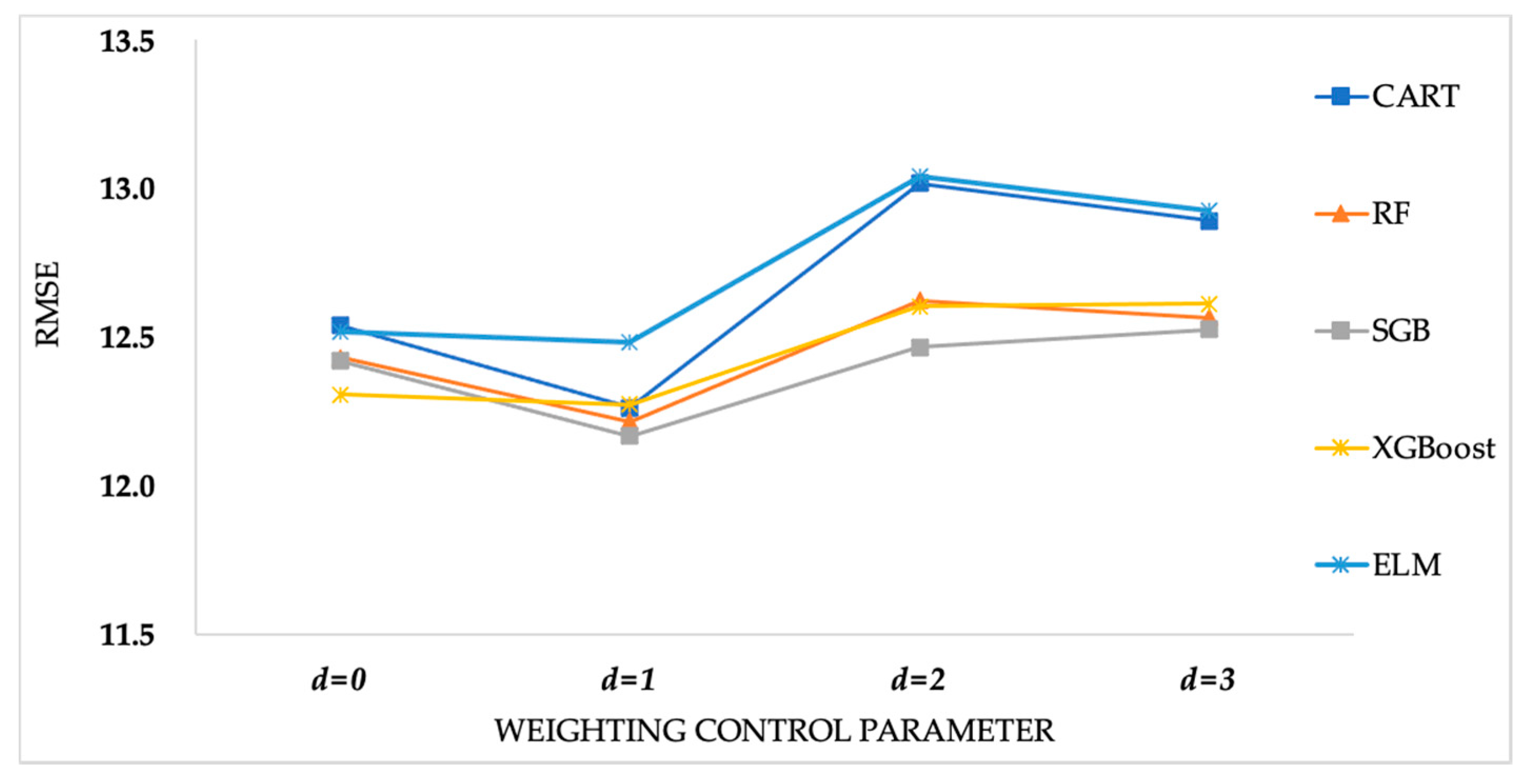

Mean (SD) by game lag, method, and weighting control parameter d:

| Game-Lag Selection | Method | d = 0 | d = 1 | d = 2 | d = 3 |
|---|---|---|---|---|---|
| Lag = 3 | CART | 12.5396 (0.3290) | 12.2611 (0.3306) | 13.0188 (0.4692) | 12.8924 (0.2148) |
| | RF | 12.4307 (0.2905) | 12.2159 (0.3745) | 12.6226 (0.4834) | 12.5646 (0.2224) |
| | SGB | 12.4195 (0.2497) | 12.1671 (0.3266) | 12.4670 (0.4863) | 12.5261 (0.2312) |
| | XGBoost | 12.3062 (0.2659) | 12.2736 (0.3173) | 12.6042 (0.4987) | 12.6124 (0.2152) |
| | ELM | 12.5172 (0.3316) | 12.4846 (0.3203) | 13.0403 (0.4648) | 12.9261 (0.1883) |
| Lag = 4 | CART | 12.3434 (0.4804) | 11.7564 (0.2878) | 12.9753 (0.4243) | 12.4579 (0.3524) |
| | RF | 11.9571 (0.3822) | 11.6303 (0.2608) | 12.6935 (0.4393) | 12.2696 (0.3283) |
| | SGB | 12.0481 (0.4366) | 11.5586 (0.2914) | 12.6784 (0.3873) | 12.1120 (0.3628) |
| | XGBoost | 12.0491 (0.4159) | 11.6941 (0.2787) | 12.6785 (0.4090) | 12.0791 (0.3280) |
| | ELM | 12.2817 (0.3972) | 11.8020 (0.2599) | 12.9334 (0.3991) | 12.4511 (0.3401) |
| Lag = 5 | CART | 13.1326 (0.2213) | 12.4316 (0.2403) | 12.8013 (0.2307) | 13.0725 (0.4351) |
| | RF | 12.6148 (0.2084) | 12.1525 (0.2545) | 12.5351 (0.3057) | 12.8432 (0.4818) |
| | SGB | 12.6969 (0.2198) | 12.2448 (0.2307) | 12.6745 (0.1237) | 12.9750 (0.3807) |
| | XGBoost | 12.7785 (0.1925) | 12.3145 (0.2506) | 12.6760 (0.1069) | 12.8648 (0.3881) |
| | ELM | 13.0344 (0.1970) | 12.6748 (0.2529) | 13.0100 (0.1896) | 13.0235 (0.4071) |
| Lag = 6 | CART | 12.3738 (0.2968) | 12.0896 (0.3365) | 12.9175 (0.3717) | 12.4925 (0.3025) |
| | RF | 12.3614 (0.2805) | 12.0245 (0.4672) | 12.9398 (0.3260) | 12.3072 (0.3375) |
| | SGB | 12.1067 (0.3232) | 12.0293 (0.3484) | 12.9223 (0.2830) | 12.0876 (0.2995) |
| | XGBoost | 12.1655 (0.3143) | 11.9898 (0.3171) | 12.8547 (0.3208) | 12.2934 (0.3074) |
| | ELM | 12.3923 (0.2942) | 12.2520 (0.3485) | 13.0727 (0.2992) | 12.3416 (0.3309) |
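
To compare configurations in the Mean (SD) table programmatically, the Python sketch below transcribes its means and looks for the lowest value. It assumes the reported values are prediction errors, so lower is better; the metric itself is not named in this section. The dictionary layout and the helper names `best_config` and `best_d` are illustrative only.

```python
# Means transcribed from the Mean (SD) table: MEANS[lag][method] = [d=0, d=1, d=2, d=3]
MEANS = {
    3: {"CART": [12.5396, 12.2611, 13.0188, 12.8924],
        "RF": [12.4307, 12.2159, 12.6226, 12.5646],
        "SGB": [12.4195, 12.1671, 12.4670, 12.5261],
        "XGBoost": [12.3062, 12.2736, 12.6042, 12.6124],
        "ELM": [12.5172, 12.4846, 13.0403, 12.9261]},
    4: {"CART": [12.3434, 11.7564, 12.9753, 12.4579],
        "RF": [11.9571, 11.6303, 12.6935, 12.2696],
        "SGB": [12.0481, 11.5586, 12.6784, 12.1120],
        "XGBoost": [12.0491, 11.6941, 12.6785, 12.0791],
        "ELM": [12.2817, 11.8020, 12.9334, 12.4511]},
    5: {"CART": [13.1326, 12.4316, 12.8013, 13.0725],
        "RF": [12.6148, 12.1525, 12.5351, 12.8432],
        "SGB": [12.6969, 12.2448, 12.6745, 12.9750],
        "XGBoost": [12.7785, 12.3145, 12.6760, 12.8648],
        "ELM": [13.0344, 12.6748, 13.0100, 13.0235]},
    6: {"CART": [12.3738, 12.0896, 12.9175, 12.4925],
        "RF": [12.3614, 12.0245, 12.9398, 12.3072],
        "SGB": [12.1067, 12.0293, 12.9223, 12.0876],
        "XGBoost": [12.1655, 11.9898, 12.8547, 12.2934],
        "ELM": [12.3923, 12.2520, 13.0727, 12.3416]},
}


def best_config(means):
    """Return (lag, method, d, mean) for the lowest mean in the table."""
    rows = (
        (lag, method, d, value)
        for lag, by_method in means.items()
        for method, values in by_method.items()
        for d, value in enumerate(values)
    )
    return min(rows, key=lambda row: row[3])


def best_d(means, lag):
    """For one lag, map each method to the d value with the lowest mean."""
    return {
        method: min(range(len(values)), key=lambda i: values[i])
        for method, values in means[lag].items()
    }
```

On these values, `best_config(MEANS)` returns `(4, 'SGB', 1, 11.5586)`, and `best_d(MEANS, lag)` picks d = 1 for every method at every lag.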

Confidence intervals (lower, upper) by game lag, method, and weighting control parameter d:

| Game-Lag Selection | Method | d = 0 | d = 1 | d = 2 | d = 3 |
|---|---|---|---|---|---|
| Lag = 3 | CART | (12.4751, 12.6041) | (12.1963, 12.3259) | (12.9268, 13.1107) | (12.8503, 12.9345) |
| | RF | (12.3737, 12.4876) | (12.1425, 12.2893) | (12.5279, 12.7173) | (12.5210, 12.6081) |
| | SGB | (12.3705, 12.4684) | (12.1030, 12.2311) | (12.3717, 12.5623) | (12.4808, 12.5715) |
| | XGBoost | (12.2541, 12.3583) | (12.2114, 12.3357) | (12.5065, 12.7020) | (12.5702, 12.6545) |
| | ELM | (12.4522, 12.5822) | (12.4218, 12.5473) | (12.9492, 13.1314) | (12.8892, 12.9630) |
| Lag = 4 | CART | (12.2493, 12.4376) | (11.7000, 11.8128) | (12.8921, 13.0584) | (12.3888, 12.5269) |
| | RF | (11.8822, 12.0320) | (11.5791, 11.6814) | (12.6074, 12.7796) | (12.2325, 12.3612) |
| | SGB | (11.9625, 12.1337) | (11.5015, 11.6157) | (12.6025, 12.7543) | (12.0409, 12.1832) |
| | XGBoost | (11.9675, 12.1306) | (11.6395, 11.7487) | (12.5983, 12.7586) | (12.0148, 12.1434) |
| | ELM | (12.2038, 12.3595) | (11.7511, 11.8529) | (12.8552, 13.0116) | (12.3845, 12.5187) |
| Lag = 5 | CART | (13.0892, 13.1759) | (12.3845, 12.4787) | (12.7561, 12.8465) | (12.9872, 13.1578) |
| | RF | (12.5740, 12.6557) | (12.1026, 12.2024) | (12.4752, 12.5951) | (12.7488, 12.9377) |
| | SGB | (12.6538, 12.7400) | (12.1996, 12.2901) | (12.6502, 12.6987) | (12.9003, 13.0496) |
| | XGBoost | (12.7408, 12.8162) | (12.2654, 12.3636) | (12.6551, 12.6970) | (12.7888, 12.9409) |
| | ELM | (12.9958, 13.0730) | (12.6253, 12.7244) | (12.9728, 13.0471) | (12.9437, 13.1033) |
| Lag = 6 | CART | (12.3156, 12.4319) | (12.0236, 12.1555) | (12.8446, 12.9903) | (12.4332, 12.5518) |
| | RF | (12.3064, 12.4163) | (11.9329, 12.1161) | (12.8759, 13.0037) | (12.2411, 12.3734) |
| | SGB | (12.0433, 12.1700) | (11.9610, 12.0975) | (12.8668, 12.9778) | (12.0289, 12.1463) |
| | XGBoost | (12.1039, 12.2271) | (11.9276, 12.0520) | (12.7918, 12.9175) | (12.2332, 12.3537) |
| | ELM | (12.3346, 12.4499) | (12.1837, 12.3203) | (13.0141, 13.1314) | (12.2767, 12.4064) |
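
The interval bounds in the CI table are consistent with a normal-approximation interval, mean ± 1.96 · SD / √n, with n = 100 repeated runs. The number of runs is not stated in this section; n = 100 is an assumption inferred from the interval widths. The Python sketch below recomputes one cell from the Mean (SD) table under that assumption; `normal_ci` is a hypothetical helper name.

```python
import math


def normal_ci(mean, sd, n, z=1.96):
    """Normal-approximation confidence interval for a mean: mean ± z * sd / sqrt(n)."""
    half_width = z * sd / math.sqrt(n)
    return mean - half_width, mean + half_width


# Lag = 3, CART, d = 0 from the Mean (SD) table: 12.5396 (0.3290).
# n = 100 is an assumption inferred from the interval widths, not stated in the text.
low, high = normal_ci(12.5396, 0.3290, n=100)
print(f"({low:.4f}, {high:.4f})")  # prints (12.4751, 12.6041)
```

Because the means and SDs are themselves rounded to four decimals, recomputed bounds can differ from the tabulated ones in the last digit.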

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Lu, C.-J.; Lee, T.-S.; Wang, C.-C.; Chen, W.-J. Improving Sports Outcome Prediction Process Using Integrating Adaptive Weighted Features and Machine Learning Techniques. Processes 2021, 9, 1563. https://doi.org/10.3390/pr9091563
