1. Introduction

In the petroleum and natural gas extraction industry, ensuring the stable operation of key production equipment presents a significant challenge. As an efficient type of artificial lift equipment, the Electric Submersible Progressive Cavity Pump (ESPCP) is a complex electromechanical device composed of multiple subsystems, whose primary function is to lift downhole oil and gas media to the surface production system. Compared to traditional centrifugal pump technology, this equipment can provide higher output torque under low-speed conditions, is particularly suitable for produced fluid environments with high solid content, and demonstrates broad application prospects. However, equipment failures often necessitate complex downhole operations and system debugging, leading to production interruptions and economic losses. Therefore, developing effective remote fault monitoring and diagnosis technologies is of significant practical engineering importance.

Limited by special downhole conditions and system complexity, the operational status of an ESPCP can only be monitored through a small set of sensor parameters, and it is difficult to establish accurate mathematical models to derive unmeasured parameters. In traditional maintenance modes, analysis typically relies on experienced technical personnel interpreting multi-source monitoring data such as motor current, rotational speed, and inlet/outlet pressure, combined with professional experience for fault judgment. However, such specialized technical talent is scarce, and their experiential knowledge is highly subjective and difficult to rapidly replicate through conventional training. This shortage drives the industry to develop intelligent fault diagnosis technologies to provide decision support for field operations.

In recent years, Deep Learning (DL) has shown significant advantages in the field of rotating machinery fault diagnosis [1]. It can effectively process high-dimensional nonlinear monitoring data and automatically extract fault features, demonstrating broad application prospects [2]. Typical deep learning methods include Convolutional Neural Networks (CNN) [3], Recurrent Neural Networks (RNN) [4,5,6], Autoencoders (AE) [7,8,9], Deep Belief Networks (DBN) [10,11], and various other algorithmic architectures. For handling strong noise and complex temporal dynamics in signals, Guo et al. proposed methods integrating wavelet transform with attention mechanisms: in ref. [12], a hybrid model combined WaveletKernelNet, CBAM, and BiLSTM for noise-robust feature extraction in drilling pumps, while in ref. [13], a parallel deep network was developed to synchronously analyze the time and time-frequency domains for meticulous feature examination. For addressing severe data imbalance and scarcity, Gao et al. [14] employed an enhanced CGAN to generate minority-class samples for ESP faults, Duan et al. [15] proposed MeanRadius-SMOTE to better handle class imbalance in mechanical diagnosis, and Xu et al. [16] combined ELM with MAML for few-shot adaptation in ESPCP diagnosis. For adapting to dynamic environments with new faults, Zhou et al. [17] proposed an online active kernel learning model that incrementally updates the diagnostic model by selectively querying informative samples under prior drift.

In the field of oil and gas production, this issue can be further elaborated. Current fault diagnosis technologies for downhole extraction equipment such as Electric Submersible Pumps (ESPs) or Electric Submersible Progressive Cavity Pumps (ESPCPs) have shifted from traditional mechanism-based models or statistical methods toward artificial intelligence algorithms [18]. This trend is typically accompanied by an increase in the dimensionality of observational data, greater complexity in model architectures, and enhanced methods for dataset processing. For multi-perspective observation of single-dimensional data, Liu et al. [19] employed a multi-scale 1D convolutional neural network to learn motor current characteristics of progressive cavity pump drive motors, constructing an ESPCP fault diagnosis model. In the direction of noise reduction for datasets, Li et al. [20] proposed a fault diagnosis method for progressive cavity pumps based on an improved wavelet packet and a BP neural network optimized by a dynamic adaptive cuckoo search algorithm, achieving accurate diagnosis by analyzing key characteristic parameters such as active power and dynamic fluid level. In the direction of addressing dataset imbalance, Xu et al. [21] conducted systematic research on electric submersible progressive cavity pumps, establishing a diagnostic model based on a probabilistic neural network combined with wavelet packet time-frequency analysis on one hand, and constructing a random forest model on the Hadoop platform for fault classification through multi-parameter fusion on the other hand. Although these methods have achieved success in several specific operational scenarios, a gap remains before they can be genuinely implemented in frontline oil and gas production.

Since the beginning of this century, researchers have begun exploring machine learning applications in fault diagnosis of submersible pumps, yet a comprehensive industrial solution has not been formed. From a data perspective, current mainstream methods typically construct complete and unified testbed datasets to ensure that differences within the data are controlled and arise solely from the target factors. However, when the data source cannot meet this requirement, i.e., when data originates from multiple independent cases, additional cleaning operations are necessary to address unknown discrepancies. On this basis, collaboration between mechanistic models and data-driven models requires in-depth consideration. Knowledge-guided methods have a relatively wide application scope, can handle various complex fault types, and exhibit strong adaptability to varying working conditions and environments. However, they often lack deeper perceptual and judgment capabilities. Data-driven methods, on the other hand, frequently face fragmented data distributions due to case differences. This prevents ordinary machine learning algorithms from effectively capturing features across larger datasets, thereby limiting model generalizability. Therefore, research in this field needs to further enhance the adaptability of methods to datasets, whether in terms of precision within limited scopes or their potential for transferability across broader contexts. Ou et al. [22] conducted a relatively in-depth analysis of this issue and proposed a method from the perspective of domain generalization that utilizes inter-domain similarity and inter-class differences for contrastive learning. This approach denoises the feature extraction process and enables unified training on mixed-domain datasets.

Unlike the research based on specific small-scale applications mentioned above, this article aims to propose a technical solution with greater adaptability and expansion potential in larger scenarios. We take the decomposition of overall system efficiency as the entry point to reconstruct a unified physical description framework for the system’s operational state. The focus of the model’s learning is then directed towards extracting common fault features that transcend individual case differences. To achieve this goal, the model must not only possess the capability to extract deep and complex features but also be able to automatically identify and suppress interference information introduced by specific working conditions, sensor biases, or individual differences—information that is irrelevant to the core fault mechanisms. For this purpose, we propose a dual-channel correlation attention mechanism. This mechanism integrates the global time-series autocorrelation characteristics of cases with the local inter-parameter cross-correlation relationships within samples. It is embedded into each layer of the multi-scale feature extraction network. This mechanism enables the model to dynamically perceive and quantify the specificity components within samples during the feature learning process. Subsequently, it suppresses these components inversely through attention weights, thereby forcing the network to focus more on learning common fault representations with cross-case generalization capabilities. Specifically, the model utilizes autocorrelation function (ACF) analysis to extract global temporal features that characterize the periodicity and stability of system operation. Simultaneously, it employs Pearson correlation coefficients to construct a local relationship graph among parameters and captures local coupling information between parameters via a Graph Convolutional Network (GCN). 
These two types of correlation information, captured from the temporal and parameter spaces respectively, are fused and used as the query vectors for a cross-attention mechanism. This guides the feature extraction network to concentrate on more universal fault patterns. It is important to emphasize that this method is primarily designed for application scenarios lacking long-term, stable learning conditions and targeting groups of multiple devices with similar internal mechanisms but individual variations. For instance, ideally, the model could be deployed across multiple mine site units with similar geological conditions and identical equipment models but differing operational histories and working conditions. Its core objective is to eliminate the interference of individual variability on the final diagnostic results, achieving reliable diagnosis based on common fault mechanisms.

In summary, fault diagnosis for ESPCPs applied in coalbed methane wells faces multiple challenges: uneven data distribution due to diverse sources, significant individual differences between cases, limited sample sizes, and severe noise in field data. Furthermore, the strong nonlinearity and time-varying nature of the system render its operational state akin to a “grey box.” To address the aforementioned issues, this paper proposes an ESPCP fault diagnosis method based on a Multi-scale Convolutional Residual Neural Network (MCRNet). This network architecture integrates multi-scale feature extraction with residual learning and innovatively incorporates the aforementioned dual-channel correlation attention mechanism. The aim is to enhance the model’s ability to extract stable, common fault features from complex, noisy, and non-stationary data, thereby improving the diagnostic model’s accuracy, robustness, and cross-case generalization capability. Additionally, to tackle the common issue of class imbalance in industrial field data, this method synthesizes minority class samples within the high-dimensional feature space learned by the model. This ensures the generated samples follow a distribution closer to the real physical process, thereby optimizing the classification boundaries. Experimental results indicate that compared to other mainstream diagnostic models, the proposed method achieves superior comprehensive diagnostic performance on an actual, severely imbalanced industrial dataset.

The main contributions of this paper include the following:

Reconstructing the label classification of the original dataset through subsystem efficiency decomposition, and proposing the use of common features and fault region definitions for major fault categories.

Designing a dual-channel correlation attention mechanism that integrates case-wise temporal autocorrelation and sample-wise local parameter cross-correlation. This enhances the model’s perception in specific application scenarios, thereby inversely strengthening its amplification effect on common features.

Constructing an original industrial field dataset, performing enhancement in high-dimensional feature space, and validating the superiority of the proposed method through experiments.

The structure of this paper is as follows:

Section 2 introduces the theoretical background of related methods, presents the mechanistic formulas of the target system, and the efficiency decomposition process;

Section 3 describes the design of the feature extraction module, how the attention mechanism identifies case specificity, and the dataset processing;

Section 4 presents the experimental results and discussion;

Section 5 provides the conclusions.

2. Failure Mechanism and Dataset

The ESPCP system consists of multiple modules, including the power cable connecting the surface power supply to the downhole motor, the drive motor providing power to the main pump unit, the mechanical transmission shaft responsible for transmitting motor torque, the progressive cavity pump main body for extracting underground fluids, and the pipeline transporting oil and gas to the surface. Its structure is shown in Figure 1. Typically, the ESPCP system is installed deep underground and operates for extended periods under the control of surface stations to ensure production stability. Its core component is the progressive cavity pump installed downhole. Its interior contains a rotating metal threaded rod combined with an outer stator rubber sleeve. Its working method involves generating multiple continuous cavities through rotor rotation, thereby creating a stable pressure differential. However, failures in any of the aforementioned parts can interfere with this process, affecting normal system operation.

According to the working principle of the progressive cavity pump, for a pump with stator lead $L$ (which represents the longitudinal length of the continuous cavity in the pump), when the rotor completes one revolution, the liquid in the enclosed cavity will move axially by distance $L$. Assuming the cavity is completely filled, the theoretical displacement per rotor revolution is

$$q = 4eDL,$$

and the theoretical displacement of the pump is

$$Q_t = 1440\,q\,n = 5760\,eDLn,$$

where $Q_t$ is the theoretical displacement of the pump in m³/d; $e$ is the eccentricity of the progressive cavity pump in m; $n$ is the rotational speed of the progressive cavity pump in r/min; $D$ is the diameter of the progressive cavity pump cross-section in m; $L$ is the stator lead in m. The overall operating efficiency of the progressive cavity pump system can be regarded as the ratio of actual flow to theoretical flow:

$$\eta = \frac{Q}{Q_t},$$

where $Q$ is the actual displacement of the pump in m³/d and $\eta$ is the overall operating efficiency of the progressive cavity pump.

Here, $\eta$ is actually influenced by multiple factors and can be considered as the parameter through which all common fault types ultimately hinder stable system operation. By simplifying the system components, this parameter can be decomposed into four smaller efficiency factors:

$$\eta = \eta_1\,\eta_2\,\eta_3\,\eta_4,$$

where $\eta_1$ is the efficiency of the drive motor utilizing electrical energy supplied from the surface; $\eta_2$ is the efficiency of the rotor obtaining torque from the drive motor; $\eta_3$ is the efficiency of the rotor actually lifting fluid at a fixed speed, and in certain specific cases is mainly determined by the volumetric efficiency $\eta_v$ of the progressive cavity pump; $\eta_4$ is the efficiency of fluid transport in the oil pipeline. During normal system operation, these four sub-efficiencies correspond to the working efficiency of the respective system parts and can cover almost all common fault effect ranges.

Assuming the current rotational speed is $n$, these four efficiency indicators are expressed in terms of the mechanical power output of the motor, the electrical energy supplied to the ESPCP by the surface system, the torque output of the motor, the torque of the pump rotor, and the actual outlet flow of the progressive cavity pump; the subsystem efficiency coefficients are constants. For clarity, Table 1 provides a comprehensive classification of all symbols and variables used in this section, categorizing them into parameters, directly measurable variables, and non-measurable variables based on their physical nature and measurability in field conditions.
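As a rough numerical illustration of the displacement and overall-efficiency relations, the sketch below assumes the common single-lobe relation of $4eDL$ swept per revolution and 1440 minutes per day. The function names and the sample geometry are hypothetical and are not taken from the field dataset.

```python
import math


def theoretical_displacement(e_m, d_m, lead_m, n_rpm):
    """Theoretical displacement in m^3/d.

    Assumes 4*e*D*L swept per rotor revolution (single-lobe pump)
    and 1440 minutes per day; inputs are in m, m, m and r/min.
    """
    per_revolution = 4.0 * e_m * d_m * lead_m  # m^3 per revolution
    return 1440.0 * per_revolution * n_rpm


def overall_efficiency(q_actual, q_theoretical):
    """Overall efficiency as actual flow divided by theoretical flow."""
    if q_theoretical <= 0:
        raise ValueError("theoretical displacement must be positive")
    return q_actual / q_theoretical


# Hypothetical geometry: e = 5 mm, D = 40 mm, L = 0.3 m, n = 200 r/min.
q_t = theoretical_displacement(0.005, 0.04, 0.3, 200)
eta = overall_efficiency(51.84, q_t)
print(round(q_t, 2), round(eta, 3))
```

Because the actual flow can never exceed the filled-cavity volume, the efficiency computed this way stays at or below one in normal operation, which is why the ratio is taken as actual over theoretical.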

In preliminary research, combining actual cases and expert experience, we found that since common faults follow certain behavioral patterns, fault factors mostly manifest as specific parameter variation trends and ultimately appear as abnormalities in these four efficiency factors. We divided the total system efficiency into four specific sub-efficiencies corresponding to components, then classified the data into six label categories: normal, power subsystem abnormality, mechanical transmission part abnormality, pump transport efficiency abnormality, oil pipeline blockage, oil pipeline leakage.

Figure 2 shows four common faults, corresponding to label types 1, 2, 3, and 4 respectively. Note that in the figure, the vertical axis represents the parameter values, while the horizontal axis represents time $T$ in seconds. In Figure 2a, the power cable is damaged due to harsh working conditions, causing abnormal fluctuations in power system transmission efficiency in time interval [0, 24,000] and slowly increasing transmission loss in interval [24,000, 80,000]. In Figure 2b, the transmission shaft fractures due to high load, showing early fault characteristics in interval [0, 25,000], with fault worsening at T = 60,000 causing the transmission system to malfunction, triggering continuous controller adjustments of set values. After this moment, the transmission shaft’s speed regulation capability severely declines, eventually necessitating shutdown maintenance. In Figure 2c, insufficient water injection causes slight dry pumping in the pump well, with volumetric efficiency fluctuations resulting in abnormal pump transport efficiency. Since dry pumping does not worsen, the most important mechanical transmission system and drive motor do not suffer serious damage. In Figure 2d, wax accumulation in the oil pipeline causes blockage, forming complex staged load changes with controller regulation effects. The operational data exhibits various change patterns, including fluctuations (interval [5000, 15,000]), brief shutdown (T = 16,000), long shutdown (interval [38,000, 52,000]), speed regulation delay (interval [53,000, 70,000]), oscillation (interval [85,000, 90,000]), etc. Considering different fault evolution speeds, deterioration modes, and other external factors, the aforementioned fault characteristics cannot cover all respective label categories and only represent relatively typical change patterns. Complex fault characteristic distribution is also a common problem faced by deep learning methods on such industrial datasets.

Under the aforementioned label classification method, we labeled relevant data from historical fault cases of a coalbed methane well field and integrated it into a dataset for training deep learning models. The preliminary standardization processing of the data is as follows:

Step 1: Wavelet-based Denoising. First, wavelet basis decomposition is applied to filter out high-frequency noise from the raw data. Specifically, for the voltage-related parameters that are susceptible to power grid fluctuations (i.e., Column 2: output voltage and Column 6: input voltage), we employed a discrete wavelet transform (DWT) using the Daubechies 4 (‘db4’) wavelet with five decomposition levels. A universal thresholding technique was used for noise removal: the noise standard deviation was robustly estimated using the median absolute deviation (MAD), and a global threshold was computed as $\lambda = \sigma\sqrt{2\ln N}$, where $\sigma$ is the MAD-based noise estimate and $N$ is the length of the coefficients. Hard thresholding was applied to all detail coefficients and the final approximation coefficient; that is, coefficients with absolute values below the threshold were set to zero. The denoised signal was then reconstructed from the thresholded coefficients. This approach effectively suppresses high-frequency noise while preserving critical fault-induced transients in the data.

Step 2: Combine sliding window method with box plots to remove outliers in local signals;

Step 3: Use quadratic linear interpolation to fill missing values;

Step 4: Perform Z-score standardization on all dimensions of fault data. The formula is

$$z = \frac{x - \mu}{\sigma},$$

where $x$ is the parameter value of the variable; $\mu$ is the local mean of the variable; $\sigma$ is the normal standard deviation of the variable.
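The thresholding, outlier, and standardization steps can be sketched in plain Python as follows. This is only an illustration under stated assumptions: the wavelet coefficients are taken as already computed (the db4 decomposition and reconstruction are omitted), the 0.6745 scaling of the MAD is the usual Gaussian consistency constant rather than a value given in the text, the box-plot rule is taken as the conventional 1.5 × IQR fence, and all function names and window sizes are hypothetical.

```python
import math
import statistics


def mad_sigma(coeffs):
    """Robust noise estimate from the median absolute deviation."""
    med = statistics.median(coeffs)
    deviations = [abs(c - med) for c in coeffs]
    return statistics.median(deviations) / 0.6745  # assumed constant


def universal_threshold(coeffs):
    """Global threshold sigma * sqrt(2 ln N) for N coefficients."""
    return mad_sigma(coeffs) * math.sqrt(2.0 * math.log(len(coeffs)))


def hard_threshold(coeffs, thr):
    """Set coefficients with magnitude below thr to zero."""
    return [c if abs(c) >= thr else 0.0 for c in coeffs]


def iqr_outlier_mask(values, window=7, k=1.5):
    """Flag points outside the box-plot fences of their local window."""
    half = window // 2
    mask = []
    for i, v in enumerate(values):
        local = values[max(0, i - half): i + half + 1]
        q1, _, q3 = statistics.quantiles(local, n=4)
        iqr = q3 - q1
        mask.append(v < q1 - k * iqr or v > q3 + k * iqr)
    return mask


def z_score(values, mean, std):
    """Standardize with a supplied mean and standard deviation."""
    return [(v - mean) / std for v in values]


detail = [0.1, -0.2, 0.15, -0.05, 5.0, 0.1, -0.1, 0.05]
print(hard_threshold(detail, universal_threshold(detail)))
```

Passing the mean and standard deviation into `z_score` explicitly, rather than computing them from the sample, leaves room for the local mean and normal-condition deviation described in Step 4.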

After completing the aforementioned data standardization process, we obtained dimensionless sample data. Based on this, we used correlation analysis and the sliding window method to rearrange and slice the data dimensions. Traditional fault monitoring methods for submersible centrifugal pumps use Hotelling’s $T^2$ statistic and the squared prediction error (SPE) after PCA dimensionality reduction to perceive changes in relationships between multidimensional system variables. The basic principle is that when the system is in normal operation, multiple variables maintain relatively stable relationships, while the occurrence of a fault disrupts many of the mapping relationships among them, causing a rapid increase in fault warning indicators. Therefore, relationships between variables contain external representations of fault patterns, which need to be extracted and identified.

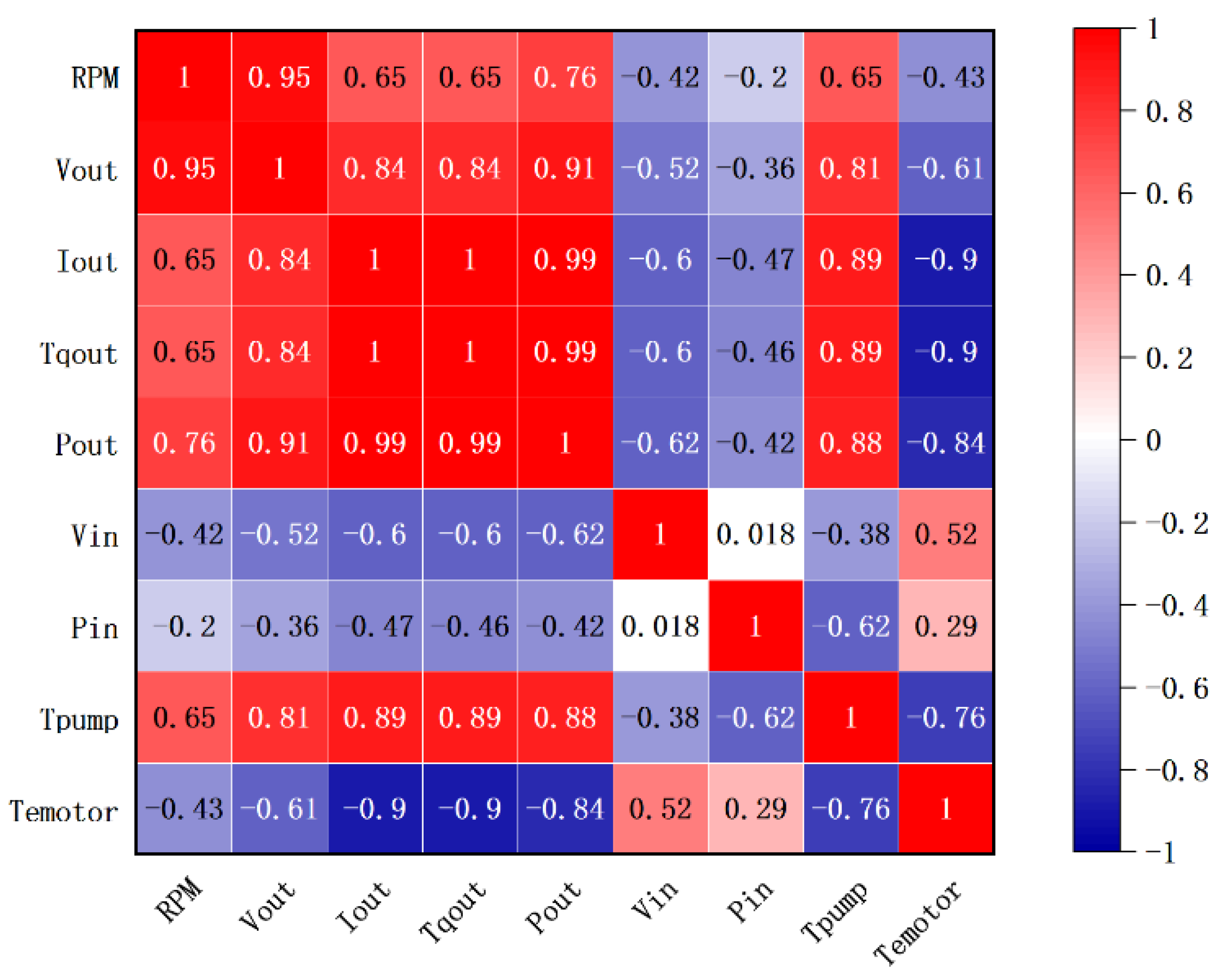

To enable the feature mapping model to better extract data dimension features, we collected operational parameters under normal conditions and analyzed variables using the Pearson coefficient, with results shown in Figure 3. The correlation analysis results show that motor speed, load, and related current and voltage parameters exhibit strong positive correlation, while input current and voltage related to power transmission and mechanical torque related to mechanical transmission are relatively independent. Interrelationships between motor-related parameters often change due to internal motor faults. Mechanical torque is simultaneously directly affected by both the drive motor and transmission shaft. Motor load is influenced by the aforementioned two factors and also by the oil pipeline status. In addition to these direct influence relationships, coupling faults, special environmental factors, controller regulation, and other internal and external factors also have immeasurable effects on different variables. Considering measurement limitations, we selected the aforementioned three types of parameters for directly observing the operational status of three subsystems, while also indirectly describing other parts of the overall system. After comprehensively considering correlation analysis results and field data availability, we selected six parameters as model inputs, arranged in the following order: output speed (rpm), output voltage (V), output current (A), output power (kW), rotor torque (N·m), input voltage (V).
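The pairwise Pearson analysis can be illustrated with a short sketch. The channel names and toy values below are hypothetical placeholders rather than field data, and the helper names are introduced here only for illustration.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    norm_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    norm_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    if norm_x == 0 or norm_y == 0:
        return 0.0  # constant channel: correlation treated as zero here
    return cov / (norm_x * norm_y)


def correlation_matrix(channels):
    """Nested dict of pairwise coefficients keyed by channel name."""
    names = list(channels)
    return {a: {b: pearson(channels[a], channels[b]) for b in names}
            for a in names}


# Toy channels: current tracks speed, torque is unrelated to it.
toy = {
    "output_speed": [1.0, 2.0, 3.0, 4.0],
    "output_current": [2.0, 4.0, 6.0, 8.0],
    "rotor_torque": [1.0, -1.0, -1.0, 1.0],
}
matrix = correlation_matrix(toy)
print(matrix["output_speed"]["output_current"])
```

A matrix of this form, computed over normal-condition data, is the kind of input from which strongly coupled and relatively independent parameter groups can be read off.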

The selection of these six parameters is the result of a trade-off among data availability, physical interpretability, and signal reliability in field conditions. While more downhole physical parameters exist, many (e.g., downhole casing pressure) exhibit highly complex characteristics due to the strong coupling of control actions, formation changes, and system dynamics. Their signals are often too entangled for quantitative analysis and are typically used only for qualitative assessment. Therefore, we prioritize electrical and mechanical parameters that are stably measurable at the surface and directly reflect the core operational states of the motor, drive shaft, and pump load.

Critically, under different fault conditions, these six parameters exhibit distinct and interpretable patterns that align with the efficiency factor framework outlined in Section 2:

Label 1 (Power/Motor Fault): Primarily affects the electrical energy transmission path, causing measurable deviations in the steady-state relationship between input voltage and output electrical parameters (voltage, current). This pattern is associated with the efficiency factor of the drive motor utilizing electrical energy supplied from the surface.

Label 2 (Mechanical Transmission Fault): Manifests as disruptions in torque transmission, leading to characteristic distortions in the dynamic profile of the output speed (e.g., oscillations, transient spikes) and subsequent anomalies in rotor torque. These correspond to failures captured by the efficiency factor of the rotor obtaining torque from the drive motor.

Label 3 (Abnormal Pump Pressure): Impacts the fluid displacement process, resulting in irregular fluctuations that simultaneously affect pump load (reflected in output power/rotor torque) and flow efficiency. This behavior is linked to the efficiency factor of the rotor actually lifting fluid.

Label 4 & 5 (Pipeline Blockage/Leakage): Both alter the hydraulic load on the system. A blockage (Label 4) typically introduces a variable time delay and complex staged changes in the system’s response, visible across multiple parameters. A leakage (Label 5) primarily causes a persistent offset or steady-state error in the load under closed-loop control. These disturbances are both encompassed by anomalies in the efficiency factor of fluid transport in the oil pipeline.

Thus, the chosen parameter set not only provides a concise representation of system state but also carries the necessary discriminative information to link observable data patterns to underlying fault types and their corresponding efficiency factor anomalies. This establishes a clear, physics-informed foundation for the subsequent data-driven feature learning and classification tasks.

In the ESPCP system, most common fault types, including some sudden fault cases such as shaft breakage, are gradually caused by specific factors over a period, which can be viewed as a gradually developing process. Therefore, the data samples we extract should carry additional autocorrelation relationships [23]. After comprehensive consideration, we used a sliding window method similar to the data processing part for time dimension sampling. By investigating numerous past cases and combining common fault cycle lengths, we ultimately set the window length to 2880, equivalent to 48 h, with a sampling step size of 720, equivalent to 12 h. The adopted sample form is multivariate time series data.
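A minimal sketch of this window sampling is given below. It assumes one record per minute, which is what a window of 2880 points spanning 48 h implies; the function name and the synthetic record layout are hypothetical.

```python
def sliding_windows(records, window=2880, step=720):
    """Slice a time-ordered list of records into overlapping windows.

    Each record is one time step holding the six input parameters.
    Trailing records that do not fill a whole window are dropped.
    """
    last_start = len(records) - window
    return [records[start:start + window]
            for start in range(0, last_start + 1, step)]


# 5040 one-minute records (3.5 days) of six placeholder parameters.
records = [[float(t)] * 6 for t in range(5040)]
samples = sliding_windows(records)
print(len(samples), len(samples[0]), len(samples[0][0]))
```

With a step of 720 and a window of 2880, consecutive samples share 75% of their time steps, so a slowly developing fault appears in several adjacent samples.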

3. Methodology

The application of Convolutional Neural Networks (CNN) in the field of fault diagnosis is primarily based on their excellent local feature extraction capability. In fault diagnosis tasks, low-level convolutional kernels typically learn basic features such as edges and textures, while as network depth increases, high-level convolutional kernels can combine these basic features to form more discriminative high-level feature representations. Traditional single-scale convolutional networks have the limitation of fixed receptive fields during feature extraction. During network training, shallow convolutional kernels usually capture basic features (such as sudden changes or periodic variations in signals), while deep convolutional kernels combine these basic features into more complex features (such as specific fault patterns). We designed a novel correlation attention mechanism to enable this multi-granularity information perception capability to be more effectively utilized. The overall workflow of the model is shown in Figure 4. As depicted, the model acquires data from the online monitoring system of industrial equipment, which undergoes uniform cleaning and sampling before training. Prior to feature extraction, the data is observed by an attention mechanism for its differential information, and this information directly supervises the feature extraction channels at different scales. This compensation-like mechanism ensures that the features, when participating in classification training, exhibit more accurate category characteristics while avoiding similar features introduced by other positional factors.

3.1. Multi-Scale Feature Extraction Module with Residual Structure

Traditional CNNs use single-size convolutional kernels, relying only on fixed fields of view to observe signal characteristics. To address this issue, we adopted a multi-scale convolutional structure [24,25], simultaneously using convolutional kernels of different sizes (such as 16, 64, 256, etc.). This concept aims to strengthen the ability to recognize information by observing features of varying scales, from the local to the global scope [26]. Small-scale convolutional kernels are primarily used to observe short-period fault patterns, such as common mechanical faults, which are often accompanied by significant oscillations spanning several seconds. In contrast, large-scale kernels are more suitable for analyzing long-term trends, including slow variations like a continuous decline in power supply efficiency over several hours, or hysteresis phenomena lasting tens of minutes after controller adjustments, which may arise from multiple potential causes. In progressive cavity pump diagnosis, the characteristic scales of mechanical faults and electrical faults differ significantly, and this multi-scale design can more comprehensively capture various fault features. Importantly, these different scale convolutions are computed in parallel, without significantly increasing overall computation time. It is crucial to note that the temporal length of fault features exhibits considerable randomness. The determination of specific window sizes and kernel scales primarily relies on the expertise of seasoned field professionals, combined with statistical analysis of relevant historical cases [19]. Due to the inherently subjective nature of defining certain diagnostic criteria (e.g., thresholds or waveform characteristics), such estimations typically incorporate a significant margin to accommodate potential variations, including unusually prolonged fault scenarios. This empirical yet cautious approach ensures the model’s robustness against a wide range of fault manifestations encountered in real-world operations. Finally, features from the three scales are concatenated and fused through three fully connected layers, ultimately outputting 128-dimensional fused features.
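The parallel multi-scale idea can be illustrated with a deliberately simplified sketch. The kernel sizes 16, 64, and 256 come from the text; everything else is an assumption made for illustration: fixed averaging kernels stand in for learned weights, one global max-pooled value stands in for each branch's feature map, and the function names are hypothetical. It is not the network described in Table 2.

```python
def conv1d_valid(signal, kernel):
    """Plain 1D cross-correlation without padding ('valid' mode)."""
    k = len(kernel)
    return [sum(signal[i + j] * kernel[j] for j in range(k))
            for i in range(len(signal) - k + 1)]


def multi_scale_features(signal, kernel_sizes=(16, 64, 256)):
    """Run one branch per kernel size and concatenate pooled outputs."""
    features = []
    for k in kernel_sizes:
        if len(signal) < k:
            raise ValueError("signal shorter than kernel")
        kernel = [1.0 / k] * k            # stand-in for learned weights
        response = conv1d_valid(signal, kernel)
        features.append(max(response))    # global max pooling per branch
    return features


# A short burst is diluted more strongly by the longer kernels.
signal = [0.0] * 300
for t in range(100, 116):
    signal[t] = 1.0
print(multi_scale_features(signal))
```

In this toy case the 16-point branch responds fully to the 16-sample burst while the 64- and 256-point branches respond progressively less, which mirrors why short-period and long-term fault patterns are assigned to different kernel scales.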

To further enhance feature extraction capability, we incorporated residual structures into the network [27]. The core principle is to allow the network to focus on learning the difference between input and output, rather than directly learning the complete mapping relationship. Specifically, each residual module contains two convolutional layers, each followed by data normalization (batch normalization) and activation function (ReLU) processing, finally adding the original input to the convolutional result. This design effectively alleviates the common gradient vanishing problem in deep networks, enabling the network to learn deeper features and significantly improving diagnostic accuracy. The multi-scale convolutional residual network constructed in this paper integrates the advantages of the aforementioned techniques, with its structure shown in Table 2. Multi-scale convolutional layers extract features of different granularities in parallel, while residual structures ensure these features can be effectively transmitted and combined in deep networks. Finally, multi-scale feature fusion and classification are achieved through feature concatenation and fully connected layers. Compared to traditional methods, this network has three significant characteristics: adaptive feature learning avoids the subjectivity of manual feature extraction; multi-level feature fusion enhances diagnostic reliability; end-to-end training simplifies the diagnostic process.
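The residual module described above can be written compactly as follows. The notation is assumed here for illustration ($x$ for the module input, $W_1$ and $W_2$ for the two convolution kernels, $h_1$ and $h_2$ for intermediate outputs) and is not taken from the original formulas.

```latex
\begin{aligned}
h_1 &= \mathrm{ReLU}\bigl(\mathrm{BN}(W_1 * x)\bigr), \\
h_2 &= \mathrm{ReLU}\bigl(\mathrm{BN}(W_2 * h_1)\bigr), \\
y   &= x + h_2, \\
\frac{\partial y}{\partial x} &= I + \frac{\partial h_2}{\partial x}.
\end{aligned}
```

The identity term in the last line is what lets gradients pass through a stack of such modules even when the convolutional branch contributes a very small derivative, which is the usual explanation for why residual connections ease the vanishing-gradient problem.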

3.2. Cross-Attention Mechanism for Characterizing Specificity Factors

Industrial field cases possess specificity, a characteristic arising not only from the uniqueness of the equipment itself but also influenced by factors such as geology, climate, and lifecycle, thereby complicating the origin of features. This phenomenon severely interferes with the extraction of common features. Consequently, the model itself should possess the ability to analyze the data source it identifies and the corresponding objective environmental factors, enabling reverse elimination by perceiving specificity factors.

We choose the attention mechanism to achieve this capability [28]. We hypothesize that the specificity of a case can emerge from the intrinsic correlation patterns of its data, mainly manifested in two aspects: first, the evolution pattern of the case as a whole in the time dimension; second, the interaction of operational parameters at local time points. For this purpose, we designed a dual-source condition vector, capturing these two patterns through the autocorrelation function (ACF) and a graph convolutional network (GCN), respectively. In deep learning, the attention mechanism is a mature supervised method widely used to constrain the feature processing within the model and guide this trend through manually designed indicators. To appropriately describe the objective specificity of the data source and the relative position of the sample within the entire source, we selected two correlation calculation methods.

Figure 5 illustrates the workflow of the attention mechanism.

In the attention calculation process, we designed information collection operations from both local and global perspectives. The purpose of this design is to address the variable system characteristics under dynamic operating conditions. The global perspective focuses more on the system’s long-term operational state, such as its load level and operational stability. This information forms the foundation of the attention mechanism and is compared with the specific information carried by the sample. This comparison operation is absent in most fault diagnosis studies that do not simultaneously consider short-term and long-term characteristics. The specific short-term feature information primarily characterizes the dynamic relationships among multiple key parameters within a certain time period and is captured by the GCN structure. The final calculation results are applied to the feature extraction stage, rather than the classification stage, thereby reducing issues in practical application scenarios.

The first method calculates the temporal autocorrelation of the case from which the data originates using the autocorrelation function, thereby describing the overall development trend and characteristics of the case. The temporal autocorrelation function describes the self-similarity of a single parameter sequence at different lag steps, effectively characterizing the memory length and periodic trend of this parameter over the entire case time span, reflecting the macro inertia of system operation. To extract this feature, we compute the ACF for each parameter channel and extract its decay characteristics as representation.

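For the $c$-th parameter channel time series $x_c = \{x_c(t)\}_{t=1}^{T}$ of a sample in the batch, its autocorrelation function $\rho_c(\tau)$ at lag $\tau$ is calculated as

$$\rho_c(\tau) = \frac{\sum_{t=1}^{T-\tau}\left(x_c(t)-\bar{x}_c\right)\left(x_c(t+\tau)-\bar{x}_c\right)}{\sum_{t=1}^{T}\left(x_c(t)-\bar{x}_c\right)^{2}}$$

where $\bar{x}_c$ is the mean of this channel sequence. To obtain a fixed-length representation vector, we calculate the number of lag steps required for $\rho_c(\tau)$ to decay to its first zero-crossing point, which characterizes the “memory length” of this parameter sequence:

$$\tau_c^{*} = \min\left\{\tau \geq 1 : \rho_c(\tau) \leq 0\right\}$$

Finally, for all $C$ channels of a sample, the lag steps are normalized using max-min normalization to obtain the overall temporal autocorrelation vector $v_{\mathrm{acf}}$:

$$v_{\mathrm{acf}} = \left[\tilde{\tau}_1, \dots, \tilde{\tau}_C\right], \qquad \tilde{\tau}_c = \frac{\tau_c^{*} - \min_{c'} \tau_{c'}^{*}}{\max_{c'} \tau_{c'}^{*} - \min_{c'} \tau_{c'}^{*}}$$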
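The three steps above (ACF, first zero crossing, max-min normalization) can be sketched as follows. This is a minimal NumPy illustration under stated assumptions: the names `acf`, `memory_length`, and `acf_vector` are hypothetical, `max_lag` is an assumed cap on the search range, and a series whose ACF never reaches zero within that range is assigned `max_lag`.

```python
import numpy as np

def acf(x, max_lag):
    """Sample autocorrelation of a 1-D series for lags 0..max_lag."""
    x = np.asarray(x, dtype=float)
    d = x - x.mean()
    denom = np.sum(d * d)
    if denom == 0.0:
        # constant series: treat as fully correlated at every lag (assumption)
        return np.ones(max_lag + 1)
    n = len(x)
    return np.array([np.sum(d[:n - k] * d[k:]) / denom for k in range(max_lag + 1)])

def memory_length(x, max_lag):
    """First lag at which the ACF is <= 0, or max_lag if it never crosses."""
    rho = acf(x, max_lag)
    crossing = np.nonzero(rho <= 0.0)[0]
    return int(crossing[0]) if crossing.size else max_lag

def acf_vector(sample, max_lag):
    """sample: (C, T) array -> (C,) vector of max-min normalized memory lengths."""
    lags = np.array([memory_length(ch, max_lag) for ch in sample], dtype=float)
    span = lags.max() - lags.min()
    return np.zeros_like(lags) if span == 0 else (lags - lags.min()) / span
```

For sinusoidal channels the memory length is roughly a quarter of the period, so slower channels map to larger entries of the normalized vector.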

The second method constructs a local adjacency matrix using Pearson correlation coefficient and employs a GCN structure to compute the cross-correlation among the six parameters of the data extracted at specific time points, thereby describing local characteristics. The cross-correlation between parameters reveals the dynamic coupling relationship among system subsystems, which exhibits specific patterns under particular fault modes. We utilize graph convolutional network to aggregate local neighborhood information, thereby encoding this local interaction.

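First, for the current sample, compute the pairwise Pearson correlation coefficients among its $C$ parameter time series $x_1, \dots, x_C$ to form the local adjacency matrix $A \in \mathbb{R}^{C \times C}$, whose element $A_{ij}$ is

$$A_{ij} = \frac{\operatorname{cov}(x_i, x_j)}{\sigma_i \sigma_j}$$

where $\sigma$ is the standard deviation. To enhance the significance of graph connections, set a threshold $\theta$ for filtering: if $|A_{ij}| \geq \theta$, retain it; otherwise set it to 0. Subsequently, perform symmetric normalization on $A$ to obtain the normalized adjacency matrix $\hat{A}$:

$$\hat{A} = D^{-1/2} A D^{-1/2}$$

where $D$ is the degree matrix, $D_{ii} = \sum_{j} A_{ij}$.

Input this sample data $X$ and $\hat{A}$ into a single-layer GCN, and obtain the sample-level representation through global average pooling:

$$v_{\mathrm{gcn}} = \operatorname{GAP}\left(\operatorname{ReLU}\left(\hat{A} X W + b\right)\right)$$

where $W$ and $b$ are the learnable parameters of the GCN layer. The output $v_{\mathrm{gcn}}$ is the local parameter cross-correlation vector.

Finally, concatenate the temporal autocorrelation vector $v_{\mathrm{acf}}$ and the local cross-correlation vector $v_{\mathrm{gcn}}$ to form the final dual-source condition vector $v$:

$$v = \left[v_{\mathrm{acf}} \,\Vert\, v_{\mathrm{gcn}}\right]$$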
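A minimal NumPy sketch of the local branch follows: thresholded Pearson adjacency, symmetric normalization, one GCN layer, and pooling over the parameter nodes. The function names, the threshold value, and the hidden width are hypothetical. The sketch also assumes that absolute correlations are used as edge weights so that every degree stays positive, and that no channel is constant (otherwise the correlation is undefined).

```python
import numpy as np

def normalized_adjacency(x, theta):
    """x: (C, T). Thresholded |Pearson| adjacency, symmetrically normalized."""
    a = np.abs(np.corrcoef(x))            # (C, C); diagonal equals 1
    a = np.where(a >= theta, a, 0.0)      # drop weak connections
    deg = a.sum(axis=1)                   # >= 1 because the diagonal is kept
    d_inv_sqrt = 1.0 / np.sqrt(deg)
    return d_inv_sqrt[:, None] * a * d_inv_sqrt[None, :]

def gcn_vector(x, a_hat, w, b):
    """Single GCN layer with ReLU, then average pooling over the C nodes."""
    h = np.maximum(a_hat @ x @ w + b, 0.0)   # (C, d)
    return h.mean(axis=0)                    # (d,)

def condition_vector(v_acf, v_gcn):
    """Concatenate the global and local vectors into one condition vector."""
    return np.concatenate([v_acf, v_gcn])
```

Here each parameter channel is a graph node and its windowed time series is the node feature, so `w` has shape `(T, d)`; in a trained model `w` and `b` would be learned rather than sampled.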

To utilize the dual-source condition vector to guide multi-scale feature learning, we introduced conditional attention modules at the ends of the three branches of the original multi-scale convolutional residual network. This mechanism enables the model to adaptively assign different importance weights to feature maps at different scales based on the specificity of the current sample.

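Let the feature map output by the $s$-th branch be $F_s$. First, reshape it into a sequence of $N_s$ feature vectors of dimension $C_s$: $\tilde{F}_s \in \mathbb{R}^{N_s \times C_s}$.

The conditional attention module takes the aforementioned dual-source condition vector $v$ as condition, and the calculation process is as follows:

1. Generate query vector: Map the condition vector through a branch-specific fully connected layer to a query vector matching the feature channel number $C_s$ of this branch:

$$q_s = W_s^{q} v + b_s^{q}, \qquad q_s \in \mathbb{R}^{C_s}$$

Add a dimension to $q_s$ to serve as the query: $Q_s \in \mathbb{R}^{1 \times C_s}$.

2. Calculate attention weights: Use the feature map $\tilde{F}_s$ as key and value. Calculate the dot product of the query with all keys and normalize through the Softmax function to obtain attention weights $\alpha_s$:

$$\alpha_s = \operatorname{Softmax}\left(Q_s \tilde{F}_s^{\top}\right), \qquad \alpha_s \in \mathbb{R}^{1 \times N_s}$$

3. Apply attention weights: Use attention weights to perform weighted summation on the values (i.e., $\tilde{F}_s$), obtaining the conditional feature vector $z_s$ for this branch:

$$z_s = \alpha_s \tilde{F}_s, \qquad z_s \in \mathbb{R}^{1 \times C_s}$$

The above process is executed in parallel on the three branches (parameters not shared), finally obtaining three conditional feature vectors $z_1$, $z_2$, $z_3$. These vectors are subsequently concatenated and fed into the fully connected layer for classification:

$$\hat{y} = \operatorname{Softmax}\left(\operatorname{FC}\left(\left[z_1 \,\Vert\, z_2 \,\Vert\, z_3\right]\right)\right)$$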
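The query, weight, and weighted-sum steps for one branch can be sketched in a few lines of NumPy. This is an illustrative sketch rather than the trained module: `conditional_attention`, `w_q`, and `b_q` are hypothetical names, the dot product is left unscaled as in the description above, and the dimensions in the usage note are arbitrary.

```python
import numpy as np

def softmax(z):
    """Numerically stable softmax over a 1-D score vector."""
    e = np.exp(z - z.max())
    return e / e.sum()

def conditional_attention(cond, feats, w_q, b_q):
    """cond: (D,) condition vector; feats: (N, C) keys and values;
    w_q: (D, C) and b_q: (C,) form the branch-specific query layer."""
    q = cond @ w_q + b_q       # step 1: query matching the channel number C
    scores = feats @ q         # step 2: dot product of the query with every key
    alpha = softmax(scores)    #         attention weights over the N positions
    z = alpha @ feats          # step 3: weighted sum of the values
    return z, alpha
```

Running this once per branch with separate `w_q` and `b_q` and concatenating the three outputs gives the input to the classification layer. Because the weights are non-negative and sum to one, each output is a convex combination of that branch's feature vectors.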

This mechanism enables the model to perceive sample specificity and reversely suppress feature responses caused by specific factors through attention weights, thereby strengthening feature expressions related to the fault essence and more common across different scales, enhancing the model’s generalization capability and robustness in complex industrial scenarios.

3.3. Feature Space-Based Sample Augmentation Method

In deep neural network-based fault diagnosis, the class imbalance problem significantly affects model classification performance. To address this issue, this study proposes an innovative method that performs sample augmentation in the 128-dimensional feature space output by the penultimate layer of the network. This method effectively mitigates the class distribution imbalance in fault data by combining Borderline-SMOTE and Tomek-Links techniques.

The Borderline-SMOTE algorithm is the core component of this method. This algorithm first identifies critical minority class samples located at the classification boundary. A minority sample $x_i$ is selected by comparing the number of majority class samples among its nearest neighbors, $|N_k(x_i) \cap S_{\mathrm{maj}}|$, against a threshold $\delta$, where $N_k(x_i)$ represents the set of $k$ nearest neighbors of sample $x_i$, $S_{\mathrm{maj}}$ denotes the majority class sample set, and $\delta$ is a set threshold ($k$ and $\delta$ are fixed in this experiment). It should be noted that the parameter $k$ determines the degree of fit between the generated sample and other neighboring samples. When $k$ is too small, more isolated points are generated, whereas when $k$ is too large, the generated points are limited to a very small range due to limited selection rights. The threshold $\delta$ constrains the description of the boundary: usually, the smaller the value, the closer the generated points are to the boundary, and the easier it is to introduce noise. For each $x_i$ identified as a borderline sample, randomly select a sample $x_j$ from its same-class $k$ nearest neighbors, and generate a new sample according to the following interpolation formula:

$$x_{\mathrm{new}} = x_i + \lambda \left(x_j - x_i\right) \tag{11}$$

where $\lambda \in [0, 1]$ is a random interpolation coefficient. This selective interpolation approach ensures that new samples are concentrated near the decision boundary, effectively expanding the coverage of minority classes.
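A minimal NumPy sketch of borderline selection and interpolation is given below. It is an assumption-laden illustration: the selection rule used here is the standard Borderline-SMOTE "danger" rule (at least half, but not all, of the $k$ neighbors belong to the majority class), which stands in for the thresholded condition of this paper; the function names are hypothetical, and the sketch assumes at least $k+1$ minority samples.

```python
import numpy as np

def borderline_indices(x_min, x_maj, k):
    """Indices of minority samples whose k nearest neighbours are at least
    half, but not entirely, majority samples (standard 'danger' rule)."""
    x_all = np.vstack([x_min, x_maj])
    is_maj = np.arange(len(x_all)) >= len(x_min)
    selected = []
    for i, xi in enumerate(x_min):
        d = np.linalg.norm(x_all - xi, axis=1)
        d[i] = np.inf                          # exclude the sample itself
        m = is_maj[np.argsort(d)[:k]].sum()    # majority neighbours among k
        if k / 2 <= m < k:
            selected.append(i)
    return selected

def interpolate(x_min, borderline, k, n_new, rng):
    """Generate n_new samples as x_i + lam * (x_j - x_i), with x_j drawn
    from the k nearest same-class neighbours of a borderline x_i."""
    new = []
    for _ in range(n_new if borderline else 0):
        i = rng.choice(borderline)
        d = np.linalg.norm(x_min - x_min[i], axis=1)
        d[i] = np.inf
        j = rng.choice(np.argsort(d)[:k])
        lam = rng.random()                     # uniform in [0, 1)
        new.append(x_min[i] + lam * (x_j_minus(x_min, i, j)))
    return np.array(new)

def x_j_minus(x_min, i, j):
    """Difference vector x_j - x_i between two minority samples."""
    return x_min[j] - x_min[i]
```

In the setting of this section the rows of `x_min` and `x_maj` would be the 128-dimensional penultimate-layer features; every generated point lies on a segment between two minority samples, so it stays inside the minority class's convex hull.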

The Tomek-Links technique is used to optimize the decision boundary. The judgment condition is: for a sample pair $(x_i, x_j)$ from different classes, when satisfying

$$d(x_i, x_j) \leq \min\left\{d(x_i, x_l),\, d(x_j, x_l)\right\}$$

for any sample $x_l$ of other classes, where $d(\cdot,\cdot)$ denotes the distance in the feature space, it constitutes a Tomek-Link, at which point the majority class sample is preferentially removed to optimize the classification boundary.
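The Tomek-Link cleaning step can be sketched as follows, assuming the standard definition in which a link is a pair of mutual nearest neighbors with different labels and Euclidean distance is used. The function names `tomek_links` and `remove_majority` are hypothetical.

```python
import numpy as np

def tomek_links(x, y):
    """Pairs (i, j), i < j, that are mutual nearest neighbours with different labels."""
    d = np.linalg.norm(x[:, None, :] - x[None, :, :], axis=2)
    np.fill_diagonal(d, np.inf)            # a sample is not its own neighbour
    nn = d.argmin(axis=1)
    return [(i, int(j)) for i, j in enumerate(nn)
            if i < j and nn[j] == i and y[i] != y[j]]

def remove_majority(x, y, links, majority_label):
    """Drop the majority-class member of every Tomek link."""
    drop = {i for pair in links for i in pair if y[i] == majority_label}
    keep = np.array([i not in drop for i in range(len(y))])
    return x[keep], y[keep]
```

The pairwise distance matrix costs O(n²) memory, which is acceptable for a sketch but would call for a nearest-neighbor index on a large sample library.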

Performing sample augmentation in the feature space offers significant advantages. First, the 128-dimensional features extracted by the deep network exhibit better intra-class compactness and inter-class separability, making the virtual samples generated by Equation (11) more consistent with the real data distribution. Second, linear interpolation operations in the feature space correspond to reasonable transitions between fault states, possessing more physical significance compared to interpolation performed in the original time-domain space.

4. Experiments and Analysis

The experimental framework was implemented in Python 3.12, utilizing PyTorch 2.9 for neural network construction and Scikit-learn for building baseline machine learning models. This section evaluates the proposed model’s performance, conducts comparative analyses against conventional methods, and performs ablation studies based on the dataset detailed in Section 4.1.

4.1. Dataset Construction

The experimental data were acquired from the Supervisory Control and Data Acquisition (SCADA) systems monitoring electric submersible progressive cavity pump (ESPCP) operations in coalbed methane wells located in southwestern China.

The dataset originates from 31 distinct industrial cases, corresponding to specific fault episodes or extended periods of normal operation from individual wells. These cases are classified according to the efficiency-based diagnostic framework established in Section 2. Table 3 summarizes their distribution and the cumulative duration of valid data segments within each fault category. A critical aspect of this dataset is that a single case (identified by a unique ID) may encompass multiple non-contiguous temporal segments, all of which contribute to the subsequent sample generation process.

The construction of the final modeling dataset adhered to a rigorous pipeline. Comprehensive preprocessing (including wavelet-based denoising, outlier removal via box plots, missing value imputation, and Z-score normalization) was applied to all valid data segments, as detailed in Section 2. Multivariate time series samples were then generated by applying a sliding window (length = 2880, step = 720) sequentially to each processed segment. To ensure the generalizability of the evaluation and prevent data leakage, a case-wise splitting strategy was employed. Specifically, after sample generation from each case, the 31 cases were randomly partitioned, with approximately 70 percent allocated to the training set and the remaining 30 percent to the test set. This strict partitioning ensures that all samples derived from any individual case reside exclusively in either the training or testing subset, thereby guaranteeing that model evaluation is performed on completely unseen fault episodes from the same field with identical pump types. This approach simulates the practical scenario of diagnosing future faults based on historical data within a specific operational context. Finally, to mitigate the severe class imbalance inherent in the initial sample distribution, as shown in Table 4, the Borderline-SMOTE and Tomek Links techniques were applied, but strictly within the feature space of the training set, as detailed in Section 3.3, leaving the test set in its original, imbalanced state to reflect real-world conditions.
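The windowing and case-wise partitioning can be sketched in plain Python. The window length (2880) and step (720) come from the text; the function names, the fixed seed, and the rounding of the 70 percent share are assumptions made for illustration.

```python
import random

def sliding_windows(n_points, length=2880, step=720):
    """(start, end) index pairs of all full windows in a segment of n_points."""
    return [(s, s + length) for s in range(0, n_points - length + 1, step)]

def case_wise_split(case_ids, train_frac=0.7, seed=0):
    """Split unique case IDs so that no case contributes to both subsets."""
    ids = sorted(set(case_ids))
    random.Random(seed).shuffle(ids)
    n_train = round(train_frac * len(ids))
    return set(ids[:n_train]), set(ids[n_train:])
```

For example, a 5000-point segment yields three windows starting at 0, 720, and 1440, and 31 cases split into 22 training and 9 test cases. Samples inherit the subset of their parent case, so windows that overlap in time never straddle the train/test boundary.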

Table 4 presents the final composition of the sample library. ‘Initial Quantity’ denotes samples derived directly from the segmented and windowed case data, while ‘Generated Quantity’ refers to synthetic samples introduced solely into the training set for balance correction.

All subsequent experiments and analyses reported in this paper are based on this rigorously constructed and partitioned dataset.

4.2. Model Training

In the first part, we trained the multi-scale residual convolutional neural network for feature extraction. The experimental settings were as follows: batch size 128, decreasing learning rate with initial value 0.001, and 20 training epochs.

Figure 6 shows the training effectiveness of the feature extraction part, with the left subfigure showing the loss curve and the right subfigure showing the classification accuracy changes per epoch. The figure demonstrates that the model converges quickly without getting stuck in local optima.

4.3. Ablation Study

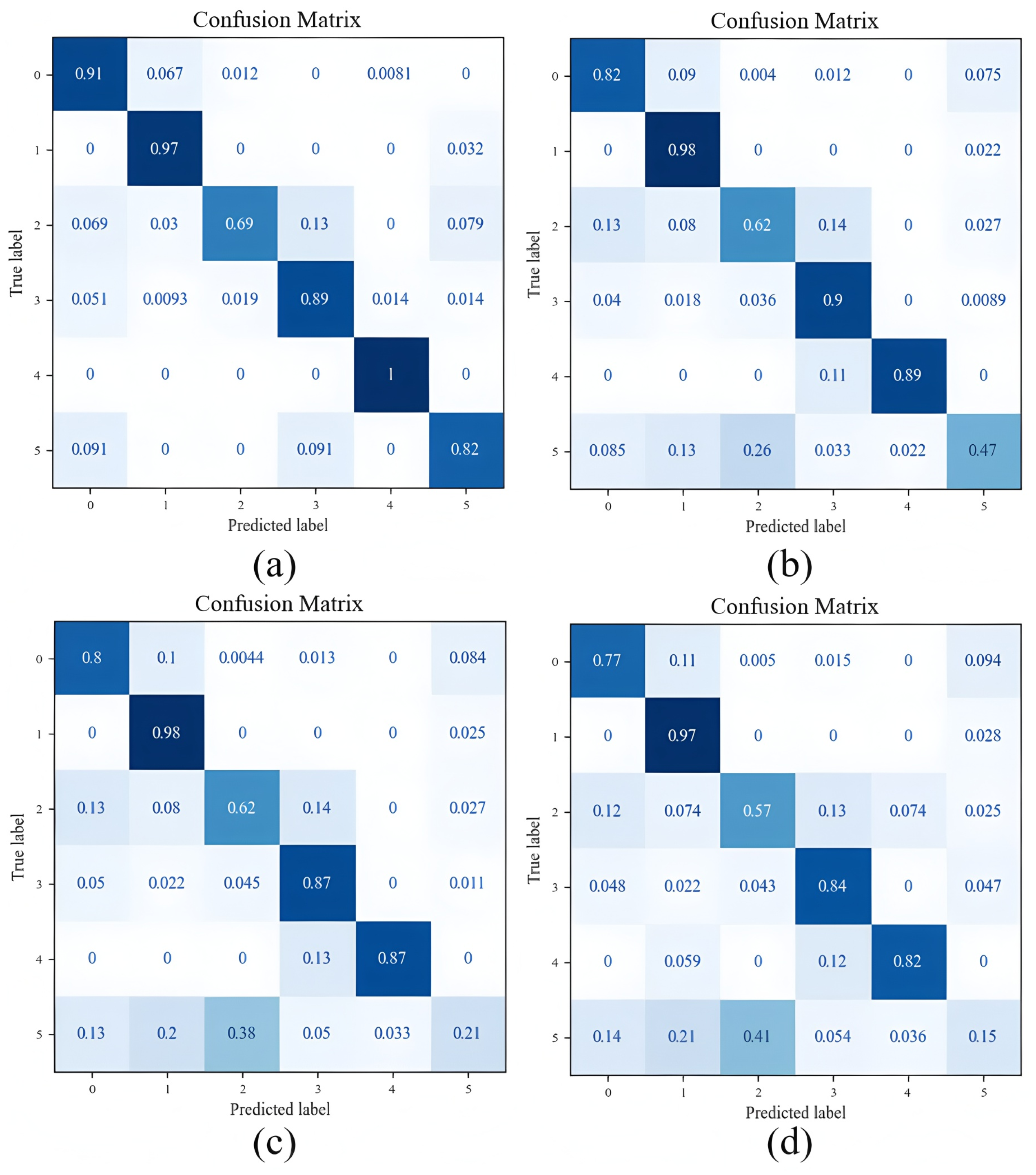

In the second part, we conducted ablation experiments and performance comparison tests with ordinary convolutional neural network classification models. The experimental results are shown in Figure 7. In this part, we tested the complete model proposed in this paper, the model without the correlation attention mechanism, the model further without residual connections, and the model without multi-scale feature fusion. The last model is equivalent to an ordinary multi-layer convolutional neural network classification model. The confusion matrices of these four models correspond to Figure 7a–d, respectively.

Table 5 shows the test results of the four models. The data in Table 5 demonstrate the effectiveness of all modules in the model and show their contribution to improving model accuracy. Compared to the basic model, the complete model improved the F1-score from 80.0% to 90.7%. Furthermore, combined with the confusion matrices in Figure 7, it can be observed that although multi-scale feature fusion and residual structures enhance classification accuracy by strengthening the model’s perception capability, they cannot solve the recognition of some complex samples in labels 2, 4, and 5. This phenomenon occurs because these samples contain some relatively special isolated cases that exhibit significant differences from the majority of other cases due to other factors. Therefore, without attention perception, the model cannot correctly identify these samples.

As evident from the confusion matrices, the specific effects of each model component can be observed. The most pronounced improvement stems from the addition of the attention mechanism, which significantly elevates the accuracy for Labels 4 and 5. The primary reason is that cases for Labels 4 and 5 have less available data and often lack distinct early-stage fault characteristics, which further compresses the distribution of usable information across the time span. This indirectly demonstrates that when reference data is scarce, the inherent differences between data points can introduce greater noise impact. Furthermore, after removing the residual structure, the misdiagnosis of Label 5 as Label 2 worsens considerably, while the impact on other classes is less significant. This result indicates that the model’s ability to perceive deep-level features is crucial for this challenging, minority-class classification task. It can be hypothesized that the model’s lack of deep feature perception would also impair the effectiveness of the attention mechanism, as the latter relies on the feature extraction process to function.
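For readers reproducing the metrics, per-class and averaged F1 can be read directly off a confusion matrix. The sketch below assumes rows are true labels, columns are predicted labels, and macro averaging across classes; the averaging scheme is an assumption, and `per_class_f1` is a hypothetical name.

```python
import numpy as np

def per_class_f1(cm):
    """F1 for each class from a confusion matrix (rows: true, columns: predicted)."""
    cm = np.asarray(cm, dtype=float)
    tp = np.diag(cm)
    fp = cm.sum(axis=0) - tp      # predicted as the class but actually another
    fn = cm.sum(axis=1) - tp      # belonging to the class but predicted as another
    denom = 2 * tp + fp + fn
    return np.divide(2 * tp, denom, out=np.zeros_like(denom), where=denom > 0)

def macro_f1(cm):
    """Unweighted mean of the per-class F1 values."""
    return float(per_class_f1(cm).mean())
```

Macro averaging weights minority classes such as Labels 4 and 5 equally with the majority classes, which is why off-diagonal errors between a rare class and a frequent one move the score noticeably.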

4.4. Comparative Experiments

In the third part, we tested the comparative effectiveness of our model with more existing models. The compared models include: (1) AlexNet, (2) SVM, (3) RFs, (4) BPNN, and (5) our model. Among them: (1) AlexNet is a classic CNN structure with automatic padding, containing five convolutional layers and two fully connected layers, using 96 11 × 3 convolutional kernels, 256 5 × 3 convolutional kernels, two identical layers of 384 3 × 3 convolutional kernels and 256 3 × 3 convolutional kernels, with the first layer stride of (4,1) and other strides of 1; MaxPooling 2D pooling layers were added after the first, second, and fifth convolutional layers, all using 3 × 1 pooling kernels with stride (2,1); the two fully connected layers output 4096 dimensions, with a Dropout layer (ratio 0.5) in between, and finally a softmax layer for output; (2) SVM was configured using the Scikit-learn library, using the Reshape function to flatten the input, with RBF kernel and regularization parameter 1; (3) RFs were also configured using the Scikit-learn library, using the Reshape function to flatten the input, with 500 trees and maximum depth 10; (4) BPNN is a six-layer fully connected network, flattening and concatenating the data matrix through a Concat layer, with ReLU activation function for the first five layers, Dropout layers (ratio 0.5) after the first three layers, and output dimensions of the six layers being 4096, 1024, 512, 128, 64, and 1 respectively. The comparative experiments used the same dataset and training method as the first part.

The comparative experimental results are shown in Table 6. The experimental results show that our model achieved the best average F1-score of 90.7% among the five methods, based on 10 repeated trials. Among the other methods, AlexNet achieved 83.1%, BPNN achieved 75.2%, RFs achieved 70.1%, and SVM achieved 68.9%. SVM showed the lowest classification accuracy in the comparative test, likely because multiple feature sizes and patterns exist within the same category, which interferes with forming a correct separating hyperplane. During dimensionality reduction, the model retained incorrect feature dimensions, thus failing to eliminate this interference.

4.5. Case Testing

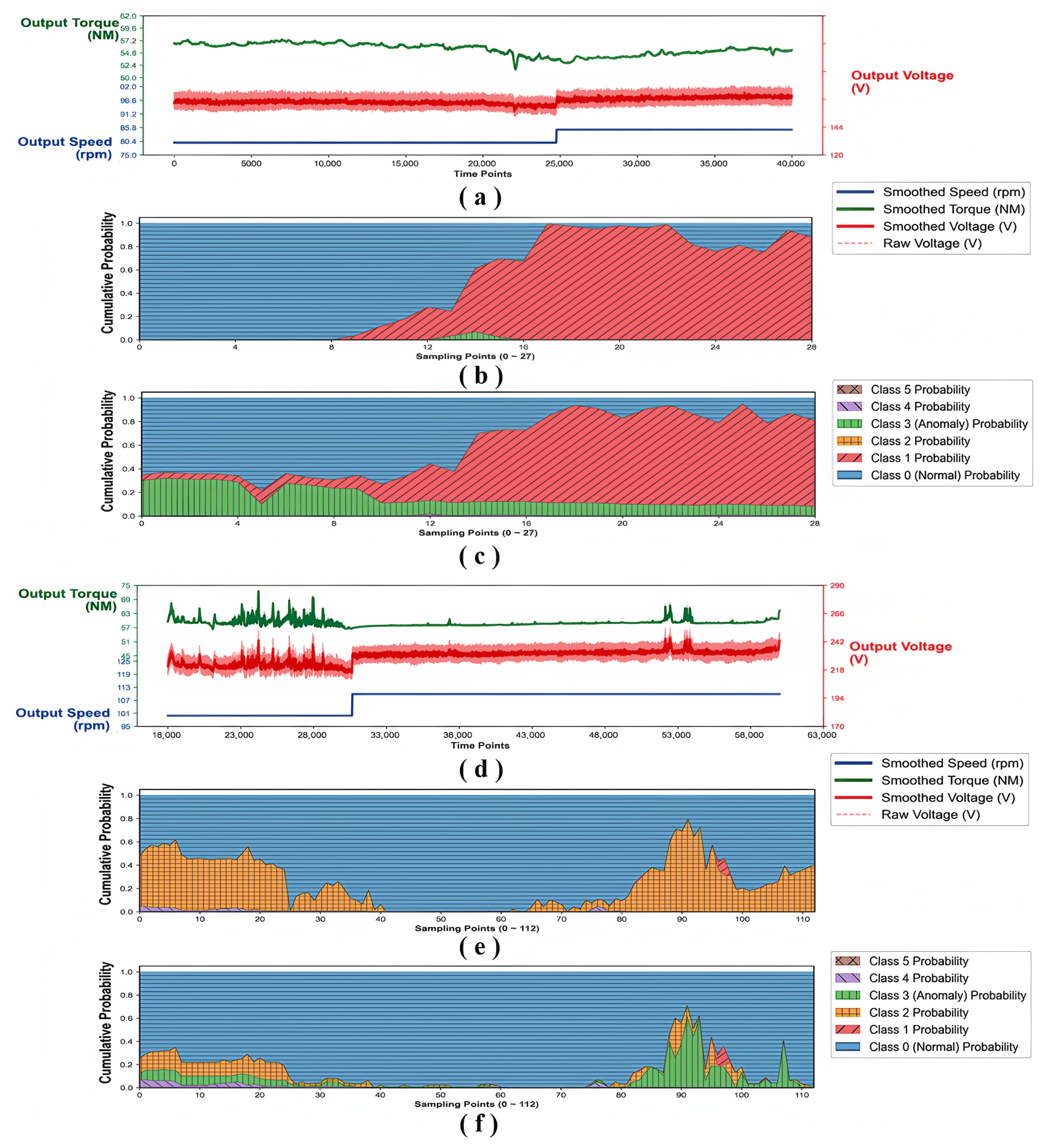

In the fourth part, we performed testing using two field fault cases. The experiment used the model trained on the sample set, simulating field operation by performing periodic sampling detection on two long-term cases. The sampling window and step size were the same as when constructing the sample set, 48 h and 12 h, respectively. For each window, the model performed identification and output the identification results in the form of cumulative probability distribution. To more clearly demonstrate the impact of specificity in each case, we added a control group. This control group removed the correlation attention mechanism during training while retaining all other structures. The test results are shown in Figure 8, where Figure 8a–c show the operational parameter curves of fault case one (with motor output voltage in red, torque in green, output speed in blue), the identification results of the complete model, and the identification results of the control group without specificity perception capability, respectively; Figure 8d–f show the corresponding results for case two. For the model’s output results, Figure 8 displays continuous cumulative probability distributions, where probabilities for labels 0, 1, 2, 3, 4, 5 correspond to six colors: blue, red, orange, green, purple, and brown, respectively. The sampling step size is consistent with the dataset construction in this paper, once every 720 min, corresponding to every 720 points on the horizontal axis in Figure 8a,d.

4.5.1. Case 1: Electrical Equipment Fault (Above)

Case one presents an electrical equipment fault event. Due to long-term wear on the power cable surface, internal wires were eventually damaged, causing a decrease in power transmission efficiency from the surface station to the downhole motor. During time period [10,000, 17,500], the equipment was still in the early wear stage without damage to internal wires. Around time point [17,500], due to damage to the protective layer, obvious leakage occurred. Due to decreased power supply, the system increased speed to compensate for the impact of the fault.

From Figure 8b, it can be observed that the model demonstrates remarkable sensitivity in capturing early fault characteristics. While this feature could potentially be achieved through simple external model adjustments, assuming an early warning threshold of 30% probability, the model in Figure 8b begins issuing alerts around time 18,000. In contrast, the controller’s response appears around 24,500, representing a delay of approximately 4 days and 12 h. Considering that control center staff cannot directly monitor motor data from all wells, the model in this case demonstrates excellent performance in autonomous diagnosis and early warning.
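The alerting rule and the delay arithmetic in this paragraph can be made explicit with a short sketch. The 30% threshold and the two time points (18,000 and 24,500 min) are taken from the text; the function names and any probability sequence passed in are hypothetical.

```python
def first_alert(fault_probs, times, threshold=0.30):
    """Return the first time at which the fault probability reaches the threshold."""
    for t, p in zip(times, fault_probs):
        if p >= threshold:
            return t
    return None  # threshold never reached

def minutes_to_dhm(minutes):
    """Convert a duration in minutes to (days, hours, minutes)."""
    days, rem = divmod(minutes, 1440)
    hours, mins = divmod(rem, 60)
    return days, hours, mins
```

With the time axis in minutes, `minutes_to_dhm(24500 - 18000)` gives 4 days, 12 hours, and 20 minutes, which matches the delay quoted above.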

From Figure 8c, it can be seen that after removing the perception of case specificity, the model exhibits significant confusion. Although the overall feature distribution shift caused is not substantial (the shift here is consistent with the concept in transfer learning), it can still be stably observed. This phenomenon proves that the model indeed spontaneously adjusts classification boundaries during training. This adjustment is performed at the case level in terms of distribution, making its effect more pronounced for samples near the classification boundary within each case. For complex mechanical systems, these ambiguous samples typically reside at the interface between two stable states, making them crucial for achieving advanced prediction.

4.5.2. Case 2: Mechanical Transmission Structure Fault (Below)

Case two presents a mechanical transmission structure fault event. Unlike the minor impact in the previous case, in this case, the transmission shaft jammed due to foreign object entry and rapidly deteriorated, causing complete system failure. During time period [18,000, 22,000], the output voltage can relatively intuitively reflect the periodic increase in system load with increasing frequency. At time points [22,000] and [30,500], the system state changed twice: first, the voltage completely entered a state of large irregular fluctuations, then the controller increased speed, bringing the system into another relatively stable equilibrium state. At higher speeds, the jam was temporarily masked, but the underlying factors were not eliminated, causing the system state to become unbalanced again during time period [50,000, 60,000], and complete jamming occurred after time point [60,000].

From Figure 8e, it can be observed that the model’s identification of obvious fault characteristics is consistent with system performance, and after the system briefly returns to a stable state, the model recaptures the trend of re-deterioration. Since measured parameters contain underlying noise during system operation, many underlying features cannot be observed. However, starting from time point [40,000], we can still observe regular fluctuations in the lower limit of output voltage before smoothing operations. The period of these fluctuations is approximately 21 h, similar to the behavioral performance of the first-stage jamming (referring to obvious periodic oscillations with similar period lengths). The model possesses excellent perception capability for such quantitative features, thus enabling it to determine that the fault would deteriorate again.

From Figure 8f, it can be seen that in case two, the model exhibits significant misjudgment, identifying category 2 as categories 3 and 4. This misjudgment can only be obtained from probability distribution outputs, as most occur at moments not triggering the warning threshold. The differences between Label 2 and Label 3 show similarity under certain circumstances, primarily because both can trigger significant large-scale fluctuations and cause load loss of control. Furthermore, the inter-case variability for these two types of samples is also the greatest, as mechanical faults and pump efficiency anomalies can be triggered by various different factors and exhibit distinct differences with varying severity levels. This issue can lead the model to learn incorrect features during training, thereby resulting in erroneous outcomes. After losing perception capability, the model’s overall judgment trend does not show significant changes because the specificity between cases does not create fundamental differences. This point still requires further verification.

4.6. Discussion and Limitations

The model designed in this paper has obvious preset application scenarios, so the theories obtained will be discussed in the field of ESPCP fault diagnosis. From multiple comparative experiments and ablation studies, it is not difficult to see that the method proposed in this paper mainly addresses the high dimensionality and complexity of fault data, as well as dataset imbalance and case independence. Experiments preliminarily verify the rationality and effectiveness of these methods. However, some issues remain to be resolved.

The first problem is the cost of data labeling and the associated human-computer interaction issues. Although many current studies suggest that self-supervised or semi-supervised learning can effectively handle information-sparse datasets, this is not applicable in the context of this paper. The reason is the complex composition of faults themselves, involving multiple triggering factors and multiple system parts. Although we compensated for this issue using efficiency decomposition in Section 2, we still failed to completely resolve the conflict between problem complexity and label classification simplicity. From a more specific perspective, this paper attempts to use the softmax output to display a dynamic probability distribution that represents the evolution of a fault from an early stage to a severe later stage, and this is demonstrated using two representative cases. Under more complex working conditions, this process of state change is ambiguous and difficult to define qualitatively. Clearly, relying on a static label from a single dimension cannot provide a complete description of this process, and this limitation is not due to insufficient model performance but stems from issues in human–computer interaction. This problem occurs at both the “data labeling” stage and the “model output to human analysis” stage, and it can also be understood as occurring throughout the complete interaction process from “user to model” and from “model to user.” This issue requires more in-depth discussion in the future.

The second problem is the lack of system observation methods and the difficulty in effectively measuring the model’s perception capability. Although we explained the shift phenomenon after weakening the model’s perception capability in the case analysis section, we lack effective measurement methods for the specific parts and extent of this shift. The process of the model spontaneously correcting this shift is unobservable, thus preventing further targeted optimization. For large-scale field reuse and cross-well migration, this characteristic significantly increases practical implementation difficulty. Current research on domain generalization also deeply explores similar problem scenarios and generally attempts to solve them through domain alignment methods, which in most cases are unidirectional and open-loop. In comparison, the approach proposed in this paper is closer to an adaptive anti-interference algorithm and explicitly restricts data features caused by non-target factors outside the extraction process. Therefore, how to further optimize this screening and restriction mechanism becomes the core problem for improving the current model.

The third problem concerns cross-system migration. While the research approach presented in this paper holds potential for transplantation to other similar industrial pumping systems, several challenges exist. First, direct parameter-level migration is difficult because both the model architecture and data labels are deeply intertwined with the specific application scenario and the expertise of field engineers. Consequently, these elements require adjustment to adapt to new targets during the migration process. Second, the cross-attention mechanism proposed in this paper is designed to address data noise stemming from case-specific factors. In a new application scenario, this factor may change significantly—for instance, the discrepancy might be so large that it exceeds the noise-reduction capacity of the attention mechanism. Taking these issues into account, preliminary validation work for migration also imposes requirements on data volume, which is the most difficult to fulfill in industrial settings.

In light of the above issues, we will focus future research directions on further expanding the model’s applicability, primarily by enhancing data usability in scenarios with greater heterogeneity and developing automated labeling mechanisms for grey-box states. Similar to the concept of domain generalization, we will focus more on identifying common data features across different scenarios within the same label categories.