Real-Time Detection of Electrohydrodynamic Atomization Modes via a YOLOv8-Based Deep Learning Model

Abstract

1. Introduction

2. Experiments and Model Development

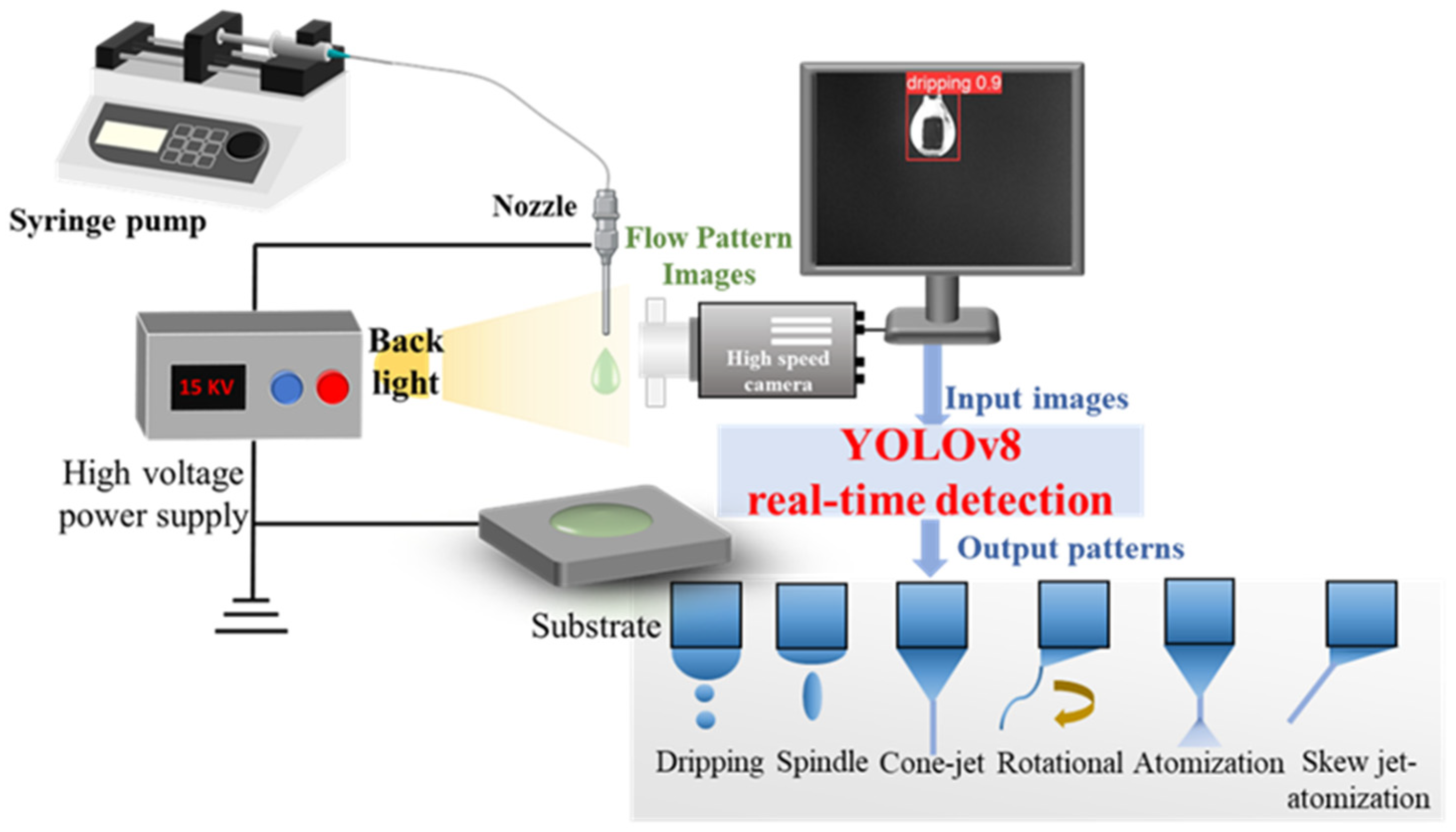

2.1. EHDA Experimental System for Real-Time Detection

2.2. Data Preprocessing

2.3. YOLOv8 Model

2.4. Performance Metrics

3. Results

3.1. Training Process

3.2. Feature Attention Mechanism

3.3. Mode Detection Performance

4. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| CNN | Convolutional Neural Network |
| EHD | Electrohydrodynamic |
| EHDA | Electrohydrodynamic Atomization |
| YOLO | You Only Look Once |
| LED | Light Emitting Diode |
| mAP | mean Average Precision |
| Conv | Convolutional |
| C2f | Cross Stage Partial (CSP) Bottleneck with Two Convolutions |
| SPPF | Spatial Pyramid Pooling-Fast |
| FPN | Feature Pyramid Network |
| PAN | Path Aggregation Network |
| IoU | Intersection over Union |
| CIoU | Complete Intersection over Union |
| AP | Average Precision |
| cls_loss | Classification Loss |
| DFL | Distribution Focal Loss |
| Grad-CAM | Gradient-weighted Class Activation Mapping |
| GFLOPs | Giga Floating-point Operations |
| Params | Parameters |
| 1-D CNN | One-Dimensional Convolutional Neural Network |
| ML | Machine Learning |
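
Among the abbreviations above, CIoU and DFL are the bounding-box regression terms of YOLOv8's loss, with cls_loss covering classification. As an illustration only, the Python sketch below computes the standard Complete IoU for two axis-aligned boxes, CIoU = IoU − ρ²/c² − αv, where ρ is the distance between box centres, c is the diagonal of the smallest enclosing box, and v penalizes aspect-ratio mismatch. The box format `(x1, y1, x2, y2)` and the function names `ciou` and `ciou_loss` are assumptions for this sketch. It is not the training code used in this article.

```python
import math

def ciou(box_a, box_b, eps=1e-7):
    """Complete IoU between two boxes given as (x1, y1, x2, y2).

    Sketch of the standard CIoU formula; box_a is treated as the prediction,
    box_b as the ground truth (the format is an assumption).
    """
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    # Plain IoU: overlap area divided by union area.
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    wa, ha = ax2 - ax1, ay2 - ay1
    wb, hb = bx2 - bx1, by2 - by1
    union = wa * ha + wb * hb - inter + eps
    iou = inter / union
    # rho^2: squared distance between the two box centres.
    rho2 = ((ax1 + ax2) / 2 - (bx1 + bx2) / 2) ** 2 + ((ay1 + ay2) / 2 - (by1 + by2) / 2) ** 2
    # c^2: squared diagonal of the smallest box enclosing both boxes.
    cw = max(ax2, bx2) - min(ax1, bx1)
    ch = max(ay2, by2) - min(ay1, by1)
    c2 = cw ** 2 + ch ** 2 + eps
    # v: aspect-ratio consistency term; alpha: its trade-off weight.
    v = (4 / math.pi ** 2) * (math.atan(wb / (hb + eps)) - math.atan(wa / (ha + eps))) ** 2
    alpha = v / (1 - iou + v + eps)
    return iou - rho2 / c2 - alpha * v

def ciou_loss(pred, target):
    """Box regression loss: 1 - CIoU (0 for a perfect prediction)."""
    return 1.0 - ciou(pred, target)

print(round(ciou_loss((0, 0, 10, 10), (2, 2, 12, 12)), 3))  # 0.557
```

Unlike plain 1 − IoU, this loss still provides a useful signal when boxes do not overlap (the centre-distance term), and it additionally penalizes a predicted box whose width-to-height ratio differs from the ground truth's.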

References

- Xie, J.; Jiang, J.; Davoodi, P.; Srinivasan, M.P.; Wang, C.-H. Electrohydrodynamic atomization: A two-decade effort to produce and process micro-/nanoparticulate materials. Chem. Eng. Sci. 2015, 125, 32–57.

- Xue, J.; Wang, Z.; Chen, Y.; Wang, J.; Tian, J.; Li, B.; Wang, X.; Xu, H.; Huo, Y.; Dong, Q.; et al. Cone-jet regime in electrospray: A comprehensive review. Phys. Fluids 2025, 37, 081307.

- Chen, L.; Ru, C.; Zhang, H.; Zhang, Y.; Wang, H.; Hu, X.; Li, G. Progress in Electrohydrodynamic Atomization Preparation of Energetic Materials with Controlled Microstructures. Molecules 2022, 27, 2374.

- Bagheri-Tar, F.; Sahimi, M.; Tsotsis, T.T. Preparation of Polyetherimide Nanoparticles by an Electrospray Technique. Ind. Eng. Chem. Res. 2007, 46, 3348–3357.

- Zhang, Z.; Deng, J.; Lu, Y.; Tang, X.; Bao, Y. Dry cutting performance and mechanism of tools with textured composite coatings fabricated by EHDA. J. Manuf. Process. 2025, 156, 365–380.

- Wang, J.; Deng, J.; Rong, S.; Zhang, Z.; Bao, Y. Effect of bionic microtexture geometry on the tribological performance of hydrophobic surfaces. J. Mater. Res. Technol. 2025, 38, 4234–4247.

- Wang, R.; Deng, J.; Zhang, Z.; Lu, Y.; Li, X.; Ge, D. Preparation and infrared properties of Ni3Al–Cr3C2 composite films deposited by electrohydrodynamic atomization technology. Mater. Chem. Phys. 2022, 278, 125654.

- Chen, J.; Wei, M.; Xu, Z.; Wang, Z.; Li, B.; Zhang, W.; Xu, H.; Wang, J.; Pan, J.; Yu, K. Porous Microparticle Preparation via Tuning Electrostatic Breakup Characteristics of a Non-Newtonian Fluid for High-Performance Uranium Extraction. Ind. Eng. Chem. Res. 2024, 63, 2384–2394.

- Li, Y.; Wang, Z.; Kong, Q.; Li, B.; Wang, H. Sulfur dioxide absorption by charged droplets in electrohydrodynamic atomization. Int. Commun. Heat Mass Transf. 2022, 137, 106275.

- Chen, C.; Liu, W.; Jiang, P.; Hong, T. Coaxial Electrohydrodynamic Atomization for the Production of Drug-Loaded Micro/Nanoparticles. Micromachines 2019, 10, 125.

- Yu, D.-G.; Gong, W.; Zhou, J.; Liu, Y.; Zhu, Y.; Lu, X. Engineered shapes using electrohydrodynamic atomization for an improved drug delivery. WIREs Nanomed. Nanobiotechnol. 2024, 16, e1964.

- Kim, S.-Y.; Lee, H.; Cho, S.; Park, J.-W.; Park, J.; Hwang, J. Size Control of Chitosan Capsules Containing Insulin for Oral Drug Delivery via a Combined Process of Ionic Gelation with Electrohydrodynamic Atomization. Ind. Eng. Chem. Res. 2011, 50, 13762–13770.

- Dau, V.T.; Nguyen, T.-K.; Dao, D.V. Charge reduced nanoparticles by sub-kHz ac electrohydrodynamic atomization toward drug delivery applications. Appl. Phys. Lett. 2020, 116, 023703.

- Man, Y.; Zhou, C.; Adhikari, B.; Wang, Y.; Xu, T.; Wang, B. High voltage electrohydrodynamic atomization of bovine lactoferrin and its encapsulation behaviors in sodium alginate. J. Food Eng. 2022, 317, 110842.

- Lyu, X.; Wang, X.; Wang, Q.; Ma, X.; Chen, S.; Xiao, J. Encapsulation of sea buckthorn (Hippophae rhamnoides L.) leaf extract via an electrohydrodynamic method. Food Chem. 2021, 365, 130481.

- Guan, Y.; Sha, Y.; Wu, H.; Zheng, J.; He, B.; Liu, Y.; Lei, Y.; Huang, Y. The spraying characteristics of electrohydrodynamic atomization under different nozzle heights and diameters. Exp. Therm. Fluid Sci. 2025, 169, 111551.

- Moreira, K.S.; Di Bonito, L.P.; Glanzer, K.; Carrasco-Munoz, A.; Di Natale, F.; Marques, J.P.M.; Gabriel, P.A.; Oliveira, M.E.; Agostinho, L.L.F. Electric current based automatic classification and operation of EHDA modes. J. Aerosol Sci. 2025, 190, 106648.

- Rosell-Llompart, J.; Grifoll, J.; Loscertales, I.G. Electrosprays in the cone-jet mode: From Taylor cone formation to spray development. J. Aerosol Sci. 2018, 125, 2–31.

- Wang, J.; Dong, T.; Cheng, Y.; Yan, W.-C. Machine Learning Assisted Spraying Pattern Recognition for Electrohydrodynamic Atomization System. Ind. Eng. Chem. Res. 2022, 61, 8495–8503.

- Ma, M.; Zou, Y.; Huang, Z. Deep learning-based automated morphology classification of Electrospun ultrafine fibers from M44 element image of muller matrix. Optik 2020, 206, 164261.

- Wang, J.-X.; Wang, X.; Ran, X.; Cheng, Y.; Yan, W.-C. Deep Learning based spraying pattern recognition and prediction for electrohydrodynamic system. Chem. Eng. Sci. 2024, 295, 120163.

- Kim, M.J.; Song, J.Y.; Hwang, S.H.; Park, D.Y.; Park, S.M. Electrospray mode discrimination with current signal using deep convolutional neural network and class activation map. Sci. Rep. 2022, 12, 16281.

- Sun, J.; Jing, L.; Fan, X.; Gao, X.; Liang, Y.C. Electrohydrodynamic printing process monitoring by microscopic image identification. Int. J. Bioprint. 2018, 5, 164.

- Zhou, J.; Shu, X.; Zhang, J.; Yi, F.; Jia, C.; Zhang, C.; Kong, X.; Zhang, J.; Wu, G. A deep learning method based on CNN-BiGRU and attention mechanism for proton exchange membrane fuel cell performance degradation prediction. Int. J. Hydrogen Energy 2024, 94, 394–405.

- Jia, C.; He, H.; Zhou, J.; Li, K.; Li, J.; Wei, Z. A performance degradation prediction model for PEMFC based on bi-directional long short-term memory and multi-head self-attention mechanism. Int. J. Hydrogen Energy 2024, 60, 133–146.

- Chai, X.; Zhao, M.; Li, J.; Li, J. Image small target detection in complex traffic scenes based on Yolov8 multiscale feature fusion. Alex. Eng. J. 2025, 126, 578–590.

- Terven, J.; Córdova-Esparza, D.-M.; Romero-González, J.-A. A Comprehensive Review of YOLO Architectures in Computer Vision: From YOLOv1 to YOLOv8 and YOLO-NAS. Mach. Learn. Knowl. Extr. 2023, 5, 1680–1716.

- Kang, S.; Hu, Z.; Liu, L.; Zhang, K.; Cao, Z. Object Detection YOLO Algorithms and Their Industrial Applications: Overview and Comparative Analysis. Electronics 2025, 14, 1104.

- Sirisha, U.; Praveen, S.P.; Srinivasu, P.N.; Barsocchi, P.; Bhoi, A.K. Statistical Analysis of Design Aspects of Various YOLO-Based Deep Learning Models for Object Detection. Int. J. Comput. Intell. Syst. 2023, 16, 126.

- Vijayakumar, A.; Vairavasundaram, S. YOLO-based Object Detection Models: A Review and its Applications. Multimed. Tools Appl. 2024, 83, 83535–83574.

- Duan, X.; Wang, P.; Hu, Y.; Li, H.; Yang, S.; Zhu, Y. YOLOv8-DuckPluck: A lightweight target detection model for cherry valley duck feather pecking site detection. Poult. Sci. 2025, 104, 105484.

- Wang, Z.; Zhang, Y.; Zhang, S. Real-time personal protective equipment detection and classification with YOLOv8 multi-scale fusion. J. Real-Time Image Process. 2025, 22, 131.

- Guo, A.; Sun, K.; Zhang, Z. A lightweight YOLOv8 integrating FasterNet for real-time underwater object detection. J. Real-Time Image Process. 2024, 21, 49.

| Model | Precision | Recall | mAP@0.5 | GFLOPs | Params (M) |
|---|---|---|---|---|---|
| Faster R-CNN | 0.971 | 0.986 | 0.989 | 30.6 | 13.3 |
| EfficientDet | 0.974 | 0.987 | 0.990 | 55 | 20.7 |
| SSD | 0.976 | 0.988 | 0.991 | 30.7 | 13.3 |
| DETR | 0.989 | 0.986 | 0.991 | 86 | 41 |
| YOLOv3 | 0.987 | 0.986 | 0.991 | 154.7 | 61.5 |
| YOLOv5 | 0.858 | 0.870 | 0.899 | 16.5 | 7.2 |
| YOLOv6 | 0.991 | 0.989 | 0.993 | 42.8 | 15.9 |
| YOLOv9 | 0.992 | 0.993 | 0.994 | 58.3 | 18.7 |
| YOLOv10 | 0.987 | 0.996 | 0.994 | 24.5 | 8.03 |
| YOLOv11 | 0.987 | 0.996 | 0.994 | 21.3 | 9.4 |
| YOLOv8 | 0.995 | 0.995 | 0.995 | 23.4 | 9.8 |
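
In the mAP@0.5 column, a predicted box counts as a true positive only when its IoU with a same-class ground-truth box is at least 0.5; precision and recall then follow from the true-positive, false-positive and false-negative counts. The Python sketch below illustrates that matching step for a single confidence threshold. It is a simplified illustration, not the evaluation pipeline used to produce the table. The tuple layouts, the greedy highest-confidence-first matching and the function names `iou` and `precision_recall` are assumptions made for this example, and `class_id` stands for a hypothetical integer label such as an atomization-mode index.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels (assumed format)
Pred = Tuple[float, int, Box]            # (confidence, class_id, box)
Truth = Tuple[int, Box]                  # (class_id, box)

def iou(a: Box, b: Box) -> float:
    """Intersection over Union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0

def precision_recall(preds: List[Pred], truths: List[Truth],
                     iou_thr: float = 0.5) -> Tuple[float, float]:
    """Greedy matching: higher-confidence predictions claim ground truths first.
    Each ground truth is matched at most once, and only by the same class."""
    matched = [False] * len(truths)
    tp = 0
    for _, cls, box in sorted(preds, key=lambda p: p[0], reverse=True):
        best_j, best_iou = -1, iou_thr
        for j, (t_cls, t_box) in enumerate(truths):
            if matched[j] or t_cls != cls:
                continue
            overlap = iou(box, t_box)
            if overlap >= best_iou:  # must reach the threshold and beat earlier candidates
                best_j, best_iou = j, overlap
        if best_j >= 0:
            matched[best_j] = True
            tp += 1
    fp = len(preds) - tp   # unmatched or duplicate detections
    fn = len(truths) - tp  # ground-truth objects that were never detected
    precision = tp / (tp + fp) if preds else 0.0
    recall = tp / (tp + fn) if truths else 0.0
    return precision, recall

truths = [(0, (0, 0, 10, 10)), (1, (20, 20, 30, 30))]
preds = [(0.9, 0, (1, 1, 11, 11)),    # IoU 81/119 with the class-0 box: true positive
         (0.8, 1, (40, 40, 50, 50)),  # no overlap: false positive
         (0.7, 0, (0, 0, 10, 10))]    # class-0 box already claimed: duplicate, false positive
print(precision_recall(preds, truths))  # (0.333..., 0.5)
```

A full mAP@0.5 computation would repeat this matching while sweeping the confidence threshold, build a precision-recall curve for each class, integrate it to obtain AP, and average AP over the classes. The sketch stops at a single operating point.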

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Ran, X.; Xu, H.; Wei, X.; Wang, J.; Yan, W.-C. Real-Time Detection of Electrohydrodynamic Atomization Modes via a YOLOv8-Based Deep Learning Model. Processes 2026, 14, 313. https://doi.org/10.3390/pr14020313

Ran X, Xu H, Wei X, Wang J, Yan W-C. Real-Time Detection of Electrohydrodynamic Atomization Modes via a YOLOv8-Based Deep Learning Model. Processes. 2026; 14(2):313. https://doi.org/10.3390/pr14020313

Chicago/Turabian Style: Ran, Xiong, Heming Xu, Xiangfei Wei, Jinxin Wang, and Wei-Cheng Yan. 2026. "Real-Time Detection of Electrohydrodynamic Atomization Modes via a YOLOv8-Based Deep Learning Model" Processes 14, no. 2: 313. https://doi.org/10.3390/pr14020313

APA Style: Ran, X., Xu, H., Wei, X., Wang, J., & Yan, W.-C. (2026). Real-Time Detection of Electrohydrodynamic Atomization Modes via a YOLOv8-Based Deep Learning Model. Processes, 14(2), 313. https://doi.org/10.3390/pr14020313