1. Introduction

In recent years, following Germany's “Industry 4.0” initiative, China has proposed “Made in China 2025” as a major strategy for the development of its manufacturing industry [1]. For manufacturing enterprises, effectively monitoring and diagnosing faults in the critical components of core equipment, such as rolling bearings, is crucial for raising the level of manufacturing. It is also key to achieving intelligent equipment and promoting the transformation of the manufacturing industry [2]. The “Mechanical Engineering Discipline Development Strategic Report 2021–2035” likewise lists improving the reliability and safety of major equipment operation as an important development direction for manufacturing [3].

As the complexity of large equipment continues to increase, rolling bearings, as core components, must operate under higher loads and higher speeds, which significantly raises the probability of bearing failure. A failure can force downtime for maintenance and delay the manufacturing process. Therefore, the ability to diagnose the operating condition of rolling bearings quickly and accurately is of utmost importance for the manufacturing industry [4].

Rolling bearing fault signals exhibit strong nonlinearity and non-stationarity and are characterized by periodic impacts. With the continuous development of signal processing technology, research on rolling bearing fault diagnosis has made some progress in recent years. Huang et al. [5] proposed Empirical Mode Decomposition (EMD), a time–frequency analysis method for adaptive signal decomposition, but this method has the following shortcomings: (1) endpoint effects and mode mixing occur during decomposition; (2) EMD lacks a rigorous theoretical derivation [6]. To address these issues, Bonizzi et al. proposed a novel adaptive signal analysis method called Singular Spectrum Decomposition (SSD) [7]. SSD evolves from the Singular Spectrum Analysis (SSA) algorithm and adaptively reconstructs single-component signals in order from high to low frequencies [8]. This provides a new approach for analyzing nonlinear and non-stationary vibration time series. Compared with EMD, Ensemble Empirical Mode Decomposition (EEMD), and Variational Mode Decomposition (VMD), SSD has a solid mathematical foundation, higher decomposition accuracy, and better suppression of spurious components and mode mixing [6]. SSD has been successfully applied to the analysis of vibration signals from rotating machinery with good results. However, preliminary research has found that when SSD uses the residual energy ratio of the vibration signal as its iteration termination condition, it cannot accurately decompose weak components with low energy ratios. Therefore, this paper proposes an enhanced Singular Spectrum Decomposition method that uses the Jensen–Shannon (JS) distance and the variance contribution rate as supplementary iteration termination conditions, improving the precision of SSD in decomposing weak signals and suppressing spurious components.

The modal components produced using SSD contain fault information obtained from the original signal. Traditional vibration signal demodulation methods can cause modulation effects at both ends of the demodulated signal [

8], leading to errors in extracting accurate fault frequencies. Information entropy can effectively describe the uncertainty of various possible events occurring in an information source [

9]. For rolling bearing vibration signals, the uncertainty of vibration signals under different bearing conditions can also be represented by information entropy. Approximate entropy [

10], sample entropy [

11], and permutation entropy [

12] are commonly used methods for uncertainty analysis in fault diagnosis. However, approximate entropy has selectivity towards the target object, and both approximate entropy and sample entropy use hard threshold criteria, which can affect their results [

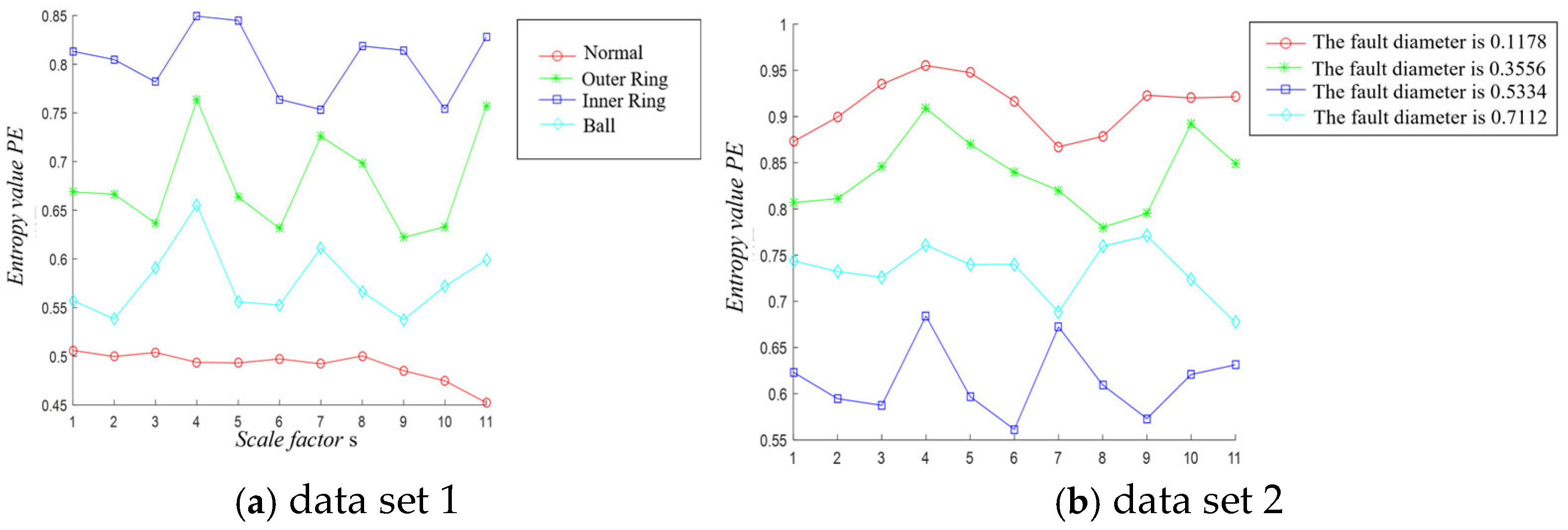

13]. Permutation entropy reflects the uncertainty and complexity of vibration signals on a single scale, whereas fault vibration signal characteristics are often contained across multiple scales. Therefore, multi-scale permutation entropy has been proposed. Zheng Jinde et al. [

14] conducted fault diagnosis based on multi-scale permutation entropy for bearing faults, but multi-scale permutation entropy still has the following drawbacks: (1) the effectiveness of quantifying fault characteristics depends on the selection of parameters; (2) in multi-scale permutation entropy, the time series length shortens and original information is lost after coarse-graining, and the dynamic mutation phenomena of the original signal can be neutralized when the time series is averaged after coarse-graining [

15]. To address the first issue, Rao Guoqiang et al. [

16] determined the parameters for multi-scale permutation entropy using independent determination methods (mutual information method and pseudo-nearest neighbor method) through an experimental comparison of rolling bearing full-life data. Chen Dongning et al. [

17] achieved the classification and identification of bearing faults using Fast Variational Mode Decomposition (FVMD), optimized multi-scale permutation entropy, and GK fuzzy clustering methods. For the second issue, Wang Gongxun et al. [

15] proposed multi-scale mean permutation entropy and achieved good results in extracting rolling bearing fault features. However, the effectiveness of multi-scale mean permutation entropy in reflecting the uncertainty of vibration signals is also highly dependent on its parameters (delay time, embedding dimension, data length, and scale factor). Therefore, this paper uses particle swarm optimization to adaptively select parameters for MMPE to maximize its fault feature extraction performance.

Once the fault features of the vibration signal have been thoroughly extracted, using this feature information to distinguish fault patterns is a crucial step. Support Vector Machines (SVM) have become a popular classification method in recent years owing to their solid theoretical foundation and superior classification performance in fields such as pattern recognition and machine learning [18]. However, because the penalty factor c and the kernel function parameter g affect the classification results of SVM [19], this paper uses particle swarm optimization to select these parameters.

In summary, to fully leverage the strengths of SSD, MMPE, and SVM in signal decomposition, feature extraction, and pattern recognition, this paper improves and combines these methods to diagnose rolling bearing faults under multiple conditions. First, an enhanced Singular Spectrum Decomposition (ESSD) method based on the JS distance and the variance contribution rate is proposed to improve the accuracy of SSD in decomposing weak signals and suppressing spurious components, and the optimal components are selected through correlation analysis. Second, particle swarm optimization (PSO) is introduced to optimize multi-scale mean permutation entropy (MMPE) for the effective extraction of fault features from the optimal components. Finally, PSO is used to select the SVM parameters, and the optimized SVM classifies the features extracted by ESSD + PSO-MMPE, achieving effective diagnosis of rolling bearing faults.

The remainder of this paper is organized as follows.

Section 2 provides a detailed description of the proposed fault diagnosis method.

Section 3 presents the simulation analysis of the proposed method.

Section 4 presents the experimental design and its comparative results.

Section 5 concludes the paper and presents the limitations of the proposed study.

2. Theoretical Foundation

2.1. Singular Spectrum Decomposition

Singular Spectrum Analysis (SSA) is a method for processing nonlinear time series. It is based on the singular value decomposition of a specific matrix constructed from the vibration signal time series. By decomposing and reconstructing the trajectory matrix of this time series, SSA extracts different component sequences from the vibration signal for analysis [8].
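The decompose-and-reconstruct cycle of basic SSA can be sketched in a few lines of NumPy. This is a minimal illustration of standard SSA only (Hankel trajectory matrix, SVD, diagonal averaging), not of the modified SSD trajectory matrix described below; the function names are hypothetical.

```python
import numpy as np

def hankel_trajectory(x, M):
    """Standard SSA trajectory matrix: M lagged rows, K = N - M + 1 columns."""
    x = np.asarray(x, dtype=float)
    K = len(x) - M + 1
    return np.array([x[i:i + K] for i in range(M)])

def diagonal_average(Y):
    """Map an M x K matrix back to a series of length M + K - 1
    by averaging each anti-diagonal (entries with equal i + k)."""
    M, K = Y.shape
    out = np.zeros(M + K - 1)
    counts = np.zeros(M + K - 1)
    for i in range(M):
        for k in range(K):
            out[i + k] += Y[i, k]
            counts[i + k] += 1
    return out / counts

def ssa_components(x, M):
    """One elementary reconstructed series per singular triple (s_r, u_r, v_r)."""
    X = hankel_trajectory(x, M)
    U, s, Vt = np.linalg.svd(X, full_matrices=False)
    return [diagonal_average(s[r] * np.outer(U[:, r], Vt[r])) for r in range(len(s))]
```

For the series {1, 2, 3, 4, 5} with M = 3, `hankel_trajectory` returns the 3 × 3 matrix [[1, 2, 3], [2, 3, 4], [3, 4, 5]], i.e. the SSA trajectory matrix. Because diagonal averaging is linear, the elementary components sum back to the original series; grouping selected components before averaging gives the reconstructed sub-signals.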

SSD originates from SSA and is a recently proposed adaptive signal processing method. It decomposes nonlinear and non-stationary vibration signals into several Singular Spectrum Components (SSCs) and a residual term, ordered from high to low frequencies [6]. The algorithm proceeds as follows:

- (1)

Construct a new trajectory matrix. Given a time series x(n) with a data length of N and an embedding dimension of M, construct an M × N trajectory matrix X. To better understand the matrix construction process, consider the time series x(n) = {1,2,3,4,5} with an embedding dimension M = 3. The corresponding matrix X is

In Equation (1), the 3 × 3 block formed by the three rows and the first three columns of matrix X constitutes the trajectory matrix in SSA. To enhance the oscillatory components of the initial time-domain signal and ensure that the energy of the residual decreases progressively over the iterations, the three elements at the bottom right of matrix X are moved to its top left, yielding the new matrix in Equation (2) that is used for component extraction.

In Equation (2), the left half of matrix X is the new trajectory matrix in SSA.

- (2)

Adaptively select the embedding dimension M. Because the embedding dimension in SSA must be chosen empirically, SSD uses an adaptive rule to select M. Let rj(n) be the residual component at the j-th iteration, expressed as follows:

First, calculate the Power Spectral Density (PSD) of the residual in Equation (3) and identify the frequency fmax of its dominant peak. In the first iteration, if fmax/fs ≤ 10⁻³ (a given threshold), where fs is the sampling frequency, the residual is considered to be a major trend term and M = N/3. Otherwise, and in every iteration j > 1, M = 1.2(fs/fmax), which improves the effectiveness of the SSA-based analysis.

- (3)

Reconstruct the SSCs in order from high frequency to low frequency. If M = N/3, use the first left singular vector to obtain g1(n). If M = 1.2(fs/fmax), select the left singular vectors of all eigentriples whose spectra have a dominant frequency within [fmax − ∆f, fmax + ∆f], together with the eigentriples that contribute most to the energy of the main component, forming the subset Ij = {i1, i2, …, ip}. Finally, reconstruct the component by diagonal averaging of the matrix.

- (4)

Set the iteration termination condition. Subtract the component sequences estimated so far from the original signal to obtain the residual term v(j+1), and calculate the normalized mean squared error σNMSE(j) between this residual and the original time series.

If the value of Equation (4) is less than the set threshold (default 0.01), the decomposition terminates. Otherwise, the residual is taken as the new signal and the iteration is repeated until the termination condition is met, yielding the final singular spectrum decomposition result.
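Steps (2) and (4) can be sketched as follows. This is a minimal sketch under stated assumptions: the PSD is estimated with a plain FFT periodogram, fmax is taken as the frequency of the largest PSD bin, M is rounded and clipped to [2, N/3], and a residual whose dominant frequency is exactly 0 is treated as a trend. None of these details are specified above, the eigentriple grouping of step (3) is omitted, and all function names are hypothetical.

```python
import numpy as np

def dominant_frequency(r, fs):
    """Frequency (Hz) of the largest bin of a simple |FFT|^2 periodogram."""
    r = np.asarray(r, dtype=float)
    psd = np.abs(np.fft.rfft(r)) ** 2
    freqs = np.fft.rfftfreq(len(r), d=1.0 / fs)
    return freqs[np.argmax(psd)]

def ssd_embedding_dimension(r, fs, iteration, trend_ratio=1e-3):
    """Adaptive rule: M = N/3 for a trend at the first iteration, else 1.2*fs/fmax."""
    N = len(r)
    f_max = dominant_frequency(r, fs)
    # Assumption: a pure-DC dominant peak is also treated as a trend (avoids 1/0).
    if f_max == 0 or (iteration == 1 and f_max / fs <= trend_ratio):
        return N // 3
    # Assumption: round to an integer and clip to [2, N // 3].
    return int(min(max(round(1.2 * fs / f_max), 2), N // 3))

def nmse(residual, x):
    """Energy of the residual relative to the energy of the original series."""
    return np.sum(np.asarray(residual) ** 2) / np.sum(np.asarray(x) ** 2)

def should_stop(residual, x, threshold=0.01):
    """SSD termination test with the default threshold of 0.01."""
    return nmse(residual, x) < threshold
```

For a 50 Hz sine sampled at 1 kHz, the rule gives M = 1.2 · 1000 / 50 = 24; a residual holding 5% of the original amplitude has an energy ratio of 0.0025 and stops the iteration.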

2.2. Enhanced Singular Spectrum Decomposition Algorithm

From the principles of the SSD algorithm described above, the SSCs are generated through iterative decomposition. After each decomposition, the ratio of the residual energy to the energy of the original signal is compared with a set threshold; if the ratio is below the threshold, the iteration stops, the decomposition ends, and the components are output. The performance of SSD therefore depends on this manually set termination threshold (default 0.01), and an inappropriate threshold directly reduces the accuracy of the decomposition. Previous research has shown that when a signal contains relatively weak components (components with low amplitude and a low energy ratio), relying on the default energy-ratio threshold can prevent SSD from decomposing the signal effectively. This paper therefore proposes an ESSD method that uses the JS distance and the variance contribution rate as supplementary iteration termination conditions, improving the precision of singular spectrum decomposition and suppressing the generation of false components.

2.2.1. Variance Contribution Rate

The variance contribution rate represents the proportion of the total signal variance that is accounted for by the physically meaningful residual signal [20]. It quantifies the influence of different periodic components on the original signal. To reduce the generation of false components, this paper uses the variance contribution rate of the residual signal produced during singular spectrum decomposition as the first supplementary iteration termination condition for SSD. When the variance contribution rate of the residual is below the set value, the residual is considered to contain no valid information, and the decomposition terminates. The calculation is as follows:

A data sequence {x1, x2, …, xN} is collected within the sampling time t. The variance contribution rate is defined as

where σ², E, and N represent the variance, mean, and length of the data sequence, respectively.
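Because the defining equations are not reproduced here, the following is only a sketch under an assumed form: the rate is taken as the variance of the residual divided by the variance of the original signal, each computed as σ² = (1/N)Σ(xi − E)² with E the mean. The function names and any stopping threshold are hypothetical.

```python
import numpy as np

def variance(seq):
    """Population variance: mean squared deviation from the mean E."""
    seq = np.asarray(seq, dtype=float)
    E = seq.mean()
    return np.mean((seq - E) ** 2)

def variance_contribution_rate(residual, original):
    """Assumed form: share of the original variance carried by the residual."""
    return variance(residual) / variance(original)

def stop_by_variance(residual, original, threshold):
    """Hypothetical check: stop when the residual carries too little variance."""
    return variance_contribution_rate(residual, original) < threshold
```

For example, {1, 2, 3, 4} has σ² = 1.25, and a residual equal to half of that sequence has a variance contribution rate of 0.25.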

2.2.2. JS Distance

The JS distance is based on the Jensen–Shannon (JS) divergence, which is derived from the Kullback–Leibler (KL) divergence. Both can be used to measure the difference between two probability distributions, but the KL divergence is asymmetric, which limits its effectiveness as such a measure. The JS divergence removes this asymmetry by comparing each distribution with their average distribution. With base-2 logarithms, the JS distance lies in [0, 1]: it equals 0 when the two probability distributions are identical and 1 when they do not overlap at all [21]. It is defined as follows:

Let the discrete random variable x have k possible values {X1, X2, …, Xk}, and let Y1(x) and Y2(x) be two probability distributions of x. The JS distance is calculated by Equation (7):

Given this ability of the JS distance to measure differences between distributions, this paper uses it as the second supplementary iteration termination condition of the singular spectrum decomposition algorithm, enhancing the algorithm's ability to decompose weak signals accurately.
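A minimal sketch of the standard Jensen–Shannon divergence in bits is shown below; it is an illustration, not the paper's implementation. How the two distributions are obtained from the signals during ESSD (for example, normalized amplitude histograms) is not specified here and is left as an assumption. SciPy's `scipy.spatial.distance.jensenshannon` returns the square root of this quantity; with base 2, both lie in [0, 1].

```python
import numpy as np

def kl_divergence(p, q):
    """KL(p || q) in bits; terms with p = 0 contribute nothing."""
    mask = p > 0
    return np.sum(p[mask] * np.log2(p[mask] / q[mask]))

def js_divergence(p, q):
    """Symmetric JS divergence via the average distribution m = (p + q) / 2."""
    p = np.asarray(p, dtype=float)
    q = np.asarray(q, dtype=float)
    p, q = p / p.sum(), q / q.sum()   # normalize to probability distributions
    m = 0.5 * (p + q)                 # m > 0 wherever p > 0 or q > 0
    return 0.5 * kl_divergence(p, m) + 0.5 * kl_divergence(q, m)
```

Identical distributions give 0, and distributions with disjoint supports, such as [1, 0] and [0, 1], give exactly 1; swapping the arguments does not change the value.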

2.3. Multi-Scale Permutation Entropy

Multi-scale permutation entropy is composed of the permutation entropy of time series at different scales s. The specific calculation process is as follows:

- (1)

Perform coarse-graining on the time series X = (Xi, i = 1, 2, …, N) to obtain the coarse-grained series yj(s), i.e.,

where s is the scale factor, N is the length of the vibration signal time series, and [N/s] denotes the integer part of N/s.

- (2)

Perform m-dimensional phase space reconstruction on the coarse-grained time series, i.e.,

where l denotes the l-th reconstructed component, l = 1, 2, …, [N/s] − (m − 1)τ; m is the embedding dimension; and τ is the time delay.

- (3)

Sort the elements of each reconstructed component in ascending order and record their original positions to obtain the symbol sequence S(r) = (j1, j2, …, jm), where r = 1, 2, …, R and R ≤ m!. Calculate the probability Pr of occurrence of each symbol sequence.

- (4)

Calculate the permutation entropy Hp of each coarse-grained time series.

- (5)

Normalize Hp to obtain the multi-scale permutation entropy, which is

The range of Hp is from 0 to 1. The magnitude of Hp indicates the randomness and complexity of the time series: a larger Hp value signifies greater randomness, while a smaller Hp value indicates a more regular time series.
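Steps (1) to (5) can be sketched in NumPy as follows. This sketch assumes the usual normalization by ln(m!) and breaks ties between equal values by their order of appearance (a stable sort); the function names and default parameters are illustrative rather than the values used in this paper.

```python
import math
import numpy as np

def coarse_grain(x, s):
    """Non-overlapping window means: y_j = mean of x over window j, j = 1..[N/s]."""
    x = np.asarray(x, dtype=float)
    n = len(x) // s
    return x[: n * s].reshape(n, s).mean(axis=1)

def permutation_entropy(y, m=3, tau=1):
    """Permutation entropy normalized by ln(m!), so the result lies in [0, 1]."""
    y = np.asarray(y, dtype=float)
    L = len(y) - (m - 1) * tau                  # number of reconstructed components
    counts = {}
    for l in range(L):
        vector = y[l : l + (m - 1) * tau + 1 : tau]         # m delayed samples
        pattern = tuple(np.argsort(vector, kind="stable"))  # ordinal symbol sequence
        counts[pattern] = counts.get(pattern, 0) + 1
    p = np.array(list(counts.values()), dtype=float) / L
    return float(-np.sum(p * np.log(p)) / math.log(math.factorial(m)))

def multiscale_pe(x, scales, m=3, tau=1):
    """Permutation entropy of the coarse-grained series at each scale s."""
    return [permutation_entropy(coarse_grain(x, s), m, tau) for s in scales]
```

A strictly increasing series produces a single ordinal pattern and therefore an entropy of 0, whereas white noise uses all m! patterns almost equally and gives a value close to 1.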

2.4. Multi-Scale Mean Permutation Entropy

Compared with multi-scale permutation entropy, multi-scale mean permutation entropy preserves more of the characteristic information of the time series and reduces sampling errors and sample expansion [1]. The principle is as follows:

- (1)

Replace the coarse-grained time series in the multi-scale permutation entropy with a mean-processed time series to obtain the mean-processed sequence yj(s), i.e.,

When s = 1, the mean-processed sequence is the original sequence itself; when s > 1, the mean-processed sequence has length N + 1 − s.

- (2)

The MMPE of the original time series consists of the permutation entropies of the mean-processed time series at scales from 1 to s.
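Because the mean-processed sequence has length N + 1 − s, it corresponds to a sliding (overlapping) window mean of width s. The sketch below makes that assumption explicit and repeats the permutation entropy routine so that the block runs on its own; the names `mean_process` and `mmpe` and the default parameters are hypothetical.

```python
import math
import numpy as np

def mean_process(x, s):
    """Sliding-window mean of width s: length N + 1 - s, equal to x when s = 1."""
    x = np.asarray(x, dtype=float)
    return np.convolve(x, np.ones(s) / s, mode="valid")

def permutation_entropy(y, m=3, tau=1):
    """Permutation entropy normalized by ln(m!)."""
    y = np.asarray(y, dtype=float)
    L = len(y) - (m - 1) * tau
    counts = {}
    for l in range(L):
        pattern = tuple(np.argsort(y[l : l + (m - 1) * tau + 1 : tau], kind="stable"))
        counts[pattern] = counts.get(pattern, 0) + 1
    p = np.array(list(counts.values()), dtype=float) / L
    return float(-np.sum(p * np.log(p)) / math.log(math.factorial(m)))

def mmpe(x, max_scale, m=3, tau=1):
    """MMPE feature vector: permutation entropy of the mean-processed series, scales 1..max_scale."""
    return [permutation_entropy(mean_process(x, s), m, tau) for s in range(1, max_scale + 1)]
```

Compared with coarse-graining, the sliding mean loses only s − 1 samples per scale instead of shrinking the series to [N/s] points, which is the property the text attributes to MMPE.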

2.5. Particle Swarm Optimization Algorithm

In 1995, the American scholar Kennedy and engineer Eberhart first proposed the Particle Swarm Optimization (PSO) algorithm [22]. The algorithm simulates the interaction and competition among particles in a swarm to find the optimal position in the search space of a given problem. The velocity and position update formulas are as follows:

where i is the particle index, i = 1, 2, …, N, and N is the total number of particles in the swarm; Vi is the velocity of particle i; rand() is a random number between 0 and 1; xi is the current position of particle i; c1 and c2 are acceleration constants; w is the inertia weight; pbesti is the best position found so far by particle i; and gbest is the best position found so far by the whole swarm.
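A minimal global-best PSO with inertia weight is sketched below, assuming the common update v ← w·v + c1·rand()·(pbest − x) + c2·rand()·(gbest − x) followed by x ← x + v, with positions clipped to the search bounds. The default values w = 0.7298 and c1 = c2 = 1.49618 are widely used settings and are not taken from this paper; `pso_minimize` is a hypothetical name.

```python
import numpy as np

def pso_minimize(f, lower, upper, n_particles=20, n_iter=100,
                 w=0.7298, c1=1.49618, c2=1.49618, seed=0):
    """Global-best PSO: returns (best position, best objective value)."""
    rng = np.random.default_rng(seed)
    lower = np.asarray(lower, dtype=float)
    upper = np.asarray(upper, dtype=float)
    dim = lower.size
    x = rng.uniform(lower, upper, size=(n_particles, dim))  # initial positions
    v = np.zeros_like(x)                                     # initial velocities
    pbest = x.copy()
    pbest_val = np.array([f(p) for p in x])
    gbest = pbest[np.argmin(pbest_val)].copy()
    for _ in range(n_iter):
        r1 = rng.random((n_particles, dim))   # rand() for the cognitive term
        r2 = rng.random((n_particles, dim))   # rand() for the social term
        v = w * v + c1 * r1 * (pbest - x) + c2 * r2 * (gbest - x)
        x = np.clip(x + v, lower, upper)      # keep particles inside the bounds
        vals = np.array([f(p) for p in x])
        improved = vals < pbest_val           # update each particle's own best
        pbest[improved] = x[improved]
        pbest_val[improved] = vals[improved]
        gbest = pbest[np.argmin(pbest_val)].copy()
    return gbest, pbest_val.min()
```

Minimizing the sphere function Σp² over [−5, 5]² drives the returned position toward the origin. In this paper, the objective would instead score candidate MMPE parameters or the SVM parameters (c, g), for example by a classification error; that wiring is not shown here.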