1. Introduction

Rolling bearings, often referred to as the joints of industry, are widely used in various industrial scenarios such as aerospace, wind power generation, and manufacturing, serving to reduce friction and support loads. The health condition of rolling bearings is critical to the safe operation of mechanical equipment [1]. In operation, rolling bearings often run under complex working conditions and are affected by alternating loads, making them one of the parts that are most prone to failure [2]. Therefore, it is of great significance to carry out research on fault diagnosis technology for rolling bearings.

Traditional fault diagnosis methods typically extract fault characteristics using signal processing techniques and then compare them with fault characteristic frequencies derived from fault mechanism analysis. Li et al. [3] systematically investigated several key factors influencing the performance of Singular Value Decomposition (SVD) and proposed a Correlated SVD (C-SVD) algorithm, which proved effective in bearing fault diagnosis. Liu et al. [4] proposed a sparse time-frequency analysis method, the Time-reassigned Multi-synchrosqueezing S-Transform (TMSSST), which achieves high-resolution time-frequency representation and robust bearing fault diagnosis, outperforming traditional methods in transient feature extraction. Ren et al. [5] investigated the mathematical properties of Time-Synchronous Averaging (TSA) and demonstrated its advantages in quasi-periodic signal processing through its successful application to rolling bearing signal analysis. However, these methods require substantial domain knowledge, extensive diagnostic experience, and a high level of user expertise.

With the development of computer technology, researchers have introduced machine learning algorithms into the field of fault diagnosis. Cao et al. [6] extracted frequency-domain and time-domain features from bearing vibration signals to construct feature vectors, applied Principal Component Analysis (PCA) for dimensionality reduction, and finally fed the reduced features into a Random Forest (RF) model for fault diagnosis. Zhou et al. [7] employed Variational Mode Decomposition (VMD) to extract bearing fault features, which were then fed into a Support Vector Machine (SVM) for fault classification. Both the feature extraction and classification processes were optimized using the Whale Gray Wolf Optimization Algorithm (WGWOA). Li et al. [8] proposed a method based on wavelet packet decomposition to extract fault features, which were subsequently fed into an SVM optimized by an improved sparrow search algorithm for rolling bearing fault diagnosis. In these methods, machine learning algorithms act merely as classifiers and do not fully exploit the signal information. As a result, the effectiveness of these approaches largely depends on the quality of the extracted features.

In the ImageNet Large Scale Visual Recognition Challenge (ILSVRC) of 2012, a deep learning-based model, AlexNet, achieved a breakthrough victory [9], surpassing traditional image recognition methods and igniting a surge of interest in deep learning. Since then, deep learning has taken center stage in subsequent competitions. Over the following years, deep learning algorithms have demonstrated remarkable performance in domains such as image processing and speech recognition, motivating researchers to explore their potential in fault diagnosis. For example, Huang et al. [10] proposed a multi-scale parallel convolutional neural network integrated with a channel attention mechanism, enabling effective rolling bearing fault diagnosis under noisy conditions and varying working speeds. Zhao et al. [11] applied Continuous Wavelet Transform (CWT) to convert raw vibration signals into images, which were subsequently fed into a Convolutional Deep Belief Network (CDBN) with Gaussian distribution for fault diagnosis. Duan et al. [12] employed Markov Transition Fields (MTF) to transform time-domain signals into two-dimensional feature maps, which were then input into a Multidimensional Supervised Module Convolutional Neural Network (MSMCNN) for fault diagnosis. Guo et al. [13] used Gramian Angular Field (GAF) encoding to transform time-domain signals into images, which were then fed into an improved ConvNeXt network for fault diagnosis.

Despite recent advances, existing fault diagnosis models face two major limitations: they either rely heavily on complex signal preprocessing procedures for performance improvement or lack interpretability due to opaque internal decision mechanisms. In particular, conventional signal decomposition algorithms, which are widely used as preprocessing tools, are separated from the downstream classifier and optimized independently, often leading to suboptimal diagnostic performance.

Recent work in explainable AI (XAI) and physics-informed deep learning (e.g., refs. [14,15,16,17]) has aimed to enhance model transparency or embed domain knowledge into fault diagnosis. However, XAI methods typically extract post hoc features associated with classification, and physics-informed networks require manually designed physics-based components, relying heavily on expert knowledge. In contrast, our framework aims to make the learning process itself interpretable by embedding an adaptive, data-driven signal decomposition mechanism into the network and jointly optimizing reconstruction and classification objectives.

Interestingly, many traditional signal decomposition algorithms share a formal similarity with autoencoders in their transform–inverse transform structure. Signal decomposition can be understood as breaking a complex signal into several basic constituent patterns, while different channels in a convolutional neural network (CNN) act as filters that extract distinct components from the input signal. In this context, the convolution operation in CNNs can be interpreted as waveform matching using convolution kernels. Accordingly, training a CNN corresponds to adaptively learning a set of basis-like functions that are most responsive to fault-related waveforms, which aligns conceptually with classical basis-function signal decomposition methods based on inner products [18,19]. From this viewpoint, a convolutional autoencoder can be regarded as a data-driven signal decomposition framework in which encoder channels capture latent nonlinear components and the decoder performs a corresponding inverse transformation to reconstruct the original signal.

Motivated by this insight, this paper proposes a novel Adversarial Autoencoder (AAE) framework that integrates adaptive signal decomposition directly into the neural network. Specifically, a convolutional autoencoder is designed as the feature extraction module, where each encoder channel can be interpreted as a nonlinear signal component and the decoder acts as the inverse transformation to ensure reconstruction fidelity. A channel attention mechanism is further introduced to adaptively reweight feature components, while a classification network functions as a discriminator to enforce class separability in the latent representation. In this way, the feature decomposition process is directly optimized toward fault discrimination, achieving both adaptivity and interpretability within an end-to-end pipeline. Validation on three benchmark datasets demonstrates the effectiveness and robustness of the proposed method.

2. Theoretical Background

2.1. Convolutional Neural Network

CNNs are currently the mainstream framework of deep learning algorithms due to their excellent feature extraction capabilities, and they are also widely used in the field of fault diagnosis. The convolutional layer plays a central role in CNNs.

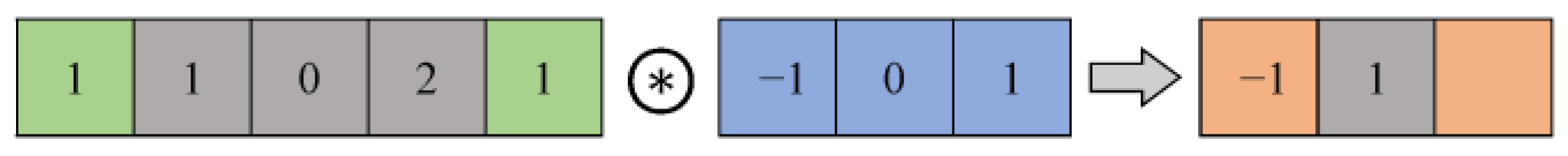

Figure 1 illustrates the 1D convolution operation, where $*$ denotes the convolution operation. Its computational formula is given as follows:

$$y_i = \sum_{j=0}^{k-1} w_j \, x_{i \cdot s + j - p} + b, \quad i = 0, 1, \ldots, L_{out} - 1$$

where $y$ denotes the output of the convolutional layer, $x$ represents the input (with $x_n = 0$ when the index $n$ is out of range), $w$ is the convolution kernel, $k$ is the kernel size, $s$ is the stride, $p$ is the padding, $b$ is the bias, and $L_{out}$ is the output sequence length of the convolutional layer.

From the formula, it can be seen that the convolution operation essentially performs an inner product between the convolution kernel and local segments of the input sequence, which is consistent with signal decomposition methods based on inner-product principles. Therefore, this paper employs convolutional neural networks as the backbone for feature extraction.
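The inner-product view of convolution can be made concrete with a minimal pure-Python sketch. The function name `conv1d`, the example signal, and the example kernel are illustrative assumptions and are not taken from the paper; the output length uses the standard relation floor((L + 2p - k) / s) + 1.

```python
def conv1d(x, w, b=0.0, stride=1, padding=0):
    """1D convolution in the cross-correlation form used by CNN layers.

    Each output sample is the inner product between the kernel `w` and one
    zero-padded local segment of `x`, plus the bias `b`.
    """
    k = len(w)
    # Zero padding: samples outside the valid index range are treated as 0.
    xp = [0.0] * padding + list(x) + [0.0] * padding
    # Standard output-length relation for a strided, padded convolution.
    out_len = (len(x) + 2 * padding - k) // stride + 1
    return [
        sum(w[j] * xp[i * stride + j] for j in range(k)) + b
        for i in range(out_len)
    ]


# Hypothetical example: a short oscillating signal and a difference-like kernel.
signal = [0.0, 1.0, 0.0, -1.0, 0.0, 1.0, 0.0, -1.0]
kernel = [1.0, 0.0, -1.0]

same_length = conv1d(signal, kernel, stride=1, padding=1)  # length preserved
half_length = conv1d(signal, kernel, stride=2, padding=1)  # length halved
```

With a kernel size of 3, a padding of 1, and a stride of 1 the output keeps the input length, while a stride of 2 halves it. The response is largest where the local segment of the signal resembles the kernel, which is the waveform-matching interpretation described above.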

2.2. Residual Networks

As the depth of neural networks increases, they encounter the problem of vanishing and exploding gradients, which makes training deep models difficult. Blindly increasing the number of layers may even degrade network performance. To address this issue, He et al. [20] proposed ResNet in 2016, where shortcut connections are introduced. These connections allow gradients to be propagated without attenuation during backpropagation, effectively alleviating the vanishing and exploding gradient problems. Based on this idea, the proposed network in this paper is constructed using Residual Blocks (RBs), which not only facilitate convergence but also make it easy to add or remove layers when the model suffers from underfitting or overfitting, thereby enabling further improvements. The network used in this study is built upon the RB structure shown in Figure 2.

Each Residual Block consists of three convolutional modules, each comprising a one-dimensional convolutional layer (1D_Conv), a Batch Normalization (BN) layer, and an activation function. The kernel sizes of the three convolutional modules are 1, 3, and 1, respectively, with paddings of 0, 1, and 0 and convolution strides of 1 or 2. The activation function used is ReLU, due to its simplicity, relatively low computational cost, and effectiveness in alleviating the vanishing gradient problem. In addition, the convolutional layer with a kernel size of 1 facilitates adjustment of the number of channels when the input and output dimensions differ, while also enhancing the model’s nonlinearity.

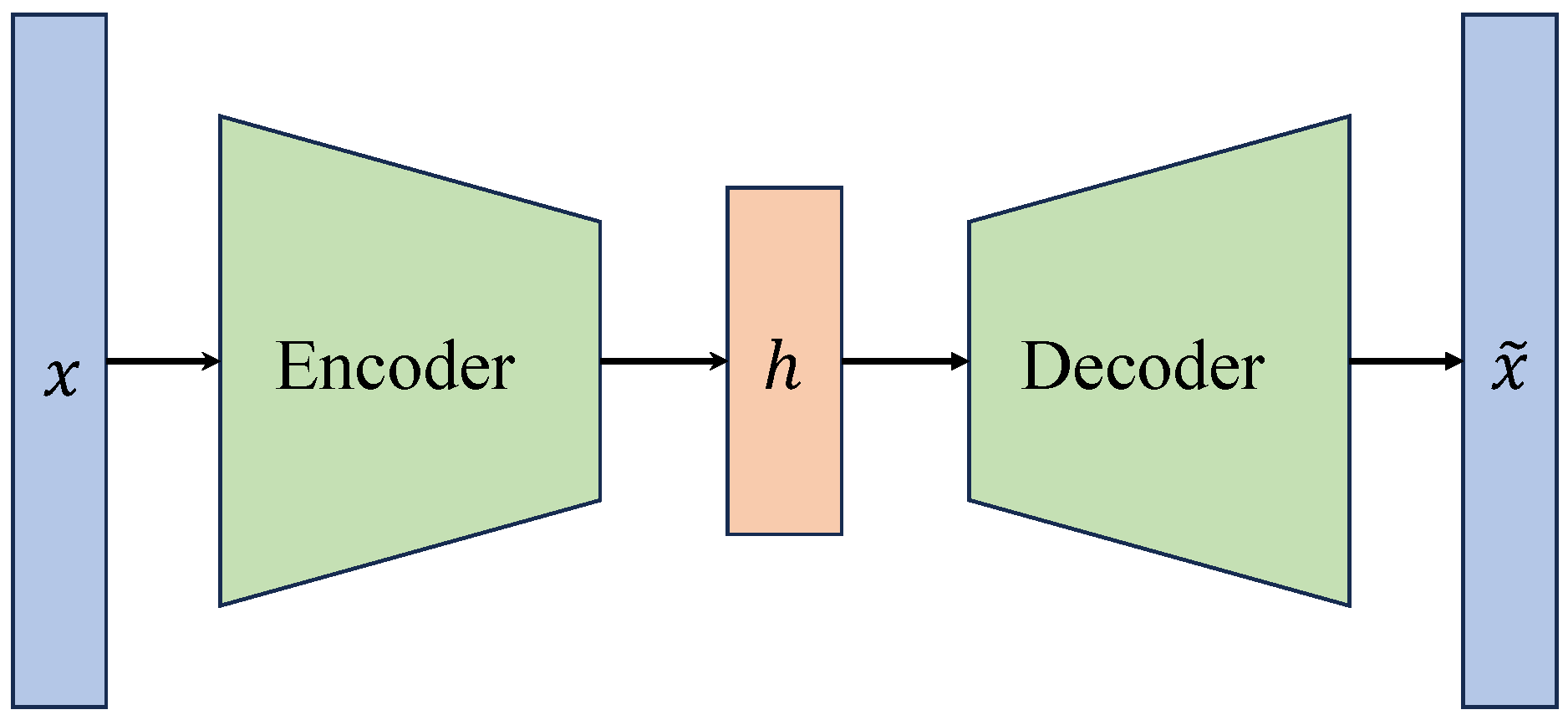

2.3. Autoencoder

The autoencoder is an unsupervised learning model that consists of an encoder and a decoder, as shown in Figure 3.

Autoencoder- and variational autoencoder (VAE)-based methods have been explored to learn representations directly from raw vibration signals. A conventional autoencoder compresses the high-dimensional input signal $x$ into a low-dimensional latent variable $h$, and the decoder attempts to reconstruct the original signal from this latent variable, which forces the network to learn the most representative features of the input and makes the autoencoder an effective feature extractor. However, the latent variable is generally optimized independently for reconstruction or classification, which limits interpretability and prevents decomposition into meaningful signal components. When the autoencoder is implemented using convolutional neural networks (CNNs) and each latent channel is interpreted as a distinct component, the architecture becomes formally analogous to a signal decomposition process, with each channel capturing a latent component and the decoder performing the corresponding inverse transformation to reconstruct the original signal.

$$h = f(x), \qquad x = f^{-1}(h)$$

Here, $f$ represents a functional transformation, $f^{-1}$ represents its inverse transformation, $x \in \mathbb{R}^{1 \times L}$, $L$ is the length of the signal, $h \in \mathbb{R}^{C \times L'}$, $C$ is the number of decomposed signals, corresponding to the number of output channels of the encoder, and $L'$ is the length of the decomposed signal.

This formulation reveals a key insight: a convolutional autoencoder is formally analogous to traditional signal decomposition algorithms. Building on this analogy, we replace conventional handcrafted decomposition procedures with a convolutional autoencoder, thereby embedding an adaptive functional decomposition transformation directly within the neural network. Unlike conventional autoencoders that primarily aim to downscale the input into a compact latent vector, the proposed encoder–decoder architecture performs an upscaling transformation, projecting the original signal into a higher-dimensional representation in which each latent channel preserves the same temporal length as the input signal.

In this expanded feature space, the energy of the signal is redistributed across channels, enabling fault-related components that are inseparable in the original signal domain to become distinguishable. Furthermore, by jointly optimizing reconstruction and classification objectives in an end-to-end manner, the classifier can be interpreted as a metric function that explicitly constrains the encoded representations to remain separable across different fault categories. If the encoder output achieves high classification accuracy under this metric, the resulting transformation can be regarded as a reversible, discriminative signal decomposition that simultaneously ensures interpretability and fault separability. This framework eliminates the need for explicit handcrafted signal decomposition steps, simplifies the diagnostic pipeline, and improves robustness by learning the decomposition process directly from data within a unified optimization framework.

3. Adversarial Autoencoder Model

3.1. Structure and Parameters

The network used in this paper is constructed with Residual Blocks and consists of an encoder, a decoder, and a classifier, as shown in Figure 4.

The encoder is built with seven Residual Blocks, and the decoder is designed to be symmetrical to the encoder. Following the principle of an autoencoder, the number of channels in the network is designed to decrease from large to small. The network parameters for the encoder, decoder and classifier are shown in Table 1.

In this paper, pooling layers and down-sampling layers are omitted in the autoencoder, since such operations are irreversible and hinder signal reconstruction. As a result, the output length of the latent variable layer remains consistent with the length of the input layer. Each channel of the latent variable layer is treated as a component, making the encoder analogous to a functional decomposition transformation, while the decoder serves as its inverse transformation. Subsequently, the output of the latent variable layer is connected to the classifier for classification. AvgPool denotes the average pooling layer with a pooling kernel size of 4, a stride of 2, and a padding of 1. AdaptiveAvgPool refers to the adaptive average pooling layer in PyTorch, which automatically adjusts pooling parameters according to the target output length; in this case, the output length is set to 1, which is equivalent to computing the average value of each channel.
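The effect of the two pooling layers on the sequence length can be checked with a small pure-Python sketch. The function names are hypothetical, and the sketch assumes that padded zeros are counted in the average, which is the default behaviour of PyTorch's `AvgPool1d`.

```python
def avg_pool1d(x, kernel=4, stride=2, padding=1):
    """Average pooling with zero padding; padded zeros are included in the mean."""
    xp = [0.0] * padding + list(x) + [0.0] * padding
    out_len = (len(x) + 2 * padding - kernel) // stride + 1
    return [
        sum(xp[i * stride : i * stride + kernel]) / kernel
        for i in range(out_len)
    ]


def adaptive_avg_pool_to_one(channels):
    """Adaptive average pooling with output length 1: the mean of each channel."""
    return [sum(ch) / len(ch) for ch in channels]


pooled = avg_pool1d([1.0] * 1024)  # one channel of a 1024-point sample
channel_means = adaptive_avg_pool_to_one([[1.0, 2.0, 3.0], [4.0, 4.0, 4.0]])
```

With a kernel size of 4, a stride of 2, and a padding of 1, a 1024-point channel is reduced to 512 points, so each AvgPool layer halves the temporal length before the adaptive layer collapses every channel to a single value.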

3.2. Channel Attention Layer

The encoder’s different components capture distinct fault-related characteristics. To reduce the interference of irrelevant features on the classifier, an attention mechanism [21] is introduced, and a channel attention layer is incorporated into the classifier. This layer enhances the network’s focus on critical features by adaptively reweighting channels. Moreover, since each channel is assigned different weights during training, the autoencoder is encouraged to produce diverse feature representations across channels, thereby improving model performance. The structure of the channel attention layer is illustrated in Figure 4.

The channel attention layer first compresses each channel of the input features using two pooling functions, max pooling and average pooling. The compressed features are then passed through a fully connected layer for affine transformation, followed by a sigmoid activation to obtain the channel weight matrix. Finally, the input features are multiplied by the weight matrix to generate the output features. The channel weight matrix is therefore a function of the input features, the two pooling operations, the fully connected layer, and the activation functions.
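The following sketch shows one plausible arrangement of such a channel attention layer. It is not the paper's exact formula: the shared fully connected layer, the summation of the two pooled branches, and all names (`channel_attention`, `fc_weight`, `fc_bias`) are assumptions made for illustration.

```python
import math


def channel_attention(x, fc_weight, fc_bias):
    """Reweight channels of `x` (a list of C channels, each a list of samples).

    Assumed arrangement: max- and average-pooled descriptors pass through a
    shared fully connected layer, are summed, and a sigmoid yields the weights.
    """
    max_desc = [max(ch) for ch in x]             # max pooling per channel
    avg_desc = [sum(ch) / len(ch) for ch in x]   # average pooling per channel

    def fc(v):
        # Affine transformation: one output per channel.
        return [
            sum(w_ij * v_j for w_ij, v_j in zip(row, v)) + b_i
            for row, b_i in zip(fc_weight, fc_bias)
        ]

    z = [a + m for a, m in zip(fc(avg_desc), fc(max_desc))]
    weights = [1.0 / (1.0 + math.exp(-z_i)) for z_i in z]  # sigmoid
    out = [[w_i * s for s in ch] for w_i, ch in zip(weights, x)]
    return weights, out


# Hypothetical two-channel input with an identity fully connected layer.
features = [[1.0, 2.0, 3.0], [0.0, 0.0, 0.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]
weights, reweighted = channel_attention(features, identity, [0.0, 0.0])
```

In this toy input the first channel carries energy and receives a weight close to 1, while the silent second channel receives the neutral weight 0.5, illustrating how the layer emphasizes informative components.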

3.3. Adversarial Training Strategy

For the training of autoencoders, a common approach is to first pre-train the encoder and decoder and then jointly train the encoder and the classifier [22]. However, to enhance the effectiveness of the features extracted by the encoder, this paper adopts the network training strategy proposed in [23], which draws inspiration from the adversarial training paradigm of Generative Adversarial Networks (GANs). GANs have been widely applied in fault diagnosis, such as domain adversarial learning [24,25] and fault signal generation [26,27], although their training process is often reported to suffer from convergence difficulties and instability. In this paper, inspired by the adversarial training strategy of GANs, the classifier is regarded as a discriminator that guides the gradient descent of the autoencoder. In this way, the autoencoder is encouraged to achieve a low reconstruction error while simultaneously ensuring that the encoded features are more discriminative across different fault categories.

Regarding training stability, no notable convergence difficulties were observed in our experiments. This can be attributed to the fact that the reconstruction loss remains significantly smaller than the classification loss during training, such that the overall optimization process is dominated by the classification objective. Consequently, the adversarial-inspired training strategy does not introduce severe instability in practice while still effectively enhancing the discriminative capability of the learned features. It should be noted that, as with adversarial training in general, convergence behavior may be influenced by factors such as loss weighting and network initialization; however, such issues were not observed under the experimental settings considered in this work.

In practice, an alternating iterative training strategy is adopted: the autoencoder network is updated first, followed by the classifier network. The mean squared error is used as the reconstruction loss for the autoencoder, while cross-entropy is employed as the classification loss.

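Let the model parameters for the encoder, decoder, and classifier be $\theta_e$, $\theta_d$, and $\theta_c$, respectively, and let $L_{rec}$ and $L_{cls}$ denote the reconstruction loss and the classification loss. The encoder and decoder are regarded as one sub-network, while the encoder and classifier constitute another sub-network. These two sub-networks are optimized alternately to ensure a balance between reconstruction and classification.

In the first phase, the encoder and decoder are updated to minimize the reconstruction loss:

$$\theta_e \leftarrow \theta_e - \eta \frac{\partial L_{rec}}{\partial \theta_e}, \qquad \theta_d \leftarrow \theta_d - \eta \frac{\partial L_{rec}}{\partial \theta_d}$$

In the second phase, the encoder and classifier are updated to minimize the classification loss:

$$\theta_e \leftarrow \theta_e - \eta \frac{\partial L_{cls}}{\partial \theta_e}, \qquad \theta_c \leftarrow \theta_c - \eta \frac{\partial L_{cls}}{\partial \theta_c}$$

where $\eta$ represents the learning rate, and $\partial$ denotes the partial derivative operator.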

One complete training iteration consists of executing both phases, enabling the encoder to learn features for reconstruction and classification simultaneously. The hardware, software, and training configurations of the experimental platform are summarized in Table 2, and the training strategy is illustrated in Figure 5.
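The two-phase update can be illustrated with a deliberately tiny toy model in which the encoder, decoder, and classifier are scalar linear maps and the gradients are written out by hand. This is only a sketch of the alternating schedule under those simplifying assumptions; the data, parameter names, learning rate, and plain gradient-descent steps are hypothetical and do not reproduce the paper's network or optimizer.

```python
import math

# Toy data: scalar "signals" with binary labels (hypothetical, for illustration).
DATA = [(-1.0, 0), (-0.5, 0), (0.5, 1), (1.0, 1)]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def losses(p):
    """Mean reconstruction (MSE) and classification (cross-entropy) losses."""
    rec = cls = 0.0
    for x, y in DATA:
        h = p["enc"] * x                              # encoder
        x_hat = p["dec"] * h                          # decoder
        prob = sigmoid(p["cls_w"] * h + p["cls_b"])   # classifier
        rec += (x_hat - x) ** 2
        cls += -(y * math.log(prob) + (1 - y) * math.log(1.0 - prob))
    return rec / len(DATA), cls / len(DATA)


def train_step(p, lr=0.05):
    n = len(DATA)
    # Phase 1: update encoder and decoder on the reconstruction loss.
    g_enc = g_dec = 0.0
    for x, _ in DATA:
        err = p["dec"] * p["enc"] * x - x
        g_enc += 2 * err * p["dec"] * x / n
        g_dec += 2 * err * p["enc"] * x / n
    p["enc"] -= lr * g_enc
    p["dec"] -= lr * g_dec
    # Phase 2: update encoder and classifier on the classification loss.
    g_enc = g_w = g_b = 0.0
    for x, y in DATA:
        h = p["enc"] * x
        delta = sigmoid(p["cls_w"] * h + p["cls_b"]) - y  # dL/dz for cross-entropy
        g_enc += delta * p["cls_w"] * x / n
        g_w += delta * h / n
        g_b += delta / n
    p["enc"] -= lr * g_enc
    p["cls_w"] -= lr * g_w
    p["cls_b"] -= lr * g_b


params = {"enc": 0.5, "dec": 0.5, "cls_w": 0.1, "cls_b": 0.0}
initial = losses(params)
for _ in range(300):
    train_step(params)
final = losses(params)
```

The encoder parameter is the only one touched in both phases, which is how the classifier steers the encoded representation while the decoder keeps the transformation invertible.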

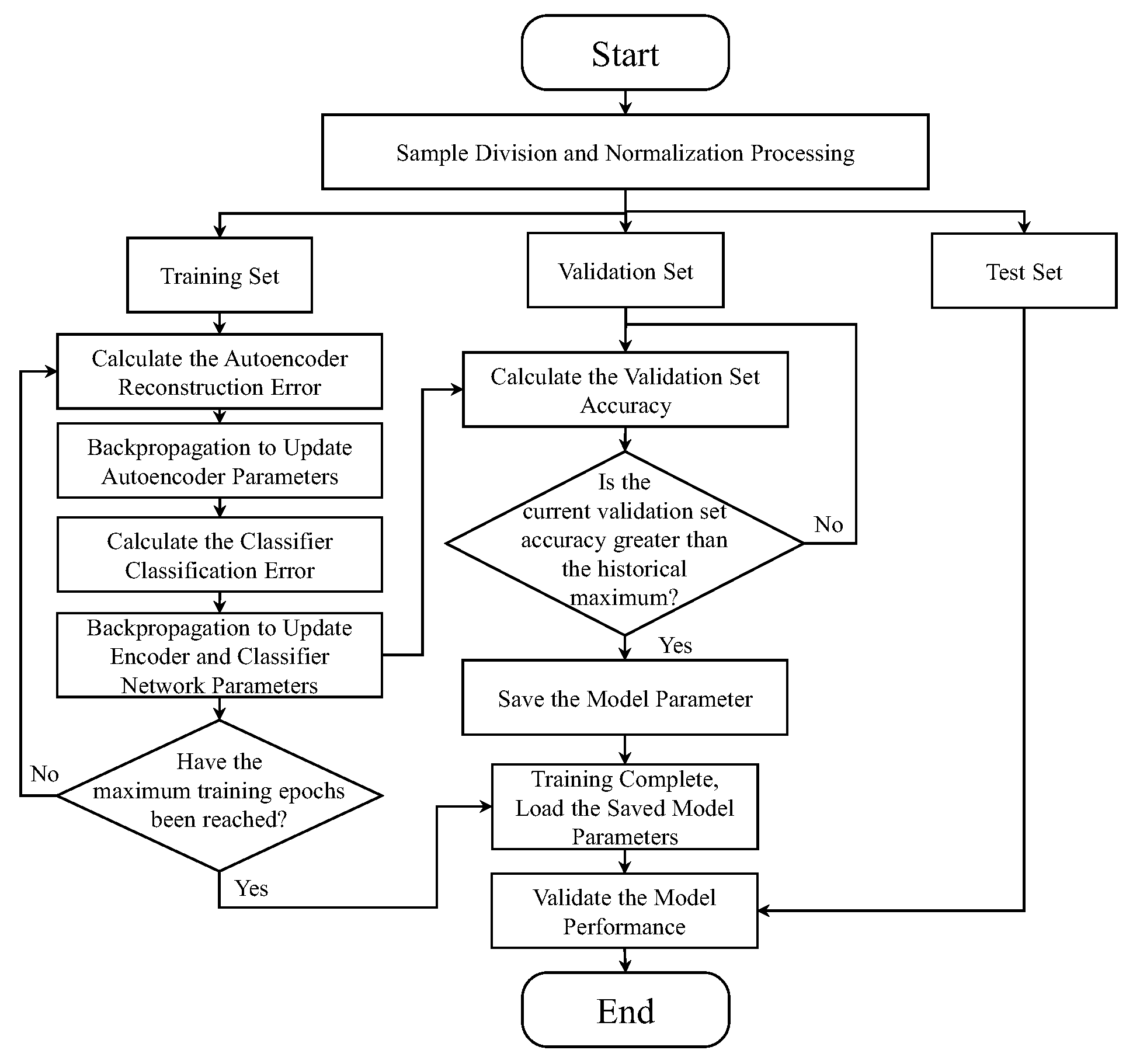

3.4. Model Training

The flowchart of the proposed fault diagnosis method is shown in Figure 6. First, the dataset is segmented into samples of a fixed length and then split into training, validation, and testing sets according to a predefined ratio. Each sample is subsequently normalized. The model is then trained on the training set, and the parameters corresponding to the highest validation accuracy are saved, as this model is considered to have the best generalization ability. Finally, after training, the model is evaluated on the test set to assess its performance.
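The segmentation and splitting steps can be sketched as follows. The function names, the random shuffle, and the seed are assumptions for illustration; the paper specifies only fixed-length segmentation and a predefined ratio, which the experiments later set to 1024 points and 3:1:1.

```python
import random


def segment(signal, length=1024):
    """Cut a recording into non-overlapping samples; a trailing remainder is dropped."""
    n_samples = len(signal) // length
    return [signal[i * length : (i + 1) * length] for i in range(n_samples)]


def split_3_1_1(samples, seed=0):
    """Shuffle (an assumption) and split into training, validation, and test sets."""
    order = list(range(len(samples)))
    random.Random(seed).shuffle(order)
    n_train = len(samples) * 3 // 5
    n_val = len(samples) // 5

    def pick(indices):
        return [samples[i] for i in indices]

    return (
        pick(order[:n_train]),
        pick(order[n_train : n_train + n_val]),
        pick(order[n_train + n_val :]),
    )


# Hypothetical recording: ten full samples plus 100 leftover points.
recording = [float(i % 7) for i in range(10 * 1024 + 100)]
samples = segment(recording)
train_set, val_set, held_out_set = split_3_1_1(samples)
```

Splitting after segmentation keeps every sample in exactly one subset, so the validation accuracy used for model selection is computed on samples the network has not been trained on.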

4. Experiments

4.1. Data Preprocessing

The collected signals are scaled using the min–max normalization method, as shown in Equation (9), to map the amplitudes of different signals to the range [0, 1], thereby reducing the influence of varying signal magnitudes.

$$\hat{x} = \frac{x - \min(x)}{\max(x) - \min(x)} \tag{9}$$

In the equation, $\hat{x} \in \mathbb{R}^{1 \times L}$ represents the normalized signal, where $L$ is the length of the signal, $x$ represents the original signal, and $\max(\cdot)$ and $\min(\cdot)$ denote the maximum and minimum value functions, respectively.
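A minimal sketch of min–max normalization for a single sample is given below. The function name and the handling of a constant signal are assumptions added to keep the example safe to run; the paper does not discuss that edge case.

```python
def min_max_normalize(x):
    """Scale one sample to the range [0, 1] using its own minimum and maximum."""
    lo, hi = min(x), max(x)
    if hi == lo:
        # Assumption: a constant signal is mapped to zeros to avoid division by zero.
        return [0.0 for _ in x]
    return [(v - lo) / (hi - lo) for v in x]


normalized = min_max_normalize([2.0, 4.0, 6.0])
```

Because the minimum and maximum are taken per sample, recordings with different absolute amplitudes are mapped onto the same range before they reach the network.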

4.2. Experimental Dataset

To evaluate the performance of the proposed model, one rolling bearing failure dataset, referred to as Dataset_1, collected on a rotating machinery fault simulation test bench in our laboratory, along with two public datasets, is used for validation.

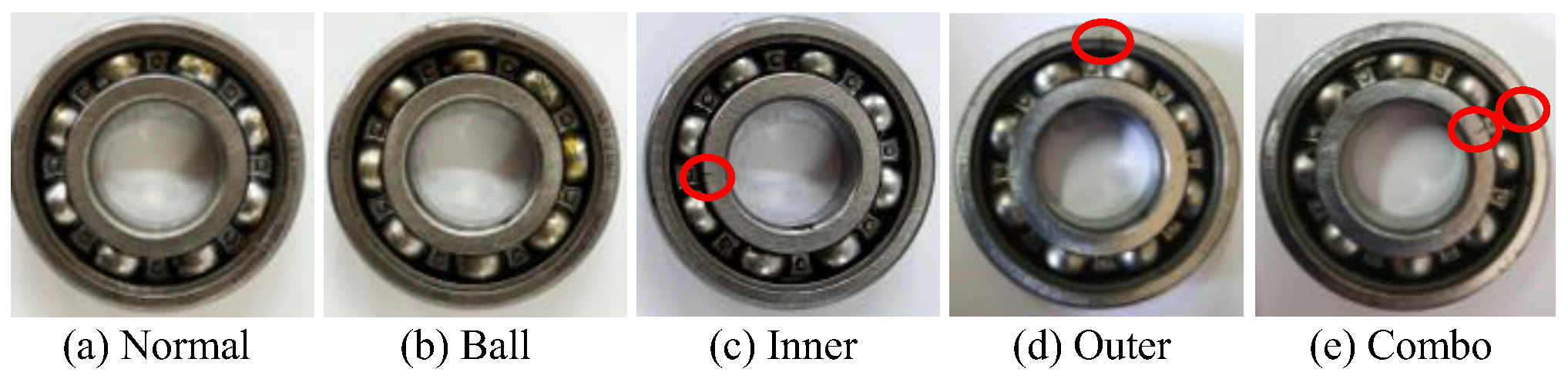

The test rig, shown in Figure 7, consists of an electric motor, a bearing housing, a belt pulley, and other components. The bearing used is an SKF-6204, and faults are introduced by Wire Electrical Discharge Machining (WEDM), as illustrated in Figure 8. Notches of different depths (0.2 mm, 0.4 mm, and 0.6 mm) are machined to simulate varying fault severities, resulting in a total of 13 fault types, as listed in Table 3. The motor operates at 1500 RPM, and acceleration signals along the Z-axis of the bearing housing are collected at a sampling frequency of 12.8 kHz, with each recording lasting 30 s. In addition, the height of the tension pulley is adjusted to vary the bearing load, thereby enriching the dataset. Each fault type is recorded under two different load conditions. The collected data are segmented into 9750 non-overlapping samples of 1024 points each and further divided into training, validation, and test sets with a ratio of 3:1:1.
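As a consistency check, the stated sample count follows from the recording parameters, assuming one 30 s recording per fault type and load condition:

$$N = \frac{13 \times 2 \times 30\ \text{s} \times 12{,}800\ \text{Hz}}{1024} = \frac{9{,}984{,}000}{1024} = 9750$$

With a 3:1:1 ratio, this corresponds to $5850$ training samples and $1950$ samples each for validation and testing.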

The rolling bearing fault dataset from Case Western Reserve University (CWRU) [28] contains fault data under four load conditions and four levels of fault severity, sampled at a frequency of 12 kHz. Fault data with fault depths of 7 mils, 14 mils, and 21 mils at the drive end are selected. Fault data of the same type but under different loads are grouped into a single fault category, resulting in a total of 10 fault categories, each containing data from four different load conditions, as shown in Table 4. The model’s classification performance under varying load conditions is evaluated. The dataset consists of 5927 non-overlapping samples, each with 1024 data points, which are divided into training, validation, and testing sets in a ratio of 3:1:1.

The rolling bearing fault dataset from Huazhong University of Science and Technology (HUST) [29] contains vibration signals of bearings under nine different health conditions and four different speeds, sampled at a frequency of 25.6 kHz. Each dataset includes vibration signals in three directions. Signals of the same fault type at different speeds are merged into a single category, and the acceleration signals along the Z-axis are selected, resulting in data for nine fault categories. Each fault category therefore includes signals from four different speeds, as shown in Table 5.

The model’s classification performance under varying rotational speeds is evaluated. The vibration data are segmented into non-overlapping samples of 1024 points, yielding a total of 9216 samples, which are then divided into training, validation, and testing sets in a ratio of 3:1:1.

4.3. Comparative Experiment

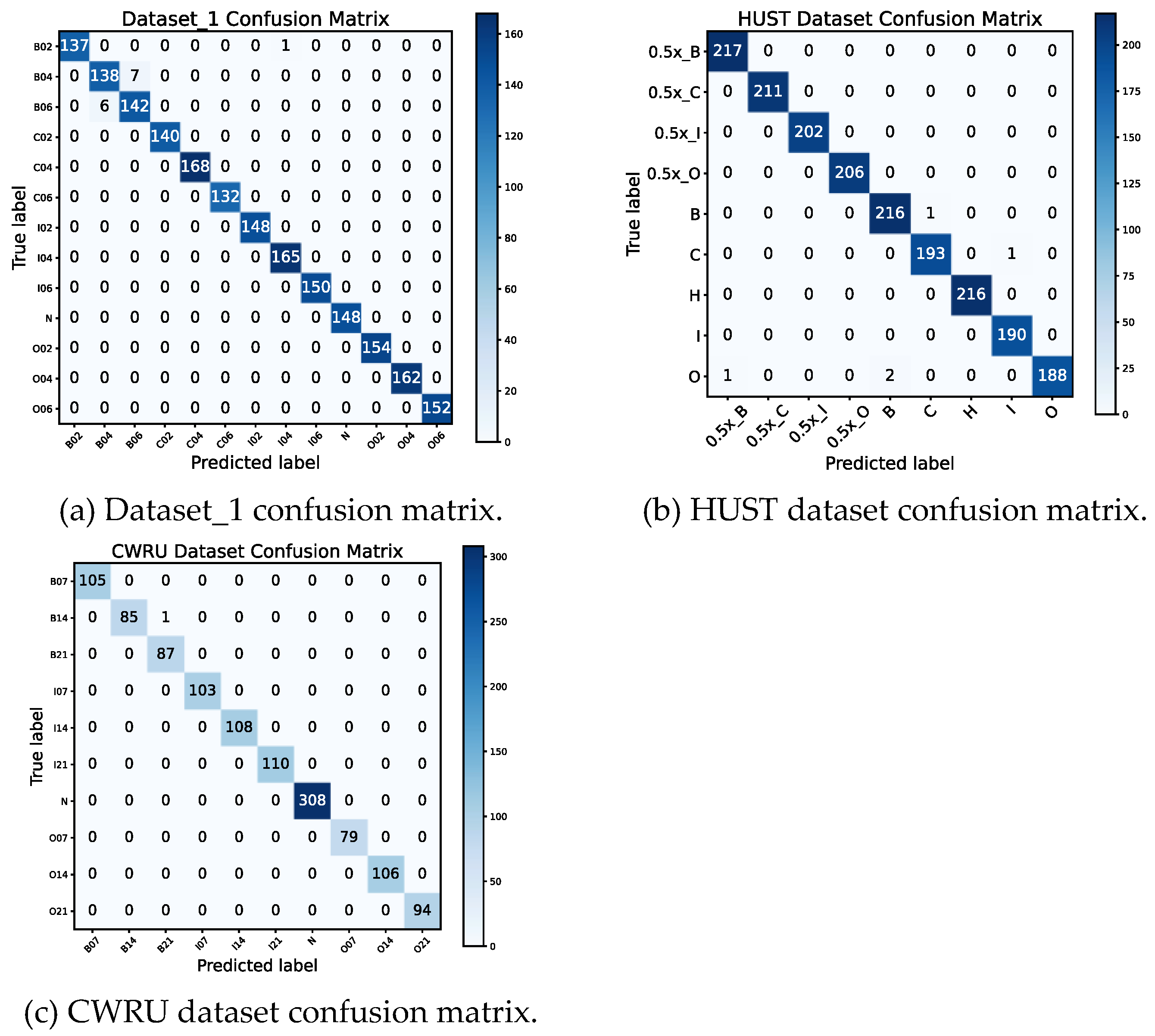

The time-domain signals of the rolling bearings, after standardization processing, were directly fed into the neural network for training on the training set. The classification accuracy of the model was evaluated on the test set, and the average performance over 10 training runs was reported as the final result for each dataset. The proposed model achieved an average reconstruction error of 0.000436 across the three datasets, while the average classification accuracy reached 99.64 ± 0.29%. These results demonstrate that the proposed network is capable of simultaneously achieving low reconstruction error and high classification accuracy, indicating that a reversible signal decomposition process can be simulated by training an autoencoder, and the decomposed signals can be effectively utilized for fault classification. The confusion matrix of the test set is shown in

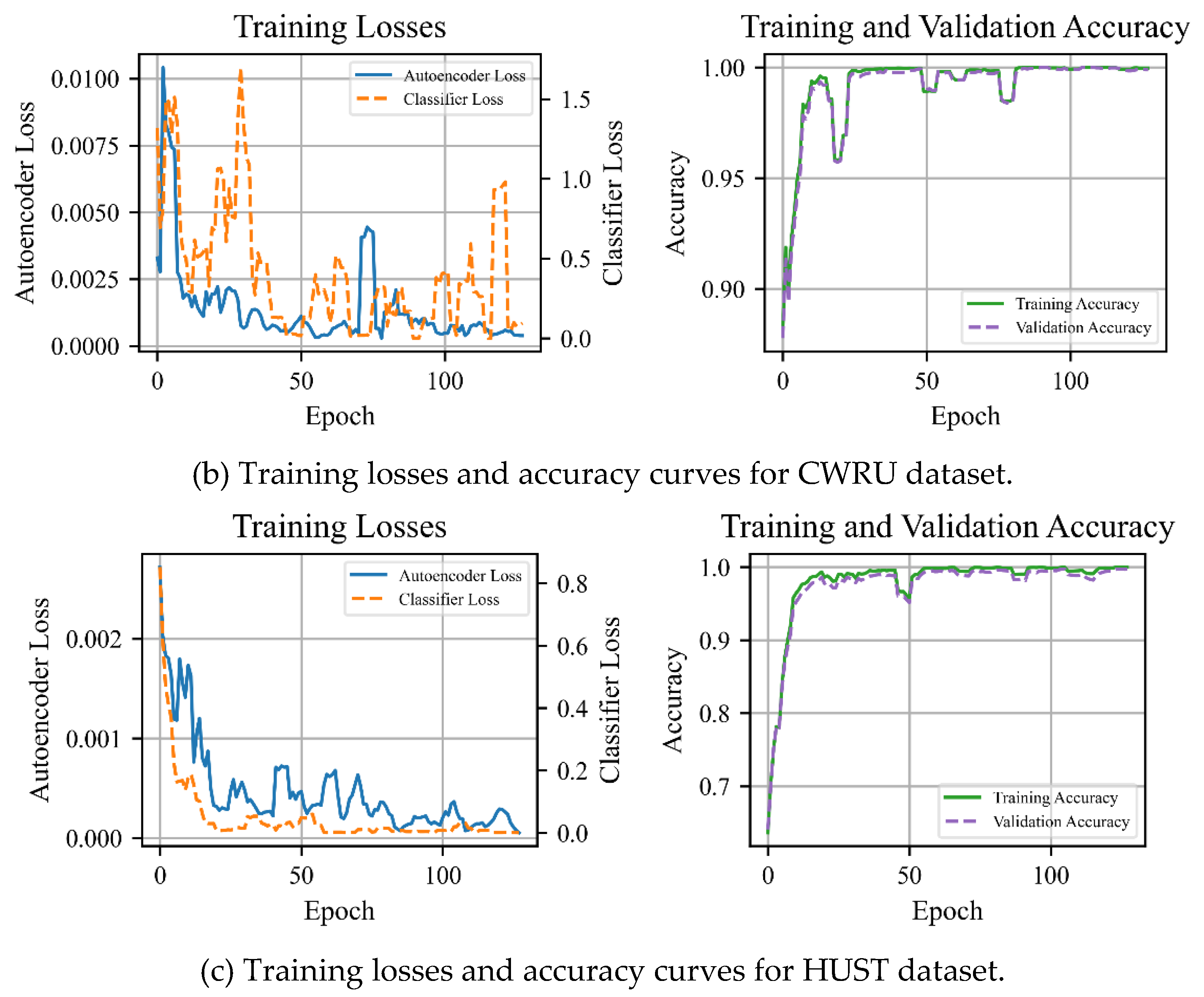

Figure 9, and the training loss curves and validation accuracy curves are presented in

Figure 10.

Dataset_1 and the CWRU dataset correspond to variable load conditions. As shown in the confusion matrix, the network misclassified some samples with different fault depths of rolling elements due to load variations and relative rolling motion. The HUST dataset, on the other hand, corresponds to variable speed conditions, where speed fluctuations caused the network to confuse certain samples of different fault types. Overall, the proposed network is capable of achieving high diagnostic accuracy under both variable speed and variable load conditions.

In addition, adversarial training strategies are often prone to issues such as mode collapse and convergence difficulty [30,31]. However, no such problems were observed during the training of the proposed model. As indicated by the loss curves, the reconstruction error is significantly smaller than the classification error, suggesting that the classification error dominates during training. This may explain why the model did not encounter convergence difficulties.

To further evaluate the performance of the model, the proposed method is compared with existing mechanical fault diagnosis methods. The methods compared in this paper mainly come from Ref. [32], as the selected methods in Ref. [32] provide complete implementation details and publicly available source code, ensuring fair comparison and experimental reproducibility. The compared methods include AlexNet, BiLSTM, CNN, CNN+CWT, ResNet18, and an autoencoder (AE) composed of fully connected layers, as well as an additional model, CNN+EMD, which first decomposes the signals using Empirical Mode Decomposition (EMD) and selects the five components with the highest correlation to the original signal as input to the CNN. All networks use the Adam optimizer, with 128 training epochs and a batch size of 32. The saved model parameters correspond to the highest accuracy achieved on the validation set during training. The AE network for comparison is trained in the same manner as the proposed model. The final results are shown in Table 6, Table 7 and Table 8.
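The component selection used by the CNN+EMD baseline can be sketched as a ranking by correlation with the original signal. The decomposition itself is not shown; `components` stands in for already computed EMD outputs, and the use of the absolute Pearson coefficient is an assumption, since the paper does not state which correlation measure was used.

```python
import math


def pearson(a, b):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        return 0.0  # a constant sequence carries no correlation information
    return cov / math.sqrt(var_a * var_b)


def select_components(signal, components, k=5):
    """Keep the k components most correlated (in magnitude) with the signal."""
    ranked = sorted(
        components, key=lambda c: abs(pearson(signal, c)), reverse=True
    )
    return ranked[:k]


# Hypothetical signal and stand-in components.
signal = [0.0, 1.0, 0.0, -1.0] * 4
components = [
    [0.0] * 16,                  # constant: correlation 0
    [2.0 * v for v in signal],   # scaled copy: correlation 1
    [1.0, -1.0] * 8,             # uncorrelated oscillation
]
best = select_components(signal, components, k=1)
```

This kind of selection is fixed before training and independent of the classifier, which is the separation between preprocessing and classification that the proposed end-to-end framework is designed to remove.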

From Table 6, Table 7 and Table 8, it can be seen that the proposed model achieves high diagnostic accuracy on all three datasets, with the smallest standard deviation. In terms of classification results, Dataset_1 exhibits the highest classification difficulty, followed by the HUST dataset, and then the CWRU dataset. While the proposed model achieves the highest average accuracy on Dataset_1 and the HUST dataset, it does not reach the highest accuracy on the CWRU dataset. This performance drop may be attributed to mild overfitting caused by the relatively excessive network capacity for this simpler dataset. Supporting this, the loss curve in Figure 10b shows larger fluctuations in gradient magnitude during training, suggesting less stable optimization dynamics when the model capacity exceeds the data complexity. In such cases, appropriately reducing the model parameters may lead to improved performance on simpler datasets.

Furthermore, the comparatively lower performance of the fully connected stacked autoencoder (AE) can be explained by two factors. First, fully connected AEs often involve a large number of parameters, leading to inefficient utilization for one-dimensional vibration signals. Second, unlike convolutional autoencoders, fully connected AEs do not explicitly capture local structural characteristics of the signal. Since bearing fault signals typically exhibit localized impulsive patterns, convolutional architectures are better suited to extract these discriminative features, resulting in superior diagnostic performance.

4.4. Ablation Study

To investigate the impact of each module on the model’s performance, the decoder part of the proposed method was removed, leaving only the encoder and classifier to form a classification model, referred to as Model I. The attention layer was then removed from both the proposed method and Model I to form Models II and III, respectively. Ablation experiments were conducted to verify the effectiveness of each component of the model. The experimental results are shown in Table 9, Table 10 and Table 11.

From the results, it can be observed that the introduction of adversarial training, channel attention layers and residual structure can enhance the model’s feature extraction capability. This improvement is particularly evident when the dataset presents a higher classification difficulty. However, when the dataset is relatively simple, overfitting may occur, leading to a decrease in classification accuracy. In such cases, it is appropriate to reduce the number of layers in the model.

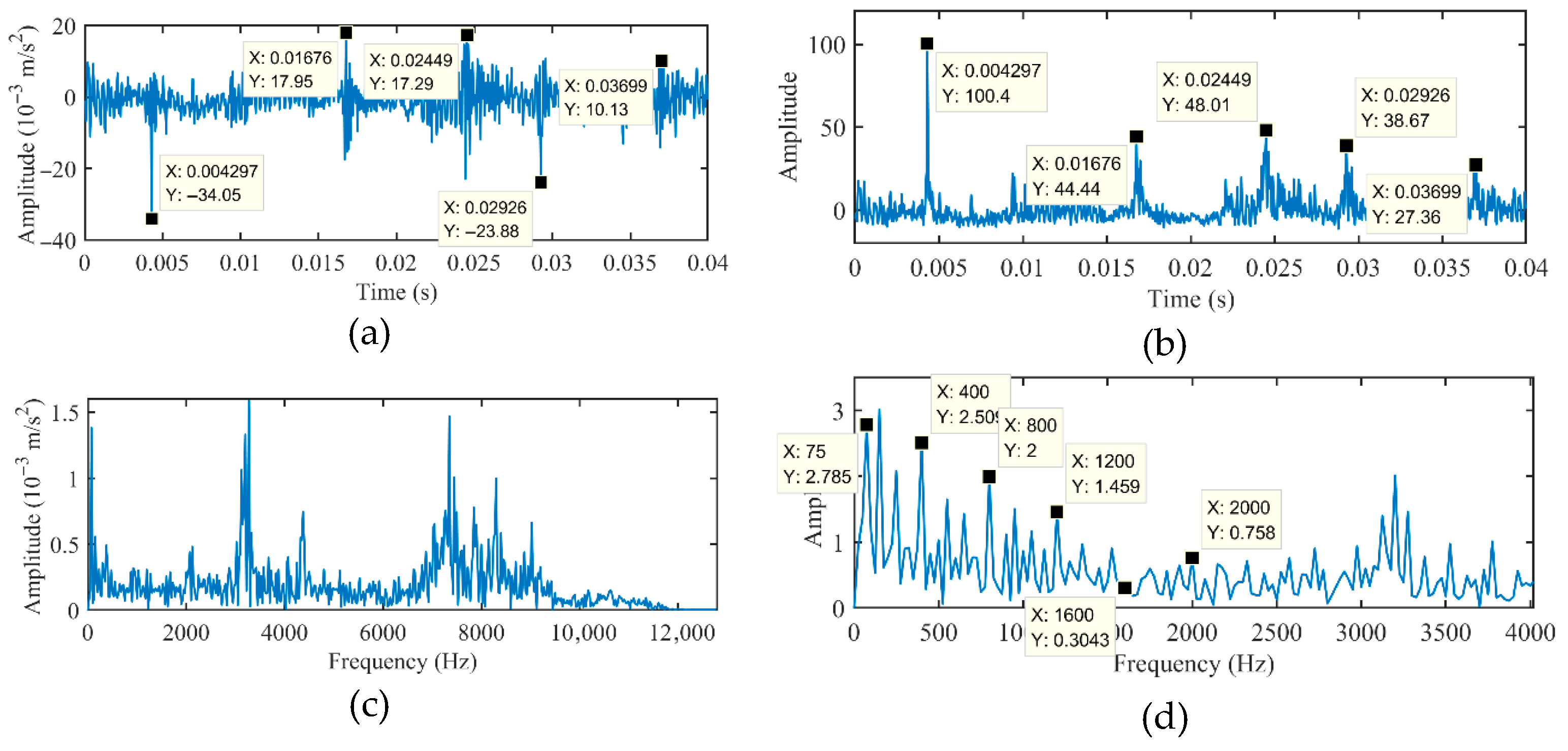

To facilitate observation of the signals decomposed by the encoder, the outputs of the encoder trained on Dataset_1 were summed across channels, and the resulting signals were plotted in both the time and frequency domains for comparison with the original signals, as shown in Figure 11.

For rolling bearing faults, the main characteristic lies in the periodic impact components of the signal. From the time-domain signal obtained by summing the encoder outputs across channels, it can be observed that the impacts are more pronounced after the encoder transformation, and the impact frequency is close to the fault frequency of 124 Hz. Moreover, the fault frequency can be directly identified from the frequency-domain representation of the summed encoder outputs. The signals processed by the encoder thus exhibit an effect similar to envelope demodulation. For comparison, the envelope spectrum of the original signal is shown in Figure 12, from which it can be observed that the identified fault characteristic frequencies are consistent with those revealed by the envelope spectrum.

Similarly, the time-domain and frequency-domain waveforms of the sum of encoder outputs across all channels, trained on the HUST dataset, were plotted and compared with the original signals, as shown in Figure 13. For reference, the envelope spectrum of the original signal is shown in Figure 14. The summed encoder outputs in the time domain exhibit noticeable impact components; however, since the impacts in the original signals are less pronounced, only the more prominent portions are highlighted. The rotational speed of the bearing corresponding to the original signals is 75 Hz, and the calculated inner-race fault frequency is approximately 407 Hz. This fault frequency can also be identified in the frequency-domain representation of the summed encoder outputs, and it is consistent with the characteristic frequency obtained from envelope spectrum analysis. The signals processed by the encoder continue to exhibit an effect similar to envelope demodulation.

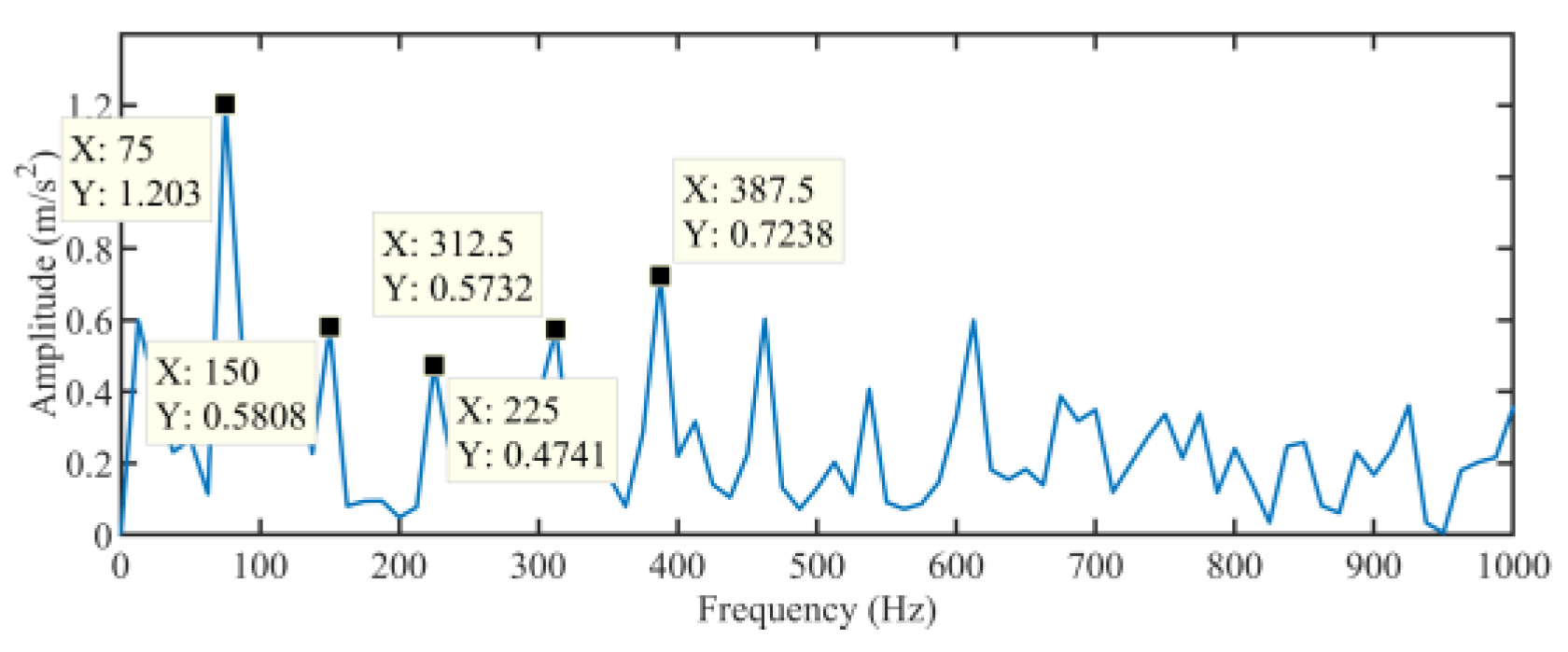

The rolling bearing fault signals collected in our laboratory were input into the encoder trained on the CWRU dataset. The time-domain and frequency-domain waveforms of the original signals and the sum of encoder outputs across all channels are shown in Figure 15. In addition, the envelope spectrum of the original signal is presented in Figure 16. These signals were not used in neural network training, and both the bearing model and operating conditions differ from those in the training data. Nevertheless, the summed encoder outputs in the time domain still exhibit pronounced impact components. The rotational speed corresponding to the original input signals is 25 Hz, and the calculated outer-race fault frequency is approximately 76 Hz. This fault frequency can also be identified in the frequency-domain representation of the summed encoder outputs and is consistent with the characteristic frequency obtained from envelope spectrum analysis, indicating that the encoder has effectively learned to extract fault-related features.
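The characteristic frequencies quoted for the laboratory bearing can be approximated from the standard kinematic formulas for outer-race and inner-race defects. The bearing geometry below (8 rolling elements, 7.938 mm ball diameter, 33.5 mm pitch diameter, zero contact angle) is an assumption based on typical values for a 6204-type bearing and is not given in the paper; the function name is likewise hypothetical.

```python
import math


def bearing_fault_frequencies(shaft_hz, n_balls, ball_d, pitch_d, contact_deg=0.0):
    """Return (outer-race, inner-race) defect frequencies in Hz.

    Standard kinematic formulas, assuming pure rolling and a fixed outer ring.
    """
    ratio = ball_d / pitch_d * math.cos(math.radians(contact_deg))
    outer = n_balls / 2 * shaft_hz * (1 - ratio)
    inner = n_balls / 2 * shaft_hz * (1 + ratio)
    return outer, inner


# 1500 RPM corresponds to a shaft frequency of 25 Hz.
outer_hz, inner_hz = bearing_fault_frequencies(
    shaft_hz=1500 / 60,
    n_balls=8,       # assumed geometry, not stated in the paper
    ball_d=7.938,    # mm, assumed
    pitch_d=33.5,    # mm, assumed
)
```

Under these assumed dimensions the outer-race and inner-race frequencies come out at roughly 76 Hz and 124 Hz, which agrees with the values reported for the laboratory signals at 25 Hz.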

These observations suggest that through training, the autoencoder can learn an effective adaptive functional decomposition for signal preprocessing, allowing impact components in the signals to be effectively identified. Moreover, this transformation exhibits a degree of transferability, which may enable future applications in signal analysis: by feeding complex signals into the encoder and analyzing its outputs, characteristic patterns corresponding to different fault types can be discerned.

5. Conclusions

This paper proposes a novel adversarial autoencoder framework for rolling bearing fault diagnosis, addressing the limitations of existing methods that either rely on complex signal preprocessing or lack interpretability. Inspired by the formal analogy between signal decomposition algorithms and autoencoders, as well as the equivalence between CNN convolution operations and inner-product-based waveform matching, a convolutional autoencoder was designed to perform adaptive signal decomposition within the network. In this view, the autoencoder can be regarded as a data-driven signal decomposition approach, where each encoder channel corresponds to a nonlinear signal component, and the decoder ensures reconstruction fidelity. A channel attention mechanism adaptively reweights these components, and a classifier serves as a discriminator to enforce class separability in the latent space.

The autoencoder is trained via an adversarial-inspired strategy, achieving both low reconstruction error and highly discriminative latent features. Ablation studies confirm the effectiveness of the key components, including attention mechanisms and the adversarial training strategy. Analysis of encoder outputs demonstrates that the learned transformation emphasizes impact components in the signals, producing effects similar to envelope demodulation, and exhibits a degree of transferability across different datasets and operating conditions. Quantitative evaluation demonstrates the model’s robust performance, achieving an accuracy of 99.64 ± 0.29%, recall of 99.62 ± 0.29%, and an F1-score of 99.63 ± 0.29%, confirming the reliability and effectiveness of the proposed framework for rolling bearing fault diagnosis.

Overall, the proposed method provides an end-to-end, interpretable, and adaptive framework for intelligent fault diagnosis that eliminates the need for separate preprocessing steps while achieving high classification accuracy. At the same time, certain limitations remain. For example, the model may overfit when applied to simpler datasets with relatively low complexity; its robustness under extreme noise or highly variable operating conditions has not been fully evaluated, and the convolutional architecture may be less sensitive to non-local signal patterns. Future work will focus on addressing these limitations by enhancing the model’s robustness to noise, extending the framework to extract and enhance additional types of signal components, and incorporating model transfer strategies to facilitate knowledge transfer across different machines or operating environments, ultimately improving the model’s generalization to more complex and previously unseen operating conditions.