Integrating Artificial Intelligence into Mechatronics: A Comprehensive Study of Its Influence on System Performance, Autonomy, and Manufacturing Efficiency

Abstract

1. Introduction

2. Conceptual Background

2.1. Mechatronics Systems: Architecture and Components

2.2. Evolution of AI in Engineering Systems

2.3. Intersection of AI and Mechatronics

2.4. Materials and Mechanism-Level Perspectives in AI-Enabled Mechatronic Systems

3. Artificial Intelligence Techniques Relevant to Mechatronics

3.1. Machine Learning

3.2. Deep Learning

3.3. Reinforcement Learning

4. AI for Enhancing Mechatronic System Performance

4.1. Intelligent Control Systems (AI-PID, Adaptive Control)

4.2. Optimization of Motion and Positioning Accuracy

4.3. Energy Efficiency and Resource Optimization

4.4. Real-Time Embedded AI in Mechatronics

5. AI-Driven Autonomy in Mechatronic Systems

5.1. Autonomous Decision-Making and Planning

5.2. Robotics Navigation and Path Planning

5.3. Machine Vision and Perceptual Intelligence

Machine Vision Algorithm

5.4. Human-Machine Interaction and Collaborative Systems

6. AI-Enabled Manufacturing Efficiency

6.1. Smart Factories and Industry 4.0 Integration

6.2. Intelligent Process Monitoring and Control

6.3. Predictive Maintenance and Fault Diagnosis

6.4. Production Flow Optimization and Scheduling

7. Case Studies and Industrial Applications

7.1. Robotics

7.2. Automotive

7.3. Aerospace Manufacturing and Unmanned Aerial Systems

7.4. Healthcare Mechatronics

7.5. Industrial Automation and Advanced Manufacturing

7.6. Cross-Case Synthesis and Conceptual Framework for AI-Enabled Mechatronic Systems

7.6.1. Common Architectural Patterns Across Application Domains

7.6.2. Role of AI Across Control, Optimization, and Material-Level Mechanisms

7.6.3. Cross-Domain Challenges and Maturity Levels

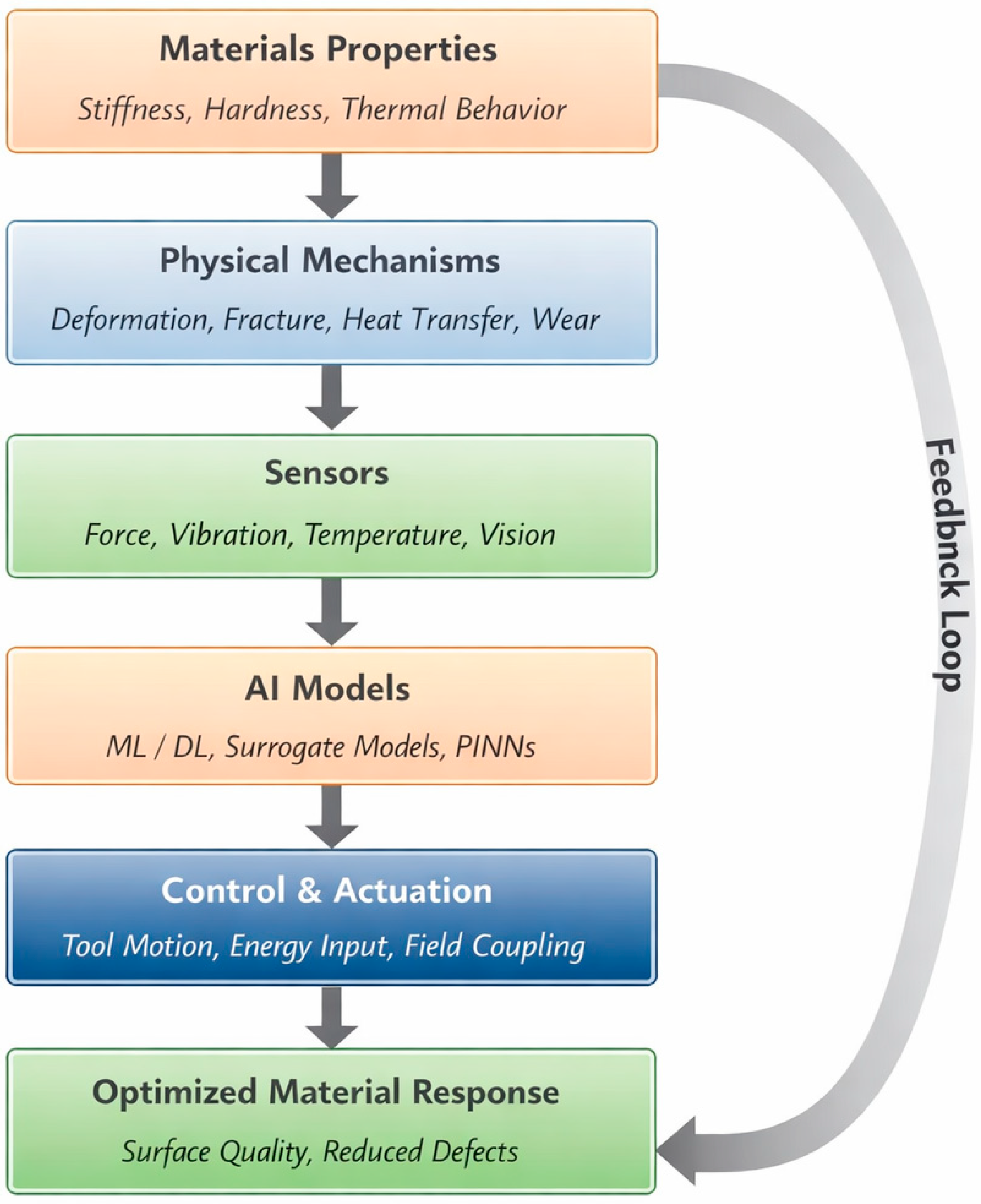

7.6.4. Unified Conceptual Framework for AI-Enabled Mechatronics

- Material and physical system properties (e.g., stiffness, thermal behavior, surface integrity);

- Mechanism-level processes (e.g., deformation, fracture, heat transfer, wear);

- Multi-modal sensing (force, vibration, temperature, vision);

- AI-based inference and optimization models (ML, DL, RL, surrogate models, physics-informed networks);

- Control and actuation strategies (motion control, energy-field coupling, adaptive regulation);

- Feedback and continuous learning enabled by embedded intelligence and digital twins.
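The six layers listed above can be read as a single closed loop. The Python sketch below shows one way they connect, assuming a toy single-tool machining process. Every coefficient, the linear wear-to-vibration relation, the 35 °C temperature target, the tool-change rule, and all names (`PhysicalState`, `mechanism_step`, `sense`, `OnlineLinearSurrogate`, `regulate_feed`, `run`) are hypothetical. A running least-squares fit stands in for the ML, DL, RL, or physics-informed models the framework names, a saturated integral controller stands in for the control layer, and continuous learning is reduced to sparse ground-truth labels. No digital twin is modeled.

```python
"""Toy closed loop through the six layers of the AI-enabled mechatronics framework.

Every model, coefficient, and name here is hypothetical and chosen only for illustration.
"""
import random
from dataclasses import dataclass
from typing import Dict, Optional, Tuple


@dataclass
class PhysicalState:
    """Layer 1: material and physical system properties (illustrative units)."""
    stiffness: float = 10.0    # structural stiffness, arbitrary units
    temperature: float = 20.0  # process-zone temperature, deg C
    wear: float = 0.0          # tool wear, mm


def mechanism_step(state: PhysicalState, feed: float) -> None:
    """Layer 2: toy mechanism model in which heat input and wear both scale with feed."""
    state.wear += 0.001 * feed
    state.temperature += 0.5 * feed - 0.1 * (state.temperature - 20.0)


def sense(state: PhysicalState, rng: random.Random) -> Dict[str, float]:
    """Layer 3: noisy multi-modal sensing (a vision channel is omitted for brevity)."""
    return {
        "force": state.stiffness * (1.0 + state.wear) + rng.gauss(0.0, 0.01),
        "vibration": 0.1 + 2.0 * state.wear + rng.gauss(0.0, 0.005),
        "temperature": state.temperature + rng.gauss(0.0, 0.1),
    }


class OnlineLinearSurrogate:
    """Layer 4: surrogate y = a*x + b, refit by ordinary least squares from running sums."""

    def __init__(self) -> None:
        self.n = 0
        self.sx = self.sy = self.sxx = self.sxy = 0.0

    def update(self, x: float, y: float) -> None:
        self.n += 1
        self.sx += x
        self.sy += y
        self.sxx += x * x
        self.sxy += x * y

    def coefficients(self) -> Optional[Tuple[float, float]]:
        """Return (slope, intercept), or None while the fit is still undetermined."""
        denom = self.n * self.sxx - self.sx ** 2
        if self.n < 2 or abs(denom) < 1e-12:
            return None
        slope = (self.n * self.sxy - self.sx * self.sy) / denom
        return slope, (self.sy - slope * self.sx) / self.n

    def predict(self, x: float) -> Optional[float]:
        coef = self.coefficients()
        return None if coef is None else coef[0] * x + coef[1]


def regulate_feed(feed: float, measured_temp: float, target_temp: float = 35.0,
                  gain: float = 0.05, lo: float = 0.5, hi: float = 4.0) -> float:
    """Layer 5: saturated integral regulation of feed toward a temperature target."""
    return min(hi, max(lo, feed + gain * (target_temp - measured_temp)))


def run(steps: int = 300, label_every: int = 10, wear_limit: float = 0.25, seed: int = 0):
    """Layer 6: closed loop in which sparse ground-truth labels keep the surrogate learning."""
    rng = random.Random(seed)
    state, surrogate = PhysicalState(), OnlineLinearSurrogate()
    feed, tool_changes = 1.0, 0
    for k in range(steps):
        reading = sense(state, rng)
        feed = regulate_feed(feed, reading["temperature"])
        predicted_wear = surrogate.predict(reading["vibration"])
        if predicted_wear is not None and predicted_wear > wear_limit:
            state.wear = 0.0  # actuation decision: a hypothetical tool change
            tool_changes += 1
        elif k % label_every == 0:
            # An occasional offline wear measurement becomes a new training label.
            surrogate.update(reading["vibration"], state.wear)
        mechanism_step(state, feed)
    return state, surrogate, tool_changes


if __name__ == "__main__":
    final_state, model, changes = run()
    print(model.coefficients(), round(final_state.temperature, 2), changes)
```

Calling `run()` returns the final physical state, the fitted surrogate, and the number of tool changes. In this toy setting the surrogate recovers a slope near 0.5, because the assumed sensor model is vibration = 0.1 + 2 × wear. Labeling only every tenth step imitates scarce offline wear measurements. This illustrates why the framework couples sensing with continuous learning rather than relying on a model fitted once.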

7.6.5. Implications for Future Research and Industrial Deployment

8. Challenges and Limitations in AI-Mechatronics Integration

8.1. Research Gap: Data Availability and Quality

8.2. Research Gap: Real-Time Constraints and Deterministic Operation

8.3. Research Gap: Safety, Reliability, and Robustness

8.4. Research Gap: System Interoperability and Integration

8.5. Research Gap: Ethical, Economic, and Workforce Implications

9. Future Research Directions

9.1. Edge AI and TinyML for Real-Time Control

9.2. AI-Powered Digital Twins

9.3. Fully Autonomous Mechatronic Cells

9.4. Explainable and Trustworthy AI

9.5. Self-Adapting and Lifelong Learning Systems

10. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| IoT | Internet of Things |
| DTs | Digital Twins |
| ML | Machine Learning |
| HRC | Human–Robot Collaboration |
| MV | Machine Vision |
| AI | Artificial Intelligence |
| DL | Deep Learning |
| RL | Reinforcement Learning |

References

- Soori, M.; Dastres, R.; Arezoo, B.; Jough, F.K.G. Intelligent robotic systems in Industry 4.0: A review. J. Adv. Manuf. Sci. Technol. 2024, 4, 2024007. [Google Scholar] [CrossRef]

- Yakubu Rabiu, A.A.; Messager, N.D.; Musa, M.I.; Bayero, N.S.; Surajo, A. Artificial Intelligence in Smart Sensors and Actuators for Mechatronic Applications. Simulation 2025, 3, 20. [Google Scholar] [CrossRef]

- Ryalat, M.; Franco, E.; Elmoaqet, H.; Almtireen, N.; Al-Refai, G. The integration of advanced mechatronic systems into industry 4.0 for smart manufacturing. Sustainability 2024, 16, 8504. [Google Scholar] [CrossRef]

- Nüßgen, A.; Lerch, A.; Degen, R.; Irmer, M.; Fries, M.; Richter, F.; Boström, C.; Ruschitzka, M. Reinforcement Learning in Mechatronic Systems: A Case Study on DC Motor Control. Circuits Syst. 2025, 16, 1–24. [Google Scholar] [CrossRef]

- Windmann, A.; Wittenberg, P.; Schieseck, M.; Niggemann, O. Artificial intelligence in Industry 4.0: A review of integration challenges for industrial systems. In 2024 IEEE 22nd International Conference on Industrial Informatics (INDIN); IEEE: New York, NY, USA, 2024; pp. 1–8. [Google Scholar]

- Chatti, S.; Laperrière, L.; Reinhart, G.; Tolio, T. CIRP Encyclopedia of Production Engineering; Springer: Berlin/Heidelberg, Germany, 2019; pp. 1181–1186. [Google Scholar]

- Bishop, R.H. Mechatronics: An Introduction; CRC Press: Boca Raton, FL, USA, 2017. [Google Scholar]

- Afolalu, S.A.; Ikumapayi, O.M.; Abdulkareem, A.; Soetan, S.B.; Emetere, M.E.; Ongbali, S.O. Enviable roles of manufacturing processes in sustainable fourth industrial revolution—A case study of mechatronics. Mater. Today Proc. 2021, 44, 2895–2901. [Google Scholar] [CrossRef]

- Stankovski, S.; Ostojić, G.; Zhang, X.; Baranovski, I.; Tegeltija, S.; Horvat, S. Mechatronics, identification tehnology, industry 4.0 and education. In 2019 18th International Symposium Infoteh-Jahorina (Infoteh); IEEE: New York, NY, USA, 2019; pp. 1–4. [Google Scholar]

- Geevarghese, K.P.; Gangadharan, K.V. Design and implementation of remote mechatronics laboratory for e-learning using labview and smartphone and cross-platform communication toolkit (scct). Procedia Technol. 2014, 14, 108–115. [Google Scholar][Green Version]

- Liagkou, V.; Stylios, C.; Pappa, L.; Petunin, A. Challenges and opportunities in industry 4.0 for mechatronics, artificial intelligence and cybernetics. Electronics 2021, 10, 2001. [Google Scholar] [CrossRef]

- Liao, S.H. Expert system methodologies and applications a decade review from 1995 to 2004. Expert Syst. Appl. 2005, 28, 93–103. [Google Scholar] [CrossRef]

- Clancey, W.J. The epistemology of a rule-based expert system a framework for explanation. Artif. Intell. 1983, 20, 215–251. [Google Scholar] [CrossRef]

- Nilsson, N.J. The Quest for Artificial Intelligence; Cambridge University Press: Cambridge, UK, 2009. [Google Scholar]

- LeCun, Y.; Bengio, Y.; Hinton, G. Deep learning. Nature 2015, 521, 436–444. [Google Scholar] [CrossRef] [PubMed]

- Jordan, M.I.; Mitchell, T.M. Machine learning: Trends, perspectives, and prospects. Science 2015, 349, 255–260. [Google Scholar] [CrossRef]

- Domingos, P. A few useful things to know about machine learning. Commun. ACM 2012, 55, 78–87. [Google Scholar] [CrossRef]

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Polosukhin, I. Attention is all you need. In Advances in Neural Information Processing Systems 30 (NIPS 2017); Curran Associates, Inc.: Red Hook, NY, USA, 2017. [Google Scholar]

- Halevy, A.; Norvig, P.; Pereira, F. The unreasonable effectiveness of data. IEEE Intell. Syst. 2009, 24, 8–12. [Google Scholar] [CrossRef]

- Abadi, M.; Barham, P.; Chen, J.; Chen, Z.; Davis, A.; Dean, J.; Devin, M.; Ghemawat, S.; Irving, G.; Isard, M.; et al. TensorFlow: A system for Large-Scale machine learning. In 12th USENIX Symposium on Operating Systems Design and Implementation (OSDI 16); USENIX: Berkeley, CA, USA, 2016; pp. 265–283. [Google Scholar]

- Paszke, A.; Gross, S.; Massa, F.; Lerer, A.; Bradbury, J.; Chanan, G.; Chintala, S. Pytorch: An imperative style, high-performance deep learning library. In Advances in Neural Information Processing Systems 32 (NeurIPS 2019); Curran Associates, Inc.: Red Hook, NY, USA, 2019. [Google Scholar]

- Zhang, E.Y.; Cheok, A.D.; Pan, Z.; Cai, J.; Yan, Y. From turing to transformers: A comprehensive review and tutorial on the evolution and applications of generative transformer models. Sci 2023, 5, 46. [Google Scholar] [CrossRef]

- Garcez, A.D.A.; Lamb, L.C. Neurosymbolic ai: The 3rd wave. Artif. Intell. Rev. 2023, 56, 12387–12406. [Google Scholar] [CrossRef]

- Hashemi, A.; Dowlatshahi, M.B. A review on the feasibility of artificial intelligence in mechatronics. In Artificial Intelligence in Mechatronics and Civil Engineering: Bridging the Gap; Springer: Singapore, 2023; pp. 79–92. [Google Scholar] [CrossRef]

- Zaitceva, I.; Andrievsky, B. Methods of intelligent control in mechatronics and robotic engineering: A survey. Electronics 2022, 11, 2443. [Google Scholar] [CrossRef]

- Aheleroff, S.; Mostashiri, N.; Xu, X.; Zhong, R.Y. Mass personalisation as a service in industry 4.0: A resilient response case study. Adv. Eng. Inform. 2021, 50, 101438. [Google Scholar] [CrossRef]

- Guo, Y. AI in modular mechatronic system design: A review. Appl. Sci. 2023, 13, 158. [Google Scholar] [CrossRef]

- Katrantzis, E.; Moulianitis, V.C.; Miatliuk, K. Conceptual Design Evaluation of Mechatronic Systems. In Emerging Trends in Mechatronics; IntechOpen: London, UK, 2020. [Google Scholar]

- Jiménez López, E.; Cuenca Jiménez, F.; Luna Sandoval, G.; Ochoa Estrella, F.J.; Maciel Monteón, M.A.; Muñoz, F.; Limón Leyva, P.A. Technical considerations for the conformation of specific competences in mechatronic engineers in the context of industry 4.0 and 5.0. Processes 2022, 10, 1445. [Google Scholar] [CrossRef]

- Maksuti, R. Applications of smart materials in mechatronics technology. JAS-SUT J. Appl. Sci.-SUT 2019, 5, 9–13. [Google Scholar]

- Maksuti, R. Properties of Smart Materials for Mechatronic Applications. J. Appl. Sci.-SUT 2021, 7, 118–125. [Google Scholar]

- Whig, P.; Madavarapu, J.B.; Modhugu, V.R.; Kasula, B.Y.; Bhatia, A.B. Advancing mechatronics through artificial intelligence. In Computational Intelligent Techniques in Mechatronics; John Wiley & Sons: Hoboken, NJ, USA, 2024; pp. 381–399. [Google Scholar]

- Gehlot, V.; Rana, P.S. AI in Mechatronics. In Computational Intelligent Techniques in Mechatronics; John Wiley & Sons: Hoboken, NJ, USA, 2024; pp. 1–39. [Google Scholar]

- Yuan, S.; Cheung, C.F.; Fang, F.; Huang, H.; Wang, C. Multi-physical field coupling polishing of diamond for atomic-scale damage-free surface. Int. J. Extrem. Manuf. 2026, 8, 032004. [Google Scholar] [CrossRef]

- Zhao, L.; Wang, X.; Jiang, M.; Zhao, C.; Jiang, N.; Nishimura, K.; Yi, J.; Fang, S. Optimization of Diamond polishing process for sub-nanometer roughness using Ar/O2/SF6 plasma. Materials 2025, 18, 2615. [Google Scholar] [CrossRef]

- Pan, M.; Zhang, G.; Zhang, W.; Zhang, J.; Xu, Z.; Du, J. A Review of Intelligentization System and Architecture for Ultra-Precision Machining Process. Processes 2024, 12, 2754. [Google Scholar] [CrossRef]

- Li, J.P.; Polovina, N.; Konur, S. A Review of AI-Driven Engineering Modelling and Optimization: Methodologies, Applications and Future Directions. Algorithms 2026, 19, 93. [Google Scholar] [CrossRef]

- Peivaste, I.; Belouettar, S.; Mercuri, F.; Fantuzzi, N.; Dehghani, H.; Izadi, R.; Ibrahim, H.; Lengiewicz, J.; Belouettar-Mathis, M.; Bendine, K.; et al. Artificial intelligence in materials science and engineering: Current landscape, key challenges, and future trajectories. Compos. Struct. 2025, 372, 119419. [Google Scholar] [CrossRef]

- Jan, Z.; Ahamed, F.; Mayer, W.; Patel, N.; Grossmann, G.; Stumptner, M.; Kuusk, A. Artificial intelligence for industry 4.0: Systematic review of applications, challenges, and opportunities. Expert Syst. Appl. 2023, 216, 119456. [Google Scholar] [CrossRef]

- Wamba-Taguimdje, S.L.; Fosso Wamba, S.; Kala Kamdjoug, J.R.; Tchatchouang Wanko, C.E. Influence of artificial intelligence (AI) on firm performance: The business value of AI-based transformation projects. Bus. Process Manag. J. 2020, 26, 1893–1924. [Google Scholar] [CrossRef]

- Alzubi, J.; Nayyar, A.; Kumar, A. Machine learning from theory to algorithms: An overview. J. Phys. Conf. Ser. 2018, 1142, 012012. [Google Scholar] [CrossRef]

- Mukhamediev, R.I.; Symagulov, A.; Kuchin, Y.; Yakunin, K.; Yelis, M. From classical machine learning to deep neural networks: A simplified scientometric review. Appl. Sci. 2021, 11, 5541. [Google Scholar] [CrossRef]

- Nassehi, A.; Zhong, R.Y.; Li, X.; Epureanu, B.I. Review of machine learning technologies and artificial intelligence in modern manufacturing systems. In Design and Operation of Production Networks for Mass Personalization in the Era of Cloud Technology; Elsevier: Amsterdam, The Netherlands, 2022; pp. 317–348. [Google Scholar]

- Tercan, H.; Meisen, T. Machine learning and deep learning based predictive quality in manufacturing: A systematic review. J. Intell. Manuf. 2022, 33, 1879–1905. [Google Scholar] [CrossRef]

- McCulloch, W.S.; Pitts, W. A logical calculus of the ideas immanent in nervous activity. Bull. Math. Biophys. 1943, 5, 115–133. [Google Scholar] [CrossRef]

- Rosenblatt, F. The perceptron: A probabilistic model for information storage and organization in the brain. Psychol. Rev. 1958, 65, 386. [Google Scholar] [CrossRef]

- Cover, T.; Hart, P. Nearest neighbor pattern classification. IEEE Trans. Inf. Theory 1967, 13, 21–27. [Google Scholar] [CrossRef]

- Toosi, A.; Bottino, A.G.; Saboury, B.; Siegel, E.; Rahmim, A. A brief history of AI: How to prevent another winter (a critical review). PET Clin. 2021, 16, 449–469. [Google Scholar] [CrossRef]

- Grigoras, C.C.; Zichil, V.; Ciubotariu, V.A.; Cosa, S.M. Machine learning, mechatronics, and stretch forming: A history of innovation in manufacturing engineering. Machines 2024, 12, 180. [Google Scholar] [CrossRef]

- Patil, D.; Rane, N.L.; Desai, P.; Rane, J. Machine learning and deep learning: Methods, techniques, applications, challenges, and future research opportunities. In Trustworthy Artificial Intelligence in Industry and Society; Deep Science Publishing: San Francisco, CA, USA, 2024; pp. 28–81. [Google Scholar]

- Taye, M.M. Understanding of machine learning with deep learning: Architectures, workflow, applications and future directions. Computers 2023, 12, 91. [Google Scholar] [CrossRef]

- Sarker, I.H. Machine learning: Algorithms, real-world applications and research directions. SN Comput. Sci. 2021, 2, 160. [Google Scholar] [CrossRef]

- Swapna, M.; Sharma, Y.K.; Prasadh, B.M.G. CNN Architectures: Alex Net, Le Net, VGG, Google Net, Res Net. Int. J. Recent Technol. Eng. 2020, 8, 953–960. [Google Scholar] [CrossRef]

- LeCun, Y.; Bottou, L.; Bengio, Y.; Haffner, P. Gradient-based learning applied to document recognition. Proc. IEEE 2002, 86, 2278–2324. [Google Scholar] [CrossRef]

- Hinton, G.E. Deep belief networks. Scholarpedia 2009, 4, 5947. [Google Scholar] [CrossRef]

- Schmidhuber, J. Deep learning in neural networks: An overview. Neural Netw. 2015, 61, 85–117. [Google Scholar] [CrossRef] [PubMed]

- Krizhevsky, A.; Sutskever, I.; Hinton, G.E. Imagenet classification with deep convolutional neural networks. In Advances in Neural Information Processing Systems 25 (NIPS 2012); Curran Associates, Inc.: Red Hook, NY, USA, 2012. [Google Scholar]

- Goodfellow, I.; Pouget-Abadie, J.; Mirza, M.; Xu, B.; Warde-Farley, D.; Ozair, S.; Bengio, Y. Generative adversarial networks. Commun. ACM 2020, 63, 139–144. [Google Scholar] [CrossRef]

- Ahmad, J.; Farman, H.; Jan, Z. Deep learning methods and applications. In Deep Learning: Convergence to Big Data Analytics; Springer: Singapore, 2018; pp. 31–42. [Google Scholar]

- Fayaz, S.A.; Jahangeer Sidiq, S.; Zaman, M.; Butt, M.A. Machine learning: An introduction to reinforcement learning. In Machine Learning and Data Science: Fundamentals and Applications; John Wiley & Sons: Hoboken, NJ, USA, 2022; pp. 1–22. [Google Scholar]

- Lee, J.H.; Shin, J.; Realff, M.J. Machine learning: Overview of the recent progresses and implications for the process systems engineering field. Comput. Chem. Eng. 2018, 114, 111–121. [Google Scholar] [CrossRef]

- Busoniu, L.; Babuska, R.; De Schutter, B.; Ernst, D. Reinforcement Learning and Dynamic Programming Using Function Approximators; CRC Press: Boca Raton, FL, USA, 2017. [Google Scholar] [CrossRef]

- Kurrek, P.; Jocas, M.; Zoghlami, F.; Stoelen, M.; Salehi, V. Ai motion control—A generic approach to develop control policies for robotic manipulation tasks. In Proceedings of the Design Society: International Conference on Engineering Design; Cambridge University Press: Cambridge, UK, 2019; Volume 1, pp. 3561–3570. [Google Scholar]

- Kim, J.B.; Lim, H.K.; Kim, C.M.; Kim, M.S.; Hong, Y.G.; Han, Y.H. Imitation reinforcement learning-based remote rotary inverted pendulum control in openflow network. IEEE Access 2019, 7, 36682–36690. [Google Scholar] [CrossRef]

- Menghal, P.M.; Laxmi, A.J. Real time simulation: A novel approach in engineering education. In 2011 3rd International Conference on Electronics Computer Technology; IEEE: New York, NY, USA, 2011; Volume 1, pp. 215–219. [Google Scholar]

- Werbos, P.J. Reinforcement learning and approximate dynamic programming (RLADP) foundations, common misconceptions, and the challenges ahead. In Reinforcement Learning and Approximate Dynamic Programming for Feedback Control; John Wiley & Son: Hoboken, NJ, USA, 2012; pp. 1–30. [Google Scholar]

- Lewis, F.L.; Vrabie, D. Reinforcement learning and adaptive dynamic programming for feedback control. IEEE Circuits Syst. Mag. 2009, 9, 32–50. [Google Scholar] [CrossRef]

- Razzaq, K.; Shah, M. Machine learning and deep learning paradigms: From techniques to practical applications and research frontiers. Computers 2025, 14, 93. [Google Scholar] [CrossRef]

- Mumuni, Q.A.; Olayiwola-Mumuni, A.I.; Yussouff, A.A. The advent of the proportional integral derivative controller: A review. J. Adv. Eng. Technol. 2023, 2, 5–22. [Google Scholar]

- Noshadi, A.; Shi, J.; Lee, W.S.; Shi, P.; Kalam, A. Optimal PID-type fuzzy logic controller for a multi-input multi-output active magnetic bearing system. Neural Comput. Appl. 2016, 27, 2031–2046. [Google Scholar] [CrossRef]

- Kiss, A.N.; Marx, B.; Mourot, G.; Schutz, G.; Ragot, J. State estimation of two-time scale multiple models. Application to wastewater treatment plant. Control Eng. Pract. 2011, 19, 1354–1362. [Google Scholar] [CrossRef]

- Yang, Y.; Gao, Y.; Wu, J.; Ding, Z.; Zhao, S. Improving PID controller performance in nonlinear oscillatory automatic generation control systems using a multi-objective marine predator algorithm with enhanced diversity. J. Bionic Eng. 2024, 21, 2497–2514. [Google Scholar] [CrossRef]

- Charkoutsis, S.; Kara-Mohamed, M. A Particle Swarm Optimization tuned nonlinear PID controller with improved performance and robustness for First Order Plus Time Delay systems. Results Control Optim. 2023, 12, 100289. [Google Scholar] [CrossRef]

- Mumuni, Q.A.; Olaniyan, O.M.; Ipinnimo, O.; Akinyemi, L.A.; Olayiwola-Mumuni, A.I. Intelligent PID Gain Selection via Supervised Machine Learning Approach for Decoupled MIMO Control Systems. J. Future Artif. Intell. Technol. 2025, 2, 163–181. [Google Scholar] [CrossRef]

- Joseph, S.B.; Dada, E.G.; Abidemi, A.; Oyewola, D.O.; Khammas, B.M. Metaheuristic algorithms for PID controller parameters tuning: Review, approaches and open problems. Heliyon 2022, 8, e09399. [Google Scholar] [CrossRef]

- Fradkov, A.L. Scientific School of Vladimir Yakubovich in the 20th century. IFAC-PapersOnLine 2017, 50, 5231–5237. [Google Scholar] [CrossRef]

- Gusev, S.V.; Bondarko, V.A. Notes on Yakubovich’s method of recursive objective inequalities and its application in adaptive control and robotics. IFAC-PapersOnLine 2020, 53, 1379–1384. [Google Scholar] [CrossRef]

- Lipkovich, M. Yakubovich’s method of recursive objective inequalities in machine learning. IFAC-PapersOnLine 2022, 55, 138–143. [Google Scholar] [CrossRef]

- Fernández Mareco, E.R.; Pinto-Roa, D. Application of Artificial Intelligence in Control Systems: Trends, Challenges, and Opportunities. AI 2025, 6, 326. [Google Scholar] [CrossRef]

- Li, Y.; Chen, H.; Tsung, F. A review of AI-assisted motion control. In International Conference on Internet of Things and Machine Learning (IoTML 2023); SPIE: Bellingham, WA, USA, 2023; Volume 12937, pp. 212–222. [Google Scholar]

- Trinh, M.; Königs, M.; Gründel, L.; Beier, M.; Petrovic, O.; Brecher, C. Accuracy Optimization of Robotic Machining Using Grey-Box Modeling and Simulation Planning Assistance. J. Manuf. Mater. Process. 2025, 9, 126. [Google Scholar] [CrossRef]

- Tian, J.; Li, K.; Xue, W. An adaptive ensemble predictive strategy for multiple scale electrical energy usages forecasting. Sustain. Cities Soc. 2021, 66, 102654. [Google Scholar] [CrossRef]

- Sepehr, M.; Eghtedaei, R.; Toolabimoghadam, A.; Noorollahi, Y.; Mohammadi, M. Modeling the electrical energy consumption profile for residential buildings in Iran. Sustain. Cities Soc. 2018, 41, 481–489. [Google Scholar] [CrossRef]

- Batlle, E.A.O.; Palacio, J.C.E.; Lora, E.E.S.; Reyes, A.M.M.; Moreno, M.M.; Morejón, M.B. A methodology to estimate baseline energy use and quantify savings in electrical energy consumption in higher education institution buildings: Case study, Federal University of Itajubá (UNIFEI). J. Clean. Prod. 2020, 244, 118551. [Google Scholar] [CrossRef]

- He, F.; Zhou, J.; Feng, Z.K.; Liu, G.; Yang, Y. A hybrid short-term load forecasting model based on variational mode decomposition and long short-term memory networks considering relevant factors with Bayesian optimization algorithm. Appl. Energy 2019, 237, 103–116. [Google Scholar] [CrossRef]

- Ahmad, T.; Chen, H.; Guo, Y.; Wang, J. A comprehensive overview on the data driven and large scale based approaches for forecasting of building energy demand: A review. Energy Build. 2018, 165, 301–320. [Google Scholar] [CrossRef]

- Amasyali, K.; El-Gohary, N.M. A review of data-driven building energy consumption prediction studies. Renew. Sustain. Energy Rev. 2018, 81, 1192–1205. [Google Scholar] [CrossRef]

- Wei, Y.; Zhang, X.; Shi, Y.; Xia, L.; Pan, S.; Wu, J.; Han, M.; Zhao, X. A review of data-driven approaches for prediction and classification of building energy consumption. Renew. Sustain. Energy Rev. 2018, 82, 1027–1047. [Google Scholar] [CrossRef]

- Wang, Y.; Yang, D.; Zhang, X.; Chen, Z. Probability based remaining capacity estimation using data-driven and neural network model. J. Power Sources 2016, 315, 199–208. [Google Scholar] [CrossRef]

- Koschwitz, D.; Frisch, J.; Van Treeck, C. Data-driven heating and cooling load predictions for non-residential buildings based on support vector machine regression and NARX Recurrent Neural Network: A comparative study on district scale. Energy 2018, 165, 134–142. [Google Scholar] [CrossRef]

- Smarra, F.; Jain, A.; De Rubeis, T.; Ambrosini, D.; D’Innocenzo, A.; Mangharam, R. Data-driven model predictive control using random forests for building energy optimization and climate control. Appl. Energy 2018, 226, 1252–1272. [Google Scholar] [CrossRef]

- Ahmed, S.; Hasan, M.Z.; MacLennan, M.; Dorin, F.; Ahmed, M.W.; Hasan, M.M.; Hasan, S.M.; Islam, M.T.; Khan, J.A.M. Measuring the efficiency of health systems in Asia: A data envelopment analysis. BMJ Open 2019, 9, e022155. [Google Scholar] [CrossRef]

- Zhu, N.; Zhu, C.; Emrouznejad, A. A combined machine learning algorithms and DEA method for measuring and predicting the efficiency of Chinese manufacturing listed companies. J. Manag. Sci. Eng. 2020, 6, 435–448. [Google Scholar] [CrossRef]

- Zhong, K.; Wang, Y.; Pei, J.; Tang, S.; Han, Z. Super-efficiency SBM-DEA and neural network for performance evaluation. Inf. Process. Manag. 2021, 58, 102728. [Google Scholar] [CrossRef]

- Bataineh, A.A.; Kaur, D.; Jalali, S.M.J. Multi-layer perceptron training optimization using nature-inspired computing. IEEE Access 2022, 10, 36963–36977. [Google Scholar] [CrossRef]

- Salehi, V.; Veitch, B.; Musharraf, M. Measuring and improving adaptive capacity in resilient systems by means of an integrated DEA–machine learning approach. Appl. Ergon. 2020, 82, 102975. [Google Scholar] [CrossRef]

- Mirmozaffari, M.; Yazdani, M.; Boskabadi, A.; Ahady Dolatsara, H.; Kabirifar, K.; Amiri Golilarz, N. A novel machine learning approach combined with optimization models for eco-efficiency evaluation. Appl. Sci. 2020, 10, 5210. [Google Scholar] [CrossRef]

- Tayal, A.; Solanki, A.; Singh, S.P. Integrated framework for identifying sustainable manufacturing layouts based on big data, machine learning, meta-heuristic and data envelopment analysis. Sustain. Cities Soc. 2020, 62, 102383. [Google Scholar] [CrossRef]

- Li, Z.; Crook, J.; Andreeva, G. Dynamic prediction of financial distress using Malmquist DEA. Expert Syst. Appl. 2017, 80, 94–106. [Google Scholar] [CrossRef]

- Hafeez, G.; Alimgeer, K.S.; Khan, I. Electric load forecasting based on deep learning and optimized by heuristic algorithm in smart grid. Appl. Energy 2020, 269, 114915. [Google Scholar] [CrossRef]

- Wen, L.; Zhou, K.; Yang, S.; Li, X. Optimal load dispatch of community microgrid with deep learning-based solar power and load forecasting. Energy 2019, 171, 1053–1065. [Google Scholar] [CrossRef]

- Merei, G.; Berger, C.; Sauer, D.U. Optimization of an off-grid hybrid PV–wind–diesel system with different battery technologies using genetic algorithm. Sol. Energy 2013, 97, 460–473. [Google Scholar] [CrossRef]

- Król, J.; Ocłoń, P. Economic analysis of heat and electricity production in combined heat and power plant equipped with steam and water boilers and natural gas engines. Energy Convers. Manag. 2018, 176, 11–29. [Google Scholar] [CrossRef]

- Xu, X.; Wang, Y.; Zuo, L.; Chen, S. Multimaterial topology optimization of thermoelectric generators. In ASME 2019 International Design Engineering Technical Conferences and Computers and Information in Engineering Conference; American Society of Mechanical Engineers: New York, NY, USA, 2019; Volume 59186, p. V02AT03A064. [Google Scholar] [CrossRef]

- Han, Y.; Liu, S.; Geng, Z.; Guo, H.; Qi, Y. Energy analysis and resources optimization of complex chemical processes: Evidence based on novel DEA cross-model. Energy 2021, 218, 119508. [Google Scholar] [CrossRef]

- Goyal, S.B.; Rajawat, A.S.; Solanki, R.K.; Zaaba, M.A.M.; Long, Z.A. Integrating AI with cyber security for smart industry 4.0 application. In 2023 International Conference on Inventive Computation Technologies (ICICT); IEEE: New York, NY, USA, 2023; pp. 1223–1232. [Google Scholar]

- Bargavi, S.M.; Muhammed, H.; Harish, P.S.; Dhanush, D. Edge Computing and AI for Real-time Analytics in Smart Devices. Asian J. Basic Sci. Res. 2025, 7, 1–9. [Google Scholar] [CrossRef]

- Khalifa, I.A.; Keti, F. The Role of Image Processing and Deep Learning in IoT-Based Systems: A Comprehensive Review. Eur. J. Appl. Sci. Eng. Technol. 2025, 3, 165–179. [Google Scholar] [CrossRef]

- Huang, X.; Wang, H.; Shiyin, Q.; Su-Kit, T. Embedded Artificial Intelligence: A Comprehensive Literature Review. Electronics 2025, 14, 3468. [Google Scholar] [CrossRef]

- Hu, J.; Wang, Y.; Cheng, S.; Xu, J.; Wang, N.; Fu, B.; Ning, Z.; Li, J.; Chen, H.; Feng, C.; et al. A survey of decision-making and planning methods for self-driving vehicles. Front. Neurorobot. 2025, 19, 1451923. [Google Scholar] [CrossRef]

- Zhao, C.; Li, L.; Pei, X.; Li, Z.; Wang, F.-Y.; Wu, X. A comparative study of state-of-the-art driving strategies for autonomous vehicles. Accid. Anal. Prev. 2021, 150, 105937. [Google Scholar] [CrossRef]

- Veres, S.M.; Molnar, L.; Lincoln, N.K.; Morice, C.P. Autonomous vehicle control systems a review of decision making. Proc. Inst. Mech. Eng. Part I J. Syst. Control Eng. 2011, 225, 155–195. [Google Scholar] [CrossRef]

- Ashraf, M. Enhancing Perception and Decision-Making in Autonomous Systems through Vision-based Technologies: Focus on Robotics, Drones, and Self-Driving Cars. Int. J. 2024, 13, 794–797. [Google Scholar] [CrossRef]

- Peralta, F.; Arzamendia, M.; Gregor, D.; Reina, D.G.; Toral, S. A comparison of local path planning techniques of autonomous surface vehicles for monitoring applications: The ypacarai lake case-study. Sensors 2020, 20, 1488. [Google Scholar] [CrossRef]

- Meng, Q.; Song, H.; Li, G.; Zhang, Y.A.; Zhang, X. A block object detection method based on feature fusion networks for autonomous vehicles. Complexity 2019, 2019, 4042624. [Google Scholar] [CrossRef]

- Ni, J.; Wu, L.; Shi, P.; Yang, S.X. A dynamic bioinspired neural network based real-time path planning method for autonomous underwater vehicles. Comput. Intell. Neurosci. 2017, 2017, 9269742. [Google Scholar] [CrossRef] [PubMed]

- Venu, S.; Gurusamy, M. A Comprehensive Review of Path Planning Algorithms for Autonomous Navigation. Results Eng. 2025, 28, 107750. [Google Scholar] [CrossRef]

- Yuan, C.; Wei, Y.; Shen, J.; Chen, L.; He, Y.; Weng, S.; Wang, T. Research on path planning based on new fusion algorithm for autonomous vehicle. Int. J. Adv. Robot. Syst. 2020, 17, 1729881420911235. [Google Scholar] [CrossRef]

- Zhang, J.; Shi, Z.; Yang, X.; Zhao, J. Trajectory planning and tracking control for autonomous parallel parking of a non-holonomic vehicle. Meas. Control 2020, 53, 1800–1816. [Google Scholar] [CrossRef]

- Min, H.; Xiong, X.; Wang, P.; Yu, Y. Autonomous driving path planning algorithm based on improved A* algorithm in unstructured environment. Proc. Inst. Mech. Eng. Part D J. Automob. Eng. 2021, 235, 513–526. [Google Scholar] [CrossRef]

- Mohammadzadeh, A.; Taghavifar, H. A novel adaptive control approach for path tracking control of autonomous vehicles subject to uncertain dynamics. Proc. Inst. Mech. Eng. Part D J. Automob. Eng. 2020, 234, 2115–2126. [Google Scholar] [CrossRef]

- Huang, C.; Chen, Z.; Liu, Y.; Xu, B.; Ling, Z.; Li, Z.; Yu, W.; Shen, Y.; Wang, H.; Li, J.; et al. Autonomous Driving Comfort Prediction: Improve Comfort through Global Path Planning. Res. Sq. 2024. [Google Scholar] [CrossRef]

- Yan, F.; Liu, Y.S.; Xiao, J.Z. Path planning in complex 3D environments using a probabilistic roadmap method. Int. J. Autom. Comput. 2013, 10, 525–533.

- Aghababa, M.P. 3D path planning for underwater vehicles using five evolutionary optimization algorithms avoiding static and energetic obstacles. Appl. Ocean Res. 2012, 38, 48–62.

- Choset, H.; Lynch, K.M.; Hutchinson, S.; Kantor, G.A.; Burgard, W. Principles of Robot Motion: Theory, Algorithms, and Implementations; MIT Press: Cambridge, MA, USA, 2005.

- Ibraheem, I.K.; Ajeil, F.H. Multi-objective path planning of an autonomous mobile robot in static and dynamic environments using a hybrid PSO-MFB optimisation algorithm. arXiv 2018, arXiv:1805.00224.

- AL-Nayar, M.M.; Dagher, K.E.; Hadi, E.A. A comparative study for wheeled mobile robot path planning based on modified intelligent algorithms. Iraqi J. Mech. Mater. Eng. 2019, 19, 60–74.

- Hassani, I.; Maalej, I.; Rekik, C. Robot path planning with avoiding obstacles in known environment using free segments and turning points algorithm. Math. Probl. Eng. 2018, 2018, 2163278.

- Gul, F.; Rahiman, W.; Nazli Alhady, S.S. A comprehensive study for robot navigation techniques. Cogent Eng. 2019, 6, 1632046.

- Yang, S.; Behzadian, K.; Coleman, C.; Holloway, T.G.; Campos, L.C. Application of AI-based techniques for anomaly management in wastewater treatment plants: A review. J. Environ. Manag. 2025, 392, 126886.

- Bayoudh, K.; Knani, R.; Hamdaoui, F.; Mtibaa, A. A survey on deep multimodal learning for computer vision: Advances, trends, applications, and datasets. Vis. Comput. 2022, 38, 2939–2970.

- Robinson, N.; Tidd, B.; Campbell, D.; Kulić, D.; Corke, P. Robotic vision for human-robot interaction and collaboration: A survey and systematic review. ACM Trans. Hum.-Robot Interact. 2023, 12, 1–66.

- Anthony, E.J.; Kusnadi, R.A. Computer vision for supporting visually impaired people: A systematic review. Eng. Math. Comput. Sci. J. (EMACS) 2021, 3, 65–71.

- Voulodimos, A.; Doulamis, N.; Doulamis, A.; Protopapadakis, E. Deep learning for computer vision: A brief review. Comput. Intell. Neurosci. 2018, 2018, 7068349.

- Manakitsa, N.; Maraslidis, G.S.; Moysis, L.; Fragulis, G.F. A review of machine learning and deep learning for object detection, semantic segmentation, and human action recognition in machine and robotic vision. Technologies 2024, 12, 15.

- Zhang, L.; Jia, X.; Chang, Q.; Liu, X.; Zhang, Z.; Cao, Y.; Liu, J.; Yang, Y. The development of machine vision and its applications in different industries: A review. Mech. Eng. Adv. 2024, 2, 1746.

- Wu, M.; Li, C.; Yao, Z. Deep active learning for computer vision tasks: Methodologies, applications, and challenges. Appl. Sci. 2022, 12, 8103.

- Katti, H.; Peelen, M.V.; Arun, S.P. Machine vision benefits from human contextual expectations. Sci. Rep. 2019, 9, 2112.

- Gorodokin, V.; Zhankaziev, S.; Shepeleva, E.; Magdin, K.; Evtyukov, S. Optimization of adaptive traffic light control modes based on machine vision. Transp. Res. Procedia 2021, 57, 241–249.

- Panagakis, Y.; Kossaifi, J.; Chrysos, G.G.; Oldfield, J.; Nicolaou, M.A.; Anandkumar, A.; Zafeiriou, S. Tensor methods in computer vision and deep learning. Proc. IEEE 2021, 109, 863–890.

- Zhang, D.; Dongru, H.; Kang, L.; Zhang, W. The generative adversarial networks and its application in machine vision. Enterp. Inf. Syst. 2022, 16, 326–346.

- Gustafsson, F.K.; Danelljan, M.; Schön, T.B. Evaluating scalable Bayesian deep learning methods for robust computer vision. In 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW); IEEE: New York, NY, USA, 2020; pp. 318–319.

- Whatmough, P.N.; Zhou, C.; Hansen, P.; Venkataramanaiah, S.K.; Seo, J.S.; Mattina, M. FixyNN: Efficient hardware for mobile computer vision via transfer learning. arXiv 2019, arXiv:1902.11128.

- Shu, Y.; Xiong, C.; Fan, S. Interactive design of intelligent machine vision based on human–computer interaction mode. Microprocess. Microsyst. 2020, 75, 103059.

- Wu, W.; Li, Q. Machine vision inspection of electrical connectors based on improved Yolo v3. IEEE Access 2020, 8, 166184–166196.

- Wu, P.; He, T.; Zhu, H.; Wang, Y.; Li, Q.; Wang, Z.; Fu, X.; Wang, F.; Wang, P.; Shan, C.; et al. Next-generation machine vision systems incorporating two-dimensional materials: Progress and perspectives. InfoMat 2022, 4, e12275.

- Reggiannini, M.; Moroni, D. The use of saliency in underwater computer vision: A review. Remote Sens. 2020, 13, 22.

- Akhtar, N.; Mian, A.; Kardan, N.; Shah, M. Advances in adversarial attacks and defenses in computer vision: A survey. IEEE Access 2021, 9, 155161–155196.

- Goel, A.; Tung, C.; Lu, Y.H.; Thiruvathukal, G.K. A survey of methods for low-power deep learning and computer vision. In 2020 IEEE 6th World Forum on Internet of Things (WF-IoT); IEEE: New York, NY, USA, 2020; pp. 1–6.

- O’Mahony, N.; Campbell, S.; Carvalho, A.; Harapanahalli, S.; Hernandez, G.V.; Krpalkova, L.; Riordan, D.; Walsh, J. Deep learning vs. traditional computer vision. In Advances in Computer Vision; Science and Information Conference; Springer International Publishing: Cham, Switzerland, 2019; pp. 128–144.

- Yang, Z.; Nahrstedt, K.; Guo, H.; Zhou, Q. DeepRT: A soft real time scheduler for computer vision applications on the edge. In 2021 IEEE/ACM Symposium on Edge Computing (SEC); IEEE: New York, NY, USA, 2021; pp. 271–284.

- Baygin, M.; Karakose, M.; Sarimaden, A.; Akin, E. An image processing based object counting approach for machine vision application. arXiv 2018, arXiv:1802.05911.

- Talebi, H.; Milanfar, P. Learning to resize images for computer vision tasks. In 2021 IEEE/CVF International Conference on Computer Vision (ICCV); IEEE: New York, NY, USA, 2021; pp. 497–506.

- El-Komy, A.; Shahin, O.R.; Abd El-Aziz, R.M.; Taloba, A.I. Integration of computer vision and natural language processing in multimedia robotics application. Inf. Sci. 2022, 7, 1–12.

- Li, J.; Mengu, D.; Yardimci, N.T.; Luo, Y.; Li, X.; Veli, M.; Rivenson, Y.; Jarrahi, M.; Ozcan, A. Spectrally encoded single-pixel machine vision using diffractive networks. Sci. Adv. 2021, 7, eabd7690.

- Hu, Y.; Yang, S.; Yang, W.; Duan, L.Y.; Liu, J. Towards coding for human and machine vision: A scalable image coding approach. arXiv 2020, arXiv:2001.02915.

- Roggi, G.; Niccolai, A.; Grimaccia, F.; Lovera, M. A computer vision line-tracking algorithm for automatic UAV photovoltaic plants monitoring applications. Energies 2020, 13, 838.

- Paul, N.; Chung, C. Application of HDR algorithms to solve direct sunlight problems when autonomous vehicles using machine vision systems are driving into sun. Comput. Ind. 2018, 98, 192–196.

- Rebecq, H.; Ranftl, R.; Koltun, V.; Scaramuzza, D. Events-to-video: Bringing modern computer vision to event cameras. In 2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: New York, NY, USA, 2019; pp. 3857–3866.

- Mennel, L.; Symonowicz, J.; Wachter, S.; Polyushkin, D.K.; Molina-Mendoza, A.J.; Mueller, T. Ultrafast machine vision with 2D material neural network image sensors. Nature 2020, 579, 62–66.

- Gamanayake, C.; Jayasinghe, L.; Ng, B.K.K.; Yuen, C. Cluster pruning: An efficient filter pruning method for edge AI vision applications. IEEE J. Sel. Top. Signal Process. 2020, 14, 802–816.

- Ding, J.; Zhang, Z.; Yu, X.; Zhao, X.; Yan, Z. A novel moving object detection algorithm based on robust image feature threshold segmentation with improved optical flow estimation. Appl. Sci. 2023, 13, 4854.

- Fang, S.; Zhang, B.; Hu, J. Improved Mask R-CNN multi-target detection and segmentation for autonomous driving in complex scenes. Sensors 2023, 23, 3853.

- Moru, D.K.; Borro, D. A machine vision algorithm for quality control inspection of gears. Int. J. Adv. Manuf. Technol. 2020, 106, 105–123.

- Li, J.; Telychko, M.; Yin, J.; Zhu, Y.; Li, G.; Song, S.; Yang, H.; Li, J.; Wu, J.; Lu, J.; et al. Machine vision automated chiral molecule detection and classification in molecular imaging. J. Am. Chem. Soc. 2021, 143, 10177–10188.

- Yin, K.; Wang, L.; Zhang, J. ST-CSNN: A novel method for vehicle counting. Mach. Vis. Appl. 2021, 32, 108.

- Dan, D.; Ge, L.; Yan, X. Identification of moving loads based on the information fusion of weigh-in-motion system and multiple camera machine vision. Measurement 2019, 144, 155–166.

- Nardo, M.; Forino, D.; Murino, T. The evolution of man–machine interaction: The role of human in Industry 4.0 paradigm. Prod. Manuf. Res. 2020, 8, 20–34.

- Solanes, J.E.; Gracia, L.; Valls Miro, J. Advances in Human–Machine Interaction, Artificial Intelligence, and Robotics. Electronics 2024, 13, 3856.

- Sandini, G.; Sciutti, A.; Morasso, P. Artificial cognition vs. artificial intelligence for next-generation autonomous robotic agents. Front. Comput. Neurosci. 2024, 18, 1349408.

- Ferreira, J.F.; Portugal, D.; Andrada, M.E.; Machado, P.; Rocha, R.P.; Peixoto, P. Sensing and artificial perception for robots in precision forestry: A survey. Robotics 2023, 12, 139.

- Kumar, A. From mass customization to mass personalization: A strategic transformation. Int. J. Flex. Manuf. Syst. 2007, 19, 533–547.

- Lu, Y.; Zheng, H.; Chand, S.; Xia, W.; Liu, Z.; Xu, X.; Wang, L.; Qin, Z.; Bao, J. Outlook on human-centric manufacturing towards Industry 5.0. J. Manuf. Syst. 2022, 62, 612–627.

- Stączek, P.; Pizoń, J.; Danilczuk, W.; Gola, A. A digital twin approach for the improvement of an autonomous mobile robots (AMR’s) operating environment—A case study. Sensors 2021, 21, 7830.

- Habib, L.; Pacaux-Lemoine, M.P.; Millot, P. A method for designing levels of automation based on a human-machine cooperation model. IFAC-PapersOnLine 2017, 50, 1372–1377.

- Zieba, S.; Polet, P.; Vanderhaegen, F. Using adjustable autonomy and human–machine cooperation to make a human–machine system resilient—Application to a ground robotic system. Inf. Sci. 2011, 181, 379–397.

- Semeraro, F.; Griffiths, A.; Cangelosi, A. Human–robot collaboration and machine learning: A systematic review of recent research. Robot. Comput.-Integr. Manuf. 2023, 79, 102432.

- Wang, T.; Li, J.; Kong, Z.; Liu, X.; Snoussi, H.; Lv, H. Digital twin improved via visual question answering for vision-language interactive mode in human–machine collaboration. J. Manuf. Syst. 2021, 58, 261–269.

- Simmler, M.; Frischknecht, R. A taxonomy of human–machine collaboration: Capturing automation and technical autonomy. AI Soc. 2021, 36, 239–250.

- Xiong, W.; Fan, H.; Ma, L.; Wang, C. Challenges of human–machine collaboration in risky decision-making. Front. Eng. Manag. 2022, 9, 89–103.

- Trujillo, A.C.; Gregory, I.M.; Ackerman, K.A. Evolving relationship between humans and machines. IFAC-PapersOnLine 2019, 51, 366–371.

- Simões, A.C.; Pinto, A.; Santos, J.; Pinheiro, S.; Romero, D. Designing human-robot collaboration (HRC) workspaces in industrial settings: A systematic literature review. J. Manuf. Syst. 2022, 62, 28–43.

- Pizoń, J.; Gola, A.; Świć, A. The role and meaning of the digital twin technology in the process of implementing intelligent collaborative robots. In Advances in Manufacturing III; International Scientific-Technical Conference Manufacturing; Springer International Publishing: Cham, Switzerland, 2022; pp. 39–49.

- Santosuosso, A. About coevolution of humans and intelligent machines: Preliminary notes. BioLaw J.—Riv. BioDiritto 2021, 445–454.

- Frazzon, E.M.; Agostino, Í.R.S.; Broda, E.; Freitag, M. Manufacturing networks in the era of digital production and operations: A socio-cyber-physical perspective. Annu. Rev. Control 2020, 49, 288–294.

- Demir, K.A.; Döven, G.; Sezen, B. Industry 5.0 and human-robot co-working. Procedia Comput. Sci. 2019, 158, 688–695.

- Dhanda, M.; Rogers, B.A.; Hall, S.; Dekoninck, E.; Dhokia, V. Reviewing human-robot collaboration in manufacturing: Opportunities and challenges in the context of industry 5.0. Robot. Comput.-Integr. Manuf. 2025, 93, 102937.

- Lasi, H.; Fettke, P.; Kemper, H.G.; Feld, T.; Hoffmann, M. Industry 4.0. Bus. Inf. Syst. Eng. 2014, 6, 239–242.

- Lee, J.; Bagheri, B.; Kao, H.A. A cyber-physical systems architecture for industry 4.0-based manufacturing systems. Manuf. Lett. 2015, 3, 18–23.

- Zheng, P.; Wang, H.; Sang, Z.; Zhong, R.Y.; Liu, Y.; Liu, C.; Mubarok, K.; Yu, S.; Xu, X. Smart manufacturing systems for Industry 4.0: Conceptual framework, scenarios, and future perspectives. Front. Mech. Eng. 2018, 13, 137–150.

- Monostori, L.; Kádár, B.; Bauernhansl, T.; Kondoh, S.; Kumara, S.; Reinhart, G.; Sauer, O.; Schuh, G.; Sihn, W.; Ueda, K. Cyber-physical systems in manufacturing. CIRP Ann. 2016, 65, 621–641.

- Zhong, R.Y.; Xu, X.; Klotz, E.; Newman, S.T. Intelligent manufacturing in the context of industry 4.0: A review. Engineering 2017, 3, 616–630.

- Lu, Y. Industry 4.0: A survey on technologies, applications and open research issues. J. Ind. Inf. Integr. 2017, 6, 1–10.

- Grieves, M.; Vickers, J. Digital twin: Mitigating unpredictable, undesirable emergent behavior in complex systems. In Transdisciplinary Perspectives on Complex Systems: New Findings and Approaches; Springer International Publishing: Cham, Switzerland, 2016; pp. 85–113.

- Tao, F.; Qi, Q.; Liu, A.; Kusiak, A. Data-driven smart manufacturing. J. Manuf. Syst. 2018, 48, 157–169.

- Koren, Y.; Gu, X.; Guo, W. Reconfigurable manufacturing systems: Principles, design, and future trends. Front. Mech. Eng. 2018, 13, 121–136.

- Lu, B.; Zhou, X. Quality and reliability oriented maintenance for multistage manufacturing systems subject to condition monitoring. J. Manuf. Syst. 2019, 52, 76–85.

- Frank, A.G.; Dalenogare, L.S.; Ayala, N.F. Industry 4.0 technologies: Implementation patterns in manufacturing companies. Int. J. Prod. Econ. 2019, 210, 15–26.

- Xu, X.; Lu, Y.; Vogel-Heuser, B.; Wang, L. Industry 4.0 and Industry 5.0 Inception, conception and perception. J. Manuf. Syst. 2021, 61, 530–535.

- Adeleke, A.K. Intelligent monitoring system for real-time optimization of ultra-precision manufacturing processes. Eng. Sci. Technol. J. 2024, 5, 803–810.

- Jiang, Y.; Yin, S.; Dong, J.; Kaynak, O. A review on soft sensors for monitoring, control, and optimization of industrial processes. IEEE Sens. J. 2020, 21, 12868–12881.

- Ucar, A.; Karakose, M.; Kırımça, N. Artificial intelligence for predictive maintenance applications: Key components, trustworthiness, and future trends. Appl. Sci. 2024, 14, 898.

- Zonta, T.; Da Costa, C.A.; da Rosa Righi, R.; de Lima, M.J.; Da Trindade, E.S.; Li, G.P. Predictive maintenance in the Industry 4.0: A systematic literature review. Comput. Ind. Eng. 2020, 150, 106889.

- Camacho, E.F.; Bordons, C. Constrained model predictive control. In Model Predictive Control; Springer: London, UK, 2007; pp. 177–216.

- Qin, S.J.; Badgwell, T.A. A survey of industrial model predictive control technology. Control Eng. Pract. 2003, 11, 733–764.

- Hewing, L.; Wabersich, K.P.; Menner, M.; Zeilinger, M.N. Learning-based model predictive control: Toward safe learning in control. Annu. Rev. Control Robot. Auton. Syst. 2020, 3, 269–296.

- Wang, J.; Ma, Y.; Zhang, L.; Gao, R.X.; Wu, D. Deep learning for smart manufacturing: Methods and applications. J. Manuf. Syst. 2018, 48, 144–156.

- Parmar, T. Artificial Intelligence in High-tech Manufacturing: A Review of Applications in Quality Control and Process Optimization. Int. J. Innov. Res. Eng. Multidiscip. Phys. Sci. 2022, 10, 1–8.

- Cheng, Y. Predictive Analysis on Maintenance of Mining Dump Truck. Appl. Mech. Mater. 2013, 340, 848–851.

- Serin, G.; Sener, B.; Ozbayoglu, A.M.; Unver, H.O. Review of tool condition monitoring in machining and opportunities for deep learning. Int. J. Adv. Manuf. Technol. 2020, 109, 953–974.

- Jaber, A.A. Design of an Intelligent Embedded System for Condition Monitoring of an Industrial Robot; Springer International Publishing: Cham, Switzerland, 2017.

- Wong, S.Y.; Chuah, J.H.; Yap, H.J. Technical data-driven tool condition monitoring challenges for CNC milling: A review. Int. J. Adv. Manuf. Technol. 2020, 107, 4837–4857.

- Lin, R.H.; Xi, X.N.; Wang, P.N.; Wu, B.D.; Tian, S.M. Review on hydrogen fuel cell condition monitoring and prediction methods. Int. J. Hydrogen Energy 2019, 44, 5488–5498.

- Surucu, O.; Gadsden, S.A.; Yawney, J. Condition monitoring using machine learning: A review of theory, applications, and recent advances. Expert Syst. Appl. 2023, 221, 119738.

- Jardine, A.K.; Lin, D.; Banjevic, D. A review on machinery diagnostics and prognostics implementing condition-based maintenance. Mech. Syst. Signal Process. 2006, 20, 1483–1510.

- Lei, Y.; Li, N.; Guo, L.; Li, N.; Yan, T.; Lin, J. Machinery health prognostics: A systematic review from data acquisition to RUL prediction. Mech. Syst. Signal Process. 2018, 104, 799–834.

- Zhao, R.; Yan, R.; Chen, Z.; Mao, K.; Wang, P.; Gao, R.X. Deep learning and its applications to machine health monitoring. Mech. Syst. Signal Process. 2019, 115, 213–237.

- Si, X.S.; Wang, W.; Hu, C.H.; Zhou, D.H. Remaining useful life estimation—A review on the statistical data driven approaches. Eur. J. Oper. Res. 2011, 213, 1–14.

- Lee, J.; Kao, H.A.; Yang, S. Service innovation and smart analytics for industry 4.0 and big data environment. Procedia CIRP 2014, 16, 3–8.

- Carvalho, T.P.; Soares, F.A.; Vita, R.; Francisco, R.D.P.; Basto, J.P.; Alcalá, S.G. A systematic literature review of machine learning methods applied to predictive maintenance. Comput. Ind. Eng. 2019, 137, 106024.

- Zhang, W.; Yang, D.; Wang, H. Data-driven methods for predictive maintenance of industrial equipment: A survey. IEEE Syst. J. 2019, 13, 2213–2227.

- Milazzo, M.; Libonati, F. The synergistic role of additive manufacturing and artificial intelligence for the design of new advanced intelligent systems. Adv. Intell. Syst. 2022, 4, 2100278.

- Huang, Z.; Shen, Y.; Li, J.; Fey, M.; Brecher, C. A survey on AI-driven digital twins in industry 4.0: Smart manufacturing and advanced robotics. Sensors 2021, 21, 6340.

- Sundaram, S.; Zeid, A. Artificial intelligence-based smart quality inspection for manufacturing. Micromachines 2023, 14, 570.

- Pookkuttath, S.; Rajesh Elara, M.; Sivanantham, V.; Ramalingam, B. AI-enabled predictive maintenance framework for autonomous mobile cleaning robots. Sensors 2021, 22, 13.

- Gumbs, A.A.; Frigerio, I.; Spolverato, G.; Croner, R.; Illanes, A.; Chouillard, E.; Elyan, E. Artificial intelligence surgery: How do we get to autonomous actions in surgery. Sensors 2021, 21, 5526.

- Murugamani, C.; Sahoo, S.K.; Kshirsagar, P.R.; Prathap, B.R.; Islam, S.; Naveed, Q.N.; Hussain, M.R.; Hung, B.T.; Teressa, D.M. Wireless communication for robotic process automation using machine learning technique. Wirel. Commun. Mob. Comput. 2022, 2022, 4723138.

- Dimitropoulos, N.; Togias, T.; Zacharaki, N.; Michalos, G.; Makris, S. Seamless human–robot collaborative assembly using artificial intelligence and wearable devices. Appl. Sci. 2021, 11, 5699.

- Hashmi, A.W.; Mali, H.S.; Meena, A.; Saxena, K.K.; Puerta, A.P.V.; Prakash, C.; Buddhi, D.; Davim, J.P.; Abdul-Zahra, D.S. Understanding the mechanism of abrasive-based finishing processes using mathematical modeling and numerical simulation. Metals 2022, 12, 1328.

- Simeth, A.; Plapper, P. Artificial intelligence based robotic automation of manual assembly tasks for intelligent manufacturing. In Smart, Sustainable Manufacturing in an Ever-Changing World: Proceedings of International Conference on Competitive Manufacturing (COMA’22); Springer International Publishing: Cham, Switzerland, 2023; pp. 137–148.

- Abioye, S.O.; Oyedele, L.O.; Akanbi, L.; Ajayi, A.; Delgado, J.M.D.; Bilal, M.; Akinade, O.O.; Ahmed, A. Artificial intelligence in the construction industry: A review of present status, opportunities and future challenges. J. Build. Eng. 2021, 44, 103299.

- Benotsmane, R.; Kovács, G.; Dudás, L. Economic, social impacts and operation of smart factories in Industry 4.0 focusing on simulation and artificial intelligence of collaborating robots. Soc. Sci. 2019, 8, 143.

- Bałazy, P.; Gut, P.; Knap, P. Positioning algorithm for AGV autonomous driving platform based on artificial neural networks. Robot. Syst. Appl. 2021, 1, 41–45.

- Chryssolouris, G.; Alexopoulos, K.; Arkouli, Z. Artificial intelligence in manufacturing equipment, automation, and robots. In A Perspective on Artificial Intelligence in Manufacturing; Springer International Publishing: Cham, Switzerland, 2023; pp. 41–78.

- Cho, D.Y.; Kang, M.K. Human gaze-aware attentive object detection for ambient intelligence. Eng. Appl. Artif. Intell. 2021, 106, 104471.

- Lin, S.; Liu, A.; Wang, J.; Kong, X. An intelligence-based hybrid PSO-SA for mobile robot path planning in warehouse. J. Comput. Sci. 2023, 67, 101938.

- Arinez, J.F.; Chang, Q.; Gao, R.X.; Xu, C.; Zhang, J. Artificial intelligence in advanced manufacturing: Current status and future outlook. J. Manuf. Sci. Eng. 2020, 142, 110804.

- Khawar, H.; Soomro, T.R.; Kamal, M.A. Machine learning for internet of things-based smart transportation networks. In Machine Learning for Societal Improvement, Modernization, and Progress; IGI Global Scientific Publishing: Hershey, PA, USA, 2022; pp. 112–134.

- Yuan, T.; da Rocha Neto, W.; Rothenberg, C.E.; Obraczka, K.; Barakat, C.; Turletti, T. Machine learning for next-generation intelligent transportation systems: A survey. Trans. Emerg. Telecommun. Technol. 2022, 33, e4427.

- Sharma, A.; Awasthi, Y.; Kumar, S. The role of blockchain, AI and IoT for smart road traffic management system. In 2020 IEEE India Council International Subsections Conference (INDISCON); IEEE: New York, NY, USA, 2020; pp. 289–296.

- Iyer, L.S. AI enabled applications towards intelligent transportation. Transp. Eng. 2021, 5, 100083.

- Theissler, A.; Pérez-Velázquez, J.; Kettelgerdes, M.; Elger, G. Predictive maintenance enabled by machine learning: Use cases and challenges in the automotive industry. Reliab. Eng. Syst. Saf. 2021, 215, 107864.

- Zantalis, F.; Koulouras, G.; Karabetsos, S.; Kandris, D. A review of machine learning and IoT in smart transportation. Future Internet 2019, 11, 94.

- Olugbade, S.; Ojo, S.; Imoize, A.L.; Isabona, J.; Alaba, M.O. A review of artificial intelligence and machine learning for incident detectors in road transport systems. Math. Comput. Appl. 2022, 27, 77.

- Halim, Z.; Kalsoom, R.; Bashir, S.; Abbas, G. Artificial intelligence techniques for driving safety and vehicle crash prediction. Artif. Intell. Rev. 2016, 46, 351–387.

- Nikitas, A.; Michalakopoulou, K.; Njoya, E.T.; Karampatzakis, D. Artificial intelligence, transport and the smart city: Definitions and dimensions of a new mobility era. Sustainability 2020, 12, 2789.

- Heidari, A.; Jafari Navimipour, N.; Unal, M.; Zhang, G. Machine learning applications in internet-of-drones: Systematic review, recent deployments, and open issues. ACM Comput. Surv. 2023, 55, 1–45.

- De Swarte, T.; Boufous, O.; Escalle, P. Artificial intelligence, ethics and human values: The cases of military drones and companion robots. Artif. Life Robot. 2019, 24, 291–296.

- Toma, C.; Popa, M.; Iancu, B.; Doinea, M.; Pascu, A.; Ioan-Dutescu, F. Edge machine learning for the automated decision and visual computing of the robots, IoT embedded devices or UAV-drones. Electronics 2022, 11, 3507.

- Singh, A.; Raj, K.; Kumar, T.; Verma, S.; Roy, A.M. Deep learning-based cost-effective and responsive robot for autism treatment. Drones 2023, 7, 81.

- Yazid, Y.; Ez-Zazi, I.; Guerrero-González, A.; El Oualkadi, A.; Arioua, M. UAV-enabled mobile edge-computing for IoT based on AI: A comprehensive review. Drones 2021, 5, 148.

- Wu, X.; Li, W.; Hong, D.; Tao, R.; Du, Q. Deep learning for unmanned aerial vehicle-based object detection and tracking: A survey. IEEE Geosci. Remote Sens. Mag. 2021, 10, 91–124.

- Bijjahalli, S.; Sabatini, R.; Gardi, A. Advances in intelligent and autonomous navigation systems for small UAS. Prog. Aerosp. Sci. 2020, 115, 100617.

- Saranya, T.; Deisy, C.; Sridevi, S.; Anbananthen, K.S.M. A comparative study of deep learning and Internet of Things for precision agriculture. Eng. Appl. Artif. Intell. 2023, 122, 106034.

- Alrayes, F.S.; Alotaibi, S.S.; Alissa, K.A.; Maashi, M.; Alhogail, A.; Alotaibi, N.; Mohsen, H.; Motwakel, A. Artificial intelligence-based secure communication and classification for drone-enabled emergency monitoring systems. Drones 2022, 6, 222.

- Sorooshian, S.; Khademi Sharifabad, S.; Parsaee, M.; Afshari, A.R. Toward a modern last-mile delivery: Consequences and obstacles of intelligent technology. Appl. Syst. Innov. 2022, 5, 82.

- Potočnik, J.; Foley, S.; Thomas, E. Current and potential applications of artificial intelligence in medical imaging practice: A narrative review. J. Med. Imaging Radiat. Sci. 2023, 54, 376–385.

- Gupta, R.; Kumar, N.; Bansal, S.; Singh, S.; Sood, N.; Gupta, S. Artificial intelligence-driven digital cytology-based cervical cancer screening: Is the time ripe to adopt this disruptive technology in resource-constrained settings? A literature review. J. Digit. Imaging 2023, 36, 1643–1652.

- Jahn, S.W.; Plass, M.; Moinfar, F. Digital pathology: Advantages, limitations and emerging perspectives. J. Clin. Med. 2020, 9, 3697.

- Aggarwal, R.; Sounderajah, V.; Martin, G.; Ting, D.S.W.; Karthikesalingam, A.; King, D.; Ashrafian, H.; Darzi, A. Diagnostic accuracy of deep learning in medical imaging: A systematic review and meta-analysis. npj Digit. Med. 2021, 4, 65.

- Hansun, S.; Argha, A.; Liaw, S.T.; Celler, B.G.; Marks, G.B. Machine and deep learning for tuberculosis detection on chest X-rays: Systematic literature review. J. Med. Internet Res. 2023, 25, e43154.

- Nam, J.G.; Park, S.; Hwang, E.J.; Lee, J.H.; Jin, K.-N.; Lim, K.Y.; Vu, T.H.; Sohn, J.H.; Hwang, S.; Goo, J.M.; et al. Development and validation of deep learning–based automatic detection algorithm for malignant pulmonary nodules on chest radiographs. Radiology 2019, 290, 218–228.

- Ehteshami Bejnordi, B.; Veta, M.; Johannes van Diest, P.; Van Ginneken, B.; Karssemeijer, N.; Litjens, G.; Van Der Laak, J.A.; CAMELYON16 consortium; Hermsen, M.; Manson, Q.F.; et al. Diagnostic assessment of deep learning algorithms for detection of lymph node metastases in women with breast cancer. JAMA 2017, 318, 2199–2210.

- Gulshan, V.; Peng, L.; Coram, M.; Stumpe, M.C.; Wu, D.; Narayanaswamy, A.; Venugopalan, S.; Widner, K.; Madams, T.; Cuadros, J.; et al. Development and Validation of a Deep Learning Algorithm for Detection of Diabetic Retinopathy in Retinal Fundus Photographs. JAMA 2016, 316, 2402–2410.

- Widner, K.; Virmani, S.; Krause, J.; Nayar, J.; Tiwari, R.; Pedersen, E.R.; Jeji, D.; Hammel, N.; Matias, Y.; Corrado, G.S.; et al. Lessons learned from translating AI from development to deployment in healthcare. Nat. Med. 2023, 29, 1304–1306.

- Durkee, M.S.; Abraham, R.; Clark, M.R.; Giger, M.L. Artificial intelligence and cellular segmentation in tissue microscopy images. Am. J. Pathol. 2021, 191, 1693–1701. [Google Scholar] [CrossRef]

- Hanna, M.G.; Ardon, O.; Reuter, V.E.; Sirintrapun, S.J.; England, C.; Klimstra, D.S.; Hameed, M.R. Integrating digital pathology into clinical practice. Mod. Pathol. 2022, 35, 152–164. [Google Scholar] [CrossRef]

- Gómez-De-Mariscal, E.; García-López-De-Haro, C.; Ouyang, W.; Donati, L.; Lundberg, E.; Unser, M.; Muñoz-Barrutia, A.; Sage, D. DeepImageJ: A user-friendly environment to run deep learning models in ImageJ. Nat. Methods 2021, 18, 1192–1195. [Google Scholar] [CrossRef]

- Kumar, Y.; Koul, A.; Singla, R.; Ijaz, M.F. Artificial intelligence in disease diagnosis: A systematic literature review, synthesizing framework and future research agenda. J. Ambient Intell. Humaniz. Comput. 2023, 14, 8459–8486. [Google Scholar] [CrossRef]

- Serrano, D.R.; Luciano, F.C.; Anaya, B.J.; Ongoren, B.; Kara, A.; Molina, G.; Ramirez, B.I.; Sánchez-Guirales, S.A.; Simon, J.A.; Tomietto, G.; et al. Artificial Intelligence (AI) Applications in Drug Discovery and Drug Delivery: Revolutionizing Personalized Medicine. Pharmaceutics 2024, 16, 1328. [Google Scholar] [CrossRef]

- Liu, X.; Faes, L.; Kale, A.U.; Wagner, S.K.; Fu, D.J.; Bruynseels, A.; Mahendiran, T.; Moraes, G.; Shamdas, M.; Kern, C.; et al. A comparison of deep learning performance against health-care professionals in detecting diseases from medical imaging: A systematic review and meta-analysis. Lancet Digit. Health 2019, 1, e271–e297. [Google Scholar] [CrossRef]

- Wang, L.; Zhang, Y.; Wang, D.; Tong, X.; Liu, T.; Zhang, S.; Huang, J.; Zhang, L.; Chen, L.; Fan, H.; et al. Artificial intelligence for COVID-19: A systematic review. Front. Med. 2021, 8, 704256. [Google Scholar] [CrossRef]

- Ahsan, M.M.; Luna, S.A.; Siddique, Z. Machine-learning-based disease diagnosis: A comprehensive review. Healthcare 2022, 10, 541. [Google Scholar] [CrossRef]

- Wu, S.; Wang, J.; Guo, Q.; Lan, H.; Zhang, J.; Wang, L.; Janne, E.; Luo, X.; Wang, Q.; Song, Y.; et al. Application of artificial intelligence in clinical diagnosis and treatment: An overview of systematic reviews. Intell. Med. 2022, 2, 88–96. [Google Scholar] [CrossRef]

- Kumar, K.; Kumar, P.; Deb, D.; Unguresan, M.L.; Muresan, V. Artificial intelligence and machine learning based intervention in medical infrastructure: A review and future trends. Healthcare 2023, 11, 207. [Google Scholar] [CrossRef]

- Blumenthal, D.; Tavenner, M. The “meaningful use” regulation for electronic health records. N. Engl. J. Med. 2010, 363, 501–504. [Google Scholar] [CrossRef]

- De Francesco, D.; Reiss, J.D.; Roger, J.; Tang, A.S.; Chang, A.L.; Becker, M.; Aghaeepour, N. Data-driven longitudinal characterization of neonatal health and morbidity. Sci. Transl. Med. 2023, 15, eadc9854. [Google Scholar] [CrossRef]

- Tang, H.; Solti, I.; Kirkendall, E.; Zhai, H.; Lingren, T.; Meller, J.; Ni, Y. Leveraging food and drug administration adverse event reports for the automated monitoring of electronic health records in a pediatric hospital. Biomed. Inform. Insights 2017, 9, 1178222617713018. [Google Scholar] [CrossRef][Green Version]

- Jensen, P.B.; Jensen, L.J.; Brunak, S. Mining electronic health records: Towards better research applications and clinical care. Nat. Rev. Genet. 2012, 13, 395–405.

- Tatonetti, N.P.; Ye, P.P.; Daneshjou, R.; Altman, R.B. Data-driven prediction of drug effects and interactions. Sci. Transl. Med. 2012, 4, 125ra31.

- Xiao, C.; Choi, E.; Sun, J. Opportunities and challenges in developing deep learning models using electronic health records data: A systematic review. J. Am. Med. Inform. Assoc. 2018, 25, 1419–1428.

- Mi, X.; Zou, B.; Zou, F.; Hu, J. Permutation-based identification of important biomarkers for complex diseases via machine learning models. Nat. Commun. 2021, 12, 3008.

- Lewis, J.E.; Kemp, M.L. Integration of machine learning and genome-scale metabolic modeling identifies multi-omics biomarkers for radiation resistance. Nat. Commun. 2021, 12, 2700.

- Manak, M.S.; Varsanik, J.S.; Hogan, B.J.; Whitfield, M.J.; Su, W.R.; Joshi, N.; Steinke, N.; Min, A.; Berger, D.; Saphirstein, R.J.; et al. Live-cell phenotypic-biomarker microfluidic assay for the risk stratification of cancer patients via machine learning. Nat. Biomed. Eng. 2018, 2, 761–772.

- Chen, J.; Yuan, Y.; Ziabari, A.K.; Xu, X.; Zhang, H.; Christakopoulos, P.; Advincula, R. AI for Manufacturing and Healthcare: A chemistry and engineering perspective. arXiv 2024, arXiv:2405.01520.

- Kusiak, A. Smart manufacturing. Int. J. Prod. Res. 2018, 56, 508–517.

- Qi, M. Enhancing Industrial Automation Through AI-driven Sensors: A Comprehensive Study on Efficiency, Safety, and Predictive Maintenance. Appl. Comput. Eng. 2024, 80, 188–195.

- Johansen, K.; Rao, S.; Ashourpour, M. The role of automation in complexities of high-mix in low-volume production—A literature review. Procedia CIRP 2021, 104, 1452–1457.

- Li, C. Editorial for special issue on ultra-precision machining of difficult-to-machine materials. Micromachines 2025, 16, 1004.

| Criterion | Machine Learning (ML) | Deep Learning (DL) | Reinforcement Learning (RL) |
|---|---|---|---|
| Learning paradigm | Supervised, unsupervised, semi-supervised, reinforcement learning | Multilayer neural networks with hierarchical feature learning | Trial-and-error learning based on reward maximization |
| Data requirements | Low to moderate; performance depends on data quality and quantity | High; typically requires large annotated datasets | High; requires extensive interaction data |
| Feature engineering | Manual or domain-informed feature extraction | Automatic feature extraction from raw data | State and reward design required |
| Computational cost | Low to moderate | High (training and inference) | High (training and exploration) |
| Interpretability | Relatively high | Low (black-box nature) | Low to moderate |
| Real-time feasibility | High; suitable for real-time systems | Moderate; constrained by computation | Limited; safety and latency concerns |
| Robustness | Moderate; sensitive to data quality | High in perception tasks; data-dependent | Variable; sensitive to environment dynamics |
| Safety suitability | High; widely adopted in safety-critical domains | Moderate; requires careful validation | Low; exploration poses safety risks |
| Typical application domains | Crop yield prediction, fraud detection, smart city management | Medical imaging and cancer screening, vision and pattern recognition | Robotics and autonomous systems |
| Key limitations | Limited scalability; relies on feature design | Data-hungry, computationally expensive, low transparency | Training instability; difficult deployment in real systems |
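The reinforcement-learning column of the comparison table (trial-and-error learning, reward maximization, explicit state and reward design) can be made concrete with a small sketch. This is an illustration under stated assumptions, not a method taken from the article: it assumes a hypothetical one-dimensional positioning axis discretized into ten cells, a target cell, a per-step cost of -1 and a terminal reward of +10, and trains a tabular Q-learning agent with epsilon-greedy exploration. The names `step`, `train_q_table` and `greedy_path`, and all hyperparameter values, are illustrative choices. Because exploration runs only against a simulated `step` function, the exploration safety risk noted in the table does not arise in this toy setting.

```python
import random

# Hypothetical toy task (not from the article): a linear axis discretized into
# N_POSITIONS cells; the agent must drive the carriage to the TARGET cell.
N_POSITIONS = 10
TARGET = 7
ACTIONS = (-1, +1)  # move one cell left or one cell right


def step(state, action):
    """Deterministic simulated environment: returns (next_state, reward, done)."""
    next_state = min(max(state + action, 0), N_POSITIONS - 1)  # end stops clamp motion
    if next_state == TARGET:
        return next_state, 10.0, True   # assumed reward for reaching the set point
    return next_state, -1.0, False      # assumed per-step cost favors short paths


def train_q_table(episodes=2000, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Tabular Q-learning with epsilon-greedy exploration (trial and error)."""
    rng = random.Random(seed)
    q = [[0.0 for _ in ACTIONS] for _ in range(N_POSITIONS)]
    start_states = [s for s in range(N_POSITIONS) if s != TARGET]
    for _ in range(episodes):
        state = rng.choice(start_states)
        for _ in range(100):  # cap the episode length
            if rng.random() < epsilon:
                a = rng.randrange(len(ACTIONS))  # explore: random action
            else:
                a = max(range(len(ACTIONS)), key=lambda i: q[state][i])  # exploit
            next_state, reward, done = step(state, ACTIONS[a])
            # Bootstrapped target; a terminal transition has no future value.
            future = 0.0 if done else gamma * max(q[next_state])
            q[state][a] += alpha * (reward + future - q[state][a])
            state = next_state
            if done:
                break
    return q


def greedy_path(q, start, max_steps=50):
    """Follow the learned greedy policy from `start` until TARGET is reached."""
    path = [start]
    state = start
    while state != TARGET and len(path) <= max_steps:
        a = max(range(len(ACTIONS)), key=lambda i: q[state][i])
        state, _, _ = step(state, ACTIONS[a])
        path.append(state)
    return path


if __name__ == "__main__":
    q_table = train_q_table()
    print(greedy_path(q_table, 0))
```

Once training has converged, the greedy path from cell 0 should advance one cell per step until it reaches the target. The reward design is the main lever here: the -1 step cost is what makes shorter paths score higher, which is what the table's "state and reward design required" entry means in practice.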

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Salawu, G.; Glen, B. Integrating Artificial Intelligence into Mechatronics: A Comprehensive Study of Its Influence on System Performance, Autonomy, and Manufacturing Efficiency. Technologies 2026, 14, 143. https://doi.org/10.3390/technologies14030143

Chicago/Turabian Style: Salawu, Ganiyat, and Bright Glen. 2026. "Integrating Artificial Intelligence into Mechatronics: A Comprehensive Study of Its Influence on System Performance, Autonomy, and Manufacturing Efficiency." Technologies 14, no. 3: 143. https://doi.org/10.3390/technologies14030143