Vision-Based Autonomous Landing for the UAV: A Review

Abstract

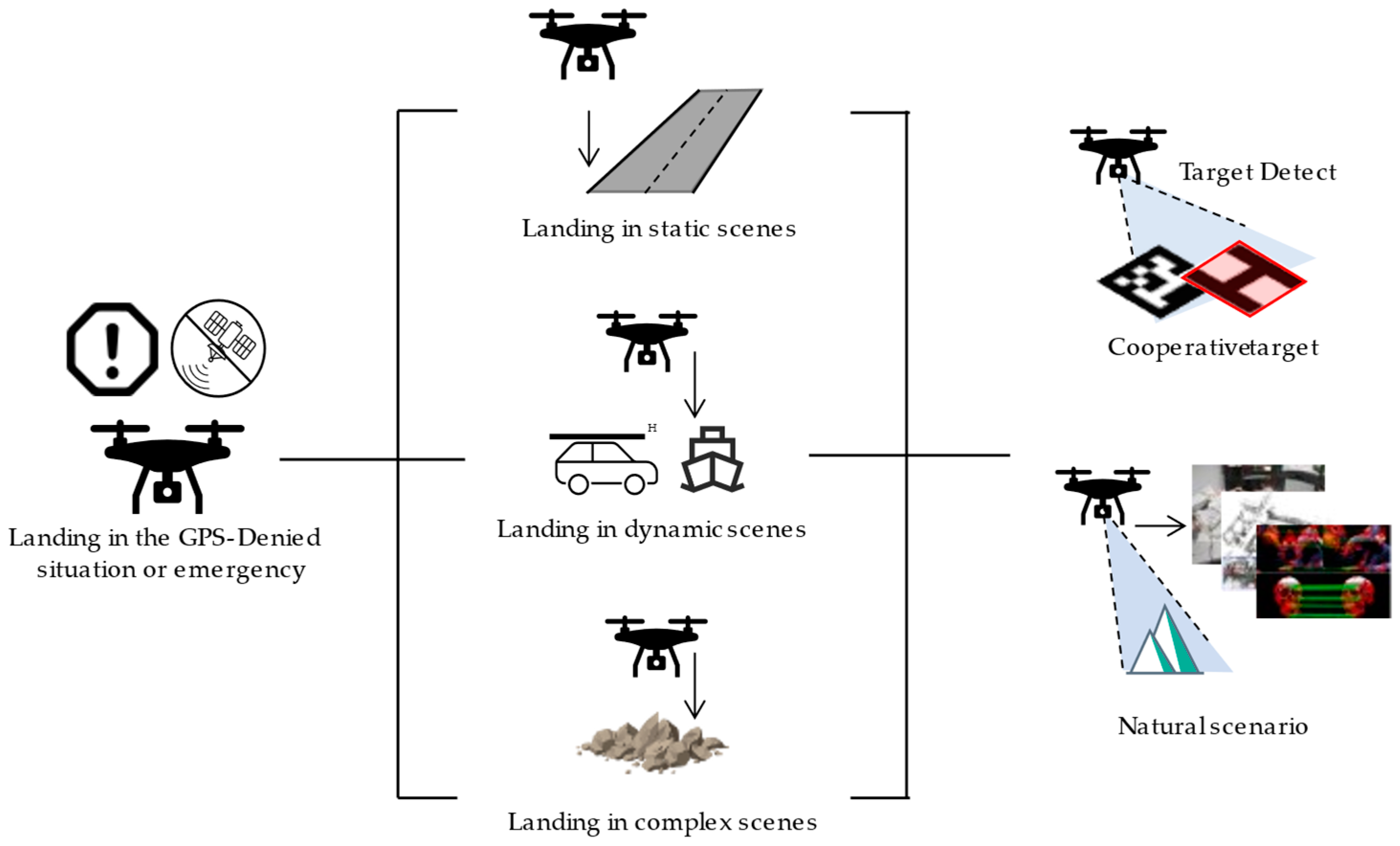

1. Introduction

- (1) Real-time massive information processing

- (2) Limited onboard resources

- (3) High maneuverability of UAV platforms

- (4) Limitations of traditional image processing algorithms

2. Autonomous Landing of UAVs in Static Scenes

- (1) The vision payload and other onboard sensors collect and process information from the environment around the landing area. They capture the artificial landmark and run feature point calculation, feature extraction, feature matching, and related operations on the image to detect and track it. The relative pose between the UAV and the landmark is continuously returned to the main controller.

- (2) The flight control system corrects and guides the aircraft's position and attitude according to the relative position and miss distance returned by the vision module, so that the aircraft converges on the target with an appropriate direction and speed.

- (3) The flight control system corrects the landing attitude in real time and at a high rate until the UAV lands at the target location.
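The three steps above can be sketched as a simple closed loop. The following is a minimal illustration only, not the controller of any surveyed work: it assumes the vision module already delivers a relative pose, uses a hypothetical proportional law with made-up gains (`kp`, `descent_rate`, `capture_radius`, `v_max`), and ignores attitude, wind, and sensor noise.

```python
import math
from dataclasses import dataclass


@dataclass
class RelativePose:
    """Landmark position relative to the UAV, in metres (hypothetical type)."""
    dx: float  # horizontal offset along x
    dy: float  # horizontal offset along y
    dz: float  # height of the UAV above the landmark


def clamp(value, limit):
    """Limit a command to the range [-limit, +limit]."""
    return max(-limit, min(limit, value))


def guidance_step(rel, kp=0.8, descent_rate=0.5, capture_radius=0.3, v_max=2.0):
    """Step (2): turn the relative pose into a velocity command (vx, vy, vz)."""
    vx = clamp(kp * rel.dx, v_max)
    vy = clamp(kp * rel.dy, v_max)
    # Descend only once the UAV is roughly centred over the landmark.
    centred = math.hypot(rel.dx, rel.dy) < capture_radius
    vz = -descent_rate if centred else 0.0
    return vx, vy, vz


def simulate_landing(start, dt=0.1, max_steps=2000, touchdown_height=0.05):
    """Steps (1)-(3) in a loop with an idealised, noise-free vision module."""
    rel = start
    for step in range(max_steps):
        if rel.dz <= touchdown_height:
            return rel, step  # step (3): touchdown reached
        vx, vy, vz = guidance_step(rel)
        # Moving toward the landmark shrinks the measured offset.
        rel = RelativePose(rel.dx - vx * dt,
                           rel.dy - vy * dt,
                           max(rel.dz + vz * dt, 0.0))
    return rel, max_steps


if __name__ == "__main__":
    final, steps = simulate_landing(RelativePose(dx=4.0, dy=-3.0, dz=10.0))
    print(f"touchdown after {steps} steps, "
          f"horizontal error {math.hypot(final.dx, final.dy):.4f} m")
```

Separating the horizontal alignment from the descent keeps the sketch easy to follow; a real system would typically blend both and add filtering of the vision measurements.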

2.1. Cooperative Targets Based Autonomous Landing

2.1.1. Classical Feature-Based Solutions

- (1) T shape

- (2) H shape

- (3) Round, rectangular shapes

- (4) Combination shape

2.1.2. Machine Learning-Based Solutions

- (1) Classifier-based methods

- (2) Deep learning-based methods

2.2. Natural Scenario Based Autonomous Landing

2.2.1. Scene Matching-Based Solutions

2.2.2. Near-Field 3D Reconstruction-Based Solutions

3. Autonomous Landing of UAVs in Dynamic Scenes

3.1. Autonomous Landing on Vehicle-Based Platform

3.2. Autonomous Landing on Ship-Based Platforms

4. Autonomous Landing in Complex Scenes

5. Summary and Suggestion

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

1. Gautam, A.; Sujit, P.B.; Saripalli, S. A survey of autonomous landing techniques for UAVs. In Proceedings of the 2014 International Conference on Unmanned Aircraft Systems (ICUAS), Orlando, FL, USA, 27–30 May 2014; pp. 1210–1218.

2. Kong, W.; Zhou, D.; Zhang, D.; Zhang, J. Vision-based autonomous landing system for unmanned aerial vehicle: A survey. In Proceedings of the 2014 International Conference on Multisensor Fusion and Information Integration for Intelligent Systems (MFI), Beijing, China, 28–29 September 2014; pp. 1–8.

3. Yang, Z.; Li, C. Review on vision-based pose estimation of UAV based on landmark. In Proceedings of the 2017 2nd International Conference on Frontiers of Sensors Technologies (ICFST), Shenzhen, China, 14–16 April 2017; pp. 453–457.

4. Chen, P.; Zhou, Y. The Review of Target Tracking for UAV. In Proceedings of the 2019 14th IEEE Conference on Industrial Electronics and Applications (ICIEA), Xi’an, China, 19–21 June 2019; pp. 1800–1805.

5. Sharp, C.S.; Shakernia, O.; Sastry, S.S. A vision system for landing an unmanned aerial vehicle. In Proceedings of the 2001 ICRA. IEEE International Conference on Robotics and Automation (Cat. No.01CH37164), Seoul, Korea, 21–26 May 2001; Volume 1722, pp. 1720–1727.

6. Saripalli, S.; Montgomery, J.F.; Sukhatme, G.S. Vision-based autonomous landing of an unmanned aerial vehicle. In Proceedings of the 2002 IEEE International Conference on Robotics and Automation (Cat. No. 02CH37292), Washington, DC, USA, 11–15 May 2002; pp. 2799–2804.

7. Saripalli, S.; Montgomery, J.F.; Sukhatme, G.S. Visually guided landing of an unmanned aerial vehicle. IEEE Trans. Robot. Autom. 2003, 19, 371–380.

8. Jung, Y.; Lee, D.; Bang, H. Close-range vision navigation and guidance for rotary UAV autonomous landing. In Proceedings of the 2015 IEEE International Conference on Automation Science and Engineering (CASE), Gothenburg, Sweden, 24–28 August 2015; pp. 342–347.

9. Baca, T.; Stepan, P.; Spurny, V.; Hert, D.; Penicka, R.; Saska, M.; Thomas, J.; Loianno, G.; Kumar, V. Autonomous landing on a moving vehicle with an unmanned aerial vehicle. J. Field Robot. 2019, 36, 874–891.

10. Verbandt, M.; Theys, B.; De Schutter, J. Robust marker-tracking system for vision-based autonomous landing of VTOL UAVs. In Proceedings of the International Micro Air Vehicle Conference and Competition, Delft, Netherlands, 12–15 August 2014; pp. 84–91.

11. Tsai, A.C.; Gibbens, P.W.; Stone, R.H. Terminal phase vision-based target recognition and 3D pose estimation for a tail-sitter, vertical takeoff and landing unmanned air vehicle. In Proceedings of the Pacific-Rim Symposium on Image and Video Technology, Hsinchu, Taiwan, 10–13 December 2006; pp. 672–681.

12. Xu, G.; Zhang, Y.; Ji, S.; Cheng, Y.; Tian, Y. Research on computer vision-based for UAV autonomous landing on a ship. Pattern Recognit. Lett. 2009, 30, 600–605.

13. Yang, F.; Shi, H.; Wang, H. A vision-based algorithm for landing unmanned aerial vehicles. In Proceedings of the 2008 International Conference on Computer Science and Software Engineering, Wuhan, China, 12–14 December 2008; pp. 993–996.

14. Hu, M.-K. Visual pattern recognition by moment invariants. IEEE Trans. Inf. Theory 1962, 8, 179–187.

15. Yol, A.; Delabarre, B.; Dame, A.; Dartois, J.É.; Marchand, E. Vision-based absolute localization for unmanned aerial vehicles. In Proceedings of the 2014 IEEE/RSJ International Conference on Intelligent Robots and Systems, Chicago, IL, USA, 14–18 September 2014; pp. 3429–3434.

16. Zeng, F.; Shi, H.; Wang, H. The object recognition and adaptive threshold selection in the vision system for landing an unmanned aerial vehicle. In Proceedings of the 2009 International Conference on Information and Automation, Zhuhai, Macau, 22–24 June 2009; pp. 117–122.

17. Shakernia, O.; Ma, Y.; Koo, T.J.; Hespanha, J.; Sastry, S.S. Vision guided landing of an unmanned air vehicle. In Proceedings of the 38th IEEE Conference on Decision and Control (Cat. No. 99CH36304), Phoenix, AZ, USA, 7–10 December 1999; pp. 4143–4148.

18. Lange, S.; Sunderhauf, N.; Protzel, P. A vision based onboard approach for landing and position control of an autonomous multirotor UAV in GPS-denied environments. In Proceedings of the 2009 International Conference on Advanced Robotics, Munich, Germany, 22–26 June 2009; pp. 1–6.

19. Benini, A.; Rutherford, M.J.; Valavanis, K.P. Real-time, GPU-based pose estimation of a UAV for autonomous takeoff and landing. In Proceedings of the 2016 IEEE International Conference on Robotics and Automation (ICRA), Stockholm, Sweden, 16–21 May 2016; pp. 3463–3470.

20. Yuan, H.; Xiao, C.; Xiu, S.; Zhan, W.; Ye, Z.; Zhang, F.; Zhou, C.; Wen, Y.; Li, Q. A Hierarchical Vision-Based UAV Localization for an Open Landing. Electronics 2018, 7, 68.

21. Olson, E. AprilTag: A robust and flexible visual fiducial system. In Proceedings of the 2011 IEEE International Conference on Robotics and Automation, Shanghai, China, 9–13 May 2011; pp. 3400–3407.

22. Fiala, M. Comparing ARTag and ARToolkit Plus fiducial marker systems. In Proceedings of the IEEE International Workshop on Haptic Audio Visual Environments and their Applications, Ottawa, ON, Canada, 1 October 2005; p. 6.

23. Li, Z.; Chen, Y.; Lu, H.; Wu, H.; Cheng, L. UAV autonomous landing technology based on AprilTags vision positioning algorithm. In Proceedings of the 2019 Chinese Control Conference (CCC), Guangzhou, China, 27–30 July 2019; pp. 8148–8153.

24. Wang, Z.; She, H.; Si, W. Autonomous landing of multi-rotors UAV with monocular gimbaled camera on moving vehicle. In Proceedings of the 2017 13th IEEE International Conference on Control & Automation (ICCA), Ohrid, Macedonia, 3–6 July 2017; pp. 408–412.

25. Wu, H.; Cai, Z.; Wang, Y. Vison-based auxiliary navigation method using augmented reality for unmanned aerial vehicles. In Proceedings of the IEEE 10th International Conference on Industrial Informatics, Beijing, China, 25–27 July 2012; pp. 520–525.

26. Wang, J.; Olson, E. AprilTag 2: Efficient and robust fiducial detection. In Proceedings of the 2016 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Daejeon, Korea, 9–14 October 2016; pp. 4193–4198.

27. Yang, S.; Scherer, S.A.; Zell, A. An Onboard Monocular Vision System for Autonomous Takeoff, Hovering and Landing of a Micro Aerial Vehicle. J. Intell. Robot. Syst. 2012, 69, 499–515.

28. Nguyen, P.H.; Kim, K.W.; Lee, Y.W.; Park, K.R. Remote Marker-Based Tracking for UAV Landing Using Visible-Light Camera Sensor. Sensors 2017, 17, 1987.

29. Xiu, S.; Wen, Y.; Xiao, C.; Yuan, H.; Zhan, W. Design and Simulation on Autonomous Landing of a Quad Tilt Rotor. J. Syst. Simul. 2020, 32, 1676.

30. Kotsiantis, S.B. Supervised Machine Learning: A Review of Classification Techniques. In Proceedings of the 2007 Conference on Emerging Artificial Intelligence Applications in Computer Engineering: Real Word AI Systems with Applications in eHealth, HCI, Information Retrieval and Pervasive Technologies, Amsterdam, Netherlands, 10 June 2007; pp. 3–24.

31. Suthaharan, S. Support Vector Machine. In Machine Learning Models and Algorithms for Big Data Classification: Thinking with Examples for Effective Learning; Suthaharan, S., Ed.; Springer: Boston, MA, USA, 2016; pp. 207–235.

32. Kramer, O. K-Nearest Neighbors. In Dimensionality Reduction with Unsupervised Nearest Neighbors; Kramer, O., Ed.; Springer: Berlin/Heidelberg, Germany, 2013; pp. 13–23.

33. Li, Y.; Wang, Y.; Luo, H.; Chen, Y.; Jiang, Y. Landmark recognition for UAV Autonomous landing based on vision. Appl. Res. Comput. 2012, 29, 2780–2783.

34. Zhao, Z.Q.; Zheng, P.; Xu, S.T.; Wu, X. Object Detection With Deep Learning: A Review. IEEE Trans. Neural Netw. Learn. Syst. 2019, 30, 3212–3232.

35. Lee, T.; McKeever, S.; Courtney, J. Flying Free: A Research Overview of Deep Learning in Drone Navigation Autonomy. Drones 2021, 5, 52.

36. Chen, J.; Miao, X.; Jiang, H.; Chen, J.; Liu, X. Identification of autonomous landing sign for unmanned aerial vehicle based on faster regions with convolutional neural network. In Proceedings of the 2017 Chinese Automation Congress (CAC), Jinan, China, 20–22 October 2017; pp. 2109–2114.

37. Nguyen, P.H.; Arsalan, M.; Koo, J.H.; Naqvi, R.A.; Truong, N.Q.; Park, K.R. LightDenseYOLO: A Fast and Accurate Marker Tracker for Autonomous UAV Landing by Visible Light Camera Sensor on Drone. Sensors 2018, 18, 1703.

38. Truong, N.Q.; Lee, Y.W.; Owais, M.; Nguyen, D.T.; Batchuluun, G.; Pham, T.D.; Park, K.R. SlimDeblurGAN-Based Motion Deblurring and Marker Detection for Autonomous Drone Landing. Sensors 2020, 20, 3918.

39. Yan, J.; Yin, X.-C.; Lin, W.; Deng, C.; Zha, H.; Yang, X. A Short Survey of Recent Advances in Graph Matching. In Proceedings of the 2016 ACM on International Conference on Multimedia Retrieval, New York, NY, USA, 15–19 October 2016; pp. 167–174.

40. Conte, G.; Doherty, P. An Integrated UAV Navigation System Based on Aerial Image Matching. In Proceedings of the 2008 IEEE Aerospace Conference, Big Sky, MT, USA, 1–8 March 2008; pp. 1–10.

41. Conte, G.; Doherty, P. Vision-Based Unmanned Aerial Vehicle Navigation Using Geo-Referenced Information. EURASIP J. Adv. Signal Process. 2009, 2009, 387308.

42. Miller, A.; Shah, M.; Harper, D. Landing a UAV on a runway using image registration. In Proceedings of the 2008 IEEE International Conference on Robotics and Automation, Pasadena, CA, USA, 19–23 May 2008; pp. 182–187.

43. Cesetti, A.; Frontoni, E.; Mancini, A.; Zingaretti, P.; Longhi, S. A Vision-Based Guidance System for UAV Navigation and Safe Landing using Natural Landmarks. J. Intell. Robot. Syst. 2009, 57, 233.

44. Zhao, L.; Qi, W.; Li, S.Z.; Yang, S.-Q.; Zhang, H. Key-frame extraction and shot retrieval using nearest feature line (NFL). In Proceedings of the 2000 ACM Workshops on Multimedia, Los Angeles, CA, USA, 30 October–3 November 2000; pp. 217–220.

45. Li, Y.; Pan, Q.; Zhao, C. Natural-Landmark Scene Matching Vision Navigation based on Dynamic Key-frame. Phys. Procedia 2012, 24, 1701–1706.

46. Saputra, M.R.U.; Markham, A.; Trigoni, N. Visual SLAM and Structure from Motion in Dynamic Environments: A Survey. ACM Comput. Surv. 2018, 51, 37.

47. Shen, S.; Mulgaonkar, Y.; Michael, N.; Kumar, V. Multi-sensor fusion for robust autonomous flight in indoor and outdoor environments with a rotorcraft MAV. In Proceedings of the 2014 IEEE International Conference on Robotics and Automation (ICRA), Hong Kong, China, 31 May–7 June 2014; pp. 4974–4981.

48. Cadena, C.; Carlone, L.; Carrillo, H.; Latif, Y.; Scaramuzza, D.; Neira, J.; Reid, I.; Leonard, J.J. Past, Present, and Future of Simultaneous Localization and Mapping: Toward the Robust-Perception Age. IEEE Trans. Robot. 2016, 32, 1309–1332.

49. Engel, J.; Sturm, J.; Cremers, D. Camera-based navigation of a low-cost quadrocopter. In Proceedings of the 2012 IEEE/RSJ International Conference on Intelligent Robots and Systems, Vilamoura-Algarve, Portugal, 7–12 October 2012; pp. 2815–2821.

50. Qin, T.; Li, P.; Shen, S. VINS-Mono: A Robust and Versatile Monocular Visual-Inertial State Estimator. IEEE Trans. Robot. 2018, 34, 1004–1020.

51. Wang, K.; Shen, S. MVDepthNet: Real-Time Multiview Depth Estimation Neural Network. In Proceedings of the 2018 International Conference on 3D Vision (3DV), Verona, Italy, 5–8 September 2018; pp. 248–257.

52. Wang, F.; Cui, J.-Q.; Chen, B.-M.; Lee, T.H. A Comprehensive UAV Indoor Navigation System Based on Vision Optical Flow and Laser FastSLAM. Acta Autom. Sin. 2013, 39, 1889–1899.

53. Cui, T.; Guo, C.; Liu, Y.; Tian, Z. Precise Landing Control of UAV Based on Binocular Visual SLAM. In Proceedings of the 2021 4th International Conference on Intelligent Autonomous Systems (ICoIAS), Wuhan, China, 14–16 May 2021; pp. 312–317.

54. Yang, T.; Li, P.; Zhang, H.; Li, J.; Li, Z. Monocular Vision SLAM-Based UAV Autonomous Landing in Emergencies and Unknown Environments. Electronics 2018, 7, 73.

55. Cheng, H.; Chen, Y.; Li, X.; Wong Wing, S. Autonomous takeoff, tracking and landing of a UAV on a moving UGV using onboard monocular vision. In Proceedings of the 32nd Chinese Control Conference, Xi’an, China, 26–28 July 2013; pp. 5895–5901.

56. Chen, X.; Phang, S.K.; Shan, M.; Chen, B.M. System integration of a vision-guided UAV for autonomous landing on moving platform. In Proceedings of the 2016 12th IEEE International Conference on Control and Automation (ICCA), Kathmandu, Nepal, 1–3 June 2016; pp. 761–766.

57. Araar, O.; Aouf, N.; Vitanov, I. Vision based autonomous landing of multirotor UAV on moving platform. J. Intell. Robot. Syst. 2017, 85, 369–384.

58. Yang, T.; Ren, Q.; Zhang, F.; Xie, B.; Ren, H.; Li, J.; Zhang, Y. Hybrid Camera Array-Based UAV Auto-Landing on Moving UGV in GPS-Denied Environment. Remote Sens. 2018, 10, 1829.

59. Rodriguez-Ramos, A.; Sampedro, C.; Bavle, H.; de la Puente, P.; Campoy, P. A Deep Reinforcement Learning Strategy for UAV Autonomous Landing on a Moving Platform. J. Intell. Robot. Syst. 2018, 93, 351–366.

60. Nepal, U.; Eslamiat, H. Comparing YOLOv3, YOLOv4 and YOLOv5 for Autonomous Landing Spot Detection in Faulty UAVs. Sensors 2022, 22, 464.

61. Sanchez-Lopez, J.L.; Pestana, J.; Saripalli, S.; Campoy, P. An Approach Toward Visual Autonomous Ship Board Landing of a VTOL UAV. J. Intell. Robot. Syst. 2013, 74, 113–127.

62. Morais, F.; Ramalho, T.; Sinogas, P.; Marques, M.M.; Santos, N.P.; Lobo, V. Trajectory and guidance mode for autonomously landing an UAV on a naval platform using a vision approach. In Proceedings of the OCEANS 2015, Genova, Italy, 18–21 May 2015; pp. 1–7.

63. Polvara, R.; Sharma, S.; Wan, J.; Manning, A.; Sutton, R. Towards autonomous landing on a moving vessel through fiducial markers. In Proceedings of the 2017 European Conference on Mobile Robots (ECMR), Paris, France, 6–8 September 2017; pp. 1–6.

64. Li, J.; Wang, X.; Cui, H.; Ma, Z. Research on Detection Technology of Autonomous Landing Based on Airborne Vision. IOP Conf. Ser. Earth Environ. Sci. 2020, 440, 042093.

65. Falanga, D.; Zanchettin, A.; Simovic, A.; Delmerico, J.; Scaramuzza, D. Vision-based autonomous quadrotor landing on a moving platform. In Proceedings of the 2017 IEEE International Symposium on Safety, Security and Rescue Robotics (SSRR), Shanghai, China, 11–13 October 2017; pp. 200–207.

66. Garcia-Pardo, P.J.; Sukhatme, G.S.; Montgomery, J.F. Towards vision-based safe landing for an autonomous helicopter. Robot. Auton. Syst. 2002, 38, 19–29.

67. Fitzgerald, D.; Walker, R.; Campbell, D. A Vision Based Forced Landing Site Selection System for an Autonomous UAV. In Proceedings of the 2005 International Conference on Intelligent Sensors, Sensor Networks and Information Processing, Melbourne, Australia, 5–8 December 2005; pp. 397–402.

68. Mejias, L.; Fitzgerald, D.L.; Eng, P.C.; Xi, L. Forced landing technologies for unmanned aerial vehicles: Towards safer operations. Aer. Veh. 2009, 1, 415–442.

| Target Type | Ref. | Method | Precision | Height | Image Resolution | Processing Speed | Speed |
|---|---|---|---|---|---|---|---|
| T | [11] | Canny detection; Hough transform; Hu invariant moment | Pose 4.8° | 2–10 m | 737 × 575 | 25 Hz | N/A |
| T | [12] | Adaptive threshold selection method; infrared images; Sobel method; affine moments | Success rate 97.2% | N/A | N/A | 58 Hz | N/A |
| H | [6] | Hu invariant moment; combined with differential GPS | Position 4.2 cm; pose 7°; landing < 40 cm | 10 m | 640 × 480 | 10 Hz | 0.3–0.6 m/s |
| H | [13] | Image extraction; Zernike moments | Position X 4.21 cm; position Y 1.21 cm; pose 0.56° | 6–20 m | 640 × 480 | >20 Hz | N/A |
| H | [16] | Image registration; image segmentation; depth-first search; adaptive threshold selection method | Success rate 97.42% | N/A | 640 × 480 | >16 Hz | N/A |
| Round, rectangular | [17] | Differential fitness; new geometric estimation scheme | Position 5 cm; pose 5° | N/A | N/A | N/A | N/A |
| Round, rectangular | [18] | Optical flow sensor; fixed threshold; segmentation; contour detection | Position 3.8 cm | 0.7 m | 640 × 480 | 70–100 Hz | 0.9–1.3 m/s |
| Round, rectangular | [19] | Kalman filtering; function ‘solvePnPRansac’ | Position error less than 8% of the diameter of the cooperative target | 2.5 m | 640 × 480 | 30 Hz | N/A |
| Round, rectangular | [20] | Robust and quick response landing pattern; optical flow sensors; extended Kalman filter | Position 6.4 cm; pose 0.08° | 20 m | 1920 × 1080 | 7 Hz | N/A |
| Combination | [21] | QR code digital coding system; gradient-based clustering method; quad detection | (<40 m) Landing position < 0.5 m; success rate > 97% | 50 m | 400 × 400 | 30 Hz | N/A |
| Combination | [26] | Coding scheme; tag boundary segmentation method; image gradients; adaptive thresholding | Position < 0.2 m; pose < 0.5° | 0–20 m | 640 × 480 | 45 Hz | N/A |
| Combination | [27] | Projective geometry; adaptive thresholding; ellipse fitting; image moments method | Position (2.4, 8.6) cm; pose 6° | 10 m | 640 × 480 | 60 Hz | N/A |
| Combination | [23] | AprilTags; HOG; NCC | Position < 1% | 4 m | N/A | N/A | 0.3 m/s |
| Combination | [28] | Profile-checker algorithm; template matching; Kalman filtering | Position (7.6, 1.4, 9.5) cm; pose (1.8°, 1.15°, 1.09°) | 10 m | 1280 × 720 | 40 Hz | N/A |
| Combination | [29] | Canny; adaptive thresholding; Levenberg–Marquardt (LM) | Position < 10 cm | 3–10 m | 640 × 480 | 30 Hz | N/A |
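Several of the T- and H-shape entries above rely on Hu invariant moments [14]. As a rough illustration of why these are attractive for landmark recognition, the sketch below computes only the first two of Hu's seven invariants for a binary image and checks that they are unchanged by a 90° rotation. The 7 × 7 test shapes and all helper names (`make_h`, `make_t`, `rotate90`, `hu_first_two`) are hypothetical and are not taken from any of the cited works.

```python
def hu_first_two(image):
    """Return (phi1, phi2), the first two Hu invariants of a binary image.

    `image` is a list of rows containing 0/1 values.
    """
    pixels = [(x, y) for y, row in enumerate(image)
              for x, value in enumerate(row) if value]
    m00 = len(pixels)
    if m00 == 0:
        raise ValueError("image contains no foreground pixels")
    # Centroid: subtracting it makes the moments translation invariant.
    cx = sum(x for x, _ in pixels) / m00
    cy = sum(y for _, y in pixels) / m00

    def eta(p, q):
        # Normalised central moment: dividing by m00^(1 + (p+q)/2)
        # removes the dependence on scale.
        mu = sum((x - cx) ** p * (y - cy) ** q for x, y in pixels)
        return mu / m00 ** (1 + (p + q) / 2)

    eta20, eta02, eta11 = eta(2, 0), eta(0, 2), eta(1, 1)
    phi1 = eta20 + eta02
    phi2 = (eta20 - eta02) ** 2 + 4 * eta11 ** 2
    return phi1, phi2


def make_h(n=7):
    """Toy 'H' landmark: two thick vertical bars joined by a middle row."""
    return [[1 if (c in (0, 1, n - 2, n - 1) or r == n // 2) else 0
             for c in range(n)] for r in range(n)]


def make_t(n=7):
    """Toy 'T' landmark: a thick top bar and a centre stem."""
    return [[1 if (r in (0, 1) or c == n // 2) else 0
             for c in range(n)] for r in range(n)]


def rotate90(image):
    """Rotate a binary image by 90 degrees clockwise."""
    return [list(row) for row in zip(*image[::-1])]


if __name__ == "__main__":
    print("H        :", hu_first_two(make_h()))
    print("H rotated:", hu_first_two(rotate90(make_h())))
    print("T        :", hu_first_two(make_t()))
```

In practice the invariants would be computed on a segmented camera image and compared against stored reference values; library implementations such as OpenCV's `cv2.HuMoments` return all seven invariants.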

| Type | Ref. | Method | Precision | Height | Image Resolution | Processing Speed | Platform Speed |
|---|---|---|---|---|---|---|---|
| Vehicle-based | [55] | Hough transform; adaptive thresholding; erosion and dilation; visual–inertial data fusion | Position (5.79, 3.44) cm; success rate 88.24% | 1.2 m | 640 × 480 | 25 Hz | <1.2 m/s |
| Vehicle-based | [9] | Model predictive controller; nonlinear feedback controller; linear Kalman filter | Landing in 25 s; position error < 10 cm | N/A | 752 × 480 | 30 Hz | 4.2 m/s |
| Vehicle-based | [65] | Visual–inertial odometry; extended Kalman filter | N/A | 3 m | 752 × 480 | 80 Hz | <1.2 m/s |
| Vehicle-based | [56] | LiDAR scanning; PnP; adaptive thresholding | Landing in 60 s; larger overshoot when moving backward | 3 m | N/A | N/A | 1 m/s |
| Vehicle-based | [57] | Extended Kalman filter; AprilTag landing pad; PnP; visual–inertial data fusion | Position < 13 cm | 2 m | N/A | N/A | 1.8 m/s |
| Vehicle-based | [58] | State estimation algorithm; nonlinear controllers; convolutional neural network; velocity observer | Position < (10, 10) cm | 1.5–8 m | 512 × 512 | N/A | 1.5 m/s |
| Vehicle-based | [59] | Gazebo-based reinforcement learning framework; deep deterministic policy gradients | Landing in 17.67 s; position error < 6 cm; the action space does not include altitude | N/A | N/A | 20 Hz | 1.2 m/s |
| Ship-based | [12] | Affine invariant moments; infrared radiation images; Otsu method and iterative method; adaptive thresholding | Success rate 97.2% | N/A | N/A | 58 Hz | N/A |
| Ship-based | [61] | Kalman filter; feature matching; image thresholding; artificial neural networks; Hu moments | Position error (4.33, 1.42) cm | 1.5 m | 640 × 480 | 20 Hz | N/A |
| Ship-based | [62] | Kalman filter; efficient perspective-n-point (EPnP) | N/A | N/A | N/A | N/A | N/A |
| Ship-based | [63] | Extended Kalman filter; visual–inertial data fusion | Landing in 40 s | 3 m | 320 × 240 | N/A | N/A |
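Kalman filtering appears in many of the moving-platform entries above. The sketch below is a minimal one-dimensional, constant-velocity linear Kalman filter that estimates a platform's position and speed from position-only measurements, such as those a vision module might supply. It is an illustration under stated assumptions, not the estimator of any cited work: the class name, the noise parameters `accel_var` and `meas_var`, and the initial covariance are all hypothetical choices.

```python
class ConstantVelocityKalman:
    """1D Kalman filter with state [position, velocity]."""

    def __init__(self, dt, accel_var=1.0, meas_var=0.01):
        self.dt = dt
        self.p, self.v = 0.0, 0.0            # initial state guess
        self.P = [[1.0, 0.0], [0.0, 10.0]]   # initial covariance (assumed)
        # Process noise for a white-noise acceleration model.
        self.q = [[accel_var * dt ** 4 / 4, accel_var * dt ** 3 / 2],
                  [accel_var * dt ** 3 / 2, accel_var * dt ** 2]]
        self.r = meas_var                    # measurement noise variance

    def predict(self):
        """Propagate state and covariance one step: x = F x, P = F P F^T + Q."""
        dt = self.dt
        (a, b), (c, d) = self.P
        self.p += self.v * dt
        self.P = [[a + dt * (b + c) + dt * dt * d + self.q[0][0],
                   b + dt * d + self.q[0][1]],
                  [c + dt * d + self.q[1][0],
                   d + self.q[1][1]]]

    def update(self, z):
        """Fuse a position measurement z (measurement matrix H = [1, 0])."""
        (a, b), (c, d) = self.P
        s = a + self.r                # innovation variance
        k0, k1 = a / s, c / s         # Kalman gain
        y = z - self.p                # innovation
        self.p += k0 * y
        self.v += k1 * y
        # P = (I - K H) P
        self.P = [[(1 - k0) * a, (1 - k0) * b],
                  [c - k1 * a, d - k1 * b]]


def track_platform(measurements, dt):
    """Run the filter over a sequence of position measurements."""
    kf = ConstantVelocityKalman(dt)
    for z in measurements:
        kf.predict()
        kf.update(z)
    return kf.p, kf.v


if __name__ == "__main__":
    dt = 0.04                                   # 25 Hz, illustrative only
    true_speed = 1.2                            # m/s, illustrative only
    zs = [true_speed * dt * k for k in range(1, 251)]
    position, speed = track_platform(zs, dt)
    print(f"estimated position {position:.3f} m, speed {speed:.3f} m/s")
```

The speed estimate is what allows a controller to lead the platform rather than chase its last observed position. Works that fuse inertial data or handle nonlinear motion use an extended Kalman filter instead, which this sketch does not cover.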

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Xin, L.; Tang, Z.; Gai, W.; Liu, H. Vision-Based Autonomous Landing for the UAV: A Review. Aerospace 2022, 9, 634. https://doi.org/10.3390/aerospace9110634
