1. Introduction

While the steady growth of manned aviation has driven the advancement of communication, navigation and sensing (CNS) technologies to support a denser airspace exploitation, various technological and regulatory challenges have affected the development of autonomous separation assurance and collision avoidance (SA&CA) capabilities for unmanned aircraft systems (UAS). Surveillance systems such as transponders, the traffic collision avoidance system (TCAS), and automatic dependent surveillance-broadcast (ADS-B) are conceived to support in-flight SA&CA while also incorporating, to the extent possible, the pilot’s situational assessment, training, experience, and aircraft capabilities. The detect and avoid (DAA) function in a non-segregated UAS operational context, however, demands a transition from the pilot’s decision-making to fully autonomous decision-making, which is one of the largest challenges faced by the UAS sector today. Accurate performance modelling of current airborne surveillance technologies for maintaining SA&CA without the pilot onboard is critical to evaluating and certifying an equivalent level of safety in the UAS platform. The International Civil Aviation Organization (ICAO) outlined successive steps towards the integration of UAS in controlled airspace as well as into the aerodrome areas, which are identified in the Aviation System Block Upgrades (ASBU) [1]. The United States (US) Federal Aviation Administration (FAA) has also made provisions to integrate UAS into the National Airspace System (NAS) in two different phases [2,3,4]. Phase 1 incorporates rural, Class Golf (G) airspace and is compatible with agricultural, mapping and survey applications, whereas phase 2 comprises controlled airspace that requires technologies to maintain safe separation from cooperative and non-cooperative air traffic [5]. The European Commission’s Directorate General for Mobility and Transport (DG MOVE), the European Defence Agency (EDA), the European Aviation Safety Agency (EASA), and the Single European Sky Air Traffic Management (ATM) Research (SESAR) Joint Undertaking (SJU) are also stepping up their efforts to safely accommodate UAS into the European aviation and ATM system [6]. In parallel with these government and industry-led initiatives, the aerospace research community has been continuously working on several challenges of integrating UAS into non-segregated airspace, including separation thresholds and methods for small UAS [7,8], UAS encounter modelling and collision avoidance [9,10,11], 3D obstacle avoidance strategies for UAS [12,13,14,15], dynamic model augmentation [16], Global Navigation Satellite Systems (GNSS) integrity augmentation for UAS [17], surveillance sensor integration in the UAS platform [18,19] and well-clear boundary models for UAS DAA [20,21,22,23,24].

1.1. Detect and Avoid (DAA) Safety Assessment

Although adequate performance standards have been established for various surveillance equipment, the impacts of surveillance system failures in terms of separation degradation, specifically in the UAS platform, have not been defined to date. Moreover, traditional radar and Mode-A/C transponder technologies show inherent deficiencies in different airspace and equipage scenarios, especially in the presence of high air traffic densities [25,26,27,28,29,30,31,32,33]. The failure modes for different cooperative sensors such as Mode S, TCAS and ADS-B are well defined, and safety assessments have been carried out on the individual surveillance sensors considering their functions in manned aircraft. While the ATM deconfliction service mitigates these faults when available, the risk is still notably higher when considering highly autonomous UAS operations. The FAA conducted a safety assessment on the TCAS application in unmanned platforms [34,35,36]. A thorough probabilistic safety assessment has been carried out on the ADS-B system considering both the ground and airborne segments in [37]. A safety assessment of surveillance sensor failure in the UAS platform was carried out in [38] with an encounter analysis considering the unmanned aircraft platform only. A simplified model is developed in [39] to assess and predict the risk associated with a given UAS operation. In [40], the authors provided a framework that consists of a target level of safety (TLS) approach using an event tree format to develop specific sense and avoid (SAA) effectiveness standards based on UAS weight and airspace class combinations. The provision of certified autonomous DAA capabilities is an indispensable milestone for the certification of UAS for safe non-segregated and beyond line of sight (BLOS) operations. This is a widely recognized issue in the aerospace research community but to date, despite extensive efforts, the various proposed DAA approaches have not satisfactorily addressed the overall safety risks. In this paper, a comprehensive safety assessment is conducted considering the sensitivities, failures and degraded operations of systems and components of the overall DAA architecture. Both qualitative and quantitative analyses are performed to identify and derive the risks of different component failures in both the airborne and ground control platforms using probabilistic safety assessment.

Probabilistic safety assessment is a technique to quantify risk measures numerically by identifying the specific events that lead to hazards [41]. Fault tree analysis (FTA) captures the logical relationship between basic component failures and their contributions to system failures, while importance analysis ranks the components by their contribution to the overall risk. Therefore, the combination of these two techniques provides an overall approach to determine the impacts of individual sensor failures as well as the significance of individual risk contributors. In particular, a case study for ADS-B as a candidate technology to support DAA is addressed in this paper. The safety analysis indicates the validity of ADS-B for the cooperative approach, providing a pathway for certification of the unified framework.
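To make the idea of importance ranking concrete, the following minimal sketch computes the Birnbaum importance measure for a small fault tree. The choice of the Birnbaum measure, the tree structure `(A AND B) OR C` and all probabilities are assumptions made for illustration; the text above does not state which importance measure or values are used.

```python
def top_event(p):
    """Hypothetical tree: top = (A AND B) OR C, with independent basic events."""
    return 1.0 - (1.0 - p["A"] * p["B"]) * (1.0 - p["C"])


def birnbaum_importance(model, probs, name):
    """Birnbaum measure: P(top | event occurred) - P(top | event did not occur)."""
    occurred = dict(probs, **{name: 1.0})
    absent = dict(probs, **{name: 0.0})
    return model(occurred) - model(absent)


# Illustrative probabilities only, not values from the paper.
basic_events = {"A": 1e-3, "B": 2e-3, "C": 1e-5}

# Rank basic events from most to least important.
ranking = sorted(
    basic_events,
    key=lambda n: birnbaum_importance(top_event, basic_events, n),
    reverse=True,
)
print(ranking)
```

In this toy tree, event C feeds the top OR gate directly, so it ranks first even though its probability is the smallest; this is the kind of insight an importance analysis adds on top of the top-event probability.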

Section 2 describes the unified analytical framework and overall DAA architecture and Section 3 outlines the assessment methodology. The risk measure and importance analysis are presented in Section 4. A case study of ADS-B as a cooperative surveillance means in a specified UAS flight envelope is illustrated in Section 5 and Section 6 contains the discussion of overall findings.

2. Unified Analytical Framework and DAA Architecture

In recent research [42,43], a unified analytical framework has been proposed, and a novel methodology is demonstrated to integrate the data provided by cooperative systems (reliance on own and intruder aircraft avionics) and non-cooperative sensors (reliance on own aircraft avionics only).

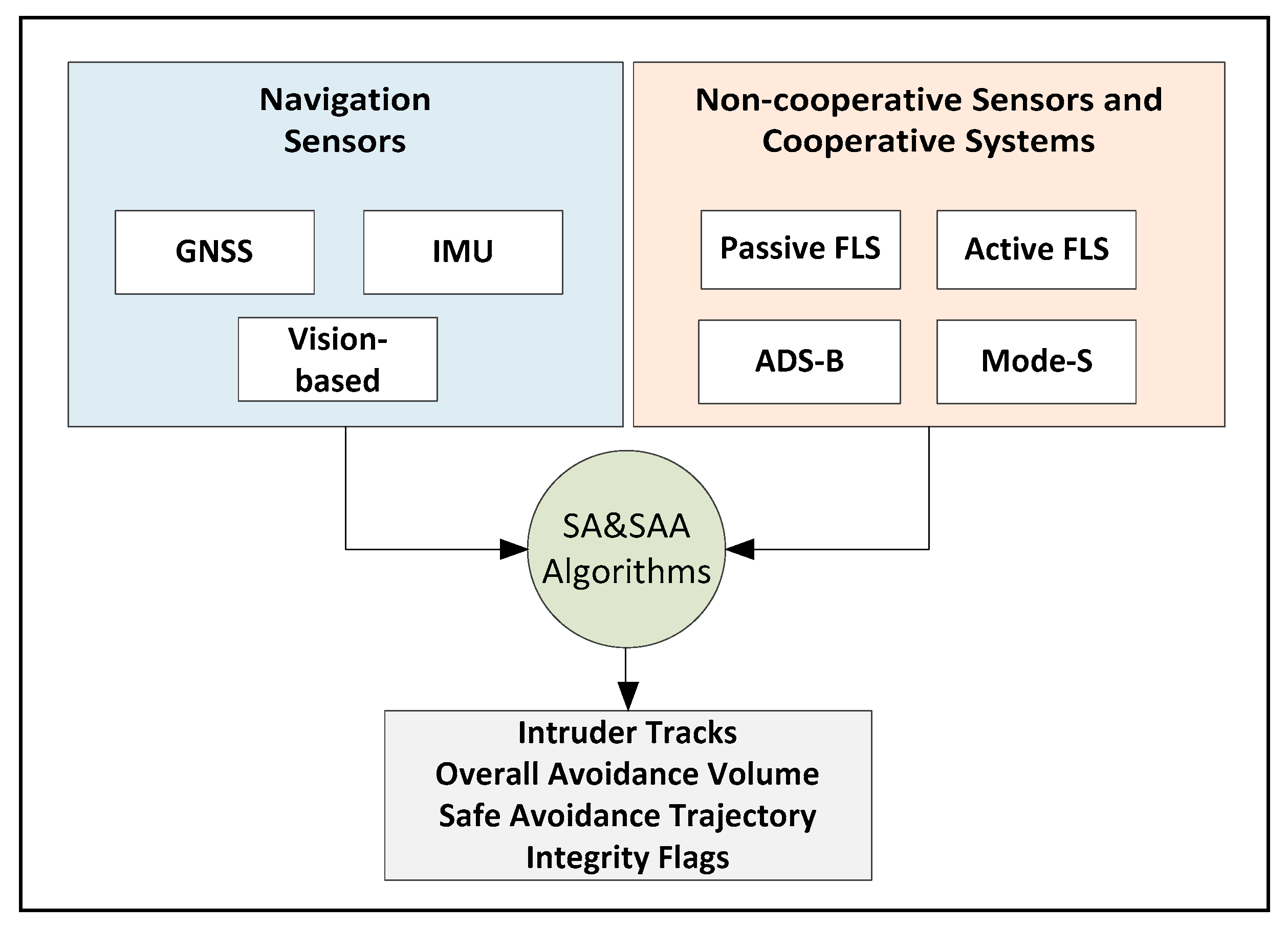

Figure 1 illustrates the conceptual top-level architecture of the proposed DAA system, which uses active and passive forward-looking sensors (FLS) in addition to ADS-B and Mode-S transponders. Navigation data is extracted from GNSS, inertial measurement units (IMU) and vision-based sensors.

State-of-the-art active and passive FLS include visual/infrared cameras, RADAR and LIDAR. Mode S transponders are cooperative surveillance employ ground components and an airborne transponder. Mode S has been designed as an evolutionary addition to the Air Traffic Control Radar Beacon System (ATCRBS) [

44] for the provision of enhanced surveillance and communication capability which is required for the automation of air traffic control. TCAS was developed as a back-up airborne collision avoidance system (ACAS) which provides vertical maneuvering guidance to the pilot in the event of a possible collision threat [

45]. ADS-B is a system that periodically transmits its state vector including horizontal and vertical position, and velocity as well as some other intent information [

46]. The system comprises two separate components, ADS-B Out and ADS-B In. ADS-B is called dependent surveillance as it requires that the aircraft state vector and additional information be derived from the on-board navigation equipment. It is automated in the sense that it doesn’t need pilot or controller input to transmit information. Cooperative/non-cooperative tracking data and host platform navigation data are processed using a dedicated algorithm within the central DAA processor onboard the UAS to produce avoidance volumes in the airspace surrounding each conflicting intruder/obstacle track. This algorithm ensures the rigorous mathematical treatment of the errors affecting the state measurements (correlated and uncorrelated measurements) and accounts for the host–obstacle relative dynamics, with due consideration for the environmental conditions (wind, turbulence, etc.) affecting the aircraft dynamics. A conceptual representation of this approach for the case of a single aerial encounter is depicted in

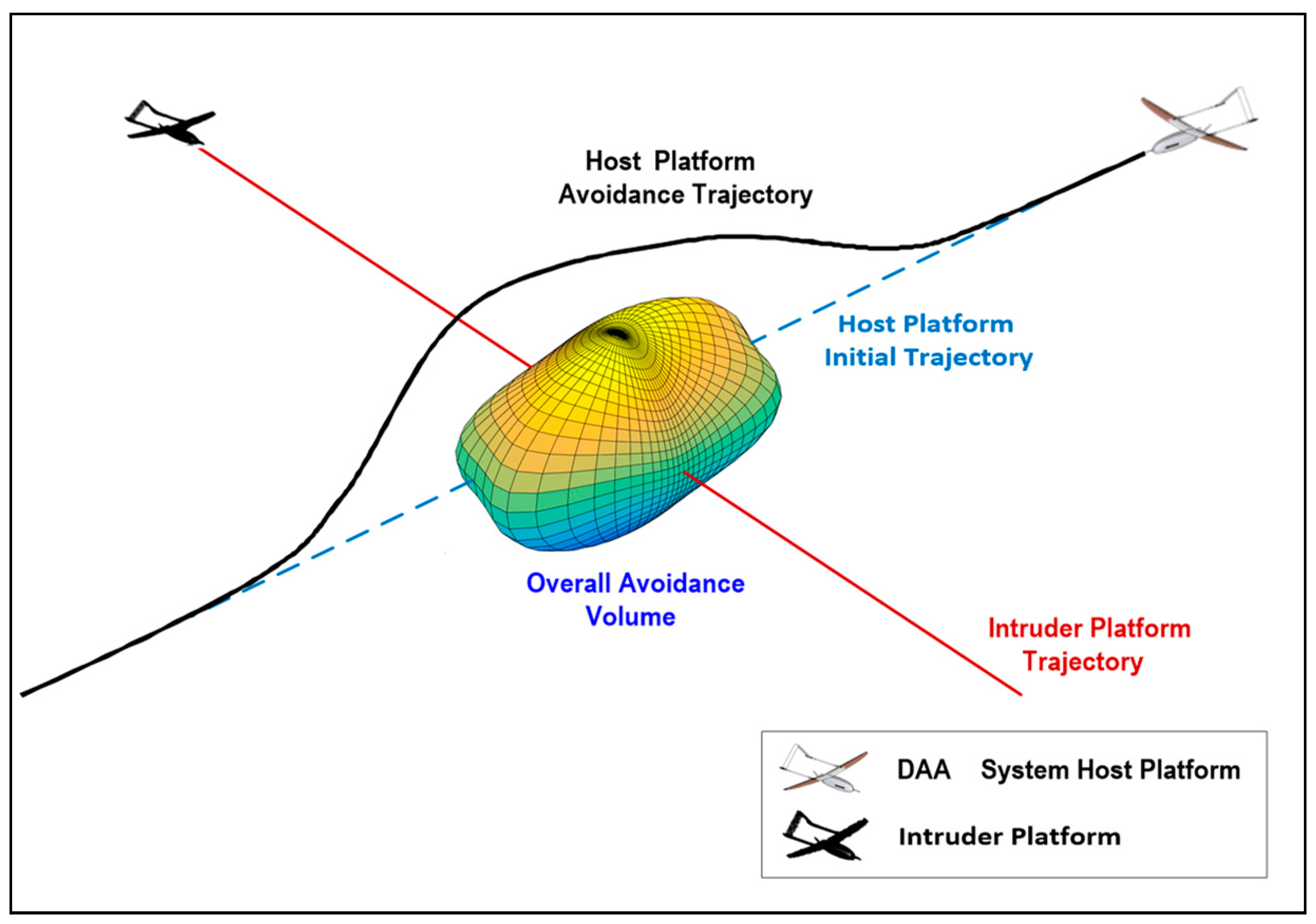

Figure 2.

In particular, the figure shows both the overall avoidance volume and the optimal avoidance trajectory, which is computed by a real-time trajectory optimization algorithm. If the original trajectory of the DAA system host platform intersects the calculated avoidance volume, a risk of collision (RoC) flag is generated [47,48]. This RoC flag initiates the real-time trajectory optimization process and the associated steering commands are provided to the aircraft flight controls.

DAA Reference Architecture

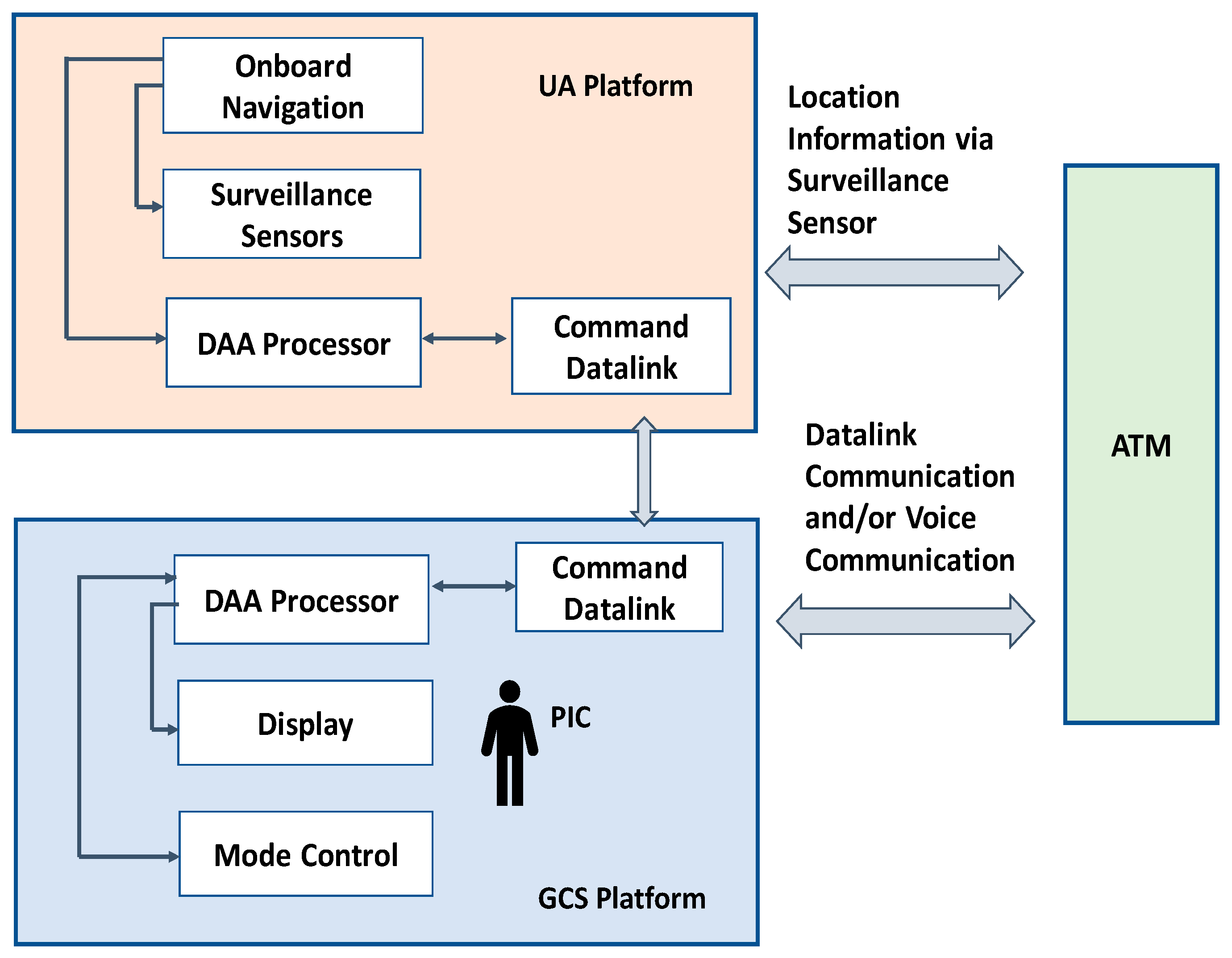

The components of the UAS DAA system are partly located onboard the unmanned aircraft (UA) platform and partly in its ground control station (GCS). In particular, all non-cooperative sensors and cooperative surveillance systems as well as autonomous collision avoidance functions are installed onboard, whereas all the human–machine interfaces (HMI) are integrated in the GCS. Both the UA and the GCS are equipped with Command and Control (C2) data link transceivers to transmit the data from the UA platform to GCS and commands from GCS to UA platform. The UAS pilot-in-command (PIC) manning the GCS is responsible for the safe operation of the UAS and for executing ATM directives unless they pose a hazard to the UAS.

Figure 3 provides a simplified schematic diagram of the overall DAA system architecture.

The UA platform includes four major elements, namely the surveillance components, the DAA processor, the onboard navigation system, and the C2 datalink. State-of-the-art cooperative surveillance sensors include active Mode S surveillance, TCAS II, and ADS-B. The non-cooperative surveillance sensors comprise radar, Light Detection and Ranging (LIDAR), and cameras such as thermal and infrared cameras. Air-to-air radar systems operate in the C, X, or Ku frequency bands of the aeronautical radio navigation spectrum (ARNS) [49]. The band used depends on the type of operation. LIDAR is another prominent surveillance sensor which shows great promise for non-cooperative UAS collision avoidance [50]. LIDAR is a remote sensing technology that scans the environment and constructs a 3D image from the individual distance points gathered during the scanning process. Several laser scanning techniques are available to steer the beam and achieve very wide fields of view (FoV). Although current LIDAR systems still have considerable size, weight, power and cost (SWaP-C), considerable progress is being made thanks to their extensive usage in the autonomous driving domain. The availability of advanced cameras and the development of vision algorithms have made cameras popular for use in unmanned platforms, especially in low-altitude operations. These non-cooperative sensors complement other onboard surveillance sensors by providing detection of non-cooperative traffic.

The equipage of surveillance sensors depends on the type of unmanned aircraft system, the airspace and the certification. According to DO-365 [49], which was developed by Special Committee-228 [51], UA surveillance equipment will minimally include:

active Mode S surveillance that uses the 1030/1090 MHz frequencies;

ADS-B In to detect broadcasts either directly from the intruder aircraft's ADS-B Out or through the ADS-R or TIS-B channels;

an air-to-air radar system to detect non-cooperative traffic.

This equipage is referred to as a Class 1 DAA system. A Class 2 DAA system will include TCAS II along with the Class 1 DAA system. Just as TCAS in manned aircraft serves to improve the pilot’s awareness of other air traffic, in UAS it would serve the PIC [35], and no maneuvers will be initiated automatically on this guidance alone.

The UA onboard processor receives onboard navigation sensor data, data from the onboard active surveillance equipment to detect transponder-equipped intruders, data from the ADS-B receiving equipment to detect ADS-B-equipped intruders, and radar data to detect non-cooperative intruders. The intruder data received from multiple sensors are then processed by the UA processor. From the intruder state and intent data, the UA processor initially evaluates the intended track of the intruder. The initial track and other information are then sent to the command and non-payload communications datalink for transmission to the GCS. ATM can locate the UAS via ADS-B Out messages and the active Mode S transponder. At all times, the PIC can maintain communication with ATM via datalink or voice communication. In the GCS, the processor receives prioritized track data and DAA status data from the UA platform and DAA mode control commands from the GCS control. It then processes the data and forwards the information to the DAA display. The mode control is the interface between the PIC and the UA and GCS DAA processors. The command functions are executed through this interface, sent to the GCS processors and then sent via datalink to the UA platform, where the command is executed. To obtain certification, surveillance sensors need to provide a level of safety equivalent to that of manned aircraft. This includes providing surveillance support suitable for the entire operational flight envelope. The UA operational flight envelope may include five phases, namely takeoff, climb, cruise, descent and landing, where the takeoff and landing phases will require low-altitude flying and ground roll. Takeoff and landing may take place at an airport or at a separate launch/recovery zone.
Due to the different traffic mix at each altitude, different flight dynamics characteristics and different conflict geometries characterizing each phase, the safety-criticality of DAA sensors and systems will likely change as a function of the flight phase. The unified analytical framework and the associated DAA system safety analysis make it possible either to define the safely flyable envelope as a function of the available sensors and their reliability or to identify the sensors and their required reliability as a function of the desired safe envelope.

3. Safety Assessment Methodology

As already mentioned in Section 1.1, the qualitative analysis involved in the FTA methodology lists all the possible combinations of factors, normal events and component failures resulting in a top event, whereas the quantitative evaluation determines the probability of the top event from the failure probabilities of the basic events, propagated up through the fault tree. The reliability data or the failure rate of the components is crucial for FTA and is obtained from component manufacturers (typically in the form of mean time between failures, MTBF) and/or from the literature, including among others Aviation Standard Documents by RTCA, FAA and Eurocontrol [37,49,52,53,54,55,56]. The basic events represent component failures, and the logic gates dictate how faults of particular components within the system can combine to result in the top event.
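The quantitative step described above can be sketched in a few lines. The example below converts an MTBF into a failure probability for a given exposure time and propagates basic-event probabilities through AND and OR gates. It assumes a constant failure rate (exponential model) and statistically independent basic events; the tree layout, function names and MTBF values are hypothetical and are not taken from the paper or from FaultTree++.

```python
import math


def failure_probability(mtbf_hours, exposure_hours=1.0):
    """Probability of at least one failure during the exposure time,
    assuming a constant failure rate of 1/MTBF (exponential model)."""
    return 1.0 - math.exp(-exposure_hours / mtbf_hours)


def and_gate(probs):
    """Output occurs only if all independent inputs occur."""
    return math.prod(probs)


def or_gate(probs):
    """Output occurs if at least one independent input occurs."""
    return 1.0 - math.prod(1.0 - p for p in probs)


# Hypothetical MTBF values in hours, for illustration only.
p_receiver = failure_probability(20_000.0)
p_antenna = failure_probability(50_000.0)
p_backup = failure_probability(10_000.0)

# Hypothetical tree: the primary chain fails if either part fails (OR),
# and the top event needs both the primary chain and the backup to fail (AND).
p_primary = or_gate([p_receiver, p_antenna])
p_top = and_gate([p_primary, p_backup])
print(f"P(top event per flight hour) = {p_top:.3e}")
```

For small probabilities the OR gate is close to the sum of its inputs, which is why the rare-event approximation is often used when reading fault trees by hand.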

In this study, FaultTree++ from Isograph [57] is utilized to carry out the safety assessment and calculate the top-level event probabilities. Before constructing the fault tree, the intermediate events leading to failure in onboard DAA capability are identified based on the system overview provided in Section 2. We note that the navigation and guidance functionality failures have not been presented in detail as this would be beyond the scope of the article, except for the subsystems on which DAA components directly depend, such as the onboard Global Positioning System (GPS) and barometric altimeter. The symbols that are used to create the fault tree are illustrated in Table 1.

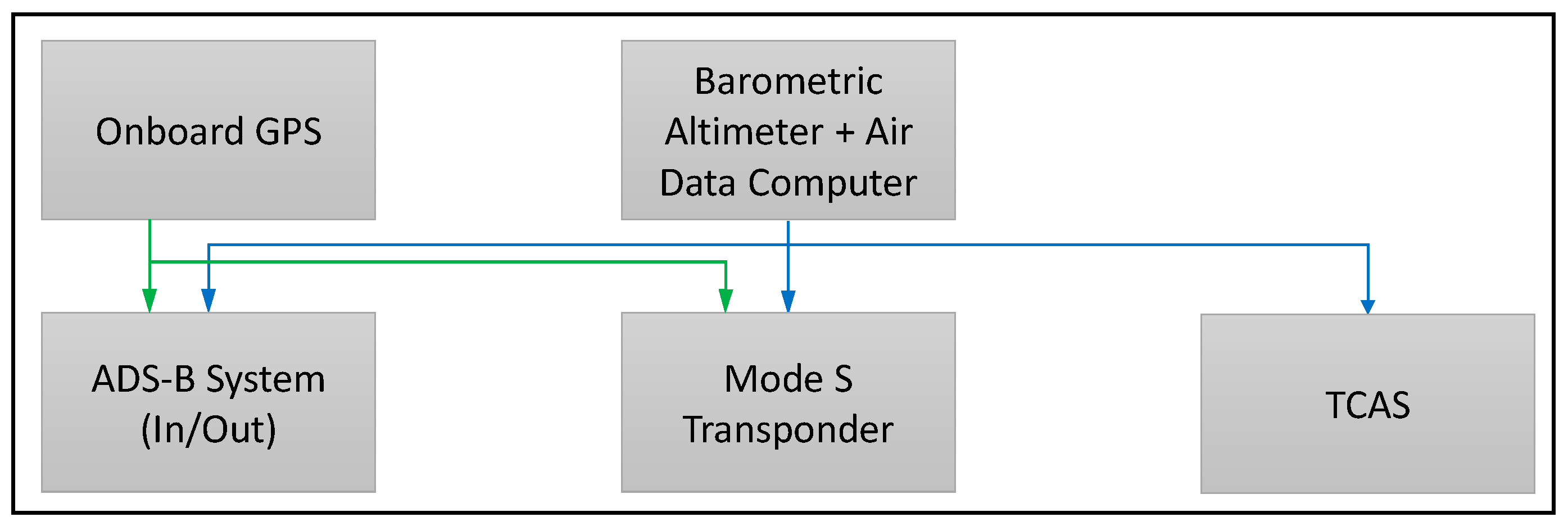

In order to identify the basic events that lead to the failure of the onboard surveillance sensors, complete knowledge of the complex architecture of each sensor is required, along with an understanding of the integrated navigation and communication system components. This is because some onboard state data are fed into multiple surveillance sensors and some of these sensors share the same transponder datalink. For example, barometric altimeter data is used by both the active Mode S and the ADS-B Out systems. Hence, an error in the barometric altimeter will affect both systems.

Figure 4 illustrates the dependency between onboard navigation and surveillance systems in a high-level architecture.

To properly capture failures that affect multiple systems, common cause failure (CCF) analysis is utilized. A CCF is a failure event that affects multiple components or functions [58]. In CCF analysis, there are two relevant factors: the root cause, which is a single failure event, and the coupling factor. The coupling factor describes the dependency of multiple systems on a common data source. While calculating the overall risk for surveillance system failure, it is crucial to account for CCF because, as shown in the architecture in Figure 4, some of the basic events affect multiple surveillance systems at the same time. The most commonly used method to account for CCF is the beta factor method [59,60]. To calculate the failure rate due to common causes, the beta factor is simply multiplied by the component failure rate. In essence, the beta factor represents the fraction of component failures that are due to common causes. A beta factor of 0.05 is chosen for this analysis based on the literature and the International Electrotechnical Commission (IEC) method checklist.
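As a minimal sketch of the beta factor method described above, the following example splits a component failure probability into an independent part and a common cause part, and then evaluates a redundant pair of components that share the common cause. The value β = 0.05 comes from the text; the component failure probability, the redundant-pair layout and the function names are assumptions for illustration, and probabilities are used in place of rates on the assumption that they are small.

```python
def split_failure(total, beta=0.05):
    """Split a failure rate or small probability into its
    independent part (1 - beta) and common cause part (beta)."""
    common = beta * total
    independent = (1.0 - beta) * total
    return independent, common


def redundant_pair_failure(p, beta=0.05):
    """Failure probability of two identical redundant components.

    The pair fails if both components fail independently OR
    a single common cause event disables both at once."""
    p_ind, p_ccf = split_failure(p, beta)
    both_independent = p_ind * p_ind
    return 1.0 - (1.0 - both_independent) * (1.0 - p_ccf)


# Hypothetical component failure probability per flight hour.
p_component = 1e-4
print(f"without CCF: {p_component ** 2:.3e}")
print(f"with CCF:    {redundant_pair_failure(p_component):.3e}")
```

With these illustrative numbers the common cause term dominates the result, which is why ignoring shared data sources such as the barometric altimeter would make a redundant architecture look safer than it is.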

In this work, the contribution of individual failure events to their related system failure is determined based on the specific functional dependencies. For example, in the event of an ADS-B Out failure, the ownship is not locatable by other platforms through ADS-B, but it can still detect intruder traffic with a working ADS-B In. Hence, the detection capability is not compromised by the failure of the ADS-B Out system, and the ownship can still avoid intruders. Therefore, only the failure in traffic detection capability is considered in the DAA functionality. The ownship surveillance function failure, referred to as a failure in the ownship locatability function, is deduced in a different tree.

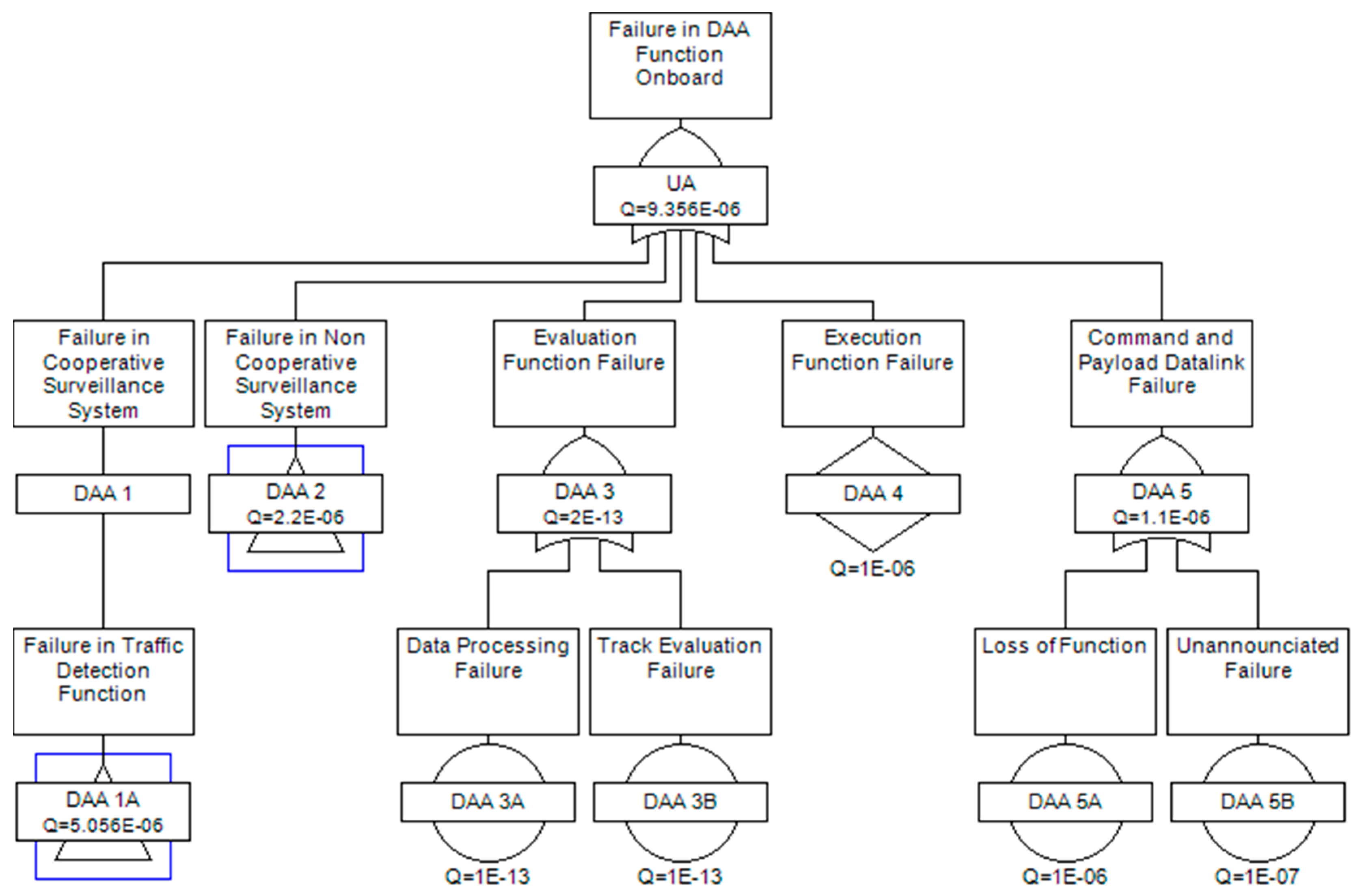

Figure 5, Figure 6 and Figure 7 illustrate the DAA capability failure considering the function of traffic detection and avoid capability, and Figure 8, Figure 9, Figure 10, Figure 11 and Figure 12 demonstrate the failure in ownship locatability function.

As depicted in Figure 5, the failure in onboard DAA capability can be the result of five alternative events. Three of them are intermediate events: DAA 1A (failure in the traffic detection function by cooperative sensors), DAA 2 (failure of non-cooperative sensors) and DAA 3 (evaluation function failure). DAA 1A and DAA 2 are transferred to separate trees and illustrated in Figure 6 and Figure 7. The evaluation function failure comprises the data processing function failure, which indicates a failure in multi-sensor data fusion, and the track evaluation failure, which indicates the failure probability of intruder track evaluation. The execute function failure is the failure to execute appropriate maneuvers as commanded. DAA 5 is the failure of the data link that is used to transfer data to and receive commands from the ground control station.

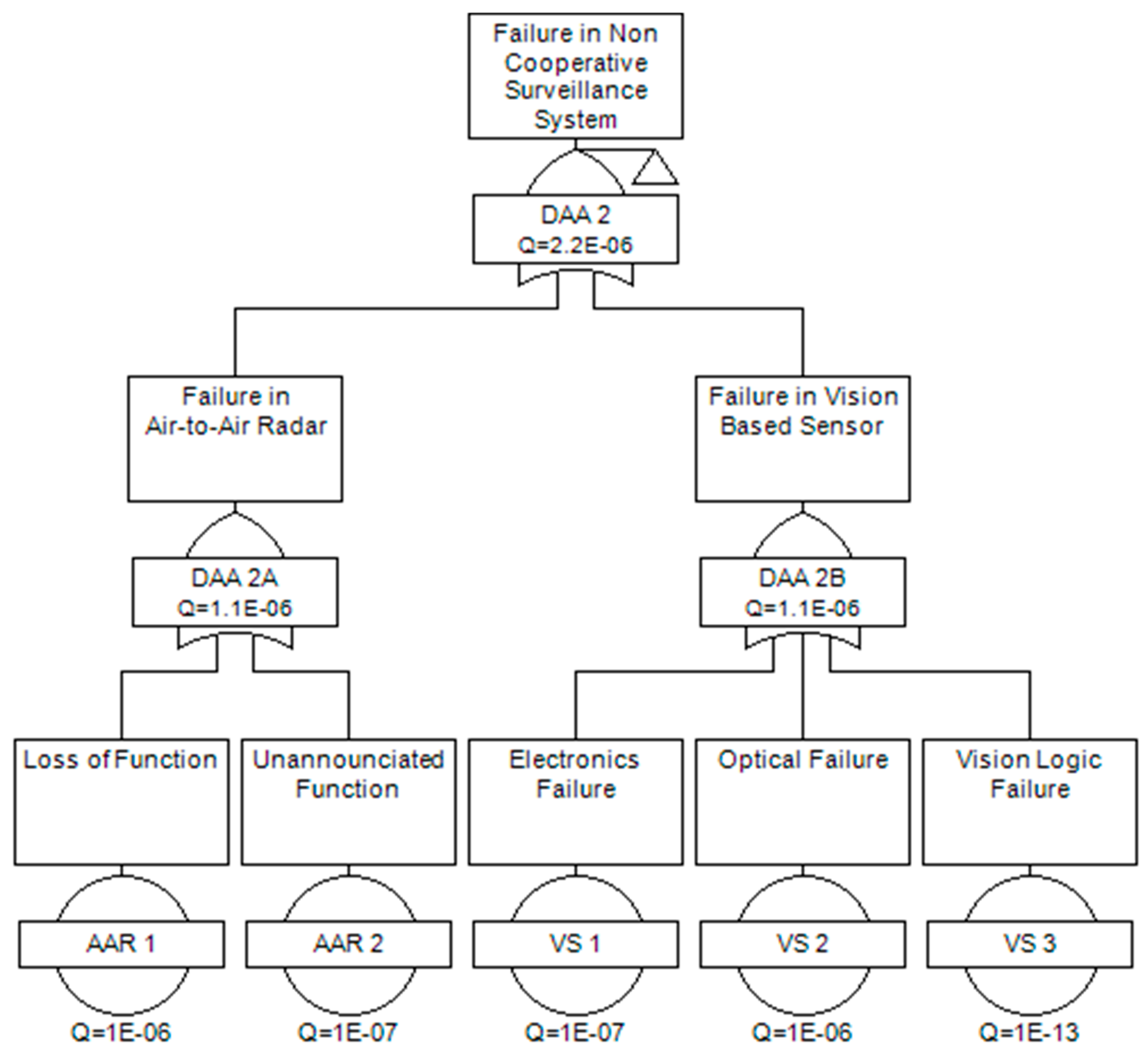

Figure 6 outlines the intermediate event DAA 1A. As stated earlier, this subtree specifies the failure probability of the traffic detection function by cooperative surveillance. In this work, a voting OR gate is utilized to calculate the failure probability of DAA 1A. A voting OR gate indicates that the output event occurs if at least k out of n input events occur. In the fault tree presented in Figure 6, the DAA 1A event occurs if two out of three surveillance sensors fail to detect traffic. The voting OR gate is chosen to account for the current equipage scenario for unmanned as well as manned aircraft systems. Using a universal AND gate would give a lower failure rate, whereas using a universal OR gate would give a higher failure rate, than in an actual encounter scenario. For example, if the onboard active surveillance system and the TCAS system fail and the intruder, which can be manned or unmanned, is not equipped with ADS-B, the ownship will fail to detect the intruder in spite of having a working ADS-B In. Also, it is assumed that, without a catastrophic onboard power failure or an external attack, the three systems will not be down at the same time. Thus, the voting OR gate encompasses all the scenarios.
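The behaviour of the voting gate relative to pure AND and OR gates can be checked numerically. The sketch below enumerates all failure combinations for independent inputs and sums the probability of those in which at least k inputs fail. The sensor failure probabilities are hypothetical, and independence between the three sensors is an assumption of this sketch (common cause contributions are ignored here).

```python
from itertools import combinations
from math import prod


def voting_gate(probs, k):
    """Probability that at least k of the independent input events occur."""
    n = len(probs)
    total = 0.0
    for m in range(k, n + 1):
        for failed in combinations(range(n), m):
            # Exactly the inputs in `failed` occur; all others do not.
            total += prod(
                probs[i] if i in failed else 1.0 - probs[i] for i in range(n)
            )
    return total


# Hypothetical failure probabilities for Mode S, TCAS and ADS-B In.
sensors = [1e-3, 2e-3, 1.5e-3]

p_and = voting_gate(sensors, 3)   # all three must fail (universal AND)
p_vote = voting_gate(sensors, 2)  # at least two of three fail (voting gate)
p_or = voting_gate(sensors, 1)    # any single failure (universal OR)
print(f"AND: {p_and:.3e}  2-of-3: {p_vote:.3e}  OR: {p_or:.3e}")
```

The 2-out-of-3 result always lies between the AND and OR results, which matches the argument above that the voting gate avoids both the optimistic and the pessimistic extreme.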

Figure 7 presents the intermediate event DAA 2 (failure in non-cooperative sensors), which is the result of two alternative sensor failures: air-to-air radar failure and vision-based sensor failure. While tracing the events for the vision-based sensor, a component-wise failure probability is adopted, as the component is assumed to be acquired off the shelf with a specified MTBF.

As stated earlier, a separate fault tree is constructed to determine the failure in ownship locatability function due to failure of cooperative surveillance system.

Figure 8,

Figure 9,

Figure 10,

Figure 11 and

Figure 12 illustrate the faults trees of main event and intermediate events. As detailed in

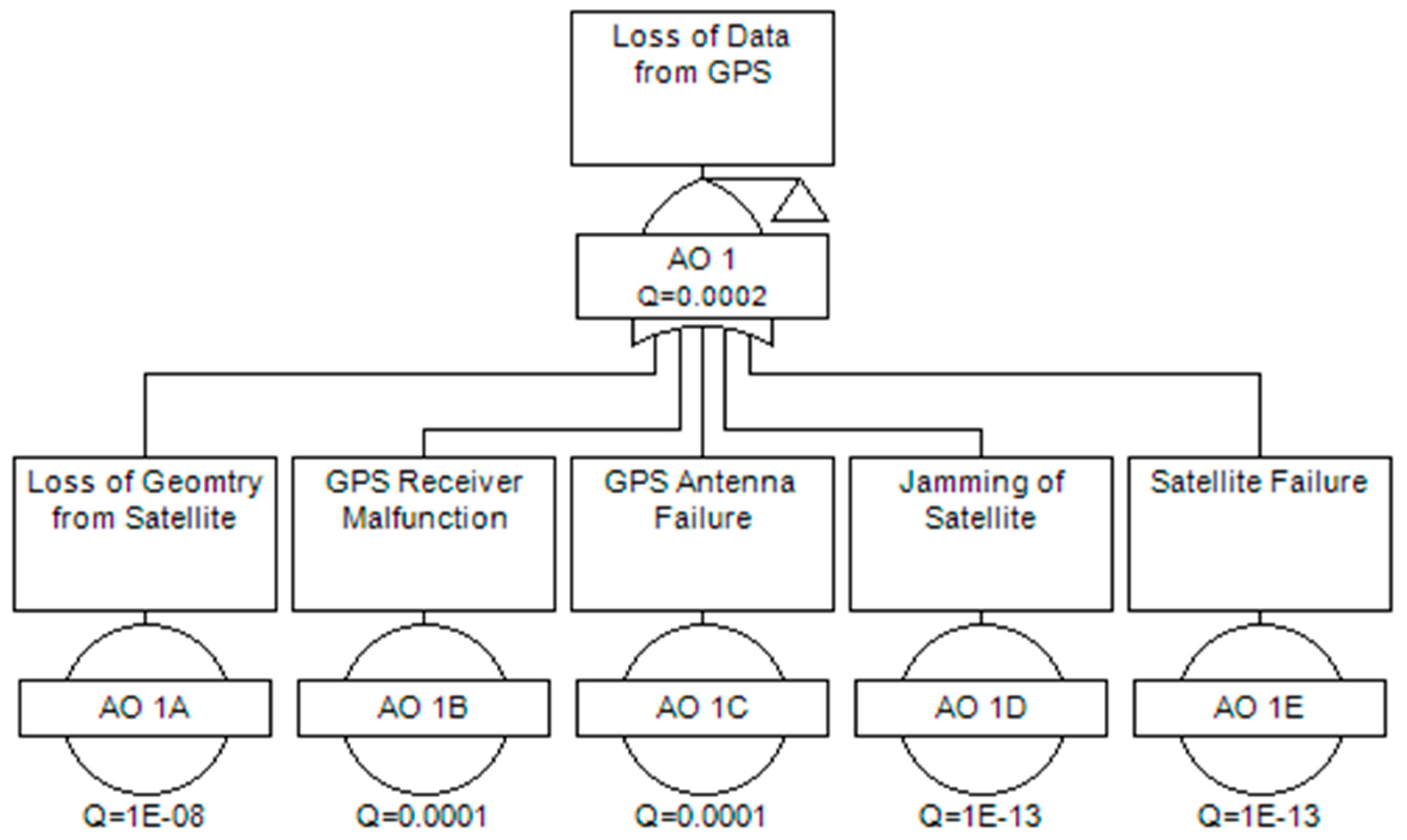

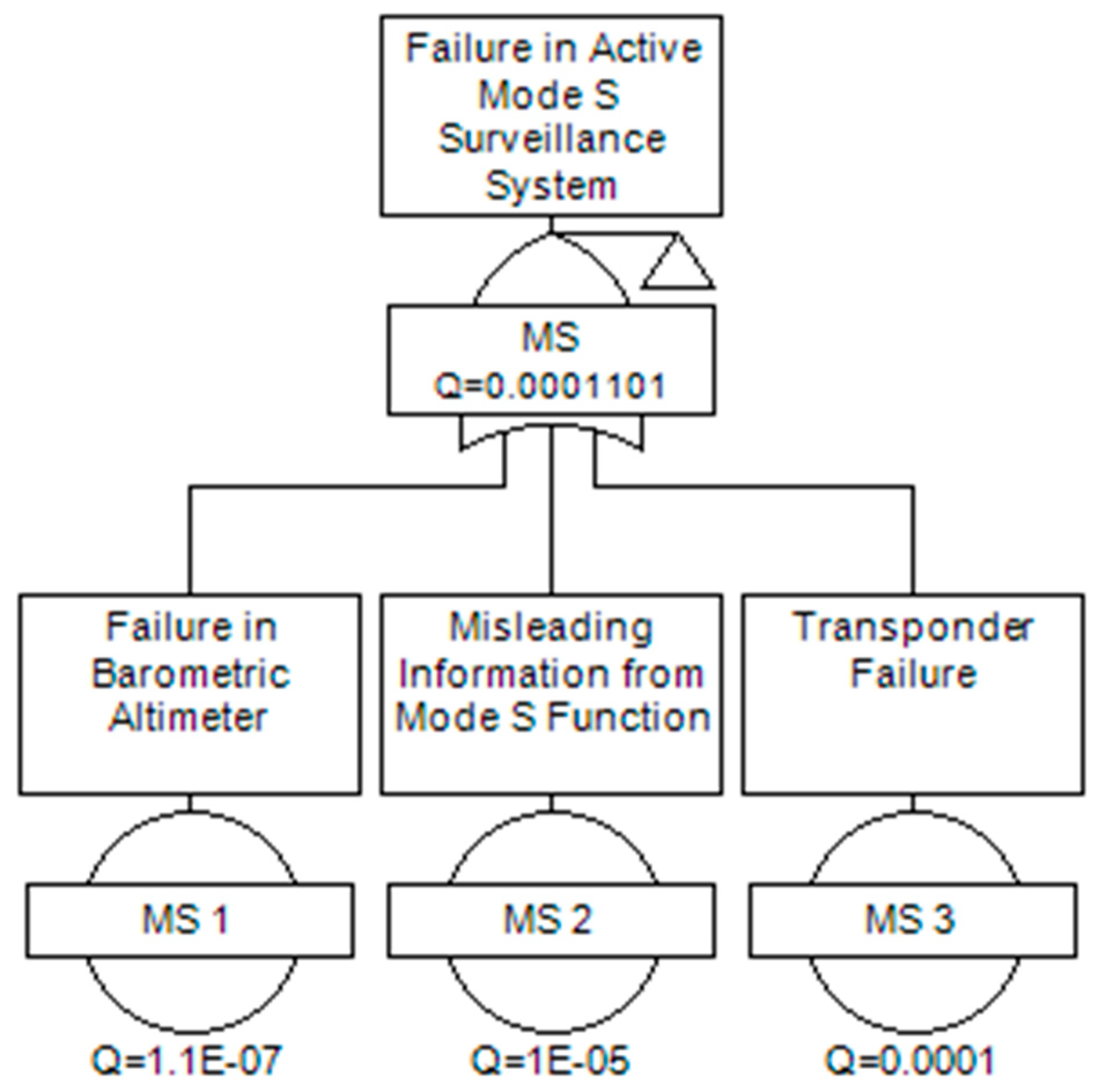

Figure 8, the failure in cooperative surveillance function occurs if either Mode S or ADS-B out failed. This is a conservative choice that assumes mixed equipage requirements. The ownship ADS-B out system depends on the onboard satellite navigation and pressure altimeter.

Figure 9 and

Figure 10 present the transferred trees from the ADS-B out;

Figure 10 outlines the failure in ADS-B due to onboard satellite navigation loss and

Figure 11 outlines the failure due to corrupted data from navigation sources. Finally,

Figure 12 shows the fault tree for Mode S surveillance only.

The reliability data for the basic events used in the fault tree are extracted from the literature, aviation standard documents and Original Equipment Manufacturer (OEM) data, and are presented in Table 2.

For the failure probability values of the command datalink and air-to-air radar, the values are taken from technical standard orders, which state that the loss of function (air-to-air radar and command datalink) cannot be greater than per flight hour and unannunciated failure can be greater than per flight hour. Although vision-based sensors are widely accepted as a means of UAS non-cooperative surveillance, no technical standard has so far defined the reliability requirements for DAA-grade vision sensors. Hence, the vision sensor failure is deduced from its main component, using the reliability data for airborne electronics/optical instruments under nominal conditions.

5. Pathway to DAA Certification: ADS-B Suitability

DAA systems capable of consistently and reliably matching or exceeding the see-and-avoid performance of a human pilot are indispensable to mitigate the risks associated with possible errors/failures in the command and control (C2) loops involving the remote pilot and to support safe autonomous operations. Previous undertakings in the domain only managed to establish the safety cases for UAS operating within the line-of-sight (LOS) of their pilots or segregated from other traffic and well clear of public infrastructure and major urban settlements. For BLOS operation and safe integration of UAS in non-segregated airspace, the provision of certified autonomous DAA capabilities is an indispensable milestone. The current LOS operations/segregated airspace constraints are preventing further exploitation of UAS technology and impeding many practical uses. For instance, the use of UAS to survey large areas, to deliver essential goods in remote locations and to provide communication services over wide geographic regions, to name a few, all require non-segregated BLOS operational capabilities. From the FTA carried out in this study, the system availability is greater than 99.98% for both ADS-B Out and ADS-B In systems. The availability of the ADS-B In system is based solely on the host platform; any failure or malfunction of the intruder platform is not considered. The Minimum Aviation System Performance Standards for ADS-B [67] specify the availability of ADS-B to be greater than 99.9% if used as a primary means of surveillance and greater than 95% if used as a supplementary means of surveillance. The Civil Aviation Safety Authority (CASA) in Australia has mandated the use of ADS-B for aircraft flying above FL285 and also for Instrument Flight Rules (IFR) traffic, as specified in CAO 20.18. The FAA also mandated that all general aviation aircraft be equipped with ADS-B by 2020 [68]. Although the failure probability of the ADS-B system (1.2 × 10⁻³) is lower than that of other surveillance sensors, researchers working on DAA capability concluded that only an adequate exploitation of a multi-sensor architecture will potentially meet the safety requirements in all flight phases. Therefore, depending on the operational flight envelope, ADS-B data shall be fused with other cooperative and non-cooperative surveillance data. On this basis, ADS-B systems can be used as a cooperative sensor, especially in airspace with IFR traffic, allowing the safe operation of unmanned platforms in non-segregated airspace. The mathematical approach proposed in the unified framework allows us to determine the safe-to-fly portions of the host UAS operational flight envelope based on the avionics sensors/systems available onboard or, alternatively, to identify the sensors/systems required for operating in a certain predefined portion of the host UAS operational flight envelope.

Figure 13 conceptually depicts the two-way certification approach.

In particular, considering the nominal flight envelopes of the host UAS/intruders and the characteristics of the on-board sensors/systems, the algorithms will determine the applicable safety envelope restrictions. Conversely, based on a predefined (required) flight envelope and on the intruder dynamics, the algorithms will allow an identification of the specific avionics sensors/systems that must be integrated in the UAS. This approach will lay the foundations for the development of an airworthy DAA capability and a pathway for manned/unmanned aircraft coexistence in all classes of airspace.
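The two directions of this approach can be illustrated with a deliberately simplified sketch. It reduces the flight envelope to named flight phases, treats loss of detection as the simultaneous failure of all fitted sensors (AND logic with independent failures), and compares the result with a per-phase limit. All sensor names, failure probabilities, limits and function names below are hypothetical; the actual framework works on avoidance volumes and sensor error models rather than on this lookup.

```python
from itertools import combinations
from math import prod

# Hypothetical per-flight-hour failure probabilities, not values from the paper.
SENSOR_FAILURE = {"ads_b_in": 1e-3, "mode_s": 2e-3, "radar": 5e-3}

# Hypothetical maximum tolerable probability of losing detection, per phase.
PHASE_LIMIT = {"cruise": 1e-5, "climb": 1e-6, "landing": 1e-7}


def detection_loss(sensors):
    """All listed sensors fail together (independent failures assumed)."""
    return prod(SENSOR_FAILURE[s] for s in sensors)


def flyable_phases(sensors):
    """Direction 1: given the fitted sensors, which phases meet their limit?"""
    p = detection_loss(sensors)
    return [phase for phase, limit in PHASE_LIMIT.items() if p <= limit]


def smallest_sensor_set(phase):
    """Direction 2: given a required phase, find a smallest adequate sensor set."""
    names = list(SENSOR_FAILURE)
    for size in range(1, len(names) + 1):
        for subset in combinations(names, size):
            if detection_loss(subset) <= PHASE_LIMIT[phase]:
                return list(subset)
    return None  # no combination of the available sensors is sufficient


print(flyable_phases(["ads_b_in", "mode_s"]))
print(smallest_sensor_set("climb"))
```

The same data drive both queries, which is the point of the two-way approach: a restriction on the envelope and a requirement on the equipage are two readings of one safety model.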

Moreover, the research community determined that only the exploitation of machine learning and artificial intelligence technologies will make it possible to develop a DAA capability that can perform reliably with the predicted levels of traffic density and the infinite combination of possible encounter characteristics, which already exceed the cognitive capabilities of human operators. This has been the chosen path, for instance, in the development of the ACAS-Xa variant. However, the current regulatory framework does not cater for the certification of avionics systems with non-deterministic behavior, hence significant evolutions of the framework will be required [69]. These shall also account for the ever-increasing air-ground functional integration as part of the so-called CNS+A (i.e., CNS/ATM and Avionics) paradigm, which demands that cohesive safety certification requirements are adopted for both airborne and ground-based systems [69,70].

6. Conclusions

The reliability of UAS DAA systems based on ADS-B and other cooperative/non-cooperative sensors was analyzed in this paper. The unified analytical framework has been utilized for the mathematical fusion of navigation and tracking errors, supporting the development of low SWaP-C DAA systems exploiting cooperative and non-cooperative surveillance technologies. The paper also briefly discussed the need for an evolution of the certification framework to accommodate the adoption of non-deterministic systems and to encompass the ever-increasing functional integration between airborne and ground-based systems. The analysis highlighted the safety significance and importance of the onboard surveillance equipment. The cooperative surveillance system failure contributes most to the DAA capability failure. Another important finding is that, for the cooperative surveillance system, the failure in ownship surveillance capability is higher than the failure in traffic detection capability. The adequacy of ADS-B as a cooperative surveillance system for conventional one-to-one encounters was also discussed. The calculated failure probability is in the order of 10⁻⁶, which is remote. Although the severity analysis has not been included in this study, the implications of the failure will depend on the airspace characteristics. In particular, in uncontrolled airspace, where the ATM deconfliction service is not available or is limited, the consequences will likely be more severe than in controlled airspace. Additionally, the severity also depends on the intruder equipage, as inadequate maneuvers can be initiated due to CNS performance limitations in the intruder platform. Hence, depending on airspace and UAS performance characteristics, specific avionics sensors/systems will have to be integrated. In conclusion, ADS-B has good potential to be utilized as the main cooperative system, especially in airspace with IFR traffic.
Further evaluation will have to consider intruder equipage failures as well as different airspace and conflict scenarios. This future work will prompt an evolution of the conventional probabilistic safety assessment methodology.