1. Introduction

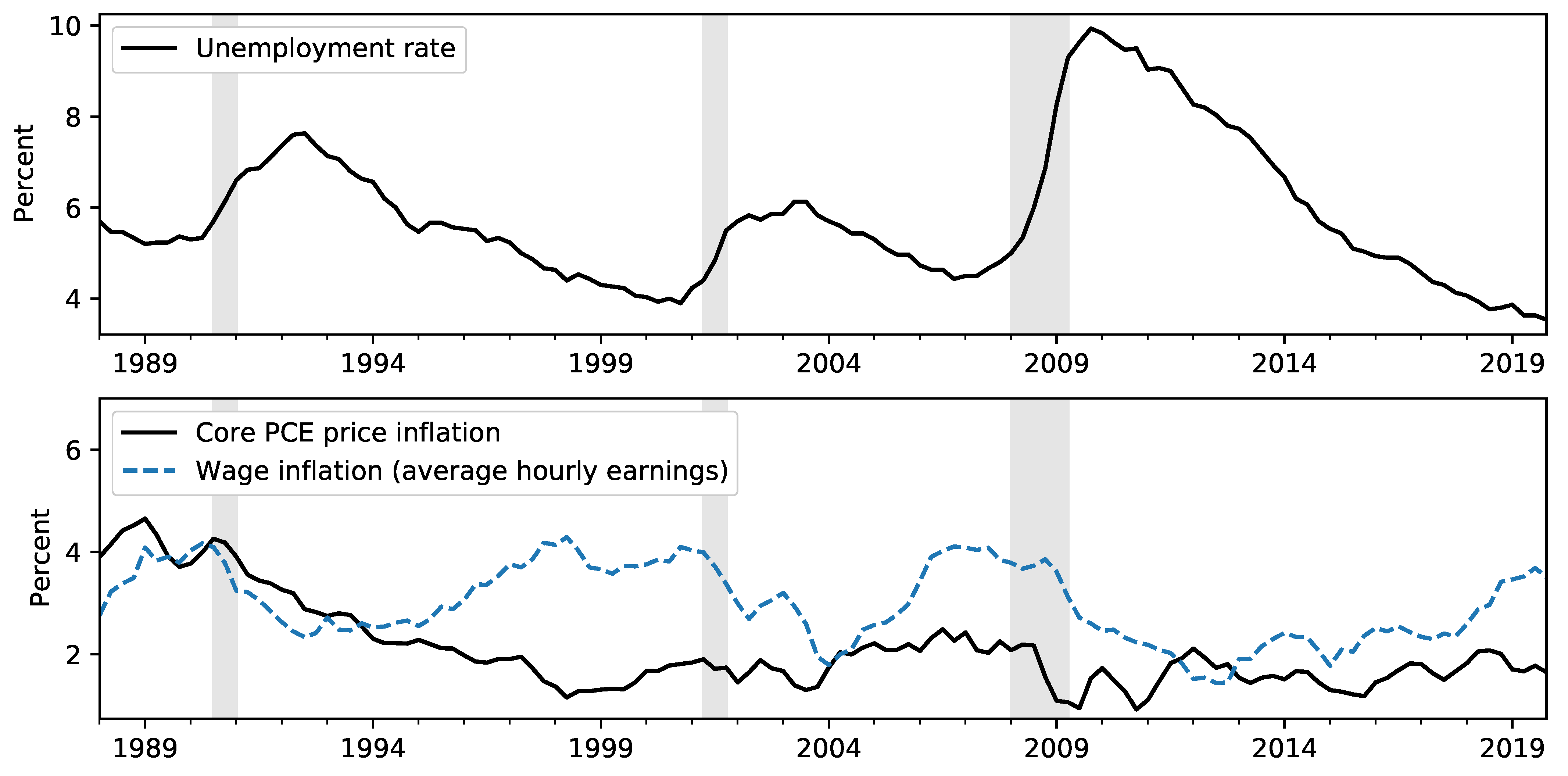

After a slower-than-usual recovery from the Great Recession, the unemployment rate fell to 3.5% in December 2019, its lowest reading since December 1969. At the same time, wage growth, while firming, remained only moderate, and consumer price inflation only briefly reached the 2% target of the Federal Open Market Committee (FOMC). These restrained price movements in the face of dramatic swings in labor market data, illustrated in

Figure 1, have been historically puzzling. The current debate about possible inflationary pressures developing highlights the increased uncertainty about the future behavior of inflation and the importance of taking into account a broad information set. Our interest, therefore, is to consider what information, if any, may be used to guide inflation forecasts going forward.

One popular framework for analyzing and forecasting inflation is based on the Phillips curve, the predicted negative relationship between economic slack and inflation. In addition to the extensive literature exploring the empirical and theoretical properties of these models—including the discussion of the recent flattening of the Phillips Curve—former Federal Reserve Board Chair Janet Yellen and current Chair Jerome Powell have in recent speeches referenced an expectations-augmented econometric Phillips curve specification as a framework for modeling and forecasting consumer price inflation.

1 At the same time, however, recent literature on inflation forecasting has mostly emphasized simpler, often univariate, models.

In this paper, we investigate if and how additional information—additional macroeconomic variables, expert judgment, or forecast combination—can improve forecast accuracy over simple models. Our key finding is that while simple models remain generally hard to beat, careful introduction of additional information can improve forecasts, particularly in the post-crisis period starting in 2009. Notably, we find aggregating forecasts of inflation components, forecast combination, and using large information sets informing expert judgment to improve forecast accuracy at short horizons.

Our approach is informed by three recent strands of the literature on inflation forecasting. First,

Atkeson and Ohanian (

2001) and

Stock and Watson (

2007) show that while inflation has become easier to forecast overall in recent decades—in the sense of lower out-of-sample mean square errors across a variety of univariate and multivariate models mainly due to the overall lower variability of inflation—it has at the same time become more difficult to effectively incorporate information other than inflation itself in producing forecasts that improve over simple benchmark models. In particular, they note that the usefulness of Phillips curve models, in which slack can be used to predict future inflation, appears to have declined.

A second strand of the literature shows that survey forecasts have predictive power for inflation, both when included as an expectations term in Phillips curve models and when considered as direct forecasts.

Faust and Wright (

2013) distill from previous results and their own real-time forecasting exercise the following lessons: (1) Judgmental forecasts do best; (2) Good forecasts must account for a slowly varying local mean; (3) Good forecasts begin with high quality nowcasts; (4) One of the best forecasting techniques is to simply produce a smooth path between the best available nowcast (as the forecast for the first horizon) and the best available local mean (as the forecast for the last horizon).

We view these results as promising since although all of these papers emphasize the superiority of simple models, each actually incorporates more information in its forecasts than the last.

Atkeson and Ohanian (

2001) forecast inflation using only its own last four lags, while the unobserved components model with stochastic volatility model introduced by

Stock and Watson (

2007) allows for time-varying parameters in order to employ the entire history of inflation. In the language of

Faust and Wright (

2013), each of these papers presented methods for estimating a “local mean” of inflation.

Faust and Wright (

2013) then extend the local mean to make use of variables other than inflation itself, including judgmental nowcasts and long-term forecasts from surveys that potentially incorporate a large—although poorly defined—additional dataset.

A third strand of the literature explores whether forecast combination can improve inflation forecasts. Forecast combination of different forecasts of the same variable have been shown to improve over the best single forecast in certain situations (see

Hendry and Clements (

2004)). Furthermore, combining forecasts from disaggregate component models to forecast an aggregate has been found to improve over forecasts from an aggregate model under certain conditions (see, e.g.,

Lütkepohl (

1984),

Granger (

1987),

Hubrich (

2005), and

Hendry and Hubrich (

2011)).

In this paper, we build on these literatures, exploring if and how additional information should inform inflation forecasts. First, we consider incorporating additional information in the form of multivariate inflation forecasting models. We begin by adding specific macroeconomic variables explicitly to econometric models, focusing on resource utilization and inflation expectations as incorporated in an empirical Phillips curve. The economic information contained in these variables is well-defined and can be matched up to theoretical Phillips curve models. We next consider incorporating information from judgmental sources, in particular the Survey of Professional Forecasters (SPF) forecast and the Federal Reserve Board staff forecast presented in the Tealbook (prior to 2010 referred to as Greenbook). The economic information contained in these forecasts is less-well-defined, since it captures both subjective judgment and an unknown range of models and data from a potentially large number of unknown sources.

Second, we investigate incorporating additional information in the form of multiple econometric models, considering both the combination of forecasts from multiple models of overall price inflation and the construction of overall price inflation forecasts by aggregating forecasts of price subcomponents. Specifically, we investigate whether a Phillips Curve specification for overall price inflation improves over forecasting core, energy, and food price inflation separately and then aggregating those forecasts. We also compare this with forecast combination of different models for overall price inflation using different weighting schemes.

Previous literature has mainly focused on aggregation of forecasts from the same model or model class (see, for example,

Hubrich (

2005),

Hendry and Hubrich (

2011), and

Stock and Watson (

2016)). In contrast, we investigate whether forecast performance for US price inflation can be improved by aggregating forecasts with different specifications for each underlying inflation component, allowing us to capture particular time series characteristics of each series. In addition, we investigate whether combining different forecasts of total US price inflation improves forecast performance over the single best forecast. This is particularly relevant in times of economic uncertainty, since forecast combination can potentially be a tool to improve forecast performance in the presence of large changes such as the global financial crisis.

Hubrich and Skudelny (

2017) find that for Euro area inflation, forecast combination helps to robustify the forecast, since forecast combination for euro area inflation helps improving over the worst forecasts.

To address these questions, we perform a real-time forecasting exercise, focusing on price inflation as measured by the personal consumption expenditures (PCE) chain-type price index employed by the Federal Reserve to evaluate the inflation objective. We extend the real-time forecast evaluation by

Faust and Wright (

2013) in a number of respects: we explicitly compare different forecast combination and aggregation strategies and include in this analysis SPF and Tealbook forecasts. We also include more recent sample periods and we focus on PCE price inflation (as opposed to other inflation measures such as those based on the GDP deflator or the consumer price index) motivated by its importance for monetary policy in the US. We explore which additional pieces of information were most useful before, during, and after the global financial crisis, and so shed light on which methods are most promising now for constructing and robustifying inflation forecasts. This is particularly relevant in light of the surprising behavior of inflation during the recent expansion and the additional uncertainty that has been introduced by the current, pandemic-induced, economic crisis.

2. Data

Our forecasting exercise focuses on U.S. inflation, measured by the quarter-over-quarter percent change in the personal consumption expenditures (PCE) chain-type price index produced by the Bureau of Economic Analysis (BEA). PCE prices are particularly significant from the perspective of monetary policy, because the longer-run inflation objective of the Federal Open Markets Committee, first adopted in January 2012 and later revised in August 2020, is stated in terms of PCE inflation.

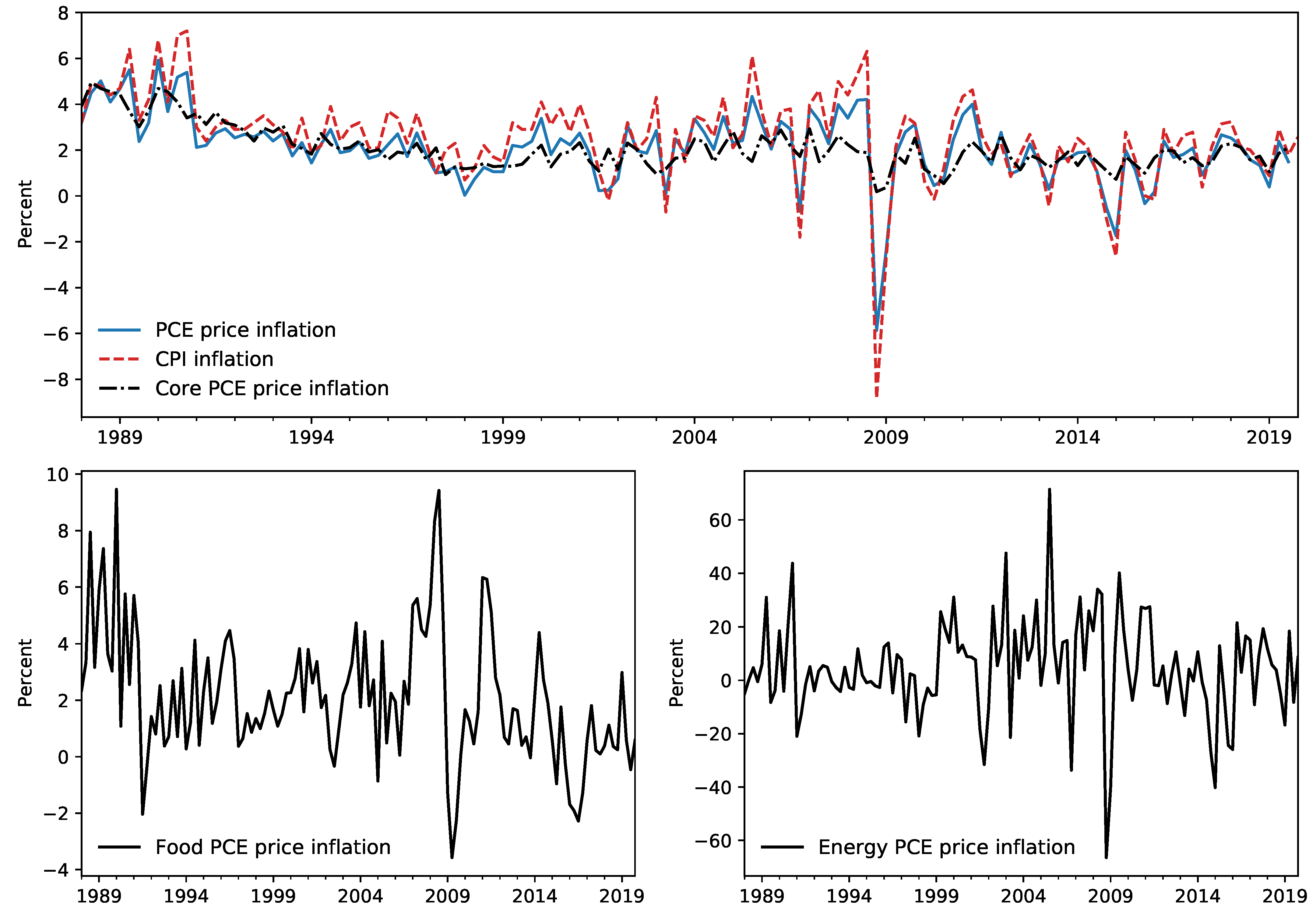

2 Nonetheless, other measures of inflation remain important, both as economic indicators and for our exercise here. In

Figure 2, we show the evolution of several of these measures.

The primary alternative measure of U.S. consumer price inflation is based on the Consumer Price Index (CPI) published by the Bureau of Labor Statistics (BLS). While this measure differs from the PCE price index in several important ways, it has historically been an important measure for monetary policymakers.

3,4 Moreover, while attention has recently shifted to the PCE price index, its construction by the BEA largely relies on source data from disaggregate CPI series collected by the BLS. This fact has implications for our forecasting exercise, because it implies that monthly CPI releases provide information about quarterly PCE price inflation that can be exploited in forecasting.

5,6While the overall PCE price index provides the broadest measure of consumer prices, there is also considerable interest in core inflation measures, which exclude the volatile food and energy subcategories.

7 One commonly cited benefit of core measures of inflation is that, since they exclude volatile components, they are better predictors of future inflation. In our exercise, we include a model that aims to take advantage of this by first separately producing forecasts for core, food, and energy prices, and then aggregating to produce a forecast of overall PCE price inflation.

While several of our forecasting models are designed to predict future inflation using only consumer price data, many of the forecasts that we consider make use of other macroeconomic variables, including data on oil prices, prices of imported goods, inflation expectations, and real economic activity. These variables are described in more detail below, when we introduce our forecasting models. We also consider real-time judgmental inflation forecasts produced by the Survey of Professional Forecasters and the Federal Reserve Board, and this introduces several issues related to forecast timing and data availability, which we discuss now.

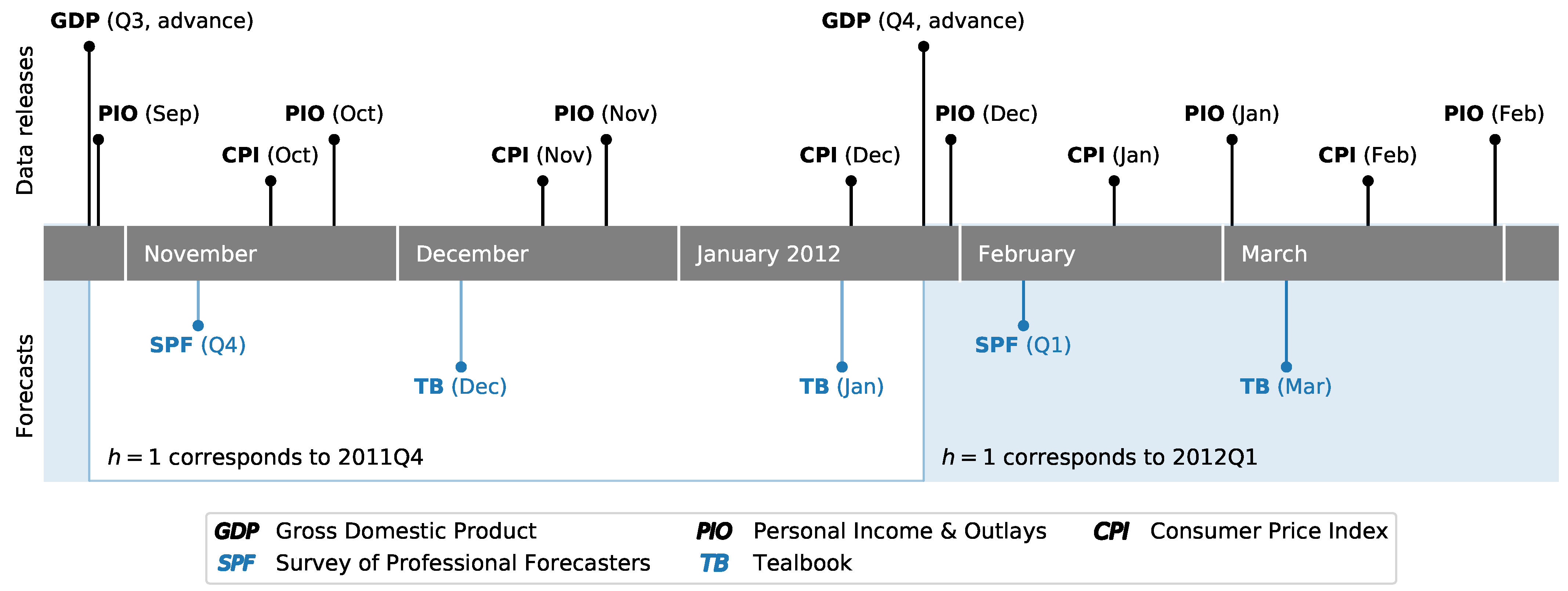

The Survey of Professional Forecasters (SPF) is a quarterly survey published by the Federal Reserve Bank of Philadelphia, with timing based around the release schedule for Gross Domestic Product (GDP) and quarterly PCE prices, which are both part of the National Income and Product Accounts (NIPA).

8 In particular, surveys are typically sent to and due to be returned by respondents early in the second month of a given quarter. This is timed to occur shortly after the first—or “advance”—release of the NIPA data for the previous quarter. This timing is illustrated in

Figure 3, which shows the evolution of data releases and judgmental forecasts for the end of 2011 and beginning of 2012. For example, the advance release of 2011Q3 NIPA data, annotated as “GDP (Q3, advance)”, occurred on October 27, 2011, and survey responses for the fourth-quarter SPF were due on November 8.

The Federal Reserve Board, meanwhile, produces inflation forecasts as part of the “Tealbook” forecasts that are prepared by staff economists in advance of each of eight annually scheduled Federal Open Markets Committee meetings. While this typically results in two Tealbook forecasts per quarter, they are not synchronized specifically to NIPA data releases, and so timing and data availability can vary between Tealbooks. For example, the advance release of 2011Q4 GDP occurred after the publication of both the December 2011 and January 2012 Tealbooks, both of which were published well after fourth-quarter SPF. Archived Tealbook data is made available by the Federal Reserve Bank of Philadelphia Real-Time Data Research center.

In addition to the quarterly data released as part of the NIPAs, a monthly PCE price index is available as part of the BEA’s Personal Income and Outlays (PIO) release, and the CPI is similarly released monthly by the BLS. Depending on the timing of the SPF and Tealbook releases, this can introduce a difference in the dataset available when these different forecasts were produced. For example,

Figure 3 shows that between the 2011Q4 SPF due date and the December 2011 Tealbook, price data for October—the first month of the fourth quarter—was released for both the CPI and PCE measures. More generally, high frequency data that may be relevant for inflation forecasting—such as daily data on oil and gasoline prices—accrues over the course of each quarter. As a result, even though each quarterly PCE release corresponds to one SPF forecast and (typically) two Tealbook forecasts, there is a clear difference in the available information set at the time each forecast was produced. In order to alleviate this difference as much as possible, in our exercise we only consider the forecasts produced for the first Tealbook following each advance GDP release. In the example from

Figure 3, we compare the 2011Q4 SPF forecasts against those from the December 2011 Tealbook, and discard those from the January 2012 Tealbook, since the latter incorporates even more additional updated information in comparison to the SPF, while the former Tealbook has an information set relatively more comparable to the SPF.

The model-based forecasts that we consider operate only on a quarterly basis, and as such they do not incorporate monthly-frequency data on prices. To fix the timing, we assume that these models were run on the day of the included Tealbook forecast, although since they are estimated only using data through the previous quarter, the specific timing within the quarter matters only to a little.

9 Specifically, in the example from

Figure 3, the model-based forecasts that we compare against the 2011Q4 SPF and the December 2011 Tealbook only include data through 2011Q3, based on the vintage available at the time of the December Tealbook’s publication.

3. Forecasting Methodology

Our focus is primarily on the root mean squared error (RMSE) of out-of-sample forecasts for quarterly inflation measured by the PCE price index. Our results are usually shown relative to a benchmark model, where a relative RMSE number less than one indicates improvement compared to the benchmark. Because our source data—both for PCE prices and many of the other variables we use, such as GDP—is subject to potentially large revisions, a real-time forecasting exercise is necessary.

10The data we use is drawn from archived Tealbook databases underlying publicly available Tealbook forecasts and from Alfred (the real-time data repository maintained by the Federal Reserve Bank of St. Louis). The timing of forecasts is as follows: once PCE prices are published through period t, we produce forecasts for each period up to two years ahead ( quarters). The two judgmental forecasts that we consider, however, may already have additional information about the quarter , and so those forecasts at the horizon are more accurately described as nowcasts.

To conduct the out-of-sample forecast evaluation, we estimate all models based on a recursively expanding sample that begins in 1988. As described above, to fix timing we define each forecasting vintage by associating it with a specific Tealbook publication. The first Tealbook in our sample was produced in September 1999, at which time published PCE prices ran through 1999Q2, and so the forecast from our first vintage is for the period 1999Q3. The final vintage of our dataset includes published PCE prices through 2019Q3, so that the final forecast is for the period 2019Q4. This vintage corresponds to the December 2019 Tealbook, although due to the five-year embargo period on Tealbooks, the Tealbook forecasts for that vintage are not yet publicly available. Instead, the final set of Tealbook forecasts that we include in our analysis comes from the December 2014 Tealbook, for which the forecast corresponds to 2014Q4. For this reason, we report results that include Tealbook forecasts but end with the December 2014 Tealbook vintage separately from results that extend through the December 2019 vintage but exclude Tealbook forecasts.

Since PCE price data are revised, there is no single source of true data against which to compare our forecasts. We follow

Tulip (

2009) and

Faust and Wright (

2013) in using PCE price inflation as measured in the release two quarters after the reference quarter as the true value from which forecast errors are constructed.

3.1. Model-Based Forecasts

We begin our real-time exercise by constructing forecasts of inflation, denoted

, from parametric econometric models. These provide an explicit specification of both included variables and inflation dynamics. Since there are an unlimited number of potential forecasting models to consider, we focus our attention on the classes of models that (a) have been shown to produce competitive inflation forecasts in previous studies, (b) are parsimonious, and (c) most directly speak to the role of additional information in inflation forecasting.

11 We present a unifying framework in Equation (

4) after introducing the first set of different models employed in this paper.

3.1.1. Autoregressive Model (AR)

The first model we consider has a very simple specification, in which the inflation forecasts

are produced from the AR(p) model

We then iteratively apply a one-step-ahead forecast

h times to construct the desired forecast

. The lag order that we present results for,

, was selected using the Bayes Information Criteria over the largest sample period.

12 This model is univariate in inflation forecasting, and so includes the least additional information of all models that we consider.

13 3.1.2. Inflation Gap Model (AR-Gap)

A useful way to incorporate some additional information while maintaining a parsimonious econometric model is to model inflation as exhibiting short-term fluctuations around some underlying trend, denoted

. This requires specification of the inflation trend and an econometric model for modeling the dynamics of the “inflation gap”. The inflation gap, denoted

, is the difference between inflation and its trend. Here, we use the Survey of Professional Forecasters forecast of average PCE inflation over the next 10 years as a proxy for trend inflation, while we model the inflation gap as an autoregressive process. Relative to the simpler autoregressive model presented above, this model incorporates additional information from survey forecasts to help pin down the “local mean” of inflation. Specifically, the forecasting model is

We then proceed as in

Faust and Wright (

2013) by taking the predictions of the gap—the forecasts

—and adding back the final observation of the trend to get the implied prediction of inflation. We present results for lag order

, the same as for the simple autoregression model above.

3.1.3. Phillips Curve Models

We now explicitly incorporate into our forecasts additional information from macroeconomic variables other than inflation, in the form of an empirical Phillips curve model. This class of models is appealing in that it uses macroeconomic variables to forecast inflation and has links to theoretical models of price-setting. The general form of the Phillips curve models that we consider is

where

is an estimate of the inflation trend at time

t,

is a measure of economic slack at time

t, and

is a vector of controls. By varying the specifications of the inflation trend, economic slack, and the vector of controls, we can accommodate a wide range of additional information.

As in the construction of the inflation gap model above, we model the inflation trend using long-run inflation expectations from the Survey of Professional Forecasters. Economic slack is modeled as the distance between the unemployment rate and an estimate of the natural rate of unemployment. For all forecasts made through December 2014, we use the Tealbook estimate of the natural rate of unemployment, while for the period January 2015 to the present we use the estimate of the natural rate of unemployment produced by the Congressional Budget Office, since Tealbook estimates from this latter period have not yet been made public. The vector of controls that we include contains relative core import price inflation and relative energy price inflation.

14 Note that in this model relative import price inflation captures the impact of international inflation developments on US inflation.

15Forecasts of based on this equation require forecasts of the right-hand-side variables. For results that we report, we apply a random walk forecast for the inflation trend and the forecast from an AR(1) model for the other variables.

As a

unifying conceptual framework to think about how the different forecasting models use additional information to forecast inflation, one can consider the following extended version of the Phillips curve model that nests the AR, AR-Gap, and standard Phillips curve models described in the paragraphs above:

When , then the AR model is obtained, while when , and we obtain the AR-Gap model that we will use as our benchmark forecast model in the forecast comparison. Finally, if then we obtain the Phillips curve model discussed above.

3.1.4. Vector Autoregressive Model (VAR)

We also consider forecasts from a Vector Autoregressive Model (VAR) that can be thought of as another extension of the unifying Phillips-Curve framework discussed where the right-hand side variables of the single-equation Phillips curve model are included as endogenous variables rather than conditioning on them.

To facilitate the comparison, we use the same variables as we did in our Phillips curve model. As in the simple univariate autoregression, we estimate the parameters of the vector autoregression and then iteratively apply the one-step-ahead forecast h times. We present results for the lag order 1 selected using the Bayes Information Criteria over the largest sample period.

3.2. Aggregating Forecasts of Disaggregate Inflation

As another extension of the unifying Phillips-Curve framework outlined above, we also produce forecasts of the primary disaggregate series that make up total PCE price inflation and then combine them as a weighted average to forecast the aggregate. Here, our primary focus is on including additional information in the model specification, and we are able to allow different price subcomponents to depend on different macroeconomic variables and to exhibit different dynamics. In particular, we separately make forecasts for core PCE price inflation, food PCE price inflation, and energy PCE price inflation, and then combine them using their relative shares in PCE as weights.

The forecast of core PCE price inflation is based on a Phillips curve model similar to the empirical Phillips Curve model described above. The forecast for food PCE price inflation is also based on a similar Phillips curve model, except that in this case, no control variables are included so that the term with is dropped. Energy PCE price inflation is modeled as , where is oil price inflation. Forecasts are then produced by assuming that oil price inflation follows a random walk. This aggregated forecast approach potentially improves the accuracy of forecasting the aggregate by a reduction in the estimation uncertainty and misspecification.

3.3. Judgmental Forecasts

We include in our forecasting exercise two sets of forecasts that are not based on an explicit forecasting model. Relative to model-based forecasts, these judgmental forecasts are likely based on a much larger information set.

3.3.1. Survey of Professional Forecasters (SPF)

First, we include forecasts based on responses to the Survey of Professional Forecasters (SPF). Since 2007, the SPF has included forecasts of total PCE price inflation at quarterly horizons

as well a forecast of average annual inflation over the next ten years, intended to capture expected long-run inflation. To construct forecasts for the horizons

, we follow an approach along the lines of that suggested by

Faust and Wright (

2013) and linearly interpolate between a near-term forecast and a long-term forecast.

16 In particular, we set the

forecast equal to the SPF forecast for long-run inflation and then linearly interpolate between the

and

values. In all cases, we use median SPF forecasts. Prior to 2007, the SPF only produced forecasts of consumer price index (CPI) inflation, and so we must use implied forecasts for PCE. As noted earlier, differences in the construction of these two price indices tend to lend an upwards bias to CPI inflation compared to PCE inflation.

17 To impute forecasts for PCE price inflation prior to 2007, at each period we start with the CPI forecast provided by the SPF and then subtract the historical wedge (as would have been computed at that time) between published CPI inflation and published PCE price inflation.

3.3.2. Tealbook Forecasts of Federal Reserve Board Staff

Second, we include the forecasts provided in each Tealbook for total PCE price inflation, which are judgmental forecasts produced by staff of the Federal Reserve Board of Governors. Due to the 5-year lag between finalizing the Tealbook and its public release, Federal Reserve Board staff forecasts are only available prior to 2015, and our primary exercise therefore only considers forecasts made through the end of 2014. A secondary exercise expands the sample to include forecasts made through the end of 2019, although it excludes Tealbook forecasts. It should be noted that the Tealbook forecast takes into account that the US is an open economy given the staff discussions and taking on board conditional assumptions, for instance about oil prices and trade.

3.4. Forecast Combination

As a final part of our analysis we include a forecast for PCE price inflation generated by taking a weighted average of the forecasts from the models described above, except excluding the Tealbook forecasts. We consider two methods for generating the forecast combination weights: in the first case (referred to below as “simple” combination), the weights are set equal for each model, while in the second case (referred to below as the “MSE” combination) the weight for a given model at the time

t is set to be the inverse of the root mean squared error generated by the model over the preceding 8 quarters. Combining different forecast of the same variable can improve over the best forecast when forecasts are biased in opposite direction.

18 Furthermore, the forecast combination method with time-varying weights helps to shed light on the time-varying relative forecast performance of the different models included in the forecast comparison.

4. Results: Forecasting US PCE Inflation in

Real Time

We begin by presenting results for our comparison of forecasts of US PCE inflation in real-time for the portion of our sample period for which public Tealbook forecasts are available, 1999Q3–2016Q3, and then discuss the pre- and post-crisis periods for that sample.

19 Our focus will be on the root mean square error (RMSE) of our forecasts relative to the AR(1) model in the inflation gap. Our RMSE evaluation is based on quarterly inflation.

20 This is also the model that

Faust and Wright (

2013) use as their benchmark, and we found that it outperforms other candidate benchmarks (such as the AR(1) model in inflation). We have also carried out Diebold-Mariano (DM) tests (see

Diebold and Mariano (

1995);

West (

1996);

Diebold (

2015)) to investigate whether the RMSE improvements over the benchmark model were significant.

21 4.1. Real-Time Analysis Including Public Tealbook Forecasts

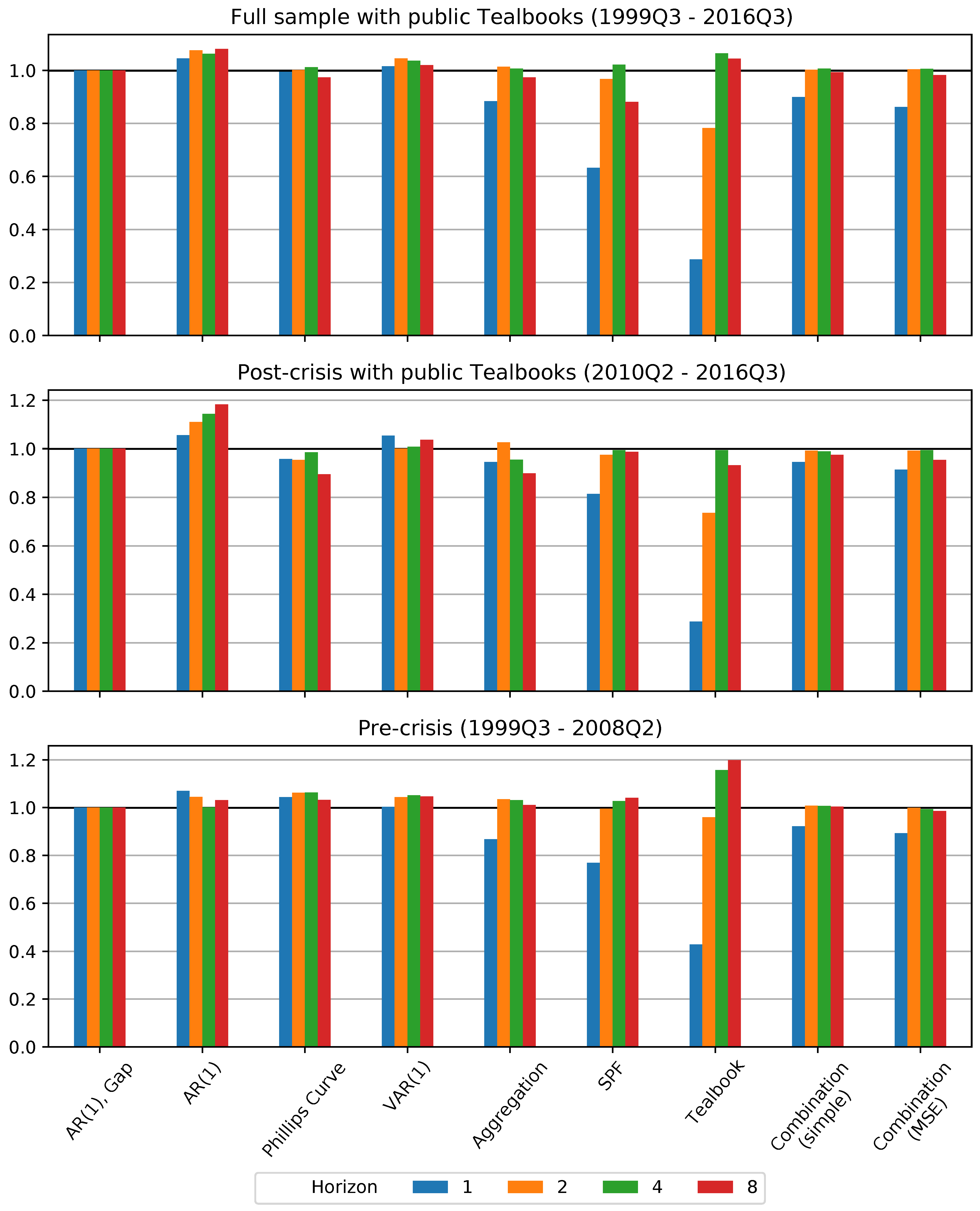

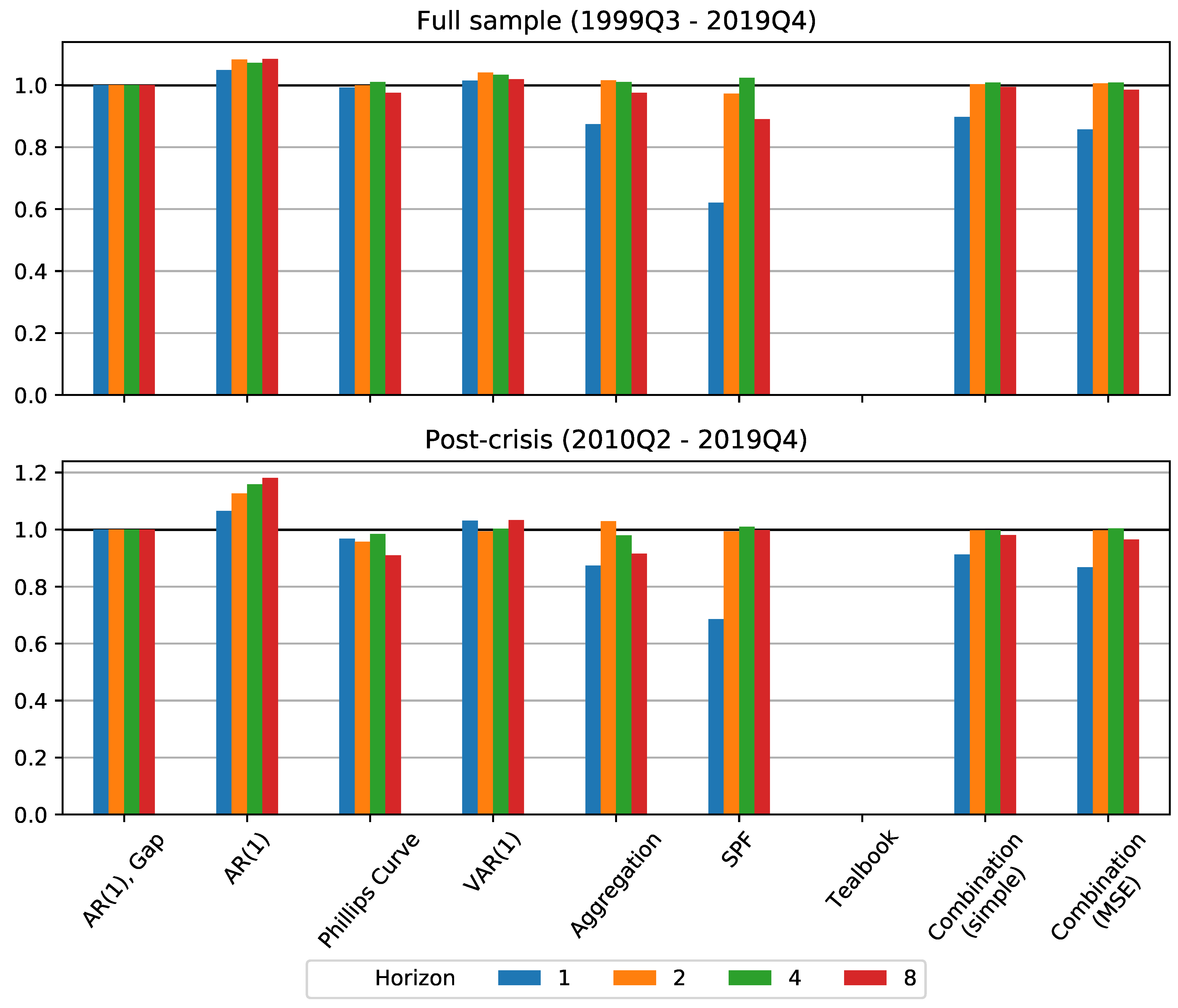

We first consider a comparison of our selected forecast models and methods for the sample period, 1999Q3–2016Q3, where our second source of judgmental forecasts—those produced by the staff of the Federal Reserve Board (FRB) and recorded in Tealbooks—is publicly available. Due to the embargo on recent Tealbooks, for the results in this section we restrict our sample so that the final forecasts were produced in 2014Q4. The relative RMSE results are shown in

Figure 4, and the relevant DM test results are presented in

Table 1.

A key takeaway over the full sample for which the Tealbook is available is that the autoregressive model in the inflation gap is generally difficult to improve upon except for horizon . Indeed, the model-based forecasts that incorporate specific additional macroeconomic variables—the Phillips curve and vector autoregression—do no better than this benchmark, except for the Phillips curve model during the post-crisis period. However, improvements from some of the forecasting methods we employ, in particular judgmental forecasts, forecast aggregation and forecast combination, do stand out.

First, the aggregated forecast—which forecasts core, food, and energy prices separately before aggregating them to produce the total PCE price inflation forecast—is able to improve on the gap model at the horizon . This is particularly noteworthy since the other forecast methods that improve at this horizon incorporate mixed frequency data (see the discussion of the SPF, Tealbook, and combination models below), while this method does not. The improvements for the aggregated forecast are statistically significant at this horizon, according to DM test results, but not for other horizons.

Second, the SPF forecast shows a dramatic reduction in forecasting error at the horizon . As described above, SPF respondents generally produce this forecast at the beginning of the second month of the quarter being forecasted, and so it can be labeled as a nowcast. Thus, this enhanced forecasting performance reflects both the judgmental expertise of the forecasters and the fact that SPF forecasters have access to a larger information set, including some information about the quarter, when making their forecast. At a horizon of two years ahead () the SPF forecast is also superior to the benchmark, while for horizons and there is little or no improvement in SPF forecast performance over the benchmark. The improvements in forecast performance for are clearly statistically significant for the pre- and post-crisis period, and borderline significant for the full sample period according to the DM test.

Third, the forecasts produced by forecast combination methods show improvements over the benchmark. These include the SPF as one of the constituent forecasts, and their improvement relative to the benchmark at the horizon show that they are able to take advantage of SPF forecast improvements. At the same time, the combination forecasts provide a robust forecast, as they do not degrade as much as the SPF at longer horizons, and always improve over the worst models, including both the AR(1) and VAR(1). Finally, the combination incorporating time-varying weights performs slightly better than the equal-weight counterpart, suggesting that it can be useful to take into account variation over time in forecasting performance. The RMSE improvements of the combination method with time-varying weights over the benchmark model are statistically significant for both 1 quarter and 2 year horizons ( and ).

Finally, the public Tealbook forecasts by Federal Reserve staff provide substantial and statistically significant forecasting improvements at horizons

, but do not outperform the benchmark model at the longer horizons. The most conspicuous result is the performance of the nowcast (

) contained in the Tealbook, even compared to that from the Survey of Professional Forecasters. Although striking, this result likely largely reflects the fact that Federal Reserve staff nowcasts take into account a much larger information set than the models we consider, which include at most a handful of explanatory variables. Although some of this improvement is no doubt due to the use of higher-frequency variables, the Tealbook nowcasts also substantially outperform those of the Survey of Professional Forecasters, who would have had access to similar data, although as noted in

Section 2, the Tealbook forecasts have a slightly updated information compared to the SPF forecasts. Altogether, this suggests that Tealbook nowcasts provide an upper bound for forecasting improvements, and shows that even the quite-good SPF nowcasts still have room to improve.

A second notable result from our out-of-sample forecast comparison is the strong improvement of the Tealbook forecast compared to the benchmark model at the horizon

– the only forecast to outperform the benchmark at this horizon, although only statistically significant on a 15 percent significance level. One explanation for this result is, as noted by

Faust and Wright (

2013), that a good forecast for

can help improve the forecast for

. This suggests that there are gains still available in near-term inflation forecasting (even beyond those gained by using higher-frequency data to produce nowcasts), either from additional data with predictive power or from improved models.

4.2. Pre- vs. Post-Crisis Analysis Including Public Tealbooks

The global financial crisis and subsequent Great Recession substantially disrupted the US economy; this raises several questions relevant for inflation forecasting. First, forecasting errors made during the crisis—a time in which inflation was quite volatile—might be influencing our results. Second, a structural break might have occurred in inflation dynamics, so that forecasting methods or sources of information that improved forecast accuracy in comparison to the benchmark model prior to the crisis might not provide similarly accurate forecasts after the crisis. For instance, it might be argued that the slow labor market recovery following the global financial crisis (see

Figure 1) was evidence of an altered economic climate compared to the pre-crisis period, with implications for inflation. To address these issues, we consider subsample analyses of the pre- and post-crisis periods, with results shown in the middle and lower panel of

Figure 4.

Comparison of the forecasting performance in the pre- and post-crisis periods suggests that our primary qualitative results described for the full sample agree with those from the pre-crisis period, but begin to break down during the post-crisis period as many models outperform the benchmark at both short and long horizons in RMSE terms.

These results suggest that there can be room for the use of additional information in improving inflation forecasting, particularly in the post-crisis period, and especially at very short and very long horizons.

22 Moreover, we are able to find several different methods of incorporating additional information into econometric models that produce these improvements. Notably, and unlike in previous work, here we find that Phillips curve models can still improve on simple forecasting models when forecasting inflation in some situations.

To summarize the results of the sample and sub-samples that include the public Tealbook forecasts: we find that the methods that include richer information sets (compared to simple forecasts based on just one univariate or multivariate forecast model) all significantly improve over the benchmark inflation gap model for the full sample including public Tealbooks and the pre-crisis period at the shortest horizon. These models include the aggregation, the combination methods—including both the simple average and the time-varying MSE-weighted combination—as well as the judgmental forecasts—including both the SPF and Tealbook forecasts.

In the post-crisis period including public Tealbooks, we continue to find that the time-varying, MSE-weighted combination and both judgmental forecasts improve significantly over the benchmark model, while the improvements of the aggregation method and simple combination are not significant at the short horizon. Meanwhile, the aggregation forecast and the time-varying, MSE-weighted combination forecast both significantly improve over the benchmark at the 2-year horizon.

4.3. Full Sample through 2019

To investigate whether our results for the baseline sample period (the period that includes publicly available Tealbooks) also hold for an extended sample period, we compare the forecasts from the forecast models and methods other than the Tealbook for the full sample including recent history up to 2019 as well as the post-crisis period including these more recent years. The results for the full sample are shown in the upper panel of

Figure 5, while the results for the post-crisis period up to 2019 are shown in the lower panel. Results for the DM tests are shown in

Table 2.

It is noteworthy that there is little change in the relative forecast performance of the models when adding five additional years. For the full sample, the inflation gap is still difficult to improve upon, apart from the horizon where the SPF, aggregation, and combination methods provide a significantly better forecast than the benchmark model, and the horizon , where the time-varying MSE-weighted combination method improves over the benchmark. For the post crisis period through 2019 we get the same results, except that the aggregated forecast and the Phillips Curve model significantly improve over the benchmark for while the time-varying MSE-weighted combination method improvement is not signficant.

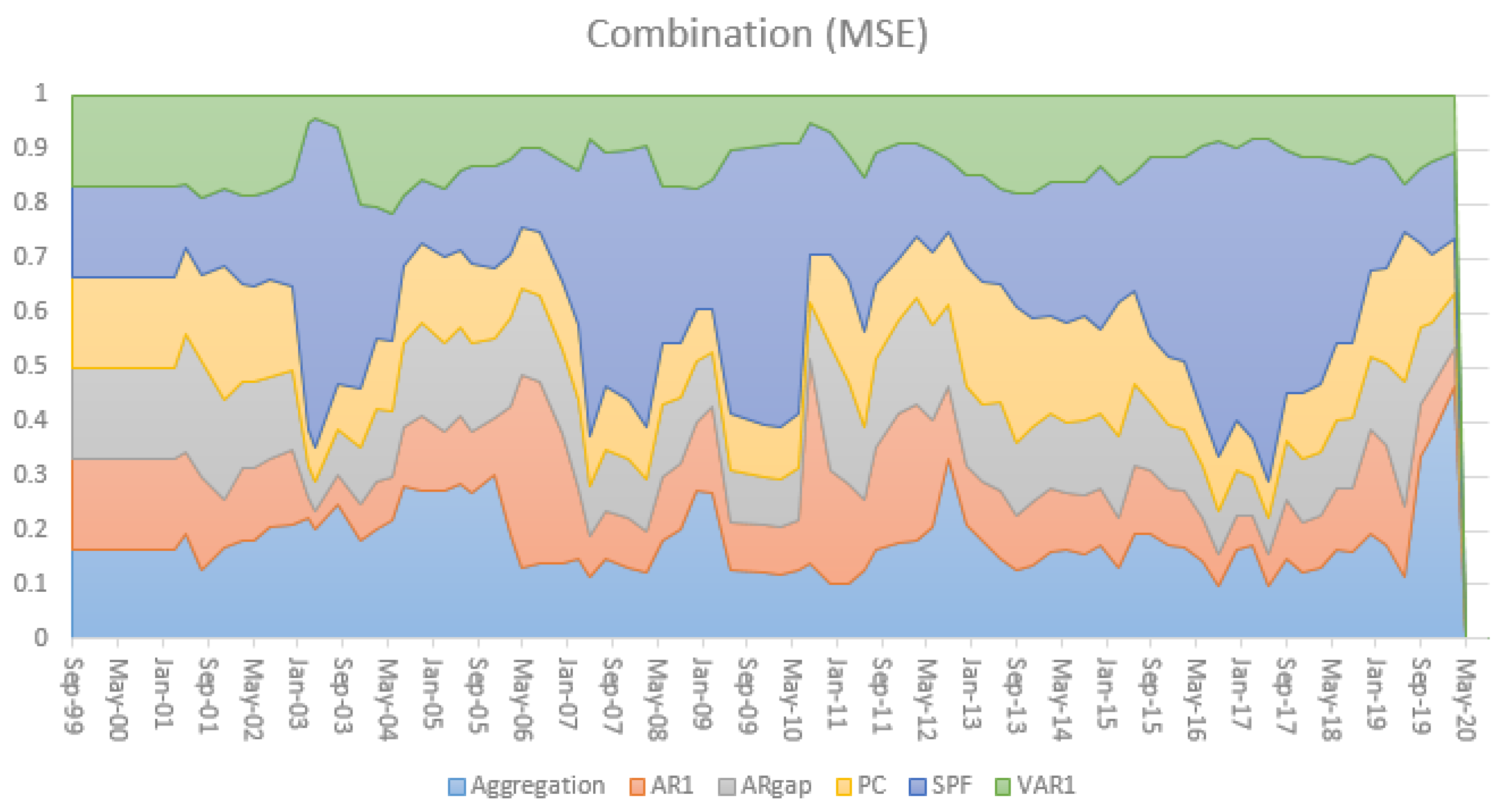

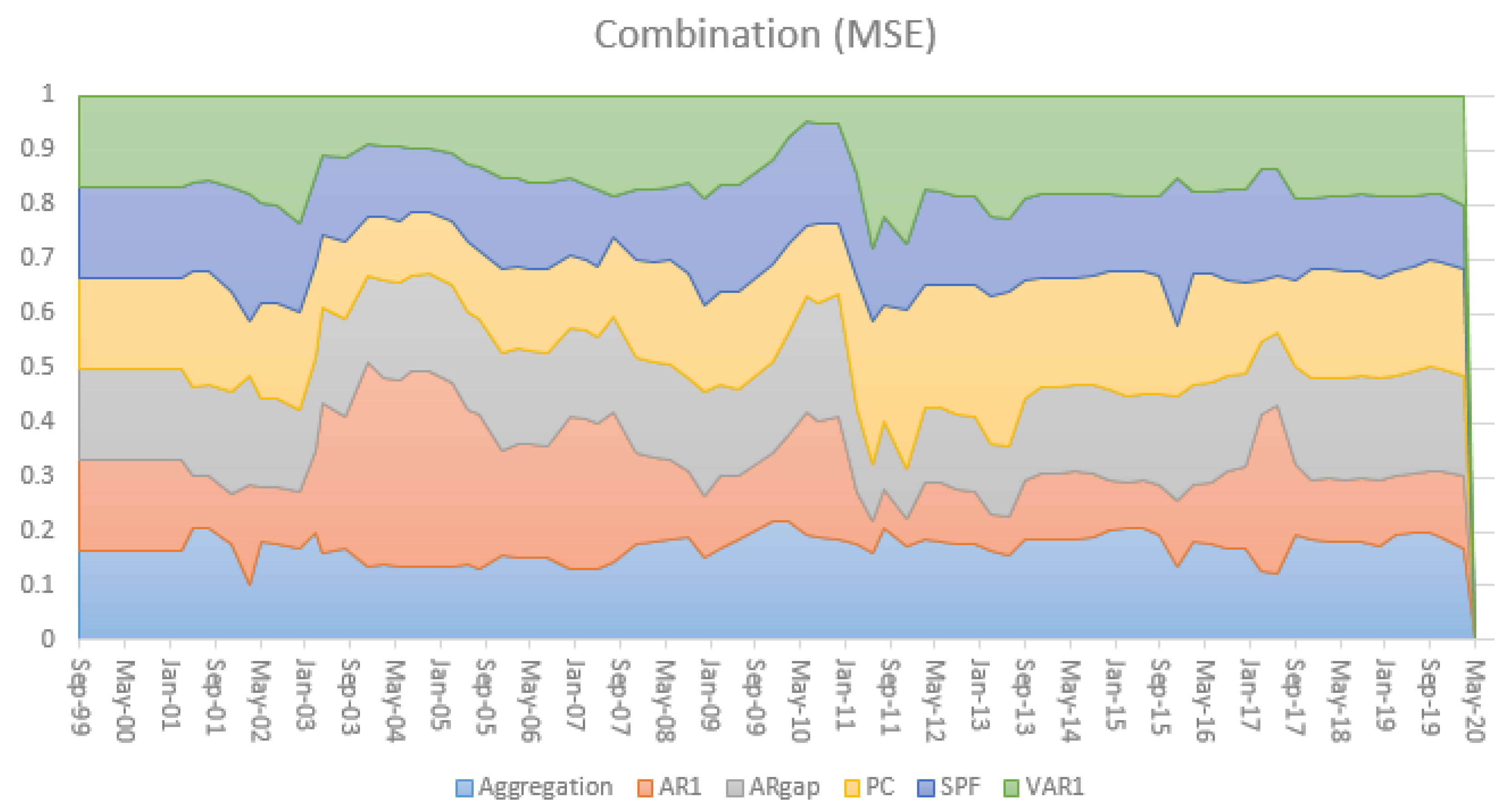

The time-varying relative performance in terms of MSE is nicely illustrated in

Figure 6 and

Figure 7 for horizons

and

, respectively. It also illustrates the relevance of the larger information set incorporated in the SPF for

in episodes with higher uncertainty and volatility, for instance during the Global Financial Crisis.

4.4. Summary of Results

Overall, there are two main takeaways that we think are worth highlighting: First, the time-varying MSE-weighted combination method consistently and significantly improves on the benchmark across different samples for horizons of 1 quarter and 8 quarters (the latter except for the post-crisis full sample period, where the aggregation method is better). Second, for the nowcast it should be noted that the SPF and Tealbook forecasts improve significantly over the benchmark, so the additional information used in those forecasts helps improving the prediction accuracy.

6. Conclusions

In this paper, we perform a real-time forecasting exercise, focusing on price inflation as measured by the personal consumption expenditures (PCE) chain-type price index that is most relevant for monetary policy decisions. We investigate whether and how additional information—additional macroeconomic variables, expert judgment, or forecast combination—can improve forecast accuracy. We analyze pre- and post-crisis performance of different inflation forecasting models as well as judgmental forecasts from the SPF and Tealbook. We show which forecasting methods are most useful before, during, and after the global financial crisis, and so aim to shed light on which methods are most promising for constructing and robustifying inflation forecasts. Our analysis is also relevant in light of the current crisis that has posed challenges for forecasting, given the unprecedented nature of the pandemic. Hence, strategies to robustify forecasts, such as the ones we have considered here, are likely to be increasingly important.

Our results provide interesting new insights for inflation forecasting from recent episodes, while some of our results confirm previous literature. Our key finding is that while simple models remain generally hard to beat, careful introduction of additional information can improve forecasts, particularly in the post-crisis period. Three types of additional information stand out as useful. First, forecast combination of different models for overall inflation are competitive and robustify against bad forecasts. Second, aggregating forecasts of inflation components can improve performance compared to forecasting the aggregate directly, suggesting that there are gains to be had from the careful specification of the dynamics of disaggregate inflation series. Finally, the large information set available to professional forecasters and the Federal Reserve Board staff can substantially improve forecasting performance, especially at short horizons, suggesting that multivariate models, including those capable of handling large data sets, can play an important role in inflation forecasting.

-4.5cm0cm [custom]