1. Introduction

One particular interest in remote sensing is the 3D reconstruction of urban areas for applications such as 3D city modeling and urban and crisis management. Buildings are among the most important objects in urban scenes and are modeled for purposes such as simulating air pollution, estimating energy consumption, and detecting urban heat islands [1]. The OGC City Geography Markup Language (CityGML) standard defines different levels of building modeling, which are summarized in [2].

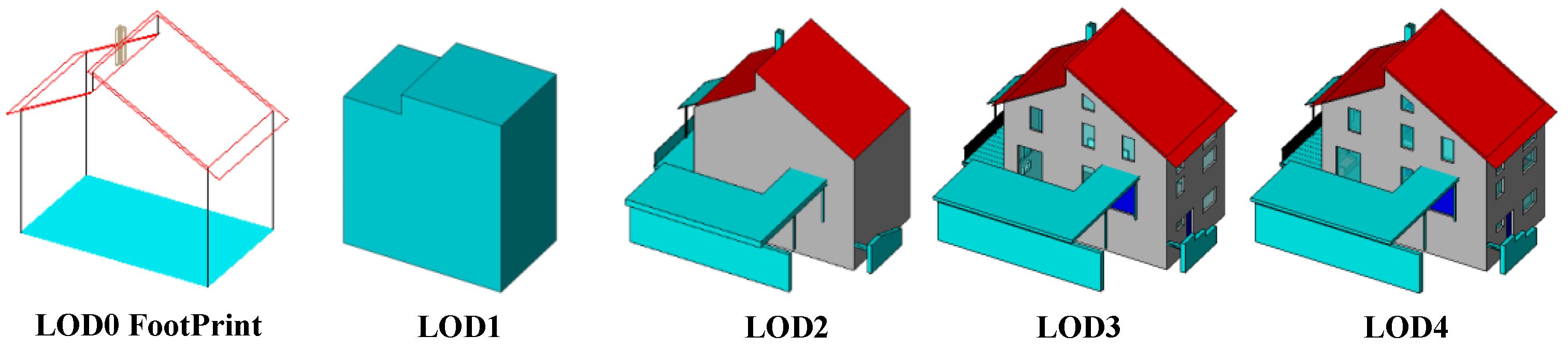

Figure 1 displays the levels of detail (LODs) defined in the CityGML standard. As shown in this figure, the lowest level is LOD1, which describes buildings as block models with a flat roof structure and provides the coarsest volumetric representation of buildings [3]. LOD1 models are therefore frequently produced by extruding a building footprint to a height provided by a separate source [4]. The next level is LOD2, which represents building shapes in more detail and therefore demands higher-resolution data than LOD1. Comprehensive technical information about the LOD variants of a 3D building model can be found in [5]. In many cases, the building height information can be obtained from remote sensing data sources such as airborne laser scanning [6], high-resolution optical stereo imagery [7], or digital elevation models (DEMs) produced by synthetic aperture radar (SAR) interferometry [8]. Other sources for LOD modeling are described in [9].
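To make the extrusion idea concrete, the following is a minimal sketch of how a footprint polygon and a single height value could be turned into a flat-roofed prism. It is only an illustration under stated assumptions: the function names `extrude_footprint` and `prism_volume`, the vertex and face layout, and the example coordinates are hypothetical and not taken from the referenced works.

```python
from typing import List, Sequence, Tuple

Point2D = Tuple[float, float]
Point3D = Tuple[float, float, float]


def extrude_footprint(
    footprint: Sequence[Point2D], ground_z: float, roof_z: float
) -> Tuple[List[Point3D], List[List[int]]]:
    """Extrude a 2D footprint (no repeated closing vertex) into a prism.

    Returns (vertices, faces); each face is a list of vertex indices.
    """
    n = len(footprint)
    if n < 3:
        raise ValueError("a footprint needs at least three vertices")
    if roof_z <= ground_z:
        raise ValueError("roof height must lie above ground height")

    bottom = [(x, y, ground_z) for x, y in footprint]
    top = [(x, y, roof_z) for x, y in footprint]
    vertices = bottom + top

    faces = [list(range(n - 1, -1, -1))]   # floor, reversed vertex order
    faces.append(list(range(n, 2 * n)))    # flat roof
    for i in range(n):                     # one vertical wall per footprint edge
        j = (i + 1) % n
        faces.append([i, j, n + j, n + i])
    return vertices, faces


def prism_volume(footprint: Sequence[Point2D], ground_z: float, roof_z: float) -> float:
    """Volume of the prism: footprint area (shoelace formula) times height."""
    n = len(footprint)
    twice_area = 0.0
    for i in range(n):
        x1, y1 = footprint[i]
        x2, y2 = footprint[(i + 1) % n]
        twice_area += x1 * y2 - x2 * y1
    return abs(twice_area) / 2.0 * (roof_z - ground_z)


# Hypothetical 10 m x 5 m footprint extruded from 0 m to 12 m.
rectangle = [(0.0, 0.0), (10.0, 0.0), (10.0, 5.0), (0.0, 5.0)]
vertices, faces = extrude_footprint(rectangle, ground_z=0.0, roof_z=12.0)
print(len(vertices), len(faces), prism_volume(rectangle, 0.0, 12.0))
```

The single `roof_z` value is what makes the result a block model: all roof detail is collapsed into one height per footprint.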

A special interest lies in automatically generating LOD1 building models for extensive areas. While height information from airborne LiDAR data leads to highly accurate LOD1 representations of buildings [11,12], producing models that cover wide areas is computationally expensive. In addition, costly LiDAR data are often not available for extensive areas. On the other hand, several investigations illustrate the possibility of using other remote sensing data types for 3D building reconstruction over wide areas [13,14]. For example, the generation of LOD1 3D building models from Cartosat-1 and Ikonos DEMs has been investigated in [15]. In another study, Marconcini et al. proposed a method for building height estimation from TanDEM-X data [16]. The use of open DEMs such as SRTM for 3D reconstruction has been evaluated in several studies [17,18,19], which concluded that SRTM elevation data can be used for recognizing tall buildings. In a recent investigation, Misra et al. compared global height data sources such as SRTM, ASTER, AW3D, and TanDEM-X for digital building height model generation [20].

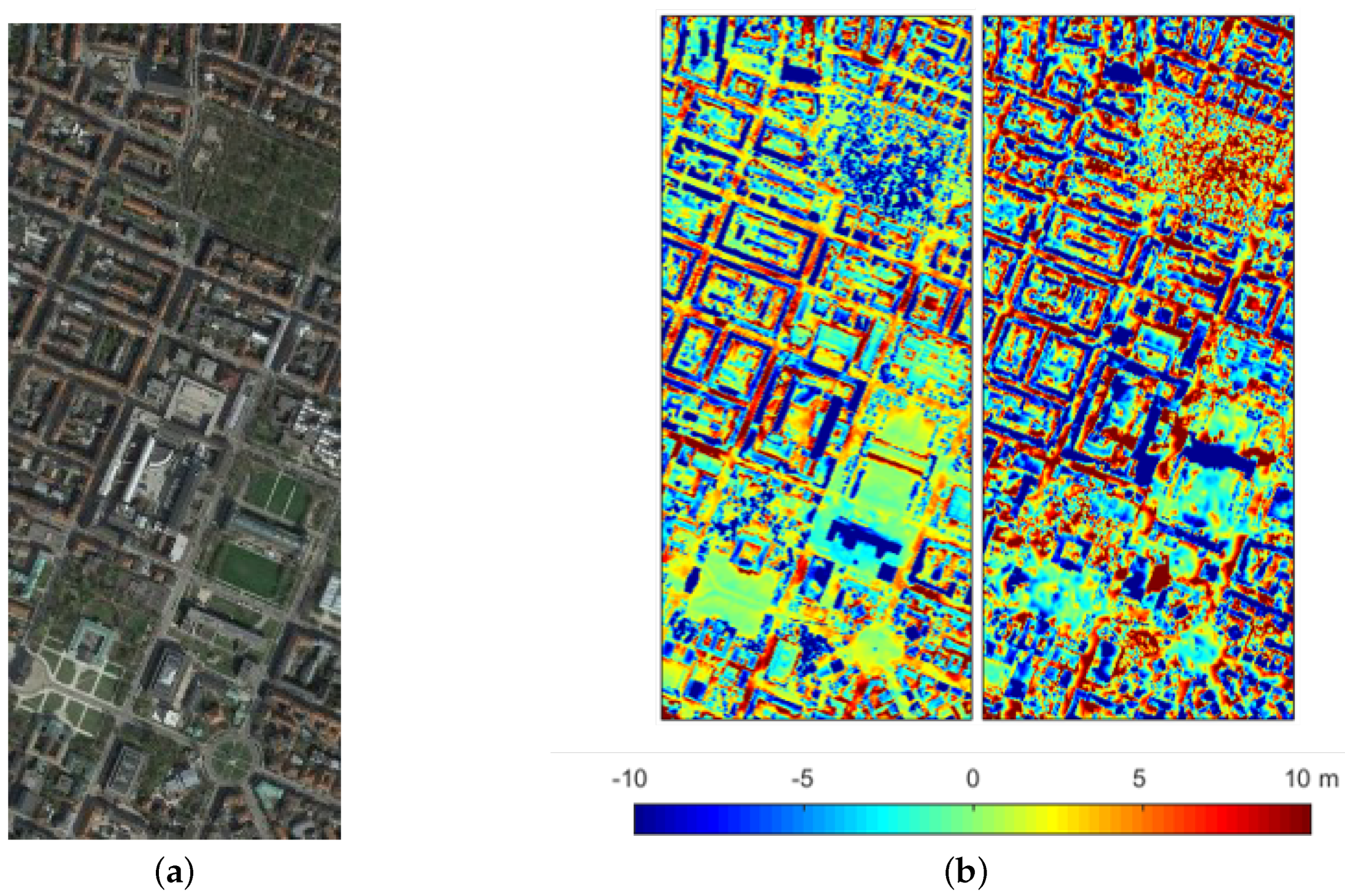

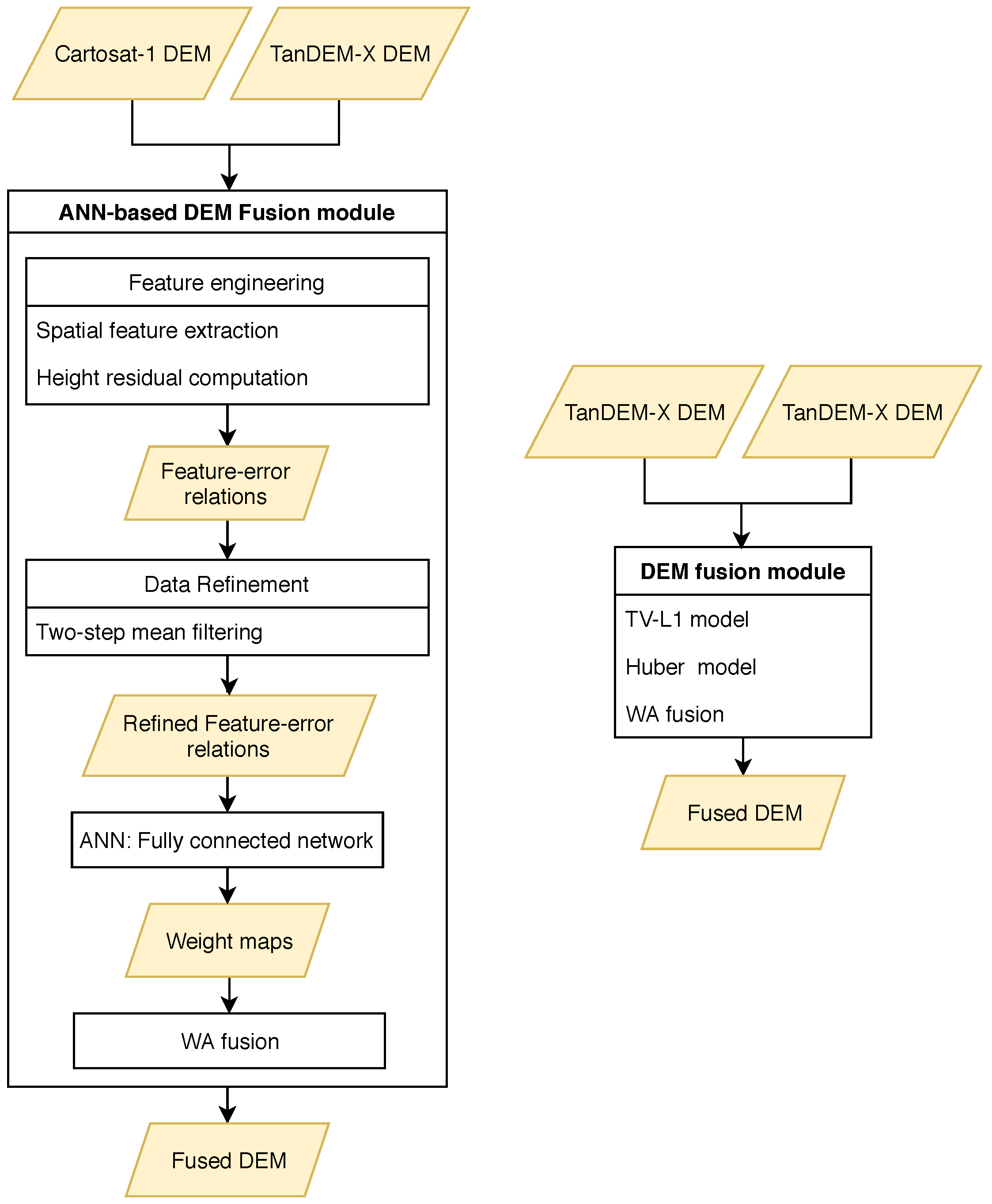

The main objective of this paper is to investigate the possibility of LOD1-based 3D building modeling from different remote sensing data sources that can be applied efficiently to wide areas. Since each remote sensing data source is acquired by a sensor with specific properties, multi-sensor data fusion techniques can ultimately provide high-quality geodata for 3D reconstruction by integrating the sensors’ strengths and mitigating their drawbacks [21]. For that purpose, height information is extracted from different sources: medium-resolution DEMs derived from optical imagery, such as the Cartosat-1 DEM, and interferometric DEMs generated from bistatic TanDEM-X acquisitions. Because of the limitations and specific properties of those DEMs, state-of-the-art DEM fusion techniques are used to improve the height accuracy. More details on those techniques and the logic behind the fusion are given in the respective sections.

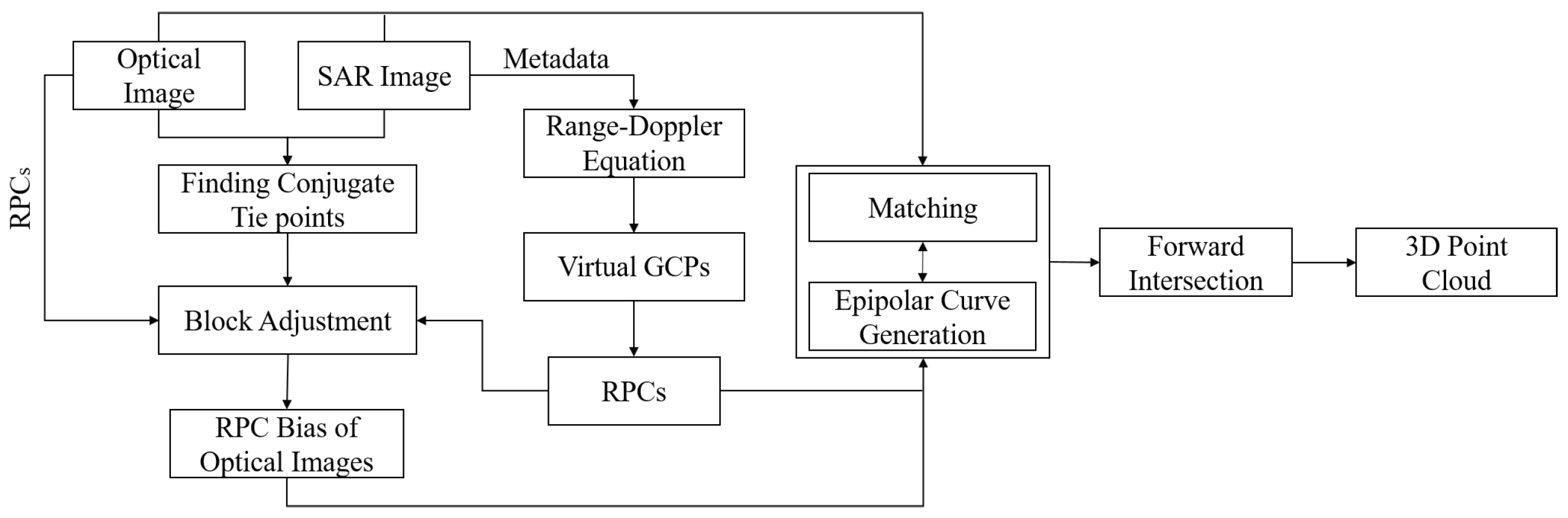

In another experiment, the potential of using heights from SAR-optical stereogrammetry for 3D building reconstruction is investigated. Given the growing archive of very high-resolution SAR and optical imagery, a framework that takes advantage of both can provide a great opportunity to produce 3D spatial information over urban areas. Besides the globally available DEMs derived from optical and SAR remote sensing, this information can potentially also be employed for producing 3D building models at LOD1.

Besides height data, building outlines are needed for LOD1 modeling, since the aforementioned height sources are not detailed enough to determine accurate building outlines reliably. We therefore use OpenStreetMap (OSM), a form of volunteered geographic information (VGI) that is likewise available with global coverage. In this paper, we evaluate the potential of 3D building reconstruction from building footprints provided by OSM and heights derived by multi-sensor remote sensing data fusion. Since the study area in this research is flat, we assume a constant ground height when generating the building models.

In Section 2, different fusion techniques used for height derivation over urban areas are summarized. It includes three fusion experiments: TanDEM-X and Cartosat-1 DEM fusion (Section 2.1), multiple TanDEM-X raw DEM fusion (Section 2.2), and SAR-optical stereogrammetry for 3D urban reconstruction (Section 2.3). After that, a simple procedure for LOD1 building model reconstruction from the multi-sensor-fusion-derived heights and OSM building footprints is presented in Section 3. The properties of the applied data and the study area are described in Section 4, including a summary of the benefits of multi-sensor DEM fusion and SAR-optical stereogrammetry. The outputs and results of LOD1 building model reconstruction using both VGI and different remote-sensing-derived geodata are provided in Section 5. Finally, the potential of LOD1 3D reconstruction using the mentioned data sources, as well as challenges and open issues, are discussed in Section 6.

3. LOD1 Building Model Generation

The heights output by the different fusion approaches are then used for 3D building modeling and, finally, prismatic model generation. Due to the medium resolution of the input DEMs, only LOD1 models can be reconstructed from those heights; the resolutions of the DEMs are also insufficient for detecting building outlines. As shown in Section 4.3, the point cloud resulting from SAR-optical stereogrammetry is partially sparse, and consequently building outlines cannot be recognized from it either. One popular option is to exploit the building footprint layer provided by OpenStreetMap (OSM). The height of each building outline can then be derived from either the fused DEMs or the point cloud obtained by SAR-optical stereogrammetry. Technically, this can be realized in two steps. The first step is to classify the heights into those located inside and those located outside building outlines; only points within building outlines are kept, while the remaining points are discarded. In the second step, each remaining height is assigned the ID of the building in which it is located, which facilitates joining the building footprint layer to the heights.
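The two steps can be sketched as follows. This is a minimal illustration, assuming planar coordinates, simple (hole-free) footprint polygons, and a standard ray-casting point-in-polygon test; the function names, the building IDs, and the example data are hypothetical, and a production workflow would typically rely on a GIS library with a spatial index instead of the nested loop shown here.

```python
from typing import Dict, List, Sequence, Tuple

Point2D = Tuple[float, float]
HeightPoint = Tuple[float, float, float]  # (x, y, height)


def point_in_polygon(x: float, y: float, polygon: Sequence[Point2D]) -> bool:
    """Ray-casting test: count how many edges a ray towards +x crosses."""
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        if (y1 > y) != (y2 > y):  # edge straddles the horizontal line through y
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def assign_heights_to_buildings(
    points: Sequence[HeightPoint], footprints: Dict[str, Sequence[Point2D]]
) -> Dict[str, List[float]]:
    """Keep only heights inside a footprint and group them by building ID."""
    grouped: Dict[str, List[float]] = {bid: [] for bid in footprints}
    for x, y, z in points:
        for bid, polygon in footprints.items():
            if point_in_polygon(x, y, polygon):
                grouped[bid].append(z)
                break  # a point is assigned to at most one building
        # points that fall in no footprint are discarded
    return grouped


# Hypothetical footprints and height samples.
footprints = {
    "A": [(0.0, 0.0), (10.0, 0.0), (10.0, 10.0), (0.0, 10.0)],
    "B": [(20.0, 0.0), (30.0, 0.0), (30.0, 10.0), (20.0, 10.0)],
}
points = [(5.0, 5.0, 12.0), (25.0, 5.0, 20.0), (15.0, 5.0, 2.0), (1.0, 1.0, 11.0)]
print(assign_heights_to_buildings(points, footprints))
```

Grouping the heights by building ID in this way is what allows a single height statistic to be joined back to each footprint afterwards.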

Several elevation references should be considered for estimating the building height within its outline [32]. These references are displayed in Figure 5. Three-dimensional reconstruction based on those levels can be realized by using high-resolution data such as LiDAR point clouds along with precise cadastral maps. Specifying those levels in medium-resolution remote-sensing-derived heights, however, is not possible. Therefore, for LOD1 3D building reconstruction using medium-resolution data such as those applied in this paper, we only use the median or mean of the heights inside a building outline. The main advantage of the median over the mean is its robustness against outliers. Thus, we propose that LOD1 models can be produced by modeling each building as a coarse volumetric representation using its outline and the median-based allocated height.
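The difference between the two measures can be illustrated with a small sketch. The function name `building_height`, the optional constant ground height, and the sample values (including the single outlier) are assumptions made purely for illustration.

```python
from statistics import mean, median
from typing import Sequence


def building_height(
    heights: Sequence[float], ground_height: float = 0.0, use_median: bool = True
) -> float:
    """Single LOD1 height for one outline: median (or mean) minus ground height."""
    if not heights:
        raise ValueError("no height samples inside the building outline")
    roof = median(heights) if use_median else mean(heights)
    return roof - ground_height


# Hypothetical samples inside one outline; 45.0 mimics a single outlier.
samples = [20.1, 19.8, 20.3, 20.0, 45.0]
print("median-based:", building_height(samples))
print("mean-based:  ", building_height(samples, use_median=False))
```

In this example the median stays at the level of the four consistent samples (20.1), whereas the mean is pulled up to about 25.0 by the single outlier.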

Furthermore, for LOD1 reconstruction, we consider two scenarios. The first is to model buildings based on the original footprint layer provided by OSM. The second is to update these building outlines in a pre-processing step. This updating has proved helpful because OSM building footprints often consist of several intra-blocks with different heights. As displayed in Figure 1, a building consisting of two blocks, each with a different height level, may appear as a single building outline in OSM. Only one height value can then be assigned to it in a simple LOD1 reconstruction process, although the outline should actually be split into two separate outlines. As a result, heights that actually lie in two separate clusters are erroneously replaced by their median, which is located somewhere in between. While this ultimately leads to a significant height bias, modifying the outlines appropriately improves the final reconstruction. In this paper, this building modification is performed semi-automatically: the candidate outlines are detected by clustering heights. The number of clusters determines the number of height levels and indicates potential separate building blocks. This is then verified by visual comparison with open satellite imagery such as that provided by Google Earth. Finally, the individual, newly separated building blocks are reconstructed by assigning separate median height values.
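The clustering algorithm is not specified here, so the following sketch uses a plain one-dimensional two-means clustering as a stand-in to show how candidate outlines might be flagged. The function names, the restriction to two clusters, and the `min_gap` threshold of 3 m are all assumptions for illustration; flagged candidates would still require the visual verification described above.

```python
from statistics import mean, median
from typing import List, Sequence, Tuple


def two_means_1d(heights: Sequence[float], max_iter: int = 50) -> Tuple[List[float], List[float]]:
    """Split heights into a low and a high cluster with 1D two-means."""
    values = sorted(heights)
    if len(values) < 2:
        raise ValueError("need at least two height samples")
    c_low, c_high = values[0], values[-1]  # initialise centres at the extremes
    low: List[float] = []
    high: List[float] = []
    for _ in range(max_iter):
        low = [v for v in values if abs(v - c_low) <= abs(v - c_high)]
        high = [v for v in values if abs(v - c_low) > abs(v - c_high)]
        if not high:  # all samples identical: nothing to split
            break
        new_low, new_high = mean(low), mean(high)
        if new_low == c_low and new_high == c_high:
            break  # centres no longer move
        c_low, c_high = new_low, new_high
    return low, high


def suggests_split(heights: Sequence[float], min_gap: float = 3.0) -> bool:
    """Flag an outline as a split candidate if the cluster medians differ by min_gap."""
    low, high = two_means_1d(heights)
    if not high:
        return False
    return median(high) - median(low) >= min_gap


# Hypothetical outline covering two blocks of roughly 10 m and 25 m height.
samples = [9.8, 10.0, 10.1, 10.2, 24.9, 25.0, 25.1, 25.2]
low, high = two_means_1d(samples)
print("single median:", median(samples))
print("block medians:", median(low), median(high))
print("split candidate:", suggests_split(samples))
```

For this example the single median lies between the two blocks and represents neither of them, while the per-cluster medians recover both height levels.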

In addition, horizontal displacements of OSM building footprints relative to highly accurate data such as LiDAR can also lead to a height bias. Such displacements cause non-building points to be included in building outlines. Because of the significant height differences between non-building and building points, the final height estimates are then underestimated. To mitigate this effect, we apply an inward buffer to the building outline to make sure that only building points are selected.
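One way to realize such an inward buffer without constructing the shrunken polygon explicitly is to keep only those points that lie inside the outline and at least a given distance away from its boundary. The sketch below follows this idea; the function names, the buffer width of 2 m, and the example data are hypothetical, and the buffer width actually used is not stated here.

```python
import math
from typing import List, Sequence, Tuple

Point2D = Tuple[float, float]
HeightPoint = Tuple[float, float, float]  # (x, y, height)


def point_in_polygon(x: float, y: float, polygon: Sequence[Point2D]) -> bool:
    """Ray-casting point-in-polygon test for a simple polygon."""
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        if (y1 > y) != (y2 > y):
            if x < x1 + (y - y1) * (x2 - x1) / (y2 - y1):
                inside = not inside
    return inside


def distance_to_segment(px: float, py: float, a: Point2D, b: Point2D) -> float:
    """Euclidean distance from point (px, py) to the segment a-b."""
    ax, ay = a
    bx, by = b
    dx, dy = bx - ax, by - ay
    seg_len_sq = dx * dx + dy * dy
    if seg_len_sq == 0.0:
        return math.hypot(px - ax, py - ay)
    t = ((px - ax) * dx + (py - ay) * dy) / seg_len_sq
    t = max(0.0, min(1.0, t))  # clamp the projection onto the segment
    return math.hypot(px - (ax + t * dx), py - (ay + t * dy))


def heights_inside_inward_buffer(
    points: Sequence[HeightPoint], polygon: Sequence[Point2D], buffer_m: float
) -> List[float]:
    """Heights of points inside the outline and at least buffer_m from its edges."""
    n = len(polygon)
    kept = []
    for x, y, z in points:
        if not point_in_polygon(x, y, polygon):
            continue
        edge_dist = min(
            distance_to_segment(x, y, polygon[i], polygon[(i + 1) % n]) for i in range(n)
        )
        if edge_dist >= buffer_m:
            kept.append(z)
    return kept


# Hypothetical outline; the point at x = 1 is a ground point near the edge.
outline = [(0.0, 0.0), (10.0, 0.0), (10.0, 10.0), (0.0, 10.0)]
points = [(5.0, 5.0, 20.0), (1.0, 5.0, 3.0), (11.0, 5.0, 2.0), (3.0, 5.0, 19.5)]
print(heights_inside_inward_buffer(points, outline, buffer_m=2.0))
```

The low sample near the edge is dropped although it lies inside the outline, which is the intended effect when the footprint is slightly displaced relative to the height data.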

5. Results of LOD1 Building Model Reconstruction

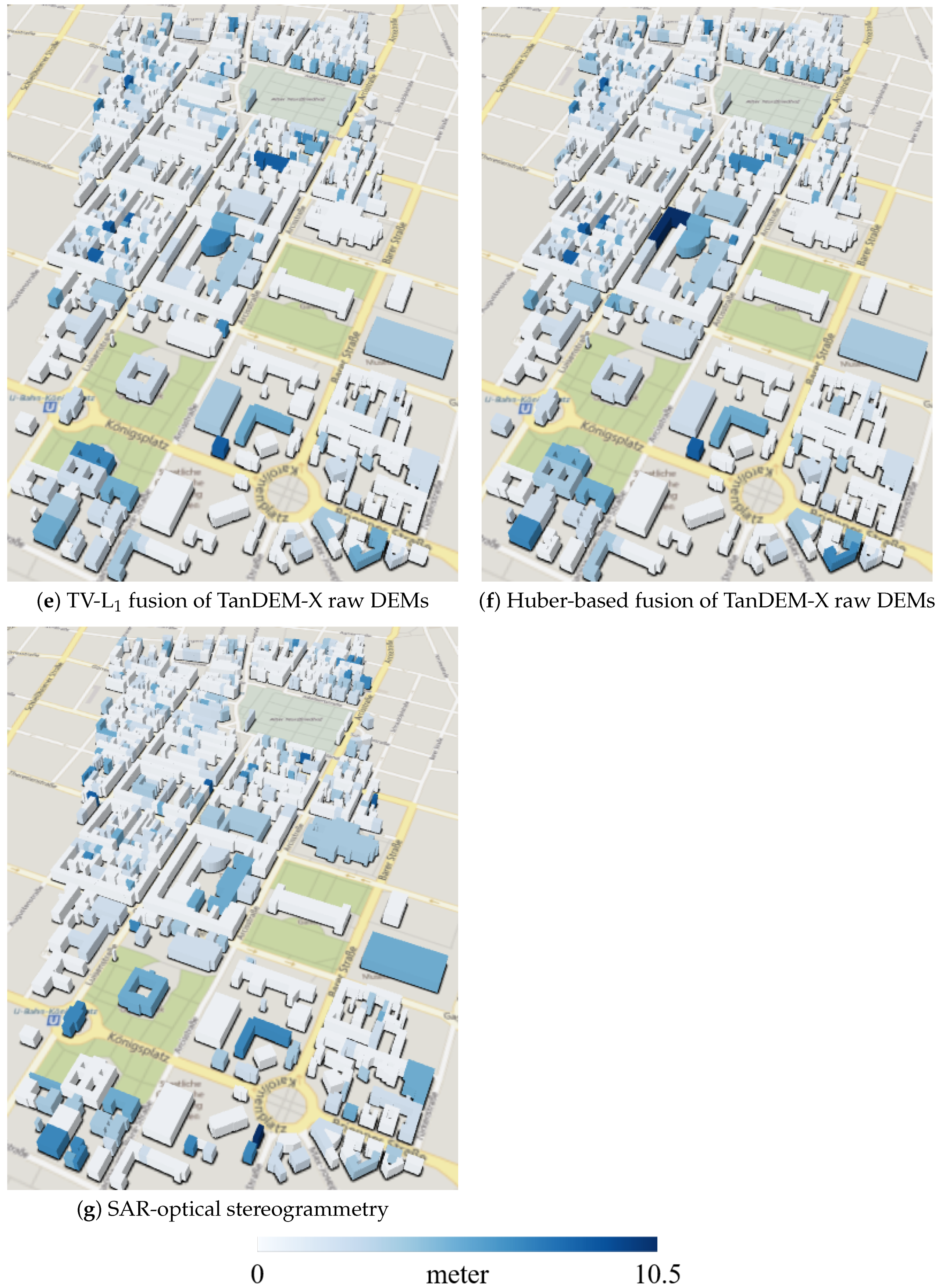

Figure 9 displays the LOD1 3D reconstruction results for the study area, consisting of prismatic building models generated by combining the height information derived from the different sources discussed in the previous sections with building footprints provided by OpenStreetMap. As displayed in Figure 9, on average, all models are systematically biased in comparison to a model produced from high-resolution LiDAR data. This bias is smallest for the model using heights derived from SAR-optical stereogrammetry, as can be seen when comparing large buildings. For a better evaluation, however, a quantitative assessment is required. Therefore, the height accuracy of each LOD1 model was validated by comparing it with a model created from the reference LiDAR DSM in a similar manner. For that purpose, we first interpolated the original LiDAR point cloud to a grid with a 1 m pixel spacing. Then, we used TV-L1 denoising [27] to reduce potential noise effects. This denoising mitigates biases in building height estimation induced by height outliers and inconsistencies such as those caused by crane towers. As described in [27], TV-L1 comprises two terms: a fidelity term and a penalty term. The effect of each term on the final output can be tuned by regularization parameters that act as weighting factors. Assigning a higher weight to the penalty term leads to better edge preservation. Thus, we used double the weight for the penalty term to enhance urban structures. The final height estimate within each building outline can then be computed according to the process described in Section 3. The same process can be applied to assess the quality of the 3D building reconstructions obtained from the other height information sources. The quantitative evaluations of the LOD1 reconstructions for scenario 1 (using original OSM outlines) and scenario 2 (using updated outlines) are presented in Table 7 and Table 8, respectively.
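A per-building comparison against the reference model could be sketched as follows. The accuracy measures used in the tables are not named in this section, so the mean error (bias) and the root mean square error (RMSE) computed below are assumptions chosen as common examples; the function name and the sample heights are likewise hypothetical.

```python
import math
from typing import Dict


def height_errors(estimated: Dict[str, float], reference: Dict[str, float]) -> Dict[str, float]:
    """Bias and RMSE of estimated minus reference heights over shared building IDs."""
    common = sorted(set(estimated) & set(reference))
    if not common:
        raise ValueError("no buildings in common between the two models")
    diffs = [estimated[b] - reference[b] for b in common]
    bias = sum(diffs) / len(diffs)
    rmse = math.sqrt(sum(d * d for d in diffs) / len(diffs))
    return {"n": len(diffs), "bias": bias, "rmse": rmse}


# Hypothetical per-building heights (in metres) keyed by building ID.
estimated = {"A": 18.0, "B": 9.0, "C": 30.0}
reference = {"A": 20.0, "B": 10.0, "C": 33.0, "D": 5.0}
print(height_errors(estimated, reference))
```

A negative bias in such a comparison indicates that the evaluated model underestimates building heights relative to the reference on average.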